Abstract

The present study is preliminary research examining the effect of a socially assistive robot on the educational outcomes of children with mild and severe symptoms of autism spectrum disorder. Participants included three children, ages 5–7 years old, recruited from a public elementary school in the Northeastern United States. The study comprised two distinct conditions using an adapted alternating treatment design. First, a pre-assessment gauged participants’ proficiency in life skills and science vocabulary, with the aim of selecting target sets for the intervention. In the human condition, participants engaged in two activities guided by a human instructor, while in the robot condition, they participated in two activities led by a robot instructor. Each condition had one set of life skills vocabulary and one set of science vocabulary. Finally, during the post-assessment phase, participants were re-evaluated using the vocabulary assessment and completed a social validity measure to indicate their preference for either the human or robot instructor. Two of the three children completed all conditions. Findings revealed that both participants successfully acquired all nine vocabulary words across both conditions. However, participants demonstrated faster mastery of the vocabulary in the human condition than in the robot condition. Interestingly, despite this difference, both participants expressed a preference for the robot instructor over the human instructor.

Introduction

Autism Spectrum Disorder (ASD) refers to a neurological developmental disorder characterized by deficits in social communication, nonverbal communication, and the development and maintenance of relationships (American Psychiatric Association, 2013). The American Psychiatric Association classifies autism severity from level 1 to level 3, with level 3 requiring very substantial support (American Psychiatric Association, 2013). As of 2020, 1 in 36 children is diagnosed with autism (Maenner et al., 2023), and 26.7% of these cases are classified as severe or profound (Hughes et al., 2023).

Educational Needs and Challenges in Special Education

The Individuals with Disabilities Education Act (2001) ensures free and appropriate public education (FAPE) for all students with disabilities. Such students benefit from evidence-based practices such as explicit instruction in one-to-one or small-group settings (Cannella-Malone et al., 2021) and interventions grounded in applied behavior analysis (National Standards Project, 2009), including discrete trial training (Leaf & McEachin, 1999), picture exchange communication systems (Lerna et al., 2014), and visual schedules (Macdonald et al., 2018). Schools must have well-trained educators who can deliver individualized instruction within structured routines (Hurwitz et al., 2022).

Unfortunately, schools often experience barriers in providing the intensive educational support that students with severe symptoms of autism require. The roadblocks arise from the perspectives of both the teacher and the child. Schools report growing difficulty hiring and retaining teams of quality special education teachers. In a 2011–2012 and 2012–2013 survey, special education teachers reported a 15.6% turnover rate (Carver-Thomas & Darling-Hammond, 2017). In a longitudinal study of 47 special education teachers, none of the teachers assigned to special education classrooms in year one remained by year five (Hopkins et al., 2019). In a larger sample of 366 special educators from across the United States, Hester et al. (2020) found that the primary complaints revolved around being overworked, underpaid, and under-supported, with teachers citing responsibilities related to legal mandates as one of the most stressful aspects of the job. Fifty-two percent reported plans to leave the profession in the next five years, explaining that managing individualized education programs, large workloads, and student support fueled their decisions.

Even in schools with a strong teaching staff, students may still face challenges that hinder educational progress, mainly due to a lack of attention and motivation. For example, when teachers successfully evoke eye contact from a student with autism before sessions begin, students demonstrate improved skill acquisition (Silva & Fiske, 2021). Yet, limited eye contact during social situations is a core characteristic of autism, and many educators struggle to elicit it. Similarly, student motivation often proves difficult to establish without additional reinforcers.

However, identifying effective reinforcers for students with autism is often complex, as many commonly used items or activities fail to function as motivating stimuli (Leaf et al., 2012).

Role of Socially Assistive Robots in Autism Intervention

Researchers of human-robot interaction (HRI) propose that socially assistive robots could support teachers, families, and behavioral practitioners in providing interventions for children with autism (Dickstein-Fischer et al., 2018; Kim et al., 2012). Socially assistive robots can take various forms, including humanoid models like Nao (Rakhymbayeva et al., 2021; Rudovic et al., 2017), Kaspar (Mengoni et al., 2017; Robins & Dautenhahn, 2014), and Kebbi (Halkowski et al., 2025), animal-like designs such as KiliRo (Bharatharaj et al., 2017) and Keepon (Costescu et al., 2016), and toy-like robots like Cozmo (Ghiglino et al., 2021). A systematic review of 38 studies found that robotic interventions effectively improved behavioral aspects such as imitation (Mengoni et al., 2017), turn-taking (Taheri et al., 2018), eye contact (Yun et al., 2016), emotion recognition (Bharatharaj et al., 2017), and joint attention (Ghiglino et al., 2021), highlighting their potential to enhance social skills in children with autism (Alabdulkareem et al., 2022).

Application of SARs in Educational Settings

Socially assistive robots (SARs) may help address barriers to high-quality instruction, including teacher shortages, lack of trained staff, and student engagement. Preprogrammed robots have the potential to supplement 1:1 instruction, allowing teachers to focus on other students or responsibilities while ensuring consistent instruction without breaks.

Research suggests that socially assistive robots increase student attention and eye contact. In a multiple-baseline study, Halkowski et al. (2025) found that when four children receiving early intervention were taught by a human, eye contact was minimal, but when a robot was introduced, students maintained eye contact throughout the session, with some even waving at or hugging the robot at the end. Kim et al. (2013) observed increased engagement in children with autism interacting with a robot, comparable to neurotypical peers. Fachantidis et al. (2020) found that students with autism displayed more eye contact, approach behaviors, and verbal interactions when engaging with a robot rather than a teacher. Similarly, Desideri et al. (2018) demonstrated that using a robot to prompt requests for music led to increased engagement and spontaneous verbalizations.

Aligning HRI with Special Education Research Best Practices

Socially assistive robots show considerable promise for supporting children with autism. However, for the field of human-robot interaction to position these tools as legitimate, evidence-based interventions, researchers must address three key priorities: (1) include participants across the full spectrum of autism, (2) adopt more rigorous experimental methodologies, often favoring single-case designs over traditional group designs, and (3) measure academic outcomes in addition to social engagement.

While some SARs are intended for educational use, most existing research has focused on feasibility and social interaction. Early studies emphasized robot design and user engagement over targeted skill acquisition or behavior change (Begum et al., 2016). For example, Albo-Canals et al. (2018) used KIBO, a coding robot, with 12 children with severe autism and reported increased engagement in 10 participants. Teachers noted that students interacted more with the robot than during typical classroom activities. Although these findings are promising, increased engagement alone does not establish educational effectiveness. Future studies must prioritize direct measures of student learning to build an evidence base for instructional use.

Equally important is the inclusion of individuals across the full autism spectrum, particularly those with severe autism and co-occurring communication disorders, who are frequently excluded from research. In a systematic review, Begum et al. (2016) found that only 2 of 22 studies regarding robots and autism included participants with severe autism, representing just 4 of the 204 total individuals reviewed. The exclusion mirrors a broader trend in autism research, with the Lancet Report (Lord et al., 2022) highlighting the underrepresentation of individuals with severe symptoms. Advocacy groups, such as the IACC and the International Society for Autism Research, have called for increased inclusion of nonverbal individuals (McKinney et al., 2021). A meta-analysis of 301 studies found that 94% of participants either had no intellectual disabilities or lacked IQ data (Russell et al., 2019), raising concerns about sampling bias and generalizability (Rødgaard et al., 2022).

Finally, methodological rigor remains a persistent concern in SAR research. Although some studies employ experimental designs, many fail to incorporate core features such as comparison conditions, control groups, or adequate sample sizes (Begum et al., 2016). Given the heterogeneity of learners with severe autism, large group designs may be impractical. In such cases, single-case experimental designs offer a viable and rigorous alternative. Single-case designs use repeated measures of skill or behavior across conditions to evaluate intervention effects (Kazdin, 2011). Because they support experimental control with small samples and can be tailored to individualized educational settings, single-case designs represent a powerful tool for advancing SAR research in applied contexts.

Purpose

This preliminary study serves as a first step in evaluating the potential of robotic instruction to improve educational outcomes for children with severe autism, an area that remains largely unexplored in behavior-analytic research. By directly measuring academic skill acquisition, the current study moves beyond engagement metrics to assess the effectiveness of socially assistive robots in an applied setting.

The selected robot, Kebbi, delivers structured lessons via a tablet interface. Although Kebbi has appeared in prior research (Halkowski et al., 2025), its effectiveness for promoting academic learning remains untested. To strengthen the evidence base for socially assistive robotics in education, the current study includes participants with severe autism and employs a rigorous experimental methodology. Specifically, an adaptive alternating treatment design enables the establishment of experimental control within a small sample, aligning with best practices in individualized intervention research. By prioritizing instructional outcomes, including a historically excluded population, and using a methodologically sound design, this study advances the integration of SAR within behavior-analytic practice. It aims to generate actionable insights regarding both the benefits and practical challenges of implementing SAR in educational settings. The central research question guiding this work is: What are the comparative effects of socially assistive robotic instruction and human-delivered instruction on the acquisition of science and life skill vocabulary in children with severe autism?

Method

Participants and Setting

An elementary school in the Northeastern United States served as the experimental location for the study. Each session occurred in a private office space with a table, two chairs, and two video cameras for data collection redundancy. To be included, participants had to (1) be between 5 and 8 years old, (2) have a diagnosis of autism, (3) present as minimally verbal or nonverbal, (4) be able to use a touchscreen device, and (5) be able to complete one-step task demands.

The elementary teachers in the school selected potential participants based on the inclusionary criteria. Parents received information about the study and, if interested, received a consent form to allow their child to participate.

Demographic Data

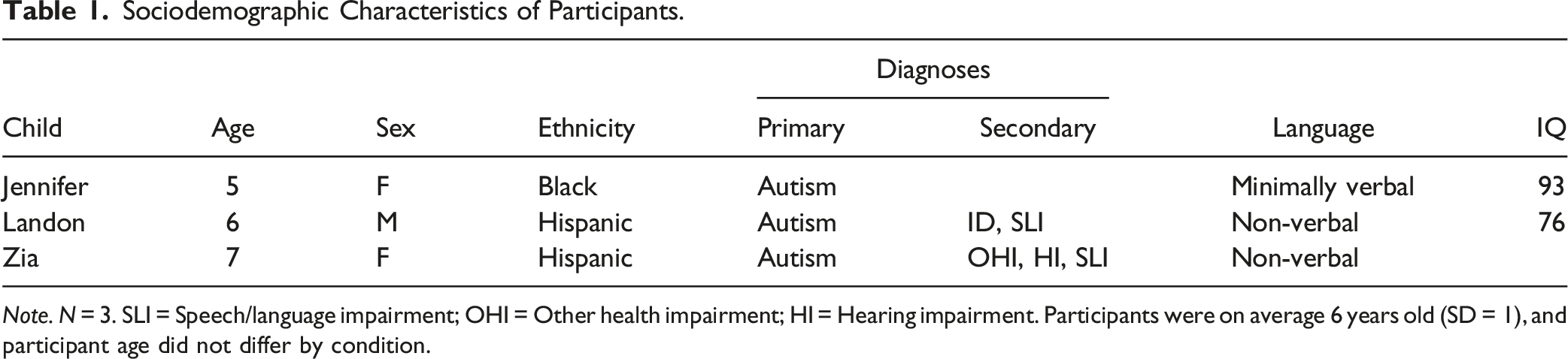

Sociodemographic Characteristics of Participants.

Note. N = 3. SLI = Speech/language impairment; OHI = Other health impairment; HI = Hearing impairment. Participants were on average 6 years old (SD = 1), and participant age did not differ by condition.

Materials

Robot system

The robot system consists of a socially assistive robot, a tablet for the child, and a computer for the human instructor. The robot, Kebbi, is a 32 cm tall socially assistive robot equipped with a face, two arms, and a torso (see Figure 1). Its face is a digital screen capable of displaying eyes and a mouth that animate to convey emotions and speech. The robot features nine degrees of freedom: torso (2), right shoulder (2), left shoulder (2), and neck (2) (Matarić, 2017). The system is operated by staff using a personal laptop, which allows them to load short educational activities from MOVIA’s proprietary WOZ™ Teacher’s Aide software into a sequence to create a customized learning session for each student. Once initiated, the robot autonomously interacts with the student without requiring additional input from the staff. However, the system incorporates a “Wizard of Oz” functionality, enabling staff to intervene during the session. This feature allows for the delivery of customized or preprogrammed phrases, such as encouragement or calming strategies (e.g., guided deep breathing exercises), to modulate student behavior as needed.

Kebbi from MOVIA Robotics Inc.

WOZ™ Teacher’s Aide Software (Curriculum)

The Kebbi system includes a Lenovo tablet, a laptop, and MOVIA’s proprietary WOZ™ Teacher’s Aide software, preprogrammed with activities for educational, social, emotional, and life skill goals. This study used two life skills activities (i.e., identifying U.S. currency coins and methods of transportation) and two science activities (i.e., biomes and plant cycles). Each activity has an introduction and an assessment.

In part one, the robot introduced each stimulus (e.g., penny, nickel, dime, quarter) one at a time and asked the child to touch it on the screen. In part two, the robot displayed three target stimuli on the screen and asked the student to select one. For example, the tablet displayed a penny, a nickel, and a quarter, and the robot instructed the child to “Touch the nickel.” The Teacher’s Aide software repeated the process until the robot had assessed the child’s ability to identify all stimuli in the set.

Instructional Script

Each activity included a preprogrammed instructional script created by the MOVIA design team. In the robot condition (intervention), the robot delivered the script to the child. In the human condition (control), a human delivered the script to the child. We created the human condition script by recording the robot conducting the activity and transcribing its words. The script included the system’s decision trees, detailing the robot’s responses to correct and incorrect child responses.

Visual Schedule

Each child received a visual schedule with pictures representing their assigned activities. As each activity was completed, the human instructor moved its image to the “all done” section at the bottom of the board. Figure S1 shows an example of a visual schedule. The visual schedule had two purposes: to improve participation and decrease challenging behaviors (Lequia et al., 2012; Zimmerman et al., 2017), and to help the children learn the association between the pictures and activities, which was later used in the social validity assessment.

Camera Equipment

Two cameras recorded each session to ensure data accuracy and incorporate redundancy in data collection. One camera was positioned behind the child on a tripod to record the material in front of them and their touch responses. The second camera was placed on the desk facing the child to record their facial expressions (see Figure S2). The children’s families completed consent forms allowing the research team to record the sessions and use the recordings post-study for research dissemination.

Dependent Variable

The dependent variable was the number of correct and incorrect responses to the review questions at the end of each activity. The life skill activities had four review questions, and the science activities had five, for a total of nine potential correct responses per condition per session. During the question section, the instructor (human or robot) presented three target stimuli and asked the child to select one. A correct response occurred when the child tapped the requested image on the screen in the robot condition or on the index card in the human condition. An incorrect response occurred when the child selected a stimulus other than the one requested by the instructor.

Procedures

The study had three phases. In the first phase, each child completed a one-time pre-assessment to ensure they had not yet mastered the target stimuli. The second phase was the primary intervention, consisting of two conditions (human and robot). In the final phase, each child completed a social validity assessment.

Pre-Assessment

Before the study began, each child received a pre-assessment to determine their performance on each vocabulary set (identifying coins, transportation, biomes, and stages of a plant cycle). We created flashcards for each item using the same images that appeared on the tablet in the intervention condition. During the session, the instructor presented each item in groups of three and asked the child to select one. An assessment sheet for each stimulus group ensured equal distribution of distractor stimuli across trials.

Intervention

The intervention had two conditions, with children completing both conditions each session. An adaptive alternating treatments design randomized the presentation of the conditions to avoid a serial order effect. Each condition included one life skills activity (identifying U.S. coins or modes of transportation) and one science activity (identifying biomes or plant life cycles). Table S1 shows which activities each participant completed for each condition. Each child received a visual schedule with images representing their activities, following a standard discrete trial training format used in applied behavior analysis (Leaf & McEachin, 2016).

In the robot condition, the child completed two activities on the tablet with instruction from the robot. In the human condition, the child completed two activities using flashcards with instruction from the researcher. The flashcards had the same images as the robot’s tablet display. During the human condition, the robot was removed from the child’s gaze. The instructor read the instructional script exactly as the robot presented it and terminated a condition if the child either (a) failed to respond after three prompts or (b) left the table and refused to sit after three prompts.

Social Validity Assessment

Social validity refers to a person’s acceptance and preference for an intervention’s procedures, goals, and outcomes (Alberto & Troutman, 2008; Callahan et al., 2017). To assess social validity from the children’s perspectives, we conducted a two-part post-study evaluation. First, we asked each student, “Do you want to complete an activity with the robot?” while gesturing toward the experimental room. If the student responded affirmatively, they were permitted to enter the room and begin the session.

Second, we conducted a multiple-stimulus preference assessment without replacement using visual schedules created with Picture Exchange Communication (PEC) cards. The available PECs included: one train card, one five-biomes card, one coin card, one plant cycle card, four human cards, and four Kebbi cards. Students were prompted to construct their activity schedule by responding to questions such as, “Which activity would you like to do first?” and “Which activity would you like to do second?” For each chosen activity, students were also asked to indicate a preferred activity partner by selecting either a human PEC or a Kebbi PEC. These PECs were identical to those used throughout the study, ensuring consistency and familiarity.

Treatment Integrity

We developed a procedural checklist for each session and video recorded the sessions to assess implementation fidelity. An independent observer reviewed the videos, calculating a procedural integrity score by dividing the number of steps implemented by the total number of steps and multiplying by 100. The procedural integrity score was 99%.

Accuracy

The Kebbi system automatically collected student responses to determine the exact number of times a behavior occurred. In tandem, we recorded the students’ responses on a data collection sheet and compared them with the system’s data to verify measurement accuracy (Johnston & Pennypacker, 2009).

Experimental Design

We used an adaptive alternating treatment design (AATD) for the study, allowing us to compare multiple instructional procedures on non-reversible behaviors (Wolery et al., 2014). Each condition ran for 9–10 days, exceeding the minimum required to meet WWC Pilot Single-Case Design Standards Without Reservations (What Works Clearinghouse). The study spanned two to three weeks, depending on students’ availability. The intervention had two conditions to evaluate the effectiveness of robot instruction on vocabulary acquisition. Randomization controlled for order effects by (1) randomly assigning activities to each participant and (2) counterbalancing the order in which each participant completed each condition. An AATD does not need a baseline to demonstrate experimental control (Cooper et al., 2020).

In single-case research, each condition must be independent and equivalent (Byiers et al., 2012). Our conditions met these criteria as the activities differed between the human and robot conditions for each participant, yet were similar enough to replicate the independent variable’s effect. All session components, such as the visual schedule, stimuli, task demands, and verbal reinforcers, were held constant to ensure equivalence. Ledford et al. (2019) recommend counterbalancing target sets across conditions and participants, so we assigned each target set to a distinct condition across participants (Cariveau et al., 2022) and did not mix stimuli to analyze task difficulty.

Group one had two categories of activities: life skills and science, each consisting of two activities. Life skills included US coin ID and transportation, while science covered the plant life cycle and identifying five biomes. Each session had one science and one life skills activity, resulting in four possible combinations: (a) transportation and plant life cycle, (b) transportation and five biomes, (c) US coin ID and plant life cycle, (d) US coin ID and five biomes.
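The four pairings listed above, and the complementary pairing left over for the other condition, can be enumerated with a short sketch. The variable and function names here are illustrative and are not part of the study materials; the sketch only assumes that each condition receives one life skills set and one science set, and that the other condition receives the two remaining sets.

```python
from itertools import product

# Activity names as listed in the text; grouping them into two lists is an
# illustrative choice, not the study's software.
LIFE_SKILLS = ["transportation", "US coin ID"]
SCIENCE = ["plant life cycle", "five biomes"]

# Every pairing of one life skills activity with one science activity.
pairings = list(product(LIFE_SKILLS, SCIENCE))


def complement(pairing):
    """Return the pairing made of the two activities NOT in `pairing`.

    Assumes the other condition receives the remaining life skills set and
    the remaining science set.
    """
    life, sci = pairing
    other_life = next(a for a in LIFE_SKILLS if a != life)
    other_sci = next(a for a in SCIENCE if a != sci)
    return (other_life, other_sci)


for label, pairing in zip("abcd", pairings):
    print(f"({label}) one condition: {pairing} | other condition: {complement(pairing)}")
```

Enumerating the pairings this way makes it easy to check that no target set appears in both conditions for the same participant.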

The study contains two conditions: the robot and the human. Table S1 shows the distribution of the target sets to a unique condition and across participants.

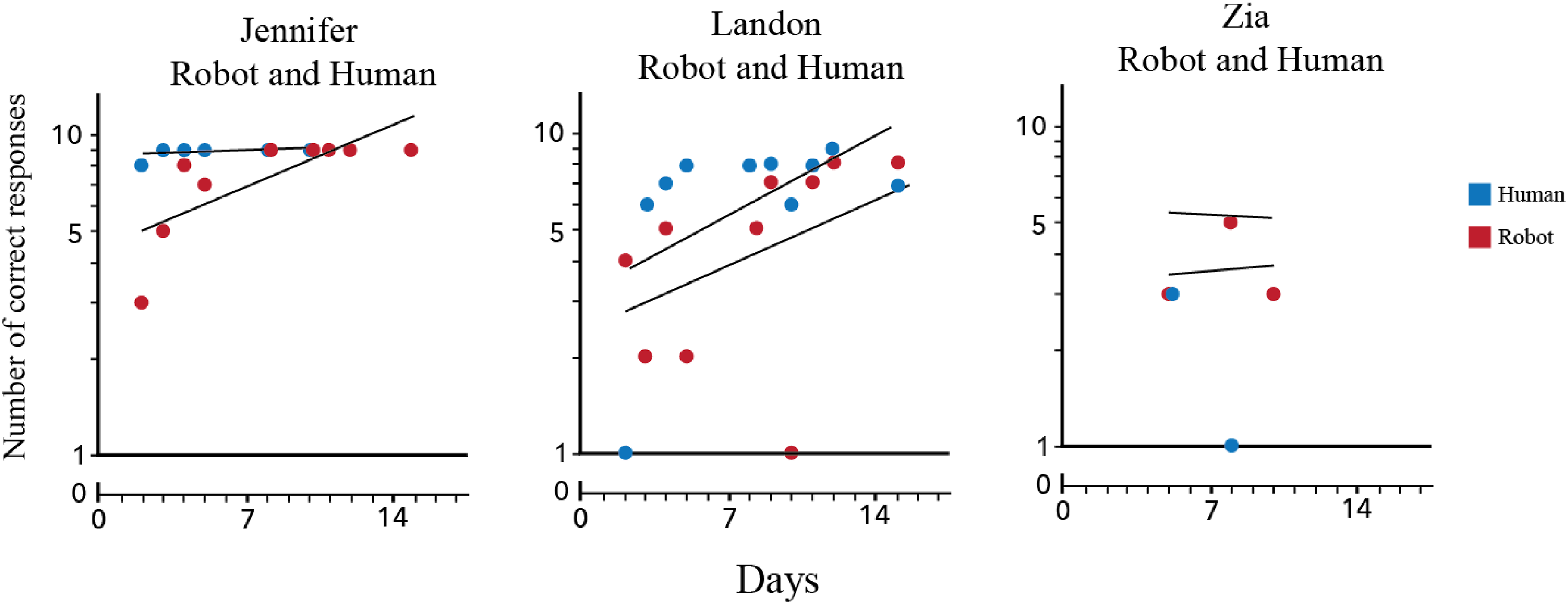

Data Display

We collected the data and displayed them on Standard Celeration Chart (SCC) segments (Wertalik & Kubina, 2018). Each segment showed successive calendar days along the horizontal axis and proportional behavior along the vertical axis, allowing visual analysis of level, level multiplier, and celeration. The level describes the mean performance for correct and incorrect responses within a condition, assessed using the geometric mean (Kubina, 2019). For example, for the hypothetical data 5, 6, 3, 8, 9, 8, the product of the six data points equals 51,840. The sixth root of this product is 6.1, rounded down to 6.
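The worked example above can be reproduced with a minimal sketch. The function name is illustrative, and rejecting zero or negative counts is an assumption of this sketch (a geometric mean is undefined for them), not a rule stated in the study.

```python
import math


def geometric_mean(counts):
    """Return the n-th root of the product of n strictly positive counts."""
    if not counts or any(c <= 0 for c in counts):
        # Assumption of this sketch: zero counts are not handled here.
        raise ValueError("counts must be non-empty and strictly positive")
    return math.prod(counts) ** (1 / len(counts))


data = [5, 6, 3, 8, 9, 8]      # hypothetical data from the text
product = math.prod(data)       # 51840
level = geometric_mean(data)    # sixth root of the product

print(product)
print(round(level, 1))          # 6.1
```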

The level comparison analysis shows the difference between the human condition level and the robot condition level, expressed using a level multiplier (Kubina, 2019; Wertalik & Kubina, 2018). The multiplier quantifies the difference between correct responses in both conditions. We calculated the level multiplier by dividing the larger value by the smaller value and using a multiplication sign if the robot condition shows an increase, or a division sign if it shows a decrease. For example, a level of 4 in the human condition compared to 6 in the robot condition yields 6 ÷ 4 = 1.5, resulting in a ×1.5 level multiplier, indicating a 1.5 times greater level of responding in the robot condition.
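As an illustration of the rule just described, the following sketch returns the sign and value of a level multiplier. The function name and the tuple return format are assumptions made for this example, as is the choice to report equal levels as ×1.0.

```python
def level_multiplier(human_level, robot_level):
    """Compare the robot condition level against the human condition level.

    Returns (sign, value): the larger level divided by the smaller one, with
    "×" when the robot level is higher and "÷" when it is lower.
    Equal levels are reported as ("×", 1.0); that tie rule is an assumption.
    """
    if human_level <= 0 or robot_level <= 0:
        raise ValueError("levels must be strictly positive")
    value = max(human_level, robot_level) / min(human_level, robot_level)
    sign = "×" if robot_level >= human_level else "÷"
    return sign, value


# Example from the text: human level 4, robot level 6.
sign, value = level_multiplier(4, 6)
print(f"{sign}{value}")  # ×1.5
```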

Celeration values denote the overall trend in data, indicating growth, decay, or maintenance of behavioral patterns (Johnston & Pennypacker, 2009; Kubina, 2019). A division sign signals a downward trend, while a multiplication sign indicates growth. For example, ×2.0 means the behavior doubled per week, while ÷2.0 means a 50% decay rate per week.

The celeration comparison analysis uses a celeration multiplier to compare the speed or learning rate between the control and intervention conditions. The process involves two rules (Wertalik & Kubina, 2018): (1) If the signs are the same (e.g., “×” and “×”), divide the larger value by the smaller value and use the sign indicating the direction of change from control to intervention. (2) If the signs differ (e.g., “×” and “÷”), multiply the two values and use the sign indicating the direction of change from control to intervention. For example, if the intervention celeration is ×1.5 per week and the control is ×1.15 per week, the celeration multiplier is ×1.3 (1.5 ÷ 1.15 ≈ 1.3), indicating a higher celeration rate for the intervention.
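The two rules can be expressed compactly by converting each celeration to a weekly growth factor (× v becomes v, ÷ v becomes 1/v) and taking the ratio of intervention to control. This is a hedged sketch, not the authors' procedure: the function names are illustrative, and the reading of "direction of change from control to intervention" as the sign of that ratio is an assumption; other sign conventions would give a different sign for the same value.

```python
def to_factor(sign, value):
    """Convert a signed celeration, e.g. ("×", 1.5) or ("÷", 2.0), to a weekly factor."""
    if value < 1:
        raise ValueError("celeration values are written as 1.0 or greater")
    if sign == "×":
        return value
    if sign == "÷":
        return 1 / value
    raise ValueError(f"unknown sign: {sign!r}")


def celeration_multiplier(control, intervention):
    """Return (sign, value) describing the change from control to intervention.

    Same signs reduce to larger / smaller; different signs reduce to the
    product of the two values, matching the two rules in the text.
    Assumption: the sign is "×" when the intervention factor is at least as
    large as the control factor, and "÷" otherwise.
    """
    ratio = to_factor(*intervention) / to_factor(*control)
    if ratio >= 1:
        return "×", ratio
    return "÷", 1 / ratio


# Example from the text: control ×1.15 per week, intervention ×1.5 per week.
sign, value = celeration_multiplier(("×", 1.15), ("×", 1.5))
print(sign, round(value, 1))  # × 1.3
```

Working with factors avoids a separate branch for each rule: when the signs differ, dividing by 1/v is the same as multiplying by v.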

Results

Descriptive Outcomes

A total of 28 sessions occurred during the study. Jennifer and Landon completed one pre-intervention assessment session, about nine days of intervention, and one post-intervention assessment session. Zia completed one pre-intervention assessment session followed by four days of intervention.

Skill Acquisition

Jennifer

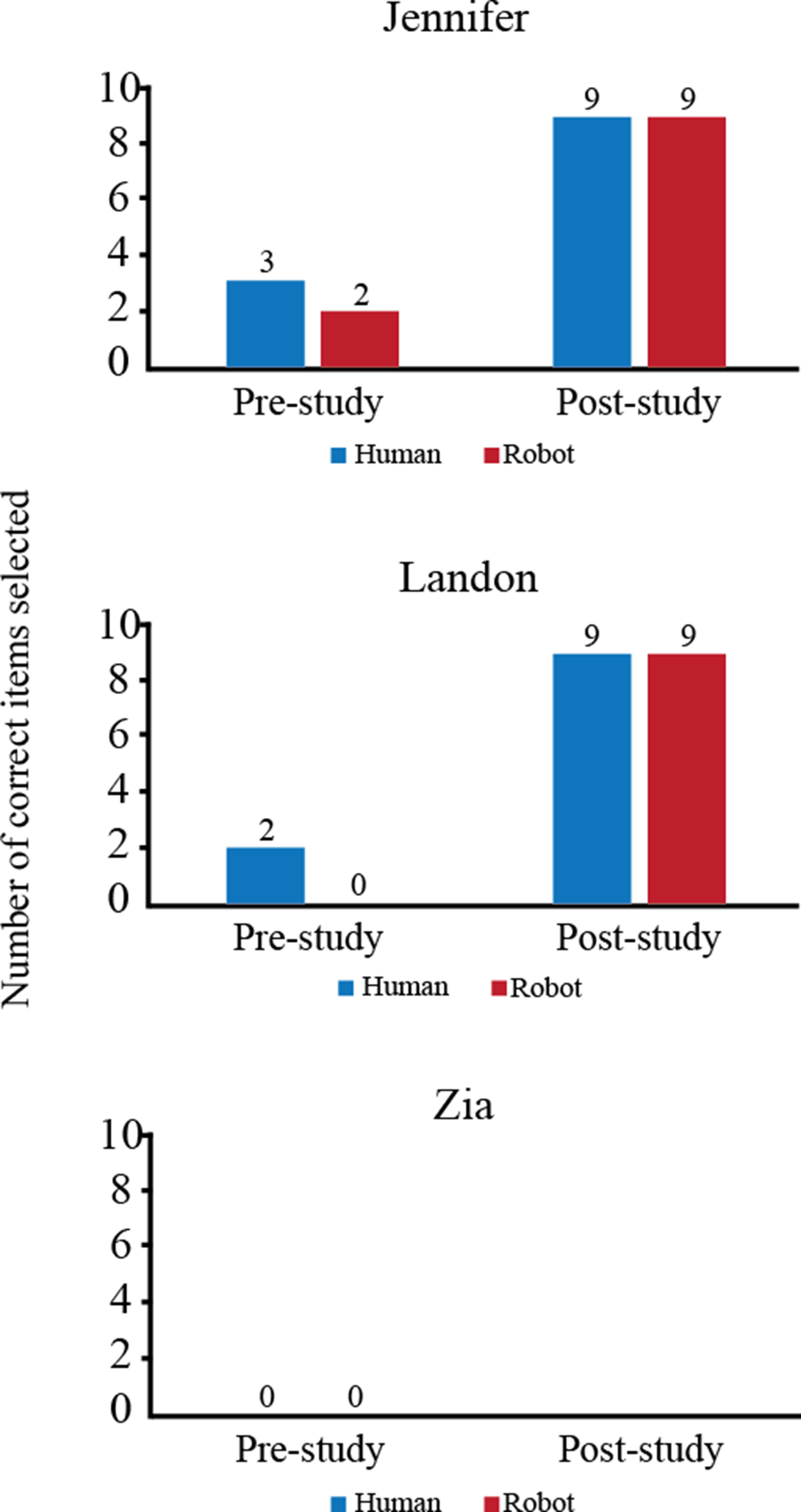

Jennifer’s human-instructed targets included transportation and plant cycles, while her robot-instructed targets covered coin identification and life biomes. In the pre-assessment, she correctly answered three human-set and two robot-set items. Post-study, she achieved 100% accuracy on both sets (see Figure 2).

The results of the pre-assessment and post-assessment for each child.

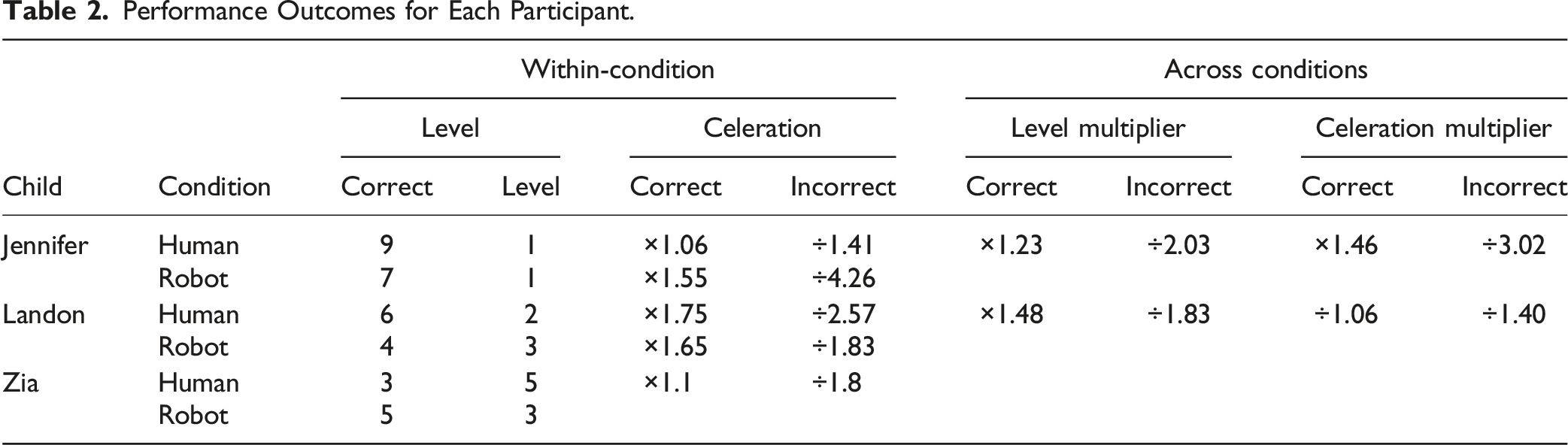

Performance Outcomes for Each Participant.

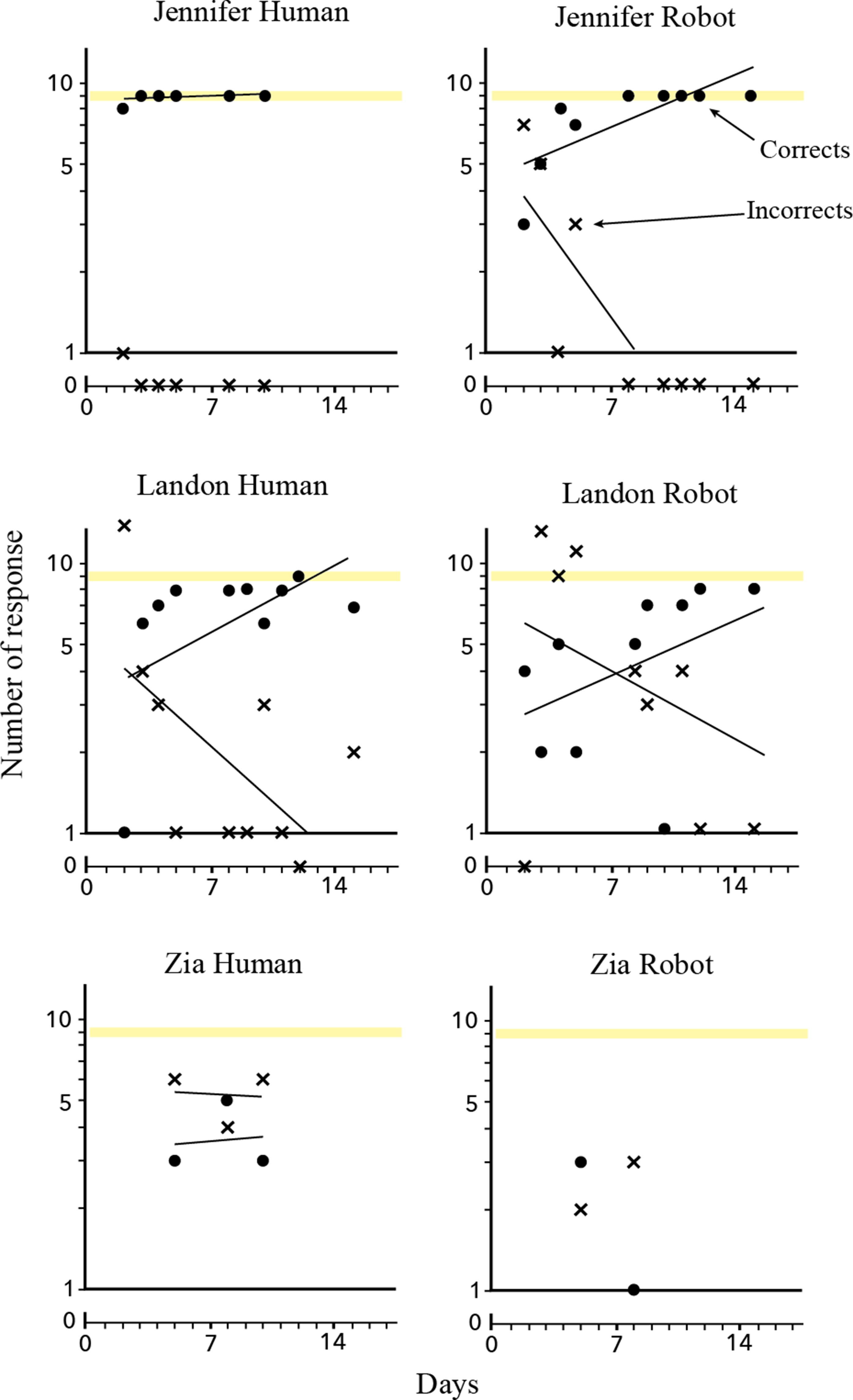

Total count of correct and incorrect responses for each child.

Overlap data for total count of correct responses for each child. Note. The data points that overlap are staggered to allow for visual analysis.

Landon

Landon’s human-instructed targets included coin identification and life biomes, while his robot-instructed targets covered transportation and plant life cycles. In the pre-assessment, he scored two correct and seven incorrect on human targets, and zero correct with nine incorrect on robot targets. Post-study, he achieved 100% accuracy in both conditions.

Over ten intervention days, Landon did not meet mastery criteria (five consecutive days at 100%). On average, he scored six correct and two incorrect responses with human instruction, and four correct and three incorrect with the robot. He required more redirect prompts during robot sessions, decreasing from eight prompts in session one to three in session ten. Landon terminated two sessions during the robot condition by refusing to respond to the instructor’s (i.e., the robot’s) task demand after three consecutive prompts. His celeration rates showed a ×1.75 per week increase in corrects and ÷2.57 decrease in incorrects during human instruction, compared to ×1.65 and ÷1.83, respectively, with the robot. Comparing conditions, the level multiplier showed correct responses were ÷1.48 lower, and incorrects ÷1.83 higher in the robot condition (see Table 3). His celeration rates showed a ÷1.06 difference in corrects and ÷1.40 in incorrects between human and robot instruction.

Zia

Zia’s human-instructed targets included transportation and life biomes, while her robot-instructed targets covered coin identification and the plant life cycle. Pre-assessment scores were zero correct and nine incorrect for both sets. She participated in four intervention days but did not meet mastery criteria. On average, she scored four correct and nine incorrect responses with human instruction and two correct and three incorrect with the robot. Her celeration in the human condition was ×1.1 per week for corrects and ÷1.8 for incorrects. Because session-terminating behaviors ended two of her four robot sessions, only two data points were available, preventing celeration calculations or condition comparisons. These behaviors included pushing the tablet away three consecutive times and leaving her seat and refusing to return after three prompts. Additionally, Zia did not complete the post-study assessment.

Social Validity

Social Validity Assessment

When asked if she wanted to work with the robot, Jennifer responded, “Yes, robot,” and willingly entered the experimental room. She constructed her visual schedule by placing the activities in the following order: biomes, coin identification, plant cycle, and transportation, opting to complete all with the robot. Similarly, when asked if he wanted to complete an activity with the robot, Landon nodded and pointed to the room. Once inside, Landon built his visual schedule by placing the activity PECs in the following order: plant cycle, transportation, biomes, and coin identification. For each task, he selected the robot PEC to indicate his preference for working with the robot rather than the human instructor.

Zia did not complete the social validity assessment, as she exited the study after four sessions. Given her limited exposure to the material, we determined that she had not formed sufficient associations between the activity images and the activities themselves and thus lacked the prerequisite skills to complete the assessment.

Discussion

This study evaluated the efficacy of a socially assistive robot in teaching basic skills to children with severe autism and limited verbal abilities. Using an adaptive alternating treatment design, three children participated in life skills and science activities with both a human instructor using physical flashcards and a robot presenting digital images on a tablet. The primary goal was to compare skill acquisition across the two instructional conditions. Additionally, a post-study social validity assessment examined the children’s preference for the robot versus the human instructor.

Effects of Intervention on Skill Acquisition

The analysis of participant performance across conditions revealed faster skill acquisition and fewer errors in the human condition. However, an analysis of overlapping data points in the AATD showed significant overlap between conditions. For a functional relation to be established, data points must cluster separately, yet Jennifer’s and Landon’s performance showed near-complete overlap. In this study, the instructional conditions were clearly differentiated and threats to internal validity were low; thus, the results likely reflect that robot-led instruction was comparably effective to human instruction in promoting vocabulary acquisition for these participants.

Despite the initial latency in behavior change in the robot condition, participants showed steady improvement and ultimately achieved perfect scores on the final assessment, demonstrating successful vocabulary acquisition. While human instruction yielded slightly faster performance, these findings suggest socially assistive robots have potential in education and warrant further research into their role in instructional interventions.

Divergent Results and Possible Explanations

The results of the current study align with previous research showing that socially assistive robots can successfully act as instructional agents in vocabulary acquisition. For example, Kory Westlund, Dickens, et al. (2017) found that children were able to learn vocabulary words under two types of robotic instruction. Similarly, Alemi et al. (2014) found that children learned significantly more vocabulary from a robot-human instructional pair than from a human alone. Both studies, however, included neurotypical children, limiting generalizability to the autism population.

A notable distinction in this study was the high number of redirection prompts required, particularly for Landon and Zia, which contrasts with prior studies such as Kory Westlund, Jeong, et al. (2017), where redirection was not reported. While redirection was expected due to potential distractions, observations revealed that the children were not distracted by external stimuli but instead hyper-focused on the robot itself, often disengaging from the instructional content on the tablet. This was especially evident with Landon, whose fixation on the robot led to the termination of two sessions due to non-responsiveness. These findings suggest that while socially assistive robots can enhance engagement, their presence may also create challenges in maintaining instructional focus, particularly in children with autism.

Factors Influencing Progress

Four factors likely influenced skill acquisition in this study: novelty effect, language ability, stimulus control, and HRI design.

Novelty Effect

All participants exhibited signs of a novelty effect, which may have impacted their ability to focus on instructional content. Jennifer reached mastery faster in the human condition, suggesting that the robot’s presence may have initially distracted her from the learning task. Landon frequently engaged in physical interactions with the robot, such as reaching for or touching it, sometimes without looking at the instructional content. Zia seemed most hindered by the robot’s novelty: her heightened focus on the robot kept her from completing intervention sessions, and she refused to engage with the tablet and instead repeatedly reached for and grabbed at the robot. These findings highlight the need for strategies to manage initial novelty effects when introducing socially assistive robots in educational settings.

Language Ability

Language complexity also played a role in participants’ progress. Although research suggests that children with autism can benefit from longer, more natural speech patterns rather than simplified telegraphic speech (e.g., “more ball”), an argument for simplified instruction remains (Frost et al., 2022; Wolfe & Heilmann, 2010). Evidence of this may be present in the children’s progress on individual activities. The results indicate that instructional complexity may have hindered learning, as all three children struggled most with the plant cycle activity, which contained the highest number of words per script. This suggests that while more complex language structures can be beneficial, interventions should balance language complexity with individual needs to optimize learning outcomes.

Stimulus Control

Participants demonstrated stronger stimulus control in the human condition, likely due to the familiarity of traditional teaching formats. Stimulus control occurs when a stimulus reliably produces a response in its presence but not its absence (Halbur et al., 2021). In this condition, the setup was predictable: the instructor sat in front of the child, placed cards on the table, and provided prompts—an instructional format the children had previously encountered. By contrast, the robot condition introduced new variables, with instructional content delivered on a tablet rather than through direct human interaction. Jennifer and Landon eventually adjusted, but their learning was slower compared to the human condition, and Zia never established stimulus control with the robot.

HRI Design

The robot’s design likely influenced engagement and learning. Research shows that children respond better to robots that use personalized speech and an animated voice rather than a flat tone (Baxter et al., 2017; Kory Westlund, Jeong, et al., 2017). The Kebbi robot incorporated engaging features such as verbal praise using the children’s names and a high-pitched, positive tone, which may have contributed to learning gains.

However, there is room for improvement in other aspects of the design. For instance, providing children with a robot equipped with personalized instruction tailored to their needs can significantly benefit skill acquisition (Leyzberg et al., 2018). Additionally, curricula that provide extra practice for incorrect responses have been shown to be effective when paired with socially assistive robots (de Wit et al., 2018). Incorporating more target sets could allow researchers to better evaluate the robot’s effectiveness across different learning tasks.

Another potential improvement involves integrating gestures into instruction. While the Kebbi robot used gestures, they remained static and did not correspond to specific vocabulary words. Research indicates that pairing novel gestures with spoken words can enhance vocabulary learning (de Wit et al., 2018).

Child Perceptions of Intervention

At the end of the intervention, Jennifer and Landon participated in a social validity assessment, and both chose to work with the robot for all four activities. This aligns with previous research showing that children prefer robotic instructors (Hong et al., 2016; Hsiao et al., 2015; Leyzberg et al., 2018). The Kebbi robot’s personalized feedback led both children to turn to face the robot and exhibit signs of happiness. For instance, when Jennifer heard phrases such as “Great work, Jennifer!” she smiled, rocked in her chair, and responded, “Yes, robot!” This behavior is consistent with studies showing that children favor personalized interactions (Baxter et al., 2017).

How the children chose to organize the visual schedules provided further potential insight into their preferences. Jennifer chose to complete biomes and coin ID first, the two activities she typically completed with the robot. Similarly, Landon chose to complete plant cycle and transportation first, the activities he completed with the robot during the intervention. Interestingly, Landon selected the plant cycle activity first, despite routinely scoring the lowest on this activity. These findings contribute to HRI research by using an adapted alternating treatment design that took place across multiple sessions, reducing the novelty effects that often influence preference in one-time exposure studies (Begum et al., 2016).

Limitations and Future Research

Despite valuable insights, this study has three key limitations. First, only two children completed the study. While adapted alternating treatment designs allow for experimental control with small samples, a larger and more diverse cohort would improve external validity.

Second, the instructional design limited the material to static picture-word associations without multiple exemplars. For example, children always saw the same train image when prompted to identify “train,” preventing assessment of generalization. Future research should test students with novel stimuli depicting different types of trains to determine if they learned the concept.

Third, participants were not given time to acclimate to the robot before instruction began. This lack of familiarization may have impacted their initial responsiveness. Future studies should consider including a brief introductory period in which children can interact with the robot prior to formal instruction.

Additionally, future research should explore three potential innovations in Kebbi’s design. First, personalized instruction tailored to children’s needs can significantly enhance skill acquisition (Leyzberg et al., 2018). Second, providing more practice for incorrectly answered items than for correctly answered ones has been shown to be effective when paired with socially assistive robots (de Wit et al., 2018). Third, Kebbi’s gestures could be adjusted so that the robot demonstrates a novel gesture for each vocabulary word instead of repeating the same gestures regardless of the spoken words, since novel gestures have been shown to lead to better vocabulary acquisition (de Wit et al., 2018). Incorporating these design elements could improve educational robots like Kebbi.

The results from Zia warrant further investigation. Although companies encourage the use of socially assistive robots for children with autism, the robot’s success in this study varied considerably, partly due to slight differences in each child’s autism diagnosis. Researchers should accurately note the severity of their participants’ autism diagnoses to help future studies better understand their outcomes. Future research must investigate the use of social robots for children with a wide range of autism expressions, including those with severe ASD symptoms, to provide additional evidence on their potential as teaching assistants.

Conclusion

In conclusion, this preliminary study provides evidence for the efficacy of socially assistive robots for educational purposes in children with ASD by comparing human and robot instruction. Addressing the instructional needs of children with severe symptoms of autism requires substantial effort and resources from educators and paraprofessionals. The findings of this research indicate that children can indeed acquire knowledge from a robot instructor, suggesting that schools could consider such technology as a potentially useful educational tool. Notably, all three children also exhibited an immediate and persistent interest in the robot and could work with the robot for extended periods without additional reinforcers. Nevertheless, it is crucial to acknowledge that the suitability of socially assistive robots may vary among students, and educators should carefully consider individual behaviors and prior language abilities before implementing such technology.

Supplemental Material

Supplemental Material for Socially Assistive Robotic Instruction for Children With Autism Spectrum Disorder by Madeline Jürgensen, Richard M. Kubina in Journal of Special Education Technology.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.
