Abstract

Keywords

Introduction

Along with other advancements in educational technologies, students with special needs have used computer-based accommodations more frequently in the classroom than in the past. In the present study we found that a noticeable number of bundled accommodation packages used by Grade 10 students with mild intellectual disabilities who participated in Ontario’s mandatory literacy assessment in 2012–2013 involved a computer and/or assistive technology (63%). Appropriate accommodations play an important role in student learning, classroom instruction and assessments, because they ensure that students’ test results can be validly interpreted in terms of their actual knowledge and skills (American Educational Research Association et al., 2014; Sireci et al., 2005). These accommodations are meant to help students with special needs bypass various difficulties and provide access to the content for both instruction and assessment. In other words, appropriate accommodations do not guarantee increased student performance, but they can help ensure that low student performance is less likely to be attributed to a lack of access to instruction or test content.

Previous research has yielded inconclusive findings that reflect the variations among the studies, including the heterogeneity of participants’ learning characteristics, the age of the studied groups, the subject domains, the sample sizes, and the research methods employed (Laitusis, 2010; Lewandowski et al., 2008; Lovett & Leja, 2013). In addition, the types of accommodations investigated in previous research add complexity to findings on the effectiveness of accommodations for academic achievement. Accommodations often come in bundled packages that combine multiple accommodations for conventional paper-and-pencil assessments (e.g., the provision of extra testing time, computer, and individual test administration). However, far too little attention has been paid to the wide range of accommodations that have been offered over the years to students with special needs who participate in large-scale assessments (Elliott et al., 2001; Fletcher et al., 2006; Fuchs et al., 2005).

Studies on students’ perceptions of accommodations often involve those with high incidence disabilities such as learning disabilities and emotional or behavioural disorders (Kosciolek & Ysseldyke, 2000; Nelson et al., 2000), or mixed disability groups (e.g., intellectual disabilities, speech and language disorders, chronic health conditions) (Baker et al., 2009, 2012; Elliott & Marquart, 2004; Feldman et al., 2011). Moreover, how a student perceives accommodations may depend on the specific types of accommodations under investigation. Generally speaking, students felt that they were less anxious about tests while using test accommodations. They also preferred accommodated tests over non-accommodated tests. On the other hand, a mixed disability group (including one student with intellectual disabilities in Grade 9) in Baker and Scanlon (2016) reported that they did not fully understand, and were not aware of, the accommodations they used for their instruction.

Given that provincial assessments collect varied information about test takers’ accommodation and disability status, the empirical data should be systematically evaluated in order to inform policy makers and stakeholders such as teachers, school administrators, and parents about assessment policies and practices in classrooms. Furthermore, results of comprehensive examinations conducted by Wehmeyer and his colleagues suggest that students’ varied characteristics substantially affected technology use (Wehmeyer et al., 2004, 2004b). They pointed out that limited expressive (oral and writing) and receptive (visual and reading) language and communication skills have significant impacts on how effectively and efficiently individuals use technology. Operating a computer system, software, or apps often requires a certain level of language and communication skills; the varied designs and features of some of these technologies may be too complex to be understood and memorized by people with cognitive challenges. Features such as different layers, overlays, or windows that benefit typically developing users may overwhelm persons with intellectual disabilities because they place more demands on cognitive load, processing speed, and working and long-term memory (Wehmeyer et al., 2004, 2004b). Moreover, it is evident that these limitations of cognitive abilities and language skills also contribute to difficulties in math and reading (e.g., Child et al., 2019; Compton et al., 2012; Fletcher, 2005; Geary & Hoard, 2005; Swanson & Jerman, 2006).

The present study set out to investigate whether there were differential accommodation effects for students with mild intellectual disabilities writing math and literacy assessments. This group was chosen because it has received little attention in accommodation research (Davies et al., 2017; Hall et al., 2012; Thompson et al., 2018). This gap suggests that more attention should be paid to this student population. The research question examined in the present study is: How likely is it that students with mild intellectual disabilities would achieve the math and literacy standards if they received bundled accommodations that included computer and/or assistive technologies compared with non-accommodated counterparts in the same disability group?

In the present study, we hypothesized that accommodated students with mild intellectual disabilities would have the same likelihood of achieving the provincial standards as their non-accommodated counterparts with special needs. Conversely, the accommodations may not be beneficial if accommodated students have a significantly lower probability of meeting the standards than their non-accommodated peers. In other words, we would conclude that a disability group benefited from accommodations if its accommodated members had a better chance of meeting the provincial standards than their non-accommodated peers in the same disability group. The evaluation conducted in the present study can help investigate the effectiveness of accommodation policies and assessment practices for a variety of computer-based accommodations. Although students may use a wide range of accommodations, this paper focuses on computer-based accommodations, especially those commonly used by students with mild intellectual disabilities.

Review of Computer-Based Accommodations

Because students may read and/or respond to learning materials or test items using a computer and assistive technology, the research on computer administration and assistive technology (e.g., speech-to-text software, text-to-speech software, a wide variety of mobile and tablet-based devices or applications) is growing exponentially.

A large body of evidence, derived primarily from intervention studies, has reported that high- and low-tech strategies or devices are beneficial for students with intellectual disabilities in completing various vocational tasks (e.g., packing, table setting) (Davies et al., 2001, 2002a, 2002b, 2003; Copeland & Hughes, 2000; Lancioni et al., 2000; Lancioni et al., 1998; Lancioni et al., 1999), developing social skills (Dattilo & Camarata, 1991; Embregts, 2002; Glaser, Rieth, & Kinzer, 1999), and improving functional daily living skills in home or community environments (e.g., cooking, cleaning, grocery shopping) (Ayres & Langone, 2002; Bouck et al., 2012; Bouck et al., 2013; Hucherson et al., 2004; Singh et al., 1995; Trask-Tyler et al., 1994).

A large body of literature on computer-assisted instruction (CAI) has produced promising results supporting the effectiveness of this pedagogy for teaching students with intellectual disabilities how to read and spell (Cullen et al., 2013; Mechling et al., 2007; Wehmeyer et al., 2004a). The effectiveness of CAI for basic math skills, such as addition and subtraction, and for word problems has also been examined, although the evidence is sparse and inconclusive (Fitzgerald et al., 2008). In addition, a more recent study on CAI was conducted to fill the gap in research on teaching science to students with intellectual disabilities and autism spectrum disorders (Smith et al., 2013). It concluded that students who received the CAI intervention were able to use science terms in the inclusive classroom. Davies et al. (2017) developed cognitively accessible multimedia software for adults and adolescents with intellectual disabilities for two assessments of functional skills: transportation readiness and money skills. Their findings indicate that participants who used the software made significantly fewer errors, needed less assistance, and required less time to complete the assessments than those writing the traditional paper-and-pencil assessments. Similarly, a group of adolescents and adults who completed a short multimedia test online needed fewer prompts than when they wrote a written test (Stock et al., 2004).

Despite the advantages of assistive technology, Wehmeyer et al. (2004a) pointed out that the use, accessibility, and effectiveness of technologies may be hindered by the nature of cognitive, language, sensory, and motor skills or abilities in individuals with intellectual disabilities, including cognitive speed and reasoning, memory, visual and auditory perception, reading, and writing skills. For instance, they indicated that the instructions, formats, drop-down menus, or features of software are often too complex to be comprehended by these users. They urged educators to consider and address these limitations when using assistive technology with students with intellectual disabilities.

Methods

Participants

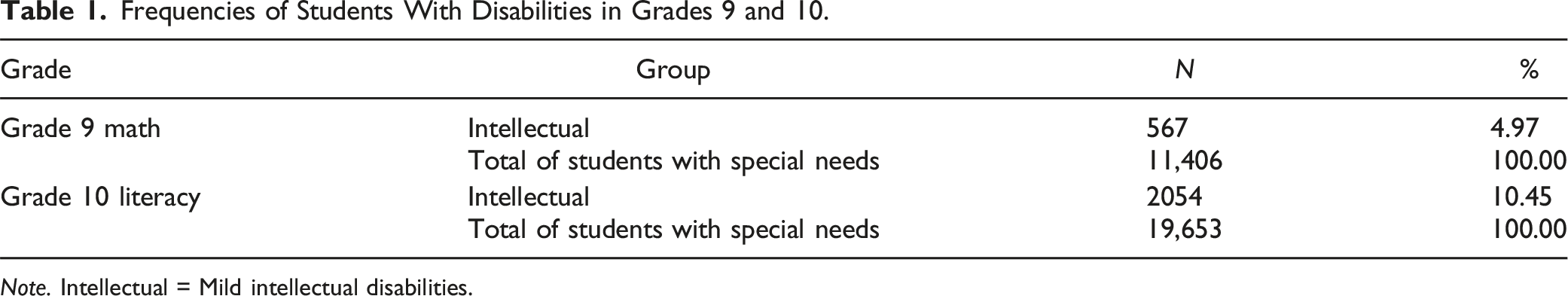

Frequencies of Students With Disabilities in Grades 9 and 10.

Note. Intellectual = Mild intellectual disabilities.

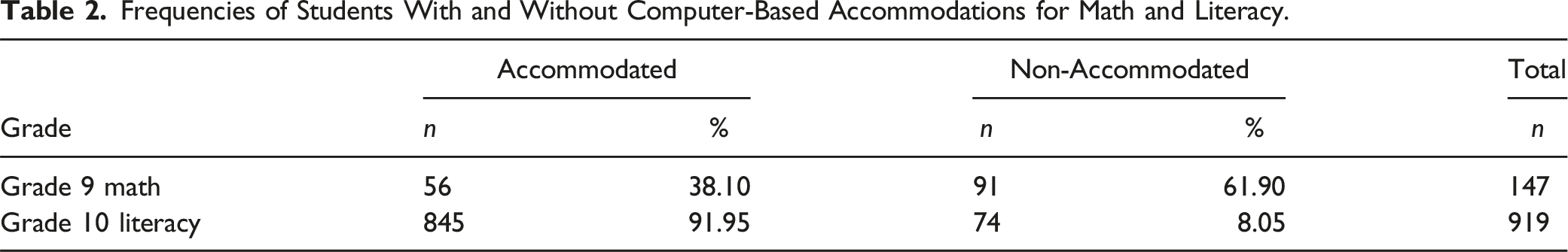

Frequencies of Students With and Without Computer-Based Accommodations for Math and Literacy.

According to the guide for educators provided by the Ontario Ministry of Education (2017), mild intellectual disabilities is a category of identified learning needs. Mild intellectual disability is: A learning disorder characterized by: (a) an ability to profit educationally within a regular class with the aid of considerable curriculum modification and support services; (b) an inability to profit educationally within a regular class because of slow intellectual development; (c) a potential for academic learning, independent social adjustment, and economic self-support. (Ontario Ministry of Education, 2017, A16)

The AAIDD (American Association on Intellectual and Developmental Disabilities) defines intellectual disabilities as “a disability characterized by significant limitations both in intellectual functioning and in adaptive behaviour as expressed in conceptual, social, and practical adaptive skills” (AAIDD, n.d.). We acknowledge that mild intellectual disabilities are associated with a spectrum of needs and levels of support in key areas identified by AAIDD (e.g., teaching and learning, home living, health and safety, social and behavioural activities). One cannot therefore assume that participants of the present study represent a homogeneous group, as their level of needs in each key area may vary on an individual basis.

We excluded examinees from our final datasets if they met any of the following criteria: (a) did not have a score in math or literacy (e.g., because they were not required to write the math or literacy assessment, or results were withheld) (Grade 9 = 1; Grade 10 = 2743); (b) did not have any information about IEP or IPRC (Grade 9 = 0; Grade 10 = 3); (c) did not have any record of accommodations (Grade 9 = 0; Grade 10 = 3); or (d) were identified as having dual or multiple exceptionalities (Grade 9 = 0; Grade 10 = 0). Consequently, we excluded 2749 test takers with intellectual disabilities in Grade 10 and one test taker with intellectual disabilities in Grade 9 from the study.

Accommodation Policy

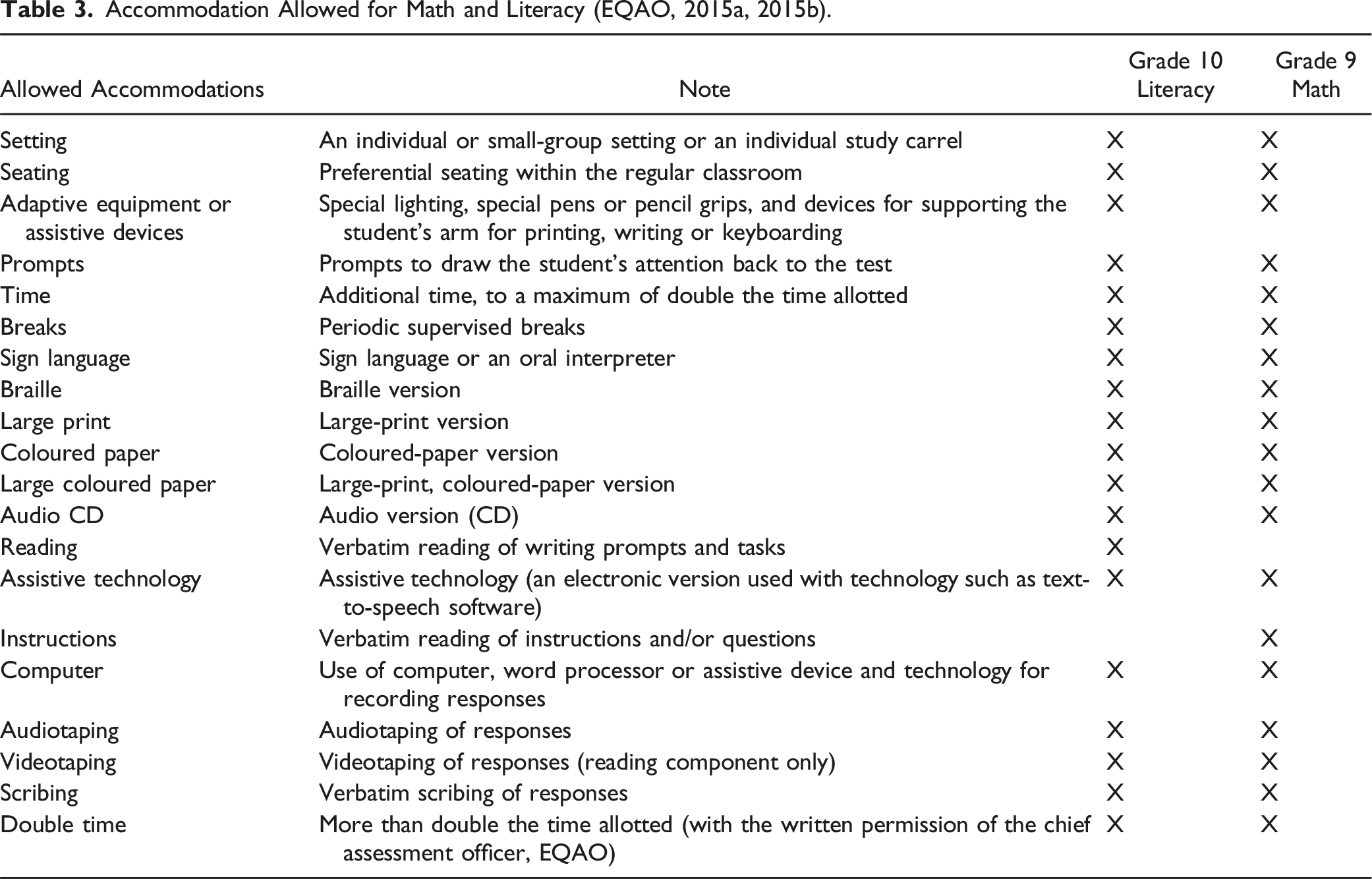

Accommodation Allowed for Math and Literacy (EQAO, 2015a, 2015b).

In this study, the computer-based accommodations are those allowed for Ontario’s paper-and-pencil assessments, and not for other tests given on a computer platform.

Measures

Students in the Grade 9 academic and applied math courses participated in the provincial math test. The math assessment for students in Grade 9 academic math was developed by the EQAO to assess the knowledge and skills across four math strands in The Ontario Curriculum, Grades 9 and 10: Mathematics (Ontario Ministry of Education, 2005): (1) number sense and algebra, (2) linear relations, (3) analytic geometry, and (4) measurement and geometry. Students in Grade 9 applied math were assessed across three math strands: (1) number sense and algebra, (2) linear relations, and (3) measurement and geometry. The assessment for students in academic and applied math consists of 24 multiple-choice items and 7 constructed-response items.

The OSSLT was developed by the EQAO to assess Grade 10 students’ literacy skills in relation to Ontario’s reading and writing curriculum expectations in The Ontario Curriculum across all subjects to the end of Grade 9 (EQAO, 2007, 2014). The reading component of the OSSLT measures students’ understanding of explicitly and implicitly stated information and ideas, as well as their ability to make connections between their understanding of the text and personal knowledge and experience. In the writing component of this assessment, students were asked to demonstrate their ability to communicate and organize main ideas and information clearly and coherently, and to develop their ideas with sufficient supporting details while following English writing conventions so that errors did not distract from clear communication (EQAO, 2007, 2014). The OSSLT includes 39 multiple-choice items, 4 constructed-response items, and 8 short- and long-writing tasks.

Since 1996, the EQAO has used a number of quality assurance measures, monitored throughout the entire process of item and test development, field testing, test administration, scoring, equating, and reporting, to ensure that annual test results are reliable, valid, and comparable from year to year (EQAO, 2014). The EQAO also employed a variety of statistical analysis techniques, such as the three-parameter logistic (3PL) model of Item Response Theory for multiple-choice items and Classical Test Theory, to ensure that the level of item difficulty of each assessment was consistent from year to year. Moreover, the EQAO reported that Cronbach’s alpha coefficients ranged from 0.82 to 0.84 for applied math, 0.86 to 0.87 for academic math, and 0.90 for literacy (EQAO, 2014). An arm’s-length agency, the Office of the Auditor General of Ontario, concluded that the EQAO had employed adequate assessment processes and procedures to ensure that each assessment accurately reflected students’ academic achievements in relation to Ontario’s curriculum expectations (Office of the Auditor General of Ontario, 2009).

Data Analysis

Due to the number of students with mild intellectual disabilities in a given student population, sample sizes for each bundled package are often very small and zero cells may occur in an accommodated (treatment) or non-accommodated (control) group. For example, among the entire Ontario student population, only one student with intellectual disabilities had a scribe, extended time, assistive technology and on-task prompts, and 74 students with intellectual challenges did not receive any accommodation for literacy. Note that students receiving only one accommodation were not excluded from the data analyses. As one can see, the accommodation data was sparse and did not allow us to control for various teacher- or student-level factors in statistical analyses.

To address the limitation of sparse accommodation data, the present study employed odds ratio (OR) analysis (Tabachnick & Fidell, 2012) to examine the relationships between multiple types of accommodations and the math and literacy outcomes (whether or not examinees achieved the provincial standards of levels 3 and 4 in the Grade 9 math assessment and whether or not examinees passed the Grade 10 literacy assessment). An estimate of the odds ratio is a common indicator of effect size that measures the magnitude of group differences instead of relying on significance test results only (Grimes & Schulz, 2008; Szumilas, 2010). Moreover, odds ratio analysis can handle very low expected frequencies in a contingency table (Fienberg, 2007). Lin and Lin (2016) suggest that this method is particularly appropriate for examining small samples of accommodated and non-accommodated students when the adjustment method of Diamond et al. (2007) is adopted to overcome the zero-cell problem in odds ratio analyses. An odds ratio larger than 1 indicates that the odds of achieving the math or literacy standards were higher for the accommodated group than for the non-accommodated group.
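As a rough illustration of the computation described above, the following Python sketch calculates an odds ratio from a 2 × 2 table of accommodation status by outcome. All counts are hypothetical (only the total of 74 non-accommodated literacy examinees comes from the text; their pass/fail split is invented). The zero-cell handling shown here, adding 0.5 to every cell, is an assumption used for illustration and is not necessarily the adjustment of Diamond et al. (2007) adopted in this study.

```python
def odds_ratio(acc_pass, acc_fail, non_pass, non_fail, correction=0.5):
    """Odds ratio for accommodated vs. non-accommodated examinees.

    If any cell is zero, `correction` is added to all four cells.
    This continuity correction is an illustrative assumption, not
    necessarily the adjustment used in the study.
    """
    cells = [acc_pass, acc_fail, non_pass, non_fail]
    if any(count == 0 for count in cells):
        cells = [count + correction for count in cells]
    a, b, c, d = cells
    # Odds of meeting the standard in each group, then their ratio.
    return (a / b) / (c / d)


# Hypothetical package: 12 accommodated examinees passed, 4 did not;
# hypothetical reference group: 30 of 74 non-accommodated examinees passed.
or_regular = odds_ratio(12, 4, 30, 44)

# Hypothetical package with a zero cell: all 5 accommodated examinees passed.
or_zero_cell = odds_ratio(5, 0, 30, 44)

print(f"OR without zero cells: {or_regular:.2f}")
print(f"OR with zero-cell correction: {or_zero_cell:.2f}")
```

A value above 1 in either case would be read as higher odds of meeting the standard for the accommodated group, subject to the width of the corresponding confidence interval.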

The processes of data management and odds ratio analyses were performed separately for the math and literacy datasets by coding each bundled accommodation package in SPSS 22.0 (IBM Corp., 2013). The codes created in this study are mutually exclusive; in other words, no examinee was assigned to more than one package. For instance, “extended time” was coded as one package and “extended time and scribing” was coded as another. Counting packages with single or multiple accommodations, a total of 85 packages for Grade 9 math and 312 packages for Grade 10 literacy were offered to students with mild intellectual disabilities. These packages may or may not include computer and/or assistive technology. Of these packages, we focused our analysis on those involving computer and/or assistive technology in order to address the purpose of the present study (20 and 197 packages for math and literacy, respectively). We also conducted additional odds ratio analyses to compare the packages with and without computer and/or assistive technology.
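The mutually exclusive coding scheme can be sketched as follows. This is a minimal Python illustration, not the SPSS procedure used in the study; the examinee records, the label format, and the helper names `package_code` and `involves_technology` are all hypothetical.

```python
from collections import Counter

# Accommodations treated as computer-based in this sketch (assumed labels).
TECHNOLOGY = {"computer", "assistive technology"}


def package_code(accommodations):
    """Return one canonical label for an examinee's full set of accommodations.

    Sorting makes the label independent of recording order, so each
    examinee maps to exactly one package.
    """
    return " + ".join(sorted(set(accommodations))) or "none"


def involves_technology(accommodations):
    """True if the bundle includes a computer and/or assistive technology."""
    return bool(TECHNOLOGY & set(accommodations))


# Hypothetical examinee records.
records = [
    ["extended time"],
    ["scribing", "extended time"],
    ["extended time", "scribing"],
    ["extended time", "assistive technology", "scribing"],
    [],
]

package_counts = Counter(package_code(r) for r in records)
technology_packages = {package_code(r) for r in records if involves_technology(r)}

for code, n in package_counts.items():
    print(f"{code}: {n} examinee(s)")
```

Here "extended time" and "extended time + scribing" are counted as different packages, mirroring the example in the text, and the second and third records fall into the same package despite their different ordering.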

Among students with mild intellectual disabilities receiving accommodations, 96% of them received multiple accommodations for math (e.g., extended time, setting, prompts) and 99% of them received multiple accommodations for literacy. In other words, approximately 4% of them received one accommodation for math (e.g., setting); 1% received a single accommodation for literacy. Results suggest that a majority of accommodated students received more than one accommodation for math and literacy.

Results

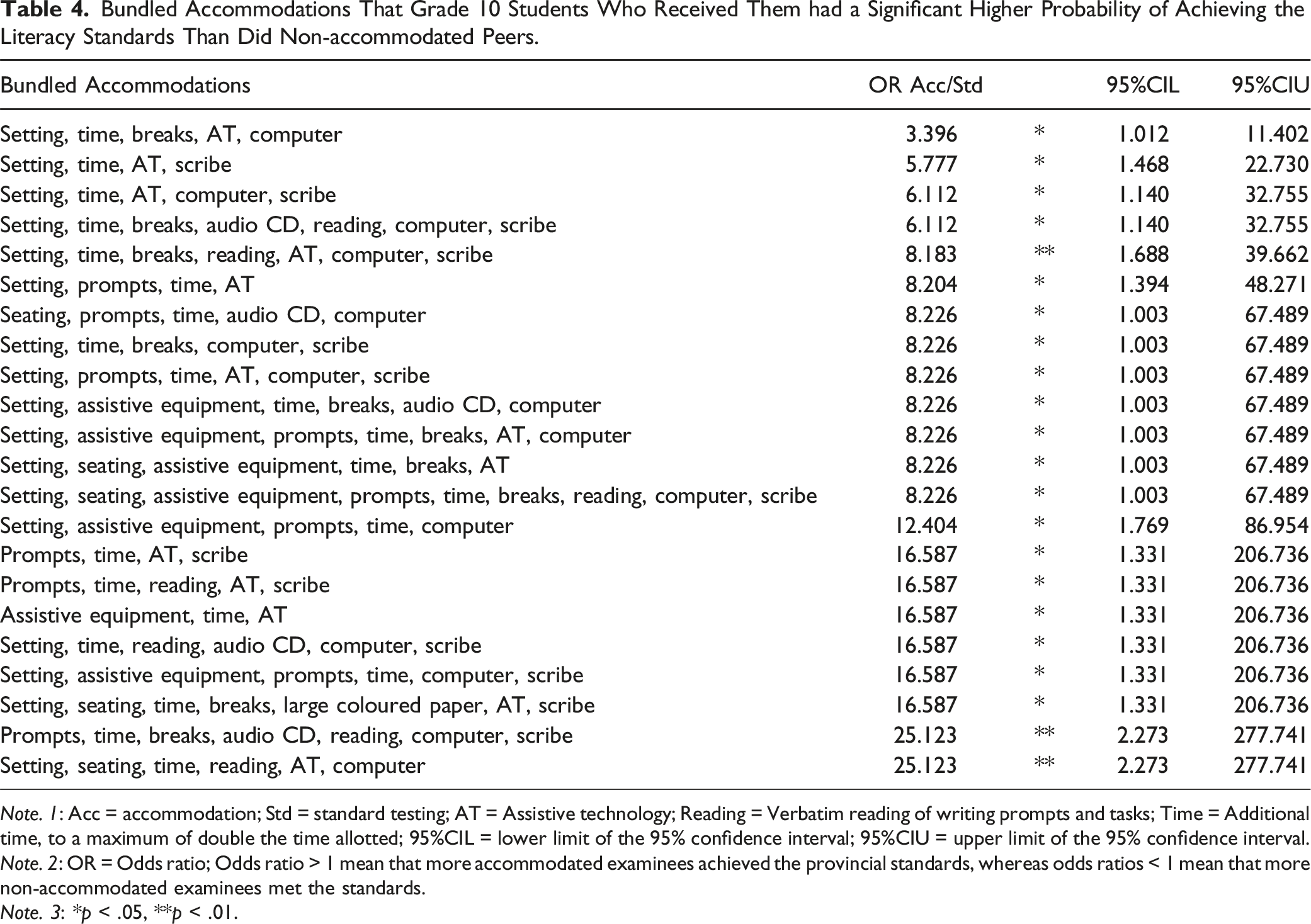

Bundled Accommodations for Which Grade 10 Recipients Had a Significantly Higher Probability of Achieving the Literacy Standards Than Did Non-Accommodated Peers.

Note. 1: Acc = accommodation; Std = standard testing; AT = Assistive technology; Reading = Verbatim reading of writing prompts and tasks; Time = Additional time, to a maximum of double the time allotted; 95%CIL = lower limit of the 95% confidence interval; 95%CIU = upper limit of the 95% confidence interval.

Note. 2: OR = Odds ratio; odds ratios > 1 mean that more accommodated examinees achieved the provincial standards, whereas odds ratios < 1 mean that more non-accommodated examinees met the standards.

Note. 3: *p < .05, **p < .01.

Interestingly, our results showed that the packages, whether they yielded significant or non-significant results, involved computer and/or assistive technology. One may therefore question whether students with mild intellectual disabilities actually benefited from the use of computers and/or assistive technology. To examine this question, we further compared the packages that yielded positive results (e.g., prompts, extended time, verbatim reading of writing prompts and tasks, assistive technology, and scribe) with comparable packages that also produced positive results but did not include computer and/or assistive technology (e.g., prompts, extended time, verbatim reading of writing prompts and tasks, and scribe). Note that our real-world data did not allow us to perform all possible comparisons because certain packages did not exist in the dataset. For example, we were able to compare the package of seating, prompts, and extended time with the package of seating, prompts, extended time, audio CD, and computer. However, we could not compare the latter with the package of seating, prompts, extended time, and audio CD because that package was not assigned to any students for literacy. We analyzed the available literacy data to test whether students who received packages with computer and/or assistive technology had a higher likelihood of passing the literacy test than those who received the comparison packages. Our results support this notion. For example, test takers who used the package of prompts, extended time, verbatim reading of writing prompts and tasks, assistive technology, and scribe were about 17 times more likely to pass the literacy test than their non-accommodated peers, whereas examinees who received the comparison package of prompts, extended time, verbatim reading of writing prompts and tasks, and scribe were about 4.5 times more likely to pass than their non-accommodated peers. As another example, examinees who were offered the package of setting, extended time, assistive technology, and scribe were about 5.7 times more likely to pass the literacy test than their non-accommodated peers, while those using the package of setting, extended time, and scribe were about 3.1 times more likely to pass than their non-accommodated counterparts.
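One way to read these paired figures side by side is to divide the two odds ratios. Because both packages in a pair are compared against the same non-accommodated group, the quotient approximates the odds of passing with the technology package relative to the comparison package. The Python sketch below uses the rounded values reported in the text; it ignores sampling uncertainty and any zero-cell adjustment, and it is not necessarily the additional analysis conducted in the study.

```python
def relative_odds(or_with_technology, or_without_technology):
    """Approximate odds ratio between two packages that share a reference group."""
    return or_with_technology / or_without_technology


# Rounded odds ratios reported in the text (technology package, comparison package).
reported_pairs = {
    "prompts, time, reading, scribe (with vs. without AT)": (17.0, 4.5),
    "setting, time, scribe (with vs. without AT)": (5.7, 3.1),
}

for label, (with_at, without_at) in reported_pairs.items():
    ratio = relative_odds(with_at, without_at)
    print(f"{label}: about {ratio:.1f} times the odds of the comparison package")
```

Quotients above 1 point in the same direction as the comparison described above, although without confidence intervals they say nothing about statistical significance.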

Overall, these results suggest that examinees with mild intellectual disabilities who received particular bundled accommodations involving computer and/or assistive technology had a better chance, relative to their non-accommodated counterparts, of meeting the standards for literacy than for math. It is worth noting that some packages showing significantly positive effects have wide 95% confidence intervals for the odds ratio (Table 4). This is likely because of the unbalanced sample sizes in those packages, so the effect sizes of those packages need to be interpreted with caution.
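The link between small or unbalanced groups and wide intervals can be illustrated with a standard Wald interval on the log odds ratio. The Python sketch below is a generic textbook calculation under hypothetical counts; the study's own interval method may differ. Both tables are constructed to give the same odds ratio, so only the group size changes.

```python
import math


def wald_ci(acc_pass, acc_fail, non_pass, non_fail, z=1.96):
    """Odds ratio with an approximate 95% Wald confidence interval.

    Assumes all four cells are non-zero; no zero-cell adjustment is applied.
    """
    odds_ratio = (acc_pass * non_fail) / (acc_fail * non_pass)
    # Standard error of the log odds ratio grows as any cell count shrinks.
    se_log_or = math.sqrt(1 / acc_pass + 1 / acc_fail + 1 / non_pass + 1 / non_fail)
    lower = math.exp(math.log(odds_ratio) - z * se_log_or)
    upper = math.exp(math.log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper


# Hypothetical counts: same odds ratio, different accommodated group sizes.
larger_group = wald_ci(12, 4, 30, 44)   # 16 accommodated examinees
smaller_group = wald_ci(3, 1, 30, 44)   # 4 accommodated examinees

for label, (or_, low, high) in (("n = 16", larger_group), ("n = 4", smaller_group)):
    print(f"{label}: OR = {or_:.2f}, 95% CI [{low:.2f}, {high:.2f}]")
```

With the smaller accommodated group the interval is much wider even though the point estimate is unchanged, which is why such effect sizes call for cautious interpretation.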

Discussion

The present study was designed to examine the effects of a variety of computer-based accommodations on the math and literacy performances of students with mild intellectual disabilities who participated in provincial assessments (20 and 197 packages for math and literacy, respectively). The results of our investigation show that accommodated students with mild intellectual disabilities receiving 22 bundled accommodation packages outperformed their non-accommodated counterparts in the same group on the literacy test, though none of the differences were statistically significant for the math results (Table 4). Furthermore, we compared these 22 packages with existing comparable bundles that did not include computer or assistive technology to test whether students benefited from the use of computers and/or assistive technology for literacy. Results indicated that test takers had a higher chance of passing the literacy test if they received packages with computer and/or assistive technology than did their counterparts who received comparable packages without such accommodations. To our knowledge, these findings have not been reported in the existing literature, and they underscore the importance of examining common special education assessment practices that involve computers and/or assistive technology.

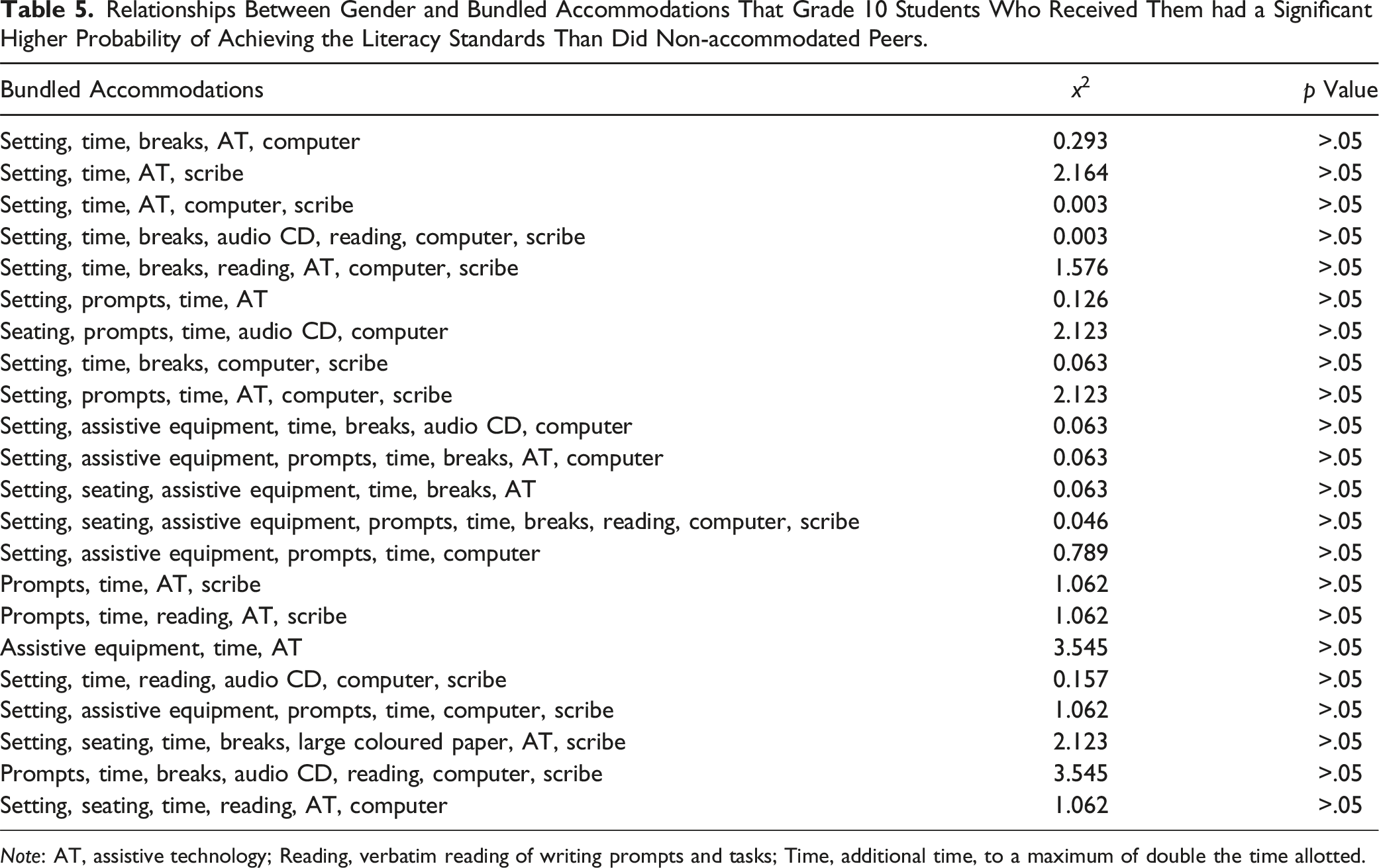

The results also show that students’ test performances and the types of bundled accommodations received for literacy are not significantly related to gender, which is the only construct-irrelevant indicator in the dataset (Table 5). This finding suggests that teachers did not assign accommodations based on a student’s gender. In addition, gender does not have a detrimental effect on students’ literacy outcomes.

One of the most notable findings is that we did not find a significant difference between accommodated and non-accommodated students with mild intellectual disabilities for math. Although previous studies found CAI beneficial in improving basic math computational skills or word problem-solving strategies for students with intellectual disabilities (Bouck et al., 2009; Hasselbring & Moore, 1996), this study revealed that secondary students did not benefit from computer-based accommodations for math. Due to the lack of empirical evidence on multiple accommodations in the existing literature, we discuss our findings by referring to the existing literature on CAI, while keeping in mind that CAI may not cover the use of other accommodations that are not computer-related. In addition, previous studies were mostly conducted on CAI for elementary children rather than on accommodations for high school students, as in the present study. Moreover, CAI research on math skills is still limited and inconclusive due to great variations in participants’ characteristics and the purposes of CAI in each study (Fitzgerald et al., 2008; Weng et al., 2014; Weng & Bouck, 2016; Snyder & Huber, 2019); future research on computer-based accommodations and the math achievement of secondary students is therefore recommended.

Although the nature of the disability may affect the use of technology for math and reading assessments (Wehmeyer et al., 2004, 2004b), accommodations such as assistive technology (e.g., speech-to-text software) and a computer or word processor for audio recording or verbatim scribing of responses have been classified as direct linguistic accommodations (Abedi et al., 2004; Rivera & Collum, 2006; Thurlow & Kopriva, 2015; Willner et al., 2008). Direct linguistic accommodations, which do not pose threats to the valid interpretation of test results, are provided to address the language aspects of a test for a student who needs language support (e.g., allowing students to respond orally, scribing or tape-recording responses). Results of the present study suggest that direct linguistic accommodations involving computers and assistive technology may increase access to test content for literacy. Although these accommodations may also enhance access to math test content, test takers still need to acquire grade-level math skills. The student’s use of the tool did not compromise what the math items were supposed to measure. Thus, our results may be attributable, in part, to the fact that these accommodations were merely a tool with which students could respond to math items. In other words, the accommodations did not help boost students’ math performance.

Of the 197 bundled packages examined for literacy, 22 produced significantly positive results and none yielded significantly negative results (Table 4). This corroborates the findings of a great deal of previous work on the use of CAI to help students with intellectual disabilities develop literacy skills (Cullen et al., 2013; Mechling et al., 2007; Wehmeyer et al., 2004a). However, those findings from previous studies cannot be extrapolated to the 175 accommodation packages that did not produce significant results in the present study. Moreover, our results raise an important question rarely tackled in the literature: How equitable were the accommodation policies and practices for non-accommodated students? It is unclear whether non-accommodated students would have benefited from the packages that were found to be effective in the present study (Lin & Lin, 2016).

Although not all bundled packages involving computer and/or assistive technology produced significantly positive or negative results for literacy, further analysis of the data suggests that computer-based accommodations tended to enhance the positive effects of bundled packages. For example, examinees who used the package of setting, extended time, assistive technology, and scribe were about 5.7 times more likely to pass the literacy test than their non-accommodated counterparts, while test takers who received the comparison package of setting, extended time, and scribe were about 3.1 times more likely to pass than their non-accommodated peers (see Results section). As such, these findings indicate that computer-based accommodations may amplify the effects of the comparison package on the literacy test for students with mild intellectual disabilities. As studies on multiple accommodations are still sparse (Fletcher et al., 2009; Kettler, 2012), these findings call for further investigations of single and multiple combinations of accommodations in future research.

Relationships Between Gender and Bundled Accommodations for Which Grade 10 Recipients Had a Significantly Higher Probability of Achieving the Literacy Standards Than Did Non-Accommodated Peers.

Note: AT, assistive technology; Reading, verbatim reading of writing prompts and tasks; Time, additional time, to a maximum of double the time allotted.

Conclusions, Implications, and Limitations

The current study was undertaken to evaluate the effectiveness of varied computer-based accommodation packages used by a group of Ontario students with mild intellectual disabilities who were participating in provincial math and literacy assessments. A total of 217 bundled accommodation packages for students with mild intellectual disabilities were examined in the present study. This study has shown that some bundled accommodations involving computers and/or assistive technologies produced significant differential effects on literacy for these students. More specifically, we found that students with mild intellectual disabilities using 22 bundled packages had a substantially higher chance of meeting the literacy standards (Table 4). A further notable finding to emerge from this study is that bundled accommodations involving computer and/or assistive technologies were more beneficial for literacy than for math among accommodated students with mild intellectual disabilities.

The present study examined large-scale population-based data to evaluate multiple combinations of accommodations. It may be unethical to randomly assign students with intellectual disabilities to an accommodation group because decisions on accommodations should be made at the local level, and it is difficult to conduct experimental or quasi-experimental studies on a variety of accommodations for one or more groups of students with specific disabilities (Sireci et al., 2005) to examine differential accommodation effects. Although it is not possible to describe or control for each student’s particular characteristics or to evaluate schools’ or teachers’ decision-making processes or classroom assessment practices in this large-scale population-based study, our results may serve as a filtering tool to pinpoint the accommodations that should be reviewed and re-evaluated by stakeholders, such as general classroom and special education teachers, school administrators, and policy makers. It is recommended that further research be conducted to investigate the decision-making processes occurring at the local classroom and school levels. Moreover, future studies investigating teachers’ accommodation practices will be important for gaining a better understanding of the relationship between accommodations for instruction and assessment, and of how they relate to students’ academic achievements and special needs.

Although the Ontario literacy assessment examined in this paper consists of reading and writing components, further studies with more focus on the writing component are suggested. In future investigations, researchers should explore whether, and to what extent, the bundled packages would benefit students on the writing component of the literacy assessment. In addition, the computer-based bundled packages examined in this study are those used only for Ontario’s paper-and-pencil assessments, which differ from tests given on computer platforms. The findings of the present study should therefore be interpreted and used with caution.

Researchers have argued that the differential effects of multiple combinations of accommodations should be evaluated individually (Elliott et al., 2001; Fletcher et al., 2006; Fuchs et al., 2005). As such, the present study offers at least two advantages for education policy, practices, and research applications. First, the thorough examination undertaken in this study can inform policy makers and stakeholders about the effectiveness and fairness of accommodation policies and practices for students with mild intellectual disabilities in the general classroom (Lin & Lin, 2016). Second, the empirical data reported in the present study can enhance teacher judgments of accommodations for math and literacy and help educators formulate data-driven accommodation decisions for students with mild intellectual disabilities (Fuchs & Fuchs, 2001). We recommend that educators and policy makers further re-evaluate both the significant and non-significant packages for this student population in the assessments of interest. In particular, we caution that educators and policy makers should take all factors into account, including individual learning needs, effectiveness, appropriateness, and test validity, when using the bundled packages that showed statistically positive effects and when making important decisions on accommodations for students with mild intellectual disabilities.

Footnotes

Acknowledgements

I am very grateful to the Education Quality and Accountability Office (EQAO) in Ontario, Canada, for permission to use the data.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Social Sciences and Humanities Research Council of Canada.