Abstract

This study examines interactions between students with atypical motor and speech abilities, their teachers, and eye tracking devices under varying conditions typical of educational settings (e.g., interactional style, teacher familiarity). Twelve children (aged 4–12 years) participated in teacher-guided sessions with eye tracking software designed to develop augmentative and alternative communication (AAC) skills. Assessments of expressive communication skills before and after the testing period demonstrated significant improvements. A total of 164 sessions conducted over a 3-month period were analyzed for positive engagement (e.g., gaze direction, session time) and system effectiveness (e.g., lag time, gaze registration) between integrated and non-integrated systems. Findings showed that integrated systems were associated with significantly longer sessions, more time spent looking at the screen, a greater proportion of gaze targets registered by the system, and a higher response rate to prompts from teachers. We discuss the implications for the facilitated use of eye tracking devices in special education classrooms.

Children typically acquire speech skills that enable them to express and communicate their needs and interact socially with their surroundings. However, children with multiple disabilities such as language, motor, and other impairments have limited opportunities for communication and engaging in everyday activities (Light, 1997). Augmentative and alternative communication (AAC) is used to enhance or establish social and communicative behavior for individuals with significant communication challenges. Broadly, AAC encompasses any method (device, system, or technique) that helps a person communicate more effectively and can range from low-technology forms (e.g., Picture Exchange Communication System [PECS], manual signs, books) to high-technology forms that provide written or spoken output (Gilroy et al., 2017). Traditional AAC communication boards have pictorial representations of language concepts, which allow individuals to convey intents through eye gaze, nose pointing, or hand gestures, depending on preference and ability. Advances in eye tracking technologies offer individuals with severe motor and speech impairments opportunities to interact with software that supports communication. For example, children with Rett syndrome (a neurodevelopmental disorder caused by the mutation of the X-linked MECP2 gene) often have difficulty speaking and using their hands for sign language, a computer mouse, or traditional touch screen interfaces. This is partly due to difficulty in planning and coordinating motor movement (i.e., apraxia; Cass et al., 2003; Djukic & Valicenti McDermott, 2012). An emerging body of research suggests that eye tracking assistive technologies show promise for improving communication skills for those who do have the ability to control their eye movements (Ahonniska-Assa et al., 2018; Amantis et al., 2011; Borgestig et al., 2015; Gillespie-Smith & Fletcher-Watson, 2014; Sigafoos et al., 2011; Townend et al., 2016).
However, there is very little research investigating communicative interactions with eye tracking technology within neurodiverse populations in educational settings (Karlsson et al., 2017). This study serves to fill this gap by examining interactions between students with severe motor and speech impairments, their teachers, and eye tracking devices under varying conditions typical of educational settings (e.g., interactional style, teacher familiarity).

Literature Review

High-Tech AAC and Eye Gaze Control Technology

Among the latest developments, high-tech AACs such as tablets, speech-generating devices, and mobile devices (Still et al., 2014) are increasingly studied as an intervention strategy in support of individuals with complex communication needs, such as those with Rett syndrome, cerebral palsy, or seizure disorder (Gilroy et al., 2017; McEwen et al., 2020; Nepo et al., 2015; Rispoli et al., 2010). While some technologies, like switch access scanning, afford the user the ability to independently select letters or words using a wrist or hand-controlled input device to move a cursor on a screen, knowledge of the alphabet and the ability to read are requirements. Conversely, dynamic display technologies use images instead of words and are particularly effective for populations who can control finger or hand movements and have lower or no literacy skills. In a recent systematic review conducted by Gilroy and colleagues (2017) investigating the use of high-tech AAC (i.e., speech-generating devices with a touchscreen interface) in adults and children across a range of clinical populations, 56 studies were coded according to how they aligned with the training components of the PECS protocol. They found that the majority (93%) of the studies included aspects of requesting, with few studies (10%) addressing more complex issues of social communication (i.e., targeted labeling and naming). The review found support for high-tech AAC to be pursued as a replacement for more traditional, low-tech approaches. The authors further noted the need for established guidelines for the use of assistive devices, from beginning communicators to more advanced social learners.

However, while the previously described high-tech AAC can be effective for those who can isolate finger and hand movement, for others, eye gaze is the only movement that they can control voluntarily. A number of studies have examined the use of eye gaze control technology as an assistive technology. In a longitudinal study, Borgestig and colleagues (2015) examined the eye gaze performance of ten children (with severe physical impairments) before and after a daily gaze-based assistive technology intervention over 20 months. Results indicated improvements in performance (i.e., time on task and accuracy with eye selection of targets on screen) but suggested the need for practice to acquire faster eye gaze performance (Borgestig et al., 2015). Others have investigated eye tracking technology for assessing the cognitive function of children with Rett syndrome (Ahonniska-Assa et al., 2018; Baptista et al., 2006), and as a means for delivering music therapy interventions that motivate them to communicate and to learn (Elefant & Wigram, 2005; Lariviere, 2014).

Another recent systematic review focused on the use of eye gaze control technology for facilitating communication across different social contexts for children, adolescents, and adults with complex communication needs (Karlsson et al., 2017). Karlsson and colleagues identified two eligible studies for discussion out of a pool of 756 articles, highlighting the need for future research to guide the assessment for optimal configurations of hardware and software technology, training of users, and their communication partners for people with significant disability.

With the potential for eye gaze control technology to make a substantial impact on the lives of people with complex communication needs, there is a considerable need to better understand how to design and use eye gaze control technology for people with significant disability, as well as how to train users and their communication partners in its use.

Eye Tracking Technology for Communication in Special Education Settings

In selecting eye gaze technology for classroom communication, one consideration is the system type. Integrated systems (i.e., manufacturer combined infrared eye control device, touch screen, and computer to work as one unit) offer ease of use, while non-integrated eye tracking devices (i.e., individual components acquired and assembled by the user to perform as a system) offer flexibility for how the resource may be configured (e.g., choice of computer, brand of eye control device, etc.). A key consideration when selecting a system type is the efficacy of the device, and in this regard, the ability of the system to accurately register and process the eyes of the user is paramount. In a study specifically investigating the accuracy of a non-integrated eye tracking device, researchers found the non-integrated eye tracking device to be comparable to a commercial video-based device (Mantiuk et al., 2012). In a study evaluating the reliability between a fixed-display system and a portable head-mounted eye tracking device with an infrared sensor, researchers found comparable, modest calibration changes across trials between the two systems (Hong et al., 2005). However, neither of these investigations could be generalized to a classroom setting where it is more difficult to control the environment and where equipment is moved during the course of the week for cleaning and maintenance.

Another factor is the cost of system types. In publicly funded educational settings, cost is a key concern due to limited budgets. Therefore, there is an economic driver to find lower-cost alternatives. In an earlier study, researchers compared a low-cost device to a well-established eye-tracker in different experimental setups (Ooms et al., 2015). The researchers found that obtaining acceptable results with the low-cost device was possible but that a correct setup and the selection of software for recording and processing the data were key mediating factors. A number of lower-cost systems have also been developed, such as those using web camera technology and portable head-mounted eye tracking devices (Hong et al., 2005; Mantiuk et al., 2012). These studies indicate that while a variety of eye tracking systems can produce comparable results, the configuration and setup may impact outcomes. In this study, we explore how eye tracking systems can affect user interactions in a naturalistic special education setting.

This paper is situated within a larger study that explored a number of characteristics typical of technology-mediated communication in educational settings, which relate to key issues around the system type, verbal interaction style, and familiarity of the communication partner (McEwen et al., 2020). This paper focuses on the relationship between eye tracking system types and user interactions in an eye gaze technology-mediated communication activity. Drawing from Luhmann’s theory of communication (Luhmann, 1992), as expanded by McEwen et al. (2020), we framed communicative interaction as a triad (student-teacher-technology) rather than the traditional dyad (student-teacher). As such, we considered all three participants as sources of data and units for analysis. We used session time as an indicator of positive engagement (Janssen et al., 2012), highlighting sustained communication as a key indicator of communicative interaction success. Gaze direction towards the screen offers another measure of visual attention and on-task behavior (Frutos-Pascual & Garcia-Zapirain, 2015), with responses to partner cues as indicators of the quality of teacher-student interaction.

Research Questions

We posed the following questions: What are the effects of facilitated sessions with eye tracking software on the communication skills of learners with complex communication needs? (RQ1) What is the effectiveness of integrated and non-integrated eye tracking system types in special education classrooms? (RQ2) How do eye tracking system types influence interactions between eye tracking devices and learners with complex communication needs? (RQ3)

Method

Participants

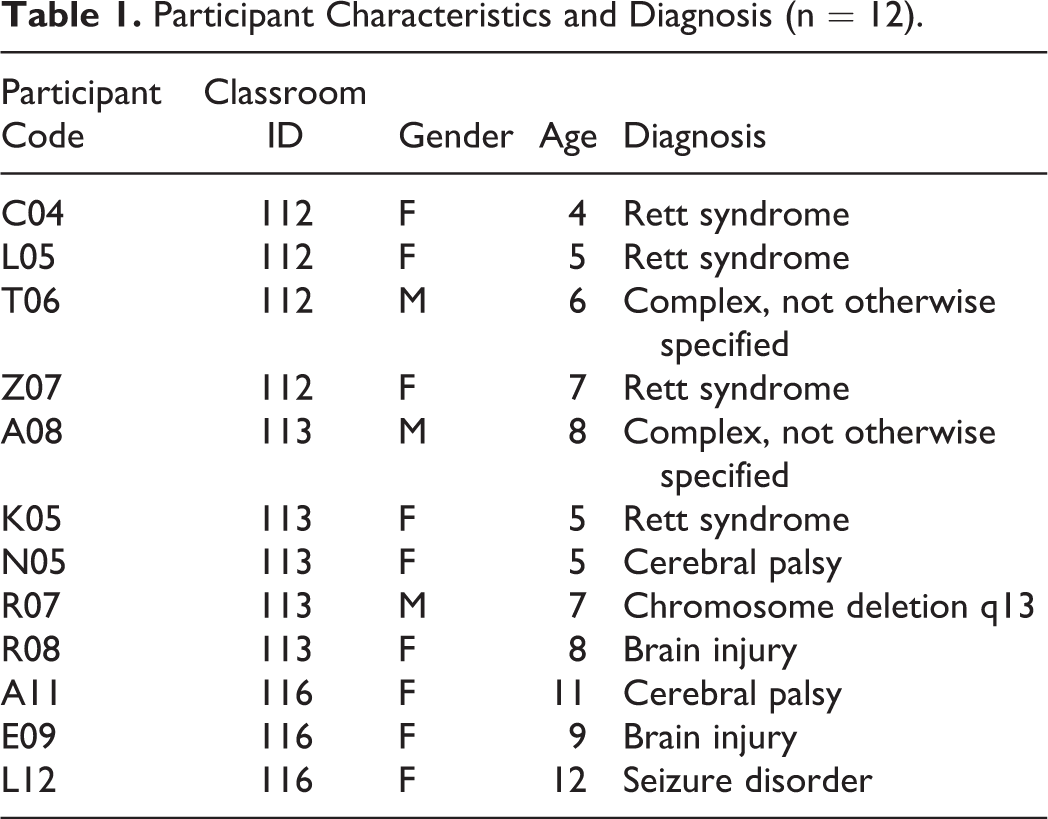

The study followed 12 children with complex communication disabilities, aged 4 to 12 years (Table 1). Participants were selected from three classrooms with the following inclusion criteria: 1) limited fine motor control (i.e., have difficulty operating a mouse or touch screen easily), 2) limited speech (i.e., little to no spoken language), and 3) corrected-to-normal vision. Prior to the study, most of the children had little to no experience with eye tracking technology. Two additional participants from the same set of classrooms (who fit within the reported age range and inclusion criteria) completed the pre- and post-intervention communication skills assessments but did not take part in the eye tracking sessions.

Table 1. Participant Characteristics and Diagnosis (n = 12).

Research Setting

This study took place in a public urban elementary school in Toronto, Canada, that delivers educational services to students with developmental and/or physical disabilities. Data collection was conducted by teachers, in the children’s own classrooms, in familiar surroundings during scheduled learning times so as to minimize the impact on the classroom. Session times were designed to fit the natural schedule of the classroom over a 3-month period.

Materials and Procedure

Pre/post communication skills assessment

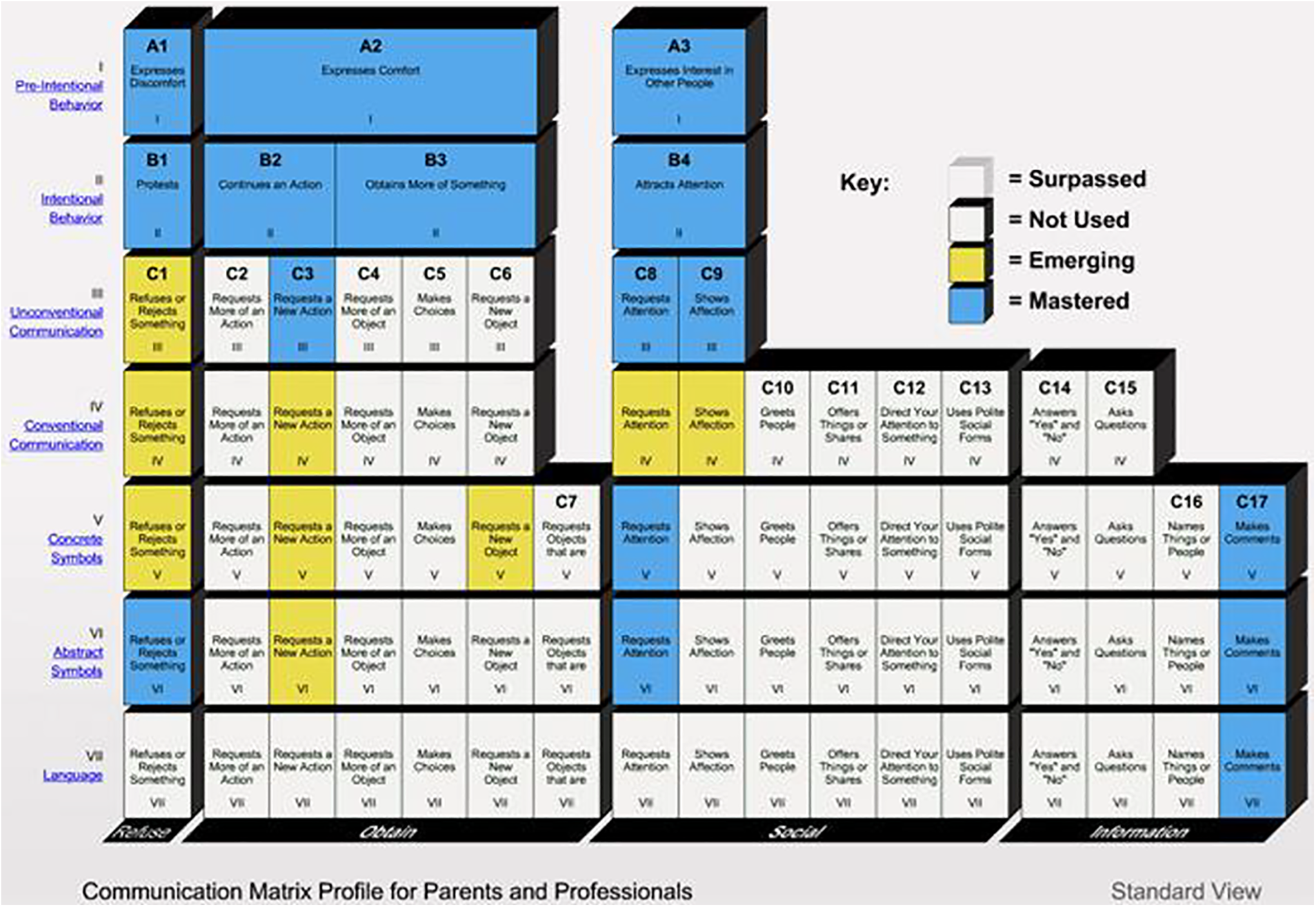

The Communication Matrix (Rowland, 2011) is a research-based, online communication assessment tool specifically developed for emergent communicators and those who use alternative communication systems (https://communicationmatrix.org/). The Matrix is organized into four major categories based on reasons to communicate: refusing things that we do not want, obtaining things that we do want, engaging in social interaction, and providing or seeking information. Across the four major reasons are 24 more specific messages or communicative intents, which correspond to the questions that users must answer to complete the Matrix. The Matrix is further organized into seven levels of communicative behavior, ranging from Level 1, “pre-intentional behavior” that reflects a general state (e.g., how a baby shows sleepiness or discomfort), to Level 7, using language (see Figure 1).

Figure 1. Communication Matrix chart.

Using the online tool, each child’s classroom teacher completed an assessment once at the start and again at the end of the study (referred to as the pre- and post-intervention Communication Matrix assessments in the remainder of the paper). The instrument was used to monitor communication development and determine if there were any improvements during the study period.

Eye tracking devices and software

The Tobii-Dynavox eye-tracker system was used to collect data from software designed to assist the children in facial recognition, scanning, targeting, and task identification. Our testing consisted of using 1) an integrated system, the Tobii I-12 (i.e., computer, eye-tracker, speakers as one device; Tobii Dynavox Ltd.), and 2) a non-integrated system, the portable PCEyeGo camera (Tobii Dynavox Ltd.) with a PC laptop computer. With both systems, we used the Sono Primo software (version 1, Tobii Dynavox Ltd.), which included eye tracking software for developing AAC skills. In the software, learners looked around interactive scenes (e.g., farm, birthday party). A range of visual targets played different sounds, including phrases and labels, when triggered.

Eye tracking session procedure

During eye tracking sessions, the teacher first calibrated and set up the system for the individual student, then sat beside the student as they interacted with the software on an eye tracking device (Figure 2). The student led the interaction, with the teacher engaging in natural conversation with the student. While the teacher did not give specific instruction, they responded to communication outputs from the system and to the student’s reactions. Sessions were conducted twice per week, alternating between varying conditions (i.e., system type, familiarity of communication partner, verbal interaction style) for each participant. Familiar partners were the students’ regular teachers, who spent time with them during regular classroom instruction. Unfamiliar partners were other teachers or assistants who worked elsewhere in the school; they were familiar with the instructional environment and had experience working with children with disabilities and developmental delays but did not know the students personally. Both partner types used a mix of inflected and neutral tones in different sessions to allow researchers to analyze the impact of both variables against the different partner types. The conditions were determined by the teachers and monitored by the research team to ensure the number of sessions per condition was counterbalanced. Throughout the study period, the eye tracking software was only used during the scheduled sessions.

Figure 2. Image of interactive scene taken during data collection. Note. When the participant’s eye gaze is registered on the doll, the device plays an audio clip saying “doll.”

Teachers collected data using Sesame (Sesame HQ Inc.), a website for educators to record and track student competencies. Profiles for each student were created in Sesame. For each session conducted, field notes were added, and videos and screenshots of the session were uploaded to the student profiles. Names and medical conditions were not linked to the online account during data collection. Instead, an alphanumeric code was assigned to each participant.

Coding and Analysis

Teachers were responsible for collecting in-class data, and we provided training materials to document the protocol at the outset. Researchers met regularly with the lead teacher, twice weekly for the first month, then once a week for the remainder of the data collection period. The meetings served to review the procedures and modify them when needed. For example, teachers were unclear whether they should continue filming the session when calibration of the system failed. During these meetings, it was determined that when calibration failures continued beyond 1 min, the teacher should stop the session and consider it no longer valid. In such cases, protocol documents were updated and communicated to all other teachers.

The Communication Matrix presents answers from 24 multi-part questions in 80 cells. We computed an aggregate score by summing the values assigned to the categorization of each cell: Not Used (0), Emerging (1), Mastered (2), and Surpassed (3). The aggregate score was used as a general measure of the child’s expressive communication skills. The data from the pre- and post-testing period assessments were compared using a paired samples t-test with the IBM SPSS software.
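The scoring and comparison described above can be illustrated with a minimal Python sketch. It is not the SPSS procedure used in the study: the function names (`aggregate_score`, `paired_t`) and all data values are hypothetical and chosen only for illustration, and the paired t statistic is computed by hand as the mean of the paired differences divided by its standard error.

```python
import math
import statistics

# Point values for the four cell categorizations named in the text.
CELL_POINTS = {"Not Used": 0, "Emerging": 1, "Mastered": 2, "Surpassed": 3}


def aggregate_score(cells):
    """Sum the point values of every Communication Matrix cell for one child."""
    return sum(CELL_POINTS[label] for label in cells)


def paired_t(pre, post):
    """Return (t, degrees of freedom) for a paired samples t-test."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [after - before for before, after in zip(pre, post)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all paired differences are identical; t is undefined")
    t = mean_diff / (sd_diff / math.sqrt(len(diffs)))
    return t, len(diffs) - 1


# Hypothetical data for illustration only; these are not the study's scores.
example_cells = ["Mastered"] * 10 + ["Emerging"] * 5 + ["Not Used"] * 65
hypothetical_pre = [10, 20, 30, 40]
hypothetical_post = [12, 25, 31, 48]

print(aggregate_score(example_cells))
t_stat, df = paired_t(hypothetical_pre, hypothetical_post)
print(round(t_stat, 2), df)
```

With 80 cells scored 0 to 3, the aggregate score under this reading ranges from 0 to 240. A significance test would additionally compare the t statistic against the t distribution with the returned degrees of freedom.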

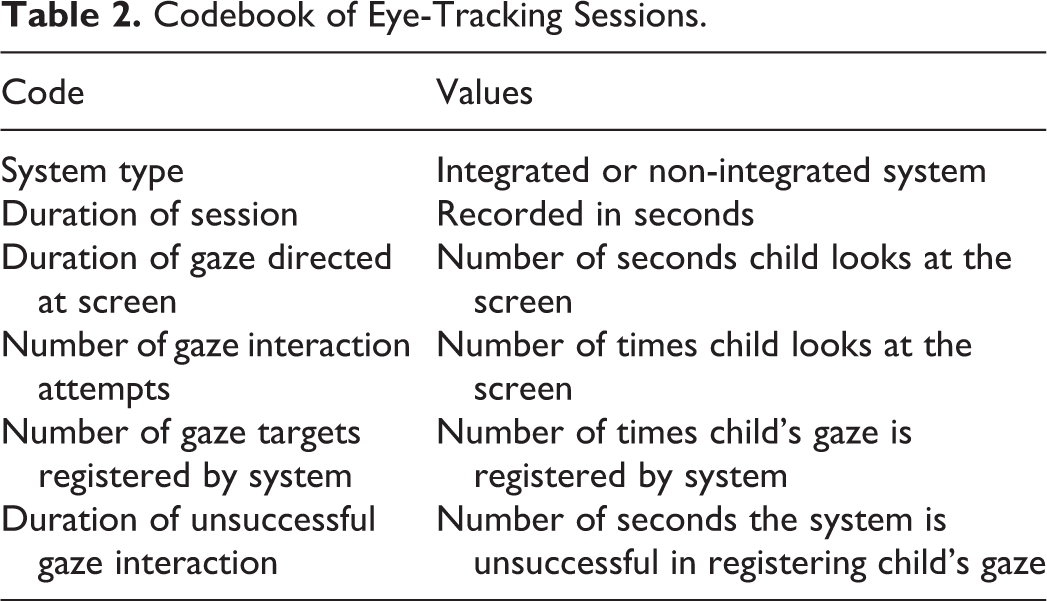

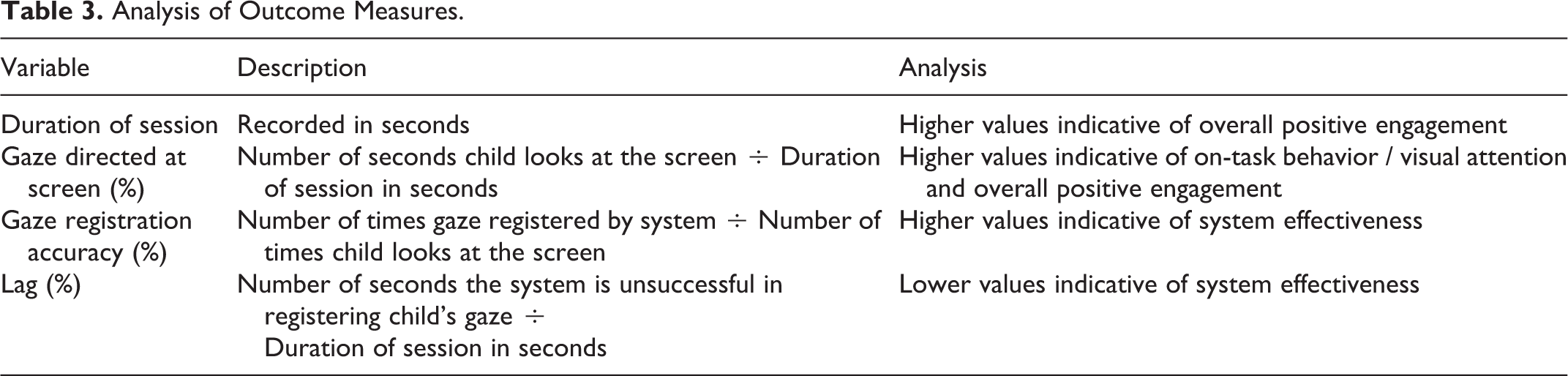

Using a secure, online repository of the data collected, three project researchers coded the session screenshots and videos collected from the classroom teachers. The classroom sessions included information such as the system type (integrated or non-integrated), and the sessions were also coded for the duration of session time, duration of gaze directed at system screen, number of gaze interaction attempts, number of gazes registered by the system, duration of unsuccessful gaze interaction, number of prompts, and number of responses (Table 2). The codebook was developed collaboratively between the research team and the senior researcher, and questions about coding were discussed as a group throughout the process. The data were analyzed using IBM SPSS software, with paired samples t-tests for the analysis of pre- and post-intervention Communication Matrix assessments. Session data (Table 3) were evaluated with Linear Generalized Estimating Equations (GEE), which are appropriate for the analysis of data collected in repeated measures designs (Ballinger, 2004).
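The coded values listed above lend themselves to simple per-session ratios. The following Python sketch shows one plausible way to derive them; the exact formulas are an assumption, since the text names the codes but does not spell out how proportions and rates were calculated. The class `SessionCodes`, its field names, and the example numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SessionCodes:
    """Coded values for one session; field names are illustrative only."""
    session_s: float         # duration of session time, in seconds
    gaze_on_screen_s: float  # duration of gaze directed at the screen, in seconds
    gaze_attempts: int       # number of gaze interaction attempts
    gaze_registered: int     # number of gazes registered by the system
    prompts: int             # number of prompts from the teacher
    responses: int           # number of responses from the student


def ratio(numerator, denominator):
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def derived_measures(codes):
    """Assumed definitions: each measure is a simple ratio of two codes."""
    return {
        "gaze_on_screen_proportion": ratio(codes.gaze_on_screen_s, codes.session_s),
        "gaze_registration_rate": ratio(codes.gaze_registered, codes.gaze_attempts),
        "response_rate": ratio(codes.responses, codes.prompts),
    }


# Hypothetical session, not taken from the study data.
example = SessionCodes(
    session_s=200.0,
    gaze_on_screen_s=150.0,
    gaze_attempts=20,
    gaze_registered=15,
    prompts=8,
    responses=6,
)
print(derived_measures(example))
```

Returning `None` for a zero denominator keeps sessions with no prompts or no gaze attempts from being counted as a rate of zero, which matters when some variables are missing for certain sessions.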

Table 2. Codebook of Eye-Tracking Sessions.

Table 3. Analysis of Outcome Measures.

We reviewed all of the session videos and selected representative sessions with comparable external conditions (i.e., same teacher, same game) but varying system type. Fourteen videos from sessions with K05, E09, C04, and A08 were transcribed. Following a multimodal interaction methodological framework (Norris, 2004), the transcriptions include descriptions of the surrounding environment, the position of the participants and their partners, non-verbal utterances and facial expressions, and body movements.

Results

Pre/Post Communication Assessments

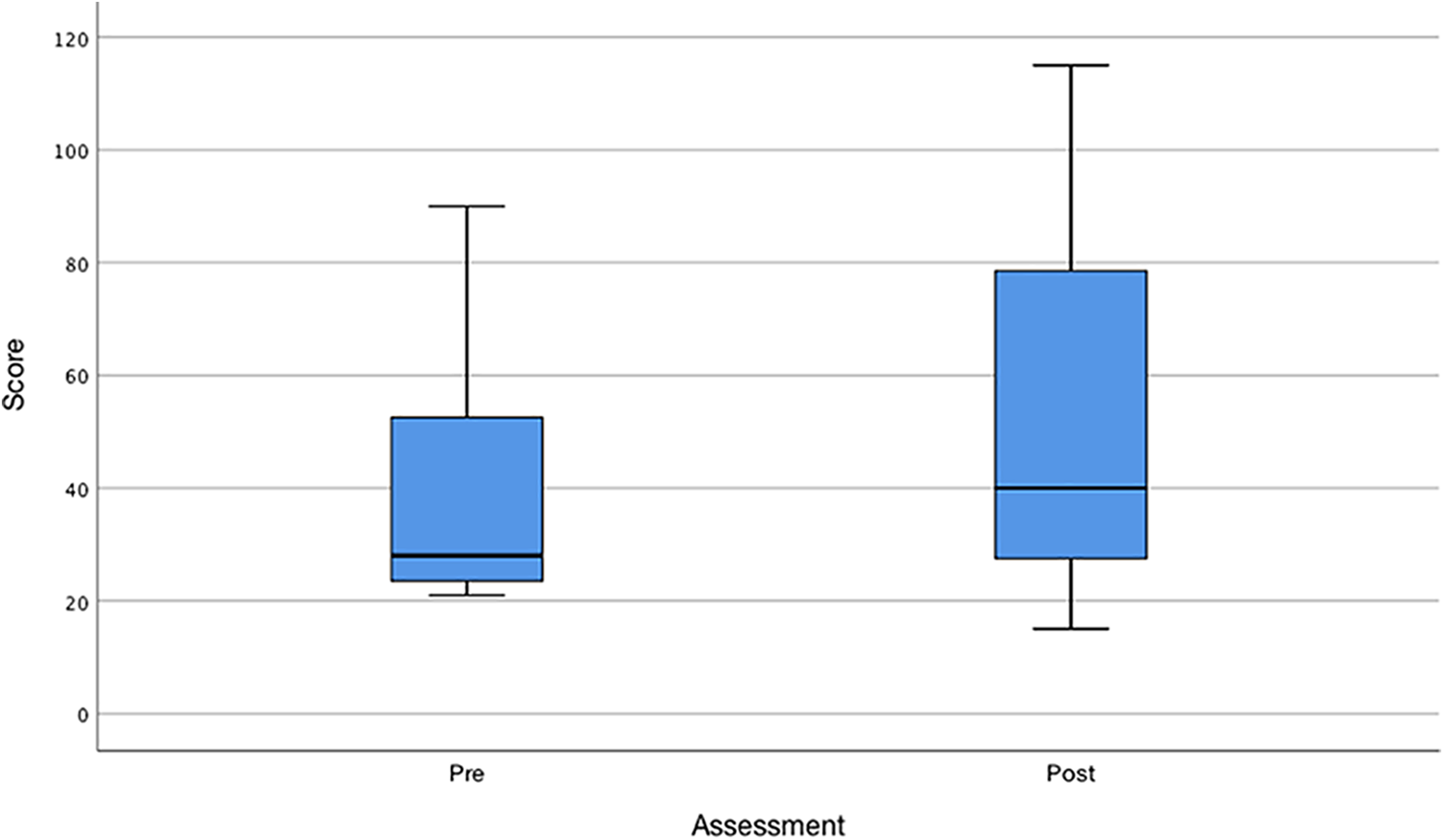

Communication Matrix assessments were completed both pre- and post-intervention for 11 of 12 participants. With regard to the first research question (what are the effects of facilitated sessions with eye tracking software on the communication skills of learners with complex communication needs?), we found a significant improvement in communication skills. A paired samples t-test comparing the pre-test (M = 41.00, SD = 23.99) and post-test scores (M = 52.91, SD = 34.90) yielded t(10) = 3.01, p < .05. Two learners achieved the same score in both assessments, while eight of eleven learners showed improvements between pre- and post-assessments (Figure 3). Many participants moved up levels, from unconventional communication (Communication Matrix level II) to conventional communication (level III) or concrete symbols (level V). Others improved within levels, for example, completing mastery of skills within conventional communication level III. It is interesting to note that the students with the greatest difference between pre- and post-intervention assessments (top 25%) were diagnosed with Rett syndrome.

Figure 3. Pre/post communication skills assessment scores.

As a general comparison, the additional participants (n = 2) who did not take part in eye tracking sessions showed much smaller gains, with scores averaging 21.50 (SD = 26.16) in the pre-assessment and 24.00 (SD = 29.70) in the post-assessment.

Eye Tracking Sessions

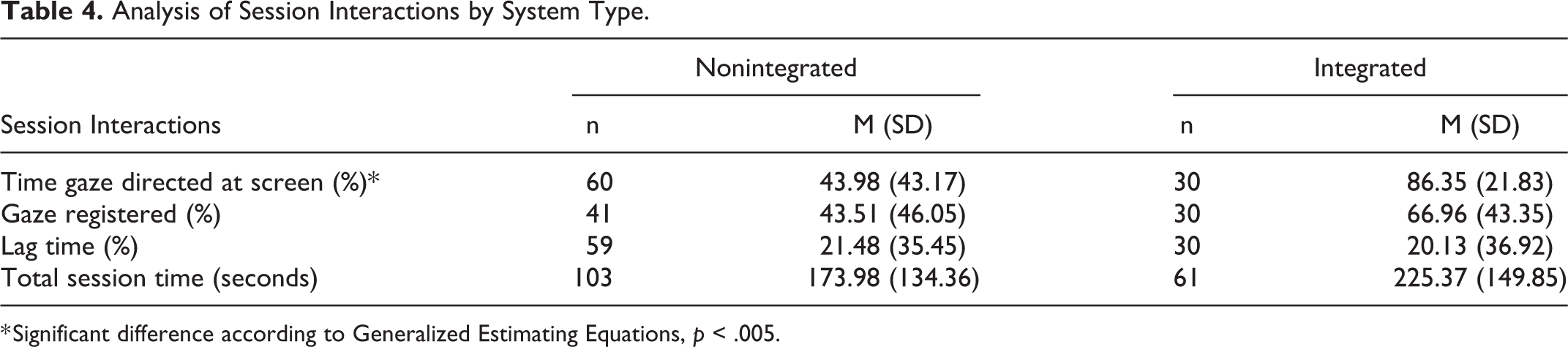

A total of 164 testing sessions were conducted, with an average of 13.67 sessions per student (SD = 7.10; range = 6–24). Note that some scheduled sessions were missed because of absences or resource unavailability. On average, each session lasted 193.09 s (SD = 142.07). The descriptive statistics for session data (broken down by outcome measures) are shown in Table 4. The reported sample values (n) reflect missing data for certain variables at the data collection phase.

Table 4. Analysis of Session Interactions by System Type.

* Significant difference according to Generalized Estimating Equations, p < .005.

To address the issue of effectiveness of different system types (RQ2), we analyzed the data comparing outcomes between sessions using integrated and non-integrated eye tracking systems (Table 3). A GEE approach was used to evaluate whether system type is a predictor of the length of sessions. The results of this analysis were not significant (p > .05). As well, system type was not found to be a significant predictor of gaze registration accuracy, the response rate of the learner to teacher prompts, nor lag time between integrated and non-integrated systems. However, the system type can predict the proportion of the session in which a student’s gaze was directed at the screen. Specifically, the average length of sessions with integrated eye-tracking devices was almost twice as long as the average session length with non-integrated eye-tracking devices.

Discussion

Facilitated Eye Tracking Software for Developing Communication Skills

Our findings suggest that engaging in facilitated sessions with eye tracking software can help children with severe motor and speech impairments develop communication skills, and they offer evidence for eye tracking AAC software to be used as part of an intervention strategy to support children with complex communication needs in special education settings. We found significant differences between pre- and post-intervention assessments for expressive communication after a 3-month period, with improvements for a majority of the children. The 3-month research study period, with session data collected twice weekly for each participant, provided the time to allow students to learn how the technology worked and to become familiar with its use for communication. It is possible that a longer intervention may result in the development of communication skills for all participants, and future research is warranted to test this potential. Studies examining eye gaze performance in children with profound impairments suggest that a longer period (e.g., 10 months) may be needed to see improvements (Borgestig et al., 2015). The present study suggests that communication outcomes can be observed even when there are time limitations, which is encouraging when working within the constraints of the traditional 9-month academic school year.

A strength of this study is that it was conducted in the children’s own classrooms, offering a strong indication of communication outcomes if the eye tracking intervention were to be adopted more widely. Future studies that compare communication outcomes between children who engaged with eye tracking AAC software and those who did not are needed. In the current study, statistical analysis of communication outcomes between the two groups was not possible due to having only two participants in the latter condition. However, our general comparison of communication outcomes demonstrates marked improvements for the group that participated, supporting the case for eye tracking sessions.

An interesting finding is that the students who saw the greatest difference between pre and post-intervention assessments were diagnosed with Rett syndrome, suggesting that they are particularly well suited to this type of intervention. According to surveys and interviews of parents and professionals (e.g., teachers, speech-language pathologists) who work with this population, eye gaze has been reported as the most common modality used for expressive communication in girls with Rett syndrome (Bartolotta et al., 2011; Urbanowicz et al., 2014). Anecdotally, one of the teachers (who continued to work with the eye tracking systems after the study concluded) observed remarkable eye gaze communication skills in her students with Rett syndrome in the months that followed. This highlights a need for more studies about eye tracking interventions in this clinical population.

Integrated and Non-Integrated Eye Tracking Systems in the Classroom

The literature on the effectiveness of eye tracking systems cites comparable accuracy metrics between different integrated and non-integrated systems (Mantiuk et al., 2012), and our study confirms these findings. There were no differences found between systems with respect to lag time and gaze registration. With both measures speaking to the effectiveness of the system types, the findings are quite clear. Our paper contributes to the field with results from an in-the-wild setting in a specialized population (RQ2).

While the effectiveness of both systems was comparable, the system type was a factor in the engagement of the child, with integrated systems offering greater accuracy in eye-gaze registration, producing better outcomes. This result offered an answer to the third research question: how do eye tracking system types influence interactions between eye tracking devices and learners with complex communication needs? Although it did not have an effect on the length of the sessions, the system type could predict the amount of visual attention paid to the screens. The amount of time a student’s gaze was directed at the screen was almost twice as high in sessions with integrated systems (86% of session spent with eye gaze directed toward the screen) compared to non-integrated systems (44% of session), suggesting a greater motivation for children to use the system. This could be because the students were encouraged by the comparatively better functioning of the integrated system, in that their actions resulted in desired effects. The non-integrated systems had more calibration difficulties and may have been demotivating for students.

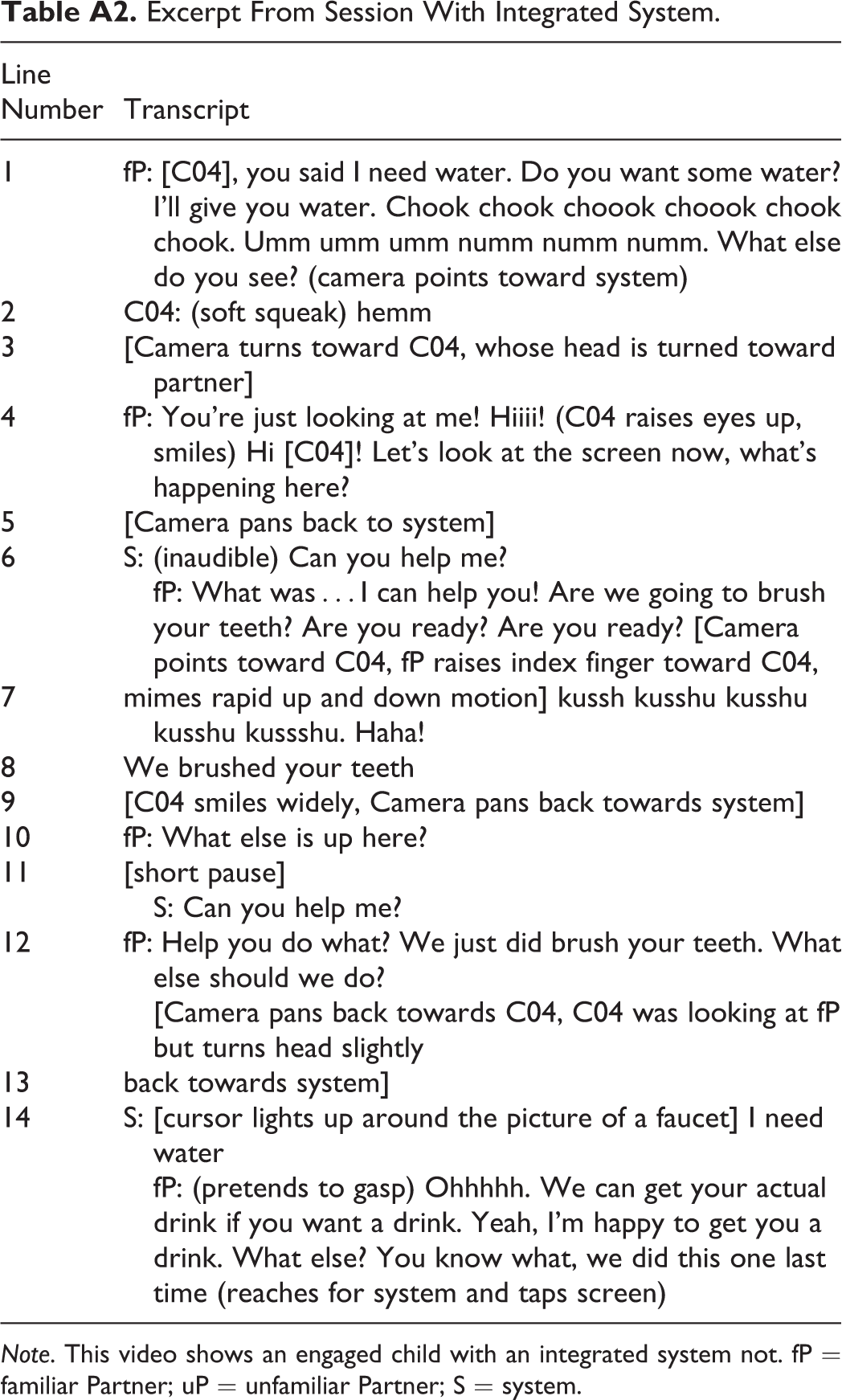

Calibration can be more difficult with children who have motor impairments since involuntary movements can interrupt the process. Certain body positions (e.g., reclined) also require a unique setup of the eye trackers. However, the setup of non-integrated systems required exceptional patience and persistence on the part of teachers and students. Field notes from individual sessions captured numerous instances when calibration was unsuccessful even after following previously successful processes. In many instances, the cursor on the screen would not move when the user shifted their gaze, which resulted in frustration on the part of the user. This had notable effects on the social interaction that followed. When children with complex communication needs encounter problems interacting with their environments, it can lead to high levels of stress and difficulties in remaining focused (Hersh, 2013). From a cause and effect perspective, the labor invested (on the part of the student) did not lead to expected outcomes, increasing the likelihood of abandoning the system. That is, after trying to use their eyes to engage in communication when the system would sometimes not accurately reflect the student’s intention, they may have given up more easily. Many sessions with non-integrated systems demonstrated this effect. In contrast, with integrated systems, once users were positioned in front of the device and their track status was confirmed, there was little discrepancy between where they were looking and where the camera registered their gaze. Teachers concluded that the accuracy of both systems was similar when they worked but that the calibration and setup for non-integrated systems were often so lengthy that students were no longer interested in playing with the eye tracking software.
To illustrate the qualitative differences between sessions with the two system types, we present two transcripts in the appendix that characterize the quality of interactions observed (Tables A1 and A2).

Although devices that are required for AAC should be low cost, interactive, easy to use, convenient, and require little maintenance (Sharma & Abrol, 2013), and as we wait for the cost of eye tracking technology to lower, our experience demonstrates that it is imperative we consider the impact of system type and make ‘ease of use’ a priority within the context of special education classrooms. This is especially pertinent when considering the limited time available for setting up equipment within curriculum-directed periods in a day.

Limitations

Data collection occurred at times that minimized impact on the classroom and was subject to resource availability. As a result, the frequency of sessions across various conditions varied (e.g., there were more sessions conducted with familiar communication partners). At the same time, our findings may be more generalizable to other educational settings and reflect more genuine communicative contexts. We also note that in this preliminary study, the sample size is small, although typical of school settings supporting learners with complex communication needs. The results would benefit from replication studies using eye tracking devices in other educational settings. Future research with larger sample sizes is encouraged, perhaps by engaging multiple research sites.

Conclusions

In this study, we aimed to determine the extent to which eye tracking devices serve as functional assistive communication systems for children with complex communication needs. We addressed the effects of facilitated sessions with eye tracking software on the communication skills of learners with complex communication needs (RQ1). We found significant improvements in the expressive communication skills of children assessed before and after the intervention.

The session data allowed us to examine the effectiveness of integrated and non-integrated eye tracking system types in educational settings (RQ2). System type was significant for four of five outcomes (lag time being the exception). Integrated systems proved to be more effective in a naturalistic educational setting.

In educational settings, children with limited language skills often have reduced opportunities for communication (Bartolotta, 2014). This study serves as a step forward in understanding the role of the system and communication partner in supporting learners who have difficulty finding a consistent alternate form of communication when using eye gaze technologies. Both system type and partner characteristics influenced interactions between: 1) eye tracking devices and learners with complex communication needs, and 2) communication partners (i.e., teachers) and learners with complex communication needs (RQ3). The system type was an important factor in the engagement and responsiveness of children, with integrated systems producing better outcomes. Our findings underline the importance of interaction style in encouraging learners to sustain engaged interaction, with inflected voice being an important aspect that contributed to higher success rates. The familiarity of the partner did not produce significantly different outcomes and may not be a limiting factor in interactions with eye tracking systems, but further research is required.

It is worth noting that eye gaze communication systems in AAC can reduce control from communication partners. Future design considerations can look towards reducing the prompting necessary to encourage children to express themselves as they gain proficiency in social communication. Systems designed to impart communication and control can have a powerful impact on the lives of everyone, especially for people with physical disabilities.

Appendix

Table A1. Excerpt From Session With Integrated System.

| Line Number | Transcript |
|---|---|
| 1 | fP: [C04], you said I need water. Do you want some water? I’ll give you water. Chook chook choook choook chook chook. Umm umm umm numm numm numm. What else do you see? (camera points toward system) |
| 2 | C04: (soft squeak) hemm |
| 3 | [Camera turns toward C04, whose head is turned toward partner] |
| 4 | fP: You’re just looking at me! Hiiii! (C04 raises eyes up, smiles) Hi [C04]! Let’s look at the screen now, what’s happening here? |
| 5 | [Camera pans back to system] |
| 6 | S: (inaudible) Can you help me? fP: What was…I can help you! Are we going to brush your teeth? Are you ready? Are you ready? [Camera points toward C04, fP raises index finger toward C04, |
| 7 | mimes rapid up and down motion] kussh kusshu kusshu kusshu kussshu. Haha! |
| 8 | We brushed your teeth |
| 9 | [C04 smiles widely, Camera pans back towards system] |
| 10 | fP: What else is up here? |
| 11 | [short pause] S: Can you help me? |
| 12 | fP: Help you do what? We just did brush your teeth. What else should we do? [Camera pans back towards C04, C04 was looking at fP but turns head slightly |
| 13 | back towards system] |
| 14 | S: [cursor lights up around the picture of a faucet] I need water fP: (pretends to gasp) Ohhhhh. We can get your actual drink if you want a drink. Yeah, I’m happy to get you a drink. What else? You know what, we did this one last time (reaches for system and taps screen) |

Note. This video shows an engaged child with an integrated system. fP = familiar Partner; uP = unfamiliar Partner; S = system.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the Social Sciences and Humanities Research Council of Canada (SSHRC), under grant #950-231395, and Canada Research Chairs (grant ID: 501959).