Abstract

Digitalization of the grid promises a sustainable energy system. However, we do not fully understand how innovative technologies are introduced, and challenged, in legacy infrastructures. In this article, I explore how energy industry practitioners in the UK perform alignment work to incorporate novel digital tools into existing systems. I present my argument in three parts. First, a new wave of digitalization challenges an established order of preplanned and highly assured infrastructure engineering with agile and experimental innovation practices. However, this is not happening without pushback from professionals responsible for the safety and stability of supply. Second, I show how software innovators and legacy maintainers temporarily leave their roles to align their practices and bridge their respective communities. By arriving at a shared understanding of “testing” and “errors,” both groups advance digitalization while remaining cognizant of the unique temporalities and materialities of energy infrastructures. Third, the negotiations are aligned by the shared goal of cybersecurity, which is reframed as a solution that brings together the divergent epistemic cultures found across the industry. What emerges is digitalization enacted on terms shared by innovators and maintainers: a piecemeal, contested, and slow process, resistant to the dominant cultural narrative of urgency and haste.

Keywords

Introduction

The scale and urgency of the climate crisis act as a catalyst for a complete overhaul of the power grid in the United Kingdom. A confluence of accelerated electrification, developments in battery technologies, and hype surrounding artificial intelligence (AI) has inspired a new wave of promises of the “twin transition,” in which the adoption of contemporary digital technologies is said to accelerate the sustainability transition in the energy industry (Kovacic et al. 2024; Michalec 2025). However, the causal relationship between digitalization and decarbonization should not be taken for granted (Fouquet and Hippe 2022). Nor should we assume that digital innovations are easy to adopt, especially in large organizations operating critical infrastructures (Mohanty et al. 2024).

Previous anthropological and historical research on energy digitalization has shown that it is not as simple as installing a layer of new technologies on top of the old ones (Cohn 2015; Özden-Schilling 2016). The introduction of new tools clashes with the “old world” of legacy systems. In the “old world,” the power grid was operated by computers disconnected from the internet, built decades ago for safety and reliability rather than data sharing or interoperability (Michalec, Milyaeva, and Rashid 2022). Therefore, the prospect of adopting innovations that rely on connectivity (e.g., the Internet of Things [IoT], real-time consumer analytics, cloud computing, AI-based predictive maintenance, digital twins) poses challenges to the fundamental goal of energy infrastructure: the safe and reliable delivery of electricity. As such, cybersecurity expertise in the energy industry presents a novel domain for inquiry in STS.

The recent turn to cybersecurity practices in the United Kingdom and elsewhere is taking place under the banner of energy digitalization, bringing forth a fresh set of sociotechnical questions. How do experts introduce new technologies and practices while avoiding accidental errors, safety failures, and increased vulnerability to attackers? When working toward critical infrastructure protection, to what extent are software innovation practices compatible with the engineering expertise found in aging control rooms and substations? What kinds of expert networks are mobilized through the introduction of cybersecurity measures in the energy sector? Addressing these issues requires renewed attention to the professional expertise and situated practices found in the energy industry.

Broadly, this article is motivated by the desire to capture the dynamics of change in large-scale infrastructures like power grids. I focus on the interplay between innovation and maintenance of infrastructures against the backdrop of recent cybersecurity initiatives. My analysis specifically centers on how cybersecurity knowledge bridges two communities I refer to as “legacy maintainers” and “software innovators.” This categorization reflects how those roles are commonly framed by peers in the industry rather than by the practitioners themselves (Evripidou, Daniel, and Watson 2024). The first group comprises people responsible for protecting existing, aging infrastructures. The second is interested in introducing contemporary digital technologies into those spaces. The work of alignment via cybersecurity makes it possible for these groups not only to collaborate with each other but also to take part in discussions on regulating both new and old technologies. As such, this work shows that the categories of “legacy maintainers” and “software innovators” are subverted when faced with the dual challenge of maintaining grid stability while modernizing its information infrastructure for internet connectivity.

In this article, I share findings from a qualitative study of expertise to further our understanding of the practices of alignment in contemporary energy infrastructures. I present my argument in three parts. First, I show that digitalization initiatives challenge an established order of preplanned and highly assured legacy maintenance with agile and experimentation-oriented software innovation practices. However, this is not happening without pushback from professionals responsible for the safety and stability of the grid. As a result, digitalization is not only the act of introducing new technologies but also of maintaining legacy systems, institutions, and expert collaborations. Second, by arriving at a shared understanding of “testing” and “errors,” diverse practitioners advance digitalization projects while retaining the unique temporality and materiality of energy infrastructures. Third, the negotiations are aligned thanks to the goal of cybersecurity, which is reframed as a solution to bridge the divergent epistemic cultures found across the energy industry. What emerges is digitalization as a piecemeal, contested, and slow process enacted on terms shared by innovators and maintainers.

The Past, the Present and the Future(s) of Energy Digitalization

Digital technologies have been assisting with the practices of grid operation for several decades. 1 Cohn (2015) showed how, shortly after the Second World War, analog machines and digital computers developed in parallel in US electric utilities. This is because the older generation of equipment, despite being slower and less accurate, better addressed the user need of being able to simulate the electrical network, hence providing a better “feel” for the issue at hand. The expertise of energy practitioners is in constant flux, resisting neat categorization. For example, engineering practitioners might face the challenge of stepping out of their traditional remit to create economic models (Özden-Schilling 2016) or manage increasingly digitalized control rooms (Abram and Silvast 2021). As power grids expand, so do the configurations of expert collaborations required to uphold their functionality.

The resulting energy infrastructures are now composed of multiple layers of equipment and knowledge. As Abram and Silvast (2021) write: “any study of contemporary energy systems in the UK inevitably has to address the jumble of old and new ‘assets’ …. Far from being an ideal rational system, energy distribution relies on layers of infrastructure that date back many decades.” In terms of workers’ practices, researchers found that many exercise flexibility and creativity in otherwise tightly controlled environments (e.g., a degree of freedom in choosing one's shifts or the time taken to complete rigorous safety training; Abram and Silvast 2021; Silvast, Virtanen, and Abram 2022). Finally, there is also a matter of materiality. Technologies regarded as mature in domestic and professional contexts elsewhere, such as cloud architecture for industrial control systems, might be novel in the energy industry due to the prevalence of air-gapped (i.e., disconnected) systems (National Cyber Security Centre [NCSC] 2024).

Recently, a combination of several factors gave rise to a “new wave” of digitalization in the United Kingdom. From the record sales of electric vehicles (EVs) in 2023 (National Grid 2023), through a rise of over 68% in battery storage capacity (Renewable UK 2023), to the excitement about the potential of AI to maintain energy assets (Energy Systems Catapult 2024), digitalization promises to decarbonize the grid by accelerating the electrification of transport and heat and by improving the operation of existing assets. Prospective applications include digital twins of batteries, which would allow spare energy capacity to be traded at short notice (Krakenflex 2024). Another use case pertains to the predictive maintenance of remote generation assets such as wind turbines with machine learning, which could reduce the cost of manual inspections (Energy Systems Catapult 2024; Mohanty et al. 2024).

Likewise, energy digitalization has been a rising policy priority in the United Kingdom since the early 2020s (Energy Systems Catapult 2022). Policy initiatives labeled this way by the industry and the government include the “Presumed Open Data” license condition, the Network and Information Systems Security Regulations (NIS), and the recent Smart Secure Electricity Systems Programme policy framework (Department for Energy Security and Net Zero [DESNZ] 2024). With regard to the Presumed Open Data license condition, Ofgem, the energy market regulator, requires Distribution Network Operators (i.e., energy distribution companies) to share their operational data. In doing so, Ofgem hopes to provide opportunities for software innovators (e.g., companies selling services like EV charging optimization) to create new flexibility services (Ofgem 2021a). Further, the NIS Regulations, the major EU and UK cybersecurity directive, have introduced a new regime of cybersecurity risk assessment for the so-called “Operators of Essential Services,” 2 which include all major transmission, generation, and energy supply companies. A major enabling part of the NIS Regulations was a requirement to create up-to-date “asset inventories” so that Operators of Essential Services understand the extent of data and equipment under their purview. Finally, the Smart Secure Electricity Systems Programme has spent the past two years consulting on the requirements for heat pumps, domestic battery energy storage systems, and EV chargers (DESNZ 2024). Recent proposals suggest standardized security measures and data sharing formats to allow the development of a competitive market for internet-enabled appliances.

A sense of excitement and momentum in the industry across Europe is palpable, although the associated hype is far from neutral. Typically, the buzz justifies digitalization as inevitable and essential for sustainability transitions (Kovacic et al. 2024). It suggests that digitalization follows a “natural order” witnessed in other industries and is a consequence of current systems reaching the end of their anticipated lifespan. This narrative becomes troubling once a set of basic assumptions is uncovered. Although the concept of legacy infrastructures is commonly acknowledged in the industry (Hirsch and Ribes 2021), there is no systematized account of the issue (Central Digital and Data Office [CDDO] 2023). Historically, this has been linked to the “security by obscurity” paradigm, in which secrecy was a main way to protect mission-critical infrastructures (Mercuri and Neumann 2003). Secrecy, in turn, was not conducive to keeping or sharing records. Today, legacy maintainers working in the energy industry still do not fully know the range of hardware and software within their remit; hence, improving data and equipment visibility has become one of the priorities of the NIS Regulations (NCSC 2018).

Aside from insufficient record keeping of their own systems, maintainers do not hold a single agreed definition of “legacy.” Instead, they point to a number of generations of industrial computers over time (Green et al. 2017). 3 In practice, infrastructures become legacy once embedded in the context of impending innovations, posing interoperability challenges such as security patching (CDDO 2023). The notion of legacy can also be used strategically, implying that tardy infrastructure upgrades ought to keep pace with ever-accelerating research and development breakthroughs (McKinsey 2022). The following section problematizes this sentiment and summarizes several key STS insights on the interplay between innovation and maintenance.

Studying Innovation and Maintenance in Infrastructures

Infrastructures have an elusive quality, escaping attempts to clearly define and bound them; there is no tipping point between an artifact and an infrastructure (Edwards et al. 2009). In that vein, STS has taken an interest in how infrastructures come into being rather than what they essentially are: how they are coproduced, what they are dependent on, how they fail (Sandvig 2013). Rethinking infrastructure as a verb rather than a noun (“infrastructuring,” see Pipek and Wulf 2009) allows us to shift focus to the dynamic processes of collaboration. The commitment to understanding infrastructures as practices rather than things opens the possibility of inquiring into what appears to be a key tension in the industry literature (UK Cyber Security Council 2023): the tension between maintenance and innovation.

A recent wave of STS studies has taken an interest in temporalities and chrono-politics in the context of energy system transitions (Moss 2016; Pfotenhauer et al. 2022; White-Nockleby 2022). Foregrounding temporality adds richness to the analysis by highlighting how time-related concepts (e.g., “real time insights,” “regulations always lag behind innovations”) can be deployed strategically to promote a certain vision of technologies (Riles 2004). Similarly, studies concerned with the introduction of digital innovations have commented on the market pressures to act invoked by “big tech” companies and software ventures. For example, Thylstrup et al. (2021, 191) point out that “big data ventures operate with a temporality that favors speed over patience: a medium-quality answer obtained quickly is thus often preferred over a high-quality answer obtained slowly.” Meanwhile, Schiølin (2020) identifies the discursive tactics of tech evangelists, arguing that they frame digitalization as urgent and inevitable and justify it by appealing to “living in exceptional times.”

Alongside innovation, STS studies of stability, whether conceptualized as stabilization, maintenance, or repair, also offer relevant insight (Kocksch et al. 2018; Nova and Bloch 2023). The stability of infrastructures has been associated with their normalization and embedding in society (Shove and Trentmann 2019). Yet durability should not be assumed of infrastructures; rather, it results from the continuous work of maintenance and repair, which always depends on adequate investment and political will. In recognition of infrastructures’ fragility, STS scholars turned their attention to the expertise of maintainers, theorizing the work of repair as based on improvisation, the capacity to give and receive care, and collectivism (Kocksch et al. 2018; Ojala 2022). Above all, maintenance studies have had an overtly normative mission: to give recognition to the labor and knowledge of overlooked, unglamorous, and taken-for-granted practitioners (Denis, Mongili, and Pontille 2016).

Likewise, cybersecurity has been previously conceptualized vis-à-vis maintenance and innovation. Kocksch et al. (2018, 1) state that “caring for IT security requires continuous, often invisible work that relies upon tinkering and experimentation and addresses perennial oscillations between in-/securities.” Maintenance and care in cybersecurity point to the paradigm of “fixing broken objects” (Dunn Cavelty 2018). Perhaps inadvertently, linking security to the matters of repair and maintenance has led to a widespread belief in workplaces that security is a blocker of digital innovations (Subramanian 2020). This view has been recently challenged in STS, with Spencer and Pizio (2023) accounting for how shifts in the market of cybersecurity innovations enabled new working arrangements and ways of doing business.

In bringing together literatures on innovation and maintenance, my intention is to understand the interplay between these two sets of practices as well as the workers typically responsible for them. I agree with Russell and Vinsel's (2018, 3) observation that innovation is essential to maintenance practices and routines; “in other words, some maintainers can be innovative, and new technologies can play important roles in maintenance regimes.”

Theorizing Collaboration Across Engineering and Science

In order to analyze collaboration practices, it is first crucial to understand the parties involved. Here, the term “epistemic cultures” becomes particularly helpful, pointing at “strategies and principles that are directed toward the generation of truth or equivalent cognitive goals” (Knorr Cetina 1999, 1). They are amalgams “which, in a given field, make up how we know what we know” (Knorr Cetina 1999, 1).

Although epistemic cultures were initially theorized through science ethnographies located in university labs, Knorr Cetina (1999, 246) claimed the concept could be applied to “expert cultures outside sciences.” In this article, the work of two groups of energy industry practitioners (namely, legacy maintainers and software innovators) is not typically concerned with the production of new knowledge in an academic sense. I argue that my case study is relevant to the notion of new knowledge in an applied context because it analyzes attempts to establish novel “best practices” in typically routinized and rigid environments. Understanding how practitioners introduce new ways to discover computer vulnerabilities or assure the reliability of increasingly complex systems is a vital aspect of “engineering epistemology” (Bucciarelli 1994; Liote 2024).

A recent articulation of the usefulness of epistemic cultures for a wide range of empirical contexts can be found in Kruse and Silvast (2023), who introduce the term “alignment work,” building on Strauss et al. (1985) and Vertesi (2014). They argue that after bringing attention to professional differences through the lens of epistemic cultures, one needs to consider how to bridge those differences fruitfully: “alignment work is the continuous work that bridges those seams, 4 aligning epistemic cultures—perhaps temporarily—to make a seamless and stable movement of knowledge possible” (Kruse and Silvast 2023).

Let us now consider the work on expertise and collaboration in the context of large-scale infrastructures. In the domain of critical infrastructure computing, there is a well-established distinction between Operational Technologies (OT) and Information Technologies (IT), and their associated workers (Evripidou et al. 2023; Hazell, Novitzky, and van den Oord 2023; Michalec, Milyaeva, and Rashid 2022; Slayton and Clark-Ginsberg 2018). OT systems are within the purview of “legacy maintainers,” that is, mechanical and control systems engineers whose machines typically sense, actuate, and control industrial processes. OT systems are traditionally unnetworked, come in ruggedized hardware, and are built to prioritize physical safety and durability. In contrast, IT systems refer to computers responsible for managing the business side and consumers; they are usually connected to the internet, run by software practitioners, range in age from established (e.g., a billing system) to recent (e.g., a digital twin of a wind turbine) systems, and typically fall outside the stringent safety standards applied to OT.

With industry calls for “converging IT and OT” (Palo Alto Networks 2024) it is vital to understand how practitioners characterize themselves, their coworkers and how they mobilize collaborations across cultural boundaries. In the context of this article, it is important to remember that professional identities are often dynamic, especially in emerging fields which lack codification (e.g., charterships, established degrees; Downey and Lucena 2004). And so, despite a common emic division between OT and IT, practitioners often “wear multiple hats,” representing plural organizations, attending working groups and committees, or advocating for novel career paths (Michalec, Milyaeva, and Rashid 2022; NCSC 2022). In what follows, I seek to convey this ever-shifting dynamic between stable identities and change, as experienced by the research participants.

Methodology

The analysis is grounded in a qualitative case study of energy digitalization in the United Kingdom. 5 First, between June 2021 and March 2022, I conducted individual and group interviews with 42 experts working on digitalization initiatives for government bodies, energy suppliers, energy networks, security consultancies, research institutions, energy startup incubators, and so on (Appendix 1). For example, I interviewed a manager of the Energy Systems Operator, the head of an energy team in a civil service organization, a head of risk working for a major energy generation company, a senior security practitioner at an energy distribution company, and a data scientist working at an energy supply company. I asked participants about their involvement in industry guidance, smart energy trials, standardization initiatives, and relevant working groups, seeking to understand how they enact their expertise when faced with questions about critical infrastructure protection: How do they navigate tensions between legacy technologies and innovations? How do they introduce new technologies while keeping the grid stable, safe, and secure? How do they collaborate with diverse actors, mobilize allies, and promote their agendas?

I sought to speak to practitioners involved in flagship energy digitalization initiatives (see Appendix 2) such as the NIS Regulations (the major EU and UK infrastructure cybersecurity regulations; NCSC 2018), the Data Best Practice Guidance (UK guidance outlining best practices for sharing data and metadata in secure and privacy-preserving formats; Ofgem 2021a), or smart energy appliance standards (a set of high-level standards for vendors of smart home products describing their minimum security, privacy, and other functionality requirements; BSI 2021). For recruitment, I collaborated with a project partner, Energy Systems Catapult, an umbrella organization facilitating networking among energy companies. This enabled long-term immersion in the field and helped overcome security's culture of secrecy (Muller and Welfens 2023). As a result, I was able to recruit participants through my attendance at industry events, targeted introductions to relevant stakeholders, and social media sites. All participants provided written consent, as agreed with the University of Bristol Research Ethics Committee, which provided institutional approval for this project.

I was also given opportunities to participate in workshops, organize roundtable events disseminating preliminary results, contribute to policy consultations, and cocreate infographics with practitioners to explore the promises and challenges of digitalization (see Appendix 3). Acting as an engaged researcher (de Goede 2020) allowed me to continuously reflect on the dynamics of change and stabilization in energy infrastructures beyond the content of interview transcripts. Finally, I augmented my analysis with a close reading of computer science texts (Amoore et al. 2023) in order to better analyze how the discipline makes sense of the world and what values are prioritized when building digital technologies. 6

Contesting Innovation in Energy Infrastructures

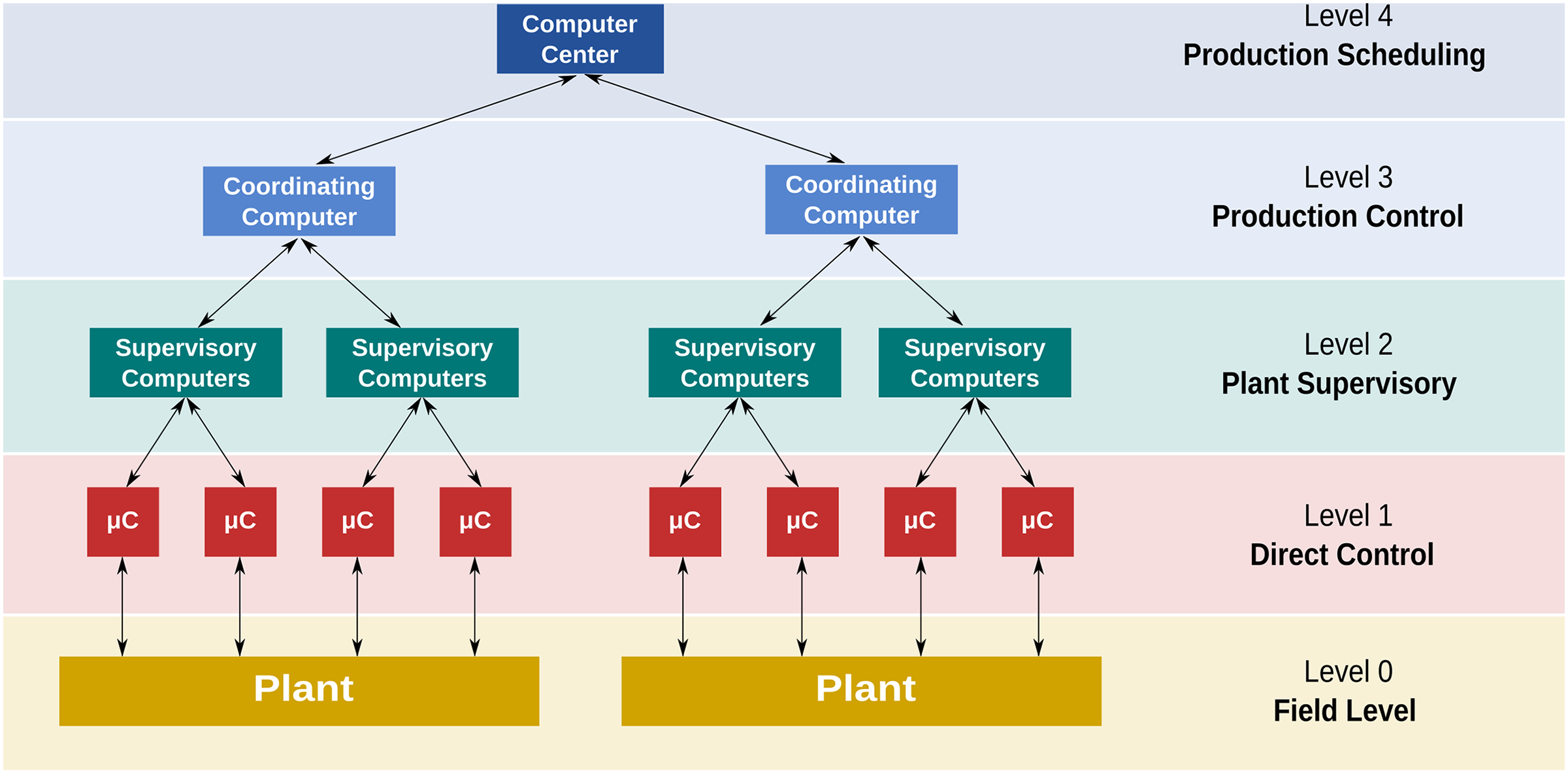

The digitalization of the energy industry presents a major infrastructural shift. So far, energy infrastructures have been managed predominantly through a control system architecture called Supervisory Control and Data Acquisition (SCADA; see Figure 1), encompassing a layer of field devices (e.g., sensors) communicating with industrial computers (called Programmable Logic Controllers) programmed to control the field devices’ inputs and outputs. Infrastructure workers then use monitoring systems and screens called Human Machine Interfaces to analyze the information and supervise operations. Data is used to control and schedule operations on site. Importantly, change in operational technology infrastructures is piecemeal, as various “generations” of SCADA systems coexist in parallel. For example, it is common for a single power plant to use both older air-gapped systems and newer, remotely controlled IoT sensors. This suggests that digitalization is not only about introducing the new but also about getting rid of the old. This analytical shift enables us to ask: what is being replaced and why? What cannot be replaced easily even though it should be? In this case, what is at stake is discarding not only old technologies but also their concomitant priorities, governance regimes, and tacit expertise. For example, digitalization could transform the practices of reliability testing from a regime in which regulators evaluate their trust in engineers performing tests on physical components to certify them (Downer 2010) to a regime of governance grounded in simulations calibrated to represent engineering assets, like wind turbines or heat networks.

Figure 1. Functional levels of SCADA. Level 0: Field devices, sensors, actuators, valves, switches, and other components that can measure and control variables like temperature, pressure, flow, and levels. Level 1: Programmable logic controllers or remote terminal units processing data from field devices. Level 2: Supervisory control station that collects and analyzes data from multiple local control stations and contains a graphical user interface for operators to monitor and control processes. Level 3: Systems used to track orders, schedule production runs, and manage inventory. Level 4: Enterprise business systems responsible for managing the organization, for example, Enterprise Resource Planning or Customer Relationship Management (Daniele Pugliesi 2014, CC License 3.0).
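For readers less familiar with industrial control systems, the layering in Figure 1 can be sketched as plain data. The minimal Python example below is illustrative only: the names `Domain`, `ScadaLevel`, and `levels_in` are hypothetical, the level names and examples are paraphrased from the caption, and the OT/IT column is an assumption drawn from the OT/IT distinction discussed in this article rather than part of the original figure. Level 3 is deliberately marked as a contested boundary, since neither the caption nor the OT/IT definitions settle which side it belongs to.

```python
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    """Hypothetical OT/IT labels; not part of the original figure."""
    OT = "operational technology"
    BOUNDARY = "contested OT/IT boundary"
    IT = "information technology"


@dataclass(frozen=True)
class ScadaLevel:
    number: int
    name: str
    examples: tuple[str, ...]
    domain: Domain  # illustrative assumption, see lead-in


# Levels paraphrased from the Figure 1 caption; the domain assignment is assumed.
SCADA_LEVELS = (
    ScadaLevel(0, "Field devices",
               ("sensors", "actuators", "valves", "switches"), Domain.OT),
    ScadaLevel(1, "Local control",
               ("programmable logic controllers", "remote terminal units"), Domain.OT),
    ScadaLevel(2, "Supervisory control",
               ("supervisory control station", "operator interface"), Domain.OT),
    ScadaLevel(3, "Production management",
               ("order tracking", "production scheduling", "inventory"), Domain.BOUNDARY),
    ScadaLevel(4, "Enterprise systems",
               ("enterprise resource planning", "customer relationship management"), Domain.IT),
)


def levels_in(domain: Domain) -> list[int]:
    """Return the level numbers assigned to the given domain, lowest first."""
    return [level.number for level in SCADA_LEVELS if level.domain is domain]


if __name__ == "__main__":
    # Print one line per level with its assumed OT/IT label.
    for level in SCADA_LEVELS:
        print(f"Level {level.number}: {level.name} -> {level.domain.value}")
```

Under these assumptions, `levels_in(Domain.OT)` returns `[0, 1, 2]`. Keeping Level 3 as its own category is a design choice: where the OT/IT line is drawn is itself something practitioners negotiate rather than a fixed property of the architecture.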

Although digitalization is widely promoted across industry and policy (Energy Systems Catapult 2022; Ofgem 2021a), it is no secret that preserving the core functionality of the grid remains a priority for legacy maintainers: “Critical sectors like nuclear, for example, are extremely slow. As I say, the focus is very much on safety” (P31). Legacy maintainers do not anticipate that all aging infrastructure will be replaced, at least not for a while. However, they do expect that any innovations will be introduced on their terms rather than guided by the priorities of software innovators: “some of the old systems don’t go to modern, you know, they don’t easily incorporate AI. A lot of it is on paper” (P11). This contestation ought to be read productively: it is an attempt to maintain infrastructures while also advancing innovation, rather than to reject innovation completely.

In the second half of this article, I argue that through these practices of productive contestation, the epistemic cultures of software innovators and legacy maintainers are converging through the development of a shared set of cybersecurity practices, vocabularies, and opportunities for negotiation. By reaching an alignment in their understanding of cybersecurity, digitalization becomes more acceptable in the context of energy infrastructures.

A Clash of Epistemic Cultures?

The complexity of the energy digitalization challenge cannot be overstated. The source of difficulty is inherently sociotechnical, as it lies in the contrasting epistemic cultures guiding legacy maintainers and software innovators. In fact, participants themselves pleaded for an improved cultural analysis of their field:

P07: That is a word I have written down (laughter). That is absolutely the word I have written down. It is the culture piece which is really critical.

P06: We are embarking on a culture change program, and it is in its early days, but a lot of things we are talking about is iterating and doing small experiments, failing fast, learning, and then going onto the next iteration.

Broadly, this research found that the differing cultures of testing and errors across legacy maintainers and software innovators lay out the possible conditions for digitalization as well as their limits. Having realized this themselves, practitioners bridged the contradictory aspects of these cultures by realigning toward a new shared goal—robust cybersecurity as an enabler of safe innovation—and working toward tailored solutions to that end. In doing so, their common goal of cybersecurity enabled the translation of practices and development of a shared vocabulary.

The initial rift between these two communities broadly follows the OT/IT distinction described above. 7 In the words of one of the participants: “The sector has two sides: The OT is operating the grid, and their major need is availability and safety. The IT is the business side, and they want to improve pricing, customer service, etc. But the OT only cares about systems working without disruptions” (P23). During my fieldwork, I sought out events and communities which would help me to draw (and challenge!) the contours around the relevant communities. First, among the groups open to legacy maintainers, there is the United Kingdom's National Cyber Security Centre-led Industrial Control Systems Community of Interest, gathering some 350 OT experts across companies like National Gas and National Grid, consultancies, and research organizations. Their core focus is releasing guidance on the safety and security of mission-critical systems. Another similar group, the Energy Emergencies Executive Committee Cyber, gathered “twenty-five energy companies to benchmark their current security posture and communicate as the whole sector collectively” (P41). On the other end of the spectrum, the software innovation ecosystem orbits around government-funded incubators and competitions. For example, Energy Systems Catapult provides startup support and policy advice, while Ofgem's Strategic Innovation Fund (SIF) is a government-funded innovation competition with the explicit goal of funding ambitious projects and firms that can drive the digitalization of the energy industry. Within this ecosystem, energy operators maintaining legacy systems are not only expected to engage with Energy Systems Catapult for guidance on best practice but are also required to collaborate with tech startups to receive innovation funding. And so, communities known to belong to different epistemic cultures are now starting to collaborate on the energy digitalization agenda.
The following sections analyze how these distinctive groups have traditionally demarcated their boundaries around two concepts fundamental to software innovation and legacy maintenance: testing and errors.

Testing

For decades, legacy maintainers working in the energy sector have negotiated regimes of testing, validation, and verification to achieve grid reliability (Hughes 1993). Reliability, however, should not be taken for granted but rather appreciated as the product of the ongoing labor of maintenance. Testing in mission-critical fields of engineering highlights the need for ordering, planning, and building trust in the day-to-day operations of the grid. Given the extreme complexity of critical infrastructures, accidents can never be fully “designed out” (Perrow 1999). Hence, a rigorous regime of testing (e.g., electrical safety testing for ground resistance or earth leakage, formal verification of the most critical software and hardware components) has traditionally been the favored pathway to give regulators and users the confidence to interact with the grid. Meanwhile, the prospect of introducing digital innovations is met with caution and anxiety about new risks:

At the moment it's at the trial stage. Before they [energy companies maintaining legacy systems] migrate data to the cloud, they need to think about all the implications. I’d be more worried that it creates a bigger threat landscape than it needs to, because you open the door to more malicious actors. Also, what if that cloud system goes down? What is the redundancy and backup for that? (P14)

The meaning of testing changes when it is applied within the software innovation culture. Here, testing is more akin to experimentation and demonstration: a safe space to try out new ideas and convince investors that the product is worth their capital. Innovators mobilize the promissory capabilities of software and use test beds as devices for proving the feasibility of whatever a potential investor wants to see: “[with test beds] you have to prove the work that you’re doing, to then secure further investment and to get another extension on funding. It's also about accessing licenses to use novel equipment.”

Most importantly, testing is also characterized by the differing temporalities of legacy maintainers and software innovators. In the legacy maintenance culture, testing is carried out in a routinized way to certify equipment before it is put into use. There is an emphasis on getting things right the first time, in case they cannot easily be fixed later. For software innovators, prototyping, testing, acting on feedback, regulating, and using are envisaged as a closely meshed, iterative cycle without clear boundaries between stages. Innovators therefore hope that they will not have to wait for accreditation of their products, and that regulators will set high-level “agile” principles rather than detailed product standards:

It will take a lot of iterations, but what we are doing with the National Energy System Map is a proof of concept, we have got something that is live, we have taken account of all the security regulator's concerns, and we have addressed them. What we need to do now is gather a lot of feedback and then move into our next iteration as quickly as possible, doing it reasonably cheaply and making sure we have a bounded, limited scope that we have set out to achieve so we can achieve success, but not trying to boil the ocean. (P06)

Software innovators hope to run their tests in an “agile” way, involving multiple rounds of iterative testing, prototyping, and receiving feedback. There is an expectation of working with imperfect products, as any emerging faults and errors can be captured by the stakeholders’ feedback in a timely manner. This reconceptualization of testing encourages us to question the social acceptability of experimentation regimes we are increasingly faced with in the context of digitalization of infrastructures. What kinds of tests can we be subjected to, what can go wrong and what cannot? What kinds of infrastructures are too critical to be experimented on?

Errors

Maintainers of legacy energy infrastructures are aware that a seemingly negligible error can set off a chain of events ultimately leading to fatal accidents. Errors are not taken lightly, and the industry is characterized by risk aversion. Accidents are followed by rigorous, forensic investigations to determine the root cause and culpability (Herkert, Borenstein, and Miller 2020). This means that any proposal to upgrade systems or scale up innovations is met with caution:

A lot of it is built on Operational Technology that has been tried and proved and has been around for a long time. The biggest challenge in that environment is as you digitalize it, is understanding what impact does that have on resilience? Because you are marrying old technology where the parameters are very well known by the operators [i.e., legacy maintainers working for energy operator companies] with new capability, which might interfere in the way that that operates. (P06)

In contrast, software innovators reconfigure errors as productive practices leading to new discoveries (Thylstrup et al. 2021). Here, “error is a minor part of the total state of the system, a discrete deviation that will not necessarily lead to complete system failure if the system is resilient” (Thylstrup et al. 2021, 193). Moreover, building enterprise software is far more diffuse and dematerialized than operating wind turbines or power plants. In the software innovation world, the line of accountability between the developer writing an “erroneous” line of code (due to a bug, a security vulnerability, or a bias) and the end user experiencing harm is blurred and poorly defined by law, with UK approaches to AI regulation still at the design stage (Ofgem 2024). As a result, software innovators focus on scaling first and only then anticipate risks:

[An energy operator] wanted to build a new substation instead of fixing all the security problems they had in their old one. When I asked them about security, they said, “We’re just going to buy a load of vendor products.” Whether the products work or not, they can’t test until they’ve built the new substation. (P14)

This is a crucial remark, as it suggests that, at one level, the differences between the ways of working in legacy maintenance and software innovation are insurmountable. A mode of work in which innovation is scaled ahead of testing and risk appraisal is antithetical to best practice in the UK power industry, which prides itself on the safe and reliable provision of electricity above all (National Grid 2025). However, we cannot afford to wait passively and understand the risks of digitalization only in hindsight, because by then complex energy infrastructures will be in place and locked in, together with their potentially harmful dependencies. To prevent this, anticipatory discourses of safety, harm, and “high-risk” systems dominate the policy landscape. For example, the EU's AI Act (European Parliament 2023) and the UK's AI Strategy (DSIT 2023) indicate that a lengthy assurance process will be required for compliance. Yet marketing brochures still urge energy practitioners to harness the power of innovation to get ahead of the competition (McKinsey 2022). Another site of contestation thus emerges: errors are simultaneously unacceptable and expected, depending on whom you speak to. “A few months ago, the digitalization team in an energy company accidentally released some sensitive data as part of a data sharing initiative. To them, it was part of a normal learning curve. But the National Cyber Security Centre took the matter much more seriously and told them off quite badly” (P42). This quotation illustrates that software innovators, typically embedded in digitalization teams, are happy to start new initiatives even if it means getting things wrong. However, their judgement of what counts as “sensitive data” does not always agree with the traditional best practice of cybersecurity maintenance advocated by the government agency.

Practices of Infrastructural Alignment

So far, I have analyzed the troubling dynamics between innovation and maintenance in the context of energy infrastructures. The convergence of software innovation in IT and legacy maintenance in OT cannot be taken for granted, given the diverging cultures, practices, and temporalities present in each community. To finish our story here, however, would be a disservice to the participants I have engaged with since 2021. After all, digitalization continues to feature high on the energy industry agenda. Over the course of the inquiry, cybersecurity (whether understood as a community, a set of requirements, or a set of tools) emerged as a shared goal for both legacy maintainers and software innovators. But how exactly did cybersecurity facilitate alignment work? I argue that the success of alignment work in cybersecurity relies on redirecting epistemic tensions toward a common, overarching goal of advancing safe and secure digitalization. While the contestations are far from resolved, cybersecurity has made digitalization more acceptable to both parties.

The following section discusses how practitioners reconciled their cultural clashes in a common approach to digitalization through the shared goal of security. I illustrate this with the examples of penetration testing, security test beds, and root cause analysis. Cybersecurity, despite being concerned with protecting, as well as breaking into, information networks, has much in common with the epistemic culture of legacy maintenance. For instance, both share practices of verification, access management, and anomaly or error detection. These similarities helped to de-risk digitalization in the eyes of legacy maintainers. As a result, the digitalization of energy infrastructures is not only about introducing new priorities but also about maintaining old goals, such as ensuring system stability. The following examples of alignment work show that the goals of innovation and maintenance are not always opposed.

Penetration Testing and Test Beds as Reinventing Assurance

Legacy maintainers consider reliability a primary goal. But how can one build software that is free of dangerous and costly vulnerabilities if software is a product of human (and hence error-prone) labor? For decades, formal verification has been proposed as a route to error-free software. The methodology uses mathematical methods to prove the correctness of the algorithms underlying a system with respect to a formal specification or property (MacKenzie and Pottinger 1997). Although formal verification has been applied to critical systems, from NASA (2022) to Amazon Web Services (2015), its future is questioned because of the associated costs, the depth of expertise required to write proofs, and the increasing complexity of infrastructures (Dodds 2022).

Security practitioners are therefore exploring other, less mathematically certain avenues to assure software. One of the proposed techniques is penetration testing (pentesting), or a “simulated attempt to breach some or all of that system's security, using the same tools and techniques as an adversary might” (NCSC 2017). Pentesting is an example of what Coleman (2012) describes as “hacker culture,” characterized by a deep interest in tool building, tinkering, and autonomy: “Oh, it's one of those more fun things, when I’m told ‘hey here's a control system from one of our vendors, let's break it!’” (P22).

However, pentesting is challenging in legacy infrastructure environments because “OT systems have grown organically, no one really understands what's on them” (P32). The stakes are high because a poorly managed penetration test can break the whole system, not just simulate a cybersecurity attack. To conduct testing without the risk of breaking critical systems, practitioners created another layer of simulation, the security test bed, as shown in Figure 2: an environment comprising both software and hardware components that represents a simplified critical infrastructure setting (e.g., a power plant), in which practitioners and scientists can perform a range of security tests, from vulnerability scanning and intrusion detection to the usability of security interfaces (Gardiner et al. 2019).

Figure 2. Electric power and intelligent control security test bed at the Singapore University of Technology and Design. It is designed to enable cybersecurity researchers to conduct experiments assessing the effectiveness of novel cyber defense mechanisms in the context of generation, transmission, microgrids, and smart homes (Singapore University of Technology and Design 2023).

Test beds and pentesting are gaining momentum among OT professionals and academics interested in maintaining legacy systems, with facilities developed by Queen's University Belfast, the University of Bristol, and the University of Glasgow (RITICS 2023). Additionally, the NCSC Industrial Control Systems Community of Interest released relevant guidance following a series of working groups run in partnership between public and private organizations (NCSC 2023). Similarly, the evaluation report of the Ofgem SIF contains numerous mentions of security test beds, demonstrating how the concept has captured the imagination of both legacy maintainers and software innovators (Ofgem 2021b). However, security test beds and pentesting are by no means commonly accepted or refined solutions. With concerns about the accuracy of simulations and the availability of sufficiently random training data, the debate about “best practice” in infrastructure security continues (Michalec, Milyaeva, and Rashid 2022; Spencer 2022). In the meantime, legacy maintainers accept that testing slows down the procurement of equipment, despite resistance from software innovators: “Our procurement guys always want to make sure that everything is tested to a limit that our chiefs in security are happy with. It can take a while; some vendors aren’t happy with the more rigorous testing we put them through” (P22). In this case, energy digitalization becomes an act of resistance to narratives of fast-paced innovation. The need to satisfy security requirements buys time to reach agreement on accepted practices.

Redefining Errors Through Root Cause Analysis

Across my conversations with energy infrastructure workers, I encountered considerable aversion to the understanding of errors shared by many software innovators: “In IT, there is a lot of error tolerance because of the speed of the market. So, I worry critical infrastructure security will be driven by IT too much and IT have a very, very high-risk tolerance” (P21). Another issue pertains to the inherent epistemic difficulty of classifying errors. When faced solely with a harmful outcome (e.g., a blackout or loss of data), it is often challenging to ascertain the root cause of an error: anything from a malicious attack to operator fatigue or extreme weather could be responsible. Harmonizing the reporting of safety accidents and security incidents has been proposed in the NIS Regulations (NCSC 2018), alongside calls to deepen industry expertise at the intersection of these domains: “I think what is underappreciated is actually a loss of service, not to a cyberattack, but to misconfiguration of a system that you are reliant on” (P07). In recognizing the multiple causes of errors and incidents, practitioners link their expertise back to the practice of assurance, advocating for improved root cause analysis during working group meetings on energy security standards convened by the government and manufacturers of smart energy products. For example, security assurance obtained through testing would make it possible to distinguish security incidents from accidental errors affecting the functionality of the system: “Usually when you do user testing, you can quickly spot functionality errors, whereas with security you don’t know it's not good until it's really bad. So, if you require assurance, you can shorten the feedback loop and act on errors quicker” (P15).

It is important to note that what concerns experts does not always translate to the public realm. With data on security breaches and threat actors in infrastructure settings largely absent from mainstream media discourse, lay members of the public are exposed to other kinds of errors, which discourage them from adopting these technologies with trust: “when I see people worrying about those [smart energy] devices, they are rarely concerned about the data security. They’re more annoyed about accidental error like a massive server outage or API access turned off and suddenly thousands of people have a bricked device” (P25). Here, errors worry practitioners not only because of their potential to set off catastrophic events. The second argument for treating errors as serious matters of concern is the need for public engagement with innovations:

The electric vehicles charging system [of my supplier] went offline for about a week and if you were reliant on the app, you couldn’t charge the thing. So, you suddenly go into a place where people aren’t confident that the system is going to be working when it should be working. Okay, so that's a public EV network, a national network, but if it affected the whole of the national grid? (P07)

This is crucial, as citizens’ mistrust of, or disengagement from, energy innovations could delay the government's sustainability strategies, which currently rely on the enthusiastic adoption of smart energy devices and infrastructures. The recent delays in the United Kingdom's smart meter installation program, for example, have demonstrated that politicians underestimated citizens’ potential resistance to technology (The Times 2023). Ultimately, whether the grid faces an adversarial attack or extreme weather, mundane errors will remain a feature of energy infrastructure. As in the previous cases, the following questions remain open: What can go wrong and what cannot? Which kinds of errors, and how many, are unacceptable? Who deals with errors unrelated to cybersecurity? Which infrastructures are too critical to afford a higher error tolerance?

Conclusion

Energy, despite appearing as a singular service upon reaching our homes, is made up of myriad technologies, companies, and regulations. How can we make sense of this complexity and multiplicity when studying change and maintenance in infrastructures? This article has argued that the digitalization of the energy industry is a meeting point of two distinct epistemic cultures: legacy maintainers and software innovators. As shown through the examples of “testing” and “errors,” these two cultures at first appear incommensurable. The initial hype around the digitalization of the energy industry was “troubled” by legacy maintainers, who expressed resistance to narratives of fast-paced innovation. However, to make innovation acceptable to the legacy maintenance community, cybersecurity practices were introduced and framed as a necessary means of bridging the two epistemic cultures. This suggests that successful innovation in energy infrastructure needs to be made acceptable to legacy maintainers, who attend to the safety, reliability, and, more recently, cybersecurity of the grid. Power grids can neither be operated nor analyzed in the same way as IT products.

This article opens three main avenues for future research. First, I call for longitudinal observation of the epistemic cultures found in energy infrastructures. Although the existence of alignment work via shared cybersecurity “best practices” does not necessarily imply that distinct cultures will converge, we cannot assume that the cultures of legacy maintainers and software innovators will remain unchanged. Second, it is vital to consider the accountability of practitioners writing and maintaining code in energy infrastructures. This is especially important for energy systems falling outside the category of “mission-critical” in regulatory frameworks. Third, and following from that, future research should explore the social shaping of the notion of “criticality” over time and space. Such studies will shed light on what we value in infrastructures and how these values could be called into question in the context of the climate crisis. Ultimately, STS should find opportunities for productive engagement with engineers, developers, and policymakers. In light of the climate crisis, we only have one chance to get energy innovation right.

Footnotes

Acknowledgments

Many thanks to the research participants for sharing their views for this research project. I would also like to express gratitude to Energy Systems Catapult for assistance with event organizing and participant recruitment. Finally, I am grateful to anonymous reviewers and my critical friends (Sam Young, Salman Khan, and Ben Marshall) for engaging with the early drafts of this work.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Engineering and Physical Sciences Research Council/PETRAS.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Notes

Author Biography

Appendix 1—Participants

| Participant | Role |
|---|---|
| P01 | Senior researcher in cyber threats |
| P02 | Senior manager in energy network operator |
| P03 | Principal cyber security consultant in an international IT firm |
| P04 | Head of cyber and energy team in civil service |
| P05 | Senior cybersecurity practitioner in the civil service (energy team) |
| P06 | Head of a trade body for UK energy companies working on innovation |
| P07 | Security policy expert in a consultancy and government |
| P08 | Senior security practitioner at energy distribution company |
| P09 | Senior security practitioner at energy distribution company |
| P10 | Cyber psychologist in a major international engineering and energy consultancy |
| P11 | Head of risk in a major international energy generation company |
| P12 | Data consultant at energy startup accelerator |
| P13 | Cybersecurity consultant in a major international engineering consultancy |
| P14 | AI and security researcher working at energy regulatory body |
| P15 | Engineer working on technical energy and data standards in the civil service |
| P16 | Founder of smart energy security startup |
| P17 | Head of engineering at smart energy startup |
| P18 | Senior data consultant at energy startup accelerator |
| P19 | Data consultant at energy startup accelerator |
| P20 | Data consultant at energy startup accelerator |
| P21 | Founder of Internet of Things (IoT) security startup |
| P22 | Operational Technology (OT) security practitioner at energy transmission company |
| P23 | Research lead at a major international IoT security consultancy |
| P24 | Sociotechnical cybersecurity researcher in the civil service |
| P25 | IoT and data scientist at energy supply company |
| P26 | Lead OT security practitioner at energy regulatory body |
| P27 | Digitalization practitioner at energy regulatory body |
| P28 | Digitalization practitioner at energy regulatory body |
| P29 | Security compliance lead at energy regulatory body |
| P30 | Sociotechnical security practitioner in the civil service |
| P31 | OT security consultant |
| P32 | OT practitioner in civil nuclear industry |
| P33 | Energy markets regulatory body |
| P34 | Partnership liaison in cross-sectoral digitalization initiative |
| P35 | Social scientist of digital innovations in infrastructure context |
| P36 | Professor of cybersecurity and IoT |
| P37 | Lecturer in digital humanities |
| P38 | Director of communications company |
| P39 | Consultant for infrastructure standard organization with specialism in horizon scanning |
| P40 | Data scientist for energy supplier |
| P41 | Energy industry working group member |
| P42 | Digitalization practitioner for energy startup incubator |

Appendix 2—Digitalization Initiatives and Secondary Data

| Number | Organization | Title | Year |
|---|---|---|---|
| 1 | BEIS | Digitalizing our energy system for net zero: Strategy and action plan | 2021 |
| 2 | BEIS/Ofgem | Transitioning to a net zero energy system: Smart systems and flexibility plan | 2021 |
| 3 | BEIS | Delivering a smart and secure electricity system: the interoperability and cybersecurity of Energy smart appliances and remote load control. Closed consultation | 2022 |
| 4 | Energy Networks Association | Data and digitalization steering group | 2021 |
| 5 | Energy Systems Catapult | Modernizing Energy Data Applications: | 2022 |
| 6 | NCSC | The Network and Information | 2018 |
| 7 | NCSC | Technology Assurance Whitepaper | 2021 |
| 8 | NCSC | Cyber Assessment Framework changelog | 2022 |
| 9 | Ofgem | Data Best Practice Guidance | 2021 |
| 10 | UK Parliament | Energy Sector Digitalization. POST Note | 2021 |
| 11 | Department for Business and Trade | Case study. Energy system: National Digital Twin Programme | 2023 |
| 12 | Innovate UK | Ofgem Strategic Innovation Fund (SIF) Annual Report 2023 | 2023 |
| 13 | NCSC ICS COI | Guidance: Visibility for Industrial Control System/Operational Technology Environment Asset Management | 2023 |
| 14 | Ofgem | Strategic Innovation Fund (SIF) 2021 Round 1 Innovation Challenges—Discovery Phase. Expert Assessors’ Recommendations Report. | 2021 |

Appendix 3—Engagement Activities

| Item | Date | Link |
|---|---|---|
| Publication of Infographics cocreated with Energy Systems Catapult | March 2022 | https://www.bristol.ac.uk/bristol-digital-futures-institute/research/seedcorn-funding/energy-systems/security-and-data-sharing-platforms-get-on-the-right-track/ |
| Policy Roundtable. Online Webinar organized in partnership with Energy Systems Catapult | April 2022 | N/A |
| “How to Talk about Cybersecurity of Emerging Technologies.” By Ola Michalec | April 2022 | https://petras-iot.org/wp-content/uploads/2022/03/How-to-talk-about-cybersecurity-of-emerging-technologies.pdf |
| Consultation response: “Delivering a smart and secure electricity system: the interoperability and cyber security of energy smart appliances and remote load control.” By Ola Michalec | July 2022 | https://energyfutures.co.uk/assets/documents/Consultation_response_form-ESA-Michalec270922.pdf |
| Publication of comics and posters for public engagement: | May 2023 | https://petras.cs.ucl.ac.uk/update/electric-feels/ |
| Researchers and artists Q&A at a public event, Electric Feels project launch at café Kino, Bristol | June 2023 | N/A |
| Exhibitions at museums: V&A Digital Design Showcase (London) and Futures Festival (Bristol) | Two events in September 2023 | https://www.vam.ac.uk/event/5Oz7Jmvd6Q/digital-design-weekend-2023 https://2023.futuresnight.co.uk/ |