Abstract

This article explores how an innovative animal welfare methodology (Qualitative Behavior Assessment) negotiates subjectivism and objectivism in its distinctive epistemology, as it strives to produce a certain kind of laboratory mouse—a complex, social subject. Through an ethnographic study of the development of a Qualitative Behavior Assessment (QBA) tool for laboratory mouse welfare, I show how QBA foregrounds the animal’s lived emotional experience by using qualitative language to assess their welfare, while also relying on statistical methods of validation. Drawing on Mol et al.’s understanding of care as something that parses, handles, and balances diverse “goods,” I argue that QBA practitioners’ care for the data must balance competing priorities and values. I take particular interest in what makes a “good” assessor as they transform between subject and object. When two observers are found to be outliers, with their divergent judgments marring the successful statistical validation of the QBA mouse tool, the situated nature of knowledge is brought to the fore. I argue that turning to the embodied practice of attention, as distinct from care, helps us understand why, and raises questions about the epistemic culture of conventional animal welfare science and the extent to which the human observer risks reification within QBA’s formal methodological practice.

Our encounters and relations with nonhuman animal others have the potential to induce destabilizing and transformative reflections upon our own ‘nature’ as humans. (Nimmo 2010, 6)

Introduction

In a cramped meeting room at Moor University, three animal welfare scientists bow their heads with puzzled frowns toward a computer screen. The mood so far has been buoyant in this presentation of results from a Qualitative Behavior Assessment validation exercise. Experiments with student observers have revealed a high level of statistical agreement over the welfare of pairs of laboratory mice. Moreover, agreement has been achieved through formalizing the kinds of emotionally descriptive judgments—this mouse as “playful,” that one as “anxious”—that are usually forcefully discouraged in scientific publication as too subjective and “anthropomorphic.” It is a potentially groundbreaking set of findings. The fly in the ointment is the test of statistical significance, which is notably low for two of the observers. In short, Observer Number 8 did not score the mice consistently over two sessions, while Observer Number 11 did not agree with any of the other assessors about the overall welfare of the mice, spoiling the final results. How could this be? The speculated psychological state of these observers comes unexpectedly to the fore, as the team debate whether these “outliers” and their unruly judgments can justifiably be removed from their data. (Field Note 2017)

This article takes the controversial case of Outliers 8 and 11 as a keystone for the tensions between objectivist and subjectivist approaches in a scientific animal welfare methodology known as Qualitative Behavior Assessment (QBA). Animal welfare science, often developed for animals in industrial settings, researches the likely impacts of different environments and interventions on animals’ physical and mental well-being and develops validated tools to assess them. The growth of laboratory animal welfare science is of increased interest to Science and Technology Studies (STS) because of its alignment with the turn to care and situated ethics in scientific practice (Puig de la Bellacasa 2011; Haraway 2008), as well as for the oft-cited link between good animal welfare and good science (Druglitrø 2018).

Taking a long time to be accepted as a respectable discipline due to long-standing skepticism over the empirical availability of animal consciousness, animal welfare was gradually granted scientific status on the basis of a strict adherence to objectivist methods. Subjective experiences of welfare must usually be understood as cautiously inferred from measurable, physiological correlates, rather than directly empirically observed—an approach that has been criticized by social scientists for its reductiveness and reification of animals (Lestel 2011; Birke 2014, 75). QBA, however, is different. It has a distinctive epistemological architecture, combining a methodological recognition of animals as whole, feeling subjects with a series of quantitative, statistical checks on those interpretations. If animal welfare science, as a hybrid science that must continually suppress emerging intersubjectivities (Nimmo 2012), already presents a challenge to the modern, dualist separation of subject and object in scientific ontologies, then QBA, as I will show, presents a greater challenge still, not just to the ontological enactment of the animal but also to the role of the human observer.

QBA was developed by Professor of Animal Science Françoise Wemelsfelder in the early 2000s for use with farmed animals, but has gained popularity over the last decade in numerous settings, from working equines, to zoos, to sanctuaries. Initially highly contested, and still treated with skepticism in some circles, it has now won awards (SRUC, n.d.), been endorsed by the EU Welfare Quality Protocol, and is used across 500 Waitrose & Partners farms. QBA methodology is underpinned by Wemelsfelder’s belief that a sole dependence on objectivist welfare indicators (such as coat condition or ear position) reproduces the very notion of animals as mindless, mechanistic systems that welfare science should ultimately challenge. Instead of asking assessors to use species-specific ethograms that give a “textbook” reading of separate, isolated actions, QBA asks assessors to use the kind of emotionally resonant language that is typically discouraged: words like “confident” or “depressed.” However, QBA also employs statistics, both to help refine the choice of descriptive terms and to evaluate the reliability of such judgments. Wemelsfelder (1997, 84) defends her approach by arguing that qualitative human judgments, worked with constructively instead of being erased, carry epistemological advantages, being able to integrate many different features of an animal’s behavior in context.

QBA is expanding its presence in its own field and is increasingly referenced in the humanities as well (Birke and Hockenhull 2012; Aatola 2013; Birke 2014; Buller 2012). This is because of QBA’s radical rehabilitation of animal subjectivity within science in terms of Wemelsfelder’s (2012) philosophically erudite defense of her methodology and QBA’s notable overlap with qualitative sensibilities in the social sciences. For example, Greenhough and Roe (2011, 57) suggest that its “progressive” animal welfare protocol might offer a way of engaging with laboratory animals that promotes care, cooperation, and consent. Charles et al. (2018) used QBA to incorporate dog experiences into their sociological study of police-dog training, while historian Erica Fudge (2018) argues that it echoes early modern ways of relating to animals before the mechanistic dogma of Enlightenment thinkers gained traction. Yet QBA is not premodern but deliberately located in the natural sciences. While qualitatively led, it is still a hybrid methodology, whose dependence on objectivist epistemologies is often overlooked by humanities scholars. Although QBA is used as a “progressive” touchstone, there have been no social science investigations of its methodology beyond this author’s own contribution (Tomlinson 2019).

Understanding how QBA negotiates its sometimes uneasy intersection between conventional science and tacit, interpretivist knowledge would, therefore, give a richer account of QBA’s scientific practice. Moreover, since methodologies always enact ontologies (Mol 1999), tracing how diverse epistemologies are held in careful balance, with different degrees of attenuation before subject transforms into object and vice versa, helps us understand the possibilities and challenges that a more “response-able” laboratory animal welfare science (Haraway 2008), more attuned to the intersubjectivity of the research encounter, could face. Laboratory animal welfare science operates in more highly controlled environments than, say, farm animal welfare. It places particularly stringent pressures on objectivist measures of validity and reliability, since it is rare for new welfare interventions to be accepted due to the risk of losing standardization with previous or international studies. Understanding how a methodology like QBA intersects with this environment would also, therefore, provide a particularly sharp insight into the epistemological politics of laboratory animal science. Laboratory mice are intensely objectified in this context and bred in vast numbers to provide tissues, organs, and dynamic models of human biological responses. QBA’s goal is to retransform the mouse into an ethical subject, a lively, sentient being that not only processes its treatment but experiences it. This has important moral implications but also, arguably, epistemological ones, as failure to incorporate the sentient experience into an understanding of behavior can distort findings (Wemelsfelder 2012) and possibly even inhibit a proper understanding of human clinical applications (Lahvis 2017). Laboratory mice, therefore, may be particularly interesting and generative subjects for QBA, and QBA for STS.

To advance these questions, I take up Latour’s (1987, 4) classic edict that entering the laboratory at a point of controversy helps unpack the ontological and epistemological “black boxes” that lie behind the construction of scientific facts. The debate over whether to exclude Observer Numbers 8 and 11 becomes a starting point for my own “upstream” pursuit of how various epistemological and ontological commitments come into play, as a new QBA tool for laboratory mice is tested for validity and reliability. At stake is not only the success of a particular methodology but also a fuller epistemological grasp of an experimental model, the potential for a better account of the reality of animals’ lives under conditions of industrial experimentation, and even a more radical mouse ontology, capable of resisting intense objectification in laboratory environments.

I begin by foregrounding Mol, Moser, and Pols’s (2010) understanding of “care” as something that parses, handles, and balances diverse goods or values, so-conceived. In understanding how Wemelsfelder and team care for their data in caring for animals, I take particular interest in what makes a “good” assessor as they transform between subject and object in this hybrid method. I then argue that a turn to the embodied practice of attention, as related to but distinct from care, helps us understand how the “epistemic culture” (Knorr Cetina 1999) of animal welfare science can distort theoretical commitments to the liveliness of subjects. I suggest that without wider acceptance of, and training in, the situated nature of knowledge (Haraway 1988), the epistemic culture into which QBA was introduced encouraged attentional blind spots in the construction of the experiment, ironically contributing to the outliers’ presence and thus collapsing the subjective presence of the mice. I conclude by suggesting that a more formalized integration of attention into QBA, and indeed into all animal welfare practices, could contribute to the robustness of the findings. Below, I situate QBA within the existing turn to lab animal “care.”

Care and Laboratory Animal Welfare

Studies of laboratory animal welfare and its situated ethical and epistemological commitments have been growing in recent years, including in the pages of this journal (Greenhough and Roe 2018; Ashall and Hobson-West 2018; Druglitrø 2018). This is fostered, in part, by a timely convergence in the theoretical turn to “matters of care” (Puig de la Bellacasa 2011), and the animal research sector’s increasingly formalized commitment to fostering so-called cultures of care in animal research—that is, humane and respectful attitudes and practices toward animals within research facilities (Gorman and Davies 2020). Since laboratory animals are both ethical subjects of legal regulation and also scientific tools of the laboratory whose welfare is, to some extent, linked to data quality (Kirk 2014), this offers a number of possible perspectives on care.

Crucially, care is understood, not as something adjunct to the “real” business of science but something that shapes perception and experience with world-making effects: it is “the relational practices through which some things rather than others come into existence” (Latimer and Miele 2013, 19). QBA offers a way of doing care differently and thus of producing different kinds of mice—complex experiencing agents instead of mechanical tissue-bearers. But for this to change in practice, data must first be cared for through epistemological and methodological negotiation, or “tinkering” (Mol, Moser, and Pols 2010). Mol, Moser, and Pols’s (2010) understanding of care as situated attunement to the costs, benefits, and values at stake in its practice, is particularly useful here. Care, they say, parses, handles, and balances different “goods” and “bads” with varying levels of tension or attenuation. This involves negotiations over that which is to be fostered and that which is to be avoided, amplifying certain realities and denying others: “In care practices, after all, it is taken as inevitable that different ‘goods,’ reflecting not only different values but also involving different ways of ordering reality, have to be dealt with together…care implies a negotiation about how different goods might coexist in a given, specific, local practice” (Mol, Moser, and Pols 2010, 11). Sometimes, this negotiation involves explicit discussion. In other cases, this balancing of outcomes may be more intuitive and accomplished through situated practice rather than prior judgments based on principle. “Seeking a compromise between different ‘goods’ does not just depend on talk, but can also be a matter of practical tinkering, of attentive experimentation…. The good is not something to pass a judgement on, in general terms and from the outside, but something to do, in practice, as care goes on” (Mol, Moser, and Pols 2010, 13).

Scholars have often written about the attentive tinkering of animal carers in the laboratory, or explored, for example, the epistemological tensions of animal care that enrolls animals as subjects for the practice of research while treating them as objects for the purposes of scientific publication (Rees 2017; Lynch 1988; Birke 1994). Yet few studies have explored the particular tensions that animal welfare science, as a process of simultaneous care, attention, and objectification, gives rise to. There has been a growing interest in the attentive labor and intuitive knowledge of animal technicians (care staff) (Greenhough and Roe 2018, 2019; Friese 2019; Holmberg 2011), and some work on the ethical dilemmas of laboratory veterinarian practice (Ashall and Hobson-West 2018), which yields important insights. However, given that as a scientific practice, animal welfare research must adhere more strongly to objectivist principles to produce authoritative accounts of animal subjectivities, it carries particularly interesting conflicts. Although subject to substantial political constraints, animal welfare research also has the most power to affect laboratory animal welfare, and on a global scale. Yet it has received little attention, or has been conceptually conflated with the work of technicians and veterinarians, neither of whom has the same kind of epistemological expertise. This is important because the ontological determination of mouse-ness, the ethical legitimation of certain animal procedures, and the distribution of human responsibility often depend on how such care is achieved.

Understanding how a “perspective-based” (Wemelsfelder 2012, 225) methodology such as QBA is received into a highly objectivist epistemic culture, and how these often conflicting ontological and epistemological “goods” are negotiated and balanced, can illuminate not only the potential and challenges of QBA as a method but also the broader epistemological and ontological politics of the hybrid and unstable field of animal welfare science.

Methods

Fieldwork for this study was conducted at an anonymized animal research facility in the United Kingdom, referred to here as “Moor University,” between 2017 and 2018. Animal welfare science PhD student, Maria, assisted by her supervisor Howard, a laboratory mouse welfare scientist, was developing a suite of laboratory mouse welfare indicators that they hoped could replace the typically ad-hoc arrangements across facilities. QBA was to form the sole “psychological” welfare assessment indicator. However, a fixed list of QBA terms had not yet been developed and validated for mice, so, with supervision from Françoise Wemelsfelder, Maria and Howard were developing such a tool, enlisting various colleagues for help. Both scientists held a strong interest in QBA, with Maria appreciating, for example, the direct incorporation of animal emotions into welfare assessment. Her supervisor Howard, whose views appear below, had been a student of Wemelsfelder’s. He believed that many people were “innately” very good at interpreting animal behavior if allowed to be. He was hopeful that a more intuitive approach might help overcome what he saw as some of the problems with objectivist mouse assessment, such as overreliance on prestandardized indicators or a “tick-box approach” to welfare assessment, which potentially missed welfare problems. He also believed that to some extent, objectivist scores were often partly dependent on an a-priori intuition. In effect, what he wanted was a kind of “hybrid,” comprised of both indices and intuition, something he hoped QBA would harness (Howard, unpublished research interview, 2017).

Fieldwork at Moor University included three interviews with Howard, five interviews with Maria, three interviews with Françoise Wemelsfelder, and one team meeting with all three to discuss some key results. It also included interviews with ten colleagues of Maria and Howard and a focus group with eleven students who had participated in the assessments discussed below. The interviews and focus group each lasted sixty to ninety minutes. I also conducted one week of ethnographic work, participating in the QBA mouse film assessments and in its piloting with live laboratory mice. The wider project had a particular emphasis on sensory methodologies, where ethnographic encounters were worked through a wider range of senses such as smell, sound, and embodied interactions. This meant that in QBA fieldwork, close attention was paid to the type of sensory information exchanged, the dynamics of embodied interaction between human and nonhuman participants, and how participants’ knowledge practices were shaped as a result. It is important to recognize that Wemelsfelder would normally lead a QBA project. However, the findings from this study should be understood in the context of a doctoral student project, primarily carried out by Maria with support from Françoise when required.

All research was approved by the University of Manchester, and participants signed informed consent forms before the research began. Names have been changed, and job titles generalized. It was mutually decided with Françoise Wemelsfelder that, as the sole developer of QBA, she could not feasibly remain anonymous. She has preapproved the sections of her transcripts that I quote directly.

Holding Subject and Object Together

In order to understand the significance of the observer within QBA, one first needs to understand how the role of the observer relates to the ontology of the “whole-animal” subject, and how these subject-object ontologies are squared throughout the QBA methodology. QBA’s development in the 1990s was a response to much of twentieth-century animal science, which was so reluctant to treat animal feelings as empirically ascertainable that even describing a piglet as “in pain” was frowned upon (Wemelsfelder 2016). Animal emotions are now far more openly accepted and discussed, yet some influential welfare scientists still argue that it is not necessary to believe emotions exist to improve animal welfare (Dawkins 2012). Where emotions are acknowledged, they are usually carefully described as theoretically inferred rather than empirically observed, and technical language often replaces ordinary language, such as “prosocial” instead of “empathetic.” Any intersubjective relationships necessary for the experiment, such as “habituation” to the researcher, are usually downplayed or erased from publication.

Wemelsfelder disagrees with this approach. She writes that while all scientific judgments are theory-laden and thus indirect, there is a particular risk of agnostically bracketing animal emotions through inference. Firstly, science’s dualist separation of subject and object makes it difficult to, as it were, put animals back together again after an objectivist conclusion has been reached, especially when their subjectivity is inconvenient for human industries (Wemelsfelder 1997, 76). Secondly, erasing an animal’s experience of welfare “limits or distorts our understanding” of it (Wemelsfelder 2012, 224). “In losing sight of others’ perspectives,” she writes, “we create explanations that lack psychological immediacy, and risk having little relevance to their actual lives” (Wemelsfelder 2012, 233-34). Finally, understanding animals as living, sentient beings creates, according to Wemelsfelder (2007, 21), empathetic sensitivities more capable of questioning poor treatment.

For these reasons, Wemelsfelder wanted to bring the subjectivity of animals back into welfare assessment and, having trained in phenomenology, found philosophical support in Ludwig Wittgenstein’s ([1953] 1974, 178) arguments that subjectivity was not the private, invisible experience of a conceptually separated mind and body but something that exists through embodied expression. For Wemelsfelder, this means that an animal’s experience is, in principle, directly empirically available to the assessor, even if in practice it may be difficult to see or interpret. In other words, there is no inherent ontological dividing line.

What makes this subjectivity much more visible, she argues, is recognizing that an animal’s subjectivity inhabits the whole of its body, possessing an emergent level of organization and agency that is irreducible to its parts (Wemelsfelder 1997, 81). The more typical attempt to measure fragmented features of behavior at random intervals, she says, makes the animal itself disappear. In contrast, using a “whole-animal” methodology (Wemelsfelder 2007, 4) means looking at the whole animal’s body in its context—at the movement of tails and ears, the level of tension in its body and its direction of travel, as well as what might have just happened to it. In this way, she argues, not only is the animal’s subjectivity empirically observable but the risk of misinterpretation is vastly reduced.

In acknowledging the significance of this subjectivist epistemology within animal science, the humanities literature almost never discusses QBA’s relationship to objectivism. However, objectivist validations have been integral to its acceptance into the animal welfare community. For example, the observers’ choice of qualitative terms has been correlated with objectivist, physiological indicators such as temperature, heart rate, or cortisol levels (Wickham et al. 2012). The enrolment of novel statistical approaches adapted from food science (Free Choice Profiling and General Procrustes Analysis) was able to show that different observers consistently agreed on the broad emotional dimensionality of an animal, even when they chose their own terms to describe it (Wemelsfelder et al. 2000). Emotional dimensionality here is expressed either as strings of words (e.g., playful-confident-sociable) or on a box graph (positive/negative mood, high/low energy).

This dimensionality, for Wemelsfelder, is ontologically appropriate, because it reflects subjects’ constantly shifting emotional expressions, rather than reifying them as singular, objectifying “states.” It is one way in which she tries to preserve the subjectivity of the animal within these objectifying practices, combined with other techniques, such as including hyperlinks to video of the animals from graph plot points, so that the animals are “still speaking” (Wemelsfelder, unpublished research interview, 2017).

QBA, therefore, treats animals simultaneously as both unknowable subjects-of-a-life and reified objects-on-a-graph, carefully balancing the benefits of a “perspective-based” approach (the animal’s perspective) with those of an objectivist approach. The latter might be summarized as the belief in a reality external to the assessor’s understanding of it (validity), the ontological significance of repeating events (reliability), and the assumption that a reality is best known by diminishing the significance of any individual’s interpretation (Nagel 1986, 6). In this sense, QBA is a kind of “critical anthropomorphism” (Burghardt 1991), a middle ground between naive or uninformed interpretivism, and an objectivist skepticism. It asserts the value of commonsense interpretations but places critical checks on them in an effort to move closer toward an animal’s lifeworld. These commitments to a “whole-animal” methodology, however, also affect the ontological and epistemological position of the assessor-observer.

The Role of the Observer in QBA

QBA’s whole-animal methodology, with subjectivities understood as inherently visible and available but also dynamic, shifting, and fully comprehensible only at an emergent level of organization, requires an observer that can accomplish this holistic integration. The subject-as-assessor is believed to have a particular epistemological privilege here, through an inherent ability to integrate and contextualize, something that Wemelsfelder (1997, 84) believes should be harnessed: “Human observers, as agents, may well be capable of accessing a level of information which, given its dynamic variability and subtlety, evaporates within object-based methods of measurement.” Wemelsfelder does believe that this ability is socially shaped to some extent, improving, for example, with ethological knowledge and familiarity with the species and/or individual over time. She also believes that mechanistic training in animal behavior can inhibit this skill. But it is fair to say that the inherent capacity of the human-as-subject remained extremely important to her: “The integrated nature makes it easy, you don’t have to measure that the side of the ears and the tail are in position four and five, there’s so much more information than that in the moving animals, and we see it all at once. We have no idea how the brain does it, it just happens. I think that’s what happens. It’s the whole sentient animal. Speaking to us!” (Wemelsfelder, unpublished research interview, 2017). It is qualitative language, she argues, which allows the expression of all these integrated nuances of behavior with psychological immediacy in words like “lethargic” or “friendly.” Again, there are relational consequences: qualitative description of animals “makes visible” their subjectivity and liveliness to the assessors, transporting the “speaking animal,” whose subjectivity is diffused throughout its body language, more or less directly into view.

Some training is involved in this process, at least when professionally delivered by Françoise Wemelsfelder. This is in contexts where QBA assessment tools are being formally developed for use by animal caretakers in new species and new applied contexts, such as farms, zoos, or sanctuaries. Taking anywhere between half an hour and three hours, the QBA training works through the meaning of the terms and allows learners to practice the scoring (cf. Phythian et al. 2013). But there are also, it seems, more enigmatic learnings. Talking of animal stockpeople, Wemelsfelder (unpublished research interview, 2017) says: “The way I introduce it and the way we develop the terms together, all of that brings about a shift in their way of looking at the animal as a sentient being, which of course was already always there…. But it’s seldomly talked about explicitly. Both because they don’t have time for it, and because they’re often told it’s not valid. And suddenly that shifts, and then they get it, and then they see it, and then they realize it’s tacit knowledge, how much they already have. And so it’s more my goal of—waking that up.”

“Waking up” this tacit knowledge involves, for Françoise, a certain amount of training and a collaborative process of term development. But it also involves encouraging what she calls a “subconscious” process of integration. She does this by insisting scorers work quickly, and by ensuring that the visual analog scale is not predivided into its usual numerical categories: “I also think that there is a very good chance that it’s actually a sharper tool quantitatively if you don’t force people into preconceived categories…. And to an extent that is subconscious, so…if that’s what you mean by intuitive…it may to some extent include what people feel or their level of empathy, but in the end, people are not scoring what they feel, they are scoring the animal” (Interview, 2017).

Note here how important it seemed to Françoise that this “subconscious” methodology remains primarily visual. Visual metaphors peppered her descriptions of how the animal’s reality is apprehended, and she quickly challenged my suggestion that the scoring method was a more “intuitive” process. The subject-as-assessor may have emotions as they observe the animal, but the notion that emotions form an important source of knowledge is quickly downplayed. Taking “feelings” into account here seemed to challenge the reality of the animal’s experience. Her discomfort can be traced, perhaps, to her intense efforts over decades to establish the reality of the animal’s emotional experience in the face of skepticism about QBA as nothing more than “a very interesting exercise in human perception” (Wemelsfelder, Interview, 2017). Given that objectivist scientists habitually consider themselves to be accessing an unmediated reality, she may feel that QBA must be defended in the same terms. Still, some assertions of direct, unmediated access to the animal’s experience (“it’s where all theoretical argument stops, at the videos”) sit somewhat uneasily, I suggest, against her recognition of all scientific observation as theoretically mediated (Wemelsfelder 1997, 78), as improving with knowledge and experience, and certainly against a social science understanding of perception as socially and materially situated (Haraway 1988; Grasseni 2004).

So, while Wemelsfelder believes the subjectivity of the observer to be deeply important for subjectivity’s qualitative, integrative, and linguistic capabilities, arguably the dimensions of that subjectivity are somewhat essentialized. The observer’s intuitive ability is conceived as primarily innate, with socially situated emotions in danger of distorting the animal’s reality. Moreover, while the observers undergo their own objectification process through validation, it is less clear than with animals how the object-assessor becomes a subject-assessor again.

In what follows, I argue that failing to explicitly recognize and account for observers’ “situated knowledge” (Haraway 1988) as more-than-innate—as socially and culturally situated and sensorily emplaced—can have problematic consequences for the resulting data. I first explain, very briefly, how a fixed list of terms for laboratory mice is developed and validated, before delving into the validation meeting to explore the challenge that the subject-observer posed to the careful balance of “goods” in QBA.

Validity and Reliability of a Fixed Term List

QBA was originally developed so that assessors could use their own words to describe the emotional condition of their mice. Although such “Free Choice Profiling” still takes place in some projects, developing a standardized Fixed List for each species allows different inspectors to work separately and then remotely compare the welfare of different animals in different facilities. In this way, the Fixed List instrument becomes what Latour (1987, 7) calls an “immutable mobile,” a device that may be circulated without being changed and which mobilizes others to carry out projects along the same lines.

Yet the terms for the fixed list are still developed through a Free Choice Profiling method (Wemelsfelder et al. 2001; Tomlinson 2019). Once they have been chosen, the QBA list is tested for validity and reliability, and there are two criteria the tool must meet before it can be used in the ongoing assessment of live animals. The first is to assess whether the assessors’ collective scores of the mice correlate with an experimental control. Here, the control was a variance in handling methods (handled by the tail versus being coaxed into a clear tunnel), because tunnel handling has been shown to reduce anxious behaviors (Hurst and Gouveia 2017). After ten days of this handling, the behavior of the mouse was filmed, and these films (see Figure 1) were the behavioral material assessed by observers, who were not aware of this prior treatment. Although live assessments have been used at the validation stage, video recordings more easily allow the second criterion, that of intra-observer reliability, to be assessed by comparing the scores of the same observer across identical sessions (Wemelsfelder et al. 2001, 213; see also Cooke et al. 2022, for a discussion).

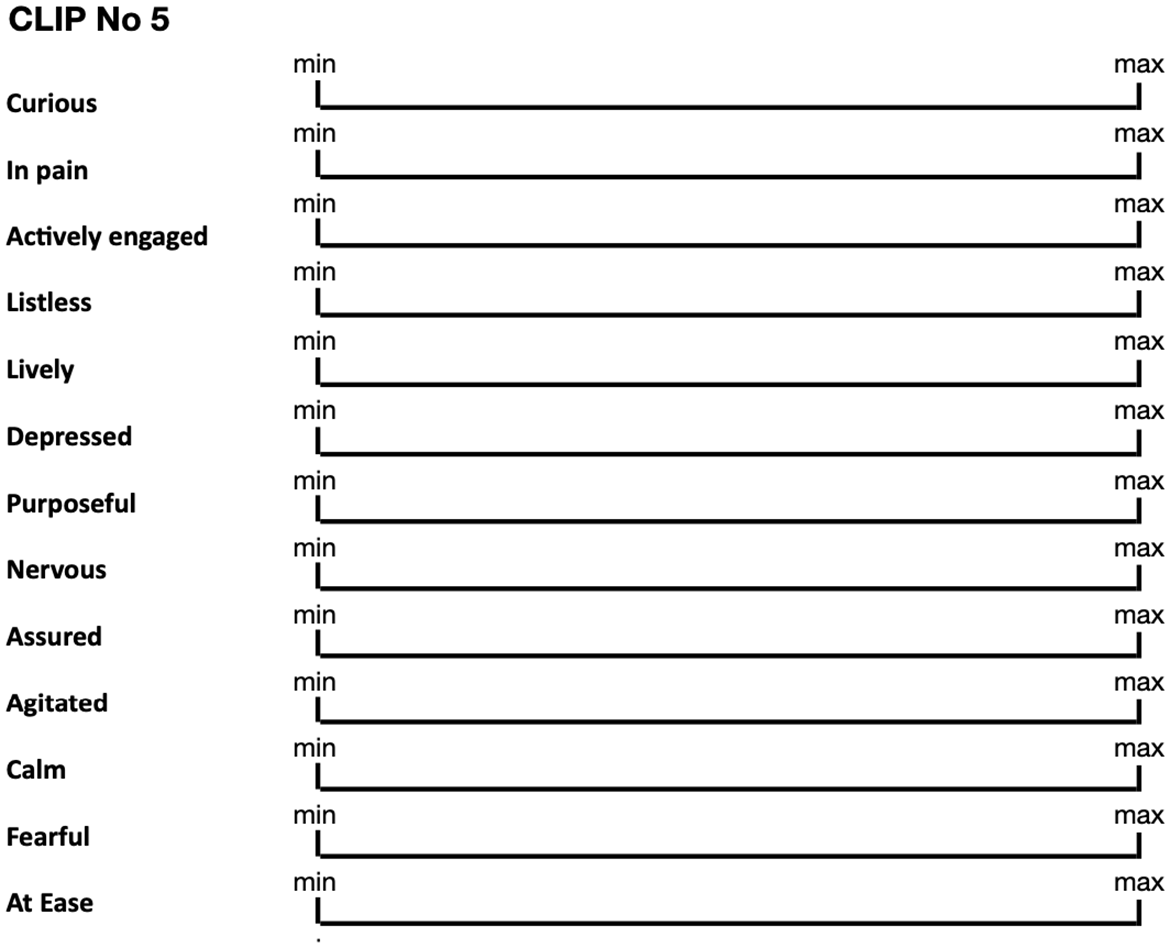

Undergraduate animal science students were chosen as assessors in this project because they were understood to be usefully familiar with animal behavior assessments but usefully naive to laboratory-specific control treatments (such as handling methods) that appeared in the videos. Assessors worked individually, in silence, to score the animals by placing a cross on the unmarked visual analog scale for each of twenty terms, ranging from the Minimum behavior observed on the left to the Maximum score on the right (see Figure 2).

Figure 2. An extract from the fixed list of twenty terms for laboratory mice (paraphrased for anonymity). This list was given to participants in a Qualitative Behavior Assessment [QBA] experiment conducted by doctoral candidate Maria, which sought to establish the validity and reliability of QBA as a welfare assessment tool for laboratory mice.

Experiment participants were asked to work quickly and not worry about being exact with their mark. The same assessors participated in two separate sessions on different days. Although led to believe they were watching a different series of videos, they were in fact assessing the same mice as before, so that the consistency of their own scores (intra-observer reliability) could be tested.

After the assessment took place, Maria measured in millimeters the distance between the beginning of the line and the mark made by the assessor and recorded it. This is the “score” for each qualitative term. The scores given by all observers were then statistically analyzed by the team to ascertain, firstly, how successfully their scores correlated with the expected behaviors for the handling treatment, that is, whether the assessors tended to give higher scores on terms such as “anxiety” for tail-handled mice. Secondly, the level of agreement on the mice’s behavior was assessed, both between observers and for the same observer across two sessions. Finally, a number of “effects” on the assessment (observer effects, session effects) were statistically controlled for using an ANOVA statistical test.
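The measurement-and-comparison step just described can be made concrete with a minimal sketch. It is illustrative only: the marks are invented, the single term “anxious” and all variable names are assumptions, and the team’s actual software and analysis pipeline are not specified in this article. The sketch takes one observer’s millimeter scores for the same six videos in two sessions and computes a Spearman rank correlation between them, the kind of statistic reported for intra-observer reliability.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical marks (mm from the left end of the line) for the term
# "anxious": one observer, the same six videos, two sessions.
session_1_mm = [12.0, 88.5, 40.0, 95.0, 20.5, 61.0]
session_2_mm = [15.5, 80.0, 45.0, 99.0, 30.0, 38.0]

rho = spearman(session_1_mm, session_2_mm)
print(f"intra-observer Spearman rho = {rho:.3f}")
```

Because the statistic depends only on rank order, an observer who marks every video a little further to the right in the second session would still score as consistent; only a reshuffling of which mice look more or less “anxious” lowers the correlation.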

In order for the new, species-specific QBA tool to be judged reliable, the statistical analysis should show rough agreement between observers and between the two sessions for each observer. If these also correlate with the experimental control, so that “tube-handled” animals are consistently described as less anxious than “tail-handled” animals, it means that the QBA tool is working well. Statistical nonagreement can indicate a fault in the process of development or a difficulty in understanding a particular kind of animal in a particular context.

As described earlier, all the scientists leading on this project, including Howard and Maria, were theoretically committed to the epistemological skills of the subject-observer in QBA. However nuanced the methodological caveats may be in conversation, I argue that only when observing the methods in practice do epistemological blind spots arise, revealing the purification of complex social practices out of science as a “bad” to be excluded (Mol, Moser, and Pols 2010, 11). QBA’s objectivism is still fundamentally situated knowledge, inescapably embodied and emplaced, and there are, I will show, consequences to this being overlooked. This issue arose in a team meeting in which Françoise, Howard, Maria, and I were all present.

Outliers Numbers 8 and 11

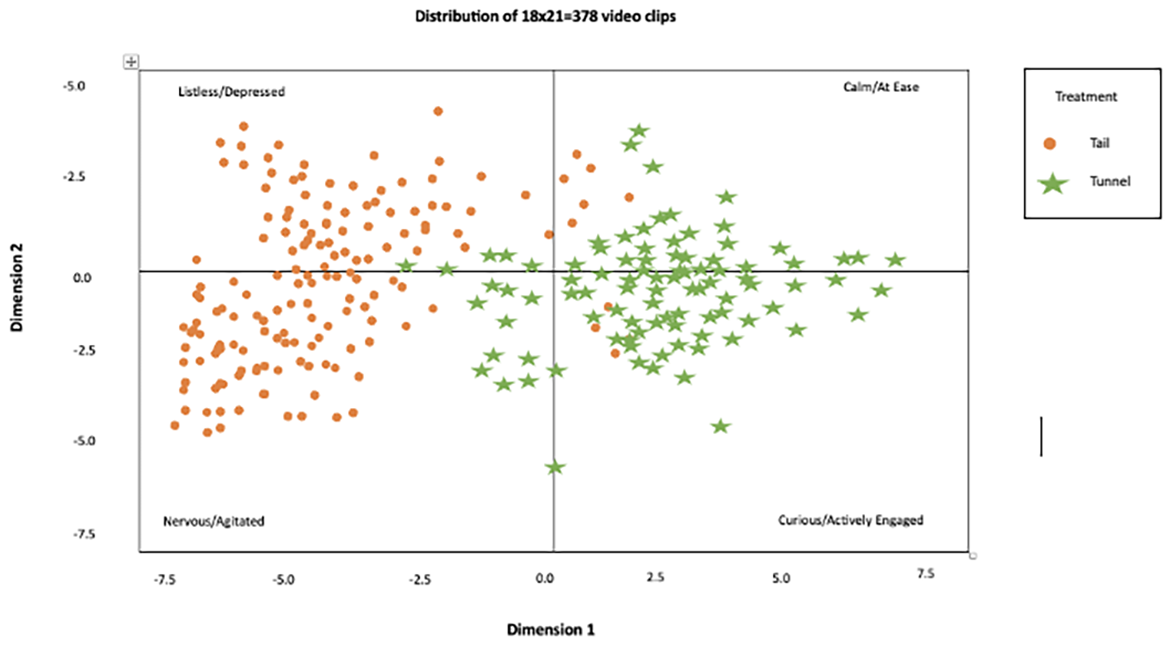

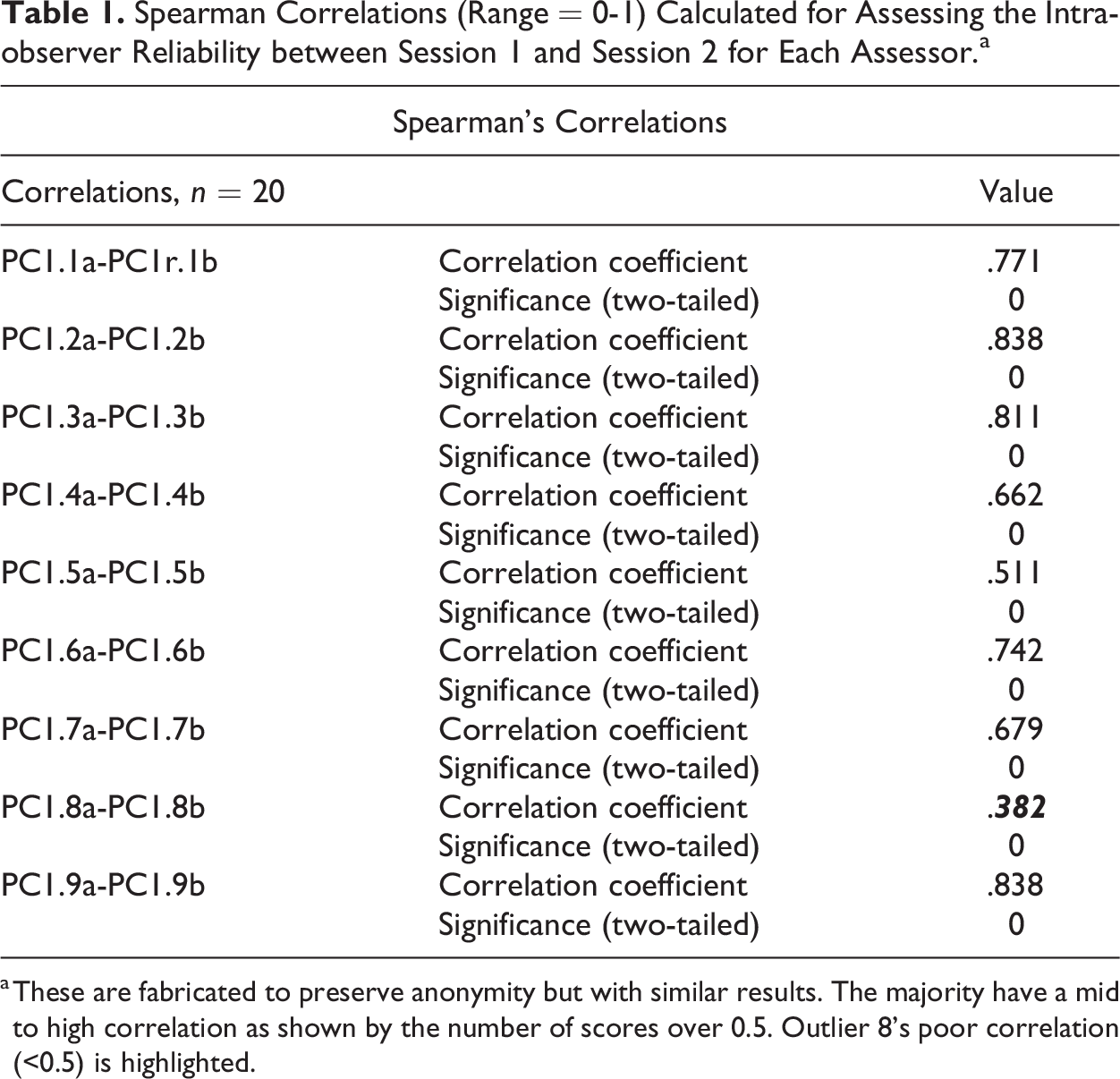

On first results, as can be seen in Figure 3, all participants appeared to show good agreement on whether each mouse was in a positive or negative mood and mapped that more or less “correctly” onto the tail/tunnel treatment structure. On further analysis of the data for nineteen observers, however, there were two observers who were statistical “outliers” in inter- and intra-observer reliability. Observer 11’s assessment of the mice did not agree with the rest of the group, whereas Observer 8 did not agree with their own findings of the week before. This spoiled the overall statistical assessment of QBA’s reliability, putting its efficacy for mice into question. One of the correlation tables is shown as Table 1.

Figure 3. Results from a statistical analysis of nineteen assessors’ fixed list assessments. Values have been fabricated for anonymity but resemble the actual results. Each plotted point represents a mouse pair, and the colored shapes show how they have been handled. The graph shows the distribution of the mice across four quadrants, varying by mood and energy.

Table 1. Spearman Correlations (Range = 0-1) Calculated for Assessing the Intra-observer Reliability between Session 1 and Session 2 for Each Assessor.a

a These values are fabricated to preserve anonymity but resemble the actual results. The majority have a mid to high correlation, as shown by the number of scores over 0.5. Outlier 8’s poor correlation (<0.5) is highlighted.
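For the inter-observer side of the check, one possible approach (an assumption made for illustration, not the team’s documented procedure) is to compare each observer’s scores with the mean of everyone else’s and to flag anyone whose rank correlation falls below a cut-off. The 0.5 cut-off simply mirrors the threshold highlighted in Table 1; the observer labels and marks are invented.

```python
from math import sqrt


def ranks(values):
    """Return 1-based ranks; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


def agreement_with_others(scores):
    """Correlate each observer's scores with the mean of all other observers."""
    result = {}
    for name, own in scores.items():
        others = [v for k, v in scores.items() if k != name]
        mean_of_others = [sum(col) / len(col) for col in zip(*others)]
        result[name] = spearman(own, mean_of_others)
    return result


def flag_divergent(scores, cutoff=0.5):
    """Names of observers whose agreement with the rest is below the cutoff."""
    agreement = agreement_with_others(scores)
    return [name for name, rho in agreement.items() if rho < cutoff]


# Hypothetical marks (mm) for one term across six videos, five observers.
scores_mm = {
    "A": [10, 80, 30, 90, 20, 60],
    "B": [15, 85, 25, 95, 30, 55],
    "C": [5, 70, 40, 85, 25, 65],
    "D": [20, 75, 35, 99, 10, 50],
    "E": [90, 20, 70, 10, 60, 30],  # invented observer who reverses the pattern
}

print(flag_divergent(scores_mm))
```

A screen of this kind identifies who diverges but says nothing about why, which is the question the team goes on to debate.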

The presence of outliers is a common problem in any statistical analysis, where one or more respondents diverge so wildly from an otherwise regular pattern that they ruin the overall statistical relationship. Their removal can sometimes vastly improve the statistical findings but should usually be explicitly justified (cf. Wemelsfelder et al. 2000). When Françoise reveals the problem with the outliers, Howard makes the case for removing them from the data by positing the possible undesirable emotional condition of the assessor:

So is there a justifiable reason for removing person 8 from our analysis?…Because…the inherent problem we’ve got with the two sessions is that the emotional state of the observer in those two sessions could be completely different. Observer 8 might have been having a bad day in Session 2, and that’s going to influence the outcome of how they observe the videos. And that’s something we can’t control for….

[Françoise comments on number 11 being unhelpful for the statistical result]

You did record who it was?

Yes, of course.

Because…it’s worth identifying who that participant was and asking them that question—was there anything different?…Because we didn’t anticipate that, and maybe I should have thought about that…because what I’ve been doing subsequently is trying to get some level of the observer’s emotional valence when doing multiple testing over time to see if…that factor has an influence…. So that particular student may have had, God knows what, there may be some crisis that had occurred on that particular day…“I was just feeling off that day”—that doesn’t work. But if they turn around and say, “I crashed my car that morning,” then I think you may have a slightly more tangible reason to look at that. Just a thought.

Here, it is only through an apparent error or statistical anomaly that attention is drawn to the situated nature of observation: both that of the student assessors, whose emotions pose a risk to the stability of the findings in this instrument’s “trial of strength” (Latour 1987, 78), and that of the QBA development team, for whom statistics involve continuous and active modeling, using subjective judgments to decide what to exclude in the care of their data. Echoing Wemelsfelder’s ambiguous relationship to observers’ feelings, the emotional situation of the observers is problematized, threatening the balance of objectivist/subjectivist judgments, as well as the stability of the Fixed List instrument and its ability to “speak for” the mice (Latour 1987, 71). Howard’s suggestion that emotional “valence” (mood) tests would be useful prior to the validation assessments is an acknowledgment of the role of affect, but the purpose seems to be to exclude bearers of unwanted emotions rather than place the responsibility onto the experiment itself to reflexively manage group attention. Instead, one can be simply eliminated for having the wrong kinds of feelings, where “feeling a bit off” doesn’t affect one’s judgment but a car crash does. The assessor is an unruly variable, contravening the order of the experiment, and their attentive care isn’t regarded as malleable: it’s “something we can’t control for.”

What was not considered was how the conditions of the experiment itself fostered and distributed certain kinds of (in)attention. Françoise conceded in interviews that she did not usually discuss with assessors their embodied style of observation and acknowledged that she probably could do more. She did, however, speak vigorously in interviews about the importance of the experimental setup with regard to the intersubjectivity of the encounter and the importance of training in increasing inter-observer reliability. “You’re priming your instrument,” she explained, “you’re setting up people’s motivation to pay proper attention…you’re trying to get them to another space I think…you have to take them there.” Françoise, then, seems to believe that the attentive quality of observers is important, that it requires some initial training and is perhaps even different from conventional assessment: it’s “another space” for which affective interest or “motivation” is needed. However, the extent to which fostering observers’ attention is methodologically prioritized and embedded for others taking up QBA is uncertain. In the case of Maria’s research, there may well have been instructions from Françoise, but arguably more training and support were needed. In interviews, Maria expressed frustration with the practical difficulties she experienced in organizing people for her QBA assessments and her emotional embarrassment in asking for their time. Perhaps as a consequence of this discomfort, when the QBA sessions that I attended were introduced by Maria, very little “priming” of attention was in evidence. The situation of each observer was assumed to be settled once sampled and recruited. Although two weeks had been spent preparing the mice for the experiment, the student assessors were simply presumed to be experiment-ready.
Perhaps reflecting an unwillingness to acknowledge the intersubjective basis on which participants’ assessments were produced, the task was introduced somewhat mechanistically: Maria made little attempt, it seemed, to fully engage the students’ interest and did not check whether they understood what to do. The challenge was increased by the twenty mouse videos being almost indistinguishable from each other. In alignment with the visual culture of science, the sensory experience, already flattened by the mediation of a video screen, was further reduced by the sound being turned off, because Maria had yelped upon being bitten by a mouse and was embarrassed about this escape of feeling. The silent video made that mouse’s aggression more difficult to spot. The experiment took almost an hour, and the planned break was missed because of a late start. This was due, in part, to Maria’s reluctance to challenge a senior tutor whose seminar continued to significantly run over time, even as Maria waited at the back of the room, suggesting hierarchical departmental power relations. Perhaps as a result of the long session, I noted a marked distractedness from the students, many of whom would score and then instantly pick up their phones in the few seconds before the next video.


In the focus group that followed, the students supported this impression. They expressed some worry and confusion about the instructions, exchanging questions about what they were supposed to do. Some admitted their attention and interest had wandered, like Joanna: “I was engaged at the start [others: mmm] and then just tailed off. Cos it was quite long [another: yeah]. And I did try to stay focused but toward the end you were just like ach, it looks a bit like…[others: yeah] You didn’t really think about it as much.”

Throughout the session with Observer 8, Maria’s attention was absorbed in the computer from which she was controlling the videos, and I did not observe her looking up to scan the room and her subjects. If she had, she might have seen Observer 8, eyes closed, head nodding, waking up at the sound of pens across paper and scoring completely blind, in more than one sense of the word.

This analysis is not intended to attribute responsibility to any individuals, especially not in a student project with less specialist QBA supervision than would be the norm in professional contexts. I do not see this as Maria’s, Howard’s, or Françoise’s individual responsibility or failure. This is because, as Citton (2017, 17, emphasis in the original) puts it: “attention [i]s an essentially collective phenomenon: ‘I’ am only attentive to what we pay attention to collectively.”

What I do want to draw attention to is how the epistemic culture of animal welfare assessment in conventional environments, in which individuals’ assessment practices are learned, may be less attuned to the kind of social attentiveness required to produce accurate results, especially in a method that requires a more interpretive “whole-animal” attention. Karin Knorr Cetina coined the term “epistemic culture” to recognize the tacit styles, levers, and “textures” (Knorr Cetina 1999, 192) of knowledge production within different contexts, disciplines, and schools of thought: “the strategies and policies of knowing that are not codified in textbooks but do inform expert practice” (Knorr Cetina 1999, 2). Reflecting on conventional animal welfare science as an epistemic culture, one that has historically been excluded for its assumed subjectivism, that aligns itself strongly with science’s visual modalities, and that is perhaps a little hierarchical and authoritarian, explains why QBA’s theoretical commitments to hybridity may become undermined in practice, at least when not conducted by Françoise herself, leaving all parties (humans, data, and animals) vulnerable.

And yet, ironically, this lack of intersubjective attention did not apply to relationships with the mice, whose ability to feel threatened or safe, alert or indifferent when handled was well-understood, taken experimental advantage of, and actively cultivated over two whole weeks of tail- and tunnel-handling. QBA finds itself in a difficult position, promoting the importance of qualitative, integrative, and subjective abilities, but struggling to adequately incorporate the socially situated nature of perception into its methods in a way that can be circulated and reproduced. As a result, careful epistemic balances can become destabilized, objects-on-a-graph transgress into historic, situated subjectivities, and the mice, instead of becoming a group of expressive, complex beings, are rereified into a confusing mess of numbers.

If, as Mol, Moser, and Pols (2010) have argued, good care implies a negotiation about how such different elements of scientific practice are balanced, involving both explicit guidelines and situated, attentive tinkering, then it is this question of situated attentiveness which has come to the fore. As is common in this literature, “care” and “attention” are conceptually conflated in Mol, Moser, and Pols’s (2010) account. However, viewing attention as at least partially distinct from care can help us understand the presence of Outlier 8. Semel (2022, 275) has argued that we need to attend to “how attention is enacted alongside, in tandem with, or against care,” and Jablonsky, Karppi, and Seaver (2022) argue that we need to study how its distribution relates to what is epistemologically and morally valued.

For Lavau and Bingham (2017, 3, emphasis in original), ethnographic work reveals that attention is a directional precondition of care—it is “the practices of attending through which situations and their tensions are made to matter,” dictating how some situations prompt “thought, hesitation, action and care” while others do not. In other words, care as an embodied practice might diverge from care for a principle or idea. Their study of food hygiene inspectors’ work shows how attention is relational across bodies, species, instruments, and policies. It is not just about visual surveillance but involves multisensory engagements such as touch (Lavau and Bingham 2017, 10).

In contrast, this study of QBA-in-action, carried out within a highly objectivist epistemic culture, has suggested that while in theory the situation of the observer and their embodied skills are valued, in practice this may be disregarded, at least when not introduced and supervised by Wemelsfelder. Ethnographic work revealed that the researchers were able to overlook the significance of observer attention as an experimental condition and treated emotions and more-than-visual senses as phenomena that should ideally be repressed or excluded. At the very least, feelings and sensations are not practices that prompt “thought, hesitation, action and care”; they are not “made to matter” (Lavau and Bingham 2017, 3). As a result, the students became bored. Anderson (2004, 751) describes boredom as “a malady of a body’s capacity to affect and be affected.” This sense of meaninglessness and indifference can, he argues, lessen a sense of care and generosity. Arguably, then, failing to care for the affective tonalities of a QBA space, perhaps through a generalized suspicion of observer “feelings,” can produce a failure of care for the experiment and its ultimate beneficiaries. Yet as a result of purifying the social subject out of its practice, the human subject, paradoxically, vigorously reemerged as an outlier. This revealed far more clearly both the situated nature of scientific assessment and the attentive blind spots in objectivist scientific conduct, even when objectivity was theoretically acknowledged to be flawed and emotions conceded to be relevant.

Conclusion

In raising these tensions and paradoxes, the article has contributed to wider STS engagements with care by supporting Lavau and Bingham’s (2017) claim that the direction of care is significant and that at certain points care and attention might diverge. Howard, Françoise, and Maria cared about mice and cared about their science, but the direction of their methodological attention was sometimes inconsistent with those commitments. If care orders and manages realities (Mol, Moser, and Pols 2010), shapes perception, fosters new speculative practices, and is bound up with relations of power (Puig de la Bellacasa 2011), then care’s attentive direction and quality explain why certain realities emerge above others: a disregarded table of numbers over a mouse’s emotional “voice,” or a “curious” silent mouse over an “aggressive,” shriek-inducing one. An approach, perhaps an interdisciplinary one, that ensures the affective cultivation of attention is routinely and appropriately incorporated into the QBA experimental setup may be beneficial. That said, QBA should not be singled out from other scientific practices: as Wemelsfelder (2012, 225) often argues, the mechanistic perception of animals under objectivism is no less socially situated than one that recognizes the holistic, dynamic nature of subjectivity.

More broadly, this article has given an insight into how attentional practices are woven into the “textures” (Knorr Cetina 1999, 192) of different epistemic cultures, not so much as so-called “attention regimes” or “envelopes” of attention (Boullier 2019) but as attentional praxes, embodied and affective. This reinforces the importance of the emergent sensory and affective turn in STS (Salter, Burri, and Dumit 2017) and contributes to methodological discussions about how attentive practices, as expressed in bodily orientations, gaze, affects, gestures, and spatial environments, can be investigated in the field (Sormani et al. 2017).

As an animal welfare methodology that more explicitly engages with subjectivities, QBA must more consciously hold subjectivist and objectivist “goods” together and decide what to foster or exclude: the qualitative assessor whose “subconscious” process produces the animal’s reality, versus the self-indulgent assessor whose feelings obscure it; the outlier that condemns a validation test, against a scientist’s perhaps partial professional judgment. The researchers must balance these costs and benefits as a practice of care, both for the data and its consequences. This is important, because the acceptance of QBA as a validated tool for laboratory mouse welfare—yet to be fully accomplished at the time of writing—might have significant implications for the practice of laboratory animal welfare science and ultimately for the mice themselves. If it is successfully validated, then a method will be available, across many countries and contexts, that explicitly recognizes the agency, subjectivity, emotional complexity, and responsivity of mice every time they are assessed, subtly but persistently challenging anthropocentrism, and mice’s normative status as an object-tool of the lab. QBA will also have epistemological implications, supporting the validity of more tacit and intuitive perspectives on mice and thus empowering new perspectives. And of course, the animals themselves may become more articulated and complex models of human behavior. If the experiment fails, this knowledge is undermined, and something important about mice may be lost: the ethical recognition of the living subject every time a mouse is scored.

Footnotes

Acknowledgements

I would like to thank “Howard,” “Maria,” and all of the students and scholars at “Moor University” who so kindly opened up their practices and ideas to sociological inquiry. Special thanks are due to Prof. Françoise Wemelsfelder for her intellectual and personal generosity. My gratitude also to Dr. Mariam Motamedi Fraser and Prof. Lynda Birke, who commented on early drafts of this article, as well as to the two anonymous peer reviewers and the editors of ST&HV for their careful reviewing and processing of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Economic and Social Research Council (SeNSS) Postdoctoral Fellowship (ES/W007061/1) and PhD studentship funding from the University of Manchester.