Abstract

This article builds a theoretical framework with which to confront the racializing capabilities of artificial intelligence (AI)-powered real-time Event Detection and Alert Creation (EDAC) software when used for protest detection. It is well-known that many AI-powered systems exacerbate social inequalities by racializing certain groups and individuals. We propose the feminist concept of performativity, as defined by Judith Butler and Karen Barad, as a more comprehensive way to expose and contest the harms wrought by EDAC than that of other “de-biasing” mechanisms. Our use of performativity differs from and complements other Social Studies of Science and Technology (STS) work because of its rigorous approach to how iterative, citational, and material practices produce the effect of race. We focus on Geofeedia and Dataminr, two EDAC companies that claim to be able to “predict” and “recognize” the emergence of dangerous protests, and show how their EDAC tools performatively produce the phenomena which they are supposed to observe. Specifically, we argue that this occurs because these companies and their stakeholders dictate the thresholds of (un)intelligibility, (ab)normality, and (un)certainty by which these tools operate and that this process is oriented toward the production of commercially actionable information.

Introduction

In 2015, Baltimore County Police Department (BPD) deployed an artificial intelligence (AI)-powered real-time Event Detection and Alert Creation (EDAC) tool to arrest peaceful protesters with outstanding warrants who attended Black Lives Matter (BLM) protests (ACLU 2016, 2). EDAC tools, which are utilized in sectors including retail, security, and meteorology, are of great ethical concern because the data they generate and prepare—often through social media surveillance (Ozer 2016)—increasingly contribute to violent outcomes when used to support racist policing. Their deployment is not limited to the United States: the UK’s Foreign, Commonwealth and Development Office, Ministry of Defence, and Cabinet Office recently entered into multi-million contracts with Dataminr, an EDAC provider discussed in this paper (ACOBA 2019). The likelihood of EDAC being deployed in the United Kingdom to similar effect is being investigated by civil society. 1

What we term EDAC is not a discrete group, because many of the technologies that have this functionality can also be classified as surveillance, social media monitoring, automated detection, or real-time analytics systems. The Citizens argues this makes it challenging to identify and monitor EDAC providers. The products we label EDAC use machine learning (ML) to detect anomalous events and trigger subsequent reports (Francis 2019), which sets them apart from traditional systems that require humans to monitor sensors and CCTV cameras, encouraging a new generation of clients enticed by the promises of AI. 2 In this paper, we focus specifically on EDAC used to detect protests. Protest detection tools poignantly show the harmful consequences of the belief that EDAC identifies preexisting events. We argue that EDAC should instead be conceptualized as systems that produce events and their criticality by incorporating racial prejudices. We also argue that the term “biased” is inadequate for describing the harmful effects produced by EDAC, because these harms are coproduced with its clients, for whom EDAC products are made actionable. Instead, the safe use of EDAC depends on recognizing it as “performative.” Performativity demonstrates that EDAC always produces the gendered and racialized bodies it purports to “describe,” “recognize,” or “identify,” and bias can therefore never be extracted from the software in isolation from its clients—for whom it describes, recognizes, and identifies phenomena. Attempts to isolate and remove bias are inappropriate in the context of EDAC tools because they would distract from the wider systemic issues that direct EDAC’s functionality, as critics have argued in relation to other AI products (Prescod-Weinstein 2018; Gebru 2021). Performativity tackles these issues head on by documenting how EDAC iteratively cites social norms when making observations. It therefore shows that what EDAC observes is consistent with hegemonic value systems.

We specifically draw on the feminist understanding of performativity, as defined by philosopher Judith Butler and feminist physicist Karen Barad, as a more comprehensive way to look at the racializing effects of EDAC and of AI more broadly (Butler 1990, Barad 2007). We illustrate the applicability of feminist and gender studies’ work on performativity to the social study of AI, and we explain what theoretical and methodological contribution it brings to the field of Social Studies of Science and Technology (STS). We argue that a feminist understanding of performativity exposes how EDAC shifts power toward already powerful institutions. Performativity can explain at a socio-technical level how, if our aim is for systems to be “neutral,” they will merely reproduce an unequal status quo; instead, they must be reconfigured to promote justice (Costanza-Chock 2018).

We turn first to Butler’s influential theory of normative citationality, in which she argues that gender does not preexist a body but is iteratively reproduced through the citation of social norms. Second, we draw on Barad’s mode of “posthumanist performativity” to demonstrate how bodies materialize in the act of observing. These theories enable us to analyze AI as an assemblage of socio-technical processes where racialization happens at all stages of development and deployment—hence our expansive conception of AI. In grounding this work in gender and feminist science studies, we demonstrate a commitment to addressing the harms that result from AI’s reproduction and accentuation of social norms. While Barad’s work mobilizes a key tenet of feminist STS—that all knowledge is situated and therefore cannot pertain to objectivity (Haraway 1988)—Butler’s theory of gender performativity is key to our study for its radical rejection of a gendered body that precedes contact with socio-technical systems, as well as its overarching concern for the suffering of those who fail to meet normative expectations.

We combine Barad’s and Butler’s work to analyze two EDAC tools, produced by US-based Dataminr and Geofeedia, respectively. Geofeedia was the first tool to establish partnerships with social media platforms. In 2015, a massive scandal ensued when it gave BPD access to social media data. While it appears to be no longer active, the strategy it used to identify events is replicated across many newer software products that claim to detect events in real time, including Dataminr. We argue that the actionability of the data provided by EDAC must be interrogated to better understand why it can be harmful. These two examples are not presented as detailed case studies; instead, they substantiate and clarify our conceptual discussion of the performativity of AI, which is the focus of this paper. Both examples have AI-powered and non-AI-powered components, but identifying which is which is less important than demonstrating that EDAC tools’ perceived objectivity leads customers to misunderstand them as neutral detection tools. It is imperative that EDAC providers and their customers understand that these tools are performative when deciding which applications are (in)appropriate.

We address the following stakeholders in this paper: designers and marketers of EDAC tools, the organizations deploying them as third-party products (including law enforcement, fire safety, homeland security, corporations tracking customers’ experience in shopping malls through the aggregation of social media posts and reviews, and organizations in charge of disaster and outbreak monitoring), impacted communities, and the wider public. While we acknowledge that each party requires a different explanation of EDAC’s performativity, this paper is intended as a starting point to understand how these systems are performative. We do not argue that EDAC’s performativity is intrinsically good or bad: it simply is a basic characteristic of these systems. Although we cannot intervene in its intentional misuse, some of the negative effects of EDAC are avoidable if organizations and agencies understand its performative nature and are therefore able to engage more meaningfully with how their own involvement shapes the realities that it produces. Such an understanding would also be useful to the wider public and could have a positive impact by prompting the limitation of certain applications, including protest detection.

To make this argument, we analyze publicly available materials from Dataminr and Geofeedia including corporate blogs, product launch communication documents, publicly available correspondence with clients, scientific papers coauthored by Dataminr, a Dataminr patent, and conference presentations to explore how they communicate their strategic aims, depict their tools as “objective,” and how these narratives affect their deployment by clients. EDAC companies’ commercial protection of their materials (e.g., Dataminr only offers product demos in the form of presentations, does not give anyone except clients access to their system, and does not publicly share screenshots of their interface) should not prevent investigations into how EDAC functions. Company communications and what has been revealed by activists’ investigations are still relevant to our area of inquiry.

Drawing on Barad’s posthumanist performativity, we urge that appropriate policy be introduced that requires companies to communicate how EDAC observationally produces any given protest. Using Butler, we demonstrate that EDAC always references (or “cites”) previous protests that have been targeted by the police when it makes a decision about whether a crowd is likely to become a dangerous protest. The application of feminist and gender studies’ work on performativity potentially extends to the racializing and discriminatory effects of numerous types of AI systems, thus opening up further directions of investigation (e.g., facial detection and recognition, recruitment automation, recidivism prediction, fraud detection, and welfare eligibility automation). Each warrants further study, but for the sake of clarity and rigor, this paper remains focused on EDAC.

We have structured this scoping paper as follows: in Performativity, we give an overview of Butler’s theory of performativity, of Derrida’s notion of the citational nature of language (Derrida 1990), and of Barad’s intra-active understanding of performativity. We then explain what contribution this understanding of performativity brings to the field of STS. We also argue that existing studies that draw on Derrida’s and Butler’s notion of performativity to explain how ML functions require elaboration and redirection. In Shaking Up Critiques of “Biased Algorithms,” we focus on how performativity can reorient and reinvigorate anti-bias work in AI, exploring which stakeholders and processes performativity makes visible that other methods of making AI less harmful obscure or overlook. In the two following sections, we demonstrate how Butler’s understanding of performativity as citational and Barad’s as intra-active can together explain AI’s citation of norms and what this looks like when people interact with protest recognition software deployed in particular contexts. In the Dataminr: Making Data Actionable section, we show how Dataminr makes bodies observable based on the customer’s goals and purposes. In the Geofeedia: Producing the Normal and the Abnormal section, we extend this analysis to show how racialized interpretations of bodies are produced by iteratively citing social norms. Thus, both Dataminr and Geofeedia are powerful examples of EDAC tools performatively producing the events they purport to identify. We show that the harms experienced by participants can be attributed to EDAC’s production of thresholds of (un)intelligibility, (ab)normality, and (un)certainty.

Performativity

AI’s performativity is what makes it so adept at exacerbating social biases. We take “performativity” in the sense devised by Butler, drawing on Jacques Derrida’s own rereading of J. L. Austin, and in the reinterpretation later proposed by Barad. Drage and Frabetti (forthcoming) discuss how establishing a dialogue between Butler’s and Barad’s theories can enable a close reading of neural networks as performative. In the 1990s, Butler used the concept of performativity to radically transform gender studies’ understanding of how a person is “gendered.” She elaborated on Derrida’s argument that language is citational to show how authoritative speech “performatively” sediments gender norms. Derrida’s work, for its part, rereads Austin’s conception of the “performative utterance”—authoritative speech acts that execute actions (notably the foundational performative “let there be light” in Genesis). Extending this line of argument, Butler argues that gender does not preexist a person but emerges through their interaction with the world (Butler 1990). Drawing on Derrida and Austin’s (2014) linguistic theory, she emphasizes that the acts and practices that constitute gender are citational: they always reference and re-animate a previous source. Normative gender is subsequently naturalized, reinforced, and embodied through these repetitions, so that the performative pre- or post-partum declaration “it’s a girl!” refers to a gender ideal, which remains forever out of reach and exists only through citation (p. 232). This is why Butler (2011) contends that gender is “an imitation without an origin” (p. 188). Some feminists, particularly from STS and including Barad, argue that Butler’s focus on language somewhat left materiality out of the picture.

Barad attempted to remedy this loss by developing the onto-epistemological framework of “agential realism” and focusing on how the observed world and the observing scientist and apparatus performatively constitute one another (Barad 2007). Drawing on Niels Bohr’s foundational contributions to quantum mechanics, Barad states that the known and the knower are inseparable and configure each other differently depending on how the process of observation is carried out (for instance, whether light materializes as a particle or a wave depends on the observational apparatus used to detect it). Barad names this process “intra-action” to emphasize the inseparability of the material and the discursive, society and science, human and nonhuman, and nature and culture. This process of iterative intra-activity stabilizes to produce a measurement or observation according to its observing apparatuses, concepts, and theories, each of which introduces a different “agential cut.” An agential cut is an epistemological act that materializes the observer and the observed and therefore has ontological implications. In this sense, for Barad, ontology and epistemology are not separable. Every act of knowing makes something intelligible while unavoidably obscuring something else. Knowledge is always also obfuscation. Retaining Butler’s emphasis on the world-building capacity of repeated actions that “cite” a norm, Barad uses quantum theory to foreground the material implications of performativity’s thesis that (scientific) discourse can produce that which it seeks to name. Indeed, for Barad, apparatuses are not just a system of lenses “that magnify and focus our attention on the object world, rather they are laborers that help constitute and are an integral part of the phenomena being investigated” (Barad 2007, 232). In this way, Barad’s mode of performativity finds a productive application in the analysis of the harmful effects of AI.

The concept of performativity has gained traction in varied fields in the last two decades, including STS. For example, performativity is used to explain how communications events confer on data the meaning they have acquired in previous iterations (Licoppe 2010), to argue that economic knowledge is performative because it molds the market economy (MacKenzie, Muniesa, and Siu 2007; Healy 2015), and to explore how predictive ML models can trigger actions that influence the “predicted” outcome (e.g., Perdomo et al. 2020; Varshney, Keskar, and Socher 2019). 3 This body of work demonstrates the explicative power of performativity. A complementary reading of Butler and Barad would work with and increase the applications of these landmark contributions to STS by explicitly engaging with EDAC’s inseparable materiality and discursivity, thus aligning with Donna Haraway’s (1988) crucial synthesis of natural and cultural spheres (“nature-cultures”). It would specifically unveil how performative phenomena involving technology reproduce harmful social norms that work in favor of social inequality and how this takes the form of an iterative and citational process involving human and nonhuman agents. In doing so, it would disprove the supposed “objectivity” of AI-powered protest recognition software, and explicitly demonstrate how this software reinforces inequalities, minoritizes certain groups, and makes institutional violence on those populations acceptable. This study seeks to complement current uses of performativity in STS by strengthening the focus on social inequality and AI ethics.

Other STS work draws on performativity to address racial and gender harms, for example, in facial recognition (Scheuerman, Paul, and Brubaker 2019). However, this work largely views performativity as a human phenomenon that relates only to the way people enact gender. By contrast, this paper suggests that performativity is what happens when a set of human and nonhuman actors creates the world in the process of measuring it. Foundational work in STS, such as Latour’s actor-network theory, also deals with distributed agents and rejects the human/nonhuman binarism. We complement Latour’s take on performativity through Barad’s observational view to draw attention to the structural violence and discrimination enacted by AI.

It is crucial to view the AI apparatus as an assemblage of multiple human and nonhuman actors to understand the broader social inequalities that shape the tools that bring the world to life. For this reason, this paper combines gender studies and STS approaches to streamline its approach to harmful consequences of AI systems with multiple interconnected actors. Indeed, EDAC is often collectively authored and revised by engineers and is also shaped by the interventions made on its functioning by customers and users. 4 The harmful effects that it provokes result from interactions between these actors, which are also influenced by commercial incentives to participate in these systems. This is why trying to decide where the “extraction” of bias should take place is an impossible task. Many components must work together to develop responses to inputs, and these responses are influenced by the system’s history and context in unpredictable ways. Even software engineers often do not have a complete understanding of the systems they develop. This has always been the case with software development (Frabetti 2015) and is even truer with AI systems given their extreme complexity. Knowledge about how AI systems work and what their effects are therefore precedes—and is distributed throughout—the development and deployment pipeline. This is why the popular de-biasing and auditing technique of feeding data into an algorithm and assessing the results for fairness using predefined metrics is insufficient in isolating and quantifying EDAC’s harmful outputs. 5

Shaking Up Critiques of Biased Algorithms

Performativity can redirect “anti-bias” work by demonstrating how technology and the effects of social hierarchization—including gender, race, age, class, and disability—cocreate. It can also strengthen existing critical socio-technical scholarship on power and AI. Scholars have demonstrated historical continuity between histories of racism, sexism, and AI (Mohamed, Png, and Isaac 2020; Phan 2017, 2019) by showing how race is produced through the exposure of certain populations to tools that perpetuate colonial models of domination, or how gender norms are materially reembodied by technologies. Phan (2017, 28) explains how Siri requires gender to exist at both a technical and a conceptual level and makes some use of the concept of performativity by suggesting that algorithms are “speech acts.” In her examination of Amazon’s Echo, Phan (2019) exposes how the Echo produces whiteness as neutrality, reinstates women in the domestic space, and reinscribes the hierarchy between a privileged white woman and a servant who fulfills her domestic demands, which both reproduces and invisibilizes hierarchized domestic relationships. Other extant work offers new strategies for anti-racist ML based, for example, on a reparative model which promotes systemic redress through algorithms that actively counter racism and sexism in the prediction of recidivism (Davis, Williams, and Yang 2021). Finally, scholars including Scheuerman, Pape, and Hanna (2021) identify AI’s high error rate, for example in facial recognition, as proof that sex classification systems don’t work.

The concept of performativity would support the above work by pointing to how algorithms create the events they are trained to recognize, grounded in a deep understanding of normative recognition processes. In the case of Davis et al., it could show how non-reparative practices citationally and materially produce race and gender. It would also look beyond explanations that hinge on in/accuracy and prove that systems cannot ascertain gender from looking at a person, not just because gender is self-identified and relates in complex ways to self-presentation, but because gender recognition systems performatively produce and ascribe gendered values to the body. In sum, our concept of performativity responds to socio-technical scholarship’s question of how social hierarchies, language ideologies, and AI systems are mutually constituted (Blodgett et al. 2020) by explicitly detailing how these ideologies and discourses create and are recreated by technology. It also answers Pratyusha Kalluri’s (2020) question of “how is AI shifting power?” by exposing how AI amplifies powerful institutions, groups, individuals, and ideologies at scale.

Importantly, we do not wish to critique the technique of locating bias in all domains; it can be an appropriate strategy when attempting technical fixes such as increasing or improving the quality of training data (Hutchinson 2020) or classification labels (Scheuerman, Wade, Lustig, and Brubaker 2020). These attempts to pinpoint and extract the source of a system’s racism or sexism are of most value when they acknowledge that race and gender are both materially lived and socially constructed (Hanna et al. 2020). However, their effectiveness would be limited when applied to de-biasing EDAC. For example, while Trewin’s (2018) technical approach to mitigating bias advocates for incorporating outlier data to make data more representative of disabled people, EDAC requires us to critically engage with how the concept of an “outlier” is produced by a system in the first place, and then materially embodied. This is crucial in determining how EDAC—like many AI systems—produces thresholds of “normality” and “abnormality” that mark black people as suspicious when deployed by predominantly white institutions (Browne 2015, 28).

For political geographer Louise Amoore (2020, 6), these fields of normality and abnormality also determine which protests are marked as risks. Instead of asking at what level of accuracy EDAC operates, Amoore (2020, 139) suggests that we interrogate the threshold at which something is flagged as “abnormal.” As scholars bringing a humanities-based approach to the study of technology, we expand on Amoore’s suggestion to argue that the abnormal is defined and created by an EDAC system through a process of normative citationality, where abnormal events are repeatedly “cited” in a configuration of software and law enforcement. We also follow Blodgett et al.’s call for the motivations behind anti-bias work to match the methods employed by using humanities-based approaches to illuminate social justice issues. This means responding to the question of which kinds of system behaviors are harmful, in what ways, to whom, and why—rather than attempting to “locate” bias at any given stage of EDAC’s development. Attempts to locate bias may perpetuate the vague notion of bias often employed in anti-bias work that neither engages with relevant literature beyond technical explanations nor matches exploratory techniques to motivations (Blodgett et al. 2020). While the prevailing view in AI companies is that most harms can be corrected mathematically, this often cannot remedy the harms produced by complex systems that are integrated into and complicit in structural inequality (Lewis 2021). An example of this beyond EDAC is when Internet of Things devices are used by domestic abusers to monitor their partners (Nuttall et al. 2019). The drive to correct bias mathematically is understandable, given that data scientists and engineers often use their mathematical understanding of bias precisely to argue for (and strive toward) the moral neutrality of ML (Amoore 2020; Lewis 2021). However, EDAC is harmful and impactful not because of data or ML-related “errors” but because stakeholders exploit its ability to performatively produce phenomena that validate and strengthen racialized policing. If the way bias is defined and conceptualized is determined by different social values (Blodgett et al. 2020), the question must instead be: which kinds of partnerships and uses are harmful, and why? A performative understanding of AI can also demonstrate why incremental attempts to “detect and mitigate bias” in systems, adopted by many institutions including the UK government (CDEI 2020), are not appropriate for all kinds of AI. As Timnit Gebru (2019) has made clear, framing anti-bias measures as equalizing performance across groups doesn’t respond to questions about “whether a task should exist in the first place, who creates it, who will deploy it on which population, who owns the data and how it is used.” It also keeps development and deployment decisions in the hands of technologists (Blodgett et al. 2020).

Performativity would help all stakeholders take a more active view of how EDAC creates “anomalous events” (protests) which are subsequently violently policed because of the clients for whom the data are made actionable. The politics and goals of law enforcement at any given historical moment should be understood as part of how Dataminr and Geofeedia’s AI systems function rather than as a consequence or secondary use of these technologies. This hypothesis is dependent on an expansive definition of AI as a system that is both—and at once—human and technological. Perhaps unsurprisingly given that AI is still being debated at a conceptual level, 6 definitions of AI used in certain domains are often remarkably reductive and incomplete. To explore what makes AI “performative,” we need to take a broad view of AI and its component processes. A performative definition of AI that hinges on what it “does” rather than “is” allows for contextualizing technical issues, for example, in their deployment by border control and law enforcement. It also makes the way that AI operates accessible, thus opening up debates on how AI shifts power to technical and nontechnical stakeholders: the people building, selling, and buying this software as well as those who are subject to it, including populations whose data have been extracted or stolen to make the system function.

Given the variation of stakeholders and component parts required for AI to operate, Heather Murray’s definition of biometric technology as a set of practices is applicable to most forms of AI (Murray 2007, 349). We define AI as a set of socio-technical practices that begin at inceptive planning meetings and continue beyond its deployment, extending to human interpretations of AI’s outputs as well as its secondary uses. This definition is important to better understand how AI always makes bodies intelligible through the lens of cultural norms and assumptions, which are incorporated in the design, engineering, policy, and regulation processes that contribute to the transformation of an idea into an “AI.” We reference all these components when we use the term AI and recognize their overlappings and imbrications. In sum, our conceptual approach adopts a more capacious definition of AI that exposes how EDAC tools are always complicit in power dynamics that produce the very realities they supposedly identify.

Dataminr: Making Data Actionable

We focus now on Dataminr and Geofeedia protest detection tools to explain how they generate gendered and racialized interpretations of what a dangerous crowd looks like. First, we look at Dataminr to illustrate how it integrates with its clients’ infrastructures, business goals, and politics—and how these influence the results Dataminr produces. Through its collaboration with US law enforcement, it cannot live up to its advertised claim of being objective and human-free. That is because US law enforcement is an institution made up of humans and has a long history of surveilling and targeting black activists who struggle for justice (ACLU 2022). Dataminr, like other EDAC tools, is imbricated with social institutions with which it enters into political alliances, which we conceptualize as a techno-human assemblage. As such, it captures and fixes the world in a particular way each time it identifies and demarcates an event. This amounts to a Baradian agential cut, where each cut incorporates power dynamics. These agential cuts create dangerous protests—that is, events with threatening connotations that function as speech acts. We then use the Geofeedia tool to expand on the citational aspect of performativity and to illustrate how protests are produced as iterative citations of racialized indicators (e.g., racialized hashtags). Although we focus more on the observational aspect of performativity with Dataminr, and on the citational aspect with Geofeedia, we show that both aspects apply to both tools. Finally, as a consequence of our analysis, we demystify the predictive abilities of these systems and reformulate them as performative. While acknowledging with Amoore that AI potentially occludes the ambiguity of the future through the act of attempting to calculate it, a performative understanding of how AI cites norms would give the AI community further insight into how AI produces the future that it claims to predict.

Following the murder of George Floyd in May 2020, Dataminr partnered with the New York and Minneapolis Police Departments to alert them to BLM protests by tracking social media data. The ACLU exposed how Dataminr’s interventions were contributing to racist policing, and Twitter subsequently asked Dataminr not to grant fusion centers in the United States direct access to Twitter’s application programming interface; Dataminr complied. 7 Yet law enforcement continues to receive Dataminr alerts, meaning that Dataminr still contributes to domestic surveillance, albeit not through fusion centers (Crowell 2016). Other nations have yet to put in place limitations on law enforcement’s access to Dataminr’s full services. In 2016, Dataminr even worked with South African law enforcement to monitor students at the #Shackville protests, which aimed to combat black students’ lack of access to decent university accommodation at the University of Cape Town (led by the Rhodes Must Fall movement). 8

To analyze Dataminr’s performativity in more detail, we look at how it processes data gathered from social media to generate textual messages (“First Alerts”) directed to the client (law enforcement) that trigger an action (police dispatch to the place where a supposedly dangerous protest is happening). Dataminr defines and detects anomalous events by analyzing social media data streams using a combination of AI techniques such as natural language processing, computer vision, and audio processing. It describes this process in technical jargon as “a Multi-modal Understanding and Summarization of Critical Events for Emergency Response” (Jaimes 2019). This means that Dataminr simultaneously analyzes many different kinds of data (multimodality) with the aim of creating a complete picture of what’s going on at an event. Appropriating the language of big data, it emphasizes the “volume, velocity, and variety” of its data processing (Jaimes 2020). 9 This supports the idea that EDAC is not only omniscient but neutral, operating with minimal human input: “97% of our alerts are generated purely by AI without any human involvement” (Biddle 2020). The implication that it provides a “view from nowhere” of disciplinary objectivity (Stitzlein 2004; Gebru 2019) is emphasized in its claim to “detect” and “track” protests while customers “listen” (Kinsey 2019; Dataminr c n.d.).

However, a performative reading of Dataminr shows that “tracking” and “listening” are not activities conducted extra-contextually. Dataminr’s marketing materials largely spotlight benign scenarios (such as fire detection based on rapid analysis of Instagram images and associated texts). Yet in the context of protest detection, tracking and listening should be understood not as acts of observation but as racializing processes that create a policeable landscape. Although Dataminr claims to “build a greater understanding of important events and threats” (Dataminr b 2016), it actually makes active decisions about when an event becomes a threat, and when that threat becomes worthy of police intervention. These decisions are mediated by power dynamics between institutions (invested in certain sociocultural beliefs and values) and different parts of the population. This means that when Dataminr scans an area for events on behalf of law enforcement that has an adversarial default position toward people of color, it does so through a racializing lens regardless of whether an event concerns racial issues. Therefore, an event that is constituted by Dataminr as less threatening, following its integration with law enforcement’s policing infrastructure, is also more likely to connote whiteness. Protests characterized by whiteness are ones that the system decides are neutral or nonviolent. This finding is supported by extensive work that conceptualizes race not as fixed identity but as shifting relations of power and domination, with the effect of racial difference being played out against a white norm (hooks 1992; Carby 1999, 249-50; 1992, 12). When Dataminr operates in places where white people have traditionally occupied positions of power, it also reflects the primacy of whiteness in these spaces. With whiteness made invisible by being the norm, blackness is made hypervisible in the form of deviation or deviance. 
Blackness emerges in Dataminr’s system as an abnormal crowd that triggers an alert. Performativity can help us understand these racial dynamics by showing that Dataminr doesn’t simply locate, extract, and share information but actively engages in normative meaning-making. Protests materialize observationally and citationally according to law enforcement’s existing concerns and perceptions of what a protest is and what kind of people are likely to make it turn dangerous.

A technical analysis supports this theory. Examining a Dataminr patent from 2016 (Bailey et al. 2016), we find it uses language centered on human agency to foreground the fact that Dataminr works with clients to produce what, in technical terms, is called an “ontology” and a “taxonomy” with which to classify and interpret the world. In other words, Dataminr claims to produce a representation of the world that aligns with the clients’ own worldview. Therefore, by working with the police, their system participates in the mechanisms and infrastructure of racialized policing. The patent describes in abstract terms a system and method for receiving and analyzing textual microblogging messages (such as Tweets), which allows the client to search analyzed messages using key words that represent their interests. It also alerts the client to unusual levels of activity, like increased quantities of messages about a topic deemed of interest by the client. The analysis of messages includes the input of microblogging messages, their tokenization into relevant components (words, hashtags, etc.) and the translation of each message into a “vector”—that is, a digital representation of the message as a set of components (words and/or word phrases, metadata, and a sentiment score indicating if the message is positive, negative, or neutral). It also clusters messages according to time intervals that range from one minute to one year, classifies the clustered messages according to classification rules tailored to the client to produce sets of scored messages, and matches the scored messages to search requests from the user. It calls this process of aggregation a “prediction.” The system also includes methods for alerting the user when a prediction exceeds a predetermined level—that is, when an abnormally high quantity of messages is detected in a certain time interval. Again, these are messages about a certain topic that the user deems of interest.
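The steps the patent describes can be made concrete with a deliberately simplified sketch. It is not Dataminr’s implementation: the names `Message`, `CLIENT_TOPICS`, `score_window`, and `alerts` are hypothetical, the “vector” is reduced to a bag of words and hashtags, and the sentiment score is omitted. What the sketch is meant to show is that the topics of interest and the alert threshold are supplied from outside the data, so that the same stream of messages yields different “events” under different client settings.

```python
import re
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class Message:
    text: str
    timestamp: int  # seconds since an arbitrary start (assumption)


# Hypothetical client-supplied topics of interest.
CLIENT_TOPICS = {"protest", "#march"}


def tokenize(text):
    """Split a message into lowercase words and hashtags."""
    return re.findall(r"#?\w+", text.lower())


def vectorize(message):
    """Represent a message as a bag of its components (a crude 'vector')."""
    return Counter(tokenize(message.text))


def cluster_by_interval(messages, interval_seconds=60):
    """Group messages into fixed time windows (here, one minute)."""
    windows = defaultdict(list)
    for m in messages:
        windows[m.timestamp // interval_seconds].append(m)
    return dict(windows)


def score_window(window_messages, topics):
    """Count the messages in a window that mention any client topic."""
    return sum(1 for m in window_messages if topics & set(vectorize(m)))


def alerts(messages, topics, threshold, interval_seconds=60):
    """Return the windows whose topic count exceeds the preset threshold."""
    windows = cluster_by_interval(messages, interval_seconds)
    scores = {w: score_window(ms, topics) for w, ms in windows.items()}
    return sorted(w for w, s in scores.items() if s > threshold)


msgs = [
    Message("Big protest downtown", 5),
    Message("protest now #march", 20),
    Message("Join the #March", 50),
    Message("nice weather", 70),
    Message("protest", 100),
]
print(alerts(msgs, CLIENT_TOPICS, threshold=2))
print(alerts(msgs, CLIENT_TOPICS, threshold=0))
```

With a threshold of 2, only the first one-minute window is flagged; lowering the threshold to 0 flags both windows. Nothing in the messages has changed between the two calls, only the client-facing parameters.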

Importantly, the classification rules are stored in a database and they are dynamically generated by the system using a “knowledge base,” whose contents are provided by the user. The knowledge base contains the aforementioned “ontology” and “taxonomy,” based on topics (words, hashtags, etc.) of interest to the client. Dataminr’s performativity results from the fact that its taxonomies and ontologies are created in a circular process, where the client shapes how the product works because adjustments are made on the basis of clients’ feedback. If the client feeds back to the system that certain alerts are or are not working for them, this reinforces or adjusts the knowledge base and how the system generates classification rules. Not only does a racist client worldview result in a racist technology, but it also results in a technology that is actually impossible to de-bias through purely technical means. This is because the decision-making process that transforms Tweets into attention-worthy “events” is distributed across multiple human and nonhuman agents and continually adjusted according to past and present decisions. In Baradian terms, Dataminr’s knowledge base functions as an observing apparatus that produces the world in the act of observing it.
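The circularity of this process can be illustrated with a toy model. The sketch below is an assumption-laden simplification, not the patented mechanism: `KnowledgeBase`, `rule_score`, `feedback`, and `should_alert` are hypothetical names, and the “ontology” and “taxonomy” are collapsed into a single table of term weights. It shows only the general shape of the loop, in which a client’s confirmation of one alert changes the rule that decides the next.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeBase:
    # Hypothetical per-term weights standing in for the client's
    # "ontology" and "taxonomy".
    weights: dict = field(default_factory=dict)

    def rule_score(self, terms):
        """Classification rule derived from the current weights."""
        return sum(self.weights.get(t, 0.0) for t in terms)

    def feedback(self, terms, useful, step=1.0):
        """Client feedback raises or lowers the weight of each term in an alert."""
        delta = step if useful else -step
        for t in terms:
            self.weights[t] = self.weights.get(t, 0.0) + delta


def should_alert(kb, terms, threshold=1.5):
    """An alert fires when the rule score exceeds the threshold."""
    return kb.rule_score(terms) > threshold


kb = KnowledgeBase({"crowd": 1.0})
print(should_alert(kb, ["placard"]))          # no weight for "placard" yet
kb.feedback(["crowd", "placard"], useful=True)
kb.feedback(["placard"], useful=True)
print(should_alert(kb, ["placard"]))          # the same term now triggers an alert
```

In this toy model the term “placard” carries no signal until the client marks two alerts containing it as useful, after which it triggers alerts on its own. There is no single biased parameter to correct, because the rule is the sediment of earlier client decisions.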

To expand on this point, it is worth looking at Dataminr’s explanation of how it flags events as critical. It claims to provide clients with actionable data by transforming massive multifaceted data streams into “a very small set of highly valuable and customer-readable alerts,” delivered to clients in real time (Jaimes 2019). An alert is therefore the translation of social media data into a textual summary legible to clients and aligned with their priorities. Of course, what data are gathered and how they are prepared and processed depend on what will be important in their deployment context (Seaver 2017). For example, when analyzing a combination of images and text from social media platforms, Dataminr uses various AI techniques to determine which features of a given pair of text (word embeddings) and image (feature maps) are most salient for the detection of anomalous events (Jaimes 2020). To return to the fire scenario, Dataminr gives the example of an image wherein a portion of the sky which appears to denote the presence of smoke is the most salient area when assessing the possibility of a fire. But it is only when the image of smoke is associated with certain words (“burning”) that the image is constituted as a critical event (a fire). This is because a patch of smoke in the sky is statistically “anomalous” and such deviation from the norm is reinforced by cross-referencing the image with human-generated text (typically, a social media post from a nearby witness). In Baradian terms, apparatuses of observation (in this case, the software and the human and nonhuman agents involved in data generation, collection, analysis, and processing) are material-discursive processes that determine which data constitute “digital evidence” of an event (Jaimes 2020). Following this logic, we can also say that data are made intelligible and meaningful through the system’s existing understanding of what a real-time breaking event looks like. 
In fact, interpretations of an event will only be validated as correct if they align with past data (Chun 2021, 160). This process is therefore iterative, because what has already been constructed as an event influences the construction of current and future events. For example, once it has identified flames as critical in determining the nature of a fire (Jaimes 2020), and once it has associated them with significant word embeddings confirming this, Dataminr classifies the image/text pair as a critical event requiring an alert to be sent to the customer. It also memorizes the pair so it can be used to identify similar events in the future. This process is no bird’s-eye-view detection, because the system is citing the client’s previous observations of abnormality. It is both linguistic (occurring at the level of computer code, text embeddings, and visual cues) and material, where materiality comprises data scientists, neural networks, and the physical environment of the event. Once again, in Barad’s terms, the observational apparatus determines the onto-epistemological cut, wherein embodied reality and knowledge about that reality emerge together.
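The iterative logic described above can be sketched in a few lines. This is an illustration under stated assumptions rather than a description of Dataminr’s models: the short lists of numbers are toy stand-ins for image feature maps and word embeddings, and `EventMemory`, `confirm`, and `is_event` are hypothetical names. The sketch shows one way in which an image/text pair could be constituted as an event only when both modalities resemble a previously confirmed pair.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class EventMemory:
    """Stores previously confirmed image/text pairs (hypothetical)."""

    def __init__(self, threshold=0.9):
        self.threshold = threshold
        self.exemplars = []  # list of (image_features, text_embedding)

    def confirm(self, image_features, text_embedding):
        """Memorize a pair that was treated as a critical event."""
        self.exemplars.append((image_features, text_embedding))

    def is_event(self, image_features, text_embedding):
        """A new pair counts as an event only if BOTH modalities
        resemble the same stored exemplar."""
        return any(
            cosine(image_features, img) >= self.threshold
            and cosine(text_embedding, txt) >= self.threshold
            for img, txt in self.exemplars
        )


memory = EventMemory()
print(memory.is_event([0.9, 0.1], [0.1, 0.9]))  # nothing memorized yet
memory.confirm([1.0, 0.0], [0.0, 1.0])
print(memory.is_event([0.9, 0.1], [0.1, 0.9]))  # resembles the confirmed pair
```

Before any pair has been confirmed, nothing is recognized as an event; afterwards, only inputs that resemble the confirmed pair are. In this toy model, recognition is a citation of earlier decisions rather than a fresh observation.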

When EDAC associates gatherings of black people with violent protests, it “interpellates” or “hails” (Butler 1997, 25) these gatherings into the prevailing ideology. It does this to alert law enforcement, saying “look, a protest!” EDAC’s perception of violence is based on racialized indicators consistent with those of social and legal institutions. What is treated as a potentially violent protest—and therefore unjustly policed—is subsequently fed back into the system, shaping Dataminr’s identification of future protests.

The final step in the process of protest detection is the construction of a “valuable alert,” which is created using Natural Language Generation 10 techniques and takes the form of “a textual description that summarizes the event as an alert” (Jaimes 2019). At this point, the “event” (for now still just a combination of numerical values constituting the AI system’s internal representation of the relevant parts of a post on social media) is translated into a piece of text stating that an event is taking place and asserting its level of criticality, that is, the likelihood that violence will occur. The very existence of this description and its rapid dispatch to the chosen agency (law enforcement, the fire brigade) is part of the performative constitution of the event. By triggering action, the alert functions as a performative utterance (a speech act) that changes things in the world. When EDAC is integrated into, for example, law enforcement procedures, the institutions involved must have greater awareness of how existing practices affect the system’s reliability in detecting future events.

Once again, the decision-making process that transforms social media data into a breaking event is distributed across multiple agents, so technical de-biasing mechanisms such as interpretability tools could not reveal the source of harm in Dataminr’s system. Instead, EDAC should be understood as implicated in the values, ideologies, and power dynamics that preexist the constitution of the event—and which play out in and as the event. Thus, we begin to see why the (racialized) constitution of a gathering of people as a protest cannot be reduced to a bias issue. Instead, we must understand that potentially violent protests are performatively produced by AI systems—not in the sense that they do not exist in reality but that whether a gathering of bodies in public space is constructed as a peaceful crowd or as a violent protest reflects previous operators’ perceptions about whether there is implicit menace in black, brown, and white people being together in public space.

Geofeedia: Producing the Normal and the Abnormal

Another influential private company that gained notoriety by profiling the risk of particular events to facilitate institutional intervention is the US-based social media intelligence platform Geofeedia. The company also contributed to AI-driven racialized policing (Amoore 2020). With Dataminr, we have shown that a future protest is identified according to past protest patterns and how this constitutes both an agential cut and an authoritative speech act. Along complementary lines, with Geofeedia, we explore in more detail how racialization is built into this process performatively and citationally. Like Dataminr, Geofeedia’s software implicitly makes decisions about whether a crowd is normal or abnormal, with abnormality indicating the threat of violent protest. Geofeedia is a search-by-location tool that allows customers to access and filter real-time social media feeds anywhere in the world. From providing companies with customer feedback on retail experiences to “event monitoring” (Geofeedia 2015), the tool demonstrates what Browne (2015) calls the multiple forms of surveillance that pertain to all stages of the moral spectrum. The value proposition for this piece of software is providing “relevant and actionable data” that corresponds with the customer’s chosen “lens” (Geofeedia). The company even appears to point to its performative capabilities when allowing clients to produce different worlds through the application of filters (“lenses”) to data. Its flexibility of function means customers can choose what they survey and how they action the data accrued. This lens does not aid or crispen their vision but determines the threshold of intelligibility which distinguishes an innocuous crowd from a dangerous protest. Therefore, the reality of what the lens does undermines Geofeedia’s simultaneous claim to offer an objective and comprehensive view of reality. 
This adaptable machine interface requires careful and precise deployment, particularly when stakeholders such as law enforcement affect how those data are made actionable.

The following brief analysis of Geofeedia’s partnership with BPD in 2015 exposes three aspects of this performative process. First, as with Dataminr, this process is iterative because protests emerge as copies without an original; second, it citationally racializes protests in keeping with the existing norms of racialized policing; third, it produces the future events it claims to predict. Following the death of Freddie Gray, a 25-year-old African American man who suffered a fatal injury in police custody in April 2015, multiple protests took place across the United States. Geofeedia attracted law enforcement clients that sought to shut down the protests by positioning itself as a platform that facilitated “anomaly detection.” This amounted to producing and quantifying “anomalousness” in crowds and qualifying them as protests.

In April and May 2015, BPD collaborated with Geofeedia, running posts provided by Instagram 11 against its own facial recognition software. Geofeedia scraped photos and text from protesters’ social media accounts (Dataminr c n.d.). This meant that when faces in the Instagram posts corresponded with faces in a database of citizens with outstanding warrants, BPD went into the protests to make arrests in a deliberate effort to shut them down (Amoore 2020, 3). In Cloud Ethics, Amoore explains how in 2015 the Geofeedia algorithms began to flag crowds of people as potentially dangerous if they resembled the Freddie Gray protests, that is, gatherings predominantly made up of African Americans carrying placards with “police terror” messages (Amoore 2020, 3). Their system did this by producing “scored outputs of the incipient propensities of the assembled people protesting Gray’s murder” (Amoore 2020, 4). In Butlerian terms, this process is citational because the protests “recognized” by the system are always repetitions of the same model of a protest, but are also not identical to that model, and are therefore repetitions with a difference. In a circular movement, the system produces and establishes what constitutes a protest according to the customer’s lens, which in turn determines which data are used to train the protest-recognition algorithms. Therefore, there is no “original” protest: only data that keep being fed back into the system. This process necessarily involves exposing protesters to the racist interpretative framework produced by Geofeedia’s collaboration with law enforcement (Google Developers 2012) and therefore contradicts Geofeedia co-founder and CTO Scott Mitchell’s claim that the software offers uniquely contextualized information from the perspective of social media users (Google Developers 2012). 
On the contrary, Geofeedia decontextualized Freddie Gray protesters’ social media posts so that they no longer expressed the perspective and sentiments of the people who posted them (sadness, anger) but were instead seen as threatening and indicating violent intent from the adversarial default posture of the BPD.

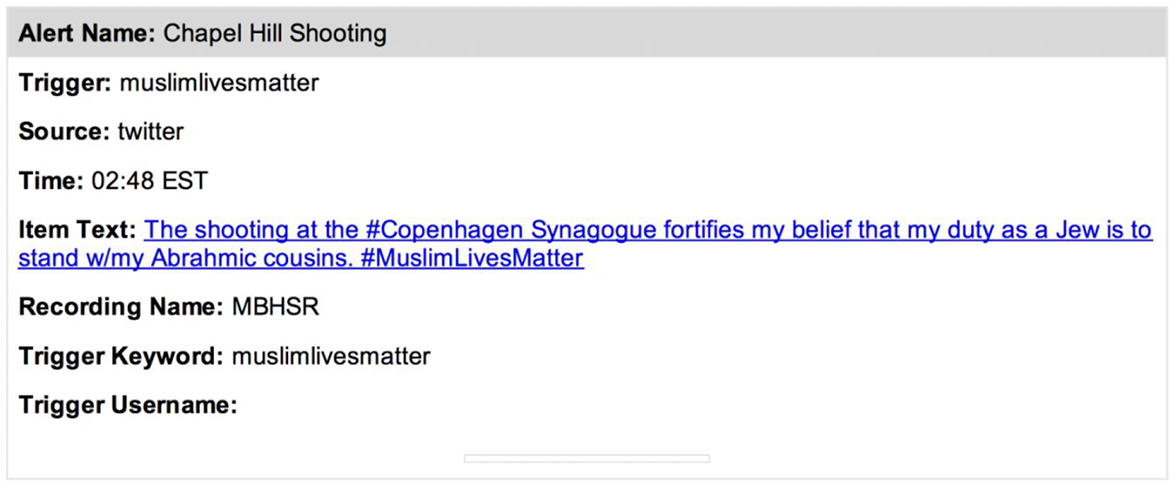

Protests are racialized citationally not only because African American bodies are consistently identified as dangerous through image recognition but also because Geofeedia flags text that contains racialized indicators. This process extends to other racial groups too. When the ACLU conducted an investigation into Geofeedia in 2016, they revealed that key words used by BPD to track protests on Twitter included #muslimlivesmatter, a search mechanism matching the current Islamophobic bent of North American and European policing and juridical systems, and which was specifically used to train Geofeedia’s algorithms. “Muslimlivesmatter” became a “trigger” for BPD (see Figure 1).

Geofeedia, b. 1727.

Law enforcement’s choices reinforced and expanded this performative process of racialization. The ACLU found that local law enforcement in Oakland, Denver, and Seattle deployed Geofeedia to “target neighborhoods where people of color live, monitor hashtags used by activists and allies, or target activist groups as ‘overt threats’” (Cagle 2016). It also found that San Jose PD used Geofeedia to explicitly monitor South Asian, Muslim, and Sikh protesters (Ozer 2016). In other words, the perceived attributes of a crowd of protesters in Baltimore were entangled with BLM protests following the Chapel Hill Shooting, setting the parameters for what may be perceived as future protests worldwide. Geofeedia’s explicitly racialized construction of what constitutes a protest made the software appeal to the aims and intentions of law enforcement in several locations across the United States, for example, by positioning activists as threats in the language of domestic terrorism (Geofeedia b). Herein lies the intra-actional knowledge created by the software, which serves as a focal point for the legal system, the police force, and the capitalist drive to commodify personal data at any ethical cost. The metrics used to signal an abnormal gathering emerge from within the racialized landscape of US law enforcement, a context which directly informs how the statistical probability of violence occurring is generated. If “event monitoring,” “community engagement,” and “sentiment management” translate to shutting down BLM protests, then Geofeedia is true to its word (Geofeedia 2015).

Amoore has explored how AI occludes the ambiguity of the future through the act of attempting to calculate it, thus limiting people’s ability to engage in meaningful political action. Taking this one step further, a performative understanding of how AI cites norms would give the AI community better insight into how AI produces the future that it claims to predict. Both Dataminr and Geofeedia reassure customers about the accuracy of their predictions. Long lists of ML techniques and tools on Dataminr’s website imply that an increase in AI systems results in more objectivity, namely in a stronger ability to detect anomalous events. It implies that the combination of these tools turns fragments of information into a cohesive view of the event in the present, as well as a warning about a possible critical outcome in the future if their client (i.e., law enforcement) does not take preventative action. This is an ontological sleight of hand, where reality comes into being through arrangements of data extracted from social media. By claiming that AI identifies people’s propensities to turn a protest violent, Geofeedia and Dataminr claim they know which people are likely to cause trouble for their clients. Yet protesters’ alleged potential for violence is the result of EDAC’s onto-epistemological violence, which results in further violent repression by the police. Demystifying the predictive abilities of these systems and reformulating them as performative is therefore crucial to prevent private social media monitoring companies from influencing how situations are policed.

The lens of performativity also provides some clarity about what political and policy interventions are needed. First, it responds to the demand to stop asking how to de-bias AI and start compiling proof that AI shifts power in society. Second, it demonstrates why ethical AI should account for how it makes decisions and prompts actionable results rather than merely abiding by formalized ethical imperatives. This responds to our theoretical conclusion that AI systems are agential cuts into the world that unavoidably obscure something while making something else visible. EDAC must not claim to “detect,” “identify,” and “observe” with any degree of neutrality.

Conclusion

This work translates concepts from feminist, anti-racist, and gender studies into meaningful ways forward for AI practitioners, clients, and other stakeholders when responding to the issue of AI’s implication in existing power structures. We have applied our study of AI’s performativity to interrogate specific cases of EDAC where AI harmfully racializes groups or individuals, and we have explained how the language of bias can be usefully supplanted by that of performativity to better express why and how this occurs, at both a social and technical level. We are aware that Dataminr and Geofeedia deserve a more extensive empirical investigation in the context of predictive policing and surveillance studies. We have used them here only as concrete examples to support and clarify our conceptual discussion. Equally, we do not argue that attempts to make the development and deployment of these systems more equitable should be abandoned—only that these “improvements” will only instigate superficial change if practitioners, clients, and the public are unaware that these systems are performative. Stakeholders must have a better understanding of how protest-recognition technology produces the protests that it claims to “detect” and “monitor.” This can help clarify why it is not possible to “de-bias” a system in isolation from, for example, racialized policing and protest management and unethical data collection practices. Understanding AI’s performative production of the disciplinary mechanisms of power is the first step to rethinking how information about protesters is produced, interpreted, and racialized.

Footnotes

Authors’ Note

Elsewhere, we have already discussed how establishing a dialogue between Butler’s and Barad’s theories can enable a close reading of neural networks as performative (Drage and Frabetti, Feminist AI: Critical Perspectives on Algorithms, Data and Intelligent Machines, Oxford University Press, July 2023, p. 7).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received financial support for the research, authorship, and/or publication of this article: Eleanor Drage received funding from a Christina Gaw postdoctoral research grant at the University of Cambridge.