Abstract

Roboticists are faced with a striking discrepancy between vision and demonstration of care with robots. On the one hand, research funders, policy makers, and entrepreneurs expect robots to become a panacea to impending demographic change. On the other hand, efforts to demonstrate that vision in care practice have largely remained unfulfilled. In this article, I investigate how roboticists manage and deal with this discrepancy between high expectations toward robotics research and what robots are capable of doing in practice. I will offer an extensive analysis of the efforts by roboticists and others to install, repair, stage, and, surprisingly, suspend robot dramas. Robot dramas comprise an ambivalent mix of experimental practices that seek to stage visions of care robotics while at the same time testing precarious phenomena of human–robot interaction. This relates two prominent but still largely disconnected strands of research in Science and Technology Studies: works on techno-scientific demonstrations, and on high and low expectations. Here, robot dramas are a crucial site for studying the conflicting interrelation between the theatrical performativity of demonstrations and the interplay of high and low expectations.

Introduction

Care robots have become real in two, largely disconnected ways in recent decades. On the one hand, there is the sometimes hopeful, sometimes gloomy vision of a future with robots often depicted as humanoid machines seamlessly interacting with older people and supporting their caregivers. Such accounts can be found in science fiction and popular media (DeFalco 2020; Meinecke and Voss 2018), but they also pervade scientific research and policy agendas. For instance, the belief that care robots will be necessary in an aging society has become ubiquitous and helped the field to gain increasing political weight (Lipp 2019; Wright 2021). On the other hand, efforts to demonstrate how that vision is realized in care practice have remained a difficult endeavor and largely confined to research (Maibaum et al. 2021). Occasionally, care facilities feature tele-robots or robotic pets, but such exceptions only confirm the overall impression that care robotics still has not managed to live up to its promises.

Because of this persistent discrepancy, roboticists are increasingly expected to demonstrate the usefulness of their robots. In this article, I investigate how roboticists manage and deal with this discrepancy between future-oriented expectations toward robotics research and robots’ current capabilities. Instead of using this apparent gap to make ontological arguments about what robots are and thus can or cannot do, I analyze efforts by roboticists and others to install, repair, stage, and, surprisingly, suspend robot dramas in the course of so-called realistic tests. I use the term robot dramas to describe an ambivalent mix of experimental practices that seek to stage visions of care robotics and at the same time test phenomena of human–robot interaction through theatrical practices. Robot dramas are not a means to dissolve that discrepancy, but a medium to render actionable the gap between vision and research practice. Analyzing robot dramas relates two prominent but still largely disconnected strands of research in Science and Technology Studies (STS): studies on techno-scientific demonstrations, and works on high and low expectations. Here, I show that robot dramas are a crucial site for studying the sometimes conflicting interrelation between the theatrical performativity of demonstrations and the interplay of high and low expectations. Furthermore, robot dramas harbor and materialize certain imaginaries of care and old age, which inform both the vision and demonstration of care with robots. The critical analysis of robot dramas thus opens up new entry points for questioning and reflecting on the ongoing interconnection of robotics and care (Lipp and Maasen 2022). My study also contributes to and expands on the emerging body of critical literature at the intersection of STS and aging studies (Peine et al. 2021).

I will begin with a short overview of STS on technical demonstrations and works in the sociology of high and low expectations. Next, I will show that theatrical practices play a particular role in robotics. They perform and materialize the link between technical tests and broader visions of an aging society. I theorize this in terms of an interplay between two different modes of experimentation: as-if and what-if. Then, I will briefly introduce the empirical case of this paper: a European care robotics project that seeks to develop and test robotic services for older people in realistic home environments. My analysis is grounded in a video-assisted ethnography that is specifically geared to register and amplify the simultaneity of front- and backstage activities in robot dramas. Next, I analyze how roboticists create robot dramas in the course of these realistic tests. I conclude by discussing the potential openings that the analysis of robot dramas provides for the critical study of care technology in the broader STS context.

Visions, Demonstrations, and the Interplay of High and Low Expectations

One of the central arguments of STS is that the success and failure of technology cannot be explained by technical and scientific factors alone. Social and political factors have to be included in the equation (Kuhn 1962; Collins 1981; Pinch and Bijker 1987). A crucial avenue for bolstering the social construction of technology and science has been to show their dependency on different forms of staging vis-à-vis public audiences (Latour 1988; Latour 1996; Shapin and Schaffer 1989). In the following, I will draw together two traditions that have made this claim in different but connected ways: On the one hand, scholars in the sociology of expectations have focused on the performativity of promissory visions in forming new techno-scientific fields. On the other hand, scholars have attended to the manifold, decidedly theatrical practices by which scientists seek to align their research with such promises by way of technical or scientific demonstrations. These largely disconnected traditions overly emphasize optimistic visions as well as the affirmative activities by scientists that seek to align with them. I argue that the more recent interest in the interplay of high and low expectations offers a much-needed update for considering these strands in a more connected manner and for showing how scientists themselves recalibrate overly optimistic expectations.

Science and technology are constitutively future-oriented activities. They are intimately entangled with promises concerning the prospective impact and meaning of techno-scientific projects. These promises are generative in that they drive, structure, and legitimize those very activities (Borup et al. 2006). Their ubiquity has motivated works in the sociology of expectations to take promissory discourses of emerging technology as the entry point for studying their social construction (van Lente 1993; Brown and Michael 2003). Such promissory discourses are constitutive and performative in that they can attract significant interest in a given scientific field. Here, scientists and others connect their “vanguard visions” to existing socio-technical imaginaries and narratives of what is deemed desirable (Hilgartner 2015, 50). Such wider imaginaries are often embedded in national or transnational narratives of progress and urgency (Jasanoff and Kim 2015). Care robots, for instance, are not only seen as caring machines but also as a potential new market that promises economic growth and new jobs (Lipp and Maasen 2022). Promissory work can then range from simply making bold claims about future trajectories in certain technological fields to infrastructuring those fields, for example, by way of industry reports or research agendas (Pollock and Williams 2010). Robotics is an especially illustrative case in this regard. It harbors and uses tropes taken from popular culture, especially science fiction, to bolster its claims about robots’ benevolence in society. Such technological visions shape the present by incorporating those claims into funding infrastructures (Lipp 2019, 115-20; Wright 2021) and research practices (Bischof 2017; Voss 2021). In the case of care robotics, this includes imaginaries about care and old age, which often harbor rationalistic (Vallès-Peris and Domènech 2020) and ageist biases (Neven 2011).

Scientists commonly struggle to align their day-to-day work with those promissory discourses of which they are often an active part. They need to stage what is not yet possible or certain by performing a vision as if it was already real. This serves both to justify their research in front of funders (Möllers 2016) and to translate their expectations into do-able problems (Fujimura 1987). Demonstrations have been central to the social construction of scientific facts in modern science (Latour 1988; Shapin and Schaffer 1989) and of technology’s benevolence for society (Latour 1996). Here, STS works have used the metaphor of theater to describe how scientists and engineers establish links between their work and broader societal concerns. For instance, in his study of the pasteurization of France, Latour (1988) investigates the many “theaters of proof” (pp. 86-87) through which Pasteur could render his method scientifically indisputable and socially robust in front of a diverse audience of farmers, politicians, and other interest groups. More recently, scholars have studied contemporary forms of “techno-scientific dramas” (Möllers 2016): instances of scientists staging their work in front of public audiences such as users or funders. Here, the dimension of the theatrical, that is, the creation of a dramatic frame in which actors act “as-if” a certain technology was already fully operational, plays a crucial role (Smith 2009). This line of micro-studies has made extensive use of Goffman’s terminology of front- and backstage as well as his frame analysis (Goffman 1956) to describe the manifold and, at times, precarious ways by which scientists seek to convince nonscientific audiences about their projects or products.

Both of these traditions mostly focus on optimistic, promissory discourses or scientists’ attempts to align with them. However, of late, scholars have pointed to the interrelation of high and low expectations (Gardner, Samuel, and Williams 2015), especially in the context of an emerging bio-tech industry and neuroscientific research (Pickersgill 2011; Fitzgerald 2014; Martin 2015). Studies in this vein have shown that scientists and other actors engage in calibrating the expectations of different audiences vis-à-vis their research and technological promises. They mediate between a regime of hope described above and a regime of truth that relates to the everyday uncertainties of science in the making, for instance, in the context of translational neuroscience (Gardner, Samuel, and Williams 2015, 1003-4). Additionally, such activities aim to align expectations on different levels between broader policy imperatives and individual technological projects (Konrad 2006). On the one hand, scientists seek to stabilize and feed into those established visions (van Lente 1993), often by way of demonstrations. On the other hand, this alignment work may fail. It thus potentially contests the promissory discourses that have been instrumental in forming and funding these fields in the first place (Roßmann 2021). Failed prototypes, experiments, or simulations may seriously put into question the prospect put forward in those visions. However, as Fitzgerald (2014, 242) argues in the case of neuro-scientific autism research, lowering expectations is not simply threatening but may be important for the maintenance of ambiguous techno-scientific projects, namely for enrolling patients and other users in ongoing innovation work. The present article will investigate this ambivalence and friction between vision and demonstration in the case of care robotics.

Robot Dramas: Frictions between Vision and Demonstration

Visions and demonstrations in robotics have been the object of increasing scholarly attention in STS. On the one hand, there are works which focus on the visions and imaginaries inspired by and embodied in robotic technology. Castañeda and Suchman (2014) have investigated and compared roboticists’ demonstrations of their robots with other figures that have been taken as surrogate for the human, that is, the child and the primate. They show that robots taken as a model for human qualities work by being staged in a subjective manner, as representations of the almost human. Robots become “subject-objects” as a result of these staging efforts (Suchman 2011). The human remains an important benchmark for development in robotics. Hence, scholars have traced the different ideas of “the human” as they materialize in robotic objects linking their design to long-standing histories of racial and gendered hierarchies (Rhee 2018; Atanasoski and Vora 2019). More closely connected to the topic of care robots, Vallès-Peris and Domènech (2020) have analyzed roboticists’ imaginaries about care work illustrating the discursive conditions under which automating care becomes actionable in robotics research. For instance, roboticists imagine care as fragmented, dissectible into discrete tasks that can be matched with certain robotic capabilities (see also Lipp 2019, 108-9). Hence, care robotics research renders care work into programmable features that largely disregard the situatedness of care work (for a critique, see Maibaum et al. 2021).

On the other hand, there is an increasing body of literature investigating the epistemic practices of roboticists in the laboratory and beyond. Alač and colleagues (2011) have studied roboticists’ coordinative efforts to test social robots in a preschool setting. They argue, contrary to the imaginary of social robotics, that robotic sociality does not reside inside the machine but rather in roboticists’ efforts to both spatially and socially arrange the test setting by, for example, directing the attention of school children toward the machine. Bischof (2017), too, investigates the epistemic practices in social robotics laboratories. He argues that robot experiments rely on both introducing and reducing complexity in test settings. By way of this double strategy roboticists aim to expose their machines to increasing levels of complexity (as is promised in robot visions) but also shield them from complications that these machines cannot (yet) deal with (as is usually obscured in those discourses). Finally, Lipp (2022) has shown how roboticists protect their robots from these unforeseen complexities in testing environments. Contrary to the imaginary of robots caring for people, roboticists extensively care for their machines by rendering testing environments robot-friendly.

My contribution of robot dramas sits in between these two foci, between the imaginations about what to expect from robots and the technical practices of programming, building, and operating those machines in concrete environments. Few have hitherto investigated this nexus, in particular, the ways roboticists and others deal with the discrepancy between vision and epistemic practice. One particularly instructive example is Treusch’s work on companion robots (Treusch 2015, 2017). She combines an investigation of service robotics’ vision (and the status of domestic labor therein) with a study of the situated practices of demonstrating a humanoid robot in a kitchen setting. Here, she observes a constitutive ambivalence in how roboticists attribute both failure and success in front of external audiences. Roboticists interpret failure as realizing human traits of fallibility in the robot thus rendering it “legible as human” (Treusch 2015, 203). In doing so, she argues that demonstrations interrelate “the familiar with the not-yet-familiar as well as the present with the future and therefore constantly [negotiate] possible forms of robot companionship” (Treusch 2017, 13). Strikingly, failure does not necessarily lead to breakdown but rather encompasses the need to re-embed (and potentially displace) the robotic performance within wider imaginaries of robot companionship.

This nexus is where I contribute my analysis of robot dramas, between robot imaginaries and their processing in the course of robot demonstrations, between high expectations and their recalibration. In this paper, I will demonstrate that robot dramas sit in between a theatrical and a technical logic, between what Vermeulen (2016) calls “as-if” and “what-if.” On the one hand, testing robots in realistic environments should facilitate the immersion process of users and other witnesses in imagining a future where robotic machines might indeed care for older people. For this purpose, roboticists stage alignment by performing scenarios and environments as if these machines were already able to do what they are expected to do in the future. On the other hand, robot dramas exhibit a decidedly technical purpose, namely creating challenges for robotics research to test what happens if older people are interacting with machines in home-like environments. In this logic, roboticists foreground the technicalities of making robots work under realistic conditions and often lower expectations and assumptions about robots in care by conceding that robotics remains a very complex and challenging endeavor. Crucially, in robot dramas, these logics do not simply run in parallel. Rather, they frequently interfere and challenge one another. I argue that robot dramas seek to interface what has come to be expected of robots and what is do-able given their relatively precarious technicity. In other words, robot dramas engender frictions that result from the complex interplay between vision and demonstration of care robotics.

Case and Methods

In this article, I report the findings of a video-assisted ethnographic study conducted in a European care robotics project that was funded under the European Commission’s (EC) Seventh Framework Program and ran from 2012 until 2015. My analysis focuses on the project’s “second experimental loop,” where participants demonstrated and tested robotic services in the course of so-called realistic tests in a test apartment in a Northern European country. These tests took place during the month of August 2015. 1 They were organized according to different scenarios, each containing services that care robots could perform for older people in their home, including object transportation (e.g., fetching a glass of water), providing communication services to relatives, or reminding older people to take their medicine. Such services should allow older people to live longer at home and to increase their quality of life (for a critique of this discourse, see Neven 2015; López Gómez 2015). This policy rationale of aging-in-place has led the home to become an increasingly important arena for robotics research (Søraa 2021).

The project was interdisciplinary in nature, featuring twelve partners from academia, industry, and healthcare institutions. It also included the local municipality that operated the test apartment. Academic partners mostly came from engineering, human–robot interaction, and computer science. The consortium also included a gerontological research institute charged with developing the transportation, communication, and reminder services performed by the robots, as well as a company specialized in User Experience (UX) design. I accessed the project through this private company, which also defined my role in the project. I mainly helped the UX company in observing and evaluating the realistic tests. The main objective of these tests was to demonstrate care robotics’ technical feasibility and social acceptability under more or less realistic conditions. After each test users were asked about their opinion on the services and the robotic platform in a qualitative, guided interview.

The tests took place in an apartment situated within a care home and advertised as “one of the world’s largest and most homelike testbeds” (website). The apartment was believed to roughly represent the living environment of older people and thus provide realistic conditions for testing care robots. The apartment consisted of four parts that were relevant for the tests: a large living room in the center, a kitchen, and a control room, from where researchers would monitor and, if needed, intervene into the tests. Most of the test runs’ action took place in the living room, where the user was seated in a particular chair. The apartment was operated by an innovation network that included the local university and the public housing company that ran the care facility. The network also received funding from the European Union and featured numerous regional and national companies involved in healthcare technology.

In this article, I describe how roboticists and others create robot dramas. Next to field notes, interview transcripts, and project documents, I use video data from a set of cameras positioned in different places of the test apartment. Permission by all participants was obtained to publish these results while ensuring their anonymity. 2 Video is well established as a form of ethnographic research to understand socio-material practice (Pink 2010; Suchman and Trigg 1991), and I use video specifically to capture the simultaneous activities at the front- and backstage in the course of robot dramas. While roboticists use cameras themselves to observe the experiments’ front stage (i.e., the living room), the activities on the backstage (i.e., the control room) are seen as outside of the experimental situation. Hence, I have complemented roboticists’ use of video by installing cameras in the control room too, simultaneously capturing activities on the front- and backstage. This highlights the specific strength of video analysis to capture distributed social situations and their mediation through different scopic media (Tuma 2019). Here, it is important to note that, against the lure of objective video data, I follow Law (2004) in arguing that any method participates in the production of its data. Hence, the analysis of roboticists’ backstage activities in the control room should not indicate a privilege given to their technical perspective on the robot from behind the scenes. Rather, it allows me to register and amplify the frictions that arise in the course of robot dramas between the front- and backstage, between the designers’ and the users’ perspective on performing with robots.

Friction Points in Robot Dramas

I begin by reporting on the results of investigating roboticists’ practices of managing robot dramas in the course of the project’s realistic tests. I focus specifically on four friction points, where high and low expectations, vision and demonstration, and theatrical and technical modes of robotics experimentation coincide and interfere with one another.

Staging Home and Accommodating Robots

The space where the realistic tests take place, the test apartment, inhabits an ambivalent position in robotics research. On the one hand, roboticists are supposed to test care robots in realistic environments such as a fully furnished homelike apartment. In this sense, roboticists (and users) need to act as if robots were already a reality in older people’s everyday lives. On the other hand, this environment is specifically designed to accommodate and test robotic platforms under more or less controlled conditions. In this technical sense, the apartment is host to epistemic practices common in robotics experiments in the laboratory (Alač et al. 2011; Bischof 2017; Lipp 2022).

The apartment is supposed to allow for development and testing “grounded in reality” (Apartment website) by approximating the living environment of older people at home. For this, the apartment is situated within an actual care facility hosting residents of different ages who require different levels of care. Casual encounters between roboticists and residents happen in the hallway on a daily basis. This closeness to residents’ everyday life also makes it easier to recruit them as test subjects. The apartment is modeled after an actual apartment in the care facility, where all rooms are fully equipped with functional kitchenware and feature all sorts of decorations like plants or canvases on the wall. This should create a “pleasant and inviting” atmosphere for guests (Apartment manual). The homelike environment is also meant to stage a highly technologized, yet prototypical future of aging. The apartment is full of devices, machines, and gadgets (see Figures 1 and 2) that are not necessarily used for tests but rather serve as props for the broader vision of using robot technology in the care of older people. For instance, next to each device, there is a small frame hung on the wall explaining what this particular device is called and what it can supposedly do. In some sense, this apartment is used like a showroom allowing a glimpse into what is imagined as older people’s everyday life and into the broader vision of using robots at home.

Living room setup taken from apartment manual. Source: Photo taken by author.

This careful design of homelike conditions also serves a decidedly technical function. It is important to recall that robotics has developed as a laboratory science in recent decades (Bischof 2017). This means that robotic functions or capabilities are usually tested under very controlled conditions inside the laboratory. While tests with users are not entirely new for robotics research, they usually happen in spaces that grant roboticists almost complete control over the conditions of tests. In the test apartment, this is not entirely the case, because many of the environmental conditions may change throughout the tests. One example is the influx of daylight, since the living room’s rear wall is fully glazed. This may interfere with the robot’s visual sensors, which may then fail to recognize objects or users. Hence, the main aim of robotics research in this context is to test and improve the robustness of robots in such dynamic environments. Of course, this does not mean that roboticists do not exert any control. Rather, they adapt and strategically simplify the test situation in order to support the robot’s operation (for an extensive analysis of this in the same project, see Lipp 2022). As an example, roboticists restrict and define the user’s position (in this case, a particular chair), they fix or abort the robotic system from the control room in case of error, or they adapt the environment, for example, by removing carpets or covering certain parts of the apartment’s decoration. Robot dramas are not simply about staging older people at home but also about accommodating robots by controlling home environments.

The Theater of Autonomy and (In)Visible Repairs

Experimental control is a contentious issue in robotics research. As claims about robotic autonomy become increasingly important to the promissory discourse of this field, the persistent use of invisible, human control is seen in a more critical way. This is especially true in so-called Wizard of Oz experiments, where experimenters remotely control the robots to engender phenomena of human–robot interaction (Riek 2012). One common criticism is that such experiments study human–human interaction mediated via a robot and not human–robot interaction. The project studied in this article aims to apply as little control as possible and thus test robots under realistic homelike conditions. Roboticists do control the environment and the robot, but this control is permitted only to maintain situations of human–robot interaction when they fail or are on the verge of failing.

A crucial aspect of this form of control is that it mostly remains concealed from the eye of the user. During realistic tests, roboticists have to remain in the control room to stay “‘invisible’ and…monitor the situation through a screen” (experimental protocol, 68). For instance, upon the test subject’s arrival, roboticists would hastily close the door of the control room so the user cannot look “behind the scenes.” However, there are times when roboticists violate that order. In one instance, the team leader, a computer scientist, came into the control room after a test run asking his colleagues to be quieter. Their conversations and sometimes laughter should not be heard by the user. In this sense, the test apartment works as a “mundane technology” (Pinch 2010) to create the sense of robotic autonomy by rendering invisible (and inaudible) the constitutive human and technical labor that goes into maintaining it (Shapin 1989).

This is what I call a theater of autonomy. The realistic tests are constituted by a separation of two different social worlds: the experimental situation where user and robot interact with one another, and the control room where roboticists monitor the situation from behind the scenes. This is not just a feature of human–robot interaction experiments but integral to digital technology in general (Suchman 2007). Phenomena of invisible human work stretch from the maintenance and repair of digital infrastructures (Jackson 2014) through ongoing “ghost work,” for example, in flagging inappropriate content on social media (Gray and Suri 2019) to public demonstration videos of robots (Winthereik et al. 2008; Bischof 2017, 249-65). In this sense, theaters (in the plural) of autonomy are constitutive for the promise and practice of seemingly autonomous, digital technology.

Figure 3. Narrative description of scenario 1 as contained in the experimental protocol.

Roboticists frequently intervene into the experimental situation by, on the one hand, controlling the robot system and, on the other, instructing the test subject through the interviewer. These coordinative activities are mediated by a number of “scopic media” (Knorr-Cetina 2014) that configure roboticists’ access to the experimental situation. For instance, the experiments are recorded by four cameras and directly streamed onto one fourfold split screen in the control room. This setup is used to evaluate the interaction between robot and user and, at the same time, monitor the experimental situation and check for potential mishaps. Roboticists compare what they see on the video feed with computational processes “inside” the robot system, which can be accessed and manipulated via a desktop computer and a laptop. 3 This “synthetic situation” (Knorr-Cetina 2009) is also used to communicate with the user through the interviewer, who is the only person of the team present with the user. Roboticists in the control room communicate to the interviewer via chat, which is displayed on a laptop in the kitchen. The interviewer, while guiding the test subject through the tests, would frequently check the laptop’s display for new messages, going back and forth between living room and kitchen. Roboticists often instruct the interviewer to inform the test subject about technical problems and ask for patience.

Interventions are sometimes tactically revealed to the user, especially when the interaction between user and robot gets stuck for a while. There are, at times, long periods where simply nothing happens. This and other factors mean that test runs last for up to ninety minutes and longer. In these situations, roboticists instruct the interviewer to tell the user why they have to wait. For instance, they tell him to reveal that “artificial vision is difficult” (Skype log, August 16, 2015) or to ask the user to be patient while the rest of the team fixes the issue. Roboticists and, by extension, the interviewer react to these frequent mishaps with conversational repairs—statements that seek to correct or clarify a situation perceived to be in crisis (Sidnell and Stivers 2013). In this case, it is the discrepancy between the vision of robotic autonomy and its frequent breakdown during the tests. In the case above, the interviewer is instructed to repair the situation by at least verbally revealing the backstage, referring to people working hard behind the scenes. This shows how the backstage, while remaining invisible for most of the time, is also tactically revealed as a way to indicate the precarious technicity of robotics. If the robot system exhibits an error that cannot be repaired remotely, roboticists would also—albeit infrequently—exit the control room and enter the living room to, for example, force a restart on the robot platform. In these instances, they do not talk to either the interviewer or the user. Such theatrical glitches render explicit a constitutive contradiction of robot dramas: in order to maintain the theatrical order of realistic tests, the theater of autonomy has to be temporarily suspended either by conversational or technical repairs. Roboticists are actively using these instances to foreground the limitations of robotics research vis-à-vis the (self-induced) expectation of robotic autonomy.

The Drama of Helplessness and Narrative Suspension

Helping “the frail elderly” denotes a common theme in the vision of care robotics (Neven 2011). Robots are positioned as compensating for that frailty by providing assistive services in everyday life. Hence, the configuration of older people as frail is an important element in staging the benefit and benevolence of robots. Within the context of the care robotics project I studied, this frailty is not necessarily a characteristic of the test subjects but, rather, of the fictitious scenarios used to make robotic services more plausible to users. At the same time, roboticists temporarily suspend such dramas of helplessness in order to safeguard the flow of testing.

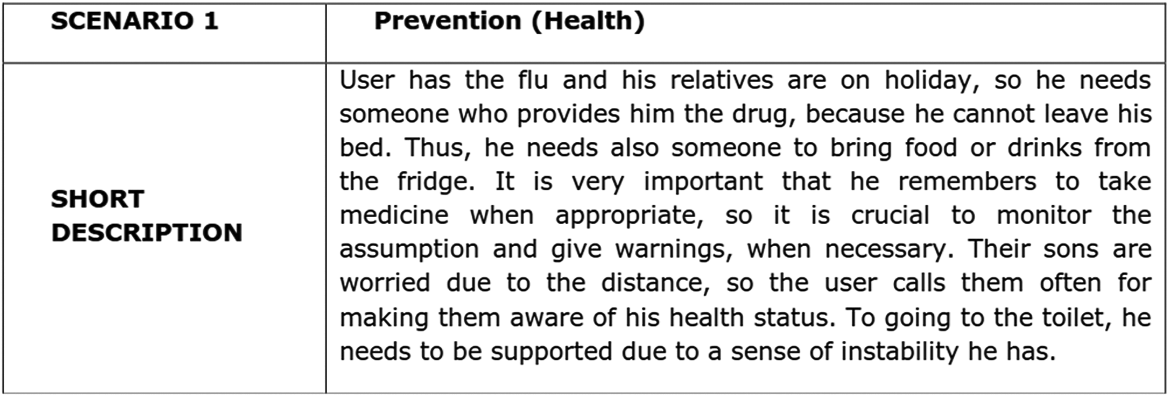

Those scenarios are described at length in the experimental protocol. Similar to a play or movie script, it lists the involved actors, both “technological actors” (robot platform, sensor network) and “human actors” (user, caregivers) as well as the different robotic services that can be activated within a particular scenario. Moreover, the scenario descriptions contain narrative accounts of what the situation of the user is like, as Figure 3 shows.

The scenario assembles the social and spatial milieu of the user, where robotic services can be placed. Here, it narratively produces a precarious situation (“sons are worried”) where human carers are unavailable (“relatives are on holiday”) or completely absent from the story (e.g., professional caregivers) and the user has certain needs (“he needs someone to bring food or drinks”). This concisely staged situation is then paired with particular services such as object transportation to bring a drink, which the robot together with the other technological actors can provide. Hence, these scenarios create particular situations where robotic care services make sense.

Yet these storylines are repeatedly confronted with the precarious technicity of care robots. Test runs are not only struck by glitches and long periods of waiting but also by narrative breakdowns. Take the scenario described above. In it, the “[u]ser has the flu and…cannot leave his (sic) bed” (experimental protocol, 1). This and some other narrative assumptions are suspended in the following sequence, to the surprise of the user.

“Not stuck in bed”, field note 12 June 2015

The test person is directed to her chair. Henry, the interviewer, briefs her with regard to the upcoming test scenario. He holds the use case cards in his hand. “This is the scenario you are in right now.” He shows her the scenario description on one of the cards. During the Skype service the test person wants to call her mum. For about a minute nothing happens. She repeats “my mum.” Nothing happens. Henry turns to her: “I think the only name you can call is Brian.” In this moment, the robot approaches them but stops about a meter in front of the chair. Henry prompts her to get up and pick up the tablet fastened to the side of the robot. The test person responds surprised: “Oh, so I’m not stuck in the bed” (as prescribed by the scenario). “No, no,” Karl says and chuckles. The user stands up, leans forward, unfastens the tablet and takes her chair again.

Fragmented Care and Diegetic Immersion

Robots have a hard time dealing with ambiguity and complexity in situations of use (Lipp 2022). They need rather clearly defined, discrete instructions to perform a service in an autonomous manner. Here, roboticists couple certain technical capabilities of robots (i.e., grasping or navigation) with standardized gerontological scales of daily activities (Lawton and Brody 1969). As a result, care is usually understood as a discrete collection of identifiable tasks and activities that can be programmed in a robot and clearly distinguished for the purposes of technical evaluation. In other words, care is imagined as “fragmented” while the robot is positioned as a way to integrate those different tasks (Vallès-Peris and Domènech 2020). This speaks to how robotics conceptualizes elderly care rendering it compatible with the task of engineering robotic services (Bischof 2017; Maibaum et al. 2021). In the particular case of the realistic tests I studied, the tests are organized discontinuously as a menu of services, from which users can choose.

Despite this rather artificial experimental situation, the succession of chosen services can sometimes create a sense of narrative plausibility, in the sense that robotic care attains prototypical reality within the diegetic order of the scenarios (Kirby 2010). For example, in one instance, a male user receives the warning of a fictitious gas leak. This is a service that is triggered by roboticists in the control room. The user knows that this can happen during the test run. It does not require any reaction by the user. The robot simply approaches and informs the user that there is a gas leak and that he should seek help and leave the apartment. Right after, the robot prompts the user to choose another service, continuing within the technical logic of testing. This is the source of some amusement behind the scenes because it emphasizes the artificiality of the setting. After informing the user about the existential threat of a gas leak, the robot simply continues with business as usual. There is no overarching narrative embedding those services within the fictitious living environment of the user. However, in this particular instance, the user asks the robot to help him leave the apartment, choosing the so-called escort service. While this is not technically possible (the robot can only navigate inside the apartment), the user manages to produce a diegetic arc that links otherwise disembedded robotic services. He reacts to the robot’s prompt as if it were a real danger, consequently embarking on a rescue mission with the robot. Success here does not only mean that the robot works in a technical sense but that it allows for spontaneous interfacing of the user’s fictitious everyday situation and the technical possibilities of the robot.

This frame holds only temporarily. In the control room, one of the roboticists points out that the robot is in fact not performing the escort service. Rather, it is programmed to return to its home position, to which the robot automatically goes after performing certain services. “He’s not escorting, he’s driving!” he exclaims (video transcript). He knows this by looking at the console of the robot’s software. This cue initiates a chain of communications between the roboticists in the control room and the interviewer, who is instructed to abort the service and explain to the user that something is wrong. The user hurries back to his chair and waits for the interviewer to continue the test.

There is friction between the two logics of experimentation, the “what if” and the “as if,” materialized in the discrepancy between the robot’s inner workings and what happens at the interface of user and machine. Roboticists break this tension by calling attention to this discrepancy and thus suspend the “as if” logic of the experiment. I argue that it is this intervention that suspends the performance rather than the discrepancy per se. Until that point, the discrepancy between escorting and driving did not make a difference at the human–machine interface. However, roboticists privilege what is happening inside the robot over the frontstage action. Their partial view of the situation, mediated by various scopic media, produces that discrepancy in the first place. In other words, in this instance, the roboticists themselves suspend the robot drama by aborting the robot’s operation and by instructing the interviewer to explain the situation as a failure to the user, who then runs back to his seat as if he did something wrong. In a way, they are caught up in the precarious technicity and the strict what-if logic of robotics research, which suspends the imaginary of the autonomous machine.

Discussion

In this article, I have shown how roboticists and others install, repair, stage, and suspend robot dramas. I have also identified four friction points: first, roboticists stage approximated, realistic home environments that pose complex challenges for operating robots, but they also tactically adapt and simplify these environments. The test apartment is located at the intersection of older people’s everyday lives and robotics’ laboratory practices. Second, roboticists stage autonomy by introducing a spatial divide between the front- and backstage, but they also frequently breach this divide by way of (in)visible repairs. In fact, robot autonomy denotes a rather precarious phenomenon that needs to be maintained and repaired not only technically but also in a conversational sense with the users. Third, roboticists prompt users to immerse themselves into dramas of helplessness but also tactically suspend these situations in favor of continuing with the experiments. This renders visible the active contributions of users to maintaining robot dramas and their performances. Fourth, roboticists aim to organize robot dramas according to a dissectible menu of robotic services to render care practice programmable and do-able for robots. However, the recombinability of services can also be used as a resource to embed interactions between robots and users within more or less coherent storylines, and in this way achieve (temporary) alignment with what is deemed plausible within visions of care robotics.

These friction points illustrate the ambivalent and specific role of theater in robotics. Robot dramas encapsulate both the vision of care robotics and its demonstration. In robotics, theater does not only signify a way to stage robots in front of public audiences including users or funders (Latour 1988; Shapin and Schaffer 1989). Rather, theater in robotics also denotes a form of knowledge production in its own right: it provides an epistemic frame against which otherwise ambiguous behavior can be evaluated and categorized at the human–machine interface (Lu and Smart 2011). On the one hand, theater provides the conditions for producing robust knowledge about interactions between older people and machines. It renders human–robot interaction an epistemic object. On the other hand, robot dramas, in their constitutive open-endedness, produce new ambiguities between technical processes and human–machine performances, especially when it comes to robots “in the wild.” These ambiguities and frictions are never fully resolved in the course of robot dramas; instead, they are used as a resource for generating new interaction phenomena between older people and machines. This provokes breakdowns but also allows roboticists to suppress disappointment. The playful and open character of robot dramas, in the end, also helps to safeguard the link between vision and demonstration. We can witness robots fail persistently (what-if) but still exit the drama and retain the impression that we just witnessed a potential future (as-if). Hence, robot dramas denote testing grounds in precisely this sense of probing interfaces between what we expect of robots and what they can do in practice.

The analysis of robot dramas has shown that older and younger users play an active role in these performances. Contrary to other cases of IT demonstrations, robot dramas do not involve users as passive spectators (Smith 2009) but rather as involved participants, both as actors and interpreters of robot dramas. As described above, users need to invest time and effort in immersing themselves in different scenarios of care with robots. This is to say that they do not simply consume roboticists’ imaginaries but are also in a position to reconfigure them. For instance, the reattribution of agency following the failure of the “escort” service attests to the potential subversions enacted by users in the course of robot dramas. Human–robot performances may transgress what is usually described as the configuration of users (Woolgar 1991) and the inscription of technical objects (Akrich 1992). They do this in at least two ways. First, the precarious technicity and “creativity” of robots mean that robots go beyond designers’ intentions (Gemeinboeck and Saunders 2016). Like most other artificially intelligent systems, their purpose is to react to changing environments in a way that is not completely preprogrammed. In other words, robots are not fully determined in a technical sense. Second, this relative openness also applies to the use of robots or, rather, the performance of human–robot interaction. As I have shown above, users frequently break with the script of robot dramas either because they are prompted to save the experiment or because they act on a different interpretation of what the robot intends to do (as in the escort vs. driving example). Of course, this does not mean that users or robots have full control over their scenario. On the contrary, roboticists repeatedly enact a regime of technical determinism from behind the scenes. The simple fact that these supposed failures or mishaps occur frequently, and that roboticists also acknowledge their value at times, points to a dynamic that sets robot dramas apart from other theaters of use.

How does this contribute to critical studies of care technology? First, it foregrounds the interplay of high and low expectations in techno-scientific practice (Gardner, Samuel, and Williams 2015). Roboticists do not simply hype their technology or align their research with political discourses around grand challenges. Roboticists also need to balance their efforts to stage the usefulness and benevolence of their machines, while at the same time suspending those visions in favor of repairing the precarious technicity of their machines. I describe these practices as robot dramas and argue that attending to such balancing acts opens up new avenues for responsible research and reflective critique of the ubiquitous interconnection of robotics and care. The notion of the robot drama allows for deconstructing some of the assumptions prevalent in robotics’ theaters of autonomy and helplessness. For instance, contrary to the promise of robotics, seemingly autonomous machines still need human intervention and repairs to operate in conjunction with humans (see also Lipp 2022). Furthermore, the activity of users in enabling human–machine performances subverts the imaginary of the frail older person (Neven 2011). Following roboticists as they try to test robots in realistic environments allowed me to render visible the vast discrepancy between promise and reality, while also accounting for its recalibration. For instance, the suspension of robot dramas might be used as a lever to aspire to mutual models of robotic care, acknowledging the help of the user in their care as well as the fragility of robots (Lammer et al. 2014). Robot dramas do not simply materialize a gap between promise and reality, and their generative performativity should be better understood. This means acknowledging their productiveness in exploring alternative human–machine relations in care. Robot dramas are constitutively ambivalent: they can threaten the common vision of care robotics while at the same time offering a medium for more responsibly interfacing what we can expect from robots in the future and what they are able to achieve now.

Acknowledgments

I would like to thank two anonymous reviewers for extensive and meticulous feedback. In addition, I am grateful to Henning Mayer, Carlos Cuevas, and Pat Treusch who have read and commented on earlier versions of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.