Abstract

An experiment involving “hoaxlike deception” of journals in the humanities and social sciences recently attracted wide media attention. Its aim was to provide evidence of “inadequate” quality standards, especially in gender studies. This article discusses the project in the context of earlier systematic studies of peer review and of scientific hoaxes, and analyzes its possible empirical outcomes. Despite claims to the contrary, the highly political “experiment,” flawed both ethically and methodologically, failed to provide the evidence it sought. Its outcomes can be summed up as follows: (1) journals with higher impact factors were more likely to reject papers submitted as part of the project; (2) the chances of acceptance were better if the manuscript was allegedly based on empirical data; (3) peer reviews can be an important asset in the process of revising a manuscript; and (4) when the project authors, trained in neighboring disciplines, closely followed the reviewers’ advice, they learned relatively quickly what is needed to write an acceptable article. The boundary between a seriously written paper and a “hoax” gradually became blurred. Finally, (5) the way the project ended showed that, in the long run, the scientific community will uncover fraudulent practices.

Introduction

The present article discusses a recent case of what can be termed “hoaxlike deception in science” (Schnabel 1994, p. 459). The project is already widely known by the authors’ self-chosen name, “The Grievance Studies Affair.” In 2017-2018, three authors, Helen Pluckrose (MA in early modern studies), James A. Lindsay (with a doctorate in mathematics), and Peter Boghossian (assistant professor of philosophy), compiled a series of hoax articles and submitted them to journals of gender studies, sociology, ethnography, and related fields. Boghossian and Lindsay had previously (in 2017) gained visibility with a hoax article, “The Conceptual Penis as a Social Construct,” submitted for publication to a peer-reviewed gender studies journal. It was rejected, but then published in a fee-charging Open Access journal. Despite this failure, they presented the hoax as evidence of inferior quality control within gender studies.

With the new project, the three authors wished to show that scholarship “especially in certain fields within the humanities” (which they refer to as “grievance studies”) is “corrupt” (Pluckrose, Lindsay, and Boghossian 2018). They claim a need to “begin a thorough review of these areas of study (gender studies, critical race theory, postcolonial theory, and other ‘theory’-based fields in the humanities and reaching into the social sciences, especially including sociology and anthropology).” The name of the project, with its connotations of “The Dreyfus Affair” and beyond, seems to signal the authors’ ambition to initiate a highly visible and, perhaps, divisive media debate.

The authors published their “experiment” on October 2, 2018. The media coverage so far, including the project’s own media communication (Pluckrose, Lindsay, and Boghossian 2018), explicitly connects it with the ongoing US and global “cultural war,” part of which is a political distrust of some fields of research, especially gender studies. The problem, the authors claim, is “leaking” to other disciplines (education, politics, public discussion, activism, and so on) and “needs to be dealt with.” According to them, radical constructivist theory has reached an authoritative position in these fields:

This problem is most easily summarized as an overarching (almost or fully sacralized) belief that many common features of experience and society are socially constructed. These constructions are seen as being nearly entirely dependent upon power dynamics between groups of people, often dictated by sex, race, or sexual or gender identification. All kinds of things accepted as having a basis in reality due to evidence are instead believed to have been created by the intentional and unintentional machinations of powerful groups in order to maintain power over marginalized ones. (Pluckrose, Lindsay, and Boghossian 2018)

Studies of Editorial Practice in Academic Publications

An experimental way of testing the publication criteria of the editors and reviewers of academic journals is to submit purpose-written texts for evaluation. Armstrong (1997) provides an overview of seventy studies on peer review, among them twelve experiments (or quasi-experiments) conducted between 1975 and 1997. Bornmann’s (2011) overview concentrates on research from the first decade of the 2000s. The experimental studies explore two aspects of peer review: bias, that is, the fairness of the process, and reliability, mostly measured as the rate of inter-reviewer agreement.

Researchers in psychology and related fields were the first to focus on different forms of reviewer bias. Abramowitz, Gomes, and Abramowitz (1975) prepared a brief bogus manuscript on student activism in two versions with different political implications. They sent it for review to psychology scholars with different assumed political leanings and found some support for their hypothesis that reviewers tend to favor papers whose political tone corresponds with their own (p. 193). Mahoney (1977) and, later, Epstein (1990) examined the impact of confirmation bias, that is, the tendency to grant credibility primarily to findings that seem to confirm the editors’ and reviewers’ previous beliefs. Both used as experimental stimuli bogus articles in two versions, in which the empirical results seemed either to confirm or to disconfirm the perspective originally chosen. Both found evidence of reviewers’ “confirmation bias.” Whereas Abramowitz, Gomes, and Abramowitz (1975) and Mahoney (1977) asked selected scholars for reviews, Epstein’s (1990) study included the editorial decisions as well. He approached 146 journals within social work and neighboring disciplines. In addition to a statistical analysis of acceptance rates for the two versions, he made a qualitative analysis of the reviewers’ statements, identifying the issues raised and the use of subjective and objective arguments. His overall impression was that the statements were generally of poor quality: they were often subjective and focused on formalities. The best ones came from the “allied disciplines,” not from within the social work field itself (Epstein 1990, 24-25). Other possible sources of bias include the gender of the reviewer and of the author, and the author’s academic position, institutional affiliation, and prestige. Both kinds of potential bias have been studied experimentally, but the results are mixed (Bornmann 2011, 215).

All studies of reliability show low inter-referee agreement rates, typically in the range from 0.2 to 0.4 when corrected for chance (Bornmann 2011, 207). A comparison of the natural and physical sciences, the humanities, and the social sciences shows that this holds irrespective of discipline (Bornmann, Mutz, and Daniel 2010, 6). Moreover, peer review rarely detects fraud or conflicts of interest (Cowley 2015, 4). Recent discussions have, however, paid attention to its other possible functions. If the reviews are substantial and helpful, they can contribute to the final shaping of the paper. Peer reviewing also seems to create and reshape networks of collaboration (Dondio et al. 2019). The most strongly held argument for new “open peer review” practices (where neither authors nor reviewers are anonymous) concerns increased interaction, which in turn is believed to result in better publications (Ross-Hellauer, Deppe, and Schmidt 2017, 17). In short, the current debate about peer review is less about its ability to determine the quality of scholarship and more about its contribution to scholarly dialogue.
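Agreement “corrected for chance” is often expressed as Cohen’s kappa, which compares the observed share of agreements with the share expected if two referees recommended independently at their own base rates. The sketch below assumes that statistic (the overviews cited above may also rely on other coefficients) and uses invented accept/reject recommendations for ten hypothetical manuscripts; it reproduces no data from the studies cited.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each labelled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items on which the two raters gave the same label.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: probability of matching labels if each rater
    # labelled independently at his or her own marginal rates.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_chance = sum((freq_a[label] / n) * (freq_b[label] / n)
                   for label in set(freq_a) | set(freq_b))
    if p_chance == 1:
        raise ValueError("kappa is undefined when both raters use one identical label throughout")
    return (p_observed - p_chance) / (1 - p_chance)


# Invented recommendations by two hypothetical referees on ten manuscripts.
referee_1 = ["accept"] * 4 + ["reject"] * 6
referee_2 = ["accept", "accept", "reject", "reject", "accept",
             "reject", "reject", "reject", "reject", "reject"]

kappa = cohens_kappa(referee_1, referee_2)
print(round(kappa, 3))  # 0.348
```

Here the two hypothetical referees agree on seven of ten manuscripts, yet kappa is only about 0.35, because agreement on 54 percent of the manuscripts would be expected by chance alone, given how often each referee recommends rejection.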

“Hoaxlike” Deception in Science

When discussing the “Grievance Studies Affair” project, however, systematic experiments are not the most obvious point of comparison. There are examples of another type of “experiment,” for which the term “hoaxlike deception in science” (Schnabel 1994) seems apt. It consists of manipulatively deceiving a researcher or a research-related institution in order to demonstrate their putative incompetence. Based on five historical cases from the twentieth century, Schnabel (1994) concluded that the “success” of a hoax, that is, its academic and media reception, was heavily influenced by the target’s prior position vis-à-vis prevailing scientific orthodoxy. The weaker the target’s institutional backing, the less weight was given to the target’s counterarguments about research ethics, the experiment’s lack of validity, and so on.

What in the strict language of research ethics would be called fraud is often, in a more conciliatory manner, referred to as “hoax.” The perpetrators themselves tend to favor the term “experiment,” implying both knowledge creation and a context that can be argued to justify manipulative practices. However, there are important differences between hoaxes and experiments worth the name. Even when submitting bogus papers in order to test the reviewers for biases and the review process for reliability, the systematic experimenters’ aim is not to embarrass but to find out how these institutional practices function. In contrast, the hoax is done explicitly in order to discredit its targets. There are many signs of this: sensationalism in reporting, lack of systematic experimental design, and the fact that we seldom hear about those hoaxes that have failed.

A well-known case of hoaxlike deception was performed by the physicist Alan Sokal (1996). Sokal managed to publish an article criticizing his own discipline in Social Text, a non-peer-reviewed cultural studies journal. Immediately after publication, Sokal revealed the hoax in another journal and received extensive, mostly positive media coverage. Hilgartner’s (1997) comparison of the “Sokal Affair” with Epstein’s (1990) study and its reception has been a major source of inspiration for the present article. In Sokal’s “experiment,” no peer-review process was involved; Sokal actually allowed the journal to publish the parody. His experiment had no clear hypotheses, controls, or explicit analyses of what had been achieved, and the population experimented on consisted of just one avant-garde journal outside the academic mainstream of its research field (Hilgartner 1997, 517). The most interesting difference, however, was the reception. At the time Epstein conducted his study, he was an individual social worker with no university affiliation (Hilgartner 1997, 516). He had targeted a well-organized professional field and was summoned to defend himself before an ethics panel. Sokal, holding a prestigious academic position in a prestigious discipline, targeted “downward,” at a nonprestigious journal in a field under constant political attack. The hoax propelled his career toward the status of an academic celebrity (Hilgartner 1997, 515-19). In a way, Sokal thus proved a point made in science and technology studies, the very field he had set out to criticize (cf. Schnabel 1994). As Hilgartner (1997, 519) puts it, he ended up producing “a parody not only of cultural studies but also a parody of himself.”

The emergence of online Open Access publishing has, among other things, created (sometimes justified) suspicion about editorial standards. This concerns above all the so-called predatory journals, which charge author fees and publish texts unselectively. In 2013, fee-charging Open Access journals were targeted by an experiment devised by the science journalist John Bohannon (2013), who sent a fabricated and clearly flawed pharmaceutical study to 304 journals with a biological, chemical, or medical title. Of the 255 journals that announced their final decision during the project, 157 accepted the paper and 98 rejected it. Bohannon always withdrew his paper before publication. Finally, there is a piece of computer software called SCIgen (Stribling, Krohn, and Aguayo 2005), developed in 2005 by three MIT students to produce meaningless fake computer-science papers. It is freely accessible and has been used countless times to fool fake conference organizers and journals. “Predatory journals” in all disciplines seem easy prey for such hoaxes.

The Grievance Studies Affair: The Media Response

Within the project calling itself “The Grievance Studies Affair,” the three members of the group wrote and submitted for publication nineteen, twenty, or possibly twenty-one manuscripts (see Note 1), all of which are available through a link on their website (except for two that are said to be rewritten adaptations of other papers). Of the decisions received from journal editors and reviewers, the first is dated September 22, 2017, and the last September 6, 2018. These documents are likewise available through a link. The group went public with the project on October 2, 2018, after one of the published articles had attracted media attention, including suspicions of fraud.

Within days, the project web page was referred to by US and European mainstream media, by fashionable alt-right intellectuals, and even in blog posts by serious academics. As al-Gharbi (2018) summarized on October 10, 2018:

the incident has become something of a Rorschach Test within academic and media spaces: Those already disposed towards skepticism of “critical” scholarship on race, gender or sexuality view the incident as damning proof of a deep rot within these subfields, and perhaps with social research or academia more broadly. Those more sympathetic to “critical studies” instead see a deeply flawed and limited experiment—and accuse the authors of overstating their findings, speaking beyond their data and, intentionally or not, feeding into the agenda of right-wing reactionaries. There have been relatively few unexpected validators or critics.

The more positive responses either echoed the project authors’ claims about corrupt scholarship in academia (e.g., Mounk 2018b) or rejected the use of scientific or ethical standards in evaluating the project (Mounk 2018a; Smith 2018).

An Attempt at Empirical Analysis

The project website (Pluckrose, Lindsay, and Boghossian 2018) presents almost no analysis of the results. It focuses instead on the motives behind the project and on colorful descriptions of the texts written (both those published and those rejected). However, the website provides links to most of the manuscripts and to the correspondence with those journals that used peer review. The manuscripts are presented in their most recent versions, sometimes rewritten after several submissions, so it is not possible to trace the revisions undertaken. What an outsider can analyze, however, are several editorial decisions, review processes, and the finalized manuscripts.

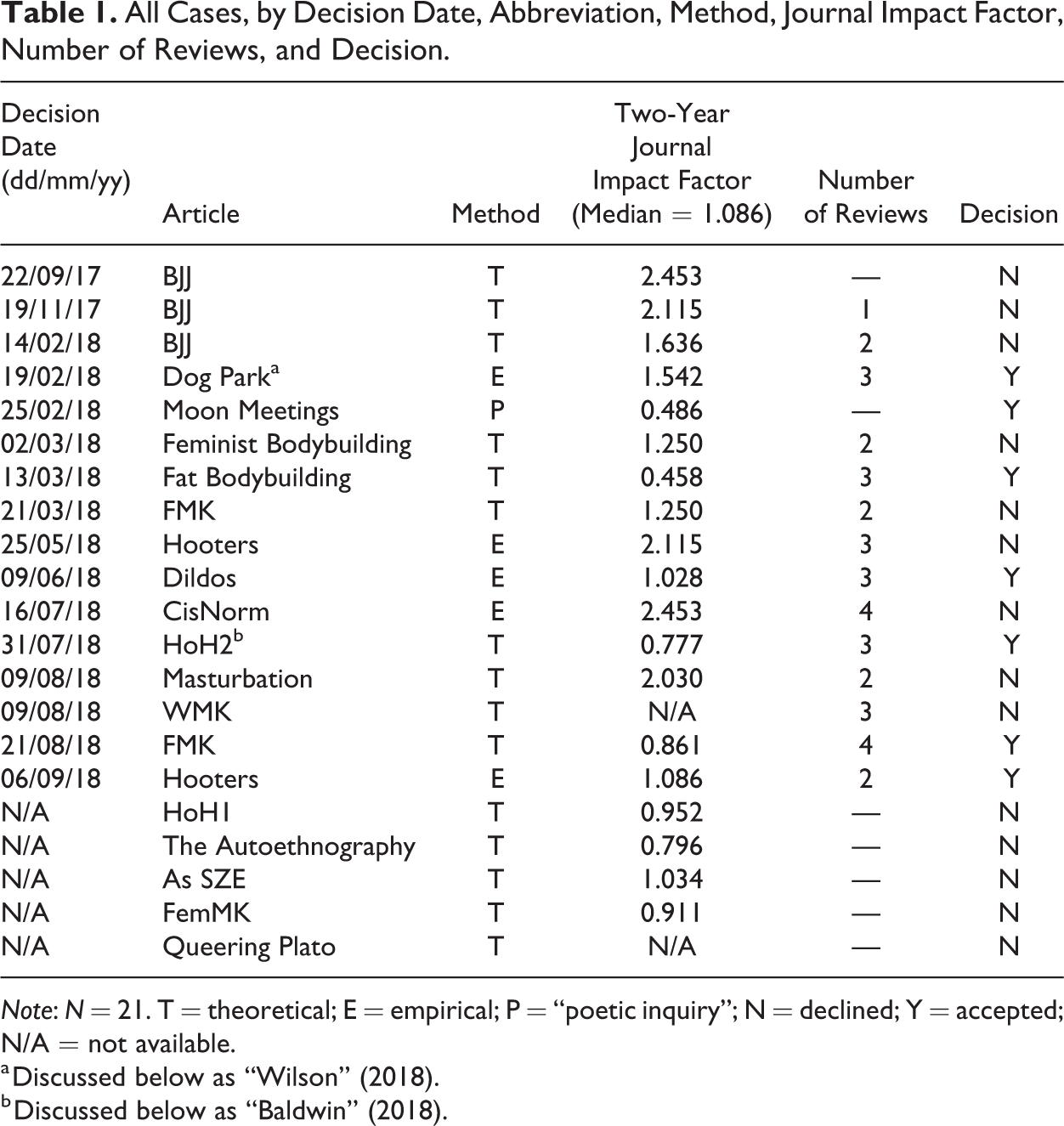

If the aim is to test the probability of a journal accepting a submitted article, the proper unit of analysis is one final decision (accept/reject) about one manuscript by one journal. In other words, when a revised manuscript is resubmitted to a journal after initial rejection by that same journal, the submissions count together as one single case. The seven unresolved cases (submissions without a final decision) are excluded from the analysis. This leaves twenty-one cases, that is, twenty-one final decisions concerning seventeen manuscripts (see Table 1). Seven final decisions were positive (with four texts actually published: “Dog Park” [i.e., “Wilson” 2018], “Fat Bodybuilding,” “Dildos,” and “Hooters,” all of them later retracted), and fourteen were negative. The authors argue on their website that, had the project been allowed to continue, some of the seven unresolved cases would have yielded a positive decision; the present analysis, however, concerns only the outcomes actually achieved (and reported; see Note 2). In Table 1, the manuscripts are referred to by the abbreviations that appear on the project website. I have decided not to name the journals targeted by the deception or to give full references to the texts. An exception is made for two texts, which I discuss in detail and refer to as “Wilson” (2018) and “Baldwin” (2018). As my discussion will show, I do not find their acceptance for publication particularly embarrassing for the journals concerned.

Table 1. All Cases, by Decision Date, Abbreviation, Method, Journal Impact Factor, Number of Reviews, and Decision.

Note: N = 21. T = theoretical; E = empirical; P = “poetic inquiry”; N = declined; Y = accepted; N/A = not available.

a Discussed below as “Wilson” (2018).

b Discussed below as “Baldwin” (2018).
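The counting rule described above (one case per manuscript and journal, with resubmissions to the same journal merged and unresolved submissions excluded) can be sketched as a small function. The record format, field names, and example entries below are hypothetical illustrations, not the project’s actual submission data.

```python
from typing import NamedTuple, Optional


class Submission(NamedTuple):
    manuscript: str          # hypothetical manuscript label
    journal: str             # hypothetical journal label
    order: int               # chronological position of the submission
    decision: Optional[str]  # "accept", "reject", or None while unresolved


def final_decisions(submissions):
    """Return {(manuscript, journal): decision}, one entry per resolved case.

    Resubmissions of a manuscript to the same journal form one case whose outcome
    is the decision on the latest submission; cases whose latest submission is
    still unresolved are left out.
    """
    latest = {}
    for sub in submissions:
        key = (sub.manuscript, sub.journal)
        if key not in latest or sub.order > latest[key].order:
            latest[key] = sub
    return {key: sub.decision for key, sub in latest.items() if sub.decision is not None}


records = [
    Submission("MS-A", "J1", 1, "reject"),
    Submission("MS-A", "J1", 2, "accept"),  # resubmission after rejection: same case
    Submission("MS-A", "J2", 3, "reject"),  # another journal: a separate case
    Submission("MS-B", "J3", 4, None),      # unresolved: excluded
    Submission("MS-C", "J1", 5, "reject"),
]

cases = final_decisions(records)
accepted = sum(decision == "accept" for decision in cases.values())
manuscripts = {manuscript for manuscript, _ in cases}
print(len(cases), accepted, len(manuscripts))  # 3 1 2
```

In this toy example, five submissions of three manuscripts reduce to three resolved cases concerning two manuscripts, which mirrors why the analysis counts fewer cases than submissions.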

The submitted texts vary enormously in the issues discussed (from dog parks to political satire, from martial arts to masturbation), in style, and in method. Hence, no strict comparison of the journals’ standards is possible. Nor is it possible to compare the peer-review statements in the way Epstein (1990) did. One cannot tell whether all manuscripts (stimuli) were equally “inferior” or “good.” Predictably, some manuscripts call for more attention than others to, for example, theory, the conceptual apparatus, the empirical method, the conclusions, or the language and formalities of writing.

The authors presume that they have unmasked the peer-review systems of the journals they experimented with as “inadequate” (Pluckrose, Lindsay, and Boghossian 2018). They are keen to stress that this does not concern the peer-review system across all disciplines. However, no control experiment was done with other fields of scholarship, nor were the results compared with previous similar experiments. In addition, not all journals targeted represent the academic mainstream of their respective fields.

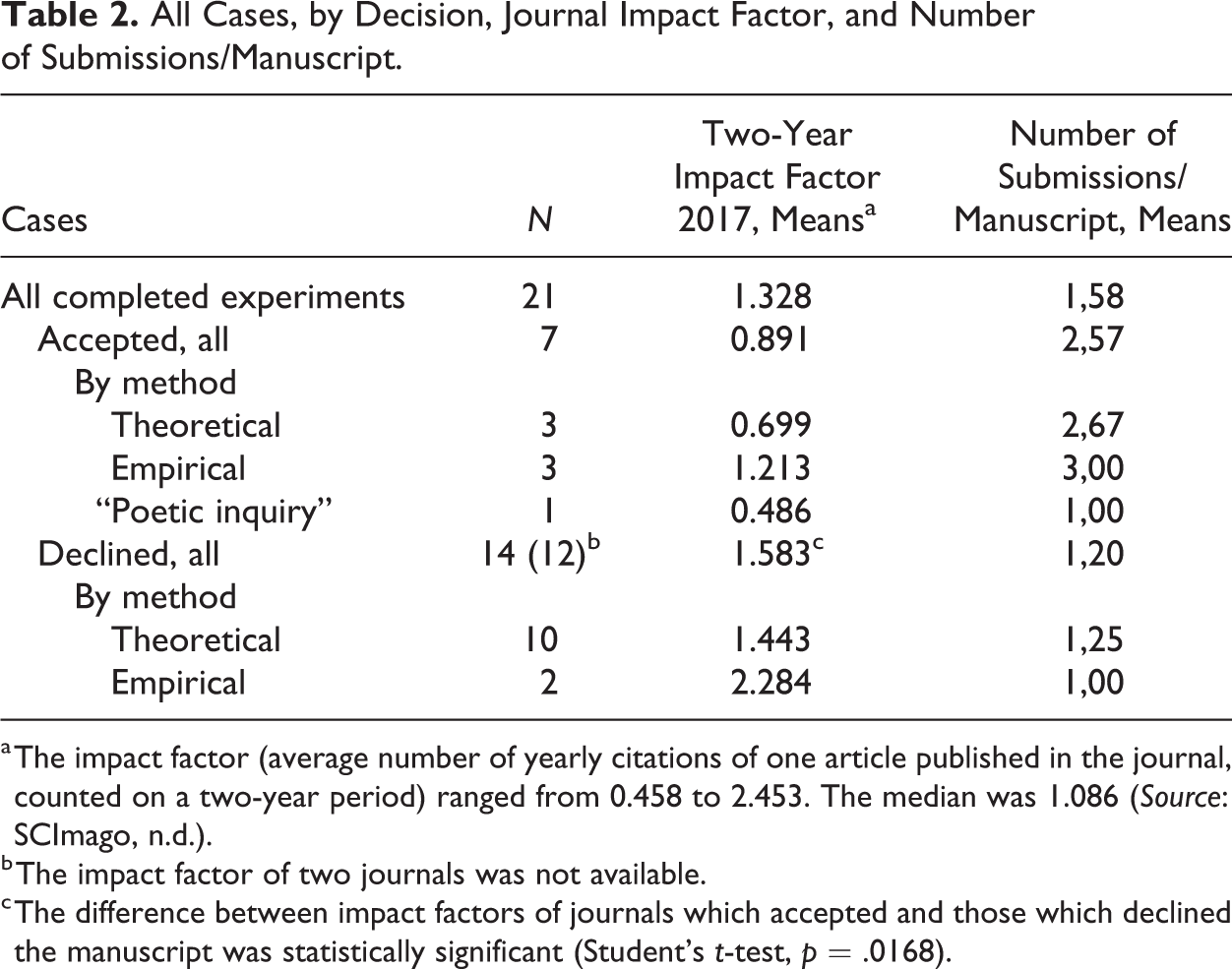

The information provided on the project’s website and in the linked documents makes it possible to examine some variables other than discipline. Two-year impact factors as of 2017 can be found for most of the journals involved. The figure used here is the average number of citations received by one article in the journal in question, calculated over the latest two years (SCImago n.d.). The manuscripts themselves vary with respect to one important variable: twelve manuscripts were “theoretical,” that is, not supported by original empirical data, while one was a “poetic inquiry,” not presenting itself as a research article in the first place. Five manuscripts were “empirical,” that is, they referred to (fabricated) empirical data. Interestingly, three of the latter were among the seven manuscripts accepted for publication. As shown by Table 2, the “empirical” manuscripts were also more successful with respect to the impact factors of the journals that accepted them. In general, publication was more easily achieved when the impact factor was low. This negative correlation was statistically significant (see note c to Table 2). Thus, in this material, publication required either that the article be empirical or that the journal’s impact factor be below the median.

Table 2. All Cases, by Decision, Journal Impact Factor, and Number of Submissions per Manuscript.

a The impact factor (average number of yearly citations of one article published in the journal, counted over a two-year period) ranged from 0.458 to 2.453. The median was 1.086 (Source: SCImago, n.d.).

b The impact factor of two journals was not available.

c The difference between impact factors of journals which accepted and those which declined the manuscript was statistically significant (Student’s t-test, p = .0168).
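Note c refers to Student’s two-sample t-test, which compares the mean impact factors of the accepting and the declining journals under the assumption of equal variances. A standard-library sketch follows; the impact factors in it are invented placeholders within the range given in note a, not the values behind Table 2, so its result says nothing about the journals studied. The two-sided p-value is obtained here by numerically integrating the t density with Simpson’s rule, an implementation choice made only to avoid external dependencies.

```python
import math
from statistics import mean, variance


def t_density(u, df):
    # Probability density of Student's t distribution with df degrees of freedom.
    c = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))
    return c * (1 + u * u / df) ** (-(df + 1) / 2)


def t_area_from_zero(t, df, steps=10_000):
    # Simpson's rule (steps must be even) for the area under the density from 0 to t.
    h = t / steps
    total = t_density(0.0, df) + t_density(t, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_density(i * h, df)
    return total * h / 3


def student_t_test(x, y):
    """Pooled-variance two-sample t-test; returns (t, degrees of freedom, two-sided p)."""
    n1, n2 = len(x), len(y)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least two observations")
    df = n1 + n2 - 2
    # statistics.variance is the sample variance (divisor n - 1).
    pooled = ((n1 - 1) * variance(x) + (n2 - 1) * variance(y)) / df
    t = (mean(x) - mean(y)) / math.sqrt(pooled * (1 / n1 + 1 / n2))
    # The density is symmetric, so P(|T| >= |t|) = 1 - 2 * area(0, |t|).
    p = 1 - 2 * t_area_from_zero(abs(t), df)
    return t, df, p


# Invented impact factors (not the study's data) for journals that accepted or declined.
accepting = [0.6, 0.7, 0.9, 1.0, 1.2]
declining = [0.9, 1.1, 1.3, 1.5, 1.8, 2.0, 2.3]

t, df, p = student_t_test(accepting, declining)
print(f"t = {t:.3f}, df = {df}, p = {p:.4f}")
```

With these placeholder values the accepting journals have the lower mean impact factor, so t is negative. With real data one would also want to check the equal-variance assumption; Welch’s variant of the t-test is the usual alternative when it does not hold.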

Of the twenty-one final editorial decisions, six were direct rejections by the editor, and one was the acceptance, without peer review, of the “poetic inquiry” by a journal specializing in such texts. The remaining fourteen decisions were based on peer reviews. The reviews concerned eleven manuscripts in all, of which three were submitted to more than one journal. When resubmitted to the same journal, a manuscript could also receive peer reviews two or three times. Altogether, there were thirty-seven reviews (see Table 1), totaling 204,171 characters (without spaces) or 38,076 words. On average, one review contained 1,029 words; the average length was about the same for the published and for the rejected manuscripts. For comparison, the reviews received in Epstein’s (1990, 19) study were typically just 150-300 words, and he cites previous research stating that referee reports in psychology averaged more than 500 words.
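The average quoted in this paragraph follows directly from the reported totals. The short check below uses only those figures; the characters-per-word figure and the ratios to Epstein’s 150-300 words are simple derived arithmetic added for comparison, not numbers reported in the sources.

```python
reviews = 37
words = 38_076
characters_without_spaces = 204_171

words_per_review = words / reviews
chars_per_word = characters_without_spaces / words

print(round(words_per_review))   # 1029
print(round(chars_per_word, 2))  # 5.36
# Relative to Epstein's typical 150-300 words per review.
print(round(words_per_review / 300, 1), round(words_per_review / 150, 1))  # 3.4 6.9
```

On these totals, the reviews in the present material were roughly three to seven times as long as those Epstein typically received.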

What can be concluded from the analysis is, first, the unsurprising fact that editors and peer reviewers were not equally demanding in all journals. Chances of publication were better in journals representing less well-established fields (such as fat studies) and slim or nil in journals of established disciplines (such as sociology). Journals focusing on new and highly specialized fields also have lower impact factors. Second, the claim to present original empirical data clearly made the journals more inclined to publish. One cannot tell, of course, whether this applies only to the set of journals selected for the study or also to peer-reviewed journals in general. Finally, the peer reviewers’ role in the publication process appears essential in more than one way. They are not mere gatekeepers recommending publication or rejection. In the cases that resulted in publication, they first recognized the potential for developing the manuscript and then assisted the writer with feedback impressive by its sheer volume alone. The eleven manuscripts that went through the review process received a total of ninety-seven suggestions of new literature. One of the articles, “FMK,” submitted to two journals, received six reviews in all. Like the reviews of the other articles, these addressed the manuscript’s content in detail. Apparently, the authors also took the advice into account. “I have seldom had the joy of working with a revision in which the reviewers’ feedback was so diligently and thoughtfully attended,” one reviewer put it in her or his final statement before the article was accepted for publication.

How to Recognize a Hoax

Both Mahoney (1977) and Epstein (1990) started their experiments with a hypothesis about confirmation bias. Their primary aim was not to test the reviewers’ quality standards; they submitted credible papers that varied with respect to the central variable of the hypothesis (“confirming” or “disconfirming” the theory discussed). Sokal (1996) and the various hoaxes targeting predatory journals, on the other hand, used obviously flawed or outright nonsensical papers as stimuli. The “Grievance Studies Affair” project seems to have done something in between. The authors describe their papers as “outlandish and intentionally broken in significant ways.” At the same time, the authors were clearly keen to have their papers accepted, and, judging from comments in the peer reviews, they meticulously followed the advice given. In a way, they ended up experimenting on themselves: on their own ability (as trained academics from neighboring disciplines) to amass “what appears to be significant evidence and sufficient expertise” (Pluckrose, Lindsay, and Boghossian 2018).

One of the papers combined poetry with personal experiences interpreted in a theoretical framework. It was accepted without peer review. Was that one also a hoax? Maybe. However, I will leave it out of the present discussion, noting only that, as regards fiction, “beauty is in the eye of the beholder.”

We saw in the previous section that reference to new empirical findings was an important factor in favor of publication. The project’s flagship, the “Dog Park” paper, was about dogs’ sexual behavior in a dog park and about humans’ reactions to that behavior. Commenting on that paper, the authors mention “incredibly implausible statistics.” What could that mean? Statistical data can be “implausible” either because they are highly surprising (and thus potentially interesting) or because there is reason to suspect flawed methodology. The reviewers did, in fact, ask for clarifications about the method, which were duly added to the paper. According to “Wilson” (2018, 6), those statistics were based on “nearly 1000 h of public observations of dogs and their human companions.” “She” also provides a close description of how the data were supposedly gathered (“Wilson” 2018, 8). Looking at the final version myself and not knowing that the data were fraudulent, I would probably also have accepted the methodology. Besides, little research exists on the context addressed: the relationship between dogs and humans. As a peer reviewer, I would have expected more conclusions about the dogs’ human companions’ practice of attributing human feelings, thoughts, motivations, and beliefs to the behavior of dogs (cf. Serpell 2003, 83-84) and fewer about the more distant political implications. I might nevertheless have welcomed, presumably along with many others, this empirical addition to research on a poorly covered area.

Another published empirical article, the “Hooters” paper, claimed to be based on weekly observations in a “breastaurant” over two years. The editor summarized the reviewers’ concerns about methodological rigor as follows: “This then takes me to a core challenge […]: trustworthiness. All three reviewers share my concern about the lack of demonstrated methodological integrity in the present paper.” In due course, the published version then includes a detailed account of the method, including a mention of its “accordance with the ethical standards of the institution and with the 1964 Helsinki declaration and its later amendments […].”

In theoretical studies, fraud is harder to define. Outright plagiarism is one type; misrepresentation of references (which the authors claim not to have engaged in) is another. The papers “FMK,” “WMK,” and “FemMK” are said to be based on a passage in Hitler’s “Mein Kampf” (a claim that has greatly added to the project’s media visibility). According to a philosopher colleague, the passage in question discusses strategies of political communication and of forging alliances. However, everything historically specific in Hitler’s text (racism, references to the First World War, and so on) has been removed, and the remaining similarities between the texts concern merely the article structure (and besides, “Hitler was a better stylist”). Plagiarism is thus not the type of fraud involved here. The authors’ claim to have fooled the review process rests on the expectation that a competent reviewer would have uncovered the hoax. Nevertheless, as the authors heed the reviewers’ advice and adjust their papers accordingly, the border between a hoax and a seriously written paper becomes more and more blurred.

Ethical Considerations

In experimental studies, deception is sometimes regarded as defensible because of its benefit to scientific ends and, in turn, to society (Hegtvedt 2007, 154). In the present case, the issue of deception enters the discussion from five different angles: (1) at least some of the papers were in fact fraudulent when presented and published as empirical research; (2) the experimental design was based on deceiving the editors and reviewers; (3) the authors justified their mode of action by claiming that the journals and disciplines targeted were themselves deceptive, presenting political standpoints and academic gibberish as “scholarship”; (4) importantly, the authors present their own project as a scientific experiment that has provided “strong evidence” for that claim, so their reporting of the project must also be judged by the standards of good scientific practice; and (5) reporting the sources of research funding is also part of that practice.

On their website (Pluckrose, Lindsay, and Boghossian 2018), the authors do not waste many words on the issue of the ethics and integrity of their own project. They comment on the way their experiment ended:

With major journalistic outlets and (by then) two journals asking us to prove our authors’ identities, the ethics had shifted away from a defensible necessity of investigation and into outright lying. We did not feel right about this and decided the time had come to go public with the project.

The authors did not obtain informed consent from the persons (editors, reviewers) on whom they were experimenting. A possible justification would be that no alternative way of testing their practices was feasible (see Hegtvedt 2007, 154). Other studies of peer review have also used deception. As a rule, however, those researchers did not allow their experimental stimuli to be published, and even so, Epstein faced heavy accusations of misconduct (Hilgartner 1997, 514). As to “The Grievance Studies Affair” project, one must ask whether actually publishing the stimuli as journal articles added anything to the data the experiment could yield. The answer is clearly no: publication added to the project’s journalistic appeal and to the embarrassment of the subjects experimented on, but not to its content.

Manuals and textbooks on research ethics stress the imperative of minimizing any possible harm to research subjects. “The Grievance Studies Affair” project was clearly intended to harm certain journals and disciplines. The justification the authors give for that, however, is not easy to accept at face value. It includes no accusation of deceptive business practices (of the kind that can be used to justify attacks on “predatory journals”). The authors’ attack is on the purportedly “fatally flawed research” of others within fields influenced by “critical constructivism and radical skepticism.” They want to “push for universities to fix this problem” (Pluckrose, Lindsay, and Boghossian 2018). However, challenging theoretical standpoints or university politics does not require deceptive experiments (submitting one’s own intentionally fatally flawed research to journals to see what happens); it can be done more properly in regular academic debate. Interestingly, one of the (rejected) manuscripts written as part of the project, “HoH1,” formulates a matching justification: “hoaxes on unethical fields are morally justifiable, and hoaxes on ethical fields are unjustifiable” (p. 3). When the authors describe this article and its claims on their website, they admit that it advocates “a blatant double standard.”

Research-based knowledge has a powerful position in public discourse. This calls for ethical standards higher than those acceptable in other media debate. Did the experiment, then, provide “strong evidence” of the “Corruption of Scholarship” in “especially some fields of the humanities,” as the authors claim? The present analysis has shown that it did not. If we treat the project as the kind of empirical experiment it claims to be, its external communication becomes a case of an “attempt to exaggerate the importance and practical applicability of the findings” (European Science Foundation 2011, 14).

The project obviously required a time-consuming commitment from three researchers for more than a year, plus the work of a filmmaker making a documentary. Everything was funded by an anonymous “benefactor.” Secrecy about the funding source raises questions about conflicts of interest. Did it have an effect on which journals were chosen as targets or on the way findings were reported? Political activism and research communication have become intermingled in a way that is potentially harmful to the credibility of research in general. Paradoxically, this is what the authors themselves claim to oppose.

Gatekeeping and Scientific Dialogue

Finally, what is the view of science and scientific communication behind the project? The authors criticize (their own representation of) constructivism, and the alternative they offer is simple: “scholarship […] must be rigorous […] we should be able to rely upon research journals, scholars, and universities upholding academic, philosophical, and scientific rigor” (Pluckrose, Lindsay, and Boghossian 2018). The authors of “The Grievance Studies Affair” tried to show the lack of “rigor” by submitting for review manuscripts they depict as “nutty” and as including “some little bit of lunacy or depravity.” The manuscripts deal with issues seldom addressed by research: masturbation, dogs’ sexual behavior, dildos, “breastaurants,” and so on. The argument seems to be that journals publishing, or even considering, texts on such unserious issues are themselves not to be taken seriously. However, the very novelty of a research topic or an argument often drew favorable comments from the reviewers. Reviewer #1 of the published “Dildos” article comments:

I think it is true that there is “virtually no rigorous scholarly work [that] investigates the topic of improving straight male partner sensitivity by means of receptive anal eroticism,” but I do wonder what such a study would look like. Right now, it seems, that much of this is speculative, but nonetheless interesting and provocative.

To say that woman bodybuilders (and athletes more broadly) and their capacity to build large muscles have been limited by societal standards of femininity is a fair argument. But it is not tantamount to saying socialization is ALL that has restricted women and biology plays no role, which is a much more difficult argument to make. The latter seems to be the author’s claim, and it isn’t adequately substantiated.

My initial feeling was confusion about why everything needs to be discussed in such convoluted ways. At the same time, I have to say that what I interpreted as the point [of the text] is important: that humor has different meanings (as subversive or hegemony supporting) depending on power relations and positionality of those involved. That is how I still think. The point is of course not a new one, and I wondered why the author did not refer to the usual classical treatises on the functions of humor (e.g., Aristotle, or Freud). […] I was in a somewhat suspicious mood when reading, but I could see a lot of humor in the article itself, too. I hope that also in a more usual situation, I would have recognized the ironic distance.

The project authors accuse the peer reviewers of bias and lax quality standards. As we have seen, such criticism is not new and applies to all disciplines. Even as its deficiencies as a quality-screening mechanism have become clearer, peer reviewing has paradoxically gained importance in the industry of measuring “excellence” and ranking universities, research groups, and researchers. Within research politics, alleging shortcomings of the process within a specific field thus amounts to questioning the field’s academic legitimacy and, accordingly, that of any funding it receives.

Conclusions

The “Grievance Studies Affair” project is part of the more general phenomena of the US culture wars and anti-intellectualism, which have by now turned global. The project’s primary focus on gender studies might reflect its authors’ personal frustration with that specific field, but it also coincides with concerted political action both in the United States and in Europe (Kuhar and Paternotte 2017). Until more is known about the project’s funding, it is too early to say whether this connection is incidental. The present analysis focuses on what the experiment demonstrated empirically and on its ethics.

First, the study lacked the comparative element that could make it possible to argue that the disciplines that were targeted have laxer quality standards than those that were not. The percentage of submissions with positive decisions (33 percent) is close to that of the social work journals studied by Epstein (1990), but a comparison is impossible because of the different character of the stimuli. In addition, some of the project’s later communication suggests that the total number of submissions and refusals during the project’s entire life span was in fact substantially higher than during the later phase that the project website reports about, and the real percentage of positive decisions thus substantially lower (see Note 2). The experiment did not demonstrate the peer reviewers’ negligence. Instead, the reviews linked to the project’s website are thorough (which is also shown by their length compared with those referred to by earlier studies of peer reviewing).

The journals targeted by the project were not chosen in any systematic way to be representative of all journals in their respective fields. Accordingly, all possible conclusions will concern the project’s haphazardly chosen set of data only. No external validity can be claimed. With these severe limitations, experiences from the project can be summed up as follows: (1) journals with higher impact factors were more likely to reject papers submitted as part of the Grievance Studies Affair project; (2) the chances of having a submission accepted were much better if the manuscript claimed to be based on an empirical study; (3) peer reviews are an important asset for the process of revising a manuscript; (4) closely following the reviewers’ advice, the project authors, with academic education from neighboring disciplines, were able to learn relatively quickly what is needed for writing an acceptable article; finally, (5) the way the Grievance Studies Affair project ended suggests that the scientific community will ultimately uncover fraudulent practices.

The first conclusion needs little comment. Less established journals in new and specialized fields of research have fewer manuscripts to choose from, which may lead to lower thresholds for publication. On the positive side, however, this may also mean more risk-taking, which can prove worthwhile in the end. Even a less-than-perfect article may include an interesting point that deserves to enter the scientific discussion. The second conclusion, about the relative priority given to empirical studies, seems important. Studies of peer review seldom address this issue. The project exploited one important vulnerability of the review process: reviewers most often have no realistic way of uncovering fabricated data. They can only assess what the writer decides to tell them about his or her methodology and, when in doubt, demand clarification.

The project’s experiences with peer reviews run contrary to some of the recent criticism aired in discussions about academic publishing (e.g., Wagenknecht 2018), in which authors have said they found reviews unhelpful. In this project, the reviews were long, detailed, and obviously written by dedicated people. It is possible that some shortcomings of the peer-review process are more pronounced within disciplines other than those targeted. With substantial help from the reviewers, the authors were in fact able to produce articles that are not recognizably amateurish, as was shown by my own little “experiment” of asking for an independent opinion about “Baldwin” (2018). Sokal’s memorable hoax illustrated, if anything, that journals tend to be benevolent toward an author with well-established academic credentials. At least some cases within the Grievance Studies Affair project point to benevolence of another kind: the editors and reviewers did not bar unknown authors from other disciplines from entering their research field but instead undertook the voluminous (and unpaid) work of providing beginners with thorough comments and advice. 3

The final uncovering of the hoax by journals and media shows one more important point: scientific studies and articles are part of an ongoing discussion, not separate pieces of knowledge. Fraud tends to be unmasked. For this, dialogue is a more important function than gatekeeping. On the other hand, even less “rigorous” articles may add something to academic debate. A far-fetched treatment of Plato (as in the unpublished “Queering Plato” manuscript) at least shows that even such an interpretation is possible.

Acknowledgments

The author has received help and support from the sociological research seminar of Åbo Akademi University and from two other colleagues (a philosopher and a sociologist), as well as helpful comments from the anonymous reviewers of this journal.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.