Abstract

This paper reviews the transformative role of Generative Artificial Intelligence (GenAI) in Human Resource (HR) management, from a practice perspective, highlighting both opportunities and challenges and laying out a use-inspired future research agenda. This scoping review is grounded in insights from a unique Summit held in Spring 2024, which brought together HR academic scholars with dozens of Fortune 500 Chief Human Resource Officers (CHROs) and their top technical leaders to discuss the workforce implications of GenAI. The paper identifies six key themes from the Summit practitioners: GenAI as disruptive and transformative, data as competitive advantage, adoption challenges, potential ethical abuses, the experimentation imperative, and the critical role of CHROs. These six themes provide a foundation for future research directions, which are discussed regarding six functional HR areas: recruitment and selection, training and development, performance management, job and work design, talent management, and compensation and benefits. The research agenda in each area emphasizes the need for academic researchers to understand and address the practical challenges posed by GenAI. Overcoming these substantive challenges will demand meaningful effort and a keen willingness to learn, on the part of both HR leaders and scholars. The paper concludes with a call to action for management scholars to engage in use-inspired research that bridges the gap between academic knowledge and practical HR challenges.

Exploring the role of artificial intelligence (AI; the broad field of computer science focused on creating machines capable of learning patterns and making predictions) in Human Resource (HR) management research reveals both opportunities and challenges. Recent overviews of AI (e.g., Budhwar et al., 2023) highlight its potential to transform HR functions from recruitment and selection to compensation and benefits. This potential to revolutionize workplace dynamics and promote new HR practices also raises challenges for researchers and practitioners (Tambe, Cappelli, & Yakubovich, 2019), including concerns about biases, ethics, the nature of work, and the evolving role of humans in organizations (Cappelli & Rogovsky, 2023; Chowdhury et al., 2023). We argue that to contribute to the resolution of these challenges, academic researchers must first understand key elements of this revolution in practice.

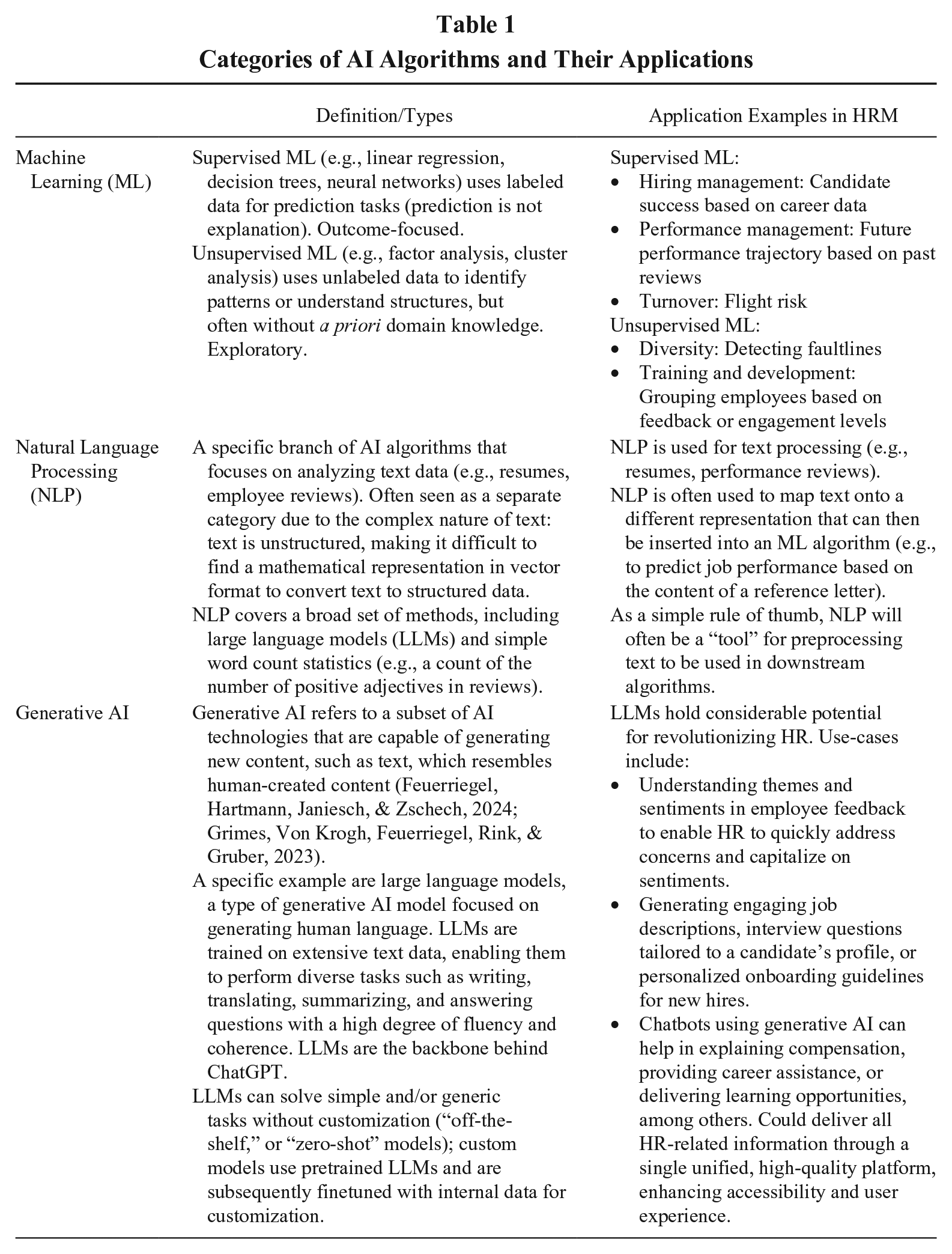

AI, often equated with machine learning (ML), refers to algorithms that learn to perform a task from data (Bishop, 2006), and it offers new opportunities to support the decision-making of managers based on data-driven predictions. For example, AI can predict which employees are at risk of leaving, enabling HR to develop targeted retention strategies, or forecast future skill needs, helping organizations to strategically invest in hiring and developing employees. AI systems can sift through vast numbers of resumes to identify candidates with a strong fit, reducing the time and effort required to hire. AI systems can also handle administrative HR tasks (e.g., chatbots can explain compensation, offer career advice, or provide learning opportunities; Nyberg, Cragun, Conroy, & Weller, 2023), freeing up productive time for decision-makers. Different AI algorithms have different strengths, limitations, and use cases; for the interested reader, Table 1 provides an overview of the various types of AI algorithms and how they can be applied to HRM. The focus in this paper is Generative AI (GenAI) specifically.

Table 1. Categories of AI Algorithms and Their Applications

GenAI is a subset of AI that specializes in generating new content based on vast amounts of data. GenAI has been popularized by breakthroughs in the ease of use and accessibility of large language models (LLMs). The burgeoning interest and exploding popularity have positioned GenAI as a focal point in 2024 for boards of directors (Edelman & Sharma, 2023) and CHROs. This surge in attention reflects not only the anticipated potential upside but also the anticipated disruptive impact of GenAI on HR.

In response, in the Spring of 2024, we hosted a unique Summit that brought together leading HR scholars with dozens of Fortune 500 Chief Human Resource Officers (CHROs) and the CHROs’ top technology leaders. The focus of the Summit was the workforce implications of GenAI, and the Summit’s purpose was twofold: to enhance strategic thinking among HR business leaders about GenAI, and to deepen HR scholars’ understanding of AI’s complexities in practice as a foundation for future research, with the latter culminating in this review paper. We position this paper as a “scoping review” (Munn, Peters, Stern, Tufanaru, McArthur, & Aromataris, 2018), one which is based on the experience and expertise of practitioners and seasoned HR scholars. Scoping reviews are a form of knowledge synthesis with the aim of informing practice and policy and providing direction for future research priorities (Arksey & O’Malley, 2005; Sucharew & Macaluso, 2019). The primary characteristics of scoping reviews are that they (a) are broad in scope but less detailed (theoretically or empirically) than a systematic review; (b) are useful for exploring emerging areas of research; (c) often precede systematic reviews to define research questions; and (d) are ideally suited to the initial exploration of new or under-researched fields (as is the case here). Although not frequently seen in our field, practice-informed insights are a natural fit for a scoping review, especially one aimed at identifying research questions of interest when current practice and research are nascent and fragmented (Munn et al., 2018). Following best practices for reviews in management (Simsek, Fox, Heavey, & Liu, 2025), we begin by establishing the purpose and nature of our work.

Both the Summit and this review paper were guided by the twin beliefs that (a) scholars have a unique opportunity and responsibility to guide GenAI’s application to HR and (b) it is valuable to first understand GenAI-related concerns of HR business leaders and their technical leaders for research to be both relevant and influential. That is, there have been other reviews in this area (e.g., Budhwar et al., 2023; Chowdhury et al., 2023), but they have been more “traditional” academic reviews that start from the perspective that the academic literature is the best source of requisite knowledge on the phenomenon itself (despite its emergent nature). They then use this scholarly knowledge to create conceptual models to guide future work (e.g., Chowdhury et al., 2023) and/or to “inform HR professionals [how to] understand and adapt to the changing AI landscape” (Budhwar et al., 2023: 608). Missing from these treatments of GenAI in our literature is a true privileging of practice (especially as informed by top business leaders) in the identification of research needs. In response, we designed this unique collaborative Summit (bringing together academic researchers and business leaders) not only to uncover practitioners’ views on the transformative potential and challenges of GenAI but also to use this information as a key contextualizing foundation for future research directions.

The resulting paper aims to (a) share with a wider audience (i.e., management scholars) the insights from the Summit—namely, the themes identified by the CHROs and their technical leaders; (b) encourage academics to adopt a strong voice in this GenAI revolution, including via an explicit focus on use-inspired research; and (c) outline specific research needs and grand challenges in HR functional domains that are implicated by the themes from practice. Related to this final point, during the second day of the Summit, HR scholars involved in this collaboration met to discuss the practice themes heard in and identified from the previous day’s session, and the implications of these themes for HR research in general and for specific domains within HR. In total, 17 renowned academic HR scholars, with deep yet varied expertise across different HR areas and data analytics, were involved in that process and the creation of this paper.

The paper is organized into two main parts. In the first, targeted toward management scholars more generally, we summarize the key themes identified from the Summit, with the goal of bringing the essence of the Summit to a much broader academic audience. Returning to the title of this paper, these themes make clear why this is a revolution, what is going to be new and different, and why this requires bravery. As such, they represent an important impetus and contextualization for future research in multiple areas of management. These themes also provide critical contextualization for the implications of GenAI for future HR-related research in particular. In short, they encapsulate the opportunities, challenges, and potential pitfalls of applying GenAI in HR, setting the stage for an in-depth examination of implications and future research needs in multiple HR domains, which is the second main section of our paper.

We use Posthuma, Campion, Masimova, and Campion’s (2013) taxonomy of HR practices (with some minor adaptations, as specified here) to organize our discussion of the impact of GenAI on practice and future research needs within the following six key areas of HR: (1) recruitment and selection; (2) training and development; (3) performance management; (4) job and work design; (5) talent management (our broader label for the “promotions” function from the Posthuma et al. model); and (6) compensation and benefits. (Although part of the Posthuma et al. model, we excluded employee relations and communications as separate sections, because these were themes that cut across HR functional areas; as such, these issues are covered in multiple places in the paper.) These sections, informed by the Summit’s discussions, outline areas where GenAI’s integration into people management presents substantive research opportunities and needs, providing readers with insights on both what future AI-related research questions are essential as well as how future research should proceed. Finally, we conclude with a discussion of the broader scholarly needs in this area, including an explicit mobilizing call to action for management researchers.

Themes From the Summit

Reflecting the Summit’s goal to blend HR and technological expertise to guide research, 37 CHROs, primarily from Fortune 500 firms, were joined by their top technology advisors and strategists, facilitating a day-long comprehensive dialogue on GenAI in HR through four panels and a keynote. Panels began with insights from senior business leaders discussing GenAI-related challenges and opportunities in their respective fields, and each was followed by small roundtable discussions (with a mix of academic researchers and HR leaders at each table) and then a full debrief with all participants. This structured approach enabled a rich exchange of ideas among both diverse HR academics and HR business and technical leaders, aligned with the goal of bridging the gap between academic research and practical HR challenges in the realm of GenAI. Participants agreed to abide by “Chatham House Rules,” meaning that specific examples and learnings could be discussed and disseminated outside the Summit but that no practitioner or organization would be cited as connected to these specifics, thus allowing presenters and other participants to freely share. For this reason, we do not attribute any quotations throughout the paper to any specific practitioners or organizations represented at the Summit (Dave Ulrich, the keynote speaker for the Summit, is an exception in terms of attributing quotations).

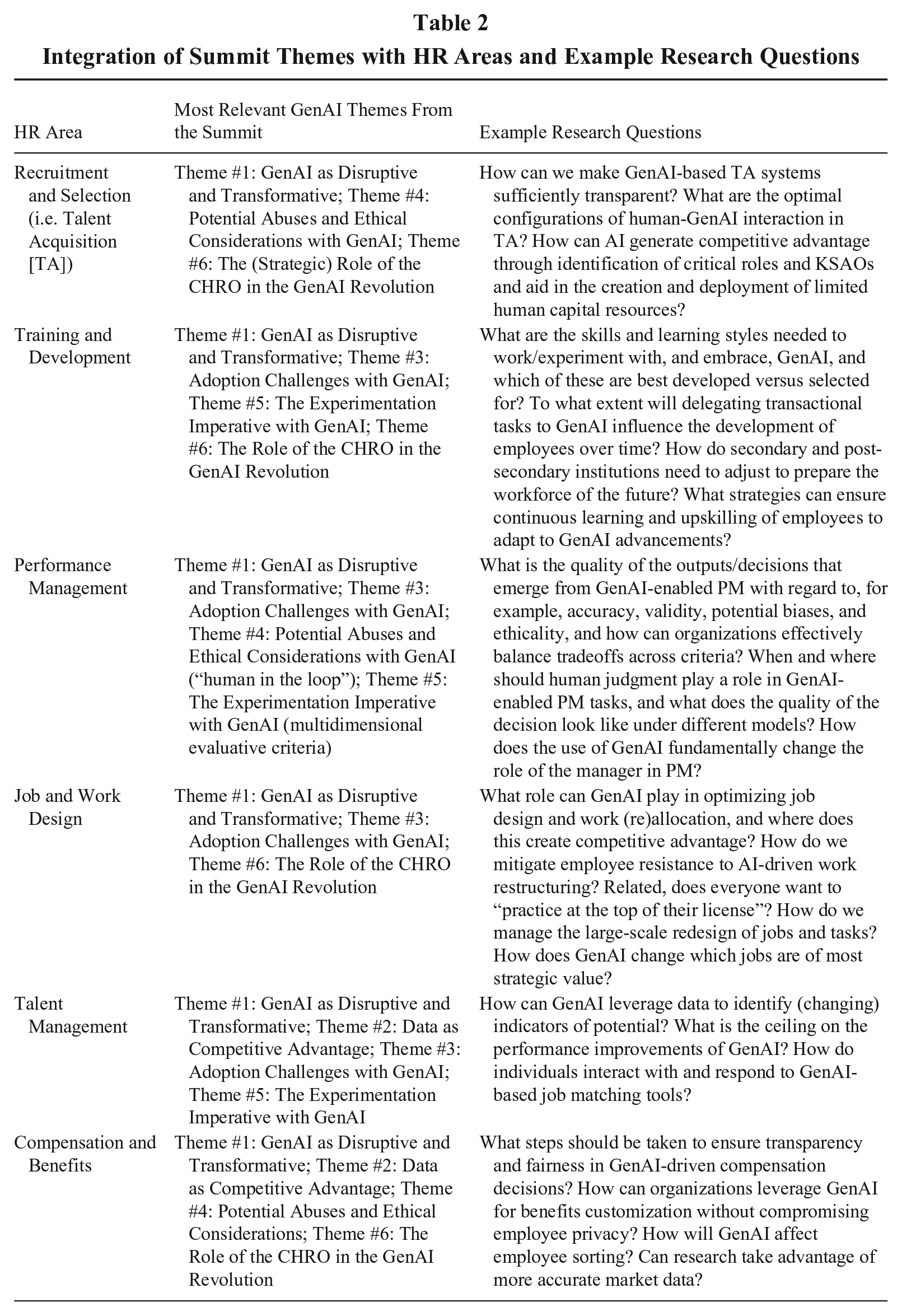

In the identification of the key GenAI-related challenges for future HR research, we privilege the themes emerging inductively from the HR business leaders’ and technical strategists’ contributions at the Summit. Thus, the first need was to identify these themes from the transcripts of the Summit sessions. We used GenAI tools to create the initial list of themes, which was then vetted by the academic researchers the following day; the vetting process largely involved condensing several more specific themes into six broader themes. These six themes are introduced and discussed in turn in each of the following sections (Table 2 maps the relevance of these themes across the HR functional areas, with implications to be discussed in later sections).

Table 2. Integration of Summit Themes with HR Areas and Example Research Questions

Theme #1: GenAI as Disruptive and Transformative

The first, and arguably most important, theme emerging from the Summit is that GenAI will disrupt the nature of work. CHROs resoundingly confirmed that “second generation” GenAI (e.g., ChatGPT) “changed everything,” with the capability to radically transform both the content and process of work. Summit participants emphasized that the revolutionary use of GenAI is not a technology decision; instead, it is about process transformation and work transformation. (“Let’s not forget our most important task as HR business leaders is to answer the question of ‘What is the

CHROs at the Summit felt that GenAI will actually be more disruptive to white-collar than blue-collar workers, characterizing it as the first big disruption to the world of white-collar work (other than perhaps the work from home/hybrid transformation in the wake of the pandemic; Barrero, Bloom, & Davis, 2023). In this context, GenAI could create value for organizations by replacing knowledge workers (as was an underlying fear of Hollywood writers in the recent strikes), by making workers more effective, and/or by creating new jobs where workers can create more value. But CHROs also argued that the media has been a bit misleading about the likely impact of GenAI on the workforce, exaggerating both how many jobs will be lost and how many new jobs (e.g., prompt engineer) will be created. In a Fortune 500 survey (Murray, 2023), 74% of CEOs expected AI to reduce headcount in the next 5 years, compared to 15% expecting headcount increases. However, the CHROs felt that what had been obscured by such headlines is the fact that the greatest impact of GenAI may be in how it radically transforms the work performed by most employees. This idea is supported by the OECD survey on AI effects (Lane, Williams, & Broecke, 2023; https://www.oecd.org/employment-outlook/2023/#ai-jobs), which showed, in contrast to the Fortune 500 CEO survey, that only 15% of employers anticipated “attrition or redundancies”; instead, their strongest expectations were around retraining or upskilling current workers (67%) and hiring new workers (41%). That same OECD survey found that although 60% of employees were worried about job loss due to AI in the next 10 years, a majority reported improved job performance, enjoyment of their job, and mental health as a result of AI (79%, 63%, and 54%, respectively). Important factors in these positive reactions are likely that respondents were twice as likely to say that AI had automated repetitive and dangerous tasks as to say that it had created them, and that 63% agreed that AI assisted their decision-making.

Theme #2: Data as Competitive Advantage

A second theme emerging from the Summit was that GenAI’s potential is not just as a tool or technology, but also as data. As the keynote speaker, Dave Ulrich, reminded us, “any problem we have (including any HR problem) can be better solved by having better information. So, this is the source of advantage to AI.” Summit participants strongly endorsed the idea that, in this revolution, organizations will compete on the quality and quantity of data.

Many organizations have found data curation overwhelming. HR has been awash in data for more than a decade—applicant attributes, employee performance, absenteeism outcomes, and so forth—with no ability to analyze it because the database management and processing tasks are so daunting. CHROs have struggled with building a modern data infrastructure and consolidating their data across functions since the dawn of data science 15 years ago. GenAI certainly can enable easier analysis of that data, and unstructured data that was ignored before can now be turned into useful knowledge. Assembling that data to create value is, however, still a formidable task, and one that will likely only become more important as the GenAI revolution continues.

Theme #3: Adoption Challenges With GenAI

The third theme involved a challenge to the potential transformative benefits of GenAI: people must want to use it. That is, to realize the potential gains, employees and managers will have to want to use GenAI, but there are many hurdles to adoption rooted in human nature. For example, in one panel, a disagreement arose between a CHRO and their IT expert regarding how soon employees would be ready to adopt the organization’s most innovative GenAI tools: the latter viewed this as their most exciting and transformative tool, yet the former was skeptical about how ready employees were for it. The resulting discussion made it clear that although the potential to do something with GenAI may exist, not everything will be implemented quickly, due to employee resistance. Other CHROs strongly reinforced the importance of the question of how we can, en masse, get employees to be more receptive to GenAI.

One part of employee resistance is related to a general fear of change and one part is specific to GenAI, based in (a) perceptions that human recommendations are more fair, accurate, and authentic, even when human advice is demonstrably worse (Jago, 2019); and (b) a lack of information about previous AI usage by others (Alexander, Blinder, & Zak, 2018). But another large part of this resistance is tied to trust in management. One CHRO shared research that shows that although a large majority of employees think GenAI tools are good and will have positive effects, a markedly smaller group of those same employees trust their organizational leaders not to abuse these tools (an issue also tied to Theme #4). These discussions led to a prediction from Summit participants that there will be more labor-management tension as a result of GenAI than we have seen in a long time, and also that there may be more white-collar unionization than at any time in the history of work. These barriers to adoption, resistance to change, and the resulting strife and conflict between employees and management are issues that HR scholars and management researchers are uniquely positioned to address.

Theme #4: Potential Abuses and Ethical Considerations With GenAI

A fourth theme from the Summit concerns another key challenge: the need to be mindful of potential ethical abuses and dilemmas with GenAI. Science and technology often move forward based on the desire to do something that has never been done before, but there is a need to balance innovation with ethics. This was probably the largest and loudest theme emerging from both the practice and academic camps at the Summit, and we therefore provide a somewhat more elaborated treatment of it here, using an organizational justice lens (Colquitt, Hill, & De Cremer, 2023) to organize the multiple points made in this area. For example, distributive justice (i.e., the fairness of the outcome/decisions such as pay, promotion, etc.) is at considerable risk when GenAI is used to make HR decisions, because the models can be biased if the training data used to develop them reflect existing patterns of inequality (Kellogg, Valentine, & Christin, 2020). GenAI is also prone to random error in the form of hallucination, wherein generated content does not align with the source content (Ji et al., 2023), which can lead to arbitrary and inaccurate decisions.

AI’s record is mixed across various components of procedural justice. For example, given that GenAI follows a set of rules, it provides consistent application of procedures. Although it is free of personal bias, the potential for bias and inaccuracy still exists due to the quality of training data. There is also the issue of privacy, particularly the risk of invasion of privacy, which is considered a breach of ethics and procedural justice (Alge, 2001). There is heightened tension between employees’ expectations for privacy and employers’ right to monitor employees. GenAI tends to be more invasive as it enables access to vast amounts of employee data, including minute-level monitoring of work behavior and data not directly relevant to work, such as personal habits (Kellogg et al., 2020). The capability to gather extensive behavioral data may exceed the intended purpose of monitoring, resulting in unmeaningful and invasive metrics (Schafheitle, Weibel, Ebert, Kasper, Schank, & Leicht-Deobald, 2020). As one Summit executive stated about gathering such data, “just because you can, doesn’t mean you should.” Moreover, data initially collected for one purpose may subsequently become accessible to other parties and used for other purposes. Concerns about data access and use extend beyond organizational boundaries, as GenAI training often involves acquiring data without the informed consent of the sources of that data (Charlwood & Guenole, 2022), such as with vendors who have access to client data.

Informational justice (i.e., decisions are adequately and transparently explained) is often compromised in GenAI given the opacity of its interpretations and predictions (Bankins & Formosa, 2023). Moreover, employees are often unaware of the data collected and the purpose of such data collection (Hunkenschroer & Luetge, 2022). Indeed, some suggested that organizations may intentionally withhold such information to avoid employees gaming the system to manufacture positive results.

Of the four justice components, it appears that interactional justice is most consistently negatively impacted by GenAI (Narayanan, Nagpal, McGuire, Schweitzer, & De Cremer, 2024). Interactional justice (the extent to which employees feel they were treated with dignity and respect) is a distinctly human aspect of organizational justice (Tyler & Bies, 2015); as such, it is arguably the most difficult aspect of justice to imbue in GenAI systems. Indeed, the very act of employing GenAI for HR-related tasks may signal that the organization does not care about its employees (Narayanan et al., 2024). At the same time, returning to the OECD survey, almost five times as many employees (43%) reported that AI improved management fairness as those (9%) saying AI worsened it.

Summit participants emphasized that the potential for ethical and justice violations suggests the need for equal focus on questions of what ought to be done, not just what can be done with GenAI. Managers will undoubtedly play a large role in either mitigating or exacerbating these ethical risks. The executives we heard from advocated for two key governance mechanisms in this regard: a set of guiding principles and a governing body that reviews and approves use cases (we discuss the latter in the next section). Several HR business leaders noted that an organization (especially the CHRO’s team) must first set its strategies, principles, and policies around GenAI. One firm shared that they have a simple two-part strategy in this regard: AI is used (a) to improve productivity (tied to their strategy to leap ahead vis-à-vis their competitors) and (b) to increase employee satisfaction.

Important considerations in guiding principles are whether and how managers are in the loop, the degree of informed consent and control over data, and data use (Spisak, Rosenberg, & Beilby, 2023). For example, another firm shared their seven basic principles of AI (founded on their staunch belief that it is important to have guardrails for how AI is used): (a) Respect human rights; (b) Enable human oversight; (c) Ensure transparency and explainability; (d) Ensure security and reliability; (e) Protect privacy (noting that, because privacy means something different to different people, this principle is a big challenge); (f) Promote equity and inclusion (i.e., AI for all); and (g) Protect the environment (noting that large language models are massive users of energy). Regarding the “human oversight,” many of the Summit organizations have adopted the practice of having “a human in the middle” (e.g., having a human, rather than GenAI, make the decisions) or “a human in the loop” (having human oversight/review of everything that comes from GenAI; Monarch & Munro, 2021). The extent to which human judgment is incorporated into the decision process in GenAI is important for the correctability principle, a positive component of procedural justice. Of course, it is unknown how representative the Summit companies are on these issues; there was a clear sense from the participants that organizational abuses will occur with GenAI. Finally, participants noted that the principles and policies set around GenAI must also be grounded in what regulatory compliance will look like in this area (with regard to, for example, transparency and data privacy).

To date, much of the extant research on fairness in GenAI has been rooted in computer science (Narayanan et al., 2024), generally focused on comparing human versus AI decision-making, or how to embed fairness principles into AI design. The focus of research on GenAI ethics must expand from its emphasis on design features to the experience of the employee (Colquitt et al., 2023). To that end, it is imperative to examine the role of organizational governance and managerial compliance in the effective and responsible use of GenAI in HR, a focus to which management scholars seem ideally suited. We will return to this theme in some of the specific HR sections below, and we summarize the ethics-related research questions that cut across HR areas in the final section of the paper.

Theme #5: The Experimentation Imperative With GenAI

A fifth theme emerging from the leaders at the Summit, and one with great relevance for specific areas of HR, was the importance of experimentation. That is, after setting the above-referenced strategies, principles, and policies for GenAI, there is an imperative for HR leaders to engage quickly and continuously in experimentation. CHROs at the very forefront of GenAI application emphasized treating all initiatives as experimental. This echoes the core principles of evidence-based management (Pfeffer & Sutton, 2006), but at an unprecedented scale and depth. A notable assertion from a CHRO at the Summit, “80% planned plus feedback is better than 100% planned,” underscored the value of quick deployment and iterative learning and adaptation with GenAI. This perspective also influenced views on regulation issues, with a consensus that policy and laws should target GenAI use cases rather than the technology itself, facilitating nimble experimentation while addressing ethical concerns.

In the context of rapid and continuous experimentation, Summit executives also emphasized the importance of a governing body to review proposed GenAI uses, to ensure alignment with the company’s guiding principles, to evaluate the strategic benefits, and to ask rigorous questions about potential risks and unintended consequences. They recommended that use case proposals should be clear on the strategic intent (e.g., cost savings or value creation), with benefits weighed against both direct financial costs and potential costs associated with ethical risks. Accordingly, a breadth of perspectives should be represented in these bodies—at a minimum, representing technological, strategic, human, ethical, and legal areas of expertise—and in several of the Summit organizations these bodies reported directly to the CEO. While having the best interests of the company as their top priority, these governing bodies should represent the interests of customers, employees, and the larger society by requiring answers about how the technology could negatively impact stakeholder groups. They also need to serve a continuing review function, evaluating the validity of evolving GenAI models on an ongoing basis, as well as the currency of static models, and ensuring that cases are evaluated for their intended purpose, as GenAI applications are susceptible to function creep (Schafheitle et al., 2020).

The complexity of the charge for these GenAI governing bodies highlights an important subtheme: the necessity of thinking about the outcomes of GenAI in a multidimensional (and multi-level) way. That is, evaluating the effectiveness of GenAI applications (or any aspect of GenAI policies), whether during rapid experimentation or the longer-term, encompasses multiple types and levels of criteria such as stakeholder satisfaction, cost, efficiency, productivity, outcome quality (e.g., validity, accuracy, acceptability of decisions, freedom from bias), trust, ethical considerations, organizational effectiveness, and societal impact, among others. As one example, although having a GenAI governing board should foster perceptions of justice and trust, such a board might also hinder rapid innovation and timely value creation. Thus, both organizations and researchers must be explicit about the evaluative criteria of greatest interest, and the tradeoffs among them, including at different levels (e.g., the effect on employee performance or satisfaction vs. the effect on organizational effectiveness). With regard to decision/outcome quality specifically as an evaluative criterion, an interesting question was raised at the Summit about the relevant standard when evaluating GenAI. That is, do we expect GenAI-enabled recommendations and outcomes to be “perfect,” or merely as good as (or better than) humans? Given that human judgment is itself rife with bias and error, it is not clear whether, overall, GenAI will result in more or less flawed decision-making compared to humans (Glikson & Woolley, 2020). These evaluative elements of experimentation should be made explicit by organizations and must be studied by researchers.

Theme #6: The Role of the CHRO in the GenAI Revolution

Emerging from prior themes, and as suggested by the Summit’s focus on CHROs as key informants, these top HR business leaders play a unique and critical role in the GenAI revolution. In discussing this final theme, we summarize the strategic, cultural, and moral responsibilities of CHROs when it comes to GenAI, along with examples from the Summit.

Strategic role

Any digital disruption (like GenAI) requires reassessing business strategy, and CHROs must help build the organizational capabilities necessary to enable that strategy and create value in a way that benefits shareholders (Snell & Morris, 2014). They must focus on developing the dynamic capabilities of sensing opportunities offered by GenAI, seizing its value by designing innovative ways for value creation, and transforming by streamlining, improving, and altering organizational routines. For example, as frequently shared at the Summit, fulfilling the potential of GenAI will necessitate substantial upskilling and reskilling for all employees, including HR professionals. Managers will likely also need upskilling to take on greater leadership responsibility. The executives we heard from envision GenAI as freeing managers from the routine to focus on the complex (including leading people). However, lower- and middle-level managers may lack the skills and efficacy to assume such responsibilities. These upskilling efforts must be a focus of CHROs. Tied to previous themes, active experimentation will also be essential for uncovering how HR professionals and GenAI tools need to interact for the emergence of dynamic organizational capabilities. This includes experimenting with augmented integration (where humans collaborate closely with machines to perform a task; Raisch & Krakowski, 2021) in areas with the greatest potential to create value (e.g., talent management), while leaving automation (where machines do the task themselves) to processes where efficiency and cost concerns are paramount (e.g., initial screening of applicants).

Cultural role

CHROs also play a meaningful role in defining, building, and maintaining organizational culture, and GenAI impacts culture in multiple ways. For example, it requires employees to develop new skills, both in using GenAI and in performing higher-value activities. As leaders at the Summit noted, cultures that emphasize continuous learning and self-transformation have an advantage, as ongoing learning can simply be redirected. Conversely, cultures that promote stability and consistency may resist learning new ways of working. Similarly, when a culture of mistrust exists, there is likely to be substantial employee resistance to GenAI (including undermining and sabotaging technology). Thus, culture can either enable or hinder the realization of the full potential value of GenAI. CHROs must actively build a culture that reinforces the use of GenAI and avoids “algorithmic aversion” (i.e., employees’ and managers’ reluctance to use AI in their work; Dietvorst, Simmons, & Massey, 2018). Explicit culture building can also help address another concern raised at the Summit, regarding whether managers will actually use GenAI in the way intended and to its best potential; executives at the Summit did not agree on a clear path to ensuring this, beyond agreeing that it is a core CHRO responsibility.

Moral role

CHROs also have moral responsibilities in organizations, and GenAI implementation heightens the need for CHROs to act. As discussed, those involved with the tech aspects of GenAI may myopically focus on what they are able to do and not pay sufficient attention to the question of whether they should (i.e., ethical issues such as privacy, transparency, and potential bias). Summit participants felt that CHROs must truly play a leadership role in these sorts of discussions. In addition, CHROs need to go beyond articulating values and principles related to GenAI. GenAI technologies need a formal framework and structure for governance that includes accountability, values/principles, and a review process, and CHROs must be intimately involved in creating these structures, principles, and processes. After all, the ultimate accountability for any people-related GenAI outcomes/decisions (even when purchased from a vendor) lies with the company (and especially the CHRO). Thus, companies (under the direction of the CHRO) need to both seek assurances from the vendor up front and then rigorously monitor the results post hoc to ensure that no bias impacts decisions.

This critical theme about the role of the CHRO in the GenAI revolution is reflected in several of the specific HR sections that follow. We also summarize the CHRO-related research questions that cut across HR areas in the final section of the paper.

From Summit Themes to HR Research Needs

Derived from the themes garnered at the Summit, we next highlight current practices and associated research opportunities in six HR functional areas. The specific application of the themes and identification of relevant research questions were driven by our academic content experts, based on the combination of what they heard at the Summit as well as their extensive scholarly knowledge of the HR area in question. Although all six Summit themes are relevant across the HR functional areas, Table 2 highlights the most applicable and significant Summit themes as they apply to each HR area and how they provide a foundation for exploring transformative research opportunities and challenges in that area. This framework further highlights the role of HR functional research in addressing GenAI’s implications and challenges.

One topic of frequent discussion among Summit participants was differentiating AI use on agentic dimensions. As we move into the context and research needs for each HR functional domain, it is useful to briefly discuss these types. Because GenAI is evolving quickly, academic research on a specific platform (e.g., ChatGPT) may be out of date by the time it is published. GenAI learns, and so research must focus on foundational principles rather than specific software (see McFarland & Ployhart [2015] for an example). Accordingly, it is more useful to classify specific AI models or platforms into three general types based on their agentic properties and corresponding disruption. The most basic type of AI is an AI Assistant, which completes fairly routine, scripted actions. For example, chatbots can answer common applicant questions with known answers. The next higher level of agency is AI Copilot. This works in coordination with the user, often based on GenAI (e.g., an LLM), and employs data that may be housed within or outside the firm. For example, Copilots can generate process checks on interview decisions. Finally, the highest level is called Autonomous AI. This type can perform tasks with limited, and in some cases no, human direction. For example, Autonomous AI could identify a hiring need and provide a list of potential prospects before a manager is even aware of the need. As the agency increases (i.e., “less of a human in the loop,” Theme #4), there is an even greater need for research to understand implications of these GenAI tools.

GenAI and Recruitment and Selection

The process of attracting (i.e., recruiting) and selecting human capital resources needed for a job, role, and organization is referred to as talent acquisition (TA; Ployhart, Weekley, & Dalzell, 2018). GenAI will radically transform and disrupt TA as we know it (Campion & Campion, 2024; Woo, Tay, & Oswald, 2024). Here we focus on the current context and research needs for TA implicated in the Summit themes (especially Themes 1, 4, and 6).

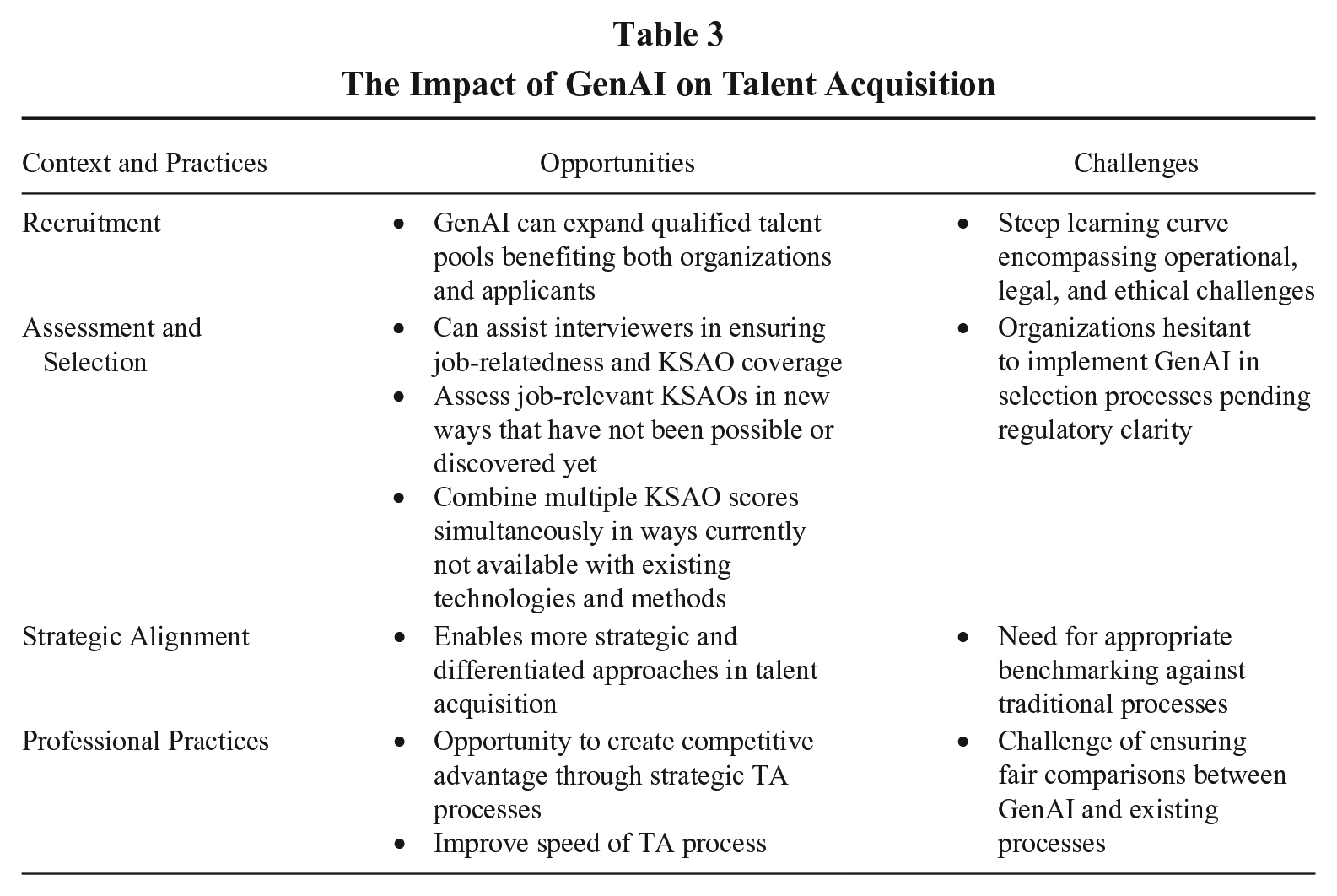

Context: Practices, Opportunities, and Challenges

There are several potential use cases for GenAI in TA (see Table 3 for examples). Throughout its 100-year history, the science and practice of TA have experienced many technological innovations. Early research considered the fusion of humans and “machines” in a way not so different from today’s conversations about humans and GenAI (Dunlap, 1947). From TA’s adoption of new technologies in the past, we know several things. First, the learning curve is steep (in terms of operational challenges, legal risks, and ethical dilemmas). Second, an early lack of regulatory guidance leads to a wide variety of practices that are of questionable legality. Third, however, professional guidelines do not change much with each evolution. Finally, the new technologies have led to operational innovations that made TA faster, cheaper, and easier to implement, and many also improved the candidate experience. Overall, GenAI has the potential to improve TA if it delivers some combination of the implications shown in Table 3.

The Impact of GenAI on Talent Acquisition

Currently, the use of GenAI for recruitment seems the most immediately valuable opportunity with the least amount of risk, as expanding the pool of qualified talent has many benefits for organizations and applicants. Use of GenAI for selection, on the other hand, is currently being avoided by many firms at the Summit until there is clearer regulatory guidance. We suggest that any use of GenAI for selection should be limited to AI Assistants and possibly AI Copilots, where the technology is more transparent and its consequences clearer. For example, using GenAI to assist interviewers by ensuring their questions are job-related and cover the relevant KSAOs could add value by saving time, increasing validity, and reducing bias.

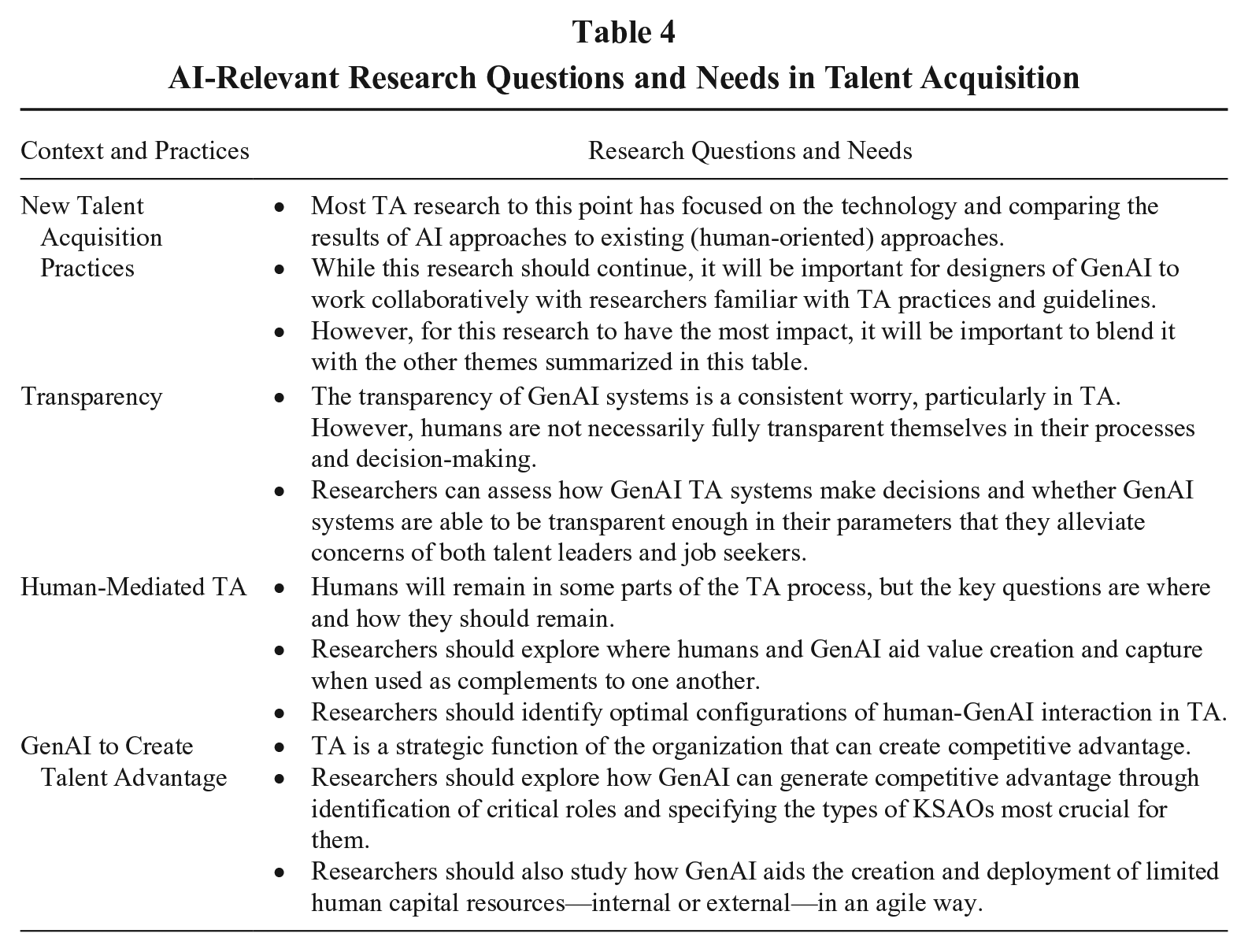

Research Questions and Needs

Table 4 provides a summary of areas where research can most advance TA-related science and practice. First, it is important to ensure “fair” comparisons between GenAI and existing TA processes. Current practice is always the benchmark against which innovations are evaluated, yet human-dominated TA is far from perfect. Many of the reasons for not using GenAI are based on a belief that human-dominated TA is an appropriate standard. Consider, for example, the claim that “AI Agents are not transparent and can’t be trusted in hiring.” We know a lot about how humans make decisions, but there are certainly many aspects of human decision-making processes that remain unobservable; human decision-makers also lack transparency to some degree. Second, there is work required to identify the best approach that keeps humans “in the loop” with TA. The field is not yet ready to turn over TA entirely to GenAI.

AI-Relevant Research Questions and Needs in Talent Acquisition

Research also needs to consider how GenAI can strategically influence the TA process (Theme #6). Where does GenAI add to value creation and capture, where does human input add to value creation and capture, and how are these elements best combined? Research should consider ways that GenAI can make TA more differentiated and strategic. Such a “talent to value” approach offers a means of using TA to create competitive advantage by agile creation and deployment of limited human capital resources (Barriere, Owens, & Pobereskin, 2018). GenAI might be the technology needed to make such precision-guided TA possible, and such benefits are likely to apply to internal placement and classification as much as external TA.

GenAI and Training and Development

Context: Practices, Opportunities, and Challenges

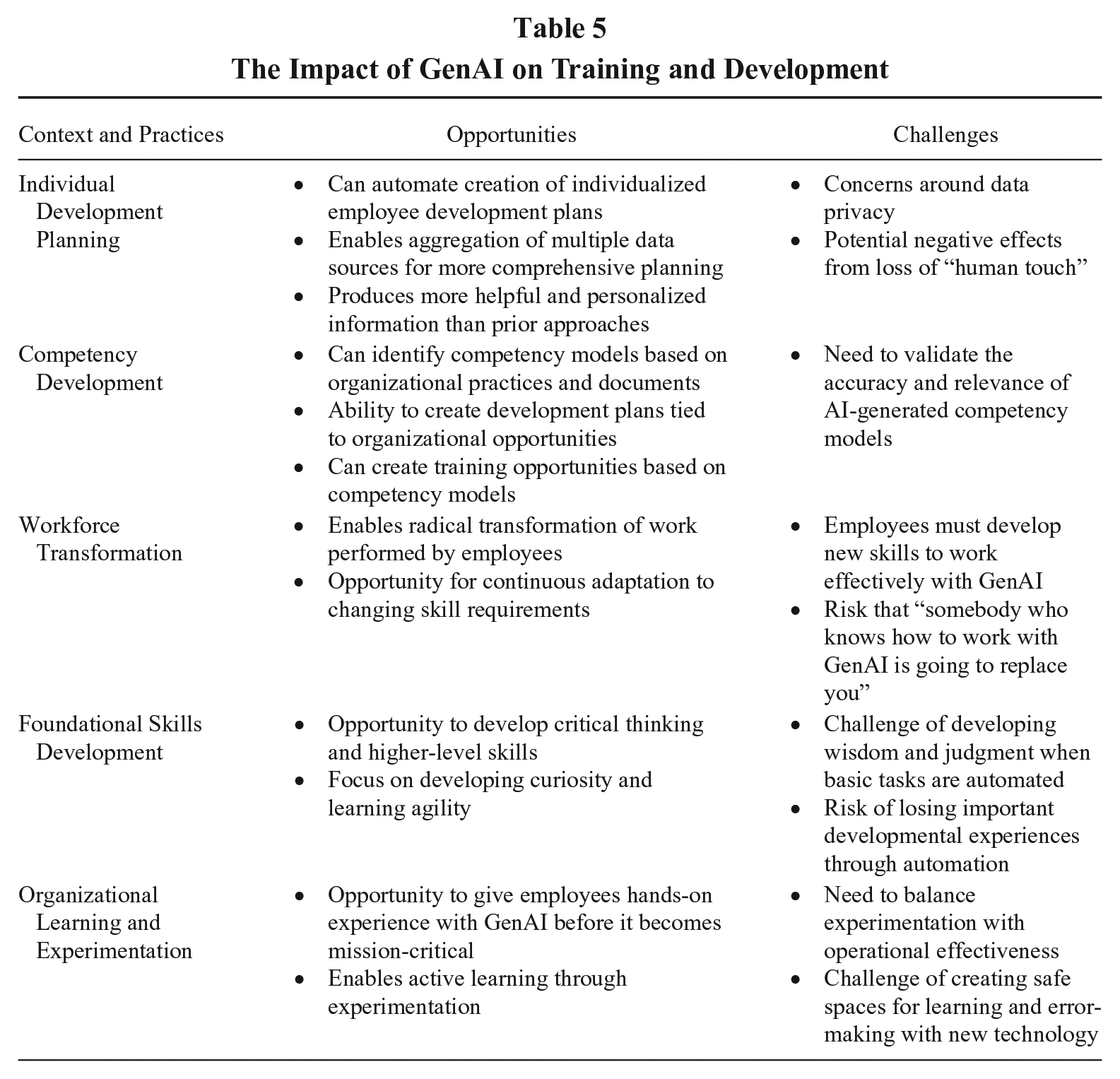

At the Summit, it became clear that many organizations are using GenAI for training and development (T&D) and see great potential in this (see Table 5 for a summary; these practices and the related research needs are especially relevant to Summit Themes 1, 3, 5, and 6). For example, GenAI can automate creation of individualized employee development plans, saving time and cost while also ensuring that the development plan is robust and tied to opportunities within the organization. More foundationally, it can also identify competency models based on other practices and documents in the organization and then create both development plans and training opportunities that are tied to these competency models. Both employees and managers report the resulting information to be vastly more helpful than prior development plans (both more comprehensive and more personalized, especially because it allows for aggregation of multiple data sources). At the same time, there are challenges around data privacy, and we need longer term research on the effects of the loss of “human touch” in this area.

The Impact of GenAI on Training and Development

There are also broader T&D implications of the GenAI revolution. Summit participants widely believed that the greatest impact of GenAI will be how it radically transforms the work performed (and therefore the characteristics required) by employees (Theme #1). As one HR leader noted, “GenAI is not going to replace you [in your job]. Somebody who knows how to work with GenAI is going to replace you!” Accordingly, on the minds of all Summit participants (especially the CHROs, Theme #6) was the question of “what is the reskilling and upskilling necessary to help employees adapt to these changes?” This is, of course, a T&D question.

Research Questions and Needs

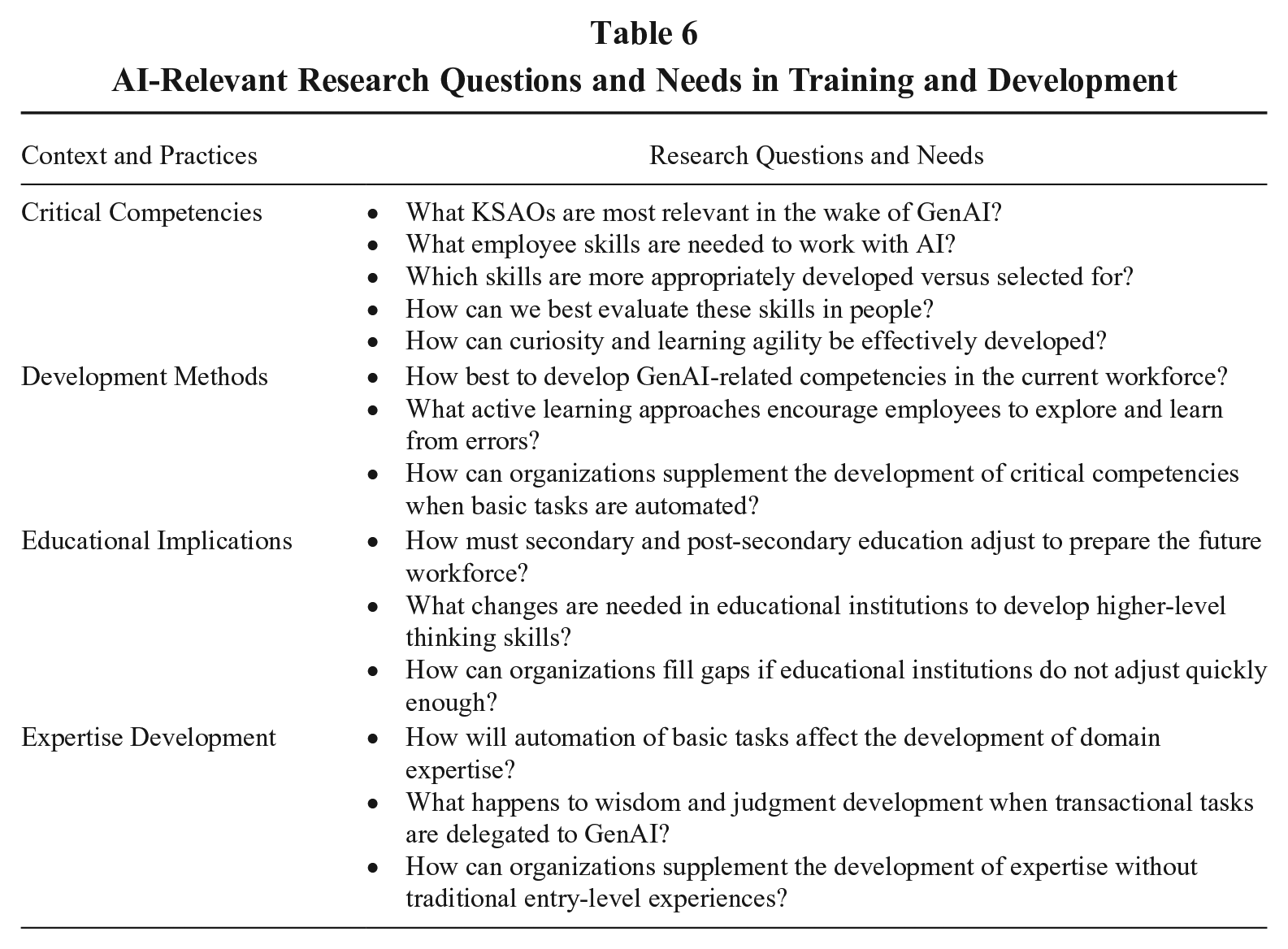

There are several pressing questions in this area, questions that T&D scholars are uniquely qualified to help answer; these questions are summarized in Table 6. What KSAOs are most relevant in the wake of GenAI? What are the employee skills needed to work with AI, which of these are most appropriately developed versus selected for, and how can we best evaluate these skills in people? For example, curiosity and learning agility will likely be essential, as well as characteristics related to engaging in constant experimentation with GenAI (including and especially at the level of individual contributors). In addition, if GenAI is going to be “the great first draft” (as mentioned several times at the Summit) that someone must review and edit, this puts renewed emphasis on these competencies. Interestingly, compared to many extant competencies, GenAI-related skills are likely to be highly generalizable across organizations, suggesting that researchers have an especially critical role to play in identifying and investigating these constructs. Non-governmental organizations (e.g., the World Economic Forum, 2016, 2018) have been working to map job transitions and predict demand for different skills related to this transformation; academics need to contribute to these conversations.

AI-Relevant Research Questions and Needs in Training and Development

Second, there is the critical question of how best to develop these competencies in the current (and future) workforce. A Summit presenter suggested that organizations need to give employees a chance to experiment with GenAI technology before it becomes “mission critical” for their jobs. Academics likely have valuable advice to offer in terms of active learning approaches (e.g., error management training) that encourage employees to proactively explore and to make and learn from errors (Bell, Tannenbaum, Ford, Noe, & Kraiger, 2017). In addition, Summit participants argued that these sorts of competencies will significantly change what organizations need from secondary and post-secondary education (e.g., more emphasis on higher-level thinking skills). If our educational institutions do not adjust sufficiently or quickly enough to this demand, organizations will need to fill the gap. Another provocative question tied to this issue was posed at the Summit: if we need employees with the wisdom and experience to effectively edit and improve GenAI outputs (and to serve as the “human in the loop”), what happens if we remove the opportunities to develop this wisdom and judgment by virtue of having delegated the component transactional tasks to GenAI? A generation from now, how are we going to supplement the development of these critical competencies most effectively?

We believe that there are broad risks related to the reduction of development opportunities due to the automation of basic tasks in various fields, and organizations are going to have to be mindful of the implications of this for developing talent. It is well established that early entry into an occupation (MacDonald, 1988) and deliberate practice (“the individualized training activities specially designed by a coach or teacher to improve specific aspects of an individual's performance through repetition and successive refinement,” Ericsson & Lehmann, 1996: 278–279) play a significant role in the development of domain expertise and the emergence of stardom (Call, Nyberg, & Thatcher, 2015). For many novice learners, this happens through engagement with relatively routine and potentially automatable activities early in their careers. Think, for example, of a lawyer working their way through cases in the law library, or a software engineer writing or debugging code. When these formative experiences are no longer available, how will this impact the development of the domain expertise necessary to achieve exceptional performance? For example, Beane (2019) highlighted the ineffectiveness of traditional training practices in the context of robotic surgery. Indeed, the emergence of what he termed “shadow learning” in response to these limitations led to further challenges, including hyper-specialization and a decreasing supply of experts relative to demand. Thus, the impact of the automation of critical foundational experiences on the attainment of the KSAOs required to enable exceptional performance is an important empirical question. How we can compensate for the absence of these experiences through formal education, on-the-job learning, and employee-led upskilling is an important challenge (Dell’Acqua et al., 2023), and one with which HR researchers must engage.

GenAI and Performance Management

Performance management (PM) is defined as “a continuous process of identifying, measuring, and developing the performance of individuals and teams and aligning performance with the strategic goals of the organization” (Aguinis, 2013: 2). There is both a question of how GenAI can be used for tasks within PM (i.e., how GenAI might revolutionize PM itself), as well as a question of what the GenAI age means for PM in organizations (i.e., how PM needs to evolve to support the GenAI revolution). Both questions are important and tied to Summit themes (especially Themes 1, 3, 4, and 5), and can be used to chart the course of future work in this area.

Context: Practices, Opportunities, and Challenges

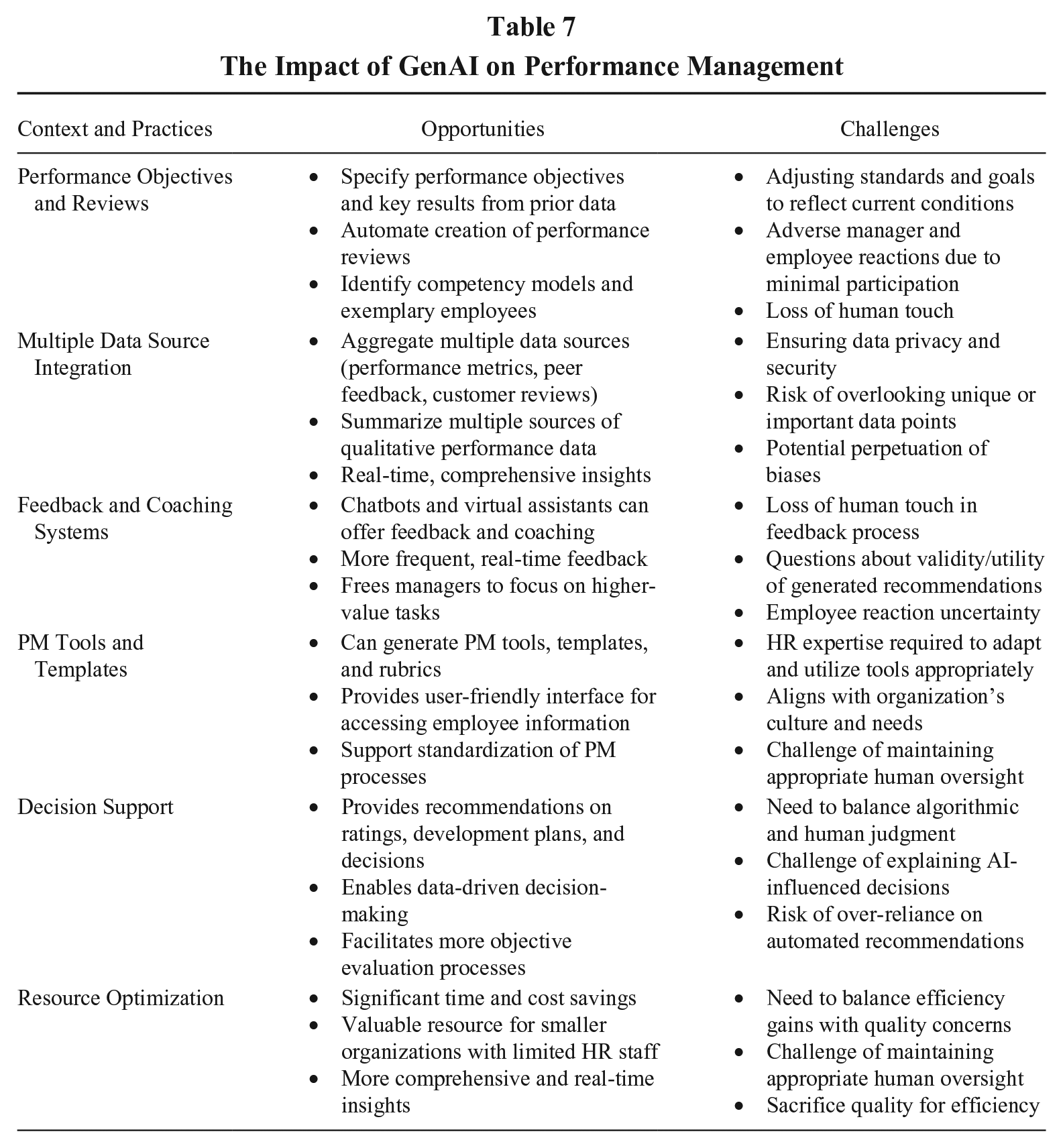

As Table 7 shows, there are several potential use cases for GenAI in PM, many of which are being tried by Summit participants (see also Varma, Pereira, & Patel, 2024). These include using GenAI to identify performance objectives and key results, write employee performance reviews, and even provide feedback and coaching. Interestingly (for PM researchers), it was suggested at the Summit that PM may in fact represent the area of greatest initial opportunity for GenAI in HR, for a few reasons. For one, because PM tends to be dreaded and despised by employees and managers alike (e.g., Adler et al., 2016; Ewenstein, Hancock, & Komm, 2016), this may be an area where initial buy-in for GenAI will be greater (Theme #3). Managers may embrace a tool that removes many of the time-consuming and perceived low value-added components of PM; and employees, who have never been fond of how managers evaluate their performance, might be less resistant to having this managerial task automated. Moreover, every minute a manager does not need to spend in a transactional HR process is time they can devote to something of higher value. And managers spend a great deal of time conducting performance evaluations and reviews (e.g., 2 million hours a year at Deloitte; Buckingham & Goodall, 2015). Thus, the potential benefits in terms of time-savings as well as employee and manager buy-in suggest that the PM function may be an excellent candidate for early adoption of GenAI in organizations. Yet, ultimately, how employees and managers react to GenAI for PM is, of course, an empirical question, and we need additional research in this area.

The Impact of GenAI on Performance Management

Research Questions and Needs

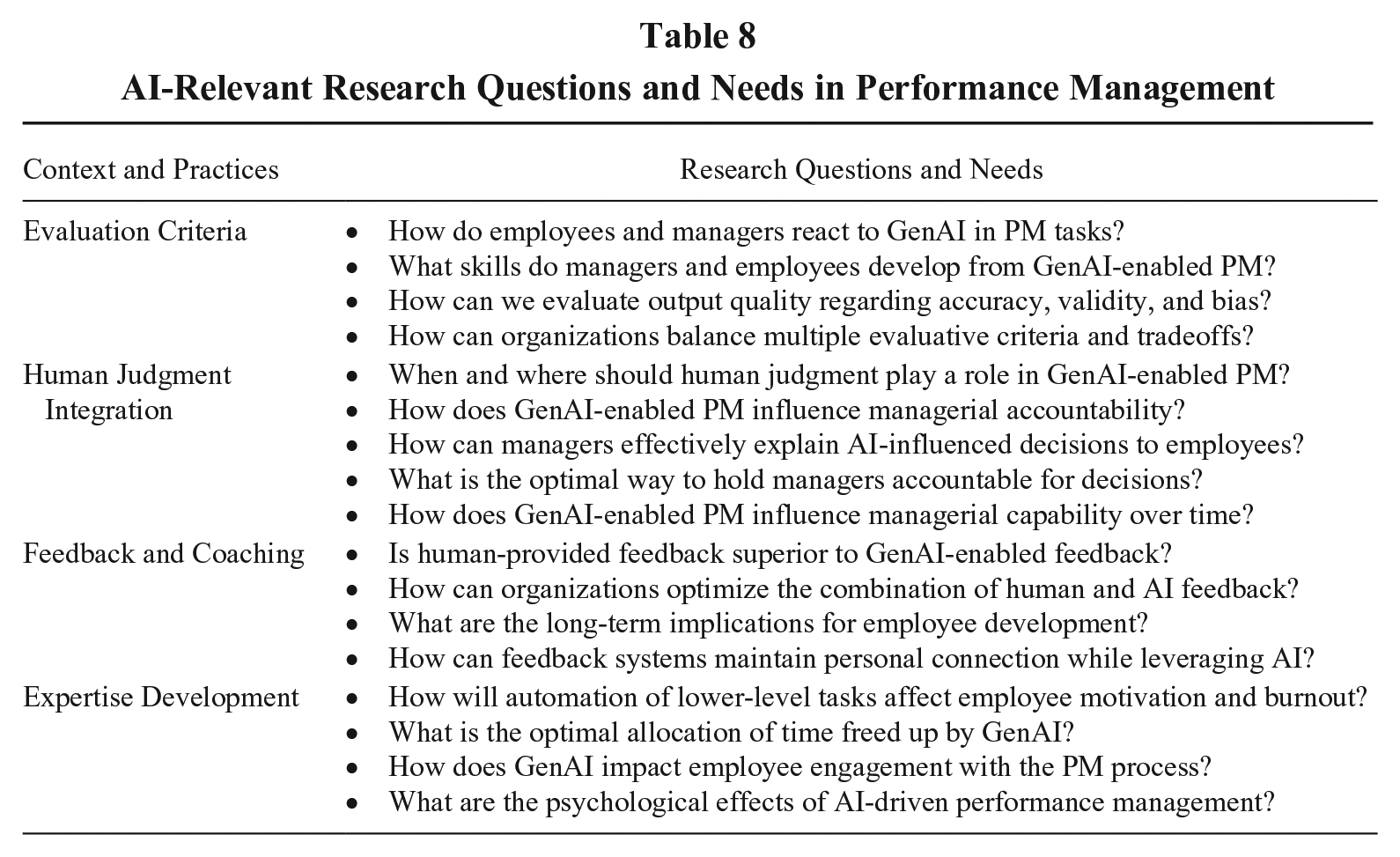

Our knowledge of PM suggests several areas that researchers should study to help practitioners with whether and how to capitalize on this opportunity (see summary of research agenda in Table 8). First, the goal of any effective PM system should be to help managers make the best decisions possible about their people, ideally with the least complexity possible (Effron & Ort, 2010). With GenAI, it appears (based on Summit examples) that PM data can be made available to managers via a single, user-friendly interface (e.g., GenAI can overlay all employee information systems, such that a manager can query anything about their people and have it instantly answered); this is exciting! Yet, even this single example illustrates the relevance and complexity of Theme #5 regarding the necessity of thinking about GenAI outcomes in a multidimensional way. Multiple evaluative criteria for PM will become more important as GenAI assumes responsibility for some of these processes. For example, PM researchers would argue that efficiency is not a sufficient criterion for evaluating the effectiveness of GenAI-driven PM, given all that PM supports. We also need to know how both employees and managers react to the use of GenAI in PM (Budhwar et al., 2023), as well as what they learn and what skills they develop (see model of PM evaluative criteria by Schleicher, Baumann, Sullivan, & Yim, 2019). The quality of the outputs/decisions coming from PM (e.g., ratings; developmental recommendations; potential, promotion, and compensation decisions) will need to be carefully evaluated for their accuracy, validity, potential biases, and ethicality (Theme #4). Research should be directed to uncovering likely trade-offs across criteria (including at different levels) when GenAI is used for PM (e.g., improvements in efficiency may come with an increased risk of bias). What trade-offs are we (should we be) comfortable with?

AI-Relevant Research Questions and Needs in Performance Management

Second, there is an important question of where does, and where should, human judgment play a role in GenAI-enabled PM tasks (Theme #4). In the case of PM, a “human in the loop” might mean that GenAI provides summary performance information and even recommendations around ratings, promotions, potential, and bonuses, but it is the human manager who actually makes and is held accountable for the decision. However, this does not have to be the only model. An interesting research question is what the quality of the decision looks like under different models (e.g., GenAI providing data and recommendations to managers vs. GenAI also making the decision on ratings or promotions). Also, if GenAI serves as data aggregator and recommender, and manager as decision-maker, what is the optimal way to hold managers accountable for their decisions (and to ensure they can explain them to employees), when they were not responsible for the upstream parts of the process? Another intriguing question is what this “outsourcing” of PM-related tasks to GenAI does, over time, to managerial capabilities, and how it might fundamentally change the role of the manager within PM (Boon & den Hartog, forthcoming).

Third, even if GenAI is used “just” to create the performance reports and to recommend ratings, it was noted at the Summit that this would still free up manager time to spend on “higher value” aspects of PM, like feedback and coaching. This argument assumes that feedback and coaching are something that managers can do well, yet there is ample evidence to suggest they cannot (e.g., Milner & Milner, 2018). Moreover, this raises the question of whether GenAI might not, in fact, be better at these elements of PM than are human managers. In terms of research, it must be asked: What do we know about the value that employees extract from coaching (e.g., role clarity, skill acquisition; Dahling, Taylor, Chau, & Dwight, 2016; Liu & Batt, 2010; Riordan et al., in press), and must this come from a human? More generally, we should not take as a foregone conclusion that these “higher-level” tasks are always going to exist for humans (despite arguments to the contrary); is it not at least possible that we might find, in subsequent evolutions of AI, that many of the higher-level tasks (e.g., providing feedback to or coaching direct reports, or interacting with customers) are also done equally well, or even better, by GenAI?

GenAI and Job and Work Design

Job and work design focuses on structuring, organizing, and defining the roles and responsibilities of employees to optimize work processes and enhance performance, satisfaction, and well-being. It involves both determining specific tasks and responsibilities that make up a job, and how these are done (job design), as well as broader issues around how work is structured and organized within the organization (work design; Grant, Fried, Parker, & Frese, 2010). Work design also considers the impact of technology and organizational changes on work processes and employee roles (Becker & Huselid, 2010), which, in recent years, has included the rise of knowledge work, increasing use of technology, and a growing emphasis on employee autonomy and flexibility. Undoubtedly, and as reflected in the themes from the Summit (especially Themes 1, 3, and 6), the GenAI revolution will fundamentally change the nature of work and jobs, bringing with it major implications for job and work design from both a practical (e.g., how can GenAI tools assist with job and work design) and a scholarly (i.e., what job/work design research questions need to be addressed to help facilitate the GenAI revolution) perspective.

Context: Practices, Opportunities, and Challenges

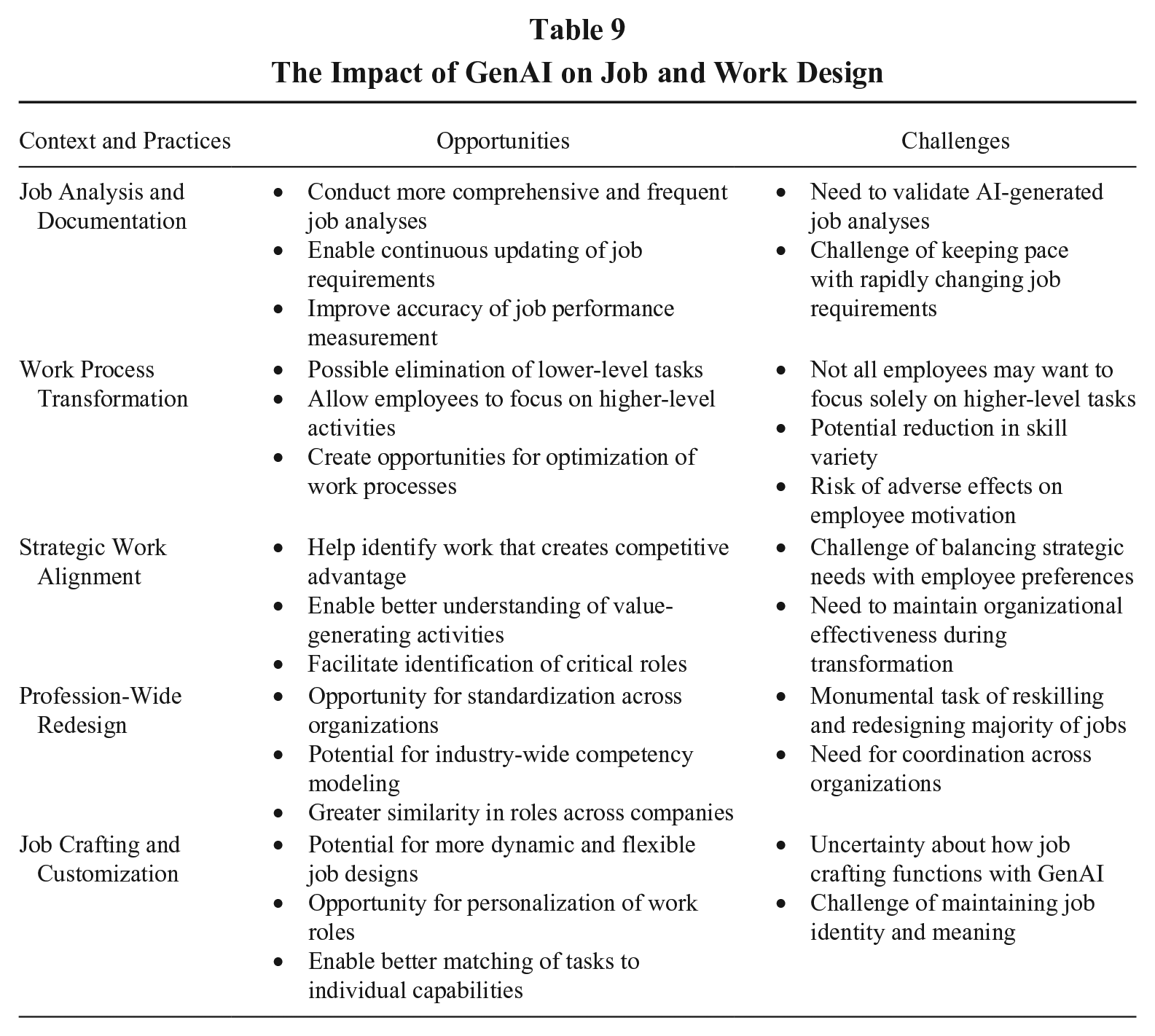

As shared via examples from Summit participants, using GenAI to improve job analysis represents some obvious “low hanging fruit.” Job analysis is foundational to recruitment and selection as well as many other areas of HR, but it is usually shortchanged in practice because of the resource demands to do it right. GenAI offers a means to conduct job analyses more comprehensively and frequently (allowing continuous updating). More accurate specification of job demands (via GenAI tools) offers the potential for both better TA and better PM. GenAI is also being used to enhance job performance measurement, fundamental to many aspects of job and work design interventions, and GenAI will continue to play a role in helping design high-quality work that benefits talent in the organization (Zhang & Parker, 2023). Table 9 provides a summary of several practices, opportunities, and challenges for GenAI in job and work design.

The Impact of GenAI on Job and Work Design

Research Questions and Needs

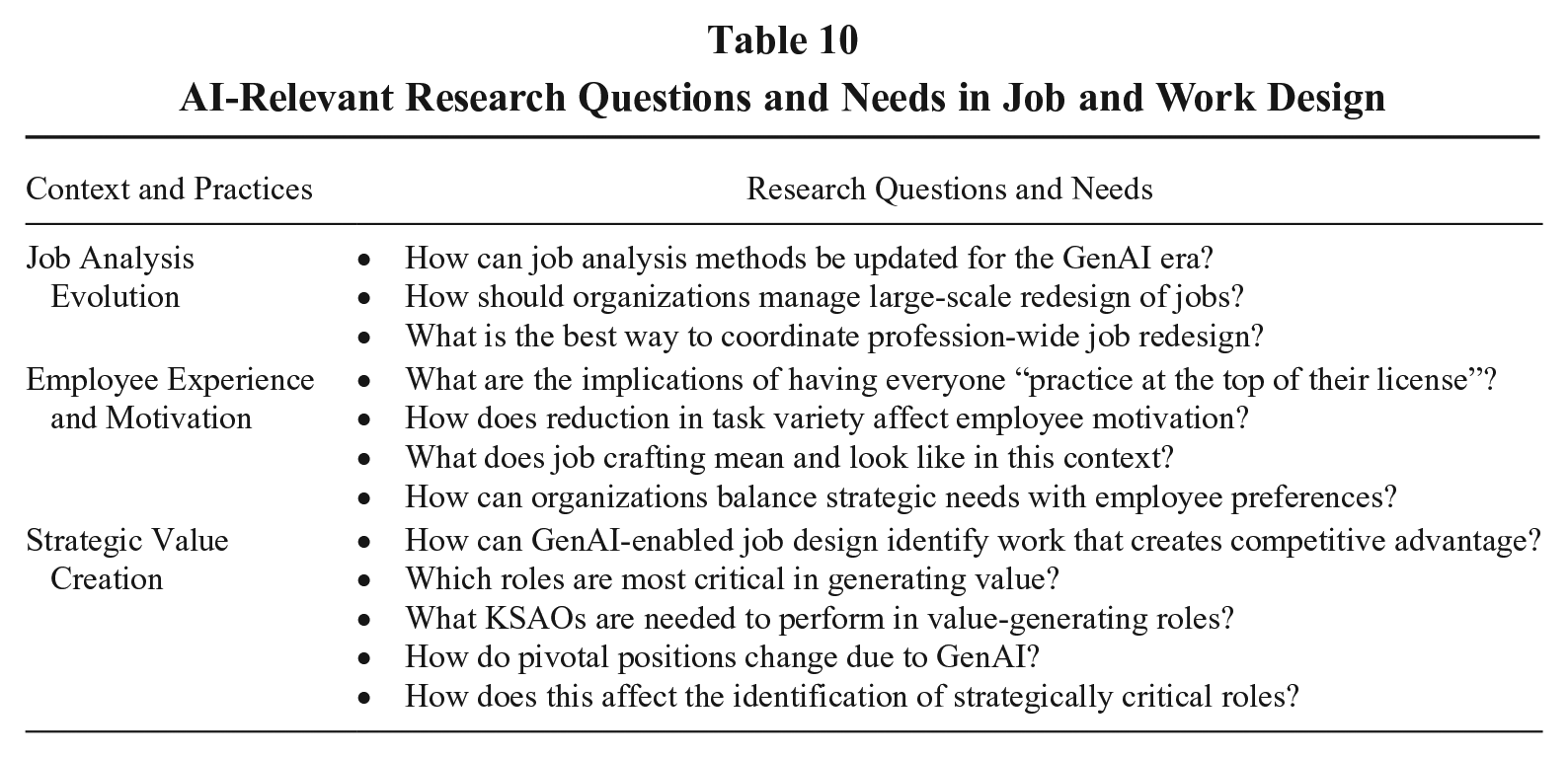

There are also myriad opportunities for future research, both in job design and in broader questions of work design (these are summarized in Table 10). In fact, Summit participants thought the areas of job and task design were especially pressing areas of research if our goal is to facilitate the GenAI revolution. For example, reskilling for and redesigning a majority of jobs (discussed as a likely need in the wake of GenAI) is a monumental task. However, as mused at the Summit, it is probably not a task that needs to proceed organization by organization, but rather profession by profession, and job role by job role. In other words, with the GenAI revolution, there is likely to be more similarity in roles across companies than seen with extant competency modeling. That makes academics uniquely situated to lead these efforts. But we need to understand how to manage this (e.g., O*NET?), and we may need to both dust off and rethink our old job analysis and job design skills.

AI-Relevant Research Questions and Needs in Job and Work Design

An intriguing question asked by some of the Summit participants was what the implications are of having everyone “practice at the top of their license” (to borrow a phrase from the nursing profession). That is, stripping employees’ work of the lower-level transactional tasks (which are delegated to GenAI) and leaving only the higher-level activities raises the question: Does everyone want to spend more time on the higher-level activities (Theme #3)? Would this reduce the skill variety (Hackman & Oldham, 1976) of a position and potentially have adverse consequences in terms of employee motivation (or burnout), particularly among those who have a lower need for personal growth and development? Relatedly, what does job crafting (Wrzesniewski & Dutton, 2001) mean and look like in this context?

Viewing job and work design in the wake of GenAI from a strategic perspective (see Becker & Huselid [2010] for a discussion of integrating job design and strategic HRM) reveals additional areas for inquiry, ones with likely immediate practical application. For example, there is a need to identify where GenAI adds value to the work that creates competitive advantage. Research should also consider how GenAI-enabled job/work design can be used to identify the types of work that contribute most to firm competitive advantage, which roles are most critical in generating value, and which KSAOs are needed to perform in those roles.

Relatedly, and in line with theorizing in strategic HRM, the nature of the job is likely to bear significantly on the pressing question on the minds of HR leaders regarding how GenAI will affect performance. Insights from “pivotal positions” (i.e., those jobs which are central to organizational strategy and where we see the greatest variability in the quality or quantity of output when the quality or quantity of employees in those roles increases) offer a useful lens to think about the potential differential value generation of GenAI in particular jobs (Collings, Mellahi, & Cascio, 2019). As the pace of development in GenAI is currently so rapid, we will likely see a high degree of volatility. Dell’Acqua and colleagues’ (2023) concept of the “jagged technological frontier,” where tasks that appear to be of similar difficulty may be either performed better or worse by GenAI, nicely captures the current uncertainty around the impact of GenAI on jobs. They conclude that understanding how GenAI impacts work will require careful analysis of how human interaction with AI will evolve based on where tasks sit on this frontier, and how the frontier will change over time. To gain better insight into how pivotal jobs change due to GenAI, research should focus on questions such as the following: Which tasks can be replaced by GenAI, and which are better done by humans? How does GenAI change which tasks and jobs are of most strategic value to the company? What does this imply for the identification of pivotal positions?

GenAI and Talent Management

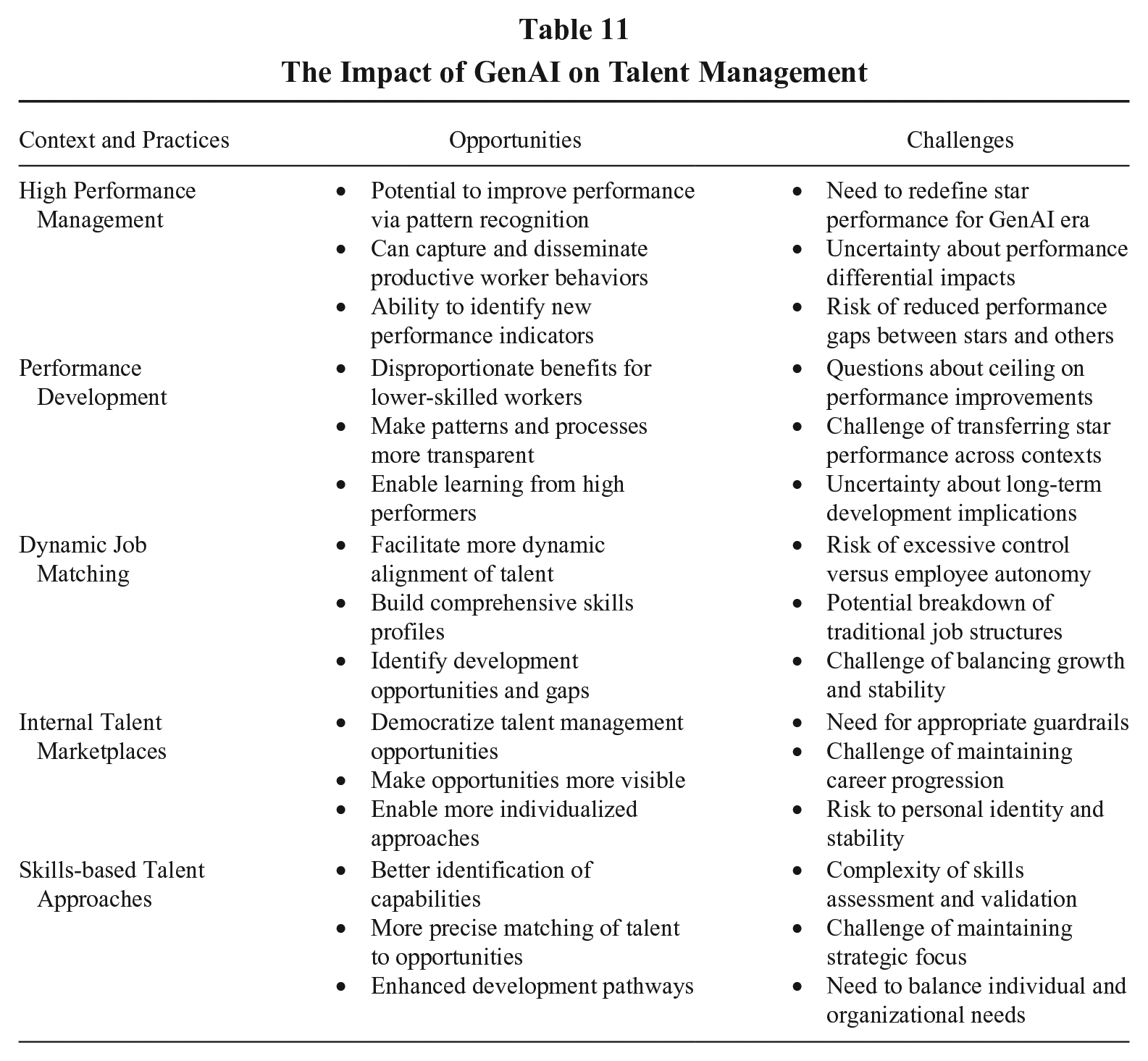

Talent management (TM) can be defined as the attraction, selection, development, and retention of the highest-performing employees in a firm’s pivotal positions (Collings et al., 2019). Thus, an organization’s high performing and high potential employees are critical to the conceptualization and operationalization of TM, and TM represents a differentiated approach to managing those employees. As suggested by trends and use cases shared at the Summit (especially Themes 1, 2, 3, and 5), GenAI is already impacting TM in a few ways and is likely to continue to challenge some of our assumptions and practices around what talent is and how talent should be identified and deployed in organizations, opening important directions for research (see Tables 11 and 12 for summaries). Here we focus on the current context and related research needs in two main areas: (a) how GenAI is likely to impact employee performance and challenge assumptions about antecedents of high performance and the differential performance between stars and other employees; and (b) how GenAI is likely to impact job matching, with implications for how talent is identified and deployed in organizations.

The Impact of GenAI on Talent Management

AI-Relevant Research Questions and Needs in Talent Management

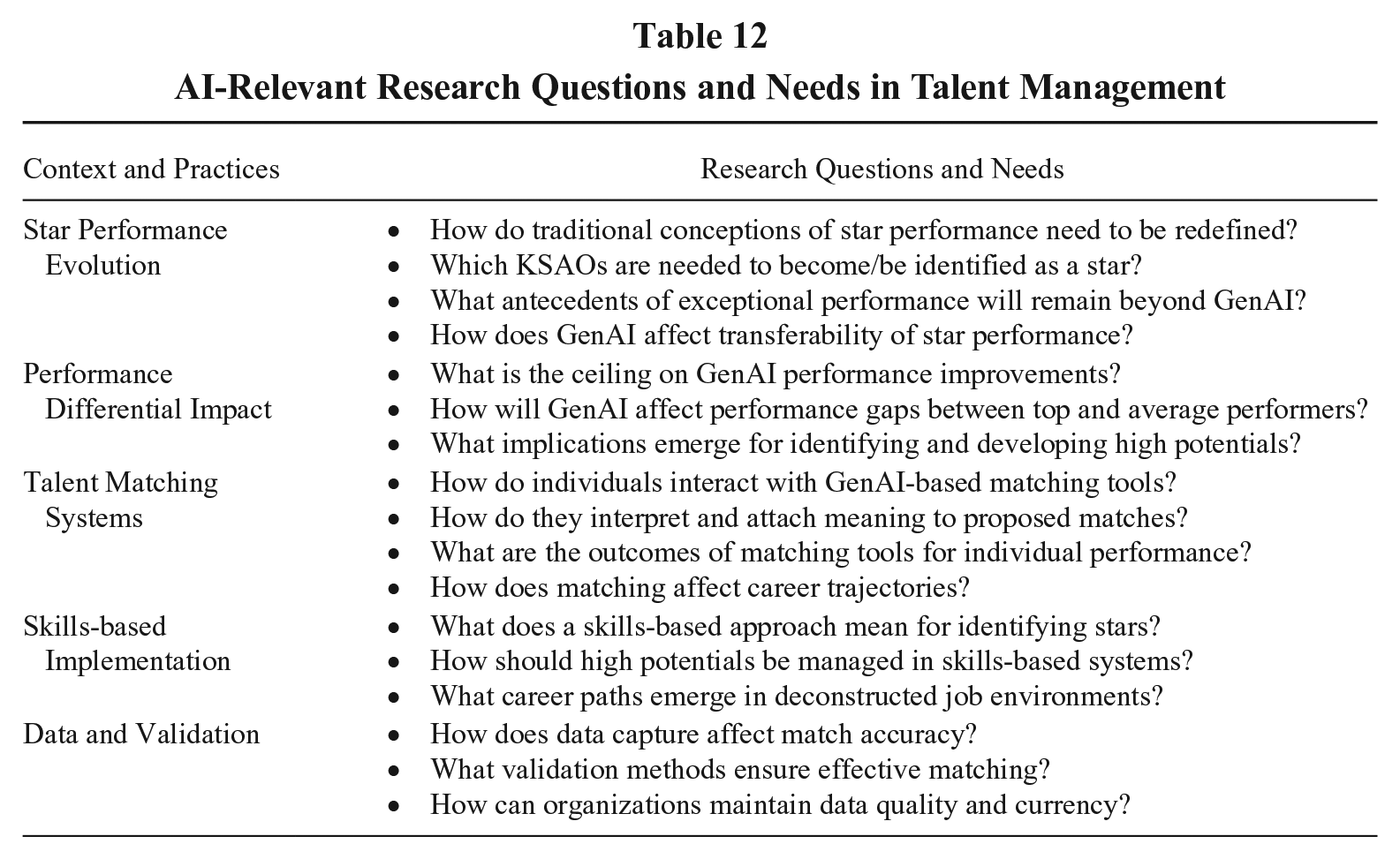

The Impact of GenAI on (High) Performance: Context and Research Needs

GenAI has the potential to significantly disrupt assumptions around the nature of exceptional performance in organizations (Theme #1). While some argue that much of the disruption emerging from AI will be to lower-skilled work, there is increasing recognition that GenAI is likely to be transformative at all levels. It may require re-evaluation of taken-for-granted conductors and insulators of star performance (Aguinis, O’Boyle, Gonzalez-Mulé, & Joo, 2016) and the boundary conditions of differential performance at individual and job levels (Kehoe, Collings, & Cascio, 2023). As Brynjolfsson, Li, and Raymond highlight, a distinguishing characteristic of GenAI is that it can learn to perform tasks in the absence of instructions, “including tasks requiring tacit knowledge that could previously only be gained through lived experience” (2023: 2). Eloundou, Manning, Mishkin, and Rock (2023) categorize emerging GenAI tools as an entirely new category of automation, with abilities intersecting with the most educated, most creative, and most highly paid workers; thus, the impacts of GenAI are expected to be the greatest on those workers (Dell’Acqua et al., 2023). This means that star performers, those who display the highest levels of performance often combined with high and sustained visibility and social capital (Call et al., 2015), are likely to be very affected by GenAI. To understand this impact, research should explore how traditional conceptions of star performance need to be redefined due to the influence of GenAI. For example, employees may need different combinations of KSAOs to be(come) identified as a star or a high potential; some KSAOs may reduce in importance while new ones may emerge as significant. A key question is how this might change the indicators of potential that organizations should consider in their high potential programs.

GenAI may also narrow performance differentials between stars and non-stars, owing to its capacity to capture and disseminate the most productive workers’ behavior patterns (Brynjolfsson et al., 2023). There might be large benefits for non-star performers if GenAI makes patterns and processes more transparent, enabling learning and development (Theme #2). For example, studies across customer service agents (Brynjolfsson, Li, & Raymond, 2025), law examinations (Choi & Schwarcz, in press), and mid-level professional writing (Noy & Zhang, 2023) show performance benefits of GenAI disproportionately accruing to lower-skilled or less experienced workers. Indeed, Dell’Acqua and colleagues’ (2023) research on realistic, complex, and knowledge-intensive tasks among business consultants confirmed the differential performance benefits of GenAI: those below the average performance threshold increased performance by 43% and those above it increased by 17%. Choi and Schwarcz (in press: 6) conclude that “AI assistance will most benefit those at the bottom of the skill distribution, potentially acting as an equalizing force in a notoriously unequal profession” (i.e., law). If GenAI significantly alters the distribution of individual performance, narrowing the gap between the best and the rest, this raises questions for TM. For example, what antecedents of exceptional performance will remain beyond the reach of GenAI? What is the ceiling on the performance improvements from GenAI? How does GenAI affect the transferability of star performance from one organizational context to others, given the challenges of transferring star performance across contexts (Kehoe et al., 2023)?
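
To make the "narrowing" argument concrete, the following minimal sketch applies the 43% and 17% gains reported by Dell’Acqua and colleagues (2023) to two hypothetical baseline scores. The baseline values, the function name `gap_after_genai`, and the assumption that each percentage applies multiplicatively to a group's own baseline are ours, introduced only for illustration; they are not data or methods from the study.

```python
# Illustrative only: baseline scores are hypothetical, not data from the study.
LOW_GAIN = 0.43   # gain reported for below-average performers
HIGH_GAIN = 0.17  # gain reported for above-average performers


def gap_after_genai(low_baseline: float, high_baseline: float) -> dict:
    """Compare the high/low performance gap before and after the reported gains.

    Assumes each gain applies multiplicatively to that group's own baseline.
    """
    low_after = low_baseline * (1 + LOW_GAIN)
    high_after = high_baseline * (1 + HIGH_GAIN)
    return {
        "ratio_before": high_baseline / low_baseline,
        "ratio_after": high_after / low_after,
        "gap_before": high_baseline - low_baseline,
        "gap_after": high_after - low_after,
    }


if __name__ == "__main__":
    # Hypothetical scores: a non-star at 50 and a star at 100.
    for name, value in gap_after_genai(50.0, 100.0).items():
        print(f"{name}: {value:.3f}")
```

Under these assumptions, the ratio of high to low performance always shrinks by the factor 1.17/1.43 (roughly 0.82), whatever the baselines. Whether the absolute gap also shrinks depends on how far apart the baselines are, which is one reason the question of a performance ceiling matters for TM research.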

The Impact of AI on Job Matching: Context and Research Needs

Job matching is “the process by which individuals are dynamically aligned with organizations and the situations (roles, jobs, tasks, etc.) within them” (Weller, Hymer, Nyberg, & Ebert, 2019: 189), and GenAI will likely facilitate more dynamic matching (Jooss, Collings, McMackin, & Dickmann, 2024). This contrasts with traditional approaches to TM, characterized by a more “static” and “stock” perspective on human capital (Collings et al., 2019). The emerging “flow” or “process” perspective (Burton-Jones & Spender, 2011) provides for a more dynamic approach to managing talent (Ployhart, Weekley, & Ramsey, 2009). Internal talent marketplaces, for example, build skills profiles based on inferences from employees’ education and work experience to identify opportunities and/or development gaps, as well as how these may be bridged through project/job assignments, mentoring, or training (Jooss et al., 2024). Such tools are democratizing TM by making opportunities more visible to a wider group of employees, while also offering a more individualized approach (Theme #3). Posting job roles to make them more visible, rather than slotting preordained candidates into such roles without an open call, has been shown to lead to better outcomes for organizations and individuals (Keller, 2018), and it seems likely that GenAI can facilitate these processes.

At the same time, the wider trend of job deconstruction (which includes internal talent marketplaces) has been subject to increasing critiques. For example, the specific role descriptions and clear criteria for evaluation of deconstructed jobs combined with performance data can lead to greater control rather than employee autonomy; to the potential breakdown of traditional job structures that have been central to personal identity, stability, and career progression; and to an (im)balance between individual growth and stability in these systems (Rogiers & Collings, 2024). These authors argue that the key to navigating these tensions is putting in place guardrails to ensure that neither extreme becomes dominant to the detriment of the other. These complexities suggest questions for future research on the role of GenAI in matching in TM. For example, how do individuals interact with these tools and how do they interpret and attach meaning to the GenAI-proposed matches? What does a skills-based approach mean for identifying stars and high potentials and for managing and developing them? What might career trajectories look like for high potentials and stars in these emerging contexts?

GenAI and Compensation and Benefits

Context: Practices, Opportunities, and Challenges

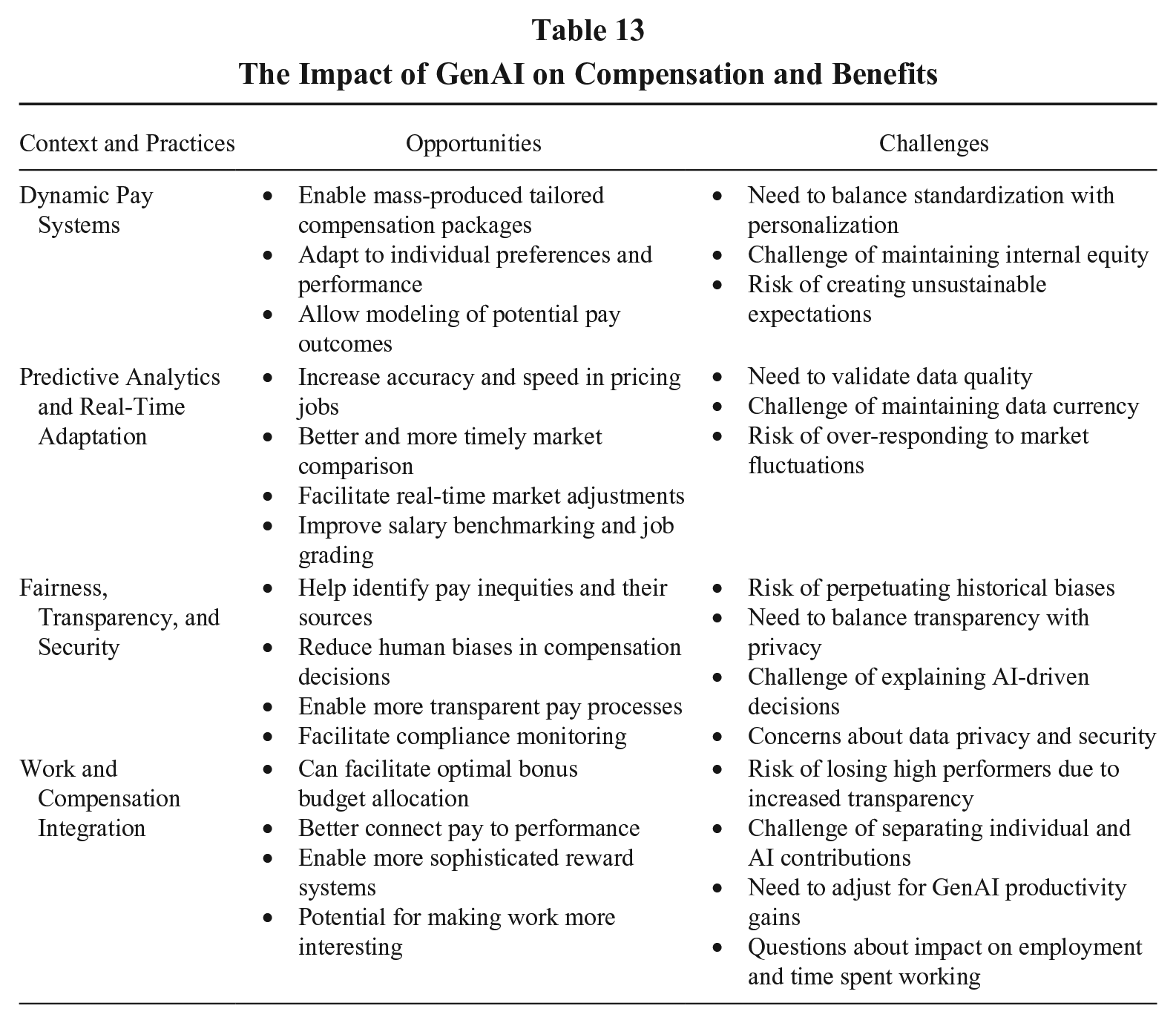

Compensation and benefits include “all forms of financial returns (e.g., salary, bonus, stock) and tangible services and benefits employees receive as part of an employment relationship” (Gerhart, 2023: 14). Related to Theme #2—that companies will compete on the quality and quantity of data—GenAI is likely to increase accuracy, efficiency, and speed in pricing jobs and employees, especially in dynamic markets (see Table 13 for a summary of practices, opportunities, and challenges). Efficiency increases arise from GenAI’s capacity to provide better and more timely information to applicants, employees, and employers, facilitating quicker, more accurate matches, and reducing mismatches. For example, Summit participants shared that GenAI can facilitate optimal allocations of bonus budgets based on a comprehensive analysis of internal and external data (i.e., performance, market conditions, and internal equity). We have already seen increased information availability due to technology (e.g., job search and networking websites) and regulatory changes like pay disclosure/transparency laws (Brown, Nyberg, Weller, & Strizver, 2022). Increases in information exchange via GenAI could further reduce labor market friction, creating better matches and value (Gerhart & Feng, 2021).

The Impact of GenAI on Compensation and Benefits

Whether this greater information and transparency that can accompany GenAI-based compensation will increase procedural fairness perceptions (Theme #4) depends on pay system quality (Alterman, Bamberger, Wang, Koopmann, Belogolovsky, & Shi, 2021). If GenAI helps explain the relationship between pay and performance in organizations where they are better connected, it could lead, in part via higher perceived fairness, to higher individual and organizational performance and employee pay satisfaction (Trevor, Reilly, & Gerhart, 2012). In the face of high employee mobility, as well as reduced friction in the labor market due to better information, sorting effects could also become stronger, meaning an increased risk to employers of losing high performers when they perceive their pay would better reflect their performance elsewhere (e.g., Gerhart, 2023; Nyberg, 2010).

An overarching compensation-related issue will be a new twist on an old challenge: how to separate and evaluate a worker’s contributions in the face of interdependence, but now the interdependence is with GenAI rather than other (human) workers. In areas where GenAI capabilities are standardized across organizations, this will likely be less of a challenge than in jobs where GenAI is either more tailored to the organization or where the organization has developed a nonstandard way to leverage GenAI.

Research Questions and Needs

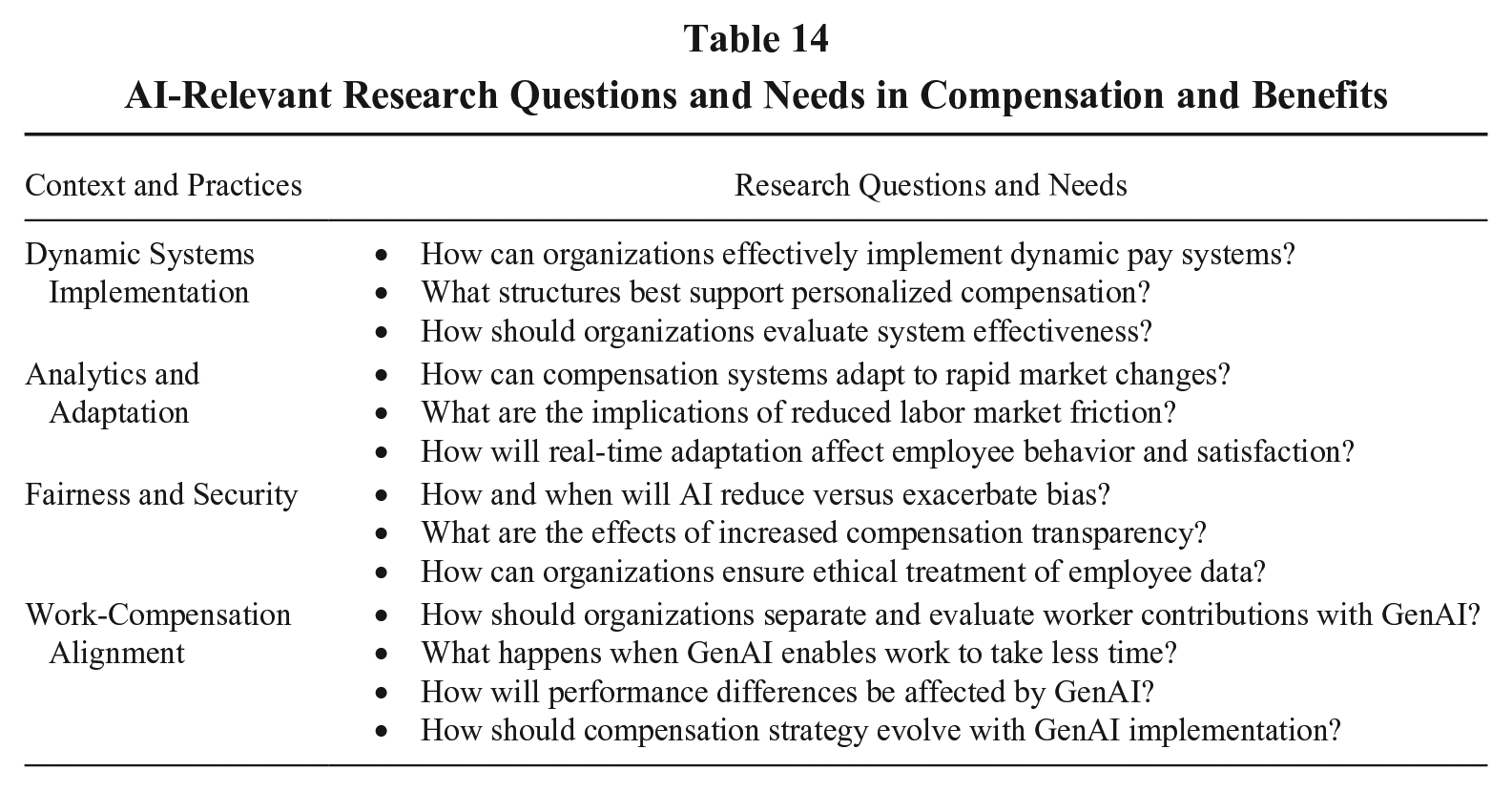

In Table 14, we identify four broad areas where future research should examine how, when, where, and why GenAI may influence compensation (especially related to Themes 1, 2, 4, and 6). We highlight a few of these here.

AI-Relevant Research Questions and Needs in Compensation and Benefits

GenAI may enable dynamic pay systems through mass-produced tailored compensation packages based on individual preferences and performance. Previous individualization attempts (e.g., cafeteria-style benefits) have had mixed results. However, if employees can access accurate and trusted tools that explain the link between pay and performance and can model potential pay outcomes under various scenarios in an understandable format, such systems could make tailored approaches viable (e.g., Abdulsalam, Maltarich, Nyberg, Reilly, & Martin, 2021; Maltarich, Nyberg, Reilly, Abdulsalam, & Martin, 2017). This could revolutionize compensation strategy, and it suggests several research questions. Regarding real-time adaptation, GenAI may enable continuous adjustments to compensation strategies based on the external market and internal performance, driving greater relevance to evolving needs. This opens new areas of investigation, and research will need to examine and explain real differences resulting from such compensation changes, differentiating them from Hawthorne effects.

Questions around how organizations can and should integrate GenAI and human decision-making in compensation raise concerns of fairness, transparency, and security (Theme #4). Will—or, more precisely, when will—GenAI insights into compensation decisions require human judgment? Summit attendees highlighted the ethical imperative to “keep humans in the loop” regarding decision-making responsibility, but how long will this be necessary/helpful when it comes to compensation? Regarding work’s relationship with compensation, the efficiency gains from GenAI suggest that work could take less time. Pay is often based on an expected number of hours worked (e.g., a 40-hour work week). What happens if fewer employees produce more in less time? How compensation could and should account for changes in time spent working is a challenging issue with societal implications that researchers should address.

Finally, we identify a more general research need in this area. As Summit participants reminded us, GenAI implementation must start with the organization’s strategy, including its strategy for compensation (Nyberg et al., 2023; Theme #6). It will be tempting to reach quick conclusions about GenAI’s impact, but we must remain grounded in theory and empirics to avoid becoming overly influenced by fads that lead to flawed conclusions, including and perhaps especially in the area of compensation. In reviewing trends in pay for performance research, Fulmer, Gerhart, and Kim (2023) highlight the importance of theory and empirical research to understand the implications of compensation strategies; this will be both more possible and more important as organizations embrace GenAI. Reliance on GenAI-driven compensation decisions could inadvertently introduce biases or overlook nuanced aspects of human judgment. Thus, following the perspective of Fulmer et al. (2023), we advocate here for a research agenda that explores the innovative applications of GenAI in compensation while also critically examining its alignment with and impact on established compensation theories.

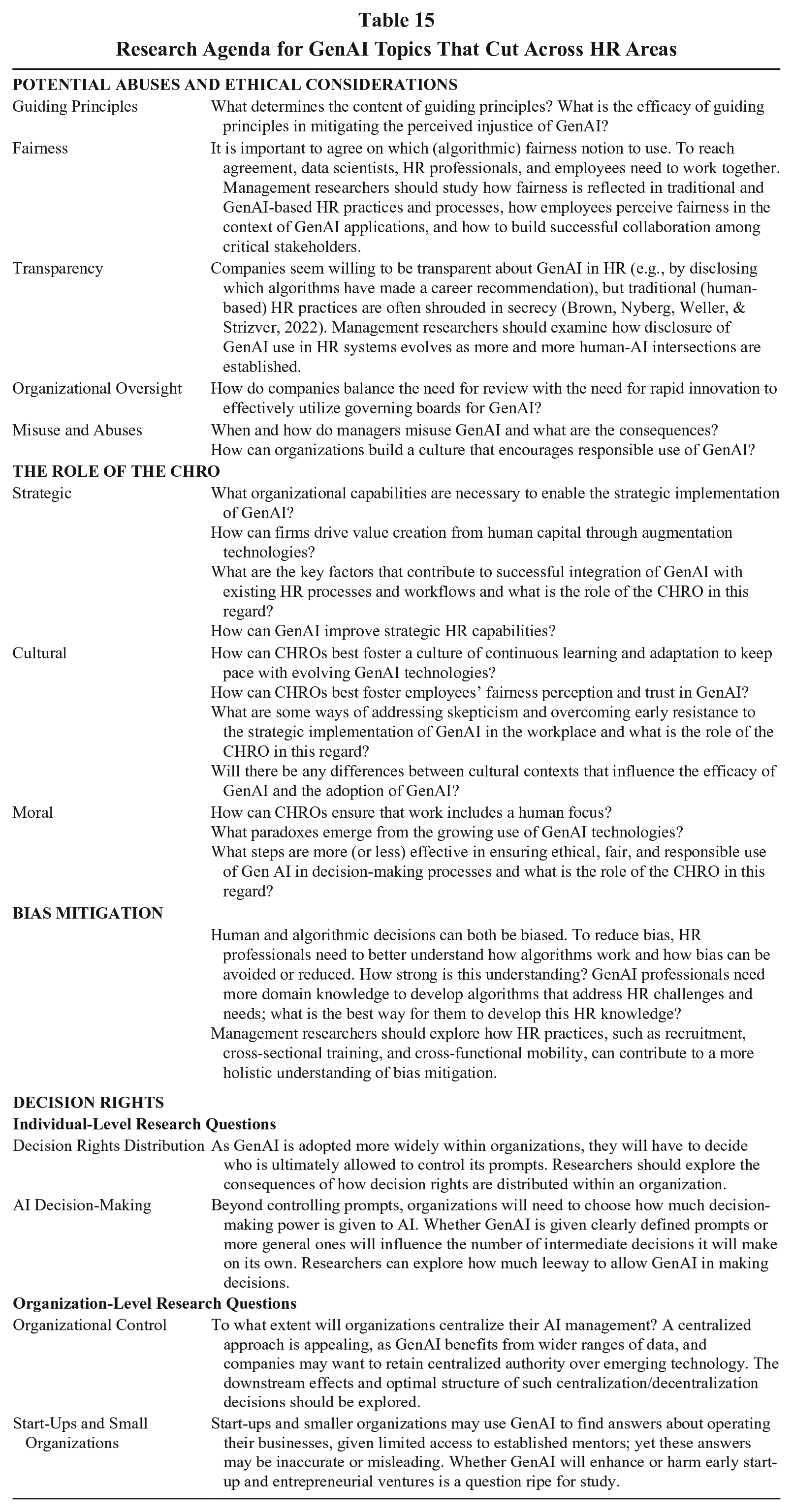

Other Research Needs at the GenAI-HR Interface

The preceding sections discussed research questions tied to Summit themes for specific HR functional areas. There are also pressing research needs that cut across HR areas, and we summarize these in this section (and in Table 15). This includes research related to ethics and the role of CHROs (previously discussed under Themes), as well as two other data science topics that have implications across HR areas: bias mitigation and decision rights, discussed next.

Research Agenda for GenAI Topics That Cut Across HR Areas

Bias Mitigation

Bias is a critical issue in ensuring that GenAI-based solutions address the sensitivities and ethical challenges associated with many human-based decisions (Köchling & Wehner, 2020). An example is Amazon’s use of AI in hiring that came to public attention in 2018, when it was reported that they had to abandon an AI tool because it showed bias against female candidates (Dastin, 2018). The tool had been designed to review candidates’ resumes (submitted to the company over a 10-year period) and rate them, like how shoppers rate products on Amazon. However, because the tech industry, including Amazon, is male dominated, the AI learned to favor male candidates. For example, it would penalize resumes that included the word “women’s,” as in “women’s chess club captain,” and downgrade graduates of all-women colleges. Amazon’s experience underscores challenges of using GenAI in HR and highlights the need to continuously monitor and update GenAI systems to ensure they are not perpetuating biases.

Effective practice and impactful research in this area require understanding that bias in GenAI occurs for multiple reasons (De-Arteaga, Feuerriegel, & Saar-Tsechansky, 2022; Feuerriegel, Dolata, & Schwabe, 2020). “Data bias” refers to systematic discrimination that can be present in historical data used to train GenAI models, which often reflects past inequalities; if past hiring decisions were biased against certain groups, the GenAI system might learn to replicate these biases. “Algorithmic bias” arises when AI models are biased because of the way they are designed or trained. For example, AI models typically rely on correlations and can learn spurious patterns, so even if gender is removed from a resume, a model may still infer it from other features such as hobbies or maternity leave. Similarly, many AI algorithms are trained to maximize overall accuracy, which in practice favors accuracy for the group that dominates the training data at the cost of more errors for underrepresented groups. As a result, if the training dataset includes fewer women than men, the AI model is prone to learn correct predictions for men while making more errors for women.

Significant attention has been devoted to addressing bias in AI, and new metrics have been developed for measuring bias (De-Arteaga et al., 2022). These metrics can help in identifying situations where risk of bias exists (i.e., “auditing” existing AI systems). In addition, algorithmic approaches have been developed to mitigate bias once it has been detected. However, none of these approaches is a silver bullet; rather, addressing GenAI bias in HR use cases requires a multifaceted approach, combining technical, organizational, and ethical considerations. To this end, and echoing Summit Theme #5, we emphasize the importance of stakeholder engagement, transparency and explainability, regular auditing, and monitoring throughout the GenAI in HR value chain. Bias mitigation must become an area of research inquiry among management and HR scholars, and not be confined solely to data scientists. Researchers should explore how multiple HR practices can contribute to a more holistic understanding of bias mitigation (see Table 15).
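
As one hedged illustration of what "auditing" an existing system can involve, the sketch below computes two commonly used group-level metrics from a small, entirely hypothetical set of screening outcomes: the selection rate per group and the false negative rate per group (qualified candidates the model failed to recommend). The records, the function names `audit` and `impact_ratio`, and the choice of metrics are our assumptions for illustration; they are not the specific metrics reviewed by De-Arteaga et al. (2022).

```python
from collections import defaultdict

# Hypothetical audit records: (group, model_recommended, actually_qualified).
# These values are invented for illustration and come from no real system.
RECORDS = [
    ("men", True, True), ("men", True, True), ("men", True, False),
    ("men", False, True), ("men", False, False),
    ("women", True, True), ("women", False, True), ("women", False, True),
    ("women", False, False), ("women", False, False),
]


def audit(records):
    """Return selection rate and false negative rate for each group."""
    counts = defaultdict(lambda: {"n": 0, "selected": 0, "qualified": 0, "missed": 0})
    for group, recommended, qualified in records:
        c = counts[group]
        c["n"] += 1
        c["selected"] += int(recommended)
        if qualified:
            c["qualified"] += 1
            c["missed"] += int(not recommended)
    return {
        group: {
            "selection_rate": c["selected"] / c["n"],
            # Share of qualified candidates the model did not recommend.
            "false_negative_rate": (
                c["missed"] / c["qualified"] if c["qualified"] else None
            ),
        }
        for group, c in counts.items()
    }


def impact_ratio(metrics):
    """Lowest group selection rate divided by the highest (None if undefined)."""
    rates = [m["selection_rate"] for m in metrics.values()]
    return min(rates) / max(rates) if max(rates) > 0 else None


if __name__ == "__main__":
    metrics = audit(RECORDS)
    print(metrics)
    print("impact ratio:", impact_ratio(metrics))
```

In U.S. selection practice, an impact ratio below 0.8 is often treated as a rule-of-thumb signal of possible adverse impact (the "four-fifths rule"); we mention it only as an example of how such a metric might be read, not as a threshold endorsed by the sources cited here. A low ratio or unequal error rates flag where to look, and the organizational and ethical judgment described above is still required to interpret them.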

Decision Rights