Abstract

The detrimental influence of cognitive biases on decision-making and organizational performance is well established in management research. However, less attention has been given to bias mitigation interventions for improving organizational decisions. Drawing from the judgment and decision-making (JDM) literature, this paper offers a clear conceptualization of two approaches that mitigate bias via distinct cognitive mechanisms—debiasing and choice architecture—and presents a comprehensive integrative review of interventions tested experimentally within each approach. Observing a lack of comparative studies, we propose a novel framework that lays the foundation for future empirical research in bias mitigation. This framework identifies decision, organizational, and individual-level factors that are proposed to moderate the effectiveness of bias mitigation approaches across different contexts and can guide organizations in selecting the most suitable approach. By bridging JDM and management research, we offer a comprehensive research agenda and guidelines to select the most suitable evidence-based approach for improving decision-making processes and, ultimately, organizational performance.

Organizational performance is tightly linked with the quality of the judgments and decisions made by individuals and teams (Heath, Larrick, & Klayman, 1998; Milkman, Chugh, & Bazerman, 2009). However, decision-makers often face constraints of time and cognitive resources (Keren & Teigen, 2004; Simon, 1978) that make them susceptible to cognitive errors and biases. These biases can negatively impact outcomes across various organizational functions, causing detrimental consequences such as excessive market entry (Cain, Moore, & Haran, 2015; Camerer & Lovallo, 1999), startup failure (Cassar & Craig, 2009), discrimination in hiring and promotion practices (Krieger & Fiske, 2006; Nagtegaal, Tummers, Noordegraaf, & Bekkers, 2020), and suboptimal capital allocations (Bardolet, Fox, & Lovallo, 2011).

While the study of judgment errors and cognitive biases is now firmly established in management research (e.g., Das & Teng, 1999), most of this literature focuses on biases affecting a specific organizational domain (e.g., negotiation, Caputo, 2013; Schweinsberg, Thau, & Pillutla, 2022; entrepreneurship, Shepherd, Williams, & Patzelt, 2015; Thomas, 2018; environmental transformations, Acciarini, Brunetta, & Boccardelli, 2021; and employee selection, Moore & Flynn, 2008), or on the antecedents and consequences of specific biases (e.g., framing bias, Cornelissen & Werner, 2014; overconfidence bias, Chen, Crossland, & Luo, 2015; Heavey, Simsek, Fox, & Hersel, 2022; Russo & Schoemaker, 1992; Simon & Houghton, 2003; Tang, Qian, Chen, & Shen, 2015).

Relative to the demonstration of these biases and their impact on organizations, less attention has been devoted to investigating interventions that can improve the quality of the judgments and decisions made by individuals and teams and, as a result, enhance organizational performance (Heath et al., 1998; Milkman et al., 2009). This paper aims to redirect the attention of management scholars studying decision-making processes beyond the assessment of cognitive biases in organizations toward the rigorous study of approaches to mitigate these biases.

Drawing from the field of judgment and decision-making (JDM), we offer management researchers a clear conceptualization of two distinct approaches that have been shown empirically to mitigate bias in decision-making: debiasing and choice architecture. The distinction is necessary because the two approaches follow different pathways for mitigating cognitive biases. Debiasing operates by directly equipping decision-makers with bias awareness, training, or tools to recognize and counter the influence of biases in their judgment and decision-making processes (Fischhoff, 1982). In contrast, choice architecture focuses on changing the structure of the decision problem or the information pertaining to the decision to facilitate better decision outcomes (Soll, Milkman, & Payne, 2016). Using this conceptualization, we conduct an extensive integrative review (Elsbach & van Knippenberg, 2020) of bias mitigation interventions tested experimentally within each approach.

This review makes several contributions to the management literature, especially in the fields of managerial cognition and organizational behavior, as well as to the field of JDM. First, we make management scholars aware of bias mitigation approaches that have not yet been tested for their relative effectiveness in organizations. Second, we introduce a novel framework that lays the foundation for this comparative research and identifies decision, organizational, and individual-level factors that could moderate the suitability and effectiveness of bias mitigation interventions in different organizational settings. This framework addresses earlier calls for systematization of interventions to repair bias in organizations (Heath et al., 1998) and can serve as a practical tool for organizations seeking to tailor their bias mitigation strategies to specific contexts.

Additionally, we aim to promote interdisciplinary dialogue and collaboration with and among JDM scholars from diverse research traditions. Both bias mitigation approaches have been extensively studied in the JDM literature (for general overviews, see Larrick, 2004; Milkman et al., 2009; Soll et al., 2016; for reviews focusing on debiasing, see Arkes, 1991; Scopelliti, 2022; for reviews focusing on choice architecture, see Münscher, Vetter, & Scheuerle, 2016; Szaszi, Palinkas, Palfi, Szollosi, & Aczel, 2018). However, previous JDM research has almost exclusively tested debiasing and choice architecture interventions separately. Only a limited number of studies have combined them to identify potential synergies, which presents an opportunity for future research. We also highlight the need for extending research on bias mitigation to groups and for running field tests of debiasing interventions. By bridging JDM and management research, this review offers scholars a comprehensive research agenda and practitioners a set of evidence-based tools to enhance the quality of organizational decision-making processes and a structured approach to implement bias mitigation interventions in organizations.

Scope of the Integrative Review

This review focuses on interventions designed to reduce the incidence of judgment errors or cognitive biases in the decision-making process. We included studies testing interventions to improve the quality of judgments and decisions using experimental research methods that allow the establishment of causality. In response to the need for clearer nomenclature in the study of choice architecture (see Szaszi et al., 2018) and in contrast to earlier reviews that used the term “debiasing” to denote any interventions aiming to reduce bias in general (e.g., as in Soll et al., 2016), we categorized studies as debiasing or choice architecture according to the mechanism explaining how the intervention operates, irrespective of the labels used by the authors. According to our definitions, an intervention was categorized as debiasing if it aimed to reduce cognitive errors or biases “in the decision-maker’s mind” (see Fischhoff, 1982), requiring active involvement from the decision-makers targeted. Conversely, an intervention was categorized as choice architecture if it required a choice architect (other than the decision-maker) to identify the less biased option and to modify the decision environment to facilitate its selection without any additional effort from the decision-makers targeted. We also identified a few interventions that required active cognitive engagement from decision-makers while simultaneously modifying the decision environment, combining debiasing and choice architecture in a single intervention. We consider these as examples of a novel “dual” approach to bias mitigation.
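The mechanism-based categorization rule described above can be restated as a short sketch. It is an illustration only: the function name, the two Boolean inputs, and the "out of scope" label are hypothetical simplifications, and the coding procedure actually used is the one documented in the Supplemental Material (Table S0).

```python
def classify_intervention(requires_engagement: bool, modifies_environment: bool) -> str:
    """Categorize an intervention by its mechanism, not by the authors' label.

    requires_engagement: the targeted decision-makers must be actively involved
        (hypothetical flag standing in for the debiasing mechanism).
    modifies_environment: a choice architect changes the decision environment
        (hypothetical flag standing in for the choice architecture mechanism).
    """
    if requires_engagement and modifies_environment:
        return "dual"
    if requires_engagement:
        return "debiasing"
    if modifies_environment:
        return "choice architecture"
    # Neither mechanism is present; this label is an assumption of the sketch.
    return "out of scope"


# Example: training delivered alongside a redesigned decision environment.
print(classify_intervention(requires_engagement=True, modifies_environment=True))
```

Reducing each mechanism to a single yes/no flag is a deliberate simplification; in practice, judging whether an intervention requires active involvement calls for a close reading of each study.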

We focused on debiasing and choice architecture interventions because their relative simplicity allows their implementation across organizations of various sizes and with varying resources and technological capabilities. More complex, technology-intensive interventions, such as decision analysis or decision support systems (e.g., Ahn & Vazquez Novoa, 2013; see Edwards & Fasolo, 2001, for a review of decision support systems) may instead encounter implementation barriers and face resistance within organizations (Heath et al., 1998). In addition, our review focused on decision-making tasks (e.g., choosing one plan over another from several options) rather than behavior (e.g., remaining enrolled in a previously selected plan). This focus allows a comparable analysis of debiasing and choice architecture interventions, distinguishing this review from others that predominantly examine interventions targeting behavioral change (e.g., Beshears & Kosowsky, 2020; Szaszi et al., 2018).

In the spirit of integrative reviews (Elsbach & van Knippenberg, 2020), we adopted a broad inclusion scope without limiting the time window or excluding any field, experimental method, or journal, provided that the study was published in a journal with an Article Influence Score higher than the average. We included only experimental studies where decision improvement could be assessed according to principles of rational information search and decision-making (Kahneman, 2011; Tversky & Kahneman, 1974). Recognizing that the operationalization of decision improvement varies across research traditions and organizations, we have included studies with different measures. For instance, some studies directly measured decision improvement as a decrease in judgment error (e.g., Herzog & Hertwig, 2009; Yoon, Scopelliti, & Morewedge, 2021) or as a reduced incidence of bias (e.g., susceptibility to confirmation bias, Morewedge, Yoon, Scopelliti, Symborski, Korris, & Kassam, 2015), whereas others assessed decision improvement according to the decision outcome influenced by the bias (e.g., the reduced choice of a suboptimal course of action supported by confirming evidence, Sellier, Scopelliti, & Morewedge, 2019). We excluded studies where the best option depended on specific individual preferences (e.g., risk preferences, Camilleri, Cam, & Hoffmann, 2019).

Additional details on the search strategy, the search strings used in the Web of Science database, the exclusion criteria, and the extraction of studies from the articles are provided in the Supplemental Material (Table S0). The other appendix tables (Table S1 for debiasing, Table S2 for choice architecture, and Table S3 for dual interventions) contain details on each study included in the review with respect to the technique featured by the intervention and manipulated as the independent variable in the experiment; the specific cognitive error(s) or bias(es) mitigated; the decision domain in which the intervention was tested (e.g., general, financial, etc.); the authors’ names; the year of publication; the study number (where articles included multiple studies); the type of experimental method (laboratory, field, or online experiment); the type of decision-maker (group or individual); the study sample size; and how the improvement in decision-making was assessed (i.e., the dependent variable in the experiment).

Overview of the Studies

Our integrative review includes 100 empirical studies extracted from 62 peer-reviewed scientific articles published between 1986 and 2022. Among the 100 studies, 32 tested debiasing interventions, 62 tested choice architecture interventions, and 6 tested dual interventions that combined elements of debiasing and choice architecture.

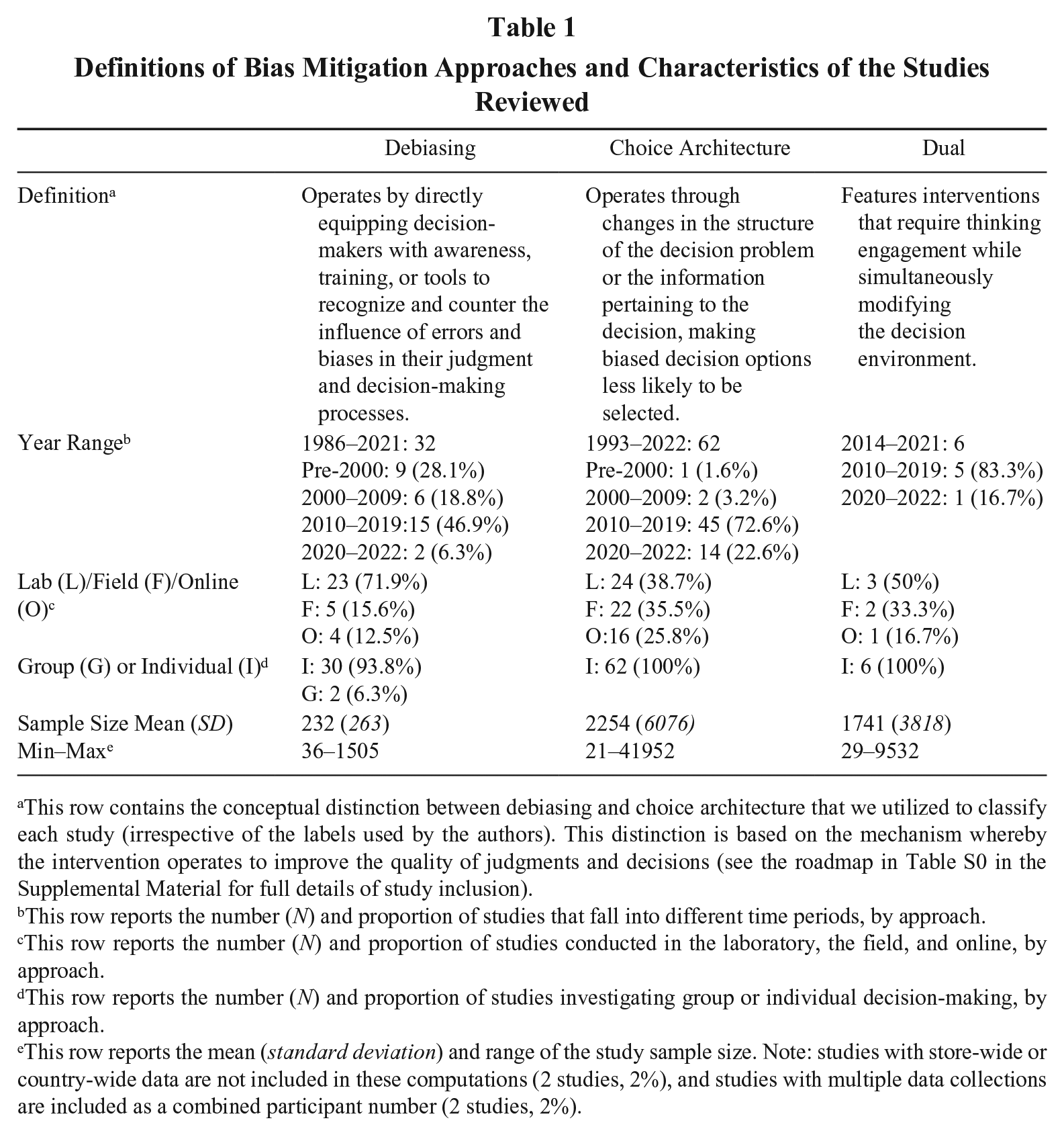

We found no study directly comparing bias mitigation interventions from the two approaches (i.e., comparing a debiasing and a choice architecture intervention), leaving questions on their relative effectiveness unanswered. The primary focus of the studies reviewed was improving individual decisions. Only two studies targeted group decision-making, both testing debiasing interventions. Compared to previous reviews of bias in organizations (e.g., Hodgkinson & Healey, 2008), the studies in our review were more diverse in their setting. Fifty percent of the studies were conducted in the laboratory, 29% in the field, and 21% online. Laboratory testing was used predominantly for debiasing interventions (23 out of 32 studies, 71.9%) and over one-third of choice architecture interventions (24 out of 62 studies, 38.7%). Possibly due to their higher reliance on laboratory experimentation, debiasing studies generally had smaller sample sizes (M = 232, SD = 263) than choice architecture studies (M = 2,254, SD = 6,076). Field experiments were more prevalent for choice architecture (22 out of 62 studies, 35.5%) than debiasing (5 out of 32 studies, 15.6%) interventions. Table 1 contains additional information, including the proportion of online studies by bias mitigation approach.
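As an arithmetic check, the percentages above can be recomputed from the study counts stated in the text. The sketch below uses only those reported counts; the dictionary layout and the helper name `share` are hypothetical conveniences, not part of the review's materials.

```python
# Study counts as reported in the text (laboratory and field settings only).
counts = {
    "debiasing": {"laboratory": 23, "field": 5, "total": 32},
    "choice_architecture": {"laboratory": 24, "field": 22, "total": 62},
}


def share(n: int, total: int) -> float:
    """Return n as a percentage of total, rounded to one decimal place."""
    return round(100 * n / total, 1)


for approach, c in counts.items():
    lab = share(c["laboratory"], c["total"])
    field = share(c["field"], c["total"])
    print(f"{approach}: laboratory {lab}%, field {field}%")
```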

Table 1. Definitions of Bias Mitigation Approaches and Characteristics of the Studies Reviewed

This row contains the conceptual distinction between debiasing and choice architecture that we utilized to classify each study (irrespective of the labels used by the authors). This distinction is based on the mechanism whereby the intervention operates to improve the quality of judgments and decisions (see the roadmap in Table S0 in the Supplemental Material for full details of study inclusion).

This row reports the number (N) and proportion of studies that fall into different time periods, by approach.

This row reports the number (N) and proportion of studies conducted in the laboratory, the field, and online, by approach.

This row reports the number (N) and proportion of studies investigating group or individual decision-making, by approach.

This row reports the mean (standard deviation) and range of the study sample size. Note: studies with store-wide or country-wide data are not included in these computations (2 studies, 2%), and studies with multiple data collections are included as a combined participant number (2 studies, 2%).

Most studies examined judgments and decisions in specific domains. Supplemental Figure S1 shows the distribution of decision domains across all studies, using a taxonomy similar to Beshears and Kosowsky (2020). In 21 of the 100 studies, the decisions targeted by the interventions were “domain-general,” with most of these testing debiasing interventions (13 out of 21). For example, Morewedge et al. (2015) examined the effectiveness of debiasing interventions in mitigating six cognitive biases relevant to intelligence analysis, policy, business, law, and medicine. Basu and Savani (2017) tested a choice architecture intervention to increase the choice of the optimal option between two generic risky prospects (e.g., Option A vs. Option B).

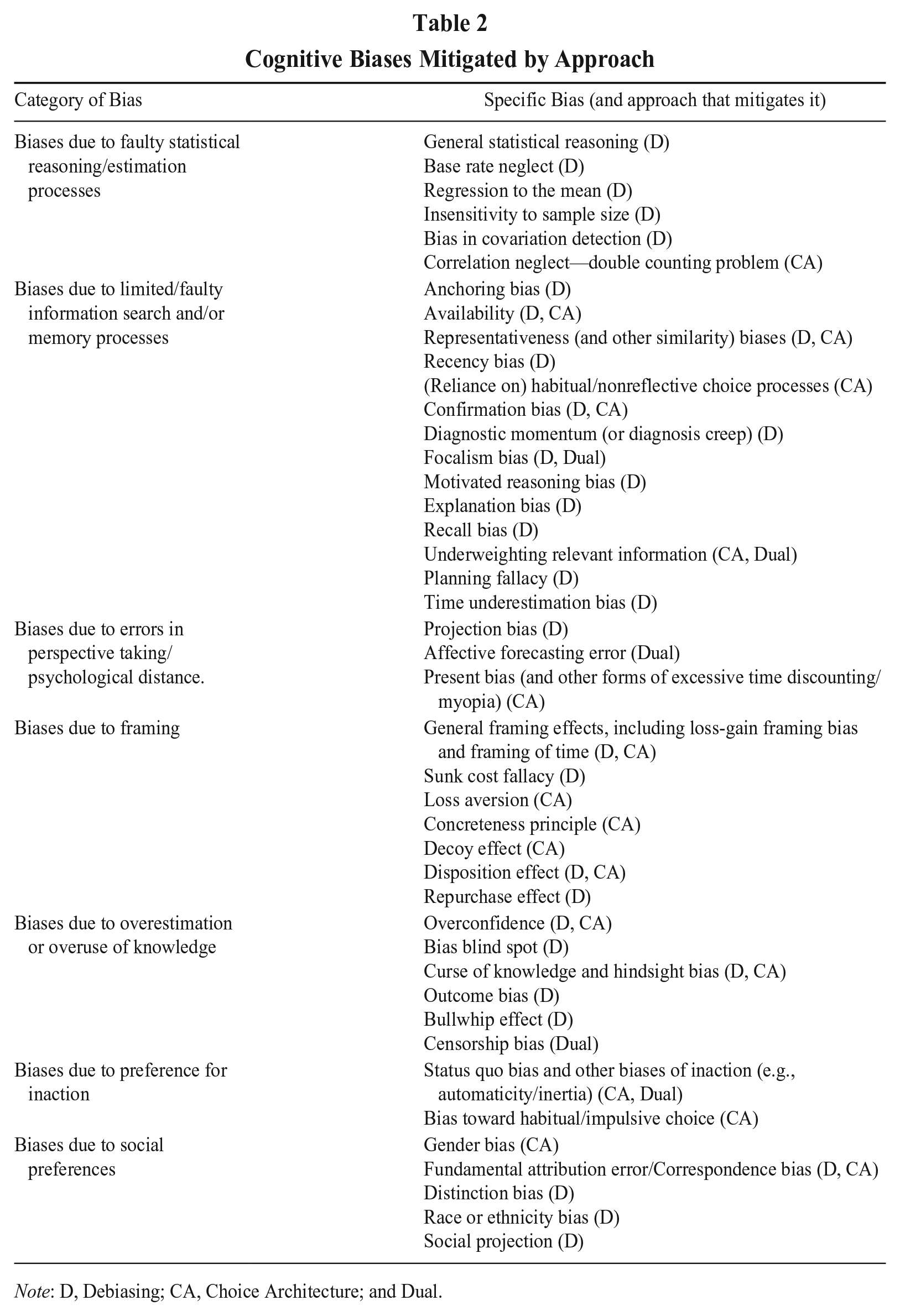

The 100 studies targeted over 40 cognitive errors and biases. These were categorized according to their underlying cognitive processes, as detailed in Table 2, in line with the perspective of biases as effects (e.g., Keren & Teigen, 2004). An important result from this categorization is that both debiasing and choice architecture approaches (in isolation or combined in dual interventions) effectively mitigate biases across all categories. This finding demonstrates the potential for both bias mitigation approaches to be effective across various organizational contexts. However, none of the studies provided guidance on when each approach would be more suitable, leaving a critical gap in our understanding of the relative effectiveness of these approaches.

Table 2. Cognitive Biases Mitigated by Approach

Note: D, Debiasing; CA, Choice Architecture; and Dual.

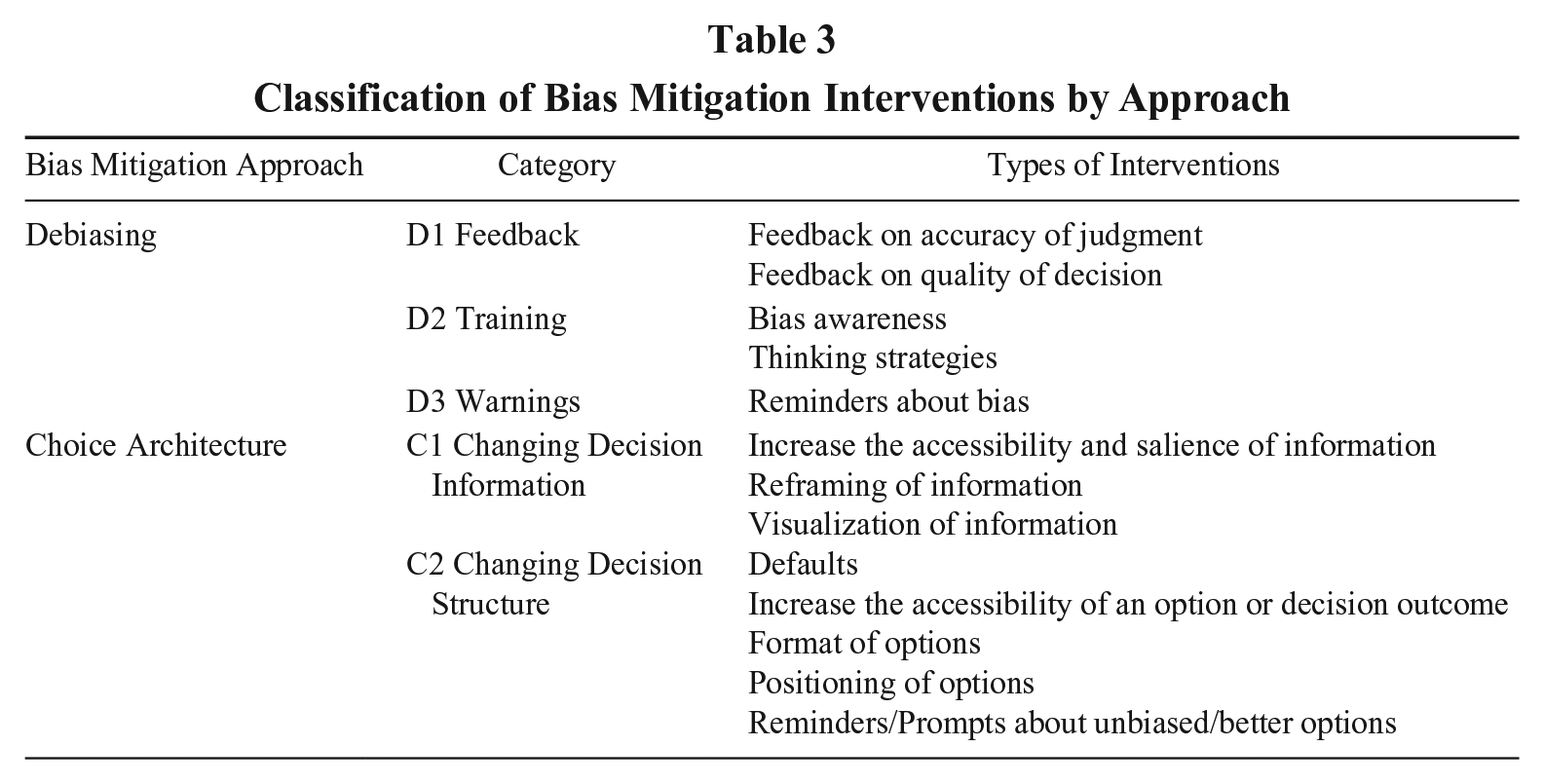

Classification of Bias Mitigation Approaches

Our review identified 36 distinct debiasing and choice architecture interventions tested in isolation or combination. We categorized them by adapting and combining existing classifications from the literature—specifically, Fischhoff (1982) and Larrick (2004) for debiasing, and Münscher et al. (2016) for choice architecture. The resulting classification, detailed in Table 3, offers conceptual clarity regarding the different types of interventions within each approach and was used to categorize each study as testing a debiasing (S1), choice architecture (S2), or dual (S3) intervention. We next articulate the main interventions within each approach to identify the factors that can influence the effectiveness and suitability of the two approaches to mitigate bias across different organizational decisions.

Table 3. Classification of Bias Mitigation Interventions by Approach

Debiasing Approach

Debiasing interventions aim to improve bias awareness and provide decision-makers with cognitive tools to reduce error or bias while making judgments and decisions. The three categories of debiasing interventions in our review are training, warnings, and feedback. Notably, some debiasing interventions have combined several of these elements and achieved promising results. For example, Morewedge et al. (2015) integrated training with feedback into an interactive computer game, effectively reducing several cognitive errors and biases up to three months after the administration of the intervention.

Testing and implementing any of these three categories of debiasing interventions in an organization would require two things: the active involvement of an agent who designs the training, warnings, or feedback, and the engagement of all the decision-makers targeted. These decision-makers must participate in the training or pay active attention to the warnings or feedback received, and be willing to revise their judgments as a result. Detailed information for each study that tested debiasing interventions in isolation is available in the Supplemental Material (Table S1). For studies that included debiasing as part of a dual intervention, see the Supplemental Material (Table S3).

Training

Training emerged as the most extensively tested category of debiasing interventions, many of which are applicable across a wide range of contexts. Training interventions are designed to increase decision-makers’ awareness of biases and teach thinking strategies to mitigate them. Bias awareness training aims to make decision-makers aware of cognitive errors and biases, and of their potential influence on judgments and decisions. Bias awareness training can be delivered with a range of methods, from short informational articles (e.g., Scopelliti, Morewedge, McCormick, Min, Lebrech, & Kassam, 2015, to reduce the fundamental attribution error) to educational videos (e.g., Morewedge et al., 2015, to reduce susceptibility to six different cognitive biases).

Thinking strategies training teaches decision-makers generalizable decision strategies that they can employ to reduce the incidence of cognitive errors and biases. The most common thinking strategy among the training interventions reviewed was prompting the generation of counterarguments opposing initial beliefs (consider-the-opposite, counter-explanation, counterfactual thinking; Colombo, 2018; Hirt & Markman, 1995; Kennedy, 1995; Kray & Galinsky, 2003; Nagtegaal et al., 2020). As an example, Nagtegaal et al. (2020) demonstrated that consider-the-opposite thinking strategies corrected anchoring bias in employee performance evaluations.

Additional thinking strategies included teaching the law of large numbers (statistical training, Fong, Krantz, & Nisbett, 1986), prompting the recognition of similarities between cases (analogical training, Aczel, Bago, Szollosi, Foldes, & Lukacs, 2015), and encouraging the review of previous judgments (Ashton & Kennedy, 2002). Some specific thinking strategies aimed to reduce errors in estimation and forecasting. These included averaging and multiple estimation processes (averaging principle, affective averaging, dialectical bootstrapping; Comerford, 2011; Herzog & Hertwig, 2009; Yoon et al., 2021). For instance, dialectical bootstrapping encourages decision-makers to generate and average multiple estimates, each drawing on different perspectives and knowledge bodies, significantly improving the accuracy of individual estimates (Herzog & Hertwig, 2009).
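The arithmetic behind averaging strategies can be illustrated with a minimal sketch. It is not the procedure or data of any study cited above: the true value and the two estimates are hypothetical numbers chosen for illustration. The sketch shows the general property that the error of an averaged estimate never exceeds the mean error of the individual estimates, and is strictly smaller when the two estimates fall on opposite sides of the truth.

```python
def absolute_error(estimate: float, truth: float) -> float:
    """Distance between an estimate and the true value."""
    return abs(estimate - truth)


def averaged_estimate(first: float, second: float) -> float:
    """Simple average of two estimates of the same quantity."""
    return (first + second) / 2.0


# Hypothetical values: a true quantity and two estimates from one person,
# the second generated from a different perspective than the first.
truth = 100.0
first, second = 80.0, 130.0

combined = averaged_estimate(first, second)
mean_individual_error = (absolute_error(first, truth) + absolute_error(second, truth)) / 2.0
error_of_average = absolute_error(combined, truth)

print(f"mean individual error: {mean_individual_error}")
print(f"error of the average:  {error_of_average}")
```

When both estimates err in the same direction (for example, 80 and 90 against a truth of 100), the error of the average equals the mean individual error, so averaging yields no gain but also no loss.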

Warnings

Warnings are designed to alert or remind decision-makers about potential cognitive errors and biases in the process of making a judgment or decision without providing training. These warnings are usually highly domain-specific. For example, Tokar, Aloysius, and Waller (2012) warned decision-makers about the bullwhip effect (a bias in inventory management), improving the quality of stock replenishment decisions. Refer to Table S3 for an example of warnings used as part of a dual intervention (i.e., King et al., 2014).

Feedback

The last category of debiasing interventions involves providing decision-makers with feedback about the accuracy of a prior judgment or the quality of a past decision in order to improve future judgments and decisions. Similar to warnings, the content of feedback is highly decision-specific. For example, Król and Król (2019) gave investors feedback on whether their investment decisions were consistent with those of unbiased or well-performing investors. This feedback improved the quality of their stock trading decisions by mitigating their disposition effect (i.e., the bias of holding onto losing stocks for too long and selling assets that yield financial gains prematurely). Refer to Table S1 for additional examples of feedback interventions used in conjunction with other debiasing interventions (e.g., Martey et al., 2017).

Choice Architecture Approach

Choice architecture is a bias mitigation approach that modifies the structure of the decision environment, or the way decision information is presented, to facilitate less biased judgments and decisions. Testing and implementing a choice architecture intervention in organizations would require the commitment of a choice architect who identifies the less biased option and redesigns the decision environment behind the scenes without requiring any additional effort or the direct involvement of the decision-makers targeted. As a result, choice architecture interventions typically streamline decision-making processes, reducing cognitive load on decision-makers.

Choice architecture interventions change either the structure of the decision problem or the information pertaining to the decision in order to facilitate better decision outcomes. Interventions that change decision structure were more prevalent in our review than those that change decision information. Some successful interventions have combined both structural changes (e.g., by setting defaults) and information modifications (e.g., using data visualizations), such as Elbel, Gillespie, and Raven’s (2014) study on the improvement of health center choices. Detailed information for each study that tested choice architecture in isolation is available in the Supplemental Material (Table S2). For the use of choice architecture as part of a dual intervention, see the Supplemental Material (Table S3).

Changing Decision Structure

Our review identified five types of interventions that change decision structure. The most prevalent type involves choice architects setting as the default the option that would be selected based on an unbiased processing of the available information. For example, He, Kang, and Lacetera (2021) reduced gender biases in career decisions by setting as default a competitive compensation scheme that would otherwise be less frequently chosen by women. By switching the default for this scheme from opt-in to opt-out, gender differences in competitiveness and bias in promotion decisions were reduced. Another way choice architects can change decision structure is by altering the format in which options are presented to facilitate unbiased information processing. Bohnet, van Geen, and Bazerman (2016) investigated whether changing the format from separate (one candidate at a time) to joint (multiple candidates at once) evaluations reduced gender bias in job candidate assessments. The joint evaluation format helped recruiters focus on individual performance irrespective of gender, whereas separate evaluations made gender more salient, even though it was not predictive of future performance.

The review revealed additional ways to change decision structure, such as making an option more prominent or accessible to increase its likelihood of being selected. For example, Vandenbroele, Slabbinck, van Kerckhove, and Vermeir (2021) promoted the choice of options with lower environmental impact by positioning meat substitutes next to their meat counterparts on shelves. Blackwell et al. (2020) increased the choice of healthier nonalcoholic beverages by increasing their relative availability compared to that of the less healthy alcoholic ones.

Finally, choice architects can incorporate verbal prompts and reminders about preferred options, courses of action, or consequences to facilitate less biased decisions. For instance, in a workplace context, Connolly, Reb, and Kausel (2013) showed that reminding decision-makers about possible regret they could feel after choosing a job could reduce the impact of an irrelevant decoy job option.

Changing Decision Information

Choice architects can improve decision-making by altering how decision-relevant information is presented without changing the options themselves or the decision structure. Our review identified three types of interventions that change decision information. The most prevalent involves the visualization of information. Some visualization techniques make specific pieces of information more salient, such as highlighting the purchase price of a stock to mitigate the disposition effect (Frydman & Rangel, 2014), while others represent information visually rather than numerically, such as using visual aids to mitigate framing effects (Garcia-Retamero & Dhami, 2013). Hershfield et al. (2011) tested a creative visualization technique using age-progressed avatars to represent decision-makers’ future selves, increasing their preference for delayed monetary rewards over immediate ones and mitigating present bias.

Another way choice architects can modify decision information is through reframing, which involves presenting existing information differently or from a different perspective to facilitate considerations that may be overlooked using the original framing. Reframing can be particularly helpful for activating important latent objectives that might be ignored or difficult to assess when the original information format is difficult to comprehend. For example, Mertens, Hahnel, and Brosch (2020) reframed quantitative information on household appliances’ energy and water consumption by expressing it in terms of environmental friendliness, operation costs, and carbon emissions. This reframing, which presented the same underlying information through a different conceptual lens focused on salient consequences, simplified the information and increased the selection of energy-efficient products.

Finally, choice architects can increase the ease of processing and salience of decision-relevant information. For example, Scopelliti, Min, McCormick, Kassam, and Morewedge (2018) increased the accessibility of market performance information for participants evaluating fund managers’ performance. Making situational information (i.e., market performance) easier to process reduced correspondence bias (the tendency to account for situational factors insufficiently) in performance evaluations, especially among those most prone to this bias.

Dual Approach

Our review revealed a novel type of bias mitigation approach that combines debiasing and choice architecture techniques in a single dual intervention. Testing and implementing dual interventions require the involvement of both a choice architect who redesigns the decision environment and a debiasing agent who designs and actively involves the decision-makers in the intervention, although these two roles may co-exist in the same individual or team. Only a few studies in our review test dual interventions, but they are all relatively recent, suggesting an emerging trend in bias mitigation research. For example, Tong, Feiler, and Larrick (2018) tested a dual intervention combining debiasing training (i.e., prompting decision-makers to envision the extent of lost sales for each stockout period) with a choice architecture technique (i.e., increasing the salience of stockout information) to improve the quality of inventory decisions. Bhattacharyya, Jin, Le Floch, Chatman, and Walker (2019) combined a debiasing thinking strategy training designed to help decision-makers articulate their quality-of-life preferences with a choice architecture technique that visualized information about their priorities to improve the quality of a transportation decision. King et al. (2014) tested a dual intervention that combined two debiasing techniques, thinking strategies training (e.g., using a checklist to promote deliberation on specific reasons in the decision process) and warnings (to remind doctors about critical tasks and medication orders to reduce prescribing errors due to cognitive biases), together with a choice architecture technique changing the decision structure (e.g., defaults).

Future research needs to systematically examine when different bias mitigation approaches yield the most value in isolation or combined as a dual intervention. For instance, while there were positive outcomes when training was combined with changing decision information (Bhattacharyya et al., 2019), training did not have the desired effect when combined with changes in the decision structure (Barnes, Karpman, Long, Hanoch, & Rice, 2021). These mixed results suggest the importance of studying when bias mitigation approaches should be combined or applied in isolation. Detailed information for each dual approach study in our review is available in the Supplemental Material (Table S3).

A Theoretical Framework and Research Agenda on Bias Mitigation in Organizations

Debiasing and choice architecture approaches have been tested separately in the JDM literature, each targeting cognitive errors and biases through a different mechanism. Our review integrates studies on these two approaches, presenting an opportunity to understand how they could be suitable, either separately or jointly, for reducing cognitive errors and biases in decision processes and improving organizational decisions. By examining the interventions included in our review, we develop a novel framework that lays the foundation for a research agenda to advance the study of bias mitigation in organizations. This advancement requires rigorous comparative studies and an examination of the factors influencing the relative suitability of each bias mitigation approach, or their combination, for a specific organizational context.

First, our review revealed differences in the characteristics of the decisions targeted by the interventions, such as the stage of the decision-making process and the degree of decision uncertainty and complexity. This suggests that the suitability of a bias mitigation approach may depend on these decision-level factors. We also noted that almost all the interventions targeted individual decision-makers, which prompted us to identify two individual-level factors that could influence the interventions’ effectiveness: the availability of cognitive resources to invest in the bias mitigation process and the decision-makers’ actual and perceived susceptibility to cognitive biases. Finally, while most studies in the review focused on individual-level decision-making, we recognize that the organizational context in which decisions occur can fundamentally change the effectiveness of an intervention. Consequently, we discuss organization-level factors, such as the extent to which the organization promotes agency over decision outcomes and the degree of trust in the organizational actor introducing the intervention, which could impact the relative effectiveness of the two bias mitigation approaches.

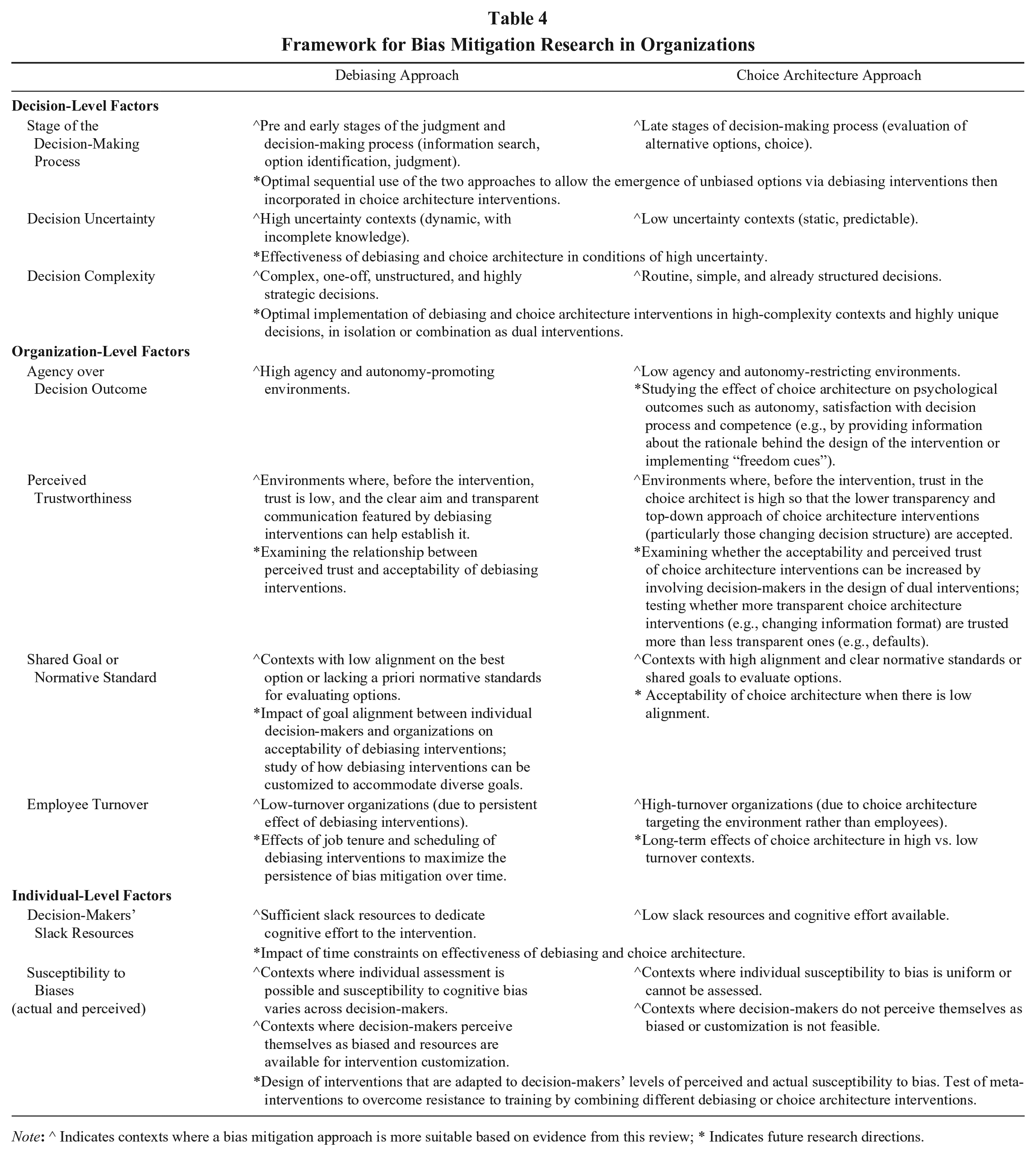

In summary, we propose a framework that identifies decision, organizational, and individual-level factors that can moderate the effectiveness of bias mitigation approaches in organizations. In the following sections, we examine how each of these sets of factors is expected to impact the suitability of debiasing and choice architecture approaches, based on evidence from the studies reviewed. We also develop propositions to guide future research on the comparative effectiveness of the two approaches (summarized in Table 4).

Framework for Bias Mitigation Research in Organizations

Note: ^ Indicates contexts where a bias mitigation approach is more suitable based on evidence from this review; * Indicates future research directions.

Decision-Level Factors

Stage in the Decision-Making Process

Cognitive errors and biases can occur at different phases of the judgment or decision-making process, from the early phases of judgment formulation, information search, and identification of alternatives, to the later phases of selecting the best of the alternatives available (McClelland, Stewart, Judd, & Bourne, 1987). Our review reveals that debiasing interventions primarily improve the accuracy of judgments and the comprehensiveness of thought processes preceding a judgment or the choice of an alternative. For example, Kennedy (1995) asked participants in auditing roles to write a counter-explanation before forming a judgment about the sales forecasts made by others they were auditing; Lowe and Reckers’ (1994) intervention on hindsight bias was used before the participants made judgments about the relevance of audit partners’ use of several information cues (e.g., the possible bankruptcy of their clients). More generally, training interventions typically involve a dedicated phase in which the content of the training is administered to participants before the judgment or decision-making process begins (e.g., Aczel et al., 2015; Fong et al., 1986; Scopelliti et al., 2015; Yoon et al., 2021), whereas feedback interventions are usually administered after a judgment or a decision has been made, but are intended to have an impact on revised or subsequent judgments and decisions (e.g., Kròl & Kròl, 2019; Martey et al., 2017; Morewedge et al., 2015).

Conversely, only a few choice architecture studies in our review target the stages of making judgments and searching for information before the choice of the best alternative (e.g., Garcia-Retamero & Dhami, 2013; Klayman & Brown, 1993; Kray & Galinsky, 2003). Most choice architecture interventions are applied in contexts where a choice architect has already determined what represents the least biased course of action based on prior analyses. Consequently, they intervene when the decision-maker is evaluating alternatives or making a final decision. For example, Mertens et al.’s (2020) attribute translation intervention targeted the option evaluation stage. Defaults (e.g., Johnson & Goldstein, 2003; Thaler & Benartzi, 2004) intervene at the final stage of the decision-making process by setting the best option as the automatic selection unless the decision-maker actively opts out and chooses a different option.

Given the evidence in our review, we suggest that the suitability of a bias mitigation approach varies depending on the stage of the decision-making process an organization can and wants to target. In the early stages, where an optimal, unbiased decision outcome has not yet been determined, debiasing interventions are particularly beneficial. These interventions focus on improving judgments and the quality of the informational inputs to the decision, facilitating informed reasoning and critical thinking. Conversely, we propose that choice architecture interventions are more suitable for later stages of the decision-making process when a choice architect has already evaluated alternative options, and a preferred alternative has emerged as the unbiased choice. In such scenarios, choice architecture would allow organizations to improve decision outcomes by designing a decision environment that favors the preidentified optimal course of action.

Future research can test this proposed interaction between the stage in the decision process and bias mitigation approach on intervention effectiveness, as well as the effects of a sequential implementation of the two bias mitigation approaches within the same organizational decision-making process. This sequential process would employ debiasing interventions first to allow the emergence of unbiased decision outcomes that would eventually be incorporated into choice architecture interventions. One way to achieve this would be by administering debiasing interventions to members of the organization who acquire the relevant knowledge and skills to become choice architects.

Decision Uncertainty

Cognitive errors and biases can emerge in contexts characterized by low uncertainty, such as repeated decisions in stable environments where agents’ preferences are known and predictable (e.g., routine budgeting in a stable market or scheduling in a consistent production environment), or high uncertainty, such as decisions in dynamic environments with low predictability and incomplete knowledge (e.g., strategic planning in volatile markets or crisis management in unpredictable situations). Mitigating bias in decisions with high uncertainty presents a significant challenge, as it requires an approach with effects that are likely to generalize to different contexts and circumstances. Thus, the most suitable candidates for high-uncertainty decisions are domain-general interventions.

Our review highlights that debiasing interventions exhibit a broader range of domain-general applications than choice architecture interventions. Specifically, over one-third of the debiasing studies in our review fall within the domain-general category (Aczel et al., 2015; Fong et al., 1986; Galinsky & Moskowitz, 2000; Herzog & Hertwig, 2009; Martey et al., 2017; Morewedge et al., 2015; Sellier et al., 2019; Yoon et al., 2021), in contrast to less than 15% of the choice architecture studies (Basu & Savani, 2017; Enke & Zimmermann, 2019; Tu & Soman, 2014). This finding is important because it suggests that the debiasing approach has greater flexibility and adaptability than choice architecture in situations characterized by high uncertainty, such as dynamic and changing decision environments.

Some debiasing interventions, training in particular, have demonstrated effectiveness beyond their original domain, with bias mitigation effects extending to different problem types and decision domains (Fong & Nisbett, 1991; Larrick, Morgan, & Nisbett, 1990; Morewedge et al., 2015; Sellier et al., 2019). For example, participants trained on statistical principles using specific cases and examples showed improved decision-making both in those cases and unrelated scenarios (Fong & Nisbett, 1991). Similarly, training on thinking strategies to avoid the sunk cost fallacy in the financial domain has been shown to reduce the same bias in the time domain and vice versa (Larrick et al., 1990). Because debiasing training does not target knowledge specific to a particular decision, it imparts skills that can be applied across various contexts. For example, Kray and Galinsky’s (2003) debiasing training used a general scenario to prompt thinking about counterfactuals, fostering a more generalized ability to consider alternative possibilities in many decisions. In the case of infrequent and high uncertainty decisions, where past experiences are not readily available, debiasing training can provide a set of mental tools that are transferable across different contexts.

The ability of debiasing interventions to transcend specific domains highlights their potential suitability to improve decisions in uncertain and ill-defined contexts. Debiasing interventions, in particular training, but to some extent also feedback and warnings, provide decision-makers with tools and insights that could apply to diverse and dynamic circumstances. Conversely, we suggest that choice architecture interventions are more suited for decision contexts characterized by lower uncertainty and greater stability, as they involve high context-specificity (e.g., one option can be set as the default only if that option is expected to be continuously best in the future, and there are no changes to the number and quality of options available to the organization). As a result, the likelihood that the positive effects of choice architecture interventions will spill over to other decisions or domains is very low (Van Rookhuijzen, De Vet, & Adriaanse, 2021). Future research could examine these predictions and the effect of uncertainty on the effectiveness of different bias mitigation interventions.

Decision Complexity

Cognitive errors and biases can occur in decisions that vary in complexity, ranging from simple decisions, such as saving money regularly for a predetermined goal (Hershfield et al., 2011; Thaler & Benartzi, 2004), to complex multidimensional decisions, such as launching a new venture (Kray & Galinsky, 2003) or purchasing real estate (e.g., Bhattacharyya et al., 2019). Complex decisions are often highly strategic and require considering long-term implications, weighing multiple factors, and predicting multiple outcomes. These decisions typically have not been encountered before by the organization and lack a predetermined and explicit course of action (Mintzberg, Raisinghani, & Theoret, 1976).

Debiasing interventions, particularly in the form of training, can equip individuals with knowledge of biases and thinking strategies to guide them in navigating such complex scenarios (Hirt & Markman, 1995; Lowe & Reckers, 1994; Martey et al., 2017; Morewedge et al., 2015; Sellier et al., 2019; Yoon et al., 2021). Debiasing can enhance critical thinking skills, such as recognizing and addressing cognitive blind spots (Martey et al., 2017), avoiding common decision-making errors (Morewedge et al., 2015; Yoon et al., 2021), and fostering objective and analytical approaches to information search and consideration (Hirt & Markman, 1995; Kennedy, 1995; Kray & Galinsky, 2003). These tools can help decision-makers approach complex decisions by applying systematic rational thinking processes and analyzing situations using a structured approach.

In contrast, the choice architecture interventions in our review were typically applied to decision tasks simpler in structure, for which the best options are clearly identifiable by the choice architect. Choice architecture typically streamlines decision-making processes and simplifies the decision environment to reduce cognitive load on decision-makers and guide them toward these predetermined outcomes. For instance, the routine decision to save money regularly can be influenced by simple choice architectures, such as defaults (Thaler & Benartzi, 2004), or by prompts that encourage a future-focused mindset that simplifies the decision to save (Hershfield et al., 2011). Applying choice architecture interventions to complex decisions may be challenging, as these decisions often involve multiple interrelated factors and ambiguous or conflicting objectives, which make it difficult to identify a best outcome a priori.

In summary, our review suggests that debiasing interventions may be more effective than choice architecture ones when addressing complex and unstructured decisions. Our review also revealed that dual interventions combining elements of both bias mitigation approaches were predominantly implemented to mitigate bias in complex decisions involving numerous factors and interrelated judgments, such as choosing between complex health plans or places to live (Barnes et al., 2021; Bhattacharyya et al., 2019). In addition to examining the moderating effect of decision complexity on the effectiveness of different bias mitigation approaches, it would be valuable for future research to investigate how the two types of interventions can be integrated to enhance the quality of decisions in strategic contexts, particularly those that are highly unique and involve numerous interrelated factors.

Organization-Level Factors

Agency Over the Decision Outcome

Individuals value having and exerting autonomy (Deci, Olafsen, & Ryan, 2017); however, organizations differ in their ability to create autonomy-promoting environments where decision-making is decentralized and individual decision-makers have agency over the decision-making process and its outcomes (Campbell, Dunnette, Lawler, & Weick, 1970). In our review, approximately one-third of the choice architecture interventions involved setting default options or increasing the accessibility of a predetermined optimal outcome deemed less biased, de facto creating an autonomy-limiting decision context. For instance, defaults were implemented by choice architects who deemed saving more for retirement or increasing women’s participation in competitive promotion systems the optimal course of action, irrespective of the preferences of individual decision-makers (Ebeling & Lotz, 2015; Goda, Levy, Manchester, Sojourner, & Tasoff, 2020; He et al., 2021; Thaler & Benartzi, 2004).

Consequently, some default interventions have raised concerns about the decision-makers’ freedom and potential reactance (Blumenthal-Barby & Burroughs, 2012; Hill, 2007). The same considerations apply to most types of interventions within the choice architecture approach, which trade off bias mitigation with limitations on the decision-maker’s agency over the decision process and outcome. In organizational contexts where individual preferences and autonomy are highly valued, debiasing interventions may be a more suitable approach, as they empower individuals by equipping them with cognitive tools and strategies to identify and mitigate biases independently, making informed, autonomous decisions without external imposition.

Future research could systematically compare debiasing and choice architecture interventions in terms of their impact on decision-makers’ sense of autonomy, satisfaction with the decision process, and overall decision quality. Such studies could provide insights into how different approaches affect not only bias mitigation, but also psychological outcomes related to autonomy and the potential mediating role of psychological reactance and perceived freedom. Future research could test whether reactance or the potential dissatisfaction with a decision-making process targeted by choice architecture interventions could be reduced by implementing “freedom cues” (Fasolo, Misuraca, & Reutskaja, 2024) that remind decision-makers of their intrinsic autonomy.

Finally, bias researchers could investigate the potential for customizing choice architecture interventions to accommodate individual preferences and autonomy (Deci et al., 2017) and boost their competence (Hertwig & Grüne-Yanoff, 2017). For example, choice architecture interventions could incorporate information about the choice architect’s rationale for redesigning the decision environment or allow the customization of defaults.

Perceived Trustworthiness

High trust and perceived trustworthiness are crucial in any social context, particularly within organizations (Kramer, 1999). Our review highlights the importance of trust in influencing the acceptability of bias mitigation interventions, especially defaults in choice architecture. For example, Goswami and Urminsky (2016) theorized that adherence to defaults might decrease when the choice architect is trusted to a lesser extent. In their studies, high default donation amounts were effective due to the high trust people had in the organization receiving the donations (i.e., the Red Cross).

We suggest that the level of trust in the organization has substantial implications for the effectiveness of debiasing and choice architecture approaches. In contexts where decision-makers lack trust in the choice architect or debiasing agent, they may question the motivation behind the interventions, potentially diminishing their effectiveness. We posit that high trust is particularly relevant for choice architecture interventions, which may encounter more scrutiny due to their top-down design. Decision-makers express disapproval of interventions designed by choice architects holding opposing political views or competing interests (Tannenbaum & Ditto, 2021; Tannenbaum, Fox, & Rogers, 2017) and are more likely to accept and embrace default interventions when they trust the individuals or entities setting them (Diepeveen, Ling, Suhrcke, Roland, & Marteau, 2013; Reisch & Sunstein, 2016). Trust is influenced by the choice architect’s perceived expertise and good intentions (Junghans, Cheung, & de Ridder, 2015), whether they are working in the best interests of employees or society (Lades & Delaney, 2022), and the perceived complexity of the intervention.

In contrast, debiasing interventions actively involve decision-makers in the bias mitigation process and are generally characterized by greater transparency and intentionality. For example, training interventions often disclose their aims and goals (e.g., Morewedge et al., 2015, “unbiasing your biases” training video), fostering trust through transparency. Decision-makers are less likely to question simpler interventions (Heath et al., 1998). We therefore propose that more transparent choice architecture interventions, such as those involving verbal prompts (e.g., Connolly et al., 2013), may be perceived as more trustworthy than less transparent ones, such as opt-out defaults (Felsen, Castelo, & Reiner, 2013; Jung & Mellers, 2016; Sunstein, 2016).

While existing research has focused primarily on the role of trustworthiness in the context of choice architecture, we urge researchers to investigate systematically how trust in the organization affects the effectiveness of both debiasing and choice architecture interventions and the potential role of intervention transparency in explaining the effectiveness of bias mitigation interventions. Additionally, we propose that debiasing interventions may enhance trust in the organization compared to choice architecture due to their heightened transparency and involvement of decision-makers. To address trust-related concerns, choice architects may consider developing dual interventions by adding debiasing techniques to enhance the involvement of the decision-maker in the bias mitigation process. Future research could explore how to design dual interventions that combine the perceived transparency of debiasing and the efficiency of choice architecture interventions.

Shared Goal or Normative Standard

Organizations can differ significantly in the degree to which their members are aligned on shared goals and normative criteria for assessing optimal decision outcomes (e.g., Aguilera, De Massis, Fini, & Vismara, 2024). Some organizations exhibit high levels of alignment, where there is a commonly accepted objective or desired outcome that serves the interests of all parties. In contrast, other organizations may face greater divergence in goals and a lack of consensus on how to evaluate optimal decisions among different stakeholders.

We propose that the alignment between individual decision-makers’ goals and the objectives of the organization designing the intervention is crucial in determining the suitability of a bias mitigation approach in a specific organizational context. In particular, we suggest that a choice architecture approach to bias mitigation is more suitable when this alignment exists. In the studies we reviewed, choice architecture interventions were implemented mostly in contexts where the predetermined unbiased or less biased decision outcomes were aligned with the goals of both the intervention designers and the individual decision-makers. For example, these goals involved achieving maximum financial returns or optimizing health outcomes (Allan, Johnston, & Campbell, 2015; Beshears, Dai, Milkman, & Benartzi, 2021; Blackwell et al., 2020; Clarke et al., 2021; Hershfield, Shu, & Benartzi, 2020; Hershfield et al., 2011; Levy, Riis, Sonnenberg, Barraclough, & Thorndike, 2012; Thaler & Benartzi, 2004; Thorndike, Riis, Sonnenberg, & Levy, 2014; van Kleef, Otten, & van Trijp, 2012). In contrast, choice architecture interventions may face resistance when they prioritize collective and pro-social decision outcomes over pro-self ones, even if the intention is to maximize overall benefits for individuals (Hagman, Andersson, Västfjäll, & Tinghög, 2015). We propose that in cases where alignment is low, debiasing interventions may be more suitable than forcing a normative standard on decision-makers through choice architecture.

Future research should delve deeper into the suitability of different bias mitigation approaches depending on the alignment between individual decision-makers’ interests and the organization’s overarching objectives, as well as the existence of a shared goal or normative standard to evaluate decision outcomes. Examining the applicability of choice architecture interventions in situations where alignment is low could also be valuable. Additionally, it would be beneficial to explore how debiasing interventions can be customized to accommodate diverse goals, thus enhancing our understanding of effective bias mitigation strategies in such contexts.

Employee Turnover

Organizations differ in their ability to retain their workforce, which has important consequences for individuals and organizations (e.g., Bolt, Winterton, & Cafferkey, 2022). The rate of employee turnover emerges as another organizational factor that is critical to examine in assessing the suitability of different bias mitigation approaches. Some debiasing studies in our review (e.g., Morewedge et al., 2015; Sellier et al., 2019) have tested the longitudinal effects of bias mitigation interventions. For instance, Morewedge et al. (2015) reported that training interventions produced moderate to large bias reduction effects in the immediate term, and these effects persisted up to three months after the intervention. These findings suggest that debiasing interventions may be particularly beneficial for organizations with low turnover, where employees are more likely to remain in the organization and benefit from these long-term effects. Future research could investigate optimal intervention scheduling strategies to maximize the persistence of bias mitigation over time.

In contrast, for organizations with high turnover rates, adopting a choice architecture approach to mitigate bias may be more beneficial, as such an approach targets the environment rather than the individual, thereby reducing reliance on the continuity of specific members within the organization. However, none of the studies reviewed examined the persistence of the effects of choice architecture interventions in organizations. Conducting longitudinal field experiments in real organizational settings to test the persistence of debiasing and choice architecture under varying levels of employee turnover could be a fruitful endeavor for future research.

Finally, since the positive effects of debiasing may be lost to the organization if there is high turnover and the targeted decision-makers depart, the fear of losing valuable debiased employees to competitors may reduce an organization’s motivation to implement debiasing interventions such as training initiatives (Glance, Hogg, & Huberman, 1997). Testing these propositions could provide valuable guidance for organizations seeking to navigate the challenges posed by turnover while pursuing effective bias mitigation.

Individual-Level Factors

Decision-Makers’ Slack Resources

The role of individual differences in decision-making has been researched extensively in the management literature and addressed in previous reviews (e.g., Hodgkinson & Healey, 2008). Our review highlights how the implementation of the two bias mitigation approaches requires different levels of individual decision-makers’ slack resources. Choice architecture interventions, such as defaults (e.g., Johnson & Goldstein, 2003; Thaler & Benartzi, 2004), labeling (Allan et al., 2015; Levy et al., 2012), attribute translation (Mertens et al., 2020), and reframing (Beshears et al., 2021; Hershfield et al., 2020; Tu & Soman, 2014), require minimal effort from decision-makers once implemented by choice architects, because they operate seamlessly, demanding no additional resources or time from decision-makers.

Conversely, debiasing interventions necessitate not only the active involvement of the intervention designer (i.e., the debiasing agent) but also the time and cognitive resources of each targeted decision-maker, particularly when the intervention requires training or reflection on feedback (e.g., Fong et al., 1986; Morewedge et al., 2015; Sellier et al., 2019). Although some debiasing interventions have shown significant bias mitigation effects even with one-time, short training sessions (e.g., Yoon et al., 2021), their effectiveness may be compromised when decision-makers face severe constraints on time and attention. In such situations, choice architecture, which operates independently of the decision-maker’s cognitive effort, may prove more effective. Future research could directly examine the impact of this limiting factor on the effectiveness of different bias mitigation techniques, providing valuable insights for organizations aiming to implement targeted interventions within their resource capacity.

Actual and Perceived Susceptibility to Biases

Some of the studies reviewed suggest the existence of substantial interpersonal variation in susceptibility to cognitive biases and demonstrate its moderating role on the effectiveness of bias mitigation interventions (e.g., Scopelliti et al., 2015, 2018). This finding is corroborated by an expanding body of related research on individual differences in decision-making competence and reasoning skills (Bruine de Bruin, Parker, & Fischhoff, 2007; Stanovich & West, 1998a, 1998b; Stanovich, 1999). We propose that an organization’s ability to assess individual decision-makers’ susceptibility to cognitive biases is crucial in determining the most suitable approach to bias mitigation.

If bias susceptibility assessments are feasible, debiasing interventions could be customized according to individuals’ specific vulnerabilities, which could potentially enhance their effectiveness. In cases where susceptibility to cognitive biases is uniformly distributed across the target population, debiasing and choice architecture interventions may be equally suitable. However, in cases where bias susceptibility levels are diverse, debiasing interventions may offer a more tailored approach. Tailoring interventions to individual susceptibility profiles can be challenging when implementing choice architecture, which often involves standardized changes to the decision environment.

Our review also revealed that susceptibility to the bias blind spot, a cognitive bias wherein individuals tend to perceive themselves as less biased than others (Pronin, Lin, & Ross, 2002), moderates the effectiveness of debiasing training interventions. People who scored high on a measure of susceptibility to the bias blind spot were more resistant to the effects of a training intervention designed to raise awareness of the fundamental attribution error and teach strategies for its mitigation, resulting in weaker bias mitigation effects (Scopelliti et al., 2015). However, perceiving oneself as less biased than others does not necessarily translate to actual lower bias (West, Meserve, & Stanovich, 2012). Therefore, future research might examine alternative non-training-based debiasing techniques or choice architecture approaches to overcome resistance to training by decision-makers who are highly susceptible to the bias blind spot.

Acknowledging and evaluating individual differences in susceptibility to cognitive biases more generally, and the bias blind spot more specifically, holds the potential to fine-tune intervention targeting, focusing on decision-makers with the strongest need for bias mitigation, and can guide the selection of the most suitable approach. Future research could investigate prioritization strategies for effectively deploying bias mitigation interventions across target populations with diverse bias mitigation needs.

General Discussion

This paper makes several contributions to research in organizational behavior and managerial cognition as well as in JDM. Turning to management first, this review redirects organizational scholars’ attention beyond the current emphasis on assessing cognitive biases in organizations toward evaluating the suitability of bias mitigation solutions that can improve decisions and, ultimately, organizational outcomes. Our first contribution to the management literature is a clearer conceptualization of two distinct approaches to bias mitigation—debiasing and choice architecture—and an extensive integrative review of experimental research on the effectiveness of each approach. Finding a lack of comparative studies that assess the relative effectiveness of these approaches, particularly across different organizational contexts, our second contribution is a novel multi-level framework that lays the foundation for future management research to experimentally test the comparative effectiveness and suitability of these bias mitigation approaches. Taking the results of the integrative review as its starting point, this framework is articulated into three levels of factors: decision, organizational, and individual. We discuss each of these factors’ potential role in moderating the effectiveness of debiasing and choice architecture interventions in organizations. This framework can stimulate future management research into their complex interplay with bias mitigation.

The framework also has practical value, as it could support systematic bias mitigation processes within bias-conscious organizations. Given the pervasive impact of cognitive biases and the value of realistic assumptions about human cognition in behavioral strategies (Sibony, Lovallo, & Powell, 2017), future research in strategic management could consider how our framework could be practically used as part of a behavioral strategy for bias mitigation (Powell, Lovallo, & Fox, 2011).

A systematic bias mitigation process could be initiated by a bias mitigating agent (or team) with relevant JDM expertise who identifies the decision(s) to be targeted and the judgment errors or cognitive biases that may occur in the decision-making process. Our framework can inform the identification of the most appropriate bias mitigation approach according to the specific characteristics of the decision, organization, and individual decision-makers involved. The implementation of the intervention will then differ depending on whether debiasing, choice architecture, or dual interventions are used. Once the intervention is implemented, the process could end with the assessment of the effects of the intervention on the target decision outcome(s) to inform future bias mitigation decisions.

The implementation of debiasing interventions—training in particular—is expected to be relatively accessible and scalable for organizations. These interventions can be integrated into existing training programs, organizational routines, and handbooks. Training interventions that increase decision-makers’ awareness of biases and their potential impact on judgments and decisions, or that provide warnings, are straightforward to implement and require limited resources. Debiasing interventions involving feedback may require more planning and system integration to ensure their timely and effective delivery. Fortunately, advancements in digital technologies and automated feedback systems may facilitate their implementation. Organizations that have already established feedback mechanisms could incorporate debiasing feedback seamlessly into their existing processes. Debiasing interventions that combine multiple elements, such as training, warnings, and feedback, may require more resources and expertise for effective implementation. Creating interactive video games or other interactive training tools might require skills that are not readily available within all organizations and need to be outsourced. However, once developed, these multi-component interventions could also be easily scalable, potentially yielding meaningful and persistent bias mitigation effects even with one-off administrations (Morewedge et al., 2015; Sellier et al., 2019).

In contrast, the implementation of choice architecture interventions requires a dedicated choice architect who analyzes the available options and information, defines the optimal environmental changes, and redesigns the decision environment to favor less biased outcomes. Importantly, because choice architecture focuses on the decision task itself rather than the decision-maker, the implementation of these interventions is decision-specific. Consequently, these interventions must be adapted to each decision context where bias mitigation is desired, and they may need updating as the decision environment evolves or new information becomes available.

Turning to our contribution to the JDM literature, our paper advances ongoing debates on the applicability of choice architecture across different domains. These debates currently frame the diversity of decisions and decision-makers as a barrier to “blanket prescriptions” of choice architecture interventions across different contexts (Schmidt & Engelen, 2020; Sher, McKenzie, Müller-Trede, & Leong, 2022). We contribute to this debate by offering a nuanced understanding of the characteristics of the decision problem, the organization, and the individual decision-makers that allow the prescription of bias mitigation interventions, including both choice architecture and debiasing approaches, to be tailored.

A further contribution to the JDM literature is the identification of a new approach to bias mitigation that combines elements of debiasing and choice architecture: the dual approach. While in some cases dual interventions emerged as effective combinations of the two approaches (e.g., Bhattacharyya et al., 2019), we also found cases where combining choice architecture and debiasing techniques did not provide additional benefits (e.g., Barnes et al., 2021). We encourage JDM researchers to develop and test dual interventions to better understand when debiasing and choice architecture present synergies, and to fine-tune interventions that leverage the advantages of both approaches (e.g., the efficiency of choice architecture and the transparency of debiasing).

Finally, our review revealed a lack of studies on bias mitigation in group decision-making. Considering the importance of group decisions in organizational settings, where groups may, under certain conditions, be more susceptible to errors and biases than individuals (Kerr, MacCoun, & Kramer, 1996), we encourage future research in management and JDM to consider the effects of different bias mitigation interventions on group decision-making. In addition, half of the studies reviewed were conducted in laboratory settings, particularly those testing debiasing interventions (with a few exceptions, e.g., Sellier et al., 2019). We advocate for future research to examine the external validity of bias mitigation effects obtained in the laboratory, ideally by testing these interventions in field settings. Because of the prevalence of group decisions in the field, a move to the field would also offer researchers greater opportunities to advance research on bias mitigation in groups. Given the proliferation of digital and scaled organizational training programs, achieving this goal, at least in the realm of debiasing, appears attainable.

Limitations and Conclusions

The rich body of evidence in this review highlights the importance of enhancing our understanding of bias mitigation approaches and their effectiveness in different organizational contexts. The framework we propose sheds light on the factors influencing the suitability of debiasing and choice architecture interventions across different decisions, organizational contexts, and decision-maker characteristics. This framework provides management scholars, particularly those interested in managerial and organizational cognition and organizational behavior, with a roadmap to inform their future research agendas on bias mitigation.

Nevertheless, we must acknowledge several limitations in our integrative review. First, we focused on cognitive approaches to bias mitigation and did not consider motivational strategies, such as enhanced accountability, i.e., the need to justify one’s decisions to others (Tetlock, 1983). We did not include this type of intervention in our review because such strategies may mitigate some biases (e.g., overconfidence, Tetlock & Kim, 1987; sunk cost fallacy, Simonson & Nye, 1992) but exacerbate others (e.g., the dilution effect, Tetlock & Boettger, 1989). Future research could compare the effects of accountability with those of debiasing and choice architecture interventions on specific cognitive biases.

Similarly, we excluded studies on decision support systems and other technological interventions due to their complex nature and high level of customization, which reduce their applicability across different organizational contexts. The complexity of these interventions makes them less likely to be considered simple cognitive repairs (Heath et al., 1998). However, as artificial intelligence tools become more accessible, they may offer more flexible and adaptable technological solutions for bias mitigation. While reliance on artificial intelligence may introduce new biases (e.g., algorithmic bias, stereotyping, representation bias; Obermeyer, Powers, Vogeli, & Mullainathan, 2019), particularly in domains such as healthcare, legal, workplace, and consumer decisions, future research should examine how artificial intelligence can enhance organizations’ ability to assess these biases and scale interventions, for instance, by automating the administration of debiasing prompts or facilitating changes in the decision structure.

In conclusion, we believe that our framework holds substantial potential for advancing empirically grounded research on improving organizational decisions. By carefully considering the diversity of contexts where cognitive errors and biases arise and the specificities of the decision, the organization, and decision-makers, our framework can help tailor bias mitigation approaches and ultimately inform a systematic process of bias mitigation that can improve the quality and outcomes of individual and team decision-making processes in organizations.

Supplemental Material

Supplemental material, sj-pdf-1-jom-10.1177_01492063241287188 for Mitigating Cognitive Bias to Improve Organizational Decisions: An Integrative Review, Framework, and Research Agenda by Barbara Fasolo, Claire Heard and Irene Scopelliti in Journal of Management

Footnotes

Acknowledgements

We are most grateful to the Associate Editor and three anonymous reviewers for feedback and suggestions, to Valentina Ferretti, Celina Bade, Nienke Derksen, Wendi Ji, Yue Jin, and Mihir Parekh for invaluable assistance with the database creation, and to Daniel Heller, Niranjan Janardhanan, Hyun-Jung Lee, Francesca Manzi, Luc Schneider, and Emma Soane for feedback on earlier versions of this manuscript.

Supplemental material for this article is available with the manuscript on the JOM website.
