Abstract

We provide a comprehensive and critical review of research on applicant reactions to selection procedures published since 2000 (n = 145), when the last major review article on applicant reactions appeared in the Journal of Management. We start by addressing the main criticisms leveled against the field to determine whether applicant reactions matter to individuals and employers (“So what?”). We then consider “What’s new?” through a detailed review of applicant reaction research centered upon four areas of growth: expansion of the theoretical lens, incorporation of new technology in the selection arena, internationalization of applicant reactions research, and emerging boundary conditions. Our final section focuses on “Where to next?” and offers an updated and integrated conceptual model of applicant reactions, four key challenges, and eight specific future research questions. Our conclusion is that the field demonstrates stronger research designs, with studies incorporating greater control, broader constructs, and multiple time points. There is also solid evidence that applicant reactions have significant and meaningful effects on attitudes, intentions, and behaviors. At the same time, we identify some remaining gaps in the literature and a number of critical questions that remain to be explored, particularly in light of technological and societal changes.

The field of applicant reactions emerged in the 1980s, fueled by the desire of researchers and practitioners to examine selection procedures from the applicants’ viewpoint (e.g., Liden & Parsons, 1986; Taylor & Bergman, 1987). This stood in stark contrast to research over many decades that had concentrated on selection processes from recruiter and organizational perspectives (as noted by Anderson, Born, & Cunningham-Snell, 2001; Bauer et al., 2006; Steiner & Gilliland, 2001). Since that time, research examining theoretical, empirical, and practical questions in the context of applicant reactions has proliferated, and the field of applicant reactions is still highly active today. It boasts solid theories, rigorous methods, comprehensive measurement tools, and a pool of studies large enough for meta-analytic reviews (Anderson, Salgado, & Hülsheger, 2010; Hausknecht, Day, & Thomas, 2004; Truxillo, Bodner, Bertolino, Bauer, & Yonce, 2009). It also has the attention of employers who continue to have a strong interest in applicant reactions, particularly given that many human resources departments are now viewed as strategic organizational partners.

That said, the field of applicant reactions is at a critical juncture, in that there have been historic questions over whether applicant reactions research is actually making progress or whether it reflects “much ado about nothing” (e.g., Ryan & Huth, 2008; Sackett & Lievens, 2008). In this review, we examine such critiques and conduct an up-to-date, comprehensive, and authoritative assessment of developments in the field of applicant reactions that have emerged since Ryan and Ployhart’s (2000) review of the literature in the Journal of Management. Our goal is to review past work and new developments in relation to wider research theories, including organizational justice, attribution, and cognitive processing theories. The integration of theory and research across these areas enables us to advance a general conceptual model that can serve as a foundation for future theory and research on applicant reactions. As a whole, our systematic review summarizes 145 studies and several meta-analyses that have been published since 2000, examines a wider range of reactions than previous reviews, explicitly identifies how the field has advanced, and develops a comprehensive conceptual model describing established and hypothesized relations among variables.

Our review is organized into three main sections. First, we address the question of whether applicant reactions even matter (the “So what?”). Here we tackle the criticisms leveled against the field. Second, we explicitly highlight “What’s new?” by reviewing the new landscape of applicant reactions research. In doing so, we identify four core areas of growth: expansion of the theoretical lens, incorporation of new technology, selection within multinational contexts, and emerging boundary conditions. Our third and final section focuses on “Where to next?” Here we present an updated and integrated model of applicant reactions that incorporates a number of new variables, and we propose four major future-oriented trends and eight specific research questions warranting attention.

Addressing the “So What?” Question: Do Applicant Reactions Really Matter?

Applicant reactions reflect how job candidates perceive and respond to selection tools (e.g., personality tests, work samples, situational judgment tests) on the basis of their application experience. They include perceptions of fairness and justice, feelings of anxiety, and levels of motivation, among others. Research on applicant reactions gained strong momentum in the 1990s after the publication of Gilliland’s (1993) classic organizational justice model of applicant perceptions. Subsequently, researchers studied the topic using this justice lens, finding that procedural and distributive justice each influenced employer attractiveness, applicants’ intentions of accepting a job offer, and whether job candidates would recommend the employer to others (e.g., Bauer, Maertz, Dolen, & Campion, 1998). However, in the following decade, some scholars began questioning how much applicant reactions mattered. For example, Chan and Schmitt (2004) questioned whether they influenced actual behavior, and Ryan and Ployhart (2000) argued that the field needed an expanded view of applicant reactions. In this review, we summarize, disentangle, and update what we know today, some 15 years since the last comprehensive review of the literature.

Our review uncovered 145 primary studies and several meta-analyses published since 2000. Table S1 summarizes each of these studies by highlighting the reaction studied, the selection procedure used, the type of participants, the study design, and key findings. Because the table is very long, we include the full 42-page table as an online supplemental appendix. Overall, our analysis of the articles included in this table reveals that the literature has evolved to be more rigorous, relevant, and robust.

There is solid evidence that the quality of research designs has improved somewhat in terms of raw percentages and a great deal in terms of the number of studies, but there remain areas of concern. Specifically, our review of the 145 empirical studies published since the last review of the literature appeared in the Journal of Management reveals that although 88 were cross-sectional (many of which were experimental), the remaining 57 utilized time-lagged or longitudinal designs (see Table S1). In comparison, Ryan and Ployhart’s (2000) review included only 11 studies with time-lagged or longitudinal designs. Our review also found 81 studies that were conducted in the field. In 2000, Ryan and Ployhart reported only 23 field studies of applicant reactions. Finally, our review indicates that researchers have expanded their theoretical scope. Specifically, while fairness perceptions remain the norm, at least 55 new studies have included additional perceptions, such as motivation, anxiety, and test/self-efficacy. This is a sharp increase from the 14 studies that examine expanded reactions in Ryan and Ployhart. Similarly, new theoretical approaches have emerged which we summarize in the next section.
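As a rough worked check on these counts (our own arithmetic from the figures reported in this paragraph, not a computation presented in Table S1), the shares of the 145 post-2000 studies can be sketched as follows:

```latex
\begin{align*}
\text{cross-sectional} + \text{time-lagged or longitudinal} &= 88 + 57 = 145 \\
\text{time-lagged or longitudinal share} &= 57 / 145 \approx 39\% \\
\text{field-study share} &= 81 / 145 \approx 56\% \\
\text{expanded-reactions share} &= 55 / 145 \approx 38\% \quad \text{(a lower bound, given ``at least 55'')}
\end{align*}
```

Comparable percentages for Ryan and Ployhart’s (2000) review cannot be derived from the counts given here, because the total number of studies in that review is not stated in this section.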

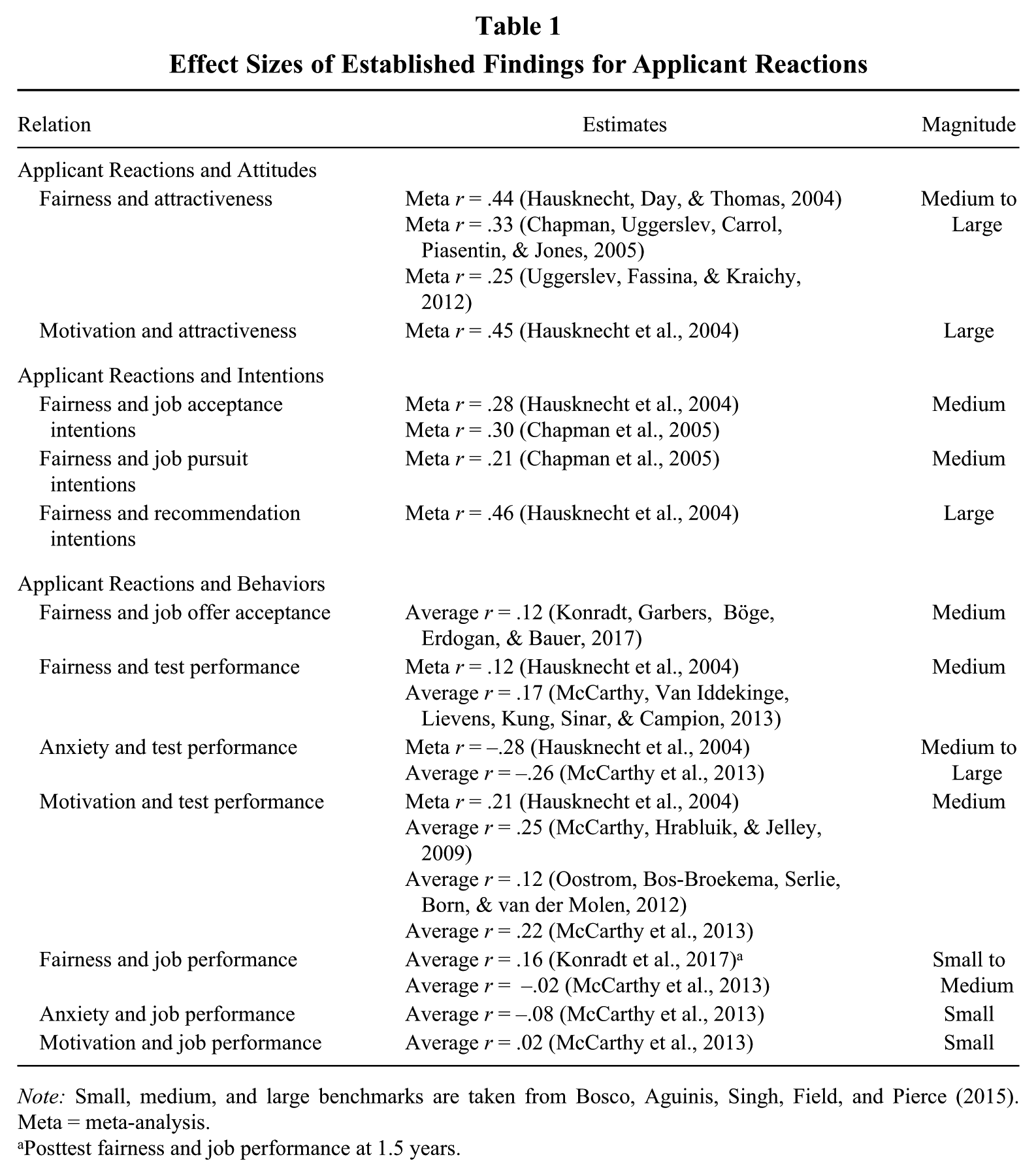

We also have solid evidence that applicant reactions have significant and meaningful effects on attitudes, intentions, and behaviors. This is illustrated by the key findings of the 145 studies presented in Table S1 in the supplemental material. We summarize evidence for this assertion in Table 1. The top and middle portions of this table provide meta-analytic evidence demonstrating that applicant reactions are significantly related to applicant attitudes (organizational attractiveness) and intentions (intentions to pursue the job, intentions to accept the job, and intentions to recommend the job to others). When benchmarked against the field of organizational behavior and human resources, as per Bosco, Aguinis, Singh, Field, and Pierce (2015), these effects are medium to large in magnitude. As such, applicant reactions have significant implications for the design and implementation of selection tests.

Table 1. Effect Sizes of Established Findings for Applicant Reactions

Note: Small, medium, and large benchmarks are taken from Bosco, Aguinis, Singh, Field, and Pierce (2015). Meta = meta-analysis.

Posttest fairness and job performance at 1.5 years.

Table 1 also presents findings with respect to the relation between applicant reactions and actual behaviors. As illustrated, evidence supporting these relations is accumulating. First, reactions influence the actual acceptance, not just intended acceptance, of job offers (Konradt, Garbers, Böge, Erdogan, & Bauer, 2017). When benchmarked against the field, the magnitude of this relation is moderate, which again supports the importance of applicant reactions. Furthermore, we now have meta-analytic evidence demonstrating that applicant reactions are significantly and meaningfully associated with performance on selection tests (see Hausknecht et al., 2004), and recent studies confirm these findings (see McCarthy, Hrabluik, & Jelley, 2009; McCarthy, Van Iddekinge, Lievens, Kung, Sinar, & Campion, 2013; Oostrom, Bos-Broekema, Serlie, Born, & van der Molen, 2012).

Finally, there is now evidence supporting significant relations between test reactions and job performance. As illustrated in Table 1, findings are small to medium in magnitude (Konradt et al., 2017; McCarthy et al., 2013). While these effects are noticeably smaller than those observed for attitudes and intentions, they are far from trivial, particularly the relation between fairness and job performance reported by Konradt et al. (2017). As such, they have notable implications for recruitment and selection. At the same time, the reduced magnitude of these relations is not surprising. For example, do we really expect the reactions of job applicants to selection procedures to have a strong relation with their job performance months later if and/or when they are hired? Should not issues more proximal to the work situation (e.g., coworkers, supervisors) be more likely to affect performance? Furthermore, there are other important outcomes in the selection situation apart from later job performance. As in the field of recruitment, the point of studying applicant reactions is to understand how to leave applicants favorably disposed towards the organization, more likely to accept a job if offered one, and more likely to use the firm’s products.

Addressing the “What’s New?” Question: An Updated Model of Applicant Reactions

Our analysis of the full set of published articles since 2000 (see Table S1 in the supplemental appendix) revealed four promising new research trends. First, there is a noticeable expansion of the theoretical lens, such that studies are moving beyond the justice model introduced by Gilliland (1993). Second, there is a continuing emphasis on the role of new technology in applicant reactions. Third, there has been a marked internationalization of applicant reaction research, with studies emerging from all parts of the globe. Fourth, researchers are examining new boundary conditions. In the next section, we review each of these trends.

Research Trend 1: Expansion of the Theoretical Lens

Recently there has been an expansion and integration of theoretical models of applicant reactions, as well as empirical testing of such models. Our review reveals four ways in which the theoretical lens has expanded. First, there is a noticeable increase in studies that explicitly test Gilliland’s (1993) model. This was made possible, in large part, by the development of the Selection Procedural Justice Scale (SPJS; Bauer, Truxillo, Sanchez, Craig, Ferrara, & Campion, 2001) and Social Process Questionnaire on Selection (Derous, Born, & De Witte, 2004), which enabled more explicit measurement and testing of Gilliland’s rules. Second, this new era of applicant reactions boasts a broader focus, with scholars incorporating a wide range of theoretical perspectives that extend beyond Gilliland’s framework (e.g., expectations theory, attribution theory; see Converse, Oswald, Imus, Hedricks, Roy, & Butera, 2008). This theoretical expansion has advanced our knowledge about the mechanisms underlying applicant reactions. Third, researchers have expanded the focus of applicant reactions research beyond perceptions of justice to include additional test-taking reactions, such as test-taking motivation, anxiety, and efficacy (Lievens, De Corte, & Brysse, 2003; Oostrom, Born, Serlie, & van der Molen, 2010), with a corresponding expansion of underlying theories, such as cognitive interference (McCarthy et al., 2013). Finally, there has been increased effort to bridge the gap between theory and practice by developing techniques to mitigate negative reactions, such as providing explanations and feedback to candidates (e.g., Truxillo, Bauer, Campion, & Paronto, 2002).

Empirical Testing of Existing Theory

The most influential theoretical model of applicant reactions was advanced by Gilliland in 1993. Gilliland’s model was rooted in organizational justice theory and has been the primary driving force behind hundreds of studies on applicant reactions (Ryan & Ployhart, 2000). However, at the time of Ryan and Ployhart’s (2000) review, few rigorous tests of Gilliland’s model had been published. This was largely due to the fact that the measurement of fairness was unidimensional, inconsistent, and lacking in methodological rigor. As noted above, this changed in 2001, when Bauer and colleagues developed the SPJS. This instrument has served as the foundation for a wide range of additional studies, enabling a thorough assessment of the antecedents and outcomes of these procedural justice rules according to Gilliland’s propositions (see Bauer, Truxillo, Paronto, Weekley, & Campion, 2004; Konradt, Warszta, & Ellwart, 2013; LaHuis, 2005). In the intervening 15 years, several studies have directly examined and/or manipulated Gilliland’s rules in order to test the theoretical propositions of the model (Bertolino & Steiner, 2007; Konradt et al., 2013; Schleicher, Venkataramani, Morgeson, & Campion, 2006).

As illustrated in Table S1, findings support the proposed relations between Gilliland’s (1993) rules and perceptions of fairness, with job relevance, provision of explanations, and feedback being the most consistently related to fairness perceptions. Also consistent with Gilliland’s predictions, several studies have found that individual differences serve as important determinants of applicant fairness perceptions (e.g., Truxillo, Bauer, Campion, & Paronto, 2006; Viswesvaran & Ones, 2004; Wiechmann & Ryan, 2003). With respect to consequences, studies indicate that reactions have notable effects on both “soft” (e.g., organizational attractiveness) and “hard” (e.g., test performance, turnover) outcomes (Truxillo, Steiner, & Gilliland, 2004). A number of meta-analyses also support the underlying propositions of Gilliland’s model (see Anderson et al., 2010; Chapman, Uggerslev, Carroll, Piasentin, & Jones, 2005; Hausknecht et al., 2004; Truxillo et al., 2009; Uggerslev, Fassina, & Kraichy, 2012).

At the same time that Gilliland (1993) introduced his fairness model, Schuler (1993) introduced an applicant reactions model that focused on the social impact of selection systems, which he termed social validity, or the extent to which candidates are treated with respect and dignity. The main tenets of this theory are aligned with those of Gilliland’s model, but the focus is more strongly directed at applicant well-being. Although this model was published in 1993, it did not gain significant momentum until the turn of the century, when several European researchers used it as the foundation for their studies (e.g., Anderson & Goltsi, 2006; Derous et al., 2004; Klehe, König, Richter, Kleinmann, & Melchers, 2008; Klingner & Schuler, 2004). This focus on applicant health has been instrumental in directing our attention to applicant well-being as a core outcome.

Expanded Theoretical Focus

Studies have continued to push beyond Gilliland’s (1993) justice approach, basing their propositions on new theoretical frameworks. This has advanced the field, as it has expanded our understanding of the determinants, processes, and outcomes underlying applicant reactions. Three theoretical perspectives have emerged as the core foundations for research in the area: expectations theory, fairness heuristic theory, and attribution theory. Other, less commonly applied theoretical frameworks include signaling theory (e.g., Kashi & Zheng, 2013), decision-making theory (see Anderson et al., 2001), institutional theory (e.g., König, Klehe, Berchtold, & Kleinmann, 2010), image theory (e.g., Ryan, Sacco, McFarland, & Kriska, 2000), psychological contract theory (e.g., Anderson, 2011a), social identity theory (e.g., Herriot, 2004), and theories of trust (e.g., Brockner, Chen, Mannix, Leung, & Skarlicki, 2000). These theoretical perspectives enable the advancement of past work by helping to explain why applicants react the way they do.

Expectations theory

Expectancy theory (Sanchez, Truxillo, & Bauer, 2000) has also been applied to applicant reactions, and since that time, there has been work on a related topic of what applicants expect from the selection process. Applicant expectations reflect beliefs that they hold about the future (Derous et al., 2004). According to Bell, Ryan, and Wiechmann (2004), such expectations can play an important role in determining the extent to which applicants experience high levels of test-taking motivation, anxiety, and fairness. In fact, the conceptual model advanced by Bell et al. positions expectancies as core mechanisms that underlie the relation between test-taking antecedents (i.e., past experiences, belief in tests) and test-taking reactions. The importance of applicant expectations is further highlighted by the development of the Applicant Expectations Survey (Schreurs, Derous, Proost, Notelaers, & De Witte, 2008).

A number of studies have tested the role of expectancies in job applicant reactions (see Ryan et al., 2000). Results support predictions, with justice expectations found to be important determinants of test-taking motivation, test efficacy, and perceptions of justice (Bell, Wiechmann, & Ryan, 2006; Chapman, Uggerslev, & Webster, 2003; Derous et al., 2004; Schreurs, Derous, Proost, & De Witte, 2010). More recently, Geenen, Proost, Schreurs, et al. (2012) conducted a study to examine the source of justice expectations. Consistent with the aforementioned models (Bell et al., 2004; Derous et al., 2004), justice beliefs were significantly related to procedural and distributive justice expectations. By incorporating expectations, these studies advance our understanding of the processes leading up to the formation of applicant reactions.

Fairness heuristic theory

An approach related to expectations is fairness heuristic theory, which holds that people construct a “fairness heuristic” that they use to guide their expectations and reactions and to reduce uncertainty (Lind, 2001). Early events (e.g., the selection process) and major events form the heuristic, which then becomes the lens through which individuals interpret organizational actions. This theory is relevant to applicant reactions, as selection is often the earliest contact with the organization, and it has been used as the foundation for research examining how selection systems are framed by applicants (Gamliel & Peer, 2009), how applicants react to perceived discrimination and job acceptance decisions (Harold, Holtz, Griepentrog, Brewer, & Marsh, 2015), and how applicants react to social networking as a selection tool (Madera, 2012). Given that fairness heuristics are related to group identity (Lind, 2001), fairness heuristic theory has also been used as the theoretical foundation for promotional candidate reactions (Ford, Truxillo, & Bauer, 2009).

Attribution theory

Applied to selection contexts, attribution theory (Weiner, 1985) focuses on the causal explanations that applicants attribute to behaviors and outcomes. For example, applicants who attribute success to internal causes, and failure to external causes, are expected to put more effort into the selection test and achieve a higher score. Although a few early studies applied the attribution framework to applicant perceptions (see Arvey, Strickland, Drauden, & Martin, 1990; Ployhart & Ryan, 1997), it was not until the turn of the century that an emphasis on attributions emerged (e.g., Ployhart, Ehrhart, & Hayes, 2005; Ployhart & Harold, 2004; Ployhart, McFarland, & Ryan, 2002; Schinkel, van Dierendonck, van Vianen, & Ryan, 2011; Silvester & Anderson, 2003), largely driven by the emergence of the applicant attribution-reaction theory (AART; Ployhart & Harold, 2004). AART proposes that applicant reactions are driven by the attributions applicants make about how they are treated and the outcomes they receive. These attributions derive from the match between their expectations and observations.

Studies based in AART reveal findings that are consistent with the theory’s propositions (see Konradt et al., 2017; Schinkel et al., 2011). Studies that directly assessed attributions have found significant relations between job applicant attributions and organizational perceptions (e.g., Ployhart et al., 2005). Other studies have found evidence supporting the role of expectations in fairness perceptions and organizational outcomes (e.g., Konradt et al., 2017). AART is also the foundation for Anderson’s (2011a, 2011b) theory of perceived job discrimination, which occurs when minority group members attribute perceived injustice to their minority status. Building on this model, Patterson and Zibarras (2011) introduced the concept of applicant perceived job discrimination.

Taken together, expectations theory, fairness heuristic theory, and attribution theory predict that expectancies are derived from individual differences, such as beliefs and experiences, as well as situational factors, such as procedural and organizational characteristics (Bell et al., 2004; Lind, 2001). These expectancies, in turn, serve as direct determinants of applicant attributions, which then lead directly to applicant reactions.

Expanded Reactions Focus

A third area of growth is an expansion in the focus of applicant reaction research, with new studies incorporating reactions that extend beyond fairness (see Truxillo, Bauer, & McCarthy, 2015). While Arvey and colleagues (1990) addressed these perceptions with their Test-Attitude Survey, it was not until the 21st century that empirical studies embraced this broader lens. For example, prior to the year 2000, there were only 14 studies on reactions beyond fairness (Ryan & Ployhart, 2000), but since this time the number has climbed to at least 55. This was facilitated by the advancement of conceptual models that incorporated test-taking motivation, anxiety, self-efficacy, and test fairness as core test perceptions (Hausknecht et al., 2004; Ryan, 2001). The expanded focus was also driven by the development of theory-driven instruments assessing motivation (e.g., Valence Instrumentality Expectancy Motivation Scale; Sanchez et al., 2000) and anxiety (e.g., Measure of Anxiety in Selection Interviews: McCarthy & Goffin, 2004; Self- Versus Other-Referenced Anxiety Questionnaire: Proost, Derous, Schreurs, Hagtvet, & De Witte, 2008).

Consistent with Ryan and Ployhart’s (2000) recommendations, it is now common for studies to examine a variety of reactions simultaneously (e.g., Geenen, Proost, Schreurs, van Dam, & von Grumbkow, 2013; Lievens et al., 2003). This research indicates that applicants with high levels of test motivation (Derous & Born, 2005; McCarthy et al., 2013) and self-efficacy (Horvath, Ryan, & Stierwalt, 2000; Maertz, Bauer, Mosley, Posthuma, & Campion, 2005) achieve higher scores on selection tests, while applicants with high levels of anxiety achieve lower scores (Karim, Kaminsky, & Behrend, 2014; McCarthy et al., 2009). The primary mechanism underlying these effects is cognitive interference, such that high levels of anxiety, and low levels of motivation, interfere with the ability to process performance-relevant information, resulting in lower test performance (McCarthy et al., 2013). Stereotype threat is also relevant as it can lead to higher levels of anxiety and lower levels of motivation (Nguyen, O’Neal, & Ryan, 2003; Ployhart, Ziegert, & McFarland, 2003). When minority groups are highly motivated or anxious about selection tests, they are likely to experience higher levels of cognitive interference, lowering their test performance (Ployhart & Ehrhart, 2002).

A number of additional reactions are acknowledged as important considerations, such as belief in tests (e.g., Lievens et al., 2003), positive and negative psychological effects (Anderson & Goltsi, 2006), perceived job discrimination (e.g., Anderson, 2011a, 2011b), stress and health outcomes (e.g., Ford et al., 2009), and the perceived usefulness and ease of selection procedures (e.g., Oostrom et al., 2010). A useful taxonomy is to conceptualize these constructs as either dispositionally based (e.g., test anxiety, motivation, efficacy, test beliefs) or situationally based (e.g., fairness, job discrimination, test usefulness; McCarthy et al., 2013).

Bridging Theory and Practice

Our review also reveals that the field has made strides in bridging the gap between research and practice. This is most evident in the proliferation of studies that explicitly test Gilliland’s (1993) rules (see Table S1). Given that these rules map onto specific human resource functions, findings from these studies are easily translated into specific prescriptions for organizations, and their practical value should not be understated. In fact, there have been a number of articles (e.g., Bauer, McCarthy, Anderson, Truxillo, & Salgado, 2012; Ryan & Huth, 2008) and book chapters (e.g., McCarthy & Cheng, 2014; Truxillo et al., 2015) that offer explicit prescriptions based on theory and research.

There have also been several empirical studies examining the types of explanations that organizations and recruiters can provide to applicants in order to improve their reactions to the selection process. The bulk of this work has examined explanations that applicants are given for not being hired. Such explanations can be classified into justifications (e.g., “the procedure was highly valid”) or excuses (e.g., “the applicant pool was strong”; Truxillo et al., 2009). Meta-analytic findings indicate that both justifications and excuses have significant positive effects on applicant reactions regardless of whether applicants receive positive or negative selection outcomes (Truxillo et al., 2009).

Other studies have focused on improving applicant reactions by providing pretest explanations as part of the selection process. These studies are also promising and suggest that pretest information (Holtz, Ployhart, & Dominguez, 2005), pretest explanations (Horvath et al., 2000), and pretest item vetting (Jelley & McCarthy, 2014) have positive relations with perceptions of test fairness. Furthermore, Lievens and colleagues (2003) found that the provision of pretest information was positively related to belief in tests and test fairness and negatively related to comparative anxiety. Relatedly, Derous and Born (2005) found that the provision of pretest information led to higher test-taking motivation.

There are other ways in which researchers are bridging the research-practice gap. For example, König et al. (2010) examined the choices that recruiters make regarding selection procedures, finding that applicant reactions played a significant role in recruiter choices. There is also an increased emphasis on practitioner-focused articles that provide specific recommendations for practice, such as a Society for Industrial and Organizational Psychology–Society for Human Resource Management (SIOP and SHRM, respectively) white paper on applicant reactions (Bauer et al., 2012). Ultimately, the increased focus on practical relevance, combined with the increased methodological rigor of research, indicates that the field is embracing pragmatic science and moving closer to resolving tensions between research and practice.

Nevertheless, the field has a long way to go towards fully bridging the science-practice gap, and it is our hope that by 2030 we will have made more significant strides in this regard. There are a number of ways that this may be accomplished. To begin, we can enhance the communication of study findings to practitioners through platforms such as white papers and social media channels. Going even further, we can make academic findings accessible to practitioners through Internet-based platforms. To accomplish this objective, new platforms could be developed, or existing platforms, such as ResearchGate, can be modified to facilitate joint participation of researchers and practitioners. This would enable practitioners to pose strategic questions and be provided with evidence-based responses in a timely manner. It would also help to establish collaborative working relationships with organizations such as The Talent Board—an organization that focuses on the quality of job candidate experiences and provides awards to organizations that treat candidates well. Our efforts to increase practitioner awareness of our annual conferences, such as SIOP and European Association of Work and Organizational Psychology, and organizations like SHRM, would also be improved. At the same time, an evidence-based Internet platform would enable scientists to understand the nature of organizational problems from the practitioners’ point of view. This would facilitate the design of research studies that empirically examine practical techniques for leveraging applicant reactions, such as the work by Truxillo and colleagues (2002). It would also allow us to transform our research into answers that are more meaningful to practitioners. 
Resources such as Google’s re:Work guides on improving the candidate experience complete the research-practice circle: an established organization is sharing resources, based on both its own practice and academic research in this area, to help improve applicant reactions more broadly (e.g., Google, 2016). Thus, a variety of opportunities exist to bridge theory and practice.

Research Trend 2: Incorporation of New Technology

There have also been revolutionary changes in personnel recruitment and selection technologies since the development of the original applicant reactions models over 20 years ago (e.g., Gilliland, 1993; Schuler, 1993). Indeed, since the turn of the millennium, the Internet has become a principal medium through which organizations recruit and select their prospective employees. As a result, scholarly interest in how these technologies affect fairness perceptions and reactions has led to a flurry of developments in the applicant perspectives literature. Two main research trends are discernible: cost-benefit analyses, and applicant preferences and equivalence.

Technology-Based Cost-Benefit Analysis

Technologies such as Internet-based job searches and recruitment Web sites allow an applicant to search and apply for a higher number of jobs in a far shorter period of time than previously (Bartram, 2001). For organizations, the benefits of adopting such technologies are also significant. First, Internet-based technologies provide continuous access to job applicants regardless of their physical location, thus increasing the organization’s pool of candidates. Second, organizations can pass information to applicants about the job in a more consistent manner, and applicants thus have much more information at their disposal before they decide to apply for a job than in the past (Lievens & Harris, 2003). In relation to selection, the adoption of Web-based selection platforms (e.g., Arthur, Glaze, Villado, & Taylor, 2009; Dineen, Noe, & Wang, 2004) has also been shown to be more effective in assuring objectivity in the handling of job applications (Konradt et al., 2013) and in saving considerable costs, for both employer and applicant, when compared with more traditional selection methods (Viswesvaran, 2003). The overwhelming benefits of adopting these new Internet technologies in selection have led to their speedy, widespread adoption across different sectors and industries worldwide.

Applicants’ Preferences for New Versus Traditional Selection Media

A second research trend is the attempt to establish applicants’ preferences for and reactions to the new Internet-based selection procedures and to compare their equivalence to established selection media. Research has revealed generally positive reactions to Internet-based recruitment, both in the context of student internships and in industry-specific contexts (see Lievens & Harris, 2003). Empirical evidence has confirmed the important role of Web site aesthetics as a way to give applicants informal means of evaluating a company’s characteristics and culture before deciding to apply (Dineen, Ash, & Noe, 2002; Van Rooy, Alonso, & Fairchild, 2003). Positive impressions of Web site realistic job previews (Highhouse, Stanton, & Reeve, 2004) and the provision of online feedback to candidates (Dineen et al., 2002) are positively related to applicants’ intention to apply for a job in that organization. Similarly, perceived ease of use of Internet-based recruitment technology has been found to influence job applicants’ organizational attractiveness perceptions (Williamson, Lepak, & King, 2003), as well as number of applications per job opening (Selden & Orenstein, 2011). In selection contexts, most studies investigating applicant perceptions of Internet-based testing report positive reactions (see Baron & Austin, 2000; Reynolds & Lin, 2003; Reynolds, Sinar, & McClough, 2000). In contrast, studies examining perceptions of job applicants regarding videoconferencing technologies in selection interviews show less favorable reactions than those to face-to-face interviews (see Chapman et al., 2003; Silvester, Anderson, Haddleton, Cunningham-Snell, & Gibb, 2000; Straus, Miles, & Levesque, 2001). Thus, it appears that while Internet-based tests hold advantages for both the organization and the applicant in terms of cost savings and remote administration, selection procedures requiring face-to-face interaction are more difficult to replace with technology. This is consistent with media richness theories, in that the use of natural language and the transmission of verbal and nonverbal cues are essential to selection interview procedures. Thus, when a technology medium degrades the quality or accuracy of an applicant’s intended message, it tends to be viewed negatively (Chapman & Webster, 2001).

Likewise, applicants form impressions of an organization on the basis of information, or signals, conveyed to them during interviews (e.g., the recruiter’s behavior), and technology-mediated interviews may generate signals about the type of organization that would use them (Rynes, Bretz, & Gerhart, 1991). As videoconferencing has become increasingly outmoded, newer technologies such as Skype, Google Hangouts, and FaceTime have emerged as media for interviewing applicants. Variations in media richness perceptions of these technologies can generate different signals. For example, organizations that use such technologies can signal being sophisticated, less formal, and different in the way they interact with their applicants (see Anderson, 2003), which in turn can influence applicant perceptions about how fair the organization is and how it would feel to work there. Moreover, today’s applicants have technologies available that allow them to take selection tests or be interviewed from their personal devices (e.g., PCs, mobile phones) rather than using formal videoconferencing technologies from conference rooms in a set location. Thus, future research would benefit from integrating media richness and signaling theories in examining applicant reactions to different types of new selection media. Such research should also compare applicant reactions to different media in terms of their degree of formality, user-friendliness, and applicant control.

This shift towards studying new technologies has expanded original applicant reactions models (e.g., Gilliland, 1993) to a new, technology-based reality now widespread in employee selection. Several studies have focused on identifying new determinants of justice perceptions associated with technology, including Web site design (De Goede, Van Vianen, & Klehe, 2011), Web site content and user-friendliness (Selden & Orenstein, 2011), system speed (Sinar, Reynolds, & Paquet, 2003), and degree of customization to the applicant (Dineen, Ling, Ash, & DelVecchio, 2007). Other studies investigated the characteristics of the applicant that determined or at least moderated perceptions of Internet-based procedures. These include the applicant’s age, his or her Internet knowledge and exposure (Sinar et al., 2003), and person-organization fit (Dineen et al., 2007).

New Trends in Technology-Based Recruitment and Selection

Technological advances—such as the use of social media, computing power that allows for the compilation and analysis of big data, and gamification of screening tools—should be incorporated into applicant reactions research. Organizations can now access information about job applicants through “digital footprints” that are obtained through social media and networking Web sites, including both nonprofessional-oriented profiles (e.g., Facebook and Twitter) and professional-oriented profiles (e.g., LinkedIn; Nikolaou, 2014). Companies can also review applicants’ personal Web sites and blogs and perform Internet searches on them (e.g., via Google; Van Iddekinge, Lanivich, Roth, & Junco, 2016) and use automated computer-based (unstandardized) text analysis of all information available about applicants to generate assessments of applicants’ personality (Kluemper, Rosen, & Mossholder, 2012).

While the use of social media can actually amplify the importance of applicant reactions, research evidence suggests that there is little support for criterion-related validity of the inferences based on social media assessments and that there may be subgroup differences in such assessments that could lead to ethnicity-based adverse impact (Van Iddekinge et al., 2016). Additional concerns in relation to privacy of information, reliability of the information available, and impression management have also been highlighted given the increasing usage of these technologies for screening and selecting prospective job applicants (see Kluemper & Rosen, 2009; Stamper, 2010). A recent report by SHRM (2013) showed that 20% of the participating organizations used social media for screening applicants, a practice that raises important legal and ethical challenges, including discrimination against protected groups, individual biases influencing selection decisions, and the questionable reliability of such information (Broughton, Foley, Ledermaier, & Cox, 2013; Brown & Vaughn, 2011; Clark & Roberts, 2010). Some jurisdictions have developed laws that require organizations to obtain written consent from applicants before conducting a social media background check (e.g., some Canadian provinces; Pickell, 2011), while others (e.g., Germany; 18 U.S. states) have banned employers from using background checks through social media (Leggatt, 2010; Wright, 2014). However, many countries still have no such laws.

Organizations are also increasingly using content- and structure-based analytics to hire and manage employees (Tambe & Hitt, 2014). Content-based analytics involve the examination of data from social media sites, while structure-based analytics focus on the relationships between social media users (Gandomi & Haider, 2015). Today there is an immense amount of information that can be gathered from the Web and be organized and visualized through various text and Web mining techniques, such as Google Analytics, which provide a trail of the applicant’s online activities. Yet there is very little guidance about how content- and structure-based analytics may illegally discriminate or the extent to which they create a legal liability. Also, employees increasingly are using social computing (e.g., wikis, blogs, social media) to engage with each other, clients, and partners. Organizations can also use this information to screen potential candidates for internal promotions. Some organizations have even introduced their own corporate policies on social computing (e.g., IBM social computing guidelines; IBM, 2015).

In addition, game thinking via gamification is a rapidly developing trend to recruit and select job applicants. Organizations can use game principles and elements in nongame contexts to assess applicants on several aspects, such as attention, emotional intelligence, cognitive speed, personality, and their matches to specific jobs and organizations (Armstrong, Landers, & Collmus, 2015; Collmus, Armstrong, & Landers, 2016). This new technology can be advertised and used in social media to reach more applicants and provide them with information about the job vacancies and encourage them to play games, tests, and puzzles and share their information online, including results, recommendations, endorsements, and qualifications. This wealth of information can be used via big data techniques to analyze performance. Besides analyzing “likes,” followers, and retweets in social media platforms, organizations can harvest data for assessing and comparing applicants. Indeed, content- and structure-based analytics can be powerful tools for personnel selection in the future (Collmus et al., 2016). Even some third-party organizations (e.g., Pymetrics) are using assessment games on their dedicated social platform to match job applicants with careers and companies (Zeldovich, 2015). However, we urge considerable caution in these areas—all as yet remain unproven, and there is a notable lack of empirical studies that support the validity, reliability, fairness, and legality of such approaches.

Another generation of technology in selection is mobile testing (Ryan & Ployhart, 2014). Mobile versions of selection tests have been shown to be equivalent to nonmobile versions across different types of tests, including cognitive ability tests, situational judgment tests, multimedia work simulations (Morelli, Mahan, & Illingworth, 2014), and personality and general mental ability tests (Arthur, Doverspike, Muñoz, Taylor, & Carr, 2014). Although currently only a small number of applicants tend to complete Internet-based assessments on mobile devices (e.g., Arthur et al., 2014), this trend is likely to increase in the future as organizations try to become more flexible in their delivery of selection procedures. The use of such mobile devices in selection may be particularly popular among applicants of particular demographic segments, such as younger applicants, women, Hispanics, and African Americans (Arthur et al., 2014), although more research is needed to see whether it increases outcomes such as job acceptance.

Ultimately, applicant reactions research has garnered attention from practitioners and employers because of its promise to affect actual applicant behaviors that are of acute interest to employers. Certainly, most practitioners who deal with applicants regularly have some anecdotal evidence that negative applicant perceptions affect applicant behaviors, such as complaints and litigation-type behaviors. Unfortunately, many of these applicant behaviors, while critical to the organization, have a low base rate that makes them difficult to study with conventional social science methodologies. We see the current growth of big data analytics as a promising way to establish the relationship between such reactions and actual applicant behaviors, particularly those that are difficult to assess through self-reports or with smaller data sets, such as purchasing, reapplying for the job, or, even more compellingly, litigation-type behaviors.

Current Technology-Based Challenges and Adverse Impact

Perhaps one of the most challenging issues with respect to new technology is the potential for malfeasance (e.g., cheating and response distortion) under high-stakes selection conditions. One of the advantages of Internet-based testing is that it may be used as a “test anywhere–anytime” method (Arthur, Glaze, Villado, & Taylor, 2010). However, in order to capitalize on this advantage, Internet-based tests must be administered in an unproctored manner, that is, without human observation of test takers. Although this mode of testing has logistical advantages over onsite testing, researchers and practitioners continue to be concerned about the accuracy and validity of the resulting test scores (e.g., Beaty, Nye, Borneman, Kantrowitz, Drasgow, & Grauer, 2011). A recent study by Karim et al. (2014) showed that using remote proctoring technology (e.g., real-time Webcam and screen sharing, archival biometric verifications) decreased cheating and had no direct effect on applicants’ test performance. However, they did find evidence that proctoring technology may be associated with an increase in applicant withdrawal from the application process. Thus, the decision to rely on such technologies may come at the cost of a smaller pool of applicants. In addition, organizations need to ensure that additional data collected from proctoring systems are maintained and stored safely or disposed of properly (Karim et al., 2014).

Another particularly challenging issue has been information privacy concerns associated with Internet-based procedures. Harris, Van Hoye, and Lievens (2003) found that concerns about information privacy were related to reluctance to use technology-based selection systems. Similarly, in the context of online screening procedures, Bauer et al. (2006) found that applicants with higher privacy concerns had lower justice perceptions, which in turn affected their intentions and attraction towards the organization. Particularly in the age of the Internet and social media, applicants may feel that organizations are invading their information privacy when using information from social networks that was perhaps originally intended only for family and friends (Black, Stone, & Johnson, 2015). Indeed, a study by Stoughton, Thompson, and Meade (2015) showed that screening through social media led applicants to feel that their privacy had been invaded, which in turn increased their litigation intentions and lowered their attraction towards the organization. Similarly, a study by Madera (2012) in the hospitality industry suggested that using social networking Web sites for selection purposes has a negative impact on applicants’ fairness perceptions of the selection process. From the applicant’s perspective, Roulin (2014) also noted that applicants currently may be less likely to have faux pas postings, especially since they have been made aware that a high number of organizations are using the information posted on social media networks as part of their assessment and selection. Thus, concerns over information privacy in selection procedures remain of extreme importance for both the applicant and the organization. In particular, organizations should look for ways to reinforce applicants’ perception that the technology-based selection process is secure (Roth, Bobko, Van Iddekinge, & Thatcher, 2016).

Research Trend 3: Sustained and Increased Focus on Internationalization

Many multinational corporations (MNCs) have to deal with deploying selection systems cross-nationally (Ryan & Ployhart, 2014; Ryan & Tippins, 2009). In response, there has also been an increasing focus on applicant reactions across countries, with dozens of studies examining similarities and differences in applicant reactions to popular methods comprising selection procedures. Although the North American context still prevails, there has been considerable growth in research in other countries, particularly within Europe (e.g., Anderson & Witvliet, 2008; Bertolino & Steiner, 2007; Hülsheger & Anderson, 2009; Konradt et al., 2017; Nikolaou & Judge, 2007), the Middle East (e.g., Anderson, Ahmed, & Costa, 2012), and Asia (e.g., Gamliel & Peer, 2009; Hoang, Truxillo, Erdogan, & Bauer, 2012; Liu, Potočnik, & Anderson, 2016). In fact, since the turn of the millennium, studies have been conducted in more than 30 countries worldwide (Walsh, Tuller, Barnes-Farrell, & Matthews, 2010). The findings from these studies provide invaluable evidence for MNCs on how their selection procedures will likely be perceived by applicants coming from different countries and cultures (as noted by Truxillo, Bauer, McCarthy, Anderson, & Ahmed, in press).

While earlier research had understandably anticipated that there would be cross-country differences in applicant reactions (e.g., Marcus, 2003; Moscoso & Salgado, 2004; Phillips & Gully, 2002), recent findings refute this assumption. To our knowledge, Moscoso and Salgado (2004) were the first authors to argue that reactions would vary as a function of country-specific variables, such as employment legislation, human resource management practices, culture, and socioeconomic status; they posited that “cross-cultural differences may moderate . . . procedural favorability” (188), a stance that has since been termed “situational specificity” by Anderson and colleagues (2010). Counter to this, a swath of recent primary studies, and one meta-analysis, reported findings of notable similarities in applicant reactions, regardless of such contextual moderators—so-called reaction generalizability across countries.

Anderson et al. (2010) reported findings from a meta-analytic summary incorporating 38 samples from 17 countries and covering 10 popular selection methods. They found considerable similarity in reaction favorability across all countries and evidence for reaction generalizability internationally. Candidates rated their preferences for methods in essentially three clusters: most preferred (interviews, work sample tests), favorably evaluated (résumés, cognitive tests, references, biodata, personality inventories), and least preferred (honesty tests, personal contacts, graphology). Importantly, the authors also found significant positive correlations between perceived justice dimensions and operational validity as defined by Schmidt and Hunter (1998; correlations ranged between r = .34 and r = .70). Applicants thus preferred methods that are more valid predictors of future job performance, regardless of their country of origin. Given these findings, the authors reasserted that the use of valid predictor methods in selection is likely to lead to positive applicant reactions.

Several other studies across widely differing countries have also supported reaction generalizability (e.g., Anderson et al., 2012, in Saudi Arabia; Nikolaou & Judge, 2007, in Greece; Bertolino & Steiner, 2007, in Italy). Ryan, Boyce, Ghumman, Jundt, and Schmidt (2009) examined applicant reactions to eight popular selection methods across 21 countries. They also found that perceptions were similar across individuals holding different cultural values. It is the level of overall similarity despite fundamental cultural and other differences that is striking in this body of recent findings. Why might this be the case? One explanation is that there exists a “core” of reactions that is common across countries supplemented by more “surface-level” reactions that differ markedly (Truxillo et al., in press). Another is that there may be similar reactions among applicants to some more general dimensions but that on other, more culturally specific dimensions, applicants exhibit quite different responses (Hoang et al., 2012). Finally, a more contentious explanation is that, because almost all of these studies have used Steiner and Gilliland’s (1996) scales of process favorability and procedural justice, there may be a methodological confound occurring in subject responses. That is, the scale and research methodology itself may be provoking certain conformity responses or be insufficiently sensitive to tease out slight variations by country. We are aware of no research to date that has explored this final possibility. It is also possible that cross-cultural differences have failed to emerge given the substantial sample sizes that are required to detect small effects (Field, Munc, Bosco, Uggerslev, & Steel, 2015).
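To give a sense of the scale involved in the sample size explanation, the following is a minimal sketch that is not drawn from any of the studies reviewed here. It uses the standard Fisher z approximation to estimate how many participants are needed to detect a correlation of a given size with a two-sided test. The function name, the default alpha and power values, and the effect sizes looped over are illustrative assumptions only.

```python
from math import atanh, ceil
from statistics import NormalDist


def n_for_correlation(r, alpha=0.05, power=0.80):
    """Approximate sample size needed to detect a population correlation r.

    Uses the Fisher z approximation for a two-sided test.
    Hypothetical helper for illustration; alpha and power are assumed defaults.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    return ceil(((z_alpha + z_beta) / atanh(r)) ** 2 + 3)


# Illustrative effect sizes (assumed, not taken from the reviewed studies)
for r in (0.10, 0.30, 0.50):
    print(r, n_for_correlation(r))
```

Under these assumptions, the required sample grows steeply as the expected effect shrinks, which is one reason why small between-country differences may go undetected in single-country samples of modest size.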

Research Trend 4: New Boundary Conditions

There is also an increased emphasis on the boundary conditions that may affect when applicant reactions matter. Traditionally, this has been a woefully understudied issue within the applicant reactions literature that has tended to conceptualize applicants as monolithic in their interests rather than as a diverse set of individuals operating in diverse contexts. In this section, we describe moderators of applicant reactions that are already receiving some attention, as well as factors we see as ripe for study.

Selection Context

Hiring expectations and selection ratio

The hiring expectations of applicants and their investment in the selection process have been cited as likely to affect applicant reactions, although until recently this has received little attention. Geenen, Proost, Schreurs, et al. (2012) found that the test-taking experience can enhance the relationship between belief in tests and justice expectations. Similarly, Reeder, Powers, Ryan, and Gibby (2012) found that job familiarity and prior success affected applicants’ face validity perceptions of a test. Thus, in determining when applicant reactions matter, the expectations and experience of job applicants appear to be important considerations and ones that deserve further study.

Job type

Ryan and Ployhart (2000) cited job type as a related variable that may moderate the effects of applicant reactions. Truxillo et al. (2002) cited job type as a possible interpretation for why organizational attractiveness was so high at all time points in their study. Job type could be expected to moderate applicant reactions in a few ways. For example, applicants would likely expect different types of selection procedures depending on the job that they are applying for. In addition, they might expect different types of interpersonal treatment (e.g., personal attention vs. an online screening) depending on the type of job they are applying for (e.g., executive position vs. entry level). However, to this point, job type has not been systematically examined for its effects on applicant reactions.

Job desirability

The desirability of getting a particular job is likely to determine when applicant reactions matter. Job desirability might attenuate the effects of selection fairness on attraction-related outcomes, but it might also amplify negative reactions if a job is denied. For example, when an applicant is denied a particularly desirable job, it could affect the attributions they make about the selection process. Conversely, when a job is highly desired by an applicant, the selection procedure is unlikely to affect the applicant’s likelihood of taking the job (see discussion of Truxillo et al., 2002, above). These effects also likely vary at different points in the process (e.g., pre- vs. postselection decision).

Social context

Job applicants operate in rich social contexts. Since the initial development of selection systems in the early 20th century, human resource practitioners have understood that test takers might discuss selection procedures with each other, affecting test security and validity. The social context can also affect perceptions of justice (Ambrose, Harland, & Kulik, 1991). It is remarkable, then, that this aspect of the social context—in which applicants attempt to make sense of the selection context through communication with each other—has been almost completely neglected in the applicant reactions literature.

Recently, Geenen et al. (2013) demonstrated that communication with peers can affect applicants’ justice perceptions, which in turn affect test anxiety and test motivation. Such peer effects on applicant reactions are ripe for further study, as peers (be they in person or online) may be a missing link in understanding how applicants perceive the selection situation and in determining when reactions to selection affect outcomes, such as intention to litigate. Furthermore, Sumanth and Cable (2011) conceptualized the social context in terms of test-taker status and employer status. They found that the status of the applicant and the employer affected applicant reactions to demanding selection procedures. In two studies of U.S. and U.K. participants, they found that demanding selection procedures are considered to be fairer when used by a high-status organization but are considered unfair or even insulting by high-status individuals. In summary, these papers reveal that the social context of applicants is a potentially rich moderator of applicant reactions that is deserving of further study.

Selection decision

One moderator of applicant reactions is when reactions are measured, such as before or after feedback is received (Ryan & Ployhart, 2000). For example, Schleicher et al. (2006) found that opportunity to perform had a different relationship with fairness depending on whether it was measured before or after feedback, and Walker, Bauer, Cole, Bernerth, Feild, and Short (2013) found that justice differentially affected organizational attractiveness at different points in the recruitment process. Similarly, Zibarras and Patterson (2015) found that job relatedness measured pre- and postfeedback differentially predicted fairness. In other words, the research to date suggests that applicant reactions are dynamic in nature and that the point in the selection process at which they are measured can affect both the reactions themselves and their correlates. Although the issue of the dynamics of applicant reactions has been raised in previous reviews (e.g., Chan & Schmitt, 2004), this issue has received scant attention and is deserving of further study.

Internal versus external candidates

One of the moderators that may determine the effects of applicant reactions is whether applicants are internal or external candidates. The research to date suggests that the effects of applicant reactions may be much larger when dealing with internal candidates for promotion than they are for external candidates (Ambrose & Cropanzano, 2003; Truxillo & Bauer, 1999), perhaps because of internal candidates’ greater investment in the organization and the social context. Furthermore, it has been suggested that internal candidates may have a number of negative reactions that are important to the organization (satisfaction and performance) and to individuals (well-being; Ford et al., 2009). Indeed, García-Izquierdo, Moscoso, and Ramos-Villagrasa (2012) found that justice reactions to promotions were strongly related to job satisfaction. Recently, however, Giumetti and Sinar (2012) found that opportunity to perform was more strongly related to recommendation intentions for external candidates than for internal candidates. To our knowledge, this is one of the few studies that directly compares internal and external candidates’ reactions. In short, whether an applicant is an external candidate or current employee is likely to affect the magnitude of reactions. More importantly, it may affect outcomes that are critical to organizational functioning, such as job satisfaction and organizational citizenship. This is therefore an area ripe for additional research.

Organizational Context

Organizational size

One potential moderator of applicant reactions that has received little scrutiny is the size of the organization. Recently it has been argued (Truxillo et al., 2015) that selection systems differ considerably based on the size of the organization and that this could affect the extent to which applicant reactions matter, and which dimensions of fairness are salient to applicants. For example, organizations with larger selection systems tend to be more impersonal and may create distance between the applicant and future coworkers, at least early on in the process. In comparison, smaller organizations allow applicants to meet their hiring manager and coworkers much earlier in the process and, thus, allow these future coworkers to affect the applicants’ perceptions of the hiring organization. These substantial differences in selection systems and the way that they are experienced by applicants support the idea that the size of the organization may moderate the effects of applicant reactions, or at least which dimensions of the selection system may have the greatest effects (e.g., interpersonal treatment vs. job relatedness).

Societal Context

Culture

As discussed earlier, a number of studies have examined whether country or culture moderates applicant reactions, and findings indicate that applicants across cultures generally have similar reactions to different selection procedures (Anderson et al., 2010). In fact, only one recent study (Brender-Ilan & Sheaffer, 2015) suggested some differences between native Israelis and Israeli immigrants from the former Soviet Union. Although as a group these findings suggest that applicants across cultures have similar reactions, we anticipate studies comparing reactions in additional countries, given the growth of MNCs and the fact that centralized selection processes must take place across diverse cultural contexts.

Individual Differences

Personality

In addition to selection procedures themselves, researchers have illustrated that a number of applicant personality traits, such as Big Five personality dimensions, can account for significant variance in applicant justice perceptions and outcomes (e.g., Truxillo et al., 2006; Viswesvaran & Ones, 2004). The idea here is that, regardless of what an employer does, some applicants will be negatively or positively predisposed to the selection procedures and the selection system, depending on what personal characteristics they bring to the situation. Researchers have continued to examine personality traits of job applicants for their effects on applicant reactions (e.g., Honkaniemi, Feldt, Metsäpelto, & Tolvanen, 2013; Oostrom et al., 2010). Positive and negative affectivity also have been found to affect the relationship between justice and outcomes such as recommendations and litigation intentions (Geenen, Proost, Dijke, Witte, & von Grumbkow, 2012). In one of the most comprehensive examinations of the effects of such individual differences on reactions, Merkulova, Melchers, Kleinmann, Annen, and Tresch (2014) found that Big Five personality factors, core self-evaluations, trait affectivity, and cognitive ability affected perceptions of an assessment center even after controlling for applicants’ perceived and actual assessment center performance. This emerging recognition that applicants’ personality can affect their reactions and, thus, the relative effects of selection procedures themselves is a key factor in understanding how applicant reactions work.

Ethnicity and gender

As noted in previous reviews (Truxillo & Bauer, 2011), ethnicity and gender were examined for their moderating effects in early applicant reactions studies (e.g., Chan & Schmitt, 1997), often with the hopes that such differences might also explain ethnic differences in test performance. However, such demographic variables have not panned out as major moderators of applicant reactions, and Hausknecht et al. (2004) found near zero ethnicity and gender effects in their meta-analysis. Recently, Whitman, Kraus, and Van Rooy (2014) found that Blacks had more positive perceptions of emotional intelligence tests than did Whites and that some test reactions were more strongly related to test performance for Blacks. In Israel, Brender-Ilan and Sheaffer (2015) found significant differences between native Israelis and immigrants (i.e., ethnic minority) from the former Soviet Union concerning five selection procedures, and they attributed them to cultural differences between the two groups. Thus, although ethnicity and gender have shown some potential as moderators through the years, their track record thus far suggests that they are less promising as moderators than are other moderators cited in this section.

Interventions

Research examining ways to optimize, or enhance, applicant reactions through the use of test interventions has also emerged since the Ryan and Ployhart (2000) review. For example, providing information to applicants at the beginning of a test has been found to be a simple yet meaningful way to influence candidate reactions (see Horvath et al., 2000; Truxillo et al., 2002) and has been demonstrated meta-analytically to affect a number of applicant reactions (Truxillo et al., 2009). Thus, the provision of pretest information may serve as a valuable buffer for the potentially negative effects of testing characteristics (type of test, selection context) on test reactions. Giving applicants choice over aspects of the testing process (e.g., the order in which test questions are completed) may also enhance and/or buffer test reactions because it reduces feelings of anxiety (Ladouceur, Gosselin, & Dugas, 2000). The increased popularity of computer-based testing makes this a viable and potentially powerful option for organizations. Ultimately, additional research that explores the direct and moderating effects of interventions to leverage candidate reactions is likely to prove invaluable.

In summary, the existence of moderators may explain why sometimes applicant reactions matter and sometimes they do not. The examination of such moderators is the search not for whether applicant reactions matter but for under what circumstances they do.

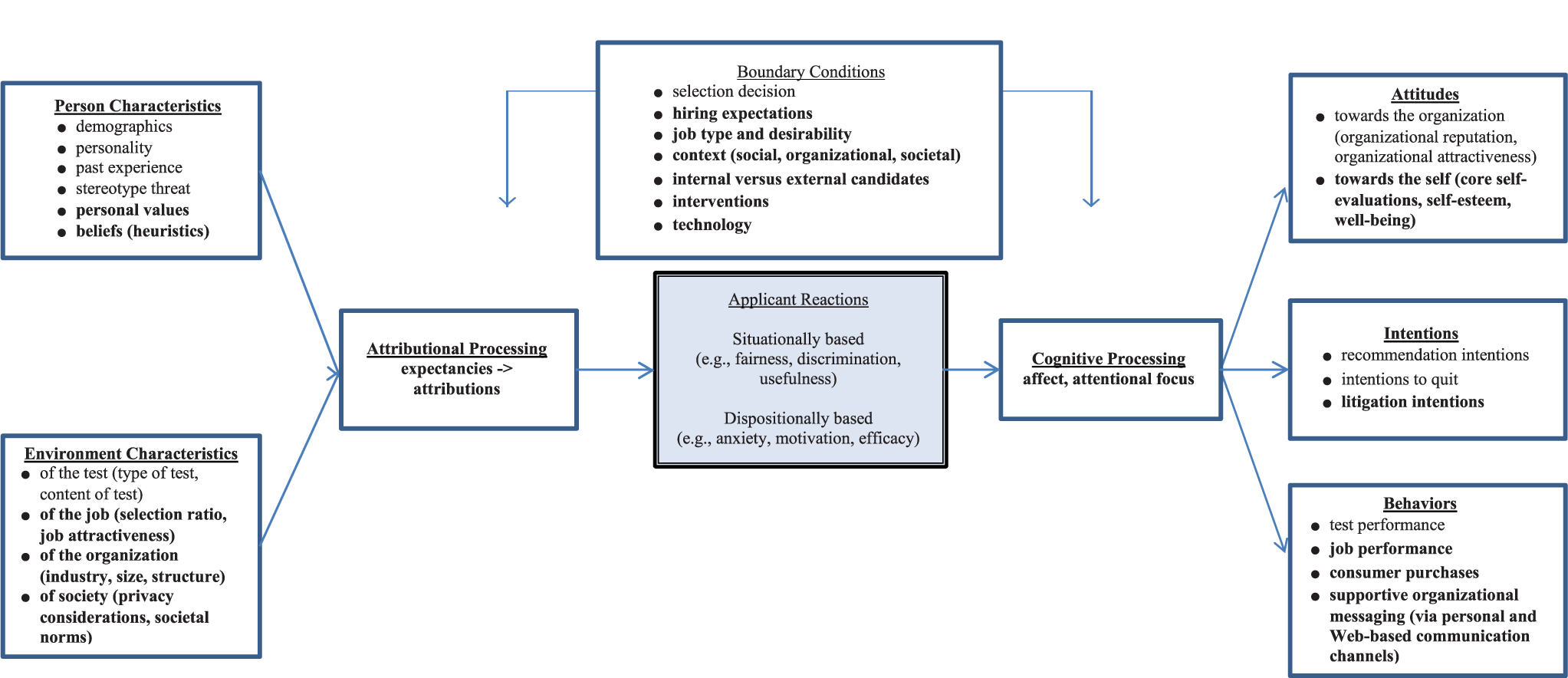

Addressing the “Where to Next?” Question

We have illustrated that the field of applicant reactions has become more theoretically and empirically sophisticated, with the last 15 years witnessing a proliferation of studies based on integrated and expanded foundations. Our model acknowledges this new era by providing an updated and unified general framework of applicant reactions that can serve as a foundation for future work (see Figure 1). We accomplish this objective by expanding the model advanced by Ryan and Ployhart (2000) to incorporate new antecedents (i.e., values, beliefs, societal characteristics), outcomes (i.e., attitudes towards the self, intent to litigate, consumer purchases), and boundary conditions (i.e., hiring expectations, interventions, technology). We also include attributional processing as the core mechanism underlying the relations between antecedents and reactions and cognitive processing as the core mechanism underlying the relations between reactions and the outcomes. The figure also distinguishes between established and less-established relations.

General Conceptual Framework: Applicant Perspectives in Selection

Drawing from expectations theory, fairness heuristic theory, and attribution theory, the first portion of our model predicts that applicants’ personal characteristics and the characteristics of the environment will influence the attributions that applicants make for what they experience during the selection process. It is noteworthy that applicant values and beliefs are now included among the determinants of applicants’ attributional processing. Attributions, in turn, have a direct effect on applicant reactions, which include the extent to which applicants perceive the process to be fair, are motivated to do well, exhibit test anxiety, and experience test efficacy. Positioning attributional processing as the core mechanism underlying applicant reactions is important and is aligned with the expansion and integration of current models.

At the core of our model is the full range of applicant reactions, including those that are situationally based (e.g., fairness, job discrimination, test usefulness) and those that are dispositionally based (e.g., test anxiety, motivation, efficacy, test beliefs; McCarthy et al., 2013). These reactions can relate to the general selection process, the outcome of the decision, and/or the specific assessment tool(s) used and are aligned with the expanded focus of applicant reactions research beyond perceptions of justice.

The final portion of our framework suggests that applicant reactions predict three categories of outcome variables: attitudes, intentions, and behaviors. A number of novel behaviors are suggested, including organizational purchasing (i.e., purchase of company products) and organizational messaging (i.e., Web-based communication). We encourage future research to consider additional behavioral outcomes that have relevance to the selection context—for example, social media postings (e.g., Facebook, Twitter) and the use of company products and/or services. In addition, more nuanced considerations of behavioral outcomes of applicant reactions may prove valuable—such as the extent to which the subsequent behaviors are deliberate versus rash, time intensive versus quick, or anonymous versus public. Finally, future research should consider the thresholds needed for observed effects, as applicants may require certain levels of applicant reactions in order for specific behaviors to occur.

As illustrated in the figure, the core process through which reactions influence these outcomes is via applicant cognition (levels of affect and attentional focus). Specifically, negative reactions (i.e., low levels of fairness/motivation and high levels of anxiety) are likely to trigger negative affect (Geenen, Proost, Dijke, et al., 2012; van den Bos, Maas, Waldring, & Semin, 2003) and result in less favorable organizational attitudes (e.g., organizational attractiveness) and adverse behaviors/behavioral intentions (e.g., recommendation and litigation intentions). Negative reactions may also reduce attentional focus (McCarthy et al., 2013), which can result in adverse behaviors/behavioral intentions (e.g., lower test performance). At the same time, positive reactions trigger positive affect and may increase attentional focus, leading to more favorable attitudes and more favorable behaviors/behavioral intentions. Positioning applicant cognitions as the core mechanisms underlying relations between applicant reactions and key outcome variables is aligned with current research and theory and provides a more comprehensive understanding of how reactions influence outcomes.

Our framework also identifies a number of boundary conditions: selection decision, hiring expectations, job type and desirability, context (social, organizational, societal), type of candidate (internal vs. external), interventions, and technology. These are aligned with the increased emphasis on factors that affect when and how applicant reactions matter. For example, we suggest that it is important to consider the context in terms of the characteristics of the selection process, the characteristics of the organization, and the characteristics of the society. More specifically, the effects of attributional processing on applicant reactions may vary by hiring expectations, organizational size, or societal culture. Interventions to leverage the effects of applicant reactions may also influence the attributional processing that leads to candidate reactions, as well as the cognitive processing that follows applicant reactions. For instance, the effects of attributions on applicant reactions may vary by the type of pretest explanations provided to applicants. Similarly, the effects of applicant reactions on cognitive processing may vary by the type and amount of feedback provided.

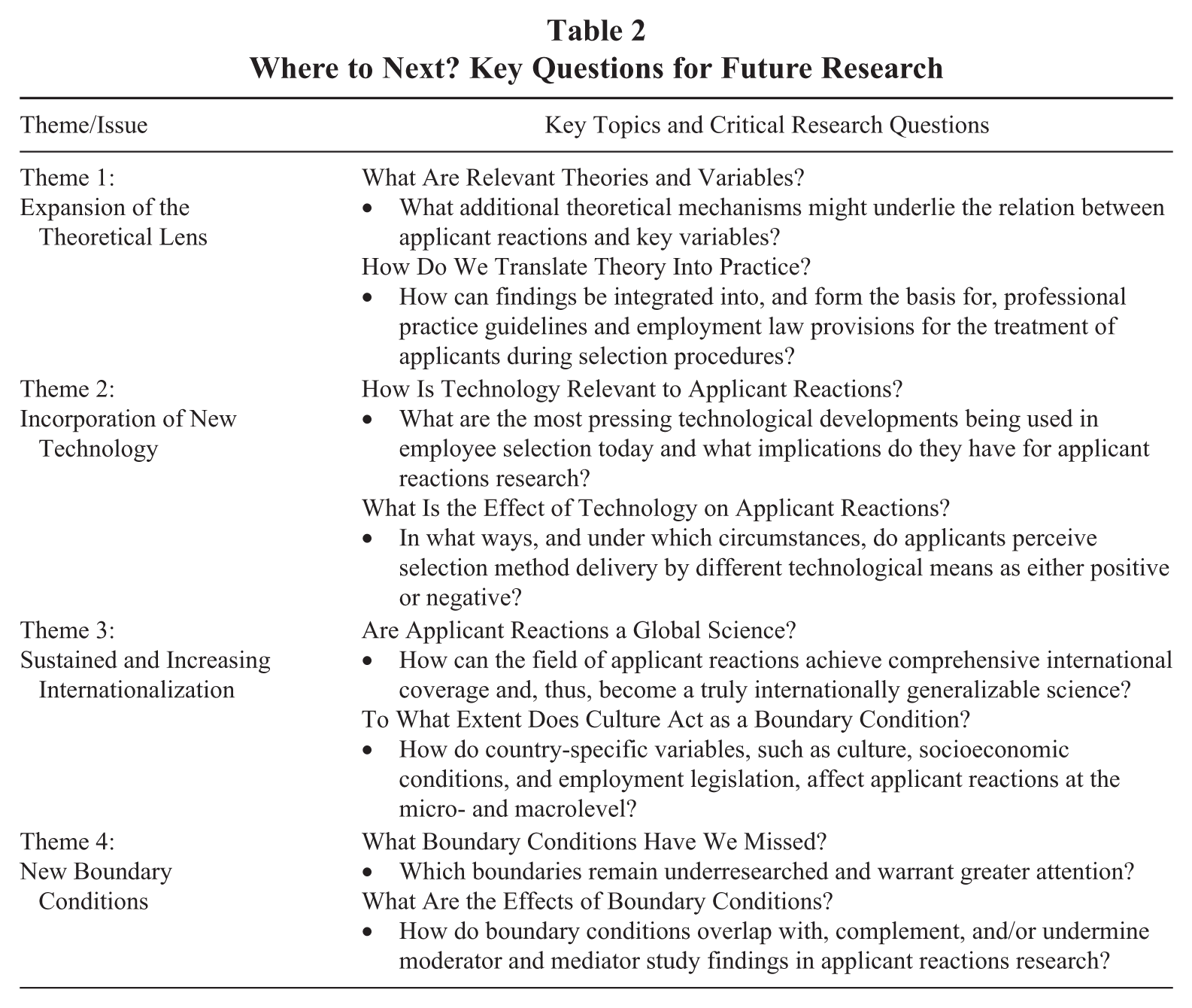

In conjunction with our conceptual framework, we offer four key ongoing challenges and themes, along with specific questions for future research (see Table 2). The first theme addresses the expansion of the theoretical lens and encourages researchers to continue to explore the theories and theoretical mechanisms that are practically relevant to applicant reactions. We further encourage the integration of additional reactions, variables, and relationships that may have previously been overlooked. Finally, we emphasize the need for additional work that translates theory and empirical findings into recommendations for practice. As mentioned earlier, progress has been made on this front: Ryan and Huth (2008) offered a set of practical recommendations, and Bauer et al. (2012) wrote an SIOP-SHRM white paper on applicant reactions geared towards practitioners. Furthermore, recent work in practice publicly highlights the importance of the job applicant’s experience. For example, Google’s re:Work Web site (Google, 2016) offers a specific guide to shaping the candidate experience that was informed by research conducted in this area and includes links to specific research studies. While this is an excellent start, much more can be done to bridge the research-practice divide. Our second theme addresses the incorporation of new technology and encourages researchers to stay abreast of technological developments in personnel selection. We also emphasize the importance of studies that examine under what circumstances applicants may perceive selection techniques delivered by different technologies as positive or negative. The third theme focuses on the sustained and increasing internationalization of applicant reactions research. Within this theme, we encourage researchers to consider applicant reactions as a global science, asking how close we are to achieving comprehensive international coverage and robust moderator and mediator findings. We also emphasize the importance of societal culture as a moderator and/or mediator. Our fourth and final theme addresses boundary conditions, or moderators, of applicant reactions. Meta-analytic estimates support the search for new moderators, as significant levels of variance remain after correcting for sampling error (Anderson et al., 2010; Hausknecht et al., 2004). We also emphasize the importance of considering whether boundary conditions overlap with, complement, or undermine past findings in applicant reactions research.

Table 2. Where to Next? Key Questions for Future Research

Conclusion

Our review indicates that the field of applicant reactions has advanced considerably and made substantial and meaningful contributions to theory, methods, and practice of recruitment and selection over the last 15 years. First, the field boasts a broader theoretical base, which has prompted a more nuanced understanding of the mechanisms underlying applicant reactions. This is reflected in our updated conceptual framework (see Figure 1). Second, the field demonstrates stronger research designs, with studies incorporating greater control, broader constructs, and multiple time points (see Table S1 in the supplemental appendix). There is also an increased emphasis on moderators that facilitate the understanding of when and with whom applicant reactions matter. The field has expanded its international focus, with studies emerging from all corners of the world. Third, there have been significant strides in bridging the research-practice gap by providing specific practical recommendations that organizations can adopt. Researchers have also started examining interventions that can be used to leverage applicant reactions (see Table S1). Finally, the field is examining applicant reactions to new selection technologies.

Moving forward, we believe it is time for the field to significantly increase the sophistication and impact of research in this area in order to keep up with the revolutionary changes that are taking place in the way that organizations recruit and select employees. One example is the beer company Heineken and its new “Go Places” campaign. Part of the campaign includes an interactive online job interview. The purpose is to show that Heineken is a dynamic, purposeful, and fun place to work in order to attract the millennial generation (Heineken, 2016). The field of applicant reactions is well positioned for this kind of dynamic thinking, as we have a solid theoretical and empirical foundation from which to explore innovative research questions. Below, we speculate on ways in which new studies can make revolutionary advances in what we know.

First, in line with Heineken’s interviews, we can provide applicants with real-time feedback about exam scores. This feedback could range from simple information about whether the applicant passed or failed the exam to more comprehensive information on how the applicant scored within each section and/or question. If real-time feedback is provided, consideration of how to contextualize the feedback so that applicants understand it in a way that does no harm is also essential. For instance, telling someone that he or she is highly neurotic without any contextualization may do more harm than good.

The field must also stay on the cutting edge of newly proposed methods for hiring people, such as neuroscientific and biometric assessments (Tippins, 2015). Such tools could assess brain structures to determine, for example, people’s levels of cognitive ability, personality types, and emotion regulation. While this may seem very futuristic, there are suggestions that it is coming soon and is even partially here (Bolton, 2015; Maffin, 2013). Importantly, these new technologies need to be assessed not only in terms of validity and ethics but also from the applicant’s perspective to see whether they are perceived as fair and good.