Abstract

Mokken scale analysis is a popular method to evaluate the psychometric quality of clinical and personality questionnaires and their individual items. Although many empirical papers report on the extent to which sets of items form Mokken scales, less attention has been paid to the effect of violations of commonly used rules of thumb. In this study, the authors investigated the practical consequences of retaining or removing items with psychometric properties that do not comply with these rules of thumb. Using simulated data, they concluded that items with low scalability had some influence on the reliability of test scores, person ordering and selection, and criterion-related validity estimates. Removing the misfitting items from the scale had, in general, a small effect on the outcomes. Although important outcome variables were fairly robust against scale violations in some conditions, the authors conclude that researchers should not rely exclusively on algorithms allowing automatic selection of items. In particular, content validity must be taken into account to build sensible psychometric instruments.

Item response theory (IRT) models are used to evaluate and construct tests and questionnaires, such as clinical and personality scales (e.g., Thomas, 2011). A popular IRT approach is Mokken scale analysis (MSA; e.g., Mokken, 1971; Sijtsma & Molenaar, 2002). MSA has been applied in various fields where multi-item scales are used to assess the standing of subjects on a particular characteristic or the latent trait of interest. In recent years, the popularity of MSA has increased. A simple search on Google Scholar with the keywords “Mokken Scale Analysis AND scalability” from 2000 through 2019 yielded about 1,200 results, including a large set of empirical studies. These studies were conducted in various domains, such as personality (e.g., Watson et al., 2007), clinical psychology and health (e.g., Emons et al., 2012), education (e.g., Wind, 2016), and human resources and marketing (e.g., De Vries et al., 2003). Both the useful psychometric properties of MSA and the availability of easy-to-use software (e.g., the R “mokken” package; van der Ark, 2012) explain the popularity of MSA.

As discussed in the following, within the framework of MSA, there are several procedures that can be used to evaluate the quality of an existing scale or set of items that may form a scale. In practice, however, a set of items may not comply strictly with the assumptions of a Mokken scale and a researcher is then faced with a difficult decision: Include or exclude the offending items (Molenaar, 1997a)? The answer to this question is not straightforward. On one hand, the exclusion of items must be carefully considered because it may compromise construct validity (see American Educational Research Association et al., 2014, for a discussion of the types of validity evidence). On the other hand, it is not well known to what extent the retention of items that violate the premises of a Mokken scale affects important quality criteria.

The present study aims to investigate the effects of retaining or removing items that violate common premises in MSA on several important outcome variables. This study therefore offers novel insights into scale construction for practitioners applying MSA, going above and beyond what MSA typically offers. This study is organized as follows. First, some background on MSA is provided. Second, the results of a simulation study are presented, in which the effect of model violations on several important outcome variables was investigated. Finally, in the discussion section, an evaluative and integrated overview of the findings is provided and main conclusions and limitations are discussed.

MSA

For analyzing test and questionnaire data, MSA provides many more analytical tools than classical test theory (CTT; Lord & Novick, 1968), while avoiding the statistical complexities of parametric IRT models. One of the most important MSA models is the monotone homogeneity model (MHM). The MHM is based on three assumptions: (a) Unidimensionality: All items predominantly measure a single common latent trait, denoted as θ; (b) Monotonicity: The relationship between θ and the probability of scoring in a certain response category or higher is monotonically nondecreasing; and (c) Local independence: An individual’s response to an item is not influenced by his or her responses to other items in the same scale. Assumptions (a) through (c) allow the stochastic ordering of persons on the latent trait continuum by means of the sum score, when scales consist of dichotomous items (e.g., Sijtsma & Molenaar, 2002, p. 22). For a discussion on how this property applies to polytomous items, see Hemker et al. (1997) and van der Ark (2005).

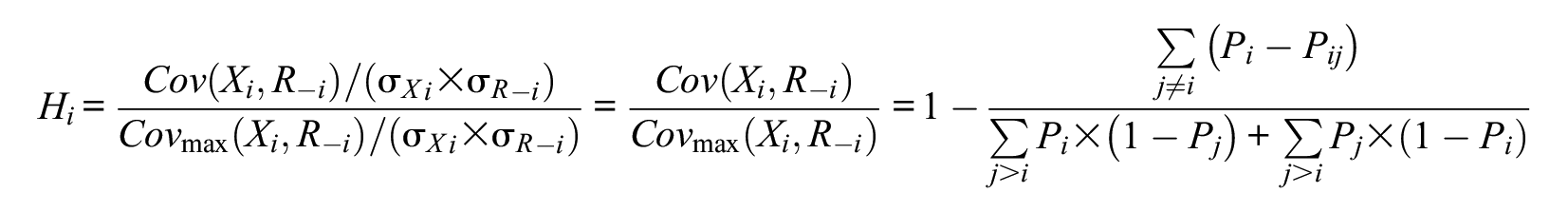

In MSA, Loevinger’s

In this formula,

Loevinger’s

A popular feature of MSA is its item selection tool, known as the automated item selection procedure (AISP; Sijtsma & Molenaar, 2002, chapters 4 and 5). The AISP assigns items to one or more Mokken (sub-)scales according to some well-defined criteria (see, e.g., Meijer et al., 1990) and identifies items that cannot be assigned to any of the selected Mokken scales (i.e., unscalable items). The unscalable items may not discriminate well between persons and, depending on the researcher’s choice, may be removed from the final scale.

Both the AISP selection tool and the item quality check tool are based on the scalability coefficients. However, it is important to note that a suitable lower bound for the scalability coefficients should ultimately be determined by the user (Mokken, 1971), taking the specific characteristics of the data and the context into account. Although several authors have emphasized the importance of not blindly using rules of thumb (e.g., Rosnow & Rosenthal, 1989, p. 1277, for a general discussion outside MSA), many researchers use the default lower bound offered by existing software when evaluating or constructing scales.
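To make the role of the item scalability coefficients concrete, the following minimal Python sketch computes an item-level coefficient for dichotomous items as the ratio of the summed observed inter-item covariances to the summed maximum covariances attainable given the item marginals. The function name `item_scalability` and the toy data are illustrative assumptions and not the authors' implementation; in applied work the R “mokken” package would normally be used.

```python
def item_scalability(data):
    """Item scalability coefficients for a 0/1 data matrix (list of rows).

    Illustrative sketch for dichotomous items only.
    """
    n = len(data)
    k = len(data[0])
    # Proportion of 1-scores per item
    p = [sum(row[i] for row in data) / n for i in range(k)]

    def joint(i, j):
        # Proportion of persons scoring 1 on both item i and item j
        return sum(row[i] * row[j] for row in data) / n

    coefficients = []
    for i in range(k):
        observed = 0.0
        maximum = 0.0
        for j in range(k):
            if j == i:
                continue
            observed += joint(i, j) - p[i] * p[j]       # observed covariance
            maximum += min(p[i], p[j]) - p[i] * p[j]    # largest covariance given the marginals
        coefficients.append(observed / maximum if maximum > 0 else float("nan"))
    return coefficients


# Hypothetical toy data: a perfect Guttman pattern, then the same data
# with one person who endorses only the most difficult item.
guttman = [[0, 0, 0], [1, 0, 0], [1, 1, 0], [1, 1, 1]]
with_error = guttman + [[0, 0, 1]]
h_guttman = item_scalability(guttman)
h_error = item_scalability(with_error)
```

In the perfect Guttman pattern every item reaches the maximum value of 1, whereas a single inconsistent response pattern lowers the coefficients, most strongly for the item involved in the inconsistency. A researcher would compare such values with a chosen lower bound, which, as noted above, is ultimately a user decision.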

How Is MSA Used in Practice?

Broadly speaking, there are two types of MSA research approaches: In one approach, MSA is used to evaluate the item and scale quality when constructing a questionnaire or test (e.g., De Boer et al., 2012; Ettema et al., 2007). In the other approach, MSA is used to evaluate an existing instrument (e.g., Bech et al., 2016; Bielderman et al., 2013; Bouman et al., 2011). Not surprisingly, researchers using MSA in the construction phase tend to remove items more often based on low scalability coefficients and/or the AISP results (e.g., Brenner et al., 2007; De Boer et al., 2012; De Vries et al., 2003) than researchers who evaluate existing instruments. However, researchers seldom use sound theoretical, content, or other psychometric arguments to remove items from a scale.

Researchers evaluating existing scales often simply report that items have low coefficients, but they are typically not in a position to remove items (e.g., Bech et al., 2016; Bielderman et al., 2013; Bouman et al., 2011; Cacciola et al., 2011, p. 12; Emons et al., 2012, p. 349; Ettema et al., 2007). Thus, practical constraints often predetermine researchers’ actions, but it is unclear to what extent other variables, such as predictive or criterion validity (American Educational Research Association et al., 2014), are affected by the inclusion of items with low scalability. What is, for example, the effect on the predictive validity of the sum scores obtained from a more homogeneous scale as compared to a scale that includes lower scalability items? For some general remarks about the relation between homogeneity and predictive validity, and about one of the drawbacks of relying on the

Practical Significance

In this study, the existing literature on the practical use of MSA (see Sijtsma & van der Ark, 2017 and Wind, 2017 for excellent tutorials for practitioners in the fields of psychology and education) is extended by systematically investigating how

In the remainder of this article, the methodology used to answer the research questions is described, the findings of this study are presented, and some insights for practitioners and researchers regarding scale construction and/or revision are provided.

Method

A simulation study using the following independent and dependent variables was conducted.

Independent Variables

The following four factors were manipulated:

Scale length

Scales consisting of

Proportion of items with low Hi values

In the existing simulation literature, the proportion of misfitting items varies between 8% and 75% or even 100% (see Rupp, 2013, for a discussion). In the present study, three levels for the proportion of items with

Number of response categories

Responses to both dichotomously and polytomously scored items with the number of categories equal to

Range of Hi values

For the

Design

The simulation was based on a fully crossed design consisting of 2(

Data Generation

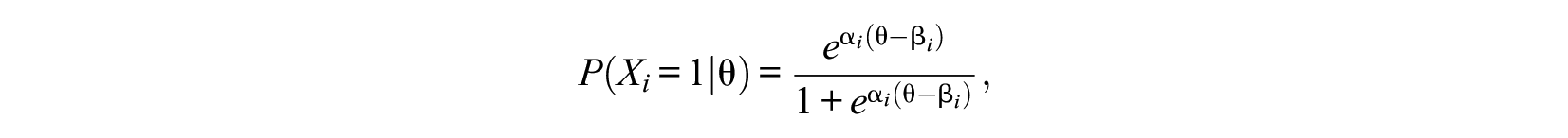

Population item response functions according to two parametric IRT models were generated: The two-parameter logistic model (2PLM; e.g., Embretson & Reise, 2000) in the case of dichotomous items and the graded response model (GRM; Samejima, 1969) in the case of polytomous items. The 2PLM is defined as follows:

where

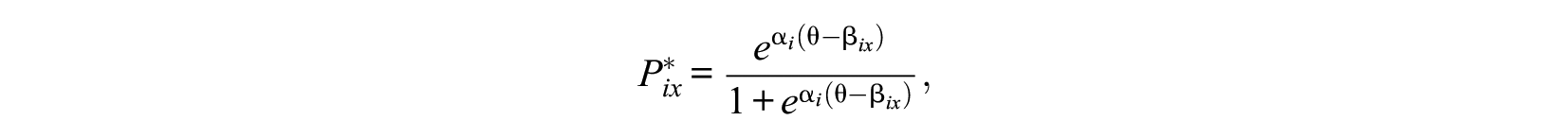

where

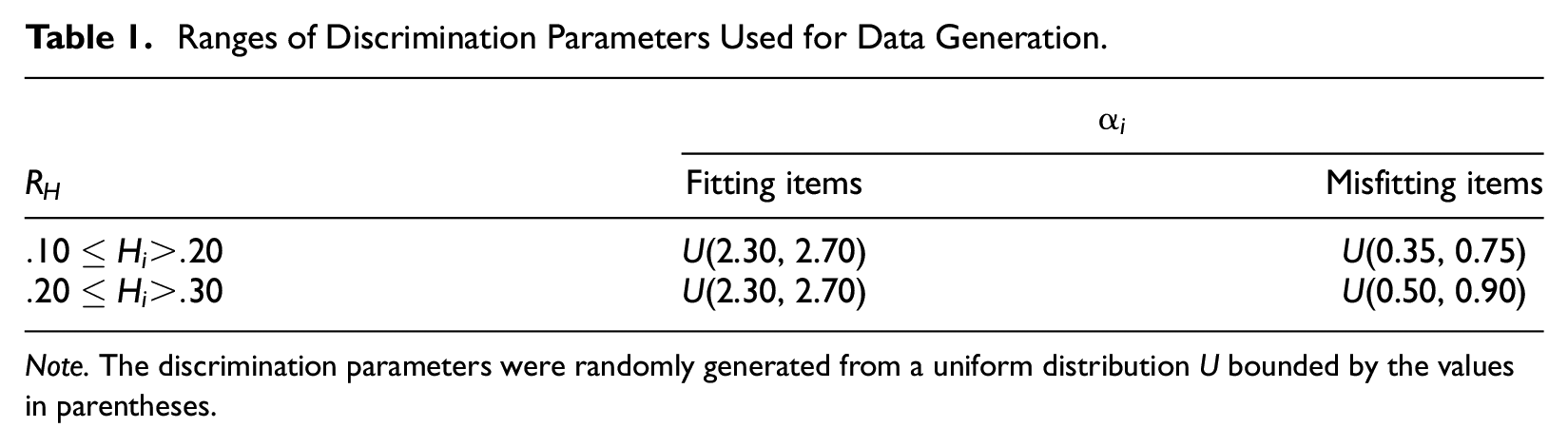
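For readers without access to the typeset equations, the two models are commonly written as shown below. The notation is the standard textbook one and is an assumption here: $\alpha_i$ denotes the discrimination of item $i$, $\beta_i$ its location, $\beta_{ix}$ the threshold of category $x$, and items are scored $0, \dots, m$. The symbols in the original article may differ.

```latex
% Two-parameter logistic model (dichotomous items)
P(X_i = 1 \mid \theta) = \frac{\exp[\alpha_i(\theta - \beta_i)]}{1 + \exp[\alpha_i(\theta - \beta_i)]}

% Graded response model (polytomous items), x = 1, \dots, m
P(X_i \geq x \mid \theta) = \frac{\exp[\alpha_i(\theta - \beta_{ix})]}{1 + \exp[\alpha_i(\theta - \beta_{ix})]}

% Category probabilities, with P(X_i \geq 0 \mid \theta) = 1 and P(X_i \geq m + 1 \mid \theta) = 0
P(X_i = x \mid \theta) = P(X_i \geq x \mid \theta) - P(X_i \geq x + 1 \mid \theta)
```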

The 2PLM or the GRM was used to generate item scores, using discrimination parameters that were constrained to optimize the chances of generating items with

Table 1. Ranges of Discrimination Parameters Used for Data Generation.

In Table 1, the column labeled “Misfitting items” denotes the (100 ×

Finally, item scores for
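The data-generation step can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' code: the standard normal latent trait, the sample size, the seed, and the parameter ranges are hypothetical placeholders, and the function names `simulate_2plm` and `simulate_grm` are invented for this example.

```python
import math
import random


def simulate_2plm(thetas, alphas, betas, rng):
    """Draw 0/1 item scores under the two-parameter logistic model."""
    data = []
    for theta in thetas:
        row = []
        for a, b in zip(alphas, betas):
            p = 1.0 / (1.0 + math.exp(-a * (theta - b)))  # P(X = 1 | theta)
            row.append(1 if rng.random() < p else 0)
        data.append(row)
    return data


def simulate_grm(thetas, alphas, thresholds, rng):
    """Draw 0..m item scores under the graded response model.

    thresholds[i] holds the m increasing category thresholds of item i.
    """
    data = []
    for theta in thetas:
        row = []
        for a, bs in zip(alphas, thresholds):
            u = rng.random()
            # P(X >= x | theta) decreases in x, so the score equals the number
            # of cumulative probabilities that exceed the uniform draw u.
            score = sum(
                1 for b in bs if u < 1.0 / (1.0 + math.exp(-a * (theta - b)))
            )
            row.append(score)
        data.append(row)
    return data


rng = random.Random(2024)                               # hypothetical seed
thetas = [rng.gauss(0.0, 1.0) for _ in range(500)]      # hypothetical sample size
alphas = [rng.uniform(0.5, 2.0) for _ in range(10)]     # hypothetical discrimination range
betas = [rng.uniform(-1.5, 1.5) for _ in range(10)]     # hypothetical item locations
dich = simulate_2plm(thetas, alphas, betas, rng)
poly = simulate_grm(thetas, alphas, [[b - 1.0, b, b + 1.0] for b in betas], rng)
```

Lower discrimination values tend to produce items with lower scalability, which is why constraining the discrimination range is a natural way to steer the scalability of the generated items.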

Dependent Variables

The authors used the following outcome variables:

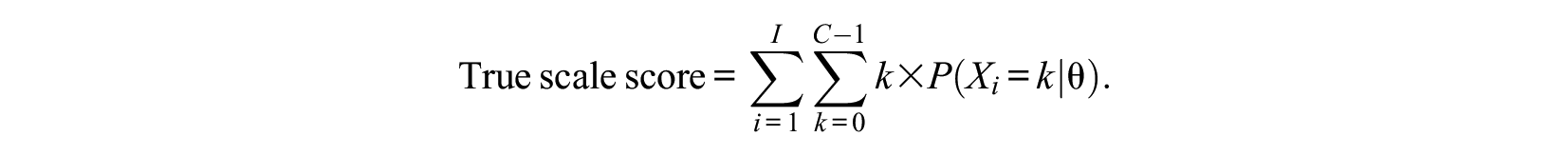

2.

3.

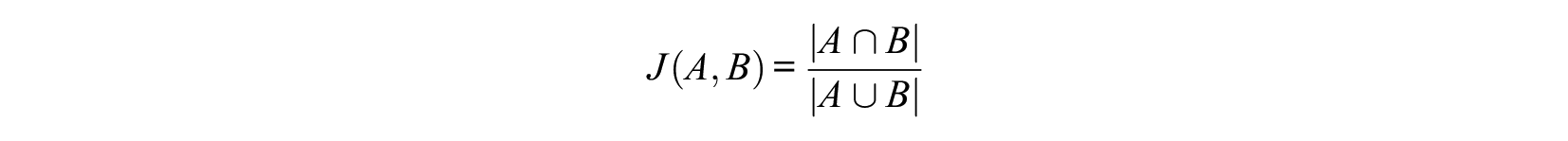

The index ranges from 0% (no top selected simulees in common) through 100% (perfect congruence). For each dataset, the authors therefore computed four values of the Jaccard Index, one for each selection ratio.
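The computation of the Jaccard Index for sets of top-selected simulees can be sketched as follows. The function names, the tie-breaking rule, the score vectors, and the four selection ratios are hypothetical and chosen only for illustration; the sketch compares the simulees selected on one score vector with those selected on another.

```python
def top_selected(scores, ratio):
    """Indices of the simulees with the highest scores for a given selection ratio.

    Ties are broken by position in the list (an arbitrary choice for this sketch).
    """
    n_select = max(1, round(ratio * len(scores)))
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return set(order[:n_select])


def jaccard_index(scores_a, scores_b, ratio):
    """Percentage overlap between the two sets of top-selected simulees."""
    a = top_selected(scores_a, ratio)
    b = top_selected(scores_b, ratio)
    return 100.0 * len(a & b) / len(a | b)


# Hypothetical scores for ten simulees on two versions of a scale
scores_a = [10, 9, 8, 7, 6, 5, 4, 3, 2, 1]
scores_b = [10, 9, 1, 2, 3, 4, 5, 6, 7, 8]
# Hypothetical selection ratios, one Jaccard value per ratio
overlaps = {r: jaccard_index(scores_a, scores_b, r) for r in (0.1, 0.2, 0.3, 0.5)}
```

Because the index is the size of the intersection divided by the size of the union, two selections of equal size that share two of three simulees yield 50% rather than 67%.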

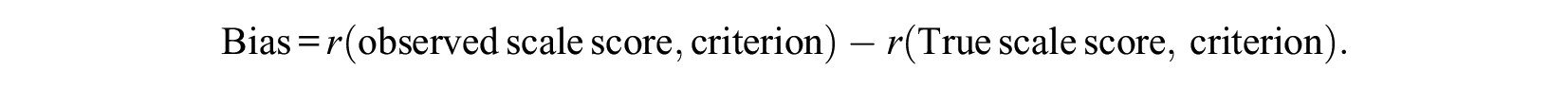

4.

The method was applied to the entire sample (

Implementation

The simulation in

Results

To investigate the effects of the manipulated variables on the outcomes, mixed-effects analysis of variance (ANOVA) models were fitted to the data, with

Scale Reliability and Rank Ordering

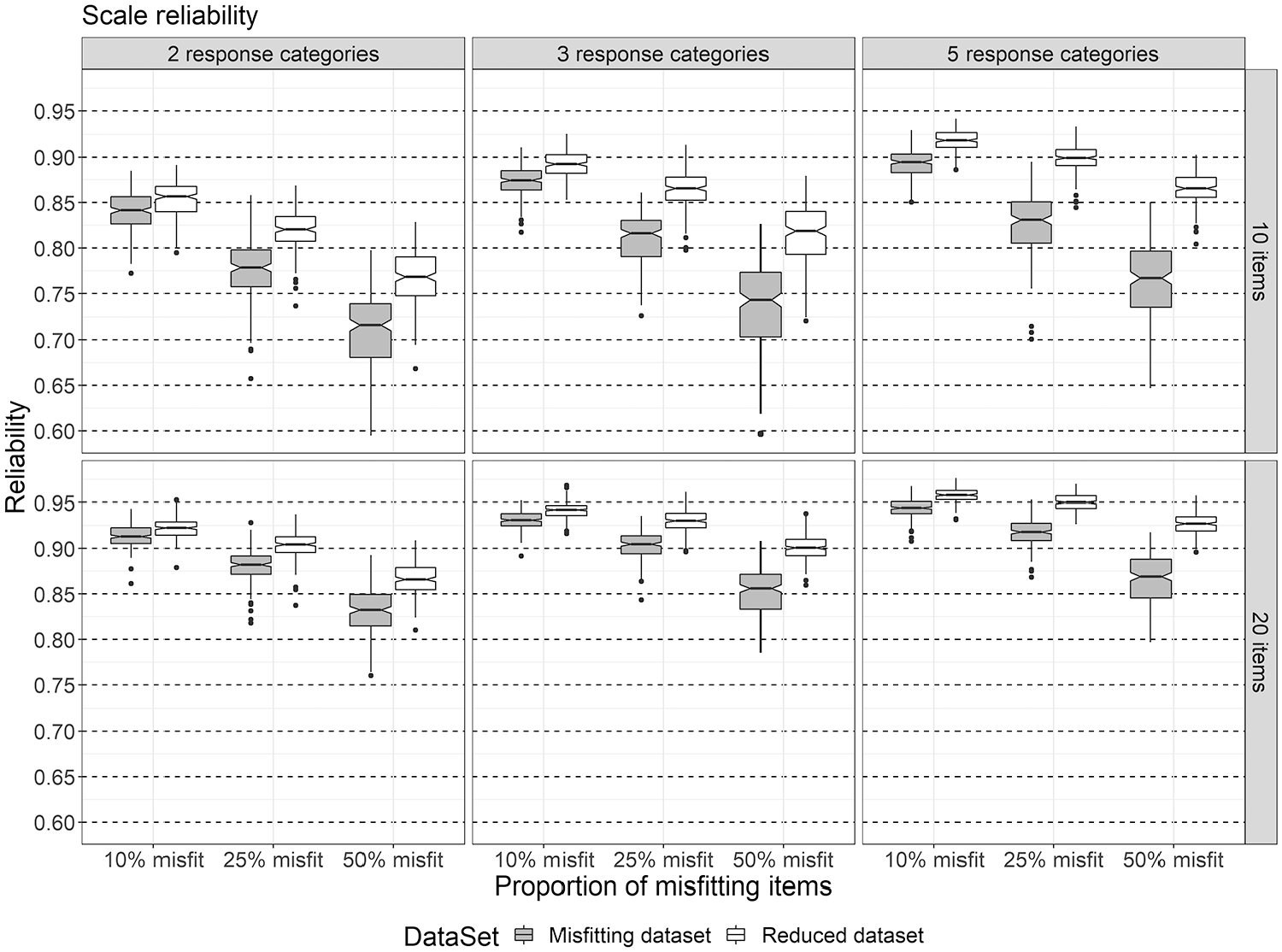

For score reliability, an average of 0.87 (

The distribution of reliability scores across the levels of

Elaborating on the effects of

Similar conclusions can be drawn for the rank ordering of persons. The average rank correlation over all conditions was 0.93 (

Regarding score reliability and person rank ordering, the findings show that scale length together with the proportion of MSA-violating items and number of response categories were the main factors affecting these outcomes: Score reliability and rank ordering were negatively affected by the proportion of items violating the Mokken scale quality criteria, especially when shorter scales were used. These outcomes were more robust against violations when longer scales were used. Removing the misfitting items improved scale reliability and person rank ordering to some extent.
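As an illustration of how agreement in person rank ordering can be quantified, the sketch below computes a Spearman rank correlation between two score vectors, using average ranks for tied scores. It is a minimal standard-library implementation with hypothetical function names and toy data, not the authors' code.

```python
import math


def average_ranks(values):
    """Ranks starting at 1, with tied values receiving the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        mean_rank = (pos + end) / 2.0 + 1.0
        for k in range(pos, end + 1):
            ranks[order[k]] = mean_rank
        pos = end + 1
    return ranks


def pearson(x, y):
    """Product-moment correlation of two equally long lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman(x, y):
    """Spearman rank correlation: the Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical sum scores (with ties) and a reference ordering for four simulees
rho = spearman([1, 2, 2, 3], [1, 2, 3, 4])
```

Sum scores on short scales contain many ties, so the handling of tied ranks matters more here than it would for continuous scores.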

Person Classification

Because large rank correlations do not necessarily imply high agreement regarding sets of selected simulees (Bland & Altman, 1986), the Jaccard Index across conditions was computed. For

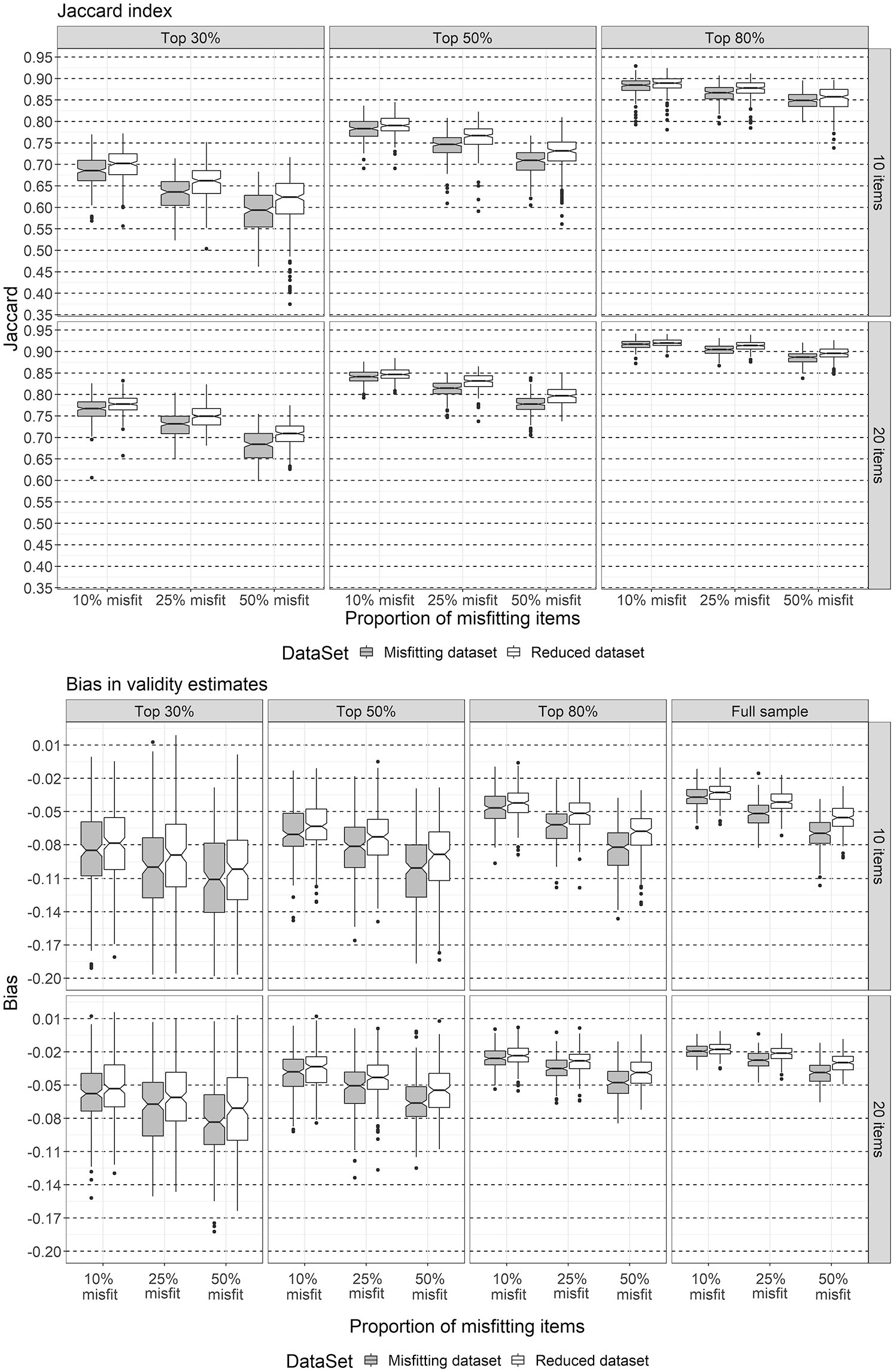

The distributions of the Jaccard Index (top panel) and of bias in criterion-related validity estimates (bottom panel) as a function of

The degree of overlap between sets of selected simulees, averaged over all conditions, was 0.809 (80.9% overlap), with a standard deviation of 0.09; 95% of the values of the Jaccard Index fell between 0.61 (about 61% overlap) and 0.94 (about 94% overlap). The ANOVA model with all main effects and two-way interactions accounted for 92.7% of the variation in the Jaccard Index. The variation was, to a large extent, accounted for by

Elaborating on the previous findings and focusing on the effects of selection ratio, scale length, proportion of items with

Bias in Criterion-Related Validity Estimates

The authors' results indicated that the bias in criterion validity estimates varied, on average, between −0.05 (

Bias in validity estimates was larger in the top 30% subsample (median of −0.09) compared to the full sample (median of −0.05). Cohen’s

Furthermore, the results showed that criterion-related validity was also affected by the proportion of misfitting items. For example, when the authors wanted to predict the scores on a criterion variable of the top 30% of the simulees using a short scale, the difference in bias between

Discussion

In this study, the effects of keeping or removing items that are often considered “unscalable” in many empirical MSA studies were evaluated. Many empirical studies using Mokken scaling either remove items with

Scale score reliability, person rank ordering, and bias of criterion-related validity estimates were most affected by the proportion of items with low scalability. The authors found a decrease of about .10 in reliability and of about .05 in the Spearman correlation as the proportion of misfitting items increased from 10% to 50%. Removing the misfitting items from the scales led to a slight improvement in reliability and rank correlation (by .04 and .02, respectively). Furthermore, short scales with many misfitting items resulted in an underestimation of the true validity of .11 when predicting the scores on a criterion variable of the top 30% of simulees. Removing the misfitting items reduced the bias by .01. Finally, the overlap between sets of selected simulees decreased by .07, on average, as the proportion of misfitting items increased, and removing the misfitting items improved the overlap by .03. Interestingly, the range of item scalability coefficients had a negligible effect on the outcomes the authors studied.

In line with previous findings, scale length, number of response categories, and selection rates also had an effect on the outcome variables (e.g., Crişan et al., 2017; Zijlmans et al., 2018). The item scalability coefficient is equivalent to a normed item-rest correlation, which, in turn, is used as an index of item-score reliability (e.g., Zijlmans et al., 2018). Therefore, it is not surprising that overall scale reliability decreased as the item scalability coefficients decreased. Moreover, it is well known that there is a positive relationship between scale length and reliability. This also partly explains the findings regarding the exclusion of misfitting items: Removing the misfitting items from the scales resulted in shorter scales, which had a negative impact on reliability.
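The relationship between scale length and reliability is commonly expressed by the Spearman-Brown formula. It is added here as a standard illustration and is not part of the authors' analysis. It assumes that the removed items are parallel to the retained ones, which misfitting items are not, so it describes only the pure length effect. The starting reliability of .87 and the halving of the scale are hypothetical values.

```latex
% Reliability of a scale whose length is changed by a factor k,
% given the reliability \rho of the original scale
\rho_k = \frac{k\,\rho}{1 + (k - 1)\,\rho}

% Hypothetical example: \rho = .87 and half of the items removed (k = 0.5)
\rho_{0.5} = \frac{0.5 \times .87}{1 + (0.5 - 1) \times .87} = \frac{.435}{.565} \approx .77
```

Under these assumptions, shortening alone lowers reliability. The gain from discarding weakly discriminating items therefore has to offset this loss, which is consistent with the small net improvements reported above.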

Take-Home Message

The take-home message from this study is that the consequences of keeping items that violate the rules of thumb often used in MSA item selection vary in magnitude. They depend on the characteristics of the scale (its length and number of response categories), on the specific use of the scale (e.g., to select a proportion of individuals from the total sample), and on the strength of the relationship between the scale scores and some criterion. The authors tentatively conclude the following:

The number of items with

Removing misfitting items from the scale improves practical outcome measures, but the effect is moderate at best. Based on these and previous findings, the authors do not recommend removing the misfitting items from the scales when there are no other (content) arguments to do so. The relatively small gains in reliability, person selection results, and predictive validity might not outweigh the loss in construct coverage and criterion validity.

The distance between the

On one hand, these findings are reassuring because, as discussed above, researchers are often not in a position to simply remove items from a scale (see also Molenaar, 1997a). It also discharges the researcher from trying to find opportunistic arguments for keeping an item in the scale with, say, a relatively low

On a more general note, one should keep in mind that items can exhibit other kinds of misfit apart from low scalability, such as violations of invariant item ordering or of local independence. Thus, adequate scalability does not mean that items are free from other potential model violations.

Limitations and Future Research

This study has the following limitations: (a) The data generation algorithm of the simulation study was based on a trial-and-error process to sample items with scalability coefficients within the desired range. A more refined method to generate the data could have improved the efficiency of the algorithm used here. (b) In this study, the authors only considered either dichotomous or polytomous items with a fixed number of response categories (i.e., either three or five) per replication. It is of interest to consider mixed-format test data in future studies. (c) The practical outcomes the authors considered here are by no means exhaustive or equally relevant in all situations. Depending on the type of data and the application purpose, other outcomes might also be relevant. Therefore, this type of research can be extended to other outcomes of interest. Moreover, other types of scalability (e.g., person scalability) could have important practical consequences. These aspects should be addressed in future research.

Supplemental Material

Supplemental material, Supplemental_Material for On the Practical Consequences of Misfit in Mokken Scaling by Daniela Ramona Crişan, Jorge N. Tendeiro and Rob R. Meijer in Applied Psychological Measurement

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
