Abstract

Research suggests that people spontaneously infer traits from behavioral information, thus forming impressions of actors’ personalities. Such spontaneous trait inferences (STI) have been examined in a wide range of studies in the last four decades. Here, we provide the first systematic meta-analysis of this vast literature. We included data from k = 86 publications, with overall N = 13,630 participants. The average STI effect was moderate to large (dz = 0.59) and showed substantial heterogeneity. The type of experimental paradigm significantly moderated the STI effect size, with larger effects in long-term memory–based paradigms compared with working memory–based paradigms. Generally, STI effects were robust to various methodological variations and also to potential concerns of publication bias. Contrary to expectations, cultural background (independent vs. interdependent) did not emerge as a significant moderator of STI effects. We discuss these findings with respect to their theoretical relevance and derive implications for future research and theorizing.

Theorizing and research on person perception have long worked with the assumption that people rapidly form impressions of others by drawing various inferences from their appearance and behavior (e.g., Uleman et al., 2008). Spontaneous trait inferences (STI) are a prominent and well-established phenomenon in this domain: people spontaneously infer personality traits from others’ behaviors (Uleman, Newman, & Moskowitz, 1996). For instance, upon observing or reading about a person who carries an old woman’s groceries across the street, people tend to infer that this person is helpful (Winter & Uleman, 1984).

A wealth of studies has examined STIs, investigating their underlying mechanisms, process characteristics, moderators, and boundary conditions with a multitude of experimental designs and in various samples across different countries. Several narrative reviews have summarized this literature (e.g., Hamilton & Stroessner, 2021; Moskowitz, 2005; Orghian et al., 2015; Uleman, 1987; Uleman et al., 2005, 2008, 2012; Uleman, Newman, & Moskowitz, 1996; Uleman & Saribay, 2018). Surprisingly, however, no quantitative review or systematic meta-analysis of STIs exists to date. Given the remarkable number of studies on this topic and the phenomenon’s assumed high robustness (Uleman et al., 2008), quantifying the average overall effect size of STIs is overdue. Moreover, the large variety of experimental paradigms, stimuli, and sample types in STI research calls for moderator analyses that systematically explore which conditions affect the occurrence and size of the STI effect. Our meta-analysis fills this gap by systematically analyzing the average STI effect size, its robustness and heterogeneity, and its potentially relevant moderators. We also expect this meta-analysis to facilitate theoretical advances in the field of STIs and to help identify open questions for future research. Furthermore, it provides valuable information for STI researchers who seek to calibrate their experimental designs to their research goals. In this introduction, we give a short overview of potentially relevant moderator variables; we discuss their meaning and implications for theorizing on STIs in impression formation in more detail later.

Experimental Paradigms for the Investigation of STIs

In the past, STI research focused mostly on investigating the assumed nonintentionality and spontaneity of person inferences from behavior. Pursuing this objective was initially hampered by the difficulty of developing appropriate assessment techniques (Uleman et al., 2008), but a number of indirect measurement procedures have since been proposed (see Supplemental Material A for a comprehensive overview). STI paradigms typically require participants to process trait-implying behavioral information about actors during an initial encoding phase (e.g., reading the statement “The reporter steps on his girlfriend’s feet as they foxtrot.”; Winter & Uleman, 1984). In a subsequent indirect test, which often involves some form of recognition or memory retrieval, participants are prompted with the previously implied trait words (e.g., clumsy) versus control words to examine whether task performance is enhanced or impaired in a way consistent with prior spontaneous person inferences (for an exception, see Orghian et al., 2017). Using these paradigms, research over the last four decades has provided extensive empirical support for the assumption that STIs are indeed person-specific inferences that emerge spontaneously during the encoding of actor and behavior information, even without the observer’s explicit intention or subjective awareness of this process (Orghian et al., 2015; Uleman et al., 2008; Uleman, Newman, & Moskowitz, 1996).

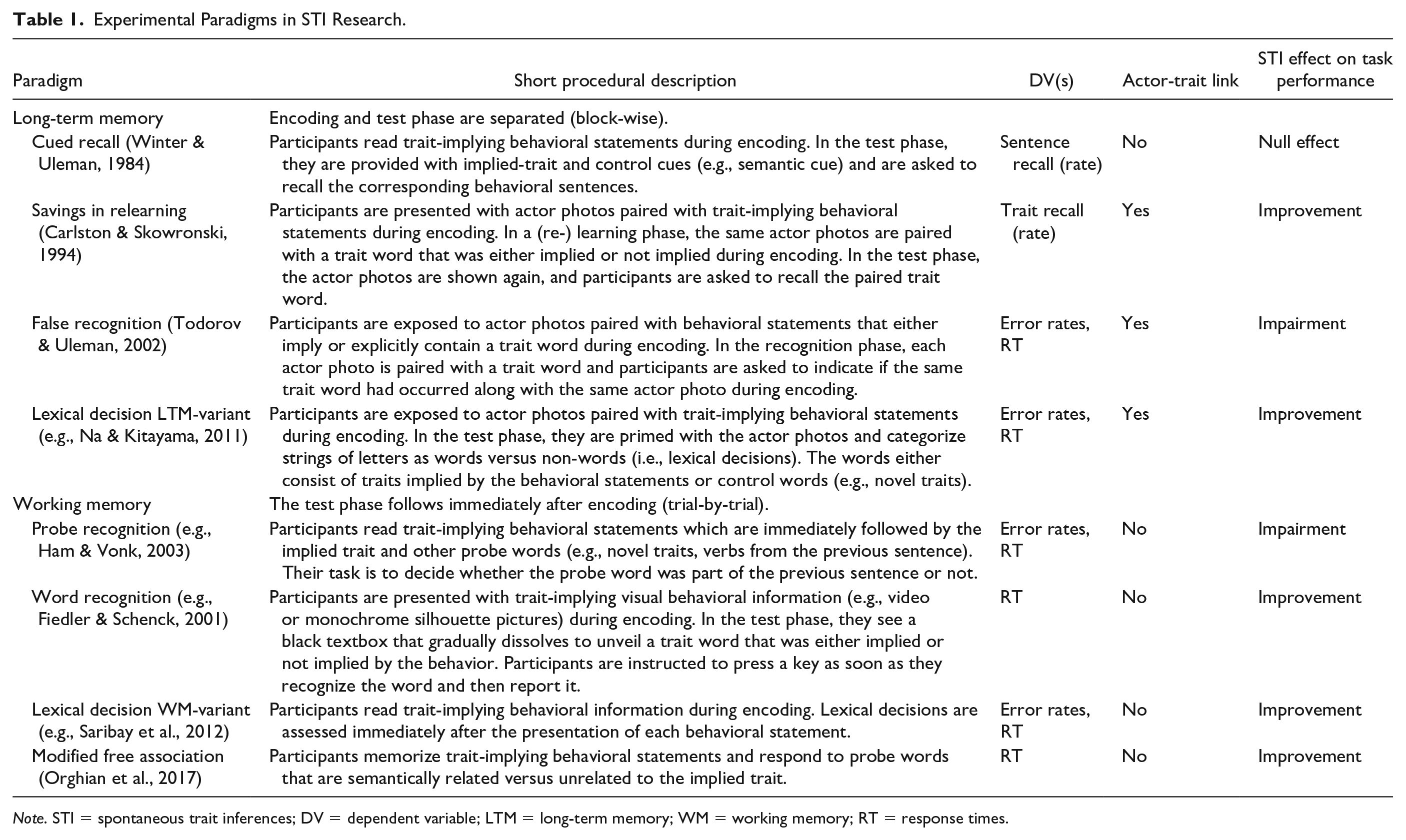

The procedural characteristics of these various experimental paradigms offer different yet complementary advantages but also methodological restrictions for the investigation of STIs that need to be considered when interpreting their outcomes (see overview in Table 1 and more detailed description in the Supplemental Material A). Systematically analyzing effect size differences between experimental paradigms could provide valuable insights from a methodological as well as a theoretical standpoint. First, and most obviously, observing significant STI effects in all paradigms would support the repeatedly stated robustness of the effect (Uleman et al., 2008). Second, investigating effect size differences between paradigms provides an informative empirical basis for choosing methodological procedures in future research. Third, and most importantly, a closer inspection of the individual paradigms may allow us to cluster them based on shared methodological characteristics that, in turn, allow further conclusions with regard to the underlying mechanisms of STIs.

Experimental Paradigms in STI Research.

Note. STI = spontaneous trait inferences; DV = dependent variable; LTM = long-term memory; WM = working memory; RT = response times.

Based on one predominant characteristic of the paradigms, we categorized them into working memory–based versus long-term memory–based paradigms. In working memory–based paradigms, the test phase immediately follows the encoding of behavioral information, typically on a trial-by-trial basis. These paradigms appear best suited to investigate STI effects during the spontaneous encoding of behavioral information. However, STI effects on task performance in these paradigms may rely predominantly on currently activated behavior–trait associations, which limits their interpretability with regard to the actor-specificity of trait inferences. In long-term memory–based paradigms, behavior encoding and test phase are structurally and temporally separated. More specifically, participants are first presented with trait-implying behavioral information, typically about several actors, and then, after an interval of varying duration that may or may not include filler tasks, enter a separate test phase. Consequently, performance in these paradigms additionally relies on long-term memory. Furthermore, the memory tests in these paradigms typically use actor information (photo, name, etc.) as a retrieval cue, which supports the conclusion that observed STI effects are indeed inferences about the actors rather than mere activations of behavior–trait associations (e.g., Todorov & Uleman, 2003).

Comparing the two groups of paradigms in our meta-analysis allows for a better differentiation of the processes relevant to STI formation and/or STI expression. For example, if STIs are indeed formed spontaneously upon encoding behavioral information (e.g., Uleman, Hon, et al., 1996), average effects of considerable size should already be visible in working memory–based paradigms. The comparison with the average effect size in long-term memory–based paradigms is equally informative. Smaller effect sizes in long-term than in working memory–based paradigms could indicate that spontaneously formed impressions fade over time (and thus may have little impact on enduring impression formation); such a result would also support the assumption that spontaneous inference effects reflect the mere activation of behavior–trait associations rather than inferences about actors (e.g., Todorov & Uleman, 2004; Uleman et al., 2012). Comparable effect sizes in both groups of paradigms, on the other hand, would support the assumption that STI effects indeed represent actor-specific inferences (Todorov & Uleman, 2002). Finally, larger effect sizes in long-term than in working memory–based paradigms may indicate that impression-based biases in retrieval processes play an additional role in STI effects.

Furthermore, the different experimental paradigms allow for different experimental manipulations, task instructions, stimulus materials and procedures, and types of control conditions for examining STIs. In addition, some paradigms allow researchers to choose among different dependent variables (DVs) for investigating STI effects. These methodological aspects of STI research also warrant meta-analytic investigation because they may have theoretically relevant implications.

Generalizability of STI Effects

Beyond the methodological variations in STI research, the current meta-analysis also allows us to examine the generalizability of STI effects across different sample characteristics. In addition to exploring gender and age differences, we focus on two characteristics that appear relevant for theoretical conceptualizations of the psychological foundations of STI effects with reference to cultural influences.

Cultural differences in STI effects

It has been repeatedly argued that person perception is culturally grounded and affected by cross-cultural differences in person construal and behavior attribution processes (e.g., Markus & Kitayama, 1991; Nisbett et al., 2001). For example, cultures with a predominantly independent person construal emphasize each individual’s uniqueness and autonomy in behavioral decisions, whereas cultures with a predominantly interdependent person construal emphasize the effects of the social and material context on persons and accentuate the individual’s social and situational connectedness (Triandis, 2018). Related research has documented that participants from interdependent cultures are more likely to attribute behaviors to situational contexts than to actors’ personal traits (e.g., Masuda & Kitayama, 2004; Miller, 1984; Miyamoto & Kitayama, 2002, 2018; Morris & Peng, 1994), although recent research could not replicate these differences (Carstensen et al., 2021). Similarly, previous research has documented more pronounced STI effects in participants with independent compared with interdependent cultural backgrounds (e.g., Lee et al., 2017; Na & Kitayama, 2011; Newman, 1991; Shimizu et al., 2017; Zárate et al., 2001).

We first investigate cultural differences by comparing STI effects in samples from countries that score relatively high in individualism and independence (i.e., European, North American) with samples from countries that score relatively high in collectivism and interdependence (i.e., Asian). We expect larger STI effects in individualistic than in collectivistic samples. We complement this analysis by using the country-level scores on Hofstede’s (2001) individualism–collectivism dimension as a continuous predictor of STI effect size. Finally, we include the country scores on the other five cultural value dimensions discussed by Hofstede (i.e., power distance, masculinity vs. femininity, uncertainty avoidance, long-term vs. short-term normative orientation, and indulgence vs. restraint) in an exploratory moderator analysis.

Method

We preregistered this meta-analysis with the Open Science Framework in line with the PRISMA-P checklist (Moher et al., 2015), adapted to our nonclinical research focus. After the coding process, we added an addendum there that specifies our analysis strategy and documents several adjustments to the preregistered procedure; all data and analysis code are also available there (https://osf.io/d5x9p).

Literature Search and Eligibility Criteria

We searched the PsycINFO, Web of Science, ProQuest, and EBSCO databases and Google Scholar for the terms “spontaneous trait inference” OR “spontaneous trait inferences,” without restricting the publication date. In addition, we sent out open calls for unpublished studies on July 14 and 16, 2019, via the open forum of the Society for Personality and Social Psychology and the email service of the European Association of Social Psychology. Finally, we conducted a backward search based on the reference lists of all included publications and a forward search screening all records citing included publications.

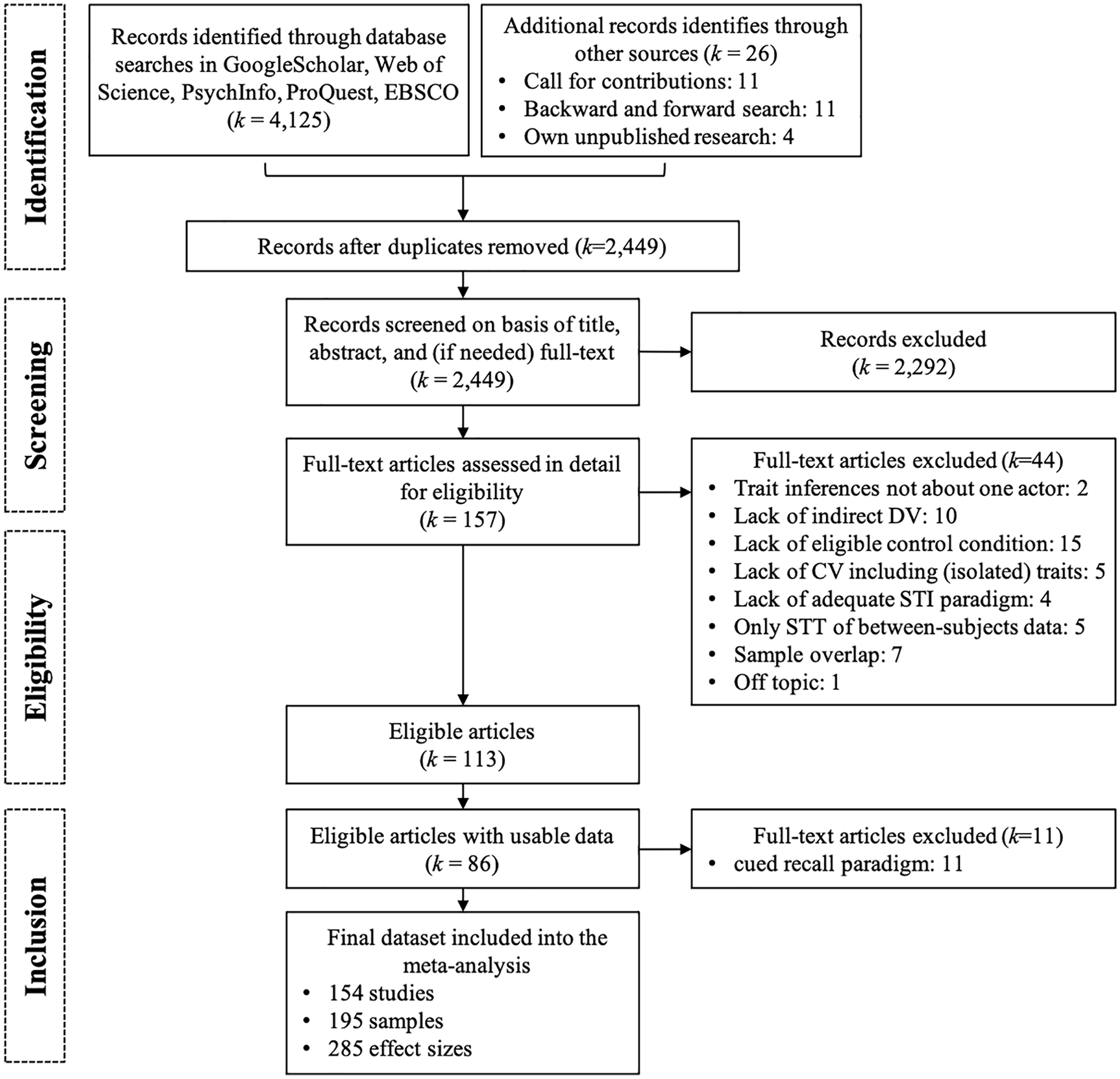

The database search was completed on October 25, 2018, and yielded 2,449 records after deleting duplicates (see Figure 1). Each record was screened for eligibility by two of the five independent coders, except for records in languages other than English, which were screened by only one coder each. Any discrepancies were resolved by discussion with the senior author (J.D.) if needed. We included studies meeting the following criteria:

Studies examining STIs from observed or reported human behavior. We excluded studies examining other inferences from behaviors (e.g., situation inferences, goal inferences, or other state inferences). We also excluded studies on trait inferences from information other than human behavior, for example, from faces, brands, contextual information, the behavior of animals, or attribute conditioning studies.

The traits had to be inferred about individual human actors. We excluded studies investigating trait inferences about groups of people, social categories, organizations, or animals.

The DV used had to refer to person-describing traits. We therefore excluded studies that used behavior predictions or person evaluations.

The measurement of STIs had to be indirect, for instance using error rates and/or response times in response to trait probes. We thus excluded studies using direct measures of trait impressions, such as free-response impression descriptions, rating scales, or behavior predictions.

We excluded effect sizes based on brain imaging techniques (e.g., functional magnetic resonance imaging and electroencephalography) because such effect sizes are difficult to compare with those based on the more frequently used DVs (at least in this meta-analytic context).

We included only studies conducted with nonclinical samples of native speakers.

Because of methodological criticisms (D’Agostino & Beegle, 1996) and because its effect size estimates are not comparable with those of all other paradigms, we excluded all studies that used the cued-recall paradigm (see detailed explanation in the Supplemental Material A). This exclusion criterion was not preregistered; it was applied only after a review of a previous version of this manuscript.

Flowchart of the coding process.

Coding Procedure

We coded all eligible publications according to an adapted version of our preregistered procedure. Each study published in English or German was coded by two of the five first authors. Studies published in Portuguese were coded by the only Portuguese-speaking author (F.K.) of this article and verified by a second author (A.B.) with the help of a native speaker of Portuguese. We coded each eligible effect size separately, allowing for the inclusion of multiple contrasts (and other within-person conditions) within samples, multiple samples within studies, and multiple studies within publications. In cases of insufficient reporting of the required information, we contacted the authors of the respective publication. 1

Recorded Variables

Based on narrative reviews of STIs, we had initially identified several potentially relevant moderator variables that, however, could not be coded from the available literature. Furthermore, we excluded from the analyses all moderator levels for which fewer than five effect sizes were available (see Supplemental Table S6 for a comprehensive list). In the following sections, we describe only those variables that were included in the analyses reported in this article. Our Open Science Framework project contains a comprehensive overview of the complete coding scheme, along with the preregistration and its addendum (see https://bit.ly/3cHAJa8 and the Supplemental Material B).

Experimental paradigms

We coded research paradigms as savings in relearning, false recognition, lexical decision, probe recognition, or word recognition. We also coded cued recall, which was, however, excluded from the main analyses. 2 Because researchers sometimes used different labels for these paradigms, we based coding on the procedural descriptions provided (see Supplemental Material A). We additionally clustered the savings in relearning and false recognition paradigms as long-term memory–based because they structurally and temporally separate the learning of actor–behavior information from the memory test, using various retention intervals with or without filler and distractor tasks. Accordingly, we clustered the probe recognition and word recognition paradigms as working memory–based because they implement recognition tests immediately after each actor–behavior statement. Lexical decision paradigms were categorized as working memory–based if the lexical decision task was implemented on a trial-by-trial basis and as long-term memory–based if it was implemented as a block-wise test following a separate learning phase.
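The clustering rule above amounts to a small decision procedure. The following Python sketch encodes it for illustration only; the paradigm label strings, the function name `paradigm_type`, and the `lexical_testing` argument are hypothetical and are not fields of the published coding scheme. Cued recall, which was excluded from the main analyses, is deliberately left unclustered.

```python
from typing import Optional

# Clusters as described in the text; label strings are illustrative assumptions.
LTM_PARADIGMS = {"savings in relearning", "false recognition"}
WM_PARADIGMS = {"probe recognition", "word recognition"}


def paradigm_type(paradigm: str, lexical_testing: Optional[str] = None) -> str:
    """Return 'LTM' (long-term memory-based) or 'WM' (working memory-based)."""
    p = paradigm.strip().lower()
    if p in LTM_PARADIGMS:
        return "LTM"
    if p in WM_PARADIGMS:
        return "WM"
    if p == "lexical decision":
        # Trial-by-trial testing counts as WM; block-wise testing after a learning phase as LTM.
        if lexical_testing == "trial-by-trial":
            return "WM"
        if lexical_testing == "block-wise":
            return "LTM"
        raise ValueError("lexical decision requires 'trial-by-trial' or 'block-wise'")
    # Anything else (e.g., cued recall, excluded from the main analyses) is not clustered.
    raise ValueError(f"paradigm not clustered: {paradigm!r}")


print(paradigm_type("False recognition"))                   # LTM
print(paradigm_type("lexical decision", "trial-by-trial"))  # WM
```

Making lexical decision depend on an explicit testing mode mirrors the text: the paradigm label alone does not determine the cluster.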

Instructions during behavior encoding

We coded the following instructions: impression formation, memorization, and familiarization. Note that several studies included manipulations of instructions but did not provide effect sizes separately for each condition such that we could not include them in this moderator analysis.

Contrasts

We coded all relevant STI contrasts for each given DV. We compared the following contrasts: implied versus implied other, implied versus novel trait, and implied versus antonym trait.

Dependent variables

We coded DVs as response times, error rates/accuracy, open recall of sentence parts, and recall of sentences. We were able to conduct moderator analyses including different DVs only for the probe recognition and the false recognition paradigm (RTs vs. error rates).

Stimulus and procedural characteristics

We recorded the following stimulus characteristics: number of tested traits (continuous), type of actor information (verbal and visual, verbal only, visual only), type of verbal actor information (name, pronoun, label/profession), type of verbal behavior description (sentence, paragraph), and verbal behavior wording (first person, third person). We coded the following procedural characteristics: filler task (absent, present) and the time interval between encoding and test phase, coded both continuously (in seconds for the working memory–based paradigms; in minutes for the long-term memory–based paradigms) and categorically (minutes vs. days in the savings in relearning paradigm).

Sample demographics

We recorded the mean age of the sample (continuously), sample type (children, students, adults), and the percentage of males within the sample (continuously).

Cultural differences

To establish the cultural background of the samples, we recorded sample nationality and sample ethnicity. If neither was explicitly reported, we used the location of the study or the first author’s affiliation as indirect indicators of sample nationality. First, we categorized the apparently monocultural samples from Belgium, Canada, Germany, Italy, the Netherlands, Portugal, the United Kingdom, and the United States as “independent” and the monocultural samples from China and Japan as “interdependent.” 3 Although we had additionally planned to include bicultural samples (e.g., Asian Americans, Asian Canadians), we had to drop this subgroup analysis because only k = 4 effect sizes were eligible. Second, we retrieved the country-level scores on the six Hofstede (2001) dimensions for all included sample nationalities (https://www.hofstede-insights.com) as continuous indirect indicators of the samples’ cultural backgrounds.

Additional variables

Finally, we coded the publication status (published, unpublished), year of publication (continuously), and impact factor (continuously) of each record.

Coding of Statistical Information

For each contrast, we coded the following statistical information, if available: means, standard deviations, standard errors, sample sizes, t values, F values, p values, correlations between repeated measurements, Cohen’s d, η², partial η² (η²p), degrees of freedom, and any transformations applied to the data. Finally, we coded whether the results showed an STI-consistent effect.

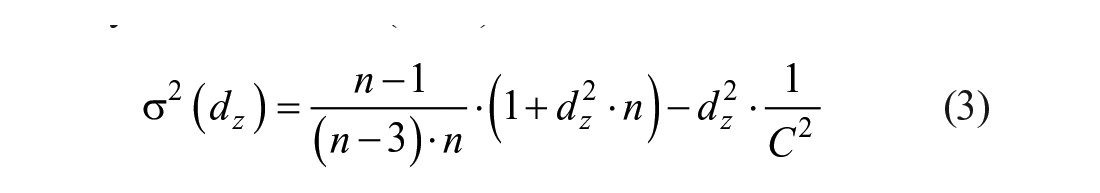

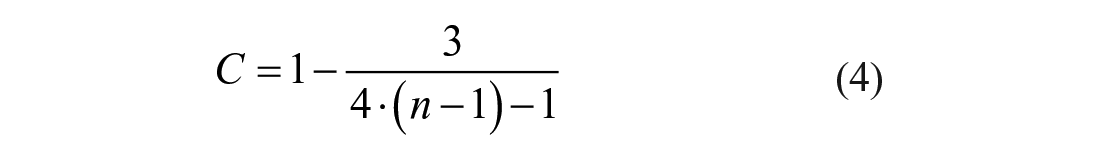

Effect Size Calculation

Given that almost all STI effects were based on within-subjects contrasts, we used the effect size dz (Lakens, 2013), which represents the mean difference between two within-subject conditions standardized by the standard deviation of this difference score. We used the equations provided by Lakens (2013) and Rosenthal (1991) to calculate dz from reported t-statistics and F-statistics:
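In their standard form for a paired (within-subjects) contrast, these conversions are d_z = t / √n (Lakens, 2013) and, for an F test with one numerator degree of freedom, |t| = √F. The Python sketch below illustrates them under the assumption that n is the number of participants contributing to the contrast; the function names are hypothetical, and the sketch is not claimed to reproduce the authors’ exact equations. Because F carries no sign, the direction of the effect (STI-consistent or not) must be supplied separately.

```python
import math


def dz_from_t(t: float, n: int) -> float:
    """Cohen's d_z for a paired (within-subjects) contrast: d_z = t / sqrt(n)."""
    return t / math.sqrt(n)


def dz_from_f(f: float, n: int, sti_consistent: bool = True) -> float:
    """d_z from F(1, n - 1) for a within-subjects contrast.

    With one numerator df, F = t**2, so |t| = sqrt(F). F has no sign, so the
    direction of the effect is passed in explicitly.
    """
    t = math.sqrt(f)
    return dz_from_t(t if sti_consistent else -t, n)


# Example: t(24) = 3.0 from n = 25 participants gives d_z = 3 / 5
print(dz_from_t(3.0, 25))  # 0.6
```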

When t or F values were not available, we calculated t values from the available information (i.e., difference scores with standard deviations or standard errors, exact p values, or means with standard deviations and the correlation between conditions). When no exact sample size or degrees of freedom were available for the effect size of interest, the sample size was approximated by dividing the overall sample size by the number of groups (using random allocation in R when the total was not evenly divisible). The sampling variance of dz was calculated using Equations 3 and 4 of Gibbons et al. (1993):
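The computational steps in this paragraph can be sketched in Python as follows. The paired-t formulas are standard. The sampling variance uses the common large-sample approximation Var(d_z) ≈ 1/n + d_z²/(2n); this is an assumption for illustration and is not necessarily identical to Equations 3 and 4 of Gibbons et al. (1993). `split_n` is a hypothetical helper mimicking an even split with a randomly allocated remainder. Converting an exact two-sided p value to t additionally requires a t quantile function (e.g., `scipy.stats.t.isf(p / 2, n - 1)`), which is omitted here to keep the sketch dependency-free.

```python
import math
import random


def t_from_diff(mean_diff, sd_diff, n):
    """Paired t from the mean and SD of the difference scores."""
    return mean_diff / (sd_diff / math.sqrt(n))


def t_from_diff_se(mean_diff, se_diff):
    """Paired t from the mean difference and its standard error."""
    return mean_diff / se_diff


def t_from_means(m1, sd1, m2, sd2, r, n):
    """Paired t from condition means and SDs plus the correlation r between conditions."""
    sd_diff = math.sqrt(sd1 ** 2 + sd2 ** 2 - 2 * r * sd1 * sd2)
    return t_from_diff(m1 - m2, sd_diff, n)


def var_dz_approx(dz, n):
    """ASSUMED large-sample approximation of Var(d_z); may differ from Gibbons et al. (1993)."""
    return 1 / n + dz ** 2 / (2 * n)


def split_n(total_n, n_groups, seed=None):
    """Hypothetical helper: split total_n evenly across groups and give the
    remainder (if any) to randomly chosen groups, one extra participant each."""
    base, remainder = divmod(total_n, n_groups)
    sizes = [base] * n_groups
    rng = random.Random(seed)
    for g in rng.sample(range(n_groups), remainder):
        sizes[g] += 1
    return sizes
```

For example, `t_from_means(10, 2, 8, 2, 0.5, 16)` gives an SD of the difference of 2 and hence t = 4, which `dz_from_t`-style conversion (t / √n) would turn into d_z = 1.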

Meta-Analytic Models

We included all relevant effect sizes from each sample, such that many samples yielded multiple dependent effect sizes (e.g., when multiple DVs or STI contrasts were reported). This creates statistical dependencies among effect sizes from the same sample. One way to address these dependencies is multilevel meta-analysis, which models effect sizes as nested in samples (e.g., Cheung, 2014; Van den Noortgate et al., 2013). Although this method does not model the dependencies exactly, given the absence of full information about the covariance of effect sizes, simulation studies have generally supported its use (e.g., López-López et al., 2017; Moeyaert et al., 2017; Van den Noortgate et al., 2013). In addition to the inclusion of multiple effect sizes per sample, samples of participants were nested in studies, and studies were nested in publications. We therefore included “study” and “publication” as further levels in our multilevel meta-analysis (Fernández-Castilla et al., 2020). This resulted in a five-level meta-analytic model: Level 1: sampling variance of effect sizes; Level 2 (effect sizes): variation between effect sizes within samples; Level 3 (samples): variation between samples within studies; Level 4 (studies): variation between studies within publications; and Level 5 (publications): variation between publications. We implemented the multilevel meta-analyses using the function rma.mv from the R package metafor (Version 2.0.0; Viechtbauer, 2010) in the open-source statistics software R (Version 3.4.3; R Core Team, 2018).
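One way to write this five-level model, in notation chosen here for illustration (the indices are an assumption, not the authors’ own): effect size i in sample j of study k in publication l is decomposed into the overall mean and independent random deviations at each level, with the known sampling variance v at Level 1.

$$
\begin{aligned}
y_{ijkl} &= \mu + u^{(5)}_{l} + u^{(4)}_{kl} + u^{(3)}_{jkl} + u^{(2)}_{ijkl} + e_{ijkl},\\
u^{(m)} &\sim N\!\left(0, \tau_m^2\right),\quad m = 2, \dots, 5, \qquad e_{ijkl} \sim N\!\left(0, v_{ijkl}\right),\\
\operatorname{Var}(y_{ijkl}) &= \tau_2^2 + \tau_3^2 + \tau_4^2 + \tau_5^2 + v_{ijkl}.
\end{aligned}
$$

Here μ is the average STI effect, and τ2 to τ5 are the level-specific standard deviations; fixing τ3 = 0 gives a four-level model. In metafor, this kind of nesting is typically specified through the `random` argument of `rma.mv`, for example `random = ~ 1 | publication/study/sample/es_id` (variable names hypothetical).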

Main Analysis

For the main analysis, we fitted the five-level meta-analysis without additional predictors. The intercept of this model quantified the average overall STI effect size. Heterogeneity was assessed with an overall Q-test, by interpreting the square roots of the variance estimates from the five-level model (i.e., the standard deviations at Levels 2, 3, 4, and 5: τ2, τ3, τ4, and τ5, respectively), and with likelihood ratio tests of the fit improvement when individual variance parameters were included versus excluded. Because the variance at Level 3 (samples) was estimated as zero, this parameter was removed from further analyses, yielding a four-level meta-analytic model.
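As an illustration of the two heterogeneity tools named above, the following Python sketch computes Cochran’s Q with inverse-variance weights (w = 1/v; df = k − 1, so 285 effect sizes give the Q(284) reported in the Results) and converts a likelihood ratio statistic, 2(logL_full − logL_reduced), into a p value via the chi-square upper tail. The functions are hypothetical stdlib-only helpers; in practice the log-likelihoods come from the fitted models. When a variance component is tested against zero, the plain chi-square reference distribution is known to be conservative.

```python
import math


def q_statistic(effects, variances):
    """Cochran's Q with inverse-variance weights; returns (Q, df = k - 1)."""
    weights = [1.0 / v for v in variances]
    mean_fe = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - mean_fe) ** 2 for w, y in zip(weights, effects))
    return q, len(effects) - 1


def chi2_sf(x, df):
    """Upper-tail probability P(X > x) for a chi-square variable with integer df >= 1."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{k=0}^{df/2 - 1} (x/2)^k / k!
        term = total = 1.0
        for k in range(1, df // 2):
            term *= half / k
            total += term
        return math.exp(-half) * total
    # Odd df: erfc(sqrt(x/2)) + sqrt(2x/pi) * exp(-x/2) * sum_{r=1}^{(df-1)/2} x^(r-1) / (1*3*...*(2r-1))
    p = math.erfc(math.sqrt(half))
    if df > 1:
        term = math.sqrt(2.0 * x / math.pi) * math.exp(-half)
        total = term
        for r in range(2, (df - 1) // 2 + 1):
            term *= x / (2 * r - 1)
            total += term
        p += total
    return p


def lrt(loglik_full, loglik_reduced, df):
    """Likelihood ratio statistic 2 * (logL_full - logL_reduced) and its p value."""
    stat = 2.0 * (loglik_full - loglik_reduced)
    return stat, chi2_sf(stat, df)
```

For example, `chi2_sf(9.64, 2)` is about .008, consistent with the p value reported for the instruction moderator in the Results.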

Moderator Analyses

All moderator analyses were implemented as four-level meta-analytic models with additional predictors. Given the high level of uncertainty with which variance components are estimated in the case of few effect sizes (Bender et al., 2018), moderator analyses based on few effects have to be interpreted with caution. In the first step, we examined the effects of paradigm (categorical; all levels with at least 5 effect sizes included) and paradigm type (long-term memory vs. working memory). Both were tested using likelihood ratio tests comparing the model with the predictor against a model without predictors. Given the pronounced effects of the paradigm, we controlled for it in all further moderator analyses. Thus, in line with the addendum to our preregistration (https://bit.ly/3cHAJa8), we created a base model with paradigm (categorical) as a predictor. 4 Further moderators were tested using likelihood ratio tests comparing the base model with a model including the base predictor (paradigm) and the additional moderator.

For all moderators, we also examined regression coefficients but based statistical inference on likelihood ratio tests. To facilitate interpretation, the most frequent level of each categorical moderator served as the reference level (i.e., the intercept). Continuous moderators were z-standardized. In addition to regression coefficients, we examined the empirical overall effect size for each level of categorical moderators. These empirical effect sizes were computed as the intercept of a four-level model fitted only to the data in which the moderator of interest had the specified level. 5 We report 95% confidence intervals for all effect size estimates and regression coefficients.
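The coding conventions in this paragraph can be sketched as treatment (dummy) coding with the most frequent level as the reference category, plus z-standardization of continuous moderators. The Python sketch below uses hypothetical function names; it assumes the sample standard deviation (n − 1 denominator), as in R’s `scale()`, and breaks frequency ties by first occurrence, a choice the text does not specify.

```python
from collections import Counter
from statistics import mean, stdev


def reference_level(levels):
    """Most frequent level; ties are broken by first occurrence (Counter ordering)."""
    return Counter(levels).most_common(1)[0][0]


def dummy_code(levels):
    """Treatment coding: returns (reference level, {other level: 0/1 indicator column})."""
    ref = reference_level(levels)
    others = sorted(set(levels) - {ref})
    return ref, {lvl: [1 if x == lvl else 0 for x in levels] for lvl in others}


def z_standardize(values):
    """z-scores using the sample SD (n - 1 denominator)."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


ref, columns = dummy_code(["memorization", "familiarization", "memorization", "impression formation"])
print(ref)              # memorization
print(sorted(columns))  # ['familiarization', 'impression formation']
```

With this coding, each regression coefficient is the difference from the most frequent level, which matches how the β values in the moderator tables are described.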

In general, we applied the following inclusion rule: We included all moderator levels with five or more effect sizes and all continuous moderators with at least five effect sizes. Because paradigm was included in the base model, we excluded, for any given moderator analysis, all paradigms with fewer than five effect sizes and all paradigms categorized as other. For some moderators, however, merely controlling for paradigm was not reasonable because the moderator could only be meaningfully examined within certain paradigms. In these cases, moderator analyses were conducted within individual paradigms or sets of paradigms. This applied to the following moderators: instruction (only meaningful for long-term memory paradigms), DV (examined within single paradigms), filler task (only meaningful for long-term memory paradigms), and the time interval between encoding and test phase (examined separately for working memory paradigms, with intervals in [milli-]seconds; long-term memory paradigms, with intervals in minutes; and the savings in relearning paradigm, with intervals ranging from minutes to days).
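A minimal Python sketch of the five-effect-size inclusion rule for a categorical moderator, assuming each effect size is a dict with a `paradigm` key and a key for the moderator (hypothetical field names). The filtering order (missing values, then moderator levels, then paradigms) is an assumption; because it is a single pass, a moderator level could in principle drop below five after paradigms are removed, which a real pipeline would need to check.

```python
from collections import Counter

MIN_K = 5  # minimum number of effect sizes per level, as stated in the text


def eligible(values, min_k=MIN_K, exclude=()):
    """Levels that occur at least min_k times and are not explicitly excluded."""
    return {v for v, k in Counter(values).items() if k >= min_k and v not in exclude}


def moderator_subset(rows, moderator, min_k=MIN_K):
    """Effect sizes usable for one categorical moderator analysis (assumed single-pass order)."""
    # 1) Drop effect sizes for which the moderator was not coded.
    rows = [r for r in rows if r.get(moderator) is not None]
    # 2) Drop moderator levels with fewer than min_k effect sizes.
    keep_mod = eligible([r[moderator] for r in rows], min_k)
    rows = [r for r in rows if r[moderator] in keep_mod]
    # 3) Drop paradigms with fewer than min_k effect sizes or coded as "other".
    keep_par = eligible([r["paradigm"] for r in rows], min_k, exclude={"other"})
    return [r for r in rows if r["paradigm"] in keep_par]
```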

Publication Bias

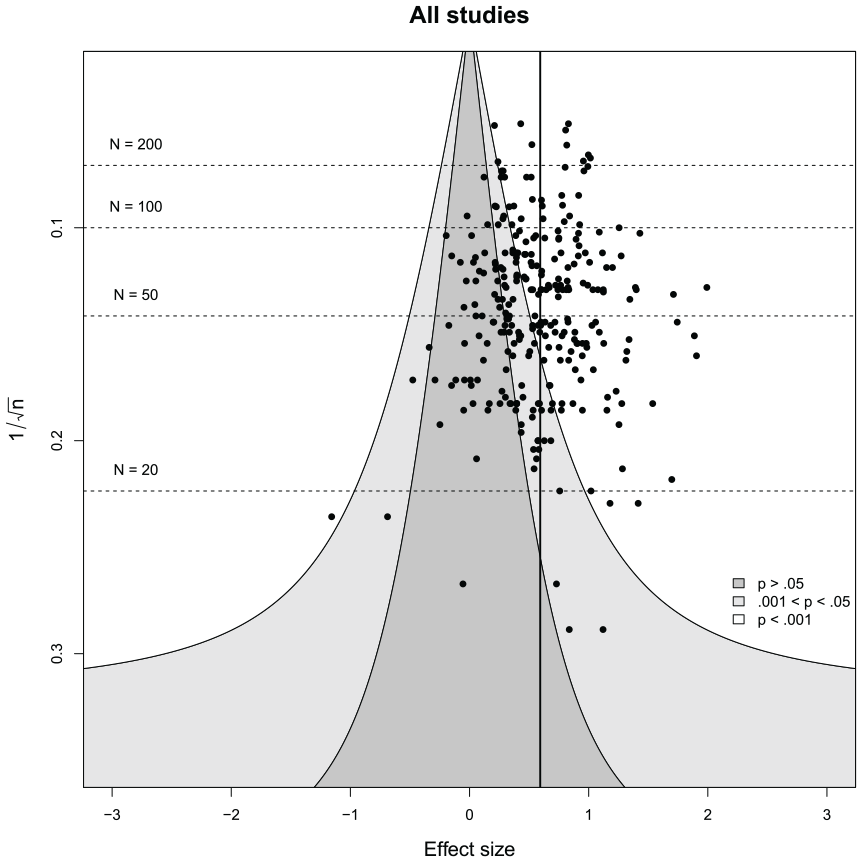

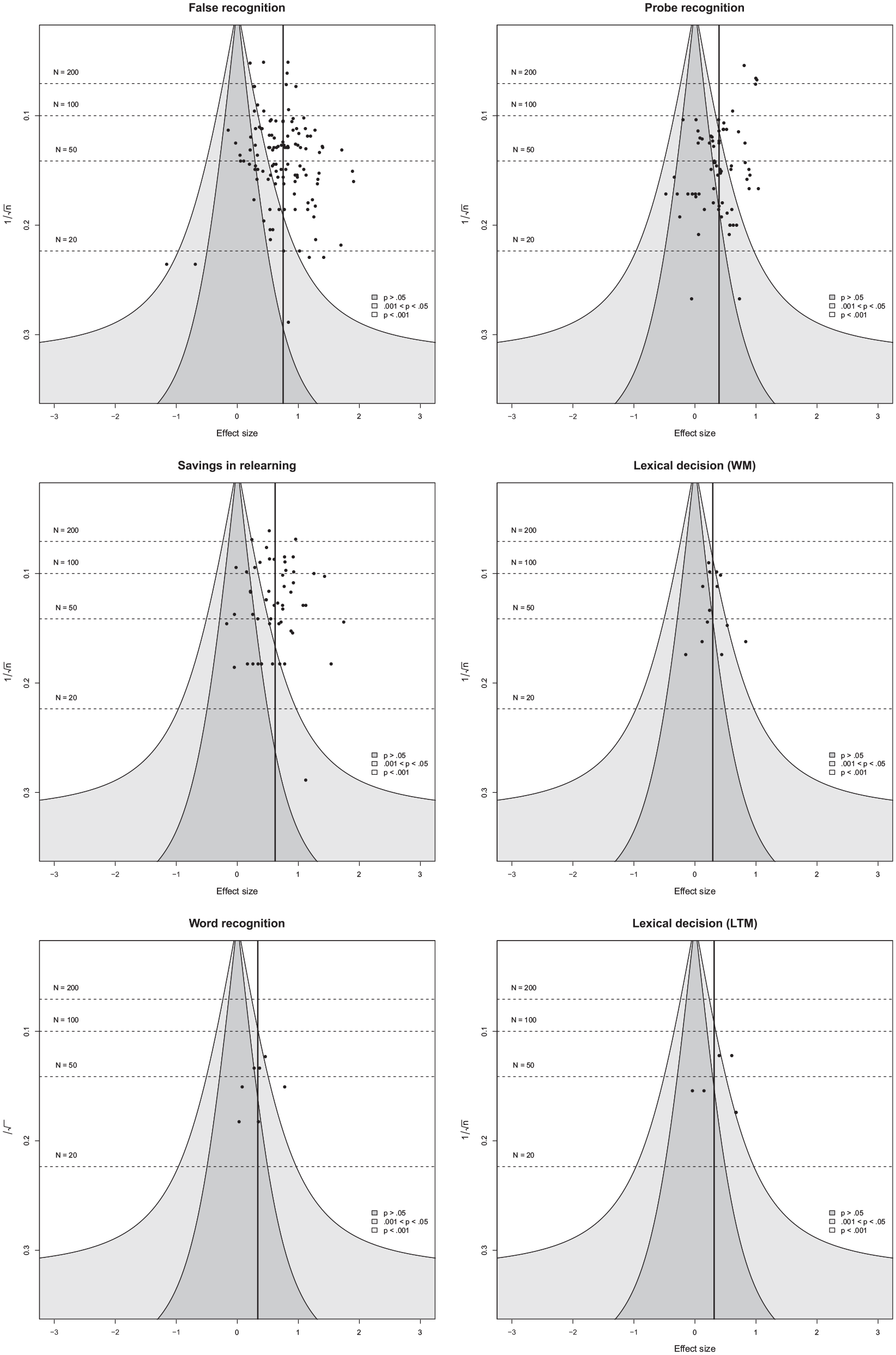

The potential role of publication bias (Rothstein et al., 2005) was examined using three approaches: First, we visually examined funnel plot asymmetry (using

Results

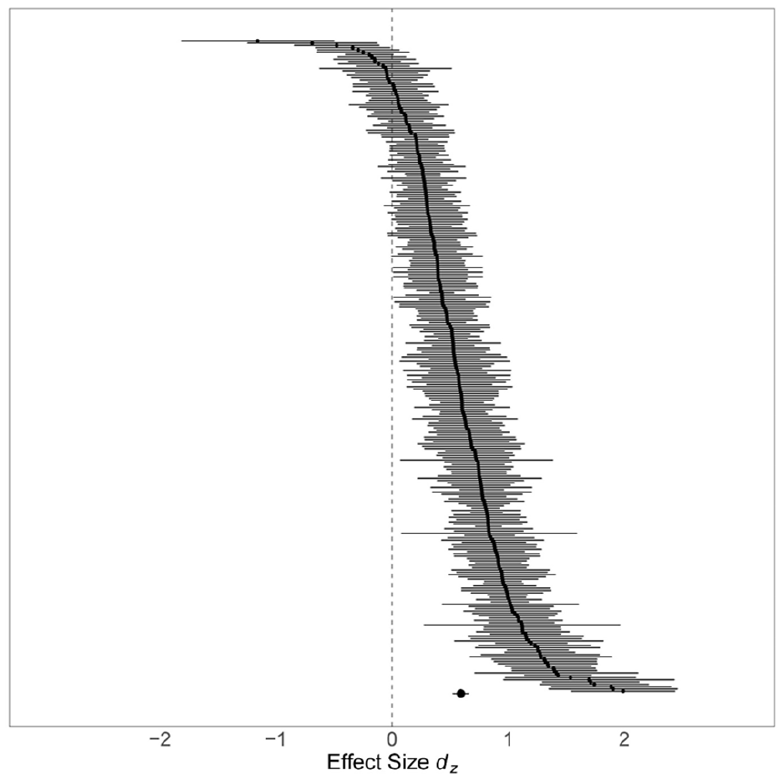

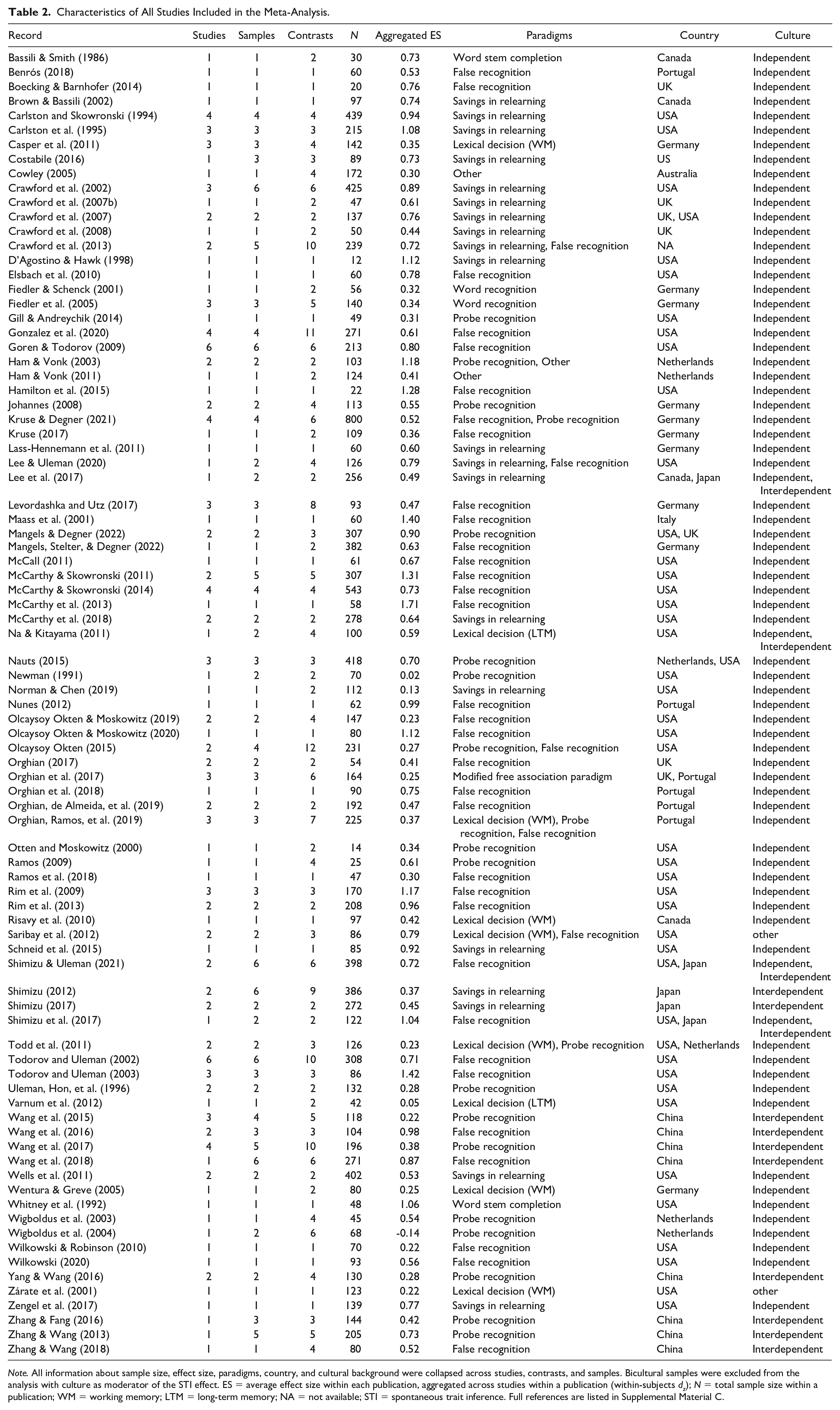

The screening process resulted in 102 eligible publications (see flowchart in Figure 1), of which 86 provided sufficient data for coding and effect size calculation for our main analyses (partly obtained by contacting authors). We included 285 effect sizes from 195 independent samples in 154 studies, with N = 13,630 participants. For the forest plot of all effect sizes, see Figure 2; for a detailed overview of the included studies, see Table 2. The full reference list of all included studies is provided in Supplemental Material C. Additional analyses of data from eleven publications using the cued-recall paradigm are reported in Supplemental Table S1.

Forest plot of effect sizes included in the meta-analysis.

Characteristics of All Studies Included in the Meta-Analysis.

Note. All information about sample size, effect size, paradigms, country, and cultural background were collapsed across studies, contrasts, and samples. Bicultural samples were excluded from the analysis with culture as moderator of the STI effect. ES = average effect size within each publication, aggregated across studies within a publication (within-subjects dz); N = total sample size within a publication; WM = working memory; LTM = long-term memory; NA = not available; STI = spontaneous trait inference. Full references are listed in Supplemental Material C.

Main Analysis

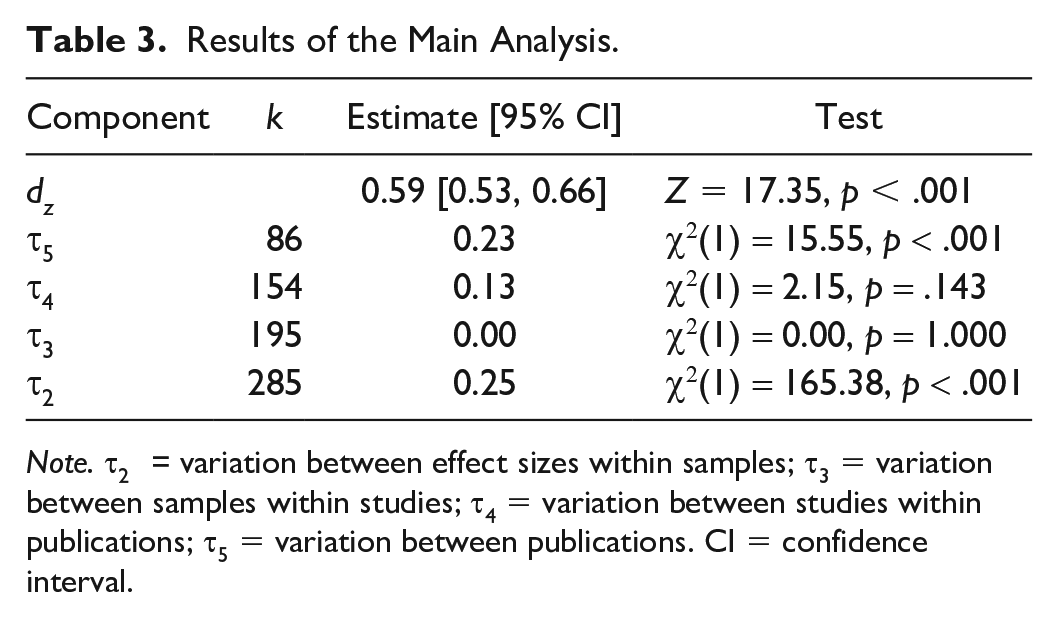

The main analysis indicated an overall STI effect of dz = 0.59, Z = 17.35, p < .001, with strong evidence for heterogeneity between effect sizes, Q(284) = 1,971.55, p < .001. The standard deviation of effect sizes was highest for effect sizes within samples, followed by variation between publications and between studies in publications (see Table 3). The corresponding standard deviations were substantial in magnitude, suggesting large amounts of variance that could be explained by moderators. The heterogeneity was estimated as zero on the level of samples within studies and was thus removed for further analyses.

Results of the Main Analysis.

Note. τ2 = variation between effect sizes within samples; τ3 = variation between samples within studies; τ4 = variation between studies within publications; τ5 = variation between publications. CI = confidence interval.

Moderator Analyses

Supplemental Table S4 provides descriptive statistics for all moderator variables along with intercorrelations of continuous moderators, and Supplemental Table S5 provides an overview of co-occurrences of categorical moderator levels.

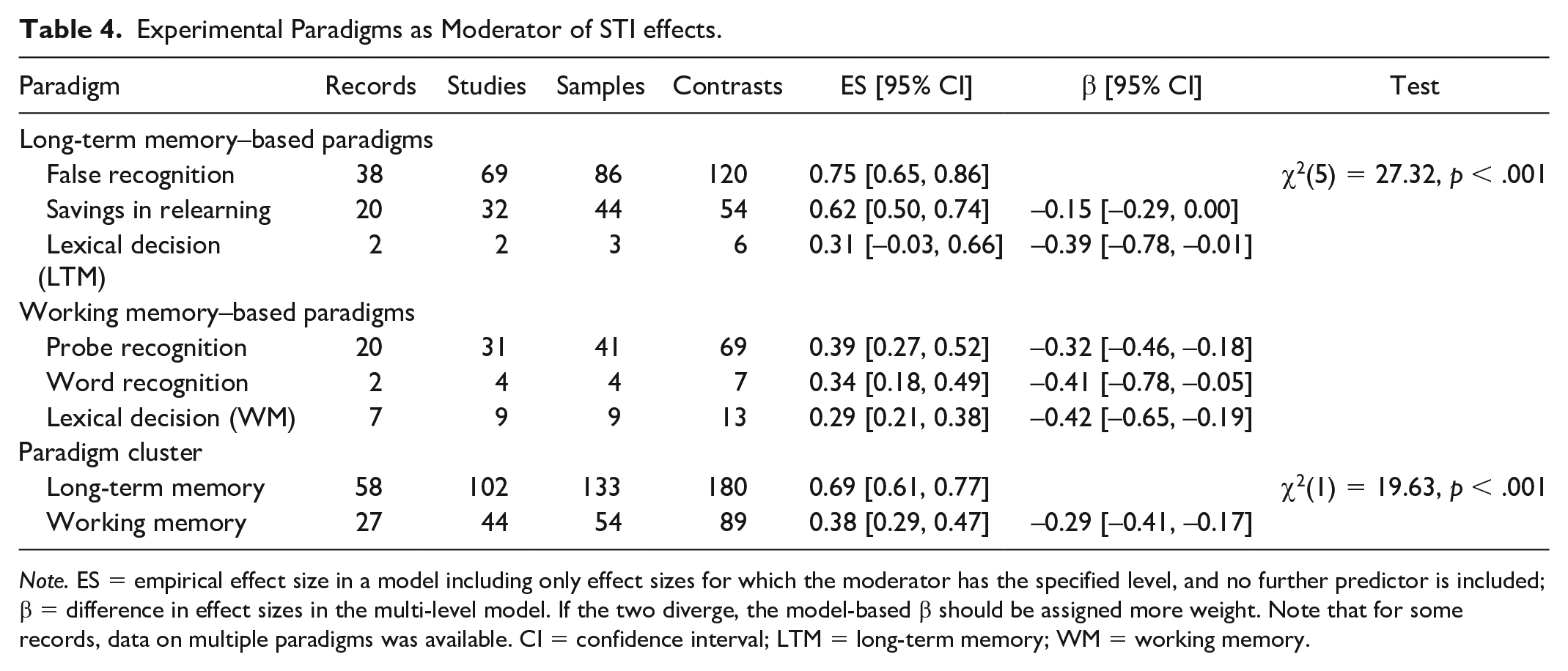

Experimental paradigm

The analysis of paradigm as a moderator of the overall STI effect indicated significant and substantial differences between experimental paradigms (see Table 4). The effect size was highest for the false recognition paradigm, followed by the savings in relearning paradigm. Overall, long-term memory–based paradigms yielded significantly larger effect sizes (dz = 0.69) than working memory–based paradigms (dz = 0.38), χ2(1) = 19.63, p < .001. Nevertheless, STI effects were evident in all paradigms, ranging from dz = 0.29 for lexical decision (WM) to dz = 0.75 for false recognition, and were significant in all cases except lexical decision (LTM), possibly because of the small number of studies (see Table 4).

Experimental Paradigms as Moderator of STI effects.

Note. ES = empirical effect size in a model including only effect sizes for which the moderator has the specified level, and no further predictor is included; β = difference in effect sizes in the multilevel model. If the two diverge, the model-based β should be assigned more weight. Note that for some records, data on multiple paradigms were available. CI = confidence interval; LTM = long-term memory; WM = working memory.

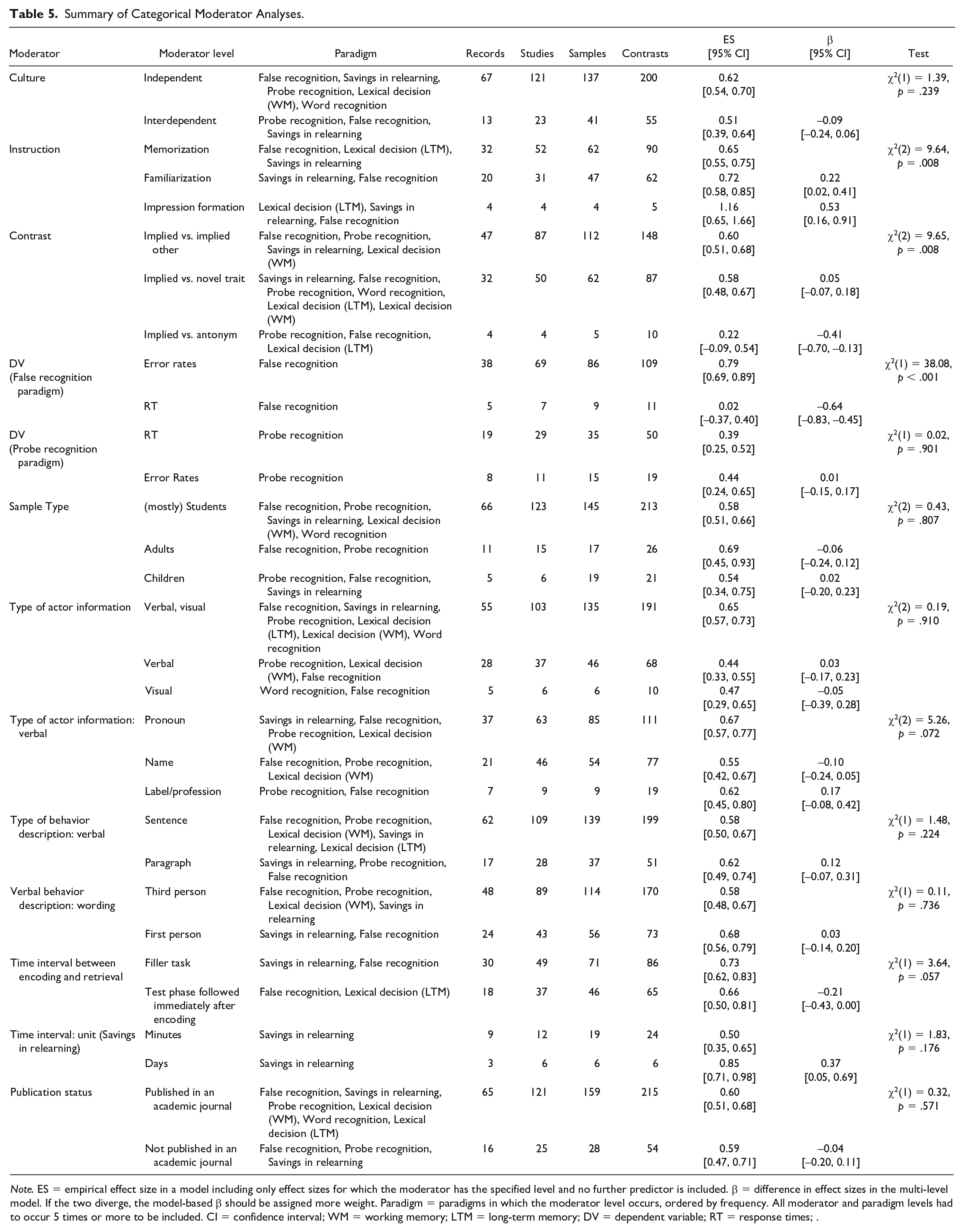

Instructions during behavior encoding

Only the following instructions could be included in moderator analyses: familiarization, memorization, and impression formation. Because instructions varied little across working memory paradigms, the analysis of instruction was restricted to long-term memory paradigms. 6 The overall moderation effect was significant, χ2(2) = 9.64, p = .008, with impression formation and familiarization yielding larger effect sizes (dz = 1.16 and dz = 0.72, respectively) than memorization (dz = 0.65). Note that the estimate for impression formation is severely limited by the small number of effect sizes (k = 5; see Table 5).

Summary of Categorical Moderator Analyses.

Note. ES = empirical effect size in a model including only effect sizes for which the moderator has the specified level and no further predictor is included. β = difference in effect sizes in the multilevel model. If the two diverge, the model-based β should be given more weight. Paradigm = paradigms in which the moderator level occurs, ordered by frequency. All moderator and paradigm levels had to occur 5 times or more to be included. CI = confidence interval; WM = working memory; LTM = long-term memory; DV = dependent variable; RT = response times.

Contrasts

There was a significant effect of contrast, χ²(2) = 9.65, p = .008. This was largely attributable to a much smaller effect size in studies using the implied versus antonym contrast, β = −0.41. The implied versus implied-other and the implied versus novel trait contrasts did not differ significantly (see Table 5).

Dependent variables

Because the DV is intrinsically linked to the paradigm, moderation by type of DV was analyzed separately within the two paradigms that provided sufficient variation: false recognition and probe recognition. For the false recognition paradigm, we observed robust STI effects with error rates as the DV (dz = 0.79) but essentially null effects with response times, χ²(1) = 38.08, p < .001 (see Table 5). In the probe recognition paradigm, by contrast, effect size estimates for error rates and response times were nearly identical, χ²(1) = 0.02, p = .901 (see Table 5).

Stimulus and procedural characteristics

We further examined the following moderators: number of traits tested, actor information (both verbal and visual vs. verbal only vs. visual only), verbal actor information (pronoun vs. name vs. label/profession), verbal behavior description (sentence vs. paragraph), and verbal behavior wording (first person vs. third person). None of these moderators significantly improved model fit. Finally, neither the time interval between encoding and retrieval nor the presence or absence of a filler task was significantly related to the STI effect size (see Tables 5 and 6).

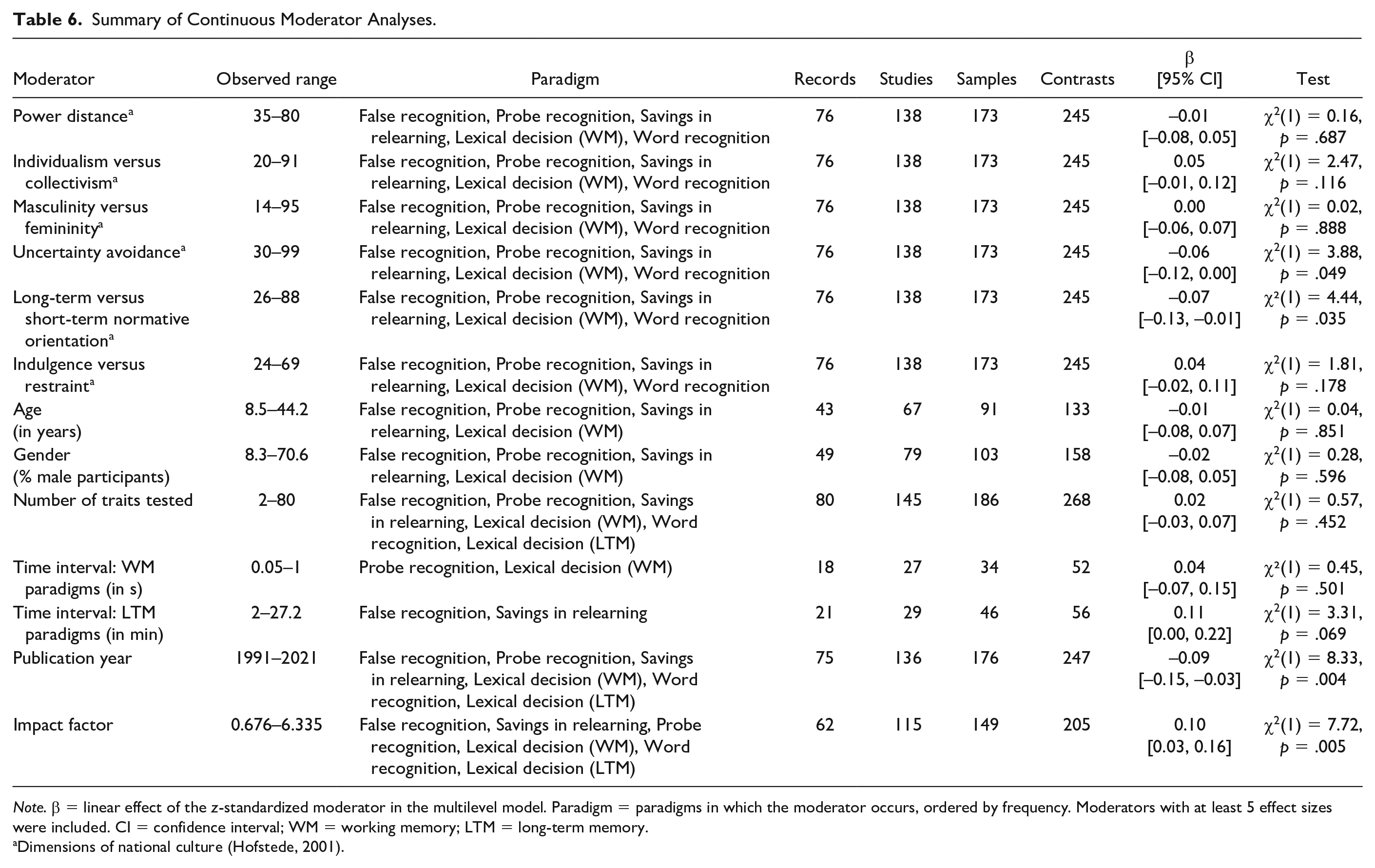

Summary of Continuous Moderator Analyses.

Note. β = linear effect of the z-standardized moderator in the multilevel model. Paradigm = paradigms in which the moderator occurs, ordered by frequency. Moderators with at least 5 effect sizes were included. CI = confidence interval; WM = working memory; LTM = long-term memory.
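The note defines β as the linear effect of a z-standardized moderator. The minimal sketch below shows what that scaling means, using publication year (one of the continuous moderators discussed in the text) with hypothetical values. It assumes a fixed-effect, inverse-variance weighted meta-regression and a sample SD (ddof = 1) for standardization; the multilevel model actually used is not reproduced. Standardizing leaves the fit unchanged and simply rescales the slope to "change in dz per 1 SD of the moderator".

```python
import numpy as np

# Hypothetical data: effect sizes, sampling variances, and a continuous moderator
dz = np.array([0.80, 0.72, 0.65, 0.58, 0.50, 0.45])
var = np.array([0.03, 0.02, 0.04, 0.02, 0.01, 0.02])
pub_year = np.array([1984.0, 1990.0, 1998.0, 2005.0, 2012.0, 2019.0])

# z-standardize the moderator (assumption: sample SD with ddof = 1)
z = (pub_year - pub_year.mean()) / pub_year.std(ddof=1)

# Fixed-effect inverse-variance weighted regression of dz on the standardized moderator
w = 1.0 / var
W = np.diag(w)
X = np.column_stack([np.ones_like(z), z])
intercept, beta = np.linalg.solve(X.T @ W @ X, X.T @ W @ dz)

print(f"beta = {beta:.3f} (change in dz per SD of publication year)")
```

Because the slope is expressed per standard deviation, β values for moderators measured on very different scales (years, impact factors, Hofstede scores) become roughly comparable in size.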

Dimensions of national culture (Hofstede, 2001).

Sample demographics

Cultural background did not significantly moderate the overall STI effect, χ²(1) = 1.39, p = .239, with substantial and significant STI effects both for samples from independent cultures (dz = 0.62) and for samples from interdependent cultures (dz = 0.51; Table 5). Similarly, the Hofstede dimension of individualism versus collectivism was not significantly related to the STI effect size, β = 0.05, χ²(1) = 2.47, p = .116. Thus, although STI effect sizes were descriptively slightly smaller for participants in collectivistic than in individualistic cultures, these differences were not significant.⁷

In contrast, two other dimensions of cultural value variation proposed by Hofstede, long-term orientation and uncertainty avoidance, were significantly associated with the STI effect size: STI effects were larger in countries with lower values on these dimensions (see Table 6).

Sample characteristics such as mean age, percentage of male participants, and sample type (children vs. students vs. adults) were all unrelated to the mean STI effect size (ps ≥ .596; see Tables 5 and 6). Substantial and significant STI effect sizes were observed in all age groups.

Publication Bias

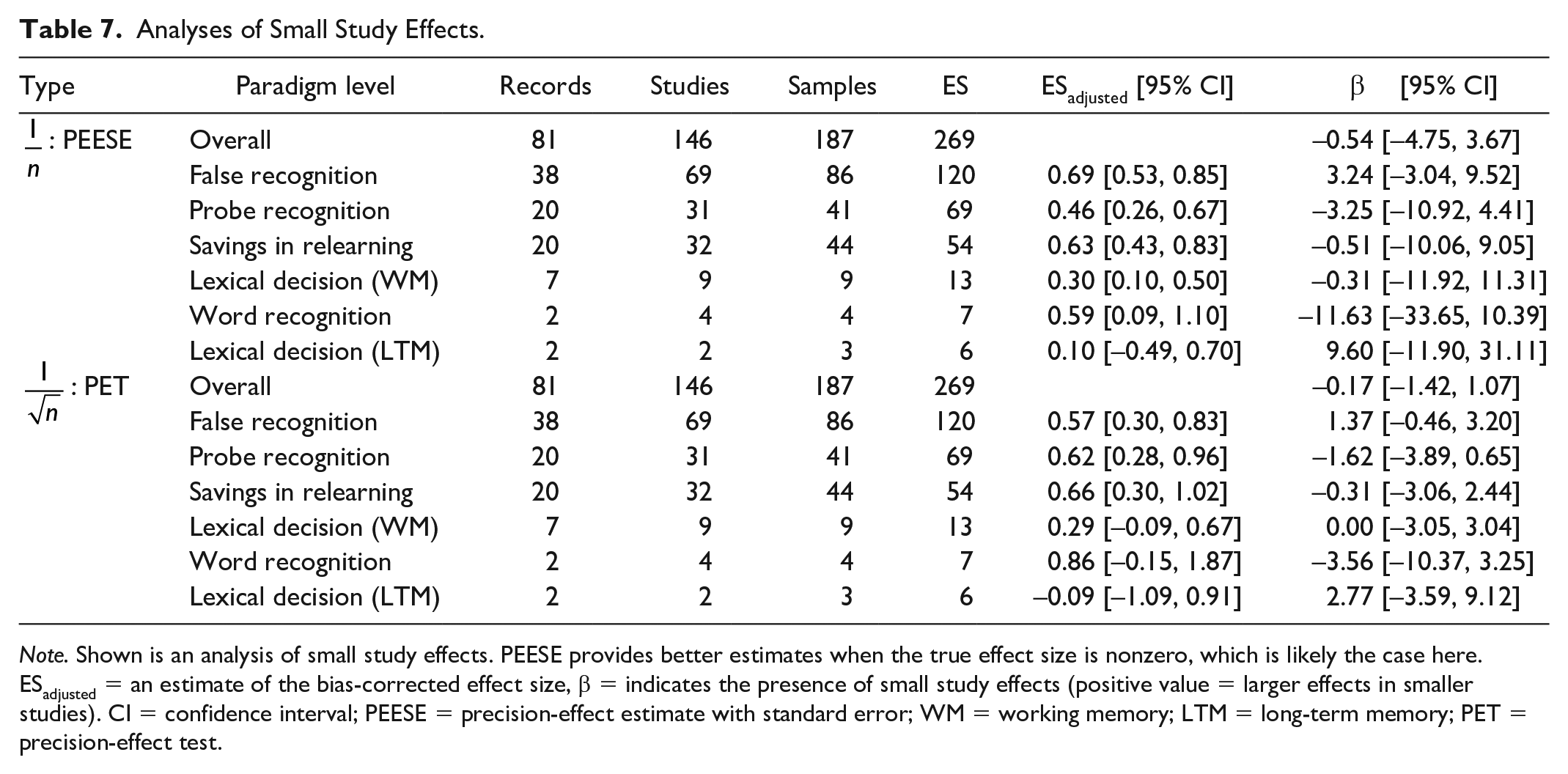

A visual inspection of the funnel plots (see Figures 3 and 4) indicated no obvious funnel plot asymmetry. Similarly, publication status (published vs. unpublished) was unrelated to the overall STI effect size, χ²(1) = 0.32, p = .571. Average effect sizes for published and unpublished studies were almost identical (see Table 5). Year of publication and journal impact factor were significantly associated with the overall effect size such that earlier reports and reports published in higher impact journals yielded somewhat higher effect sizes (see Table 6). Adding quadratic effects did not result in significant fit improvement (see Supplemental Table S3), although the quadratic effect of year was descriptively negative (Supplemental Figures S1 and S2). Finally, the PET and PEESE analyses supported the robustness of our results to publication bias. Neither

Funnel plot depicting all effect sizes included in the meta-analysis.

Funnel plots for each experimental paradigm.

Analyses of Small Study Effects.

Note. Shown is an analysis of small-study effects. PEESE provides better estimates when the true effect size is nonzero, which is likely the case here. ESadjusted = estimate of the bias-corrected effect size; β = small-study effect (positive values indicate larger effects in smaller studies). CI = confidence interval; PEESE = precision-effect estimate with standard error; WM = working memory; LTM = long-term memory; PET = precision-effect test.
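PET and PEESE regress effect sizes on their standard errors (PET) or squared standard errors (PEESE). The intercept estimates the effect expected for a hypothetical study with zero sampling error (ESadjusted in the table), and the slope indexes small-study effects (β). The minimal sketch below implements a simple single-level, inverse-variance weighted version on hypothetical data. It ignores the nesting of effect sizes within samples and publications. The conditional rule (switch to PEESE only if the PET intercept is reliably positive, here a one-tailed test with critical value 1.645) is one commonly described variant and an assumption, not necessarily the authors' exact procedure.

```python
import numpy as np


def wls_fit(x: np.ndarray, y: np.ndarray, w: np.ndarray):
    """Fit y = b0 + b1 * x by weighted least squares; return (coefs, standard errors)."""
    X = np.column_stack([np.ones_like(x), x])
    W = np.diag(w)
    xtwx = X.T @ W @ X
    coef = np.linalg.solve(xtwx, X.T @ W @ y)
    resid = y - X @ coef
    # residual variance with a multiplicative dispersion term, as in ordinary WLS
    sigma2 = float(np.sum(w * resid**2) / (len(y) - 2))
    se = np.sqrt(np.diag(np.linalg.inv(xtwx)) * sigma2)
    return coef, se


def pet_peese(d: np.ndarray, se_d: np.ndarray, crit: float = 1.645) -> dict:
    """Conditional PET-PEESE: use PEESE only if the PET intercept is reliably positive."""
    w = 1.0 / se_d**2
    pet_coef, pet_se = wls_fit(se_d, d, w)      # PET: d ~ SE
    peese_coef, _ = wls_fit(se_d**2, d, w)      # PEESE: d ~ SE^2
    use_peese = pet_coef[0] / pet_se[0] > crit  # one-tailed test at alpha = .05
    return {
        "pet": float(pet_coef[0]),
        "peese": float(peese_coef[0]),
        "small_study_slope": float(pet_coef[1]),
        "estimate": float(peese_coef[0] if use_peese else pet_coef[0]),
        "method": "PEESE" if use_peese else "PET",
    }


# Hypothetical effect sizes and their standard errors
d = np.array([0.55, 0.62, 0.48, 0.70, 0.58, 0.66, 0.52, 0.61])
se_d = np.array([0.10, 0.15, 0.08, 0.20, 0.12, 0.18, 0.09, 0.14])
print(pet_peese(d, se_d))
```

A positive `small_study_slope` corresponds to the note's "larger effects in smaller studies". PEESE is preferred when a nonzero true effect is likely because the linear PET model tends to over-correct in that situation.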

Discussion

The overarching goal of this meta-analysis was to investigate the average effect size and the relative robustness of the STI effect and to identify potential moderators that may have both conceptual and methodological relevance. We first briefly discuss the results of the overall effect size analysis and then provide more detailed reflections on the implications of the results of the moderation analyses.

Overall Effect Size of STIs

Our results demonstrate a significant average STI effect of moderate to large size (dz = 0.59). Translated into a common language effect size, this implies that approximately 72% of all participants would be expected to show a numerically positive STI effect. This effect is comparable to a moderate between-subjects effect size of approximately d = 0.59 (Lakens, 2013),⁸ which is substantially larger than the median effect size reported in social psychology (estimated as d = .36; Lovakov & Agadullina, 2021).
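The 72% figure and the between-subjects comparison follow from standard formulas for paired designs. The minimal sketch below illustrates them. It defines dz as the mean of within-person difference scores divided by their SD, and takes the common language effect size as Φ(dz), the probability that an individual difference score is positive under normality. It converts dz to a between-subjects d via d = dz·√(2(1 − r)). The correlation r between conditions is not reported in this section (footnote 8 is not available here); r = .5, at which d equals dz exactly, is our illustrative assumption, as is equal variance across conditions.

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def cohens_dz(diffs: list[float]) -> float:
    """dz = mean of within-person difference scores / SD (n - 1) of those differences."""
    n = len(diffs)
    mean = sum(diffs) / n
    sd = math.sqrt(sum((x - mean) ** 2 for x in diffs) / (n - 1))
    return mean / sd


def cles_from_dz(dz: float) -> float:
    """Common-language effect size for paired data: P(individual difference > 0)."""
    return normal_cdf(dz)


def ds_from_dz(dz: float, r: float) -> float:
    """Between-subjects d implied by dz if both conditions share one SD and correlate r."""
    return dz * math.sqrt(2.0 * (1.0 - r))


dz = 0.59  # average STI effect reported above
print(f"CLES = {cles_from_dz(dz):.3f}")
print(f"d_s (r = .5) = {ds_from_dz(dz, r=0.5):.2f}")
print(f"d_s (r = .7) = {ds_from_dz(dz, r=0.7):.2f}")
```

The conversion is sensitive to r: with a higher correlation between conditions, the same dz corresponds to a smaller between-subjects d. The "approximately d = 0.59" comparison therefore holds only under the assumed r.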

Taken together, our results support previous conclusions in narrative reviews that the STI effect is a robust phenomenon (e.g., Uleman et al., 2008). Furthermore, we found no indication that publication bias inflated the overall effect size. Nevertheless, studies published earlier and in journals with higher impact factors reported somewhat larger effect sizes, a pattern consistent with the decline effect observed in many research fields (e.g., Schooler, 2011). Importantly, STI effects were similar for published and unpublished studies as well as for studies with small and large samples. In light of concerns regarding the replicability of published psychological effects (e.g., Open Science Collaboration, 2015), this finding provides further support for the robustness of STIs across the literature.

Our analyses further demonstrated significant heterogeneity across all effect sizes as well as between publications and between effect sizes within samples, indicating that the strength of the STI effect was influenced by several other factors. We focus our discussion first on methodological factors and their implications and then discuss sample characteristics.
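The heterogeneity reported here comes from a multilevel model that separates variance between publications from variance between effect sizes within samples. As a simpler single-level illustration of what "significant heterogeneity" quantifies, the sketch below computes Cochran's Q, I², and the DerSimonian-Laird estimate of τ² on hypothetical data. It ignores the nesting of effect sizes and is not the model used in this meta-analysis.

```python
import numpy as np


def heterogeneity(y: np.ndarray, v: np.ndarray) -> dict:
    """Cochran's Q, I^2, and the DerSimonian-Laird tau^2 for a single-level meta-analysis."""
    w = 1.0 / v
    mu_fe = np.sum(w * y) / np.sum(w)          # fixed-effect pooled estimate
    q = float(np.sum(w * (y - mu_fe) ** 2))    # Cochran's Q
    df = len(y) - 1
    c = float(np.sum(w) - np.sum(w**2) / np.sum(w))
    tau2 = max(0.0, (q - df) / c)              # between-study variance (DL estimator)
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0  # share of variability beyond sampling error
    return {"Q": q, "df": df, "tau2": tau2, "I2": i2}


# Hypothetical effect sizes (dz) and sampling variances
y = np.array([0.30, 0.45, 0.60, 0.75, 0.90, 1.10])
v = np.array([0.02, 0.03, 0.02, 0.04, 0.03, 0.05])
print(heterogeneity(y, v))
```

Under homogeneity, Q follows a χ² distribution with k − 1 degrees of freedom, so a Q well above its df signals variation beyond sampling error. τ² expresses that excess variation on the effect-size scale.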

Experimental Paradigm

Our moderator analysis of classes of experimental paradigms revealed significant STI effects in both long-term memory–based and working memory–based paradigms that were, however, significantly larger in the long-term memory–based paradigms. These results first of all indicate that STIs are detectable both at the initial encoding of behavioral information and at the retrieval of person information. These results also indicate that STI effects are stable over time—or at least over the time periods typically investigated in the long-term memory–based paradigms (ranging from minutes to days).

Besides the generally robust STI effects across the two clusters of memory-based paradigms, we also observed substantial effect sizes in all individually tested paradigms, nearly all of which were significant. In further support of robustness, STIs seem to be measurable under various methodological settings, even under conditions in which forming STIs impairs task performance. Observing STIs under such performance-impairing conditions has been viewed as the strongest evidence for the unintentionality of these inferences, as participants have no incentive to perform poorly in a task (see also Carlston & Skowronski, 1994; Todorov & Uleman, 2002). Interestingly, the false recognition paradigm and the probe recognition paradigm, which both operationalize STI effects via impaired task performance, yielded the largest STI effects within their respective clusters. These findings further argue against the alternative explanation that attributes STI effects to deliberate strategies and underline the assumed nonintentionality of STIs (Uleman, Newman, & Moskowitz, 1996).

The observed effect size difference between the two clusters of working memory–based and long-term memory–based experimental paradigms can be interpreted in several ways. First and most straightforward, one may speculate about the potentially additive and/or interacting contributions of encoding and retrieval processes to person inference effects. Previous research has excluded mere behavioral recall and retrieval-based person inferences during testing as alternative explanations of STI effects (e.g., Todorov & Uleman, 2002). Nevertheless, it is still possible that such processes augment STI effects—leading to larger effect sizes in the long-term memory–based paradigms as compared to the working memory–based paradigms. Second, there are several further procedural differences between long-term memory– and working memory–based paradigms that offer other compelling interpretations and point toward important process characteristics of the formation and retrieval of STIs. One of them is the stimulus representation with or without actor images and another may be the relative difficulty of task completion in the different paradigms.

With regard to actor images, the included long-term memory–based paradigms present actor images along with behavioral statements and later use these actor images as prompts for measuring STI effects (which does not apply to the excluded cued recall paradigm, see Supplemental Material A). In contrast, working memory–based paradigms rarely employ actor images. Using actor images may facilitate the formation of actor-trait links at encoding, for example, because it increases the salience of the actor, which in turn may lead to larger STI effects. The effect size differences between both groups of paradigms may therefore be interpreted as further support that STI effects are more than mere effects of behavior-trait categorization at encoding of behavioral information and indeed represent actor-trait links based on inferences about the actors (e.g., van Overwalle et al., 1999). Alternatively, the presentation of actor images during encoding might simply increase general stimulus salience, resulting in an unspecific processing advantage that, as a side effect, also supports the formation of STIs (e.g., Uleman et al., 1993) but is not specific to STI formation. Note, however, that when we specifically investigated the presence versus absence of actor images as a moderator variable, we did not find significant differences in STI effects. This calls into question whether this variable is responsible for the difference between working memory–based and long-term memory–based STI effect sizes.

A second methodological confound between both types of paradigms concerns relative task difficulty. While long-term memory–based paradigms typically present behavioral information about a number of actors block-wise before proceeding to the STI test phase, working memory paradigms implement behavioral encoding and trait-inference testing consecutively on a trial-by-trial basis. Hence, the number of items learned and tested simultaneously is greater in designs with block-wise encoding. Along with longer time intervals between encoding and testing and/or interruptions by filler tasks, this may make long-term memory–based paradigms generally more difficult than working memory paradigms. This increased difficulty might prevent the ceiling effects caused by highly accurate and fast responding in the easier working memory–based tasks and therefore lead to larger effect sizes. The comparison of the probe recognition paradigm and the false recognition paradigm serves as a good example here. Procedurally, these paradigms are highly similar, the main difference being the increased task difficulty due to block-wise stimulus encoding and testing in the false recognition paradigm versus the trial-by-trial procedure in the probe recognition paradigm. In line with this notion, Todorov and Uleman (2002) reported false recognition rates of over 40% for implied traits in the false recognition paradigm, whereas false recognition rates in probe recognition paradigms are typically below 10% (e.g., Wang & Yang, 2017). With less potential for ceiling effects, this may explain why false recognition yields higher effect sizes than probe recognition. While conceivable, this interpretation was not supported by the moderator analyses that investigated the length of time intervals and the absence versus presence of filler tasks in the long-term memory–based paradigms, which one may use as indirect indicators of task difficulty.
Neither variable significantly moderated the STI effect size. This potential factor should be addressed in future systematic empirical investigations, along with further potential systematic differences between groups of paradigms.

In conclusion, results from the paradigm moderator analyses indicate that STIs are formed immediately at encoding and subsequently stored in long-term memory. Our speculations about the observed effect size differences between working memory–based and long-term memory–based experimental paradigms illustrate that a number of procedural aspects might play a pivotal role in explaining these differences. As these procedural choices are highly intertwined with other characteristics of the experimental paradigms, future research should more systematically test these variations to identify task characteristics that are responsible for effect size differences and their implications for conclusions regarding the underlying mechanisms of STIs.

Instructions During Behavior Encoding

We additionally analyzed variations of instructions as potential moderators of STI effects. The most frequent variations were instructions to memorize or to familiarize oneself with the actor and behavior information (e.g., Carlston & Skowronski, 1994; Goren & Todorov, 2009), which are typically compared with explicit instructions to form an impression of the actor (e.g., Uleman, 1987). The major rationale behind these variations was that significant STI effects in the familiarization and memorization conditions support the assumption of spontaneous impression formation because participants have no explicit goal to form any impression of the actors. It was furthermore argued that instructions explicitly triggering intentional and deliberate impression formation may augment STI effects (Uleman, 1987) and thus represent an upper bound for the STI effect size.

In contrast to previous findings (e.g., Carlston et al., 1995; Carlston & Skowronski, 1994; Ramos et al., 2018; Todorov & Uleman, 2002; Todorov & Uleman, 2004), our moderation analysis demonstrated that STI effect sizes were significantly affected by the type of instruction during encoding. Specifically, effect sizes were large when participants were instructed to actively form impressions of the actors, compared with moderate effect sizes when participants were instructed merely to familiarize themselves with or memorize the behavioral information. This result partly supports Uleman’s (1987) assumption that impression formation instructions evoke stronger trait inferences. Note, however, that this analysis was based on only a few effect sizes (i.e., k = 5 for impression formation) because several studies using multiple instructions did not provide sufficiently detailed test statistics for individual conditions to allow inclusion in this moderation analysis. While this specific question may spark little interest for future research, we deem it important to investigate more systematically the comparative influence that spontaneous nonintentional and deliberate intentional trait inferences have on overall impression formation in person perception, or even their downstream effects on interpersonal interaction dynamics.

It should also be noted that several further theoretically important variations of instructions could not be investigated in our meta-analysis because too few effect sizes were available. Specifically, instructions to focus on the situation or to actively suppress dispositional inferences, as well as cognitive load manipulations, could not be included. The latter manipulation in particular has yielded mixed results: early work on the automaticity of STIs found no effect of cognitive load (e.g., Winter et al., 1985), whereas other investigations found evidence for an influence of cognitive load on STI formation (e.g., Wells et al., 2011).

Contrasts

As a further methodologically relevant variable, we investigated whether the chosen control condition, and thus the type of contrast, significantly moderated the STI effect size. We indeed observed a significant moderation, which can largely be attributed to a smaller effect size in the implied versus antonym contrast, whereas the implied versus implied-other and implied versus novel trait contrasts did not differ significantly. These findings contradict previous assumptions that contrasts with antonyms should yield larger effect sizes because the trait inference might also imply that the actor lacks the opposite of the implied trait (i.e., the antonym trait; Todorov & Uleman, 2002). Our analysis, however, is based on only k = 10 effect sizes, of which k = 6 come from a single publication that investigated the role of not only antonyms but also stereotypes, which may have decreased the effect sizes (Wigboldus et al., 2004).

Somewhat unexpectedly, our analyses yielded no difference between contrasts using traits implied by other behavioral statements within the same task (implied-other) and contrasts using novel traits unrelated to all presented behavioral statements. Comparing implied traits with implied-other traits as the control condition has frequently been advocated as the gold standard because it controls for the unspecific familiarity or accessibility that trait words may acquire during the encoding phase. Using novel traits as the control condition, in comparison, has been argued to artificially inflate STI effect size estimates because of their relatively lower familiarity and/or accessibility. Our results, however, indicate that novel traits did not result in larger effect size estimates than implied-other traits. Thus, STI effect size estimation does not appear to be influenced by the relative familiarity or accessibility of the traits used in the control condition. Potential differences between the two contrasts, if they exist, are thus likely small, which gives researchers more flexibility in choosing control conditions that fit their research questions.

Dependent Variable

As the choice of DV to measure STIs is highly contingent on the experimental paradigm, we only evaluated differences within two paradigms that provided sufficient variation: false recognition and probe recognition. Our results for probe recognition indicate that the choice of DV did not significantly alter the detectability of STIs. In this paradigm, STI effects of comparable size were observable for accuracy as well as response times. This suggests that response times and accuracy serve as reliable measures for STIs, and it should thus become standard practice to record, analyze, and report both.

In contrast, the choice of DV in the false recognition paradigm did make a significant difference, as significant STI effects were only observed in accuracy measures (i.e., error rates) and not in response times. Therefore, we recommend focusing on accuracy measures in this paradigm.

Stimulus and Procedural Characteristics

Our moderation analyses encompassed a number of further methodological aspects. The number of traits tested, presenting the actor information verbally or visually (or in both ways), describing the actor with the use of pronouns, names, or labels, using sentences or paragraphs for the behavior description, and presenting the behavior description in first or third person did not significantly moderate the STI effect size. These commonly used methodological variations appear to have limited effects on the STI effect size, again emphasizing the robustness of STI effects. Nevertheless, our results cannot rule out that further variations that are less commonly used in the literature can affect STI effects, which may be systematically investigated in future research.

The STI effect was also unrelated both to whether a filler task was used between encoding and retrieval and to the time interval between encoding and retrieval. This suggests that STI formation is robust not only against methodological variations but also against distractions (filler tasks) and longer time intervals between encoding and retrieval (e.g., days in the savings in relearning paradigm). Numerically, effects were even slightly larger for longer time intervals and when filler tasks were used. Nevertheless, studies that systematically test the effects of filler tasks and time intervals while holding all other conditions constant might still find effects of these factors; for example, Carlston and Skowronski (1994) found that the STI effect weakened when the interval between encoding and retrieval was 7 days.

Sample Demographics

Neither age, gender, nor sample type (e.g., student or child samples) significantly moderated the effect size of STIs. This is in line with work indicating that children already form STIs (e.g., Newman, 1991; Shimizu, 2012; Wang et al., 2018) and that STIs are present in nonstudent adult samples (e.g., McCarthy et al., 2013; Olcaysoy Okten, 2015). Although a few studies have begun to investigate the developmental course of STIs (e.g., Wang et al., 2018; Zhang & Fang, 2016), some demographic groups are underrepresented in our sample, especially young children (younger than 8 years) and elderly people. Nevertheless, the lack of age, gender, and sample type effects underlines that STI effects are not only robust across several methodological variations but also evident in different demographic groups.

Cultural Differences Between Samples

Contrary to expectations, participants’ cultural background was not a significant moderator of STIs. Although results descriptively point in the hypothesized direction, samples with an independent cultural background did not show a substantially larger STI effect size than samples with an interdependent cultural background. Similar results were obtained when employing country aggregates of the individualism versus collectivism dimension provided by Hofstede (2001) as continuous predictors. An effect of (in)dependence as cultural orientation was only observed when restricting analyses to the studies that conducted a direct sample comparison (see Supplemental Table S2), but their number is too low to draw reliable meta-analytic conclusions. This result is at odds with early theoretical conceptualizations of how cultural differences along the individualism versus collectivism dimension might influence the formation of STIs (e.g., Moskowitz, 2005; Uleman, Newman, & Moskowitz, 1996). Depending on differences in independent versus interdependent self- and person construal (e.g., Markus & Kitayama, 1991, 2010) and the related tendency to spontaneously use traits to explain observed behavior, it has been theorized that STIs should occur more frequently in samples with a predominantly individualistic (e.g., European and North American) cultural background compared with samples having a rather collectivistic (e.g., Asian) cultural background (e.g., Moskowitz, 2005; Uleman et al., 2008). The lack of significant cultural differences in the present meta-analysis is largely attributable to recent research conducted in Chinese (Lee et al., 2017; Wang et al., 2015, 2016, 2018; Wang & Yang, 2017; Yang & Wang, 2016; Zhang & Fang, 2016; Zhang & Wang, 2013, 2018) and Japanese samples (Shimizu, 2012; Shimizu & Uleman, 2021). Thus, the results of this meta-analysis suggest that STIs might not be as dependent on cultural differences in person construal as previously theorized. 
Such findings are in line with recent cross-cultural research that did not find the expected difference in person versus situational attribution between U.S. American and Chinese participants (Carstensen et al., 2021). Our analyses did, however, reveal a moderating effect of two other dimensions of cultural value differences: long-term versus short-term normative orientation and low versus high uncertainty avoidance. High scores on the long-term orientation dimension indicate a tendency to endorse efforts in modern education to prepare for the future, whereas low scores indicate a tendency to prefer established traditions over societal change. Scores on the uncertainty avoidance dimension indicate the relative comfort or discomfort with uncertainty, ambiguity, and the unpredictability of the future, as well as the relative strictness, rigidity, or tolerance toward deviations from codes of belief and behavior (Hofstede, 2001). Given the exploratory character of this non-preregistered analysis and our large number of hypothesis tests, this result should be interpreted with caution.

In general, the moderator analyses on cultural differences included data from only nine countries. Conclusions about (the lack of) cultural differences thus have to be drawn with caution. Clearly, the theoretical assumption that cultural values and/or differences in person construal may affect spontaneous impression formation should be investigated more systematically in the future, not just by conducting mere cross-country comparisons, which are open to many confounding variables, but also by including theoretically derived measures of cultural variation as mediator variables. Instead of relying on mere country aggregates of cultural values, this research should adopt a multilevel approach in which not only group aggregates of cultural values but also individual levels of conformity to these values are included in the analyses.

Limitations and Outlook

Multiple limitations need to be kept in mind with regard to our statistical moderator analyses. First, given insufficient codable effect sizes for several moderator levels, we had to exclude potentially relevant moderators from our analyses. This is partly attributable to suboptimal reporting and data availability standards that have only begun to improve in the last decade. Therefore, our results cannot provide the comprehensive view of the impact of different moderators on the STI effect size that we initially aimed for (see Supplemental Table S6 for a summary). For instance, we could not include cognitive load as an important instruction variation during behavior encoding. Furthermore, this meta-analysis did not include potential moderators regarding individual differences (e.g., perceivers’ expectations of observed behavior based on group membership and associated stereotypes) or item-based stimulus characteristics (e.g., valence or extremity of presented behavior). As a substantial amount of variance in the STI effect remains unexplained, this stresses the need for highly powered studies that systematically investigate relevant moderators in this research domain, along with improved reporting standards and open data accessibility.

Second, some of our moderator analyses suffer from limited statistical power due to an underrepresentation of datasets covering certain moderator variables or some of their levels, resulting in uncertain effect size estimates. In some cases, this can be attributed to an overall low occurrence of specific procedures in this field of research. However, we also identified several studies that investigated central moderator variables (e.g., instruction during behavior encoding) but did not provide enough information for effect size calculations for single conditions, mostly when they did not observe significant differences between conditions. This supports the need for more transparent reporting standards, which have been implemented more widely in psychology in recent years (e.g., Appelbaum et al., 2018).

Third, the correlational nature of meta-analytic moderator analyses leaves open the possibility that the presented moderator effects are confounded by other variables, for example, differences in stimulus selection between samples or unreported sample characteristics. On the other hand, our meta-analytic moderator analyses have the strength of including far more effect sizes than is possible in individual reports. Nevertheless, large-scale experimental studies are needed to corroborate our moderator findings, especially where they are unclear or unexpected (e.g., on the impact of cultural values or of instructions during encoding).

Beyond the limitations of our analyses, some shortcomings of the STI literature itself need to be discussed. One main issue concerns the multitude of different stimulus sets that are employed at varying frequencies and lack systematic characterization. As pointed out by Levordashka and Utz (2017), these stimulus sets seem to differ in both the extremity and the distinctiveness of the described behaviors, which might result in STIs of varying strength and thus influence the robustness of the STI effect size. The variance and generalizability across stimuli are rarely investigated (but see Uleman, Hon, et al., 1996; see also the debates regarding the “language-as-a-fixed-effect fallacy” introduced by Clark, 1973). Ideally, raw data of individual studies and their full stimulus materials would be available for meta-analyses, which would allow systematic tests of whether and to what extent STI effects are affected by such stimulus characteristics. Because raw data were typically not available, we must conclude that despite several decades of highly active research, we are unable to draw firm conclusions with regard to many of these methodological issues. We hope that the increasing use of open science practices will enable such analyses in the future.

Future research on STIs should also consider ways of including stimuli as random factors in analyses to generalize results across stimuli as well (see Judd et al., 2012; Uleman, Hon, et al., 1996). This also implies that future research should enhance experimental paradigms to provide sufficient power for such multilevel analyses.

Finally, STIs are typically studied in the laboratory by presenting participants with a series of isolated behavioral descriptions. This severely limits the external validity and generalizability of STI effects as currently studied. For example, the large majority of studies employed written descriptions of behaviors. At present, we do not know whether and to what extent processing verbal behavioral information is representative of processing directly observed behavior and drawing inferences about observed actors. After all, providing participants with verbal stimulus material about target persons’ behavior is a communicative act, and principles of communication (e.g., Grice’s conversational maxim of relation/relevance; Grice, 1989) may influence the likelihood and/or strength of inferences drawn from that information. In a similar vein, the STI literature is largely not integrated with the broader literature on person perception (including literature from personality psychology). Future work should aim to integrate these different perspectives and methodological approaches to delineate how STIs contribute to person perception as it occurs in everyday life.

Conclusion

To conclude, our meta-analysis suggests that STIs are a robust and replicable phenomenon with a moderate to large effect size. Substantial heterogeneity was observed and was partly explained by methodological moderators, in particular the type of experimental paradigm. The expected moderating effect of cultural background on STIs was not supported by our data. Future work should carefully examine relevant moderators in highly powered studies and strive to integrate STI research with the other person perception literatures that have largely developed in parallel.

Supplemental Material

sj-docx-1-psp-10.1177_01461672221100336 – Supplemental material for Spontaneous Trait Inferences From Behavior: A Systematic Meta-Analysis

Supplemental material, sj-docx-1-psp-10.1177_01461672221100336 for Spontaneous Trait Inferences From Behavior: A Systematic Meta-Analysis by Antonia Bott, Larissa Brockmann, Ivo Denneberg, Espen Henken, Niclas Kuper, Felix Kruse and Juliane Degner in Personality and Social Psychology Bulletin

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

References
