Abstract

Objective:

Timely, accurate referrals to head and neck cancer surgery are essential for survival but are often delayed or misrouted, contributing to late-stage presentation and disparities. We aim to develop and validate a supervised neural network to predict surgical appropriateness at the time of referral.

Methods:

Training data included >200 000 de-identified patient records from the National Cancer Database and Surveillance, Epidemiology, and End Results registries. External validation was conducted on 39 consecutive referrals at a tertiary care center (2020-2023) using demographics, tumor site, histology, TNM stage, and grade. Model outputs were compared to treatment recommendations from head and neck surgeons.

Results:

The model achieved 79% accuracy, 85% sensitivity, 50% specificity, and 90% positive predictive value in identifying surgical candidates. Performance was consistent across sex, age, and socioeconomic subgroups, with a trend toward improved accuracy in lower-stage disease.

Conclusions:

This externally validated tool demonstrates potential to streamline referral triage, expedite surgical consultation, and enhance equitable access to head and neck cancer care.

Introduction

Specialist referrals are ubiquitous, with over one-third of patients referred to a specialist each year. 1 However, nearly 1 in 3 new patient referrals to specialty physicians are never completed. 1 The referral process is complex and differs across institutions. One study examining barriers to timely head and neck cancer referrals found that 1 primary theme was the fragmentation of the head and neck cancer referral and triage pathway. 2 Referrals may be transmitted by fax, electronically, or by phone. In some cases, families may be expected to call to schedule an appointment, whereas in other cases, the specialty clinic’s team may instead reach out to the patient proactively. Clinical intake teams are also often required to review a referral. 3 This heterogeneous referral process creates many opportunities for breakdowns, which may arise from incomplete medical records, scheduling delays, and communication barriers between patients and multiple provider teams. Patients can also be referred to an inappropriate consultant, further delaying their care. 4

Delays in referrals have severe consequences for patient care, leading to postponed patient management and diagnosis as well as increased patient loss to follow-up care. Late diagnosis of head and neck cancer is associated with decreased survival and quality of life, with 5-year survival rates of 90% if diagnosed at stage I, 60% for diagnosis at stage III, and 4% for diagnosis at stage IVc. 5

In addition, barriers in the referral process are often unevenly distributed among patient populations. The time to schedule and complete a referral has been found to be longer for Black patients compared to their White counterparts. Non-English-speaking patients with public insurance have also been found to have longer times to referrals. 3 Black patients are more likely to present with a more advanced stage of disease and have worse overall survival rates. 6 In addition, Native Hawaiian and Pacific Islander patients present with more advanced head and neck squamous cell carcinoma compared to their White counterparts. 7

Machine learning is a field of artificial intelligence in which computers learn patterns from data rather than following explicitly programmed rules. Through iterations of model optimization, these programs reduce differences between their estimates and known outcomes. Prior studies have investigated the use of machine learning models to optimize referral care: 1 study found that machine learning models could identify cancer patients who could benefit from early palliative care services, and might lead to an expansion in early consultations with palliative care specialists. 8 Other studies have investigated the use of machine learning models in predicting non-visible symptoms in cancer palliative care (e.g., pain, anxiety, spiritual issues) to assess the need for further palliative care services, and found accuracy rates ranging from 55% to 88%, sensitivity rates ranging from 3% to 85%, and specificity rates ranging from 24% to 97%. 9 Taken together, these studies suggest that machine learning algorithms could streamline the referral process, improve healthcare communication and organization, expedite referrals, reduce the time physicians spend navigating inappropriate referrals, and contribute to earlier diagnosis and improved patient outcomes. To our knowledge, there are no studies investigating the use of machine learning models to predict referrals to head and neck cancer surgical care.

A machine learning algorithm that supports the initial referral process could be helpful in streamlining patient entry into head and neck cancer care. Importantly, such tools are not intended to replace clinical decision-making or the role of head and neck surgeons, who remain central to evaluation, diagnosis, and coordination of multidisciplinary management. Rather, the goal would be to provide referring providers or office staff in primary care and general oncology settings with an additional aid to help ensure that patients are directed promptly to the most appropriate specialists.

In this study, we present initial findings on the potential of a neural network model to augment the referral pathway, with the aim of reducing delays to specialist consultation. This work is intended to explore whether such models can complement the critical role of head and neck surgeons in guiding patient care once patients enter the cancer system.

Patients and Methods

Approval for this study was granted by the Institutional Review Board at the University of California, San Francisco (UCSF).

Neural Network Development

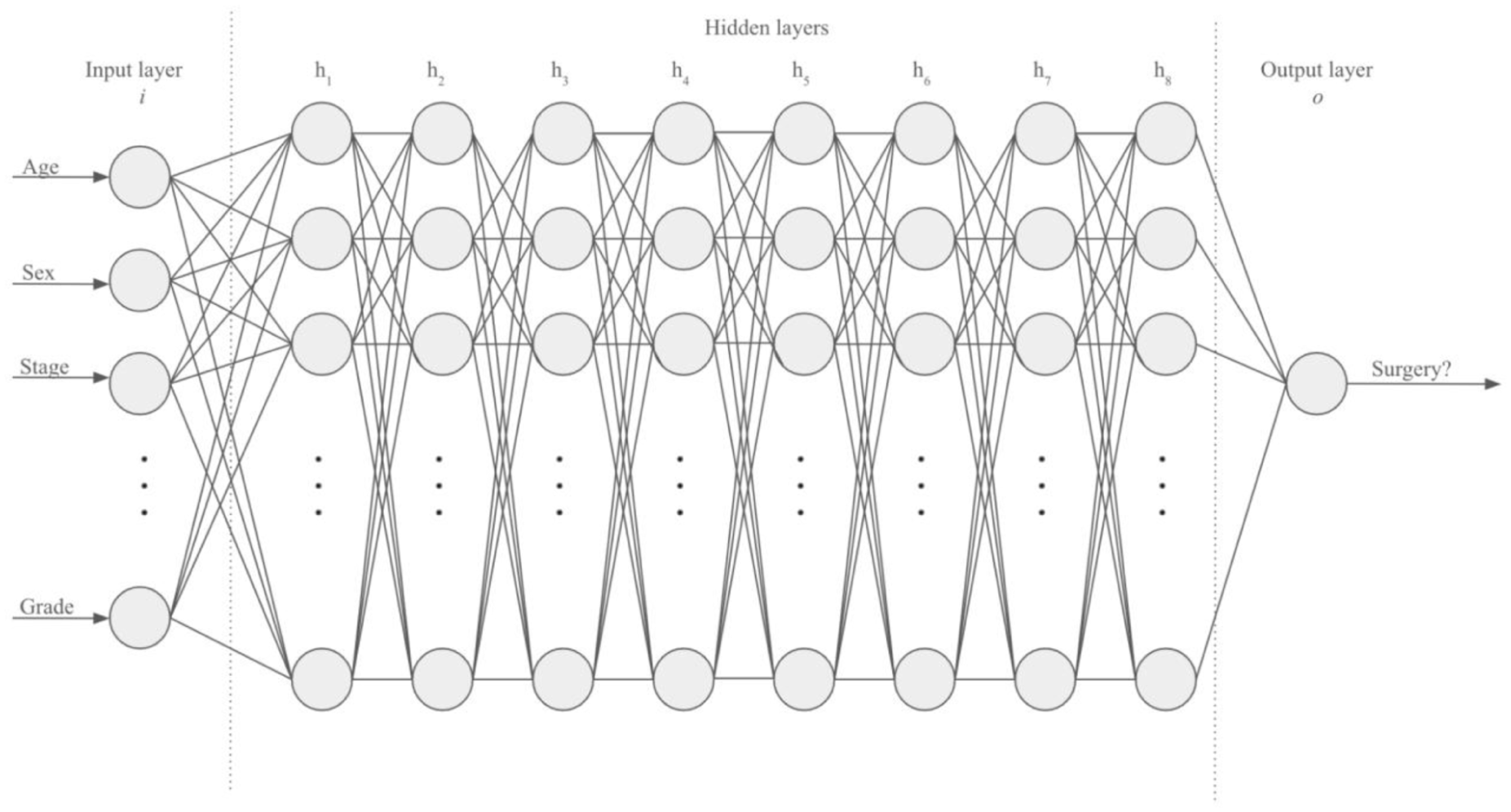

The neural network (IIAM Corporation) used in this study was developed in Google Colab (Google) using the Keras and TensorFlow packages. The neural network consists of 10 layers: 1 input layer, 8 hidden layers, and 1 output layer. Each hidden layer had a weight constraint of 1.5 to prevent overfitting. To further combat overfitting, a dropout rate of 20% was applied to each hidden layer, meaning that this proportion of nodes, along with their incoming and outgoing connections, was randomly dropped from each layer per training cycle. The one-hot encoded output layer was defined to yield a binary outcome of either “surgery recommended” or “surgery not recommended” (Figure 1).

Figure 1. Development of neural network.
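The two regularizers described above can be illustrated with a minimal pure-Python sketch. It assumes that the "weight constraint of 1.5" is a max-norm constraint on each unit's incoming weights and that dropout is the "inverted" variant that rescales surviving activations; neither detail is stated in the text. The function names are hypothetical and this is not the study's implementation.

```python
import math
import random


def apply_max_norm(weights, max_norm=1.5):
    """Rescale each unit's incoming weight vector so its L2 norm is at most max_norm.

    `weights` is a list of rows, one row of incoming weights per unit (assumed layout).
    """
    constrained = []
    for unit_weights in weights:
        norm = math.sqrt(sum(w * w for w in unit_weights))
        # Only shrink vectors that exceed the limit; leave the others unchanged.
        scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
        constrained.append([w * scale for w in unit_weights])
    return constrained


def apply_dropout(activations, rate=0.2, rng=None):
    """Inverted dropout: zero each activation with probability `rate`.

    Surviving activations are divided by (1 - rate) so the expected sum is preserved.
    """
    rng = rng or random.Random()
    keep_probability = 1.0 - rate
    return [
        a / keep_probability if rng.random() >= rate else 0.0
        for a in activations
    ]
```

During training, both steps would be applied at every hidden layer on each update; at inference time dropout is switched off and all nodes contribute.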

To ensure appropriate model selection, we conducted preliminary benchmarking of several commonly used supervised learning algorithms, including logistic regression, random forest classifiers, and gradient-boosted decision trees (XGBoost), in addition to the neural network described above. Each model was trained and validated on the same National Cancer Database (NCDB) and Surveillance, Epidemiology, and End Results (SEER) datasets to allow direct comparison. The neural network consistently demonstrated the best overall performance across accuracy and Area Under the Curve (AUC) metrics and was therefore selected for final model development and external testing. During preliminary testing, we also experimented with variations in the number of hidden layers, weight constraints, and dropout rates. While deeper and more complex architectures (e.g., >10 hidden layers, alternative branching structures) were evaluated, they consistently resulted in overfitting and diminished performance on internal validation. The selected 10-layer model, with weight constraints and dropout regularization, provided the most stable balance of accuracy and generalizability, which is why it was ultimately chosen.

Independent variables in the model include age at diagnosis, cancer location, histology International Classification of Diseases (ICD) code, sex, stage, American Joint Committee on Cancer (AJCC) eighth edition pathologic T, N, M, and grade. The dependent variable was whether or not the patient was an appropriate surgical candidate. The accuracy rate of the machine learning model was defined as the percentage of treatment outcomes correctly predicted by the model.

Machine Learning Training and Testing Dataset

The neural network was trained for 20 epochs on 105 000 patient data points from the NCDB and 44 487 patient data points from the SEER database. At the end of each training epoch, the neural network was tested on an internal validation set consisting of 57 100 patient data points from NCDB and 19 066 patient data points from SEER. 10 The best-performing neural network was then evaluated on an external test set consisting of data obtained from patients at our institution.
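The dataset sizes and the per-epoch selection procedure described above can be sketched as follows. The record counts are taken from the text; the helper names and callback signatures are hypothetical, and because the text does not state which validation metric defined "best-performing," a generic score is assumed.

```python
# Record counts as reported for the training and internal validation sets.
TRAIN_SIZES = {"NCDB": 105_000, "SEER": 44_487}
VALIDATION_SIZES = {"NCDB": 57_100, "SEER": 19_066}


def split_fractions(train, validation):
    """Return the (training, validation) share of all records."""
    n_train = sum(train.values())
    n_validation = sum(validation.values())
    total = n_train + n_validation
    return n_train / total, n_validation / total


def select_best_epoch(train_one_epoch, evaluate, epochs=20):
    """Train for `epochs` cycles, scoring on the validation set after each one.

    `train_one_epoch(epoch)` and `evaluate(epoch)` are caller-supplied callbacks
    (hypothetical). Returns (best_epoch, best_score); ties keep the earlier epoch.
    """
    best_epoch, best_score = 0, float("-inf")
    for epoch in range(1, epochs + 1):
        train_one_epoch(epoch)
        score = evaluate(epoch)
        if score > best_score:
            best_epoch, best_score = epoch, score
    return best_epoch, best_score
```

With the reported counts, the training set holds 149 487 records and the internal validation set 76 166, roughly a two-thirds to one-third split of 225 653 records in total.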

Clinical Performance

Thirty-nine patients who were referred to UCSF for specialized head and neck cancer care from 2020 to 2023 were randomly selected. Data about patients’ head and neck cancer location and pathology were obtained from the electronic medical record. In addition, age at referral, gender, race/ethnicity, year of diagnosis, T-staging, N-staging, M-staging, overall staging, cancer grading, and treatment plan were documented. Surgical treatment was defined as any surgery for curative intent following referral to UCSF. Thus, surgical biopsies performed for diagnostic reasons were not classified as surgical treatment (e.g., direct laryngoscopy with biopsy). Furthermore, patients who received surgery prior to their referral at UCSF, but then were not recommended to undergo further surgery following their referral, were not classified as surgical treatment.

Clinical symptoms at the time of referral, medical comorbidities, and use of immunosuppressant or anticoagulation medications were recorded. Finally, patient zip code, county poverty level, and type of referring provider were documented.

The accuracy of the machine learning model was then compared against the treatment plan recommended in the attending physician’s clinical note, which was obtained through retrospective chart review.

Data Analysis

Categorical variables were analyzed with Fisher’s exact test. Continuous variables were analyzed with a 2-sample t-test. All statistical analyses were performed using Stata software version 18.0.

Results

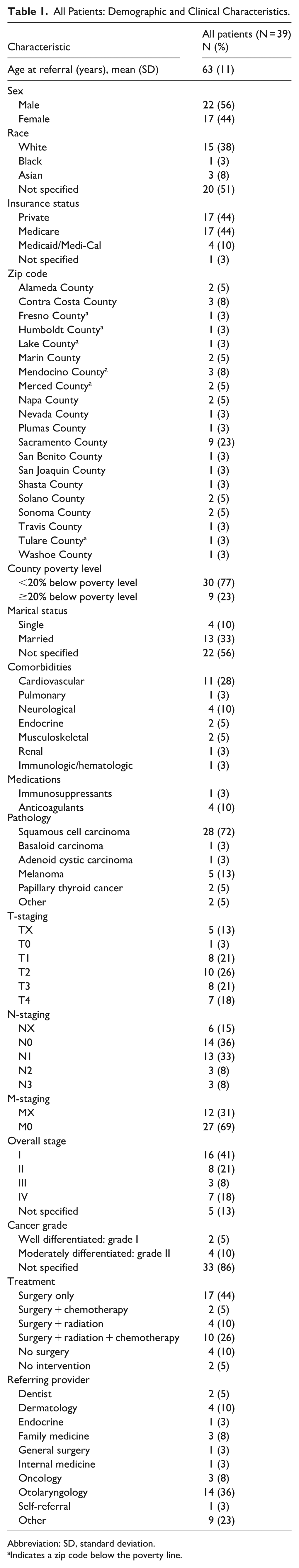

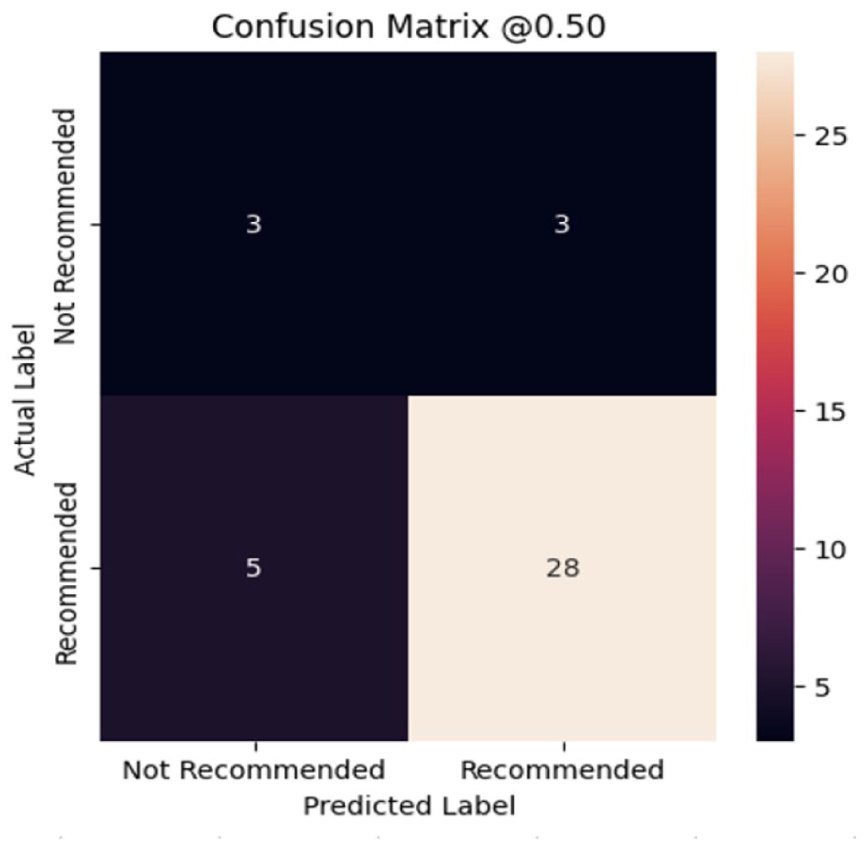

The clinical and demographic details for the cohort are summarized in Table 1. The average age of patients was 63 ± 11 years. The gender distribution was 22 males (56%) and 17 females (44%). The distribution of insurance status included 17 patients with private insurance (44%), 17 patients with Medicare (44%), and 4 patients with Medicaid/Medi-Cal (10%). The distribution of patients by county and zip code is shown in Table 1 and Figure 2. Nine patients (23%) lived in a county defined as a poverty area, in which 20% or more of residents live below the poverty level (Table 1). 11

Table 1. All Patients: Demographic and Clinical Characteristics.

Abbreviation: SD, standard deviation.

Indicates a zip code below the poverty line.

Figure 2. Patient distribution by county.

The distribution of head and neck cancer subsites included nasopharynx (3%), oral cavity (31%), oropharynx (33%), larynx (5%), salivary gland (8%), thyroid (5%), and melanoma (15%; Table 1). Cancer pathology included 28 patients with squamous cell carcinoma (72%), 1 patient with basaloid carcinoma (3%), 1 patient with adenoid cystic carcinoma (3%), 5 patients with melanoma (13%), and 2 patients with papillary thyroid carcinoma (5%). The 2 patients classified as having “other” pathology included 1 patient with an unspecified salivary gland carcinoma and another patient with an epithelial-myoepithelial salivary carcinoma.

At the time of referral, 16 patients had stage I cancer (41%), 8 patients had stage II (21%), 3 patients had stage III cancer (8%), 7 patients had stage IV cancer (18%), and 5 patients (13%) did not have a specified stage documented (Table 1).

Thirty-three patients (85%) underwent surgery as part of their curative treatment option, whereas 6 patients (15%) did not undergo any surgical treatment, with 4 of these patients undergoing chemoradiation and 2 patients undergoing no therapy at all (Table 1).

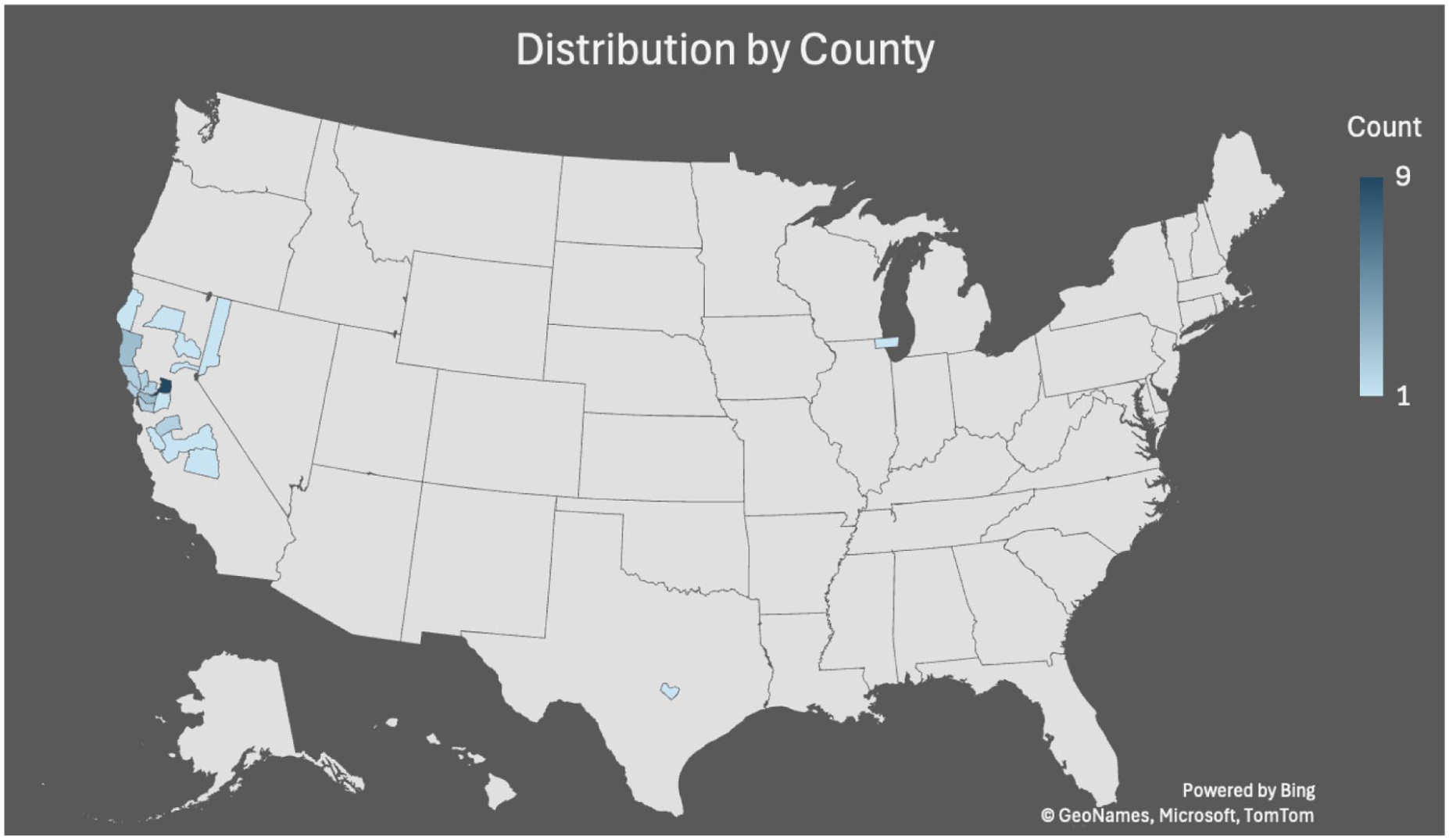

The neural network model had a 79% accuracy rate in predicting whether patients underwent surgery for curative intent following referral to head and neck cancer care (Figure 3). Sensitivity was 85%, specificity was 50%, positive predictive value was 90%, and negative predictive value was 38%.

Figure 3. Performance metrics for the neural network model. The confusion matrix shows a prediction accuracy of 79% on previously unseen clinical patient data. The “Not Recommended” label refers to cases where surgical treatment was not recommended, and “Recommended” refers to cases where surgery was indicated.
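As a check on how the reported performance figures relate to one another, the sketch below recomputes them from confusion-matrix counts. The four counts are not listed in the text; they are inferred from the cohort sizes (33 surgical and 6 non-surgical patients), the 28 correctly predicted surgical patients, and the 50% specificity, so they should be read as an assumption. The function name is hypothetical.

```python
def confusion_metrics(tp, fn, fp, tn):
    """Compute standard binary classification metrics from confusion-matrix counts."""
    total = tp + fn + fp + tn
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn),  # surgical patients correctly flagged
        "specificity": tn / (tn + fp),  # non-surgical patients correctly flagged
        "ppv": tp / (tp + fp),          # flagged "recommended" who had surgery
        "npv": tn / (tn + fn),          # flagged "not recommended" who had no surgery
    }


# Counts inferred from the text (assumption): 28 of 33 surgical patients were
# predicted correctly, and 3 of 6 non-surgical patients were predicted correctly.
metrics = confusion_metrics(tp=28, fn=5, fp=3, tn=3)

for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

Under these inferred counts, the negative predictive value rests on only 8 "not recommended" predictions, which is one reason it is sensitive to small changes in the cohort.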

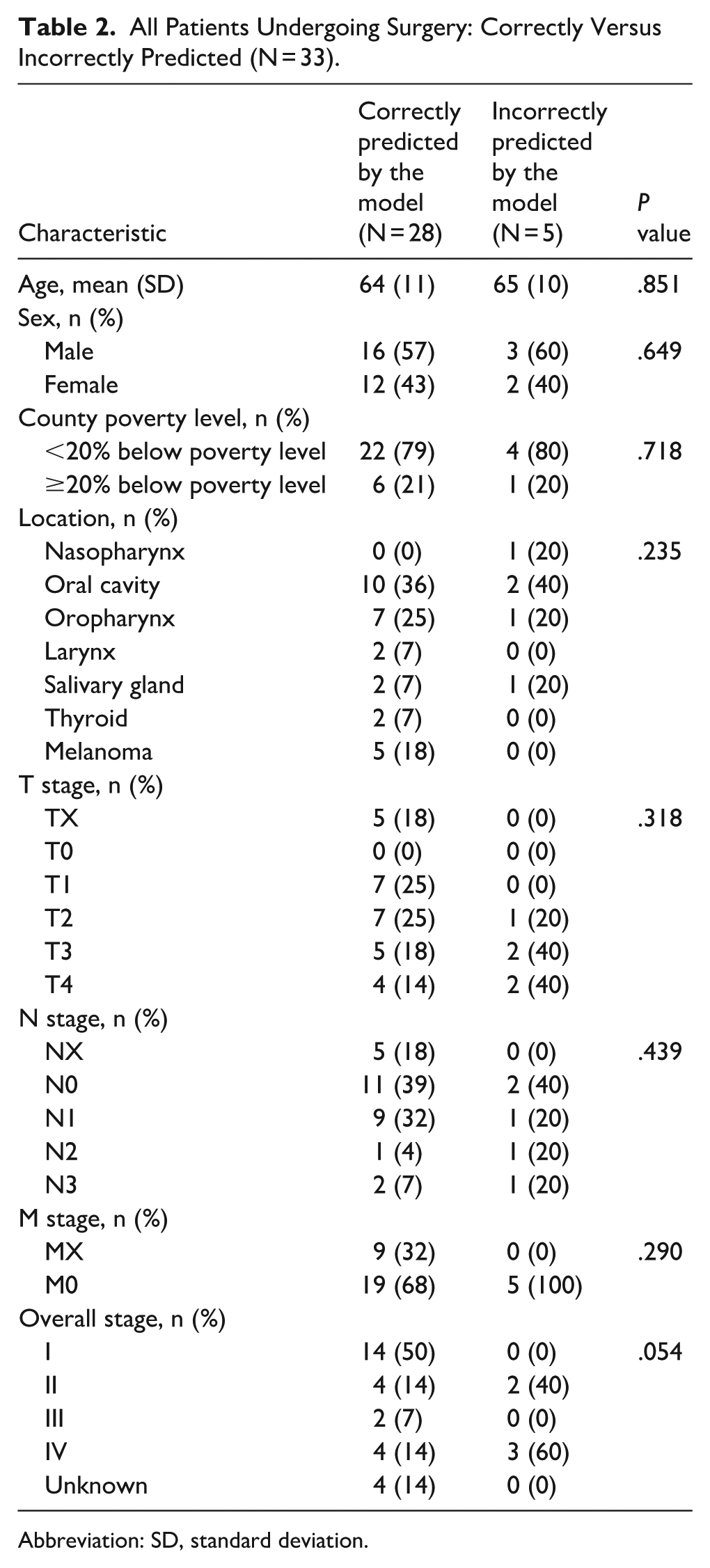

A subgroup analysis was performed on all patients who underwent surgery to determine clinical differences between patients who were correctly or incorrectly predicted by the machine learning model. Of the 33 patients who underwent surgery for curative intent as part of their treatment plan following referral to UCSF, 28 (85%) were correctly predicted by the model to undergo surgery. There were no significant differences in age, sex, cancer location, or cancer grade between correctly and incorrectly predicted surgical patients (Table 2). There were no significant differences in the proportion of patients from counties defined as a poverty area between correctly and incorrectly predicted surgical patients (Table 2). There was a trend toward differences in cancer stage between correctly and incorrectly predicted surgical patients with improved accuracy for lower stage cancer, though this did not reach significance (P = .054). Of patients who were correctly predicted to have surgery, 14 (50%) were stage I, 4 (14%) were stage II, 2 (7%) were stage III, and 4 (14%) were stage IV. Of patients who were incorrectly predicted not to have surgery, 2 (40%) were stage II and 3 (60%) were stage IV (Table 2).

Table 2. All Patients Undergoing Surgery: Correctly Versus Incorrectly Predicted (N = 33).

Abbreviation: SD, standard deviation.
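The stage breakdown reported for the surgical subgroup can be tabulated per stage with a short sketch. The counts come from the text above; the zero entries for stages I and III among incorrectly predicted patients are inferred from the fact that the reported stage II and stage IV cases account for all 5 of them. The 4 correctly predicted patients whose stage is not accounted for in the reported counts are left out. Names are hypothetical, and this is an illustration only, not an additional analysis.

```python
# Surgical patients (N = 33) by stage, as reported in the subgroup analysis.
CORRECTLY_PREDICTED = {"I": 14, "II": 4, "III": 2, "IV": 4}
# Stages I and III are inferred to be 0 because 2 + 3 covers all 5 missed patients.
INCORRECTLY_PREDICTED = {"I": 0, "II": 2, "III": 0, "IV": 3}


def sensitivity_by_stage(correct, missed):
    """Return {stage: (n_correct, n_total, fraction_correct)} for each stage."""
    summary = {}
    for stage, n_correct in correct.items():
        n_total = n_correct + missed.get(stage, 0)
        fraction = n_correct / n_total if n_total else None
        summary[stage] = (n_correct, n_total, fraction)
    return summary


by_stage = sensitivity_by_stage(CORRECTLY_PREDICTED, INCORRECTLY_PREDICTED)
for stage, (n_correct, n_total, fraction) in by_stage.items():
    print(f"Stage {stage}: {n_correct}/{n_total} correctly predicted ({fraction:.0%})")
```

The per-stage denominators are very small, which is consistent with the trend not reaching statistical significance.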

Discussion

In this study, we demonstrate that our machine learning model accurately predicted head and neck cancer surgery recommendations based on age at referral, cancer location, histology ICD code, sex, stage, AJCC eighth edition pathologic T, N, M, and grade, with a 79% accuracy rate and a 90% positive predictive value. Overall, this machine learning algorithm shows promise in predicting referrals to specialized head and neck surgical cancer care and determining when surgical treatment is indicated.

Other machine learning models, predominantly used to predict referrals to palliative care, currently have accuracy rates ranging from 55% to 88%, sensitivity rates ranging from 3% to 85%, and specificity rates ranging from 24% to 97%. 9 Our model is congruent with the prior literature: its accuracy (79%) and sensitivity (85%) fall toward the upper end of these ranges, and its specificity (50%) falls within the reported range. In particular, our model has a high positive predictive value of 90%, suggesting promise for streamlining “true positive” surgical patients directly to a surgical oncology service. The negative predictive value is 38%, which is consistent with our expectations, as this model is meant to serve as a screening test and is designed to overestimate the number of potential surgical candidates.

It is also important to acknowledge that referral documentation is often incomplete and inconsistent, which limits the ability of any model to perfectly mirror downstream clinical decision-making. Referring providers and office staff frequently submit only a subset of relevant clinical details, and critical information such as staging, comorbidities, or HPV/p16+ status may not be available at the time of referral. As a result, the data used in this study reflect the reality of what is typically present at the point of entry into the cancer care system.

It is known that the primary tumor site is one of the main predictors of whether surgery is performed. 12 For example, surgery is more often performed for oral cavity cancer, while radiation is often used for laryngeal cancer. Additional data points and characteristics, which can be employed by machine learning, would likely improve the accuracy of this prediction and help to streamline the referral process. In our previous research testing our model against SEER data and this current study, we found that several cancer characteristics and patient demographic variables had similar importance in determining whether or not a patient was to undergo surgery. 10 This suggests that decision-making about whether or not a patient undergoes surgery is more complex than a reductionist approach that mainly considers the primary tumor site in determining surgical appropriateness, and further emphasizes the importance of adapting machine learning models to this field.

This research expands on our previous work utilizing the SEER database. Previously, we investigated which predictive factors could accurately recommend head and neck cancer surgery, and demonstrated that our model had a high accuracy rate of 81%, with a sensitivity rate of 86% and a specificity rate of 71%. 10 In comparison, our accuracy rate in the current study was 79%. Thus, this study expands on our previous findings and demonstrates that our machine learning model has comparable accuracy when it comes to application to a specific clinical institution, which suggests it could be applied to other tertiary care referral centers. This may help streamline the referral process at institutions throughout the country, especially as the interest in self-referral increases. 13

There were potential limitations to this study. Our sample size is small, and thus clinical differences between the groups that were predicted accurately or not may not have been detected. In addition, a degree of referral filtering may already have occurred in this clinical dataset, because patients were selected from those seen within the head and neck surgical oncology division at a tertiary care center, which may make these findings less generalizable to broader populations.

In conclusion, we found that our machine learning algorithm could accurately predict whether a patient would require head and neck cancer surgery based on specific clinical and demographic characteristics at the time of referral.

Footnotes

Author Note

Presented at AHNS 2025 (New Orleans, LA).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated and/or analyzed during the current study are not publicly available due to patient privacy and institutional restrictions.