Abstract

Objective:

YouTube is a video-sharing platform that patients frequently utilize. However, there are no objective assessments of the quality of information about otosclerosis on YouTube. Therefore, we aimed to assess the quality of YouTube videos for patient education via a cross-sectional study. We utilized 4 search phrases and analyzed them with 3 different scoring metrics, followed by statistical analysis.

Results:

Fifty videos were analyzed for each of the search terms “stapedectomy,” “stapedotomy,” “laser stapedotomy,” and “otosclerosis,” for a total of 200 videos. Most videos for “stapedectomy” (42%) and “otosclerosis” (42%) were intended for patients, while those for “stapedotomy” (48%) and “laser stapedotomy” (96%) were created for healthcare professionals or students. Higher modified DISCERN scores were associated with healthcare organization-produced videos for “otosclerosis” (P = .01964) and “stapedotomy” (P = .02842). Higher global quality scores (P = .02964) and Journal of the American Medical Association benchmark scores (P = .01488) were significantly associated with videos made by verified users for “otosclerosis.”

Conclusion:

The quality of YouTube videos may not be sufficient for patient education on stapedectomy and stapedotomy for otosclerosis. Only 2 search terms returned mostly videos geared toward patient education, while the other 2 terms returned more videos for healthcare professionals. Lower transparency and reliability scores may raise concerns about bias.

Introduction

Historically, patient education regarding surgical procedures has been primarily led by physicians during clinic visits and supplemented by experiences from friends, family, and caregivers. However, barriers such as long wait times and brief encounters can impede patients’ access to information from physicians. Previous studies have shown an association between short visits and increased odds of seeking other means of medical education.1-3 YouTube is a free online video-sharing platform used by over 80% of US adults and is a resource that patients may use for medical information.4,5 YouTube is the second most popular social media platform with a total of 3.07 billion annual views, which is more than other platforms such as Instagram and TikTok with 2.0 billion and 1.59 billion annual views, respectively. 5

Otosclerosis is an osteodystrophic disease of the labyrinthine capsule that can cause progressive conductive hearing loss when it occurs at the oval window, leading to the blockage of the stapes footplate.6-8 Clinical otosclerosis has a prevalence of around 20 per 100 000 patients, with significant variations among different ethnic groups. 9 Another study showed that 15% of patients with otosclerosis experience conductive hearing loss before the age of 18. 10 While conservative management, such as hearing aids, may be used for pediatric populations, stapedotomy and stapedectomy, which modify the stapes footplate, are considered to be optimal first-line treatments for pediatric and adult hearing loss.7,11,12 There are various techniques, such as laser stapedotomy, which diminishes intraoperative forces, autologous harvesting, which utilizes cortical bone, or endoscopic approaches, which provide improved visualization in otologic surgery.13-15

Despite prior investigations in otology on the quality and credibility of YouTube videos for patient decision-making and professional education in healthcare, there is a lack of research on otosclerosis and these varying techniques.16-19 Thus, we aimed to assess the quality and reliability of otosclerosis content on YouTube to understand factors impacting the quality of content available to patients. This study specifically evaluates the role of available YouTube videos for patient education in otosclerosis, stapedectomy, stapedotomy, and laser stapedotomy, using both video metrics and expert scoring.

Methods

Video Selection

The terms “stapedectomy,” “stapedotomy,” “laser stapedotomy,” and “otosclerosis” were queried on November 12, 2023, using an incognito window of the Google Chrome web browser with all cookies and cache data cleared to avoid account-specific search biases. Following the methodology of similar studies that analyzed YouTube videos, 50 results from each search were selected for inclusion in the study, for a total of 200 included videos.18,20-22 Videos were then added to a private playlist for review. Inclusion criteria were English-language videos. Exclusion criteria were videos not in English, YouTube shorts, video duplicates (within or between searches), and videos not related or relevant to otosclerosis. Videos were screened until 50 were included per search term, with a total of 128 screened videos excluded for not meeting the inclusion criteria.
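The screening procedure described above can be sketched in code. This is only an illustrative sketch, not the authors' workflow (screening in the study was performed by reviewers): the `Candidate` record, its fields, and the `screen` function are hypothetical names, and the demo data are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """One search result; all fields are hypothetical, hand-recorded values."""
    video_id: str
    language: str      # e.g. "en"
    is_short: bool     # True for YouTube shorts
    is_relevant: bool  # reviewer judgment: related to otosclerosis


def screen(results_by_term, per_term=50):
    """Walk each term's ranked results until per_term videos are included."""
    seen = set()   # IDs already included, within or between searches
    included = {}
    excluded = 0
    for term, results in results_by_term.items():
        kept = []
        for video in results:
            if len(kept) == per_term:
                break
            if (video.language != "en" or video.is_short
                    or not video.is_relevant or video.video_id in seen):
                excluded += 1
                continue
            seen.add(video.video_id)
            kept.append(video)
        included[term] = kept
    return included, excluded


# Invented demo data, with a quota of 2 per term instead of 50.
demo = {
    "otosclerosis": [
        Candidate("a1", "en", False, True),
        Candidate("a2", "es", False, True),   # excluded: not in English
        Candidate("a3", "en", True, True),    # excluded: YouTube short
        Candidate("a4", "en", False, True),
    ],
    "stapedotomy": [
        Candidate("a1", "en", False, True),   # excluded: duplicate between searches
        Candidate("b2", "en", False, False),  # excluded: not relevant
        Candidate("b3", "en", False, True),
    ],
}
included, excluded = screen(demo, per_term=2)
```

In the study itself, the quota was 50 per term, which yielded 200 included and 128 excluded videos.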

Video Metrics and Categorization

Videos were accessed via YouTube, and all data were collected from what was publicly available. Video metrics included video length, date published, and the number of likes, views, comments, and account subscribers. Publishers were classified into 4 types: physician, healthcare organization, patient, and third party. Intended audiences were classified into 3 types: students, healthcare professionals, or patients.

Videos were classified into 6 types: advertisements, webinars/informational videos for healthcare professionals, webinars/informational videos for patients, intraoperative videos, patient perspectives, and animations. Advertisements were defined as videos made by an organization announcing or elaborating on a product for sale. Webinars/informational videos for healthcare professionals were defined as videos intended to educate current or potential healthcare professionals and not intended to assist in patient education. Webinars/informational videos for patients were defined as videos that were directed toward patients for informative purposes. Intraoperative videos showcased stapedectomy or stapedotomy procedures. Patient perspective videos were defined as those focused on patients, emphasizing the process of diagnosis and expectations regarding perioperative management. Videos that were animated were categorized into the animation video type.

Video quality was subjectively and independently assessed by 2 otology/neurotology fellowship-trained surgeons using the modified DISCERN (mDISCERN), global quality score (GQS), and Journal of the American Medical Association (JAMA) benchmark. mDISCERN is a 25-point score that rates 5 criteria: achievement of video aims, reliability of information sources, bias of presented information, availability of additional information sources for viewer reference, and mention of areas of uncertainty (Supplemental Table 1). 23 GQS is a subjective 5-point score that assesses the quality, flow, relevancy, and utility of information in videos for a patient audience (Supplemental Table 2). 24 JAMA benchmark is a 4-point score that evaluates authorship, attribution, disclosure, and currency of selected videos with 1 point given for each subcategory (Supplemental Table 3). 25
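As a rough illustration of how these composite scores are tallied, the sketch below sums the JAMA benchmark (1 point per criterion met) and an mDISCERN total. The function and key names are hypothetical. The 1 to 5 rating per mDISCERN criterion is an assumption inferred from the 25-point total over 5 criteria; the actual rubric is given in the supplemental tables.

```python
JAMA_CRITERIA = ("authorship", "attribution", "disclosure", "currency")
MDISCERN_CRITERIA = (
    "aims", "reliability", "bias", "additional_sources", "uncertainty",
)


def jama_score(criteria_met):
    """JAMA benchmark: 1 point for each criterion met, 0 to 4 overall."""
    return sum(1 for name in JAMA_CRITERIA if criteria_met.get(name, False))


def mdiscern_score(ratings):
    """Sum the 5 mDISCERN criteria.

    ASSUMPTION: each criterion is rated on an integer 1-5 scale, which is
    inferred from the 25-point total and not confirmed by the text.
    """
    total = 0
    for name in MDISCERN_CRITERIA:
        rating = ratings[name]
        if rating not in (1, 2, 3, 4, 5):
            raise ValueError(f"{name} rating must be an integer from 1 to 5")
        total += rating
    return total


# Hypothetical ratings for a single video.
example_jama = jama_score(
    {"authorship": True, "attribution": False, "disclosure": True, "currency": True}
)
example_mdiscern = mdiscern_score(
    {"aims": 3, "reliability": 3, "bias": 4, "additional_sources": 2, "uncertainty": 3}
)
```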

Statistical Analysis

Categorical data were reported as rates and proportions, while continuous data were reported as medians with interquartile ranges (IQR). The Shapiro-Wilk test revealed a non-normal distribution of numerical data, prompting our use of non-parametric tests for subsequent analyses. Associations between video characteristics and expert assessment scores (mDISCERN, GQS, and JAMA benchmark) were analyzed using: Spearman’s rank correlation (rho) for continuous-continuous comparisons; Kruskal-Wallis rank sum test (H statistic) with Dunn’s post hoc tests for continuous-categorical comparisons; and Fisher’s exact test for categorical-categorical comparisons. All analyses were performed using R Statistical Software v4.2.2. 26 The 200 videos were divided between the 2 graders, each of whom individually graded 100 videos.
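To make the H statistic concrete, the following is a minimal sketch of the tie-corrected Kruskal-Wallis computation. It is written in plain Python for illustration only; the study's analyses were run in R, and the function names and score values here are hypothetical. The P value, which comes from a chi-square distribution with (number of groups − 1) degrees of freedom, is not computed.

```python
from collections import Counter


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H for a list of non-empty score lists."""
    pooled = [x for group in groups for x in group]
    n = len(pooled)
    ranks = average_ranks(pooled)
    weighted = 0.0
    start = 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        weighted += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12.0 / (n * (n + 1)) * weighted - 3 * (n + 1)
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - tie_term / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all values are identical; H is undefined")
    return h / correction


# Hypothetical mDISCERN scores grouped by video type.
advertisements = [8, 9, 10]
patient_webinars = [15, 17, 18]
intraoperative = [11, 12, 14]
h_stat = kruskal_wallis_h([advertisements, patient_webinars, intraoperative])
```

A significant H only indicates that at least one group differs, which is why a post hoc procedure such as Dunn's test is needed to identify the group responsible.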

This study was exempt from review by the Institutional Review Board of The Pennsylvania State University College of Medicine due to a lack of patient involvement or personal identifying health information (STUDY00027216).

Results

Video Characteristics

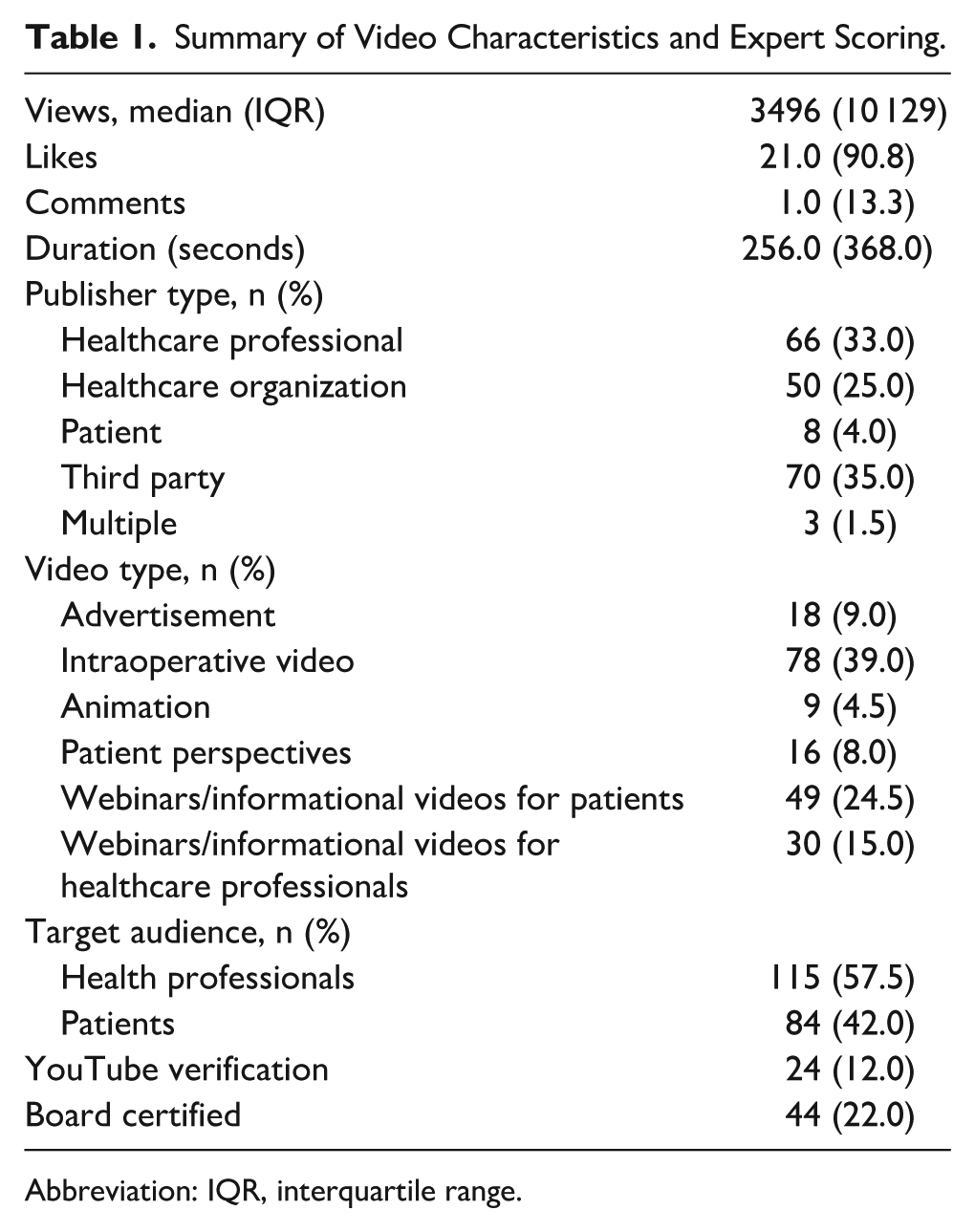

Two hundred videos with 4 561 813 combined views met our inclusion criteria and were included in our analysis. There were no duplicates identified among these 4 queries. Most included videos were intraoperative videos (n = 78, 39%) with 33 videos being contributed by laser stapedotomy, followed by webinars/informational videos for patients (n = 49, 24.5%) with 20 videos being contributed by the search term “otosclerosis.” Videos were primarily produced by third parties (n = 70, 35%) and healthcare professionals (n = 66, 33%), with the target audience being mostly healthcare professionals (n = 115, 57.5%).

Among the included videos, 50 (25%) videos were produced by healthcare organizations, with 15 (7.5%) produced by healthcare organizations or institutions verified by YouTube and identified as such on the video webpage. In addition, 44 (22%) were created by board-certified physicians. Included videos had a median view count of 3496 (IQR 10 129), a median like count of 21.0 (IQR 90.8), a median comment count of 1 (IQR 13.3), and a median length of 256.0 seconds (IQR 368.0 seconds; Table 1).

Table 1. Summary of Video Characteristics and Expert Scoring.

Abbreviation: IQR, interquartile range.

Most videos found using the terms “stapedectomy” (n = 21, 42%) and “otosclerosis” (n = 21, 42%) were intended for patients, while the majority of videos found using the terms “stapedotomy” (n = 24, 48%) and “laser stapedotomy” (n = 48, 96%) were created for healthcare professionals or students.
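The descriptive summaries used in this section (counts with percentages, medians with IQR) can be sketched as follows. The view counts are invented for illustration, and the helper names are hypothetical. Quantile definitions differ between software packages, and the settings used in the study's R analysis are not stated, so IQR values from this sketch may not match R output on small samples.

```python
from statistics import median, quantiles


def percent(count, total):
    """Share of total as a percentage, rounded to one decimal place."""
    return round(100 * count / total, 1)


def median_iqr(values):
    """Return (median, IQR), with IQR = Q3 - Q1.

    method="inclusive" interpolates between order statistics; other
    quantile definitions can give slightly different results.
    """
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q3 - q1


# 21 of 50 "stapedectomy" videos were intended for patients.
patient_share = percent(21, 50)

# Hypothetical view counts for five videos.
hypothetical_views = [120, 950, 3496, 8000, 15000]
views_median, views_iqr = median_iqr(hypothetical_views)
```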

Expert Scoring

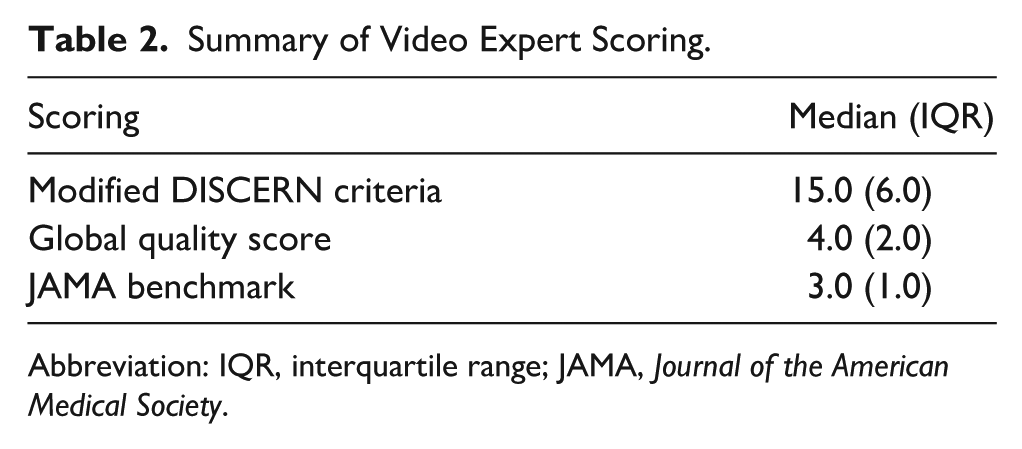

The median mDISCERN scores were 15/25 (IQR 5), 15/25 (IQR 9), 15.5/25 (IQR 3), and 10/25 (IQR 9.75) for “otosclerosis,” “stapedectomy,” “stapedotomy,” and “laser stapedotomy,” respectively. Median GQS scores were 5/5 (IQR 1), 3/5 (IQR 1), 5/5 (IQR 1), and 3/5 (IQR 1) for “otosclerosis,” “stapedectomy,” “stapedotomy,” and “laser stapedotomy,” respectively. Median JAMA benchmark scores were 2/4 (IQR 1.75), 3/4 (IQR 2), 2/4 (IQR 0), and 4/4 (IQR 1) for “otosclerosis,” “stapedectomy,” “stapedotomy,” and “laser stapedotomy,” respectively. Overall median mDISCERN, GQS, and JAMA benchmark scores were 15.0 (IQR 6.0), 4.0 (IQR 2.0), and 3.0 (IQR 1.0), respectively (Table 2).

Table 2. Summary of Video Expert Scoring.

Abbreviations: IQR, interquartile range; JAMA, Journal of the American Medical Association.

Statistical Relationships

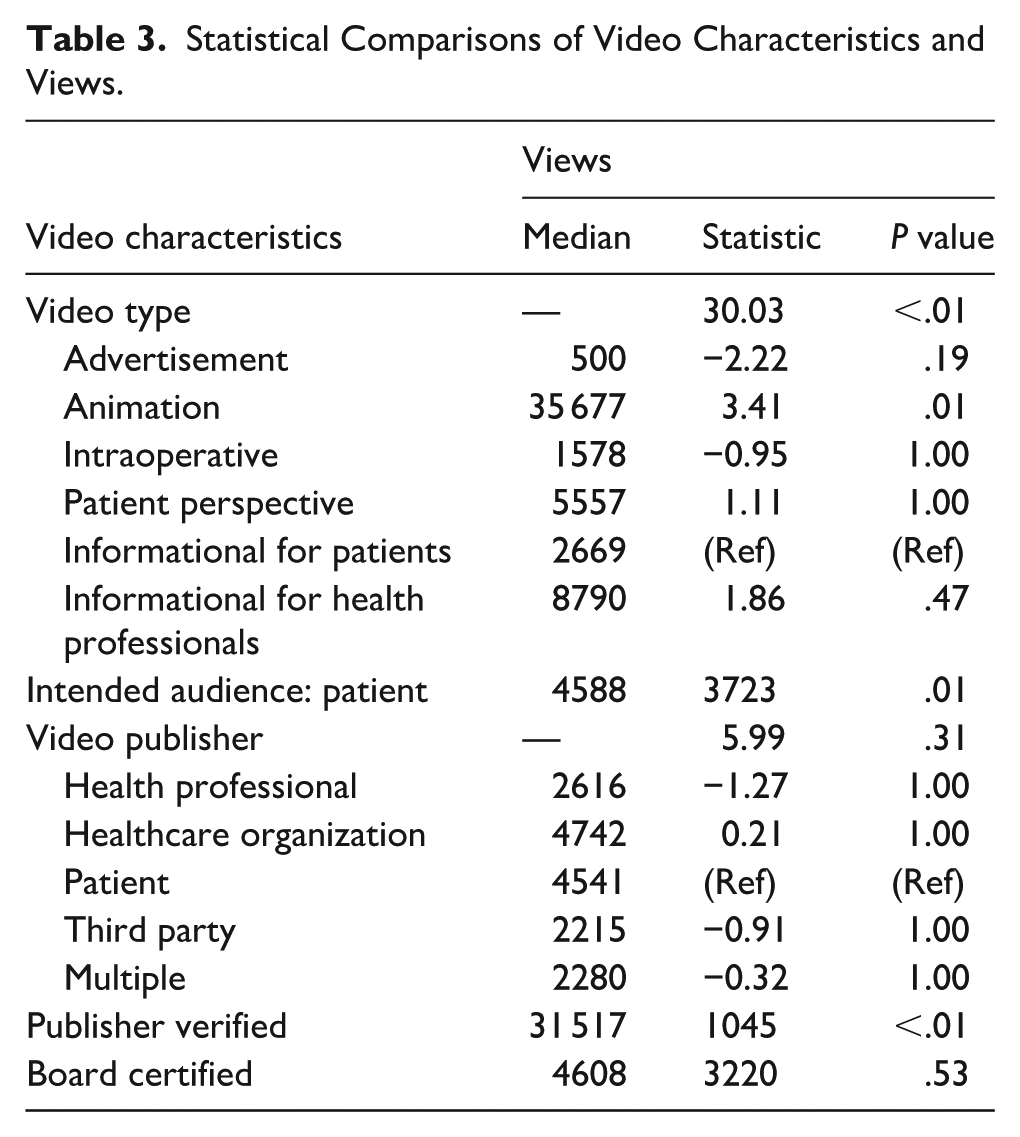

Statistical analysis showed that otosclerosis videos had more views if the YouTube account was verified (median = 31 517, W = 1045, P < .01). Views were also significantly associated with video type (H = 30.03, P < .01); post hoc Dunn testing showed significantly more views for animation (median = 35 677, Z = 3.41, P < .01) but no significant difference for any other video type. Intended audience was also significantly associated with views (median = 4588, W = 3723, P = .01; Table 3). Other characteristics, such as video publisher or creation by a board-certified physician, were not statistically significant.

Table 3. Statistical Comparisons of Video Characteristics and Views.

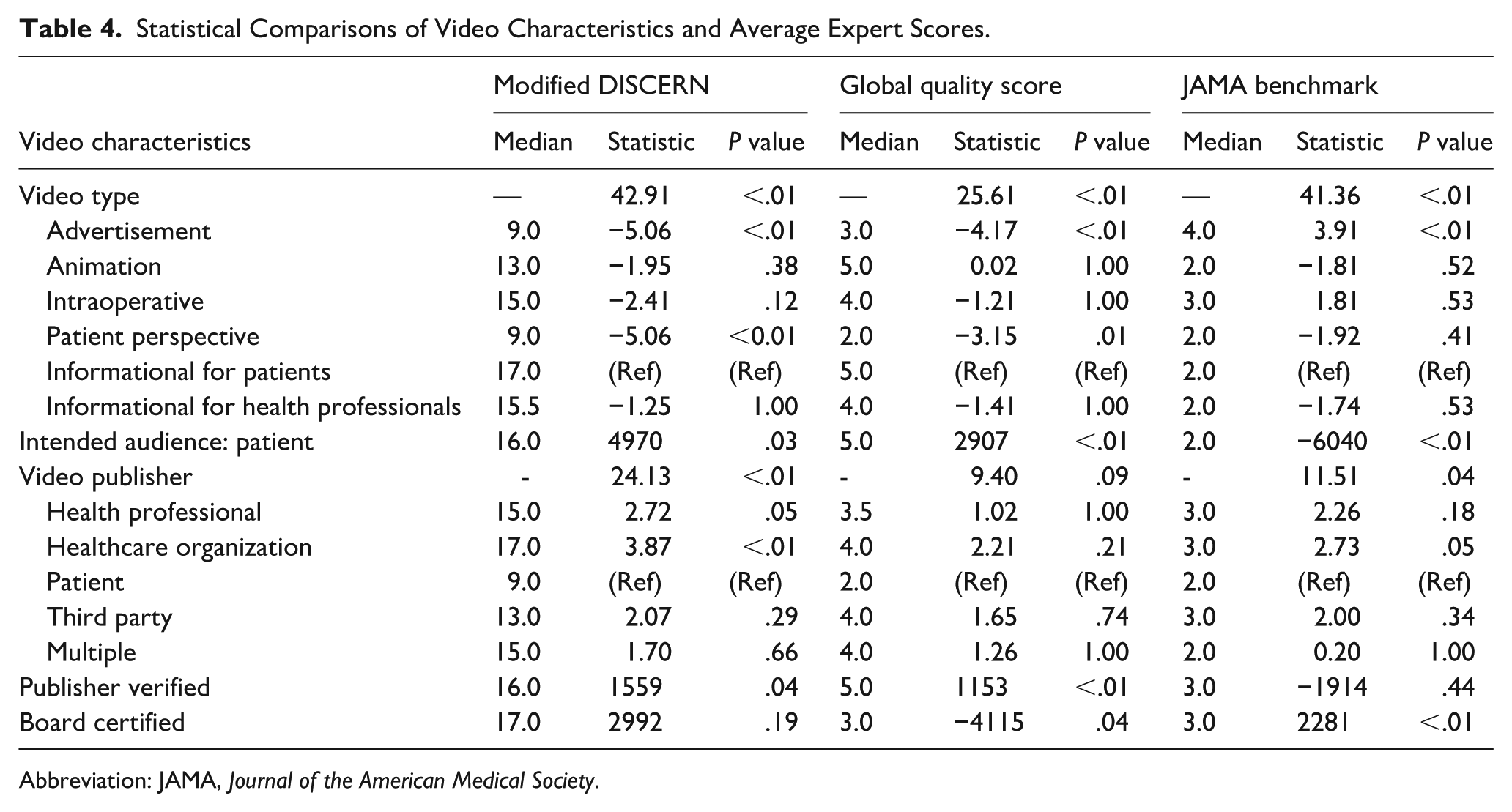

mDISCERN scores were significantly associated with video type (H = 42.91, P < .01), intended audience (median = 16, W = 4970, P = .03), and video publisher (H = 24.13, P < .01), with lower scores for advertisement (median = , Z = −5.06, P < .01) and patient perspective (median = 9.0, Z = −5.06, P < .01). Higher mDISCERN scores were associated with healthcare organizations (median = 17.0, Z = 3.87, P < .01), and if the publisher was YouTube verified (median = 16, W = 1559, P = .04).

GQS was significantly higher for videos made for the intended audience (median = 5.0, W = 2907, P < .01) or by a verified publisher (median = 5.0, W = 1153, P < .01). GQS was significantly lower if videos were made by a board-certified physician (median = 3.0, W = −4115, P = .04). GQS was also significantly associated with video type (H = 25.61, P < .01), with lower scores being significantly associated with advertisements (median = 3.0, Z = −4.17, P < .01).

There was a significant association between JAMA benchmark and video type (H = 41.36, P < .01) with higher scores for advertisements (median = 4.0, W = 3.91, P < .01). There was also a significant association with video publisher (H = 11.51, P = .04), with higher scores being associated with healthcare organizations (median = 3, Z = 2.73, P = .05) and board-certified physicians (median = 3, W = 2281, P < .01). Lower JAMA benchmark scores were significantly associated with intended audience (median = 2, W = −6040, P < .01; Table 4).

Table 4. Statistical Comparisons of Video Characteristics and Average Expert Scores.

Abbreviation: JAMA, Journal of the American Medical Association.

Discussion

Our findings demonstrate that routine YouTube searches for the purpose of patient education yield inconsistent results. While a substantial portion of videos for “stapedectomy” (n = 21, 42%) and “otosclerosis” (n = 21, 42%) were intended for a patient audience, the majority of videos retrieved using the terms “stapedotomy” (n = 24, 48%) and “laser stapedotomy” (n = 48, 96%) were created for healthcare professionals or students. Videos created by healthcare organizations such as hospitals were associated with higher mDISCERN scores for “otosclerosis” (P = .01964) and “stapedotomy” (P = .02842).

Verified publishers were also associated with higher mDISCERN (median = 16, W = 1559, P = .04) and GQS (median = 5.0, W = 1153, P < .01) scores for “otosclerosis” and with more views (median = 31 517, W = 1045, P < .01) than videos created by unverified board-certified physicians, healthcare professionals, and healthcare organizations. Intraoperative video quality was poor in all scoring metrics. Considering that surgical trainees benefit the most from stepwise visualization of surgical procedures, this shows a lack of high-quality results for trainee education in this field and a lack of applicability to patient education when searching these terms. The current study is the first to examine the quality of videos in otosclerosis education and treatment for both adult and pediatric patient education using validated metrics and methodologies. 16 Our study also utilized 4 search terms with a combined total of 200 unique videos for analysis as we adjusted our methods in an attempt to further characterize the videos available on YouTube. This allowed for a more complete representation of videos than previous cross-sectional YouTube studies, which included as many as 100 videos for analysis.18,20,21

Video-based education is a resource that many patients rely on for their information when physician instruction is lacking.1-3 YouTube has continued to be prevalent in patient education, with multiple studies showing a lack of standardization of online education. 27 While quality videos for patient education exist on YouTube, they are not consistently found among varied search terms, and medical advice may come from sources that may or may not be medical professionals. Our study is in line with previous literature indicating that easily accessible, high-quality, peer-reviewed information for patient education is available through hospital organization websites, including both written and video-based materials.28-31 Also, with viewership being highly linked to verified publishers, it would be beneficial for healthcare organizations and healthcare providers to become verified so patients will be more likely to engage with their resources. However, due to the need to have 100 000 subscribers to become verified on YouTube, this might not be attainable for physicians or hospital systems that do not already have a large following on social media platforms. 32

This study is the first to assess the quality of videos in otosclerosis for patient education. While our methodology is based on previous studies, the data collection methods were altered to allow for more videos to be graded. The larger dataset increases generalizability and better characterizes the videos present on YouTube at the time of the search. However, this study has several limitations. The content available on YouTube is constantly changing, so the findings of this study will inevitably evolve as new videos are uploaded. The ranking of search results is determined by a proprietary, non-transparent algorithm, so searches cannot be exactly replicated despite our standardized and user-anonymized search methodology, which restricts the comprehensiveness of any large-scale analysis of YouTube’s video library. YouTube also utilizes tailored algorithm-driven search results to prioritize videos for each individual, which limits what videos may be presented to patients. 33 In addition, ratings that were given with our scoring criteria are subjective, and video scoring assignments were divided among graders to maximize our sample size. Another limitation is that, despite our detailed search methodology, videos on other platforms, such as Instagram, TikTok, and Facebook, were not included. Furthermore, this study focused solely on English-language videos and excluded YouTube shorts, which are becoming more prevalent with the use of TikTok and other short-form video-based social media platforms.34,35 Future research could assess the quality of videos in other languages and in short-form media.

Conclusion

The quality of YouTube videos may not be sufficient for patient education on stapedectomy and stapedotomy for otosclerosis. Videos related to otosclerosis care were categorized as fair through the mDISCERN criteria, with generally good quality through the GQS, and on average meeting 3 out of 4 JAMA benchmark criteria. Only 2 search terms returned mostly videos geared toward patient education, while the other 2 terms returned more videos for healthcare professionals, and verification status was linked to higher viewership. As more patients utilize YouTube and other video-based platforms as a source of educational materials, they are likely to encounter videos not intended for a lay audience. This underscores the importance of medical professionals curating medical education resources or referring patients to peer-reviewed information from trusted organizations to avoid the utilization of substandard guidance that could have adverse effects on patient health outcomes.

Supplemental Material

Supplemental material, sj-docx-1-ear-10.1177_01455613261438070 for Quality and Reliability of YouTube Videos for Patient Education in Stapedectomy and Stapedotomy by Quentin C. Durfee, Hänel W. Eberly, Bao Y. Sciscent, Andrew Meci, Rachel Choi, Mark Whitaker and Varun S. Patel in Ear, Nose & Throat Journal

Footnotes

Acknowledgements

We would like to acknowledge Caia Hypatia for their contributions to this study regarding project management.

Author Note

This study was presented as a poster presentation at the Pennsylvania Academy of Otolaryngology, Hershey, PA, June 8 to June 10, 2024.

Ethical Considerations

This study was conducted at The Pennsylvania State University College of Medicine and was deemed exempt by The Pennsylvania State University Institutional Review Board (IRB STUDY00027216).

Author Contributions

Quentin C. Durfee: data curation; investigation; presentation of research; writing original draft; writing, review, and editing. Hänel W. Eberly: data curation; methodology; investigation; writing, review, and editing. Bao Y. Sciscent: methodology; writing, review, and editing. Andrew Meci: methodology; statistical analysis; writing, review, and editing. Rachel Choi: data curation; writing, review, and editing. Mark Whitaker: supervision; writing, review, and editing. Varun S. Patel: conceptualization; data curation; methodology; project administration; supervision; validation; writing, review, and editing.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data supporting the findings of this study are available from the corresponding author on reasonable request.

Supplemental Material

Supplemental material for this article is available online.
