Abstract

Objective:

Traditional assessments for otorhinolaryngology clinical interns primarily rely on closed-book examinations (CBE) to evaluate foundational knowledge and reinforce long-term retention. This quasi-experimental study investigates the impact of a blended assessment model—integrating both open-book and closed-book components—on academic performance, test anxiety, and preparation time, compared to the conventional CBE approach.

Methods:

A total of 240 medical students from the 2019 (CBE, n = 115) and 2020 (blended assessment, n = 125) cohorts at the Third Affiliated Hospital of Sun Yat-sen University were enrolled. Exam scores, preparation time, test format preferences, and Revised Test Anxiety Scale (RTA) scores were collected and analyzed. Statistical comparisons between the 2 groups were performed.

Results:

All 240 participants (123 males and 117 females) completed the study, achieving a 100% participation rate. No significant differences were found between the CBE and blended assessment groups in academic performance (P = .906) or anxiety levels (P = .411). However, the blended assessment group reported significantly longer preparation times (P = .027). RTA scores were not significantly associated with gender (P = .416), exam scores (P = .282), or preparation time (P = .410), though female students exhibited slightly higher anxiety levels. Regarding exam format preferences, 19.2% of students in the blended assessment group favored CBE (70.8% female), while 80.8% preferred open-book exams (43.6% female).

Conclusion:

The blended assessment model, incorporating both CBE and open-book examinations, serves as a feasible alternative for evaluating clinical interns, fostering their problem-solving abilities. While it demands increased preparation time, it is well-received by students and holds promise for broader adoption in medical education.

Introduction

In China’s 5-year clinical medical education system, students dedicate the first 3 years to acquiring foundational medical knowledge and theoretical understanding before commencing clinical internships in their fourth year. During these internships, students primarily engage in observational learning activities, such as observing surgeries and attending lectures, without direct involvement in surgical procedures or hands-on clinical interventions. Particularly in otorhinolaryngology clinical training, the conventional pedagogy tends to over-rely on didactic knowledge transmission. Given the specialty’s unique challenges—including intricate anatomical relationships and confined surgical fields—trainees frequently experience conceptual difficulties, demonstrate insufficient learning engagement, and ultimately achieve suboptimal training outcomes.

According to Bloom’s taxonomy, the learning process advances through hierarchical cognitive levels: Memory and Understanding, followed by Application, Analysis, Synthesis/Integration, and Evaluation, with each stage fostering progressively higher-order cognitive abilities.1 Recent studies have shown that open-book examinations (OBE) can effectively assess students’ higher-order cognitive abilities, such as knowledge application, problem analysis, and clinical decision-making.2,3 Particularly in highly practical disciplines like otorhinolaryngology, rote theoretical memorization proves inadequate for clinical demands. It is imperative to implement effective assessment methods that guide students in developing both clinical reasoning for disease management and essential procedural skills.

Traditionally, closed-book examinations (CBE) have served as the predominant method for assessing students’ knowledge at the Memory and Understanding levels, largely due to their perceived fairness and objectivity.4,5 However, the diagnosis and treatment of otorhinolaryngologic diseases often require multidisciplinary knowledge integration (eg, radiology, pathology) for comprehensive clinical decision-making, and the current CBE model fails to adequately evaluate students’ ability to synthesize and apply such knowledge to solve real-world clinical problems. Moreover, as students transition into clinical practice, the educational emphasis shifts toward cultivating higher-order cognitive skills, including Application, Analysis, Synthesis/Integration, and Evaluation. Current CBE formats, which often prioritize textbook-based responses, tend to encourage rote memorization while limiting opportunities for in-depth analysis and the development of clinical reasoning skills.6

Building upon previous research,7,8 this study proposes a blended assessment (BA) model that integrates OBE and CBE. This approach, validated by Hong et al 8 in dental education, has demonstrated significant improvements in students’ self-directed learning capabilities while maintaining appropriate academic engagement levels. To address the unique challenges in otorhinolaryngology training, we modified the traditional CBE by incorporating OBE elements: the first segment assesses foundational knowledge retention through CBE, while the second segment evaluates clinical problem-solving skills via OBE. Through comparative analysis with conventional CBE, this study aims to assess the impact of this approach on both academic performance and pre-examination anxiety levels among otorhinolaryngology trainees.9 The findings are expected to inform a new assessment paradigm for enhancing clinical education quality in this specialty.

Materials and Methods

Research Design

This study employed a quasi-experimental design to evaluate the impact of a BA model—incorporating both OBE and CBE—on the academic performance and pre-examination anxiety levels of otorhinolaryngology clinical interns. The study compared outcomes between the 2019 cohort, which underwent traditional CBE, and the 2020 cohort, which participated in the BA. Differences in academic performance and anxiety levels were analyzed to assess the effectiveness of the blended approach.

Research Environment

The study was conducted at the Third Affiliated Hospital of Sun Yat-sen University, involving otorhinolaryngology clinical interns from the 2019 and 2020 cohorts. All participants completed assessments during their clinical internships, including a final examination at the end of the internship period. Data collection spanned from the fall semester of 2020 to the spring semester of 2021.

Participants

Participants included medical students from Sun Yat-sen University who undertook otorhinolaryngology clinical internships in the 2019 and 2020 cohorts. The sample size was determined based on expected effect size, statistical power (set at 0.8), and a significance level of .05. A minimum of 200 students was estimated to ensure sufficient statistical power for detecting significant differences in academic performance and anxiety levels between the groups. Inclusion criteria comprised voluntary participation in the study and completion of the clinical internship examination. Students who did not participate in the study or failed to complete the examination were excluded. A total of 240 students were enrolled, representing 100% of the target population, with a 100% response rate to the survey. The study adhered to the principles of the Declaration of Helsinki (revised in 2013), and all participants provided written informed consent. Ethical approval was obtained from the Ethics Committee of the Clinical Research Center at the Third Affiliated Hospital of Sun Yat-sen University (Approval No. II2024-160-01).
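The sample size reasoning above can be illustrated with a minimal sketch based on the normal approximation for a two-sided, two-sample comparison of means. The paper does not report the expected effect size, so the value `ASSUMED_D = 0.4` (Cohen's d) is purely hypothetical, and the function name `n_per_group` is introduced here for illustration only. This is not necessarily the procedure the authors used.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided comparison of two means.

    Uses the normal approximation n = 2 * (z_{1-alpha/2} + z_{power})^2 / d^2.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_power = NormalDist().inv_cdf(power)          # quantile matching the target power
    return math.ceil(2 * (z_alpha + z_power) ** 2 / effect_size_d ** 2)


# Hypothetical effect size; the paper does not state the value it assumed.
ASSUMED_D = 0.4
per_group = n_per_group(ASSUMED_D)
total = 2 * per_group
print(per_group, total)  # 99 per group, 198 in total under these assumptions
```

Under this assumed effect size, the approximation yields a total close to the stated minimum of 200 students. A smaller assumed effect would require a larger sample.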

Data Collection

Medical students studied otorhinolaryngology in their seventh semester and began clinical internships in the eighth semester. The final assessment traditionally comprised a CBE, including 5 conceptual questions, 3 short-answer questions, and 1 essay question. While the 2019 cohort underwent CBE, the 2020 cohort participated in a BA combining OBE and CBE. Examination papers were reviewed by the Department of Otorhinolaryngology to ensure consistent difficulty levels. Following the examination, both cohorts completed the Revised Test Anxiety Scale (RTA) and provided data on preparation time. In addition, the 2020 cohort completed a survey assessing their preferences for the exam format, rated on a scale from −100 (preference for CBE) to +100 (preference for OBE), with higher absolute values indicating stronger preferences. To minimize measurement bias, exam scores and anxiety levels were assessed using standardized scales.

Variables

The primary outcome variables included exam scores and pre-examination anxiety levels, assessed using the RTA. Exam scores served as a direct measure of academic performance, while the RTA evaluated anxiety levels. Potential confounders included gender and preparation time, with exam format preferences considered as effect modifiers.

Implementation Plan

Exam format: The BA consisted of 2 components. The first was a traditional CBE, comprising half the standard question volume and focusing on foundational knowledge through conceptual and short-answer questions. The second component was an OBE, involving clinical case discussions that assessed diagnosis, differential diagnosis, treatment plans, and updates on treatment advancements from the past 5 years. This section evaluated clinical reasoning, analytical problem-solving, and awareness of recent developments. The OBE followed the CBE as part of the theoretical examination.

Exam duration: Each component lasted 45 minutes, totaling 90 minutes, consistent with the duration of the CBE for the 2019 cohort.

Exam requirements: The same disciplinary standards applied to both components. During the OBE, students were permitted to use pre-prepared materials and textbooks but were prohibited from accessing the internet, copying from peers, or exchanging materials. Students were informed of the exam format and requirements on the first day of their internships.

Scoring: Each component was worth 50 points, totaling 100 points, equivalent to the CBE scoring system used in 2019.

Question difficulty: CBE questions were drawn from the Department of Otorhinolaryngology’s existing question bank, maintaining consistent difficulty levels with previous exams. OBE questions were designed by faculty, requiring students to consult textbooks and literature to formulate comprehensive responses.

Pre-exam anxiety measurement: The RTA by Benson and El-Zahhar was used to measure anxiety levels. The RTA comprises 20 items across 4 factors: tension, worry, physical symptoms, and off-task thoughts. Responses were recorded on a four-point Likert scale ranging from “rarely” to “always.”8,10
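As a rough illustration of how a total RTA score might be derived from the 20 items, the sketch below assumes that each response is coded 1 ("rarely") to 4 ("always") and that the total is the unweighted sum, giving a possible range of 20 to 80. The coding and the summation rule are assumptions, because the scoring procedure is not described in the text. The function name `rta_total` is hypothetical.

```python
N_ITEMS = 20                 # number of RTA items, as stated in the text
MIN_CODE, MAX_CODE = 1, 4    # assumed numeric coding of the four-point scale


def rta_total(responses):
    """Return the total score for one respondent, assuming an unweighted sum."""
    if len(responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} responses, got {len(responses)}")
    for value in responses:
        if value not in range(MIN_CODE, MAX_CODE + 1):
            raise ValueError(f"response {value!r} is outside {MIN_CODE}..{MAX_CODE}")
    return sum(responses)


# Synthetic respondent who answers with the second-lowest category throughout.
print(rta_total([2] * N_ITEMS))  # 40
```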

Statistical Analysis

Data were analyzed using SPSS (IBM SPSS Statistics) software (version 25.0). Normally distributed data were expressed as mean ± standard deviation and compared using independent samples t-tests. Categorical data were presented as frequencies and percentages and analyzed using chi-square tests. Non-normally distributed variables were described using medians and interquartile ranges and compared using the Mann-Whitney U test. Correlations between continuous variables were assessed using Pearson’s correlation coefficient, with |r| < .3 indicating a low correlation, .3 ≤ |r| < .7 indicating a moderate correlation, and |r| ≥ .7 indicating a high correlation. Statistical significance was set at a 2-tailed P-value <.05. Subgroup analyses were conducted to explore potential differences based on gender and exam format preferences, with interaction effects assessed to evaluate their combined influence on outcomes.
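The correlation thresholds described above can be sketched as follows. The analysis itself was run in SPSS, so this Python version is only an illustration. It applies the cut-offs to the absolute value of r, which is an assumption about the intended reading, and the data are synthetic values invented for the example. The function names `pearson_r` and `correlation_strength` are hypothetical.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length numeric sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length of at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


def correlation_strength(r):
    """Label a coefficient using the cut-offs .3 and .7 on its absolute value."""
    magnitude = abs(r)
    if magnitude < 0.3:
        return "low"
    if magnitude < 0.7:
        return "moderate"
    return "high"


# Synthetic anxiety scores and exam grades, not data from the study.
anxiety = [38, 42, 35, 47, 40, 44]
grades = [82, 79, 88, 75, 84, 80]
r = pearson_r(anxiety, grades)
print(round(r, 3), correlation_strength(r))
```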

Results

General Characteristics and Performance Differences Between CBE and BA Groups

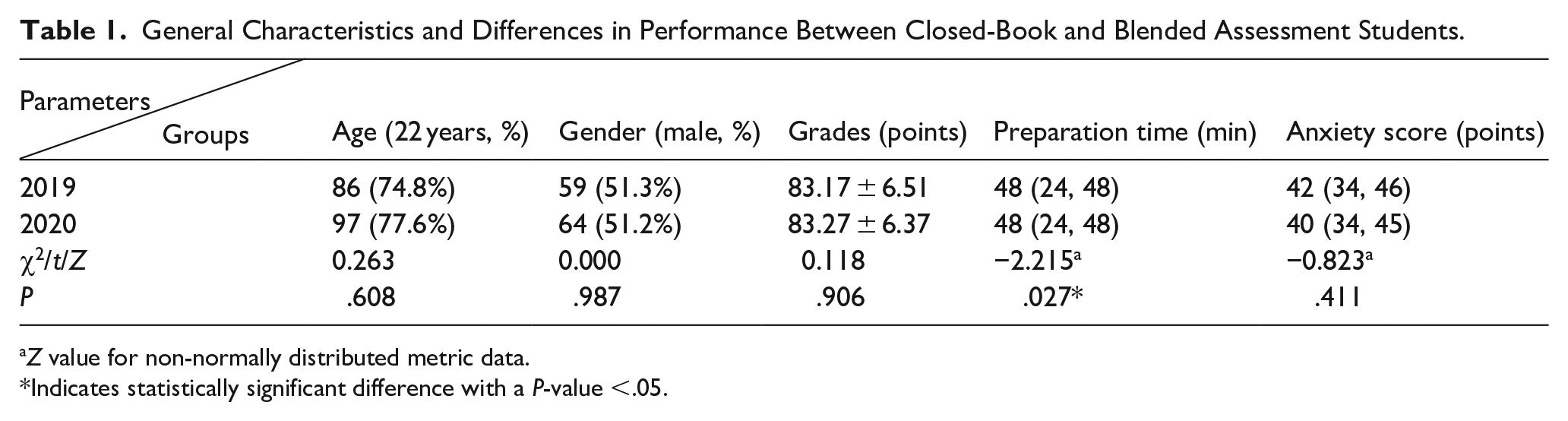

The study included 240 participants (123 males and 117 females). General characteristics and academic performance were analyzed. Age and gender distributions were assessed for normality and compared using the chi-square test. Exam grades, which followed a normal distribution, were compared using independent samples t-tests. Preparation time and anxiety scores, which were non-normally distributed, were compared using the Mann-Whitney U test (see Table 1).

Table 1. General Characteristics and Differences in Performance Between Closed-Book and Blended Assessment Students.

Note: Z values are reported for non-normally distributed metric data. Statistically significant differences are indicated at a P-value <.05.
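For readers unfamiliar with the Z values reported for the Mann-Whitney U test, the sketch below shows one common way to obtain them: the large-sample normal approximation with a tie correction and no continuity correction. It is an illustration under those assumptions rather than a reproduction of the SPSS output. The sign of z depends on which group is listed first, and the preparation times are synthetic. The function names are hypothetical.

```python
import math
from statistics import NormalDist


def average_ranks(values):
    """Return 1-based ranks (ties share their mean rank) and the tie group sizes."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    tie_sizes = []
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of positions i..j, converted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        tie_sizes.append(j - i + 1)
        i = j + 1
    return ranks, tie_sizes


def mann_whitney_z(group_a, group_b):
    """Return (U for group_a, z, two-sided p) using the normal approximation."""
    n1, n2 = len(group_a), len(group_b)
    if n1 == 0 or n2 == 0:
        raise ValueError("both groups must be non-empty")
    n = n1 + n2
    ranks, tie_sizes = average_ranks(list(group_a) + list(group_b))
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    tie_term = sum(t ** 3 - t for t in tie_sizes) / (n * (n - 1))
    sd = math.sqrt(n1 * n2 / 12 * ((n + 1) - tie_term))  # tie-corrected standard deviation
    if sd == 0:
        raise ValueError("all observations are identical")
    z = (u1 - n1 * n2 / 2) / sd
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return u1, z, p


# Synthetic preparation times in hours, not data from the study.
cbe_hours = [10, 12, 9, 14, 11, 10]
ba_hours = [13, 15, 12, 16, 14, 18]
u, z, p = mann_whitney_z(cbe_hours, ba_hours)
print(u, round(z, 3), round(p, 3))
```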

Analysis of Factors Influencing Pre-Examination Anxiety

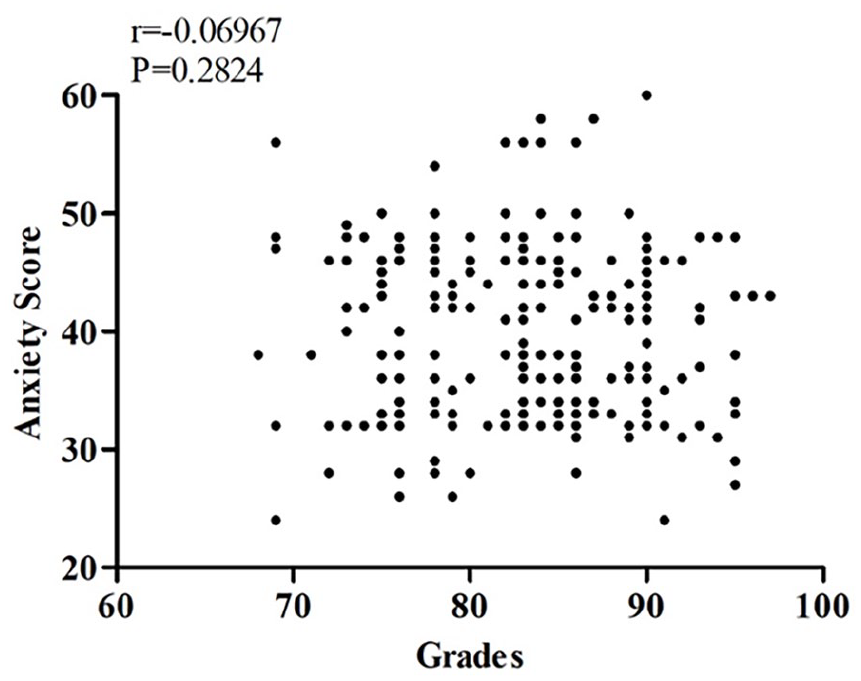

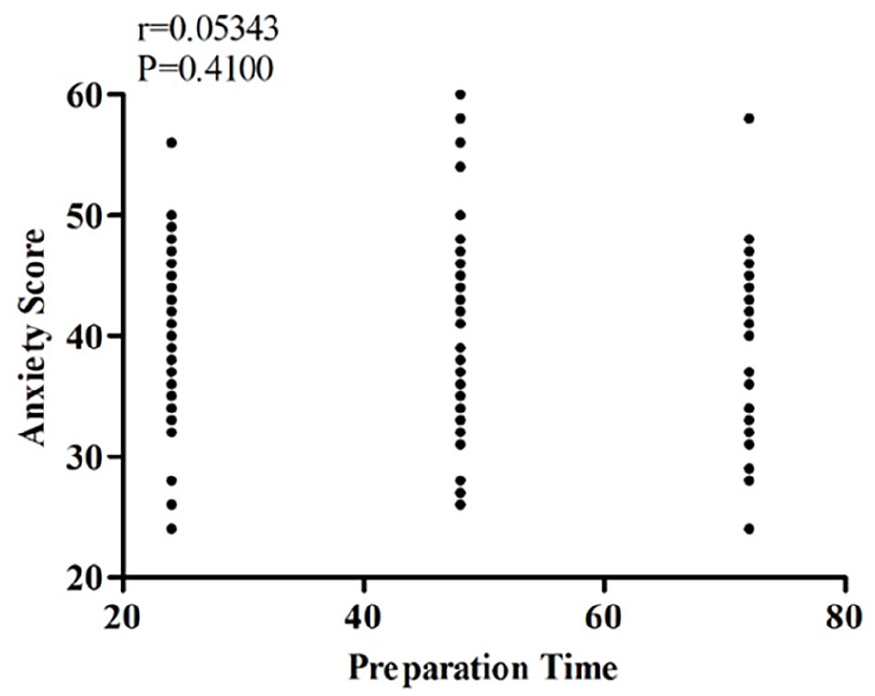

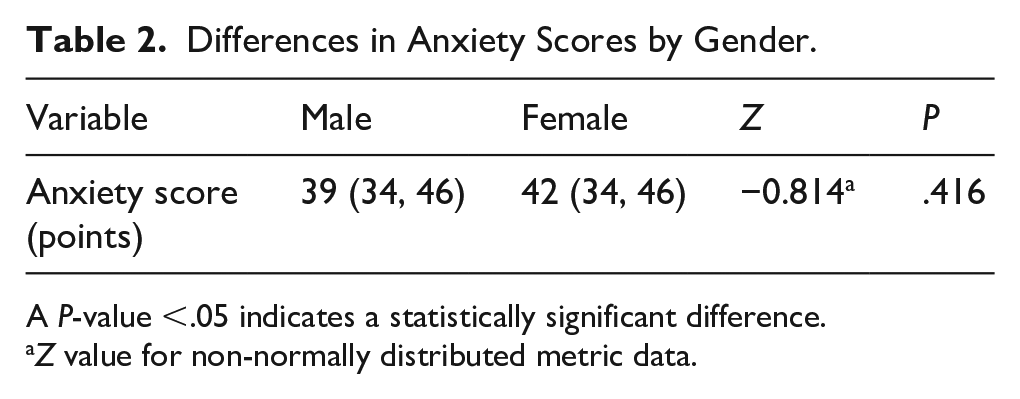

The Pearson correlation coefficient was used to examine potential relationships between RTA scores and other variables. No significant correlations were found between RTA scores and exam grades (see Figure 1) or preparation time (see Figure 2). In addition, the Mann-Whitney U test was used to compare anxiety scores between genders, revealing no significant difference (z = −0.814, P = .416; see Table 2). The mean RTA scores were 40.6 for females and 39.6 for males.

Figure 1. Correlation between anxiety scores and grades.

Figure 2. Correlation between anxiety scores and preparation time.

Table 2. Differences in Anxiety Scores by Gender.

Note: A P-value <.05 indicates a statistically significant difference. Z values are reported for non-normally distributed metric data.

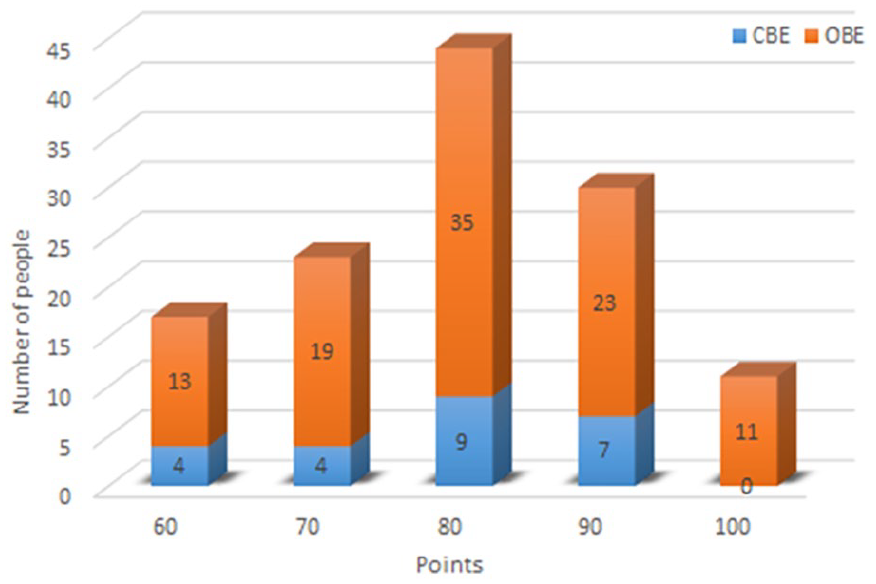

Student Preferences for Examination Formats

This segment of the study analyzed examination format preferences among the 125 students from the 2020 cohort, whose blended assessment included both CBE and OBE components. Of these students, 24 (19.2%) preferred CBE, with females comprising 70.8% of this group. By contrast, the remaining 101 students (80.8%) favored OBE, with females representing 43.6% of this preference group. For a detailed distribution of preference levels, see Figure 3.

Figure 3. Student preferences for examination formats.
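The preference percentages can be checked with simple arithmetic. The sketch below assumes that every one of the 125 respondents expressed a preference in one direction, so that the students not preferring CBE number 125 − 24 = 101. The female counts (17 and 44) are inferred from the reported percentages, not taken from the paper. Treating a rating of 0 as neutral is also an assumption about the −100 to +100 scale.

```python
def share(count, total):
    """Percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


def classify_preference(rating):
    """Map a rating on the -100..+100 scale to the preferred format."""
    if not -100 <= rating <= 100:
        raise ValueError("rating must lie between -100 and +100")
    if rating < 0:
        return "CBE"
    if rating > 0:
        return "OBE"
    return "neutral"  # assumed handling of a zero rating


N_RESPONDENTS = 125
N_PREFER_CBE = 24
n_prefer_obe = N_RESPONDENTS - N_PREFER_CBE  # assumes no neutral ratings

print(share(N_PREFER_CBE, N_RESPONDENTS))   # 19.2
print(share(n_prefer_obe, N_RESPONDENTS))   # 80.8

# Inferred female counts that reproduce the reported within-group percentages.
print(share(17, N_PREFER_CBE))   # 70.8
print(share(44, n_prefer_obe))   # 43.6
```

The two shares sum to 100% only when the OBE share is computed as 101 of 125.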

Discussion

Examinations are a critical component of the educational process, serving as essential tools for evaluating teaching efficacy and student learning outcomes. In otorhinolaryngology clinical education, particularly within the internship phase of China’s 5-year medical training program, teaching practices have traditionally emphasized theoretical knowledge retention over clinical reasoning and practical skills development. This mismatch often limits students’ ability to manage the specialty’s complex clinical scenarios, which frequently involve intricate anatomical regions, narrow surgical spaces, and multidisciplinary diagnostic considerations.

With the ongoing evolution of medical education reform, especially under the internship system, there is a growing need to shift from rote memorization toward fostering students’ higher-order cognitive abilities—such as knowledge application, clinical analysis, and decision-making. In this context, understanding the advantages and limitations of OBE and CBE is particularly relevant for educators in highly practical specialties like otorhinolaryngology.

OBE, which permits the use of textbooks, notes, and other reference materials during exams, has been implemented in various educational settings for many years.11-13 However, to our knowledge, there are no reported instances of its application as a final assessment tool in otorhinolaryngology clinical internships. Existing literature suggests that OBE may reduce anxiety levels compared to CBE, potentially leading to improved academic performance.14-16 Unlike studies advocating for the wholesale replacement of CBE with OBE, this study introduces a BA model that integrates the 2 formats and compares its outcomes to those of traditional CBE. Interestingly, our findings indicate that students undergoing the BA did not exhibit significantly different anxiety levels compared to those assessed using traditional CBE. This may be attributed to the novelty of the format, which could introduce additional cognitive demands and emotional stress. Prior research has shown that test anxiety is closely linked to poor performance, as it may impair focus and provoke negative emotions during high-stakes assessments.17 Nevertheless, our results revealed no significant association between pre-examination anxiety levels and academic performance, preparation time, or gender. It is worth noting that female students reported slightly higher average anxiety scores (M = 40.6) than their male counterparts (M = 39.6), although this difference was not statistically significant (P = .416). Whether female medical students experience greater stress during high-stakes clinical assessments therefore remains uncertain. In otorhinolaryngology training, where students must not only memorize theoretical concepts but also apply them flexibly in diverse clinical contexts, this possible gender difference warrants further study and may inform targeted psychological support and exam preparation guidance.

Prior studies have highlighted that OBE fosters deeper understanding by encouraging students to apply and synthesize knowledge in real-world contexts, thereby enhancing clinical reasoning skills.7,18,19 By contrast, traditional CBE often places excessive emphasis on rote memorization and test-taking strategies,1 which may be inadequate in disciplines such as otorhinolaryngology, where foundational knowledge is necessary but not sufficient for managing complex patient cases. Some research suggests that the lower stress associated with OBE may lead to complacency and reduced preparation.18 For instance, Heijne-Penninga et al 17 observed that students tend to spend less time preparing for OBE, perceiving them as less demanding than CBE due to the availability of reference materials. However, studies by Myyry and Joutsenvirta 20 and Theophilides and Koutselini 21 found no significant differences in study time between the 2 formats. In our study, although no significant differences in academic performance were observed between the BA and traditional CBE groups, students in the BA group reported longer preparation times. This suggests that the inclusion of OBE components may have enhanced students’ motivation to learn without compromising academic rigor. Such an approach may be particularly advantageous in otorhinolaryngology, where students must integrate anatomical, pathological, radiological, and procedural knowledge to make accurate diagnostic and therapeutic decisions.

In the survey on assessment preferences, over 80% of students favored the OBE component over CBE. Interestingly, the majority of CBE supporters were female (over 70%), possibly reflecting greater concerns about unfamiliar assessment formats. Student feedback indicated that although OBE questions were more challenging and did not alleviate anxiety, they promoted active learning and encouraged more thorough preparation and review. This is particularly meaningful in otorhinolaryngology, where active engagement with clinical cases—rather than passive absorption of textbook content—is essential for developing diagnostic and procedural competence. In addition, the blended model helped reduce the burden of memorizing exhaustive details, allowing students to focus more on developing clinical reasoning skills. Conversely, students who preferred CBE felt overwhelmed by the unpredictable nature of OBE questions, expressing difficulty in identifying effective study strategies. This highlights the importance of clearly communicating the expectations and structure of OBE assessments in otorhinolaryngology training and providing representative sample questions or practice exams to help students adjust their study approaches accordingly.

Despite these promising findings, several limitations must be acknowledged. First, students were asked to retrospectively report their preparation time, which may have introduced recall bias. Second, in the OBE segment, which aimed to assess higher-order cognitive abilities, students provided answers based on their levels of understanding, resulting in a wide range of responses. Although the evaluation criteria for open-book questions were established through faculty discussions, the objectivity, scientific validity, and measurability of these criteria require further verification. Future studies should address these limitations by incorporating real-time tracking of preparation time and refining the assessment rubrics for OBE to ensure consistency and reliability.

Conclusions

This study introduces an innovative BA model by incorporating an OBE component into the traditional CBE framework used in 5-year clinical internship teaching. Unlike conventional CBE or pure OBE approaches, this blended model retains a segment dedicated to evaluating foundational knowledge while integrating a component designed to assess the application of knowledge and problem-solving abilities in clinical contexts. This dual approach not only enables a comprehensive evaluation of students’ capacity to address real-world clinical challenges but also supports the transition from foundational knowledge acquisition to the development of advanced clinical reasoning skills. The findings demonstrate that this blended assessment model is a feasible and effective examination strategy for internship teaching, offering a balanced approach to assessing both theoretical understanding and practical competencies.

Footnotes

Ethical Considerations

This study was performed in line with the principles of the Declaration of Helsinki. Approval was granted by the Ethics Committee of the Clinical Research Center of the Third Affiliated Hospital of Sun Yat-sen University (Approval No. II2024-160-01).

Author Contributions

Shuo Wu designed the work, acquired and analyzed data; Shuo Wu, Feitong Jian, and Qintai Yang drafted, revised, and approved the manuscript; Qintai Yang agreed to be accountable for all aspects of the work.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Source data for the figures are provided with this paper. All other data are available from the corresponding author upon reasonable request.