Introduction

Given that the average adult in the United States reads at an eighth-grade level and 54% have literacy below the sixth-grade level, the American Medical Association (AMA) and the National Institutes of Health (NIH) recommend that educational materials provided to patients not exceed a sixth-grade reading level.1 Keeping the reading level below the national average makes these materials accessible to a larger number of patients.

Previous studies have investigated the reading levels of patient education materials available on recognized medical society websites. These studies showed that education materials from the American Association for the Surgery of Trauma, obstetrics and gynecology societies, and 3 otolaryngological societies (the American Academy of Otolaryngology-Head and Neck Surgery [AAO-HNS], the Canadian Society of Otolaryngology-Head and Neck Surgery [CSO-HNS], and Ear, Nose, and Throat United Kingdom [ENT UK]) were consistently written above the recommended sixth-grade reading level.1-3 However, no previous studies have focused on the process of lowering the reading levels of the existing materials.

Artificial intelligence (AI) has become increasingly available to the public, and one such platform is ChatGPT. Previous research has analyzed some implications of using the platform in medicine, including its utility in scientific writing, research, and patient education.4 This led to the authors’ hypothesis that AI might be an effective tool for reducing the reading level of patient education materials, specifically within pediatric otolaryngology, and thereby increasing patient and caregiver comprehension. This study had 2 main objectives: to identify the reading levels of existing patient education materials in pediatric otolaryngology and to use natural language processing AI to reduce the reading levels of these materials.

Materials and Methods

The patient education materials for pediatric conditions were identified and selected from the AAO-HNS website and included Ankyloglossia, Pediatric Gastroesophageal Reflux Disease, Pediatric Hearing Loss, Pediatric Sinusitis, Pediatric Sleep-disordered Breathing, Pediatric Thyroid Cancer, Swimmer’s Ear, Tonsillitis, and Tonsil and Adenoid Difficulty (n = 9).5 Patient education materials about the same conditions, if available, were identified and selected from the websites of 7 children’s hospitals chosen from the US News & World Report 2023 to 2024 rankings of the best children’s hospitals across the country: Boston Children’s Hospital, Children’s Hospital Colorado, Children’s Hospital of Philadelphia, Cincinnati Children’s Hospital, Monroe Carell Jr. Children’s Hospital at Vanderbilt, Seattle Children’s Hospital, and Texas Children’s Hospital.6-13 These 7 were selected specifically to represent as many geographic regions within the United States as possible. To preserve anonymity, the institutions were randomly labeled A-G.

All published education materials were scored with an online Flesch-Kincaid calculator to determine the grade level.14 The Flesch-Kincaid grade level is calculated from the average sentence length (number of words divided by the number of sentences) and the average number of syllables per word (number of syllables divided by the number of words).15 The calculated scores range from 0 to 18, with each number corresponding to a grade level.16 For example, a score of 12 means that an individual who is currently a senior in high school or has completed the senior year of high school would be expected to comprehend the material.
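The grade-level calculation described above can be sketched in a few lines of Python. This is a minimal illustration and not the online calculator used in this study: the coefficients are those of the standard published Flesch-Kincaid grade level and Flesch Reading Ease formulas, while the function names and the simple vowel-group syllable counter are assumptions made for this example, so its scores may differ somewhat from those of any particular calculator.

```python
import re


def count_syllables(word):
    """Rough heuristic (an assumption): count vowel groups, drop a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def text_stats(text):
    """Return (words, sentences, syllables) for a block of plain text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    return len(words), len(sentences), syllables


def fk_grade(words, sentences, syllables):
    """Flesch-Kincaid grade level from average sentence length and syllables per word."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def reading_ease(words, sentences, syllables):
    """Flesch Reading Ease; higher scores indicate easier text."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


# Hypothetical counts: 100 words, 5 sentences, 150 syllables.
print(round(fk_grade(100, 5, 150), 2))      # 9.91
print(round(reading_ease(100, 5, 150), 3))  # 59.635
```

Because both formulas depend only on sentence length and syllables per word, shorter sentences and shorter words lower the grade level regardless of whether the content is actually easier to understand.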

AI was prompted to convert the existing online education materials to a fifth-grade reading level by entering “Convert the following text to a fifth-grade reading level while keeping the same word count:” into ChatGPT version 3.5. The text from the education materials was pasted into ChatGPT after the prompt. The instruction to maintain the word count was included to prevent AI from reducing the amount of content, as the goal was solely to reduce the grade level of the existing content. Once AI generated a response to the prompt, the text was re-scored using the Flesch-Kincaid calculator to determine the reading ease and the grade level.
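The prompting step and the word-count check can be sketched as follows. This is an illustrative sketch only: the prompt string is quoted from the methods, but the helper names, the blank line separating the prompt from the pasted text, and the whitespace-based word count are assumptions, and no call to ChatGPT is made.

```python
# Prompt text as quoted in the methods.
PROMPT = (
    "Convert the following text to a fifth-grade reading level "
    "while keeping the same word count:"
)


def build_request(material_text):
    """Place the education material after the prompt (separator is an assumption)."""
    return f"{PROMPT}\n\n{material_text.strip()}"


def word_count(text):
    """Count whitespace-separated words (a simple stand-in for a word processor count)."""
    return len(text.split())


def word_count_change(original_words, rewritten_words):
    """Return (words lost, fraction of the original word count retained)."""
    return original_words - rewritten_words, rewritten_words / original_words


# Word counts reported for the Sinusitis sheet: 826 published, 233 after conversion.
lost, retained = word_count_change(826, 233)
print(lost, round(retained, 3))  # 593 0.282
```

A check of this kind makes it easy to flag outputs in which the grade level fell mainly because content was removed rather than simplified.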

This study was reviewed by the Pennsylvania State College of Medicine Institutional Review Board and deemed to be exempt (Study ID #22789).

Statistical Analysis

Means and standard deviations of the grade levels were calculated. The unpaired t-test was used to calculate P-values, and results were considered statistically significant if P < .05.
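As an illustration of this analysis, the sketch below computes an unpaired t statistic and a two-sided P-value using only the Python standard library. It assumes the equal-variance (pooled) Student's t-test, since the variant and software used are not stated here; the function names are hypothetical, the P-value is obtained by numerically integrating the t density with Simpson's rule, and the sample values are placeholders rather than study data.

```python
import math
from statistics import mean, stdev


def pooled_t(a, b):
    """Unpaired Student's t statistic and degrees of freedom (equal variances assumed)."""
    n1, n2 = len(a), len(b)
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * stdev(a) ** 2 + (n2 - 1) * stdev(b) ** 2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (mean(a) - mean(b)) / se, df


def t_pdf(x, df):
    """Probability density of Student's t distribution."""
    coef = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)


def two_sided_p(t, df, steps=2000):
    """Two-sided P-value via Simpson's rule on [0, |t|]; steps must be even."""
    upper = abs(t)
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3  # probability mass between 0 and |t|
    return max(0.0, 1 - 2 * area)


# Placeholder grade levels, not data from this study.
published = [1, 2, 3]
converted = [2, 3, 4]
t, df = pooled_t(published, converted)
p = two_sided_p(t, df)
print(round(t, 4), df, round(p, 4))
```

With real data, a result would be called statistically significant under the threshold above when the returned P-value is below .05.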

Results

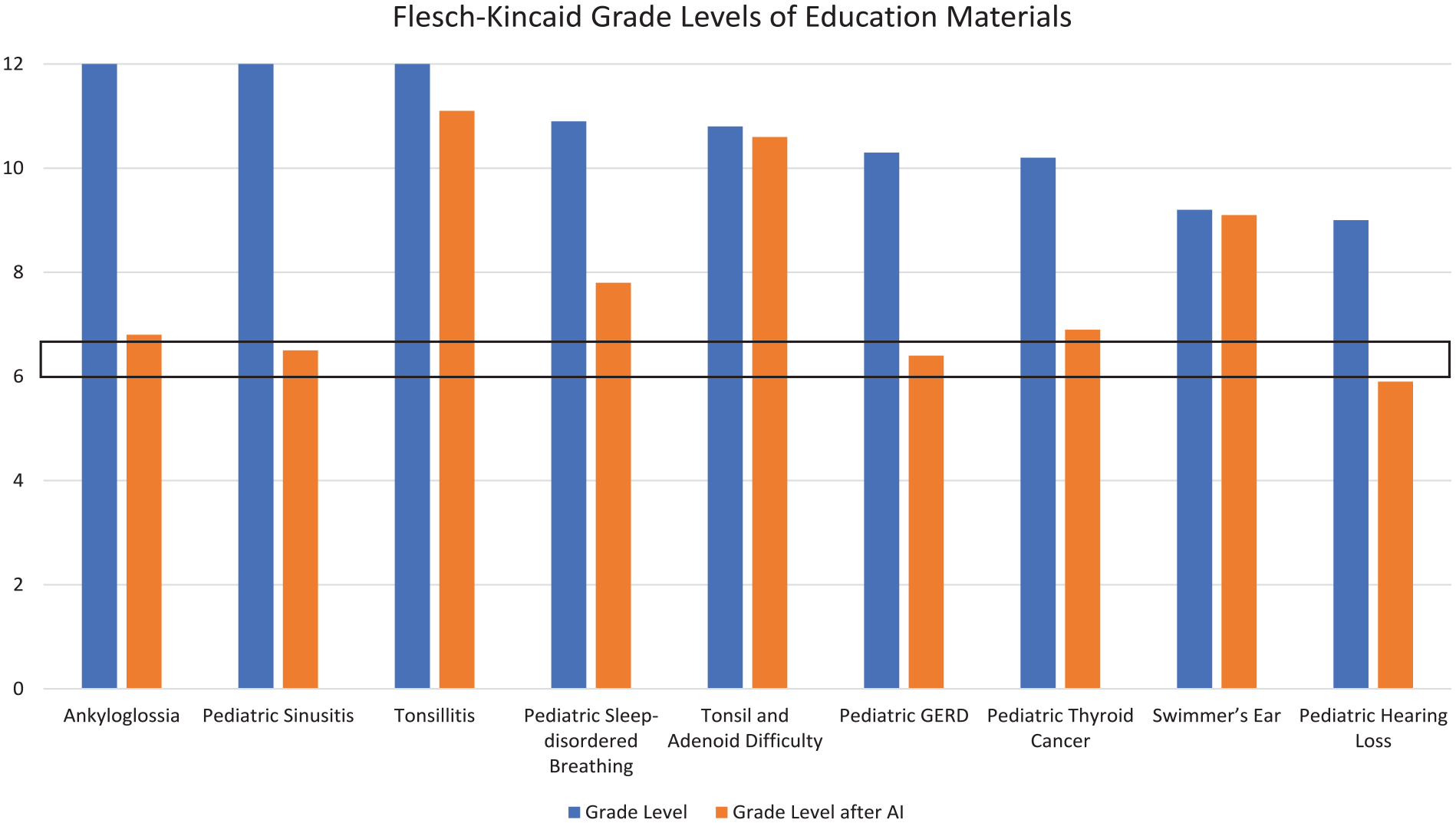

A total of 50 patient education materials from AAO-HNS and the 7 institutions were analyzed. On average, AAO-HNS pediatric education material was written at a 10.71 ± 0.71 grade level based on the Flesch-Kincaid grade level calculator. Seven published patient education materials complied with recommendations, so ChatGPT was asked to reduce the reading level of the remaining 43. After being asked to reduce those materials to a fifth-grade reading level, ChatGPT converted the AAO-HNS materials to an average grade level of 7.90 ± 1.18 based on the Flesch-Kincaid calculator (Figure 1; P < .01). Samples from 2 conditions, Tonsillitis and Sinusitis, are included as Supplemental 1 to 4 to illustrate the differences in the ChatGPT-modified products. Of note, the reduced Sinusitis sheet had substantially fewer words (233) than the sheet published on the AAO-HNS website (826), even though ChatGPT was instructed to maintain the same word count.

Figure 1. Comparison of Flesch-Kincaid grade levels of pediatric otolaryngology education materials from the AAO-HNS website. The mean grade level as published was 10.71 ± 0.71. AI was able to reduce the mean grade level to 7.90 ± 1.18 (P < .001). The black box represents the target of being within a sixth-grade level. AAO-HNS, American Academy of Otolaryngology-Head and Neck Surgery; AI, artificial intelligence.

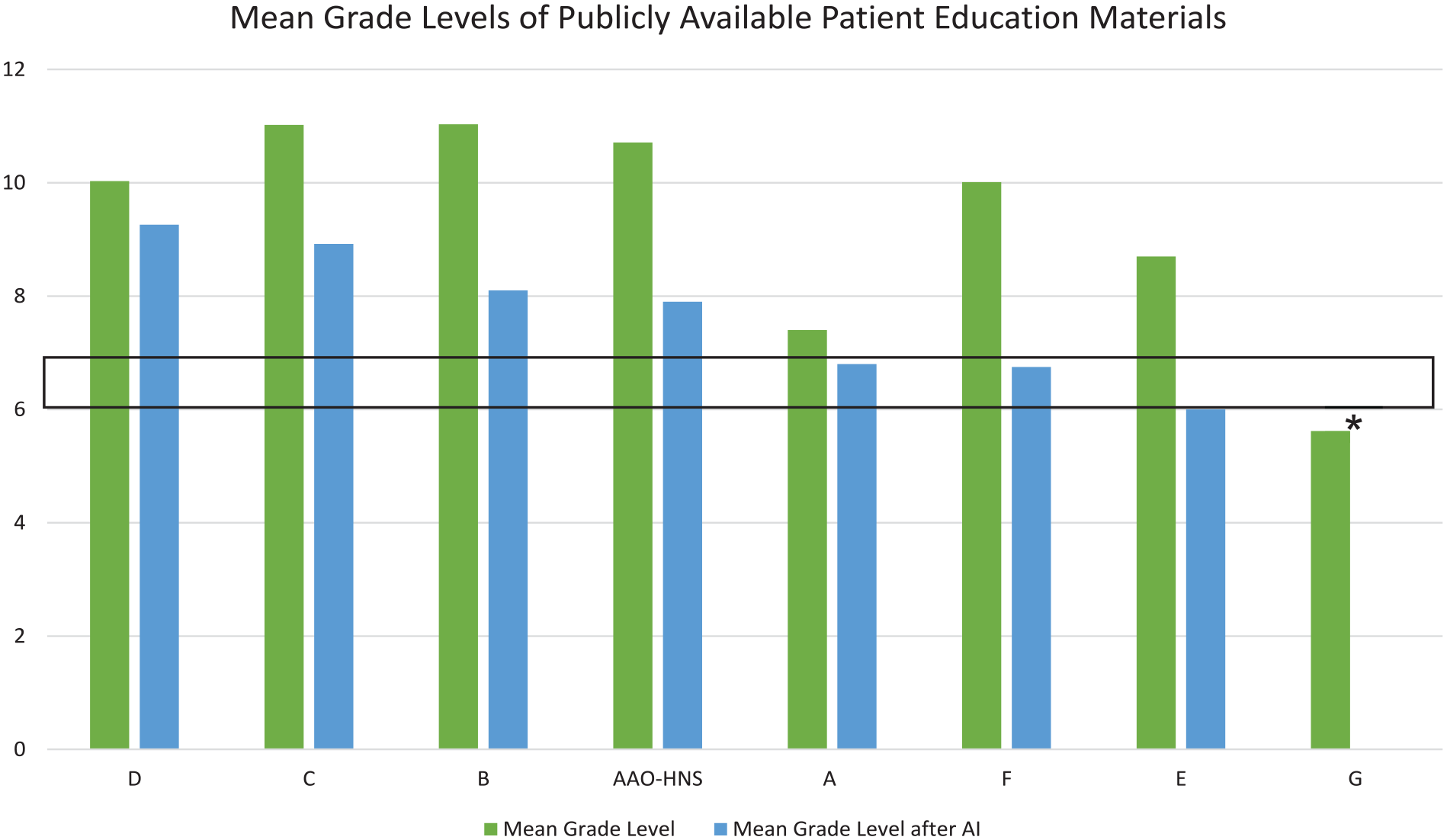

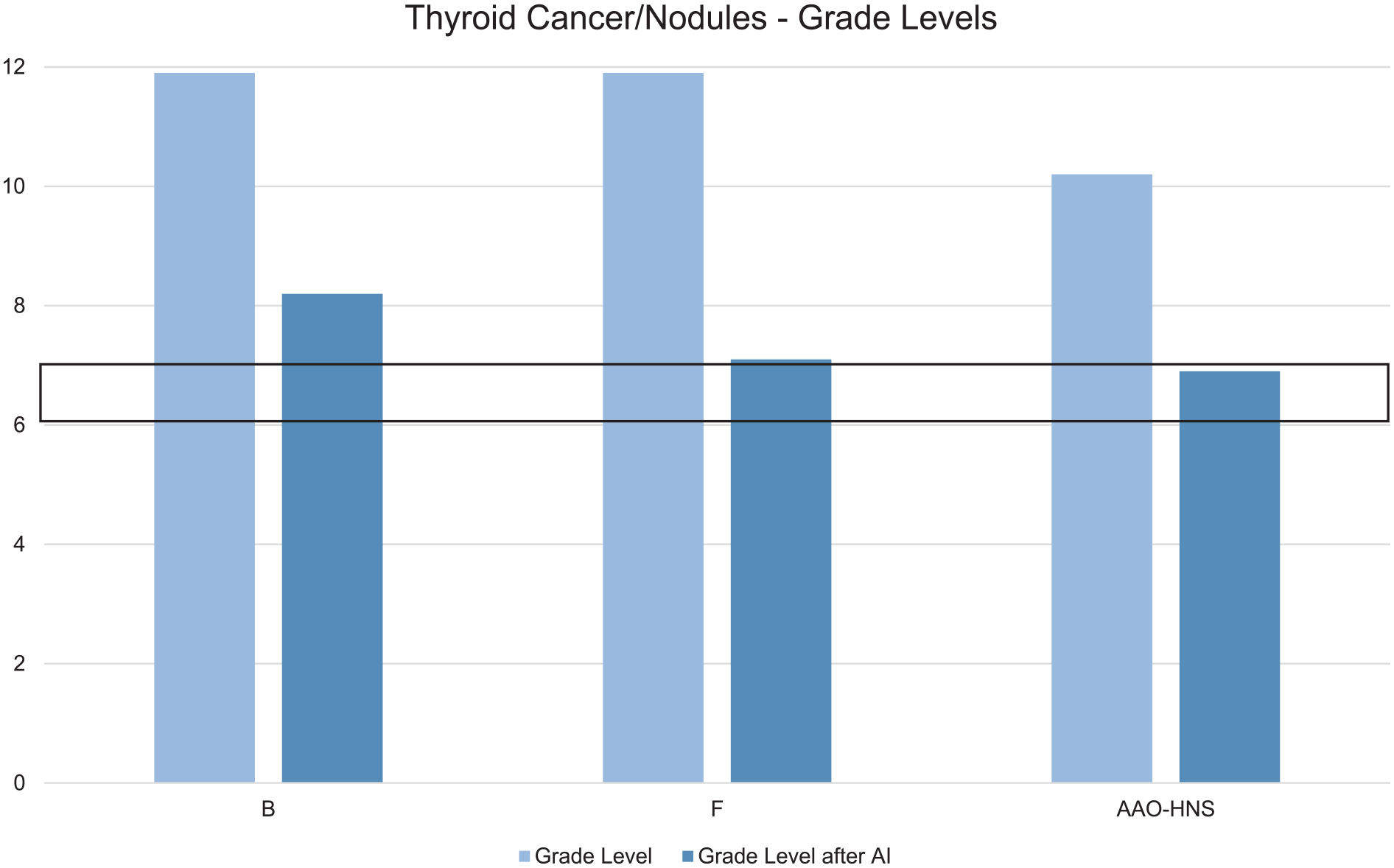

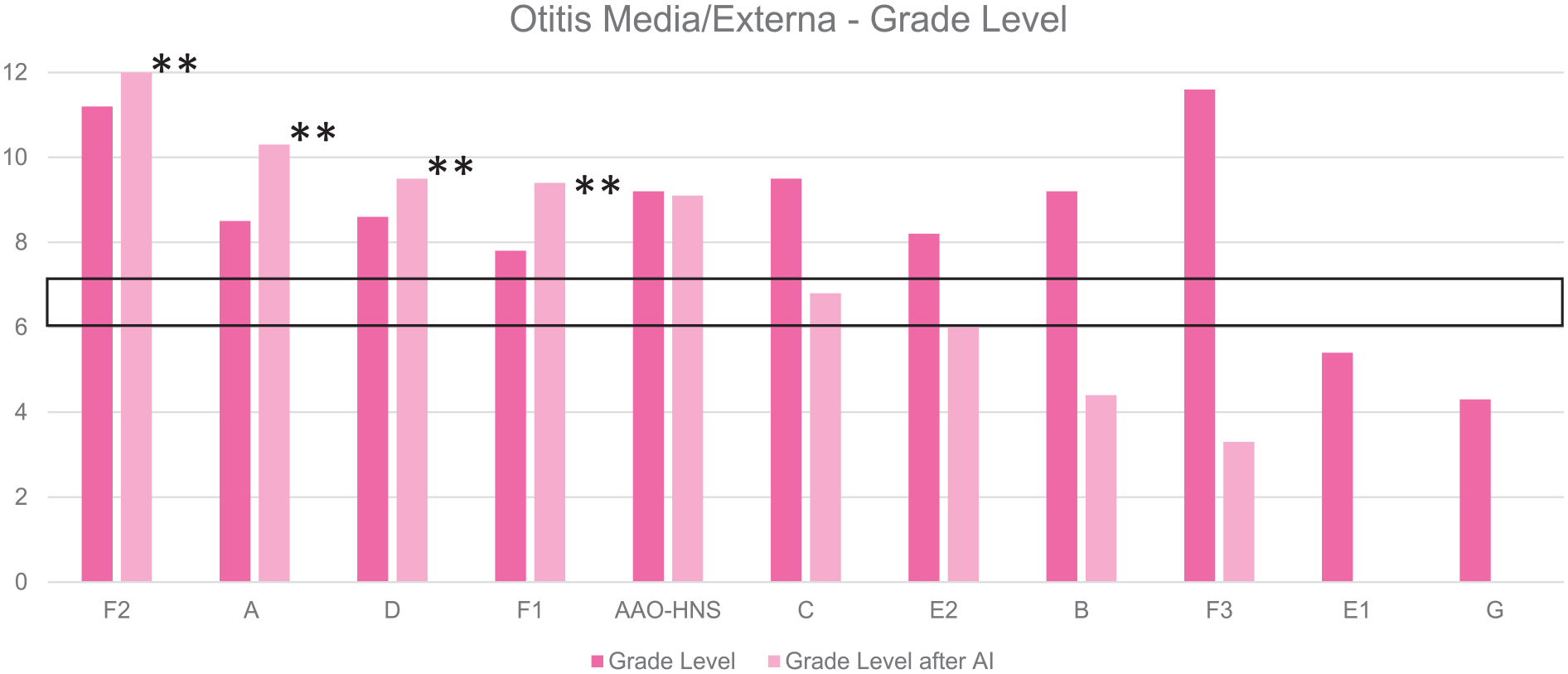

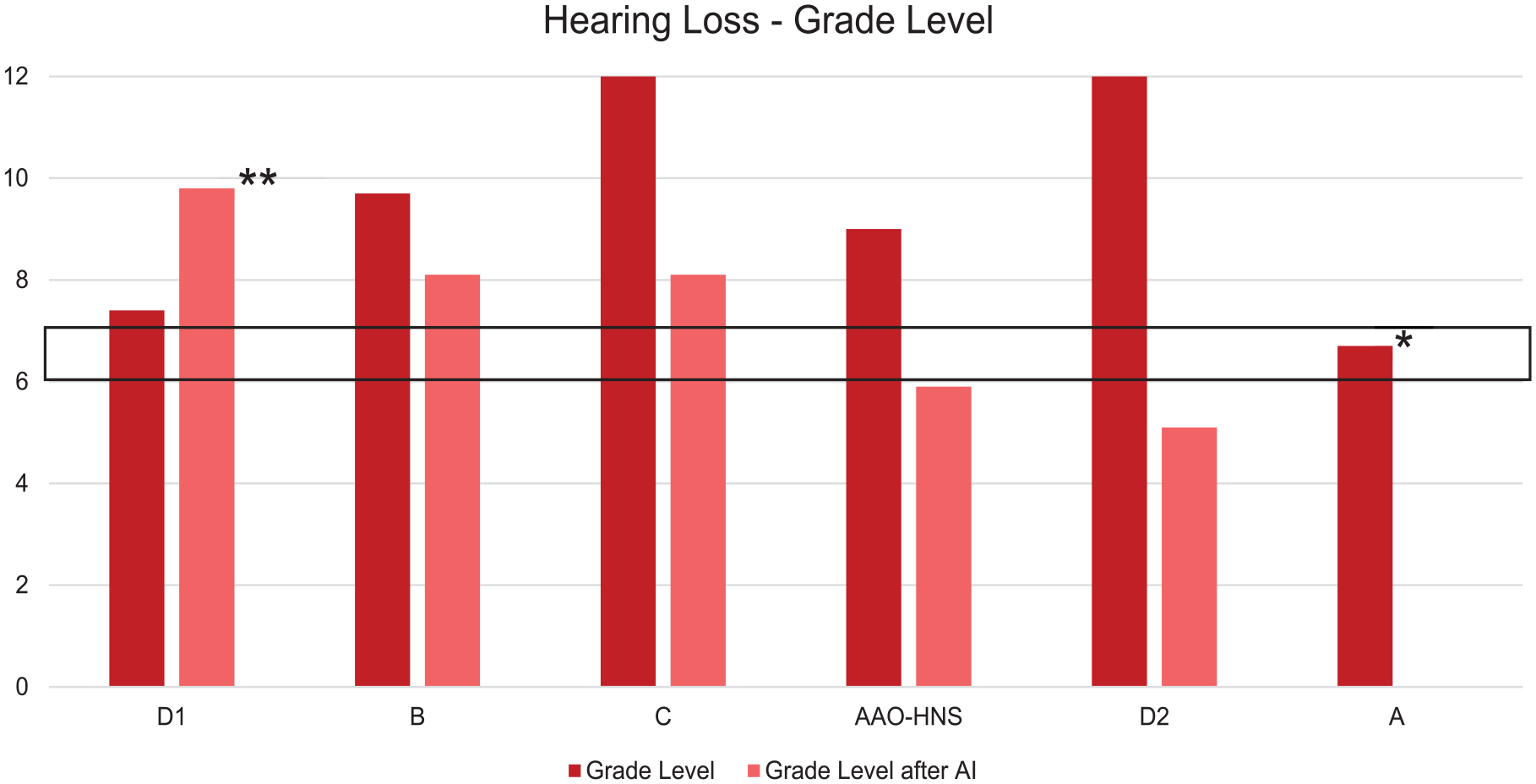

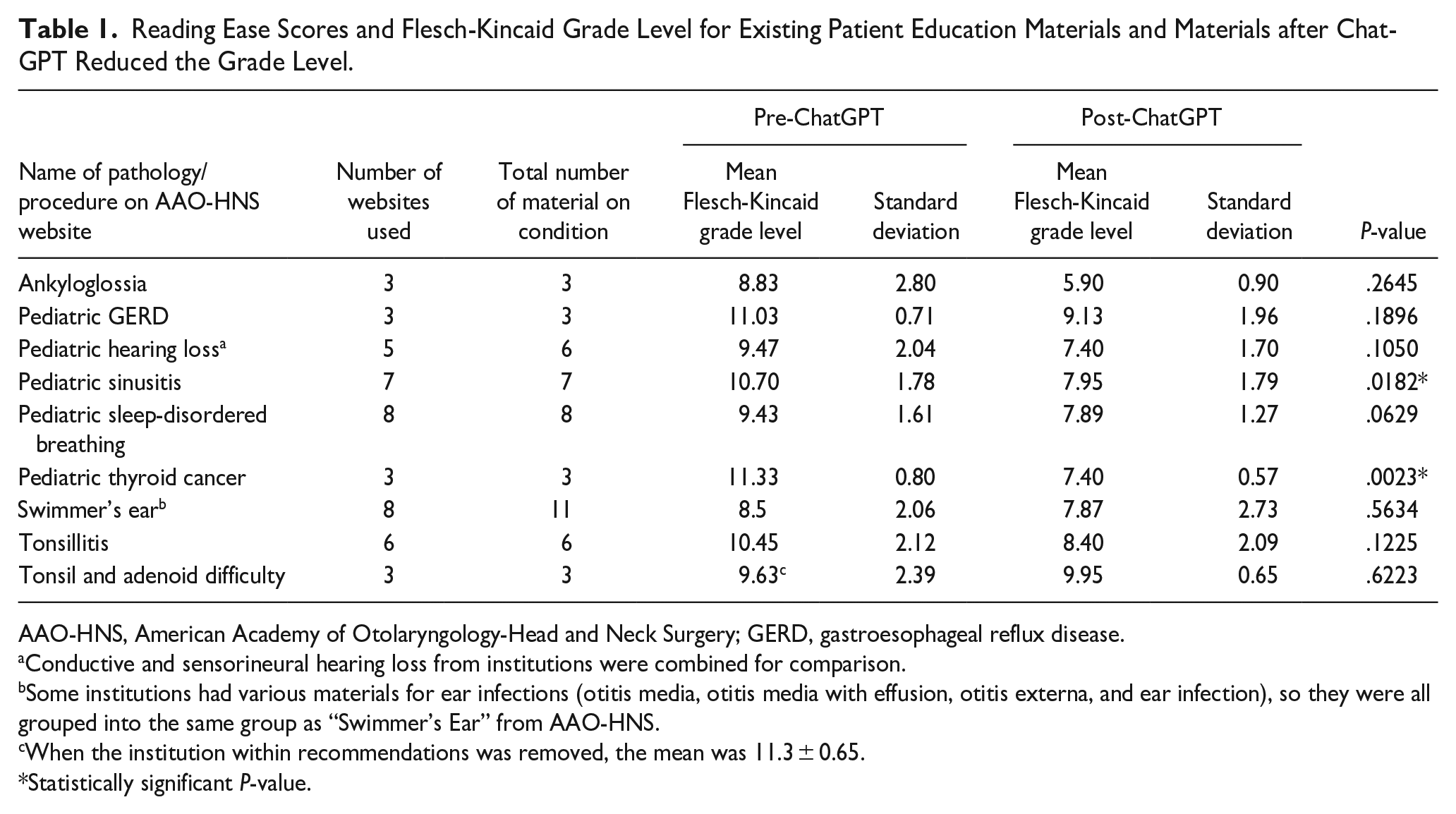

When the published materials from AAO-HNS and the 7 institutions were considered together, the average grade level was 9.32 ± 1.82, and ChatGPT reduced the average level to 7.68 ± 1.12 (Figure 2; P = .0598). Of the 7 children’s hospitals, only 1 institution had a mean grade level below the recommended sixth-grade level. The published grade levels and the grade levels after AI editing for each of the 9 conditions are provided in Figures 3 to 11. Of note, 13.95% (n = 6/43) of the materials created by ChatGPT were at a higher grade level than the materials previously published. Results for each condition are summarized in Table 1.

Figure 2. Mean grade levels of publicly available patient education materials. The mean grade level as published was 9.32 ± 1.82. AI was able to reduce the mean grade level to 7.68 ± 1.12 (P = .0598). Organization “G” had a mean grade level within the recommendation, so their materials were not converted by ChatGPT. The black box represents the target of being within a sixth-grade level. AI, artificial intelligence.

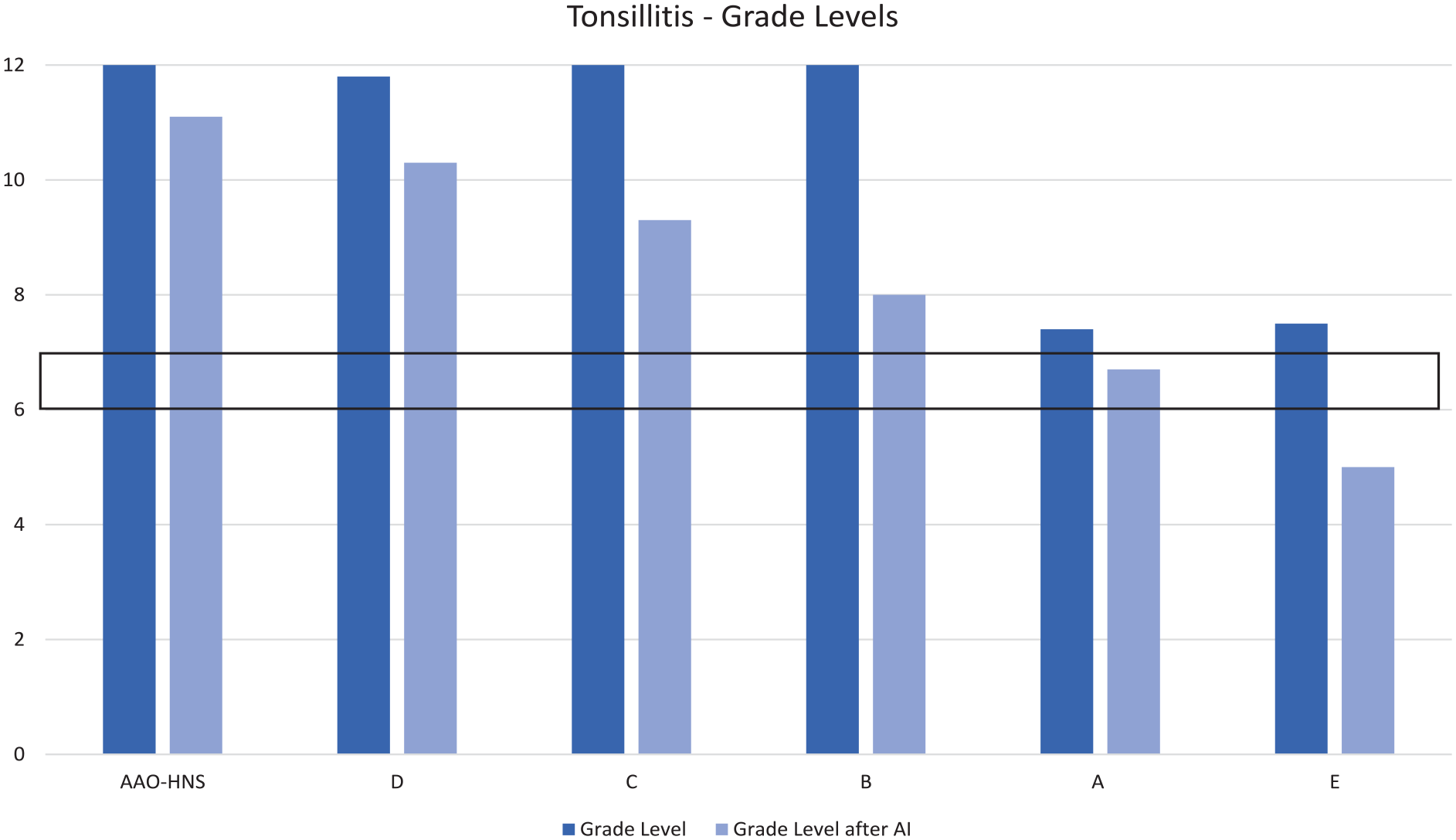

Grade levels for patient education materials about tonsillitis—the mean grade level as published was 10.45 ± 2.12. AI was able to reduce the mean grade level to 8.40 ± 2.09 (P = .1225). The black box represents the target of being within a sixth-grade level. AI, artificial intelligence.

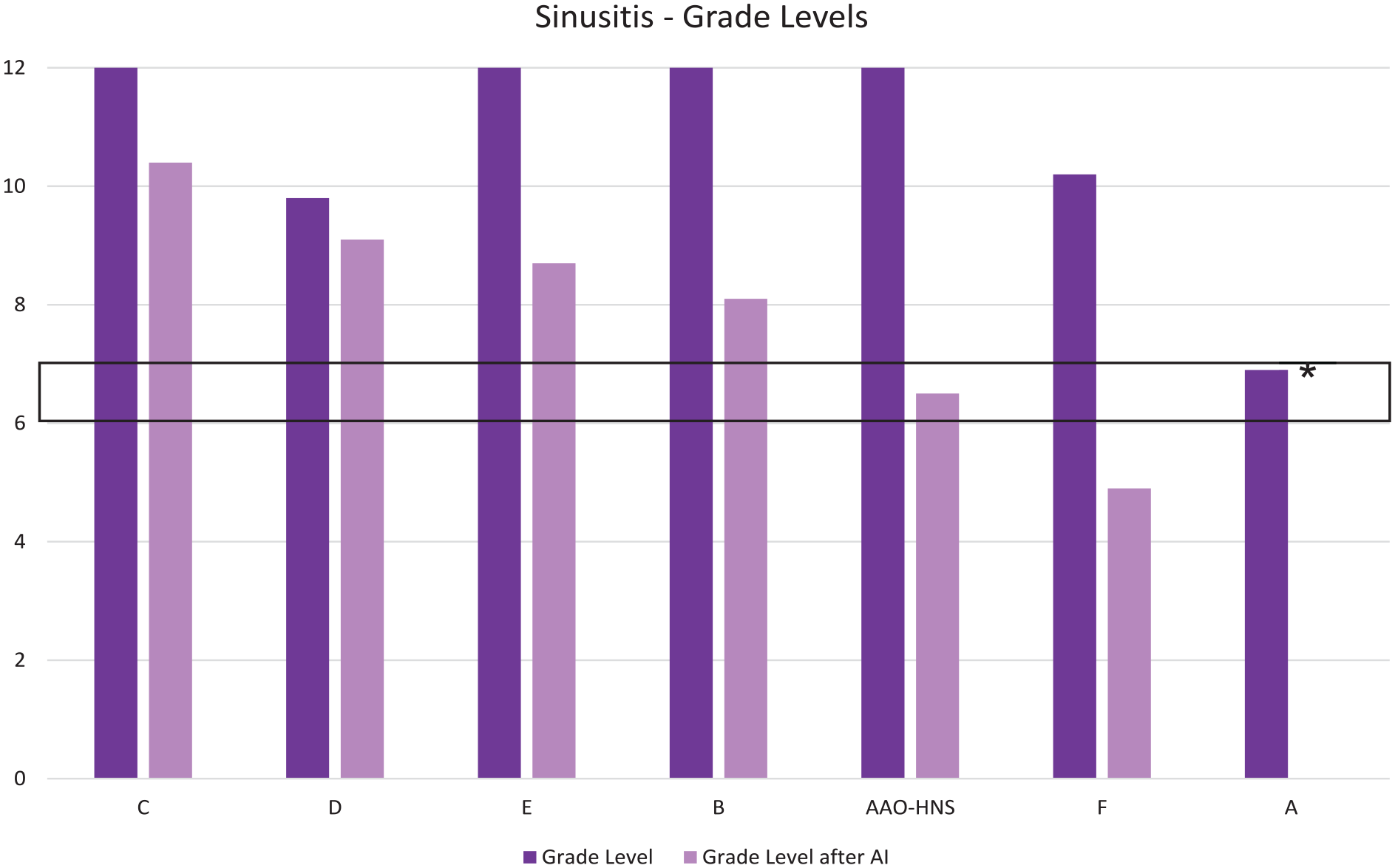

Grade levels for patient education materials about sinusitis—the mean grade level as published was 10.70 ± 1.78. AI was able to reduce the mean grade level to 7.95 ± 1.79 (P = .0182). The black box represents the target of being within a sixth-grade level. AI, artificial intelligence.

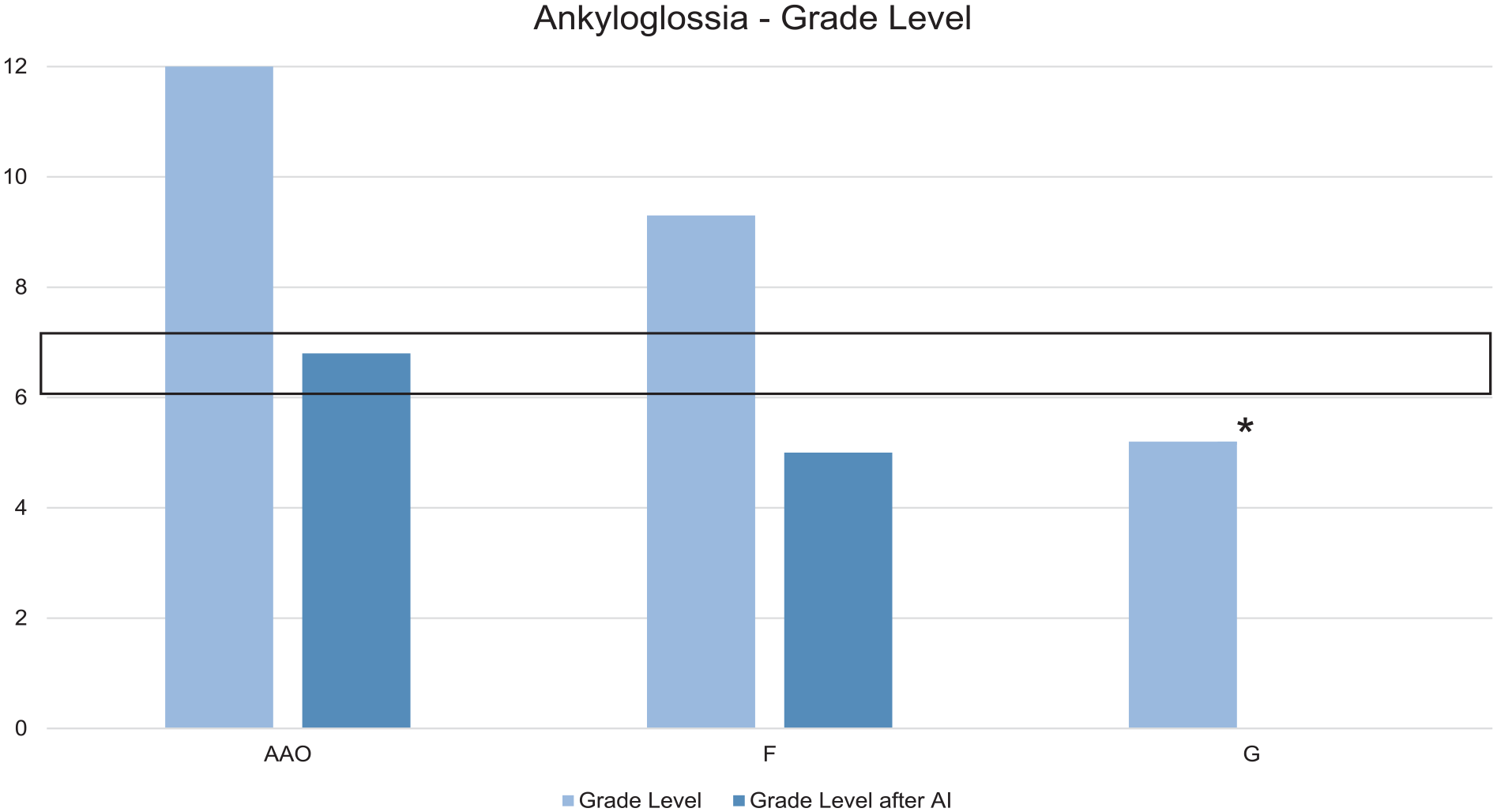

Grade levels for patient education materials about ankyloglossia—the mean grade level as published was 8.83 ± 2.80. AI was able to reduce the mean grade level to 5.90 ± 0.90 (P = .2645). The black box represents the target of being within a sixth-grade level. AI, artificial intelligence.

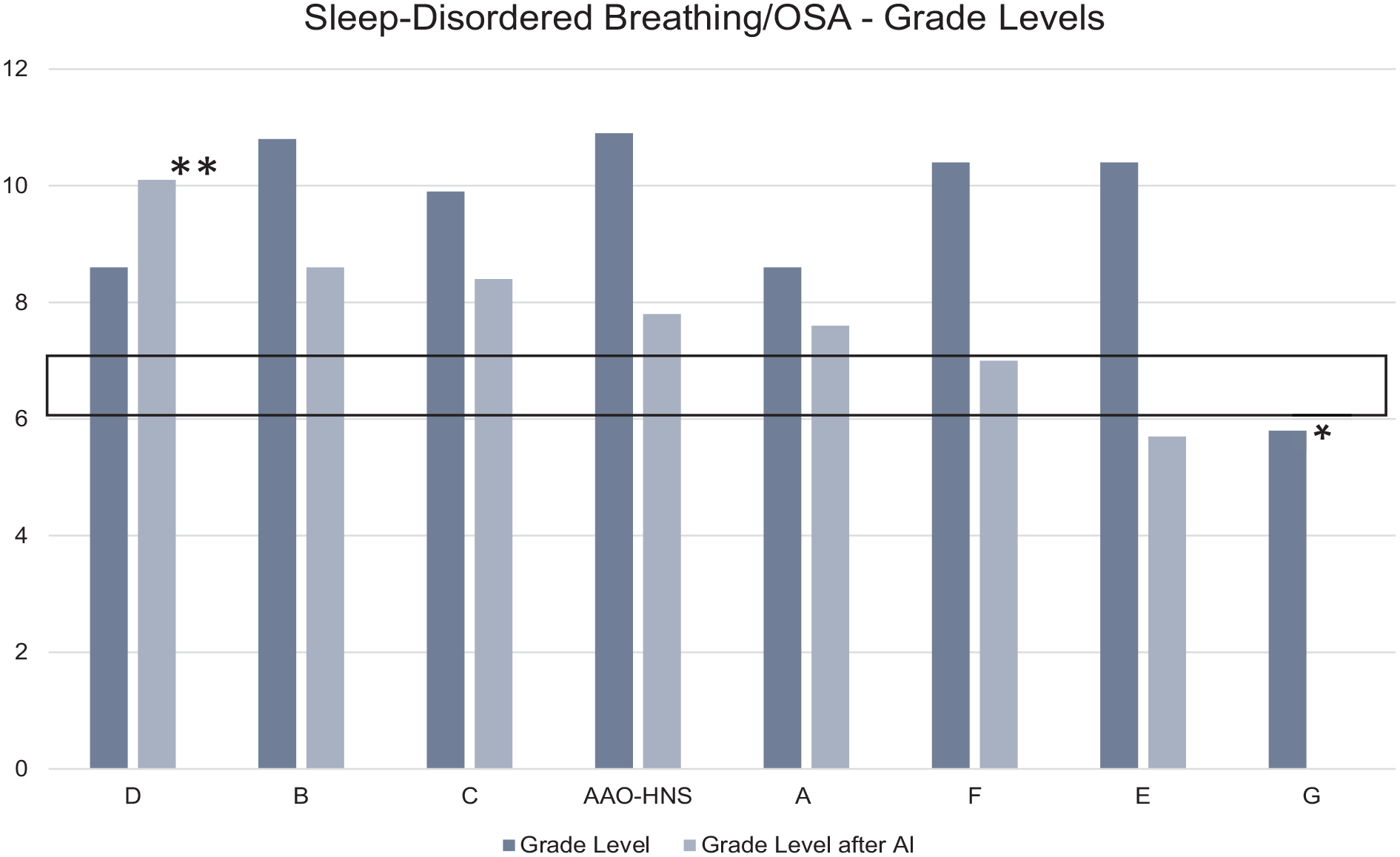

Grade levels for patient education materials about sleep-disordered breathing and OSA—the mean grade level as published was 9.43 ± 1.61. AI was able to reduce the mean grade level to 7.89 ± 1.27 (P = .0629). However, materials from Institution “D” were increased after AI intervention. The black box represents the target of being within a sixth-grade level. AI, artificial intelligence; OSA, obstructive sleep apnea.

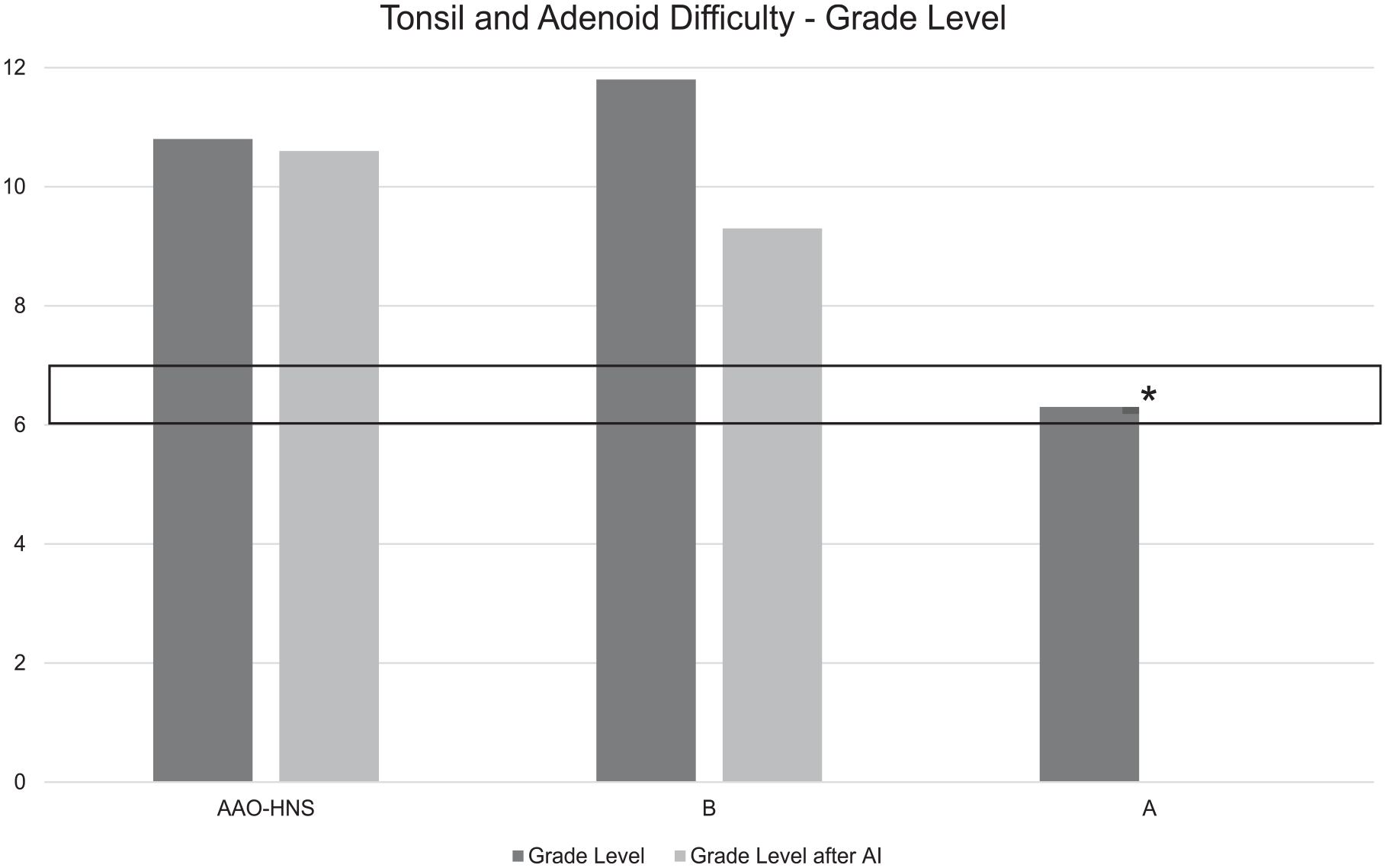

Grade levels for patient education materials about tonsil and adenoid difficulty—the mean grade level as published was 9.63 ± 2.39. Excluding Organization “A,” the mean was 11.3 ± 0.65. AI was able to reduce the mean grade level to 9.95 ± 0.65 (P = .1734). The black box represents the target of being within a sixth-grade level. AI, artificial intelligence.

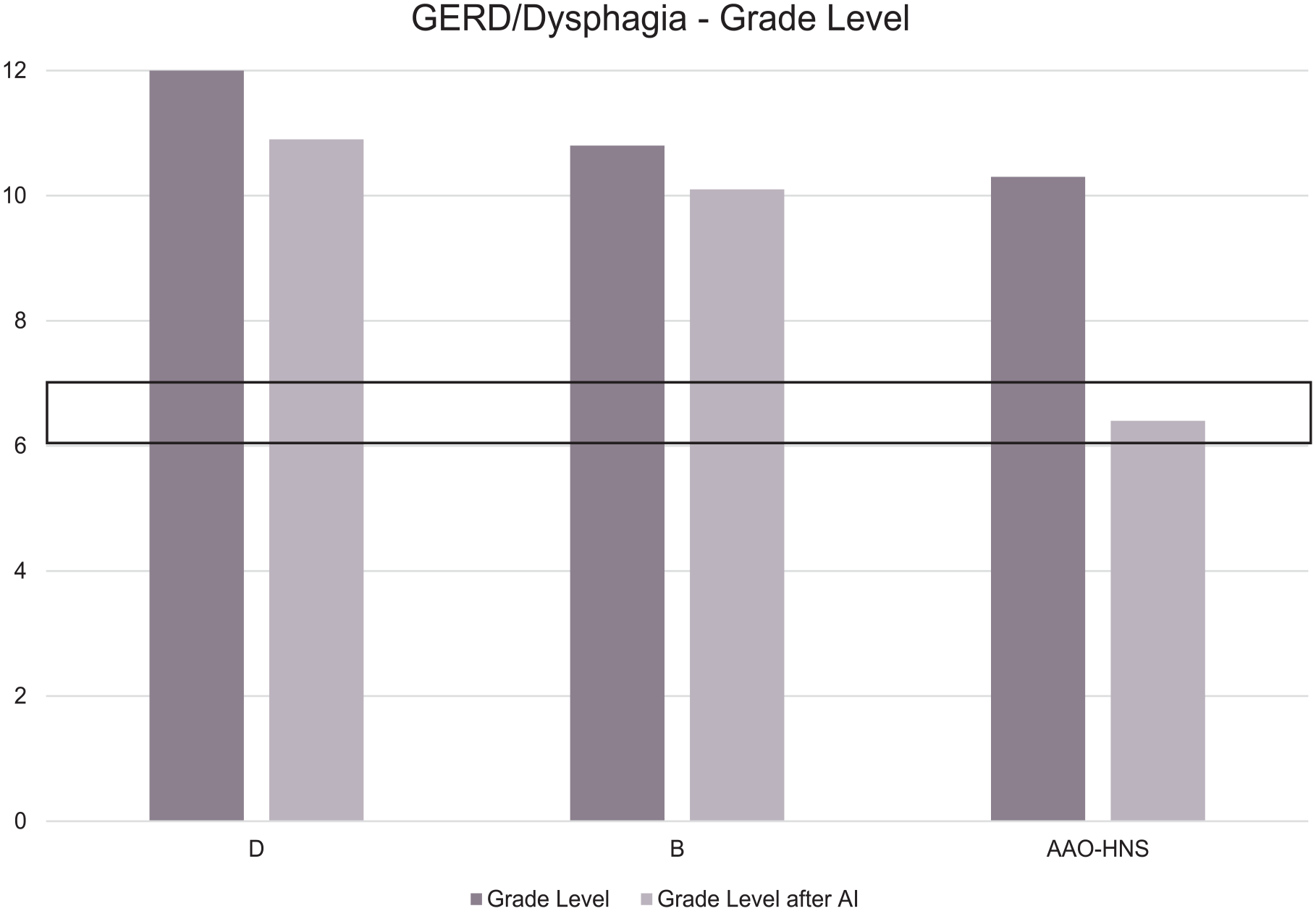

Grade levels for patient education materials about GERD and dysphagia—the mean grade level as published was 11.03 ± 0.71. AI was able to reduce the mean grade level to 9.13 ± 1.96 (P = .1896). The black box represents the target of being within a sixth-grade level. AI, artificial intelligence; GERD, gastroesophageal reflux disease.

Grade levels for patient education materials about thyroid cancer and thyroid nodules—the mean grade level as published was 11.33 ± 0.08. AI was able to reduce the mean grade level to 7.40 ± 0.57 (P = .0023). The black box represents the target of being within a sixth-grade level. AI, artificial intelligence.

Grade levels for patient education materials about otitis media and Swimmer’s Ear/otitis externa—the mean grade level as published was 8.50 ± 2.06. AI was able to reduce the mean grade level to 7.87 ± 2.73 (P = .5634). In 4 instances, the materials produced by AI had a higher grade level than the materials originally published. The black box represents the target of being within a sixth-grade level. AI, artificial intelligence; E1, otitis externa from Institution “E”; E2, otitis media from Institution “E”; F1, otitis externa from Organization “F”; F2, otitis media from Organization “F”; F3, otitis media with effusion from Organization “F.”

Grade levels for patient education materials about hearing loss—the mean grade level as published was 9.47 ± 2.04. AI was able to reduce the mean grade level to 7.40 ± 1.70 (P = .1050). The black box represents the target of being within a sixth-grade level. AI, artificial intelligence; D1, conductive hearing loss from Institution “D”; D2, sensorineural hearing loss from Institution “D.”

Table 1. Reading Ease Scores and Flesch-Kincaid Grade Level for Existing Patient Education Materials and Materials After ChatGPT Reduced the Grade Level.

AAO-HNS, American Academy of Otolaryngology-Head and Neck Surgery; GERD, gastroesophageal reflux disease.

Conductive and sensorineural hearing loss from institutions were combined for comparison.

Some institutions had various materials for ear infections (otitis media, otitis media with effusion, otitis externa, and ear infection), so they were all grouped into the same group as “Swimmer’s Ear” from AAO-HNS.

When the institution within recommendations was removed, the mean was 11.3 ± 0.65.

Statistically significant P-value.

Discussion

Both the AMA and NIH recommend that patient education materials should be at or below a sixth-grade reading level to increase accessibility to a majority of the population. However, previous literature discovered that this was not the case on recognized society websites that provide open-access patient education materials.1-3 This study expands upon the previous analyses of medical societies and the reading levels of their patient education materials by harnessing AI to reduce the reading level of the existing materials. We found that AI was useful in creating a statistically significant reduction in the mean grade levels of existing materials; however, it was not able to consistently create a mean grade level that complied with recommendations set forth by both the AMA and NIH.

The use of AI within medicine is a popular theme of investigation across many medical disciplines. A previous study in ophthalmology used ChatGPT to generate answers to patient questions on an online forum and compared those responses with answers written by board-certified ophthalmologists. The responses were blinded and distributed to ophthalmologists, who were asked to identify which were written by a person and which were written by AI. Researchers found that AI was able to provide many responses that did not differ from those of physicians in terms of accuracy, likelihood/extent of harm, or standards within the ophthalmology community.17 Therefore, it is of utmost importance that the utility and ability of AI to provide medical advice be studied by medical professionals across multiple specialties.

Results of our study show that the average reading level of existing pediatric otolaryngology patient education materials is above the recommended sixth-grade reading level. A previous study similarly showed that existing education materials in pediatric otolaryngology were above this recommended reading level, and this hindered parents’ understanding of the information presented.18 Decreased healthcare literacy has been linked to worsened morbidity and mortality, especially in patients with chronic conditions.19 Therefore, if lowering the reading level of patient education materials increases healthcare literacy and leads to better patient outcomes, AI could be used to improve patient care.

Based on our study, ChatGPT can reduce the reading levels of patient education materials. However, on average, the resulting texts did not comply with the recommendations set forth by the AMA and NIH. The average reading level of the AAO-HNS materials was 10.71 ± 0.71 before ChatGPT reduction and 7.90 ± 1.18 after AI was prompted to reduce the reading level. ChatGPT did not reduce all materials to below the recommended sixth-grade reading level, and after prompting, 6 of the materials had a higher reading level than the original. One recent study demonstrated that ChatGPT does not consistently succeed at the tasks it is prompted to complete. That work noted that ChatGPT performed insufficiently when the prompt was not specific enough or when the task required processing after answer generation. The authors of the same study also noted that ChatGPT produced answers that were unexpected or “out-of-range.”20 Taken together with our results, this suggests that ChatGPT is a helpful tool but is not currently able to replace the capabilities of the human brain.

Although ChatGPT reduced the mean grade level of patient education materials, 13.95% of responses were above the original reading level of the published material. A previous study analyzing the readability of responses created by ChatGPT when providing parental guidance for adenoidectomy, tonsillectomy, and ventilation tube insertion surgery found that, though accurate, the responses had a mean Flesch-Kincaid grade level within high school levels.21 This is not the only instance in which ChatGPT failed to complete the task it was prompted to perform. The platform has been noted to create false sources and to produce 43% of citations incorrectly.22 In the current study, the ChatGPT-modified Sinusitis sheet had 593 fewer words than the original, even though the technology was prompted to maintain the exact word count when reducing the grade level of materials. Therefore, ChatGPT should not be solely relied upon to complete a desired task. Rather, it should be employed as one of many tools.

This study used ChatGPT 3.5. With the recent release of ChatGPT 4.0, there may be improvements in the ability to reduce the grade level. A recent study examined the ability of ChatGPT 4.0 to reduce the reading level of materials on the AAO-HNS website. The mean grade level of all 71 patient education materials was 11.03, and ChatGPT reduced the mean grade level to 5.80.23 A previous study analyzing the performance of ChatGPT 3.0 on otolaryngology board questions reported that 57% of questions were correctly answered.24 As the technology improved, so did the board score. ChatGPT 3.5 answered 86% of rhinology board questions correctly, and this score increased to 86% when GPT-4 was prompted to answer the same set of questions.25 Given the recorded improvements of AI and its increased ability to accurately answer questions, ChatGPT may be able to consistently reduce pediatric otolaryngology education materials to comply with AMA and NIH guidelines in the near future.

Pediatric otolaryngology in particular is a field in which patient education must be addressed. A systematic review assessing healthcare disparities within pediatric otolaryngology reported that the most common disparity is low socioeconomic status, including lower education levels, and this has been shown to adversely affect healthcare outcomes due to decreased understanding of conditions and treatments.26,27 Improving the readability of online education materials, especially in pediatric otolaryngology, is of emerging importance because patients with lower education levels have had increased access to the Internet over the past 20 years.28 Therefore, lowering the grade level of online patient education materials increases the accessibility of these materials.

This study has several limitations. A major limitation is the availability of patient education materials: not all of the 9 conditions identified on the AAO-HNS website were available on the website of every institution. Furthermore, medical terminology is inherently difficult, and its incorporation into a piece of text increases the reading level. The algorithm used by ChatGPT is also unknown, so the reasons for the increased grade level in some outputs are poorly understood. Only one specific prompt was provided to ChatGPT, so slight changes in the prompting may have altered the reading level of the resulting text. In addition, we were not able to incorporate the perspectives of patients. ChatGPT might have been trained using materials published on the websites used in this study, which presents another potential source of bias. Future studies can use experts to review the material produced by AI to assess accuracy. Variations of prompts/questions can be incorporated into future studies to determine whether they affect the ability of AI to reduce the grade level of materials. Given the recent release of ChatGPT 4.0, studies can be performed to determine whether the newer technology offers any improvement. In addition, future studies can survey patients on materials created by AI to determine whether the materials are easier to comprehend, and patients can be polled on which conditions are most difficult to understand. Analysis of multimedia resources can also be performed to assess the effect videos and images have on the grade level of education materials.

Conclusion

Patient education materials in pediatric otolaryngology are consistently above the recommended sixth-grade reading level. We found that AI was able to create a statistically significant reduction in grade level. However, there were instances in which the product created by AI had a higher grade level than the originally published material. To improve the clarity of information conveyed to patients, AI may be used in conjunction with expert proofreading to assist in lowering the reading levels of patient education materials without sacrificing accuracy.

Supplemental Material

sj-docx-1-ear-10.1177_01455613241289647 – Supplemental material for Utilizing Artificial Intelligence to Increase the Readability of Patient Education Materials in Pediatric Otolaryngology

Supplemental material, sj-docx-1-ear-10.1177_01455613241289647 for Utilizing Artificial Intelligence to Increase the Readability of Patient Education Materials in Pediatric Otolaryngology by Andrew J. Rothka, F. Jeffrey Lorenz, Madison Hearn, Andrew Meci, Brandon LaBarge, Scott G. Walen, Guy Slonimsky, Johnathan McGinn, Thomas Chung and Neerav Goyal in Ear, Nose & Throat Journal

Supplemental material, sj-docx-2-ear-10.1177_01455613241289647 for Utilizing Artificial Intelligence to Increase the Readability of Patient Education Materials in Pediatric Otolaryngology by Andrew J. Rothka, F. Jeffrey Lorenz, Madison Hearn, Andrew Meci, Brandon LaBarge, Scott G. Walen, Guy Slonimsky, Johnathan McGinn, Thomas Chung and Neerav Goyal in Ear, Nose & Throat Journal

Supplemental material, sj-docx-3-ear-10.1177_01455613241289647 for Utilizing Artificial Intelligence to Increase the Readability of Patient Education Materials in Pediatric Otolaryngology by Andrew J. Rothka, F. Jeffrey Lorenz, Madison Hearn, Andrew Meci, Brandon LaBarge, Scott G. Walen, Guy Slonimsky, Johnathan McGinn, Thomas Chung and Neerav Goyal in Ear, Nose & Throat Journal

Supplemental material, sj-docx-4-ear-10.1177_01455613241289647 for Utilizing Artificial Intelligence to Increase the Readability of Patient Education Materials in Pediatric Otolaryngology by Andrew J. Rothka, F. Jeffrey Lorenz, Madison Hearn, Andrew Meci, Brandon LaBarge, Scott G. Walen, Guy Slonimsky, Johnathan McGinn, Thomas Chung and Neerav Goyal in Ear, Nose & Throat Journal

Footnotes

Acknowledgements

The authors would like to thank Caia Hypatia for their help with manuscript preparation and submission.

Author Contributions

A.J.R.: Conceptualization, Methodology, Investigation, Writing – Original Draft, Writing – revising and editing. F.J.L.: Conceptualization, Methodology, Writing – Original Draft, Writing – revising and editing. M.H.: Conceptualization, Methodology, Writing – Original Draft, Writing – revising and editing. A.M.: Conceptualization, Methodology, Writing – Original Draft, Writing – revising and editing. B.L.: Conceptualization, Methodology, Writing – Original Draft, Writing – revising and editing. S.G.W.: Conceptualization, Methodology, Writing – Original Draft, Writing – revising and editing. G.S.: Conceptualization, Methodology, Writing – Original Draft, Writing – revising and editing. J.M.: Conceptualization, Methodology, Investigation, Writing – Original Draft, Writing – revising and editing. T.C.: Conceptualization, Methodology, Investigation, Writing – Original Draft, Writing – revising and editing. N.G.: Conceptualization, Methodology, Project Administration, Supervision, Writing – Original Draft, Writing – revising and editing.

Data Availability Statement

Data are available upon reasonable request.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical Approval

This study was approved by The Pennsylvania State University Institutional Review Board (Study ID #22789).

Statement of Informed Consent

The need to obtain informed consent was waived for the collection, analysis, and publication of the data for the non-interventional study.

Supplemental Material

Supplemental material for this article is available online.

References
