Abstract

Introduction

Advantages of Speechreading for Cochlear Implantation Users

Cochlear implantation (CI) is, by far, the most successful treatment to provide auditory perception to individuals with severe to profound hearing loss (SPHL). 1 However, a CI conveys poorer speech-sound information than a normally functioning human cochlea. The reduced spectral information conveyed by the CI is particularly problematic in noisy conditions, especially when users are faced with more complex real-life situations. 2 Most CI users have access to both auditory and visual information when listening to speech, and the audiovisual modality is the most common one in which they do so. 3

CI users show considerable variability in speech perception outcomes among individuals who are congenitally deaf but did not acquire CI in early childhood, and also among those who received CI following acquired hearing loss. 4 This suggests that despite the increased access to auditory speech that a CI can bring, CI users may continue to make greater use of visual information than individuals with normal hearing, and thus may display a speechreading advantage under noisy conditions.

However, the evidence on the extent of speechreading advantages among CI users appears to be mixed. Researchers 5 reported no significant differences in recognition performance when tests were presented in a visual-only (VO) format, suggesting that there was no speechreading advantage for CI users over their peers with normal hearing. Other evidence suggests that there is indeed a speechreading advantage for CI users. Rouger et al 6 assessed speechreading performance in a group of adults with postlingual deafness. They found that deaf adults showed significantly higher speechreading performance than adults with normal hearing, both before receiving the CI and over the years following implantation.

Multisensory Processing Among CI Users and the Factors Influencing It

Based on findings in animal models and research on the early development of humans, it has been proposed that multisensory processing provides a strong foundation for the development of perceptual representations and facilitates the acquisition of higher-order cognitive functions. 7 However, more evidence is needed to determine the factors that affect multisensory processing. For example, previously conducted studies differ in age of participants (eg, children vs adults), etiology (eg, congenital vs acquired deafness), and the onset of hearing loss (eg, prelingual vs postlingual). We propose that these factors may help explain the discrepancies observed in previous studies. Furthermore, we believe that there may be a sensitive period during which multisensory development can occur.

Auditory speech perception outcomes after implantation are highly variable and are affected by several variables. For example, for individuals with prelingual deafness, age at implantation and preimplant residual hearing, among other factors, are all related to speech recognition scores following implantation. 8 However, whether these factors affect speechreading performance remains unclear.

The Purpose of the Present Study

Researching the development of sensory and multisensory abilities is of great importance, as doing so will help us understand whether some of the variability in CI outcomes can be attributed to speechreading ability. Thus, in the present study, we examine whether CI users with degraded auditory ability show different speechreading advantages and whether these differences are also found among people of different ages whose hearing loss began during different developmental periods. We also aim to investigate whether and how such factors relate to speechreading ability.

Patients and Methods

Patients

A total of 104 CI users were included in the present study. Most of the participants had unaided hearing in the profoundly impaired range, which restricted their access to speech perception, and participants required hearing aids (HA). CI criteria included word and open-set sentence auditory-recognition scores <30% under best-aided conditions in quiet (ie, with conventional acoustic HA). All participants were recipients of a cochlear implant [Nucleus (71/104), MED-EL (16/104), or AB (17/104)] and used a range of different sound-coding strategies.

All participants were native Chinese speakers with self-reported normal or corrected-to-normal vision and without any previously known language or cognitive disorders. The study was approved by the Central Committee on Research Involving Human Subjects of the Peking University First Hospital. All participants or their parents provided written consent themselves or on behalf of their children to participate in the present study.

Materials

All participants were tested using an open-set Chinese sentence recognition test in quiet and in noise developed by Xi et al, which was established as a corpus of Mandarin Bamford-Kowal-Bench-like sentences, 9 with 4-talker babble as competing noise. The sentences were extracted from a first grade-level Mandarin textbook, and were read by a 20-year-old male native Mandarin speaker. This test comprised 16 lists of monosyllabic words and 28 lists of sentences from the Homogeneity Optimized via Psychometric Evaluation (HOPE) system. 10 Four extra practice monosyllabic word lists and 4 extra practice sentence lists were offered to allow participants to familiarize themselves with the procedure. The extra practice lists were part of the protocol and were applied to all research participants; the same list was not used twice in the schedule to avoid the learning effect. Each list of monosyllabic words comprised 25 Chinese characters, and each list of sentences comprised 15 sentences, each with 6 to 11 Chinese characters.

Twenty-five lists were used to test sentence recognition ability in visual conditions. For example, sentences such as “Mom just take me back” or “She recognized a rabbit” in Mandarin Chinese were recorded on video to test VO and audio-only (AO) ability (in both quiet and in noise). Each sentence was spoken by a professional male broadcaster with a general Mandarin accent and recorded on video using a Canon HFr86. The sentences were spoken aloud at a normal conversational volume during the recording, and the videos were subsequently edited so that the participants saw a natural production of each sentence, but without any sound. The model maintained a neutral expression and remained silent at the beginning and the end of production of every sentence, and the distance from the camera, lighting, and background conditions were consistent for each sentence (see Figure 1).

Screenshot of the visual speech model who produced stimuli. It shows the model producing /ma/ sound which means “mother” in English.

Procedures and Stimuli

Speech Recognition Tests

The recognition tests consisted of 2 kinds of material (Mandarin monosyllabic words and Mandarin sentences), 2 conditions (quiet and noisy), and 2 modalities (AO and VO). To collect the baseline hearing-level data of participants, the Mandarin monosyllabic word test in quiet conditions and the Mandarin sentence recognition test in quiet and noisy conditions were conducted in random order.

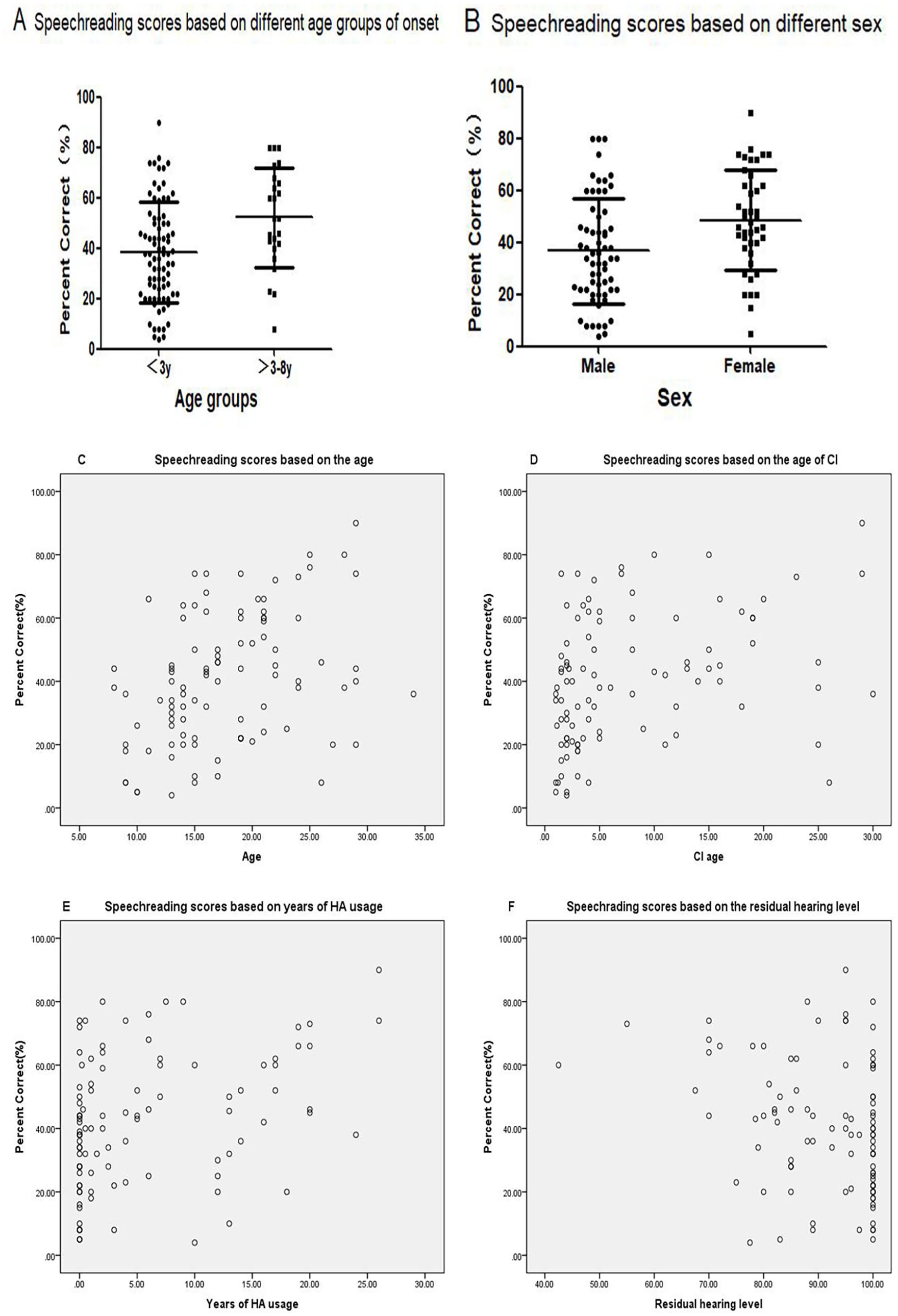

All participants were able to complete the tests to a high standard. The test lists were presented after 2 practice lists (randomly chosen from the entire list) with different modalities and under quiet and noisy conditions at the beginning of every visit in the schedule. The participants took a 10-minute break every half hour. Because 4-talker babble was used as a masker, each participant's SNR50 (signal-to-noise ratio required for 50% accuracy) could be obtained from the baseline data. Baseline data on the speech recognition abilities of the participants are shown in Table 1.
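The text defines SNR50 but does not state how it was derived from the baseline scores. As an illustration only, the sketch below assumes that sentence scores were measured at a few fixed signal-to-noise ratios and that SNR50 is read off by linear interpolation between the two neighboring points. The function name `estimate_snr50` and the example values are hypothetical and are not taken from the study.

```python
def estimate_snr50(points):
    """Estimate the SNR (dB) at which accuracy reaches 50%.

    points: list of (snr_db, percent_correct) pairs.
    Assumption (not from the source): linear interpolation between the
    two measured SNRs whose scores bracket 50%.
    Returns None when the scores never reach 50%.
    """
    pts = sorted(points)  # order by SNR, lowest first
    for (snr_lo, pc_lo), (snr_hi, pc_hi) in zip(pts, pts[1:]):
        if pc_lo == 50.0:
            return float(snr_lo)
        if pc_lo < 50.0 <= pc_hi:
            # Fraction of the way from the lower to the upper point
            frac = (50.0 - pc_lo) / (pc_hi - pc_lo)
            return snr_lo + frac * (snr_hi - snr_lo)
    return None


# Hypothetical listener: 20% correct at 0 dB, 40% at 5 dB, 80% at 10 dB
example = [(0, 20.0), (5, 40.0), (10, 80.0)]
print(estimate_snr50(example))

# A listener who never reaches 50% in noise has no defined SNR50
print(estimate_snr50([(0, 0.0), (5, 0.0), (10, 10.0)]))
```

Returning `None` rather than a number for listeners who never reach 50% keeps such cases explicit, so they can be handled separately in any later analysis.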

Characteristics of CI Users.

Values are presented as mean ± SD.

Abbreviations: CI, cochlear implantation; HA, hearing aids; LVAS, large vestibular aqueduct syndrome; SNR50, signal to noise ratio required for 50% accuracy.

Eighty-six participants completed the recognition test in noise, and the other 18 participants completed the test only in the quiet condition.

The test material was presented in 2 separate modalities: VO (speechreading) and AO. Participants were asked to focus their attention while completing the task, but they could rest for 10 minutes after every 30 minutes. Only words that participants verbally repeated correctly were treated as correct responses (% correct score). A correct phoneme score was calculated for 2 lists of words in each condition. When the difference in phoneme scores between the 2 lists was greater than 10%, a third list was administered.
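The scoring rule above can be sketched in a few lines. This is a minimal illustration under two assumptions that the text does not confirm: the 10% criterion is read as 10 percentage points, and the scores of the administered lists are averaged to give the condition score. All function names are hypothetical.

```python
from statistics import mean


def percent_correct(responses):
    """Percentage of items repeated correctly; `responses` is a list of booleans."""
    if not responses:
        raise ValueError("a list needs at least one scored item")
    return 100.0 * sum(responses) / len(responses)


def needs_third_list(score_a, score_b, threshold=10.0):
    """True when 2 list scores differ by more than `threshold` percentage points."""
    return abs(score_a - score_b) > threshold


def condition_score(list_scores):
    # Assumption: list scores are averaged; the source does not say
    # how the 2 (or 3) lists were combined.
    return mean(list_scores)


# Hypothetical example: 15 of 25 items correct on one list, 19 of 25 on the other
list_a = percent_correct([True] * 15 + [False] * 10)
list_b = percent_correct([True] * 19 + [False] * 6)
print(list_a, list_b, needs_third_list(list_a, list_b))
```

With these example lists the scores differ by 16 percentage points, so a third list would be administered under the stated rule.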

Statistical Analysis

Statistical analyses were performed using IBM SPSS Statistics 20.0. For each test, the level of statistical significance was set at 5% (α = .05). Outcomes for the speech recognition test were analyzed with Friedman’s analysis of variance for dependent variables. Associations between performance variables and predictors were investigated through univariate and multivariate linear regressions. After associations between single variables and VO recognition scores were assessed through univariate regression, variables whose univariate linear regression had a significance level below .20 were entered into a multivariate regression model, where possible.
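The two-stage selection described above (univariate screening, then a multivariate model) can be illustrated as follows. The analysis itself was run in SPSS; this sketch only mirrors the selection logic, and the predictor names and P values in the example are placeholders, not results from the study.

```python
ENTRY_THRESHOLD = 0.20  # univariate P below .20 enters the multivariate model
ALPHA = 0.05            # significance level stated in the text


def screen_predictors(univariate_p, threshold=ENTRY_THRESHOLD):
    """Return the predictors whose univariate P value is below the entry threshold."""
    return sorted(name for name, p in univariate_p.items() if p < threshold)


def is_significant(p_value, alpha=ALPHA):
    """True when a P value falls below the significance level."""
    return p_value < alpha


# Placeholder P values for illustration only (hypothetical, not study data)
placeholder_p = {
    "predictor_a": 0.01,
    "predictor_b": 0.16,
    "predictor_c": 0.45,
}

candidates = screen_predictors(placeholder_p)
print(candidates)                         # entered into the multivariate model
print([is_significant(placeholder_p[c]) for c in candidates])
```

Using a more liberal entry threshold (.20) than the final significance level (.05) allows a predictor that is only weakly related on its own to be tested again once other variables are accounted for.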

Results

A total of 104 CI users (the average age of implantation was 8.0 years) were tested, on average, 9.6 years post implantation (range, 0.5 to 18.5 years). Male CI users comprised 59.6%, while the other 40.4% of CI users were female. Eighty-six participants completed the recognition task in noise, and the other 18 participants completed the task only in the quiet condition. The proportion of participants who used both CI and HA (bimodal) was 25.0% (n = 26); 60.6% (n = 63) of them used 1 implant; and 14.4% (n = 15) of them used 2 implants (bilateral CI).
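As a quick arithmetic check, the reported device-use percentages follow directly from the group sizes given above. The short sketch below recomputes them; the dictionary keys are labels chosen here for illustration.

```python
# Group sizes as reported in the text
counts = {"bimodal": 26, "unilateral_ci": 63, "bilateral_ci": 15}

total = sum(counts.values())  # should equal the 104 participants

# Percentage of the whole sample in each group, rounded to 1 decimal place
shares = {name: round(100 * n / total, 1) for name, n in counts.items()}

print(total)
print(shares)
```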

Table 1 lists the participant characteristics of the CI users.

Different Speechreading Ability in CI Users

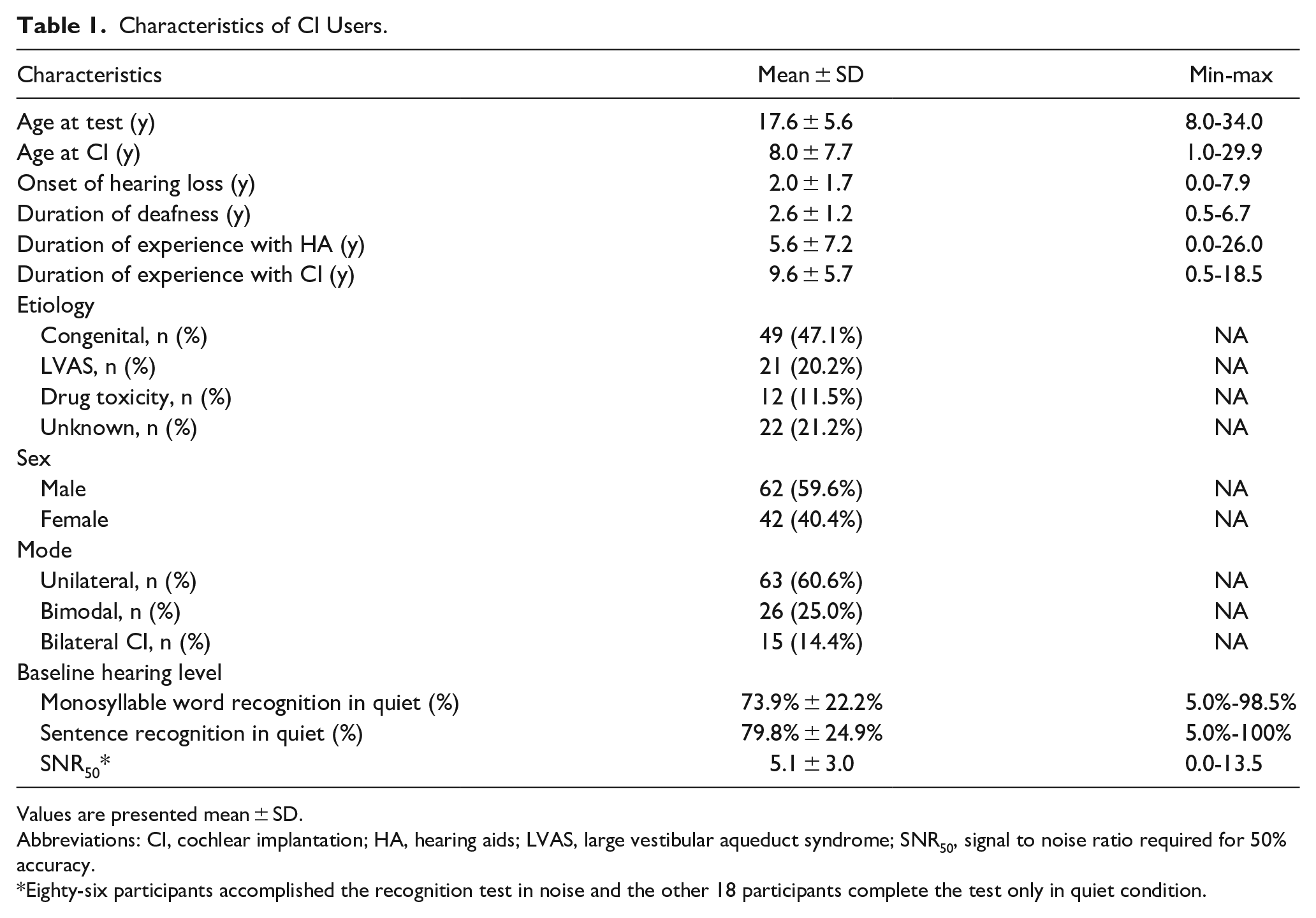

The mean (± SD) VO speech recognition score was 42.1% ± 19.3%. The VO speech recognition scores of all 104 participants with CI were analyzed based on SNR50 (see Figure 2). SNR50 was not significantly associated with VO performance across all CI users (P = .161). It was also not associated with VO performance when we excluded the 18 poor performers who could not recognize any words under the noisy conditions (P = .092).

The speechreading performances based on the SNR50 are shown. SNR50 was not found to correlate with the scores of visual-only ability (speechreading). CI, cochlear implantation; SNR50, the signal to noise ratio required for 50% accuracy.

Associations Between VO Speech Recognition Scores and Predictors

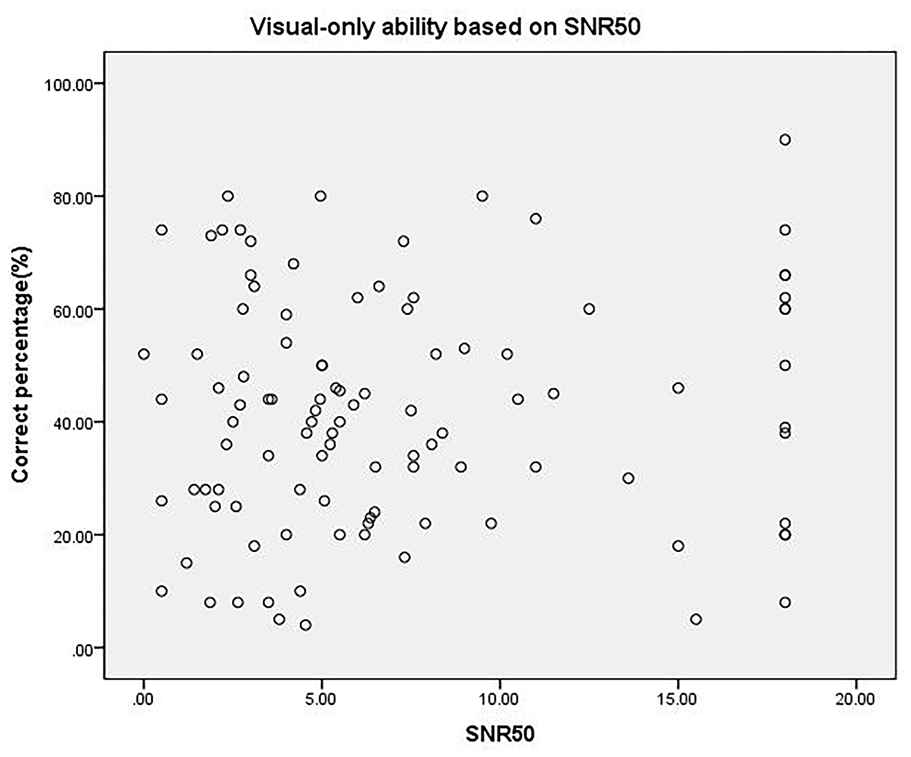

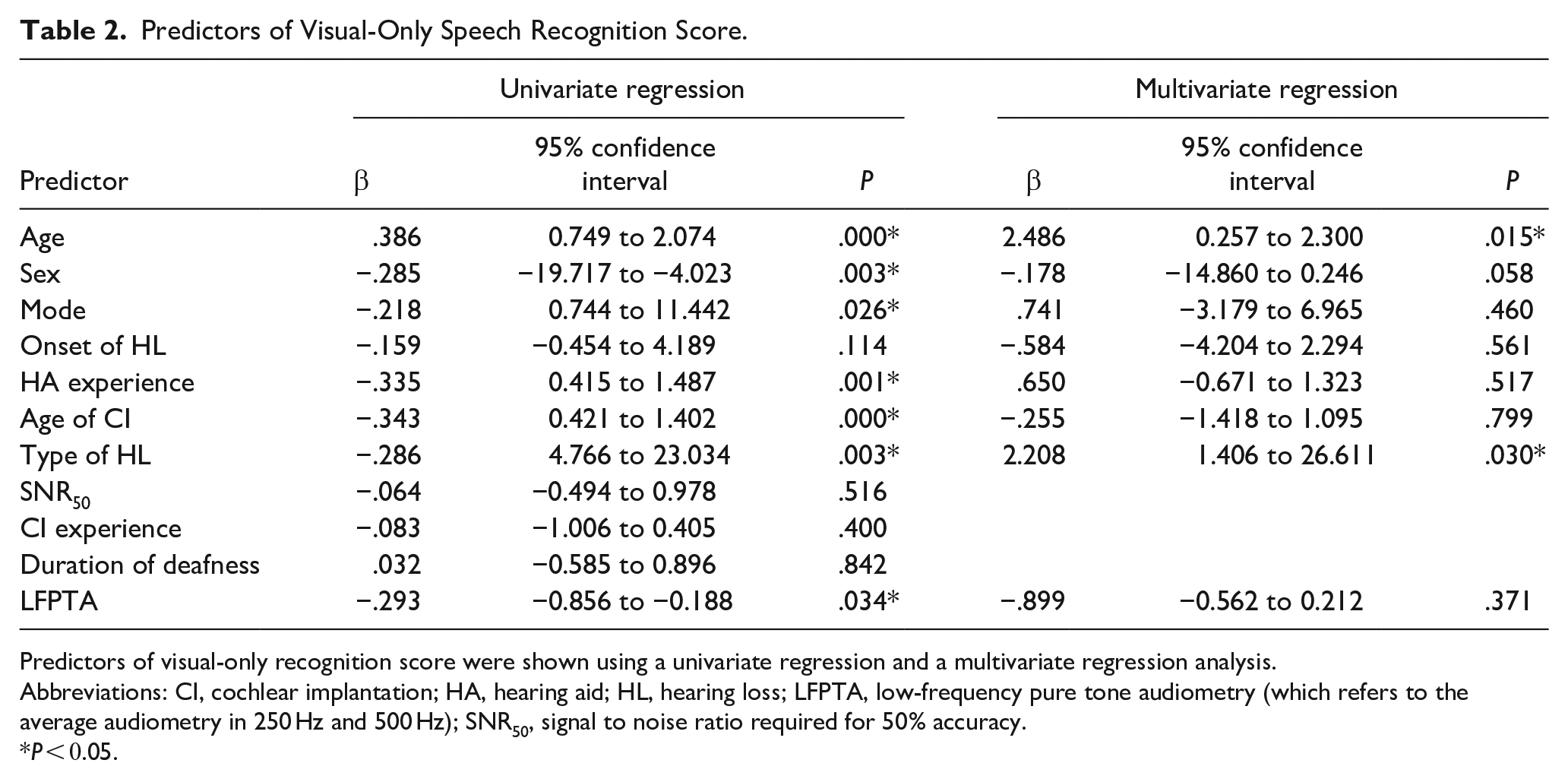

In analyzing relationships between factors and VO scores, we found that older age was associated with superior speechreading skills (P = .015). There was also a significant difference between participants with prelingual deafness and participants whose hearing loss began between age 3 and 8 years (P = .030). Age at implantation, female sex, and years of hearing aid use were positively related to superior speechreading scores (P < .05) only in the univariate regression (see Figure 3A-E); they were not associated with VO skills in the multivariate regression.

(A, B) Box plots of visual-only speech recognition scores. Individuals whose age of onset of hearing loss was 3 to 8 years performed significantly better than participants whose hearing loss began at less than 3 years of age. Females were found to be better performers in the visual-only modality. Visual-only speech recognition scores are shown based on (C) chronological age, (D) the age of CI, (E) the history of using hearing aids, and (F) the LFPTA. These factors were found to be correlated with the scores of visual-only speech recognition. CI, cochlear implantation; HA, hearing aid; LFPTA, low-frequency pure tone audiometry (which refers to the average audiometry in 250 Hz and 500 Hz).

In the present study, 68 participants had a history of hearing aid use prior to CI surgery. Hence, the VO recognition scores for these individuals were analyzed based on their residual hearing level (Figure 3F). Low-frequency pure tone audiometry (the average of pure tone audiometry at 250 Hz and 500 Hz) affected VO recognition scores (P = .034) only in the univariate regression. Other predictors were also considered, including the duration of deafness, mode (unilateral CI, bilateral CI, and bimodal), and etiology, but no significant correlation with speech recognition scores was found (Table 2).
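The LFPTA measure defined above is a simple 2-frequency average. A minimal sketch follows; the function name and the example thresholds are hypothetical, and the unit (dB HL) is an assumption, since the text does not state it.

```python
def lfpta(threshold_250_hz, threshold_500_hz):
    """Low-frequency pure tone average: mean of the 250 Hz and 500 Hz thresholds.

    Thresholds are assumed to be in dB HL (the source does not give the unit).
    """
    return (threshold_250_hz + threshold_500_hz) / 2.0


# Hypothetical thresholds of 70 and 85 at 250 Hz and 500 Hz
print(lfpta(70, 85))
```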

Predictors of Visual-Only Speech Recognition Score.

Predictors of the visual-only recognition score are shown for the univariate and multivariate regression analyses.

Abbreviations: CI, cochlear implantation; HA, hearing aid; HL, hearing loss; LFPTA, low-frequency pure tone audiometry (which refers to the average audiometry in 250 Hz and 500 Hz); SNR50, signal to noise ratio required for 50% accuracy.

P < 0.05.

Discussion

A Complex Way of Perceptual Compensation that CI Users Follow

The perceptual compensation hypothesis suggests that sensory deprivation in one sensory modality stimulates compensatory perceptual improvement in another sensory modality. 11 According to the results, there is a significant speechreading advantage for CI users. Similarly, we found that different degrees of auditory performance led to different kinds of speechreading abilities for understanding spoken languages. This resulted in a notable disparity in speechreading ability among CI users with different speech outcomes; nevertheless, we did not observe a significant correlation between SNR50 and VO speech recognition scores. Thus, we posit that the CI users followed a more complex method of perceptual compensation.

Correlation Between Factors and VO Speech Recognition Scores

Regardless of whether speechreading abilities were driving the significant increase in audiovisual gains [improvement in perception or understanding that occurs when both auditory (sound) and visual (sight) information are presented simultaneously] seen in CI users, there are several additional factors that could be influencing this speechreading ability. The extent of the VO ability may have varied between the CI users, as they possessed different auditory abilities. This ability may be based on several factors that affect speech recognition after implantation, including age, age of implantation, history of HA, and residual hearing level, among others.

Age of Onset of Hearing Loss

The age of onset of hearing loss is a crucial factor in speech performance outcomes. According to the theory of perceptual compensation, when hearing is lost, visual abilities begin to develop in a compensatory manner. However, we did not find a significant correlation between the age of onset of hearing loss and speechreading performance in the CI users. This may be due to the beneficial effect provided by the CI, as the implantation may have activated the window of development (a specific period during which an individual is particularly receptive to acquiring specific abilities) in individuals experiencing deafness early in life.

Gougoux et al 12 found superior pitch discrimination skills in early blind adults (blinded <2 years of age) compared to sighted adults, but these enhancements were not observed in late blind adults (blinded >5 years of age). They reported a significant negative correlation between the age of blindness onset and pitch discrimination, and argued that “cerebral plasticity is more efficient at early developmental stages.” In addition, past research has shown that typically developing children integrate visual cues that correspond with auditory cues very early in postnatal life. Typically developing infants and toddlers, for example, tend to look to the mouth, the source of linguistic multisensory redundancy, during pivotal periods in early language learning, such as when they are latching onto their native language and when they are experiencing an acceleration in word learning. 13 However, in the present study, participants who experienced hearing loss at <3 years of age performed significantly worse in the VO speech recognition test than those with later-onset deafness (onset at age 3-8). Participants who experienced hearing loss in this developmentally sensitive period (ie, 3-8 years of age) were more likely to be better at speechreading. The period after age 3 appears to be pivotal in language learning, as it is the time at which CI users were experiencing an acceleration in word learning. We argue that cerebral plasticity for integrating audiovisual (AV) perception may be more efficient in the sensitive period after age 3 than at an earlier developmental stage (ie, before age 3). This finding highlights a developmental window in which one’s visual ability matures. This period of development is the typical period of expansive language acquisition that also corresponds with a peak in the formation of cortical synapses. 14 We posit that greater experience with, and attention to, visual speech, over a period of years, is necessary for the development of superior visual speech perception skills. This visual experience-driven synaptic pruning is a critical component that shapes cortical circuits in early childhood and adolescence, for visual and auditory perception alike.

Chronological Age

By analyzing the relationship between the factors and the VO scores, we found that older age was associated with superior speechreading skills. One possible reason is that older participants have more advanced cognitive abilities, which are related to better speechreading ability. 15 Indeed, as compared to younger adults, older CI users tend to exhibit better speechreading performance and show significant gains under audiovisual conditions. 16

Age at Implantation

Consistent with other findings, 17 our results showed a positive effect of age at implantation on speechreading performance. The role of age at implantation in speech recognition outcomes has been discussed in the context of the proposed sensitive developmental period of the central auditory system. Sharma et al 18 have argued that maximal plasticity of the central auditory system occurs in the first 3.5 years of life. It has been suggested that receiving an implant earlier in life is associated with better auditory speech recognition due to the increased plasticity of the central auditory system. 19 In addition, earlier recipients have had more years of access to auditory speech via the CI than later recipients. Taken together, these findings may explain why those who received their CI earlier in life showed reduced reliance on visual speech and, therefore, less well-developed visual speech recognition skills than those who received their CI later in life. Our results are consistent with the perceptual compensation hypothesis. That is, we propose that those who receive their CI later in life undergo a more protracted period of auditory rehabilitation that is associated with superior visual speech perception skills.

The Length of the HA Experience

The residual hearing level greatly affects the length of the HA experience. Given that the length of HA experience may contribute to auditory developmental plasticity, 20 it is unsurprising that we found a significant correlation between the duration of HA use and speechreading scores. If HA were the only approach used to improve auditory performance, these participants would have made more of an effort to spontaneously recognize language from the movement of the speaker’s face and lips, and thereby developed their speechreading ability. However, in the multivariate analysis, we found a significant correlation only between VO recognition scores and the type of hearing loss. We speculate that individuals with CI whose hearing loss began between 3 and 8 years of age (the sensitive period in our study) must rely more on visual speech for a longer duration of time. Doing so may lead to enhanced speechreading ability, regardless of whether they used CI as an auditory-assisted method at the onset of their hearing loss.

A limitation of the present study is that we did not take educational attainment into consideration. However, the age range of participants was 8.0 to 34.0 years, and the majority of participants had age-matched education levels (ie, they had been attending the age-appropriate school or found a job after finishing school). This is important because the finding that older age was associated with better VO ability could be due to certain participants possessing an advanced degree. Aparicio et al 21 demonstrated that the visual speech signal can be enhanced by using cued speech, which requires users to pay more attention to the lips. However, we did not consider this factor, given that most of our participants received their CI over the course of our study, and they did not use cued speech with other people in their daily lives. Furthermore, there are some differences between Chinese and English in terms of auditory perception, such as the different phoneme (speech unit) systems, the speech rhythm and stress patterns, and tone, which is an important feature of the Chinese language. 22,23 These differences may affect the interpretation of auditory perception results. However, the focus of this study is on speechreading, which is different from auditory perception. We believe that individuals using other languages have developmental trajectories in speechreading ability similar to those of Chinese speakers. Therefore, we consider the conclusions of this study to be applicable to speakers of other languages.

Conclusions

The study revealed that speechreading abilities varied among CI users with degraded auditory abilities. This finding is in accordance with the perceptual compensation theory, but a more complex situation was found in the CI users. We argue that individuals with later-onset deafness (ie, those whose hearing loss began between age 3 and 8 years) are more likely to develop a speechreading advantage than those who became deaf at a younger age. In addition, older age positively affects VO speech recognition scores (speechreading abilities), and CI users exhibit more experience-driven processes of speech recognition. While vision plays an integral role in shaping the intelligibility of speech signals, natural speech processing is an audiovisual experience. When the auditory input delivered electrically is impaired, the incorporation of visual cues is an effective compensatory strategy for CI users after implantation.

Footnotes

Data Availability

The data that support the findings of this study are available from the corresponding author, Yuhe Liu, on reasonable request.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research received grants from the following funding sources: National Natural Science Foundation of China (NSFC)—82071070; Beijing Municipal Science and Technology Commission—No. Z191100006619027; Capital’s Funds for Health Improvement and Research No. 2022-1-2023; and Beijing Hospitals Authority’s Ascent Plan, Code: DFL20220102.

Ethical Approval

The study was approved by the Central Committee on Research Involving Human Subjects of the Peking University First Hospital. The grant number is PK0020200034.

Informed Consent

All participants or their parents provided written consent themselves or on behalf of their children to participate in the present study.