Abstract

Introduction

ChatGPT is a conversational artificial intelligence (AI) tool developed by OpenAI. It is based on the generative pre-trained transformer (GPT) architecture, which uses machine learning to analyze and generate human-like text, and it is designed to serve as a resource for individuals seeking information on a wide range of topics. ChatGPT has grown rapidly in popularity since its release, rising into the top 50 most popular websites worldwide, 1 and it is becoming a significant tool in various fields, including medicine. 2

The emergence of ChatGPT has ushered in a revolution in medicine. 3 For instance, the technology could assist physicians in the decision-making process in radiology, 4 provide suicide risk assessment, 5 and advise patients on the most common hand procedures. 6 Because the tool is accessible and user-friendly, the general public can conveniently reach a vast repository of medical knowledge without lengthy web searches. 7 When seeking advice from ChatGPT, users might also be more willing to disclose sensitive information relevant to their medical situation. 7

People actively use the internet to search for health information: approximately 35% of adults search online to appraise their symptoms, although reported figures range from 23% to 75%. 8 Studies have raised concerns about the quality of medical information available online, 9 including information about tonsillitis and tonsillectomy, which is mostly of poor quality 10 and readability 11 and offers minimal information about possible complications and alternative treatment options. 10 Concerns have also been raised about the accuracy of the information ChatGPT provides, specifically on healthcare matters.5,6,12-15

Tonsillectomy is one of the most common surgical procedures and, in our experience, prompts many questions from parents before and after surgery. We sought to evaluate the accuracy and efficacy of ChatGPT as a resource parents can use to obtain information about tonsillectomy. This appraisal focuses on the reliability of the medical advice and instructions provided by ChatGPT.

Methods

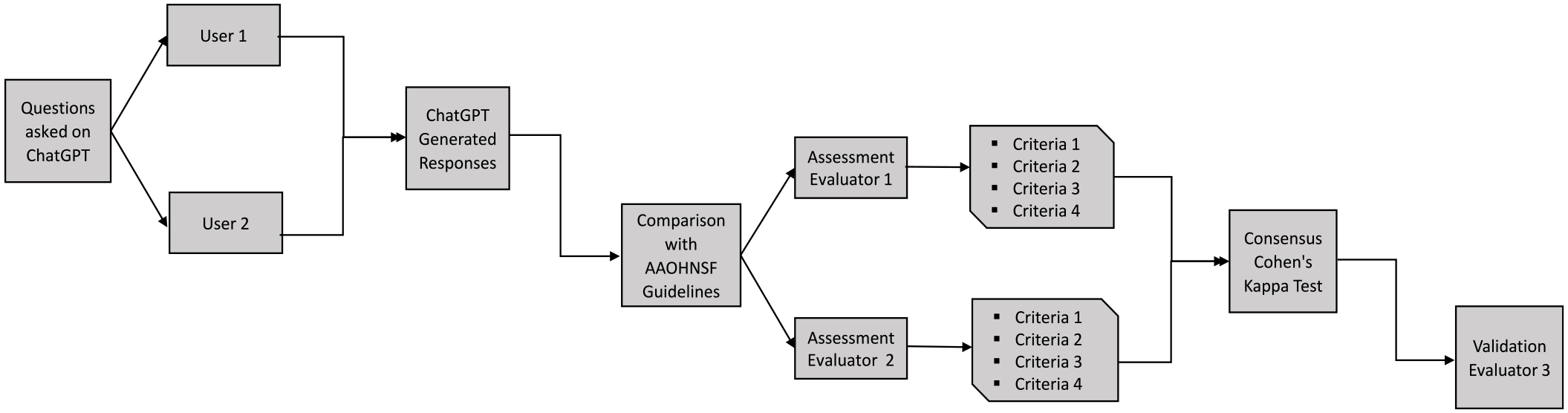

In our study, we used the freely available version of ChatGPT, based on the GPT-3.5 architecture. Two users on separate devices presented ChatGPT with a list of pre-established questions concerning surgical indications for tonsillectomy and post-tonsillectomy complications. The questions were devised by 2 otolaryngologists, approved by the senior author (SJD), and based on 16 key action statements from the current guideline: “American Academy of Otolaryngology Head and Neck Surgery Foundation Clinical Practice Guideline: Tonsillectomy in Children (Update)—Executive Summary.” 16 To reduce confounding bias, each user opened a new ChatGPT session for every prompt and never combined questions or asked follow-ups. The responses collected by the 2 users were recorded and compared with the corresponding guideline statements. Two independent otolaryngologists rated each response on a 4-point scale, assessing whether it aligned with the latest guideline, cited the guideline, explicitly referenced the American Academy of Otolaryngology–Head and Neck Surgery Foundation (AAO-HNSF), and communicated the importance of seeking a clinician’s input. Discrepancies between the 2 reviewers’ assessments were discussed by both reviewers and the senior author (SJD) until consensus was reached. The workflow is depicted in Figure 1.

Figure 1. Workflow diagram.

This study was reviewed by the McGill University Faculty of Medicine and Health Sciences Research Ethics Office and granted exemption in accordance with the institutional requirements.

Results

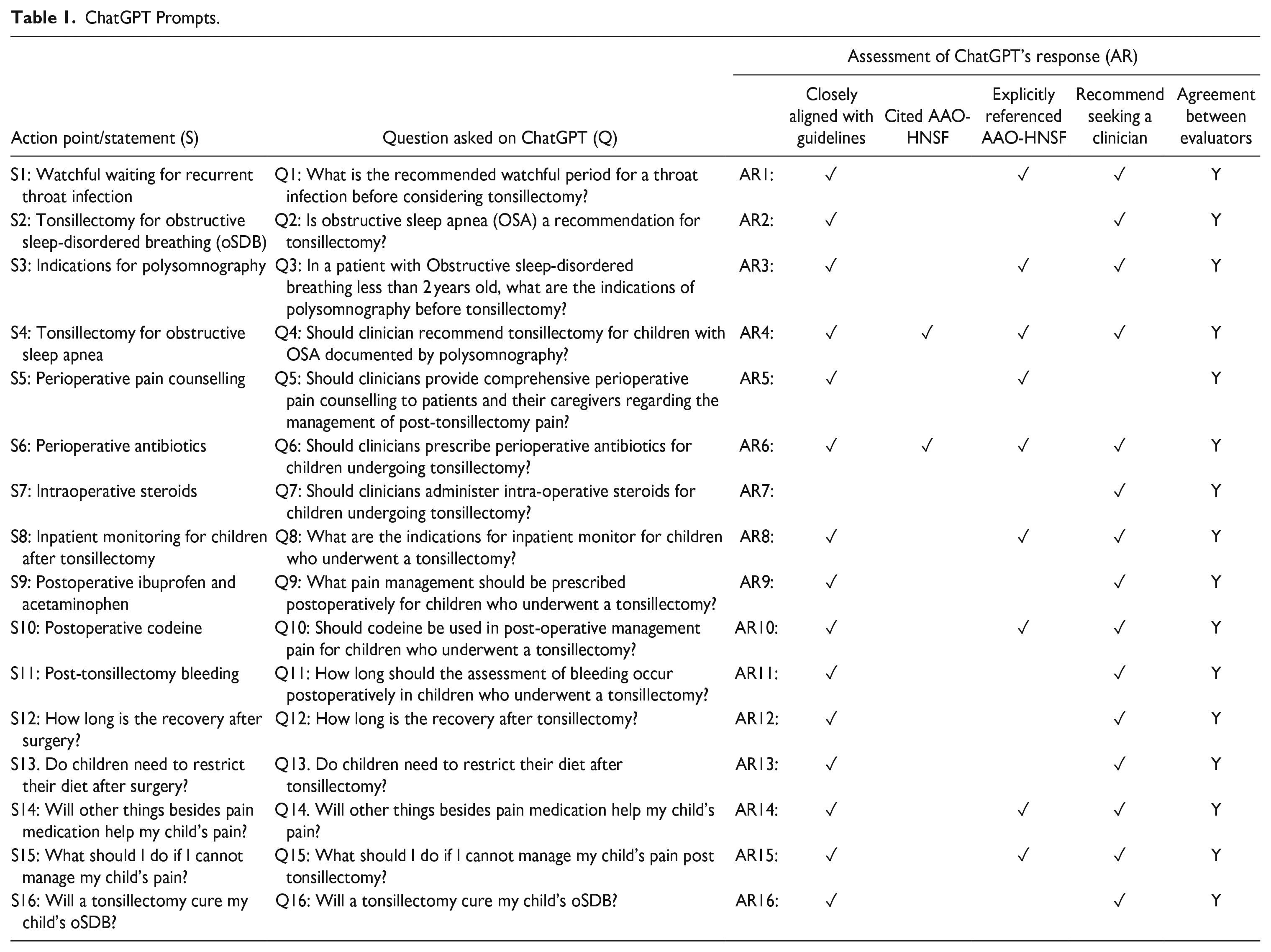

A total of 16 ChatGPT responses regarding tonsillectomy indications and post-tonsillectomy complications (prompts in Table 1) were compared with the AAO-HNSF guideline. After a thorough review, the responses were found to align closely with the guideline, which served as the gold standard. The 2 reviewers agreed completely (Cohen’s κ = 1.00) that ChatGPT consistently offered comprehensive recommendations that aligned with the information emphasized in the guideline. Of the 16 responses, 15 (93.8%) correlated closely with the guideline, whereas 1 (6.2%) did not capture the nuance of the corresponding guideline statement. The guideline stipulates that clinicians should administer a single intraoperative dose of intravenous dexamethasone to children undergoing tonsillectomy. When presented with the prompt “Should clinicians administer intra-operative steroids for children undergoing tonsillectomy?”, ChatGPT provided a broad, general response without specifying the type, dosage, or route of administration of the steroid. The complete response is available in the Supplemental Table.
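Cohen’s kappa corrects the raw agreement between 2 raters for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is the observed proportion of identical ratings and p_e is the chance agreement implied by each rater’s label frequencies. The minimal Python sketch below is illustrative only and is not the authors’ analysis code; the function name `cohens_kappa` is hypothetical, and it assumes each response was reduced to a binary “aligned”/“not aligned” label, whereas the study used a 4-point scale. The example labels are hypothetical but consistent with the counts reported above (15 aligned, 1 not aligned, identical for both reviewers).

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for 2 raters labeling the same items (illustrative sketch)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items given identical labels by both raters
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal frequency, summed over labels
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    labels = set(freq_a) | set(freq_b)
    p_e = sum((freq_a[label] / n) * (freq_b[label] / n) for label in labels)
    if p_e == 1:
        # Both raters used one identical category for every item: kappa is undefined
        raise ValueError("Chance agreement is 1; kappa is undefined")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical labels: both reviewers rate 15 responses "aligned" and 1 "not aligned"
reviewer_labels = ["aligned"] * 15 + ["not aligned"]
print(cohens_kappa(reviewer_labels, reviewer_labels))  # prints 1.0
```

Note that kappa is only defined when the ratings span more than one category; had every response been rated identically in a single category, complete agreement could not be expressed as κ = 1.00.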

Table 1. ChatGPT prompts.

ChatGPT cited the AAO-HNSF guideline in 2 of its 16 responses (12.5%). For statement 4, ChatGPT explicitly stated that “The American Academy of Otolaryngology—Head and Neck Surgery (AAO-HNS) has published clinical practice guidelines for the management of pediatric OSA and according to these guidelines tonsillectomy is considered the first-line treatment for children with OSA and enlarged tonsils.” For statement 6, ChatGPT cited the guideline verbatim (see Supplemental Table). Fifteen of the 16 responses (93.8%) included at least one explicit recommendation to consult a specialist or healthcare provider when considering all available treatment options and inherent risk factors.

A comprehensive breakdown of all responses generated by ChatGPT is available as Supplemental Data in Table S1.

Discussion

Although ChatGPT refrains from providing medical advice, it helps users weigh their options and understand the many factors that bear on their specific medical situation. Its answers are concise, easy to understand, and suited to parents who may not be familiar with medical terminology.

Fifteen of ChatGPT’s 16 responses explicitly advised consulting a specialist or healthcare provider about all available treatment options and the risk factors specific to the patient’s case. This provides a safety net: parents who ask only the 2 or 3 questions that concern them most still receive a reminder to consult a healthcare professional. This trend has been observed in other studies.6,17 The AI-generated responses made it abundantly clear that providing medical advice is not ChatGPT’s role, which could reduce the risk of users forgoing medical attention, a delay that can affect the final treatment outcome. 10

In addition, supplementary resources such as AI offer physicians an opportunity to increase their efficiency and make information more accessible to parents.2,4-6,18-22 Notably, ChatGPT’s responses are not only succinct but also tailored for parents who may lack familiarity with medical terminology. 23 Ensuring the reliability and accuracy of the information provided to patients and their caregivers is of paramount importance, particularly in patient education. 21

While AI and related resources contribute significantly to efficiency and accessibility, the fundamental responsibility remains to ensure that the information delivered to patients and their caregivers is trustworthy, precise, and aligned with the highest standards of healthcare practice.15,18 The results of the current study should therefore be interpreted with caution in light of several limitations. These include the small number of prompts and the rapidly evolving nature of the AI system, which has shown improvement with successive updates as the underlying model is retrained on new data.

Identifying the information sources ChatGPT draws on is crucial, as is ensuring that this information is up to date.2,18,24,25 Guidelines are updated periodically to reflect advances in medical knowledge and evolving best practices. A key challenge is whether ChatGPT can adapt to these changes, particularly in fast-moving areas of clinical practice. A further risk lies in relying on ChatGPT’s capacity to remain aligned with the latest guidelines and to judge the quality of the information used to generate its responses, for example, favoring scientific publications in reputable journals over articles on popular websites or social media platforms that discuss the same medical issues. 17 This could be improved by pre-training AI models on curated sources or by developing algorithms that prioritize scientific data over general information on the internet.

For the question on intraoperative dexamethasone, ChatGPT’s response was not necessarily incorrect: it acknowledged that all pertinent factors should be considered when determining management plans and treatment options, but it did not provide a definitive answer. A previous study has described this noncommittal output of ChatGPT. 25 ChatGPT is limited in its ability to capture the tone or context of a prompt, 18 and AI language models can at times fail to capture the nuances of a query. 24 Future AI-based clinical decision-making tools must overcome their limitations in identifying and reporting standard-of-care guidelines when given clinically oriented prompts. 4 Prompt fine-tuning to set the tone and context of a question is imperative to enhance the accuracy of ChatGPT’s responses. Notably, although the clinical practice guideline firmly supports this treatment, on the basis of published evidence and expert review, ChatGPT did not give an unequivocal answer to the query.

Conclusion

The emergence of ChatGPT has revolutionized the accessibility of information online, enabling patients to access a vast repository of medical knowledge. Comparison with the AAO-HNSF guideline showed ChatGPT’s responses to be reliable and comprehensive: the tool provided predominantly complete, easy-to-read answers, often including ancillary facts and, in 2 cases, explicit references to the guideline. Although ChatGPT’s response to one question diverged slightly from the official guideline statement, parents following that recommendation would not place their child at risk.

Physicians should be aware of ChatGPT’s limitations and regard it as an adjunct to the care they provide, one that must be supervised to ensure the safety and accuracy of the information patients receive.

Further studies are needed to define the role and explore the potential of ChatGPT and similar AI tools in healthcare.

Supplemental Material

Supplemental material, sj-docx-1-ear-10.1177_01455613241230841 for Can ChatGPT Replace an Otolaryngologist in Guiding Parents on Tonsillectomy? by Alexander Moise, Adam Centomo-Bozzo, Ostap Orishchak, Mohammed K. Alnoury and Sam J. Daniel in Ear, Nose & Throat Journal

Footnotes

Acknowledgements

The authors would like to note that there are no acknowledgments for this article.

Author Contributions

Conceptualization and methodology, AM and SJD; data curation, AM and ACB; evaluation, OO and MKA; validation, SJD; writing—original draft preparation, AM; writing—review and editing, AM, ACB, OO, MKA, and SJD; visualization, ACB; supervision, SJD. All authors have read and agreed to the published version of the manuscript.

Data Availability

The authors confirm that the data supporting the findings of this study are available within the article and its supplementary data file.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical Approval

Ethical review and approval were waived for this study by the McGill University Faculty of Medicine and Health Sciences Research Ethics Office on April 18, 2023, in accordance with the institutional requirements.

References