Abstract

Objectives

Online surgical videos are an increasingly popular resource for surgical trainees, especially in the context of the COVID-19 pandemic. Our objective was to assess the instructional quality of YouTube videos of the transsphenoidal approach (TSA) using the LAParoscopic surgery Video Educational GuidelineS (LAP-VEGaS).

Methods

YouTube was searched for TSA videos using 5 keywords. Video characteristics were recorded. Two fellowship-trained rhinologists independently evaluated the videos using LAP-VEGaS (scale: 0 [worst] to 18 [best]).

Results

The searches yielded 43 unique videos for analysis. Mean video length was 7 minutes (standard deviation [SD] = 13), mean viewership was 16 017 views (SD = 29 415), and mean total LAP-VEGaS score was 9 (SD = 3). The LAP-VEGaS criteria with the lowest mean scores were presentation of the positioning of the patient/surgical team (mean = 0.2; SD = 0.6) and presentation of the procedure outcomes (mean = 0.4; SD = 0.6). Interrater agreement was substantial (κ = 0.71).

Conclusions

LAP-VEGaS, initially developed for laparoscopic procedures, is useful for evaluating TSA instructional videos. There is an opportunity to improve the quality of these videos.

Introduction

The internet currently serves as the largest and most current resource available for medical information.1 The widespread use of publicly accessible online surgical instructional videos, in particular, has revolutionized surgical education. Many sources are available online for trainees to reference in preparation for surgery, but YouTube has been found to be the audiovisual resource most preferred by surgical trainees.2,3 There is a gap in the literature on the effectiveness of YouTube as an instructional tool for surgical trainees, as most studies focus on its utility as an educational resource for patients.4,5 Because there is no peer-review process for these videos, the quality of available surgical instructional videos varies, and misleading videos from unvetted sources can circulate.6 Given YouTube's widespread popularity and use, studies are needed to assess its quality as a surgical instructional tool.7–9

Existing studies have evaluated YouTube as a surgical instructional tool primarily in laparoscopic and general surgery.1,2,7–11 The recent development and validation of the LAParoscopic surgery Video Educational GuidelineS (LAP-VEGaS) video assessment tool offers a standardized framework for evaluating surgical laparoscopy videos. Within the laparoscopic literature, it has been shown to be useful for identifying and selecting high-quality surgical videos for acceptance for conference presentation and publication. The LAP-VEGaS video assessment tool also demonstrates a high level of internal consistency and generalizability, indicating good reliability and reproducibility of assessments among reviewers.12,13 Similar to laparoscopic procedures, nasal endoscopy in otolaryngology uses “ports,” in this case the nostrils. The endoscopic camera also produces high-quality recordable video, and surgical trainees who watch the recording can view the procedure from the same point of view as the operating surgeon. Otolaryngologists are increasingly using the endoscope for minimally invasive approaches to the skull base to treat tumors and other skull base pathology.14 Endoscopic endonasal approaches (EEAs) provide improved visualization of the surgical field via the endoscope and allow surgeons to remove skull base pathology through the nose without external incisions. The transsphenoidal approach (TSA) is the most common EEA and is used to access sellar pathology such as pituitary adenomas. The TSA has been incorporated into many otolaryngology residency training programs and is a common focus of surgical instructional videos on YouTube, making it an ideal and relevant endoscopic otolaryngologic procedure for gauging YouTube video quality.15

During the COVID-19 pandemic, virtual platforms such as YouTube became increasingly important for surgical demonstration and visualization in the training of otolaryngologists. Most hospitals restricted operating room attendance to essential personnel, which significantly disrupted surgical training. These pandemic restrictions highlighted the importance of evaluating the quality of the video repositories available to trainees, which have been used more than ever in the last year.16,17

In addition to gauging video quality, there is a strong need to evaluate the LAP-VEGaS video assessment tool across different specialties, as wider recognition and adoption of this tool may further quality improvement efforts. To date, only one other study has used this tool in otolaryngology, to evaluate the quality of surgical instructional videos for neck dissection, an open procedure.10 The purpose of this study was to evaluate the instructional quality of YouTube videos for a common endoscopic otolaryngologic procedure, TSA, using the LAP-VEGaS video evaluation tool.

Materials and Methods

Video Search and Screening

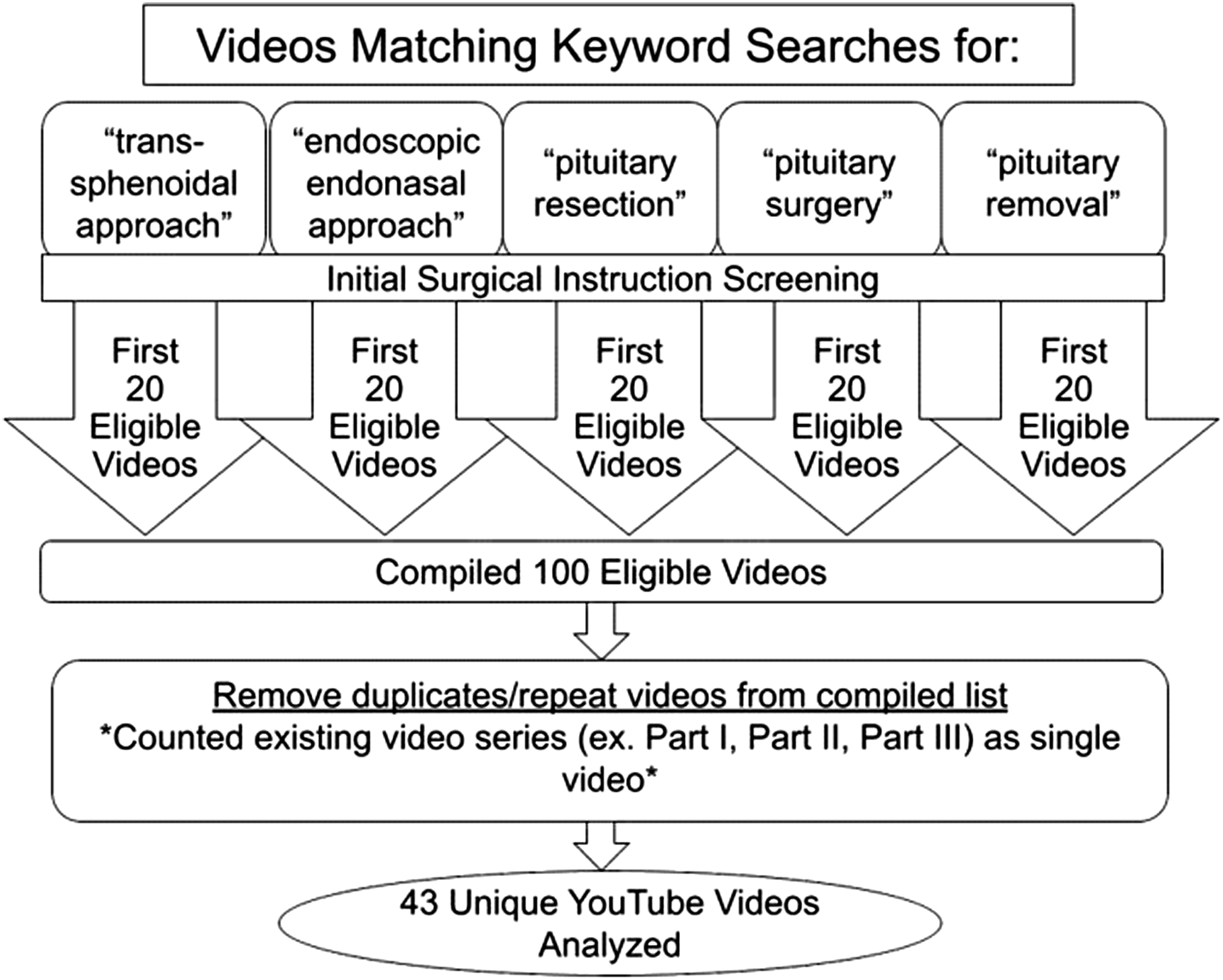

YouTube (http://www.youtube.com) was searched using the following 5 keywords: “transsphenoidal approach,” “endoscopic endonasal approach,” “pituitary resection,” “pituitary surgery,” and “pituitary removal.” Each keyword was searched in a new incognito browser window so that previous internet searches and activity would not influence the search results. The searches were carried out individually on the same day (December 11, 2019) using the default settings, without changing any filters. The results of each search were screened to select the first 20 eligible instructional videos demonstrating TSA. Videos were deemed ineligible if they were not instructional or were not relevant to the surgery. The selected videos were compiled, and duplicates were identified and removed. This process is shown in Figure 1.

Figure 1. Flow chart outlining the eligibility screening and review process for videos.
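The screening and de-duplication steps above can be sketched in Python (3.9+) as a minimal illustration only. It assumes that each search's ranked results are available as a list of video IDs and that eligibility (instructional and relevant to TSA) has already been judged by a reviewer and encoded as a predicate. The function names `first_eligible` and `compile_unique` are hypothetical, and the sketch does not query YouTube.

```python
from typing import Callable, Iterable


def first_eligible(
    ranked_ids: Iterable[str],
    is_eligible: Callable[[str], bool],
    limit: int = 20,
) -> list[str]:
    """Return the first `limit` eligible video IDs, preserving search-rank order."""
    selected: list[str] = []
    for video_id in ranked_ids:
        if is_eligible(video_id):
            selected.append(video_id)
            if len(selected) == limit:
                break  # stop once the per-keyword quota is filled
    return selected


def compile_unique(per_keyword: dict[str, list[str]]) -> list[str]:
    """Merge per-keyword selections, keeping only the first occurrence of each ID."""
    seen: set[str] = set()
    unique: list[str] = []
    for ids in per_keyword.values():
        for video_id in ids:
            if video_id not in seen:
                seen.add(video_id)
                unique.append(video_id)
    return unique


# Toy example with made-up IDs (not the study's data); "v2" stands in for an ineligible video.
ineligible = {"v2"}
selections = {
    "transsphenoidal approach": first_eligible(["v1", "v2", "v3"], lambda v: v not in ineligible),
    "pituitary surgery": first_eligible(["v3", "v4"], lambda v: v not in ineligible),
}
print(compile_unique(selections))  # ['v1', 'v3', 'v4']
```

With 5 keywords and a quota of 20 per keyword, at most 100 videos enter de-duplication; the study reports 43 unique videos after this step.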

Video Evaluation

Video characteristics were recorded, including upload date, duration, viewership, comments, likes, and dislikes. Two fellowship-trained rhinologists (VSL and SAJ) independently reviewed and scored the unduplicated videos using the LAP-VEGaS video assessment tool.13 This tool comprises 9 criteria, each rated as not present (0), partially present (1), or completely present (2). The total score is the sum of the 9 criterion scores and ranges from 0 to 18.
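The scoring rule described above can be expressed as a short function. This is a minimal Python sketch; `lap_vegas_total` is a hypothetical helper, and it checks only the structure of the score (9 criteria, each rated 0, 1, or 2), not the content of the criteria themselves.

```python
# Rating levels as described for the LAP-VEGaS tool.
NOT_PRESENT, PARTIALLY_PRESENT, COMPLETELY_PRESENT = 0, 1, 2
ALLOWED_RATINGS = {NOT_PRESENT, PARTIALLY_PRESENT, COMPLETELY_PRESENT}
NUM_CRITERIA = 9


def lap_vegas_total(ratings: list[int]) -> int:
    """Sum 9 criterion ratings (each 0, 1, or 2) into a total score from 0 to 18."""
    if len(ratings) != NUM_CRITERIA:
        raise ValueError(f"expected {NUM_CRITERIA} ratings, got {len(ratings)}")
    for rating in ratings:
        if rating not in ALLOWED_RATINGS:
            raise ValueError(f"invalid rating {rating!r}; must be 0, 1, or 2")
    return sum(ratings)


# Hypothetical ratings for one video: 5 criteria complete, 2 partial, 2 absent.
example = [2, 2, 2, 2, 2, 1, 1, 0, 0]
print(lap_vegas_total(example))  # 12
```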

Statistical Analysis

Summary statistics were calculated for descriptive video characteristics and for individual criterion and total LAP-VEGaS scores. Cohen’s kappa coefficient (κ) was calculated to assess interrater agreement. Pearson’s correlation coefficients were calculated for the relationships between the total LAP-VEGaS score and view count, number of comments, and like-to-dislike ratio. Statistical analyses were performed using Microsoft Excel 2019 (Microsoft, Redmond, WA).
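The study ran these analyses in Microsoft Excel. As an illustration only, the two coefficients can be computed with the Python standard library as sketched below. The sketch assumes unweighted Cohen's kappa over paired categorical ratings (the text does not state whether ratings were paired per criterion or per video) and omits P values; `cohens_kappa` and `pearson_r` are hypothetical helper names. Kappa is computed as κ = (p_o − p_e) / (1 − p_e), where p_o is observed agreement and p_e is agreement expected by chance from each rater's marginal proportions.

```python
import math
from collections import Counter
from collections.abc import Sequence


def cohens_kappa(rater_a: Sequence[int], rater_b: Sequence[int]) -> float:
    """Unweighted Cohen's kappa: (p_o - p_e) / (1 - p_e)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and paired")
    n = len(rater_a)
    # Observed agreement: fraction of items given the same rating by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: sum over categories of the product of each rater's marginal proportion.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    categories = set(counts_a) | set(counts_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1 - p_e)


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's correlation coefficient between two equal-length samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired samples of length >= 2")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)
    if s_xx == 0 or s_yy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return s_xy / math.sqrt(s_xx * s_yy)


# Toy inputs (not study data).
print(cohens_kappa([0, 1, 2, 2], [0, 1, 2, 1]))
print(pearson_r([1, 2, 3], [2, 4, 6]))  # 1.0
```

A spreadsheet gives the same Pearson coefficient via its built-in correlation function; the hand-written version is shown only to make each term of the formula explicit.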

Results

Descriptive Video Characteristics

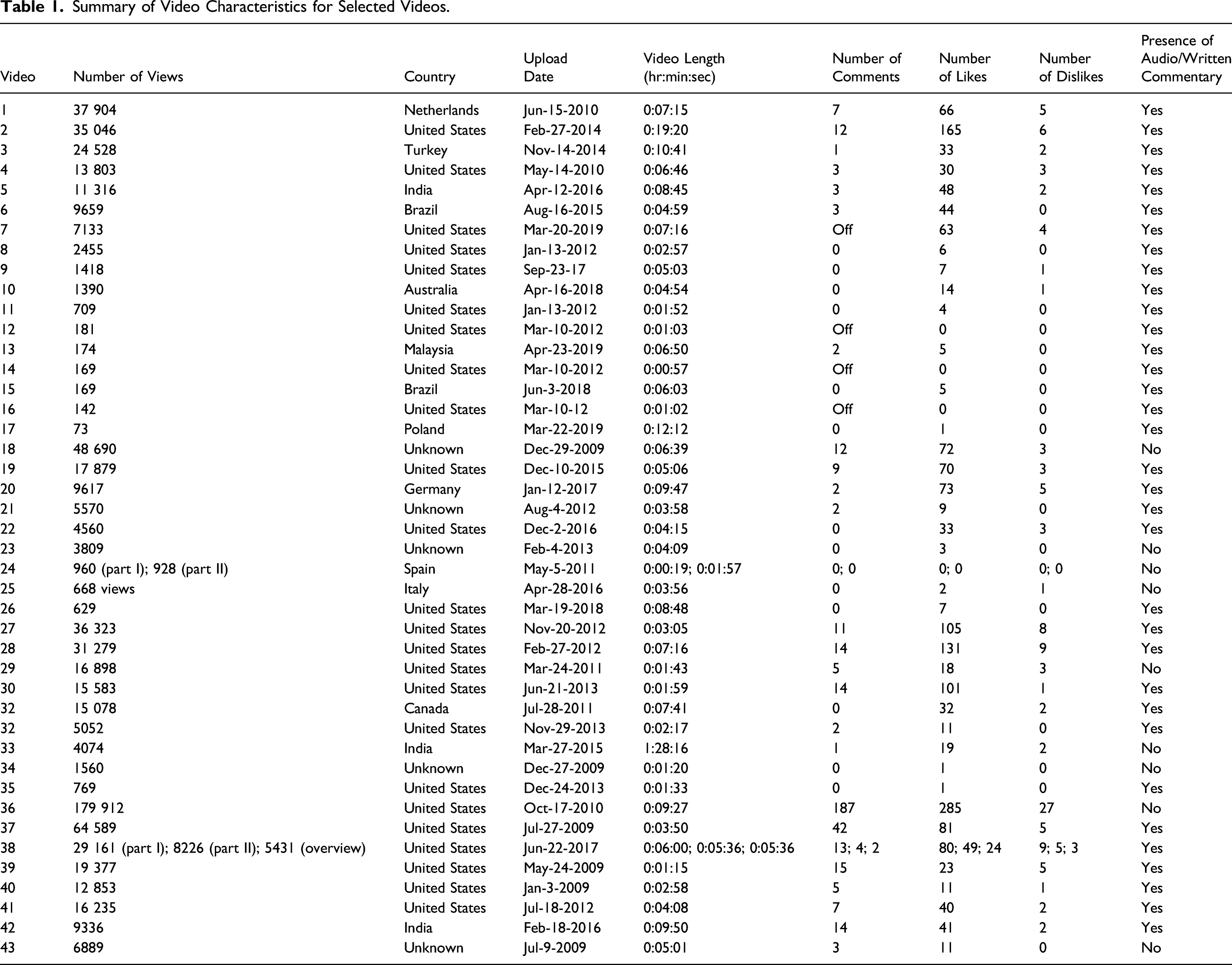

Summary of Video Characteristics for Selected Videos.

Video Quality Assessment Using the LAP-VEGaS Tool

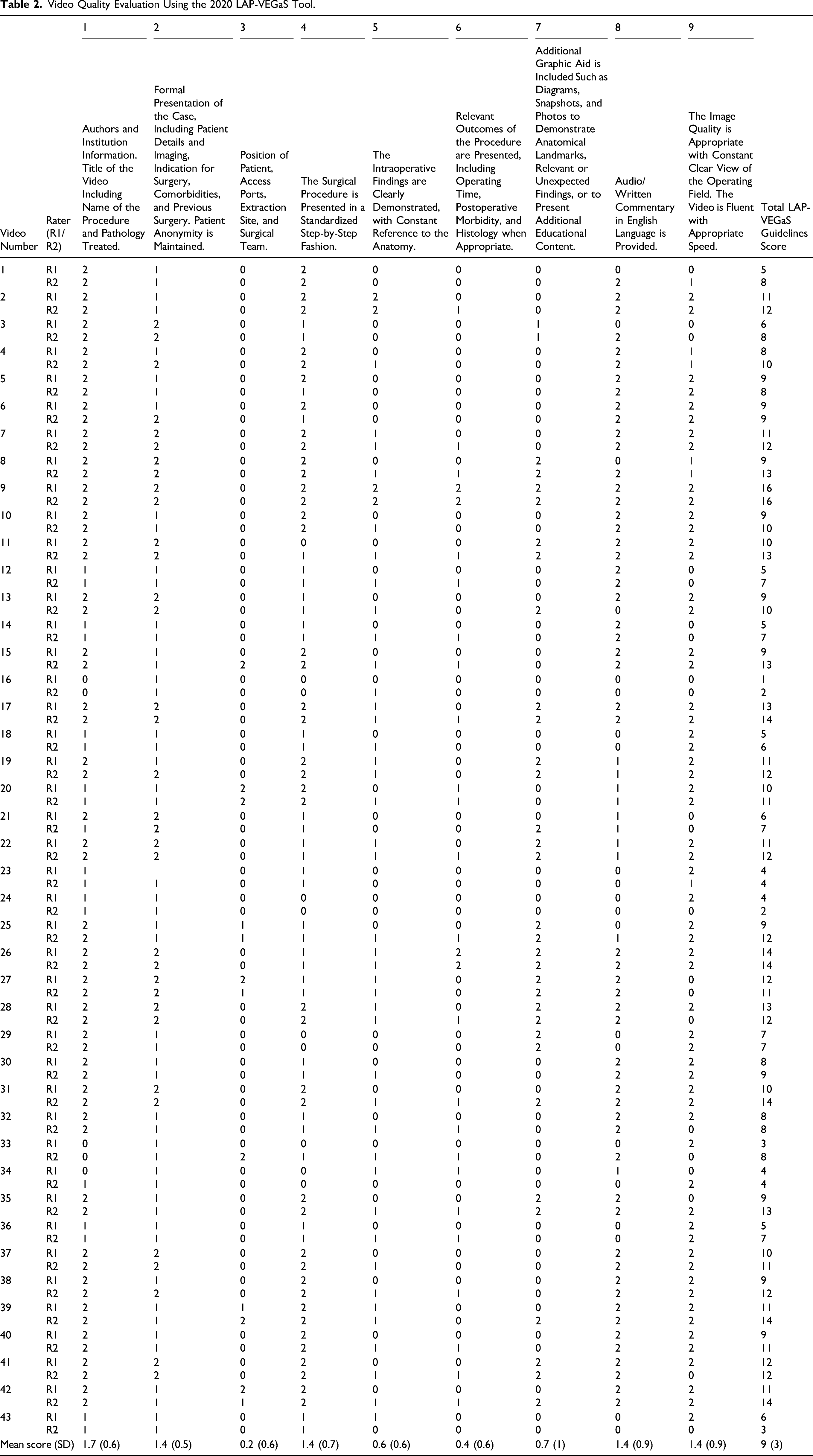

Video Quality Evaluation Using the 2020 LAP-VEGaS Tool.

Looking at the criteria individually, 5 criteria had mean scores above 1 (scale of 0 to 2). The presence of author/institution information and a video title including the name of the procedure and pathology treated had a mean score of 1.7 (SD = 0.6). Formal presentation of the case, presentation of the surgery in a standardized step-by-step fashion, complete audio/written commentary in English, and appropriate video speed and imaging quality all received mean scores of 1.4 (SD = 0.5, 0.7, 0.9, and 0.9, respectively). Four criteria had mean scores below 1: presentation of patient and surgical team positioning (0.2; SD = 0.6); clear demonstration of intraoperative findings (0.6; SD = 0.6); presentation of relevant procedure outcomes (0.4; SD = 0.6); and presentation of additional graphic aids, such as diagrams or photos demonstrating anatomical landmarks and/or relevant or unexpected findings (0.7; SD = 1).

The overall quality of each video was also rated on the LAP-VEGaS point scale (0-6: low; 7-12: medium; 13-18: high). Only 10 videos scored 13 points or more (videos 9, 11, 15, 17, 26, 28, 31, 35, 39, and 42). Interrater agreement was substantial (κ = 0.71). Furthermore, the total score was not correlated with view count (r = −0.087, P = .57), number of comments (r = −0.15, P = .35), or like-to-dislike ratio (r = 0.089, P = .57).
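The banding of total scores can be written as a small lookup. This is a minimal Python sketch, assuming integer totals on the 0 to 18 scale; `quality_band` is a hypothetical name, and the example totals are invented rather than taken from the study.

```python
from collections import Counter


def quality_band(total: int) -> str:
    """Map a LAP-VEGaS total (0-18) to the low/medium/high bands used in this study."""
    if not 0 <= total <= 18:
        raise ValueError(f"total must be between 0 and 18, got {total}")
    if total <= 6:
        return "low"
    if total <= 12:
        return "medium"
    return "high"


# Invented totals for illustration only; count how many videos fall in each band.
totals = [5, 9, 13, 12, 18, 7]
print(Counter(quality_band(t) for t in totals))
```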

Discussion

The results of this study indicate an opportunity to improve the quality of surgical instructional videos for TSAs on YouTube. There was no correlation between total LAP-VEGaS quality scores and video characteristics, specifically view count, number of comments, or like-to-dislike ratio. Thus, if viewers use any of these metrics, such as view count, to select the videos they watch, lower quality videos may be achieving more widespread viewership. The LAP-VEGaS video assessment tool provides useful guidelines that help creators of surgical instructional videos produce quality content. Incorporating this tool into a video-vetting process on publicly available platforms such as YouTube, analogous to the peer-review process used by journals, may improve quality.

Video-filtering algorithms that incorporate quality scores may also be used to promote higher quality videos to viewers. Because the exact YouTube search algorithm is unknown, we are unable to recommend how to filter search results to yield higher quality videos or increase their viewership. No commonalities were noted on review of the popular videos; video popularity appeared random. The best way to ensure that higher LAP-VEGaS scores correlate with higher video popularity would be for YouTube and similar video search engines to incorporate a quality check that screens content uploaded to their platforms. Alternatively, future efforts may aim to establish separate video repositories, maintained by the respective professional organizations, with quality control checks prior to upload.

Because a recordable video feed is available directly from the endoscope, EEAs such as TSAs are conducive to the creation of instructional video media. Although most currently available videos provided author information, presented the case formally and the surgery step by step, and had audio/written commentary in English and appropriate video speed and imaging quality, they commonly lacked key LAP-VEGaS criteria. One largely missing criterion was positioning, which is particularly critical in TSAs and in EEAs in general: a variety of setup configurations are possible, ergonomics is very important for mitigating surgeon fatigue in longer cases, and patient positioning plays a role in hemostasis and overall endoscopic visualization. Procedure outcomes were also not commonly presented; postoperative imaging, in particular, can be informative when juxtaposing the techniques used with the extent of tumor resection. Videos also did not regularly include additional diagrams or photos demonstrating anatomical landmarks and/or relevant or unexpected findings, which can increase their informative value for viewers of varying skill levels.

This study has limitations. First, only TSA pituitary tumor removal videos available on YouTube were analyzed. Although surgical trainees report YouTube as their most preferred audiovisual resource, there are many other resources, including free and paid video subscriptions, that warrant future analysis.2,3 Second, our search strategy carries the potential for selection bias with regard to the videos available on YouTube. Third, the LAP-VEGaS tool used to evaluate endoscopic videos in this study was initially designed to evaluate laparoscopic videos submitted for academic presentation, although both are similar video-based procedures.

As a result of the COVID-19 pandemic, there has been growing urgency to create a high-quality online curriculum for surgical trainees. The nasal cavity, in particular, is an area of high viral load, and EEAs may pose a higher risk of transmission.18,19 Because of these increased health risks, hospitals and medical colleges in the United States limited trainee involvement in procedures during the initial severe stages of the pandemic. During that time, surgical trainees' learning was substantially supplemented by virtual means, such as surgical instructional videos.20–22 This highlighted the value of remote learning as a resource for trainees, now and in the future, as it can be done from any location. Improving the quality of surgical instructional videos for these types of procedures is therefore especially beneficial for training otolaryngologists.

The developers of the LAP-VEGaS video assessment tool, originally intended for evaluating laparoscopic procedures in general surgery, have noted the need to evaluate their tool in all surgical specialties.13 This study is the first to apply the LAP-VEGaS video assessment tool to endoscopic procedures in otolaryngology, highlighting overall areas for improvement in these videos on the YouTube platform. Other online video publications, including the Journal of Visualized Surgery, Journal of Medical Insight, Journal of Visualized Experiments, and CSurgeries, as well as video-sharing websites such as Vimeo, are also used to disseminate surgical videos in otolaryngology. Evaluating and consolidating surgical videos using the LAP-VEGaS video assessment tool as an efficient, standardized, peer-review-type screening method would create a unique, cost-effective, and easily accessible online library. This consolidation process was recently modeled by the University of Pittsburgh Division of Surgical Oncology.23 Their library consisted of 929 laparoscopic and robotic procedure videos from existing video recordings. They selected 110 videos, edited them using key steps with time metrics, and uploaded them for trainees to review online in preparation for upcoming operations. This model for creating a video library could be adapted to otolaryngology. Currently, the American Academy of Otolaryngology – Head and Neck Surgery provides free access to a limited number of surgical instructional videos, which are uploaded to its YouTube account.16,17 The American Rhinologic Society also has a more comprehensive video repository for its members. These video libraries provide a ready foundation for developing a high-quality, standardized source of surgical videos in endoscopic otolaryngology.

Conclusions

Because of the COVID-19 pandemic, surgical instructional videos have become increasingly relevant and are particularly important for endoscopic procedures in otolaryngology, which involve areas of high viral load. The results of this study indicate an opportunity to improve the quality of surgical instructional videos for TSAs on YouTube and highlight missing components that can be useful to viewers. This study also supports the usability and reliability of the LAP-VEGaS video evaluation tool for endoscopic procedures in otolaryngology. Such a tool provides an efficient, standardized, peer-review-type screening method that can be readily incorporated into existing video platforms to improve content quality.

Footnotes

Acknowledgments

The authors thank Kevin O’Grady for assistance with institutional regulatory activities related to this study.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.