Abstract

The first recorded myringotomy was in 1649. Astley Cooper presented 2 papers to the Royal Society, in 1800 and 1801, based on his observations that myringotomy could improve hearing. Widespread inappropriate use of the procedure followed, with no benefit to patients; this led to it falling from favor for many decades. Hermann Schwartze reintroduced myringotomy later in the 19th century. It had been realized earlier that the tympanic membrane heals spontaneously, and much experimentation took place in attempting to keep the perforation open. The first described grommet was made of gold foil. Other materials were tried, including Politzer’s attempts with rubber. Armstrong’s vinyl tube effectively reintroduced grommets into current practice in the 20th century. There have been many eponymous variants, but the underlying principle of creating a perforation and maintaining it with a ventilation tube has remained unchanged. Recent studies have cast doubt over the long-term benefits of grommet insertion; is this the end of the third era?

A grommet in otolaryngological terms is defined by the Oxford English Dictionary as a “tube surgically implanted in the eardrum to drain fluid from the middle ear.” Grommet or grummet is derived from the obsolete French gourmer “to curb”; the original meaning described a circle of rope used as a fastening. 1 Today the insertion of grommets into the tympanic membrane is one of the commonest operations performed by otolaryngologists and is the standard operative treatment (but not cure) for otitis media with effusion (OME) or “glue ear,” although other indications are also recognized.

The First Era of Myringotomy

Otitis media with effusion is not a new problem, although the term “glue ear” was not coined in the literature until 1960. 2 The condition was first described by Hippocrates and Aristotle, among others of their day. In 400 BC, the Hippocratic school described how the middle ear became filled with mucus and suggested that the condition could be relieved by incising the eardrum, causing “a flux of humours.” 3 The first formal myringotomy was not reported until 1649, when Jean Riolan the Younger, a French anatomist and pathologist, described an improvement in hearing following intentional laceration of the tympanic membrane with an ear spoon. 4 He hypothesized that artificial perforations of the tympanic membrane might be a cure for congenital deafness.

During the 17th and 18th centuries, many now-famous names attempted to explain the relationship between the tympanic membrane and hearing. The majority of their experiments were carried out on dogs. Thomas Willis, the English physician after whom the Circle of Willis is named, found that dogs did not lose their hearing after their eardrums were perforated. He was contradicted by his Italian contemporary Antonio Maria Valsalva, remembered for his eponymous maneuver.

Until this point, no physician had attempted myringotomy in humans. William Cheselden, having completed animal studies at St Thomas’s Hospital where he was a surgeon, almost succeeded in his quest for human test subjects. He obtained a Royal Pardon for a criminal, on condition that the man underwent a myringotomy. A public uproar ensued, forcing him to cancel the proposed procedure. It later transpired that the criminal in question was a cousin of Cheselden. Furthermore, the partly deaf mistress of George II, Lady Suffolk, who was hoping to be cured if the findings were promising, had obtained the Pardon! 5

In 1748, Julius Busson became the first person to recommend perforating the tympanic membrane if pus was present medial to it. The French “strolling quack” Eli began undertaking the procedure for deafness in 1760. 6 Peter Degravers carried out the procedure in Edinburgh and described it in 1788: “I incised the membrane tympani of the right ear with a sharp, long, but small lancet. I left the patient in that state for some time, afterwards observing that it had reunited…I incised again the membrane tympani of the right ear but crucially; and on removing the part of the membrane incised, I discovered some of the ossiculae which I had brought out.” 7 We can only assume that the patient’s hearing did not improve after this somewhat overenthusiastic myringotomy.

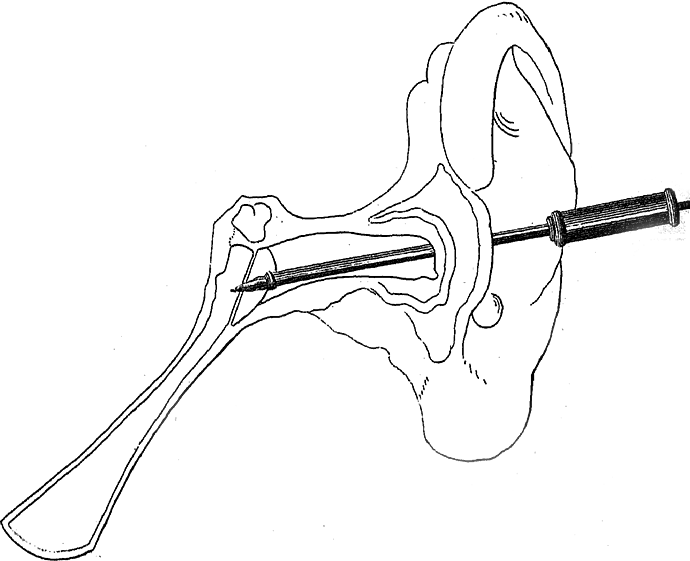

Although not the first to perform the procedure, Sir Astley Paston Cooper, surgeon to Guy’s Hospital, can certainly be acknowledged as the first to outline clear indications for myringotomy (Figure 1). The subject was first brought to his attention by his friend Sir Everard Home, surgeon to St George’s Hospital and son-in-law of John Hunter. Both Cooper and Home were students of Hunter. Home was one of the first to describe the radial fibers of the tympanic membrane and presented his findings to the Royal Society in London in 1799. 8 Following on from this, Cooper delivered a paper to the Royal Society in 1800, showing that tympanic membrane perforation did not always result in a loss of hearing. 9 A second paper, presented at the same venue in 1801, described the return of hearing after myringotomy, “the removal of a Particular Species of Deafness.” 10 He used a trocar concealed within a cannula to create a perforation (Figure 2) and recommended the anteroinferior quadrant as the appropriate site in order to preserve the ossicles. The procedure was, however, performed blind. His sole indication for the procedure was deafness due to Eustachian tube obstruction, and he was adamant that bone conduction must be present in these patients. He assessed this by placing a watch on their incisors or mastoid and finding that they could hear it more clearly than when it was held near the auricle. For these observations, at the age of 34, he was awarded the Copley Medal, the highest honor bestowed by the Royal Society. 11

Sir Astley Paston Cooper.

Astley Cooper’s trocar and cannula for myringotomy. Reproduced from Phil Trans Roy Soc (1801).

In part due to Cooper’s enthusiasm, myringotomy became a very popular procedure around this time. In 1804, Christian Michaelis, a professor of anatomy and surgery from Marburg, performed tympanic membrane perforation in 63 patients. He recorded improved hearing in one case in four, 12 but it is not clear whether he applied selection criteria as strict as Cooper’s. Jean Marie Gaspard Itard also favored myringotomy for Eustachian tube obstruction and published his recommendations in 1821. 13 Unfortunately, Cooper’s strict indications were ignored by the majority of those performing the procedure, many of whom had no medical training. It was hailed as a “cure” for all forms of deafness. This injudicious overuse inevitably led to its falling from favor, as the majority of patients gained no benefit and many presumably lost further hearing as a result of blind tympanic membrane perforation. In addition, myringotomy had its opponents, such as the German otologist Kramer, who disliked the procedure because the openings healed too quickly. 14 This was another reason for its loss of popularity.

The Second Era

By the mid-19th century, few surgeons were still performing myringotomy. Joseph Toynbee of St Mary’s Hospital in London was one; his assistant James Hinton another. In an interesting and well-documented case in 1853, Toynbee was consulted by a man whose hearing loss had been cured as a child when Astley Cooper performed myringotomies in both of his ears. The deafness had returned in adulthood following a heavy cold and persisted except for a brief period following a sneeze. Toynbee’s initial prescriptions of leeches, blisters and mercury were not successful, and he therefore proceeded to perforate the eardrums. He reported an “instantaneous return of hearing” which lasted for just a few days before the deafness “slowly returned.” He then attempted to maintain an opening by turning down large tympanic membrane flaps, but these also healed. 15 In Dublin, Sir William Wilde (father of the famous Oscar) was using a sickle knife to incise the anteroinferior quadrant, followed by silver nitrate cautery to the perforation edges in a bid to keep it open. 16 Although he continued to perform myringotomy, Wilde was aware of its problems and discussed them in his textbook, the first English book on ear surgery: “There has not been, perhaps, in the whole history of medicine during the present Century, a discovery to which so much praise was at the time awarded, but subsequent investigation and experience have, to say the least of it, so much disparaged.” 17

Myringotomy was reintroduced into popular otological practice in the latter half of the 19th century, predominantly by Schwartze and Politzer. 18 Hermann Schwartze, an otologist at the University of Halle in Germany, had previously recognized its value in selected cases and in 1868 published his specific indications for the procedure. He advised that it be used only for fluid collections in the middle ear, particularly empyema and catarrh in children, and for acute inflammation of the tympanic membrane with pain. 19 His indications are still relevant today. At that time, he recognized that the main problems were how to extract the fluid from the middle ear cavity and how to keep the perforation open.

Schwartze and his contemporaries tried instilling medication through the Eustachian tube, as well as down the external canal, in a bid to liquefy the middle ear fluid and enable its removal. Adam Politzer is credited with the first use of suction to remove fluid. 20 The “Father of Modern Scientific Otology” was a professor in Vienna, where in 1873 he established the first ear clinic in the world together with his coprofessor Josef Gruber. 21 Although it was becoming fashionable to irrigate the middle ear, Politzer avoided this, claiming it led to higher postoperative infection rates. 20 He is also thought to have designed the first ventilation tube, in an attempt to overcome the problem of perforation closure. 22

It had already been noted that one of the drawbacks of myringotomy was that the incision healed spontaneously, and often very quickly; this had been another reason for its earlier decline in popularity. Further evidence came from Jean Pierre Bonnafonte, who documented a case in which 25 myringotomies were performed on the same patient over a 3-year period, with no opening persisting for more than a few months. 23 One of the earliest attempts to prevent this rapid healing was described by Antoine Saissy in 1829, who reported using oiled catgut string to keep the perforation open. 24 In Italy in the late 18th century, Monteggio attempted to maintain the opening with cautery. 6 At around the same time, the German ophthalmologist Himly devised a larger trocar for the same reason. The race was on to discover a technique to maintain the perforation.

Schwartze, Politzer and their contemporaries continued to search for a successful method of maintaining the tympanic membrane perforation and were essentially divided into 2 schools of thought. The first group, led by Philippeaux and Gruber, removed sections of the eardrum, progressing from larger myringotomies to wedge-shaped excisions, which they termed “myringodectomy”; however, this did not leave a reliable permanent opening. In some cases, the annulus or part of the malleus was also removed. Painting the edges of the wound with sulfuric acid was also tried, unsuccessfully. 25 It must be remembered that these techniques were all attempted without anesthesia, an eye-watering thought.

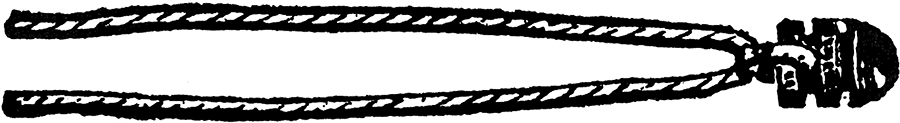

The second group, with Politzer at its helm, searched for a foreign body that would sit within the perforation and keep it open. Saissy’s catgut did not work, and nor did lead wire or whalebone, both of which were subsequently tried by others. In 1845, Martel Frank’s book described the use of a small gold tube, which seemed to have more success, albeit still temporary. 26 Politzer first described his rubber grommet in 1868. It had 3 flanges and 2 grooves to allow it to sit across the tympanic membrane, as well as a silk thread to prevent it falling into the middle ear, 27 and bears a definite resemblance to today’s grommets (Figure 3). This was later adapted by Dalby, but it still remained in place for only a few months before extruding. He noted that “it will sometimes be necessary to insert a fresh one, the first one more often than not slips out after a short time.” 27 Voltolini developed a gold ring in 1874, and later an aluminium version. 28 He incised anterior and posterior to the malleus and placed the ring around the handle, but to no avail.

Politzer’s grommet. Reproduced from Diseases and Injuries of the Ear (Dalby, 1893).

Despite all their efforts, myringotomy and grommet insertion again fell out of favor; the second era drew to a close at the end of the 19th century. Although the indications were now better appreciated, and the procedure was less abused, it was still only a temporary cure despite the introduction of primitive grommets. Added to this was a high complication rate, mainly postoperative infection, which is not surprising given the practice of injecting various fluids into the middle ear in an attempt to liquefy the viscous fluid, as well as foreign body insertion. This was the preantibiotic era and, untreated, these infections had potentially serious outcomes. By this time, otolaryngologists’ attention had moved to the nasopharynx and to the new “cure-all” operation of adenotonsillectomy. In his 1839 paper entitled “Deafness cured by cleaning out the passages from the throat to the ear,” Yearsley noted that tonsillectomy appeared to convey a benefit to hearing in some cases. 29 He pursued the theory that deafness was related to Eustachian tube obstruction and was particularly concerned with the upper poles of enlarged tonsils, 30 although it was not until 1868 that Wilhelm Meyer of Copenhagen described this lymphoid tissue as the adenoids. 6 Even Politzer abandoned his quest to maintain a tympanic membrane perforation in favor of insufflating the Eustachian tube with air, the technique which today still bears his name. A few remained faithful to myringotomy, including Sir William Milligan from Manchester, who advocated its use in combination with adenoidectomy in 1925, while acknowledging that “chronic catarrhal otitis media” was “the bete noir of otologists…an incurable disease.” 31 He was largely ignored, and a new treatment was proposed: irradiating hyperplastic tonsils and adenoids. 32

The Third Era

The third era of myringotomy and grommets followed the Second World War. The first antibiotics had arrived, and postoperative infection was therefore treatable. The introduction of antibiotics also allowed acute otitis media to be treated, and the incidence of acute mastoiditis decreased dramatically. This in turn reduced the number of cortical mastoidectomies performed, and otologists would perhaps have had more time to consider other, less serious, conditions. 33 It has been suggested that, in the first half of the 20th century, the otologist was “treating the sequelae of serous otitis rather than preventing the sequelae from occurring.” 6 Perhaps because of this new awareness, the reported prevalence of “secretory otitis media” increased dramatically. 34 Indeed, some felt that a new disease had arrived, rather than simply increased recognition of a condition that had been documented for centuries. 35 Interest in the problem (at least in adults) may have been triggered during World War II. Not only were adults assessed for their fitness to serve in the armed forces, but “there were so many ruptured membrana tympani, an unusual opportunity was afforded for inspection of middle ear mucosas…A small number, apparently without infection, were thick and moist.” 34

Perhaps because of the apparent increase in the number of cases, the then-current practice of conservative management was criticized. 34 Various authors advocated myringotomy and published very low infection rates. 36 The old problem of perforation closure was still a concern, as documented by Hunt in 1948, who performed over 80 myringotomies in one patient’s ear. 37

In 1954, the American Beverley Armstrong overcame this problem with his allegedly “new” treatment: a ventilation tube. 38 Although not actually an original idea, his was the first modern grommet to be described, and it was made of plastic tubing. He recommended removal after 4 weeks to allow the perforation to close, but the tube was found to remain in situ for much longer if left alone. He attributed its success to a beveled end, which helped to secure the tube within the opening. In 1952, he advised creating a notch in the tube to engage the margin of the tympanic membrane. By 1959, he had adapted this further, producing the first flanged tube moulded of polypropylene. 39 In 1965, he designed a Teflon tube with a sloping flange which was easier to insert through a smaller incision. Not content with its success, he patented the “Armstrong V” in 1981. This tube was designed “for easy, precise insertion and to accommodate the anatomy.” Again moulded of Teflon, the flange has an “entry tab” for easy insertion through the myringotomy, and the tube comes complete with a stainless steel insertion instrument that fits onto a tab on its lateral end. Interestingly, Armstrong advised that the myringotomy be made in the anterosuperior quadrant of the drum immediately adjacent to the fibrous annulus. He believed that an incision at any other site would lead to premature extrusion of the tube, whereas “the right tube in the right place” would remain in situ for “two years, and sometimes for six or more”; the “right tube” was one of his own designs. 39

Myringotomy became a standard treatment for glue ear, with or without adenoidectomy, and a survey of UK ENT surgeons in 1977 indicated that 50% inserted a grommet following myringotomy. 40 Ninety-one percent of American otolaryngologists found ventilating tubes to be more effective than antibiotics in preventing acute otitis media, and 99.4% of those surveyed were using grommets regularly. 41 In fact, by 1981, not inserting ventilation tubes was said to be “cruel, insensitive and shameful,” 42 and one otolaryngologist in the United States felt that “(Tubes) damn near put us out of business” 41 ; the third era of grommets continued.

Today, however, we may be looking at the end of that era, or at least a change in practice. Although ventilating tubes are still widely used in the treatment of glue ear, the Trial of Alternative Regimens in Glue Ear Treatment found no overall benefit to hearing and suggested that adenoidectomy is more effective. 43 A 2005 Cochrane review of grommets in children with OME concluded that their “benefits appear small.” 44 Whether we will witness a decline in grommet use for the third time remains to be seen.

What may well increase, however, is the use of grommets in the delivery of intratympanic treatment, first described in 1956 by Schuknecht. 45 He injected streptomycin “through small plastic tubing introduced into the middle ear through a small knife wound in the annulus tympanicus,” as a treatment for Menière’s disease. 46 Intratympanic instillation of gentamicin through a grommet is an option for this condition. 47 Other medications are beginning to be administered by this route, for example, steroids in the treatment of sudden sensorineural hearing loss. 48 As in Politzer’s day, new methods of maintaining a perforation continue to be described. Laser myringotomies have been shown to be less effective than standard grommets in the treatment of OME, 49 whereas radiofrequency-assisted myringotomy appears to delay closure. This effect is enhanced if mitomycin C is applied to the perforation edges. 50 New grommets are constantly being designed and marketed, and studies assessing which grommet is best continue to appear in the literature. 51

There have been 3 eras of myringotomy and grommets, each with its own successes, problems, and new ideas. These eras span 3 centuries, yet physicians have still not succeeded in treating the underlying cause of glue ear: Eustachian tube dysfunction. Politzerization has had a recent resurgence in the form of Eustachian tube balloon dilatation, but long-term outcome data are lacking and placebo-controlled trials are still needed. 52

Footnotes

Authors’ Note

This article was first published by Cambridge University Press as: Rimmer J, Giddings C, and Weir N. History of myringotomy and grommets. The Journal of Laryngology & Otology, 2007;121(10):911-916 © 2007 JLO (1984) Limited. Reprinted (with minor amendments) with permission.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.