Abstract

Power distribution networks are growing more complex and require smart technologies for efficient maintenance and cybersecurity risk management. Existing approaches struggle to scale predictive models across different grid configurations, to manage massive amounts of sensor data, and to integrate diverse data sources. Therefore, this study presents an artificial intelligence-based dynamic grid optimization analysis (AI-DGOA) method to address these issues. By dynamically analyzing real-time data, AI-DGOA optimizes grid performance, allowing for proactive decision-making and lowering the risk of power supply disruptions. We design fault detection and classification algorithms based on machine learning ensemble models. The proposed algorithms improve predictive maintenance, reducing maintenance expenses and downtime by detecting impending equipment failures and grid inefficiencies. In addition, AI-DGOA ensures operational resilience and data security by incorporating real-time cybersecurity risk assessment to protect power distribution networks from evolving cyber threats. The simulation analysis and results show that AI-DGOA effectively predicts grid failures, optimizes load distribution, and predicts cybersecurity vulnerabilities. The proposed method reduces cyber risks, schedules maintenance more efficiently, and increases grid dependability. This study presents the role of artificial intelligence (AI) in improving power distribution networks through better cybersecurity risk management and predictive maintenance, leading to safer and more efficient power grids. Moreover, the extensive analysis shows that the proposed method outperforms the baseline methods in networks where secured power distribution is a key objective.

Keywords

Introduction

Conventional approaches to evaluating the cybersecurity risks posed to power distribution networks (PDNs) and carrying out AI-enhanced predictive maintenance face several difficulties (Thapa and Arjunan, 2024). Scheduled inspections are complex and offer no assurance of outcomes; although these methods used prior performance statistics, they lacked real-time data (Grunt and Potejko, 2024). Thus, they may be unable to foresee equipment failure. Standard PDN cybersecurity risk assessments involve manual threat monitoring and monthly vulnerability evaluations (Onih et al., 2024). These solutions failed due to network complexity and cyber risks. Static threat models and security solutions were used, even though new vulnerabilities could make them outdated (Gong et al., 2024). Integration of maintenance data with security intelligence has been hindered by the compartmentalization of predictive maintenance and cybersecurity operations (Maddireddy and Maddireddy, 2021). This fragmentation made a thorough risk profile and efficient event response more challenging. AI integration makes real-time monitoring, adaptive threat detection, and improved data analytics possible (Iqbal et al., 2023). Switching from manual to AI-driven solutions requires overcoming data quality difficulties, system incompatibilities (Zeeshan et al., 2023), and the need for sophisticated algorithms that can handle complex and changing scenarios (Kalogiannidis et al., 2024).

Deploying AI-assisted predictive maintenance encounters many problems (Arif et al., 2024). Integrating AI technologies with existing infrastructure may be a technological barrier to adoption (Hobiny et al., 2022). Data management is another significant difficulty because predictive model accuracy depends on the quantity and quality of data from multiple sources (Radanliev et al., 2020). Lack of knowledge about AI's advantages and disadvantages in maintenance worsens this issue (Abouelregal et al., 2023). Data privacy and security are important concerns given the growing use and sharing of sensitive operational data across networks (Wen et al., 2024).

On the other hand, evaluating these networks’ cybersecurity risks presents unique issues (Arinze et al., 2024). Cybercriminals use artificial intelligence (AI) to launch extortion and ransomware attacks on critical infrastructure (Badshah et al., 2025). Malicious actors can also attack AI systems themselves, manipulating them into producing unreliable outcomes (Maddireddy and Maddireddy, 2022). A lack of knowledge about predictive maintenance and cybersecurity requirements exacerbates these issues. Businesses must substantially increase their knowledge base and implement strict security measures. Recent events have highlighted the necessity for comprehensive PDN cybersecurity and predictive maintenance frameworks (Reddy, 2021). Such frameworks are necessary for achieving the highest possible level of risk management (RM) and operational efficiency (Badshah et al., 2024).

In addition, the PDNs are being transformed by cybersecurity risk assessment and AI-enhanced predictive maintenance. Machine learning (ML) algorithms allow utilities to predict equipment failure, reducing unplanned outages and enabling proactive maintenance. AI-powered cybersecurity risk assessment technologies protect critical infrastructure for utilities. These technologies search massive data sets for weaknesses and attacks; modern technologies make electricity distribution networks more efficient, reliable, and secure, improving grid resilience (Alshehri et al., 2024).

The above discussion motivates the design of an intelligent framework that integrates cybersecurity risk assessment with AI-enabled predictive maintenance. Using AI and analytics, utilities can anticipate equipment failures, boost their defenses against emerging cyber threats, and keep the lights on in communities. Therefore, this study presents artificial intelligence-based dynamic grid optimization analysis (AI-DGOA) to overcome these challenges and enhance the resilience, reliability, and security of PDNs. The key contributions of this study are discussed below.

This study introduces an AI-DGOA framework to improve the PDN. It addresses the limitations of conventional static models and manual assessments by integrating real-time data analytics and adaptive algorithms to enhance cybersecurity risk assessment, predictive maintenance, and grid performance. The methodology improves operational resilience, predicts equipment failures, and dynamically examines real-time data. We design an algorithm based on ML ensemble models (EMs) to predict equipment failure and system inefficiencies, thereby improving grid reliability, reducing maintenance costs, and enhancing equipment uptime. The AI-DGOA includes a real-time cybersecurity risk assessment to defend PDNs from evolving cyber threats. The objective is to evaluate AI-DGOA's cybersecurity detection and remediation capabilities to improve data protection and operational resilience. This integration bridges a critical gap in existing cybersecurity frameworks, contributing a new standard for adaptive threat defense in energy systems. We conducted extensive simulations to rigorously analyze the efficiency of the AI-DGOA method.

The remaining sections of this paper are structured as follows: The “Literature work” section reviews the related literature. The problem formulation is discussed in the “Problem formulation” section. The “Proposed AI-DGOA method” section presents the proposed AI-DGOA method. The “Performance evaluation and results discussion” section discusses the performance evaluation and results. Finally, we conclude this work in the “Conclusion” section.

Literature work

The integration of AI into system security and industrial systems has made significant strides. It has improved predictive maintenance, threat prediction, and RM in several industries (Trivedi et al., 2024). The study (Balasubramanian and Gurushankar, 2020) presents a technique that supports real-time RM in industry by aiding vulnerability discovery and improving threat mitigation strategies. The paper claims that integrating AI and risk metrology helped enhance industries.

Another study proposed enhancing intrusion detection and prevention and increasing resilience using AI-empowered adaptive cyber defense strategies (ACDSs) for critical infrastructures. The proposed method tackled the risks and discussed how intelligent systems are incorporated (Ruennusan et al., 2023).

In the study (Volk, 2024), the author used AI for predictive maintenance (AI-PM), real-time data analysis, and optimization of grid operations. This work focused on smart grid reliability and efficient resource usage, rather than a large-scale environment.

Ahmad et al. (2025b) proposed an AI-enabled framework designed to detect anomalies in PDNs exposed to false data injection (FDI) attacks. Their model employs ML algorithms to identify malicious data patterns in real-time, enhancing the resilience of smart grid infrastructures. The system demonstrates effective defense capabilities against data manipulation by integrating AI techniques for robust detection.

Ahmed et al. (2025b) developed a consensus-oriented distributed protocol aimed at achieving resilient optimal power delivery in smart grids. The protocol specifically addresses the challenges posed by electric vehicle load variability and stochastic hybrid cyber-attacks, offering a distributed coordination mechanism that supports secure and adaptive energy management across network agents.

Ahmed et al. (2025a) conducted a comprehensive review of cybersecurity measures in microgrids, focusing on advanced detection techniques, secure communication protocols, and practical deployment strategies. Their study provides an extensive classification of threats and countermeasures, presenting both theoretical insights and practical challenges associated with the implementation of resilient energy systems.

Ahmad et al. (2025a) introduced a control system for frequency synchronization that is robust against FDI attacks. Their solution integrates metaheuristic optimization algorithms to fine-tune control parameters, thereby maintaining system stability under adversarial conditions. The proposed approach enhances synchronization accuracy while mitigating the effects of malicious data interference.

Ahmed et al. (2024) presented an attack-resilient and center-free energy dispatch framework that incorporates renewable energy sources in power generation. The model is designed to function without a centralized controller, making it suitable for distributed environments and reducing single points of failure. The framework also includes mechanisms to ensure operational stability under adversarial scenarios.

Ashraf et al. (2024) explored network security enhancements using hybrid feedback systems within the context of chaotic optical communication. Their method leverages chaos theory and optical feedback mechanisms to improve the confidentiality and reliability of data transmission in secure communication networks, particularly in power infrastructure systems.

Abdelkader et al. (2024) emphasized the importance of comprehensive cybersecurity strategies for modern power systems. Their study outlines best practices, architectural enhancements, and policy frameworks necessary for improving resilience and reliability against various forms of cyber-attacks. The work underscores the significance of multilayered defense mechanisms and cross-domain coordination in securing critical infrastructure.

Another study discussed cloud security based on AI and ML technology. Such a system permits forecast-based analytics, automates some responses, and includes self-repairing systems. However, threat classification is complex in real-time systems (Deshpande and Anasica, 2024). On the other hand, the author suggested how advanced threats can be addressed by using AI-driven anomaly detection (AI-AD) while optimizing data storage to enhance protection against unauthorized access. This method provides security while minimizing the effects of internal attacks in smart environments and cyber-physical systems (Abdel-Wahid, 2024).

Furthermore, the authors proposed an AI-based framework for dynamic cyber risk analytics implemented in IoT systems. This method intends to enhance the understanding of risk and the resilience of organizations (Kumari et al., 2022). In the study (Radanliev et al., 2020), the authors implemented AI-PM in industrial systems. This enables maintenance to be conducted before equipment fails and maintenance plans to be carried out effectively, thus enhancing overall performance and eliminating downtime in a cost-effective way.

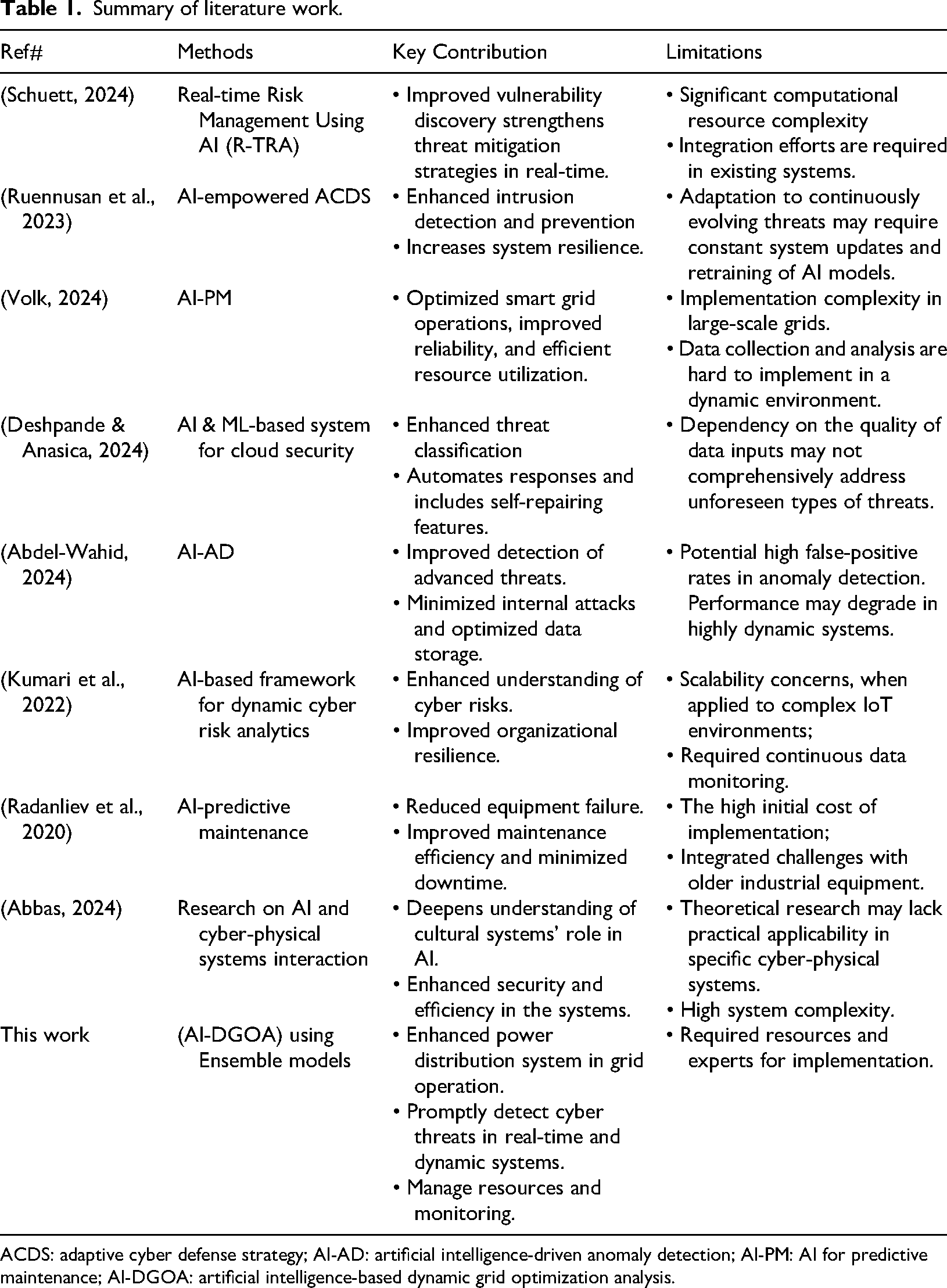

Another author presented theoretical research on the interaction between AI and cyber-physical systems, giving a detailed account of the concepts and mechanisms involved. This work provides a fundamental understanding of the significance of cultural systems concerning AI for enhancing the safety and efficiency of cyber-physical systems (Abbas, 2024). However, there is a need for an efficient and intelligent method to predict cyber threats promptly in real-time PDNs and dynamic environments. Therefore, we present a novel and efficient approach (AI-DGOA) to address the performance bottlenecks in grid networks. The related work is summarized in Table 1.

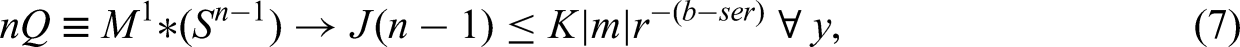

Summary of literature work.

ACDS: adaptive cyber defense strategy; AI-AD: artificial intelligence-driven anomaly detection; AI-PM: AI for predictive maintenance; AI-DGOA: artificial intelligence-based dynamic grid optimization analysis.

Problem formulation

The main objective is to optimize PDNs more efficiently, along with real-time predictive maintenance management and cybersecurity risk assessment. This can be expressed mathematically as follows:

Objective function

Reduce total operational costs (

where

Constraints

Predictive constraints

where

Security constraints

where

Operational constraints

where

Proposed AI-DGOA method

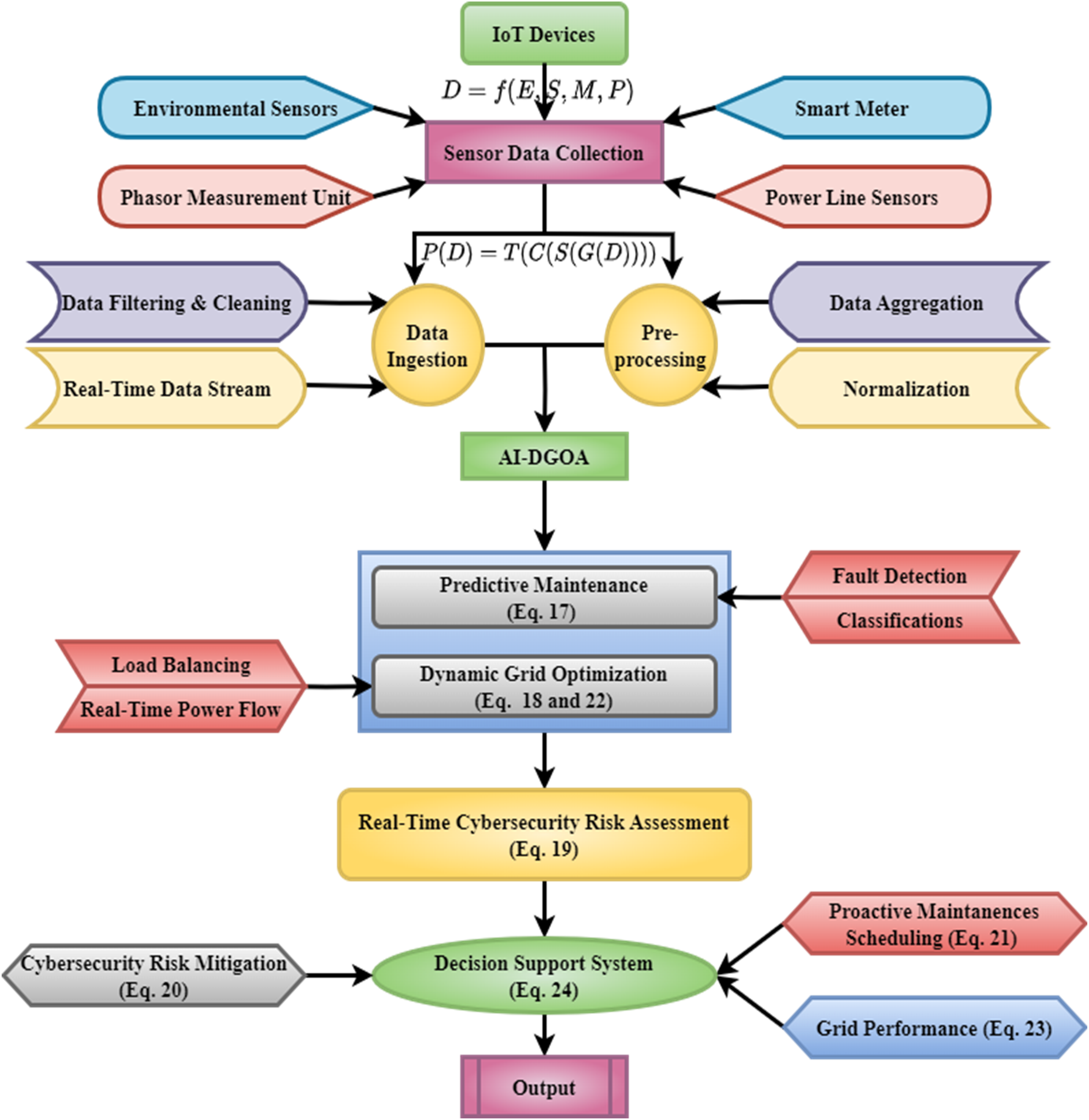

The proposed AI-DGOA improves the performance of PDNs by integrating ML, predictive maintenance, and cybersecurity. The system uses real-time monitoring and data analytics to identify cybersecurity risks, operational strategies, and maintenance needs. Integrating edge and cloud computing facilitates real-time decision-making for continuous system operation. The proposed algorithms aim to increase energy efficiency, predict equipment failures, and mitigate risks via a cyber-risk assessment layer. The system's capacity to assess and adapt to threats, achieved through a detection algorithm and threat modelling, strengthens the grid's resilience. The AI-DGOA enhances the security, reliability, and efficiency of power generation and distribution grids, aligning with modern demands for intelligent energy management systems.

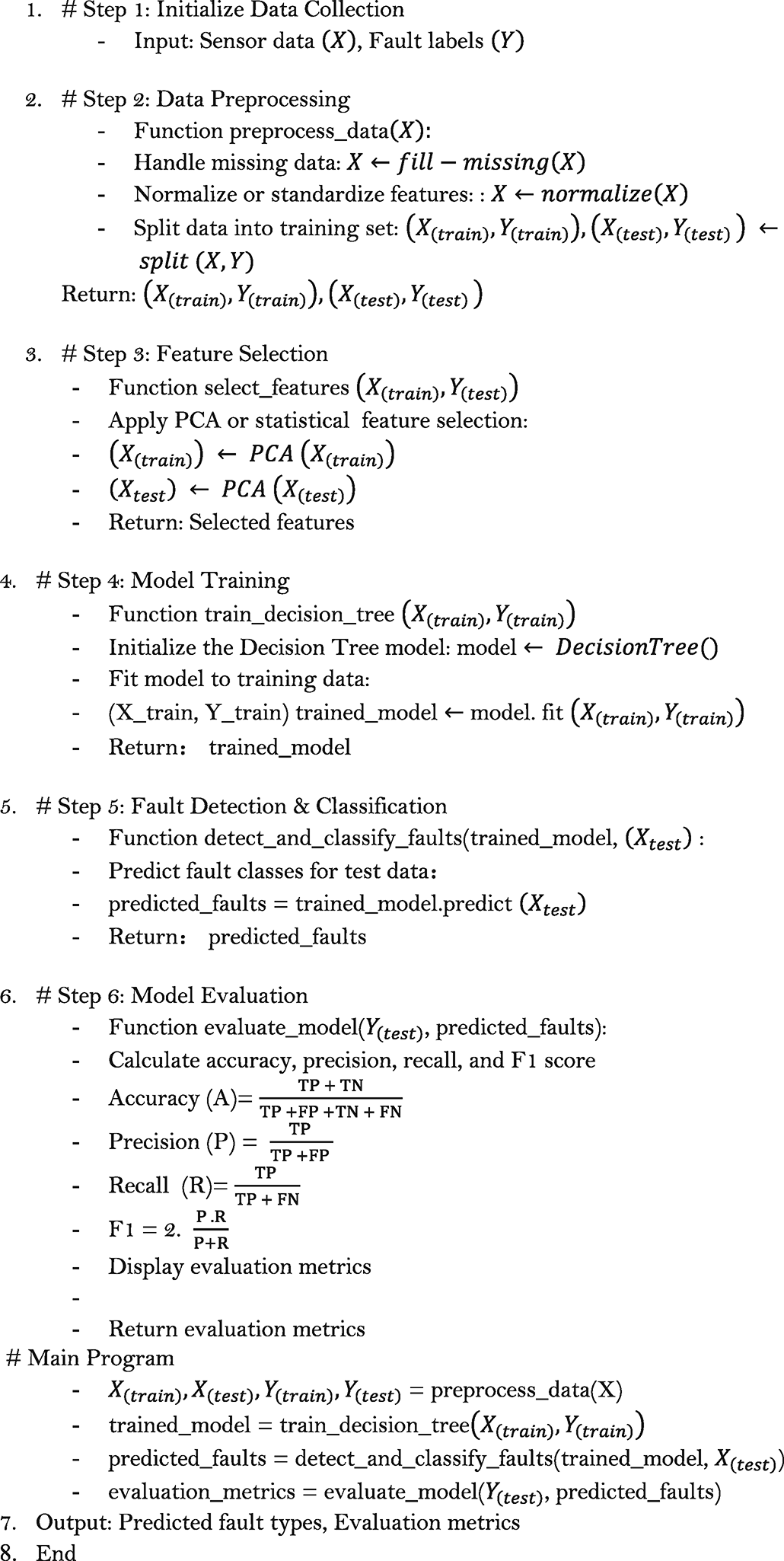

AI-DGOA for predictive maintenance

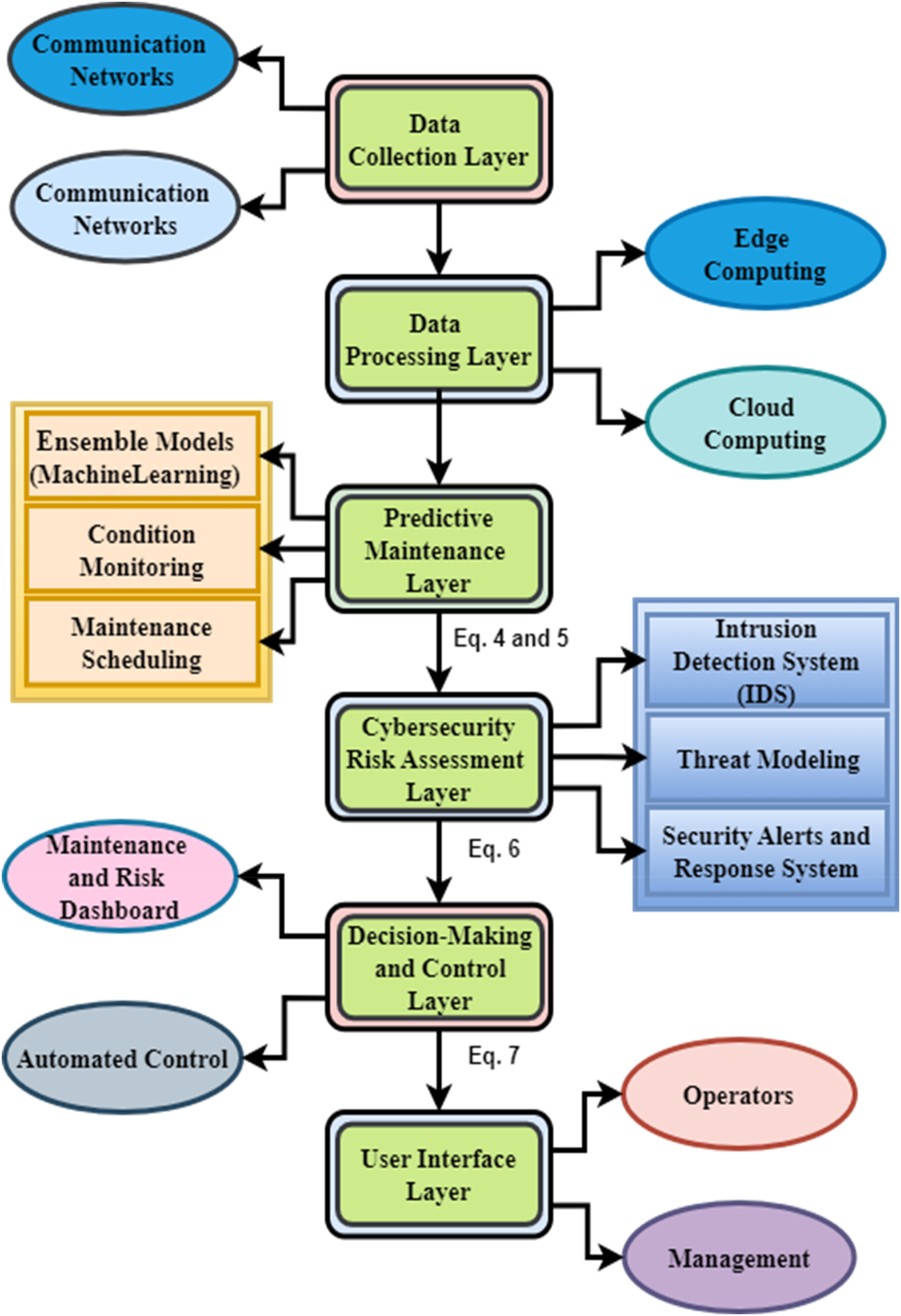

Figure 1 shows the AI-DGOA method for managing energy distribution networks. The data collection layer collects data from sensors through communication interfaces. The data processing layer systematically organizes data to facilitate its analysis. Ultimately, the AI model layer uses ML techniques to monitor and predict the timing of maintenance requirements and strategically schedule the execution of such activities.

Artificial intelligence-based dynamic grid optimization analysis (AI-DGOA) layered architecture for cybersecurity risk assessment.

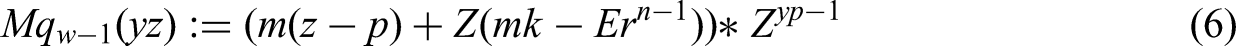

Figure 1 also presents the layered architecture of AI-DGOA, in which the cybersecurity risk assessment layer operates continuously to detect and evaluate cyber threats, while an intrusion detection system (IDS) and threat modelling assist in safeguarding operations. This setup enables real-time, uninterrupted decision-making and control by integrating edge and cloud computing. The maintenance and risk dashboard provides graphical representations of information, enabling operators and management to supervise the system effectively. Automated control integration improves resilience, thereby ensuring more dependable and secure operation of the power generation grid. Equation (5) is derived from information-theoretic principles, specifically modeling system performance as uncertainty reduction in cyber-physical systems.

The model's performance at a certain moment is represented by Equation 5,

Adjustments based on variations in grid parameters are captured by

Factors that vary over time are denoted by

Moreover, the decision-making output at the time

AI-DGOA for grid energy distribution and monitoring

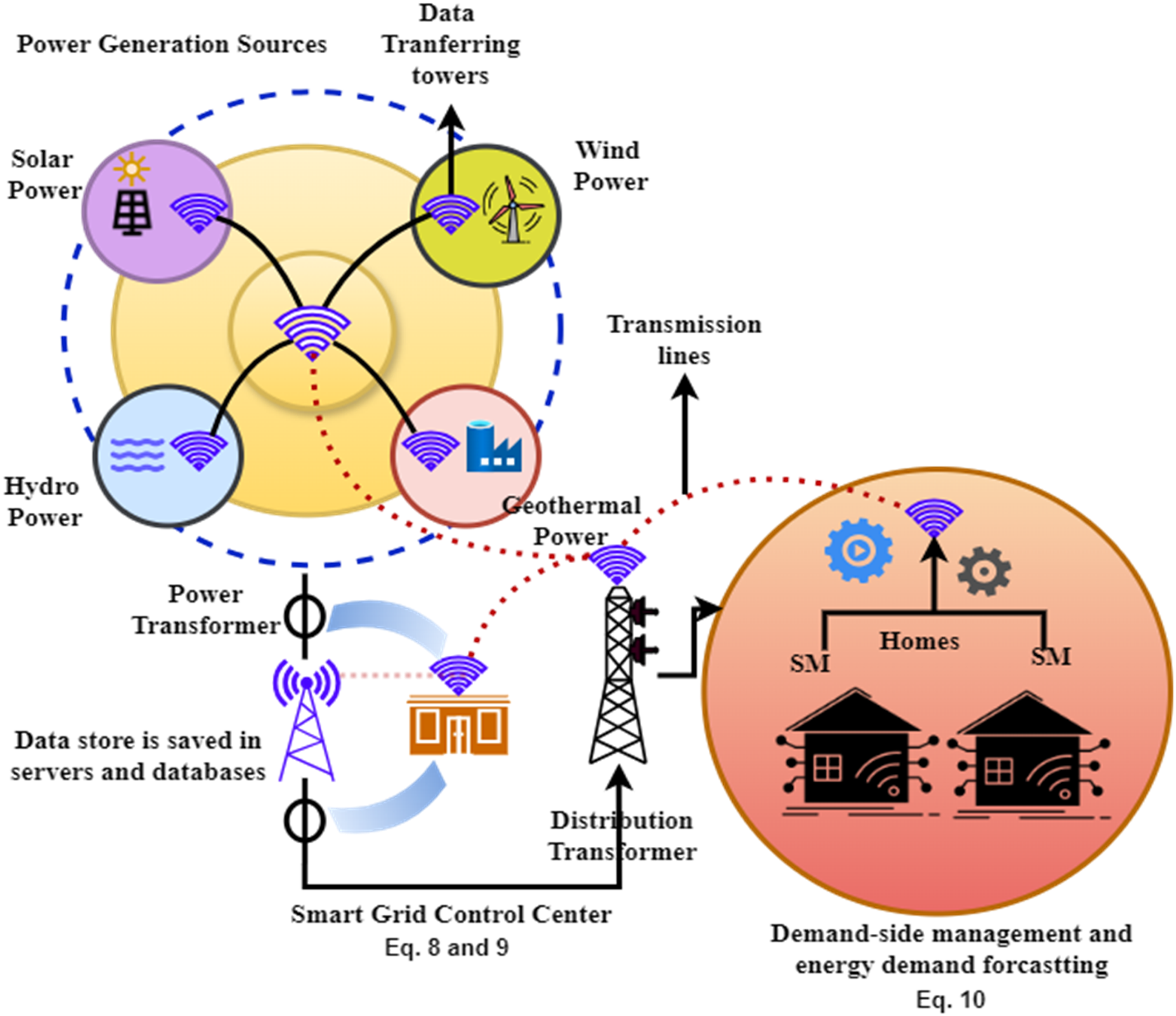

Figure 2 shows the AI-DGOA method used to manage energy distribution across smart grids. The energy network that communicates wirelessly includes solar, wind, hydro, and industrial power. These sources transmit data to a centralized control unit for processing to provide real-time decision-making via edge computing.

Artificial intelligence-based dynamic grid optimization analysis (AI-DGOA) in smart grid energy distribution and monitoring.

Data acquired by smart meters (SMs) (Sharma and Saini, 2015) that monitor energy use and distribution could help homeowners with predictive maintenance and performance optimization. This data enables the AI-DGOA system to control energy flow, reduce grid inefficiencies, and predict equipment breakdowns. Control systems and local hubs communicate wirelessly to convey important information to grid operators. This real-time feedback loop improves the grid's efficiency and security by reducing the impact of disruptions and cyber-attacks. This allows for the smooth integration of cybersecurity measures by ensuring the system's durability.

The absolute performance metrics are shown by Equation 9,

With

Real-time grid optimization

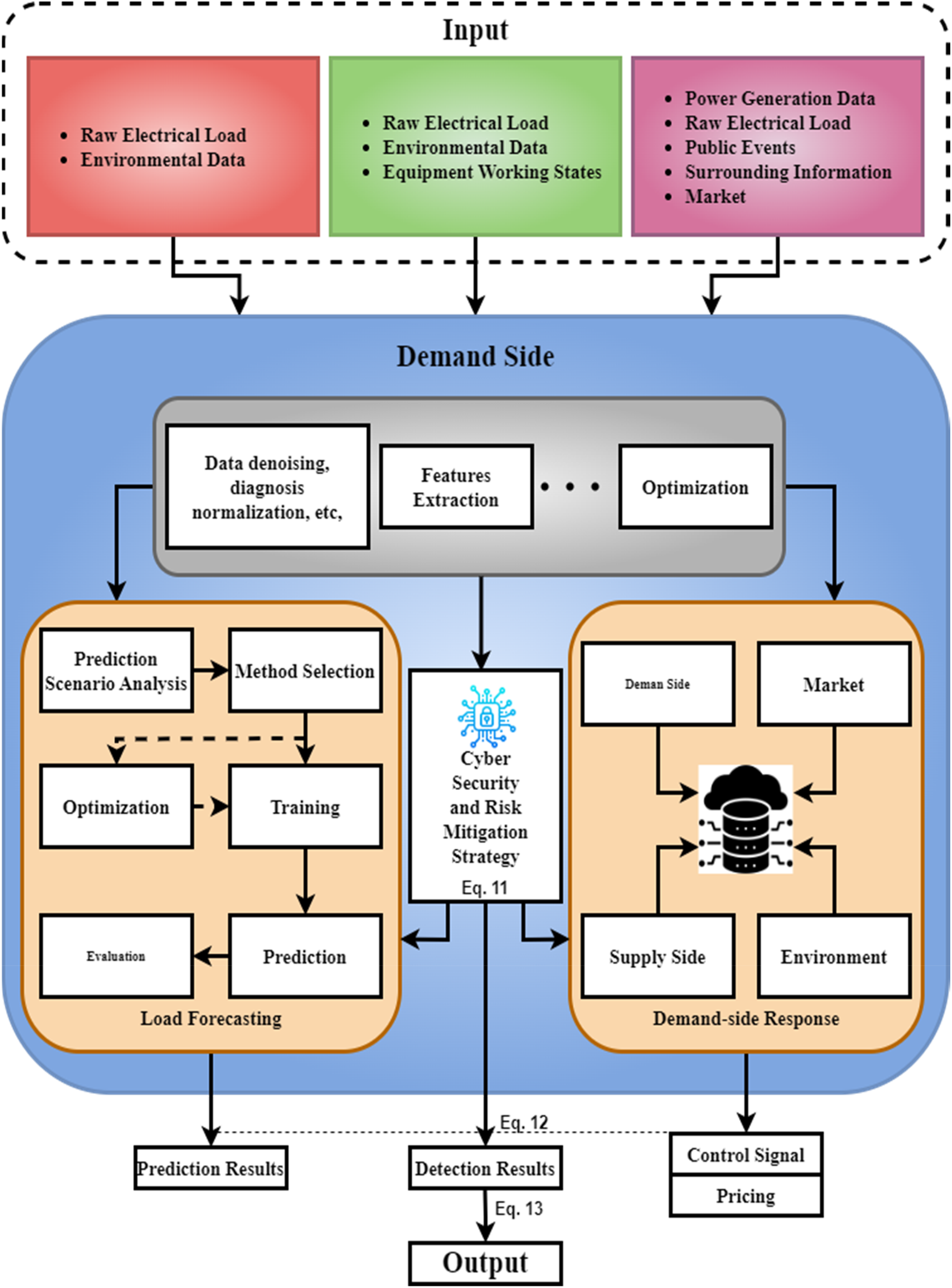

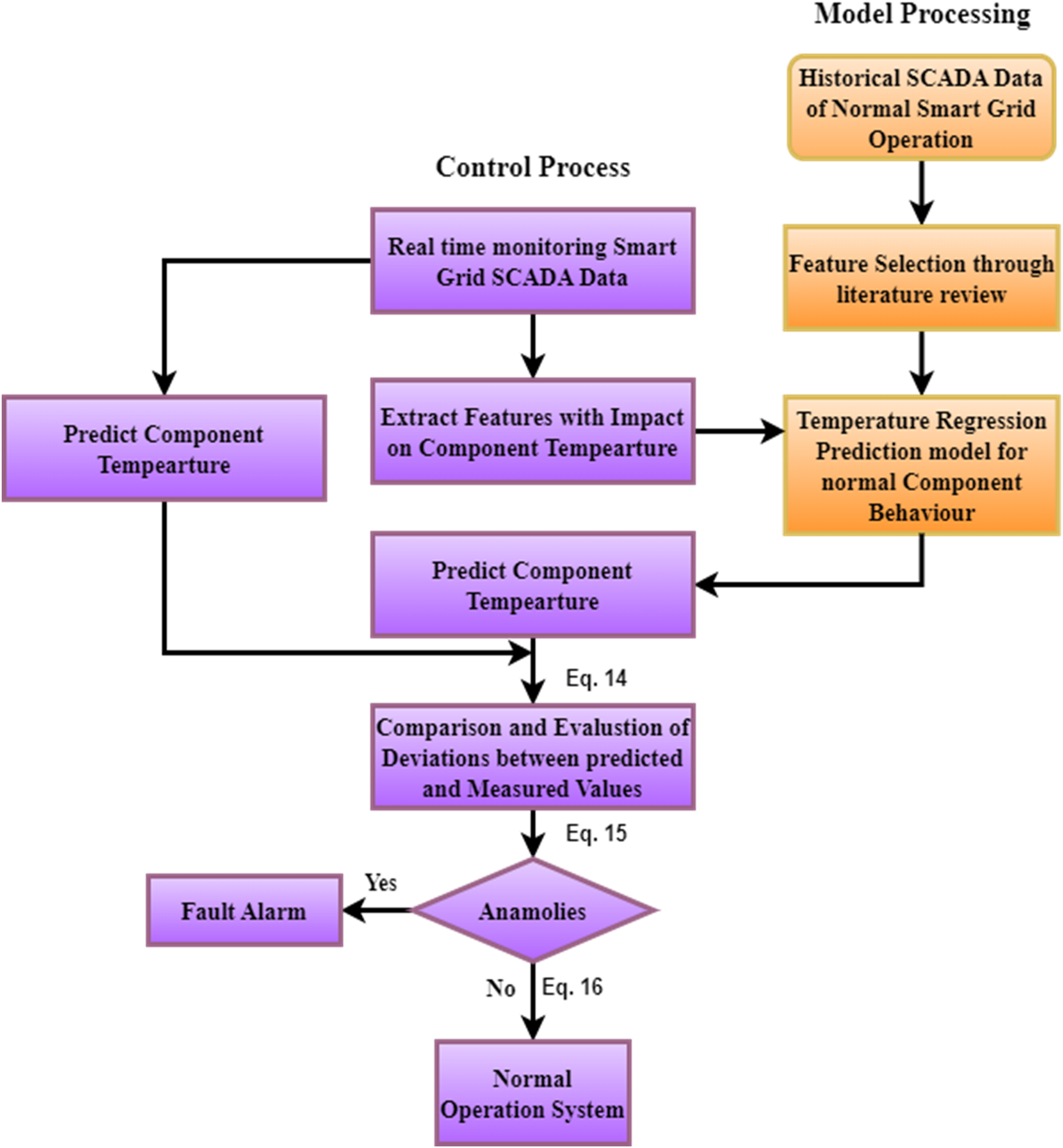

Figure 3 illustrates the use of AI-DGOA in demand-side load forecasting and cybersecurity inside power grids. The process starts from an array of input data, including raw electrical load, environmental data, equipment conditions, and market considerations. The Demand Side component processes this data in several steps, including data denoising, feature extraction, and optimization.

Artificial intelligence-based dynamic grid optimization analysis (AI-DGOA) framework for maintaining optimal grid performance.

The system predicts electricity demands by using techniques such as probability scenario analysis, optimization, and training. A thorough assessment of these techniques guarantees precision in forecasting future demand. Cybersecurity integration is achieved by adopting a cybersecurity and risk mitigation strategy, which protects against any threats encountered during load forecasting and demand response activities.

The demand-side response adapts to market signals regarding environmental conditions and supply-side data. Control signals and price feedback loops are crucial for optimizing demand and energy distribution, thereby achieving optimal grid performance and maintaining strong security and RM across the system.
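The denoising and feature extraction steps named above are not specified further in this section. As a minimal sketch, assuming a trailing moving average for denoising and lagged load values as features (both are illustrative choices, and the function names and sample readings are hypothetical), the preprocessing might look like this:

```python
def moving_average(series, window=3):
    """Trailing moving average; early points average over what is available."""
    smoothed = []
    for i in range(len(series)):
        chunk = series[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


def lag_features(series, n_lags=2):
    """Pair each value with its n_lags predecessors: (features, targets)."""
    features, targets = [], []
    for i in range(n_lags, len(series)):
        features.append(series[i - n_lags:i])
        targets.append(series[i])
    return features, targets


# Hypothetical raw load readings (kW) containing one spike.
raw_load = [100.0, 104.0, 98.0, 130.0, 101.0, 103.0]
clean_load = moving_average(raw_load, window=3)
X, y = lag_features(clean_load, n_lags=2)
```

The resulting `X` and `y` could then be passed to any regression model for load forecasting; the choice of window size and number of lags would need tuning on real grid data.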

while

Variations in grid effectiveness and predictive metrics

The projected performance regulated

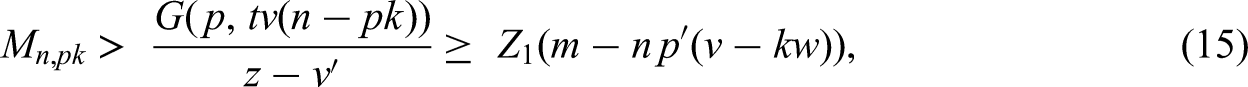

Algorithm 1 demonstrates a method for detecting and classifying faults using a decision tree model (DTM) and principal component analysis (PCA) (James et al., 2023; Bharadiya, 2023). The algorithm performs several essential fault detection and classification steps. Data collection takes sensor data (X) and fault labels (Y) as inputs. Data preprocessing handles missing data, normalizes features, and splits the dataset into training and testing sets. Feature selection reduces the number of features in the dataset, e.g., with PCA, to improve model efficiency. The heart of the algorithm is the DTM, which is trained on the training data. After training, the model predicts fault classes for the test data. Algorithm 1 validates the model's performance using accuracy, precision, recall, and F1 score metrics. The main program calls all these steps and returns the predicted fault types and the evaluation metrics.
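The steps described for Algorithm 1 can be sketched with scikit-learn as follows. This is an illustrative sketch rather than the authors' implementation: the synthetic data generator, the fault rule, and the hyperparameters (`n_components`, `max_depth`, the 75/25 split) are assumptions introduced only to make the example runnable.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.impute import SimpleImputer
from sklearn.metrics import accuracy_score, precision_recall_fscore_support
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier


def make_synthetic_faults(n_samples=600, n_features=12, seed=0):
    """Hypothetical stand-in for sensor data X and fault labels Y."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n_samples, n_features))
    # Illustrative rule: a fault occurs when two channels sum high.
    y = (X[:, 0] + X[:, 1] > 0.5).astype(int)
    # Knock out a few readings to exercise the missing-data step.
    X[rng.random(X.shape) < 0.01] = np.nan
    return X, y


def detect_and_classify(X, y, n_components=8, seed=0):
    # Split into training and testing sets.
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.25, random_state=seed, stratify=y
    )
    # Impute missing values, normalize, reduce with PCA, then fit the tree.
    model = make_pipeline(
        SimpleImputer(strategy="mean"),
        StandardScaler(),
        PCA(n_components=n_components),
        DecisionTreeClassifier(max_depth=6, random_state=seed),
    )
    model.fit(X_train, y_train)
    y_pred = model.predict(X_test)
    precision, recall, f1, _ = precision_recall_fscore_support(
        y_test, y_pred, average="binary", zero_division=0
    )
    metrics = {
        "accuracy": accuracy_score(y_test, y_pred),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }
    return y_pred, metrics


X, y = make_synthetic_faults()
predictions, metrics = detect_and_classify(X, y)
```

Wrapping the preprocessing steps in a pipeline ensures that imputation, scaling, and PCA are fitted on the training split only, which avoids leaking test information into the model.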

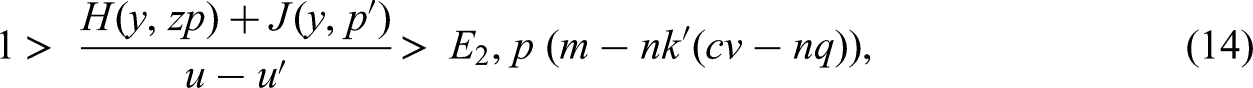

Figure 4 depicts the procedure for forecasting component temperatures inside the AI-DGOA system, which aims to preserve the reliability of PDNs. The process starts with the analysis of supervisory control and data acquisition (SCADA) data (Folgado et al., 2023) and the continuous monitoring of smart grid components. A literature analysis identifies the features that influence component temperature; these serve as the foundation for a temperature regression model that describes the typical behaviour of components. The system uses real-time data to forecast component temperatures and then compares the forecast values with the collected measurements. Large deviations between predicted and observed values are identified as anomalies, which may indicate possible problems. Such discrepancies trigger a fault alert; otherwise, the system continues with regular activities. This conceptual framework aligns with the study's focus on predictive maintenance, which proactively detects possible faults. It emphasizes the importance of ML in maintaining the grid's stability, reducing interruptions, and maximizing performance through real-time, proactive monitoring.
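The predict-then-compare logic of Figure 4 can be illustrated with a small residual-based detector. The sketch below assumes a linear regression model and a threshold of three residual standard deviations; the feature choice (load current and ambient temperature), the coefficients used to generate the data, and the threshold `k` are all hypothetical and not taken from the paper.

```python
import numpy as np


def fit_temperature_model(features, temperatures):
    """Least-squares linear model of normal component temperature."""
    design = np.column_stack([np.ones(len(features)), features])
    coef, *_ = np.linalg.lstsq(design, temperatures, rcond=None)
    residuals = temperatures - design @ coef
    return coef, residuals.std()


def fault_alerts(coef, sigma, features, measured, k=3.0):
    """Flag readings deviating more than k * sigma from the prediction."""
    design = np.column_stack([np.ones(len(features)), features])
    predicted = design @ coef
    return np.abs(measured - predicted) > k * sigma


rng = np.random.default_rng(42)
# Hypothetical SCADA-style features: load current (A), ambient temp (deg C).
train_X = np.column_stack([rng.uniform(50, 200, 500), rng.uniform(5, 35, 500)])
train_T = 20 + 0.15 * train_X[:, 0] + 0.8 * train_X[:, 1] + rng.normal(0, 1.0, 500)
coef, sigma = fit_temperature_model(train_X, train_T)

# Two live readings under identical conditions; the second runs hot.
live_X = np.array([[120.0, 25.0], [120.0, 25.0]])
live_T = np.array([58.0, 75.0])
alerts = fault_alerts(coef, sigma, live_X, live_T)
```

Here the first reading matches the modelled normal behaviour and raises no alert, while the second exceeds the threshold and would trigger the fault alert path in Figure 4.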

Predictive component temperature monitoring in artificial intelligence-based dynamic grid optimization analysis (AI-DGOA) for smart grid systems.

The model's efficacy at the time

The model's particular requirements

while

Overall framework on cybersecurity risk assessment integration

Figure 5 illustrates the overall process for cybersecurity and risk assessment. Experimental data is collected using environmental sensors, SMs, and power line sensors. Data processing includes gathering, sorting, cleaning, and standardizing data.

Overall artificial intelligence-based dynamic grid optimization analysis (AI-DGOA) framework for smart power grid optimization and cybersecurity.

The AI-DGOA system utilizes preprocessed data for predictive maintenance and dynamic grid engineering. Defect detection, load balancing, and real-time power flow are important for achieving and maintaining optimum grid operating efficiency. The system integrates advanced real-time assessment of cybersecurity threats, offering proactive strategies to mitigate risks and safeguard the power grid. Through the decision support system, these insights help achieve optimal grid performance, proactive maintenance planning, and dependable results. This integration highlights the mutual reliance between predictive maintenance and cybersecurity. This study demonstrates the impact of AI on the transformation of electricity distribution networks.

The aim of minimizing variables

The performance evaluation parameters are given by Equation (19),

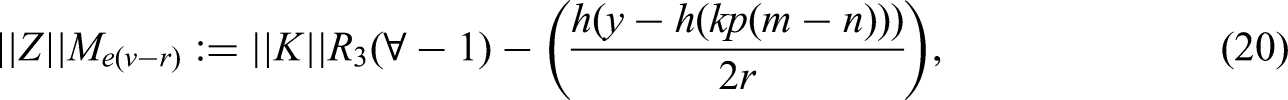

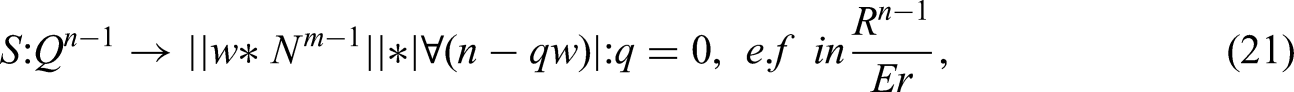

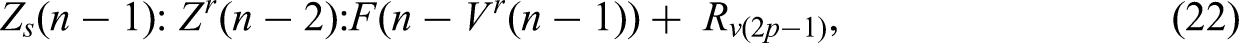

This model's effectiveness under particular circumstances is represented by Equation 20,

The system reaction at a previous time step is represented by Equation (21),

while

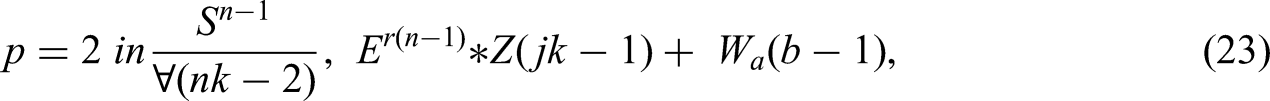

A criterion for assessing performance metrics is given by Equation (23),

Equation 24,

The adjusted performance metric

The AI-DGOA system optimizes the energy infrastructure by combining predictive maintenance with real-time data analytics and strong cybersecurity measures. A key feature of AI-DGOA is its use of ML for proactive threat management in cybersecurity, enabling real-time adjustments to changes in grid settings. The method's continuous risk assessment layer employs IDSs and threat models to support the uninterrupted operation of the grid. Energy flow and efficiency are improved during control system operation, and system vulnerabilities are reduced. By integrating cloud and edge computing, AI-DGOA employs AI to monitor and control grid performance, resilience, and RM in real-time. A comprehensive monitoring system and automated maintenance scheduling improve grid dependability. Hence, the proposed AI-DGOA for energy distribution networks enhances the system's performance and security.

Performance evaluation and results discussion

This section discusses the performance of the AI-DGOA method, which provides evidence of improved grid management via cyber-resilience assessment and intelligent predictive maintenance in PDNs. We compare this work with the baseline approaches R-TRA, ACDS, AI-AD, and AI-PM. The extensive simulation analysis and results show that our proposed work outperforms the other methods.

Simulation scenario and dataset description

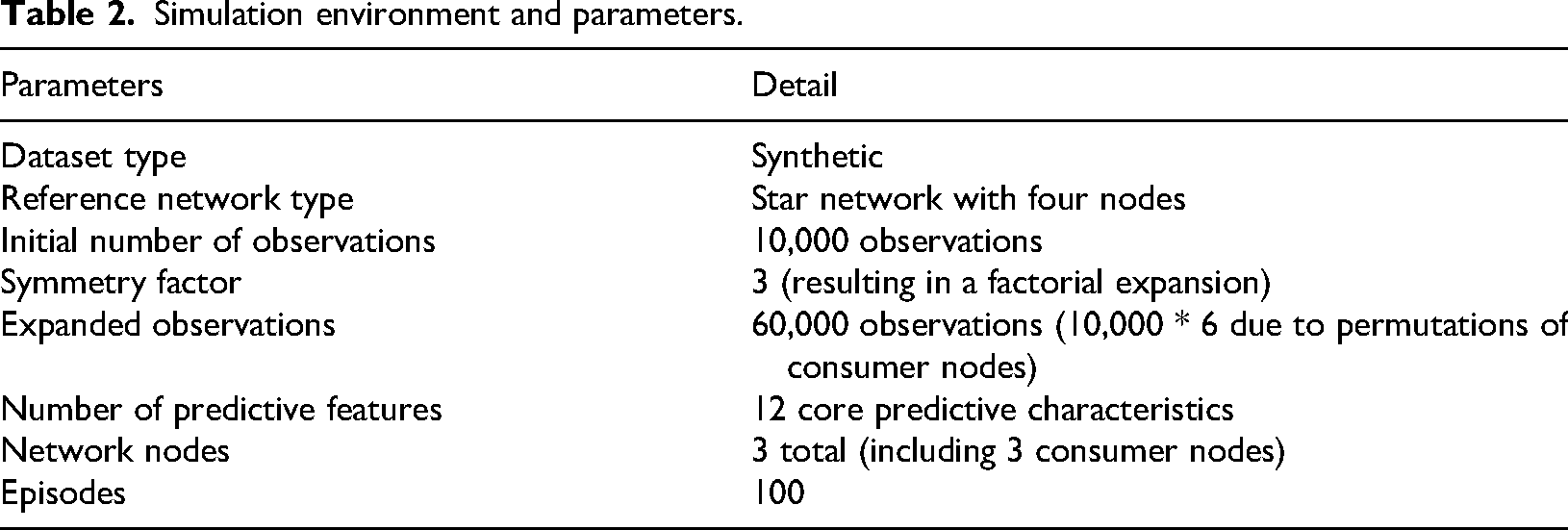

We used Python (Jimenez-Ruiz et al., 2024), TensorFlow (Şahin, 2024), and the scikit-learn library for designing models and making predictions. The dataset selected for this experiment is synthetic and comprises the outcomes of grid stability simulations for a reference four-node star network (Wasi-whu, 2024). The original dataset includes 10,000 observations. Since the reference grid is symmetric, the dataset is expanded by a factor of 3! = 6, one copy for each permutation of the three consumers occupying the three consumer nodes. The expanded dataset therefore has 60,000 observations. It has twelve core predictive features and two dependent variables. The simulation environment details are given in Table 2.
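The sixfold expansion can be reproduced by permuting the per-node features of the three consumer nodes. The sketch below is illustrative only: the column names (`tau`, `p`, `g`, `stab`, `stabf`), the node numbering with node 1 as the producer, and the sample values are assumptions, since this section does not list the dataset's columns.

```python
from itertools import permutations

CONSUMER_NODES = (2, 3, 4)             # node 1 is assumed to be the producer
PER_NODE_FEATURES = ("tau", "p", "g")  # hypothetical feature-name prefixes


def augment_by_symmetry(rows):
    """Return one copy of each row per permutation of the consumer nodes."""
    augmented = []
    for row in rows:
        for perm in permutations(CONSUMER_NODES):
            # Producer features and both targets are left unchanged.
            new_row = dict(row)
            for prefix in PER_NODE_FEATURES:
                for target_node, source_node in zip(CONSUMER_NODES, perm):
                    new_row[f"{prefix}{target_node}"] = row[f"{prefix}{source_node}"]
            augmented.append(new_row)
    return augmented


# One hypothetical observation: 12 features and 2 dependent variables.
sample = {
    "tau1": 3.0, "tau2": 1.0, "tau3": 2.0, "tau4": 4.0,
    "p1": 3.0, "p2": -1.0, "p3": -1.5, "p4": -0.5,
    "g1": 0.6, "g2": 0.1, "g3": 0.2, "g4": 0.3,
    "stab": 0.05, "stabf": "unstable",
}
expanded = augment_by_symmetry([sample])
```

Applied to 10,000 original rows, the same function would yield the 60,000 observations described above, because each row produces 3! = 6 permuted copies (including the identity).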

Simulation environment and parameters.

Result discussion

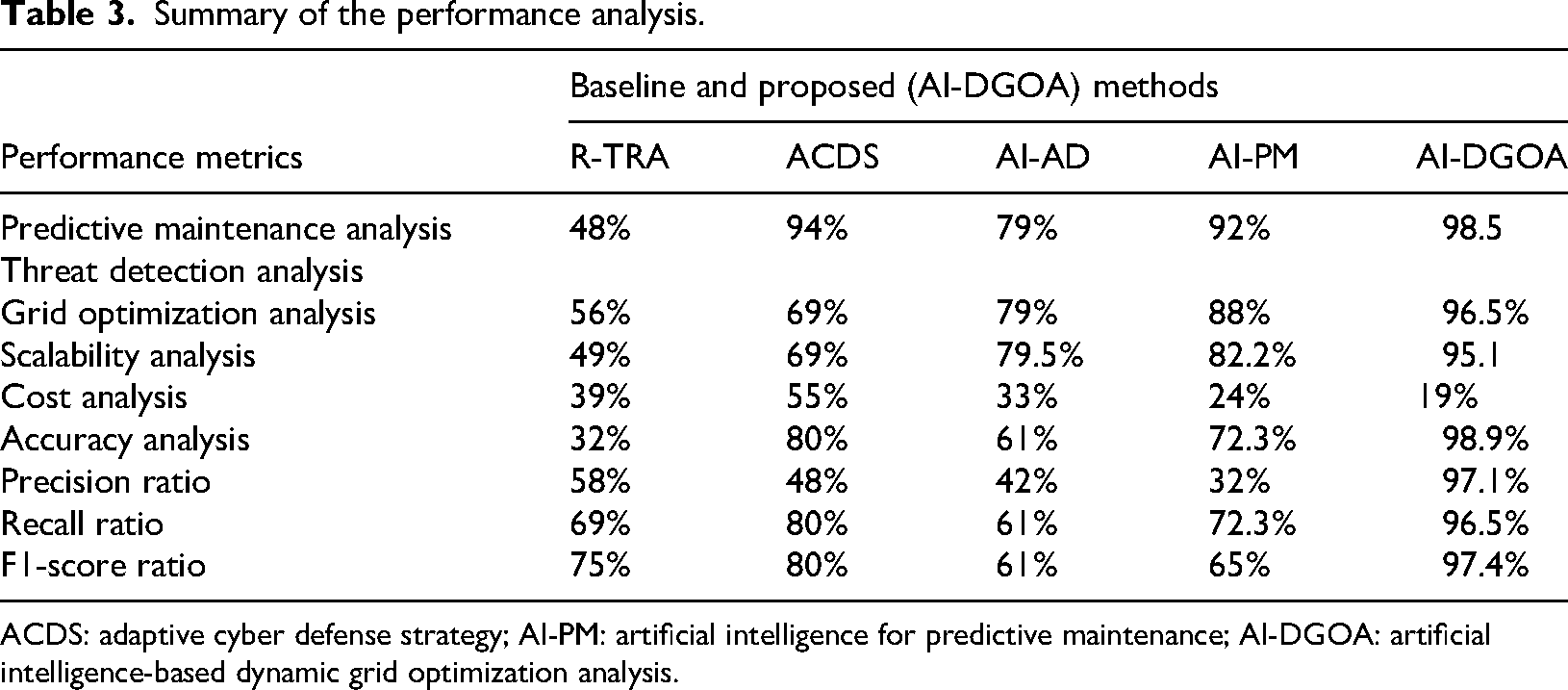

This section discusses the outcomes of the simulation analysis for the different metrics. A summary of the comparison results is presented in Table 3.

Summary of the performance analysis.

ACDS: adaptive cyber defense strategy; AI-PM: artificial intelligence for predictive maintenance; AI-DGOA: artificial intelligence-based dynamic grid optimization analysis.

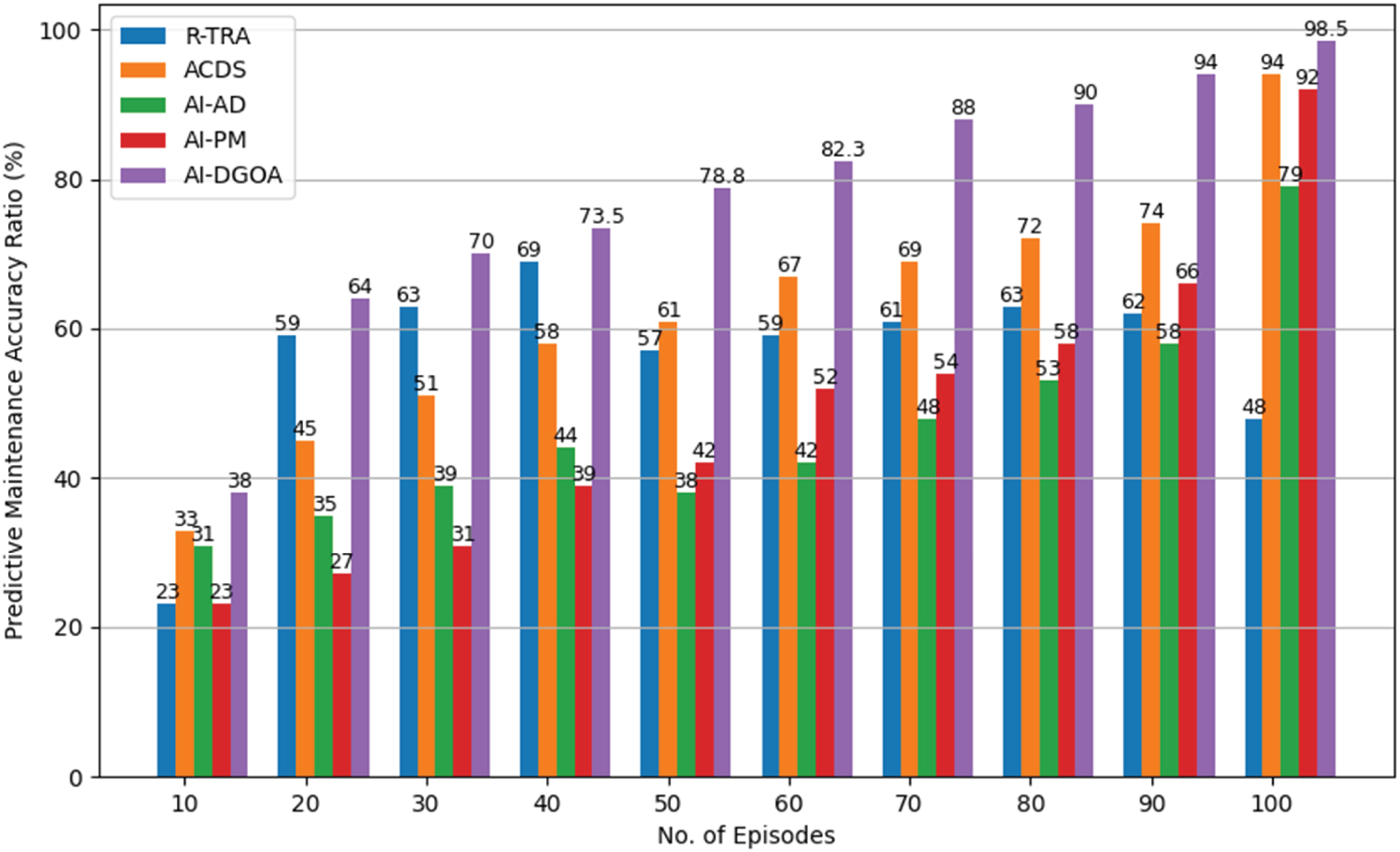

Figure 6 shows that the AI-DGOA method improves equipment failure detection and grid inefficiency diagnosis using ML. AI-DGOA processes enormous amounts of sensor data in real-time to predict failures, so prompt action can prevent costly failures and reduce downtime. Continuous learning from real-world grid data enables this high accuracy: the proposed algorithm and framework keep learning and improve their predictions. Including many data sources ensures a complete understanding of grid performance, making predictions more accurate. The simulation analysis reveals that AI-DGOA improves predictive maintenance accuracy by reducing false positives and negatives in failure detection, reaching a 98.5% accuracy rate at episode 100. Higher accuracy in turn benefits grid resilience, operational costs, and maintenance scheduling. Predictive maintenance is essential for PDN safety, stability, and efficiency; preventative maintenance procedures help keep the grid working properly.

Predictive maintenance accuracy analysis by number of episodes.

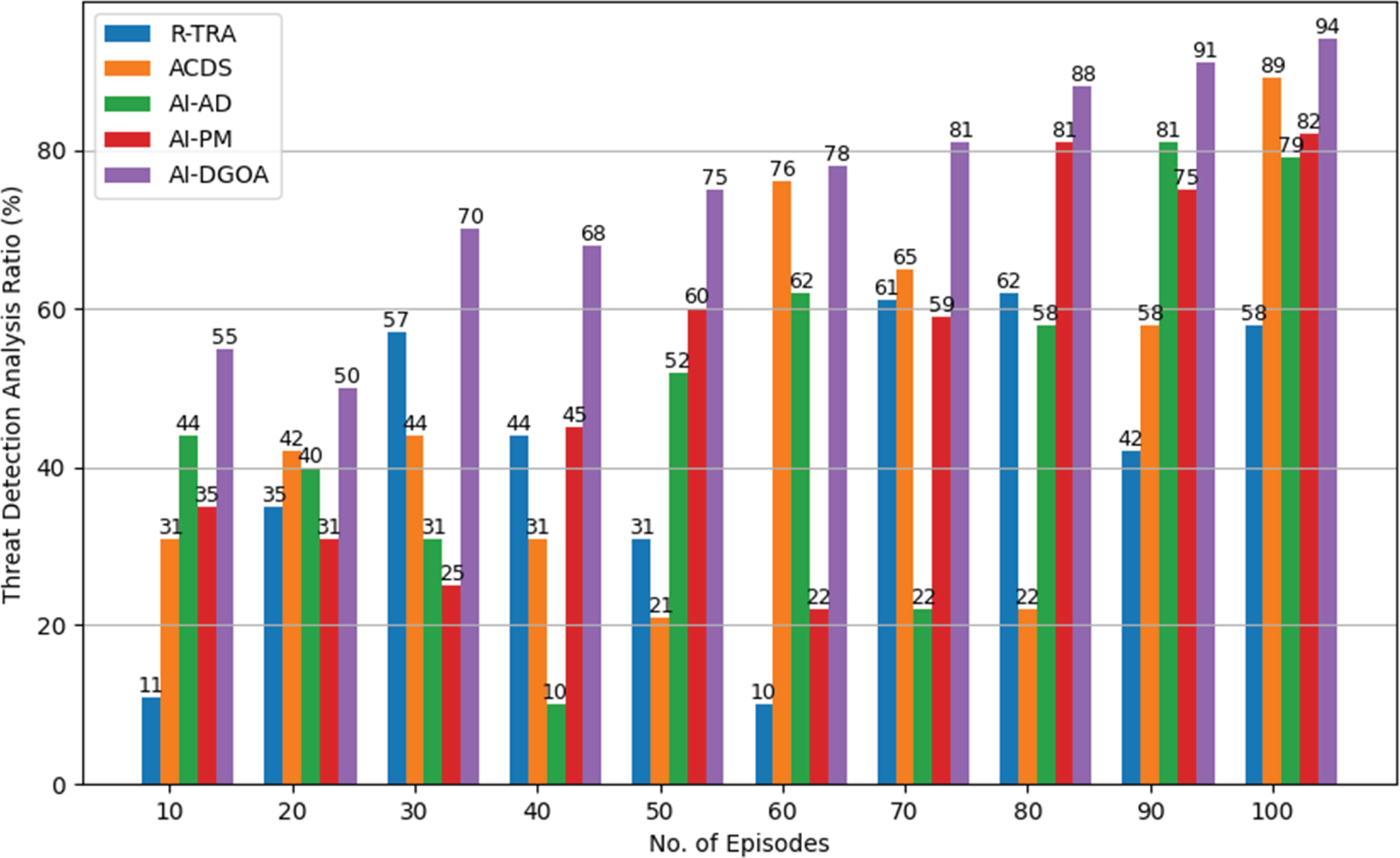

The proposed method enhances cybersecurity threat detection in PDNs by leveraging AI and ML to spot cyber threats faster and more accurately. Network monitoring tools and AI-powered threat detection systems analyze massive amounts of data in real-time to find abnormalities and attacks. The results in Figure 7 indicate that AI-DGOA outperforms the other methods. This is because the proposed method uses AI-based proactive risk assessment and predictive maintenance to help PDNs avoid major cyberattacks. Our AI-driven method uses threat intelligence to predict threats based on the data available in the system. Moreover, our proposed method enhanced predictive maintenance, gradually improving the system, and achieved a threat detection ratio of 94.8%, higher than the baseline methods.

Cybersecurity threat detection analysis by number of episodes.

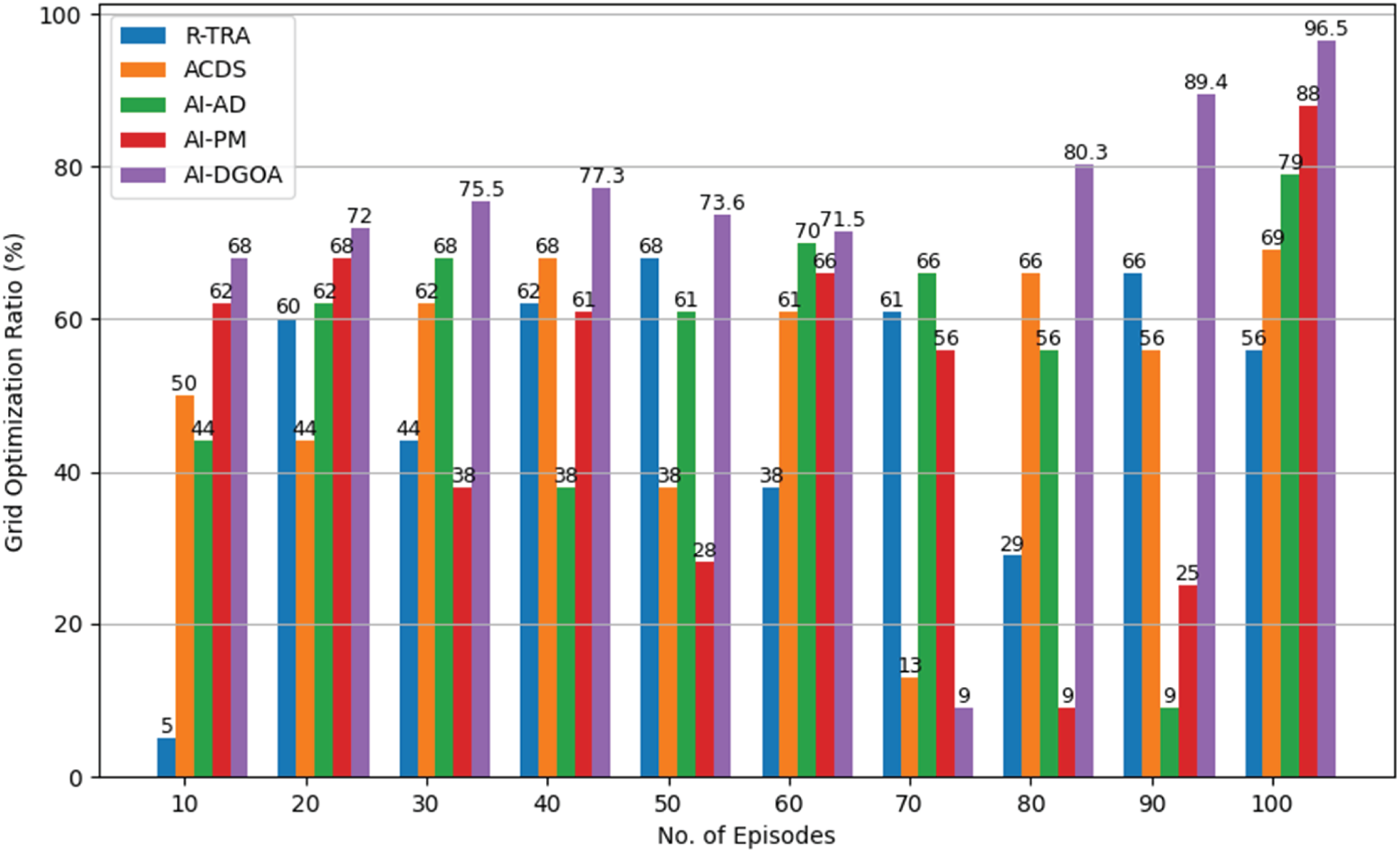

The proposed method combines predictive maintenance and cybersecurity risk assessment for PDNs and relies on dynamic grid optimization analysis (DGOA) to improve grid efficiency and resilience. AI-DGOA optimizes load distribution and grid stability in various configurations using real-time data processed dynamically from grid components. As shown in Figure 8, AI-DGOA optimized grid performance and supported real-time modifications to improve grid efficiency and reduce power outages. Monitoring operational indicators, such as power usage, voltage fluctuations, and equipment performance, enables these changes. This dynamic optimization is enhanced by predictive maintenance data, which allows the system to address possible equipment issues before they degrade performance. AI-DGOA's ability to handle and analyze enormous volumes of sensor data for precise optimization decisions allows the grid to adapt to changing conditions without compromise. Additionally, optimization techniques that involve cybersecurity risk assessments detect and address cyber risks alongside grid performance issues, reaching 98.3% in the dynamic grid optimization analysis. This dual approach optimizes operations and protects against cyberattacks. The AI-DGOA technique meets the PDN's needs, including enhanced grid performance, reduced operational expenses, and increased security and resilience.

Dynamic grid optimization analysis by number of episodes.

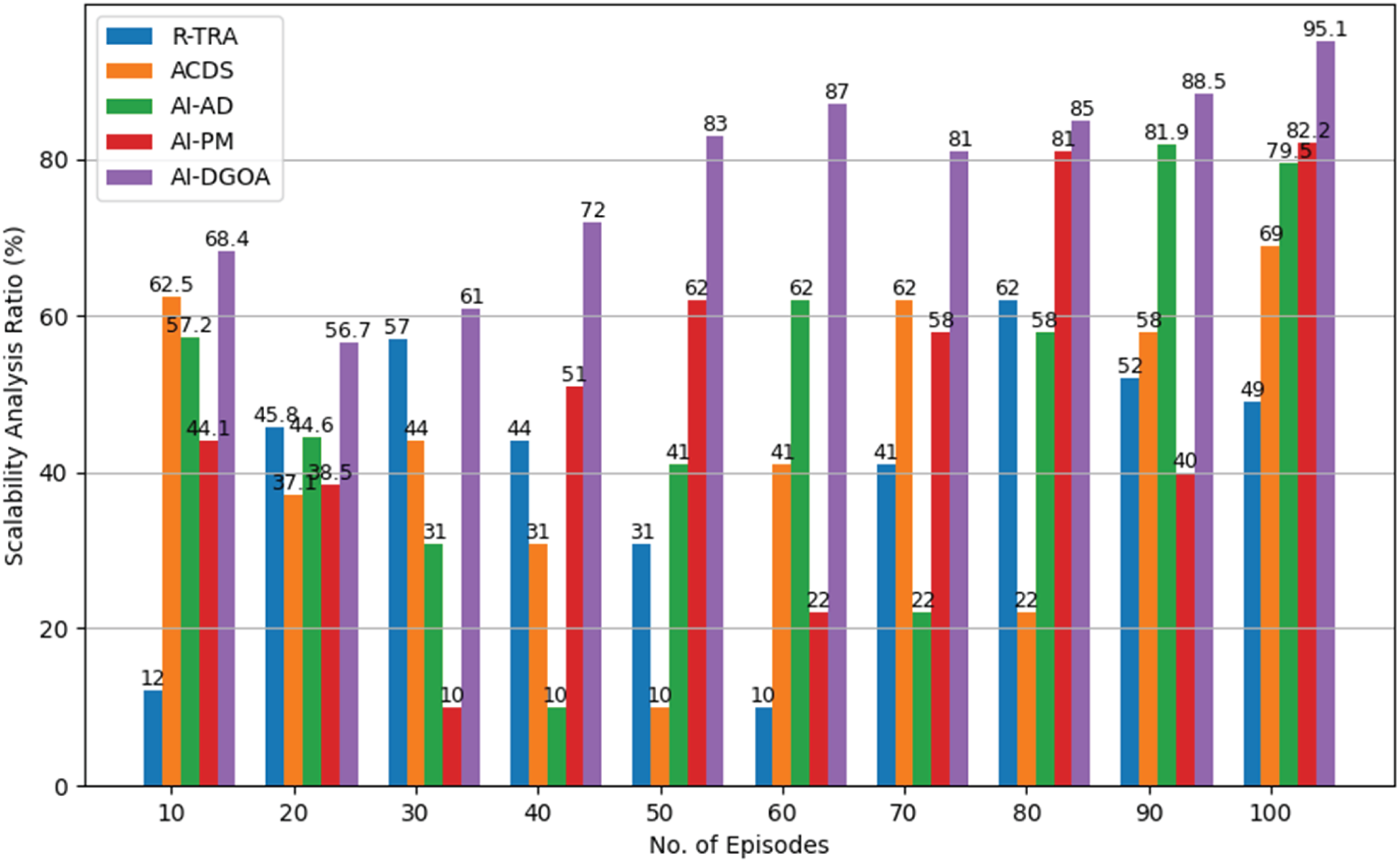

Figure 9 shows the scalability performance of the baseline methods and the proposed AI-DGOA method. The proposed method performs better than the other methods because it uses a proactive AI-based approach that detects cyber threats and assesses risks in PDNs in a timely manner. The AI-DGOA framework is designed to improve the usability of the system. As power networks incorporate renewable energy, predictive maintenance and optimization become more crucial. The framework handles data feeds from many sensors across grid sectors and is adaptable. In this regard, our proposed models enable the system to handle vast amounts of real-time data without sacrificing performance. Despite grid complexity, AI-DGOA maintains forecast accuracy and optimization by efficiently processing enormous amounts of data. Overall, AI-DGOA improved scalability more than the other methods, future-proofing PDNs while ensuring operational continuity and minimizing downtime across growing infrastructures.

Scalability analysis by number of episodes.

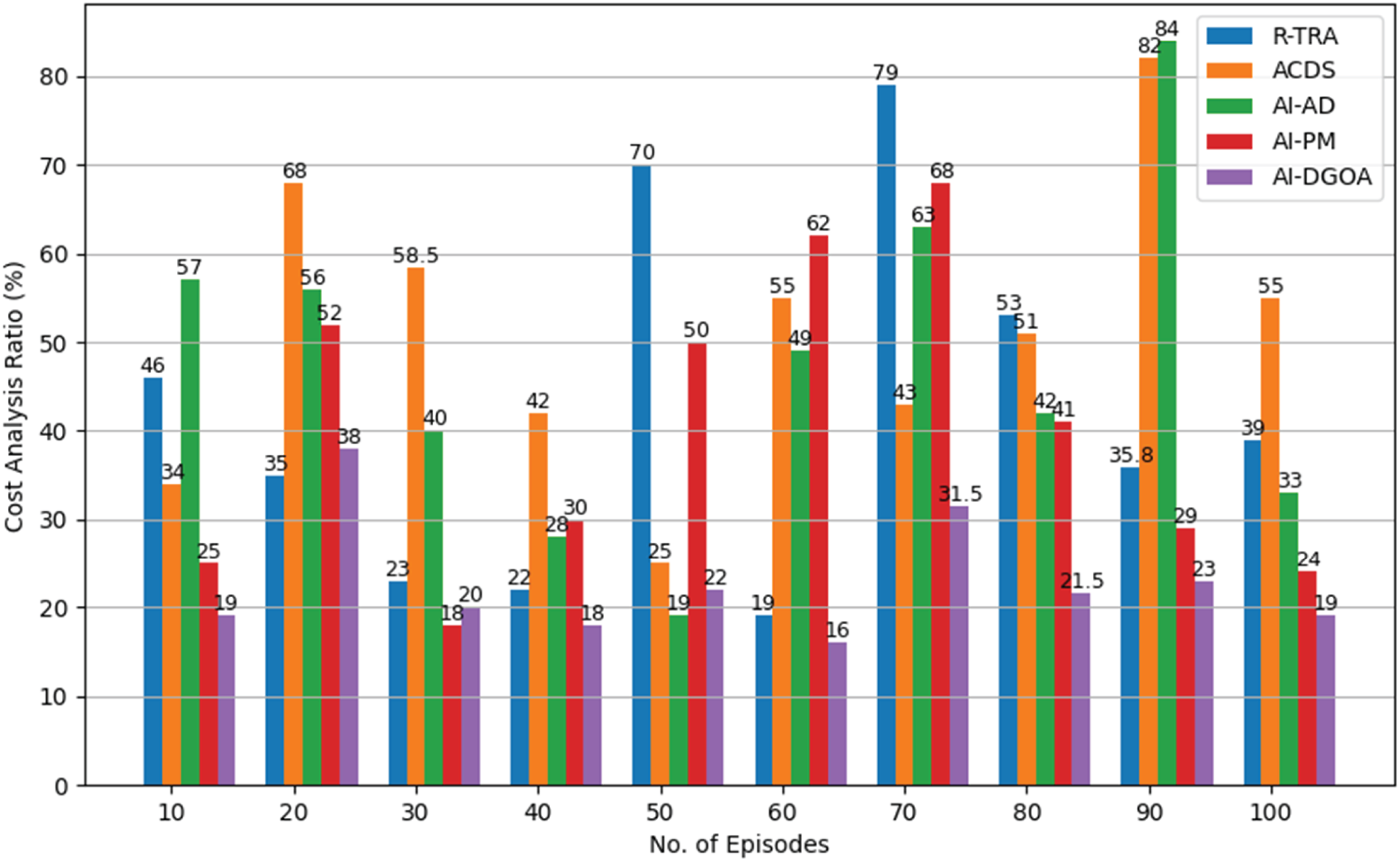

Figure 10 shows the cost analysis, where AI-DGOA performs better than the other methods. The cost analysis includes computational costs and maintenance costs. AI-DGOA's ability to predict grid inefficiencies and equipment problems reduces costly and onerous emergency repairs. Using predictive analytics to plan maintenance extends the lifespan of grid assets, reduces significant repairs, and reduces unexpected interruptions. Predictive maintenance also reduces reactive maintenance expenses for labor and materials. Real-time cybersecurity risk assessments reduce cyberattack-related financial and reputational damage. The proposed method has the lowest cost due to the timely prediction and assessment of threats and reduced complexity. Therefore, AI-DGOA improves grid performance and secures networks at minimum operational cost, with a secure system as the primary objective.

Cost analysis by number of episodes.

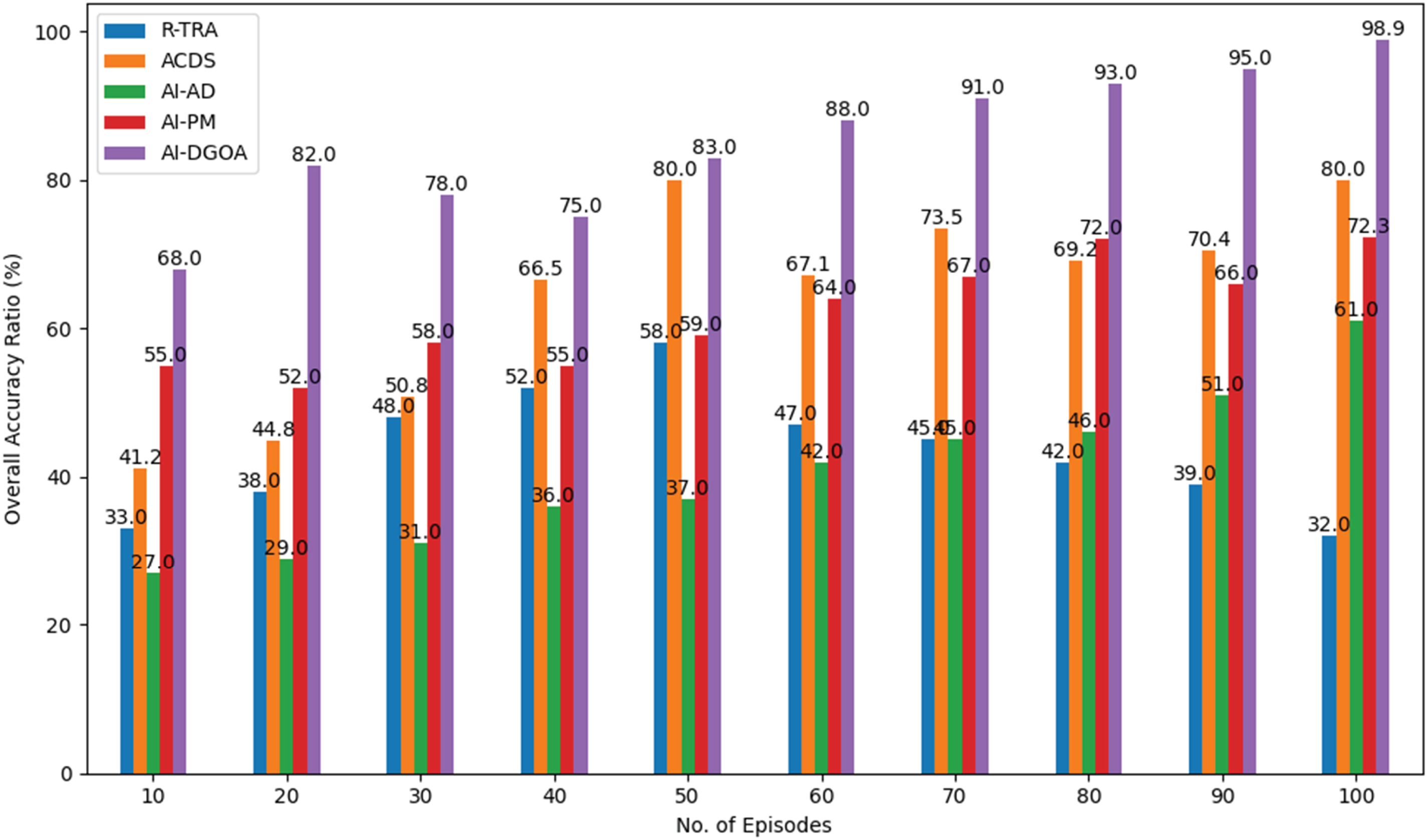

The results from the simulation analysis demonstrate that the AI-DGOA method significantly enhanced the PDN's operations, achieving an overall accuracy of 98.9% across 100 episodes, as shown in Figure 11. This performance is significantly better than that of the baseline methods, such as ACDS and R-TRA, which exhibit more fluctuation in accuracy and less consistent performance. Compared to traditional methods, AI-DGOA offers greater flexibility and continuous operation. The AI engine analyzes data in real-time, allowing organizations to take proactive action before problems arise. This enhances predictive maintenance by identifying equipment failures through ML, thereby minimizing costs and downtime. It also incorporates real-time cybersecurity risk assessments for protection against dynamic cyber-attacks and utilizes load-balancing capabilities to enhance overall stability and performance. In conclusion, AI-DGOA stands out as a leading solution for managing the complexities inherent in modern PDNs, facilitating improved energy service delivery and enhanced cybersecurity RM.

Overall accuracy analysis by number of episodes.

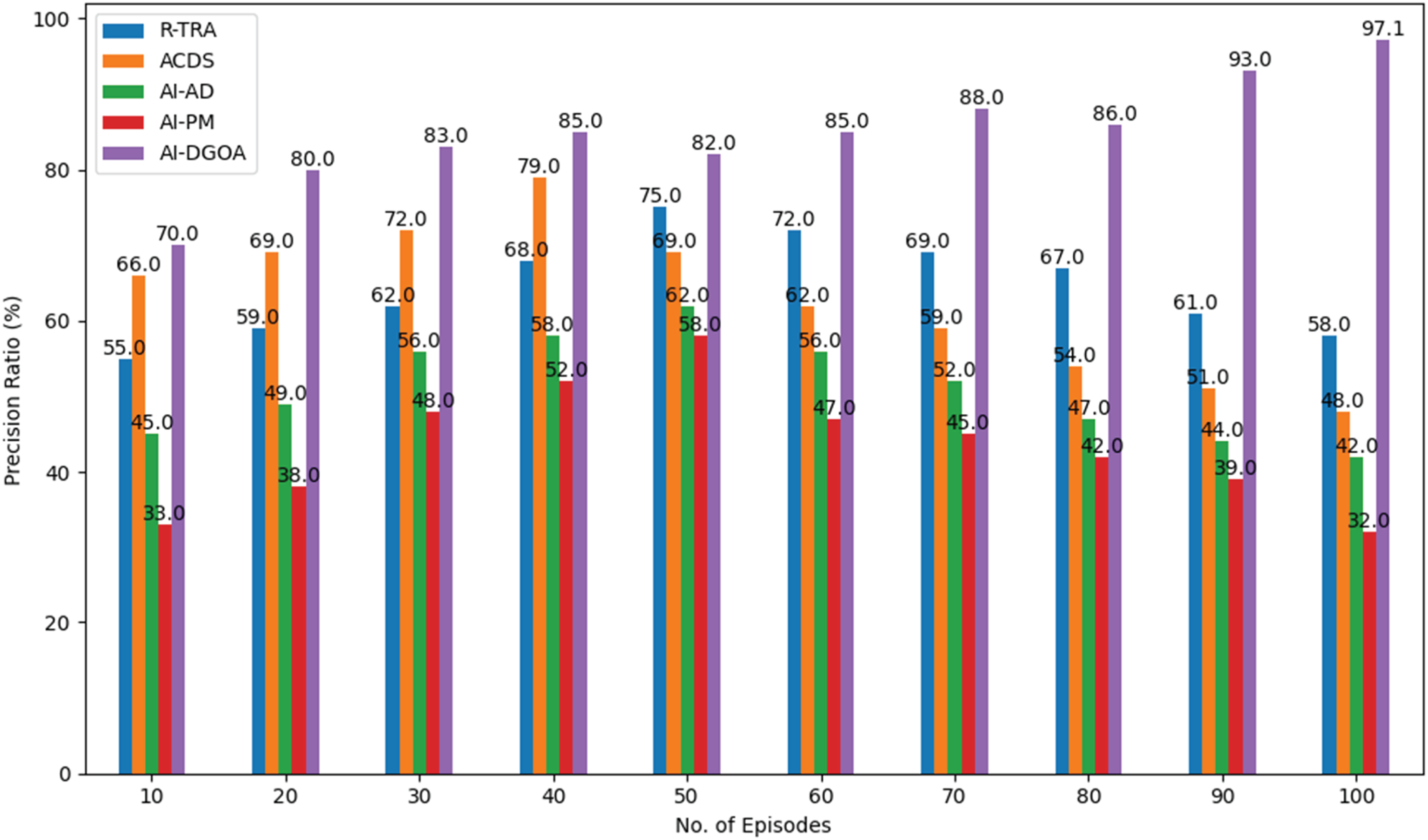
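
The contrast drawn above between steady and fluctuating accuracy can be made concrete with a simple summary statistic. The following is a minimal sketch, assuming per-episode accuracy values are available as plain lists; the function name `summarize_accuracy` and the two sample series are hypothetical illustrations and are not data from this study.

```python
from statistics import mean, pstdev


def summarize_accuracy(per_episode_accuracy):
    """Return (mean, population standard deviation) of per-episode accuracy.

    A lower standard deviation indicates more consistent performance
    across episodes.
    """
    return mean(per_episode_accuracy), pstdev(per_episode_accuracy)


# Hypothetical accuracy traces, for illustration only (not from the paper).
steady_method = [0.97, 0.98, 0.98, 0.99, 0.99]
fluctuating_method = [0.90, 0.96, 0.88, 0.95, 0.91]

for name, trace in [("steady", steady_method), ("fluctuating", fluctuating_method)]:
    avg, spread = summarize_accuracy(trace)
    print(f"{name}: mean accuracy = {avg:.3f}, std dev = {spread:.4f}")
```

Reporting the spread alongside the mean separates a method that is accurate on average from one that is accurate in every episode.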

Figure 12 shows the precision analysis; the proposed AI-DGOA method addresses the aforementioned complexities of PDNs with high precision. The results indicate that AI-DGOA achieves the highest precision, 97.1% across 100 episodes, surpassing the competing techniques R-TRA, ACDS, AI-AD, and AI-PM. Thanks to its dynamic real-time data analysis, AI-DGOA identifies potential problems before significant power supply disruption occurs, mitigating adverse grid effects for superior performance. Moreover, combining state-of-the-art ML-based fault detection and classification algorithms improves the ability to predict maintenance needs and prevent breakdowns and inefficiencies, thereby reducing downtime and maintenance costs. Additionally, AI-DGOA provides real-time cybersecurity risk assessments for the system, offering a strong safeguard against cyberattacks that adapt over time, which is crucial in an increasingly interconnected energy system. Through intelligent predictive modeling of grid failures, optimal load balancing, and secure data management practices, AI-DGOA improves operational resilience and reliability.

Precision analysis by number of episodes.

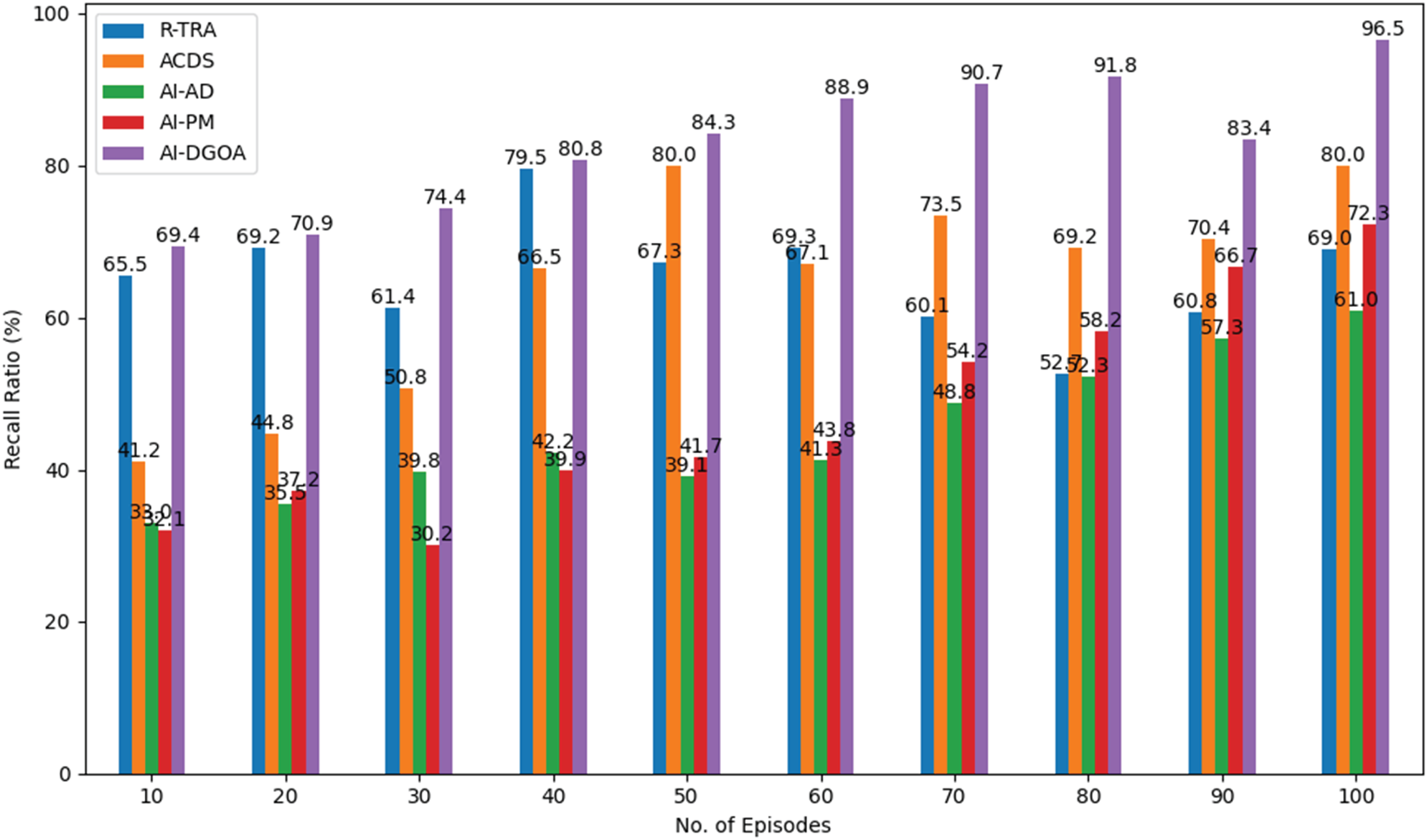

As shown in Figure 13, AI-DGOA attains a recall of 96.5% at 100 episodes. AI-DGOA outperforms the other methods because it monitors real-time data efficiently, which improves the grid's performance and supports proactive decision-making, reducing the chance of power supply interruptions. Integrating state-of-the-art ML algorithms for fault detection and classification within the framework provides a more intelligent predictive maintenance approach, allowing early detection of potential equipment failures and grid inefficiencies and thereby reducing maintenance costs and downtime. Moreover, the incorporation of real-time cybersecurity risk assessments in AI-DGOA strengthens PDNs against emergent cyber threats, safeguarding both operational continuity and data integrity. AI-DGOA thus helps predict grid failures, optimize load distribution, schedule maintenance more effectively, and minimize system risks.

Recall analysis by number of episodes.

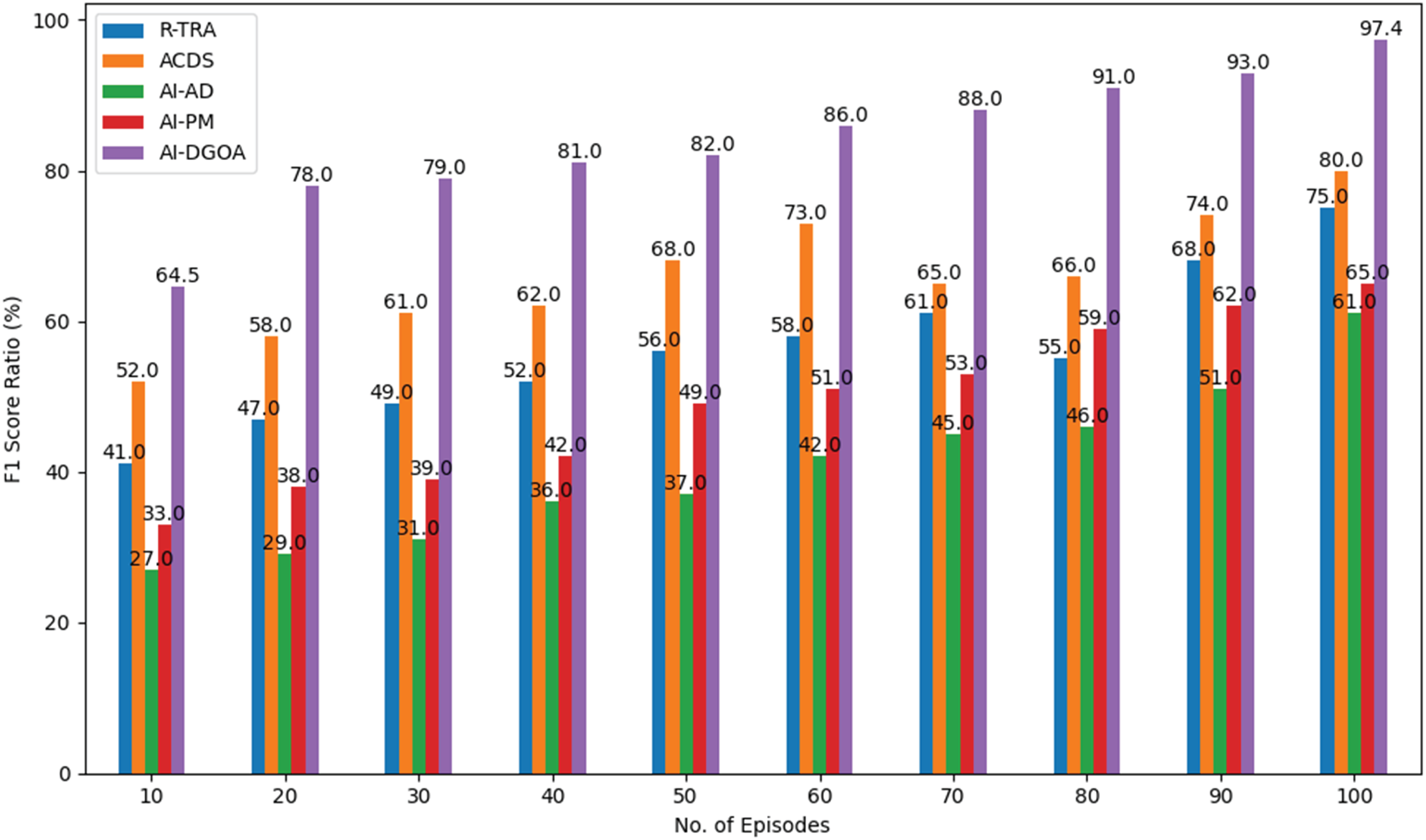

Because it combines precision and recall, the F1 score is an important indicator of the effectiveness of the AI-DGOA method. This balanced metric is the harmonic mean of precision and recall, so it accounts for both false positives and false negatives. With a high F1 score, AI-DGOA is able to efficiently detect the most important problems and risks in PDNs and make accurate forecasts. This balance makes the system trustworthy, which is important for improving cybersecurity RM and optimizing grid performance. At 100 episodes, the proposed method reaches an F1 score of 97.4%, higher than the other baseline methods, as shown in Figure 14.

F1 score by number of episodes.
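
The relationship between precision, recall, and the F1 score discussed above can be sketched in a few lines. This is a minimal illustration using the standard definitions for a binary fault-detection task; the `ConfusionCounts` container and the sample counts are hypothetical and do not reproduce the evaluation pipeline or results of this study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Binary detection outcomes (hypothetical container, not from the paper)."""
    tp: int  # faults correctly flagged
    fp: int  # healthy states wrongly flagged as faults
    fn: int  # faults that were missed
    tn: int  # healthy states correctly passed


def precision(c: ConfusionCounts) -> float:
    """Share of flagged events that were real faults: TP / (TP + FP)."""
    flagged = c.tp + c.fp
    return c.tp / flagged if flagged else 0.0


def recall(c: ConfusionCounts) -> float:
    """Share of real faults that were flagged: TP / (TP + FN)."""
    actual = c.tp + c.fn
    return c.tp / actual if actual else 0.0


def f1_score(c: ConfusionCounts) -> float:
    """Harmonic mean of precision and recall: 2PR / (P + R)."""
    p, r = precision(c), recall(c)
    return 2 * p * r / (p + r) if (p + r) else 0.0


def accuracy(c: ConfusionCounts) -> float:
    """Share of all decisions that were correct."""
    total = c.tp + c.fp + c.fn + c.tn
    return (c.tp + c.tn) / total if total else 0.0


# Illustrative counts only; these are not results from the study.
counts = ConfusionCounts(tp=90, fp=10, fn=30, tn=870)
print(f"precision={precision(counts):.3f} recall={recall(counts):.3f} "
      f"f1={f1_score(counts):.3f} accuracy={accuracy(counts):.3f}")
```

As a harmonic mean, the F1 score always lies between precision and recall and is pulled toward the smaller of the two, which is why it penalizes a detector that trades many missed faults for few false alarms, or the reverse.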

Conclusion

This study introduces an AI-driven solution (AI-DGOA) for addressing the mounting complexities confronting PDNs. We designed the algorithms and framework to enhance the predictive maintenance capabilities of PDNs, substantially decreasing operational expenses and downtime. The fault detection and classification algorithms developed in this study show a marked improvement in identifying impending equipment failures and inefficiencies, thereby facilitating proactive decision-making. This not only optimizes grid performance but also strengthens the dependability of power supply systems. Furthermore, AI-DGOA incorporates real-time cybersecurity risk assessment, addressing the evolving threats encountered by PDNs. By continuously monitoring and assessing cyber risks, the framework helps safeguard critical infrastructure against potential attacks, thus enhancing overall operational resilience. The simulation analysis indicates that AI-DGOA outperforms existing methods, effectively predicting grid interruptions and optimizing load distribution while minimizing cybersecurity vulnerabilities. Future research should focus on refining the AI-DGOA algorithms and exploring their implementation in real-world deployments, so that PDNs can adapt to the challenges of an increasingly digital and interconnected world.

Footnotes

Author contributions

Muhammad Wasim Abbas Ashraf, Janagaraj Avanija, and Tulasi R R Ballireddy: Conceptualization, methodology, software, visualization, investigation, writing—Original draft preparation. Arvind R Singh: Data curation, validation, supervision, resources, writing—review & editing. Mohit Bajaj, Olena Rubanenko: Project administration, supervision, resources, writing—review & editing.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.