Abstract

General Cognitive Ability (GCA) is widely used in personnel selection, with meta-analyses from Europe and North America confirming its predictive validity for job performance. However, Swedish research remains limited, particularly in peer-reviewed literature. This study contributes to the field by summarizing mostly unpublished studies, broadening perspectives beyond academia. A psychometric meta-analysis of 25 studies (24 unpublished, one published) from 1949 to 2024 (N = 2875) applied two restriction of range correction strategies—“considered” and “conservative”—to examine their impact on results. Range restriction occurs when variability in predictor scores is artificially reduced due to pre-selection, leading to underestimated correlations. The observed mean correlation was .19, increasing to .32 after correcting for indirect range restriction and measurement error in job performance. Different correction strategies had minimal impact, reaffirming GCA as a strong predictor of job performance in Sweden. However, further validation studies using Swedish samples are needed to refine correction methods and improve generalizability.

Introduction

General Cognitive Ability (GCA) is one of the most extensively studied constructs in psychology (Spearman, 1904) and has been central to personnel selection since the early development of intelligence tests (Binet and Simon, 1916). While World War I is often cited as the starting point for large-scale GCA testing in selection (Yerkes, 1921), research suggests that several European countries implemented these assessments before North America (Salgado et al., 2010). The widespread adoption of GCA tests in military settings later extended into professional selection systems, making them a key component of modern personnel selection globally (Steiner, 2012).

The predictive validity of General Cognitive Ability for job performance has been extensively documented in North America (Hunter and Hunter, 1984) and Europe (Hülsheger et al., 2007; Salgado et al., 2004). However, Scandinavian and Nordic countries have contributed only sporadically to this research, and Sweden, in particular, remains largely absent from the international research landscape. While studies exist on intelligence and occupational choices in Swedish samples (Hemmingsson et al., 2006) and on individual differences and job performance published by Swedish researchers (Sjöberg et al., 2012), only one peer-reviewed study based on original Swedish data has been identified in major academic databases (Annell et al., 2015). The lack of published research raises concerns about whether findings from North America and other European countries can be generalized to the Swedish labor market, given potential differences in selection practices, labor market policies, and educational systems.

Another key motivation for this meta-analysis is the replication crisis in psychology (Schmidt, 2009). Given increasing concerns about research reproducibility and robustness, broadening the empirical foundation of GCA studies is essential. While most previous meta-analyses rely predominantly on North American and Western European data, Sweden has been underrepresented, despite the widespread use of GCA tests in academic, military, and professional selection. Expanding the knowledge base is crucial, even if cultural differences are assumed to have a limited impact on GCA’s predictive validity.

Finding published studies on GCA and job performance in Sweden proved nearly impossible, necessitating the use of grey literature, including unpublished studies, technical reports, and dissertations. Without incorporating unpublished data, the evidence base would be extremely limited or nonexistent, making this study impossible to conduct. Since GCA’s predictive validity has been firmly established in other regions, assessing its relevance in Sweden requires utilizing all available data sources. Excluding grey literature would not only leave a significant knowledge gap but also risk introducing publication bias, as published studies tend to favor statistically significant findings while disregarding null results (Hopewell et al., 2007). Incorporating grey literature helps mitigate publication bias, ensuring a more accurate and representative estimate of GCA’s predictive validity. However, accessing unpublished data presents an obvious challenge. Fortunately, there is a strong tradition among practitioners in personnel selection to both conduct and document studies on the relationship between GCA and job performance. The willingness of these researchers and consultants to share their documentation has made it possible to carry out this study.

Thus, this study makes an important contribution by leveraging previously unpublished primary studies, adding to the broader body of North American and European research on GCA and job performance. It conducts a meta-analysis of Swedish studies on GCA and job performance to provide the first comprehensive estimate of this relationship in Sweden.

General Cognitive Ability

The fact that individuals differ in their levels of job performance makes it essential for organizations, and applicants, to identify and hire the highest performers. Individual differences in GCA contribute significantly to explaining differences between individuals in many vital areas of life (Gottfredson, 1997a; Jensen, 1998; Neisser, 1996).

Based on the positive correlation between students’ rankings across different subjects in school grades, Spearman (1904) suggested that this shared variance represents a general factor, the g factor, of cognitive ability. In his two-factor theory, Spearman proposed that individual differences in the true score of any ability measurement are attributed to two factors: the g factor, which is common to all GCA assessments, and a second factor specific to each individual measure of mental ability.

In 1939, Holzinger and Swineford proposed the first hierarchical model of intelligence, with a general factor at the top and several uncorrelated specific ability factors below. Although Spearman’s g factor and the hierarchical model have been criticized (e.g., Thurstone, 1947), accumulated research has provided solid evidence for the robustness and soundness of the hierarchical model and for the relevance of the g factor (Carroll, 1993). Competing theories (e.g., Guilford, 1988; Sternberg, 1985) exist but currently lack comparable empirical support (Jensen, 1998). Since the publication of Spearman’s paper in 1904, more than a century of empirical research has demonstrated the pervasive influence of GCA in areas as varied as academic achievement, occupational attainment, socioeconomic status, divorce, and even age of death (e.g., Cucina et al., 2024; Gottfredson, 1997b; Hemmingsson et al., 2006; Sackett et al., 2024).

Today, it is fair to say that there is broad consensus in the scientific community concerning the hierarchical structure of cognitive ability, the existence of the GCA factor, and the definition of the construct. GCA does not represent a narrow academic intelligence, but will manifest itself in almost any realm of activity that involves active information processing. A definition proven to be useful in applied psychology is the one presented by Gottfredson (1997b), which was first published in the Wall Street Journal in 1994 as part of an editorial written by Gottfredson and signed by a number of colleagues. In their words, GCA ‘is a very general mental capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly and learn from experience’ (Gottfredson, 1997b: 13).

Thus, the degree to which an individual is able to learn, adapt, deal with complexity, and process job-relevant information appears to determine his or her work behavior in general. In addition, the relationship between GCA and job performance has been found to be linear, which implies that higher levels of GCA are consistently related to higher levels of job performance, and that there is no point where a higher level of GCA is negatively related to job performance (Sackett et al., 2008). Demographics such as ethnicity and gender as well as organizational, national, and cultural settings have not been found to moderate the relationship between GCA and job performance (Hunter and Hunter, 1984).

The empirical support for using lower-order factors for personnel selection purposes (i.e., for predicting job performance) is less convincing. Specific abilities are generally less important for explaining behavior than GCA, and research suggests that the incremental validity of specific abilities (defined as ability factors unrelated to the general factor) in the prediction of performance and training outcomes is minimal when more general factors are taken into account (Ree and Carretta, 2022; Ree et al., 1994). The reason for this might be that most tasks that involve active information processing rely on a range of abilities rather than one specific ability.

Job performance

The main objective of collecting and combining data about applicants in personnel selection is to predict job performance. Accurately rank-ordering applicants based on predictions of their future job performance, and making hiring decisions based on this rank-ordering, constitutes the very essence of personnel selection (Schmidt and Hunter, 1998).

The conceptualization of job performance as a hierarchical construct, where general job performance represents the highest-order, most generalizable factor at the top of the performance taxonomy and specific performance domains are situated at lower levels, has received strong empirical support (Viswesvaran et al., 2005).

In occupational settings, the general factor of job performance is defined as ‘scalable actions, behaviors, and outcomes that employees engage in or bring about, which are linked with and contribute to organizational goals’ (Viswesvaran and Ones, 2000: 216). The general factor of job performance is an aggregation of the primary performance domains, and there is strong support for a structure of three primary job performance domains—task performance, contextual performance, and avoidance of counterproductive work behaviors (Rotundo and Sackett, 2002). All three domains contribute to the general factor of job performance and represent three distinctly different aspects of human behavior and performance in the workplace. Although many meta-analyses have focused on primary dimensions of job performance (e.g., Gonzalez-Mulé et al., 2014), this study investigates the overall construct of job performance.

GCA and job performance

Recently, Sackett et al. (2022) partially re-analyzed data from General Aptitude Test Battery (GATB) testing in the 1980s and substantially revised the earlier conclusions. The authors suggested that the correlation reported in the 1980s was likely overestimated. Instead of .51 (Hunter, 1980), their new estimate was substantially lower at .31, primarily due to methodological issues related to range restriction corrections (e.g., Rydberg, 1968; Sackett et al., 2022), as described in more detail below. This re-analysis and a new meta-analysis (Sackett et al., 2024), which includes studies from 2000 to 2021, suggest a current effect of only .22, considerably lower than in previous meta-analyses (Hunter and Hunter, 1984).

In the new meta-analysis by Sackett et al. (2024), it is stated that previous results (such as Schmidt and Hunter, 1998; Salgado et al., 2004) are based on “old” data, and that working life today looks different. It is postulated that we have transitioned from more traditional industrial jobs to performing more roles within the service sector, which may not require the same attributes. Sackett et al. (2024) also highlight that previous studies in North America primarily rely on a single GCA test, namely the General Aptitude Test Battery (GATB), making it difficult to generalize the results to GCA measured with other instruments.

Above all, sharp criticism is directed at the method used in earlier studies to calculate the so-called operational validity, specifically regarding whether and how to correct for what is known as range restriction. Restriction of range occurs when data for a predictor (in this case, GCA) are collected in a single validation study, where the same predictor has been directly or indirectly used for selection decisions on the same group that the study’s results are based on (Lang et al., 2010).

Direct range restriction occurs when a GCA test is used for selection decisions, such as when organizations set a threshold score on a GCA test for applicants to qualify. Indirect range restriction occurs when another predictor influences the distribution, such as when grades, highly correlated with GCA (Roth et al., 2015), are considered during the selection process. In both cases, when the relationship between GCA and job performance is examined, either in concurrent or predictive studies, the variation in GCA is reduced compared to what it would be if the study included all applicants, including those not selected.
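The attenuating effect of direct range restriction can be illustrated with a small simulation. The sketch below is not part of the study: the population correlation of .40, the selection ratio of 30%, and the sample size are hypothetical values chosen only for illustration. Applicants are "hired" if they score above a GCA cutoff, and the GCA–performance correlation is then computed in the full applicant pool and among the hired only.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation between two equally long sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def simulate_direct_restriction(rho=0.40, n_applicants=20000,
                                selection_ratio=0.30, seed=1):
    """Hire the top share of applicants on GCA and compare correlations.

    All default values are hypothetical and serve only as an illustration.
    """
    rng = random.Random(seed)
    gca, perf = [], []
    for _ in range(n_applicants):
        x = rng.gauss(0, 1)
        # Performance correlates rho with GCA; the remainder is random noise.
        y = rho * x + math.sqrt(1 - rho ** 2) * rng.gauss(0, 1)
        gca.append(x)
        perf.append(y)
    # Cutoff score such that roughly `selection_ratio` of applicants pass.
    cutoff = sorted(gca)[int(n_applicants * (1 - selection_ratio))]
    hired = [(x, y) for x, y in zip(gca, perf) if x >= cutoff]
    hired_x = [x for x, _ in hired]
    hired_y = [y for _, y in hired]
    return pearson_r(gca, perf), pearson_r(hired_x, hired_y)


r_applicants, r_hired = simulate_direct_restriction()
print(f"applicants: r = {r_applicants:.2f}, hired only: r = {r_hired:.2f}")
```

Under these assumptions, the correlation among the hired is clearly lower than in the applicant pool, even though the underlying relationship is identical in both groups.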

Schmidt and Hunter (1998) corrected for restriction of range in both predictive and concurrent studies using available estimates. In contrast, Sackett et al. (2024) did not apply range restriction corrections in concurrent studies, despite having relevant data. Their differing assumptions reflect a broader stance by Sackett et al. (2022), who argue that range restriction is often negligible in typical selection settings and therefore should not be corrected in concurrent or validity generalization studies based on concurrent designs. Criticism of the “new” method, which has not fully corrected correlations in all studies, quickly emerged. Ones and Viswesvaran (2023: 363) state that “many researchers consider it good science to be conservative, but conservative estimates are by definition biased. We believe it is more appropriate to strive for unbiased estimates since the research goal is to maximize the accuracy of the final estimates.” And Oh et al. (2023) simply argue that Sackett’s new way of correcting (or rather the lack of correction) is unreasonable and that more work is needed to fully understand the representativeness of estimates of restriction of range and optimal correction procedures under typical conditions.

Further, Bobko et al. (2025) argue against Sackett et al.’s conservative estimation approach, claiming that the method they use is fundamentally flawed and misleading because some values are corrected for range restriction while others are not when validities are directly compared. Based on these and other concerns, Bobko et al. (2025) outline an alternative “considered estimation” strategy for comparing predictors of job performance.

In this study, we report the positions we take to calculate the so-called operational validity, i.e., the practical validity of using a GCA test to predict overall job performance. Operational validity reflects the correlation between GCA scores and job performance in real-world settings, where range restriction and measurement error are factors that can underestimate validity.

By focusing on operational validity, we measure the strength of this relationship within actual hiring practices, providing a more accurate estimate of the GCA test’s predictive power under practical conditions. According to established guidelines, both the observed correlation between GCA and job performance and the corrected correlation, adjusted for range restriction in GCA and measurement error in job performance (i.e., operational validity), are reported (Binning and Barrett, 1989; Schmidt and Hunter, 2015).

Challenges of GCA testing in Sweden

GCA testing began in Sweden in the early 1900s (Jäderholm, 1914), and the Swedish Scholastic Aptitude Test (SweSAT), developed in the 1960s, was based on GCA principles. Such tests have also been widely used in military enlistment: since 1944, nine versions of the Enlistment Battery have been developed (Husén, 1948). Despite these advancements, GCA testing in Sweden has faced political and ideological opposition. During the 1960s and 1970s, it was criticized as a capitalist tool, limiting its acceptance in occupational contexts. This debate shaped the training of industrial-organizational (I-O) psychologists, creating a generational divide in attitudes toward GCA testing. Psychologists trained in the 1960s typically had a strong foundation in psychometrics, whereas those trained in later decades received limited education in this area, fostering hostility toward GCA testing, particularly in personnel selection. This resistance has also reduced the academic community’s interest in publishing studies on the relationship between GCA and job performance, making it impossible to conduct a meta-analysis based solely on Swedish peer-reviewed publications.

Consequently, this study, based on 25 studies conducted between 1949 and 2024, provides a unique contribution for practical recruiters in Sweden. It also complements the recently published meta-analysis of both published and unpublished studies (Sackett et al., 2024), which primarily focuses on North American samples.

Method

Sample and inclusion criteria

We adopted the same criteria as Sackett et al. (2024) for measuring GCA, which required diverse problem-solving items (thus excluding, for example, tests based solely on matrices). We included studies measuring overall job or task performance, rated by supervisors or through objective job-related behavior. Excluded were studies using self, peer, or subordinate ratings, those focusing on narrow performance aspects like citizenship or counterproductive behavior, and those targeting non-performance outcomes like turnover, job satisfaction, or training performance. As far as we know, no compilation of studies conducted in Sweden exists, so we expanded our search beyond the last 20 years to include all available Swedish and English databases.

A literature search was conducted across various publicly available electronic databases to gather relevant studies. Google Scholar and ProQuest Dissertations and Theses were utilized, using search terms such as “cognitive ability,” “job performance,” and “Swedish sample.”

To ensure a diverse dataset, outreach was conducted via LinkedIn, Facebook, and personal contacts to solicit studies directly from Swedish consultants and test publishers. Additionally, all Swedish university databases were searched for master’s theses or dissertations unavailable in other databases. Unfortunately, only one published peer-reviewed study was found (Annell et al., 2015). However, these efforts resulted in 25 observed GCA–job performance correlations, which formed the input for our analysis (see Appendix 1). The results were derived from four different types of sources: technical psychometric manuals for published psychological tests (e.g., Sjöberg et al., 2006), published books (e.g., Hubendick, 2023), internal reports from Swedish authorities (e.g., Andersson et al., 1968), and internal documents from private companies. Since almost all studies were unpublished, it was impossible to fully assess the quality of the data. However, even though they had not undergone peer review, there was extensive documentation in manuals and internal reports, which was made available to the authors. In cases of uncertainty, direct contact was made with the researchers who conducted the studies to clarify any ambiguities.

Analysis

In some studies, multiple correlations were reported rather than a single correlation. In cases where it was not possible to directly reproduce a correlation between GCA and job performance, we employed a composite formula instead of simply averaging the correlations. This approach ensures a more accurate representation of the correlation levels for each individual study, thereby avoiding potential underestimation of operational validity (Le et al., 2007; Schmidt and Hunter, 2015). In one study, we could not find the correlations between the three GCA tests used as predictors. In this specific case (Study 25), only the average was used to ensure the study’s inclusion in the analysis.
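To make the difference between a composite and a simple average concrete, the sketch below computes the correlation between a unit-weighted sum of standardized tests and a criterion, which is the standard composite formula described by Schmidt and Hunter. The three validities and the test intercorrelations are hypothetical values, not data from any included study, and the function name is ours.

```python
import math
from itertools import combinations


def composite_validity(validities, intercorrelations):
    """Correlation between a unit-weighted sum of standardized tests and a criterion.

    validities: list of r(test_i, criterion)
    intercorrelations: dict mapping (i, j) with i < j to r(test_i, test_j)
    """
    k = len(validities)
    sum_intercorr = sum(intercorrelations[(i, j)]
                        for i, j in combinations(range(k), 2))
    # Variance of a sum of k standardized variables: k + 2 * sum of correlations.
    composite_variance = k + 2 * sum_intercorr
    return sum(validities) / math.sqrt(composite_variance)


# Hypothetical values for three subtests (not taken from any included study).
validities = [0.20, 0.15, 0.25]
intercorrelations = {(0, 1): 0.50, (0, 2): 0.40, (1, 2): 0.60}

r_composite = composite_validity(validities, intercorrelations)
r_average = sum(validities) / len(validities)
print(f"composite r = {r_composite:.3f}, simple average r = {r_average:.3f}")
```

Unless the tests are perfectly intercorrelated, the composite correlation exceeds the simple average when the validities are positive, which is why averaging tends to understate the validity of the full test battery.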

A psychometric meta-analysis was conducted using the “psychmeta” package in R (Dahlke and Wiernik, 2019). The Schmidt and Hunter approach, utilized in “psychmeta” and combined with a random effects model, offers a comprehensive framework for handling the complexities often encountered in meta-analytic research. In this study, we will report the positions we take to calculate the operational validity, based on the “considered estimation” strategy (Bobko et al., 2025), but to understand how this might affect the results, we complement the analysis with a “conservative estimation” (Sackett et al., 2022).
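The first step of a Schmidt and Hunter type meta-analysis can be sketched as a "bare-bones" computation: a sample-size-weighted mean correlation, the observed variance of the correlations, and the share of that variance expected from sampling error alone. The sketch below is a simplified textbook variant, not a reproduction of the psychmeta implementation, and the four correlations and sample sizes are hypothetical.

```python
def bare_bones_meta(correlations, sample_sizes):
    """Sample-size-weighted mean r and variance attributable to sampling error."""
    k = len(correlations)
    total_n = sum(sample_sizes)
    mean_r = sum(n * r for r, n in zip(correlations, sample_sizes)) / total_n
    # Sample-size-weighted observed variance of the correlations.
    var_obs = sum(n * (r - mean_r) ** 2
                  for r, n in zip(correlations, sample_sizes)) / total_n
    # Expected sampling-error variance, based on mean r and average sample size.
    mean_n = total_n / k
    var_err = (1 - mean_r ** 2) ** 2 / (mean_n - 1)
    # Residual variance is truncated at zero.
    var_res = max(var_obs - var_err, 0.0)
    pct = 100.0 if var_obs == 0 else min(100.0, 100 * var_err / var_obs)
    return {"k": k, "N": total_n, "mean_r": mean_r, "var_obs": var_obs,
            "var_err": var_err, "var_res": var_res,
            "pct_var_sampling_error": pct}


# Hypothetical input, not the study data.
result = bare_bones_meta([0.05, 0.35, 0.40, 0.10], [200, 80, 120, 100])
for name, value in result.items():
    print(name, round(value, 4))
```

Corrections for range restriction and criterion unreliability are applied on top of these bare-bones quantities in a full psychometric meta-analysis.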

As previously described, restriction of range refers to a reduction in the observed score variance of a sample (e.g., the selected applicants) compared to the variance of the entire population (e.g., all applicants to the position). In other words, restriction of range occurs when the variance of a predictor in a sample is reduced due to some form of pre-selection (Sackett and Yang, 2000). In practice, this involves comparing the standard deviation (SD) of the applicant sample with that of the incumbent sample, allowing for the computation of a u ratio (incumbent SD/applicant SD). This u ratio is then applied in correction formulas, such as Thorndike’s (1949) Case II formula, to adjust the observed correlation for range restriction effects. By accounting for the variance reduction caused by pre-selection, these corrections provide a more accurate estimate of the true relationship between predictors (e.g., GCA) and job performance. This ensures that selection tools are evaluated based on their actual predictive validity, rather than an artificially deflated correlation due to restricted variance in the incumbent sample.
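As a minimal sketch of how the u ratio enters the correction, the function below implements Thorndike's Case II formula for direct range restriction. The observed correlation of .19 is the mean reported in this study, whereas the u ratio of .70 is a hypothetical value chosen only to illustrate the calculation.

```python
import math


def correct_case_ii(r_observed, u):
    """Thorndike Case II correction for direct range restriction.

    u = incumbent SD / applicant SD (values below 1 indicate restriction).
    """
    if u <= 0:
        raise ValueError("u must be positive")
    big_u = 1 / u  # applicant SD / incumbent SD
    return (big_u * r_observed) / math.sqrt(
        1 + (big_u ** 2 - 1) * r_observed ** 2
    )


# u = 0.70 is a hypothetical u ratio, used for illustration only.
r_corrected = correct_case_ii(0.19, 0.70)
print(f"observed r = 0.19, corrected r = {r_corrected:.3f}")
```

With u = 1 (no restriction) the formula returns the observed correlation unchanged, and the smaller the u ratio, the larger the upward correction.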

Range restriction is a widespread issue in selection research. While recent years have seen intense debate on this topic without a clear consensus, it remains essential to clearly describe our approach to this issue. Of the 25 studies, 13 were predictive, meaning that GCA test data were collected before performance measures. In these 13 predictive studies, researchers and/or the study documentation provided individual estimates of restriction of range, which were therefore used in the analysis. Among the remaining 12 studies (concurrent validations), five involved researchers who had access to the same applicant population as the incumbents in the validation study. These five studies were therefore corrected for restriction of range. In the remaining concurrent studies, where no information on restriction of range was available, the results were calculated without adjustment.

For the main analysis, the indirect restriction of range method was applied to the 13 predictive studies and the five concurrent validity studies (Hunter et al., 2006). Although the approach described by Hunter et al. may not be ideal under certain breached assumptions, it typically offers a more accurate assessment of operational validity than the alternatives (i.e., either omitting correction or presuming direct range restriction). Consequently, it is advisable to use this method when the information required for more sophisticated corrections is not available (Morris, 2023). For comparison, supplementary analyses will be presented in the results to examine the effects of our methodological decisions on the outcome (i.e., considered estimation) in contrast to more conservative estimation (i.e., Sackett et al., 2024).
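The logic of the indirect range restriction correction can be sketched in five steps, following our reading of the procedure described by Hunter et al. (2006). This is an illustrative sketch rather than the psychmeta implementation used in the analysis. The observed correlation (.19) and the criterion reliability (.61) come from this study, while the u ratio of .70 and the applicant-level predictor reliability of .90 are hypothetical.

```python
import math


def correct_indirect_rr(r_xy, u_x, rxx_applicant, ryy_incumbent):
    """Operational validity under indirect range restriction (sketch)."""
    if u_x ** 2 <= 1 - rxx_applicant:
        raise ValueError("u_x is too small for the given predictor reliability")
    # Step 1: predictor reliability in the restricted (incumbent) group.
    rxx_incumbent = 1 - (1 - rxx_applicant) / u_x ** 2
    # Step 2: u ratio for predictor true scores.
    u_t = math.sqrt((u_x ** 2 - (1 - rxx_applicant)) / rxx_applicant)
    # Step 3: remove measurement error in both variables (restricted group).
    rho_restricted = r_xy / math.sqrt(rxx_incumbent * ryy_incumbent)
    # Step 4: Case II-type correction using the true-score u ratio.
    big_u = 1 / u_t
    rho_true = (big_u * rho_restricted) / math.sqrt(
        1 + (big_u ** 2 - 1) * rho_restricted ** 2
    )
    # Step 5: reintroduce predictor unreliability at the applicant level,
    # because selection decisions are based on observed test scores.
    return rho_true * math.sqrt(rxx_applicant)


# u_x = 0.70 and rxx_applicant = 0.90 are hypothetical illustration values.
operational_validity = correct_indirect_rr(0.19, 0.70, 0.90, 0.61)
print(f"operational validity = {operational_validity:.3f}")
```

Because the true-score u ratio in step 2 is smaller than the observed-score u ratio, this correction typically yields a larger estimate than a direct correction based on the same u ratio.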

Of the 25 studies, 20 utilized supervisory ratings of overall job performance, while the remaining five employed objective criteria (i.e., production results). As an example of objective measures, time studies of tractor drivers and their efficiency in loading can be mentioned, where the ratio between the number of working hours and the number of stoppages during loading served as a measure of work performance. No studies presented reliability data (i.e., intraclass correlation coefficients) that were usable for correcting the observed correlation. Estimates from the latest update of reliability levels for supervisory ratings were obtained (Zhou et al., 2024): .61 for non-managerial jobs and .48 for managerial jobs using Schmidt and Hunter’s meta-analysis method. For studies that had objective criteria, the reliability was set to 1.00. We chose these estimates even though later analyses have shown slightly higher reliability levels for direct supervisory ratings (Speer et al., 2024). Except for two, all studies included in the analysis involved non-managerial jobs. The descriptive data for all included studies are presented in Appendix 1.
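The correction for measurement error in the criterion divides the observed correlation by the square root of the criterion reliability. The sketch below applies this classical attenuation formula with the reliability values stated above. The dictionary keys and function name are ours, and the example applies the correction in isolation, without any range restriction correction.

```python
import math

# Criterion reliabilities stated in the text (Zhou et al., 2024),
# plus 1.00 for objective criteria.
CRITERION_RELIABILITY = {
    "supervisor_nonmanagerial": 0.61,
    "supervisor_managerial": 0.48,
    "objective": 1.00,
}


def correct_for_criterion_unreliability(r_observed, criterion_type):
    """Disattenuate an observed correlation for unreliability in the criterion."""
    ryy = CRITERION_RELIABILITY[criterion_type]
    return r_observed / math.sqrt(ryy)


# Observed mean correlation from this study, corrected for criterion
# unreliability only (illustration, not a reported result).
for criterion_type in CRITERION_RELIABILITY:
    corrected = correct_for_criterion_unreliability(0.19, criterion_type)
    print(f"{criterion_type}: {corrected:.3f}")
```

The lower the assumed reliability, the larger the correction, which is why the choice between the .61 and .48 estimates matters for studies of managerial jobs.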

Results

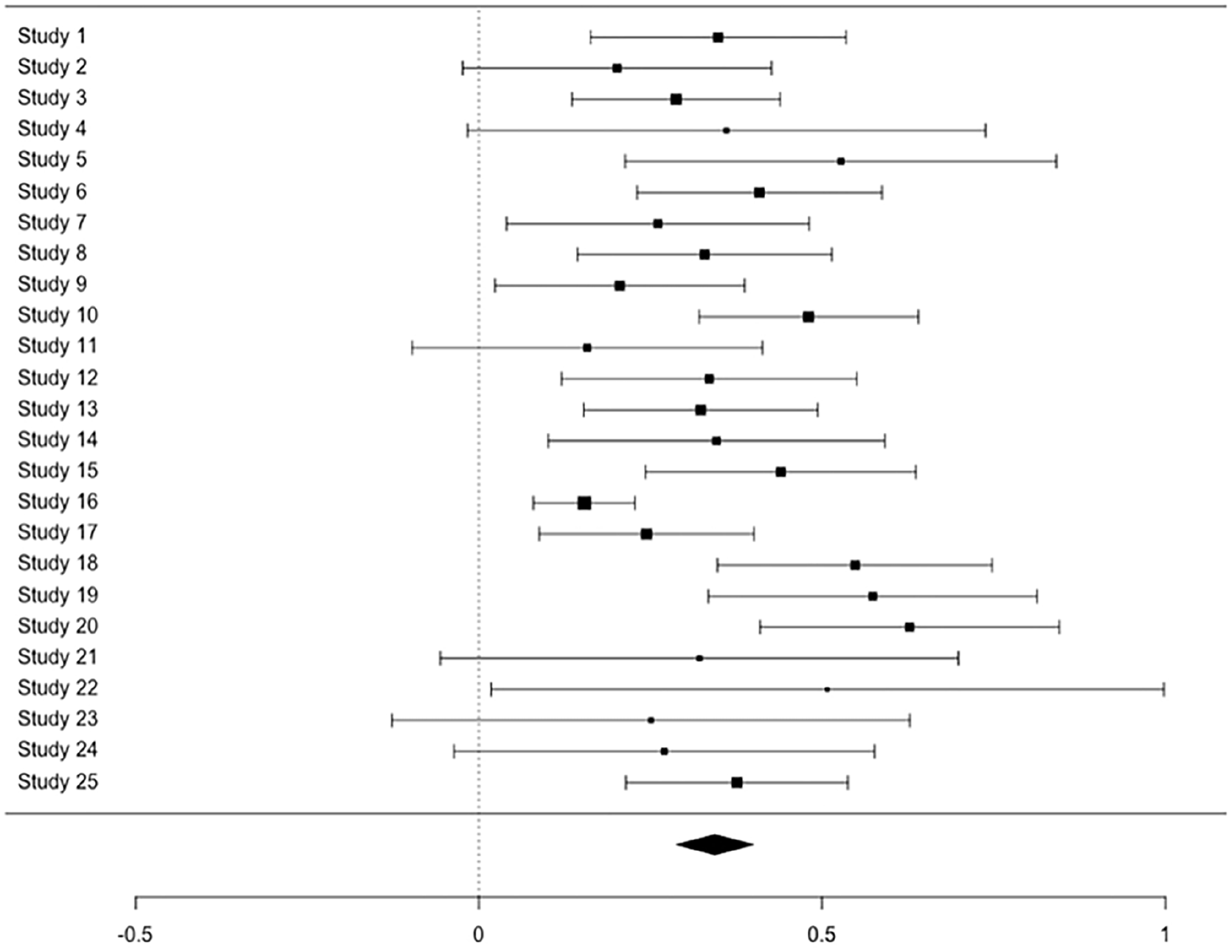

Figure 1 presents a forest plot of the corrected correlation effect sizes and 95% confidence intervals for the 25 studies included in the meta-analysis. Each row represents a study, with the black square indicating the point estimate of the correlation between General Cognitive Ability (GCA) and job performance. Square size reflects the study’s weight, typically based on sample size or precision, and the horizontal line shows the confidence interval.

Figure 1. Forest plot.

The vertical dashed line at zero indicates the null effect. Studies whose intervals do not cross this line are statistically significant at the .05 level. The diamond at the bottom represents the overall effect size from the random-effects model, with its center showing the mean estimate and its width the 95% confidence interval. Its position to the right of zero suggests a positive relationship between GCA and job performance.

Outliers may be influential if their inclusion or exclusion unduly affects the meta-analysis conclusions. To further investigate whether any studies deviated from the main result, we used Viechtbauer’s (2010) approach within the R metafor package to identify outliers and potentially influential studies. This approach defines outliers as studies with absolute studentized deleted residuals greater than 1.96, meaning they deviate significantly from the mean effect size. The analysis identified two studies (Study 16 and Study 20) as outliers. One shows a relatively low and the other a relatively high correlation compared to what might be expected, suggesting that these studies deviate significantly from the overall trend in the meta-analysis.
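The idea behind studentized deleted residuals can be sketched as a leave-one-out procedure: each study is compared with the random-effects mean estimated from all other studies, and the difference is scaled by its standard error. The sketch below is a simplified illustration and not the metafor implementation. It assumes the DerSimonian-Laird estimator of between-study variance (metafor defaults to a different estimator), and the effect sizes and sampling variances are hypothetical.

```python
import math


def dl_random_effects(effects, variances):
    """Random-effects mean, its variance, and tau^2 (DerSimonian-Laird)."""
    w = [1 / v for v in variances]
    sum_w = sum(w)
    fixed_mean = sum(wi * y for wi, y in zip(w, effects)) / sum_w
    q = sum(wi * (y - fixed_mean) ** 2 for wi, y in zip(w, effects))
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)
    w_star = [1 / (v + tau2) for v in variances]
    mean = sum(wi * y for wi, y in zip(w_star, effects)) / sum(w_star)
    return mean, 1 / sum(w_star), tau2


def studentized_deleted_residuals(effects, variances):
    """Leave-one-out residuals, each scaled by its standard error."""
    residuals = []
    for i, (y_i, v_i) in enumerate(zip(effects, variances)):
        rest_y = effects[:i] + effects[i + 1:]
        rest_v = variances[:i] + variances[i + 1:]
        mean, var_mean, tau2 = dl_random_effects(rest_y, rest_v)
        residuals.append((y_i - mean) / math.sqrt(v_i + tau2 + var_mean))
    return residuals


# Hypothetical effect sizes and sampling variances (not the study data).
effects = [0.20, 0.25, 0.18, 0.22, 0.60, 0.21]
variances = [0.01] * 6

residuals = studentized_deleted_residuals(effects, variances)
flagged = [i for i, t in enumerate(residuals) if abs(t) > 1.96]
print("flagged study indices:", flagged)
```

Because the flagged study is excluded when the comparison mean is estimated, an extreme study cannot mask itself by pulling the pooled estimate toward its own value.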

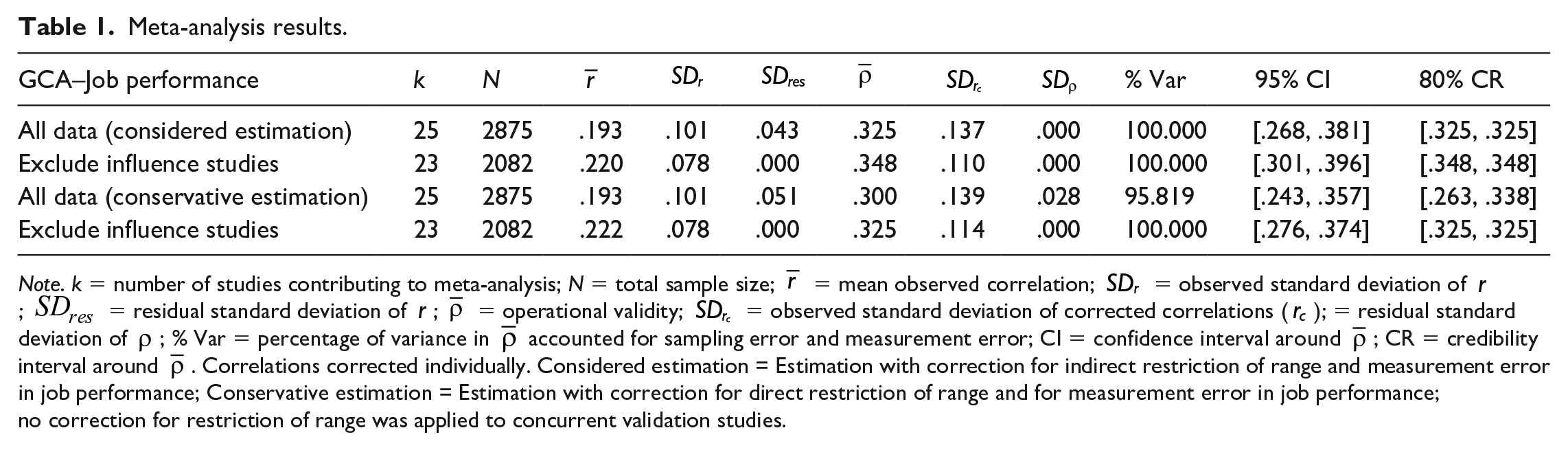

Table 1 provides a detailed breakdown of the meta-analysis results. The column labeled k indicates the number of studies that contributed to the meta-analysis, while N represents the total sample size across those studies. The mean observed correlation is shown as

Table 1. Meta-analysis results.

Note. k = number of studies contributing to meta-analysis; N = total sample size;

The percentage of variance in

The meta-analysis results across 2875 individuals and 25 independent samples yielded an observed mean correlation (

The results with and without the outliers (i.e., after excluding the influential studies) are also presented in Table 1. Excluding these studies resulted in a higher observed mean correlation (

To test whether the results differ significantly when we follow Sackett’s recommendations not to correct for restriction of range in concurrent validation studies and, instead of correcting for indirect restriction of range, correct for direct restriction of range, we used a so-called conservative estimation (Bobko et al., 2014). Correcting for unreliability in the criterion and for direct restriction of range in the predictor produced, as expected, a slightly lower mean corrected correlation (

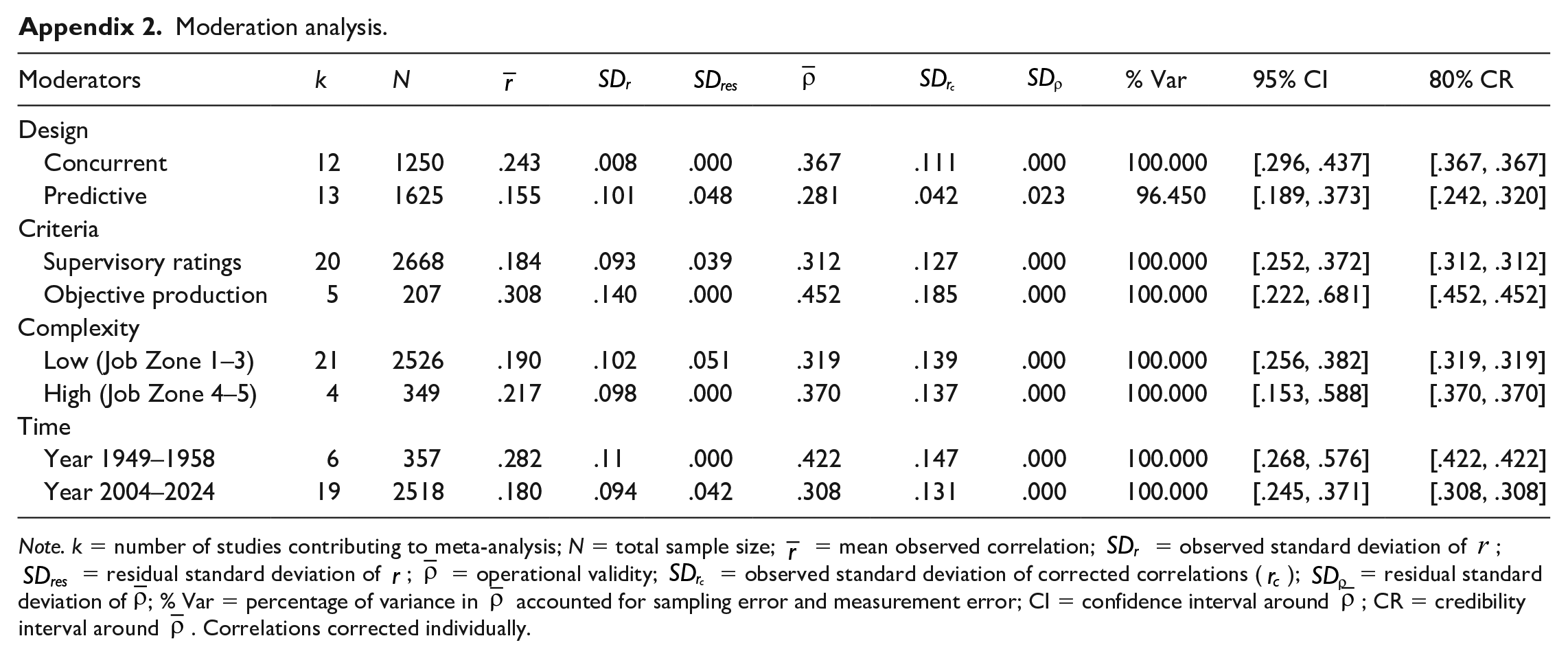

As a supplementary analysis, earlier studies (1949–1958) were separated from later studies (2005–2024), referred to as old and new studies, respectively. We also categorized the studies as concurrent or predictive, as using supervisory ratings or objective performance measures, and as involving low- or high-complexity jobs. These factors were considered potential moderators of the overall results. A meta-regression using a mixed-effects model, entering all moderators in the analysis, was conducted to assess significant differences among the moderators, accounting for both within-study sampling error and between-study variance, using the metafor package in R (Viechtbauer, 2010).

The meta-regression explained 12.96% of the heterogeneity, suggesting that the moderators accounted for a modest portion of the between-study variance. However, the overall test of moderators was not statistically significant, indicating that the moderators included in the analysis did not explain much of the variance. This suggests that unexamined factors may be influencing the differences, or that the selected moderators are weak predictors. Further exploration of other moderators or adjustments to the model may be necessary to explain the remaining heterogeneity. For descriptive purposes, we present the results of the moderation analyses in Appendix 2.

Discussion

The purpose of this study was to investigate whether previous findings on the relationship between GCA and job performance can be generalized to Swedish conditions. The almost complete absence of published studies on Swedish samples necessitated a search for lesser-known studies conducted outside academia. Our review identified studies dating back to the 1940s, which is a strength, as it enables the documentation of previously unknown research over an extensive period.

Earlier research, such as Hunter (1980), suggested a correlation of .51, but more recent analyses indicate that this was likely overestimated. Sackett et al. (2022) re-evaluated historical data and found a significantly lower estimate (

These results highlight the importance of contextual factors and methodological choices when evaluating GCA’s predictive validity. Our study contributes by broadening the empirical base, particularly for Swedish conditions, and by emphasizing the need for further research on the methodological nuances that influence operational validity estimates. Based on this study’s data, a cautious conclusion is that GCA correlates positively with job performance, though perhaps not as strongly as previously thought (Richardson and Norgate, 2015).

The overall effect (

Interestingly, the largest study, based on police applicants, shows the lowest effect (an observed correlation of .08) and is also the only published study (Annell et al., 2015). It seems unlikely that police officers, in particular, would be unaffected by cognitive ability in their work. Instead, the unusually low effect may be due to the aggregation of all regional police groups in Sweden into a single sample. This aggregation could introduce average differences between groups, causing the correlation to approach zero. Other methods used in the same study, such as the structured interview, also showed implausibly low, near-zero results, which supports this interpretation.

Another possible explanation is the criterion used in the study, which was based on supervisory ratings. Many supervisors evaluating their colleagues may not have had sufficient opportunities to closely observe their performance, potentially leading to less reliable assessments. This lack of direct observation could have weakened the observed relationship between GCA and job performance. The highest correlation was found in an older study (Study 20) of tree fellers, with an observed correlation of .44. This study, conducted over 50 years ago—long before the advent of meta-analyses—is included as an appendix in a recently published book about Lennart Bergström (Hubendick, 2023), one of Sweden’s first occupational psychologists. The results were calculated manually, and it is possible that some test results were excluded from the internal report simply due to the time required for manual calculations. By including only the highest correlations, this effect may be somewhat overestimated. However, it is also noted that the study’s design was superior to many later studies. All tests were administered under highly controlled conditions, and the test quality was high, likely covering the entire intended construct, with as many as nine subtests constituting the GCA measure. The criterion measure was also collected under controlled conditions and served as a good marker for all tasks performed by the tree fellers, which may have contributed to the relatively strong effect.

However, when these two studies were removed from the analysis, the corrected effect increased only slightly, from .32 to .35. We can therefore be confident that they do not materially alter our conclusion that Swedish samples show results similar to those from North American data. This holds even though the more recent studies, from the 2000s onward, show higher operational validity (corrected correlation .31, see Appendix 2) than the latest meta-analysis, which is based primarily on North American data (Sackett et al., 2024).
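The logic of such a leave-out check can be sketched as follows. This is a simplified illustration on observed correlations with sample-size weights, not the corrected psychometric analysis reported here. The two extreme correlations echo the values quoted in the text, but every sample size and both remaining entries are invented, so the size of the shift is not comparable to the reported change.

```python
def weighted_mean_r(studies, exclude=()):
    """Sample-size-weighted mean correlation, skipping excluded labels."""
    kept = [(n, r) for label, n, r in studies if label not in exclude]
    total_n = sum(n for n, _ in kept)
    return sum(n * r for n, r in kept) / total_n


# Hypothetical (label, sample size, observed r) triples for illustration.
studies = [
    ("largest", 1000, 0.08),
    ("highest", 50, 0.44),
    ("other_1", 200, 0.20),
    ("other_2", 150, 0.25),
]

mean_all = weighted_mean_r(studies)
mean_trimmed = weighted_mean_r(studies, exclude=("largest", "highest"))

print(f"all studies:       {mean_all:.3f}")
print(f"without extremes:  {mean_trimmed:.3f}")
```

Because the weights are sample sizes, removing one very large low-validity study moves the mean far more than removing one small high-validity study, which is why both are dropped together in a check of this kind.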

The overall result of this Swedish meta-analysis can be compared with the latest meta-analyses from North America. In the military domain, using hands-on military job proficiency measures as criteria, Cucina et al. (2024) found an average effect of .44, considerably higher than the .22 that Sackett et al. (2024) found in civilian occupations. The Swedish estimate falls between these two effect sizes, raising the question of why they differ so much. While range restriction correction may influence the results, it is unlikely to be the sole explanation. Future research should examine the criteria used, with more primary studies on highly complex jobs. Older and newer studies should also be analyzed more thoroughly using consistent methods for range restriction correction in GCA and measurement error in job performance.

Limitations

The fact that most of the included studies have not undergone peer review is, of course, a weakness. The absence of a thorough review of the reports that formed the basis for this meta-analysis is unfortunate. On the other hand, these studies represent solid work by occupational psychologists in organizations focusing on recruitment and selection, and several are well documented in internal reports from consulting firms, public authorities, and test providers, which lends some assurance of quality. Another limitation, compared to other meta-analyses, is that this Swedish study is relatively small in terms of sample size and number of studies, which limits the precision of the estimates. Therefore, this meta-analysis should be supplemented in the future with further studies from Sweden and perhaps from the other Nordic countries.

This study, along with others that have examined the relationship between cognitive ability and job performance, has focused on the general factor (e.g., Sackett et al., 2024). However, Nye et al. (2022) showed that the narrow cognitive abilities least correlated with GCA added significant incremental validity for predicting task performance, training performance, and organizational citizenship behavior. These findings can guide future studies to explore subfactors of GCA as well as different dimensions of job performance.

Furthermore, as an anonymous reviewer pointed out, the lack of reliability studies on supervisory ratings in Swedish samples is a limitation. Therefore, it is important that future studies collect data to assess whether the reliability estimates used for such corrections can be generalized to Swedish conditions.
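To show why the assumed criterion reliability matters, the following minimal sketch applies the standard correction for attenuation due to criterion unreliability, r / sqrt(r_yy), to the observed mean correlation of .19 under several candidate reliabilities. The reliability values are illustrative assumptions, not estimates taken from this study, and the sketch deliberately omits the range restriction correction, so it does not reproduce the reported corrected value of .32.

```python
import math


def correct_for_criterion_unreliability(r_observed, r_yy):
    """Disattenuate an observed correlation for criterion unreliability."""
    if not 0.0 < r_yy <= 1.0:
        raise ValueError("criterion reliability must lie in (0, 1]")
    return r_observed / math.sqrt(r_yy)


r_observed = 0.19  # observed mean correlation reported in this study

# Illustrative reliability values for supervisory ratings (assumptions).
candidate_reliabilities = [0.52, 0.60, 0.70]

corrected = {
    r_yy: correct_for_criterion_unreliability(r_observed, r_yy)
    for r_yy in candidate_reliabilities
}

for r_yy, r_c in corrected.items():
    print(f"assumed r_yy = {r_yy:.2f} -> corrected r = {r_c:.3f}")
```

The lower the assumed reliability, the larger the corrected correlation, so an estimate that does not hold for Swedish supervisory ratings would bias the operational validity in a predictable direction.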

Conclusion

This Swedish meta-analysis provides valuable insights into the relationship between General Cognitive Ability and job performance in a Swedish context. While the results align with international findings, they indicate a slightly lower operational validity than earlier studies, though higher than the most recent meta-analysis by Sackett et al. (2024). The study reinforces GCA’s positive correlation with job performance but underscores the importance of adopting a utility framework for assessing practical application in different contexts. Our hope is that this study will inspire further validation studies in Sweden and that research funding will also support this important area of work and organizational psychology.

Appendix

Moderation analysis.

| Moderators | k | N | | | | | | | % Var | 95% CI | 80% CR |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Design | | | | | | | | | | | |
| Concurrent | 12 | 1250 | .243 | .008 | .000 | .367 | .111 | .000 | 100.000 | [.296, .437] | [.367, .367] |
| Predictive | 13 | 1625 | .155 | .101 | .048 | .281 | .042 | .023 | 96.450 | [.189, .373] | [.242, .320] |
| Criteria | | | | | | | | | | | |
| Supervisory ratings | 20 | 2668 | .184 | .093 | .039 | .312 | .127 | .000 | 100.000 | [.252, .372] | [.312, .312] |
| Objective production | 5 | 207 | .308 | .140 | .000 | .452 | .185 | .000 | 100.000 | [.222, .681] | [.452, .452] |
| Complexity | | | | | | | | | | | |
| Low (Job Zone 1–3) | 21 | 2526 | .190 | .102 | .051 | .319 | .139 | .000 | 100.000 | [.256, .382] | [.319, .319] |
| High (Job Zone 4–5) | 4 | 349 | .217 | .098 | .000 | .370 | .137 | .000 | 100.000 | [.153, .588] | [.370, .370] |
| Time | | | | | | | | | | | |
| Year 1949–1958 | 6 | 357 | .282 | .11 | .000 | .422 | .147 | .000 | 100.000 | [.268, .576] | [.422, .422] |
| Year 2004–2024 | 19 | 2518 | .180 | .094 | .042 | .308 | .131 | .000 | 100.000 | [.245, .371] | [.308, .308] |

Note. k = number of studies contributing to meta-analysis; N = total sample size;

Acknowledgements

In light of the complexities involved, the authors would like to express their sincere appreciation to the practicing work psychologists and organizations who enabled the publication of this article by granting access to previously unavailable data. Special thanks are due to Gudrun Hubendick, whose permission to examine long-forgotten archival materials from the early 1950s has contributed valuable historical depth to the present study.

Declaration of conflicting interests

Both authors are affiliated with commercial organizations that provide psychological testing services on the Swedish market. This potential conflict of interest has been transparently acknowledged, and every effort has been made to ensure that the research has been conducted and presented with scientific integrity and objectivity.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.