Abstract

Energy Performance Certificates (EPCs) are used across Europe as indicators of building energy efficiency and carbon emissions, following the EU Energy Performance of Buildings Directive (EPBD). Despite emanating from a common starting point, the assumptions behind EPC design differ significantly across Europe. Next Generation EPCs (NGEPC) relate to recently proposed updates to the EPBD, suggesting new functions and features that EPCs could adopt to be more useful in supporting decisions on zero carbon buildings. However, given such variation in approaches across Europe, this paper illustrates that a single pathway for upgrading EPCs will be difficult to achieve. In response, the paper presents methods of EPC categorisation that identify differences across European EPC approaches systematically, directly addressing the consequences of a lack of harmonisation for the design of NGEPCs. By accounting for this variation, a framework is proposed for evolving specific EPC approaches to NGEPC status, in a form that is replicable for other EPC methods across Europe. Whilst the paper is informed by approaches in a selection of European countries, the UK is used as a more focussed case-study, with recommendations on how the UK approach may evolve in light of NGEPC progress.

Practical application

The presented work provides guidance and decision-support for implementing changes to EPC frameworks as they incorporate next-generation EPC recommendations. The work focuses on EPC practice in the UK, but in the context of many other European countries: it draws lessons from those countries (and the wider EPBD) while also potentially informing how implementation challenges are addressed in multiple countries.

Keywords

Introduction

Although EPCs have been subject to various Energy Performance of Buildings Directive (EPBD) recasts 1 over many years, such change tends to happen slowly and incrementally. This pace of change is largely defensible: there is a need for consistency when comparing energy assessments over time, and every update to a methodology requires a revision to advice, training, and implementation throughout the EPC journey. It is therefore difficult to push forward more dramatic changes to the various protocols and calculations behind standardised energy assessments of buildings. Despite this challenging starting point, the form that EPCs should take, and the metrics delivered by such a document, have been discussed extensively in recent research on Next Generation EPCs (NGEPC). Horizon-funded European projects, 2 and Technical Committees informed by this work, have suggested new building performance indicators and new EPC formats that countries following the EPBD should explore. Furthermore, in the UK, developments in the energy calculation models behind the EPC 3 have moved beyond purely steady-state calculation engines to explore dynamic, or semi-dynamic, simulation as a basis for modelling energy consumption in buildings.

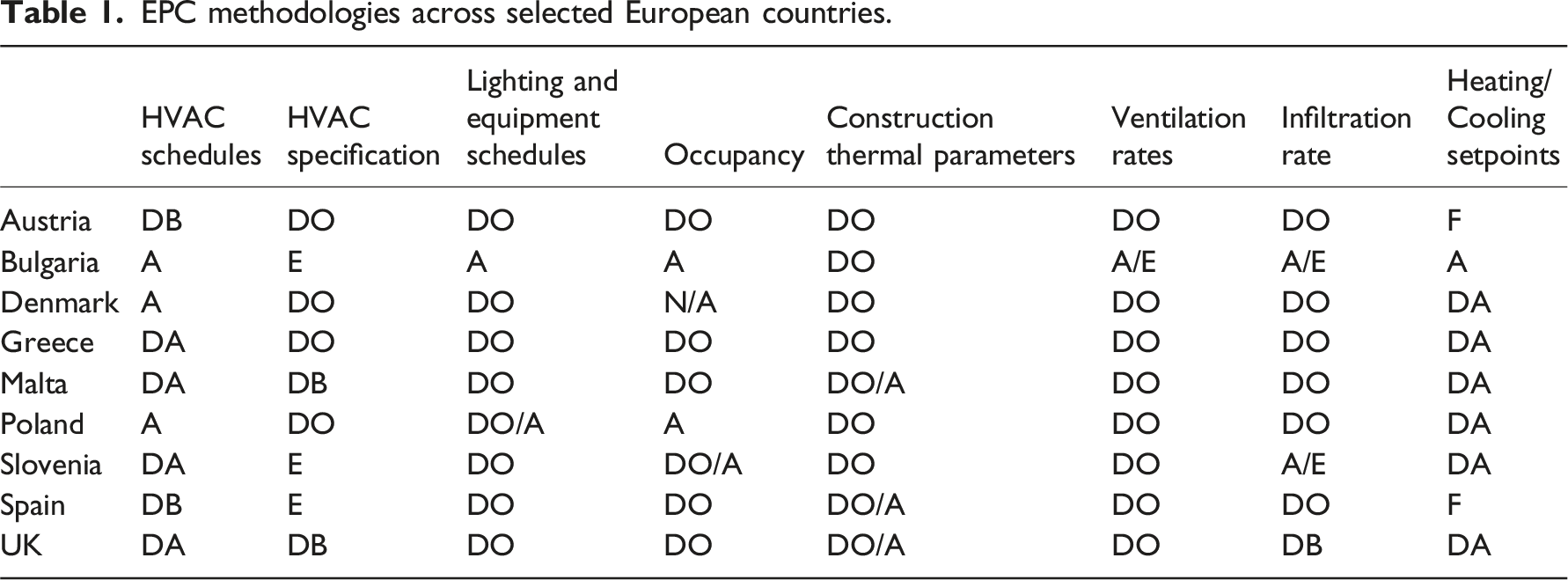

EPC methodologies across selected European countries.

A further obstacle, with the UK no longer a member state of the EU, is that some reporting and test cases on EPC design (and NGEPC design specifically 4 ) no longer include the UK. However, as a signatory to the EPBD, the UK is still affected by updates and recasts and can benefit from active research in other European countries. The crossCert project 5 carried out comparative exercises of EPCs across European countries (including the UK) to understand how and why EPCs differ by country. This comparison was wide-ranging, covering the technical basis used to generate EPCs, assessment protocols, and the training and background of EPC assessors. The project also focussed on the role and opinions of different end-users. This included an investigation of what users of EPCs actually need to make decisions, whether and how existing EPCs meet that brief, and what information next-generation EPCs may deliver in response to those reported needs.

This paper documents that comparative exercise and, noting uncertainty around what form EPCs will take in the near future, outlines a framework for categorising the different EPC approaches on offer. In particular, the paper documents the challenges posed by a lack of harmonisation of European EPCs, and the consequences of this when attempting, at EU level, to upgrade EPC delivery across Europe. Whilst a lack of harmonisation is understandable, since it allows an individual country to develop a process that works for its particular building stock and local energy policy, it raises the question of whether, if a “European EPC” does not exist, EPCs can be upgraded across Europe, at the same time, with generic advice. The findings are captured in a decision-support framework that individual countries could apply when navigating this European-level transition, whilst dealing with more local EPC reform (such as that currently under way with the UK and Scottish Governments 6 ).

The paper refers to EPCs in the UK but, in reality, the situation in the UK is further complicated by differences between its constituent parts. Although different EPC documents are produced in England/Wales compared with Scotland (the latter having an additional carbon rating alongside the conventional EPC rating), the structure and modelling approach of EPCs across the UK are very similar (though not identical). With the Scottish and UK Governments currently looking at EPC reform independently (including the use of EPCs in policy and regulation), it remains to be seen whether these EPC approaches diverge further; if they do, the categorisation approach proposed in this paper may aid future comparisons between these different methods.

Energy compliance of buildings

The EPBD, as the origin of all EPCs across Europe, establishes guidelines for using building simulation and modelling to generate energy ratings for both residential and non-residential buildings. By design, these guidelines are broad enough to allow for local (i.e. country-specific) application and delivery, leading to varied responses from different European countries to the EPBD and its revisions. On a technical basis, the EPBD allows the modelling engine itself to: i) not use dynamic building simulation at all, ii) offer it as a seldom-used option, or iii) make it mandatory for specific building types. For the EPC document, individual countries have considerable flexibility to choose a format that best suits target user audiences within that country. As a result of these choices, the type of EPC assessor each country requires to deliver its EPCs also differs significantly.

The crossCert project investigated how EPC methodologies differ across Europe and the challenges this poses for designing NGEPCs that accommodate emerging needs and metrics for buildings. Along with a broader cluster of Horizon-funded initiatives (some referenced later in this paper), the work aims to shape the future of EPCs, addressing modelling, application, and user challenges.

A range of new EPC output metrics are being proposed by NGEPC research and related committees. Although initially introduced as part of a voluntary initiative, plans have been proposed for the mandatory inclusion of some of these metrics. One of the more prominent proposals is the Smart Readiness Indicator (SRI). The SRI 7 assesses a building’s capability to manage its energy and environmental controls flexibly and intelligently. Collecting the data for this indicator (such as the presence of smart control systems) does not require in-depth technical modelling, yet the indicator can offer a basic indication of the building’s potential for providing grid flexibility services. It is therefore a multi-criteria metric that attempts to summarise, within the confines of a simple energy assessment, several aspects of “smartness” at the same time. As well as raising questions about the inputs required to generate the calculation, the weightings used within the SRI rating across the different aspects being captured (namely energy controls, environmental controls, and the ability to incorporate energy demand flexibility solutions) require a partly subjective judgement within the methodology. Considering the underlying modelling architecture of EPC frameworks, there is also the challenge of characterising inherently dynamic and transient factors (particularly in relation to demand flexibility) within largely steady-state models.
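To illustrate why the weightings matter, a minimal Python sketch of a generic weighted multi-criteria score is given below. It is not the official SRI calculation: the three aspect names follow the text above, but the aspect scores and both weighting schemes are hypothetical, chosen only to show that the same building can receive a noticeably different score when the weights change.

```python
# Hypothetical illustration of a weighted multi-criteria score.
# NOT the official SRI method: aspect scores and weights are invented.

def weighted_score(aspect_scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine aspect scores (0-100) into one score using normalised weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(aspect_scores[name] * w for name, w in weights.items()) / total_weight

# Hypothetical building: strong energy controls, weak flexibility provision.
scores = {"energy_controls": 80.0, "environmental_controls": 60.0, "flexibility": 20.0}

equal = {"energy_controls": 1, "environmental_controls": 1, "flexibility": 1}
flex_heavy = {"energy_controls": 1, "environmental_controls": 1, "flexibility": 2}

print(round(weighted_score(scores, equal), 1))       # 53.3
print(round(weighted_score(scores, flex_heavy), 1))  # 45.0
```

Doubling the weight on flexibility lowers this building's score by more than eight points without any change to the building itself, which is the kind of judgement the text describes as partly subjective.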

Another NGEPC metric is the Operational Energy Rating, 8 which relies on measured energy consumption to derive an energy rating. This requires knowledge of user behaviour, an area not typically covered in any great detail in current assessor training programmes. While existing EPCs (and, arguably, standardised building modelling more generally) often use simplified, generalised user profiles for modelling energy performance, a rating based on actual energy consumption reflects the choices of specific occupants. The increased data requirements for the assessment are significant: the majority of EPCs across Europe do not require measured energy data for buildings, and where they do (e.g. see discussions under 'Differences in EPC assessment') its use is relatively simplified. This places an onus on the assessor to analyse and use this data appropriately, with implications for the training and resources required for an extended assessment. For communicating this information, there is then the question of how (or whether) an empirical rating should be displayed next to a purely modelled rating. This is similar to the comparison of Display Energy Certificates (DEC) and EPCs in the UK, where these two very different (but aesthetically similar) ratings can appear contradictory, even when presented in physically separate documents. 9

Other NGEPC innovations relate as much to the application and interpretation of the results as to the metrics themselves. Building Renovation Passports (BRP) 10 have been proposed to better convey the recommendations of EPCs to particular end-users. Arguably, the standardised approach of EPCs in recommending energy efficiency measures could be well suited to a formatted set of refurbishment advice, and therefore the underlying architecture of EPCs (even very standardised approaches such as the UK EPC) may not require radical change to accommodate BRPs. However, if the intention is to tailor BRPs to a specific end-user (e.g. the actual occupant of a building), this raises questions about how user-specific a set of generic recommendations can be. The UK has some experience of this dilemma through the Green Deal Occupancy Assessments in 2012. 11 Under this scheme, an EPC assessment would be amended as part of the Green Deal Assessment to produce a new set of recommendations informed by occupant-specific data collected during the site visit. This could include actual thermostat settings, reflect a conversation with the occupant about general energy behaviour, and draw on utility bill data where available. Whilst this allowed the more standardised, traditional EPC to be amended into something more aligned with the user, it still leant heavily on other standardised assumptions of the EPC model (as documented in the following section), and some confusion over the outputs has been reported for a scheme that was ultimately unsuccessful. 11

The NGEPC innovations discussed here are examples of how the purpose and application of EPCs may evolve. However, there is still (by design) a limit to what EPCs should be used for, as governed by the EPBD, and such innovations must be tested against those limits. As will be discussed, further country-specific limitations arise from the differences between countries adopting EPCs. The following discussion outlines some of these, focussing on the implications for EPC development in the UK.

Differences in EPC assessment

EPC methodologies across Europe

A direct and detailed comparison of EPC methodologies by crossCert is reported elsewhere. 12 The work shows that, even when EPCs appear aesthetically similar and/or generate similar metrics, the underlying calculation and assessment methodologies can be fundamentally different. Table 1 summarises the differences recorded in the inputs used for generating EPCs, informed by a review of standard documents in those countries and workshops with project partners from around Europe. Those countries are Austria, 13 Bulgaria, 14 Croatia, 15 Denmark, 16 Greece, 17 Malta, 18 Poland, 19 Slovenia, 20 Spain, 21 and the UK. 18,22 To allow for a meaningful comparison, the EPC inputs are categorised according to whether they involve measured data, require considerable assessor inference, or are taken from a set (e.g. “look-up”) table of values. Specifically, Table 1 uses the following categories:

- F: Fixed values that are not altered by building type or by assessor
- DB: Default value that is categorised by building type
- DA: Default value that is categorised by building activity
- DO: Other default values that are linked to other criteria
- A: Based on assessor judgement
- E: Empirical values based on measured quantity from building being assessed

In some circumstances, a combination of these options is used (e.g. where an assessor must apply their own judgement to a measured quantity, the input is noted as A/E in Table 1). Even this categorisation simplifies the assessment protocols within each country; for example, significant differences can be seen between residential and non-residential (or types of non-residential) buildings, and between new and existing buildings. The reviews informing Table 1 are dominated by residential methodologies and refer to the dominant approach in each country, as reported in the referenced local methodological documents and in feedback from assessors.
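The categorisation above can be encoded so that combined codes such as A/E are handled consistently when tallying a methodology's inputs. The Python sketch below does this under stated assumptions: the category codes come from Table 1, but the input names and their codes in the example are hypothetical and are not taken from any country's entry in Table 1.

```python
from collections import Counter
from enum import Enum

class InputCategory(Enum):
    """Input categories used in Table 1."""
    F = "fixed value, not altered by building type or assessor"
    DB = "default value categorised by building type"
    DA = "default value categorised by building activity"
    DO = "other default value linked to other criteria"
    A = "based on assessor judgement"
    E = "empirical value measured in the assessed building"

def parse_code(code: str) -> set[InputCategory]:
    """Parse a code such as 'A/E' into its component categories."""
    return {InputCategory[part.strip()] for part in code.split("/")}

def category_counts(inputs: dict[str, str]) -> Counter:
    """Count inputs per category; a combined code counts once for each part."""
    counts = Counter()
    for code in inputs.values():
        counts.update(parse_code(code))
    return counts

# Hypothetical input set for one methodology (illustrative only, not Table 1 data).
example = {
    "u_values": "DB",
    "infiltration": "A/E",
    "internal_gains": "F",
    "heating_setpoint": "DA",
    "boiler_efficiency": "A",
}
counts = category_counts(example)
print(counts[InputCategory.A], counts[InputCategory.E])  # 2 1
```

A tally like this only summarises how inputs are obtained; as the following paragraph notes, it says nothing on its own about whether one approach is better than another.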

These categories do not indicate a single best-practice option for EPC assessment. The value of allowing assessors to choose input parameters depends heavily on the training and educational background of those assessors, so assessments allowing more assessor inference cannot be said to be better or worse than others without knowledge of other factors in a given country. Likewise, the use of empirical energy data may seem a more accurate approach to energy assessment, but it may run counter to a country's intention to produce a highly standardised, regulated energy rating. These issues are discussed further below, using the ten listed countries as case-studies.

Tailored versus standardised assessments

The aforementioned country-specific reports note differences in the inputs required for assessment, the methods used to obtain those inputs, the calculation engine, and the role of the assessor in making judgements about the selection of inputs. These differences reflect whether an EPC framework is intended to represent a standardised assessment of an “average” version of a building, or something closer to a specific modelling study of a particular building. To some degree, as per EPBD guidance, the EPC should be aligned with the former ambition, to allow fair and consistent comparison across a building stock (being more concerned with the building asset than with the users of that building). However, approaches are markedly different across the selected European countries. For example, Bulgarian EPC assessors can exercise considerable judgement over the values used for building services efficiencies and infiltration and ventilation rates, and can also adjust some inputs to calibrate final modelled energy estimates against measured energy consumption. Conversely, a UK EPC assessor (particularly for existing residential buildings) would be largely guided by look-up tables based on the age of the property, alongside other rule-of-thumb assumptions that intentionally avoid considering the activities of specific occupants (and empirical information on building usage).

This has an additional consequence for the application of NGEPC metrics. Some of these new metrics may require input that is better characterised by more tailored assessment approaches; or a methodology behind generating that metric may be more robust (e.g. in relation to application of building physics) in some assessments than others.

Assessor training

Partly linked to the above, the technical requirements placed on an assessor to run an EPC methodology will clearly affect the type of training provided, and the accreditation process, in a particular country. For residential EPCs, the UK has a well-publicised training programme for prospective assessors, but this is relatively short (c. 1 week) and has few formal education requirements prior to that training. As noted in previous work, 23 prior learning requirements and formal training programmes for EPC assessors are not consistent across Europe. Unsurprisingly, where assessor judgement is crucial in returning EPC calculations, a higher threshold for prior learning/education is often evident (as is the case for the Bulgarian assessors described in 'Tailored versus standardised assessments'). This symbiotic relationship between type of assessment and type of assessor (each, to an extent, reflecting the other) can be a barrier to harmonisation of EPCs across Europe: an assessor workforce will have evolved in a country to meet a particular EPC approach, and that approach is unlikely to be developed beyond the competence of known assessor qualifications. It would therefore be impossible to transplant, for example, an assessment framework from one country (which assumes a high level of building physics education) into another country (where entry requirements for accredited assessors do not demand the same skillsets). This becomes a more complex issue when new NGEPC metrics are introduced. Based on current training, it is unlikely that all assessors across Europe will have a similar understanding of (for example) a Smart Readiness Indicator. Whilst the calculation of this, and other, metrics can be incorporated into new training schemes (and software), the responsibility for communicating what it means to a building owner is likely to fall on the EPC assessor. Background education, an obvious variable across European energy assessors, will play a key role in this communication.

Choice of output metrics

As discussed in this paper, new forms of output metrics are currently being tested for use with EPCs, and the implementation of such metrics may vary by country. However, there are already differences in the choice of EPC output metrics across Europe. While heating, ventilation, and hot water are consistently included in all the countries examined, there are notable differences that complicate direct comparisons of modelled energy or carbon results (as discussed elsewhere 12 ). For instance, lighting is not explicitly modelled in residential buildings in Austria, Poland, and Spain, and in Denmark it is only considered for shared areas of multi-family homes. Most countries, including the UK, do not incorporate consumer appliances (often termed “unregulated” energy use) in their EPC ratings; however, Bulgaria includes these for all buildings and Austria does so for non-residential buildings. Among the countries studied, the UK is the only one that does not account for cooling in the EPC ratings of residential buildings, although an informal calculation is mentioned in the appendices of its methodology. 24 This demonstrates that, as well as there being no such thing as a “European EPC”, the meaning behind a rating differs considerably depending on the country involved; it is not valid to compare, for example, the percentage of A-rated buildings in different European countries.

Quality control of EPCs

As noted later in the paper ('What is meant by harmonisation?'), harmonisation of EPCs goes beyond just the specific inputs and protocols of the EPC assessment itself. Consistency of method can also be encouraged through the use of overarching frameworks that the assessment can sit within – such as the approach to quality control of EPCs.

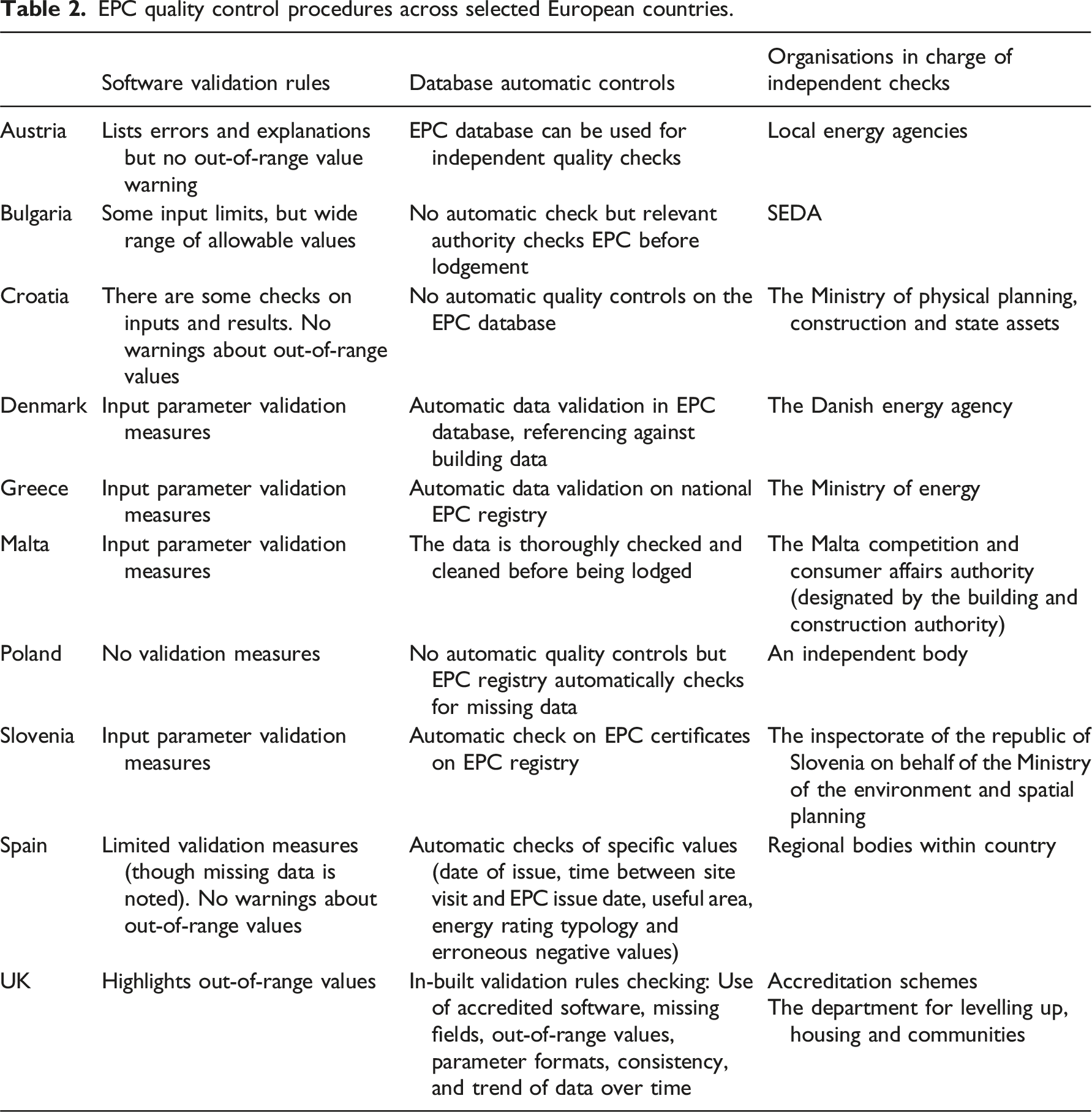

EPC quality control procedures across selected European countries.

The “Software validation rules” category in Table 2 refers to whether there is any error-checking process on data being used in the accredited software. For example, some countries will highlight when an input value is outside a realistic range, whereas others will require the user to carry out some form of validation on the values presented. “Database automatic controls” refers to checking that may occur when the EPC data is uploaded to a national database, to identify erroneous assessments. Table 2 also lists the bodies responsible for the overall procedure, which varies by country.
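As an illustration of the first kind of check, a minimal Python sketch of a software validation rule is shown below: inputs that are missing or fall outside a plausible range are flagged before an assessment is lodged. The parameter names and ranges are hypothetical; accredited software in each country defines its own rules, and database-level controls would operate separately on uploaded records.

```python
# Hypothetical plausibility ranges; real accredited software defines its own rules.
PLAUSIBLE_RANGES = {
    "wall_u_value_w_m2k": (0.1, 3.0),
    "floor_area_m2": (10.0, 2000.0),
    "boiler_efficiency_pct": (50.0, 100.0),
}

def validate_inputs(inputs: dict[str, float]) -> list[str]:
    """Return warnings for inputs that are missing or outside a plausible range."""
    warnings = []
    for name, (low, high) in PLAUSIBLE_RANGES.items():
        value = inputs.get(name)
        if value is None:
            warnings.append(f"{name}: missing")
        elif not low <= value <= high:
            warnings.append(f"{name}: {value} outside [{low}, {high}]")
    return warnings

print(validate_inputs({"wall_u_value_w_m2k": 5.2, "floor_area_m2": 85.0}))
# ['wall_u_value_w_m2k: 5.2 outside [0.1, 3.0]', 'boiler_efficiency_pct: missing']
```

Whether such a warning blocks lodgement or merely prompts the assessor to confirm the value is a design choice that, as Table 2 suggests, varies between countries.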

Guidance for next-generation EPCs

Understanding the benefits and limitations of harmonisation

As already indicated, harmonisation of EPCs can be interpreted in different ways. It can be, and often is, more about higher-level, general guidance on what an EPC should achieve than about a consistent set of algorithms applied universally across the entire European building stock. However, if technical updates (such as new next-generation metrics) are to be made to European EPCs at the same time, the definition of harmonisation requires some consideration and clarification, as discussed below.

What is meant by harmonisation?

Harmonisation exists at different scales and at different points in the EPBD and its implementation. It can therefore become unclear what is meant by harmonisation when applying something to so many different countries. The priority may be harmonisation of primary goals (e.g. a consistent rating system), with differences accepted elsewhere. The consistency could relate to the EPC energy bands themselves or to the metrics often displayed alongside that rating. Taking the complete EPC journey (inputs used, calculation, assessment, assessors, outputs generated, use with national policies), there should be clarity on where in that process harmonisation is being targeted. As already noted, the EPC approach of a specific country encapsulates many different stages. Considering these stages, the following levels of harmonisation are proposed, in decreasing order of harmonisation:

1. A single cradle-to-grave EPC approach across Europe
2. Similarity of underlying calculation methodologies
3. Common use of output metrics
4. Common approach of EPC assessors
5. Similar application of EPCs within national energy policy/targets
6. A top-level objective for a standardised framework with common purpose
7. No target for Europe-wide harmonisation; focus on harmonisation and consistency within a country

The EPBD does not seek to enforce levels 1 and 2 – although there are common aspects (e.g. use of the EPC rating itself, provision of recommendations), there is no call for a common methodology across Europe; only for an approach that is standardised and replicable for a given country. Based on the information already presented (e.g. Table 1), it is difficult to find evidence of levels 3 to 5, though (as discussed in this paper) there are potentially clusters of EPC approaches that share common features in these categories. The suggestion of this paper, and the research presented, is that it is only really levels 6 and 7 where genuine harmonisation can be considered for European EPCs – and this allows for considerable variation in the application of EPCs and their suitability for different tasks.

Why might harmonisation be desirable?

When choosing the level or type of harmonisation, it is also necessary to establish what value it has and who the beneficiaries are. Europe-wide harmonisation may be encouraged by the EPBD, potentially allowing some cross-comparison of EPCs (subject to the caveats discussed above), but this is distinct from harmonisation within a country. NGEPCs, and the new solutions being proposed and tested around them, 26 will clearly have greater impact if baseline EPC approaches are similar enough to allow consistent implementation. Even with current EPCs, recognition of the EPC rating itself as a common standard can be considered a driver for harmonisation, and for exporting this approach outside Europe. This drive for consistency can, however, be a barrier to innovation and therefore needs a strong rationale (e.g. country-specific market transformation).

How can harmonisation be implemented?

Even with the definition and purpose of harmonisation clarified, specific issues in individual countries may limit harmonisation and might even make it harmful to achieving local/national targets. Given different climates, technologies (particularly heating vs cooling), housing stocks, assessor workforces, policies, and energy behaviour/culture, the value (and audiences) of EPCs should be expected to differ by country. For next-generation EPCs, we cannot expect all changes to be adopted by different countries in the same way. There is no single universal EPC method that can simply be updated for all countries at the same time in the same way, yet we also want to avoid every country updating its EPC through its own individualised approach. The following section proposes an approach to support this update, in which differences are categorised rather than ignored, supported by the differences already observed in 'Differences in EPC assessment'. For example, countries using a very tailored and data-heavy approach to EPC assessment could adopt a next-generation metric or other innovation in a similar way to one another, but this may differ from how countries using very standardised/simplified assessments adopt that same innovation.

Frameworks for harmonisation and comparison

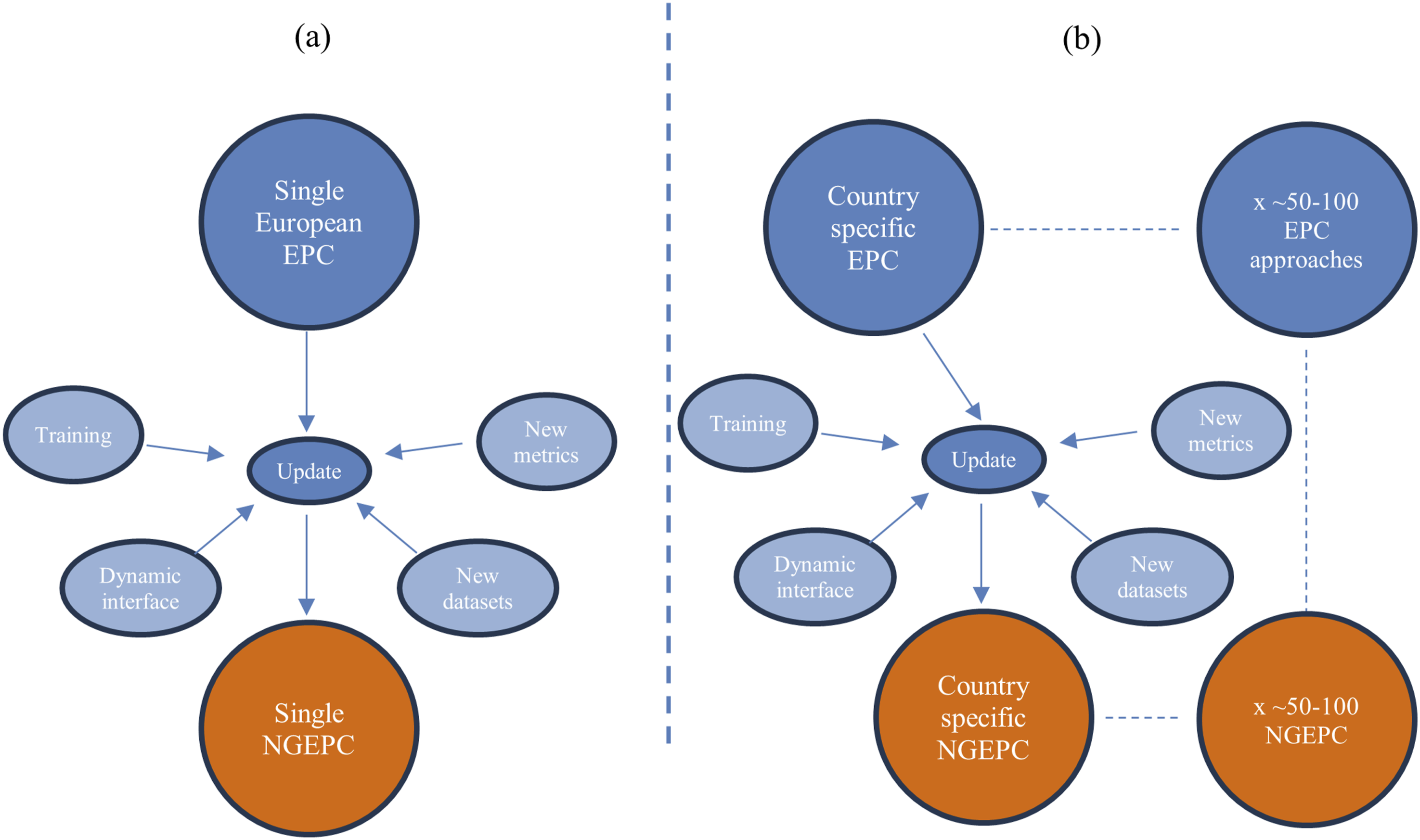

Within this context of harmonisation, there is arguably a need to identify differences in EPC approaches in a systematic way. The advantage of such categorisation of method is twofold. Firstly, this provides a more conceptual comparison of methodologies that is different, but complementary, to the technical comparison of Table 1. Secondly, by grouping EPC approaches, then it may be possible to apply new EPC innovations (such as those proposed through next-generation EPC design) to clusters of countries simultaneously, aiding implementation. Without this guidance, we are left with either applying innovations to EPCs universally and ignoring the country-specific issues that may hinder that implementation (as indicated in Figure 1(a)), or taking each country as a standalone case-study that may restrict good practice sharing and learning during that implementation stage (Figure 1(b)). The first approach is unlikely to be possible due to the variations stated in this paper, whilst the second approach is logistically demanding and raises the possibility that future NGEPCs will exhibit even greater variation across Europe than current EPCs. Note that Figure 1(b) refers to (approximately) 50-100 EPC approaches; the actual number of EPC approaches across the whole of Europe (accounting for residential and non-residential methods, different levels of and options for assessment available in some countries etc) is very difficult to determine but is likely to be more than the number of European countries following the EPBD. Attempting to upgrade EPCs to NGEPCs across Europe assuming (a) completely harmonised approach or (b) multiple, discrete methods.

Work published elsewhere 27 has proposed a framework for capturing this variation in EPC approaches, with a particular focus on new EPC innovations. Discussed in more detail in that reference, the proposed categories for a comparative exercise are:

1. Alignment with reality: To what extent will the outputs align with empirical measurements of energy consumption?
2. Quantifying new metrics: Does the generated model output allow a range of information (e.g. at different temporal resolutions) to be calculated and displayed on the EPC, or is the model designed to produce traditional outputs such as annual energy consumption and nothing more?
3. Accommodates new technology: For key heating and building technologies entering the market (e.g. heat pumps, district heating, energy storage), can the methodology quantify key performance indicators of such options?
4. Suitability for punitive action: With EPCs having growing importance for mandating (not just supporting) action on building renovation, are the outputs robust and validated to a level that can justify such mandatory action?
5. Extrapolating and standardising: Important for next-generation EPCs in particular, does increased detail and/or tailoring of a methodology restrict its replicability for all buildings and, therefore, the ability of that framework to meet the standardisation requirements of the EPBD?
6. Quality of input information: Standardised assessments tend to have reduced, and controlled, input information (see 'Quality control of EPCs'). Next-generation EPCs, and some forms of current EPCs observed across Europe, are likely to have a wider and more complex set of data needs. Maintaining quality control with such assessments is likely to be challenging and to require new procedures (and assessor training) to be put in place.
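To make the idea of clustering concrete, the Python sketch below scores each EPC approach on the six criteria above and groups approaches that share an identical profile, so that an innovation could be planned once per group rather than once per approach. The 0-2 ordinal scale, the method names, and all scores are hypothetical and are not taken from the referenced framework or from the categorisation shown in this paper; exact-match grouping is also a deliberately simple stand-in for whatever similarity measure a real exercise would adopt.

```python
from collections import defaultdict

CRITERIA = (
    "alignment_with_reality",
    "quantifying_new_metrics",
    "accommodates_new_technology",
    "suitability_for_punitive_action",
    "extrapolating_and_standardising",
    "quality_of_input_information",
)

def group_by_profile(scores: dict[str, dict[str, int]]) -> list[list[str]]:
    """Group EPC approaches that share the same score on every criterion."""
    groups = defaultdict(list)
    for approach, by_criterion in scores.items():
        profile = tuple(by_criterion[c] for c in CRITERIA)
        groups[profile].append(approach)
    return sorted(groups.values())

# Hypothetical 0-2 scores (0 = low, 2 = high); not taken from the paper's figure.
scores = {
    "method_A": dict(zip(CRITERIA, (0, 0, 1, 2, 2, 2))),
    "method_B": dict(zip(CRITERIA, (2, 1, 1, 1, 0, 0))),
    "method_C": dict(zip(CRITERIA, (0, 0, 1, 2, 2, 2))),
}
print(group_by_profile(scores))  # [['method_A', 'method_C'], ['method_B']]
```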

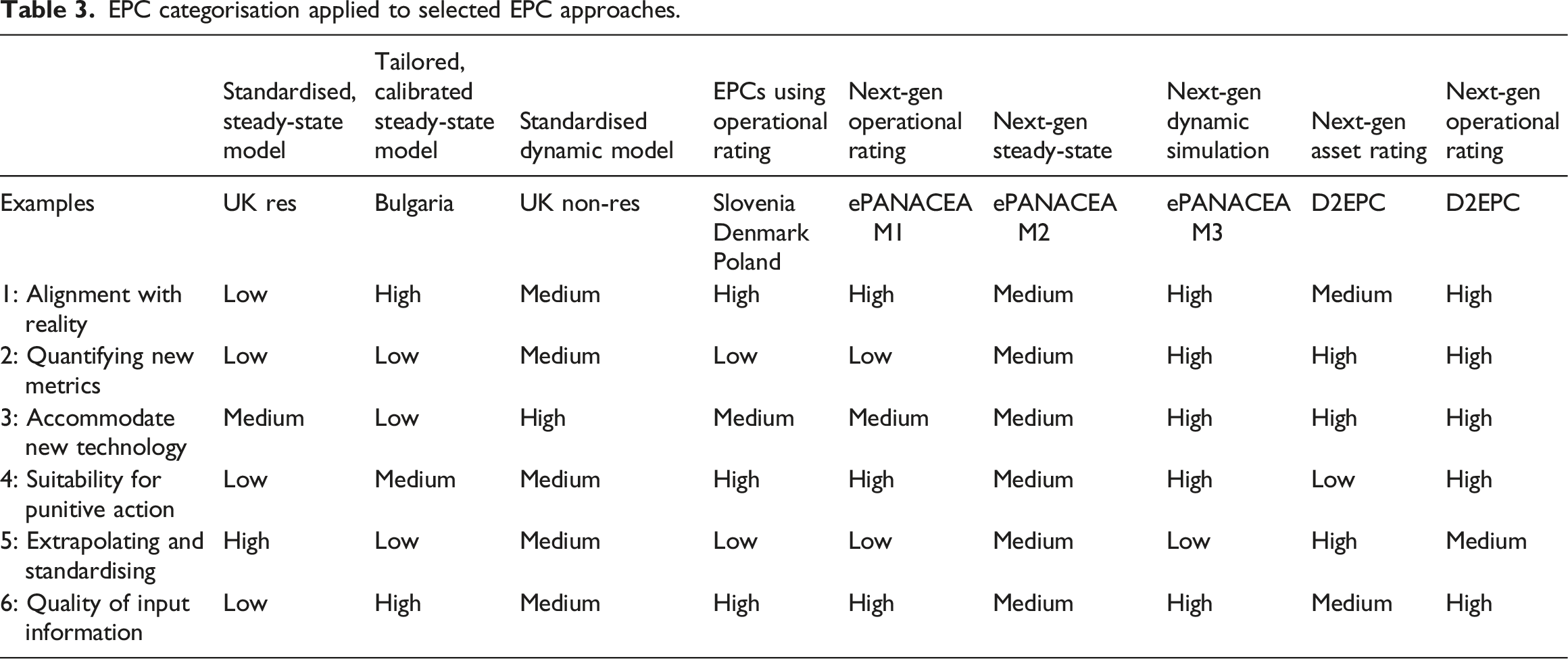

EPC categorisation applied to selected EPC approaches.

Categorising current and next-generation EPC approaches

Taking the criteria in the 'Frameworks for harmonisation and comparison' section, a number of current (i.e. active) and proposed (i.e. NGEPC research) EPC approaches are considered to demonstrate how this categorisation could be applied. The approaches, described below, were chosen to be distinct from one another.

Standardised, steady-state models

As discussed earlier in the paper, steady-state calculations use non-dynamic building physics (i.e. calculations in which conditions in successive time-steps are not directly linked) to generate an estimate of building energy use. Steady-state models are a very common form of calculation engine for EPCs of European residential buildings, and are also widely used for non-residential buildings. The UK makes particularly standardised use of a steady-state model in terms of the inputs used (see Table 1), which reduces the onus on the assessor and effectively assesses an averaged archetype of a building (and its occupants), rather than detail from the building itself.
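A minimal Python sketch of a monthly steady-state heat balance is given below to show what "not directly linked" means in practice: each month's heating demand is computed from that month's conditions alone. This is a simplified illustration, not the SAP or ISO 52016 procedure; the fixed gain-utilisation factor, the heat loss coefficient, and the monthly temperatures and gains are all assumptions.

```python
# Simplified monthly steady-state heat balance: an illustrative sketch only.
# It is NOT the SAP or ISO 52016 procedure; a fixed gain-utilisation factor is assumed.

def monthly_heating_kwh(h_w_per_k, t_internal_c, months, gain_utilisation=0.9):
    """Heating demand (kWh) for each month, given a heat loss coefficient H (W/K).

    `months` is a list of (mean external temperature degC, useful gains kWh, hours).
    Each month is evaluated independently: nothing is carried between time-steps.
    """
    demand = []
    for t_external_c, gains_kwh, hours in months:
        loss_kwh = h_w_per_k * (t_internal_c - t_external_c) * hours / 1000.0
        demand.append(max(0.0, loss_kwh - gain_utilisation * gains_kwh))
    return demand

# Hypothetical dwelling with H = 200 W/K and a 20 degC internal set-point.
january_july = [(5.0, 300.0, 744), (17.0, 400.0, 744)]
print([round(q, 1) for q in monthly_heating_kwh(200.0, 20.0, january_july)])  # [1962.0, 86.4]
```

Because the months are independent, reordering them would not change any result; this is what makes the calculation simple and repeatable, but also what prevents it from representing thermal storage or time-varying flexibility.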

Tailored, calibrated steady-state model

Although not commonly observed, it is possible to use empirical energy consumption data within an EPBD-compliant EPC approach. This is seen in Bulgaria, where modelled energy consumption can be adjusted to align with measured energy data. This allows for the model output to be aligned with a specific use of that particular building (which may be of value to a building occupant/owner) but creates challenges for replication and standardisation. This calibration is distinct from directly using empirical energy data as a basis for the energy rating (as discussed under 'EPCs using operational rating').
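A minimal Python sketch of one possible form of calibration is shown below: modelled end-use energy is scaled by a single factor so that its total matches measured annual consumption. The uniform scaling factor, the end-use breakdown, and the figures are assumptions for illustration; this is not the Bulgarian procedure, which (as described above) involves assessors adjusting individual model inputs.

```python
def calibrate_to_measured(modelled_kwh: dict[str, float],
                          measured_total_kwh: float) -> dict[str, float]:
    """Scale modelled end-use energy so the total matches measured consumption.

    A single uniform calibration factor is an assumption made for illustration;
    real calibration procedures may adjust individual inputs instead.
    """
    modelled_total = sum(modelled_kwh.values())
    if modelled_total <= 0:
        raise ValueError("modelled total must be positive")
    factor = measured_total_kwh / modelled_total
    return {end_use: kwh * factor for end_use, kwh in modelled_kwh.items()}

# Hypothetical figures: model predicts 12,000 kWh/yr, meters record 9,600 kWh/yr.
modelled = {"space_heating": 9000.0, "hot_water": 2500.0, "lighting": 500.0}
calibrated = calibrate_to_measured(modelled, measured_total_kwh=9600.0)
print({k: round(v) for k, v in calibrated.items()})
# {'space_heating': 7200, 'hot_water': 2000, 'lighting': 400}
```

The calibrated result now reflects how this particular building was used in the metering period, which is useful to its occupant but is precisely what makes the rating harder to compare with an uncalibrated assessment of an identical building.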

Standardised dynamic model

Dynamic simulation is less commonly used in the studied European countries; it is not present at all (at least in a mandatory sense) in current residential EPCs but forms part of some non-residential calculations. In the UK, it can be used as an option for any non-residential building but is mandatory for “level 5” EPCs, which apply to more complex buildings. 22 Such EPCs are based on modelling with a more complete representation of building physics; in theory, this could give a next-generation EPC a wider choice of modelled information on which to base output metrics (e.g. metrics requiring a higher temporal resolution of calculation and/or output), though dynamic simulation is often used to generate only low-resolution metrics (e.g. annual energy consumption in the aforementioned UK example for non-domestic buildings).
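By contrast with a steady-state calculation, a dynamic model carries the building's thermal state from one time-step to the next. The Python sketch below uses a single-node resistance-capacitance (1R1C) model of an unheated zone, stepped hourly with explicit Euler integration; the model structure is a textbook simplification rather than any certified simulation engine, and the resistance, capacitances, and outdoor temperatures are hypothetical.

```python
# Minimal single-node (1R1C) dynamic thermal model: an illustrative sketch,
# not a certified simulation engine. Each hourly indoor temperature depends on
# the previous one, the time-step link that a steady-state calculation omits.

def simulate_free_running(t_start_c: float, t_external_c: list[float],
                          r_k_per_w: float, c_j_per_k: float,
                          dt_s: float = 3600.0) -> list[float]:
    """Indoor temperatures (degC) of an unheated zone, via explicit Euler steps."""
    # Keeping the step below the RC time constant avoids oscillatory Euler results.
    if dt_s >= r_k_per_w * c_j_per_k:
        raise ValueError("time-step must be shorter than the RC time constant")
    temps = [t_start_c]
    for t_ext in t_external_c:
        t_in = temps[-1]
        heat_flow_w = (t_ext - t_in) / r_k_per_w  # fabric heat flow into the zone
        temps.append(t_in + heat_flow_w * dt_s / c_j_per_k)
    return temps

# Hypothetical zone: H = 200 W/K (R = 0.005 K/W), 12 h at 0 degC outside.
outdoor = [0.0] * 12
heavy = simulate_free_running(20.0, outdoor, 0.005, 2.0e7)  # high thermal mass
light = simulate_free_running(20.0, outdoor, 0.005, 5.0e6)  # low thermal mass
print(round(heavy[1], 2), round(light[1], 2))  # 19.28 17.12
```

The two zones have the same heat loss coefficient, so a steady-state balance would treat them identically, yet the heavyweight zone cools far more slowly. Hourly information of this kind is what metrics relating to demand flexibility would need.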

EPCs using operational rating

As noted under 'Tailored, calibrated steady-state model', it is unusual for actual building operation (and the empirical energy data that reveals such operation) to be used to generate an EPC, but the EPBD does allow this as an option if applied within a structured framework. Unlike the 'Tailored, calibrated steady-state model', the operational rating forms a fundamental part of the EPC rating, rather than being a recalibration applied to the output of a purely theoretical EPC calculation.

Next-generation EPCs

To extend this categorisation beyond current EPCs, the work of two European projects has been used to understand how proposed innovations to EPCs compare with current EPC approaches. The methodology proposed by ePANACEA 28 offers three pathways for calculating next-generation EPCs: a steady-state model based on ISO 52016, 29 a calibrated dynamic simulation, and an operational rating. In contrast, the D2EPC project 8 employs both asset ratings (using a steady-state approach) and operational ratings, but its operational ratings rely on sensor data rather than the energy bill information used in ePANACEA. This reliance on sensor data allows D2EPC to adapt more readily to new technologies and metrics.

The ePANACEA methodology incorporates dynamic simulation, which accommodates demand flexibility and the inclusion of new metrics. The ePANACEA “M3” route, calibrated against energy bill data, offers an assessment that is more closely aligned with real energy consumption than EPCs based only on dynamic simulation (a purely theoretical dynamic simulation remains subject to the performance gap). However, because input data may come from several sources (either default values or values entered manually by assessors), the methodology's ability to support comparison and standardisation may be questioned. Even the form of certain inputs (e.g. fixed values, stochastic models or deterministic hourly profiles) can vary with the assessor's preferences and the accuracy required of the simulation, since this route requires the building to be modelled in EnergyPlus. Results from the M3 route, being calibrated to bill data and so representing some empirical truth about the building as it is currently used, may be better suited to policy enforcement. D2EPC's steady-state simulation, by contrast, draws inputs from building BIM files, which may use standardised values rather than actual operational data and so offer greater replicability. Both methods allow default values to be used for calculation inputs to simplify the assessment, while also enabling the assessor to provide customised inputs based on sensor data, measurements, documents or observations. In their current form, both take default values from existing standards: ePANACEA draws on the Spanish methodology, and D2EPC uses the values suggested in ISO 17772-1:2017. 30

The ePANACEA “M2” methodology is broadly aligned with other steady-state approaches but adds calculations for the SRI and a calibration step. These enhancements improve its alignment with real-world conditions, and therefore the relevance of how current building users may be advised (or mandated) to act. However, its reliance on pre-defined or assessor-defined inputs reduces its potential for standardisation and extrapolation compared with some existing approaches.

Discussion

Table 3 applies broad criteria so that “families” of EPC approaches can be identified. The suggestion is that approaches sharing the same categories across those criteria may be treated in a similar way when upgrading to NGEPCs or when comparing EPC performance across countries (noting the lack of harmonisation across methods).

Although not developed in this paper (and identified as further work), specific NGEPC innovations could be graded by their suitability for different methods. For example, an Operational Energy Rating could be identified as more suitable for methods that rate highly for Alignment with reality (Criterion 1), whereas an SRI rating may make more sense for a method with “high” Quality of input information (Criterion 6). This would require clearer guidance on how to reach a judgement of High, Medium or Low (in Table 3), through specific questions that guide the user of this framework. These could cover the basis of the modelling engine (dynamic simulation, steady-state modelling or empirical), the use of look-up tables for inputs (versus on-site measurement), the level of assessor training specified for the method, the temporal resolution of outputs (annual, monthly or hourly), and how frequently key external inputs (climate/weather data, carbon intensity of fuels) are updated. A successful categorisation framework built around such set questions could then support more tailored advice on implementing a given NGEPC metric or innovation (e.g. whether and how to apply it to a method of a given category).
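The set questions proposed above could be operationalised roughly as follows. This is a hypothetical sketch only: the answer categories, point values, one-third and two-thirds thresholds, field names and the grouping of questions under Criterion 1 (Alignment with reality) and Criterion 6 (Quality of input information) are assumptions for illustration and are not the gradings used in Table 3.

```python
from dataclasses import dataclass
from enum import Enum


class Grade(Enum):
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"


# Hypothetical points per answer; higher means closer to measured reality.
ENGINE_POINTS = {"steady-state": 0, "dynamic": 1, "empirical": 2}
INPUT_POINTS = {"look-up": 0, "mixed": 1, "measured": 2}
RESOLUTION_POINTS = {"annual": 0, "monthly": 1, "hourly": 2}
UPDATE_POINTS = {"rarely": 0, "periodically": 1, "annually": 2}
TRAINING_POINTS = {"minimal": 0, "standardised": 1, "specialist": 2}


@dataclass
class MethodProfile:
    engine: str
    inputs: str
    output_resolution: str
    external_input_updates: str
    assessor_training: str


def to_grade(points, max_points):
    """Map a score onto High/Medium/Low using assumed thirds."""
    share = points / max_points
    if share >= 2 / 3:
        return Grade.HIGH
    if share >= 1 / 3:
        return Grade.MEDIUM
    return Grade.LOW


def alignment_with_reality(p):  # Criterion 1 (illustrative grouping)
    points = (ENGINE_POINTS[p.engine] + INPUT_POINTS[p.inputs]
              + RESOLUTION_POINTS[p.output_resolution]
              + UPDATE_POINTS[p.external_input_updates])
    return to_grade(points, 8)


def quality_of_input_information(p):  # Criterion 6 (illustrative grouping)
    points = INPUT_POINTS[p.inputs] + TRAINING_POINTS[p.assessor_training]
    return to_grade(points, 4)


def operational_rating_suitable(p):
    return alignment_with_reality(p) is Grade.HIGH


def sri_suitable(p):
    return quality_of_input_information(p) is Grade.HIGH
```

A method profiled as steady-state, look-up inputs, annual outputs and rarely updated external data would grade Low on Criterion 1 under these assumptions, so an operational rating would be flagged as a poor fit without further changes to that method.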

While most current EPC methodologies follow a fixed approach, NGEPC methodologies appear to be moving towards greater flexibility, allowing assessors to choose the type of methodology according to the specific needs of a building. Both next-generation methodologies studied in this paper offer operational and asset rating routes, and ePANACEA provides a dynamic simulation option for buildings where greater accuracy or closer alignment with real-world conditions is needed. The categorisation presented here will be particularly important for these less standardised approaches, where assessors face multiple choices and must decide which options are most suitable.

Such changes may raise concern that the role of EPCs is drifting away from the original intention of the EPBD. It is difficult to identify the point at which appropriate upgrading of a methodology (accounting for new assumptions, new technologies, and improved software and hardware for generating EPCs) takes the EPC beyond the competencies of the assessment (and the assessors). When changes (and indeed baselines) across Europe are not harmonised, the complexity increases: NGEPC alterations may function adequately in one country (and match the skillset of its assessors) but be inappropriate for another. The work of this paper and the wider crossCert project suggests that, when evolving and improving EPCs, it is necessary to consider (i) whether the intended goal would be best served by an NGEPC or by a different assessment entirely, and (ii) whether the EPC workforce in a specific country has the skills needed to achieve that goal.

Conclusions

As EPC approaches move towards NGEPCs, it is necessary to understand the differences between current EPC approaches and how this lack of harmonisation may require many tailored changes to EPC implementation in different countries, rather than a single, unified upgrade of a European EPC. The work presented in this paper clarifies the current baseline through a comparative exercise of European EPC approaches, revealing significant differences in the concept and delivery of EPCs across selected European countries. By systematically categorising these differences in the way presented here, it may be possible to group EPC approaches so that adopting current and future EPBD recasts becomes more achievable for the countries following the EPBD. Implementation can then take account of local and national assessment frameworks, assessor workforces and national policies. The categorisation and comparative process documented here is specifically designed to draw attention to challenges arising from the new metrics and innovations proposed in NGEPC work. Whilst more work is needed to develop this framework, recommendations are provided to guide that development.

For a country like the UK, it is suggested that EPCs must maintain a degree of standardisation, given how the current assessor workforce is trained and how EPCs are used in policy. Radical changes would require significant retraining of assessors and a revision of how EPC ratings inform building energy efficiency targets. Conversely, for countries (like Bulgaria) with less standardised training (and methods) but higher background qualification requirements for assessors, there may be more flexibility to incorporate new EPC innovations, although doing so consistently will be challenging.

Some NGEPC indicators may not align well with calculation methodologies designed for simplicity and replicability (again, the UK is an example). For an output such as the SRI, it is also questionable whether the audience is the same sector that EPCs currently target. Whilst some newer EPC metrics may be intended to broaden EPC audiences, from a modelling perspective an EPC approach that relies heavily on steady-state calculations is unlikely to offer a detailed understanding of demand flexibility (where the dynamic nature of demand will be of more concern to audiences such as Distribution Network Operators).

Furthermore, a more diverse user audience could encourage better use of interactive EPCs with dynamic interfaces, where users can filter for the information relevant to them and so avoid information fatigue. This would serve the twin needs of detail for expert users and simplicity for non-expert users. The UK is already relatively unusual in offering an online EPC in which the user has some (albeit currently limited) control over the data presented. Should next-generation EPCs aim to communicate effectively with a wider range of audiences, the use of static EPCs may need to be reviewed.

Across this work, the purpose and importance of EPC harmonisation is central. The EPBD, and innovations to that directive, require some degree of harmonisation so that top-level changes and guidance can be converted into national delivery strategies. Even where methodologies diverge, harmonising some processes across Europe (quality control, data gathering, etc.) remains possible and would allow good practice to be shared among the countries following the EPBD. However, country-specific variation is significant and cannot be ignored. The classification strategies proposed in this paper can help countries align with other European countries that have commensurate strategies, and may also allow guidance on future EPBD updates to be better communicated and tailored to specific EPC frameworks.


Acknowledgments

The work described in this paper has been supported by the crossCert project, funded by Horizon 2020 (Grant 101033778). The paper is an extended and updated version of an IBPSA-England/CIBSE Technical Symposium 2025 conference paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Horizon Europe Climate, Energy and Mobility (101033778).