Abstract

This paper provides a simple method for efficient performance evaluation of floating-point (FP) formats, addressing the challenge of implementing deep neural network (DNN)-based sensor nodes and edge measurement devices. Because such devices are resource-constrained, the 32-bit FP format that is standard for DNN implementation is unsuitable, and the alternative is lower-resolution FP formats, which lead to some performance degradation. Hence, an efficient mechanism for evaluating the performance of different FP formats is needed to examine the influence of reduced resolution. Existing methods exploit the analogy between FP formats and piecewise uniform quantizers (PUQs), using the signal-to-quantization noise ratio (SQNR) of the PUQ to express the performance of FP formats. However, the high complexity of the SQNR calculation, which involves a sum with many terms (e.g. 254 terms for FP formats with an 8-bit exponent), poses a significant challenge. This paper’s main contribution is the significant simplification of the SQNR expression for Gaussian-distributed data, reducing the number of sum terms from 254 to just 5 with minimal accuracy loss, allowing for simple and efficient performance evaluation of FP formats. Major findings include an in-depth analysis of the probability distribution across PUQ segments, a closed-form expression for identifying the highest probability segment, and an evaluation of the SQNR approximation’s accuracy. These findings provide a foundational basis for implementing intelligent DNN-based measurement systems, with applications extending to computing, signal processing, and other fields utilizing FP formats.

Introduction

Due to the dominance of digital measurement systems, analyzing formats for digital representation of measurement data has become a crucial research task. The two main types of digital formats are fixed-point (FxP) and floating-point (FP) formats (Dinčić et al., 2023). FxP is simpler to implement, while FP offers a much wider variance range of consistent representation quality, making it preferable for numerous applications, including computing, signal processing (Moroz and Samotyy, 2019), and sensor data representation (Chen et al., 2024). The most commonly used is the 32-bit floating-point format (FP32) (Perić et al., 2021), providing high-quality digital representation over a very wide range of data variance, although it is complex to implement. The use of the FP32 format is especially relevant for the implementation of deep neural networks (DNNs), being used by default for representing DNN parameters (weights, activations, etc.) and input data. DNNs are increasingly used for processing of measurement signals, such as electrocardiogram (ECG) (Degachi and Ouni, 2025), electroencephalogram (EEG) (Li et al., 2024), and vibration signals (Syu and Lee, 2024), for the implementation of measurement systems (Chen and Chen, 2022; Chen et al., 2023; Liang and Huang, 2023; Liu and Yang, 2024; Lou et al., 2024), control systems (Bey and Chemachema, 2024; Xi et al., 2024), soft sensors (Luo et al., 2024; Sun and Ge, 2021), sensor networks (Kim et al., 2021; Wu et al., 2023) and Internet of Things (IoT) systems (Mohapatra et al., 2024; Mukhopadhyay et al., 2021), as well as for sensor linearization (Anandanatarajan et al., 2023), sensor drift compensation (Chaudhuri et al., 2021), and sensor fusion (Balemans et al., 2023). 
Initially, DNN-based measurement and control systems were implemented such that DNNs ran on powerful servers and clouds (Alamri et al., 2013), with measurement data transmitted from sensors via the Internet, which caused latency and security issues (Sahni et al., 2022). Recently, local implementation of DNN algorithms on sensor nodes and edge measurement devices, as close as possible to the source of data, has become a key research focus, enabling the realization of intelligent and automated measurement and control systems capable of real-time data processing and decision-making with enhanced security and reliability and suitable for various applications (Al Koutayni et al., 2023; Fanariotis et al., 2023; Lai and Chang, 2023; Ronco et al., 2022; Yadav et al., 2024; Zhou et al., 2021).

However, the main challenge for DNN-based sensor nodes and edge measurement devices is their limited hardware resources (processing power, memory capacity, and especially available energy in battery-powered sensor nodes and edge devices), which makes implementation of the FP32 format very difficult due to its complexity (Syed et al., 2021). Consequently, considering lower resolution FP formats (e.g. 24-bit FP24 (Junaid et al., 2022), 16-bit bfloat16 (Agrawal et al., 2019), 8-bit FP8 (Wang et al., 2018), or some other) for implementation of intelligent sensor nodes and measurement devices becomes a topical research direction, with the aim of reducing complexity. However, lower resolution reduces performance by decreasing the quality of digital representation and narrowing the variance range, risking inadequate quality and variance range for specific applications. Thus, a critical research gap exists in developing efficient methods to evaluate the performance of various FP formats in terms of representation quality and variance range. Addressing this gap is essential for selecting the optimal FP format for each specific application and input data set, enabling resource-efficient implementation of intelligent DNN-based sensor nodes and measurement systems.

A significant advance in evaluating FP format performance was achieved in Perić et al. (2021), where an analogy between the FP format and the piecewise uniform quantizer (PUQ) was established. Quantization error is expressed by an objective measure called distortion. It is common to define distortion using the L2 norm as the mean squared quantization error, although an alternative approach defines distortion via the L1 norm as the mean absolute quantization error. Signal-to-quantization noise ratio (SQNR) represents another widely used objective performance measure of the quantizer. The typical approach, which will be used in this paper, calculates SQNR using the L2 norm, as the logarithmic ratio (expressed in dB) of data variance (representing the mean squared value of the data) to distortion calculated as the mean squared quantization error. However, some studies use an alternative method to calculate SQNR with the L1 norm, as the logarithmic ratio of the mean absolute value of the data to distortion calculated as the mean absolute quantization error (Marco and Neuhoff, 2006). In both cases, a higher quantization error results in greater distortion, leading to a lower SQNR.
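As a minimal illustration of the L2-norm SQNR definition used in this paper, the following Python sketch estimates SQNR empirically from data and its quantized version. The function name `sqnr_db`, the toy uniform quantizer, and its step size of 0.01 are assumptions made only for this example; they are not taken from the cited works.

```python
import numpy as np

def sqnr_db(x, x_quantized):
    """L2-norm SQNR in dB: 10*log10(mean(x^2) / mean((x - xq)^2))."""
    x = np.asarray(x, dtype=np.float64)
    xq = np.asarray(x_quantized, dtype=np.float64)
    signal_power = np.mean(x ** 2)        # equals the variance for zero-mean data
    distortion = np.mean((x - xq) ** 2)   # mean squared quantization error
    return 10.0 * np.log10(signal_power / distortion)

# Toy usage: zero-mean Gaussian data and a uniform quantizer
# with a hypothetical step size of 0.01.
rng = np.random.default_rng(0)
x = rng.standard_normal(100_000)
step = 0.01
xq = step * np.round(x / step)
print(f"SQNR = {sqnr_db(x, xq):.2f} dB")
```

A larger quantization error increases the denominator and therefore lowers the reported SQNR, in line with the description above.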

The established analogy between the FP format and the PUQ allows us to express FP format performance through an objective measure such as the SQNR of the PUQ. However, the SQNR expression is very complex, containing a sum of a large number of terms (e.g. 254 for bfloat16, FP24, and FP32 formats with an 8-bit exponent (Perić and Dinčić, 2023; Perić et al., 2021)), each corresponding to one PUQ segment. In addition, the need to repeat the SQNR calculation for various variances within a wide variance range (over 1500 dB for formats like bfloat16, FP24, and FP32) further increases complexity, making performance evaluation of FP formats very impractical. This paper adopts the idea of using the SQNR of the equivalent PUQ as a performance metric for FP formats, while at the same time addressing the high computational complexity of the exact SQNR expression and offering a simple and efficient solution for SQNR calculation, thereby enabling effective performance evaluation of FP formats. Considering that the quality of FP representation of DNN parameters, expressed by SQNR, greatly affects the prediction accuracy of DNNs, this paper offers solutions for simplifying DNN implementations in resource-constrained environments without compromising DNN accuracy, as a significant contribution to the development of intelligent DNN-based sensor nodes and measurement systems.

It is known that the performance of the FP format depends on the probability density function (PDF) of the measurement data (Perić et al., 2021). This paper focuses on the Gaussian PDF, which is effective for modeling stochastic measurement data (Dincic et al., 2013, 2014, 2016, 2023). Moreover, the fact that non-Gaussian signals can be transformed into Gaussian ones by appropriate filters (Dinčić et al., 2023) broadens the applicability of the results of this paper.

The main novelty of this paper is a new approach to SQNR calculation, based on the analysis of the probability distribution of PUQ segments and the impact that each individual PUQ segment has on SQNR. As an important result of this new approach, it has been shown that, for any given variance, only a few segments have probabilities significantly different from zero, affecting the SQNR, while all other segments have negligible probabilities with negligible impact on SQNR. This led to the key insight that SQNR calculation can be drastically simplified by focusing just on the segment with the highest probability and a few adjacent segments. As the most probable segment of the PUQ varies with changing data variance, a significant contribution of this paper is the derivation of a simple closed-form formula for determining the segment with the highest probability, valid for any variance value. In addition, this paper explores different numbers of segments to the left and right of the most probable segment to be included in the SQNR expression, aiming to obtain a tight approximation of the original SQNR. A crucial finding achieved in this way is a very simple approximate expression for calculating SQNR involving only five terms in the sum, which drastically reduces the complexity of SQNR calculation compared to the original SQNR expression. In addition, this paper examines how different components of distortion (representing the mean squared quantization error) affect SQNR across a wide variance range, revealing that some components have negligible impact in certain variance ranges. This further simplifies the SQNR expression, as another significant finding of this paper.

This paper focuses on evaluating the performance of FP formats with an 8-bit exponent (FP32, FP24, and bfloat16) because calculating their exact SQNR values is particularly complex. It provides numerical values for the exact SQNR across these formats, obtained using the complex formula involving a 254-term sum, as well as approximate SQNR values calculated by the simplified expression with only a five-term sum derived in this paper. Calculations were conducted over a broad range of variance values. Results demonstrate that the simplified SQNR expression developed in this paper achieves a maximum error of just 0.024 dB relative to the exact SQNR formula, showing that the substantial reduction in computational complexity achieved by using the approximate SQNR expression does not compromise calculation accuracy. Numerical calculations show that all three considered FP formats maintain a nearly constant SQNR across an extensive variance range from approximately −750 dB to beyond 750 dB. Within this range, the SQNR values are approximately 151.9 dB for FP32, 103.75 dB for FP24, and 55.6 dB for bfloat16, indicating that reducing the resolution by 8 bits decreases SQNR by roughly 48.15 dB. On the other hand, reducing the resolution lowers implementation complexity. Hence, the most suitable FP format should be determined for each specific application by balancing implementation complexity with performance. MATLAB simulations with 10 million randomly generated Gaussian numbers, as well as an experiment performed with weights of a trained MLP (Multilayer Perceptron) neural network, confirm the theoretical results.

To summarize, given the impact of SQNR in FP representation of DNN parameters on DNN accuracy, alongside the substantial effect of FP formats on DNN implementation complexity, it becomes essential to assess FP formats in the context of DNN deployment. This is particularly relevant when considering the potential application of DNNs in resource-constrained measurement systems, which could become more intelligent and automated through DNN integration. This paper provides fundamental results on simplified and efficient calculation of FP format performance across various resolutions and a wide range of variance, facilitating the use of FP formats with lower resolution than FP32 and thereby reducing implementation complexity and energy consumption while accelerating data processing. These findings have substantial practical applications for assessing the performance of various FP formats and selecting the most suitable format for each specific case. This is particularly valuable for developing intelligent DNN-based measurement systems with limited hardware resources and constrained available energy (such as battery-powered systems), as well as real-time measurement systems. One potential application is in vibration-based predictive maintenance measurement systems in industry, where high-frequency signal sampling generates large amounts of data, requiring efficient and low-complexity solutions. Furthermore, the results of this paper can be applied across many other fields, including computing and signal processing, due to the broad applicability of FP formats.

This paper is organized as follows. Section “Structure and performance evaluation of the FP format” explains the FP format structure and its analogy with the PUQ, from which the expression for SQNR calculation is derived. The section “Efficient performance evaluation of FP formats”, as the main part of this paper, analyzes the impact of quantizer segments on SQNR, derives the closed-form expression for determining the most probable segment, proposes simple approximate SQNR expressions, and examines the influence of various distortion components on SQNR for different variances, further simplifying the SQNR expression. This section also presents numerical results based on the theoretical analysis, as well as simulation results provided to confirm the achieved numerical results. The next section, “Experimental results,” validates the correctness of the developed theory by applying the proposed approach to the data set consisting of weights of a trained MLP neural network. The conclusion and list of references are given at the end of this paper.

Structure and performance evaluation of the FP format

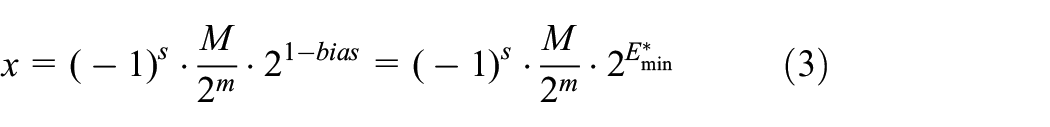

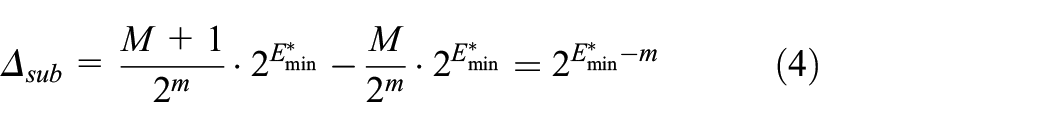

A real number x is represented in an R-bit FP format as (Perić et al., 2021)

where one bit “s” encodes the sign of the number x, e bits

where

Very small FP numbers in the interval (

There are a total of

Due to symmetry around 0, we can focus solely on positive FP numbers. Let us start with the positive subnormal numbers in the range (0,

is constant, making the subnormal FP numbers equidistant. Therefore, the structure of subnormal FP numbers is the same as the structure of a uniform quantizer with a quantization step size

is constant, but varies between different groups, making the numbers within one group equidistant. Therefore, each group of

It is evident that the structure of the FP format is equivalent to a symmetric PUQ with a support region

The SQNR of the quantizer is defined as (Perić et al., 2021)

$$\mathrm{SQNR}\,[\mathrm{dB}] = 10\log_{10}\frac{\sigma^{2}}{D},$$

where

where

Here,

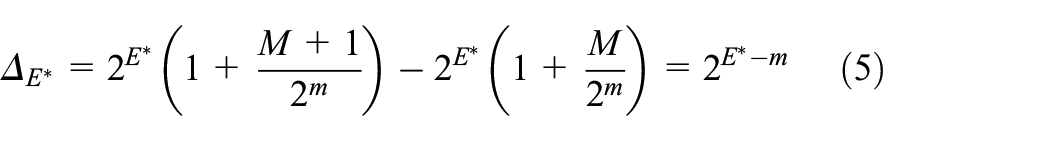

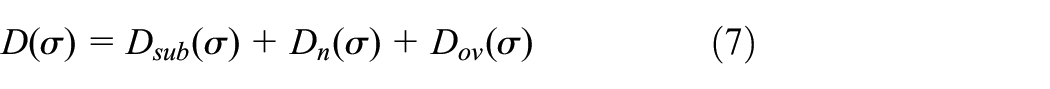

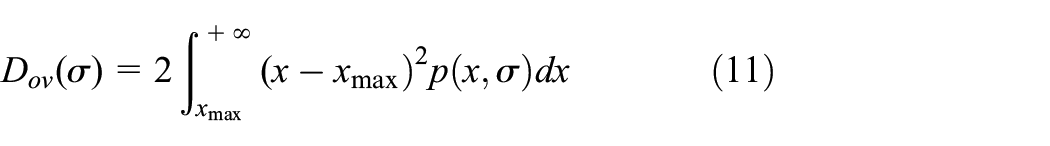

Distortion

where each term

where

Finally, the overload distortion is defined as

Multiplying by 2 in expressions (8), (9), and (11) includes the distortion in the negative part of the quantizer.
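Distortion formulas obtained by asymptotic analysis typically rest on the high-resolution result that uniform quantization with step size Δ produces a mean squared error of Δ²/12 inside a segment. The Python sketch below checks this numerically for a single hypothetical segment; the segment limits, the number of cells, and the variable names are assumptions chosen for illustration and are not the parameters of any particular FP format.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical segment [1, 2) divided into 256 uniform cells.
lo, hi, levels = 1.0, 2.0, 256
step = (hi - lo) / levels

# Data spread uniformly over the segment.
x = rng.uniform(lo, hi, 1_000_000)

# Each value is reconstructed at the midpoint of its cell.
xq = lo + (np.floor((x - lo) / step) + 0.5) * step

mse = np.mean((x - xq) ** 2)
print(f"measured MSE = {mse:.4e}, step^2/12 = {step ** 2 / 12:.4e}")
```

Because the quantizer is symmetric around zero, the same per-segment error appears in the mirrored negative segment, which is what the factor 2 accounts for.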

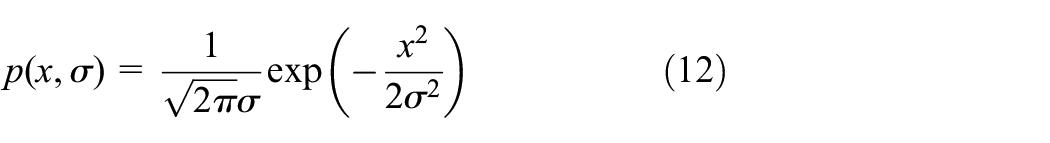

This paper considers the zero-mean Gaussian PDF, defined as (Dinčić et al., 2023)

$$p(x) = \frac{1}{\sqrt{2\pi}\,\sigma}\exp\left(-\frac{x^{2}}{2\sigma^{2}}\right),$$

which has been successfully used for statistical modeling of measurement data. For

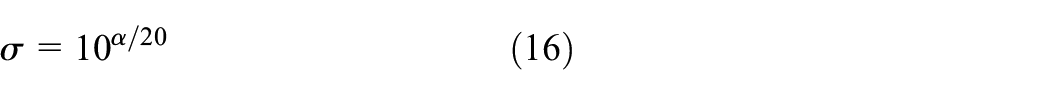

In scenarios involving a wide range of data variance, such as the case in FP formats, it is common to express variance in the logarithmic domain as

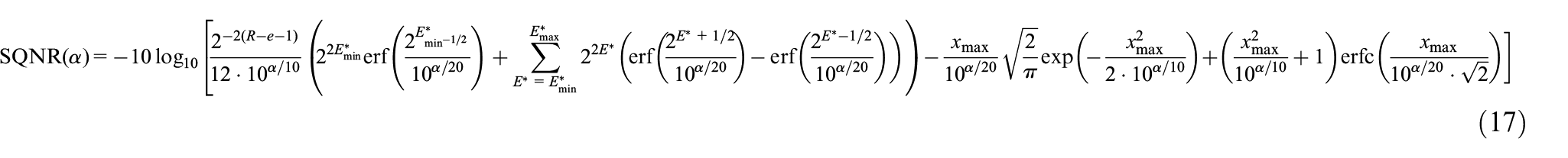

Taking into account expressions from (6) to (16), we obtain the final expression for SQNR of the FPQ

Expression (17) is crucial as it enables us to evaluate the performance of the FPQ, and consequently the FP format, for any variance α of measurement data. However, expression (17) presents a challenge due to its large number of terms, which is particularly notable in FP formats with e = 8, like bfloat16, FP24, and FP32, with 254 terms in the sum. This complexity significantly increases the computational burden of calculating SQNR. As an additional difficulty, SQNR computation needs to be repeated for various values of α over a very wide range, exceeding 1500 dB for bfloat16, FP24, and FP32. Therefore, a key research objective is to simplify expression (17), aiming for a faster and more efficient method to assess FP format performance with minimal computational overhead, while maintaining accuracy. This paper focuses on addressing this critical issue.
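To give a feeling for the size of the full calculation, the following Python sketch evaluates a segment-by-segment SQNR sum over all 254 segments for e = 8. It is a simplified stand-in, not expression (17) itself. It assumes that α = 10 log10 σ² with unit reference variance, that segment k covers magnitudes in [2^k, 2^(k+1)) with step size 2^(k−m), and that the granular distortion per segment is probability times step²/12. Subnormal and overload contributions are ignored, and all function names are hypothetical.

```python
import math

def segment_probability(k, sigma):
    """P(2^k <= |x| < 2^(k+1)) for a zero-mean Gaussian with std sigma."""
    lo = 2.0 ** k / (sigma * math.sqrt(2.0))
    hi = 2.0 * lo
    # erfc is more accurate in the tail, erf near zero.
    if lo > 1.0:
        return math.erfc(lo) - math.erfc(hi)
    return math.erf(hi) - math.erf(lo)

def sqnr_segment_sum(alpha_db, m, e=8):
    """SQNR in dB from a sum over all 2^e - 2 segments (normal numbers only)."""
    sigma = 10.0 ** (alpha_db / 20.0)
    bias = 2 ** (e - 1) - 1
    distortion = 0.0
    for k in range(1 - bias, bias + 1):   # 254 segments for e = 8
        step = 2.0 ** (k - m)             # spacing with m mantissa bits
        distortion += segment_probability(k, sigma) * step ** 2 / 12.0
    return 10.0 * math.log10(sigma ** 2 / distortion)

# The sum has to be repeated for every variance of interest.
for alpha_db in (-600.0, -300.0, 0.0, 300.0, 600.0):
    values = [sqnr_segment_sum(alpha_db, m) for m in (23, 15, 7)]
    print(alpha_db, [round(v, 2) for v in values])
```

Here m = 23, 15, and 7 correspond to FP32, FP24, and bfloat16 under the assumption of one sign bit and an 8-bit exponent. Even this reduced version needs 254 evaluations of the error function pair per variance value, which is the cost that the simplification targets.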

Efficient performance evaluation of FP formats

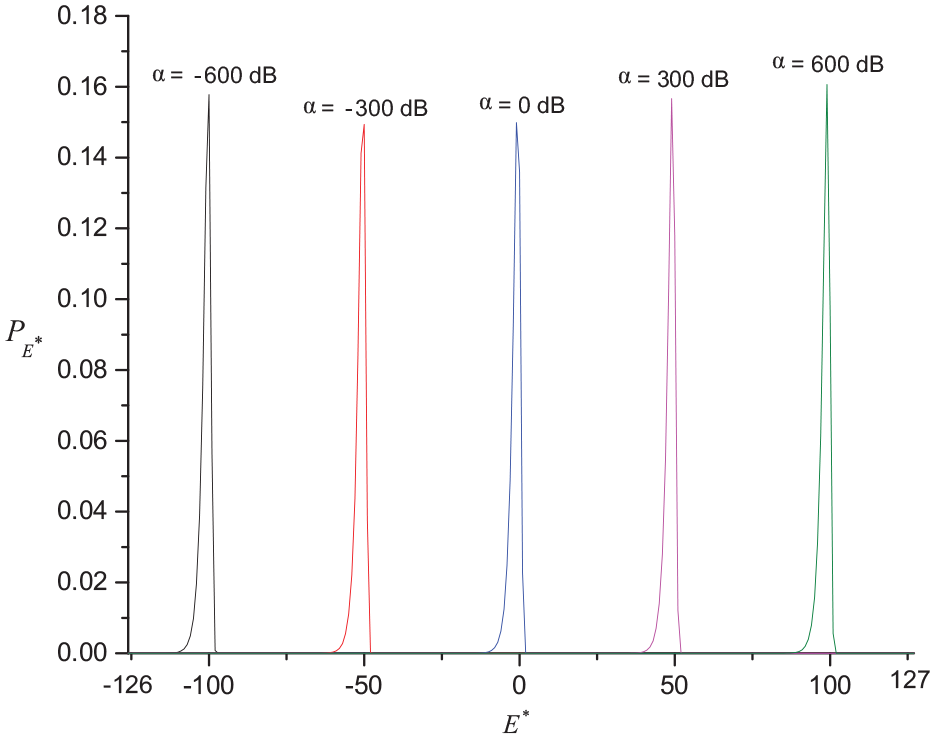

Figure 1 shows the probabilities

Probabilities of 32-bit FPQ segments for different values of the variance α.
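The concentration of probability in a handful of segments can be reproduced with a short Python sketch. It assumes a zero-mean Gaussian PDF, α = 10 log10 σ² with unit reference variance, and segments indexed so that segment k covers magnitudes in [2^k, 2^(k+1)). This indexing and the function names are assumptions for illustration and may differ from the indexing used in Figure 1.

```python
import math

def segment_probability(k, sigma):
    """P(2^k <= |x| < 2^(k+1)) for a zero-mean Gaussian with std sigma."""
    lo = 2.0 ** k / (sigma * math.sqrt(2.0))
    hi = 2.0 * lo
    # erfc is more accurate in the tail, erf near zero.
    if lo > 1.0:
        return math.erfc(lo) - math.erfc(hi)
    return math.erf(hi) - math.erf(lo)

def segment_probabilities(alpha_db, e=8):
    """Probabilities of all 2^e - 2 segments (normal numbers only)."""
    sigma = 10.0 ** (alpha_db / 20.0)
    bias = 2 ** (e - 1) - 1
    return {k: segment_probability(k, sigma) for k in range(1 - bias, bias + 1)}

for alpha_db in (-300.0, 0.0, 300.0):
    probs = segment_probabilities(alpha_db)
    k_max = max(probs, key=probs.get)
    # Segments whose probability exceeds an arbitrary 1% threshold.
    visible = sorted(k for k, p in probs.items() if p > 0.01)
    print(f"alpha = {alpha_db:7.1f} dB: most probable k = {k_max}, "
          f"segments above 1%: {visible[0]}..{visible[-1]}")
```

The set of non-negligible segments slides along the index axis as α changes, while its width stays small compared with the 254 available segments.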

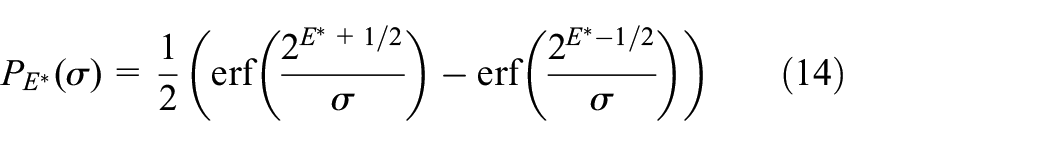

Let

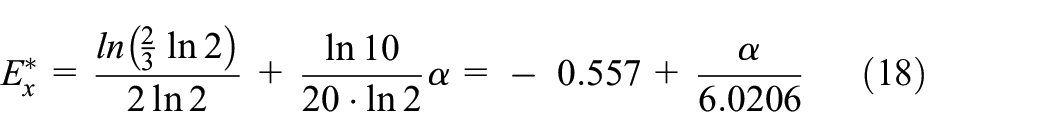

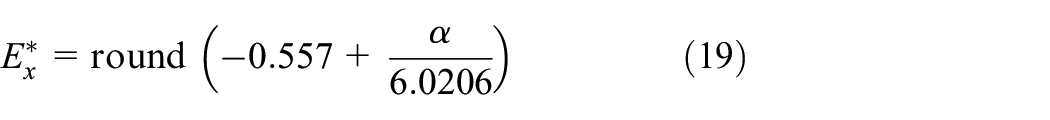

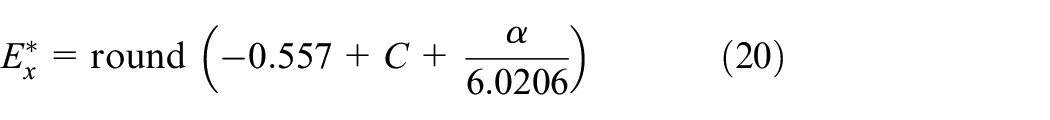

By differentiating the expression for

Note that expression (18) provides the value of

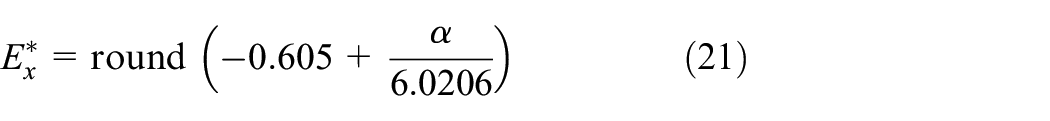

which gives accurate

Analysis carried out over a very wide range of variance α (from −800 dB to 800 dB) shows that C = −0.048 provides accurate values for

By introducing a small correction factor C, the rounding is finely tuned, ensuring accurate

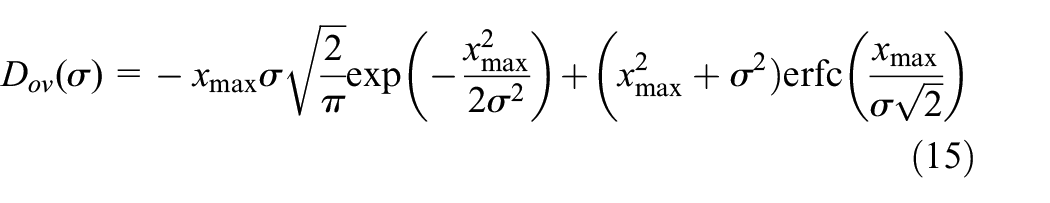

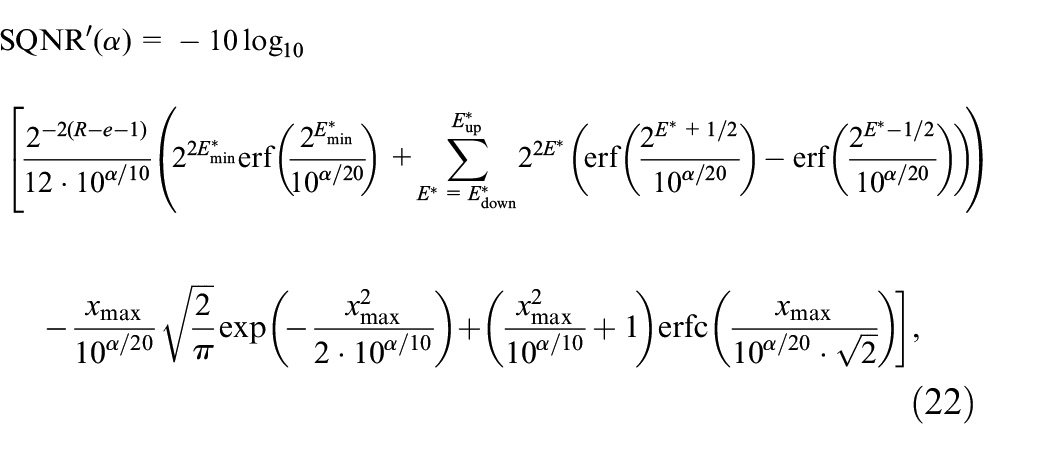

As mentioned, only the distortion in the segment

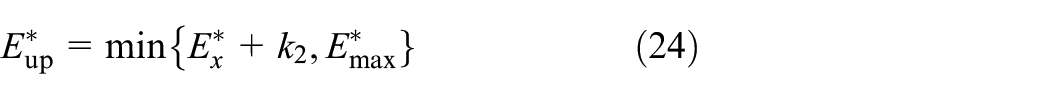

where

and

indicate the minimum and maximum values of the summation index. The expression (22) takes into account only

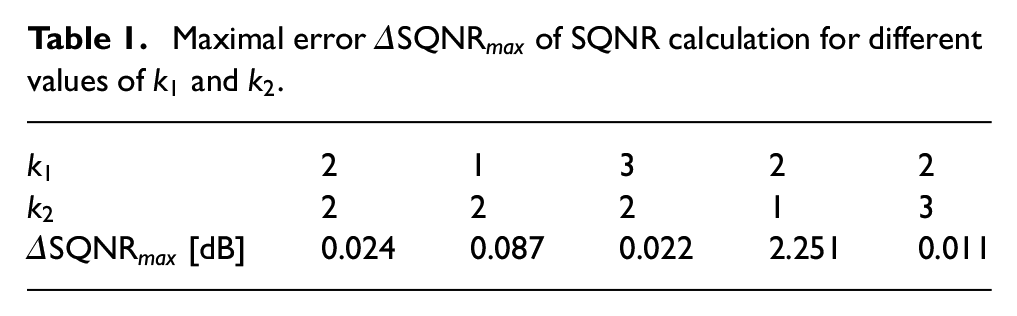

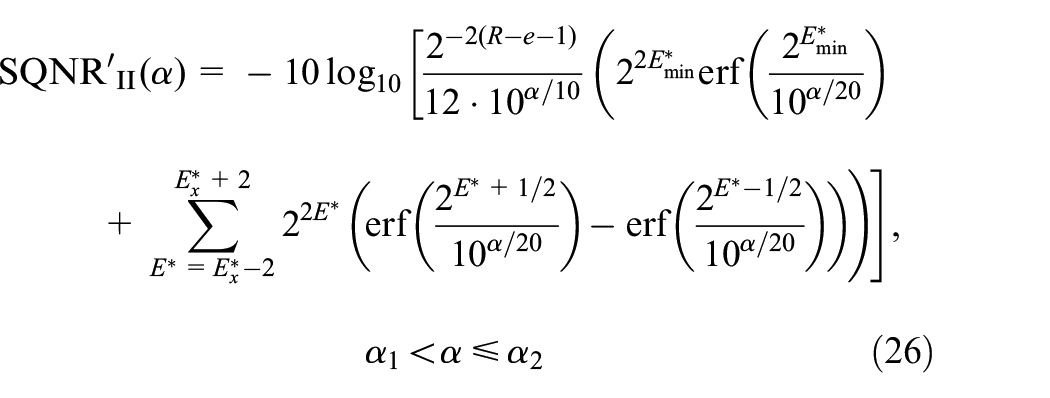

Maximal error

From Table 1, we see that the combination (

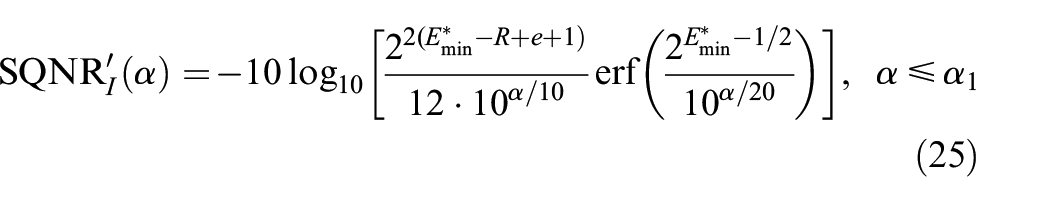

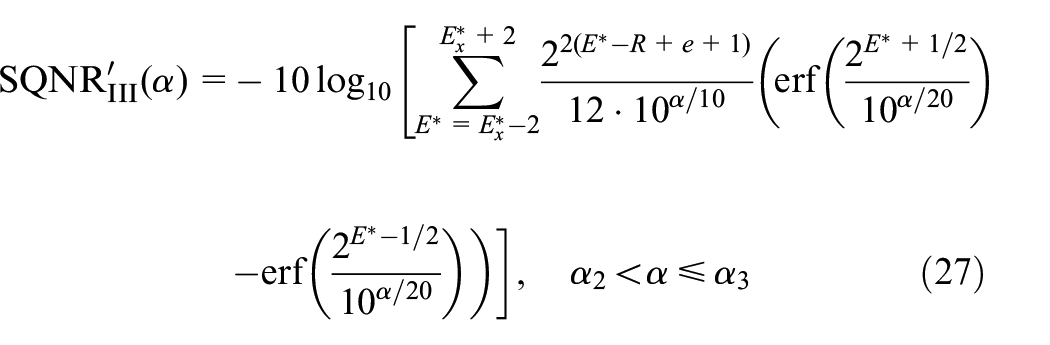

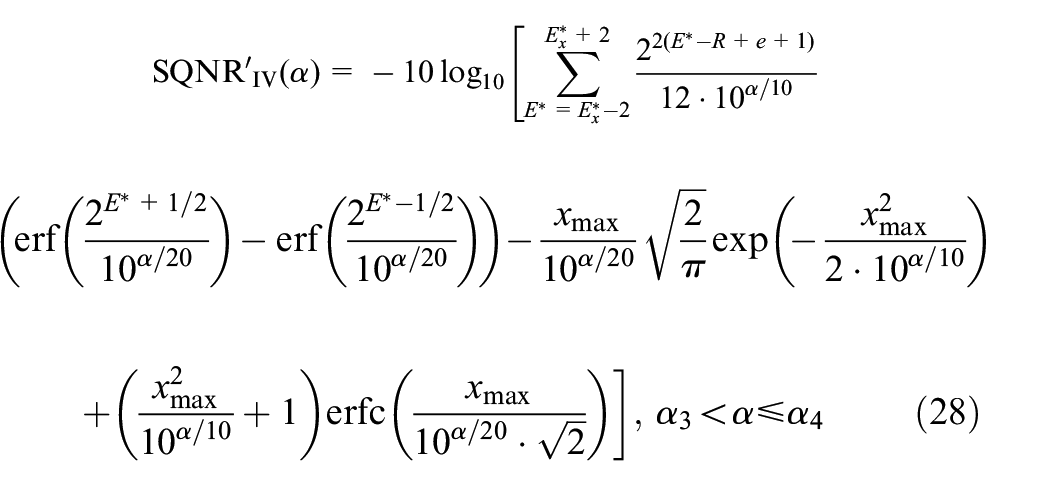

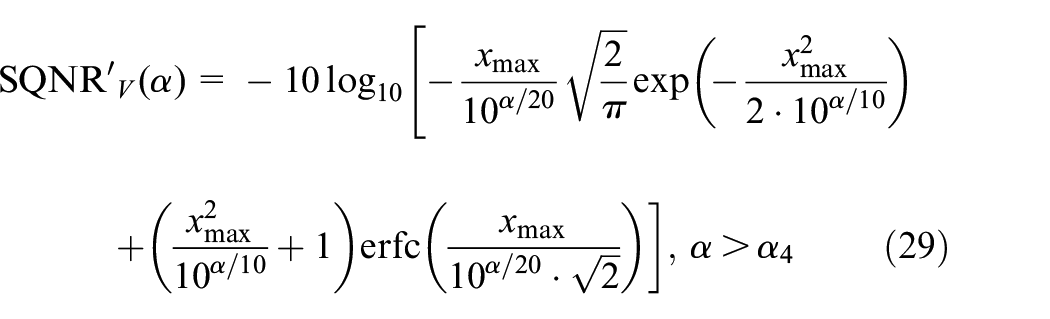

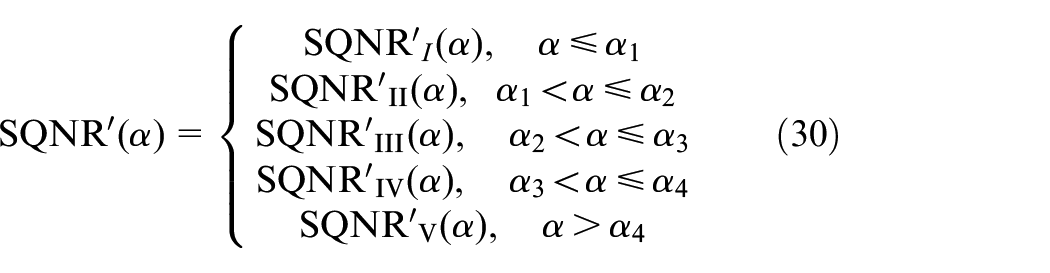

The expression (22) for SQNR can be further simplified. Specifically,

Range I, with

Range II, with

Range III, with

Range IV, with

Range V, with

Hence, the approximate expression (22) for SQNR can be written in a simplified way as

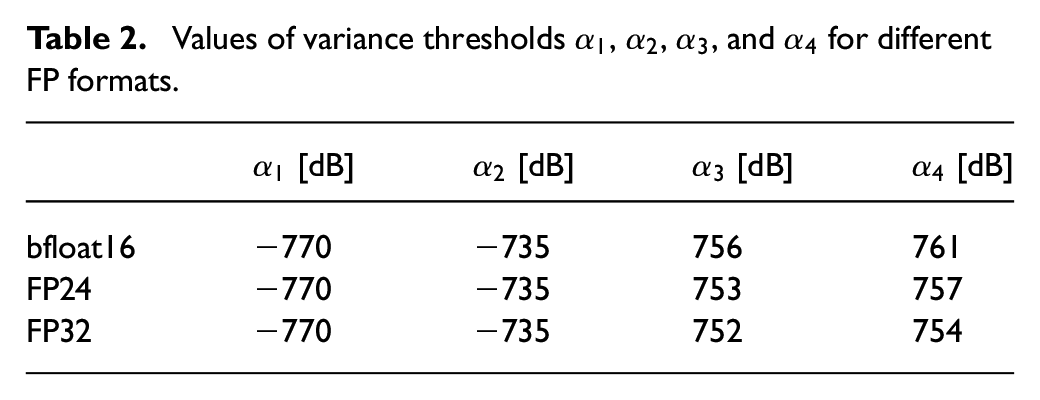

Table 2 provides the empirically obtained values for

Values of variance thresholds

Let us now comment on the accuracy of approximation (30). Namely, by applying expression (30), values of

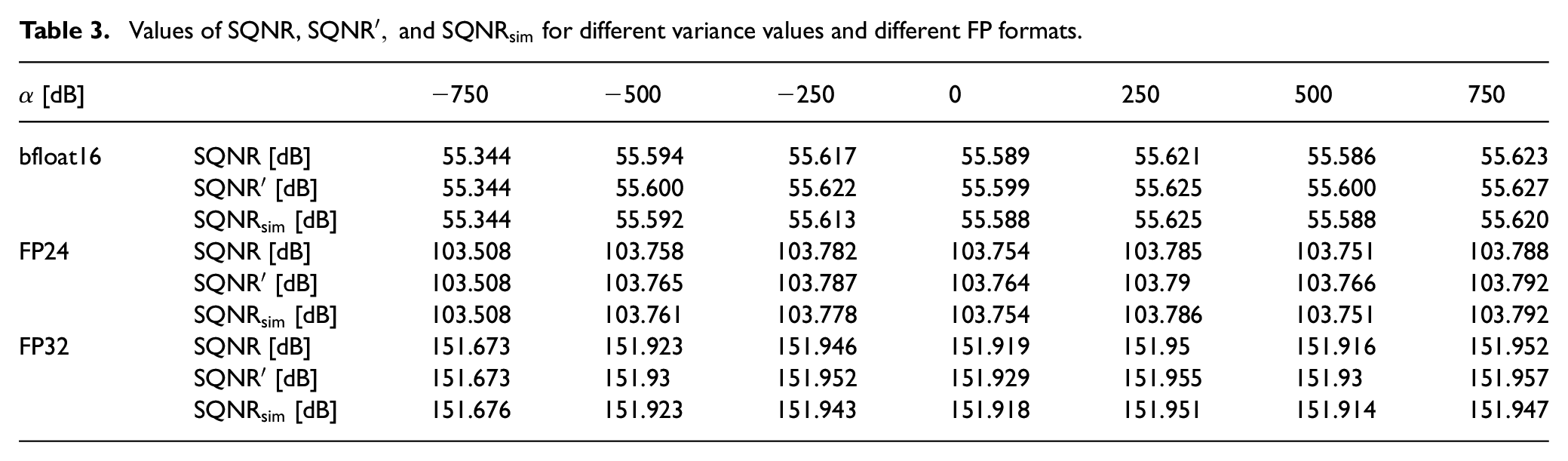

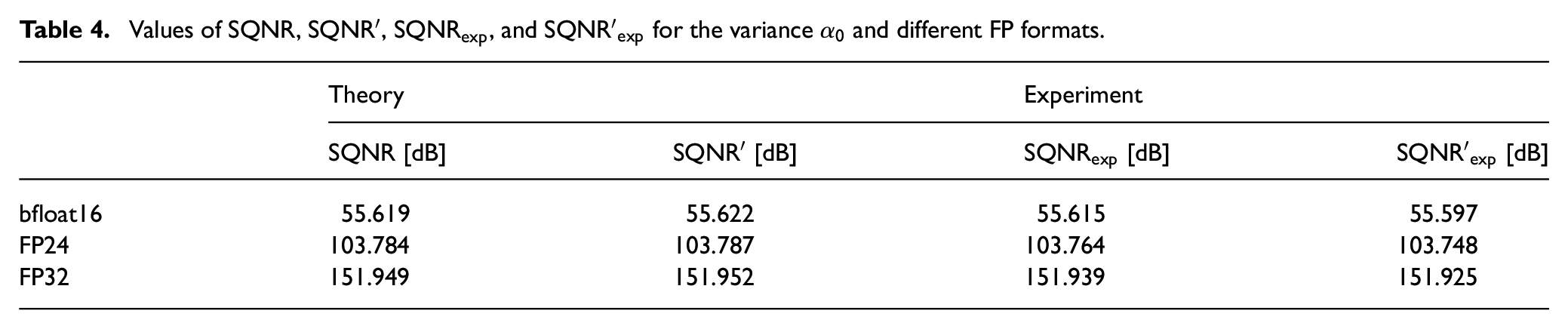

Table 3 provides the exact SQNR values calculated by equation (17), as well as the approximate values

Values of SQNR,

Using SQNR as a key performance measure, we can effectively compare the three FP formats under consideration: FP32, FP24, and bfloat16. Based on the results presented in Table 3, we can conclude that all three formats maintain a nearly constant SQNR across an extensive variance range from approximately −750 dB to beyond 750 dB. This similarity of variance ranges originates from the identical value of the parameter e (e = 8) for all three formats, which predominantly affects the variance range width. Within this range, the SQNR values are approximately 151.9 dB for FP32, 103.75 dB for FP24, and 55.6 dB for bfloat16. Notably, reducing the FP format resolution by 8 bits decreases SQNR by roughly 48.15 dB. However, this reduction in resolution also lowers implementation complexity. In practical applications, balancing implementation complexity with performance is essential.
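A Monte Carlo check of these values can be sketched in Python with NumPy. The sketch is an independent illustration and does not reproduce the authors' MATLAB code. It assumes that each format uses one sign bit, 8 exponent bits, and 23, 15, or 7 mantissa bits, and that rounding is to nearest (ties to even). The helper `round_to_reduced_fp` is a hypothetical name, and 1 million samples are used instead of 10 million to keep the run short.

```python
import numpy as np

def round_to_reduced_fp(x32, mantissa_bits):
    """Round float32 values to 1 sign + 8 exponent + mantissa_bits bits."""
    drop = 23 - mantissa_bits            # low mantissa bits to discard
    if drop == 0:
        return x32.copy()
    bits = x32.view(np.uint32)
    lsb = (bits >> np.uint32(drop)) & np.uint32(1)
    # Round to nearest, ties to even, then clear the discarded bits.
    bias = lsb + np.uint32((1 << (drop - 1)) - 1)
    mask = np.uint32((0xFFFFFFFF << drop) & 0xFFFFFFFF)
    return ((bits + bias) & mask).view(np.float32)

rng = np.random.default_rng(0)
x = rng.standard_normal(1_000_000)       # float64 reference, unit variance
x32 = x.astype(np.float32)

results = {}
for name, m in (("FP32", 23), ("FP24", 15), ("bfloat16", 7)):
    xq = round_to_reduced_fp(x32, m).astype(np.float64)
    results[name] = 10.0 * np.log10(np.mean(x ** 2) / np.mean((x - xq) ** 2))
    print(f"{name:9s} SQNR = {results[name]:.2f} dB")
```

The gap between consecutive formats is consistent with the familiar rule of about 6.02 dB per bit, since 20 log10(2^8) ≈ 48.16 dB.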

The results of this paper impact the implementation of intelligent measurement systems by simplifying the performance evaluation of FP formats, thereby making it easier to select the most suitable FP format for each specific application. Considering that implementation complexity is a key concern for resource-constrained intelligent measurement systems, the authors recommend selecting the FP format with the lowest complexity that still meets the application’s performance requirements.
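The recommendation can be expressed as a small selection rule. The Python sketch below uses the SQNR values reported in this section and takes the total bit width as a stand-in for implementation complexity, which is an assumption made for illustration. The function name and the example requirements are hypothetical, and the rule only applies inside the variance range where SQNR is nearly constant.

```python
# (name, total bits, approximate SQNR in dB inside the constant-SQNR range)
FORMATS = [
    ("bfloat16", 16, 55.6),
    ("FP24", 24, 103.75),
    ("FP32", 32, 151.9),
]

def select_format(required_sqnr_db):
    """Return the narrowest format whose SQNR meets the requirement, or None."""
    for name, _bits, sqnr_db in sorted(FORMATS, key=lambda f: f[1]):
        if sqnr_db >= required_sqnr_db:
            return name
    return None

for requirement in (50.0, 100.0, 160.0):
    print(requirement, select_format(requirement))
```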

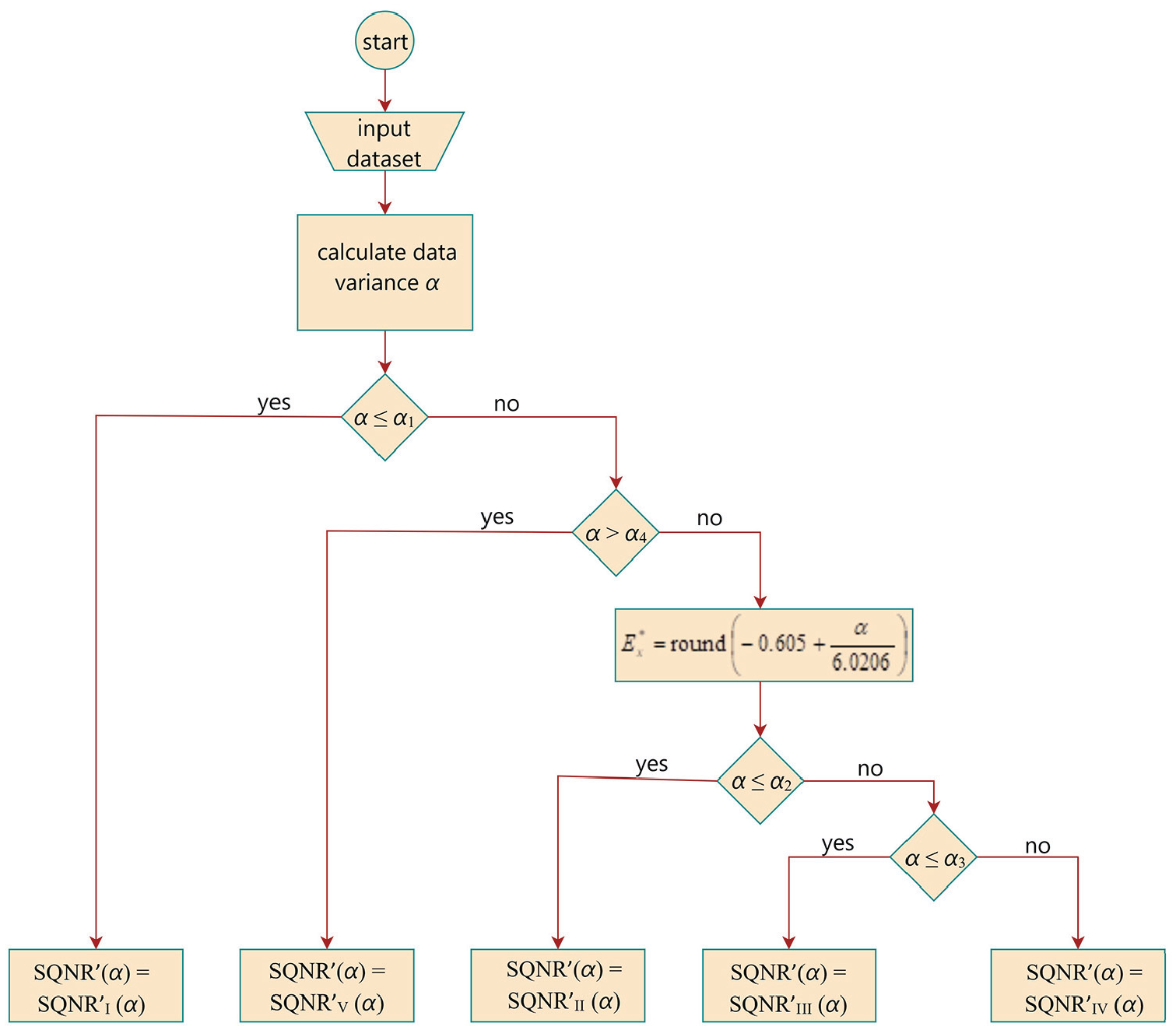

Figure 2 shows the flowchart of the applied methodology for calculating

The flowchart of the applied methodology for calculating
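The overall procedure (find the most probable segment, then sum the distortion over a short window around it) can be sketched in Python as follows. Several points are assumptions rather than the paper's exact method. The most probable segment is found by direct search instead of the closed-form expression. The window of two segments on each side is one hypothetical way of obtaining five terms. Segment k is taken as [2^k, 2^(k+1)) with step 2^(k−m), α = 10 log10 σ² with unit reference variance, and subnormal and overload distortion are ignored.

```python
import math

def segment_probability(k, sigma):
    """P(2^k <= |x| < 2^(k+1)) for a zero-mean Gaussian with std sigma."""
    lo = 2.0 ** k / (sigma * math.sqrt(2.0))
    hi = 2.0 * lo
    if lo > 1.0:
        return math.erfc(lo) - math.erfc(hi)
    return math.erf(hi) - math.erf(lo)

def granular_distortion(k_values, sigma, m):
    """Sum of probability * step^2 / 12 over the given segments."""
    return sum(segment_probability(k, sigma) * (2.0 ** (k - m)) ** 2 / 12.0
               for k in k_values)

def sqnr_full(alpha_db, m, e=8):
    sigma = 10.0 ** (alpha_db / 20.0)
    bias = 2 ** (e - 1) - 1
    d = granular_distortion(range(1 - bias, bias + 1), sigma, m)
    return 10.0 * math.log10(sigma ** 2 / d)

def sqnr_windowed(alpha_db, m, left=2, right=2, e=8):
    sigma = 10.0 ** (alpha_db / 20.0)
    bias = 2 ** (e - 1) - 1
    # Step 1: most probable segment (direct search in this sketch).
    k_max = max(range(1 - bias, bias + 1),
                key=lambda k: segment_probability(k, sigma))
    # Step 2: keep only a short window around it, clipped to valid indices.
    k_lo = max(k_max - left, 1 - bias)
    k_hi = min(k_max + right, bias)
    d = granular_distortion(range(k_lo, k_hi + 1), sigma, m)
    return 10.0 * math.log10(sigma ** 2 / d)

for alpha_db in (-300.0, 0.0, 3.0, 300.0):
    full = sqnr_full(alpha_db, 23)
    approx = sqnr_windowed(alpha_db, 23)
    print(f"alpha = {alpha_db:7.1f} dB: full = {full:.3f}, "
          f"windowed = {approx:.3f}, difference = {approx - full:.4f} dB")
```

Because the window drops only non-negative distortion terms, the windowed value can never fall below the full sum; the difference shows how much the discarded segments contribute.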

Finally, some limitations of the applied approach will be outlined. First, it is not recommended to use the described method for parameter values of e ≤ 3 and m ≤ 3, which occur in FP formats with very low resolutions. For e ≤ 3, the number of terms within the sum in the exact SQNR expression is not large, eliminating the need for simplifications. For m ≤ 3, the number of uniform quantization levels in each segment of the PUQ is less than 8, meaning the accuracy of formulas (8) and (10) for distortion, which were derived using asymptotic analysis (i.e. under the assumption of a sufficiently large number of quantization levels), may be insufficient. In addition, the presented method assumes that the Gaussian PDF has a zero mean. If this is not the case, the method can still be applied, but it requires subtracting the mean of the data set from each data point to center the data around zero. The method is then applied to the adjusted data set, with the mean added back at the final stage.
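The mean-centering step for data with a non-zero mean can be sketched in Python as follows. The example uses a cast to float32 as the quantizer and a synthetic data set with mean 1000 and standard deviation 0.001. Both choices, as well as the function names, are assumptions for illustration. The sketch also assumes that the mean itself is stored separately at higher precision.

```python
import numpy as np

def to_fp32(x):
    """Quantize by casting to float32, returned as float64 for comparison."""
    return x.astype(np.float32).astype(np.float64)

def quantize_centered(x, quantize):
    """Subtract the mean, quantize the centered data, then add the mean back."""
    mean = np.mean(x)
    return quantize(x - mean) + mean

rng = np.random.default_rng(0)
x = 1000.0 + 1e-3 * rng.standard_normal(100_000)   # data far from zero mean

mse_direct = np.mean((x - to_fp32(x)) ** 2)
mse_centered = np.mean((x - quantize_centered(x, to_fp32)) ** 2)
print(f"direct: {mse_direct:.3e}, centered: {mse_centered:.3e}")
```

Centering places the data in segments with much smaller step sizes, so the quantization error drops sharply in this example.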

Experimental results

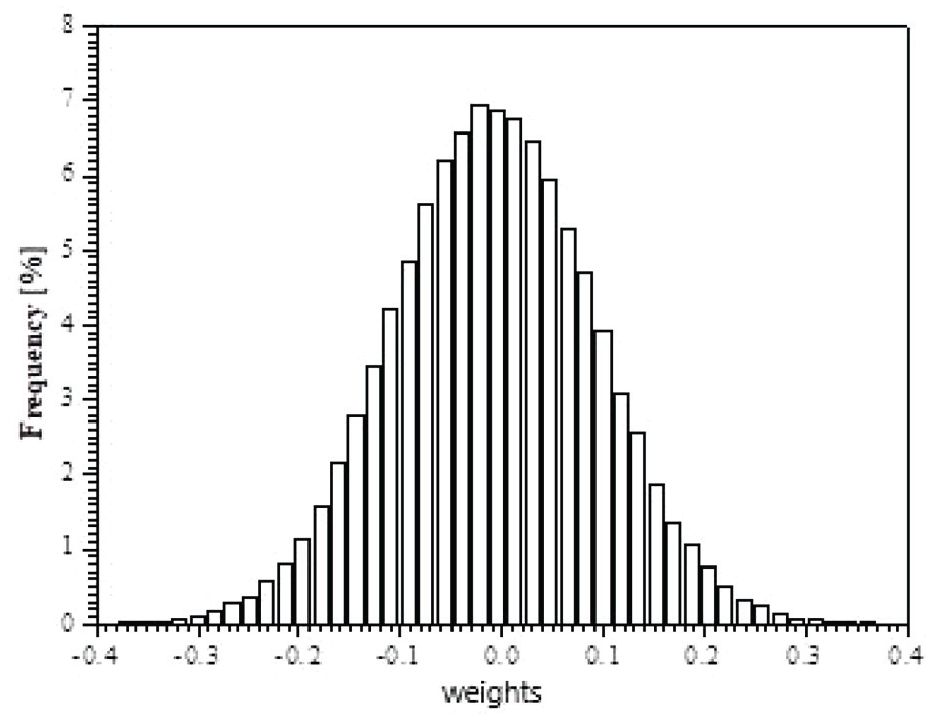

The theoretical SQNR results will be further validated by experimental findings, obtained by applying FP32, FP24, and bfloat16 formats on real data. These data comprise weights from a trained MLP neural network (Kruse et al., 2022) used for classification of images from the MNIST data set (Baldominos et al., 2019). The MLP architecture consists of input, hidden, and output layers with 784, 128, and 10 nodes, respectively. Hyperparameters were set as follows: regularization rate = 0.01, learning rate = 0.0005, and mini-batch size = 128. After 20 epochs, the MLP achieved accuracy scores of 0.9705 on the training set and 0.9686 on the test set. We used the weights between the input and hidden layers, amounting to M = 784 × 128 = 100,352 weights. Figure 3 shows the histogram of the MLP weights, showing that they closely follow the zero-mean Gaussian distribution.

Histogram of MLP weights.

Let the MLP weights be denoted as

Values of SQNR,

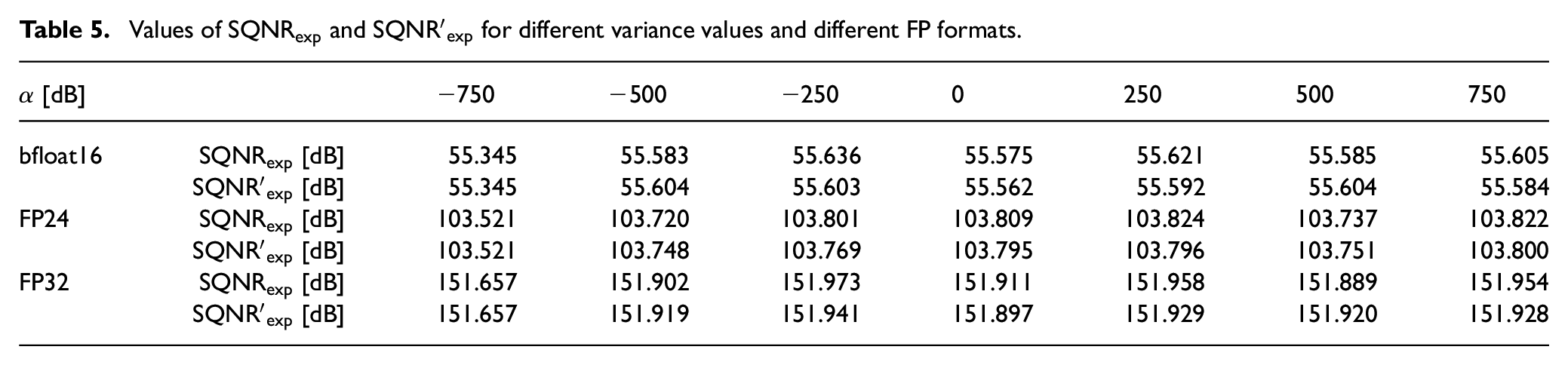

Our next goal is to experimentally verify the accuracy of the theoretical results over a wide variance range. By scaling the values of the MLP weights with an appropriate constant c, we can generate a data set of real data

Values of

Conclusion

This paper aims to develop a simple and efficient method for evaluating the performance of FP formats for Gaussian-distributed data. Starting from the analogy between FP formats and a PUQ, this paper identifies the high complexity in the SQNR expression of PUQ, which involves summing a large number of terms (e.g. 254 for bfloat16, FP24, and FP32 formats), and offers several important contributions to address this problem.

One key contribution is demonstrating that only a small number of PUQ segments significantly impact SQNR. This insight leads to the idea that the SQNR expression can be simplified by retaining only a few terms related to the segment with the highest probability and its adjacent segments. Since the segment with the highest probability depends on the variance, this paper provides a simple closed-form expression for determining this segment for any variance value. Another important result is an approximate SQNR expression with just five terms, significantly simplifying the original SQNR expression with 254 terms while maintaining accuracy with a maximum error of only 0.024 dB. The SQNR expression is further simplified by determining the ranges of variance in which certain distortion components are negligible. The theoretical results are confirmed by MATLAB simulations and an experiment conducted with the weights of a trained MLP neural network. Although the numerical results are provided for bfloat16, FP24, and FP32 formats with an 8-bit exponent due to their pronounced complexity, the findings can be applied to other FP formats, offering general significance.

As a final result, this paper presents a simple yet efficient method for calculating FP format performance, particularly important for implementing resource-constrained intelligent DNN-based sensor nodes and edge measurement devices where selecting the most suitable FP format for each specific application is crucial. The findings are also applicable in other fields, such as computing and signal processing.

Appendix

Given that

For

This condition implies

The first term in equation (33) is a constant. Given that, according to equation (16),

confirming the validity of expression (18).
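For orientation, the structure of such a derivation can be sketched as follows. The sketch assumes that segment k covers magnitudes in [2^k, 2^(k+1)), that the PDF is zero-mean Gaussian with standard deviation σ, that k is treated as a continuous variable, and that α = 10 log10 σ². The notation and indexing are assumptions and may differ from equations (31) to (33), and the rounding with the correction factor C is not reproduced here.

```latex
% Probability of segment k (both signs), with p(x) the zero-mean Gaussian PDF
P(k) = 2\int_{2^{k}}^{2^{k+1}} p(x)\,dx

% Stationarity with respect to continuous k
\frac{dP}{dk} = 2\ln 2\,\bigl[\,2^{k+1}p(2^{k+1}) - 2^{k}p(2^{k})\bigr] = 0
\;\Longrightarrow\;
2\exp\!\left(-\frac{2^{2k+2}}{2\sigma^{2}}\right) = \exp\!\left(-\frac{2^{2k}}{2\sigma^{2}}\right)

% Solving for k
\ln 2 = \frac{3\cdot 2^{2k}}{2\sigma^{2}}
\;\Longrightarrow\;
k = \tfrac{1}{2}\log_{2}\!\left(\frac{2\ln 2}{3}\right) + \log_{2}\sigma
\approx -0.557 + \frac{\alpha}{20\log_{10}2}
```

Under these assumptions the maximizing index consists of a constant term plus a term linear in α, which agrees with the remark that the first term is a constant.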

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Ministry of Science, Technological Development and Innovation of the Republic of Serbia (grant no. 451-03-65/2024-03/200102) as well as by the European Union’s Horizon 2023 research and innovation program through the AIDA4Edge Twinning project (grant ID 101160293).

Data availability statement

Data sharing is not applicable to this article as no data sets were generated or analyzed during the current study.