Introduction

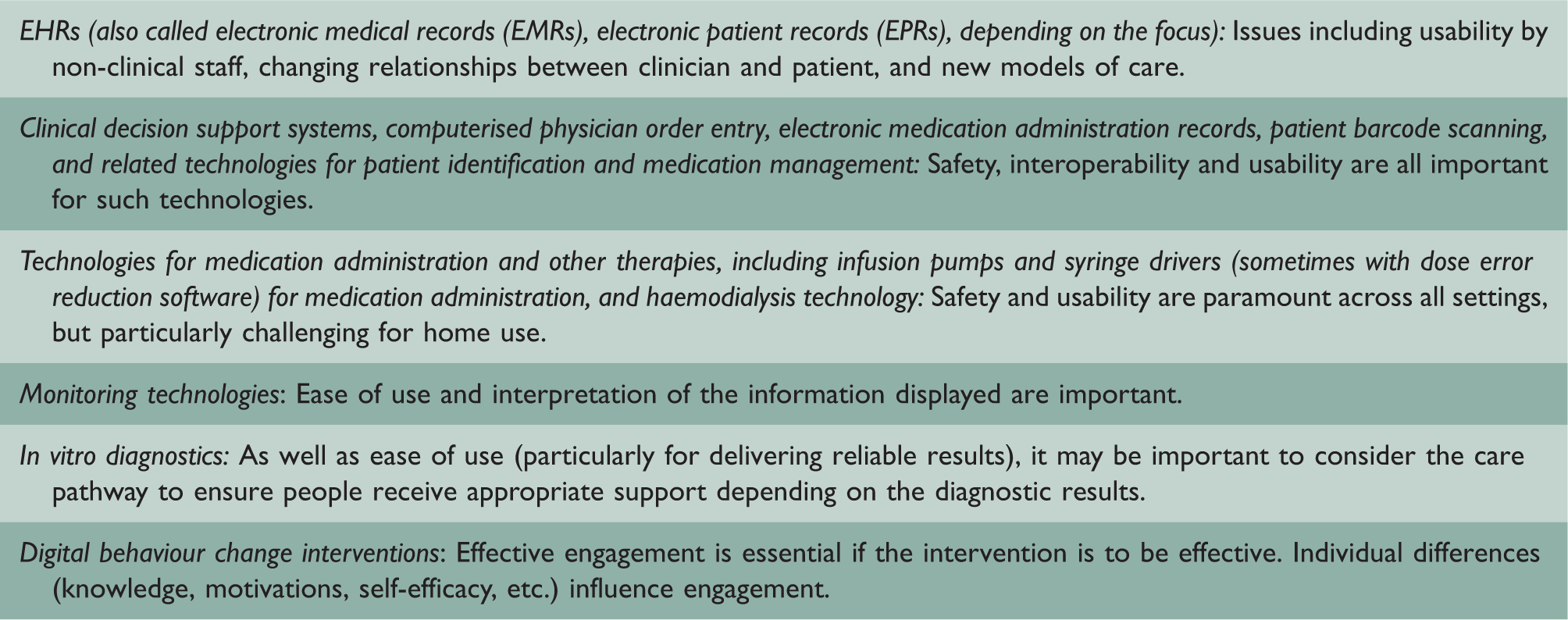

Examples of existing health information technologies and evaluation questions.

Randomised controlled trials of complex health information technology interventions are important and appropriate for evaluating effectiveness in many contexts, particularly if they are complemented by process and in-depth qualitative evaluations that yield insights into why the intervention was effective or ineffective and how generalisable it is likely to be. 3 But health information technology presents challenges for this traditional biomedical evaluation approach. Because it involves redesigning existing organisational processes and ways of working (e.g. when implementing electronic health records), it may have relatively small, diffuse effects that are difficult to trace and attribute. 4 The effect of the intervention can also be heavily shaped by the context and the way it is implemented 5; this may include, for example, varying organisational strategies, shifting responsibilities of healthcare professionals (e.g. towards a greater emphasis on data entry), increased managerial control (e.g. through review of data held within systems), changing power structures and whether or not computerised decision support is switched on. Health information technology also often evolves rapidly: a particular user interface, for example, may no longer exist by the time a randomised controlled trial evaluative cycle (which typically takes years) has been completed. 6

New design and evaluation approaches are thus needed alongside randomised controlled trials to provide a broader array of methods for investigating the effectiveness of different forms of health information technology. Building on our previous work highlighting the importance of continuous systemic health information technology evaluation, 7 we consider how evaluation approaches based on human factors engineering may help to address some of these needs.

In which contexts are randomised controlled trials appropriate?

Randomised controlled trials are of considerable importance in evaluating the effectiveness of interventions, including health information technology, because they have the potential to minimise the risk of bias, distribute confounders randomly between intervention arms and produce generalisable results. 8 This design is appropriate, and indeed essential, for health information technology contexts where clear effects on a limited number of outcomes can be anticipated. For instance, if the aim is to investigate the effectiveness of a safety/business-critical medical prototype device with discrete effects on health outcomes, or if interventions are particularly expensive, then a randomised controlled trial design is likely to be an appropriate summative evaluative tool. Indeed, randomised controlled trials, and alternatively quasi-randomised controlled trials, have been very useful in evaluating health information technology, particularly when combined with embedded process and qualitative evaluations. 5

Why are randomised controlled trials problematic for some types of technology/context?

Our experiences have, however, indicated that there are a number of contexts where randomised controlled trials and quasi-randomised controlled trials are not feasible and/or appropriate. First, some health information technologies, such as electronic health records, are foundational and therefore have multiple small effects that are often hard to measure. Furthermore, with these types of technology, it can be difficult to establish appropriate controls, as health information technology implementations often involve large organisational transformations across care settings that are not directly comparable. Second, implementation context matters and should not be treated as a confounder. This may include the hospital environment into which systems are implemented, the leadership style and implementation strategy pursued, and user attributes such as personality and competencies. Third, many technologies rapidly evolve (e.g. by being refined, upgraded, customised) or they cease to exist altogether (e.g. apps), which means that the intervention may vary over time. As a result, many complex health information technology systems that are used across a range of contexts lack usability and fail to achieve their true potential as they are often used in ways other than intended. 9

To address some of these challenges, human factors engineering may provide an alternative, more agile approach to evaluating health information technology, as it takes into account the changing nature of technology while paying close attention to the context of system use and the variety of settings in which technologies may be implemented.

What is human factors engineering and when is it appropriate?

User-centred approaches to evaluation tend to be practically oriented, focusing on the iterative evaluation of the technology at hand; they are typically less concerned with producing generalisable results. 10 Although some traditional experimental evaluation approaches exist in these settings (e.g. performance and effectiveness analysis), there is an explicit focus on evaluating technology throughout the lifecycle, often involving iterative development and evaluation. 11 An example of such an approach to system development is rapid application development. This employs iterative methods that allow technological prototypes to be developed quickly and then tested and refined in real-world settings, often based on user feedback. It is well suited for complex environments where effects of technologies and user requirements are hard to predict in advance (e.g. when technologies require a high degree of interactivity). The approach may draw on cognitive task analysis, where user needs and workflows are assessed before new technology is introduced, followed by detailed analysis surrounding how new systems affect existing practices. 12 Action research can be a useful methodology in human factors engineering; however, human factors engineering employs a variety of research methods and has an explicit focus on design, particularly of technology.

Human factors engineering is thus a usability engineering-based approach to ‘designing systems for human use’, helping to develop systems over time to bring maximum benefits to users. 12 It includes prospective evaluation approaches that allow systems to be refined in ways that promote their effective use over time. The underlying assumption is that health information technology only works if it is usable and fits with users’ practices. Human factors engineering helps to design usable and useful systems. 13 Methods focus on obtaining a clear understanding of what task needs to be undertaken (the aim) and helping to design systems that assist and motivate users to accomplish this task (the tool). From this perspective, evaluation of technology should therefore focus on exploring its suitability for accomplishing a task from the perspective of users, the degree to which technologies support and enhance human effectiveness, and the degree to which they fit within their context of use.

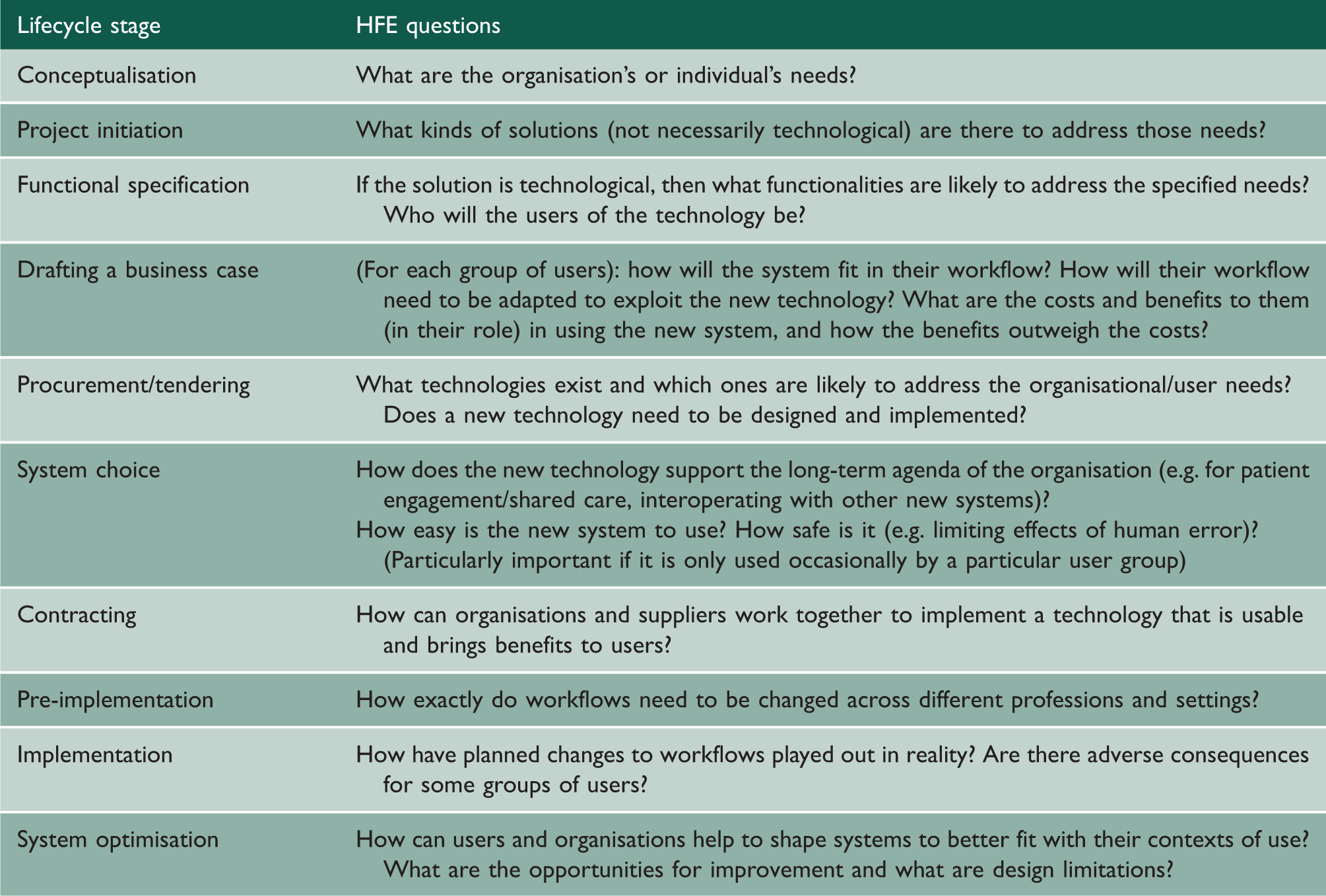

Lifecycle perspective of implementing health information technology and human factors engineering-informed lines of inquiry.

Methodologies commonly used in human factors engineering.

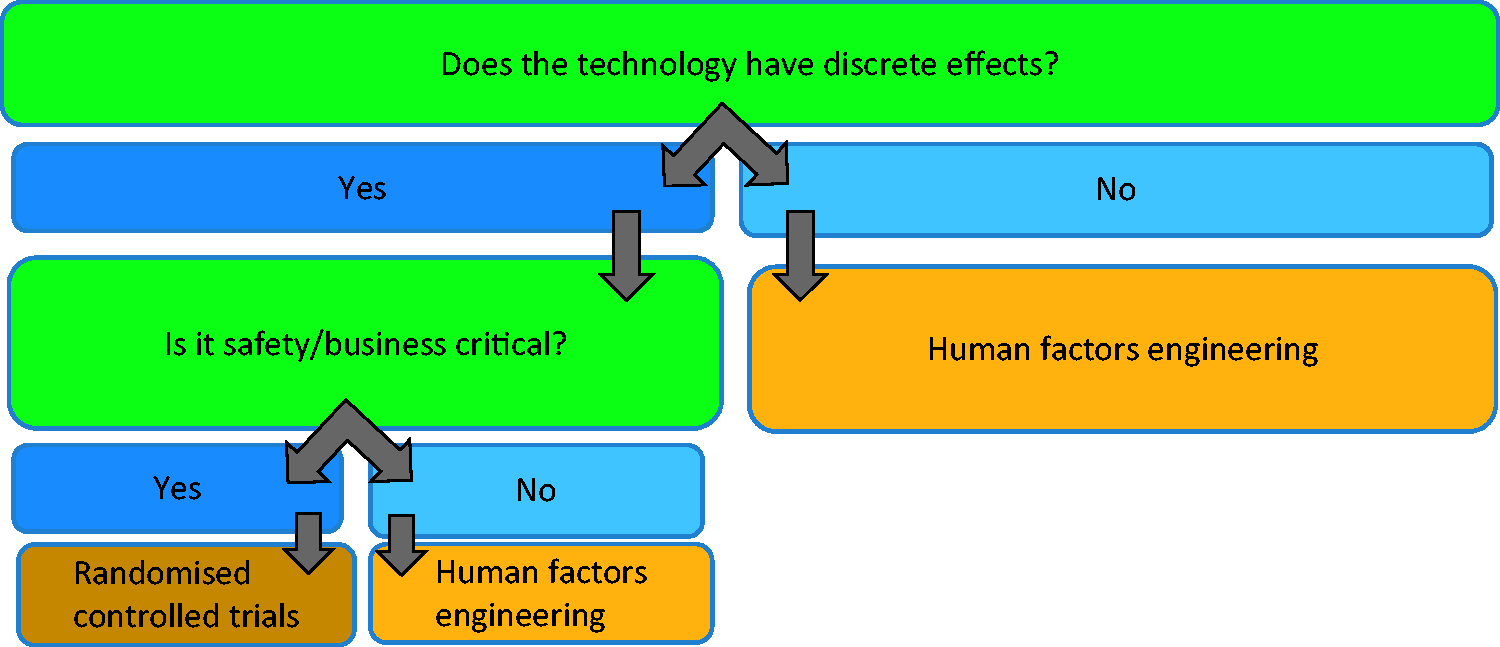

Towards re-conceptualising health information technology evaluation approaches

Both randomised controlled trial-based and human factors engineering-based approaches to evaluation are essential for evaluating the effectiveness of health information technology, but it is important to recognise that the appropriateness of methods depends on the needs emerging from different contexts/technologies. The choice of methods should be determined by the type of health information technology to be evaluated, its stage of development and the key evaluation questions in that situation. For example, human factors engineering approaches work well for technologies that have distributed effects and can be tested and refined in collaboration with users in real-world settings. In comparison, randomised controlled trials are necessary for pre-market testing of safety-critical medical devices and for determining the impact of technologies on health outcomes. 19

In Figure 1, we propose a decision tree to guide evaluators in their choice of methods.

Flow diagram guiding the choice of evaluation approaches.

A key future activity should include establishing consensus among evaluators on which approaches are best suited to different types of technologies and evaluation questions, and establishing key influence diagrams to consider appropriate surrogate markers. 20 For instance, safety/business-critical technologies (e.g. infusion devices, haemodialysis machines, clinical decision support systems) are likely to require extensive upfront development (ideally in close collaboration with users) and extensive effectiveness testing, while prototypes of less critical devices (e.g. activity trackers) may be tested and refined in close collaboration with users in real-world settings without significant safety implications.
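The selection logic described in this section can be made concrete with a small sketch. The following Python example is illustrative only and does not reproduce Figure 1: the class names, the four yes/no attributes, the order in which they are checked and the conservative default are all assumptions introduced here, derived loosely from the examples given in the text (safety-critical devices, technologies with distributed effects, questions about health outcomes, and prototypes that can be refined with users).

```python
from dataclasses import dataclass
from enum import Enum


class Approach(Enum):
    """Hypothetical labels for the evaluation approaches discussed in the text."""
    RCT = "randomised controlled trial"
    HFE = "human factors engineering"
    COMBINED = "human factors engineering development plus randomised controlled trial"


@dataclass(frozen=True)
class Technology:
    """Assumed description of a technology and its key evaluation question."""
    name: str
    safety_critical: bool = False          # e.g. infusion devices
    distributed_effects: bool = False      # many small, diffuse effects, e.g. electronic health records
    health_outcome_question: bool = False  # the key question is impact on health outcomes
    refinable_with_users: bool = False     # can be tested and refined with users in real-world settings


def suggest_approach(tech: Technology) -> Approach:
    """Return one plausible approach; the rule order is an assumption, not the authors' tree."""
    if tech.safety_critical:
        # Upfront development with users, then extensive effectiveness testing.
        return Approach.COMBINED
    if tech.distributed_effects:
        # Effects are hard to trace and controls are hard to establish.
        return Approach.HFE
    if tech.health_outcome_question:
        return Approach.RCT
    if tech.refinable_with_users:
        return Approach.HFE
    # Assumed conservative default when none of the rules applies.
    return Approach.COMBINED


if __name__ == "__main__":
    examples = [
        Technology("infusion device", safety_critical=True),
        Technology("electronic health record", distributed_effects=True),
        Technology("activity tracker", refinable_with_users=True),
    ]
    for tech in examples:
        print(f"{tech.name}: {suggest_approach(tech).value}")
```

A real decision aid would need the consensus among evaluators called for above, and would probably weigh stage of development and cost as well; the sketch only shows how the stated criteria could be turned into explicit, inspectable rules.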

Conclusions

Traditional randomised controlled trial-based evaluation paradigms, although suitable for determining effects on health outcomes, are not appropriate for every type of health information technology or every stage of development. Human factors engineering-based evaluation approaches can help to address these shortcomings, particularly by supporting the design of systems that fulfil user needs and by involving users throughout the development process.