Introduction

Jerome Cornfield (1912–1979) was a man of philosophical bent, engaging wit, deep thought, great mathematical talent, and formidable skill in written and oral debate.1–7 He had extensive influence on biostatistics and medical research in the USA in the middle of the 20th century.

Cornfield was never awarded a university degree beyond a Bachelor of Science. Indeed, in the words of one distinguished colleague, ‘he represented a mockery of excessive adherence to traditional qualifications’. 8 Nevertheless, Cornfield was elected president of the American Statistical Association, the American Epidemiologic Society and the International Biometric Society’s Eastern North American Region, and he was a fellow of the Institute of Mathematical Statistics, the American Statistical Association and the American Association for the Advancement of Science.9,10

While at the National Cancer Institute in the 1950s, Cornfield developed statistical methods for laboratory studies and epidemiologic investigations. His writing on the nature of causation and on evidence that may be used to buttress an inference of cause-and-effect arose from his involvement in one of the great public health controversies of his time: lung cancer in relation to cigarette smoking.1,11

Among his many contributions to epidemiology, Cornfield defended case–control studies as an appropriate method to assess the potential effects of an exposure on the risk of disease; developed the odds ratio, based on a case–control study, as an approximation to the corresponding estimate of relative risk based on a cohort study; and developed the rationale for the use of relative risk, as opposed to absolute risk, in studies of disease etiology. He also gave a persuasive rationale for the use of observational studies in scientific inference. 2

Shortly after his death, the scope of Cornfield’s wide-ranging research activities was surveyed in a series of articles written by persons who knew him well. They discussed his contributions to laboratory research,12 epidemiologic studies,13 statistical theory14 and clinical trials.15,16

Cornfield and clinical trials

Despite the ambiguities involved in design, in decision making and in conclusion reaching, it is undeniable that the clinical trial has constituted an important contribution to medicine. … [this] surely can be explained as simply a further triumph of experimental method as applied to clinical medicine. As such, it would not have surprised even Claude Bernard, and only the statistical participation would have puzzled him.6(p 420)

Cornfield’s skepticism about the role of statistical methods in decision-making and scientific inference had been reinforced by his recent experience with the interim monitoring of several large-scale, multi-centre clinical trials. His reservations, however, had been voiced much earlier in a more general context in 1959, in his carefully reasoned, thought-provoking overview of research methodology: ‘Principles of research’. 2

Cornfield had long advocated that clinical trials be randomised whenever feasible and ethical. He also urged that individuals be randomised if possible, rather than larger units, such as clinics or hospitals. He had stated the frequentist rationale for randomisation in 1959:

The device of randomization did two things. First, it controlled the probability that the treated and the control group differed by more than a calculable amount in their exposure to disease, in their immune history, or with respect to any other variable, known or unknown to the experimenters, which might have a bearing on the outcome of their trial. Furthermore, as the size of the two groups being compared increased, it assured that the probability that they differ by more than this amount approached zero. The second thing that randomization made possible was an objective answer to the question that must be asked at the conclusion of any trial: In how many experiments could a difference of this magnitude have arisen by chance alone if the treatment truly has no effect?2(p 245)

One of the finest fruits of the Fisherian revolution was the idea of randomization, and statisticians who agree on few other things have at least agreed on this. But despite this agreement and despite the widespread use of randomized allocation procedures in clinical and in other forms of experimentation, its logical status, i.e. the exact function it performs, is still obscure. Does it provide the only basis by which a valid comparison can be achieved or is it simply an ad hoc device to achieve comparability between treatment groups?6(p 418)

Decision-making and appraisal of uncertainty in monitoring clinical trials

Cornfield’s early work in statistics reflected his training in frequentist methods, for which ‘probability’ is a measure of the relative frequency with which events occur in the natural world. This interpretation differs from that of Bayesians, for whom ‘probability’ is a measure of a person’s degree of belief in a proposition, such as ‘the risk of lung cancer is increased 20-fold in persons who smoke 2 packs of cigarettes per day’. Both conceptions of probability have a long history of use and application,19,20 and both conform to the same axioms and theorems of probability theory. In addition to what is meant by probability, the major difference in the Bayesian approach is that it imposes two requirements: first, one must specify a probability distribution (of belief) for values of the parameters involved in a statistical model to be used for an analysis; and second, when the study data become available, one must apply Bayes’ theorem to update the prior probability distribution. This second requirement, called

In the mid-1960s, Cornfield repudiated frequentist methods for monitoring clinical trials and expressed his conversion to a then radical point of view, namely, that tests of statistical significance and

Anscombe’s carefully reasoned, trenchant criticism of sequential analysis and Neyman-Pearson theory of hypothesis tests was so important that senior statisticians at the US National Institutes of Health held ‘an informal seminar’ about the issue in June 1965. 36 On that occasion, Cornfield argued for a Bayesian approach to monitoring clinical trials. Although he subsequently continued to use the frequentist concepts of ‘power’ and ‘level of statistical significance’ to plan clinical trials, 37 Cornfield objected to their relevance in the interim analyses of a study’s emerging data, and by implication in the final analysis as well.

Cornfield and the University Group Diabetes Program

The University Group Diabetes Program saga

The UGDP was meant to be a model of clinical investigation. Planned and managed by a team of statisticians and clinicians, the study addressed a series of long-standing controversies over the clinical management of diabetes. Intended to demonstrate how a properly designed, randomized, controlled trial could resolve differences of clinical opinion, the UGDP instead became a symbol of all that was wrong with the statistical enterprise in medicine. Few recent controversies in medicine are comparable in length and rancor to that over the UGDP.38(p 198)

Because of the importance of the University Group Diabetes Program to the development of modern-day clinical trials,38,44,45 I use that study to explain Cornfield’s Bayesian method – relative betting odds – which was used as one of three statistical techniques to support the decision to discontinue tolbutamide. The University Group Diabetes Program also provides an instructive instance in which Cornfield’s formidable skills in rhetoric, statistical analysis and logical thinking are clearly displayed in undermining the arguments of a study’s critics.4

It additionally provides a striking example in which Cornfield’s Bayesian rationale for avoiding the use of

Cornfield was not involved in the University Group Diabetes Program’s design, and he was not part of the research team initially. Nearly six years after the first patient had been enrolled and the study was fully in progress, his advice was sought by Christian Klimt, the head of the University Group Diabetes Program’s Statistical Center. At that time, Klimt was vexed by a major problem: informal, interim analyses indicated that more cardiovascular deaths were occurring in patients treated with tolbutamide than with placebo – a completely unexpected, inexplicable finding. Bayesian analyses by Cornfield and computer simulations for frequentist-based analyses were therefore developed to assess the unfavourable trend, which also indicated that overall mortality on tolbutamide was no better than that on placebo and was possibly worse. On the basis of these analyses, Cornfield advocated that treatment with tolbutamide be stopped, and he remained the major defender of the statistical basis of that recommendation.4,46,47

With Bayesian and frequentist analyses in hand, a two-day meeting of the University Group Diabetes Program’s Executive Steering Committee voted in June 1969 to drop tolbutamide as a study treatment, and duly informed the US Food and Drug Administration of their decision. Despite skepticism by some reviewers in the agency and by its external advisors, the US Food and Drug Administration announced its intention in May 1970 to place a warning of increased cardiovascular hazard on the label for tolbutamide and all chemically related agents (sulfonylurea drugs). This was done before any University Group Diabetes Program publication, and it set in motion a long-ensuing clinical and scientific controversy.

Within a few months of the University Group Diabetes Program’s first two publications,39,40 several articles highly critical of the study’s design, implementation and statistical analyses appeared in the medical literature.48–51 Those articles were followed shortly thereafter by further criticism of the University Group Diabetes Program,52,53 which continued throughout the 1970s.54–56

Cornfield was a representative of the University Group Diabetes Program in discussions with the US Food and Drug Administration, and he testified before a US Senate subcommittee in a hearing held over three days in September 1974 about the controversy.46,57 Amazingly, on the last day of that hearing, Cornfield was allowed to cross-examine an articulate, forceful opponent of the University Group Diabetes Program, Holbrooke Seltzer, who was Professor of Internal Medicine at the University of Texas and a member of the Board of Directors of the American Diabetes Association.47,58 Furthermore, at the request of Senator Gaylord Nelson, Chairman of the Subcommittee on Monopoly, Cornfield wrote replies to numerous interrogatories proffered by the attorney representing a group of diabetologists, including Seltzer, who opposed the actions of the US Food and Drug Administration and challenged the validity of the University Group Diabetes Program’s findings. 59 Those challenges, which unsuccessfully sought to obtain the University Group Diabetes Program data, were ultimately decided by the US Supreme Court. 60

The application of Cornfield’s ‘relative betting odds’

Although the University Group Diabetes Program was planned and monitored with frequentist methods, it became the first clinical trial in which a Bayesian analysis was used. The analysis rested on what Cornfield called ‘relative betting odds’, a Bayesian method for testing hypotheses.27,29,33 The term ‘betting odds’ refers to an index of belief in a hypothesis, here the null hypothesis H: the risk of cardiovascular death on tolbutamide is identical to that on placebo.

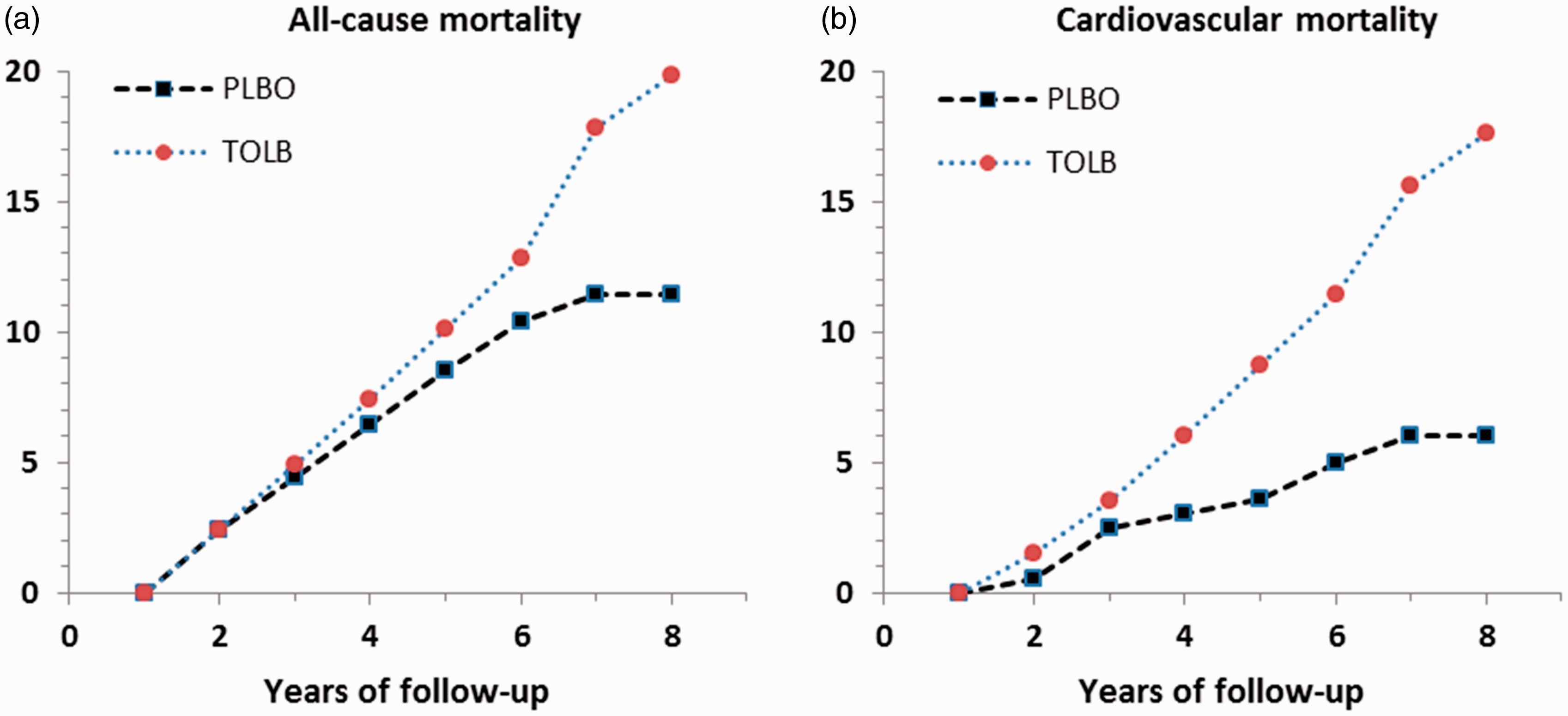

In Cornfield’s application to the University Group Diabetes Program, betting odds on H were often expressed relative to a specific alternative hypothesis (HA), HA: compared to placebo, the risk of cardiovascular death on tolbutamide is increased by 25%. Cumulative mortality rates per 100 persons at risk, by year of follow-up.

40

TOLB: tolbutamide; PLBO: placebo.

In the 204 patients on tolbutamide, there were 30 deaths in total by the eighth year of follow-up, 26 of which were attributed to cardiovascular causes. By comparison, there were only 21 deaths in the 205 patients on placebo, 10 of which were attributed to cardiovascular causes. The life-table estimate of the cumulative risk of cardiovascular death (intent-to-treat analysis) was 17.6 (standard error 2.5) per 100 on tolbutamide versus 6.0 (standard error 2.5) per 100 on placebo.

In view of such data, a person’s ‘posterior odds’ in favour of H being true, i.e. one’s belief that ‘the risk of cardiovascular death on tolbutamide is identical to that on placebo’, should be smaller than his ‘prior odds’. The amount of decrease is given by Bayes’ theorem. That amount is what Cornfield called the ‘relative betting odds’. It expresses the degree to which the acquisition of data should change one’s ‘prior odds’ to ‘posterior odds’. Expressed schematically:61

posterior odds of H = relative betting odds × prior odds of H.

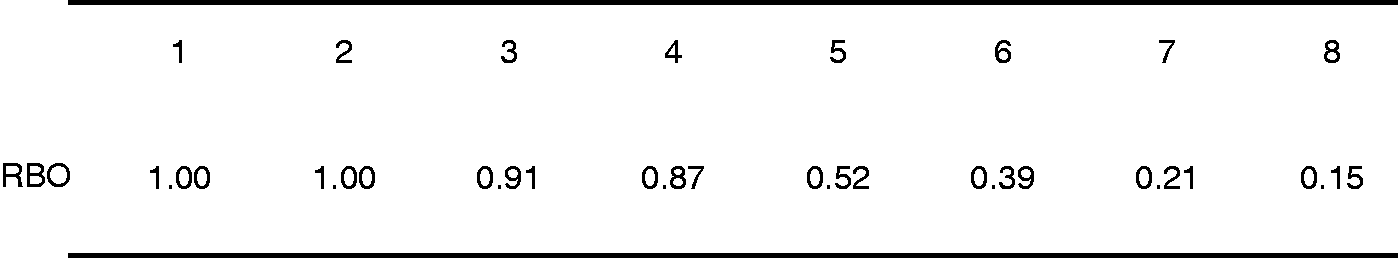

Figure 2 displays some of the University Group Diabetes Program’s many reported values of relative betting odds.

40

The alternative hypothesis (HA) in Figure 2 is that ‘the cumulative risk of cardiovascular death is increased by 25% over the cumulative risk on placebo’.

Relative betting odds for the difference in cumulative cardiovascular mortality (tolbutamide – placebo), by year of follow-up.

One sees from Figure 2 that the University Group Diabetes Program’s accumulating data led to progressively decreasing values of the relative betting odds. By the 8th year of follow-up, the relative betting odds were 0.15. In other words, at that time a person’s prior odds in favour of H, i.e. that ‘the risk of cardiovascular death on tolbutamide is identical to that on placebo’, should have been diminished by 85%.
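The arithmetic behind that 85% reduction can be sketched in a few lines of Python. This is only an illustration of the schematic relation "posterior odds of H = relative betting odds × prior odds of H", using the year-8 value of 0.15 quoted above. The function names and the prior odds in the loop are hypothetical choices made for the example and do not come from the University Group Diabetes Program.

```python
def posterior_odds(prior_odds, relative_betting_odds):
    """Posterior odds of H = relative betting odds x prior odds of H."""
    if prior_odds < 0 or relative_betting_odds < 0:
        raise ValueError("odds must be non-negative")
    return relative_betting_odds * prior_odds


def odds_to_probability(odds):
    """Convert odds in favour of H to the corresponding probability of H."""
    return odds / (1.0 + odds)


RBO_YEAR_8 = 0.15  # value quoted in the text for the 8th year of follow-up

# Hypothetical prior odds in favour of H (illustrative, not from the study).
for prior in (0.5, 1.0, 4.0, 9.0):
    post = posterior_odds(prior, RBO_YEAR_8)
    print(
        f"prior odds {prior:4.1f} (P = {odds_to_probability(prior):.2f}) -> "
        f"posterior odds {post:5.2f} (P = {odds_to_probability(post):.2f})"
    )
```

Whatever the prior odds, the posterior odds are 15% of them. The 85% reduction applies to the odds, not to the probability: someone who began with odds of 9 to 1 in favour of H (probability 0.90) would end with odds of 1.35 to 1 (probability of about 0.57).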

Relative betting odds depend not only on the data but also on the degree of belief in the alternative hypotheses against which the null hypothesis is tested.27,62,63 Further complications arise in calculating relative betting odds for ‘composite hypotheses’, such as ‘the risk of cardiovascular death on tolbutamide is within ±5% of the risk on placebo’, or ‘the risk of cardiovascular death on tolbutamide is at least 25% greater than the risk on placebo’.29,33
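The dependence on the alternative hypothesis can be illustrated with a deliberately simplified calculation. The sketch below is not Cornfield's method and is not expected to reproduce the values in Figure 2. It assumes a plain binomial model for the 26 cardiovascular deaths among 204 tolbutamide patients, treats the placebo risk as known and equal to the crude proportion 10/205, and compares H with a single point alternative in which that risk is multiplied by a chosen ratio. The function names and the list of risk ratios are hypothetical.

```python
import math


def log_binomial_kernel(deaths, n, risk):
    """Log-likelihood of `deaths` out of `n`, omitting the binomial
    coefficient (it cancels in any likelihood ratio)."""
    return deaths * math.log(risk) + (n - deaths) * math.log(1.0 - risk)


def likelihood_ratio(deaths, n, null_risk, risk_ratio):
    """Likelihood of the data under H (risk = null_risk) divided by the
    likelihood under a point alternative (risk = null_risk * risk_ratio)."""
    alt_risk = null_risk * risk_ratio
    if not 0.0 < alt_risk < 1.0:
        raise ValueError("alternative risk must lie strictly between 0 and 1")
    log_ratio = (log_binomial_kernel(deaths, n, null_risk)
                 - log_binomial_kernel(deaths, n, alt_risk))
    return math.exp(log_ratio)


# Counts quoted in the text; the placebo risk is ASSUMED known (crude value).
TOLB_CV_DEATHS, TOLB_N = 26, 204
PLACEBO_RISK = 10 / 205

# Hypothetical point alternatives: risk increased by 25%, 50% and 100%.
for ratio in (1.25, 1.5, 2.0):
    lr = likelihood_ratio(TOLB_CV_DEATHS, TOLB_N, PLACEBO_RISK, ratio)
    print(f"alternative risk ratio {ratio:4.2f}: likelihood ratio H/HA = {lr:.3g}")
```

Under these assumptions the same data yield very different ratios for different alternatives, which is the sensitivity the paragraph above describes. A fuller treatment would also have to account for uncertainty in the placebo risk and for the composite hypotheses mentioned in the text.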

Cornfield’s defence of the University Group Diabetes Program

… an investigation originally designed to produce new knowledge, suddenly found itself involved in a difficult and unwanted task of decision making. From the purely formal hypothesis testing point of view that dominated the early thinking in clinical trials, what had happened was that the same body of data had been used to formulate a hypothesis and to test it. From that point of view the University Group Diabetes Program results should have been treated as suggesting a hypothesis to be tested in a new and independent trial. But to the investigators this was inappropriate. They had to decide for themselves and their patients whether the evidence available to them justified the future exposure of anyone to these agents … 6(p 409)

… received by some critics with a hostility which has no discernible scientific basis. … The subsequent analysis is undertaken to illuminate these alternatives [i.e., independent repetition of the UGDP vs. acceptance of its findings] and not to defend the UGDP. Its concentration on the strength of the evidence against tolbutamide should of course not be permitted to obscure the more general UGDP finding that lowering of blood glucose level did not appreciably lower the eight-year mortality from cardiovascular disease as compared with patients on diet alone.4(p 1676)

Cornfield also rebutted a number of clinical concerns, including those expressed about the eligibility criteria for study patients, the use of a fixed dose of tolbutamide, the determination of the principal cause of death, the definitions of baseline risk factors, the failure to obtain data on patients’ smoking histories, and the decision to stop treatment with tolbutamide before a more conclusive demonstration was available for its apparently harmful effect.

Despite Cornfield’s advocacy of Bayesian methods in clinical trials and the use of his relative betting odds to support the University Group Diabetes Program’s decision to terminate treatment with tolbutamide, his rebuttal relied extensively on the very frequentist methods that he was arguing against:

What can one say about the discrepancy between Cornfield’s preaching against

As emphasised by Lindley,65(p 313) Bayesian methods are deficient in situations involving conflict, a circumstance that embroiled the University Group Diabetes Program both internally (some of the study investigators opposed the decision to stop treatment with tolbutamide) and externally (for various reasons many clinicians not involved in the University Group Diabetes Program did not believe its results). Although the University Group Diabetes Program was challenged by some critics because the study had stopped treatment with tolbutamide ‘too soon’, i.e. before more data on mortality were at hand, it is doubtful in retrospect whether more data from the same study would have ever settled the controversy.

Cornfield’s legacy

It is sometimes claimed, or at least implied, by frequentists that, in contrast to Bayesians, they seek rules which minimize the long-run frequency of errors of inference or decision. If Bayesian procedures really lacked this property, then it would be difficult to accept them or to make them the basis of any scientifically defensible system of data analysis or behavior. We shall here argue that no such contradiction exists.30(p 15)

Only a few of Cornfield’s articles on clinical trials are now cited in articles and texts. His evocative phrase ‘relative betting odds’, which so effectively conjures the image of a casino, has sadly been supplanted by the nondescript ‘Bayes factor’, a term coined by IJ Good.76–78 Despite Cornfield’s key contribution to the interpretation of the University Group Diabetes Program, the investigators did not use the term ‘relative betting odds’ in their publications, perhaps because of its connotation and the controversy in which they were involved. They did, however, use ‘RBO’, which was said to be an abbreviation for ‘likelihood ratio’, and they cited Cornfield’s article,29 but without mentioning that RBO was his acronym for ‘relative betting odds’.

Even if one subscribes to the use of Bayes’ rule for modifying prior belief, in practical applications such as the University Group Diabetes Program, making decisions and taking action will involve additional considerations: assessing the design of a study versus its implementation, and weighing costs, benefits and ethical concerns, all of which can be subject to widely differing opinions.79 With regard to ethics, Anscombe35 proposed a Bayesian method for deciding whether to continue recruitment to a clinical trial when accumulating data suggest that one of the treatments being compared is superior. That method failed to account for physicians’ patient-centred perspective,79,80 a viewpoint which Cornfield evidently shared (see quotation at the start of ‘Cornfield’s defence of the University Group Diabetes Program’ section).

Cornfield became a staunch advocate of Bayesian methods, but he was never an ideologue on their behalf. His thinking and writing about ‘the Bayesian outlook’ from 1966 forward was accompanied by his contemporaneous use of frequentist techniques,

If the above remarks are true, then why did Cornfield convert to and espouse Bayesian methods? One answer, offered by Cornfield, is that frequentist methods not only lead to logical conundrums, but they are also inflexible in practice, especially in the very instances where flexibility is scientifically most needed.28–30,36(pp 862–866, 877–881) In adopting that position, he was apparently willing to overlook problems with Bayesian theory,64(pp 167–176),81 arguing that the advantage to be gained by improved flexibility was not accompanied by any important loss from abandoning frequentist techniques.

Cornfield’s paper on recent methodological contributions to clinical trials was presented on 30 April 1976 at the Reed-Frost Symposium of the Johns Hopkins University. At the time Cornfield spoke, he did not realise that he would soon cross a threshold and pass into history. One year earlier, however, he had mused about the future:

… what is statistics and where is it going? When one tries, spider-like, to spin such a thread out of his viscera, he must say, first, according to Dean Acheson, ‘What do I know, or think I know, from my own experience and not by literary osmosis?’ An honest answer would be, ‘Not much; and I am not too sure of most of it’.5