Abstract

Background

The validity of unmonitored online exams has raised concerns about academic integrity and grade inflation, especially given the rise of artificial intelligence–powered tools.

Objective

This study evaluates the validity of unmonitored online exams by comparing student performance between two sections of an undergraduate personality psychology course: one section completed an unmonitored online multiple-choice final exam while the other completed an in-person multiple-choice final exam.

Method

A quasi-experimental design was used with two undergraduate personality psychology course sections. Section 1 (Spring 2022, n = 153) took an in-person final exam, while Section 2 (Spring 2023, n = 160) took an unmonitored online final exam. Both sections completed identical in-person exams throughout the semester.

Results

Online final exam scores were significantly higher than in-person final exam scores. The correlation between regular in-person exam scores and final exam scores was strong for the in-person final exam but weak for the online final exam. Exam format was a stronger predictor of final exam scores than prior performance.

Conclusion

Unmonitored online exams lead to inflated scores and may not reflect students’ true abilities.

Teaching Implications

Educators should reconsider using unmonitored online exams for high-stakes assessments and explore alternative methods or enhanced monitoring to maintain academic integrity.

Given the increasing reliance on online and hybrid forms of education, understanding the validity of online assessments is crucial for maintaining academic standards and ensuring fair evaluation of student performance (Bearman et al., 2023). The rapid move to online learning during the COVID-19 pandemic forced a marked shift toward online academic activities and assessments, and this shift has continued after the pandemic (Chan, 2022; Viberg et al., 2024; Wahid et al., 2021). Although unmonitored online exams offer flexibility, convenience, and accessibility, their unmonitored nature raises concerns about academic integrity and validity, and study results on these concerns are mixed (Chan & Ahn, 2023; Janke et al., 2021). Therefore, the present study provides a replication and extension of previous validity studies that have compared unmonitored online assessments to in-person assessments. Using a quasi-experimental multiwave design, we collected data from two course sections over two academic terms, with identical regular in-person exams and the same instructor but different formats for the respective final exam. This design differs from previous studies by providing multiple in-person baseline measurements and examining performance in the current technological context, particularly given the rise of artificial intelligence (AI) tools that were unavailable when earlier studies were conducted.

Debates on the Validity of Unmonitored Online Exams

The validity of unmonitored online exams remains a contentious issue, with mixed findings in the literature. Some research supports the validity of these exams. For example, Chan and Ahn (2023) conducted a comprehensive study of nearly 2,000 students across 18 courses at a large Midwestern university during Spring 2020, comparing student performance between in-person exams taken before the pandemic shutdown and unproctored online exams taken after. They found a robust correlation between online and in-person exam scores (r = 0.59), suggesting that unproctored online exams could serve as meaningful assessments. Yet, these data were collected in the spring of 2020, a unique historical moment when courses rapidly shifted from in-person to online in the middle of the semester. Sorenson and Hanson (2023) found similar results in their examination of in-person versus online chemistry exams, with no statistically significant differences in performance between formats. The nature of the assessment type may play a crucial role in these findings, as skill-based problem-solving questions may be less susceptible to academic dishonesty than factual recall questions.

Nevertheless, much scholarship of teaching and learning (SoTL) research paints a more skeptical picture of the validity of unmonitored online exams (cf. Newton, 2023). Critics argue that these assessments do not accurately reflect students’ true understanding of course material. Instead, they might primarily measure students’ ability to access and utilize external resources during an exam. This is supported by experimental evidence that compared in-person closed-book examinations to in-person open-book examinations, finding that open-book approaches resulted in worse exam performance, potentially due to different retrieval practices and/or motivation (Rummer et al., 2019). Although large systematic reviews have found mixed results regarding exam performance across formats, a robust finding is that closed-book approaches tend to elicit better information retention over time (Durning et al., 2016; Permzadian & Cho, 2023).

These mixed findings are particularly important to resolve, given that the landscape of unmonitored online testing has been fundamentally transformed by the rapid evolution of AI tools, particularly with the launch of ChatGPT in November 2022 (Huang, 2023). The present study addresses this evolving context by examining exam performance in more recent semesters (Spring 2022 and Spring 2023) using a quasi-experimental design comparing two large sections (n = 153, n = 160) of the same course taught by the same instructor with multiple in-person baseline measurements before the final exam.

To further complicate matters, academic dishonesty in unmonitored online exams has become a growing concern in recent years, with research consistently showing widespread cheating in online settings (Dendir & Maxwell, 2020; Janke et al., 2021; Pleasants et al., 2022). Moreover, Comas-Forgas et al. (2021) and Jenkins et al. (2023) reported a significant rise in cheating rates since the pandemic. In particular, the rise of AI introduces additional challenges for maintaining academic integrity in online exams, as large language models are increasingly accessible. Artificial intelligence–powered tools can generate answers, solve exam questions, and draft essays (Susnjak & McIntosh, 2024). Unlike traditional in-person cheating methods, help from AI-powered tools is uniquely difficult to detect. This misuse of AI technologies in unmonitored settings presents an additional layer of difficulty for educators striving to uphold the fundamental purpose of assessments, which is to accurately gauge individual performance.

The widespread adoption of online education, accelerated by the COVID-19 pandemic, has made it imperative to address concerns surrounding the validity and integrity of online assessments. As more institutions rely on online and hybrid formats, ensuring that these assessments accurately reflect student learning is critical for maintaining academic standards. Given the rapid changes in technologies available for students completing exams online, it is important to provide a replication of earlier studies examining the relations between in-person and online exam performance.

Overview

The present study provides a replication and extension of previous validity studies that have compared unmonitored online assessments to in-person assessments. Using a quasi-experimental multiwave design, we collected data from two course sections over two academic terms, with identical regular in-person exams and the same instructor but different formats for the respective final exam.

In examining the validity of unmonitored online exams, we asked two research questions.

Research Question 1: Do correlations between online and in-person exam scores resemble correlations among in-person exam scores? If unmonitored online exams are a valid assessment format, then correlations between online and in-person exam scores should be similar to those among in-person exam scores.

Research Question 2: Which is a stronger predictor of final exam score: exam format or previous performance? If unmonitored online exams are a valid assessment format, then students’ previous performance on in-person exams should be more predictive of their final exam score than exam format (i.e., in-person vs. online).

Method

Participants

The participants were undergraduate students enrolled in a personality psychology course at a large Midwestern university. The course is required for psychology majors but may serve as an elective for other majors. The only prerequisite is an introductory psychology course. Demographic data were not collected during either semester; however, to provide context for the present research, demographic data were subsequently collected in another section of the same course in Spring 2024 (71% response rate) as part of an unrelated Institutional Review Board (IRB)-approved SoTL study. In that section, the distributions were as follows: Gender, Woman = 82%, Man = 18%; Race/Ethnicity, White = 70%, Hispanic/Latino/a = 15%, African American/Black = 9%, Asian American or Pacific Islander = 3%, Multiracial = 2%, American Indian, Alaskan Native or Hawaiian Native = 1%; Year, Junior = 53%, Senior = 27%, Sophomore = 17%, Freshman = 2%; Age M (SD) = 20.67 (1.28); and self-reported GPA M (SD) = 3.42 (0.47). The sample was predominantly women (82%), reflecting a typical gender distribution of undergraduate psychology courses at our institution and similar to distributions reported in other psychology education research (National Science Foundation, National Center for Science and Engineering Statistics, 2018).

Materials

The required course textbook was “The Personality Puzzle” (8th Edition; Funder, 2019), and additional selected readings were provided through the learning management system (LMS) when applicable. Both class sections completed identical multiple-choice regular in-person exams and an identical multiple-choice final exam. Each regular in-person exam was 75 min long and contained 50 multiple-choice questions plus 2–3 extra credit questions; students recorded their answers in pencil on OpScan sheets. The highest possible scores for the three regular exams were 104%, 106%, and 106%, respectively. The regular semester exams primarily covered new material but included some prior content to help prepare students for the final exam, which was cumulative.

The final exam contained 50 multiple-choice questions and four extra credit questions, for a highest possible score of 108%, with a time limit of 120 min in both the in-person and online formats. The in-person final exam was completed during the course's scheduled final exam time. The online final exam could be started at any point during a four-day availability window at a location of the student's choosing; once started, it had to be completed within 120 min. The online final exam was administered through a university-adapted version of Sakai LMS, which enforced the time limit.

Procedure

This study employed a quasi-experimental multiwave design to investigate the validity of unmonitored online exams as academic assessments. The primary focus was to compare students’ performance on unmonitored online final exams with their performance on previous in-person exams within the same course. The study was conducted across two sections of a personality psychology course that were taught using the same textbook and content. The same instructor taught both sections to ensure consistency in teaching methods and exam content. The instructor, also the first author of this study, was aware of the research being conducted. To minimize potential bias, the instructor followed standardized procedures across both sections, including identical teaching methods, lecture materials, assignments, and exams. Both sections were taught Tuesdays and Thursdays from 2:00 p.m. to 3:15 p.m. Three regular multiple-choice exams, each with 2–3 extra credit questions, were administered in person in both sections. The final multiple-choice exam formats differed between the two sections, with Section 1 completing the final exam in person and Section 2 completing the exam online:

Section 1 (Spring 2022, n = 153): three regular in-person exams and one in-person final exam.

Section 2 (Spring 2023, n = 160): three regular in-person exams and one online final exam.

Regular in-person exams were proctored in a controlled, in-person environment for both sections during regular class times. The final exam for Section 1, the in-person final exam, was also proctored during a scheduled two-hour time block. The final exam for Section 2, the online final exam, was made available on the LMS for four days, allowing students to start and complete the exam at their convenience within this timeframe. Students were allowed 120 min to complete the online final exam, with the time limit automatically enforced by the LMS. The online exam was open-book and open-note, but students were instructed not to collaborate or use unauthorized online resources. The syllabi for both sections described the exam formats as planned at the start of each semester: all exams, including the final exam, would be in-person, closed-book, and administered during class time or the scheduled final exam period. However, to give students more flexibility, the final exam in Spring 2023 was ultimately administered online. This decision was communicated to students before the exam period.

Student performance data were collected for all three regular in-person exams and the final exam in both sections, with scores for each exam recorded as percentages to facilitate comparative analysis. The university's IRB reviewed and approved study procedures. Informed consent was not obtained from participants in this exempt research study because data were deidentified, demographic data were not collected, and it would be nearly impossible to contact previous students for consent as they may no longer be affiliated with the university.

Results

Descriptive Statistics

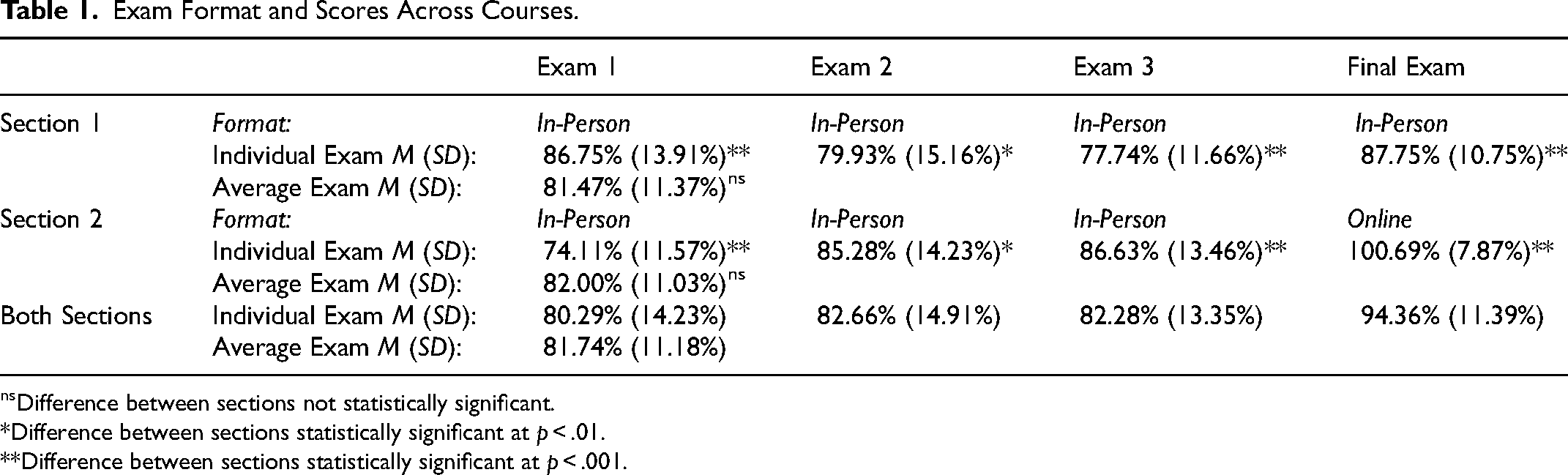

Table 1 shows scores on each of the three regular in-person exams varied across course sections. Yet, when averaged together, regular in-person exam scores did not vary across course sections (Mdiff = ‒0.53%, SE = 1.27%, 95% CI = [‒3.02%, 1.96%]). Section 1's regular in-person exams averaged 81.47% (SD = 11.37%, Range of scores = 40.00%, 114.00%), and Section 2's regular in-person exams averaged 82.00% (SD = 11.03%, Range of scores = 38.00%, 110.00%). However, there was a notable difference in the final exam scores between the two sections (Mdiff = ‒12.94%, SE = 1.06%, 95% CI = [‒15.03%, ‒10.85%]). Section 1's in-person final exam had a mean score of 87.75% (SD = 10.75%, Range = 52.00%, 108.00%). In contrast, Section 2's unmonitored online final exam had a mean score of 100.69% (SD = 7.87%, Range = 38.00%, 108.00%).
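As a rough check on the final exam difference reported above, the following sketch recomputes the standard error and an approximate 95% CI from the summary statistics in this section. It assumes a pooled-variance standard error and a normal critical value; the text does not state which method was used, and the function name `mean_diff_ci` is illustrative only.

```python
import math
from statistics import NormalDist


def mean_diff_ci(m1, sd1, n1, m2, sd2, n2, level=0.95):
    """Difference in means (group 1 minus group 2) with a pooled SE and an approximate CI."""
    diff = m1 - m2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2)
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    # Assumption: normal critical value (about 1.96) rather than the t quantile.
    crit = NormalDist().inv_cdf(0.5 + level / 2)
    return diff, se, (diff - crit * se, diff + crit * se)


# Final exam summary statistics reported in the text (percent scores).
diff, se, (lo, hi) = mean_diff_ci(87.75, 10.75, 153, 100.69, 7.87, 160)
print(f"Mdiff = {diff:.2f}, SE = {se:.2f}, 95% CI = [{lo:.2f}, {hi:.2f}]")
```

Under these assumptions the difference and standard error agree with the reported values after rounding, and the interval bounds land within a few hundredths of a percentage point of the reported interval; the small gap is expected from using a normal rather than a t critical value.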

Table 1. Exam Format and Scores Across Courses.

Difference between sections not statistically significant.

*Difference between sections statistically significant at p < .01.

**Difference between sections statistically significant at p < .001.

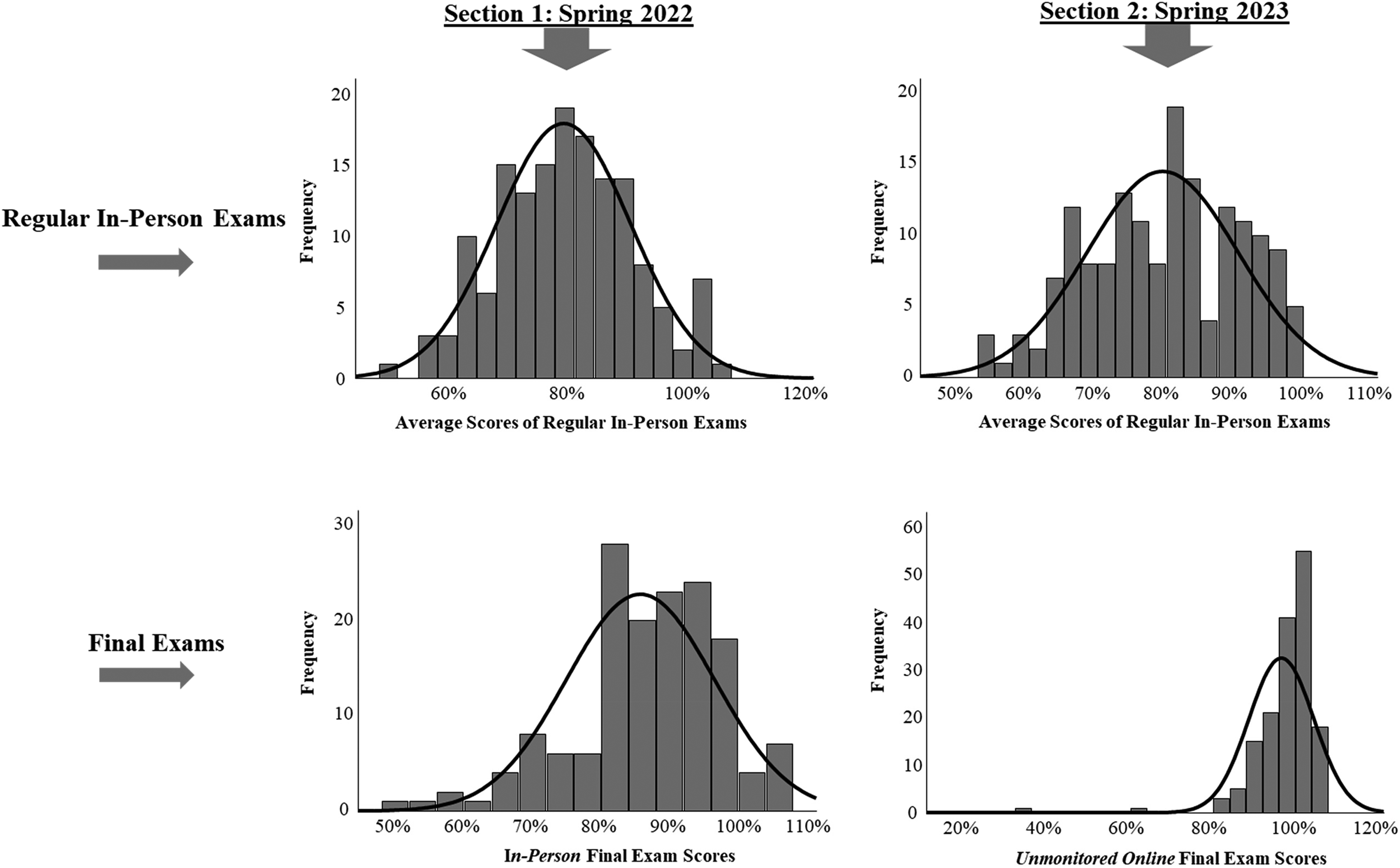

We also examined histograms for differences between the two course sections. As seen in Figure 1, the histograms for all regular in-person exams show approximately normal distributions of scores, with most students scoring around 80%, suggesting that in-person exams were completed under more consistent testing conditions and reflected a more balanced difficulty level. Importantly, this is also true for the histogram of the in-person final exam (bottom left histogram), which was used as a comparison for the unmonitored online exam. In contrast, the histogram for the unmonitored online exam shows a skewed distribution, with the vast majority of students scoring near or above 100%, suggesting inconsistent testing conditions and inflated scores with little variability.

Figure 1. Histograms of exam score distributions across course sections.

Main Analyses

We conducted correlation and relative weight analyses to investigate our primary research questions, which centered around examining the validity of unmonitored online final exams.

Correlation Analysis

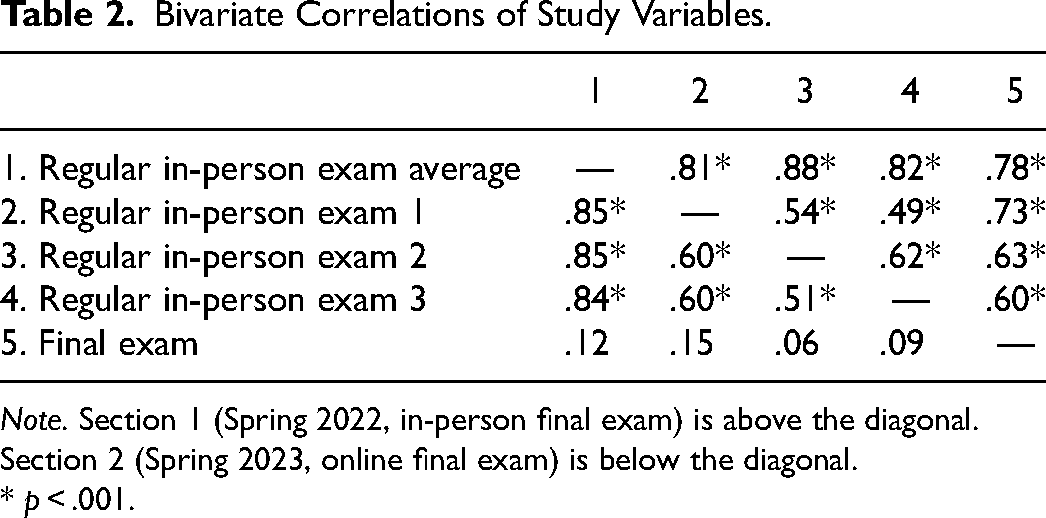

First, we examined Research Question 1, which asked whether correlations between online and in-person exam scores resemble correlations among in-person exam scores. To answer this, we calculated Pearson correlation coefficients between students’ scores on the regular in-person exams and their final exam scores. As seen in Table 2, the two sections showed vastly different patterns of correlations. For Section 1, correlations between the regular in-person exams and the in-person final exam were statistically significant and ranged from .60 to .72, and the correlation between the average of the regular in-person exams and the in-person final exam was .78. This indicates a robust, consistent relation between prior performance and final exam scores. In contrast, for Section 2, the correlations between the regular in-person exams and the unmonitored online final exam ranged from .06 to .15, and the correlation between the average of the regular in-person exams and the online final exam was .12. This indicates that online final exam scores were only weakly related to students’ previous performance. Additionally, a z-test comparing the independent correlations between the in-person exam average and the final exam in each section indicated a statistically significant difference between the two correlation coefficients (Section 1 in-person final, r = .78, vs. Section 2 online final, r = .12), z = 8.10, p < .001. A post hoc power analysis indicated that this analysis had sufficient power (1 − β = .99), with a critical z value of 3.29.
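The comparison of the two independent correlations can be sketched with the standard Fisher r-to-z test. The text does not name the exact procedure, so this is one common approach rather than a confirmed reproduction of the analysis, and the function name is illustrative. The inputs are the correlations and section sizes reported above.

```python
import math


def compare_independent_correlations(r1, n1, r2, n2):
    """Fisher r-to-z test for the difference between two independent correlations."""
    # Fisher transformation makes the sampling distribution approximately normal.
    z1, z2 = math.atanh(r1), math.atanh(r2)
    # Standard error of the difference between two transformed correlations.
    se = math.sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    z = (z1 - z2) / se
    # Two-sided p value from the standard normal distribution.
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))
    return z, p_two_sided


# Section 1: r = .78 (n = 153); Section 2: r = .12 (n = 160).
z, p = compare_independent_correlations(0.78, 153, 0.12, 160)
print(f"z = {z:.2f}, p = {p:.3g}")
```

With these inputs the statistic rounds to the z value reported in the text, which suggests the Fisher approach is at least consistent with the analysis described.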

Table 2. Bivariate Correlations of Study Variables.

Note. Section 1 (Spring 2022, in-person final exam) is above the diagonal. Section 2 (Spring 2023, online final exam) is below the diagonal.

* p < .001.

Relative Weights Analysis

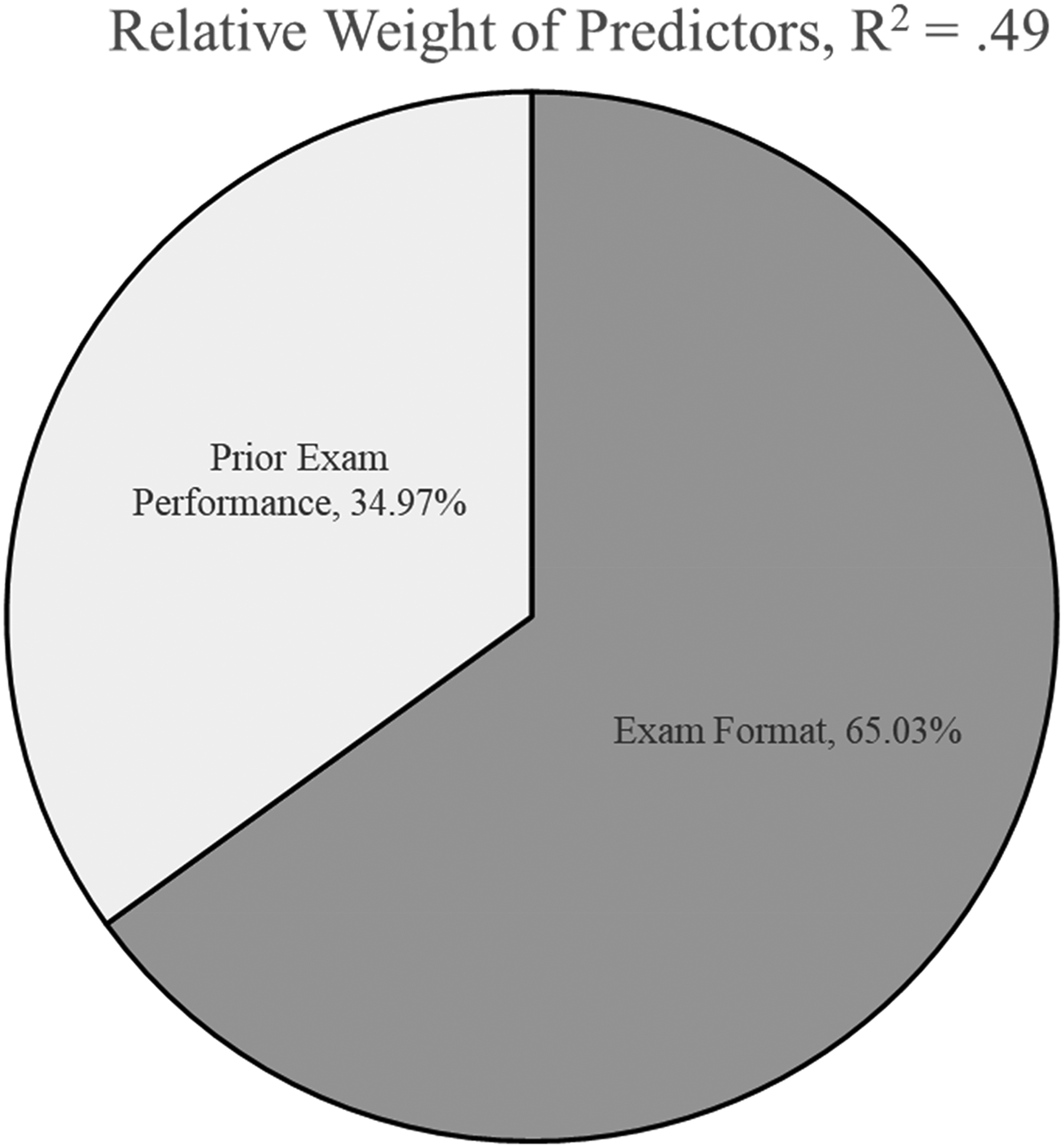

Second, we examined Research Question 2, which asked whether exam format or previous performance was the stronger predictor of final exam score. To answer this question, we determined the relative importance of exam format and previous in-person exam performance as predictors of final exam scores. We conducted a relative weights analysis using the web-based tool by Tonidandel and LeBreton (2015) in a dataset that combined both course sections. Relative weight analysis estimates the percentage of variance in the criterion variable that can be attributed to each predictor by transforming the predictors into a set of variables that closely approximate the original predictors but are uncorrelated with one another (Barni, 2015). The analysis estimates a raw importance weight for each variable, which reflects the relative amount of variance that a predictor shares with the criterion variable; when summed, these weights equal the R². Finally, a rescaled importance weight is created by dividing each raw importance weight by the R² and multiplying it by 100. This rescaled importance weight can be interpreted as the percentage of the explained variance in the criterion variable attributed to a particular predictor. When summed, these percentages equal 100%. In this analysis, the criterion variable was the final exam score. The predictor variables were the class section (indicating exam format) and the average of all three regular in-person exam scores. Prior performance and exam format together accounted for nearly half of the variance in final exam scores, R² = .49, p < .001.
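To illustrate how raw and rescaled weights relate to R², here is a minimal sketch of a relative weights calculation for the two-predictor case, worked from correlations alone. It is not the Tonidandel and LeBreton tool, it omits that tool's bootstrapped confidence intervals, and the input correlations are hypothetical values chosen for illustration, not the study's data.

```python
import math


def relative_weights_two_predictors(r12, ry1, ry2):
    """Relative weights for two predictors, computed from correlations.

    r12: correlation between the two predictors
    ry1, ry2: correlation of each predictor with the criterion
    """
    # Symmetric square root of the predictor correlation matrix [[1, r12], [r12, 1]].
    # It links the original predictors to their closest uncorrelated counterparts.
    s_plus, s_minus = math.sqrt(1 + r12), math.sqrt(1 - r12)
    a = (s_plus + s_minus) / 2  # diagonal entries
    b = (s_plus - s_minus) / 2  # off-diagonal entries
    det = a * a - b * b

    # Standardized coefficients from regressing the criterion on the uncorrelated variables.
    beta1 = (a * ry1 - b * ry2) / det
    beta2 = (a * ry2 - b * ry1) / det

    # Raw weights: each predictor's share of explained variance; they sum to R^2.
    raw = [a * a * beta1**2 + b * b * beta2**2,
           b * b * beta1**2 + a * a * beta2**2]
    r_squared = sum(raw)
    # Rescaled weights: percentage of the explained variance; they sum to 100.
    rescaled = [100 * w / r_squared for w in raw]
    return raw, rescaled, r_squared


# Hypothetical correlations for illustration only (not the study's data).
raw, rescaled, r_squared = relative_weights_two_predictors(r12=0.10, ry1=0.50, ry2=0.40)
print([round(w, 3) for w in raw], [round(p, 1) for p in rescaled], round(r_squared, 3))
```

The raw weights sum to the same R² that an ordinary two-predictor regression on these correlations would give, which is the property that lets the rescaled weights be read as shares of explained variance.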

As shown in Figure 2, the results of the relative weight analysis indicated that exam format was nearly twice as strong a predictor of final exam scores as previous performance. Specifically, exam format had a rescaled relative weight of 65.03%, meaning that the format accounted for nearly two-thirds of the explained variance in final exam scores. In contrast, previous in-person exam performance had a rescaled relative weight of 34.97%.

Figure 2. Results of relative weight analysis.

Discussion

The present study aimed to evaluate the validity of unmonitored online exams by comparing student performance on unmonitored online final exams with their prior performance on regular in-person exams in a quasi-experimental multiwave study design. The findings indicate a significant discrepancy between the in-person and unmonitored online exam formats, casting doubt on the validity of unmonitored online assessments. Students who took the unmonitored online final exam scored significantly higher (p < .001) than those who took the in-person final exam, with a substantial mean difference of 12.94%. In addition to being inflated, scores on the unmonitored online exam had a substantially restricted distribution compared to in-person exams. Importantly, the correlation between regular in-person exams and the final exam was strong for the class that took an in-person final exam but weak for the class that took an unmonitored online final exam. Finally, the relative weight analysis demonstrated that exam format was a stronger predictor of final exam scores than previous performance.

Overall, the present study's results suggest that unmonitored online exams may not reliably reflect students’ true academic performance. The inflated scores and weakened correlations with prior performance imply that factors other than students’ understanding of the course material can influence exam scores in an unmonitored online format. This aligns with concerns raised in the literature about the potential for increased academic dishonesty in unmonitored online settings (Dendir & Maxwell, 2020; Janke et al., 2021; Pleasants et al., 2022). The widespread use of AI-powered tools presents a growing threat to academic integrity, as advanced technologies like large language models can seamlessly produce answers to complex problems and compose increasingly nuanced academic essays, significantly undermining efforts to prevent unauthorized assistance during exams (Golding et al., 2024; Slade et al., 2024; Susnjak & McIntosh, 2024). It is also difficult for educators to detect when students use AI-powered tools because AI-detection software may not be reliable or accurate (Sadasivan et al., in press). Compounding these issues, many universities do not have updated academic integrity policies that account for the use of AI-powered tools, making it even more difficult to hold students accountable for utilizing external tools during online exams (Perkins & Roe, 2024).

Our findings differ from prior research, including Chan and Ahn (2023) and Sorenson and Hanson (2023), which suggested that unmonitored online exams can serve as valid assessments. Unlike these studies, the present research design incorporated multiple baseline in-person exams, enabling a direct comparison of student performance across formats within a consistent course structure and instructional context. Furthermore, our study reflects a more recent academic landscape, marked by the availability of advanced AI tools, such as ChatGPT, which were not accessible during the periods examined in prior research. These tools present significant challenges to academic integrity in online exams, particularly for multiple-choice assessments that emphasize factual recall. In contrast, the problem-solving nature of exams in Sorenson and Hanson (2023) and some reported in Chan and Ahn (2023) may make those formats less susceptible to unauthorized online assistance (Swiecki et al., 2022). By addressing these distinctions, the present study adds to a more comprehensive understanding of the limitations of unmonitored online exams as assessments of student learning.

Implications

These findings have important implications for educators and administrators seeking to maintain the reliability and credibility of academic evaluations in an increasingly digital learning environment. The shift to online learning environments, accelerated by the COVID-19 pandemic, has transformed the landscape of higher education. This transition has brought both opportunities and challenges, particularly concerning the assessment of student learning. Online exams offer unparalleled flexibility and accessibility, enabling students to take assessments anywhere and anytime. Furthermore, many university students favor online exams over traditional paper-based exams, citing time savings, cost savings, and increased flexibility as key advantages (Butler-Henderson & Crawford, 2020). Yet, even though considerations such as reducing expenses for physical materials and processing resources may influence institutional decisions about assessment methods, educators must weigh these practical factors against the validity concerns identified in this study.

The present study's findings underscore the need for caution when utilizing unmonitored online exams for high-stakes assessments. The potential for inflated scores and compromised academic integrity suggests that unmonitored online exams may not accurately measure student learning. Educators might therefore consider alternative assessment methods that are less susceptible to cheating, such as open-book exams that emphasize application and analysis over rote memorization, frequent low-stakes quizzes, project-based assessments, or other creative approaches such as having students write and complete their own exams (Saucier et al., 2024). Incorporating proctoring technologies or supervised exam environments could also help mitigate cheating, although these solutions come with challenges, including privacy concerns and accessibility issues (Balash et al., 2021).

Limitations and Future Directions

This study has several limitations. First, the lack of demographic data for the specific cohorts limits the generalization of findings across different student populations, although contextual demographic information was provided from a subsequent semester. Second, the quasi-experimental design does not control for all potential confounding variables, such as differences in student motivation, study habits, or external stressors between semesters. Relatedly, the use of external support materials was not assessed, and students were not prohibited from utilizing their textbooks or notes, which introduces a salient confound. It is impossible to infer whether inflated scores were due to using authorized or unauthorized materials. Future research should systematically assess students’ use of various resources during online exams, perhaps through postexam surveys or other verification methods. Future research may also consider comparing exam completion times across formats. We could not analyze these times because in-person students left after finishing, and online timing data became inaccessible during our university's LMS. Understanding how students use allotted time could illuminate performance differences between formats.

Although demographic data from a subsequent section of this course (82% women) suggest a gender distribution typical of psychology majors at our institution and similar to other studies in this field, the lack of demographic data from our study sample limits our ability to examine potential demographic influences on exam performance differences. There is no theoretical or empirical basis to expect gender to systematically influence the relationship between in-person and online exam performance. Still, future research should collect and analyze demographic variables directly. Finally, the exams in the present study all utilized multiple-choice format, which precludes generalization to other exam formats such as essays, short-answer, fill-in-the-blank, or case studies. Future research could address these limitations by collecting comprehensive demographic data, employing a randomized controlled trial design, explicitly telling students they cannot utilize outside help when taking their online exams, and examining multiple exam formats other than multiple choice. Additionally, investigating the specific types and prevalence of academic dishonesty in unmonitored online exams would provide deeper insights into how and why these assessments may be compromised.

Conclusion

In conclusion, the study provides evidence that unmonitored online exams may not be valid assessments of students’ academic performance. The meaningful inflation of scores and the weak correlation with prior in-person exam performance highlight the challenges of ensuring academic integrity in unmonitored online settings. As online and hybrid learning environments become increasingly prevalent, educators must develop and adopt assessment strategies that accurately reflect student learning while upholding academic standards. By addressing these challenges proactively, educators can better ensure reliable and valid evaluations of student performance in the evolving landscape of higher education.

Footnotes

Data Availability and Positionality Statement

We report all data exclusions, manipulations, and measures in the study, and we follow JARS (Kazak, 2018). The dataset generated during and/or analyzed during the current study is available from the corresponding author on reasonable request. This study was not preregistered. This research did not receive any specific grant from funding agencies in the public, commercial, or not-for-profit sectors. On behalf of all authors, the corresponding author states that there is no conflict of interest. To provide greater transparency to this research, the authors provide readers with information about their backgrounds. At the time the manuscript was drafted, two authors self-identified as men, two as women, and all authors self-identified as White.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.