Abstract

Introduction

Student motivation is a critical predictor of academic achievement, engagement, and success in higher education. Motivating students is a crucial aspect of effective teaching.

Statement of the Problem

Although there is a wealth of research on student motivation, practical guidance for putting theory into practice in challenging teaching environments (i.e., large-format introductory courses) is lacking. We discuss a first step toward motivating students: understanding how motivated they are and using that information to inform teaching.

Literature Review

Anxiety, impeded motivation, and high student-to-teacher ratio are all challenges associated with teaching foundational introductory courses, such as statistics. The Expectancy–Value–Cost model of motivation provides theoretical background to assist with these courses. We discuss the implementation and use of motivation assessments as a teaching tool.

Teaching Implications

Motivation assessments are feasible and useful while teaching large-format introductory courses. Instructor reflections lend insights as to how to use these assessments to improve pedagogy.

Motivating students is one of the most difficult yet most important aspects of effective teaching (Yarborough & Fedesco, 2020). Student motivation is a critical predictor of academic achievement, engagement, and success in higher education (Lazowski & Hulleman, 2016; Robbins et al., 2004), leading to better performance in school (e.g., Steinmayr & Spinath, 2009) and greater persistence in the face of obstacles (e.g., Allen, 1999; Liao et al., 2014). Though there is a wealth of motivation theory and laboratory studies on student motivation, motivating students in the classroom remains a challenge. There is little practical guidance for putting theory into practice, which leaves instructors with the task of translating the extensive motivation literature into concrete elements of course design. In the current paper, we discuss a first step toward motivating students: understanding how motivated they are and using that information to inform teaching. We present our experience of implementing motivation assessments in introduction to statistics, a large-format course that all psychology majors are required to take. We describe the challenge of understanding and ultimately motivating students in large-format courses, particularly statistics, and how we used motivation assessments to reflect on our teaching and generate recommendations for practice.

Challenges of Teaching Large-Format Statistics in Psychology

Quantitative methods and statistics are an integral part of the field of psychology and a foundational part of the major’s curriculum (American Psychological Association [APA], 2013). Goal two of the APA’s guidelines for psychology majors, Scientific Inquiry and Critical Thinking, lists statistics and methodology as a core part of degree attainment and recommends taking such courses early to develop foundational skills (Halonen et al., 2013). Most undergraduate psychology degree programs require students to pass an introductory statistics course to obtain their degree (Stoloff et al., 2010). Poor self-concept, anxiety, and low intrinsic motivation have all been observed in students enrolled in statistics (e.g., Baloğlu, 2003; González et al., 2016; Onwuegbuzie & Wilson, 2003). More specifically, the literature shows that psychology students are not motivated to take statistics and often report high levels of anxiety toward it (Gorvine & Smith, 2015; Onwuegbuzie & Seaman, 1995; Tremblay et al., 2000). Additionally, psychology majors often delay taking the course (Bartsch et al., 2012; McGrath, 2014), which in turn negatively affects their learning and performance throughout their major (Onwuegbuzie & Wilson, 2003; Tremblay et al., 2000).

If teachers are to apply the literature on students’ motivation in statistics to their classrooms, they should prepare to support many students who dread the course, lament that they are required to take it, and struggle to be successful as a result. In fact, when we began teaching introduction to statistics for psychology majors, our colleagues warned us of the perils, advising us to brace for a difficult semester. As we considered how to motivate psychology students and support their success in statistics, a key challenge was getting insight into students’ attitudes and experiences. Statistics courses, like other foundational introductory courses, are often taught in a large lecture format with high student-to-teacher ratios. Our experience includes teaching introduction to statistics with as many as 400 students in a large lecture hall, with limited opportunity for individual student-teacher interactions. As instructors, we were left wondering how we would know if our students were motivated or anxious, and whether there were specific course elements or points in time that were particularly detrimental to motivation. Monitoring our students’ motivation throughout the course to inform our teaching and in turn better serve our students became a goal of our teaching practice.

Motivation Assessments as a Teaching Tool

Although reducing anxiety and increasing motivation have been identified as key goals for teaching (Conners et al., 1998), instructors have little practical guidance on how to meet those goals in the challenging teaching environment of large-format introductory courses. End-of-semester student evaluations can provide valuable feedback, but they are often the sole form of instructional feedback (Keig, 2000). Further, changes can only be implemented for the next group of students, who may have different expectations and difficulties. These forms of feedback do not provide insight into how the students in the class are doing during the course. In a small course it might be easy to understand how motivated students are or whether a particular lesson was anxiety provoking. However, getting a snapshot of how a large lecture class is doing, motivationally, in time to make a change that will positively affect those students is nearly impossible. There are hundreds of instruments that measure students’ motivation, but these were developed for research purposes, and we know of no study that has used such instruments as classroom assessments. Here, we consider how to implement a motivation instrument as a classroom assessment, both to get insight into students’ experience while the course is ongoing and to facilitate reflection on teaching practices.

In the following sections, we present how we designed our large-format introductory statistics course to include motivation assessments, while also leaving room to adjust our course based on the results of the assessment, and our own reflection. We also discuss different alternatives that we considered when designing our course and the implications of these alternatives. Our aim is to lay the groundwork for other instructors to implement motivation assessments in their classroom, facilitate their own reflection, and support course improvements with students’ motivation in mind. Although we focused our discussion on an introductory statistics course, the information presented below can be valuable to a wide range of large-format university courses.

Motivation Assessment and Theoretical Framework

To understand students’ motivation we used the Expectancy–Value–Cost (EVC) model (Barron & Hulleman, 2015), which builds on Eccles et al.’s (1983) Expectancy-Value theory. The EVC framework describes achievement motivation as a function of one’s expectations for success (i.e., individuals’ subjective appraisal of their ability to succeed at a task), their subjective value of the task (i.e., the importance, usefulness, or enjoyment an individual associates with success on a task), and subjective costs (i.e., the perceived effort, sacrifices, and psychological toll of engaging in a task). Generally, expectancy and value are positively related to academic achievement, performance, course taking, activity participation, and academic standing (Eccles, 2005; Eccles et al., 2004; Simpkins et al., 2006). In contrast, cost is negatively related to expectancy, value, achievement, and course taking (Jiang et al., 2018; Kosovich et al., 2015). Cost has been argued to play a distinct role, compared to value and expectancy, in achievement and academic outcomes (e.g., Barron & Hulleman, 2015; Jiang et al., 2018; Perez et al., 2014).

Although there are multiple achievement motivation theories, we chose to measure our students’ motivation using the EVC model for two reasons. First, there is a large body of research on Expectancy-Value theory (see Wigfield & Eccles, 2000 for a review), and it has been studied in a variety of contexts, including undergraduate statistics courses (e.g., Ramirez et al., 2012). Second, the EVC model synthesizes multiple perspectives and theories, which supports a comprehensive understanding of students’ motivation. For example, expectancy captures one’s belief that they can accomplish a task, which incorporates ideas from Marsh’s Self-Concept Theory (1990), Bandura’s Self-Efficacy Theory (1986), Rotter’s Locus of Control Theory (1966), and Weiner’s Attribution Theory (1972). Similarly, the concept of value and the importance of wanting to engage in a task has also been captured in other influential theoretical frameworks such as Deci and Ryan’s Self-Determination Theory (1985) and Ames’ Achievement Goal Theory (1992). Though we appreciate the theoretical value of these other influential frameworks for research purposes, the EVC model is comprehensive while also being accessible to teachers who may not be versed in the nuances of motivation theory. With little review of theory, a student can consider the questions, “Can I do this; Do I want to do it and why; and What are the costs of doing this?” Correspondingly, a teacher can consider what they can do to support expectancy and value and to decrease cost.

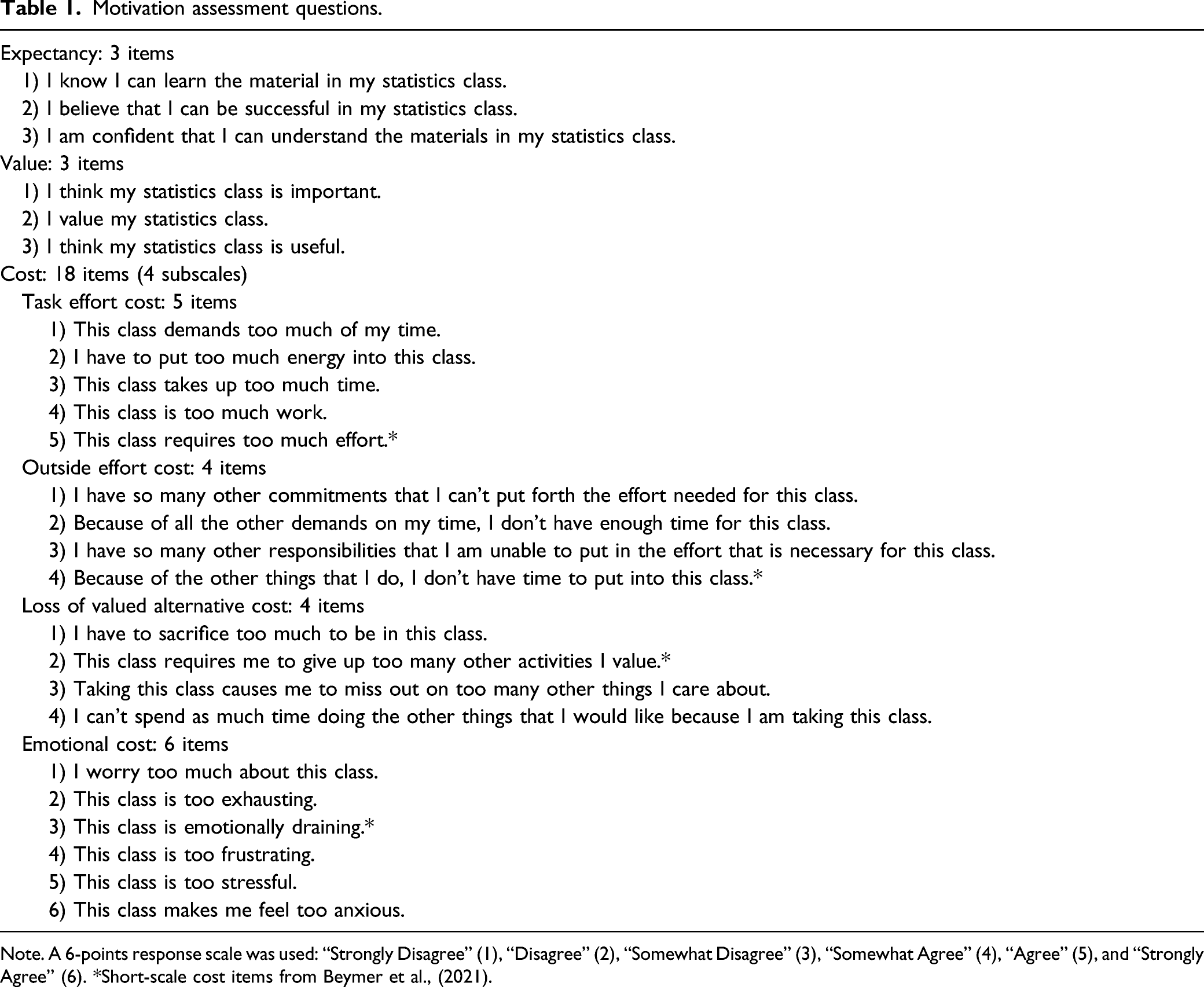

Table 1. Motivation assessment questions.

Note. A 6-point response scale was used: “Strongly Disagree” (1), “Disagree” (2), “Somewhat Disagree” (3), “Somewhat Agree” (4), “Agree” (5), and “Strongly Agree” (6). *Short-scale cost items from Beymer et al. (2021).
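For instructors who want to score responses themselves rather than rely on an automated report, the following is a minimal sketch of one way to do so. It assumes that each subscale score is the mean of its item ratings on the 1 to 6 response scale. The item labels (`E1`, `V1`, `C1`, and so on) are hypothetical placeholders, and the 3/3/4 grouping simply mirrors the item counts of the short version of the assessment; it is an illustration, not a description of the scoring procedure we used.

```python
from statistics import mean, stdev

# Hypothetical item labels grouped by subscale (assumption for illustration).
SUBSCALES = {
    "expectancy": ["E1", "E2", "E3"],
    "value": ["V1", "V2", "V3"],
    "cost": ["C1", "C2", "C3", "C4"],
}


def score_response(response):
    """Return the mean item rating (1-6) per subscale for one student's response."""
    scores = {}
    for subscale, items in SUBSCALES.items():
        ratings = [response[item] for item in items]
        if any(not 1 <= rating <= 6 for rating in ratings):
            raise ValueError(f"rating outside the 1-6 scale in {subscale}")
        scores[subscale] = mean(ratings)
    return scores


def class_summary(responses):
    """Return (mean, SD) of each subscale across students; needs at least two responses."""
    scored = [score_response(response) for response in responses]
    return {
        subscale: (
            mean(s[subscale] for s in scored),
            stdev(s[subscale] for s in scored),
        )
        for subscale in SUBSCALES
    }


# Made-up responses from two students, for illustration only.
example_responses = [
    {"E1": 5, "E2": 4, "E3": 6, "V1": 5, "V2": 5, "V3": 5,
     "C1": 2, "C2": 3, "C3": 3, "C4": 4},
    {"E1": 3, "E2": 3, "E3": 3, "V1": 4, "V2": 4, "V3": 4,
     "C1": 5, "C2": 5, "C3": 5, "C4": 5},
]
summary = class_summary(example_responses)
```

Working from class-level means and standard deviations, rather than individual scores, keeps the output at the aggregate level at which an instructor would review it.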

Implementing Motivation Assessments

The motivation assessment could have been implemented in various ways, and instructors must consider what is best suited for their course. To understand our implementation decisions, and facilitate reuse and further development of practice, we provide details about our course structure and design. We also describe how we delivered the assessment and discuss other viable alternatives we considered.

Course Design

The course was a 15-week, large-format lecture offered by the psychology department. The course covered introductory statistical concepts using a blend of traditional lecture, guided practice problems, and group work. The course took place once a week, for three hours. Lectures were designed to follow material in the assigned textbook (i.e., Gravetter & Wallnau, 2015) that students were required to read. Over the course of the semester, students completed four motivation assessments and completion counted for a total of five percent of their final grade. The rest of their grade was from: summative exams (55%), group activities (10%), and weekly homework (30%).

Assessment Delivery

We chose to deliver the motivation assessments outside of class, through Qualtrics. Students had a week to fill out the questionnaire. For each completed questionnaire, students received participation credit worth 1.25% of their final grade, for a total of 5% across the four assessments. We selected Qualtrics because of our familiarity with it and because it can automatically generate reports of responses, making the assessment feasible to produce and score during the semester. Our decision to collect the assessment outside of class was not an easy one. We also considered open-source, in-class options using smartphones, as well as university-sanctioned clickers. These are viable options, but our concerns included the need for students to own a smartphone or purchase a clicker, the instructional time the assessment would take, and the fact that students who were not in class would not be assessed, potentially biasing the data toward motivated students. We recognize that not all of these concerns are fully addressed by an outside-of-class option and are eager to see more research evaluating different options to further develop practices. Since the piloting of the motivation assessment that we discuss here, we have developed the assessment (originally a total of 24 items; see Table 1) into a short version (10 items total: 3 Expectancy items, 3 Value items, and 4 Cost items) with a thorough validation study (Beymer et al., 2021). This shortened version makes in-class (or out-of-class) assessment more time efficient, while still providing a useful motivational snapshot of the students.

Another important consideration is how to protect students’ privacy and encourage honest and open responses, while also tracking which students completed the assessment for participation credit. Instructors could choose a totally anonymous option, which would prevent awarding participation credit but help students feel comfortable reporting honestly. We did not choose this option because rates of response on the anonymous evaluations were very low (less than 10%). Instead, we recruited a research assistant who was not involved in teaching the course to log who completed the survey and then remove identifying information, such that when we looked at the assessment data, we did not see names or identifying information of current students. We chose to view only aggregated, anonymous responses and never followed up with specific students who reported low motivation. We acknowledge that even though we were transparent about our procedures and encouraged students to communicate their genuine attitudes to us, some students may have felt it would be socially desirable to report high expectancy and value and low cost.
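The separation of completion tracking from response data described above can be sketched in a few lines. This is an illustrative sketch only, not the procedure our research assistant followed: the field names (`student_id`, `name`) and the function name are hypothetical, and a real survey export would need to be checked for other identifying columns (e.g., email or IP address) before sharing.

```python
import random


def split_completion_and_responses(raw_rows, id_fields=("student_id", "name")):
    """Separate who completed the survey from what they answered.

    Returns a sorted list of student IDs (for awarding participation credit)
    and a shuffled list of responses with the identifying fields removed.
    """
    completed = sorted({row["student_id"] for row in raw_rows})
    deidentified = [
        {key: value for key, value in row.items() if key not in id_fields}
        for row in raw_rows
    ]
    # Shuffle so that row order cannot be matched back to the completion log.
    random.shuffle(deidentified)
    return completed, deidentified


# Made-up rows standing in for a raw survey export (illustration only).
raw_export = [
    {"student_id": "s2", "name": "Student B", "E1": 4, "C1": 3},
    {"student_id": "s1", "name": "Student A", "E1": 5, "C1": 2},
]
completion_log, anonymous_responses = split_completion_and_responses(raw_export)
```

In this arrangement, only the completion log would go to whoever records participation credit, and only the de-identified responses would go to the teaching team.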

Finally, we assigned the motivation assessments after weeks 1, 6, 9, and 11 of the 15-week semester. We decided to collect data on our students’ motivation at multiple time points to pinpoint times in the semester that were particularly tough on students and to get a sense of how motivation might change as the course progressed. Further, we made sure to assess the first week of class to gauge first impressions, giving us insight into whether major shifts in students’ motivation occurred during the course. Paired with weekly homework grades, we could also determine whether certain material in the course was particularly detrimental to students’ motivation. However, teachers with more or less experience, different class sizes, and different curricula may require a different number and timing of assessments to reflect on and adapt their course.

Using the Motivation Assessment Data for Teaching

Teachers of psychology are always on the hunt for relevant and interesting examples for class. A secondary benefit of the motivation data is that they can be used to teach concepts related to statistics. For example, we presented students with a histogram of the average scores on the expectancy subscale. In our data, the distribution was negatively skewed, such that most students reported high expectancy. We circled the lower, less frequent end of the distribution and explained that students who were worried about doing well could attend help sessions and tutorials and access ungraded practice problems. In another example, we used data from two time points to demonstrate a paired-samples t-test. Using these data, we could display descriptive statistics from each time point, reinforcing earlier concepts, as well as interpret a null hypothesis test and measures of effect size. Overall, the anonymous data provide many opportunities to demonstrate concepts in class with real data that are directly relevant to the students. Further, using the data for a limited number of examples communicates that the teacher investigated the responses and took them seriously.
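As an illustration of the paired-samples demonstration mentioned above, the sketch below computes the t statistic, degrees of freedom, and a standardized effect size for two sets of matched scores. The scores are made up for illustration and are not our class data, the function name is hypothetical, and the effect size shown (the mean difference divided by the standard deviation of the differences, often written d_z) is one common choice among several.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(time1, time2):
    """Paired-samples t statistic, df, mean difference, and effect size d_z."""
    if len(time1) != len(time2) or len(time1) < 2:
        raise ValueError("need two equally long lists with at least two pairs")
    diffs = [after - before for before, after in zip(time1, time2)]
    n = len(diffs)
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)  # sample SD of the difference scores
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    return {
        "t": mean_diff / (sd_diff / sqrt(n)),
        "df": n - 1,
        "mean_diff": mean_diff,
        "d_z": mean_diff / sd_diff,
    }


# Made-up subscale scores for five students at two time points.
scores_time1 = [3.0, 3.5, 2.5, 4.0, 3.0]
scores_time2 = [3.5, 4.0, 3.0, 4.0, 3.5]
result = paired_t(scores_time1, scores_time2)
```

In class, the resulting t value can be compared against a critical value from a t table for the given degrees of freedom, which keeps the example aligned with how the test is usually taught in an introductory course.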

Reflections and Improving Our Practice

The promise of a motivation assessment is to foster teachers’ reflections on how they can promote expectancies and value while decreasing cost. Students can be unmotivated for many reasons and those reasons can be invisible to the instructor: do they not see the value in the course material, or are they uncertain of their ability, or is it because the course is too demanding or stressful? The motivation assessment can be used to adapt course plans, implement interventions, and communicate support at the right time. There is a growing body of research on motivation interventions that could be used in classrooms (see Harackiewicz & Priniski, 2018 for a review) and with a better understanding of their students’ motivation, instructors can then select the best intervention for their class. In the following section, we share our reflections from using the assessment and how we worked to improve our teaching practice.

First Impressions

We were eager to see students’ first impressions of the course. Much to our surprise, and contrary to what previous research would predict (e.g., González et al., 2016; Tremblay et al., 2000), students enrolled in our course, on average, expected to do well (on a 6-point scale: M = 4.65, SD = 0.80), saw the value in the course (M = 4.83, SD = 0.91), and anticipated moderate cost associated with the course (M = 3.14, SD = 0.85). We went back to class with a more accurate understanding of students’ motivation and with enthusiasm to teach students who had a positive outlook. Some students still lingered after class or came to office hours with the motivational struggles the literature would suggest, but we were able to understand these students’ experience in the larger context of our course.

Reflecting on the Components of Motivation

The EVC assessment facilitated reflection on each of the core components of motivation when designing and adapting our course. Regarding value, we observed that most of our students reported high levels early in the term, but research indicates that motivation decreases over time (Kosovich et al., 2017). In an effort to avoid this decline, we incorporated “statistics in the news” examples into lectures. Students were encouraged to bring or email news that included statistics, and multiple students did. This value-promoting practice is aligned with research demonstrating that value can be improved by helping students generate connections between the course material and their own lives (e.g., Harackiewicz et al., 2016; Hulleman & Harackiewicz, 2009; Kosovich et al., 2019; Rosenzweig et al., 2020). Incorporating the EVC model and resulting assessment data into our teaching encouraged us to put theory into action.

We observed that cost was the most variable of the components, both initially and across time. We reflected that despite having a majority of motivated students, some students were experiencing stress and felt overworked, and that this increased during the semester. For example, some of our students’ cost increased as time progressed, and homework grades dropped slightly in the sixth week of the term. Using this information, we told the students that extra class time would be spent on that content, resulting in an extension for one assignment and a shortening of the next. When we announced this slowdown to the class, we heard audible sighs of relief and some students clapped. The combination of the motivation data with the homework scores allowed us to act quickly. Moreover, we now include a “flex week for muddy points” every term as standard practice, demonstrating that motivation assessments can inform both the short- and long-term design of a course.
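One way an instructor might combine the two data sources is sketched below. We made this judgment informally, so the code is a hypothetical illustration rather than a record of our procedure: the function name, the thresholds (`cost_rise` and `homework_drop`), and all numbers are assumptions chosen for the example and are not our class data.

```python
def flag_pressure_points(cost_means, homework_means, cost_rise=0.25, homework_drop=2.0):
    """Flag assessment weeks where mean cost rose and mean homework grade fell.

    cost_means: {assessment week: class mean on the cost subscale (1-6)}
    homework_means: {week: class mean homework grade (percent)}
    A week is flagged when cost rose by at least `cost_rise` since the previous
    assessment AND homework fell by at least `homework_drop` from the prior week.
    Both thresholds are arbitrary illustrative defaults.
    """
    flagged = []
    weeks = sorted(cost_means)
    for previous, current in zip(weeks, weeks[1:]):
        cost_change = cost_means[current] - cost_means[previous]
        if current not in homework_means or current - 1 not in homework_means:
            continue  # cannot compare homework without both weeks
        homework_change = homework_means[current] - homework_means[current - 1]
        if cost_change >= cost_rise and homework_change <= -homework_drop:
            flagged.append(current)
    return flagged


# Made-up class means for illustration only.
example_cost = {1: 3.0, 6: 3.5, 9: 3.4, 11: 3.6}
example_homework = {5: 88.0, 6: 84.0, 8: 86.0, 9: 86.5, 10: 85.0, 11: 84.5}
weeks_to_review = flag_pressure_points(example_cost, example_homework)
```

A flag of this kind is only a prompt for reflection; whether to slow down, extend a deadline, or leave the schedule unchanged remains the instructor’s judgment.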

Regarding expectancy, scores were higher than we anticipated, and therefore we did not act on the data beyond reminding students of additional resources throughout the semester. However, we did design the course to promote high expectancy at the outset by allowing students to drop their two lowest assignments and to repeat another version of an assignment to improve their learning and performance, and by incorporating participation grades for in-class activities. Had our students reported more concerning levels of value or cost more consistently, another adaptation we considered was a writing exercise intervention (see Rosenzweig et al., 2020 for details). We acknowledge that students’ level of motivation will differ across classrooms and cohorts. Not all students will respond in the same way, and instructors may reflect differently. However, no matter the response pattern, having the results of the motivation assessment can enable instructors to reflect on their students’ motivation and approach their teaching differently based on that reflection.

Motivating Ourselves

Finally, though there is a focus in the literature on students’ motivation, teachers’ motivation is equally important. Our students were faces lost in the crowd, and we assumed they were having a miserable time and that they would dread the course. The motivation assessment changed that. We reflected that the assessments fostered a sense of a two-way feedback system that would otherwise be difficult to achieve in large courses. Usually, students get high stakes feedback in the form of exams, while teachers get feedback at the end of the term when it is too late to change anything for those students. For us, the motivation assessments created a culture of communication and improvement that is otherwise difficult to achieve in a large-format or online course. Our experience using the motivation assessments reinforced that through feedback, both the students and teaching team can work together to improve the learning environment. As Chew et al. (2018) suggested, it is critical for instructors to receive feedback on their teaching from the students to initiate change and improvement in the teaching and learning of psychology. When students witness their feedback in action it can help to establish a supportive classroom environment, which is critical for retention and learning outcomes (Catt et al., 2007).

Concluding Remarks

Overall, using a motivation assessment provided us with several advantages that are otherwise difficult to achieve in a large classroom: (a) a more accurate representation of students’ motivation in the course, (b) greater ability to identify challenging topics, (c) opportunities to adjust the course content in real time to accommodate those challenges, and (d) the ability to create a feedback culture in the classroom. We hope that this work is only the beginning of a discussion about using motivation assessments as a teaching tool in large university courses.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council of Canada (SSHRC Small Grants Program).