Abstract

Background

Retrieval practice promotes retention of learned information more than restudying the information. However, benefits of multiple-choice testing over restudying in real-world educational contexts and the role of practically relevant moderators such as feedback and learners’ ability to retrieve tested content from memory (i.e., retrievability) are still underexplored.

Objective

The present research examines the benefits of multiple-choice questions with an experimental design that maximizes internal validity, while investigating the role of feedback and retrievability in an authentic educational setting of a university psychology course.

Method

After course sessions, students answered multiple-choice questions or restudied course content and afterward could choose to revisit learning content and obtain feedback in a self-regulated way.

Results

Participants on average requested feedback, by revisiting the learning text, for only 9% of practiced items when practicing course content. In the criterial test, practicing retrieval was not superior to reading summarizing statements in general, but a testing effect emerged for questions that targeted information that participants could easily retrieve from memory.

Conclusion

Feedback was rarely sought. However, even without feedback, participants profited from multiple-choice questions that targeted easily retrievable information.

Teaching Implications

Caution is advised when employing multiple-choice testing in self-regulated learning environments in which students are required to actively obtain feedback.

Keywords

Retention of Highly Retrievable Information

Students often use (electronic) flashcards, and teachers use clicker questions to encourage the practice of learning content (Caldwell, 2007; Chauhan, 2017; Golding et al., 2012; Mayer et al., 2009; Wissman et al., 2012). Digital flashcards and online quizzes share a common purpose: having learners respond to questions about the learning content. Learners using these technologies knowingly or unknowingly benefit from the testing effect, also known as the retrieval practice effect. The testing effect refers to the finding that actively recalling learned content is more beneficial for retention than restudying the same content. This effect has been reliably found in many laboratory studies (cf. the meta-analyses by Adesope et al., 2017; Phelps, 2012; Rowland, 2014). Consequently, research has turned to the application of the testing effect in real-world educational contexts (Adesope et al., 2017; Schwieren et al., 2017). However, the role of feedback in the benefits of multiple-choice testing over restudying in applied contexts is still largely unclear (McDaniel & Little, 2019; Moreira et al., 2019; Roediger et al., 2011). Applied research has indicated that when feedback is withheld, multiple-choice testing is not more effective than restudying (Greving & Richter, 2018). Feedback seems especially important for students who engage in self-regulated restudying after unsuccessful testing. The aim of this article is to examine the testing effect for multiple-choice questions in an authentic educational setting of a university course while also allowing students to obtain corrective feedback in a naturalistic way.

Testing Effects in Laboratory and Applied Research

The effectiveness of answering multiple-choice questions for promoting retention of learned content has been demonstrated in many laboratory studies. Summarizing this research, meta-analyses have found small to medium effect sizes (Hedges’ g) ranging between 0.36 and 0.70 (Adesope et al., 2017; Rowland, 2014). These analyses also revealed that the most important moderators of the testing effect are retrievability (Rowland, 2014) and feedback (Adesope et al., 2017; Phelps, 2012; Rowland, 2014). Retrievability in this context describes the success with which learning content can be retrieved from memory, resulting in correct responses in the testing condition. Therefore, retrievability can be operationalized by the difficulty of items in the practice tests. In contrast, corrective feedback seems to increase the testing effect mainly by fostering learning from unsuccessful retrieval attempts (Pashler et al., 2005).

Providing learners with multiple-choice questions instead of restudying opportunities is easy and, therefore, bears great potential for application in educational settings. To determine the effectiveness of multiple-choice testing for practical purposes, applied research should provide evidence that these tests lead to better retention than other review strategies such as restudying. Furthermore, applied research should provide evidence on whether factors found to influence the testing effect in laboratory settings are equally important in educational contexts. These results can inform practitioners in developing guidelines on when and how to use multiple-choice testing as a tool for fostering retention. Proponents of the testing effect assume a beneficial effect of testing compared to restudying, irrespective of feedback (e.g., Roediger & Karpicke, 2006). However, a recent review of testing in educational contexts found that all studies on multiple-choice testing had either employed feedback or not compared testing to another activity (Moreira et al., 2019). Consequently, the authors stated that no conclusions can be drawn on whether multiple-choice testing alone is superior to other activities that foster retention. This conclusion is especially worrying since studies simulating classroom learning under laboratory conditions have not found multiple-choice testing superior to restudying (Butler & Roediger, 2007; Kang et al., 2007; Nungester & Duchastel, 1982). Additionally, a study that investigated multiple-choice testing without feedback in a university lecture found that restudying was as effective as taking multiple-choice quizzes and that the retrievability of practice questions had no effect on these outcomes (Greving & Richter, 2018).

The differences in findings of studies that have investigated multiple-choice testing with and without feedback can be partly attributed to various indirect factors in addition to benefits of testing on memory (McDaniel & Little, 2019; Roediger et al., 2011). Consequently, additional research is needed that investigates the testing effect of multiple-choice questions and its potential moderators in educational contexts.

Feedback and the Testing Effect

Feedback is an important component in self-regulated learning that shapes cognitive and metacognitive processes alike: It can confirm information and beliefs students hold, correct erroneous information or beliefs, add knowledge, tune motivation and beliefs, or restructure knowledge (Butler & Winne, 1995). When students learn in a self-regulated fashion, feedback can arise from various sources, such as self-generation or external sources (Bangert-Drowns et al., 1991). Feedback also comes in different types, such as formative or summative, and focuses on different aspects of learning, such as learning outcomes, meta-memory, or motivation (Butler & Winne, 1995). In research on the testing effect, the most common feedback is formative, external, and outcome-oriented: It indicates whether a given answer is correct, and incorrect answers are often followed by the correct answer. Feedback following practice tests provides two kinds of information to students. For one, it helps correct erroneous information, confirm correct information, or add knowledge and can thus exert a memorial effect (Pashler et al., 2005). Furthermore, it can support a more realistic judgment of learning outcomes and guide future learning activities and thus operate on a metacognitive level (e.g., Kornell & Rhodes, 2013).

Examining the influence of feedback in real-world educational settings and whether it adds to the unmediated effects of practice tests is important for several reasons. First, very few applied studies have investigated the effects of feedback on practice testing, and these studies have yielded mixed results. Two studies found beneficial effects of feedback on the testing effect (Marsh et al., 2012; Vojdanoska et al., 2010). However, among other shortcomings, both studies lacked a restudy control condition. Additionally, Butler and Roediger (2007) found no effects of feedback on the testing effect. However, the study was conducted in a simulated classroom, and the effect of feedback given in this context might have differed from the effect of feedback in real-world educational contexts.

Second, studies investigating testing effects in educational contexts without the provision of corrective feedback might have suffered from limited ecological validity. We assume that students gain an additional metamemorial benefit from practice testing as they become aware of their knowledge gaps. This assumption is supported by findings that practice tests lead to more accurate memory predictions (Little & McDaniel, 2015), which in turn influence students’ decisions for or against additional studying (Metcalfe & Finn, 2008). Consequently, compared to students who restudy, students who use practice testing might engage in additional restudying to close knowledge gaps that become apparent when answering practice questions. Thus, research is needed that assesses students’ need for feedback following different practice opportunities.

Third, lab studies have shown beneficial effects of feedback when practicing multiple-choice questions, and feedback can also avert the negative effects that can arise from exposure to incorrect information in the form of lures or distractors (Butler & Roediger, 2008; Roediger & Marsh, 2005). Therefore, research is needed to investigate whether feedback adds to the unmediated effects of multiple-choice practice tests in educational settings.

Rationale of This Study

The present study adds to the body of research by investigating the beneficial effects of multiple-choice questions without feedback compared to restudying in an educational setting. Additionally, we incorporated two moderators that might be crucial for the effectiveness of multiple-choice practice tests in a real-world educational context. The first potential moderator is the retrievability of the learned content at the time it is practiced. The second potential moderator is the opportunity to obtain feedback on responses in the practice test. In applied research on the beneficial effects of multiple-choice testing with additional feedback, researchers advocating this procedure have implicitly assumed that feedback will always be provided by instructors or that students will seek feedback whenever they do not know the answer to a practice question. Questioning this assumption, we employed a more naturalistic manipulation of feedback: Participants were allowed to revisit the learning material whenever needed while practicing the learned content. This method resembles the type of feedback found in educational settings that incorporate self-regulated learning.

We manipulated students’ follow-up learning opportunities after they visited a university course session. Practice consisted of answering multiple-choice questions about the learning content or restudying the learning content. Students could revisit the original content after practicing to receive corrective feedback. We assessed the effectiveness of practice testing versus restudying with a surprise criterial test administered between 1 and 7 days after the last practice session. The original learning materials were textbook chapters that were well written, clearly structured, and did not require a large amount of specific prior knowledge. Thus, the complexity (or element interactivity) of the learning materials in terms of cognitive load theory was rather low, which should create favorable conditions for the testing effect to occur (Hanham et al., 2017; Van Gog & Sweller, 2015).

Prior research suggests that being tested on learned information is associated with a critical evaluation of one’s knowledge of the learning content (Metcalfe & Finn, 2008).

Given the considerable evidence for testing effects in real-world educational settings (Adesope et al., 2017; Schwieren et al., 2017), we expected a testing effect. Based on research investigating the retrievability of tested items (Greving & Richter, 2018) and on the beneficial effects of feedback on the testing effect, we assumed that both retrievability and feedback would add to the testing effect.

We tested four operational hypotheses derived from these assumptions. Hypothesis 1 states that answering multiple-choice questions leads to more requests for feedback, in terms of revisiting the original content, than restudying does. Hypothesis 2 states that, in terms of retention of the learning content, the testing condition outperforms the restudy condition. Hypotheses 3 and 4 state that higher retrievability rates and more corrective feedback, respectively, are associated with more pronounced testing effects.

Method

Participants, Power, and Required Sample Size

We aimed to recruit participants from university courses on the topic of behavioral and learning disorders in childhood and youth. Usually, 35 students participate in each of these courses. The students in these courses were enrolled in a teacher training program and would eventually become teachers in different school tracks. The courses belong to the mandatory psychology curriculum of the study program. Students could freely choose between eight different seminars and one lecture, all of which conveyed the same content and prepared for the same exam. The final exam was scored automatically with a fixed scoring rubric.

We did not know beforehand how many of the students in a course would participate in the study. Therefore, we simulated the statistical power necessary to detect a testing effect similar to those reported in meta-analyses investigating the testing effect with feedback in the psychology classroom (Schwieren et al., 2017). In these power analyses, we simulated the outcomes of multiple generalized linear mixed models (GLMMs; see Results section) that matched our planned analyses for different sample sizes and checked whether the 95% confidence interval of the simulated statistical power lay above the 80% threshold (Brysbaert & Stevens, 2018). Model parameters were taken from a study that investigated the testing effect for multiple-choice tests with differing retrievability in the university classroom (Greving & Richter, 2018). Power simulations revealed that the planned analyses with only 20 students would not reliably provide sufficient power, because the 95% confidence interval included values below 80% (power = 81%, CI [79, 83]); however, 25 (power = 87%, CI [85, 89]), 30 (power = 89%, CI [87, 91]), or 40 (power = 97%, CI [95, 98]) students would be sufficient. Based on this power analysis, and considering that not all students would opt to participate in the study, we chose to recruit students from two courses to ensure that the minimum of 25 participants was reached.
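The decision rule described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the authors' simulation code: it uses only the standard library, a Wald interval for the Monte Carlo power estimate, and, in place of the planned GLMM, a one-tailed z-test on participant-level accuracy differences. All function names and parameter values (`n_sims`, `beta_testing`, the random-effect SDs, the intercept) are hypothetical and are not the estimates taken from Greving and Richter (2018).

```python
import math
import random
from statistics import NormalDist, mean, stdev


def power_ci(n_significant, n_sims, level=0.95):
    """Monte Carlo power estimate with a Wald confidence interval."""
    p = n_significant / n_sims
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for level = 0.95
    half_width = z * math.sqrt(p * (1 - p) / n_sims)
    return p, max(0.0, p - half_width), min(1.0, p + half_width)


def power_is_sufficient(n_significant, n_sims, threshold=0.80):
    """Decision rule: the lower CI bound must exceed the threshold."""
    _, lower, _ = power_ci(n_significant, n_sims)
    return lower > threshold


def inv_logit(x):
    return 1.0 / (1.0 + math.exp(-x))


def simulate_once(n_participants, n_items, beta_testing, sd_participant,
                  sd_item, intercept, rng, alpha=0.05):
    """One simulated experiment with a simplified analysis (hypothetical values).

    Responses follow a logit model with random participant and item intercepts.
    Instead of a GLMM, a one-tailed z-test is run on each participant's
    accuracy difference (testing minus restudy).
    """
    item_effects = [rng.gauss(0.0, sd_item) for _ in range(n_items)]
    diffs = []
    for p in range(n_participants):
        u = rng.gauss(0.0, sd_participant)
        correct = {0: [], 1: []}  # 0 = restudy, 1 = testing
        for j, w in enumerate(item_effects):
            cond = (p + j) % 2  # item-to-condition mapping alternates across participants
            prob = inv_logit(intercept + u + w + beta_testing * cond)
            correct[cond].append(1 if rng.random() < prob else 0)
        diffs.append(mean(correct[1]) - mean(correct[0]))
    se = stdev(diffs) / math.sqrt(n_participants)
    if se == 0:
        return False
    return mean(diffs) / se > NormalDist().inv_cdf(1 - alpha)


def simulate_power(n_participants, n_sims=1000, seed=1, **params):
    """Share of simulated experiments with a significant testing effect."""
    rng = random.Random(seed)
    hits = sum(simulate_once(n_participants, rng=rng, **params)
               for _ in range(n_sims))
    return power_ci(hits, n_sims), power_is_sufficient(hits, n_sims)
```

For instance, an estimate of 81% from 1,000 simulations yields a Wald interval of roughly [78.6%, 83.4%], so `power_is_sufficient(810, 1000)` is `False`, which mirrors the verdict for 20 students. A GLMM-based simulation would replace `simulate_once` while leaving the decision rule unchanged.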

Participants gave their informed written consent prior to participation and received course credit for participating in the form of a small bonus on their final course grade, regardless of their performance in the experiment. Additionally, students were informed that participation in this study involved practicing the learning content taught in these courses and was thus expected to prepare them better for the upcoming exam. The decision not to participate in the study did not have any negative consequences. Moreover, there were other opportunities to earn the bonus on the final course grade. Given the flexibility of exam choice and the objective automatic scoring of the results, students could be assured that their participation had no negative effect on their grades. The study was in accordance with all ethical guidelines of the German Psychological Association. According to these guidelines, explicit approval of the ethics committee of the Institute of Psychology was not required.

Thirty students (73% female) completed all parts of the study. Participants’ age ranged from 18 to 24 years (M = 19.80, SD = 1.49), and participants were mostly in the second semester (M = 2.13, SD = 0.68) of their study program, whose length ranges from seven (elementary school) to nine (upper educational tracks and special education) semesters. Students are advised to attend the psychology courses early in the first year of their studies, because the content taught in these courses partly provides the basis for other courses in the teacher curriculum. However, this recommendation is not binding, and students can deviate from it in their study plan.

Materials

Test Items and Restudy Statements

Two chapters from a textbook on mental disorders that are part of the regular reading assignments of the course were selected as the basis for the study material. We identified text segments that reflected the logical structure of the text and each represented one key information unit. The chapter on “Drug abuse and addiction” was divided into 58 text segments, and the chapter on “Suicidality” was divided into 37 text segments. Text segment length ranged between 28 and 255 words (M = 113.21, SD = 46.64). Each text segment was made available for revisiting as part of the feedback. For each of 20 information units per chapter, one statement and one multiple-choice question were created by summarizing the key information of the unit. An example statement is: “The prevalence of alcohol addiction in adolescents is identical to the prevalence of alcohol addiction in the total population: 2–3%. Girls make up one fifth of the juvenile alcohol addicts.” Multiple-choice questions with four response options were created by asking for the key information, for example: “Which statement concerning the prevalence of addiction to alcohol is correct? (A, correct option) Girls make up a fifth of the juvenile alcohol addicts, (B) Girls make up a third of the juvenile alcohol addicts, (C) The prevalence of alcohol addiction in the total population is 15%, and (D) The prevalence of alcohol addiction among adolescents is 15%.”

Practice Materials and Feedback

Practice materials and feedback were presented with the software Inquisit (Version 5.0.6.0; Millisecond Software, 2016) and consisted of either 20 multiple-choice questions (testing condition) or 20 summarizing statements (restudy condition). In both conditions, each practice item was presented on one page.

Whenever participants requested feedback, they were first presented with a table of contents from which they could choose text segments to revisit. After a text segment was chosen, it was displayed, and participants were free to navigate the text by using the arrow keys or by returning to the table of contents. Text segments served as the basis for the initial creation of the information units; accordingly, the information in each question and answer and in each statement could be found in the corresponding text segment.

The experimental software recorded which text segments were revisited (if any) for each preceding practice item.

Criterial Test

A criterial test was constructed that consisted of 14 items from each topic, resulting in 28 questions. All items were identical to the practice items in the testing condition.

Design

We investigated the effect of the independent variable practice condition (testing vs. restudy) across two course sessions on the dependent variable performance in the criterial test. All participants experienced both practice conditions, one in each course session (within-participants design). To control for effects of topic and sequence, we counterbalanced the sequence of conditions. Each participant was randomly assigned to one of the two sequences upon arrival at the first practice session.
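The assignment to the two condition sequences can be sketched as follows. The session topics come from the procedure; the sequence labels "A" and "B" and the use of simple (unrestricted) randomization are assumptions, because the text does not state whether assignment was blocked or balanced.

```python
import random

# Course order is fixed: Week 2 = "Suicidality", Week 3 = "Drug abuse and addiction".
SESSIONS = ("Suicidality", "Drug abuse and addiction")

# Hypothetical labels for the two counterbalanced condition sequences.
SEQUENCES = {
    "A": ("testing", "restudy"),
    "B": ("restudy", "testing"),
}


def assign_sequence(rng=None):
    """Simple randomization: each arriving participant gets sequence A or B."""
    if rng is None:
        rng = random.Random()
    label = rng.choice(sorted(SEQUENCES))
    return label, dict(zip(SESSIONS, SEQUENCES[label]))
```

Because the topic order was fixed by the course schedule, counterbalancing the condition sequence means that each topic is practiced under testing by one group of participants and under restudy by the other.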

Procedure

General Procedure

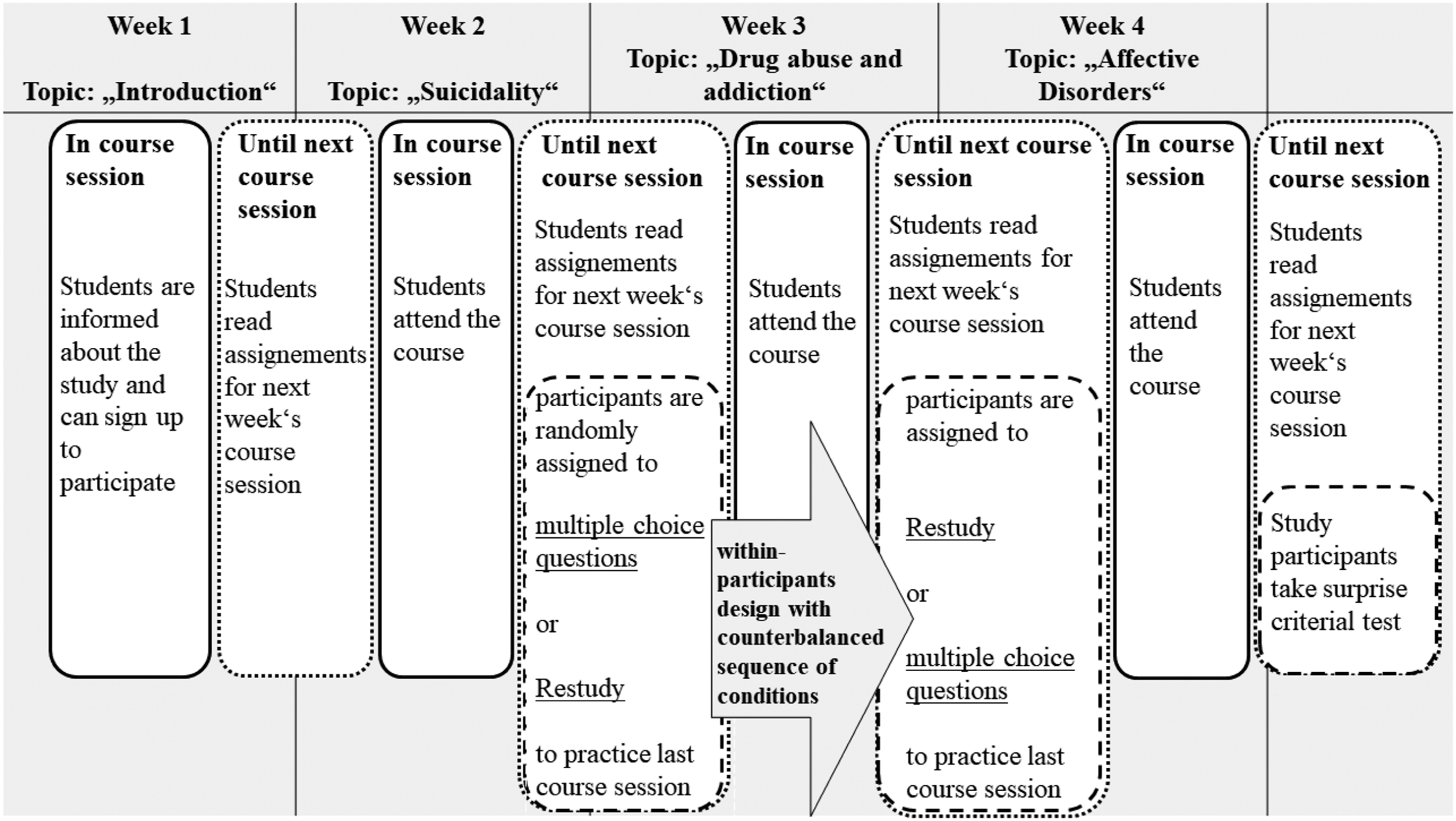

Students in the course were assigned to read book chapters in preparation for the course sessions. All course sessions were taught by the first author, and the course content was based on and followed the thematic structure of the reading assignments but contained further explanations and details for a deeper understanding of the contents. All contents addressed in the upcoming exam questions occurred in both the literature and the course. The study was conducted in the first 4 weeks of the semester, whereas after two subsequent regular course sessions (i.e., the focal sessions), voluntary practice sessions were offered, in which the experiment was conducted (see Figure 1). In Week 1, students were informed about the study and the course and given the first reading assignment in preparation of the second course session. In Week 2, the course session on “Suicidality” took place (first focal session). At the end of the course session, students were given the second reading assignment in preparation of the third course session and study participants were asked to practice the course content of the last session in the laboratory within 1 week. In Week 3, the course session on “Drug abuse and addiction” took place (second focal session). At the end of the course session, study participants were asked to practice the course content of the last session in the laboratory within 1 week. In Week 4, the next course session took place that was unrelated to the study, and at the end of the session, study participants were instructed to present themselves to the laboratory within 1 week, which was announced as an additional practice session. In this session in the lab, the surprise criterial test was administered. Visual Presentation of Design and General Procedure by Week. Note. In course activities are displayed differently (solid outline) than activities between course sessions (dotted outline). 
Additionally, study participants’ activities are furthermore visually differentiated from student activities (dashed outline)

Practice Sessions and Criterial Test

Practice sessions were conducted in the laboratory and took between 15 and 50 min, depending on participants’ speed and willingness to obtain feedback. In each practice session, participants first answered sociodemographic items and reported whether they had completed the reading assignment. Participants then practiced the course content in one of the two practice conditions (testing or restudy). Practice was self-paced, and the order of practice items was randomized. In the restudy condition, participants were asked to read statements, whereas in the testing condition, they were asked to answer multiple-choice questions. In the restudy condition, participants were then asked whether they wanted to revisit the original learning content. In the testing condition, a message first indicated whether the item had been answered correctly before participants were asked whether they wanted to revisit the original learning content. In both conditions, whenever participants responded affirmatively, they were given access to the original learning content and could browse and reread it with no time limit.
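The trial logic described above can be sketched as a small Python model. This is not the Inquisit script used in the study; the data classes and the callback names (`show`, `answer`, `wants_revisit`, `browse`) are hypothetical stand-ins for the user interface. The sketch captures three details from the text: randomized item order, an outcome-only message in the testing condition (the correct answer is not shown), and optional revisiting whose chosen text segments are logged per item.

```python
import random
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PracticeItem:
    item_id: str
    statement: str           # shown in the restudy condition
    question: str            # shown in the testing condition
    options: List[str]       # four response options
    correct_option: int      # index into options


@dataclass
class TrialRecord:
    item_id: str
    condition: str                  # "testing" or "restudy"
    correct: Optional[bool]         # None in the restudy condition
    revisited_segments: List[str] = field(default_factory=list)


def run_practice_item(item, condition, show, answer, wants_revisit, browse):
    """One self-paced practice item; the callbacks stand in for the UI."""
    correct = None
    if condition == "testing":
        choice = answer(item.question, item.options)
        correct = choice == item.correct_option
        show("Correct." if correct else "Incorrect.")  # outcome only, no answer key
    else:
        show(item.statement)
    record = TrialRecord(item.item_id, condition, correct)
    if wants_revisit():
        # browse() returns the IDs of the text segments the learner opened
        record.revisited_segments = list(browse())
    return record


def run_session(items, condition, rng=None, **ui):
    """Present all items of one session in randomized order."""
    if rng is None:
        rng = random.Random()
    order = list(items)
    rng.shuffle(order)
    return [run_practice_item(item, condition, **ui) for item in order]
```

Logging the opened segment IDs per item is what makes it possible to check afterward whether a revisit actually contained the content of the preceding practice item.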

At the beginning of the criterial test, participants were informed that no further practice would take place and that they would be tested on the two previous course sessions. All items were then presented in randomized order and without a time limit. Finally, participants were thanked, debriefed, and reminded not to disclose information regarding this study to other students.

Results

Learning Behavior, Retrieval Practice Performance, and Feedback-Seeking Behavior

Seventeen participants reported having read the chapter on “Suicidality”, and 15 participants indicated having read the chapter on “Drug abuse and addiction”. Participants in the testing condition answered between 3 and 16 of the 20 items correctly, on average 51% (number of correct answers: M = 10.10, SD = 3.37). A majority (54%) answered more than half of the questions correctly. Participants requested feedback in the form of revisiting the text for 0–9 items in the practice sessions, on average for only 9% of items (number of items: M = 3.53, SD = 5.83). We investigated whether the practice condition had an effect on feedback behavior (Hypothesis 1): Participants requested feedback following items in the testing condition (M = 2.07, SD = 1.36) slightly more often than following items in the restudy condition (M = 1.47, SD = 1.94), but the difference was not significant, t(51.98) = 1.38, p = .172, d = 0.36, CI [-0.27, 1.47]. Of 106 total revisits of the text, only 19 (18%) involved text segments that included the content of the preceding practice item.
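The reported test statistics can be recomputed from the summary statistics as a consistency check. The fractional degrees of freedom suggest that Welch's correction was applied; the sketch below assumes this, assumes n = 30 per condition, and assumes that d was computed with the root mean square of the two SDs. With the rounded means and SDs from the text, it gives t ≈ 1.39, df ≈ 51.96, and d ≈ 0.36, close to the reported values.

```python
import math


def welch_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Welch's t and Welch-Satterthwaite df from means, SDs, and sample sizes."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


def cohens_d(m1, sd1, m2, sd2):
    """Cohen's d using the root mean square of the two SDs (equal group sizes)."""
    return (m1 - m2) / math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)


# Feedback requests per participant: testing vs. restudy (values from the text)
t, df = welch_from_summary(2.07, 1.36, 30, 1.47, 1.94, 30)
d = cohens_d(2.07, 1.36, 1.47, 1.94)
print(f"t({df:.2f}) = {t:.2f}, d = {d:.2f}")
```

If SciPy is available, `scipy.stats.ttest_ind_from_stats(..., equal_var=False)` returns the same Welch statistic together with a p-value.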

In sum, the practice items in the testing condition were relatively difficult. Nevertheless, requests for feedback were rare overall, they did not differ between conditions, and participants mostly did not find the relevant information in the learning materials. We therefore concluded that participants’ feedback behavior was too sporadic and erratic to count as corrective feedback.

Modeling Performance in the Criterial Tests

Hypotheses 2, 3, and 4 assume effects of different variables on retention of course content as measured in the criterial test. These variables varied between items (retrievability and practice condition) or as a function of both participants and items (participants’ feedback behavior for each item). In addition, as in real educational settings, participants varied in their ability to retain content, and the learning content varied in its memorability. We chose to adopt multilevel modeling because this approach allows for the simultaneous investigation of predictors at the item level (retrievability and practice condition) and at the combined participant-item level (feedback behavior per item) while controlling for unsystematic variance at both the item and the participant level. Mixed-effects models have many advantages compared to ANOVAs (e.g., Baayen et al., 2008; Richter, 2006), especially for analyzing categorical outcome variables (Jaeger, 2008). We estimated GLMMs with a logit link function (Dixon, 2008) with the R package lme4 (Bates et al., 2015). We used the package emmeans (Lenth, 2019) for comparisons between experimental conditions and for estimating performance scores for the different conditions. Participants and test items were included as random effects (random intercepts) in all models.

The testing condition was compared to the restudy condition (dummy coded: testing = 1, restudy = 0).

We included the retrievability of learned information with two dummy-coded predictors that contrasted items of medium and low retrievability with items of high retrievability as the reference category. To construct these predictors, we grouped the multiple-choice questions into three ordered categories based on the tertiles of their difficulty in the practice tests. To avoid distortions from extreme values, we discarded the lowest and the highest 5% of the distribution before the grouping, which led to the exclusion of three items. Item difficulties were corrected for guessing by subtracting the number of incorrect answers, divided by the number of response options minus 1, from the number of correct answers (e.g., Frary, 1988). This procedure resulted in three categories of items with high (item difficulties from 56% to 91%), medium (21%–55%), or low retrievability (0%–20%). Following this rationale, 10 items were classified as highly retrievable, whereas 11 and 7 items were classified as having medium and low retrievability, respectively. Finally, the models included the interaction of retrievability with testing versus restudying. We refrained from including feedback behavior as a predictor because of the sporadic and erratic way in which participants used feedback. All predictors and interactions were entered simultaneously into the models.
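The item classification can be sketched as follows. The correction implements the standard correction for guessing, R − W/(k − 1), with R correct and W incorrect responses and k = 4 options; expressing it as a proportion of all responses is an assumption. The trimming rule (dropping the rounded-down 5% of items per tail) and the use of `statistics.quantiles` for the tertile cut points are also assumptions: With 28 items this rule drops one item per tail rather than the three items reported, so the authors' exact procedure may differ.

```python
from statistics import quantiles


def corrected_difficulty(n_correct, n_incorrect, n_options=4):
    """Guessing-corrected proportion correct: (R - W / (k - 1)) / (R + W)."""
    n_responses = n_correct + n_incorrect
    return (n_correct - n_incorrect / (n_options - 1)) / n_responses


def classify_retrievability(counts, n_options=4, trim_prop=0.05):
    """Map {item_id: (n_correct, n_incorrect)} to "high", "medium", or "low".

    The lowest and highest trim_prop share of items (rounded down per tail)
    is dropped before the tertile cut points are computed.
    """
    diffs = {item: corrected_difficulty(r, w, n_options)
             for item, (r, w) in counts.items()}
    ranked = sorted(diffs, key=diffs.get)
    k = int(len(ranked) * trim_prop)  # items dropped per tail
    kept = ranked[k:len(ranked) - k]
    low_cut, high_cut = quantiles([diffs[item] for item in kept], n=3)
    labels = {}
    for item in kept:
        d = diffs[item]
        if d > high_cut:
            labels[item] = "high"
        elif d > low_cut:
            labels[item] = "medium"
        else:
            labels[item] = "low"
    return labels
```

For example, an item answered correctly by 28 of 30 participants has a corrected difficulty of (28 − 2/3)/30 ≈ 0.91, the upper end of the high-retrievability range reported above.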

To account for the possibility that the length of the text segment exerted direct or indirect effects on retention of the learning content, we also estimated a model that included the z-transformed text length as well as its interactions with all other predictors. However, the fit of this model was not significantly better than that of the more parsimonious original model, Δχ²(6) = 5.48, p = .484. Thus, the model including text length was discarded.
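The reported likelihood-ratio test can be checked by hand. The statistic is twice the difference in log-likelihoods of the two nested models, and its 6 degrees of freedom presumably correspond to the extra parameters of the larger model (the text-length main effect plus its interactions with the five other model terms). For even df, the chi-square upper-tail probability has a closed form, so the sketch needs only the standard library; for Δχ² = 5.48 with 6 df it gives p ≈ .484, matching the text. In lme4, the comparison itself would typically be run with `anova()` on the two fitted models.

```python
import math


def chi2_sf_even_df(x, df):
    """P(X > x) for X ~ chi-square(df); closed form valid for positive even df."""
    if df <= 0 or df % 2:
        raise ValueError("closed form requires a positive even df")
    half = x / 2.0
    term = total = 1.0
    for i in range(1, df // 2):
        term *= half / i  # (x/2)^i / i!
        total += term
    return math.exp(-half) * total


# Likelihood-ratio test of the text-length model vs. the original model
p_value = chi2_sf_even_df(5.48, 6)
print(f"p = {p_value:.3f}")
```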

Model-based Hypothesis Testing

As Hypotheses 2, 3, and 4 assume an influence of certain variables on retention of the learning content as measured in the criterial test, tests of the corresponding parameters in the estimated model serve as hypothesis tests. Because the erratic nature of participants’ feedback behavior led us to exclude it as a predictor, Hypothesis 4 could not be tested.
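Under the dummy coding and the random-intercept structure described above, the retained model can be written as follows (the notation is ours). Here, $y_{pi}$ is the correctness of participant $p$ on criterial item $i$, $T_{pi}$ is the testing dummy (testing = 1, restudy = 0), and $M_i$ and $L_i$ are the medium- and low-retrievability dummies, with high retrievability as the reference category.

```latex
\operatorname{logit} P(y_{pi} = 1) = \beta_0 + \beta_1 T_{pi} + \beta_2 M_i + \beta_3 L_i
  + \beta_4 T_{pi} M_i + \beta_5 T_{pi} L_i + u_p + w_i,
\qquad u_p \sim \mathcal{N}(0, \sigma_u^2), \quad w_i \sim \mathcal{N}(0, \sigma_w^2)
```

In this parameterization, $\beta_1$ is the testing effect (in log-odds) for highly retrievable items, and $\beta_1 + \beta_4$ and $\beta_1 + \beta_5$ are the testing effects for medium- and low-retrievability items; Hypothesis 3 therefore concerns $\beta_4$ and $\beta_5$. A general testing effect (Hypothesis 2) corresponds to the testing effect averaged over the retrievability categories, which is one of the comparisons emmeans can provide. In lme4 syntax, a model of this form could be specified as `correct ~ testing * (medium + low) + (1 | participant) + (1 | item)`, where the variable names are hypothetical.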

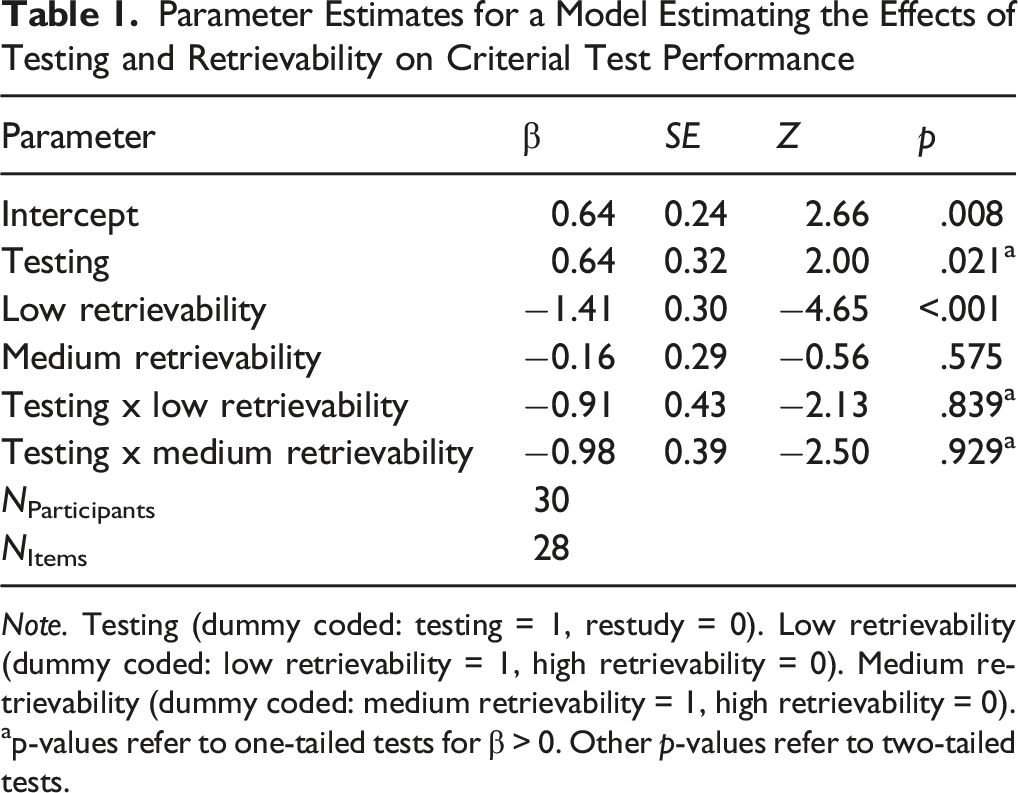

Parameter Estimates for a Model Estimating the Effects of Testing and Retrievability on Criterial Test Performance

Note. Testing (dummy coded: testing = 1, restudy = 0). Low retrievability (dummy coded: low retrievability = 1, high retrievability = 0). Medium retrievability (dummy coded: medium retrievability = 1, high retrievability = 0). ᵃ p-values refer to one-tailed tests for β > 0. Other p-values refer to two-tailed tests.

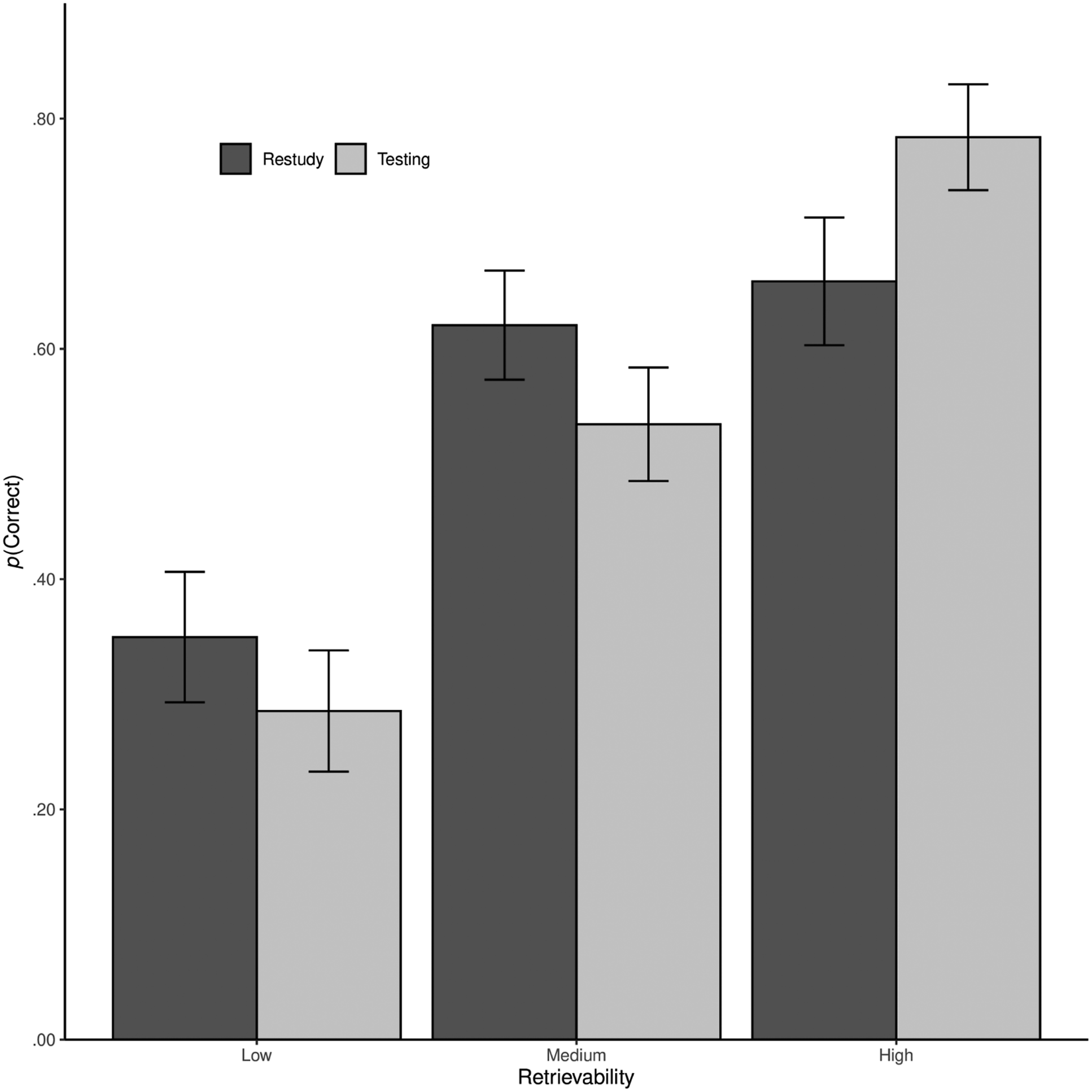

Probability of a Correct Response in the Criterial Test by Retrievability and Practice Condition. Note. Mean probability of correct responses (with standard errors) in criterial test items (back-transformed from the logits in the GLMM) by retrievability (low, medium, or high) and practice condition (testing vs. restudy)

Discussion

We investigated the effects of multiple-choice testing compared to restudying within an existing university course using a minimal intervention design. We expected the benefit of testing to be dependent on the retrievability of the tested items and the amount of feedback learners were able to obtain by revisiting the learning content.

One main finding was that learners were unwilling to obtain feedback by revisiting the text, and when they did revisit the text, they were often unable to identify the text segments that corresponded to the practiced content. Contrary to our assumptions, feedback requests were independent of practice condition (Hypothesis 1). The main consequence of this feedback behavior was that only 2% of practiced items were followed by feedback that included the correct information. We therefore assume that, in effect, no corrective feedback was provided in this study, interpret the remaining results accordingly, and refrain from testing Hypothesis 4. Investigating the reasons for this unwillingness to obtain feedback is beyond the scope of this study; however, it is reasonable to assume that a variety of factors influence feedback seeking on a general level, such as participants shying away from the additional effort. A strength of this study is that it investigated the effects of multiple-choice testing in a naturalistic setting, using course material that was eventually also tested in the exam, and that participants were informed that practicing course content (which also included multiple-choice questions with additional feedback) would help them prepare for the upcoming exam. The naturalistic setting suggests that the observed feedback behavior indeed reflects students’ willingness to engage in further learning after being tested. Additional research, however, should investigate participant-specific explanations for the lack of feedback seeking, such as metacognitive illusions (Metcalfe & Finn, 2008) or stereotype threat experienced in the testing situation (e.g., Mangels et al., 2012).

Another main finding was that the beneficial effects of testing largely depended on the retrievability of the practiced items (Hypothesis 3): Contrary to Hypothesis 2, we found a testing effect for highly retrievable items only, which corresponds to mean retrievability rates between 56% and 91%. To our knowledge, this result is the first evidence for a testing effect for multiple-choice questions in a real-world educational context, without the provision of corrective feedback and in comparison to another activity that fosters retention.

Beneficial effects of practicing highly retrievable multiple-choice questions without feedback are in line with the bifurcation model (Halamish & Bjork, 2011; Kornell et al., 2011), with previous findings from laboratory research (Rowland, 2014), and with findings from research in educational contexts using short-answer questions (Butler & Roediger, 2007; Greving & Richter, 2018). The bifurcation model states that, without feedback, the advantage of testing over restudying depends largely on the proportion of items that are successfully retrieved in the testing condition. This assumption is partly supported by Rowland’s meta-analysis, which found no testing effects in laboratory studies without corrective feedback and with retrievability rates at or below 50%. Similar interaction patterns have been found in studies of the testing effect in educational settings, in which a testing effect emerged for short-answer questions with retrievability rates above 50% (Butler & Roediger, 2007; Greving & Richter, 2018). Note that these studies followed a rationale similar to that of the present study. However, neither study found a testing effect for highly retrievable multiple-choice questions. This discrepancy might be explained by the delay of the practice tests and the resulting increase in test difficulty. In the two previous studies, participants answered multiple-choice questions immediately after initial study, whereas in the present study, the interval between initial study and practice ranged from 1 day to 1 week. Research has shown that delaying an initial retrieval attempt increases retrieval difficulty, which in turn promotes long-term retention, provided that retrieval is successful (Karpicke & Roediger, 2007). This explanation is in line with theoretical accounts stating that more difficult practice leads to better long-term retention (Bjork, 1994; Pyc & Rawson, 2009) and with findings from studies in university classrooms (Greving et al., 2020).
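The bifurcation logic described above can be made concrete with a deliberately simplified two-state sketch. This is our own illustration, not the bifurcation model of Kornell et al. (2011), which is formulated in terms of distributions of item memory strength. Every probability in the hypothetical `FinalRecall` class is an assumed value chosen for illustration, not an estimate from the present data or from Rowland (2014), and the location of the crossover depends entirely on these assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FinalRecall:
    """Hypothetical final-test recall probabilities (illustrative assumptions, not data)."""
    test_retrieved: float = 0.8    # tested item retrieved at practice: large boost
    test_unretrieved: float = 0.2  # tested item not retrieved, no feedback: no boost
    study_strong: float = 0.6      # restudied item that would have been retrievable
    study_weak: float = 0.4        # restudied item that would not have been retrievable


def predicted_recall(retrievability: float, p: FinalRecall = FinalRecall()) -> tuple[float, float]:
    """Return (testing, restudy) predicted final recall for a practice retrievability rate."""
    if not 0.0 <= retrievability <= 1.0:
        raise ValueError("retrievability must lie in [0, 1]")
    r = retrievability
    # Testing without feedback bifurcates items: only successfully retrieved items are boosted.
    testing = r * p.test_retrieved + (1 - r) * p.test_unretrieved
    # Restudying gives every item a moderate boost, regardless of retrieval success.
    restudy = r * p.study_strong + (1 - r) * p.study_weak
    return testing, restudy


def crossover(p: FinalRecall = FinalRecall()) -> float:
    """Retrievability rate at which testing and restudying predict equal final recall."""
    gain_retrieved = p.test_retrieved - p.study_strong    # advantage of testing for retrieved items
    loss_unretrieved = p.study_weak - p.test_unretrieved  # cost of testing for unretrieved items
    return loss_unretrieved / (gain_retrieved + loss_unretrieved)


if __name__ == "__main__":
    for r in (0.3, 0.5, 0.8):
        testing, restudy = predicted_recall(r)
        print(f"retrievability={r:.0%}: testing={testing:.2f}, restudy={restudy:.2f}")
    print(f"crossover at {crossover():.0%}")
```

With these assumed values, restudying yields higher predicted recall at 30% retrievability, testing yields higher predicted recall at 80%, and the two conditions tie at the crossover. Changing any of the four probabilities shifts the crossover, which is why the sketch illustrates the form of the interaction rather than its location.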

We demonstrated in this study that multiple-choice testing increased the retention of learned content whenever that content was retrievable. Learners rarely sought corrective feedback, yet they still benefited from practicing highly retrievable items. We emphasize the ecological validity of the current study because the method was implemented in an existing university course, and feedback was operationalized in a naturalistic way that closely resembles how students usually obtain feedback in self-regulated learning at university.

Although the field-experimental approach is a strength of the study in terms of ecological validity, it also has some limitations. Compared to laboratory experiments, external influences that are unknown to researchers and difficult to control potentially play a much greater role in a field setting. This limitation applies especially to students’ study behavior between the recorded practice and the tests, and also to metamemorial, motivational, and metacognitive variables. Another general limitation is that we examined the effectiveness of practice tests with multiple-choice items in just a single course with one sample of participants. The extent to which the results generalize to student populations in higher education in general remains to be clarified in further research. A third limitation is that the practice and criterial-test questions used in this experiment were fact-based questions that drew on information explicitly provided in the learning materials or easily inferred from them. Especially in higher education, the ultimate goal is to teach transferable knowledge that can be applied to novel questions and problems. Our results cannot answer the question of whether retrieval practice, even without feedback, enhances learning transfer. However, remembering the to-be-learned information may be seen as a necessary, although not sufficient, condition for transfer. Moreover, based on the meta-analysis by Pan and Rickard (2018), one may speculate that retrieval practice in a university classroom, as implemented in our study, also has the potential to increase performance on typical transfer questions, such as application and inference questions.

To conclude, in this research we were able to demonstrate a testing effect for multiple-choice questions in a real-world educational context while using an experimental design that minimized common methodological issues. We also demonstrated that previous findings identifying retrievability as an important factor when practicing short-answer questions in university teaching can be extended to multiple-choice questions. In real-world educational settings, practitioners can influence retrievability by enabling students to answer practice questions correctly. However, practitioners should proceed with caution when employing multiple-choice testing in self-regulated learning environments in which students are required to actively obtain feedback. To profit the most from the testing effect, practitioners and textbook authors should therefore identify the most important aspects of the learning content and foster a clear understanding of these aspects before students practice with multiple-choice tests.

ORCID iD

Sven Greving https://orcid.org/0000-0002-5072-6307

Footnotes

Declaration of Conflicting Interests

The authors have no conflicts of interest to report.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by Würzburg Professional School of Education.

Author Note

Work on this research was supported by the Professional School of Education of the University of Würzburg. The authors have no conflicts of interest to report. Anonymized data and analysis protocols are deposited in the repository of the Open Science Framework. Researchers interested in the materials should contact Tobias Richter (
