Abstract

Background:

Higher education institutions and universities aim to provide students with a range of transferable skills that enable them to become more thoughtful and effective employees, citizens, and consumers. One of these skills is critical thinking.

Objective:

The aim of the present research was to examine whether taking a psychology degree is concomitant with students’ increase in critical thinking skills when students are not explicitly taught critical thinking.

Method:

Study 1 utilized a cross-sectional design and Study 2 a longitudinal design. The Watson and Glaser Critical Thinking Appraisal (WGCTA, UK) was used to measure critical thinking.

Results:

For both studies, the overall scores of WGCTA, as well as scores of the subtest of Recognition of Assumptions, were significantly higher for final-year than for first-year students.

Conclusion:

From the findings, we conclude that the levels of critical thinking by final-year psychology students may be enhanced.

Teaching implications:

We propose that teaching other aspects of critical thinking such as Evaluation of Arguments and Interpretation, as measured by this test, could be beneficial in further developing psychology students’ overall critical thinking performance.

There are many skills that are required for the world of work including information management, oral and written communication, collaboration, critical and analytical thinking, self-regulation, integrity, and adaptability (e.g., Naufel et al., 2018). Given the importance of these skills not only in the workplace but also to support almost any significant learning opportunity in life generally, many higher education institutions list these skills as part of the learning outcomes for their students (Roohr et al., 2019).

It has been argued that, among these skill sets, critical thinking is central to effective roles in a global workforce (Liu et al., 2014). In addition, in a world where opportunities to consume information and misinformation are ubiquitous, critical thinking is a valued skill for students to develop in order to participate as responsible members of their communities (Dam & Volman, 2004; Paul & Elder, 2019). It is also a valued skill for differentiating between real news and so-called “fake news” (Musgrove et al., 2018; Paul & Elder, 2019).

Although interest in students’ development of critical thinking has been longstanding, only relatively recently has empirical research focused on the factors that might increase critical thinking skills. The majority of this work has examined the effectiveness of critical thinking interventions (e.g., Barnett & Francis, 2012; Bensley et al., 2010; Cloete, 2018; Solon, 2007). However, very few empirical studies have focused on whether the learning, research, and practice that students normally engage in lead them to improve these skills (e.g., Liu, Liu, et al., 2016; Pascarella & Terenzini, 2005).

From a teaching perspective, two issues are relevant with respect to the teaching and assessing of critical thinking: (1) teaching: whether critical thinking skills should be taught in the context of discipline-specific matter and focus on cognitive skills relevant to that discipline or whether universities should teach critical thinking skills with general vignettes with nondiscipline-specific problem arguments and statements and (2) assessment: whether the tests used to assess students’ critical thinking should be discipline-specific or whether a generic critical thinking test should be used. The ability of students to transfer critical thinking skills to the workplace is important because if critical thinking skills are in the main more subject-specific, then the transferability of critical thinking skills to graduate employment might be more limited. Furthermore, given that many employers use generic tests (Watson & Glaser, 2018), perhaps this is an opportunity for universities to demonstrate that the rhetoric that promises improved employability and transferable skills has actual relevance in relation to some of the criteria (occupational testing) used in the actual world of work.

Critical thinking is widely assumed to be an important attribute of psychology graduates, as reflected in the British Psychological Society (BPS, 2019) Standards for the accreditation of undergraduate, conversion and integrated masters programmes in psychology in the UK and the American Psychological Association (2013) Guidelines for the Undergraduate Psychology Major in the United States. Furthermore, psychology degrees include courses on research methods which promote rules and values of science such as objectivity, valid evidence, falsifiability, and operationism; the use of a wide range of methods and statistical analyses; and appropriate conclusions derived from empirical evidence and analysis (Bensley, 2009; Yanchar et al., 2008).

In this article, we present research that examines the extent to which, if at all, students taking a psychology degree, without any additional intervention or explicit teaching of critical thinking skills, further develop their critical thinking. In what follows, we review critical thinking literature that focuses on two aspects: the time span between assessments of students’ critical thinking skills and the specific tests used to assess critical thinking skills. We then present two studies.

Empirical Evidence of Students’ Development of Critical Thinking in Education

Time Span Between Testing

The focus of a considerable number of studies has been on the effectiveness of interventions to develop students’ critical thinking skills in higher education, using a “pretest to posttest” design where the same participants are tested once at the beginning and again at the end of a semester (e.g., Bensley et al., 2010; Burke et al., 2014; Haw, 2011; Lawson, 1999; Lawson et al., 2015; Stark, 2012). In these studies, the time frame in which critical thinking skills are expected to develop is relatively short, ranging between 12 and 16 weeks. One of the main limitations of these studies is that they do not provide evidence on whether the gain in critical thinking continues after posttesting at the end of a semester.

In contrast, the focus of other studies has been on possible student critical thinking gains over larger time spans than a single semester (e.g., Bauwens & Gerhard, 1987; Behrens, 1996; Cloete, 2018; Liu, Liu, et al., 2016; Liu, Mao, et al., 2016; Roohr et al., 2019) using either cross-sectional (i.e., data from two different cohorts of students, e.g., first-year vs. final-year students) or longitudinal designs (i.e., data from the same group over time).

Using a longitudinal design, the authors of several studies on nursing training programs have found a lack of gain in critical thinking skills (e.g., Bauwens & Gerhard, 1987; Behrens, 1996; Jones & Morris, 2007; see also Huber & Kuncel’s, 2016, meta-analysis). However, when including participants from a range of disciplines, Roohr et al. (2017) found a significant difference in students’ critical thinking scores after 4/5 years in higher education, but not after 1, 2, or 3 years, suggesting the need to measure critical thinking skills over a longer period of time.

Using a cross-sectional design with students from different American colleges and different disciplines, Liu, Mao, et al. (2016) found that final-year students’ scores were significantly higher than first-year students’ scores. Similar findings were obtained by Mines et al. (1990), who included mathematics and social science students from a U.S. university.

Subject-Specific Versus Generic Critical Thinking Tests

Over the past decades, many definitions of critical thinking have been put forward (e.g., Ennis, 1989; Facione, 1990; see also Griggs et al., 1998; Hitchcock, 2018; Liu et al., 2014, for reviews of definitions). Following a panel of experts on critical thinking, Facione (1990, p. 3) offered the following broad definition of critical thinking with its emphasis on cognitive skills: “We understand critical thinking to be purposeful, self-regulatory judgment which results in interpretation, analysis, evaluation, and inference, as well as explanation of the evidential, conceptual, methodological, criteriological, or contextual considerations upon which that judgment is based.”

One of the controversies in the critical thinking literature is whether the test used to measure critical thinking skills should be subject-specific or a generic critical thinking test. Some researchers have developed subject-specific critical thinking tests that focus on the content and cognitive skills related to a particular discipline (e.g., Bensley et al., 2010; Lawson, 1999; Lawson et al., 2015; Wentworth & Whitmarsh, 2017). For example, Lawson et al. (2015) developed further the Psychological Critical Thinking Exam (PCTE) proposed by Lawson (1999), which they claim taps into the critical thinking cognitive skills of Evaluation of claims (see also Wentworth & Whitmarsh, 2017, for another psychology-specific test with similar characteristics to the PCTE). However, one of the main limitations of these studies is that it is not clear to what extent the skills developed within a specific discipline tap into nondiscipline-specific critical thinking.

Alternatively, some studies have focused on students’ level of critical thinking using generic critical thinking tests such as the Watson and Glaser Critical Thinking Appraisal (WGCTA) or the Cornell Critical Thinking Test (CCTT). Tests such as the WGCTA are called generic because they assess the individual’s critical thinking skills applied to statements that reflect the wide variety of arguments encountered in many everyday life situations, such as newspapers, magazines, conversations, and media material in general (Watson & Glaser, 2018).

The findings of the studies using the WGCTA or CCTT are mixed. Some show that following an intervention, students’ performance in critical thinking skills increases (e.g., Barnett & Francis, 2012; Cloete, 2018; Solon, 2007) while other studies show no gain between pretest and posttest (Renaud & Murray, 2008). Furthermore, Cloete (2018) showed that both the control and the experimental group improved their performance on the WGCTA test, although the improvement in the control group was more limited, thereby showing that students were able to improve their critical thinking performance without intervention. Burke et al. (2014) also used the WGCTA test to assess students’ critical thinking skills. The aim of their study was to compare directly psychology and philosophy undergraduates’ performance (cf. Ortiz, 2007). Findings revealed that only the philosophy students improved their critical thinking between pretesting and posttesting. These authors argue that the lack of improvement in critical thinking skills for the psychology students might have been due to psychology students learning mainly inductive reasoning (statistical and methodological), while the WGCTA is mainly (but not exclusively) based on deductive reasoning. Nevertheless, what is unclear from their study is whether there is any long-term gain in critical thinking performance as a result of the degree experience, as participants were tested after only 15 weeks.

Aim of Study 1

The aim of Study 1 was to examine whether students taking an accredited psychology degree¹ without additional explicit teaching of critical thinking skills (e.g., Bensley et al., 2010; Haw, 2011; McLean & Miller, 2010; Stark, 2012) gain critical thinking skills. We used the critical thinking test WGCTA, UK principally because (1) it is a recognized test with established psychometric properties (Watson & Glaser, 2002, 2018) and (2) it assesses the individual’s critical thinking skills applied to statements that reflect the wide variety of material encountered in many everyday life situations (Watson & Glaser, 2018).

Study 1 compared a group of first-year psychology students at the beginning of their degree with a group of third-year students at the end of their degree.² We had two research questions. (1) Under the assumption that psychology courses enable students to develop their critical thinking, it was hypothesized that Year 3 students would show higher scores in the WGCTA critical thinking test than Year 1 students, while controlling for potential confounds such as age, entry qualification, and socioeconomic background. (2) Burke et al. (2014) argue that psychology degrees address mainly inductive critical thinking; furthermore, as far as we know, research to date has not included WGCTA subtest analysis. With the second research question, we therefore aimed to explore whether Year 3 performance scores on each of the subtests differed from Year 1 performance scores.

Study 1: A Cross-Sectional Study

Method

Participants

Participants were 184 psychology students from a university in England, UK. Ninety-four participants were first-year students (15 male, 79 female) with mean age 19.47 (range 18–44), and 90 were third-year students (17 male, 73 female) with mean age 21.30 (range 20–33). The deprivation index³ (DEPI), based on the zip code of each first-year student’s home, indicated that 23.9% came from deprived areas (Scales 2–4) while 76.1% came from nondeprived areas (Scales 0–1). For third-year students, the index indicated that 20.2% came from deprived areas (Scales 2–4) while 79.8% came from nondeprived areas (Scales 0–1). We did not collect information on race/ethnicity of the participants; however, the student population at the University was 90%–92% European White.

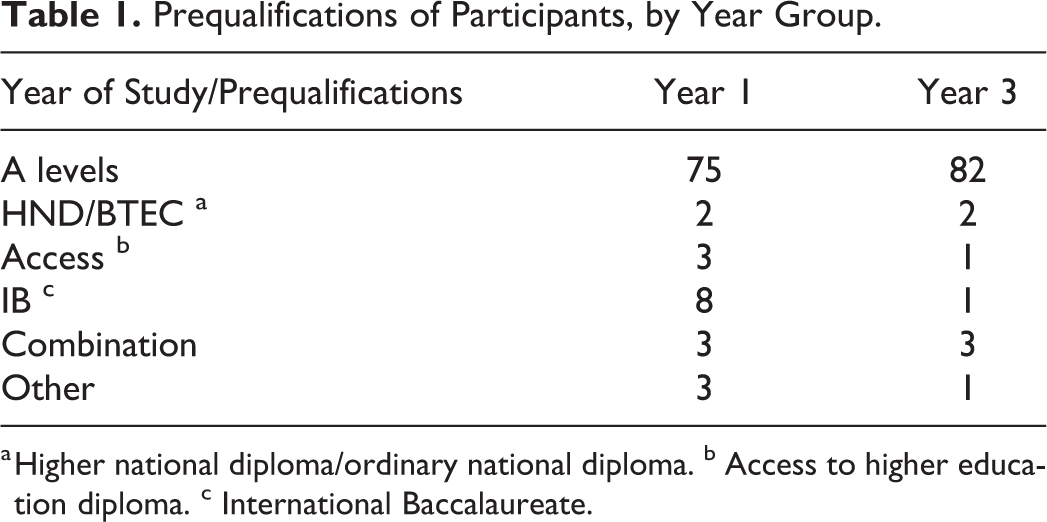

The entry qualifications of participants included A levels, Business and Technology Education Council (BTEC), higher national diploma (HND), International Baccalaureate (IB), and access routes, with a predominance of A levels (see Table 1). A level is a qualification offered by education institutions in the UK and is used by universities to assess a student’s eligibility for an undergraduate degree course. International students are more likely to study an IB as a form of access to a UK university, and mature students take the access route, a qualification that prepares individuals without a traditional qualification for study at university. BTEC and HND are diplomas in further education and vocational qualifications.⁴ We had full details of students’ entry points for 89.5% of Year 1 and 96% of Year 3 students. An independent t test between these two groups showed no significant differences on level points between Year 1 (M = 87.01, standard deviation [SD] = 15.33) and Year 3 (M = 90.49, SD = 13.15); t(169) = 1.57, p = .118, CI [−7.739, 0.877], Cohen’s d = .24 (small effect size difference; Cohen, 1988).

Prequalifications of Participants, by Year Group.

a Higher national diploma/ordinary national diploma. b Access to higher education diploma. c International Baccalaureate.
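As a rough check on the entry-point comparison reported above, the t statistic and Cohen's d can be approximated from the published means and SDs alone. The sketch below is illustrative only: the function name `summary_t_test` is hypothetical, and the group sizes (84 and 86) are assumptions obtained by rounding 89.5% of 94 and 96% of 90. The reported degrees of freedom (169) imply slightly different group sizes, so the t value will not match the reported one exactly.

```python
import math

def summary_t_test(m1, sd1, n1, m2, sd2, n2):
    """Student's independent-samples t test and Cohen's d from summary statistics."""
    df = n1 + n2 - 2
    # Pooled standard deviation, weighting each group's variance by its df.
    pooled_sd = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    se = pooled_sd * math.sqrt(1 / n1 + 1 / n2)
    diff = m1 - m2
    return {"diff": diff, "t": diff / se, "df": df, "d": abs(diff) / pooled_sd}

# Means and SDs come from the text; the group sizes are ASSUMED (see above).
result = summary_t_test(87.01, 15.33, 84, 90.49, 13.15, 86)
print({key: round(value, 3) for key, value in result.items()})
```

The difference is computed as Year 1 minus Year 3, so the t statistic is negative here; the text reports its absolute value.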

Measures

Students’ critical thinking was measured using the WGCTA, UK (Watson & Glaser, 2002), a measure of verbal reasoning the content of which relates to a wide range of contemporary sociopolitical and everyday scenarios which are presented in the form of problems, statements, arguments, and interpretations. This 80-item instrument consists of five different subtests, each consisting of 16 items, measuring the following aspects of critical thinking: inference, recognition of assumptions, deduction, interpretation, and evaluation of argument (Watson & Glaser, 2002, 2018; see Appendix for full definitions of each subtest and example items).

The test as a whole measures a candidate’s critical ability to correctly identify answers that are correct in an absolute, logical way, as well as those for which only a more probabilistic judgment can be given, given the sufficiency or otherwise of evidence provided.

The WGCTA, UK raw scores range from 0 to 80. The data below are reported in terms of raw scores adjusted for chance⁵ (see Wagner & Harvey, 2006), with higher scores indicating a greater facility for critical thinking, or, where indicated, in terms of standardized scores, which are required for any comparison with previously obtained sample data (WGCTA, UK; Watson & Glaser, 2002).

Procedure

To ensure that testing took place in conditions commensurate to psychometric testing for job recruitment, the WGCTA, UK was administered strictly in accordance with the procedure outlined in the manual (Watson & Glaser, 2002, 2018) by qualified BPS occupational test administrators. One of the test administrators was responsible for the test score conversion.

On arrival, participants were seated and were asked to read the briefing instructions and asked for written consent. The test instructions were then read out with an opportunity for asking questions. Participants were given a maximum of 50 min to complete the test. The test was administered in paper-and-pencil form.

First-year students completed the WGCTA, UK during the first 5 weeks of their first semester at university, which meant that they had not completed any credits at the university, whereas third-year students did so during the last 4 weeks of their final semester. These third-year students had all completed 285 credits in psychology.⁶ To encourage participation, first-year students were offered credit participation points toward their required psychology study participation quota. All final-year students, in recognition of their time commitment, were offered a small financial incentive (£10.00) to take part.

Results

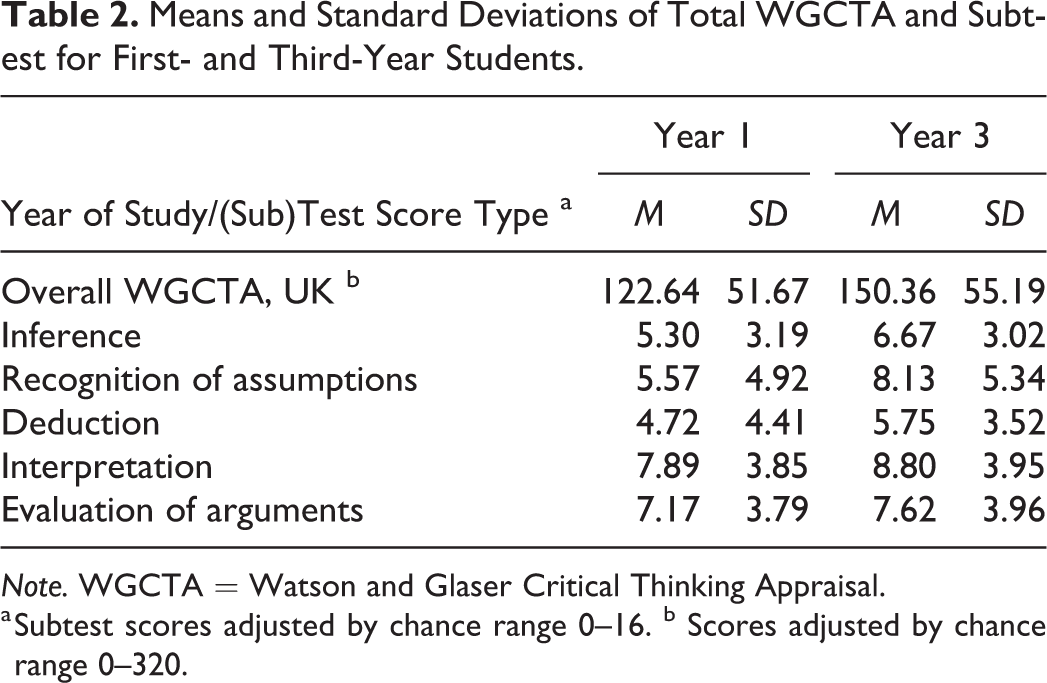

Concerning Research Question 1, it was predicted that overall WGCTA, UK scores would be higher for Year 3 than Year 1 students. We present descriptive analyses in Table 2. To examine whether the performance scores of students in Year 3 were significantly higher than those of Year 1 students, we carried out an analysis of covariance (ANCOVA) with overall WGCTA as the dependent variable, year group as the fixed factor, and age, A-level score, and DEPI score as covariates. The main effect of year group was significant, F(1, 149) = 3.947, p = .049,

Means and Standard Deviations of Total WGCTA and Subtest for First- and Third-Year Students.

Note. WGCTA = Watson and Glaser Critical Thinking Appraisal.

a Subtest scores adjusted by chance range 0–16. b Scores adjusted by chance range 0–320.

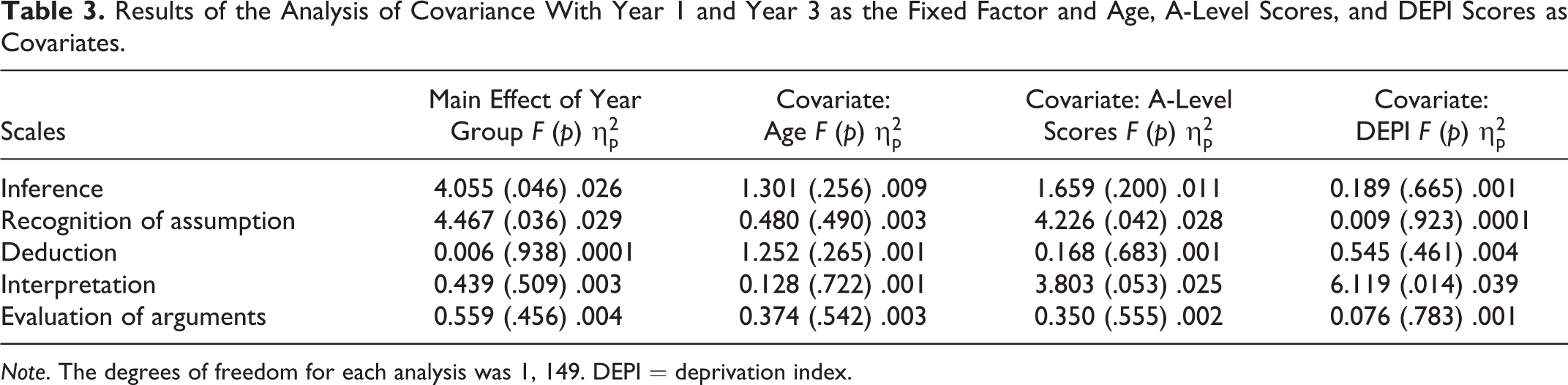

With Research Question 2, we aimed to explore whether the performance scores on the WGCTA subtests differed between Year 3 and Year 1 students. Table 2 shows the descriptive statistics (means and SDs) of subscale scores for both groups. To analyze whether any of the differences were statistically significant, further ANCOVAs were carried out, one for each of the WGCTA subscales, using the subscale scores as the dependent variable and year group (Year 1 vs. Year 3) as the fixed factor. Students’ age, scores on entry qualification, and DEPI scores were entered as covariates. As can be seen from Table 3, there was a significant difference between Year 1 and Year 3 on both the measures of Inference and Recognition of Assumptions. There was no significant difference between the year groups on the other three WGCTA subscales. The covariate age was not significant for any of the subscales. The covariate entry score was significant for the subscale of Recognition of Assumptions only, and the covariate DEPI was significant for the subscale of Interpretation only.

Results of the Analysis of Covariance With Year 1 and Year 3 as the Fixed Factor and Age, A-Level Scores, and DEPI Scores as Covariates.

Note. The degrees of freedom for each analysis were 1, 149. DEPI = deprivation index.

Study 1 Discussion

The findings of Study 1 support the hypothesis that final-year students evidence a higher level of critical thinking skills than students at the beginning of their degree course, as measured by an industry-standard critical thinking test. These findings are comparable with other research which included students from other disciplines and utilized a cross-sectional design (e.g., Liu, Mao, et al., 2016; Mines et al., 1990) and build on previous research that has also used the WGCTA test (e.g., Behrens, 1996; Burke et al., 2014; Cloete, 2018; McLean & Miller, 2010; Mines et al., 1990). These findings suggest that participating in a psychology degree at university could increase students’ critical thinking skills. The findings relating to specific subscale scores also extend previous findings that have focused only on the overall WGCTA scores (e.g., Behrens, 1996; Burke et al., 2014; Cloete, 2018; McLean & Miller, 2010; Mines et al., 1990) by providing more detail on the subscale scores for psychology students. In particular, they show that only Inference and Recognition of Assumptions were significantly different between the two groups, partly confirming the argument made by Burke et al. (2014).

However, there are some important limitations to the above study that lead us to treat the results with caution. For this study, we followed a cross-sectional design comparing two groups at two different stages of their degree. Although we included age, entry qualification scores (A-level scores), and deprivation information (DEPI scores) as covariates to take into account possible confounding variables, the two groups of students were nevertheless not matched for other variables that could have affected the results, such as motivation, intellectual skills, cognitive ability, academic performance, or critical thinking disposition (cf. Bensley et al., 2010; Facione, 1990; Solon, 2007).

This led us to carry out Study 2 using a longitudinal design in which we compared the scores of students in Year 1 with the scores of the same students at the end of their studies, in Year 3. The longitudinal design allowed some potentially confounding variables (e.g., entry point and DEPI, as well as intellectual skills, cognitive ability, and critical thinking disposition) to be controlled for by including the same participants.

The aims of this second study were twofold. The first aim was to examine whether we could replicate the findings of Study 1 using a longitudinal design. Under the assumption that undergoing a psychology curriculum enables students to develop their critical thinking, it was hypothesized that the performance scores of the students in their final year would be higher than the scores they obtained at the beginning of their study. Furthermore, following the results of Study 1, it was predicted that the scores of the subtests of Recognition of Assumptions and Inference would be higher in Year 3 than in Year 1. The second aim was to provide possible recommendations regarding teaching to further enhance students’ performance in critical thinking. To this end, we examined the contribution of each subtest of the WGCTA to the final overall WGCTA scores by carrying out a hierarchical regression analysis.

Study 2: A Longitudinal Perspective

Method

Participants

The same participants who were Year 1 students at the time of Study 1 took part in Study 2. From the initial 94 students who took part in Study 1, 63 took the WGCTA, UK again when they were in Year 3 (10 males and 53 females).

Measures and Procedure

The measures and procedures were the same as for Study 1. As these students were now in Year 3 of their study, course credits would no longer have been of benefit to them; instead, they received £10.00 for taking the time to take part in the study. The same qualified BPS occupational test administrators were employed for data collection and score transformation.

Results

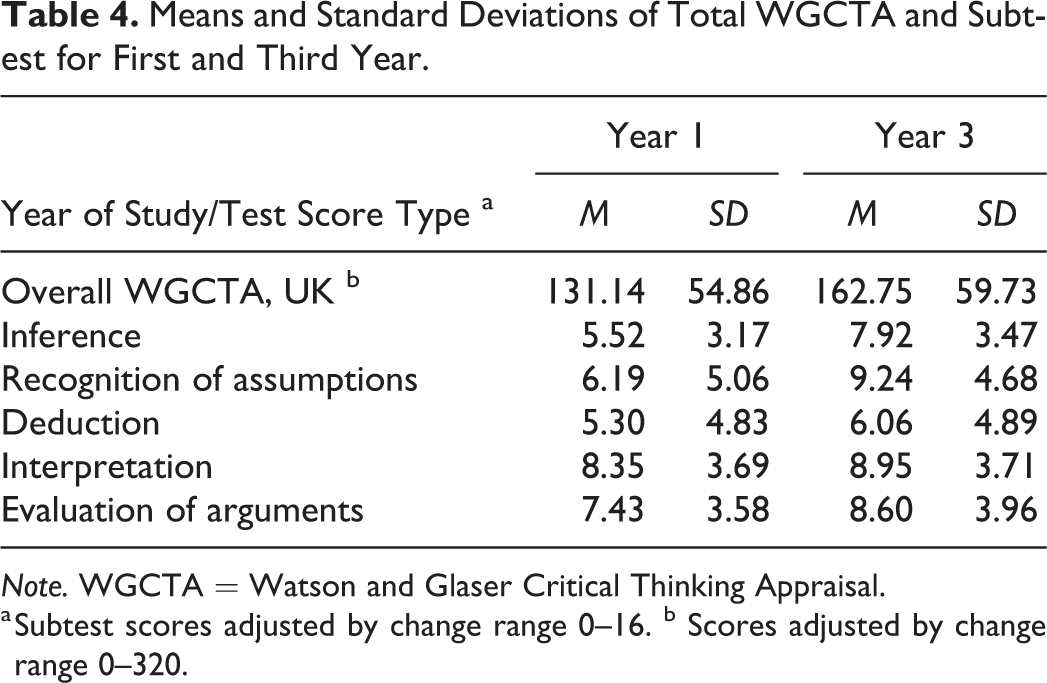

The first aim of this second study was to examine whether the students’ scores for Year 1 differed from the scores of the same students in Year 3. Table 4 shows the descriptive statistics (means and SDs) of scores obtained during Years 1 and 3. As can be seen, the overall WGCTA, UK scores were higher for Year 3 than for Year 1.

Means and Standard Deviations of Total WGCTA and Subtest for First and Third Year.

Note. WGCTA = Watson and Glaser Critical Thinking Appraisal.

a Subtest scores adjusted by chance range 0–16. b Scores adjusted by chance range 0–320.

To assess whether these differences were statistically significant, we carried out a repeated-measures ANCOVA with age at Year 3 as covariate and total WGCTA scores as repeated measures. There was a significant difference in overall critical thinking performance between students in their first and final year, F(1, 61) = 6.029, p = .017,

In addition, to examine whether the scores of each of the five subtests reliably differed between study years, further repeated-measures ANCOVAs were carried out, with age at Year 3 as covariate and the scores on each WGCTA subtest as repeated measures. There was a significant difference between Year 1 and Year 3 for Recognition of Assumptions, F(1, 61) = 3.989, p = .050,

There was no significant difference, however, for the remaining subtests of critical thinking included in the WGCTA: Inference, F(1, 61) = 0.020, p = .888,

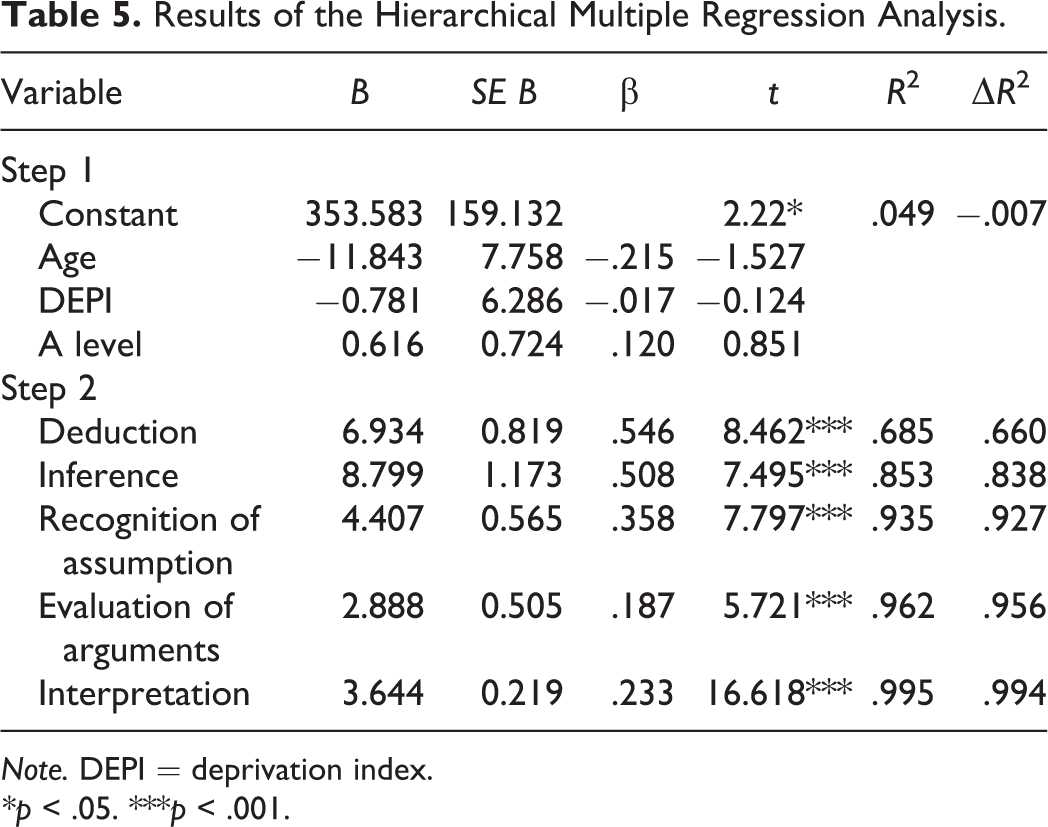

The second aim of Study 2 was to examine the data as a means to provide possible recommendations regarding teaching to further enhance students’ performance in critical thinking. In particular, we focused on how much each WGCTA subtest contributed to the overall WGCTA scores when students were in their final year of study. To this end, we carried out a hierarchical regression analysis with overall score as the dependent variable.⁷
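The logic of a hierarchical regression, in which predictors are entered in blocks and each block is credited with the increase in explained variance (ΔR²), can be sketched as follows. This is a minimal illustration on synthetic data, not the analysis reported here: the sample size, the random scores, the treatment of the overall score as the sum of the subtests, and the entry order of the subtests after Deduction are all assumptions made for the example, and the helper names `r_squared` and `hierarchical` are hypothetical.

```python
import random

def r_squared(predictors, y):
    """R^2 from an ordinary least squares fit of y on the predictors plus an intercept."""
    rows = [[1.0] + list(p) for p in predictors]
    k = len(rows[0])
    # Augmented normal equations [X'X | X'y].
    aug = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
           + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda idx: abs(aug[idx][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(k):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    beta = [aug[i][k] for i in range(k)]
    mean_y = sum(y) / len(y)
    ss_total = sum((yi - mean_y) ** 2 for yi in y)
    ss_resid = sum((yi - sum(b * v for b, v in zip(beta, r))) ** 2
                   for r, yi in zip(rows, y))
    return 1.0 - ss_resid / ss_total

def hierarchical(data, y, steps):
    """Enter blocks of columns cumulatively; return (label, R^2, delta R^2) per step."""
    used, previous, out = [], 0.0, []
    for label, cols in steps:
        used = used + cols
        r2 = r_squared([[row[c] for c in used] for row in data], y)
        out.append((label, r2, r2 - previous))
        previous = r2
    return out

# Synthetic data (ASSUMED): columns are age, DEPI, entry score, then five subtests.
rng = random.Random(0)
data = [[rng.uniform(20, 33), rng.randint(0, 4), rng.gauss(88, 14)]
        + [rng.uniform(0, 16) for _ in range(5)] for _ in range(55)]
total = [sum(row[3:]) for row in data]  # ASSUMED: overall score = sum of subtests

# Step 1 holds the background variables; the subtest order is hypothetical.
steps = [("background", [0, 1, 2]), ("deduction", [3]), ("inference", [4]),
         ("assumptions", [5]), ("interpretation", [6]), ("evaluation", [7])]
results = hierarchical(data, total, steps)
for label, r2, delta in results:
    print(f"{label:15s} R2 = {r2:.3f}  delta R2 = {delta:.3f}")
```

Because each step only adds predictors to the previous model, R² cannot decrease from one step to the next, and the ΔR² values sum to the R² of the final model. The share attributed to a given subtest depends on the order of entry, which is why the order should be fixed on theoretical grounds before the analysis.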

When age, DEPI, and entry qualification scores were included as independent variables in the first step, a nonsignificant model emerged, F(3, 51) = 0.871, p = .468,

Results of the Hierarchical Multiple Regression Analysis.

Note. DEPI = deprivation index.

*p < .05. ***p < .001.

A significant model emerged when the subscale of Deduction was included as an independent variable, F(4, 50) = 27.204, p = .0001,

Study 2 Discussion

The findings of Study 2 are similar to those of Study 1. Students in Year 3 obtained higher overall WGCTA scores, and hence showed overall higher levels of critical thinking skills, than when they were at the beginning of their degree and had yet to complete any credits at university. The results are also similar to those found for Study 1 with respect to the measure of Recognition of Assumptions.

Furthermore, the findings of Study 2 extend to psychology students the results of previous studies that utilized a longitudinal design with nursing degree students (e.g., Bauwens & Gerhard, 1987; Behrens, 1996; Jones & Morris, 2007) or students from arts, humanities, and science disciplines (e.g., Roohr et al., 2017).

Finally, the results of the hierarchical regression analysis provide us with an overview of how much each individual subtest contributes toward the overall WGCTA score. In the literature, research that employs the WGCTA as a measure of critical thinking focuses on the overall scores without considering its component measures. Overall scores are also what companies and organizations use when they employ the WGCTA as a tool for selection or career advancement of employees (Watson & Glaser, 2002, 2018). From an education perspective, however, it is important to know what contributes to the overall WGCTA score in order to provide recommendations that help psychology students further improve their critical thinking. The findings of the hierarchical regression analysis suggest that the component measures that contribute least to the overall WGCTA score are Evaluation of Arguments and Interpretation. This may suggest that there is value in addressing the skills underlying these specific measures more explicitly, from a teaching and instruction point of view (see below), in order to advance student critical thinking.

General Discussion

The aim of the present research was to investigate the extent to which taking a psychology degree is concomitant with students’ increase in critical thinking skills where students are not explicitly taught critical thinking but develop critical thinking skills through the learning experience traditionally characteristic of psychology courses.

Findings of both the cross-sectional and longitudinal studies reported above were similar. With respect to the overall WGCTA scores, students in the final year of their degree obtained significantly higher WGCTA performance scores than students at the start of their degree. Overall, the findings of our studies are consistent with, and extend to psychology, the claims made by Pascarella and Terenzini (2005), who suggest that attending university on the whole improves students’ critical thinking performance (see also Huber & Kuncel, 2016).

Our results also extend previous findings from studies that included psychology students as participants and used a subject-specific critical thinking test (e.g., Haw, 2011; Lawson, 1999; Lawson et al., 2015), both by using the WGCTA critical thinking test and by assessing participants’ increase in critical thinking skills over a period longer than one semester. At the same time, our findings contrast with previous longitudinal research with students in nursing programs which found no increase in critical thinking skills (e.g., Bauwens & Gerhard, 1987; Behrens, 1996; Mines et al., 1990). They also contrast with previous research that investigated a performance increase from a specific intervention (e.g., Renaud & Murray, 2008; Stark, 2012; Williams et al., 2004).

With respect to the WGCTA subtests, from both studies, we found that students’ critical thinking performance increases for Recognition of Assumptions. Very few studies have looked at WGCTA subtest differences and the few that exist have looked at the relationship between WGCTA subtest and grade scores (Gadzella et al., 2002; Steward & Al-Abdulla, 1989). For example, Gadzella et al. (2002) found that only the subscales of Interpretation and Evaluation of Arguments significantly predicted grade point average.

Implications of the Results

Graduates need to demonstrate a range of skills and attributes to successfully enter the workplace, with better paid jobs frequently going to candidates with better critical thinking measures. A very large number of occupations, including occupations attractive to psychology graduates, list critical thinking as a basic required skill (e.g., Cottrell, 2017; O*Net Online, 2019; Liu et al., 2014), and there is evidence of a significant high positive correlation between the WGCTA overall scores and job performance (Pearson-TalentLens, 2016). The higher levels of critical thinking skills that psychology students evidence at the end of their degree compared to the start of their course may highlight the relative value of taking a psychology degree in terms of employability.

However, the need for a thorough command of critical thinking is not confined to the world of employment; rather, it relates to our ability to make sense of information flow generally, where it is important that we are able to distinguish between sound or cogent arguments and so-called “fake news” and other forms of misinformation (Musgrove et al., 2018; Paul & Elder, 2019; Pennycook & Rand, 2019). For example, Pennycook and Rand (2019) show that participants’ scores on analytical reasoning were positively correlated with the ability to differentiate between “fake news” and real news. Furthermore, critical thinking is a valued skill for students in order to develop good citizenship (Dam & Volman, 2004; Paul & Elder, 2019).

From the results of the hierarchical regression analysis reported above, we can see that the WGCTA subtest of Evaluation of Arguments contributed a modest 2.9% toward the overall WGCTA score. Evaluation of Arguments requires the individual to analyze the evidence and arguments put forward in a text (or conversation). To this aim, it is important to be objective and work logically through the arguments and information put forward. There is the need to distinguish between arguments appealing to logic rather than emotion and avoid privileging information which confirms a preferred perspective. According to Lawson et al. (2015), the PCTE taps into the critical thinking skills of Evaluation of claims. The work of Lawson et al. shows that over the span of a 15-week semester, senior psychology majors scored significantly higher on the PCTE than junior psychology majors, senior biology majors, senior art majors, and introductory psychology students. Other interventions focusing on the increase of argument development have also found positive results (e.g., Hasnunidah et al., 2015; Kuhn & Udell, 2003). Although the long-term impact of these interventions is unknown, teaching psychology undergraduates explicitly how to critically evaluate arguments might help increase their overall critical thinking skills.

Similarly, from the results of the hierarchical regression analysis in Study 2, we can see that the WGCTA subtest of Interpretation contributed 3.8% toward the overall WGCTA score. According to Facione (1990), interpretation means understanding the meaning or significance of information. Students practice the skill of interpretation when they comprehend and express the meaning or significance of a wide variety of experiences (Facione, 1990). Teaching students to interpret text effectively would help them develop the interpretation component of critical thinking. Furthermore, collaborative or peer learning has been reported to enhance critical thinking (Gokhale, 1995), including interpretation.

Limitations and Future Directions

One limitation of the studies presented here is that the higher performance levels of critical thinking at the end of the course could be due to students’ maturation (see, e.g., Huber & Kuncel, 2016; Pascarella & Terenzini, 2005) and not due to reading a degree in psychology. To statistically control for this, we used age as a covariate for the analyses for both Studies 1 and 2 and included it in the first step of the hierarchical multiple regression analysis. From the results, we concluded that age did not affect the significant difference between the overall critical thinking scores of Year 1 and Year 3 students. In this context, Pascarella and Terenzini (2005) have claimed that when studies control for maturation, university attendance still produces significant gains.
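
The logic of entering age at the first step can be illustrated with a minimal sketch. It uses synthetic data generated purely for illustration, not the data from Studies 1 or 2, and the variable names, group coding, and effect sizes are all assumptions. The sketch fits an age-only model, then adds year of study, and reports the change in explained variance attributable to year group once age is accounted for.

```python
import numpy as np

def r_squared(predictors, outcome):
    """Return R^2 for an ordinary least-squares fit that includes an intercept."""
    X = np.column_stack([np.ones(len(outcome)), predictors])
    coef, *_ = np.linalg.lstsq(X, outcome, rcond=None)
    residuals = outcome - X @ coef
    ss_res = np.sum(residuals ** 2)
    ss_tot = np.sum((outcome - outcome.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

# Synthetic, illustrative data only (assumed values, not the study's data).
rng = np.random.default_rng(0)
n = 200
age = rng.normal(21, 3, n)
year_group = rng.integers(0, 2, n)  # hypothetical coding: 0 = Year 1, 1 = Year 3
score = 50 + 0.2 * age + 4.0 * year_group + rng.normal(0, 5, n)

# Step 1: covariate only (age).
r2_step1 = r_squared(age.reshape(-1, 1), score)
# Step 2: covariate plus the predictor of interest (year group).
r2_step2 = r_squared(np.column_stack([age, year_group]), score)

# Variance explained by year group over and above age.
delta_r2 = r2_step2 - r2_step1
print(f"Step 1 R^2: {r2_step1:.3f}")
print(f"Step 2 R^2: {r2_step2:.3f}")
print(f"Delta R^2:  {delta_r2:.3f}")
```

If the change in R^2 at the second step remains reliably above zero, the year-group difference is not fully accounted for by age, which is the reasoning applied in the analyses described above. A full analysis would also test the significance of that change, which this sketch omits.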

Our studies did not include participants taking a degree other than psychology, and hence our results cannot be generalized to students in other disciplines. This raises the issue of the lack of a control group with which to compare psychology students’ findings; in other words, it is unclear whether the findings in our studies are related to students taking psychology rather than to some other relevant variable. This, in turn, raises the question of what constitutes a relevant control group in this context. Including students from other disciplines could potentially reveal the relative efficacy, or otherwise, of the experience of a psychology curriculum in this setting. However, one could argue that comparing curricula across disciplines does not provide the best control measure either, in that a better control group might be similarly aged individuals not pursuing a degree course. But then, as Huber and Kuncel (2016) argue, it is difficult to conduct such a study comparing individuals attending or not attending university while at the same time controlling for many other potential confounds. Future research could include students enrolled in different disciplines to examine the relative efficacy of each discipline in enhancing students’ critical thinking.

The sample size utilized here was comparable to that of many studies that have examined students’ gains in critical thinking utilizing either a cross-sectional or longitudinal design (e.g., Jones & Morris, 2007; McLean & Miller, 2010; Mines et al., 1990; Roohr et al., 2017). Nevertheless, taking into consideration the large population of students in our higher education institutions, the sample is relatively small. Furthermore, issues of sampling are particularly relevant for the longitudinal study, as participants who took the test twice were by and large a much more self-selected group than the students who took part in the study only once at the beginning of their degree. Another limitation is the small number of males in our sample, which could imply that the current findings might be restricted to female psychology students only. Finally, the participants in our studies came from only one institution, with the same aims and ethos with respect to students’ learning and development. Hence, further research with a larger number of participants and more institutions is needed to confirm the present findings.

Conclusion

From the findings, we conclude that the scores of students taking a psychology degree were significantly higher in Year 3 than in Year 1, and hence, there was an enhancement of their critical thinking performance, even when critical thinking was not explicitly taught, as measured with the WGCTA, an industry-standard psychometric test. We suggest that to further increase psychology students’ critical thinking skills, instructors might focus on the development of skills related to the WGCTA component measures of Evaluation of Arguments and Interpretation.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.