Abstract

Forensic science is a central component of jurors’ decisions in many criminal cases. Nevertheless, research has shown that jurors are not sensitive to violations of testimonial guidelines for expert testimony in court and generally struggle to comprehend and evaluate forensic science testimony. Consequently, the U.S. Department of Justice (DOJ) developed the Uniform Language for Testimony and Reports (ULTR) to standardize the language used in such testimony. The current study created and tested a Forensic Science Informational (FSI) video as an intervention to bolster jurors’ understanding of FSI. After reading a case summary, participants were randomly assigned to read and rate five forensic expert testimony violations without any training, or to watch the FSI video before reading and rating each violation. Results revealed that participants with video exposure rated both the expert testimony and the expert themselves lower than those without such exposure, indicating they recognized the violations.

Despite the centrality of forensic science to jurors’ decisions in many criminal cases, there is reason for concern regarding jurors’ comprehension of such testimony (Association of Forensic Science Providers, 2009; Koehler, 1996; Martire et al., 2013; Thompson & Schumann, 1987). There is also reason for concern about the scientific accuracy of statements made by forensic experts on the stand. In 2009, the National Academy of Sciences’ (NAS) National Research Council (NRC) released a report that, in part, called for improving the presentation of forensic science in testimony and reports. The NAS recommended that court testimony and scientific reports should be standardized through established guidelines for reporting the results of forensic tests. The report suggested that the terminology used by forensic scientists “can and does have a profound effect on how the trier of fact in a criminal or civil matter perceives and evaluates scientific evidence” (NRC, 2009, p. 21). As such, an intervention to help jurors recognize when forensic experts provide inappropriate testimony is critical.

In the following pages, we review the empirical research that has focused on jurors’ assessments of evidence and forensic expert testimony, including a discussion of the utility of different interventions designed to improve jurors’ assessments of testimony and evidence. We also discuss the implementation of informational videos as a potential intervention to improve jurors’ assessments of forensic expert testimony. Finally, we introduce the novel informational video we created for the present study and test its utility as to this goal.

The Impact of Forensic Experts’ Testimony on Jurors’ Assessments of Evidence

Previous research has explored several ways in which a forensic expert’s testimony can impact jurors’ assessments of evidence. First, jurors may have difficulty comprehending the statistical analysis and language used to convey forensic science (Martire et al., 2013; McQuiston-Surrett & Saks, 2009). Psychological research has largely shown that people in general struggle to understand probabilities, statistics, normative models, and likelihood ratios, all of which can be used to convey forensic evidence (Association of Forensic Science Providers, 2009; Koehler, 1996; Martire et al., 2013; Thompson & Schumann, 1987). Research more specifically related to jurors’ comprehension of statistics has found that jurors tend to improperly evaluate probabilistic evidence (Koehler, 1996; Nance & Morris, 2005; Schklar & Diamond, 1999). For example, Thompson and Schumann (1987) found that when participants were provided with conditional probabilities (i.e., there is “a two percent chance the defendant’s hair would be indistinguishable from that of the perpetrator if he were innocent,” p. 173), participants made erroneous judgments about the probability of the suspect’s guilt (e.g., 98% chance of guilt). Despite these findings, jurors can be overly confident in their ability to understand the evidence presented by forensic experts (McQuiston-Surrett & Saks, 2009). This may lead to a mismatch between jurors’ actual understanding of forensic evidence and their own assessments of their comprehension.

Second, forensic science testimony itself can suffer from data presentation errors, including overestimations of the probative value of the evidence and overstatements of the precision and accuracy of the science by experts (Eastwood & Caldwell, 2015; Garrett & Neufeld, 2009). Garrett and Neufeld (2009) determined that in the 137 wrongful conviction trial transcripts that they analyzed, 60% of the cases included invalid forensic science testimony. These trials included testimony that implied that a defendant could be the source of some evidence when in fact the evidence was nonprobative, testimony that discounted exculpatory evidence, inaccurate statistics, and statistics (numerical and nonnumerical) that lacked empirical support. Gould and colleagues (2014) similarly found that the most common forensic error in their review of 460 cases was experts overstating the inculpatory nature of the evidence or the precision of the results. Such data presentation issues coupled with jurors’ overconfidence (despite their poor comprehension) pose a concern for how jurors come to decisions when forensic evidence is presented at trial.

Third, jurors tend to overlook the limitations of forensic science when assessing the testimony, even when those limitations are pointed out to them. Cross-examination by opposing counsel is one way in which limitations of an expert’s testimony are typically introduced (McQuiston-Surrett & Saks, 2009). McQuiston-Surrett and Saks (2009) presented mock jurors with a trial summary in which a microscopic hair examination expert testified. Mock jurors were informed about the limitations of the hair examination either via the cross-examination of the expert by the defense or via posttrial jury instructions presented by the judge. Participants largely ignored these limitations when assessing the expert testimony. Garrett and colleagues (2020) also explored whether cross-examination of the expert was an effective tool for helping jurors discount definitive conclusions. They found that cross-examination did not help participants appropriately adjust their ratings of the evidence. Research on other types of expert testimony (e.g., future dangerousness of a murder defendant, child witnesses) has similarly found that cross-examination aimed at highlighting the limitations of expert testimony or evidence does not tend to influence jurors’ perceptions of the quality of the evidence, ratings of an expert’s persuasiveness, or jurors’ ultimate case decisions (Diamond et al., 1996; Kovera et al., 1994).

Another way limitations of an expert’s testimony are commonly presented is via court instructions. The research on the effectiveness of these instructions is mixed. Some research finds that judicial instructions can help jurors understand forensic evidence (Rowe, 1997), while other research suggests such instructions do not help jurors (McQuiston-Surrett & Saks, 2009; Smith et al., 1996). Such findings further support concerns about jurors’ ability to comprehend and adequately evaluate forensic evidence.

Finally, previous research has also documented how the language used to convey examination conclusions may impact jurors’ evaluations of forensic evidence and testimony. Specifically, Garrett and Mitchell (2013) explored whether the specific language used in fingerprint testimony impacts evaluations of the evidence. Participants read about a hypothetical robbery including information about the comparison between a fingerprint collected at the scene of a crime and a fingerprint sample from the defendant. The match statements ranged from simple and vague statements (e.g., “A latent fingerprint found at the scene was individualized as the left thumb of the defendant,” Garrett & Mitchell, 2013, p. 489) to more detailed and certain statements (e.g., “I conclude to a reasonable degree of scientific certainty in the field of latent fingerprint examination that the latent fingerprint found at the scene came from the same source as the left thumb print on the ink card labeled as taken from the defendant,” Garrett & Mitchell, 2013, p. 489). Mock jurors rated the likelihood that the defendant committed the crime and the probability that the defendant left their fingerprints at the scene similarly regardless of language used to describe the match.

The NAS report (2009) relatedly expressed concern that making definitive conclusions (such as statements about individualization) in forensic examinations may lack reliability and validity. Therefore, they suggested that experts should use more cautious language when discussing their examinations or conclusions. To determine whether such limitations or guidelines for conclusion language are effective, Garrett and colleagues (2020) tested whether the language used by a firearm examiner to draw a conclusion impacted mock jurors’ decisions. Results indicated that variations in language used to describe a match generally did not impact guilty verdicts, ratings of the likelihood the defendant was the person who fired the gun, or ratings of the credibility and reliability of the firearm analysis. The authors assert that although the DOJ guidelines aim to keep examiners from overstating their conclusions to jurors, altering the language used in testimony does not seem to significantly impact trial outcomes or credibility ratings of either the expert or the science and the evidence.

Uniform Language for Testimony and Reports (ULTR) Guidelines

In response to concerns raised in the NAS report (2009) regarding the presentation of forensic evidence and jurors’ comprehension of forensic evidence, concerns that are supported by research, the U.S. DOJ began creating guidelines for various forensic disciplines in 2016 to limit the language used by the justice department’s forensic experts (Garrett et al., 2020). These guidelines, known as the Uniform Language for Testimony and Reports (ULTR; U.S. DOJ, 2018), generally aim to lessen overstatements or invalid statements in forensic testimony. The guidelines apply at the federal level and are intended for anyone who testifies for the DOJ (including all federal agencies, such as the FBI, ATF, and DEA). The latent print ULTR, which is specific to fingerprint examination, was the first ULTR document officially approved by the DOJ in 2018 (Cole, 2018).

In the present study, the latent print ULTR is the primary interest. ULTRs for other disciplines have similar aims but may have different individual guidelines. These other ULTRs range from mitochondrial DNA examination guidelines to forensic textile fiber guidelines (see https://www.justice.gov/olp/uniform-language-testimony-and-reports for the full list and available documents of ULTR). These other disciplines are outside the scope of the present study and will not be discussed in detail. The latent print ULTR guidelines state that testifying forensic experts should not: (1) claim that two prints came from the same person to the exclusion of all other persons or use the words “individualize” or “individualization”; (2) state that a forensic print examination is perfect, is mistake-free, or has a zero-error rate; (3) give a conclusion about prints that includes a numerical or statistical degree of probability; (4) talk about the number of print examinations they have done in their career as proof that their conclusion is correct; and (5) use phrases like “reasonable degree of scientific certainty,” or “reasonable scientific certainty,” unless required to do so by law (U.S. Department of Justice [U.S. DOJ], 2018). Penalties for violating these guidelines may vary by laboratory or agency.

There is little research regarding jurors’ assessment of expert testimony that violates the ULTR guidelines, but there is some research related to the concepts represented in the guidelines. For example, one of the ULTR guidelines states that experts should not talk about the number of print examinations they have done in their career as proof that their conclusion is correct. Research on the experience-accuracy effect in clinical judgments has investigated whether more experience (e.g., training) leads to improved accuracy of clinicians’ judgments (e.g., diagnoses). Spengler and Pilipis (2015) conducted a meta-analysis and found that greater experience among mental health professionals was associated with only a slight increase in decision-making accuracy. Moreover, this small effect was stable across studies ranging from 1999 to 2010, even when considering possible changes in mental health professionals’ training during that period (Spengler & Pilipis, 2015). This research exemplifies why an expert’s experience is not necessarily indicative of the accuracy of their examination’s conclusion.

However, research also suggests that jurors tend to rely on an expert’s experience as a metric for the quality and accuracy of the expert’s conclusion rather than the validity of the methods used. Bitemark and fingerprint evidence presented by a highly experienced expert was viewed by mock jurors as significantly stronger and led to higher guilt ratings than evidence presented by a less experienced expert (Koehler et al., 2016). Based on these findings, Koehler and colleagues (2016) suggested that “jurors use the background and experience of an expert as a proxy for the value of the evidence the expert provides” (p. 410). As jurors rely heavily on an expert’s experience to assess the reliability of their testimony, they may be more inclined to view testimony equating experience with accuracy as acceptable, even if it violates DOJ guidelines.

The Intervention: An FSI Video

To address concerns about jurors’ evaluation of forensic science testimony, we created an FSI video that explains the basic process of latent print analysis and the specific types of inappropriate testimony that may be provided by forensic experts. In recent years, courts have begun to use videos to introduce important concepts, procedures, and topics to jurors prior to a trial. For example, the Western District of Washington introduced an Unconscious Bias video in 2017. This video explains the concept of unconscious bias and what role it may play in a trial, with the aim of helping jurors fulfill their role as objective factfinders. Our FSI video takes inspiration from both the structure and general intention of the Western District of Washington’s video (U.S. District Court Western District of Washington, n.d.) and other similar videos used in federal district courts, such as jury orientation videos. To the authors’ knowledge, our FSI video would be the first focused on jurors’ evaluations of forensic science, specifically latent print examinations.

The cognitive theory of multimedia learning suggests that videos may be a particularly powerful tool for relaying information to jurors as individuals learn better when they are presented information both verbally and visually than with words alone (Mayer, 2002). Mayer (2005) outlines three cognitive principles of learning that support this theory: (1) the dual-channel assumption (see Baddeley, 1992; Clark & Paivio, 1991; Mayer, 2002), (2) the limited capacity assumption (see Baddeley, 1992; Chandler & Sweller, 1991), and (3) the active processing assumption (see Mayer, 2005). Essentially, individuals may receive information from two different channels (verbal and visual). That information then goes into working memory to be integrated into a mental representation via multiple cognitive processes (e.g., paying attention and organizing the information) that can then be held in long-term memory. Thus, the theory suggests that presenting individuals with multimedia messages (e.g., videos) aids learning because it lessens the cognitive load for the learner by facilitating the cognitive processes undergone in learning. Empirical studies (e.g., Lloyd & Robertson, 2012; Rackaway, 2012; Stockwell et al., 2015) and a meta-analysis (Kay, 2012) support the utility of multimedia learning.

In light of the cognitive theory of multimedia learning, one intervention that may be useful to improve jurors’ evaluations of testimony is an informational video. An informational video would educate jurors on (in)appropriate testimony, both verbally and visually, which in turn should support their learning. Moreover, informational videos may also be advantageous as they provide jurors with a uniform communication of the guidelines for testimony aimed at improving laypersons’ understanding of the evidence. There is also the benefit of standardization of knowledge across jurors and trials with a video, where information is presented in the exact same way each time. In contrast, instructions presented live by a judge may vary in terms of the speed of presentation, clarity of speech, and so forth. As such, the FSI video can provide jurors with a clearly presented framework to assess the quality of an expert’s testimony at the beginning of a trial, allowing them to attend to the testimony more carefully and make better-informed case decisions.

The Current Study

Given latent print examination’s prominence in criminal cases and the DOJ’s tailored guidelines, some important questions arise. First, how do jurors evaluate expert testimony related to latent print examination? Second, when an expert witness violates the DOJ guidelines, does this impact jurors’ evaluations of the testimony? Third, does an informational video about the DOJ guidelines impact jurors’ evaluations of the testimony? In the present study, the existing guidelines for latent print examiner testimony set out by the DOJ were incorporated into the FSI video to assess how being presented with the FSI video affected mock jurors’ perceptions of forensic testimony which violated the guidelines. The present study further examined the level of untrained knowledge mock jurors may have when assessing forensic experts’ testimony about the print examination that violates the DOJ’s ULTR. While some previous research demonstrates that jurors struggle to comprehend evidence (Association of Forensic Science Providers, 2009; Koehler, 1996; Martire et al., 2013; Thompson & Schumann, 1987), other research suggests jurors can assess evidence quite well and make case decisions in line with judges’ assessments 71% to 75% of the time (Jones et al., 2019).

The central focus of the present study was to explore the impact of our FSI video on mock jurors’ identification and assessments of various types of inappropriate forensic testimony and on their subsequent ratings of that testimony and the forensic expert. Specifically, the present study examined mock jurors’ perceptions of five excerpts of forensic science testimony that violated the DOJ’s guidelines either without access to the FSI video (untrained) or after the presentation of the FSI video (FSI video trained). This allowed us to determine whether a video intervention can assist in aligning jurors’ views with the testimonial ideal laid out by the DOJ. FSI video trained (vs. untrained) participants were expected to apply the information in the video when evaluating the testimony. Specifically, those participants were expected to rate testimony that violated the guidelines as less acceptable and less convincing in court, rate fingerprint evidence as less convincing, and rate the forensic expert as less confident, likable, knowledgeable, and trustworthy. There were no predictions about the differences across the five DOJ guideline violations; analysis of this variable was exploratory. The current study builds upon Garrett et al.’s (2020) research, which suggests that, contrary to the goals of guidelines such as the DOJ’s ULTR, mock jurors’ case decisions may be unaffected by the particular forensic testimony wording. We explore mock jurors’ perceptions of latent print expert testimony (rather than firearm testimony) and test an intervention to help jurors differentiate between testimonial violations. We predict that, in line with the cognitive theory of multimedia learning, presenting information to mock jurors via a video will be an effective intervention to help participants identify inappropriate testimony. The present study also collected participants’ perceptions of the quality and usefulness of the FSI video. No specific hypotheses were made as to this objective.

Method

Design

A 2 (video training status: untrained vs. FSI video trained) × 5 (DOJ guideline violation type: individualization vs. mistake-free vs. specific probability vs. experience as accuracy vs. scientific certainty) mixed design was employed in which video training status was a between-participants variable and violation type was a within-participants variable. Violation type was conceptualized as a within-participants variable because each participant received all five statements and rated them on the same items. This allowed us to compare participants’ ratings across the five statements.
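The 2 × 5 mixed design described above can be pictured as a long-format data layout, with one row per participant per violation type. The sketch below is only illustrative: the participant identifiers, field names, and the helper `long_format_rows` are hypothetical and are not taken from the study's posted materials.

```python
# Illustrative layout for a 2 x 5 mixed design (all names are hypothetical).
CONDITIONS = ("untrained", "fsi_video_trained")  # between-participants factor
VIOLATIONS = (
    "individualization",
    "mistake_free",
    "specific_probability",
    "experience_as_accuracy",
    "scientific_certainty",
)  # within-participants factor


def long_format_rows(participants):
    """Build one row per participant per violation type.

    `participants` maps a hypothetical participant id to its condition.
    The condition is constant within a participant, while the violation
    type varies across that participant's five rows.
    """
    rows = []
    for pid, condition in participants.items():
        if condition not in CONDITIONS:
            raise ValueError(f"unknown condition: {condition}")
        for violation in VIOLATIONS:
            rows.append(
                {
                    "participant": pid,
                    "condition": condition,
                    "violation": violation,
                    "rating": None,  # placeholder; filled in after data collection
                }
            )
    return rows


example = long_format_rows({"p01": "untrained", "p02": "fsi_video_trained"})
print(len(example))  # two participants x five violation types
```

Each dependent measure would occupy its own rating column (or its own copy of this table) under this layout.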

Participants

An a priori power analysis conducted via G*Power (Erdfelder et al., 1996) revealed that 212 participants were needed to detect an effect of the independent variables with adequate power (0.8) and a small to medium effect size (f = 0.15). A sample of 289 participants was recruited from undergraduate psychology classes at a large Southeastern university. This study was conducted fully online. After removing participants who did not finish the survey or failed any of the three attention checks within the survey, the final sample size was N = 229. All participants self-reported three key jury eligibility qualifications, being (a) at least 18 years of age, (b) U.S. citizens, and (c) fluent in English. They also confirmed that, to their knowledge, they were eligible to serve on a jury. While 11 participants were ineligible based on these currently applied requirements, this question was the first item on the survey and so they never began the study. The sample was majority female (85.2%) with a mean age of 23.49 years (SD = 5.94, range = 18-64). The majority of participants identified as Latino/a or Hispanic (81.2%). Regarding race, the majority of participants identified as White (74.7%), followed by Black or African American (12.2%); biracial or multiracial (4.8%); American Indian, Indigenous, or Alaska Native (2.6%); East Asian (<1%); and South Asian or Indian (<1%). The remaining participants (4.9%) identified as something not listed or left the question blank.

Materials

Case Summary

Participants read a 444-word case summary that outlined a criminal trial in which the defendant was accused of theft by larceny. This case summary included a summary of the prosecution’s case, including a witness’ testimony (a police officer), and the defense’s case, including the defendant’s side of the story. The evidence against the defendant included a fingerprint collected from the scene of the crime. Participants were informed in the summary that a forensic print expert testified about the science behind fingerprint examination and how identifications are made. The facts of the case (what occurred during the theft including witnesses involved) were primarily derived from Castellon v. State (2009). The full case summary can be found on the Open Science Framework (OSF; https://osf.io/d5vsb/).

DOJ ULTR Guideline Violations

After reading the case summary, participants read five excerpts of forensic expert testimony presented individually. Each excerpt featured the forensic expert violating one of the five ULTR guidelines; testimony was adapted from USA v. Henry (2015) and occasionally edited based on input and forensic science expertise of two of the authors. Specifically, participants read excerpts of testimony in which the forensic expert: (1) claimed that two prints came from the same person to the exclusion of all other persons (individualization), (2) stated that a forensic print examination was mistake-free (mistake-free), (3) gave a conclusion about prints that included a numerical or statistical degree of probability (specific probability), (4) talked about the number of print examinations they had conducted in their career as proof that their conclusion is correct (experience as accuracy), and (5) used the phrase “reasonable degree of scientific certainty” (scientific certainty).

Measures Related to the Testimony

The first four dependent measures assessed participants’ views of the forensic science testimony (i.e., each of the five testimony excerpts). Participants were asked: (a) to what extent they thought the testimony would be acceptable or unacceptable for a forensic expert to say in a trial (1 = completely unacceptable to 10 = completely acceptable); (b) how convincing they found the testimony to be for the prosecution in this case (1 = not at all convincing to 10 = completely convincing); (c) how convincing they found fingerprint evidence in general to be based on the testimony (1 = not at all convincing to 10 = completely convincing); and (d) to what extent the testimony would convince them that the defendant was innocent or convince them that the defendant was guilty (−5 = it would completely convince me that the defendant is guilty to 5 = it would completely convince me that the defendant is innocent).

Measures Related to the Expert Witness

The next four dependent measures were related to participants’ assessments of the forensic science expert. Participants rated how (a) confident, (b) likable, (c) knowledgeable, and (d) trustworthy they found the expert on a 10-point Likert-type scale where 1 indicated a low score and 10 indicated a high score (e.g., 1 = not confident, 10 = confident). These measures corresponded to the four main factors/subscales that make up Brodsky and colleagues’ Witness Credibility Scale (WCS) which assesses subjective ratings of an expert witness’s credibility (Brodsky et al., 2010).

FSI Video

All participants viewed a 4.5-minute FSI video in which a narrator (a White male with extensive media training) describes five main concepts: (a) a brief job description of a forensic scientist; (b) the basics of latent print examination; (c) the role of a forensic expert witness at trial; (d) the role of the jury in hearing and assessing this testimony; and (e) an overview of the DOJ’s ULTR guidelines. The FSI video included photographs illustrating aspects of latent print examination and written examples of what experts should and should not say in court which were also narrated. The format of the FSI video was based on the Western District of Washington’s Implicit Bias Video. The video was created in collaboration with legal psychologists, attorneys, and forensic scientists. Note, this video was not intended to replace jury instructions but to supplement them by providing additional information to jurors prior to a case.

Measures Related to the FSI Video

All participants rated the quality and usefulness of the FSI video. Participants indicated the extent to which they agreed or disagreed with the following statements: (a) I understood the information in the video; (b) The information in the video was explained poorly; (c) I could hear the video clearly; (d) I could see the video clearly; (e) The video was boring; (f) The video was not informative; (g) The video helped me learn about what a forensic expert should not say in court; and (h) The video helped me learn about forensic print examination. These ratings were all on a 7-point Likert-type scale (1 = strongly disagree to 7 = strongly agree).

Procedure

All participants were randomly assigned to a condition and asked to read the case summary. In the untrained condition, participants immediately rated each of the five forensic expert testimony violation excerpts one at a time, which were presented in random order. The violations were presented separately from the case summary. Participants rated each excerpt on the eight dependent measures immediately after reading each excerpt. They then watched the FSI video and provided ratings of the video. In contrast, in the FSI video training condition, participants watched the FSI video after reading the case summary. After watching the video, they proceeded to read and rate the forensic expert testimony excerpts and provided ratings of the FSI video. There were two reasons why untrained condition participants watched the FSI video after making their ratings of the testimony. This allowed us to measure participants’ baseline knowledge via their ratings of the testimony on its own, without any additional training (i.e., the FSI video), and to have every participant rate the FSI video. All participants thus rated the video on quality, clarity, and utility, but their ratings of the testimony and the expert were provided either with, or without, exposure to the FSI video depending on their randomized condition. All participants answered three attention check questions; in the untrained condition, one of these questions was presented after the FSI video while in the trained condition all three were presented after the FSI video.
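The ordering of tasks in the two conditions can be sketched in a few lines of code. This is a minimal illustration rather than the survey software actually used: the step labels and the function `session_order` are hypothetical, attention checks are omitted, and the sketch assumes that excerpt order was randomized in both conditions (the text states this explicitly only for the untrained condition).

```python
import random

# Hypothetical labels for the five testimony excerpts.
EXCERPTS = [
    "individualization",
    "mistake_free",
    "specific_probability",
    "experience_as_accuracy",
    "scientific_certainty",
]


def session_order(condition, rng):
    """Return the sequence of tasks for one participant.

    Untrained participants rate the excerpts before seeing the FSI video;
    FSI video-trained participants see the video first. Both groups rate
    the video itself at the end.
    """
    excerpts = EXCERPTS[:]
    rng.shuffle(excerpts)  # assumption: random excerpt order in both conditions
    rating_steps = [f"rate:{name}" for name in excerpts]
    if condition == "untrained":
        return ["case_summary", *rating_steps, "fsi_video", "video_ratings"]
    if condition == "fsi_video_trained":
        return ["case_summary", "fsi_video", *rating_steps, "video_ratings"]
    raise ValueError(f"unknown condition: {condition}")


rng = random.Random(0)  # seeded only to make the illustration reproducible
condition = rng.choice(["untrained", "fsi_video_trained"])  # random assignment
steps = session_order(condition, rng)
print(condition, steps)
```

The only difference between the two sequences is where the video falls relative to the testimony ratings, which is what allows the untrained group's ratings to serve as a baseline.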

Within each excerpt, participants were asked to focus on the specific testimony in a bolded color. For the purposes of this manuscript, the wording has been changed to “underlined testimony,” for clarity. This specific text represented a violation of the DOJ’s guidelines, although participants were not explicitly informed about this. For example, participants read:

Please read the question-answer exchange below. Put yourself in the shoes of a juror in the criminal trial. Your task is to evaluate the forensic expert’s testimony about the fingerprint evidence. Focus specifically on the underlined testimony.

Attorney for the Prosecution: Is there a process called ACE that you’re familiar with?

Forensic Print Expert: Yes, there is. ACE consists of four phases: analysis, comparison, evaluation and technical review. ACE is the process I use and it is recognized in my field as being an appropriate method of doing fingerprint comparison and identification.

All of our study materials, data, and analysis syntax are posted on OSF (https://osf.io/d5vsb/). This study was not preregistered.

Results

Evaluations of the FSI Video

The FSI video-related measures were reverse-coded as necessary so that for all measures higher means indicate a positive assessment of the video. Overall, participants rated the FSI video highly on all measures with most ratings averaging greater than 6 on a 7-point scale: (a) I understood the information in the video (M = 6.37, SD = 0.75); (b) The information in the video was explained poorly (reverse-coded; M = 6.32, SD = 1.0); (c) I could hear the video clearly (M = 6.71, SD = 0.77); (d) I could see the video clearly (M = 6.78, SD = 0.46); (e) The video was boring (reverse-coded; M = 5.09, SD = 1.68); (f) The video was not informative (reverse-coded; M = 6.21, SD = 1.33); (g) The video helped me learn about what a forensic expert should not say in court (M = 6.33, SD = 0.99); and (h) The video helped me learn about forensic print examination (M = 5.62, SD = 1.55). We conducted t-tests to determine if there were any differences between the two groups in their ratings of the video. The only significant difference was that participants in the video-trained group (M = 6.22, SD = 0.82) reported that they understood the information in the video less than participants in the untrained condition (M = 6.54, SD = 0.62), t(227) = 3.35, p < .001, d = .44. This may have been because untrained participants were exposed to the testimony first and thus had already seen forensic testimony in context. The full t-test results can be found on OSF (https://osf.io/d5vsb/).
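As a rough check on the scoring and effect size reported above, the sketch below reverse-codes a 7-point item and recomputes Cohen's d from the reported group means and standard deviations. The function names are hypothetical, and because group sizes are not reported in this section, the pooled standard deviation assumes equal group sizes; this is an assumption, not the authors' analysis syntax (which is posted on OSF).

```python
import math


def reverse_code(score, low=1, high=7):
    """Reverse-code a Likert-type rating so that higher values are more positive."""
    return low + high - score


def cohens_d_equal_n(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d using a pooled SD that assumes equal group sizes (an assumption)."""
    pooled_sd = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd


# "I understood the information in the video": untrained vs. video-trained.
d = cohens_d_equal_n(6.54, 0.62, 6.22, 0.82)
print(round(d, 2))  # 0.44
print(reverse_code(2))  # 6
```

Under the equal-group-size assumption, the recomputed value agrees with the reported d = .44.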

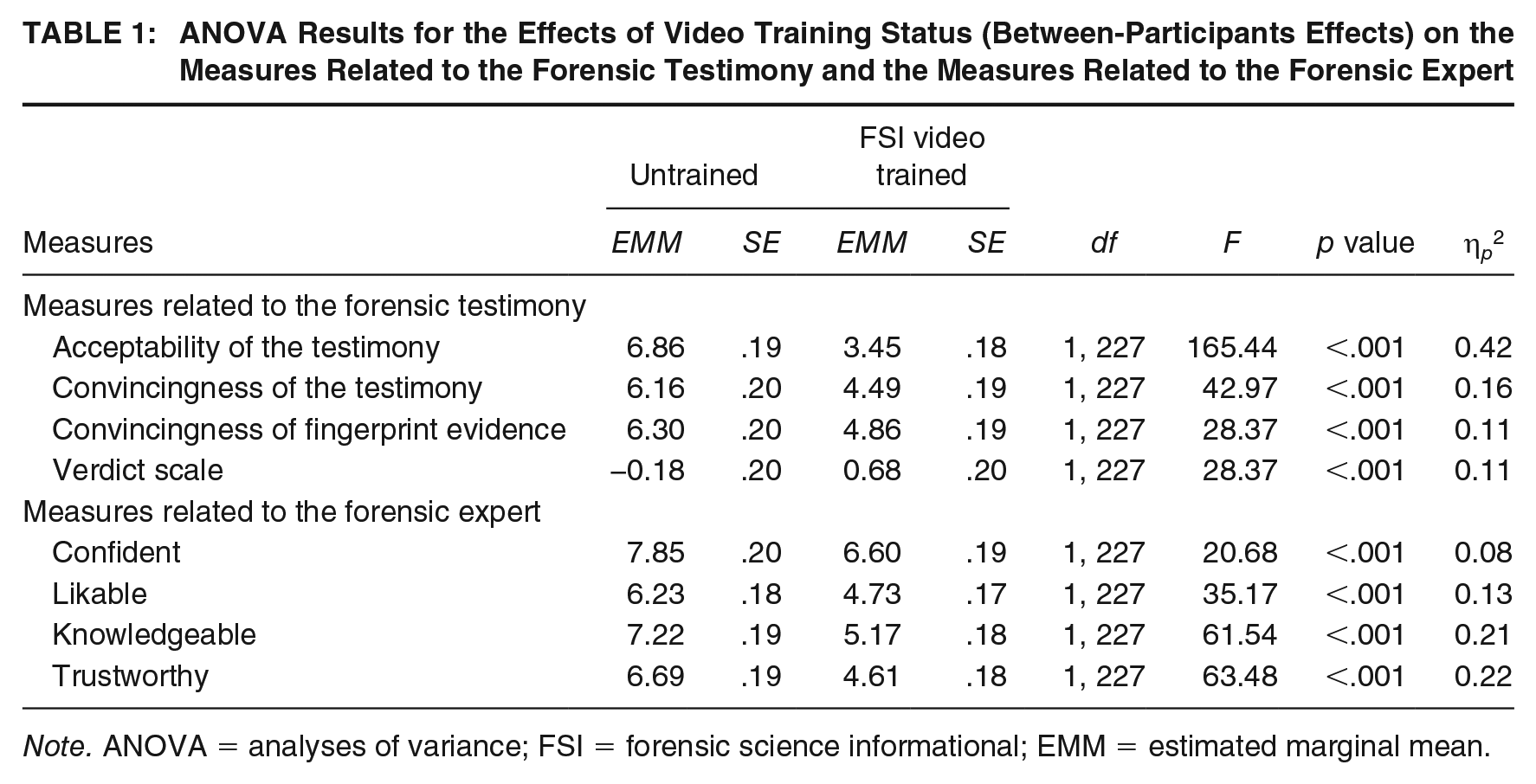

Measures Related to the Testimony

We conducted a series of four mixed analyses of variance (ANOVAs) to test the effect of video training status (untrained vs. FSI video-trained; the between-participants factor) and DOJ guideline violation type (individualization vs. mistake-free vs. specific probability vs. experience as accuracy vs. scientific certainty; the within-participants factor) on each of the four dependent measures related to the testimony (e.g., to what extent would the testimony be acceptable or unacceptable for a forensic expert to say in trial). All F-tests for the between-participants effects are reported in Table 1 and the within-participants effects are reported in Table 2. Overall, there was a main effect between participants of FSI video training for all four measures related to the testimony (see Table 1), indicating that FSI video-trained participants’ ratings of the testimony were overall lower than untrained participants’ ratings.

Table 1. ANOVA Results for the Effects of Video Training Status (Between-Participants Effects) on the Measures Related to the Forensic Testimony and the Measures Related to the Forensic Expert

Note. ANOVA = analysis of variance; FSI = forensic science informational; EMM = estimated marginal mean.

Table 2. ANOVA Results for the Effects of DOJ Guideline Violation Type and of the DOJ Guideline Violation Type by Video Training Status Interaction (Within-Participants Effects) on the Measures Related to the Forensic Testimony and the Measures Related to the Forensic Expert

Note. ANOVA = analysis of variance; DOJ = Department of Justice.

In terms of the main effect of FSI video training, first, FSI video-trained participants (those who saw the FSI video before providing their ratings) rated the testimony as less acceptable (Estimated marginal mean [EMM] = 3.45, SE = .18) than untrained participants (those who provided ratings without having seen the FSI video; EMM = 6.86, SE = .19). Second, video-trained participants rated the testimony as less convincing for the prosecution than untrained participants, EMM = 4.39, SE = .19 and EMM = 6.16, SE = .20, respectively. Third, video-trained participants rated fingerprint evidence in general as less convincing than untrained participants, EMM = 4.86, SE = .19 and EMM = 6.30, SE = .20, respectively. Finally, video-trained participants were more likely to be convinced that the defendant was innocent than untrained participants, EMM = 0.68, SE = .20 and EMM = −0.18, SE = .20, respectively.
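To give a sense of the size of these between-group differences, the sketch below computes the difference between the two estimated marginal means for three of the testimony measures, together with an approximate 95% confidence interval. It assumes that the two group estimates are independent (they come from different participants) and uses a normal approximation with z = 1.96. It is an illustration only and does not replace the F-tests reported in Table 1. The helper name `emm_difference` is hypothetical.

```python
import math


def emm_difference(emm_a, se_a, emm_b, se_b, z=1.96):
    """Difference between two independent estimates with an approximate CI.

    ASSUMPTION: the estimates are independent and approximately normal,
    so the standard error of the difference is sqrt(se_a^2 + se_b^2).
    """
    diff = emm_a - emm_b
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    return diff, se_diff, (diff - z * se_diff, diff + z * se_diff)


# (untrained EMM, SE), (video-trained EMM, SE), as reported in the text.
MEASURES = {
    "testimony acceptability": ((6.86, 0.19), (3.45, 0.18)),
    "testimony convincing for prosecution": ((6.16, 0.20), (4.39, 0.19)),
    "fingerprint evidence convincing": ((6.30, 0.20), (4.86, 0.19)),
}

results = {}
for name, ((emm_u, se_u), (emm_t, se_t)) in MEASURES.items():
    results[name] = emm_difference(emm_u, se_u, emm_t, se_t)
    diff, se_diff, (low, high) = results[name]
    print(f"{name}: untrained - trained = {diff:.2f} "
          f"(SE = {se_diff:.2f}, approx. 95% CI [{low:.2f}, {high:.2f}])")
```

A positive difference means that untrained participants gave the higher rating. The verdict scale is omitted here because the trained group scored higher on it, so the sign of its difference has the opposite interpretation.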

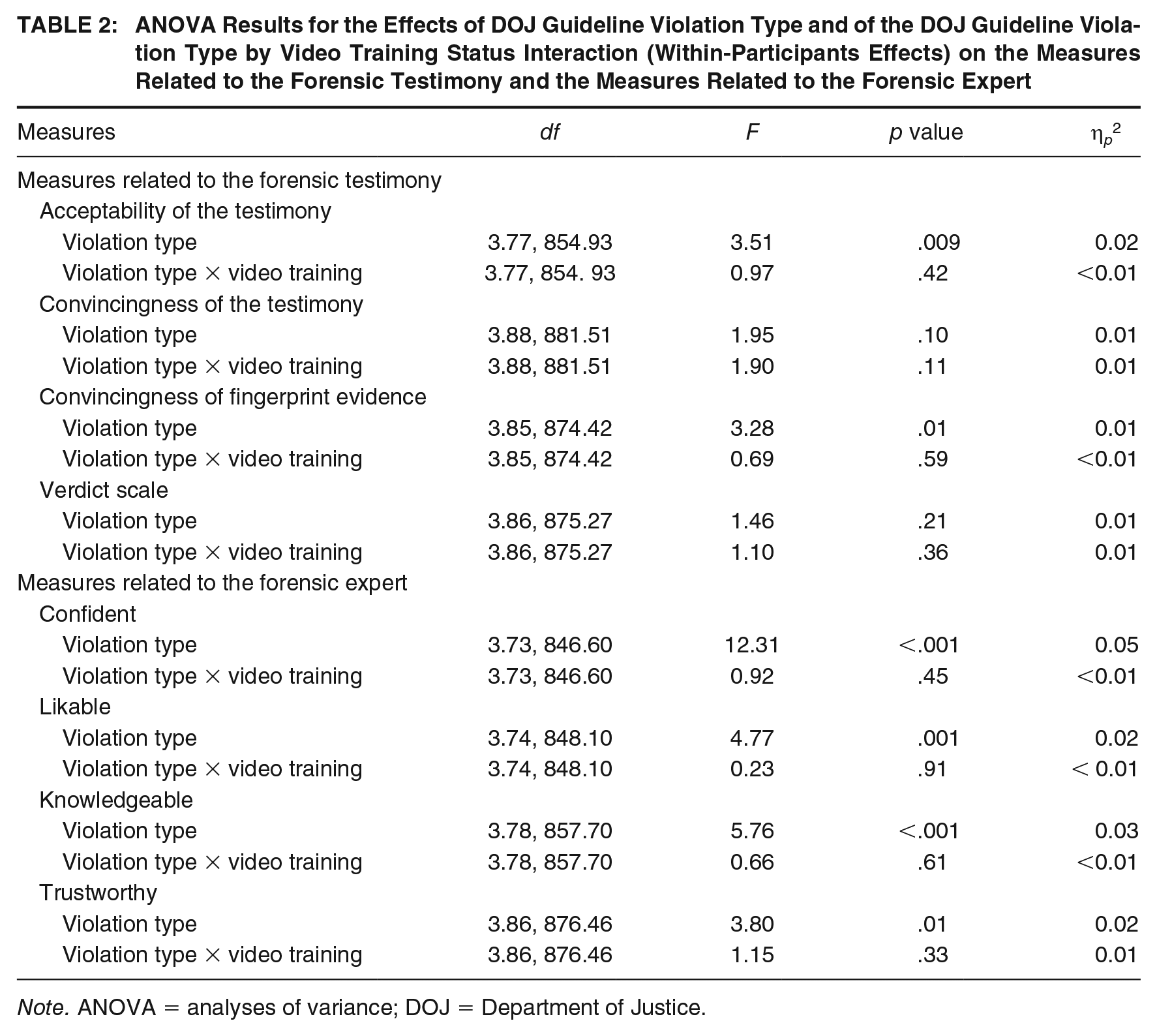

There was a main effect of DOJ guideline violation on participants’ ratings of how acceptable the testimony was and how convincing fingerprint evidence was overall (see Table 2). In terms of the main effect of DOJ guideline violation on acceptability ratings (see Table 2), post hoc pairwise comparisons with a Bonferroni adjustment revealed that participants rated both the mistake-free violation (EMM = 4.92, SE = .18, p = .03) and the specific probability violation (EMM = 4.90, SE = .19, p = .02) as significantly less acceptable to say in court than the scientific certainty violation (EMM = 5.55, SE = .19; see Figure 1). No other pairwise comparisons were significant (ps ≥ .60). Similarly, regarding the main effect of DOJ guideline violations on the convincingness of fingerprint evidence, participants rated fingerprint evidence as significantly less convincing in the mistake-free and the specific probability violations (EMM = 5.43, SE = .17, p = .04 and EMM = 5.38, SE = .18, p = .03, respectively) compared to the scientific certainty violation (EMM = 5.92, SE = .17; see Figure 1). No other pairwise comparisons were significant (ps ≥ .39). The effects of DOJ guideline violation on participants’ ratings of how convincing the testimony was for the prosecution and participants’ ratings on the verdict scale were nonsignificant (ps ≥ .10). There were no significant interaction effects (ps ≥ .11; see Table 2). A full table of means can be found on OSF (https://osf.io/d5vsb/). Taken together, the results show that the mistake-free and the specific probability violations led participants to rate the forensic testimony as less acceptable and fingerprint evidence as less convincing compared to the scientific certainty violation.
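The Bonferroni adjustment used for these post hoc tests can be sketched as follows. With five violation types there are ten possible pairwise comparisons, and one common way to apply the adjustment is to multiply each unadjusted p value by the number of comparisons, capped at 1. Whether the reported p values were produced in exactly this way is an assumption, and the raw p values in the example are hypothetical placeholders, not study data.

```python
from itertools import combinations

# The five DOJ guideline violation types named in the study.
VIOLATIONS = [
    "individualization",
    "mistake-free",
    "specific probability",
    "experience as accuracy",
    "scientific certainty",
]


def bonferroni_adjust(p_raw, n_comparisons):
    """Bonferroni-adjusted p value: raw p times the number of tests, capped at 1."""
    return min(1.0, p_raw * n_comparisons)


# Every unordered pair of violation types is one pairwise comparison.
pairs = list(combinations(VIOLATIONS, 2))
n_comparisons = len(pairs)
print(f"{n_comparisons} pairwise comparisons")

# HYPOTHETICAL raw p values, used only to show how the adjustment behaves.
for p_raw in (0.003, 0.06, 0.2):
    p_adj = bonferroni_adjust(p_raw, n_comparisons)
    print(f"raw p = {p_raw:.3f} -> adjusted p = {p_adj:.2f}")
```

Equivalently, each unadjusted p value can be compared against .05 divided by the number of comparisons. The two formulations lead to the same significance decisions.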

Figure 1. The Main Effect of DOJ Guideline Violation Type on Participants’ Ratings of the Evidence

Measures Related to the Expert Witness

We conducted a series of four mixed ANOVAs to test the effects of video training status and DOJ guideline violation type on each of the four dependent measures related to the expert witness (e.g., how likable was the forensic expert). All F-tests for the between-participants effects on the measures related to the expert are reported in Table 1 and the within-participants effects are reported in Table 2. Again, there were no significant interaction effects (ps ≥ .33; see Table 2). There was a main effect of video training for all measures related to perceptions of the expert witness (see Table 1). FSI video-trained participants rated the forensic science expert as less confident (EMM = 6.60, SE = .19), less likable (EMM = 4.73, SE = .17), less knowledgeable (EMM = 5.17, SE = .18), and less trustworthy (EMM = 4.61, SE = .18) than untrained participants (EMM = 7.85, SE = .20; EMM = 6.23, SE = .18; EMM = 7.22, SE = .19; and EMM = 6.69, SE = .19, respectively).

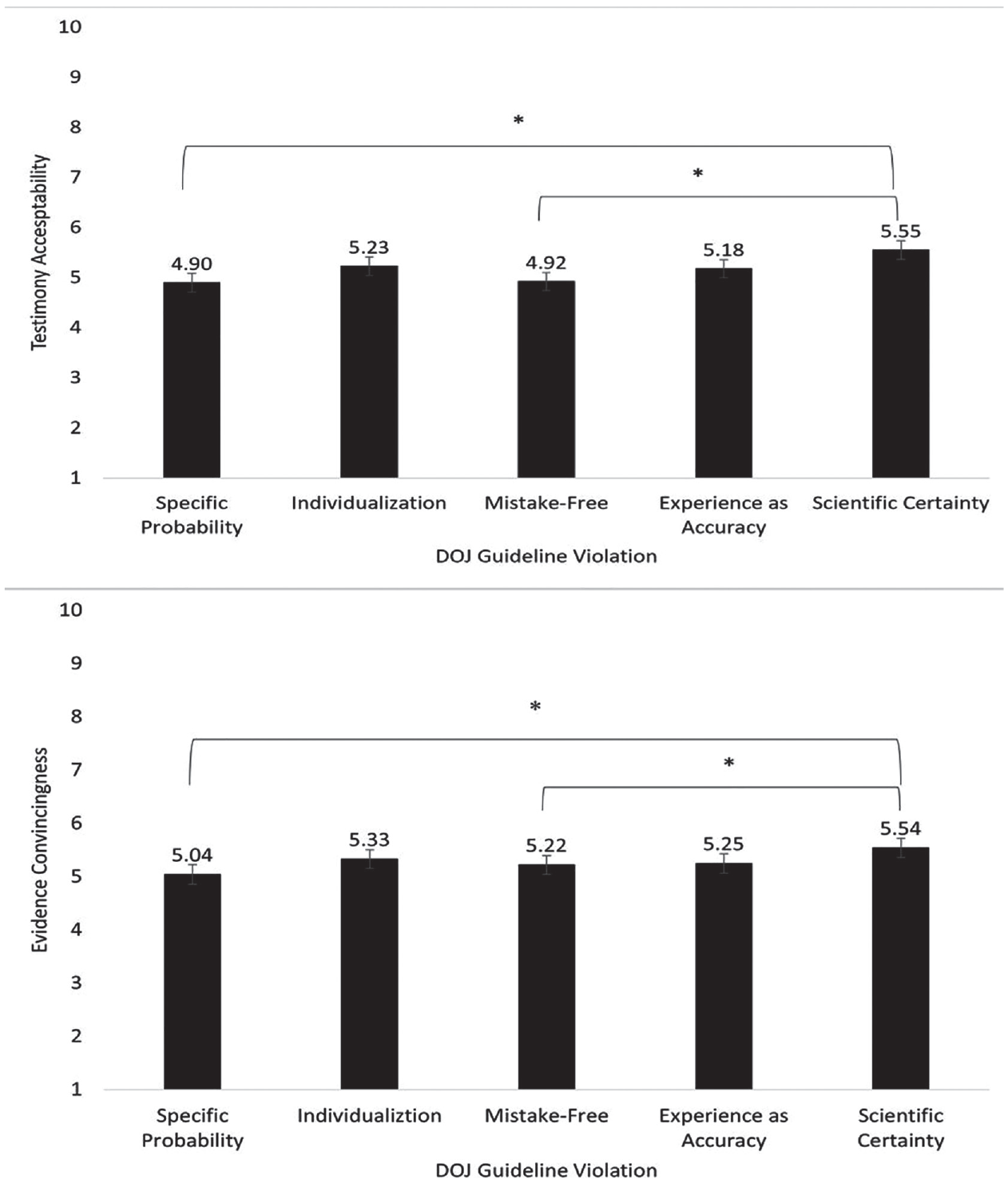

There was also a main effect of violation type for all expert witness measures (see Table 2). Participants’ ratings of the forensic expert’s confidence, likeability, knowledgeability, and trustworthiness differed across the five violations of the DOJ guidelines for testimony (see Figure 2). A full table of means can be found on OSF. Regarding expert confidence, pairwise comparisons with a Bonferroni correction revealed that when considering the specific probability violation (EMM = 6.54, SE = .18), participants rated the forensic expert as significantly less confident than they did when considering the individualization violation (EMM = 7.45, SE = .18, p < .001), the mistake-free violation (EMM = 7.40, SE = .18, p < .001), and the experience as accuracy violation (EMM = 7.69, SE = .18, p < .001), and marginally less confident than they did when considering the scientific certainty violation (EMM = 7.05, SE = .17, p = .055). No other comparisons were significant (ps ≥ .41).

Figure 2. The Main Effects of DOJ Guideline Violation Type on Participants’ Ratings of the Expert

Regarding likeability, when considering the scientific certainty violation (EMM = 5.87, SE = .16), participants rated the forensic expert as significantly more likable than when considering the individualization violation and the specific probability violation (EMM = 5.25, SE = .16, p = .002 and EMM = 5.32, SE = .17, p = .016, respectively). No other pairwise comparisons were significant (ps ≥ .07). Regarding knowledgeability, when considering the experience as accuracy violation (EMM = 6.56, SE = .17), participants rated the forensic expert as significantly more knowledgeable than when considering the individualization violation and the specific probability violation (EMM = 5.91, SE = .17, p = .001 and EMM = 5.87, SE = .18, p = .002, respectively). No other pairwise comparisons were significant (ps ≥ .08). Regarding trustworthiness, participants rated the expert as significantly less trustworthy when considering the specific probability violation than when considering the scientific certainty violation (EMM = 5.40, SE = .17 and EMM = 6.01, SE = .17, respectively, p = .008). No other pairwise comparisons were significant (ps ≥ .09).

Discussion

Improving the translation of forensic science in the courtroom has received little research attention, despite its crucial role in investigations, convictions, and exonerations (NRC, 2009). Jurors have been shown to struggle with their perceptions and understanding of forensic expert testimony (e.g., Koehler, 1996; Martire et al., 2013). One possible intervention to improve jurors’ perceptions, namely an instructional video presented to jurors prior to expert testimony, was investigated in the present study. The aim of this study was to provide a preliminary test of our FSI video. Previous research suggests that even when explicitly instructed about the limitations of expert testimony, mock jurors do not pick up on these limitations or adjust their ratings of the testimony (Diamond et al., 1996; Kovera et al., 1994; McQuiston-Surrett & Saks, 2009; Smith et al., 1996). As such, we chose to first test our FSI video in the simplest situation, providing the easiest test to determine if it can help mock jurors recognize inappropriate testimony. The present study finds that at minimum, after FSI video training participants rated an expert giving improper testimony lower and rated the testimony lower than participants without such training (who generally found such flawed testimony to be strong).

Our findings suggest that exposure to the FSI video before evaluating testimony that violated the DOJ guidelines led mock jurors to rate both the expert testimony and the expert themselves lower than those without such training. Specifically, participants in our study rated the violation testimony as less acceptable for an expert to say in court and less convincing for the prosecution’s case, and rated fingerprint evidence in general as less convincing, when they had previously been exposed to the FSI video training. After the FSI video, participants also rated the expert lower across the board, that is, as less confident, likable, knowledgeable, and trustworthy. However, these effects were small-to-medium, with the largest effect size for the acceptability of the expert’s testimony (0.42), followed by the knowledgeability (0.21) and trustworthiness of the expert (0.22). The other effects, while significant, were small, with effect sizes ranging from 0.11 to 0.16.

Conversely, our results indicate that at baseline untrained mock jurors rated forensic expert testimony that violated the DOJ’s guidelines highly. This means that without training on proper forensic expert testimony, our mock jurors generally believed the testimony that violated the DOJ’s standards was acceptable. This aligns with previous research indicating that jurors generally struggle to comprehend and evaluate expert testimony (Association of Forensic Science Providers, 2009; Garrett & Mitchell, 2013; Garrett et al., 2020; Koehler, 1996; Martire et al., 2013; Thompson & Schumann, 1987). In addition, in line with the cognitive theory of multimedia learning (Mayer, 2002), it seems that presenting information via a video helped facilitate participants’ learning about violations of the DOJ testimony guidelines. Our findings suggest that at minimum, instructional videos may be a useful tool for alerting jurors to suboptimal forensic testimony.

In terms of the exploratory analyses, our results suggest that mock jurors may evaluate violations related to the DOJ’s ULTR guidelines differently. Mock jurors found the scientific certainty violation to be less egregious than others. Compared to other testimony violations, mock jurors rated testimony that used the term “scientific certainty” as more acceptable, fingerprint evidence in general as more convincing, and the forensic expert as more likable. Similarly, mock jurors in the present study also seemed to find the expert violating the experience as accuracy guideline as more excusable compared to other violation types. This finding aligns with Koehler and colleagues’ (2016) research finding that mock jurors tend to rely on an expert’s background and experience in evaluating evidence. In fact, it may be that jurors perceive experts who make statements about their experience as more knowledgeable, which, in turn, makes them view the evidence more favorably.

Taken together, these findings suggest that jurors may view some violations of DOJ testimony guidelines differently than other violations. It should be noted that the effect sizes are quite small and there were no interaction effects. Thus, the differences in ratings of the testimony and expert based on the different DOJ guidelines may have little practical significance. Future research should seek to determine if these differences are practically significant by testing the video in the context of a full trial. If future studies find that the video differentially impacts participants’ understanding and evaluations of expert testimony violations, it may be pertinent to revise the FSI video to devote more time to the violations that jurors do not pick up on as easily (e.g., experience as accuracy). These findings also give credence to the idea that, without any training, jurors may not be well equipped to assess suboptimal forensic expert testimony. It is encouraging, however, that the FSI video appeared to decrease participants’ ratings of problematic testimony across all violations.

The results also revealed a statistically significant shift in guilt ratings. Participants who watched the FSI video were more likely to be convinced that the defendant was innocent than those participants who did not watch the video, who were slightly more likely to be convinced the defendant was guilty. It is worth noting that both group means hovered around neutral, indicating no propensity in either direction. Thus, while the difference was statistically significant, it may not be practically significant in that the video did not drastically shift jurors’ guilt ratings one way or the other.

The current study’s findings further suggest that after watching the informational video mock jurors may adjust not only their evaluations of problematic testimony but also their evaluations of the expert providing that testimony. Previous research on jurors’ evaluations of expert witnesses has shown across different expert witnesses, case information, and study methodologies that mock jurors’ perceptions of confidence, likeability, knowledgeability, and trustworthiness are critical to their assessments of expert credibility (Brodsky et al., 2010; Cramer et al., 2009; Griffin & Clark, 2007; Neal & Brodsky, 2008). Jurors find lower confidence experts less persuasive than more confident experts (Cramer et al., 2009), find more likable experts more persuasive (Brodsky et al., 2010; Neal et al., 2012; Younan & Martire, 2021), and find more knowledgeable experts more credible and persuasive (Neal et al., 2012; Parrot et al., 2015). Thus, the lower ratings of the expert from video-trained participants compared to untrained participants in the present study suggest that jurors’ awareness of problematic forensic testimony impacts their ratings of the expert and the expert’s credibility.

Our findings regarding the efficacy of the training video contrast with findings of some past research testing other interventions (i.e., cross-examination of the expert or posttrial jury instructions). These interventions did not affect jurors’ evaluations of expert testimony (Garrett et al., 2020; McQuiston-Surrett & Saks, 2009). The FSI video may be a useful tool to help jurors contextualize forensic expert testimony. However, this was not tested in the context of a full trial. It is important that future research demonstrates that exposure to the FSI video does not render mock jurors more critical of forensic testimony per se, but only testimony that violates best practice testimony guidelines. Further, the FSI video should be directly compared to other approaches (e.g., instructions) in the context of a full trial.

Finally, participants generally found the FSI video of high quality and value. Participants understood the content of the video, found the images and sound to be of high quality, and found the video to be useful to learn about forensic print examination as well as what a forensic expert should not say in court. These findings further suggest that the FSI video has the potential to be an effective tool for assessing suboptimal testimony, though it is not ready to be implemented.

Limitations and Future Directions

One limitation of the current study is the lack of diversity and representation within the sample. Most of the participants were female and Latina or Hispanic students, recruited from a psychology department. Although this sample was representative of the institution, it is not reflective of jury-eligible adults in the United States overall. Future research should aim for a more nationally representative sample. In addition, it is possible that college-educated individuals are better able to comprehend both forensic science testimony and the information presented in the FSI video compared to laypeople, making the sample less generalizable to all jury-eligible adults. That said, juror decision-making research generally finds that sampling from student populations does not yield different results than from community populations (Bornstein et al., 2017).

In addition, we did not have a control group in the present study in which participants rated testimony that did not violate any of the guidelines. However, we did have a control group of untrained participants who simply rated the testimony immediately after reading the case summary. This allowed us to compare participants who were trained with the FSI video and those who were not in their ratings of inappropriate testimony. Thus, the results show that at minimum, after FSI video training, participants rated an expert giving improper testimony lower and rated the testimony itself lower.

Another limitation of this study is that participants were presented with forensic testimony excerpts violating the DOJ’s guidelines, without the context of complete testimony, or a full trial. Future research should empirically test the FSI video and present the testimony within the context of full forensic expert testimony, and, ideally, embedded within a trial. Specifically, the trial should include more testimony by additional witnesses. This would allow for a direct examination of the FSI video’s effect on jurors’ ability to assess the overall quality of forensic expert testimony rather than the more simplified task of identifying statements that violate guidelines for appropriate testimony. Further variables that could be tested within a trial context include expert experience, expert gender, evidence strength, and juror characteristics. In addition, it is possible that the effect of the 4.5-minute FSI video is less potent in the context of a lengthy trial. However, research on videos in education has found that short videos can enhance students’ learning (Hsin & Cigas, 2013). Future research should also seek to determine the FSI video’s effect on jurors’ overall case decisions. Such additions would have the added benefit of improving ecological validity.

An additional caveat to our findings is that they may represent a skepticism effect. The present study included low-quality testimony but no high-quality testimony, so we cannot assess participants’ sensitivity to the evidence quality. Indeed, previous research on eyewitness memory experts suggests that informing jurors of variables that may impact the accuracy of an identification did not improve jurors’ ability to evaluate the evidence objectively. Instead, jurors became skeptical of the evidence overall, rendering fewer guilty verdicts regardless of the strength or weakness of the evidence (Dillon et al., 2017). These results mirror previous research showing that instruction on eyewitness evidence produces general skepticism effects (Papailiou et al., 2015). While it is promising that the FSI video seemed to improve jurors’ ability to recognize problematic forensic testimony, it is equally important that the intervention does not cause jurors to become skeptical of all forensic testimony. Further research is needed to determine whether the video achieves this balance and should test jurors’ evaluations of high- and low-quality testimony.

Furthermore, in the excerpts of testimony, the specific violation we asked participants to rate was bolded. It is possible that this created demand characteristics, leading participants to purposefully rate the testimony low. However, the untrained participants rated the same testimony excerpts and the expert highly. In addition, the FSI video contained more information than the DOJ guidelines alone (e.g., explanations of the role of a forensic expert in a trial, how print examinations are conducted, and examples of permissible testimony). We chose to bold the text to better ensure that participants focused on the specific testimony we were interested in rather than on other portions of the excerpt. The bolded text also allowed us to test whether mock jurors could identify issues with the testimony that are fairly obvious. The results showed that they recognized these issues with the help of the FSI video; future research should therefore take the next step and determine whether mock jurors can detect issues that are less obvious.

Finally, we did not collect actual forensic scientists’ ratings of the video. Their input on the video’s accuracy and clarity could have been beneficial, and we encourage future researchers to take this on. We would like to point out, however, that two of our authors are forensic experts and were involved in the creation of the FSI video to ensure the video content was accurate. Overall, the limitations in this study are meaningful and the results should be interpreted with the limitations in mind.

Policy Implications

Juror decision-making can be a highly complex process depending on the amount and type of information offered by the court, witnesses, and experts. Introducing forensic evidence that requires a scientific explanation within certain parameters is yet another layer of complexity for jurors to comprehend and evaluate. Even when jurors are presented with instructions from the court about guidelines and limitations of forensic testimony, research has shown that jurors may still unwittingly overlook or not fully comprehend violations by the testifying expert (Garrett et al., 2020; McQuiston-Surrett & Saks, 2009). Without having a thorough understanding of the “ground-rules” and constraints for expert testimony, jurors are not likely to accurately evaluate forensic evidence. This study’s objective to improve said understanding yielded a promising mitigation approach to assist jurors in evaluating forensic evidence via a training video. While the results from this study can serve as a promising starting point to translate new policies and practices to jurors, more research is needed to ensure that training videos are concise, effective, and easy to understand without yielding undue skepticism toward the whole field.

The National Institute of Standards and Technology (NIST) has been developing, promoting, and promulgating technically sound standards through the Organization of Scientific Area Committees (OSAC) for Forensic Science. These standards can also be used as a resource when developing educational videos. Although not all standards will clearly communicate what an expert shall not testify to, standards should consider including clear and concise statements about limitations of certain examinations, methods, and practices to allow for future inclusion in educational material for jurors. Novel approaches to educating jurors must be implemented to safeguard against the risk that forensic testimony is misinterpreted by laypersons.

Conclusion

The present study’s findings are a first step toward helping jurors evaluate expert testimony, suggesting that a video can educate potential jurors on the types of statements forensic experts should abstain from, and impact their evaluations of the expert’s testimony and the expert. Although further research is required to determine the extent of the video’s impact, a training video may allow jurors to learn more about quality forensic expert testimony. In addition, such a video may help jurors more adequately assess the evidence presented, in turn rendering them more effective factfinders.