Abstract

Due to the prevalence of misinformation in current media environments, there is an urgent need for effective media literacy interventions that broadly protect people from its negative effects. However, such interventions do not always have their desired impact, calling for a better understanding of the factors influencing their efficacy. Therefore, we conducted a systematic literature review of 80 experimental studies, following the PRISMA checklist. The findings suggest that intervention effectiveness depended more on the outcome variables targeted than on specific intervention characteristics. Notably, most interventions successfully improved users’ ability to detect misinformation, likely because many were specifically designed with this goal in mind. However, their effects on persuasive outcomes (e.g., attitudes) were more inconsistent, suggesting that changing such outcomes may require different or additional strategies beyond misinformation detection training. Based on these findings, we propose several suggestions for future research and recommendations for developing more effective media literacy interventions.

Introduction

The spread of misinformation in today’s online media landscape has become a pressing societal issue. Misinformation, which can be defined as demonstrably false or otherwise misleading information (cf. American Psychological Association, 2023), poses significant threats to individuals, organizations, and society at large. The rapid dissemination of inaccurate information through online channels can lead to profound negative consequences, including cynicism toward politicians (Balmas, 2014), vaccine hesitancy (Krishna & Thompson, 2021), lowered perceived credibility of organizations (Visentin et al., 2019), and a general distrust in media, government, and democracy (Bennett & Livingston, 2018; Van Aelst et al., 2017). As such, academics, practitioners, and policymakers have been actively searching for ways to counter these negative effects.

One widely adopted and extensively studied approach to mitigating misinformation effects is fact-checking. Although fact-checking has been proven effective in some contexts (Walter et al., 2020), it faces significant challenges. In particular, research has shown that this type of reactive intervention, often referred to as debunking, may not be sufficient by itself to eliminate all negative consequences of misinformation exposure (Ecker et al., 2022). In fact, research on the continued influence effect of misinformation shows that although corrections of misinformation do generally decrease people’s belief in the veracity of the information, they do not completely neutralize its persuasive impact (e.g., van Huijstee et al., 2022). This means that there is a discrepancy between people’s actual belief in the misinformation and the influence it still has on their beliefs, attitudes, and behavior. Furthermore, the scalability of fact-checking as a solution to misinformation is limited, given its time-consuming and resource-intensive nature. Due to the rapid growth of misinformation production and dissemination, and the advent of AI-generated misinformation (Zhou et al., 2023), fact-checking every piece of information that potentially contains misinformation becomes progressively less feasible.

As a result, there has been a growing interest in the development of pre-emptive interventions—often referred to as prebunking—as a countermeasure to the adverse effects of misinformation. Pre-emptive interventions are designed to more broadly protect people against (the negative consequences of) misinformation, preferably even before they come into contact with it (Ecker et al., 2022; Lewandowsky et al., 2012; van der Linden, 2022). These approaches often revolve around enhancing media literacy.

Although the literature on media literacy is characterized by a number of competing definitions and debates about the scope of the concept (Potter, 2022), most definitions focus on a set of competencies necessary for being an informed and successful media consumer. In general, media literacy can be seen as people’s ability to access, analyze, evaluate, create, reflect on, and act on all forms of media messages (Aufderheide, 1993; Hobbs, 2010; Potter, 2010). Over time, “new,” often more specialized types of media literacy have emerged that reflect many of these same competencies: digital literacy, health literacy, information literacy, or news literacy. These literacies are not in opposition to the general concept of media literacy, but rather interconnected members of the same family (Hobbs, 2010).

Thus, media literacy is a multifaceted concept that touches upon a wide range of topics, from the ability to discern the intentions behind news reporting, to recognizing persuasive techniques used in advertising, or being able to spot phishing scams. As such, media literate individuals are able to successfully navigate the media landscape and make informed decisions about, and based on, the media they consume, share, and/or create because they understand how these media messages are created and/or manipulated. Moreover, media literate individuals possess the critical thinking capabilities to evaluate the accuracy and credibility of the media messages they encounter (online), and are able to understand the consequences of potentially misleading media content (Boh Podgornik et al., 2016; Coiro et al., 2014; Machete & Turpin, 2020; Potter, 2010; Tully et al., 2020).

Hence, scholars and practitioners often believe that media literacy education is a solution for combatting the effects of misinformation (Mihailidis & Viotty, 2017). Indeed, many media literacy interventions are specifically developed to enhance individuals’ ability to critically evaluate and interact with (mis)information. For example, some inoculation programs use gamified perspective-taking to help people recognize persuasive intent and think more critically about the accuracy of information (Ecker et al., 2022). Other types of media literacy education focus on teaching people to evaluate argument strength, identify exaggerated or sensational claims, and spot content designed to grab attention rather than to provide facts (Ecker et al., 2022).

While research provides some empirical evidence supporting the effectiveness of media literacy interventions (e.g., people with higher media literacy are better at identifying false information, hold fewer misinformation-induced misperceptions, and are less likely to share misinformation online; Jones-Jang et al., 2021; Khan & Idris, 2019; Wei et al., 2023; Xiao et al., 2021), other studies suggest that such pre-emptive interventions do not always have their desired impact (Ecker et al., 2022). Many current media literacy interventions seem to largely focus on helping individuals detect whether information is accurate or false. Various scholars argue that this approach fails to account for more complex effects of misinformation, such as societal distrust or polarization (Angwald & Wagnsson, 2024; P. Cook, 2023). The emphasis on information accuracy also overlooks a critical issue: the continued influence effect of misinformation (e.g., van Huijstee et al., 2022). Recognizing misinformation as false does not necessarily prevent it from influencing beliefs, attitudes, or behaviors. This means that improving people’s accuracy judgments of misinformation, rather than addressing its broader persuasive impact, might render media literacy efforts less effective than they could be. However, the effectiveness of media literacy interventions seems to be rarely assessed beyond veracity judgments.

In the current study, we aim to provide a systematic, fine-grained, and comprehensive understanding of experimental research exploring (1) characteristics of employed media literacy interventions, (2) the outcome variables they aim to influence, and (3) their effectiveness in achieving these outcomes. By focusing solely on experimental research, we can investigate whether media literacy interventions can actually directly impact intended outcomes. In cross-sectional studies, both levels of media literacy and observed behavior may be a by-product of people’s extant critical thinking capabilities or education levels (McDougall et al., 2021). As such, this type of review will allow for a more nuanced assessment of the overall effectiveness of media literacy interventions to combat the negative consequences of misinformation, and can provide evidence-based practical suggestions for the development of new media literacy interventions that target particular outcome variables of interest. This study therefore attempts to answer the following research question:

RQ1: What is the scope and focus of existing research on media literacy interventions designed to counter misinformation, and how do different approaches and outcomes compare across studies?

To address this overarching question, we have broken it down into three more specific sub-questions:

(a) What types of media literacy interventions have been explored in the literature to counter misinformation?

(b) What outcome variables have been the focus of these studies?

(c) How effective have these interventions been in achieving their intended outcomes?

Method

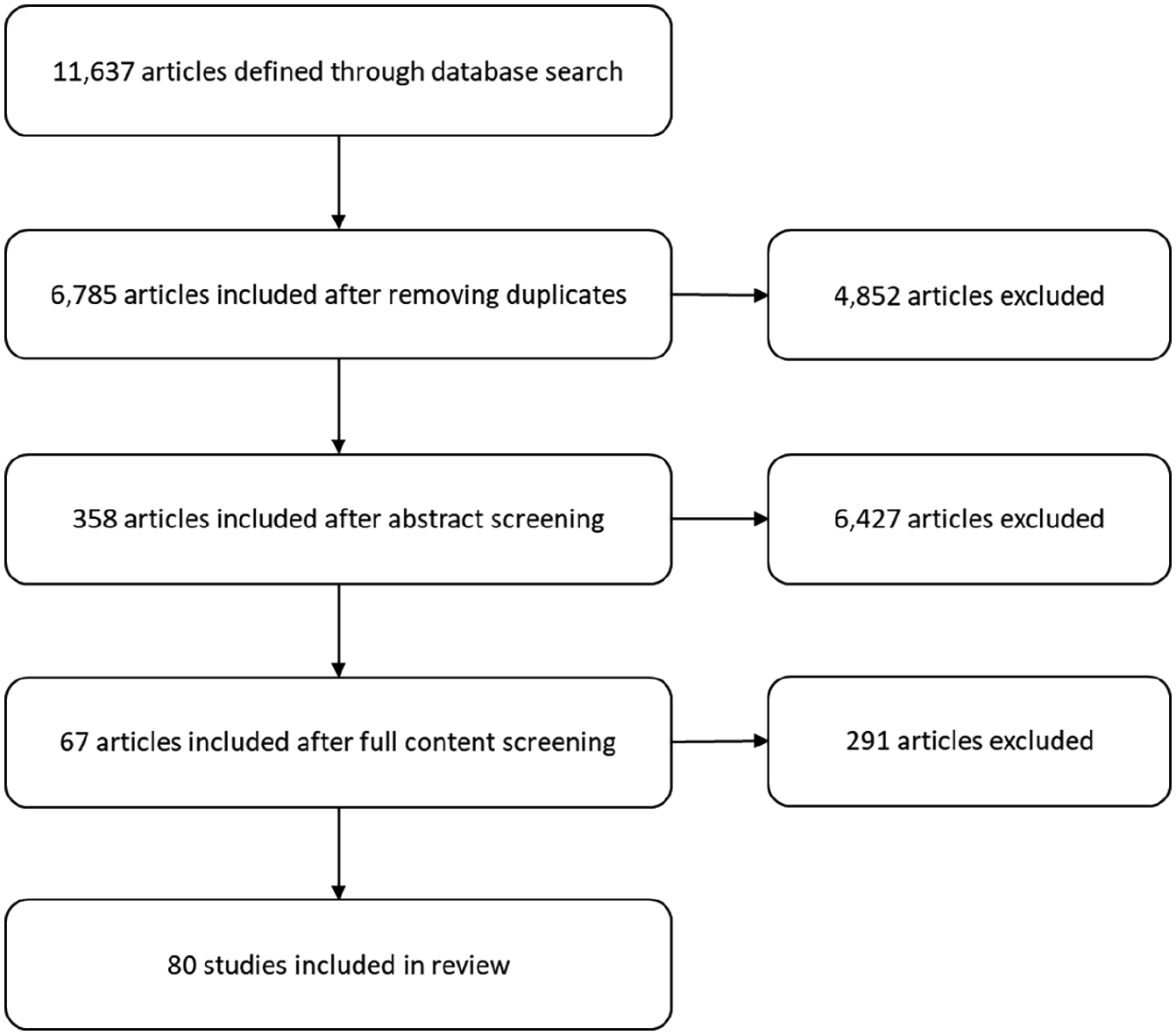

A systematic literature review of experimental research articles was conducted to investigate the characteristics and effectiveness of media literacy interventions developed to counter the effects of misinformation. We largely followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) reporting guidelines (Page et al., 2021). The PRISMA checklist is a widely recognized tool that provides comprehensive instructions for reporting on systematic reviews evaluating the effects of interventions. Although PRISMA originated in the medical sciences, its structured approach is equally valuable in other fields, for example for the evaluation of educational interventions in communication research. PRISMA covers a range of essential aspects, from the development of search strategies to the synthesis of results, ensuring transparency and reproducibility of the review process. The following section provides detailed information on the steps involved in the selection of studies for inclusion in the review: (1) database search, (2) duplicate removal, (3) abstract screening, (4) full content screening, and (5) article coding. All these steps are illustrated in the flow chart presented in Figure 1.

Figure 1. Flow chart of the systematic literature review inclusion process.

Database Search

In Step 1, potentially eligible articles were identified through a search in four electronic databases (SCOPUS, Web of Science, PsycINFO, and ERIC). These databases were chosen based on their relevance to the topic. In these databases, the publication title, abstract, and keywords were searched, using search strings with Boolean operators including all of the following three components: (1) misinformation, (2) countermeasure, and (3) experiment (for the full details of the search string see online Supplemental Appendix A on the Open Science Framework: https://osf.io/6jp3e/). The search was completed on the 10th of January 2023, and included journal articles published through the end of 2022. This database search resulted in 11,637 potentially eligible articles. In Step 2, we removed 4,852 duplicates, resulting in 6,785 potentially eligible articles.
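The three-component structure of the search string can be illustrated with a minimal sketch. The terms below are hypothetical placeholders chosen for illustration only; the actual search strings are those reported in Supplemental Appendix A. The sketch assumes that synonyms within a component are joined with OR and that the three components are joined with AND.

```python
def build_query(components):
    """Join synonyms with OR inside each component, then join components with AND."""
    groups = []
    for terms in components:
        # Quote multi-word terms so they are searched as phrases.
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)


# Hypothetical example terms, NOT the terms used in the review.
components = [
    ["misinformation", "fake news"],   # component 1: misinformation
    ["intervention", "inoculation"],   # component 2: countermeasure
    ["experiment*"],                   # component 3: experiment
]
query = build_query(components)
print(query)
```

Because every component must match, a record that mentions misinformation and an experiment but no countermeasure term would not be retrieved under this structure.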

Screening Procedure

To enhance the efficiency and quality of the abstract screening procedure in Step 3, all abstracts of the 6,785 potentially eligible articles were imported into ASReview (version 1.1; van de Schoot et al., 2021). ASReview is a machine learning tool that uses an active language-based machine learning technique. The researcher provides the ASReview system with a few abstracts of known relevant and irrelevant studies. These abstracts serve as prior knowledge, teaching the model to distinguish between relevant and irrelevant studies. The trained model evaluates the remaining unreviewed abstracts, ranking them based on their estimated likelihood of relevance. ASReview then presents the highest-ranked abstracts to the researcher for evaluation. The researcher subsequently labels each abstract as either relevant or irrelevant. The relevancy of the abstracts suggested by ASReview improves with training, leading to higher accuracy in abstract screening. By learning from the researcher’s input, ASReview prioritizes and presents the most relevant study abstracts first, significantly reducing the number of records that need to be manually screened.
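The label, retrain, and re-rank cycle described above can be sketched with a deliberately simple toy model. This is not ASReview's actual classifier or API: the word-count scoring rule, the function names, and the example abstracts are all assumptions made for illustration.

```python
from collections import Counter


def tokenize(text):
    return text.lower().split()


def score(abstract, relevant_counts, irrelevant_counts):
    """Toy relevance score: words seen in relevant abstracts add, others subtract."""
    return sum(relevant_counts[w] - irrelevant_counts[w] for w in set(tokenize(abstract)))


def screen(abstracts, priors, oracle, budget):
    """Repeatedly retrain on all labels, then ask the oracle about the top-ranked abstract."""
    labels = dict(priors)
    order = []
    for _ in range(budget):
        relevant_counts, irrelevant_counts = Counter(), Counter()
        for record_id, is_relevant in labels.items():
            target = relevant_counts if is_relevant else irrelevant_counts
            target.update(set(tokenize(abstracts[record_id])))
        pool = [record_id for record_id in abstracts if record_id not in labels]
        if not pool:
            break
        best = max(pool, key=lambda r: score(abstracts[r], relevant_counts, irrelevant_counts))
        labels[best] = oracle(best)  # the researcher's manual decision
        order.append(best)
    return order, labels


# Hypothetical abstracts; records 1 and 2 act as prior knowledge.
abstracts = {
    1: "misinformation inoculation experiment",
    2: "crop yield soil",
    3: "inoculation game misinformation",
    4: "soil nutrients yield",
    5: "fact-checking experiment misinformation",
}
order, labels = screen(abstracts, {1: True, 2: False}, lambda r: r in {1, 3, 5}, budget=2)
print(order)
```

In this toy run the two abstracts that share vocabulary with the relevant prior are presented before the unrelated one, which mirrors the prioritization the tool is meant to achieve.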

Abstracts were manually classified as relevant or irrelevant based on pre-established inclusion and exclusion criteria. The abstracts were only included if they: (1) were written in English, (2) were published in a peer-reviewed journal, (3) contained experimental research, and (4) tested the effectiveness of a misinformation countermeasure. The scope of misinformation was not limited to a specific topic; studies addressing misinformation across diverse areas, such as political, health, and social domains, were considered eligible. Meta-analyses, literature reviews, books, chapters in a book, dissertations, proceedings, issues, editorials, or conference papers were excluded. When in doubt, the abstract was included. At some point in the active learning process, most relevant articles have been identified and mainly irrelevant abstracts remain. A conservative “stop search” criterion was defined at 100 consecutive irrelevant articles. At the time of reaching this criterion, almost 17% of all abstracts were screened for eligibility. This roughly coincides with findings of van de Schoot et al. (2021), who observed that after screening 10% of an article set in ASReview, one will have found between 70% and 100% of the relevant articles. The abstract screening process resulted in 358 articles eligible for a full content screening.
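The stopping rule can be expressed as a short sketch. The function name and the input format (a sequence of manual decisions in the order the records were presented) are assumptions for illustration; only the threshold of 100 consecutive irrelevant records comes from the procedure described above.

```python
def screen_until_stop(decisions, stop_after=100):
    """Count screened and relevant records until `stop_after` consecutive irrelevant ones.

    `decisions` holds True (relevant) or False (irrelevant) in presentation order.
    """
    screened = 0
    relevant = 0
    consecutive_irrelevant = 0
    for is_relevant in decisions:
        screened += 1
        if is_relevant:
            relevant += 1
            consecutive_irrelevant = 0  # any relevant record resets the run
        else:
            consecutive_irrelevant += 1
            if consecutive_irrelevant >= stop_after:
                break
    return screened, relevant


# Small demonstration with a threshold of 3 instead of 100.
demo = [True, False, True, False, False, False, False, False, True]
print(screen_until_stop(demo, stop_after=3))
```

Note that the final relevant record in the demonstration is never reached, which shows the trade-off inherent in any such rule: a higher threshold lowers the chance of missing late-ranked relevant records at the cost of more manual screening.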

In Step 4, the method sections of the articles were manually screened to assess whether the article actually complied with the inclusion criteria. Articles were only included if they tested the effectiveness of a media literacy intervention, as opposed to message-specific fact-checking countermeasures. This screening and selection round resulted in 67 articles with a total of 80 studies that were included in the review, as several articles reported multiple experiments.
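As a consistency check on the reported flow, the record counts at each stage can be tallied in a few lines. The stage labels are paraphrased for illustration; the counts are those reported above.

```python
# Record counts reported for each stage of the selection process.
flow = [
    ("identified through database search", 11_637),
    ("after duplicate removal", 6_785),
    ("eligible after abstract screening", 358),
    ("articles included after full content screening", 67),
]

# Number of records that did not proceed from one stage to the next.
removed = [before - after for (_, before), (_, after) in zip(flow, flow[1:])]

for (stage, count), dropped in zip(flow[1:], removed):
    print(f"{stage}: {count} (not retained at this step: {dropped})")
```

The first difference reproduces the 4,852 duplicates reported in Step 2. The 67 included articles correspond to 80 studies because several articles reported more than one experiment.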

Coding Procedure

In the final step, characteristics, outcome measures, and effectiveness of the media literacy interventions were coded according to a detailed coding scheme (for the full coding scheme, see online Supplemental Appendix B on the Open Science Framework: https://osf.io/6jp3e/). First, some general article characteristics (e.g., author names, year of publication), and study and sample characteristics (e.g., design, sample size, target group characteristics) were coded. We also assessed the articles on their use of open science practices (e.g., open data, preregistration, power analysis), as well as on the topic of the misinformation the participants were exposed to (e.g., health, politics, science).

The content of the articles was then further assessed to determine what type of media literacy intervention was studied (e.g., passive inoculation, active inoculation), as well as their intended learning goals (e.g., detection vs. comprehension). The articles were further examined for the outcome variables that were hypothesized to be affected by the media literacy intervention. Finally, we reported the significance and the direction of the direct effects of the media literacy interventions on these outcome variables. Intercoder reliability was established by having a second coder independently code 20% of the studies included in this systematic review. The agreement between the two coders was good (due to the skewed nature of the data, percentage agreement was used; M = 95.7%; range between 81.3% and 100%). Any disagreements between the coders were resolved through discussion.
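Percentage agreement is simply the share of double-coded studies on which both coders assigned the same code. The sketch below uses hypothetical codes and a hypothetical function name. Since 20% of 80 studies equals 16 double-coded studies, agreement on 13 of 16 studies for a given variable would yield 81.25%, which is consistent with the reported minimum of 81.3%, although the per-variable counts are not stated in the text.

```python
def percent_agreement(coder_a, coder_b):
    """Share (in percent) of items on which two coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same non-empty set of items.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * matches / len(coder_a)


# Hypothetical codes for one variable across 16 double-coded studies.
coder_a = ["passive"] * 10 + ["active"] * 6
coder_b = ["passive"] * 10 + ["active"] * 3 + ["general"] * 3
print(percent_agreement(coder_a, coder_b))
```

Unlike chance-corrected coefficients, this measure does not adjust for agreement expected by chance, which is why it remains interpretable when one category dominates the data.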

Results

General Characteristics

Study Characteristics

The experimental studies included in the review varied in settings and designs. The experiments were predominantly carried out online (81.3%; n = 65), while a smaller proportion was conducted in the field (15%; n = 12) or in a lab (2.5%; n = 2). The experiments employed various experimental designs (between-, within-, or mixed-subjects designs), with the number of experimental conditions ranging from 1 to 30 (with a median of three conditions).
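The percentages reported throughout the Results are shares of the 80 included studies. A minimal sketch of that conversion follows; the function name is hypothetical, and the use of half-up rounding to one decimal is an assumption inferred from values such as 81.3% for 65 of 80 studies (81.25% unrounded). Python's built-in `round` applies round-half-to-even and can therefore give a different last digit for such ties.

```python
from decimal import Decimal, ROUND_HALF_UP


def share(n, total=80, places="0.1"):
    """Percentage of `total` represented by `n`, rounded half-up to one decimal."""
    pct = Decimal(n) * 100 / Decimal(total)
    return pct.quantize(Decimal(places), rounding=ROUND_HALF_UP)


for label, n in [("online", 65), ("field", 12), ("lab", 2)]:
    print(f"{label}: {share(n)}% (n = {n})")
```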

Studies explored a diverse range of misinformation topics. Science-related misinformation, primarily revolving around climate change, was the focus of attention in 17.5% (n = 14) of the studies. Furthermore, 16.3% (n = 13) of the included studies targeted COVID-19-related misinformation, while 12.5% (n = 10) focused on other health-related misinformation such as vaccination, breast cancer, e-cigarettes, raw milk consumption, or sunscreen use. Of the included studies, 13.8% (n = 11) concentrated on politics-related misinformation (including issues such as crime rates, gun control, abortion, and immigration). The remaining 43.8% (n = 35) focused on a variety of misinformation topics such as Islam or animal protection organizations, or included multiple different topics (e.g., a mix of politics, health, entertainment, and sports news).

Sample Characteristics

The participant groups in the studies varied significantly, with sample sizes ranging from as few as 20 to as many as 22,632 individuals (with a median of 517 participants). Predominantly, these participants were drawn from population-based samples (68.8%; n = 55), while some other studies only included student-based samples (15%; n = 12) or specific target groups (16.3%; n = 13; e.g., unvaccinated participants).

Due to the diversity in reporting on participant characteristics such as gender, political ideology, education level, and age (e.g., age is sometimes reported as a mean, as a median, or as a modal bracket), it is difficult to draw any meaningful conclusions about sample characteristics across the range of studies included. Notably, age or gender information was missing in 11.3% (n = 9) and 13.8% (n = 11) of the included studies, respectively. Information gaps were even more pronounced for education levels (missing in 45% of studies) and political ideology (not reported in 73.8% of studies; n = 59).

Regarding the geographic origins of the study samples, the results show that participants originated from five different continents: North America, Europe, Asia, Africa, and Australia. However, there was a noticeable Western bias, with almost half of the studies (45%; n = 36) using participants from North America (i.e., USA or Canada), and 21.3% (n = 17) using participants from Europe (i.e., Germany, France, The Netherlands, Italy, Macedonia, Poland, Romania, Austria, UK, or Ukraine). Asian participants (i.e., those from China, Hong Kong, Singapore, South Korea, or Taiwan) were included in only 11.3% (n = 9) of the studies, while 7.5% (n = 6) used African participants from countries such as Ghana and Nigeria. Australian participants were involved in just one study (1.3%). Additionally, 9% (n = 7) of the studies used international samples, which comprised a mix of participants from multiple countries (e.g., USA, Portugal, and the Netherlands).

Open Science Characteristics

Less than half of the studies (45%; n = 36) made their data publicly available. Moreover, preregistration, a recommended practice for increasing the reproducibility and transparency of research findings, was employed by only 27.5% (n = 22) of the studies. In addition, more than half of the studies (56.3%; n = 45) did not report an a priori power analysis to determine the appropriate sample size for their experiments. Although these percentages may appear modest, they are higher than the overall percentages of studies using open science practices in communication science (Markowitz et al., 2021). These numbers thus indicate that researchers who study the effectiveness of media literacy interventions are moderately progressive in terms of adopting open research practices.

Media Literacy Intervention Characteristics

RQ1a pertained to the characteristics of media literacy interventions to counter the negative effects of misinformation. As it turns out, media literacy interventions have been operationalized and conceptualized in various ways. To categorize these variations, we differentiated between types of media literacy interventions as well as the learning goals that these interventions aimed to promote.

Media Literacy Intervention Types

A first way to classify media literacy interventions is based on the characteristics of the methods they employ to impart media literacy. Based on the conceptualizations and operationalizations of the media literacy interventions included in this review, we identified five different types of media literacy interventions, aligning with earlier classifications (Ecker et al., 2022): (1) passive inoculation, (2) active inoculation, (3) general media literacy, (4) logic-based corrections, and (5) source-based corrections.

Inoculation is based on the metaphorical idea that, just like vaccines, exposing people to (1) a pre-emptive forewarning and to (2) a weakened dose of misinformation (along with strong refutations against this misinformation), builds immunity to later misinformation (Ecker et al., 2022; McGuire, 1964, 1970; van der Linden, 2022). In passive inoculation interventions, people are mere spectators who passively receive the inoculation message from the researcher (e.g., through exposure to a social media message). In contrast, active inoculation interventions revolve around people actively generating “their own ‘antibodies’” (van der Linden, 2022, p. 464). These latter intervention strategies generally involve a form of perspective-taking where participants, for example, play the role of misinformation creators (e.g., gamified inoculation interventions; Roozenbeek & van der Linden, 2019).

General media literacy interventions also try to educate people about the possible threat of misinformation, and to equip them with an array of resources to enhance their ability to identify false information and to withstand its negative effects (Dumitru et al., 2022; Ecker et al., 2022; Eisemann & Pimmer, 2020). However, in contrast to inoculation interventions, general media literacy interventions do not expose people to a “weakened” form of misinformation (Ecker et al., 2022). These types of general media literacy interventions often take the form of infographics, guidelines, or social media messages warning people about misinformation, and provide tips on how to spot misinformation, without exposing people to the misinformation itself.

Finally, media literacy interventions can also take the form of logic- or source-based corrections. In these cases, misinformation is corrected before or after exposure by a correction that (1) explains fallacious reasoning in the misinformation (i.e., a logic-based correction; J. Cook et al., 2017; Ecker et al., 2022), or (2) undermines the credibility of its source (i.e., a source-based correction; Ecker et al., 2022; Hughes et al., 2014). These types of corrections (e.g., on social media) are considered a media literacy intervention because they aim to increase people’s critical thinking skills and might be able to provide broader protection against future misinformation that employs similar fallacies or sources (Ecker et al., 2022; Vraga et al., 2019).

Results reveal that a plurality of the studies (36.3%; n = 29) examined the effectiveness of passive inoculation interventions. Such interventions consisted, for example, of exposure to a social media message that contained a warning, a “weakened” form of misinformation, and refutations against the misinformation or the misleading techniques used in the misinformation. Furthermore, a substantial proportion of the studies (27.5%; n = 22) investigated the effectiveness of general media literacy interventions. These interventions typically took the form of infographics providing users with guidance on how to spot misinformation. Active inoculation interventions, exemplified by gamified inoculation tools like the Bad News Game (Roozenbeek & van der Linden, 2019), Go Viral (Basol et al., 2021), and Harmony Square (Roozenbeek & van der Linden, 2020), were studied relatively often as well (22.5%; n = 18). A smaller, but still noteworthy fraction of the studies focused on logic-based corrections (8.8%; n = 7). These corrections often involved responding to alleged misinformation on social media platforms like Twitter (now X) with a humorous cartoon explaining the logical fallacy made in the misinformation. Quite uncommon was the investigation of source-based corrections (1.3%; n = 1). This sole intervention used a Facebook message to encourage individuals to rely exclusively on information from credible sources with relevant expertise in relation to COVID-19 misinformation. Finally, there was a small cluster of studies (12.5%; n = 10) that did not fit neatly into one of the aforementioned categories. These studies included interventions such as utilizing an AI misinformation classification system, or encouraging people’s counterfactual thinking skills.

Media Literacy Learning Goals

A second way to classify media literacy interventions is by considering their intended learning goals. In this regard, we categorized interventions based on whether they primarily focused on enhancing people’s misinformation detection skills, or their comprehension of the broader influence of misinformation on themselves and society, or both. Detection-focused interventions aim to enhance people’s ability to evaluate (mis)information veracity by teaching them how to spot markers of misinformation (e.g., the use of logical fallacies, sensational headlines, emotional manipulation) and to use verification tools like reverse image searches. In contrast, comprehension-focused interventions aim to improve people’s understanding of the broader influence of misinformation on people’s beliefs, emotions, and behaviors. These interventions encourage individuals to reflect on their own susceptibility to misinformation, and that of others, while also exploring its wider societal impact (e.g., distrust, polarization).

Our findings reveal two primary approaches: detection-focused interventions and dual-focused interventions that combine detection with broader misinformation comprehension. The majority of the studies (82.5%; n = 66) explored detection-focused interventions. These interventions for example taught people to recognize the use of common misinformation techniques (e.g., “DEPICT”: Discrediting opponents, Emotional language use, increasing intergroup Polarization, Impersonating people through fake accounts, spreading Conspiracy theories and evoking outrage through Trolling; Roozenbeek & van der Linden, 2019), to be able to better discern between true and false information. A much smaller subset of studies (16.3%; n = 13) investigated the effectiveness of dual-focused interventions. These interventions not only sought to improve misinformation detection skills but also aimed to educate people about the social and personal consequences of misinformation such as dehumanization, stereotypes, and hate speech, and/or provided a basic understanding of media dynamics related to misinformation.

Outcome Variables

RQ1b focused on categorizing the outcome variables of interest in the reviewed studies. The included studies examined the effectiveness of media literacy interventions on a diverse array of outcome variables. Based on previous literature and the specific conceptualizations and operationalizations of the outcome variables within the reviewed studies, a fundamental distinction between three categories of outcome variables could be made: (1) informational outcomes, (2) persuasive outcomes, and (3) media literacy outcomes. The first outcome type typically involves measures like judging the veracity or credibility of the (mis)information presented in the experiments. The second type predominantly focuses on people’s (psychological) responses to the misinformation or intervention (e.g., beliefs, attitudes, behaviors), and thus indicates how persuasive the misinformation and/or the intervention was. The third type mainly assesses improvements in (self-perceived) media literacy competencies.

Informational Outcomes

When examining the different types of informational outcomes, results reveal that within the reviewed studies, the majority (65%; n = 52) included some type of measure assessing the veracity, credibility, or trustworthiness of the (mis)information. A subset of these studies (7.5%; n = 6) also measured people’s confidence in their own veracity judgments. Furthermore, a considerable proportion of the studies (20%; n = 16) explored people’s intentions to endorse the (mis)information online (liking, sharing, or commenting on the (mis)information). Finally, some studies also delved into other misinformation assessment-related outcome variables, although much less frequently, such as people’s perception of the persuasiveness, vividness, or argument strength of the (mis)information (5%; n = 4).

Persuasive Outcomes

In relation to the different types of persuasive outcomes, the results reveal that of the reviewed studies, the assessment of people’s (changed) beliefs about the misinformation topics was the most frequently studied type of outcome variable (25%; n = 20). These variables encompassed a wide variety of subjects, such as climate change beliefs, and plant or raw milk (mis)perceptions. The second most commonly studied type of persuasive outcome variable was people’s attitudes toward the misinformation topics (22.5%; n = 18). These outcome variables pertained to issues like vaccine attitudes, agreement with the misinformation, or climate change policy support. Subsequently, 12.5% (n = 10) of the studies examined people’s behavioral intentions related to the misinformation topics, including people’s intentions to vaccinate, or use sunscreen. People’s actual (online) behaviors were only measured in 2.5% (n = 2) of the studies, which included behaviors such as taking action toward obtaining vaccination. Several studies (30%; n = 24) also focused on other types of outcome variables such as people’s emotional responses to the misinformation, their social media mindfulness, their worries about their reputation when sharing misinformation, or their physiological attention toward the misinformation and the intervention.

Media Literacy Outcomes

Regarding media literacy outcomes, we found that only 13.8% (n = 11) of the studies assessed whether media literacy interventions improved people’s (self-perceived) media literacy. It is worth noting that although most studies incorporated media literacy interventions with the explicit aim of enhancing people’s media literacy competencies, only a limited number of studies explicitly measured if exposure to such an intervention increased people’s level of media literacy. In the rare cases that media literacy was indeed assessed, measurements predominantly centered around self-perceived media literacy (e.g., by asking “I have a good understanding of the concept of media literacy”), as opposed to demonstrable media literacy.

Short- Versus Long-Term Effects

When examining the distinction between studies that explored short- versus long-term effects of media literacy interventions, results reveal that the majority of the studies focused on short-term effects, by measuring outcome variables either immediately after exposure to the intervention (81.3%; n = 65), after a few hours (1.3%; n = 1), or after 1 day or more (1.3%; n = 1). Only a small subset of the studies focused on longer-term effects of media literacy interventions (16.3%; n = 13). In these instances, outcome variables were measured at various time points in the experiment, for example before exposure to the intervention, immediately after exposure, and a week or month after exposure.

Effects of Media Literacy Interventions on Outcome Variables

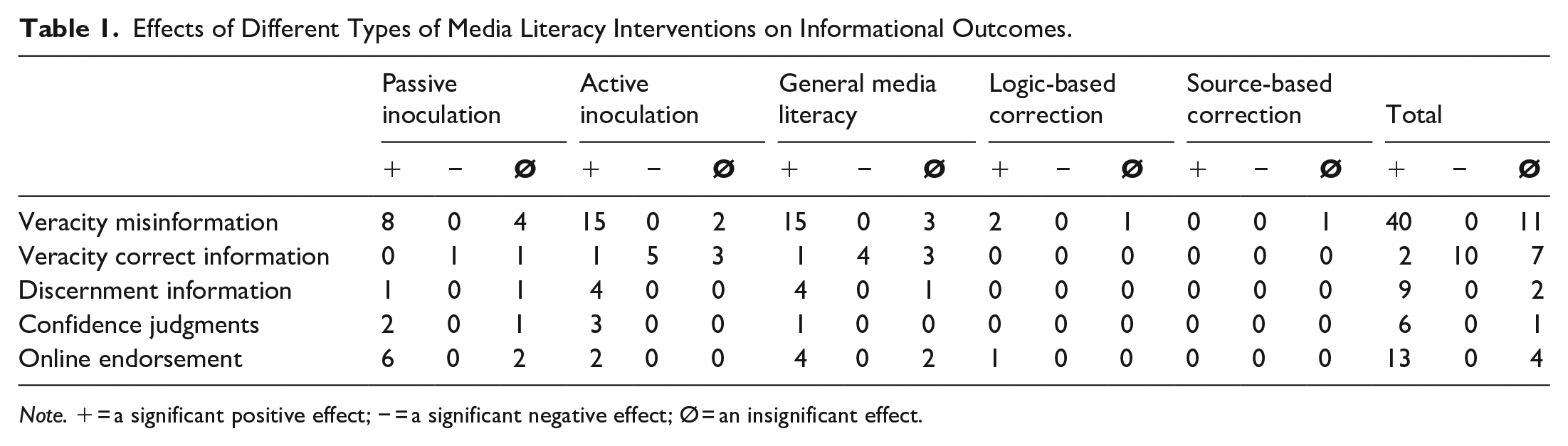

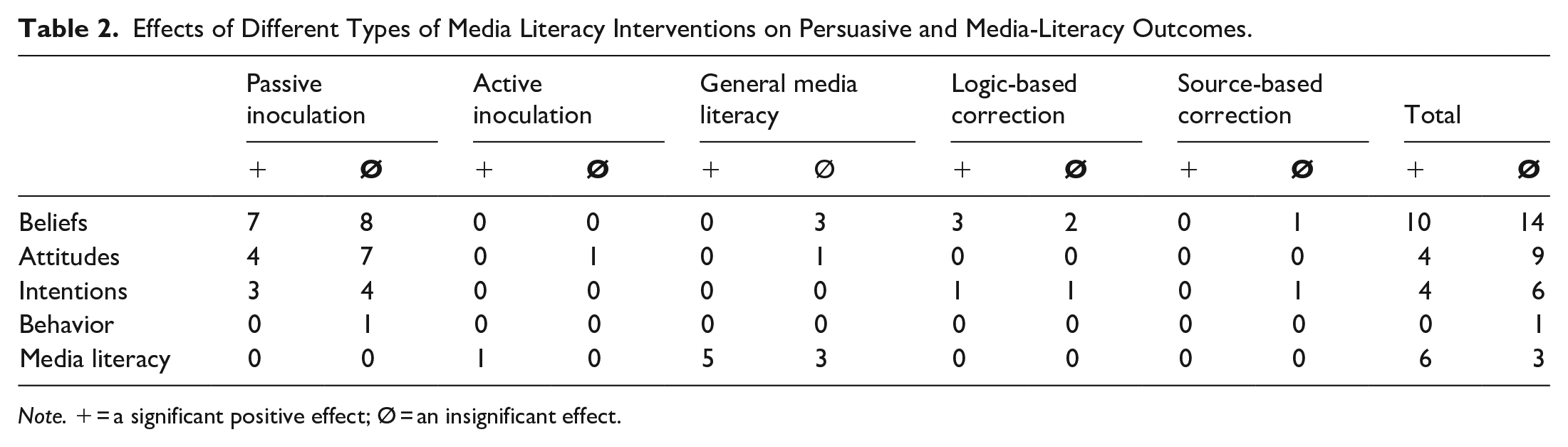

RQ1c concerned the effectiveness of different media literacy interventions on their outcome variables of interest. Detailed results of the relationships between media literacy intervention types and the most frequently measured outcome variables are presented in Tables 1 and 2. These results only pertain either to studies that compared the effectiveness of the media literacy intervention(s) of interest to a control condition (i.e., no intervention), or to studies that employed a pre- versus post-test design. Several studies did not fulfill these criteria and were therefore not considered in this part of the review. This includes five studies that tested effects of intervention strategies that could not be classified into one of the previously defined media literacy intervention types.10,12,17,32,41 Two other studies lacked quantitative reporting of their experimental findings,16,55 and six studies only compared two (or more) media literacy interventions to each other without a control condition or a pre- versus post-test design.4,13,14,29,67 Results in Tables 1 and 2 are therefore based on the 67 remaining studies (see Online Supplemental Appendix C on the Open Science Framework: https://osf.io/6jp3e/ for the references of the studies included in this part of the review). If notable differences in effectiveness are observed between different types of interventions on specific outcome variables, these will be highlighted in the results section. Note that due to the limited number of comprehension-focused interventions (compared to detection-focused interventions), it is difficult to draw definitive conclusions about their relative effectiveness. Therefore, this distinction is not explored further.

Table 1. Effects of Different Types of Media Literacy Interventions on Informational Outcomes.

Note. + = a significant positive effect; − = a significant negative effect; Ø = an insignificant effect.

Table 2. Effects of Different Types of Media Literacy Interventions on Persuasive and Media-Literacy Outcomes.

Note. + = a significant positive effect; Ø = an insignificant effect.

Informational Outcomes

Veracity Judgments of Misinformation

The majority of the studies investigating misinformation veracity judgments (n = 40) found that exposure to an intervention,2,5,8,9,15,18,21,22,23,24,25,27,30,33,35,36,37,39,42,43,44,45,46,47,49,51,54,61,62,66 yielded a positive impact on people’s ability to correctly assess accuracy, credibility, or trustworthiness of the misinformation. Eleven studies failed to substantially improve people’s ability to identify misinformation after being exposed to a media literacy intervention.1,6,11,22,40,42,43,50,54,60,66 This lack of effectiveness was distributed fairly evenly across the various media literacy intervention types. Overall, these findings suggest that media literacy interventions, for the most part, improve people’s ability to identify misinformation.

Veracity Judgments of Correct Information

In relation to the studies that measured people’s veracity judgments of correct information, a surprising observation emerged. A total of 10 interventions,8,22,23,42,43,44,54,62 seemed to have had the unexpected consequence of diminishing people’s ability to accurately identify correct information. Only two studies27,36 found an improvement in people’s accurate assessment of correct information. Seven studies8,22,37,40,42,43 did not identify any change in people’s ability to accurately assess correct information after exposure to a media literacy intervention. This mixture of results was again relatively evenly distributed across the various categories of media literacy interventions. Overall, these results suggest that media literacy interventions may inadvertently prompt individuals to adopt a more critical stance toward news (sources) in general, potentially leading to a decreased ability to accurately assess correct information.
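The balance of findings in this paragraph can be expressed as a simple vote count. The sketch below is a hypothetical illustration rather than the procedure used in the review: it takes the three counts from the paragraph above and, as a simplifying assumption, treats interventions and studies as one comparable unit of analysis.

```python
from collections import Counter

# Counts taken from the paragraph above; "+", "-" and "0" mirror the table
# notation (+, −, Ø). Pooling interventions and studies into one unit of
# analysis is an assumption of this sketch.
tally = Counter({"-": 10, "+": 2, "0": 7})

total = sum(tally.values())
shares = {sign: count / total for sign, count in tally.items()}

# List the outcome directions from most to least frequent.
for sign, count in tally.most_common():
    print(f"{sign}: {count} of {total} ({shares[sign]:.0%})")
```

Under this assumption, negative effects make up slightly more than half of the reported findings for veracity judgments of correct information.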

Discernment Between Misinformation and Correct Information

The above results show an interesting pattern regarding the duality of the influence of media literacy interventions on people’s ability to accurately assess (mis)information. On the one hand, interventions sometimes lead to a decrease in people’s accuracy when it comes to identifying correct information, while on the other hand, the majority of the studies show that these interventions actually enhance people’s ability to correctly identify misinformation. However, when zooming in on what one could consider the decisive variable, the ability to discern between misinformation and correct information, results of this review indicate that nine interventions8,23,31,36,43,47,64 increased people’s discernment capabilities between misinformation and correct information, whereas only two40,64 did not yield any significant change. In sum, this body of evidence suggests that although people become less accurate at identifying correct information, on the whole, media literacy interventions are somewhat successful in equipping people with the necessary skills to discern false from correct information.

Confidence in Veracity Judgments

Of the seven studies that assessed individuals’ confidence in their veracity judgments of (mis)information, the majority (n = 6) reported positive results.8,9,46,47,56 Only one intervention66 deviated from this pattern by failing to find a significant relationship. These findings underscore the potential of media literacy interventions in enhancing people’s confidence in their ability to distinguish between false and correct information.

Online Endorsement of Misinformation

The majority (n = 13) of the studies that investigated people’s intentions to engage in various social media behaviors show that being exposed to media literacy interventions significantly lowers people’s intention to like, comment on, or share misinformation on social media.2,5,8,21,25,26,30,33,39,46,47,53 Only four studies8,25,26,33 did not establish a significant effect. Overall, these findings thus indicate that exposure to a media literacy intervention tends to diminish the likelihood of people endorsing misinformation on social media. This outcome is likely due to the enhanced awareness of its limited truth value.

Persuasive Outcomes

Beliefs About the Misinformation Topic

The findings concerning persuasive outcomes are much more mixed than those concerning informational outcomes. In relation to people’s beliefs about the misinformation topics, 10 studies15,26,34,52,56,60,61,63,65,66 have documented the potential of media literacy interventions to reduce negative effects of misinformation, while 14 studies1,11,15,19,48,52,56,60,65,66 did not find such effects. Notably, all the general media literacy interventions that assessed effects on individuals’ beliefs yielded insignificant results; effects of other media literacy intervention types were more mixed. In sum, these results suggest that the influence of media literacy on people’s beliefs about the misinformation topics is mixed, with general media literacy interventions appearing the least powerful in affecting individuals’ beliefs.

Attitudes Toward the Misinformation Topic

The results of the studies focusing on people’s attitudes toward various misinformation topics show a similar mixed picture. The majority of studies (n = 9) failed to establish a significant relationship between exposure to media literacy interventions and people’s attitudes toward topics such as climate change, vaccinations, or smoking.19,20,24,28,45,48,57,64 Four studies7,26,30,38 did find evidence that media literacy interventions can affect people’s attitudes toward misinformation topics. Interestingly, studies investigating the effectiveness of active inoculation and general media literacy interventions rarely included assessments of their impact on people’s attitudes, while studies exploring passive inoculation interventions did this much more frequently. Overall, the results suggest that although some studies find that interventions affect people’s attitudes about misinformation topics, the ability of media literacy interventions to do so is generally limited.

Behavioral Intentions

Unsurprisingly, findings of studies investigating the influence of media literacy interventions on individuals’ behavioral intentions are mixed as well. In total, four studies38,39,57 have demonstrated that exposure to a media literacy intervention can effectively mitigate negative effects of misinformation on, for example, people’s intentions to get vaccinated. However, six studies1,20,26,28,52,53 did not find a significant relationship between exposure to a media literacy intervention and people’s behavioral intentions across various areas such as vaccination intentions or intentions related to smoking. Consequently, it becomes evident that media literacy interventions may have a limited capacity to significantly affect people’s behavioral intentions.

Behavior

Only one study measured some type of misinformation-related behavior. The findings of this study indicate that the use of this intervention did not significantly alter people’s next video preference.30 As such, there is a lack of evidence that media literacy interventions can be effective in promoting the positive behaviors taught in the media literacy intervention.

Media Literacy Outcomes

Finally, of the few studies that measured the effectiveness of media literacy interventions on people’s media literacy, the majority (n = 6) found positive effects2,5,36,37,49,54 (e.g., increases in people’s social media or misinformation knowledge, their perceived behavioral control toward verifying misinformation, or their self-perceived general media literacy). However, three other studies,54,62 failed to establish a significant relationship. All in all, the findings generally support the notion that media literacy interventions have the potential to effectively enhance people’s self-perceived media literacy.

Long-Term Effects

The included studies that examined long-term effects of media literacy interventions also present a mixed picture. On the one hand, several studies attest to the enduring influence of media literacy interventions over time. For example, one study that investigated the effectiveness of a passive inoculation intervention on people’s scientific consensus beliefs about climate change reported a sustained positive effect of this intervention after a week.34 Moreover, three active inoculation studies also demonstrated lasting effects of their interventions on people’s accurate veracity judgments of misinformation, with these effects persisting for 1 week, 5 weeks, and even 3 months.8,35 Furthermore, one general media literacy intervention study found that its impact on people’s correct misinformation veracity judgments endured for 2 weeks, although its magnitude was attenuated by more than half.23

On the other hand, several other studies found that the effects of media literacy interventions tend to dissipate over time. Two passive inoculation studies showed that the effectiveness of these interventions on people’s misconceptions about Russia’s accountability for various negative events disappeared in the 2 weeks after the intervention.65,66 Moreover, two general media literacy and one active inoculation study indicated that the effects of these interventions on people’s correct misinformation veracity judgments dissipated after 1 week (general media literacy),8 2 weeks (general media literacy),23 and after 2 months (active inoculation).35 All in all, these findings suggest that media literacy interventions can vary in their long-term effects, and that in order to uphold their effectiveness, media literacy interventions may need to be reinforced or repeated.

Conclusion and Discussion

This study systematically reviewed 80 experimental studies to present an overview of the characteristics and effectiveness of media literacy interventions to combat negative effects of misinformation. The following section summarizes the main findings and discusses them in relation to existing literature. Finally, the limitations of this review and several recommendations for future research, as well as for the development of media literacy interventions, are discussed.

Characteristics of Media Literacy Interventions

The media literacy interventions described in the included studies were conceptualized and operationalized in numerous ways, using existing typologies of media literacy interventions (Ecker et al., 2022; van der Linden, 2022), or based on their educational goals.

Media Literacy Intervention Types

In terms of the type of interventions, this review showed that inoculation strategies, particularly passive forms, were the most frequently studied, followed by general media literacy interventions. Active inoculation approaches were also commonly examined, while logic- and source-based interventions were studied much less frequently. Overall, these findings demonstrate the relative dominance of the theoretically inspired inoculation strategies in current literature on misinformation and media literacy (Ecker et al., 2022; McGuire, 1964, 1970; van der Linden, 2022). However, a more practice-based approach studying the impact of general media literacy interventions that utilize infographics or other forms of informative materials remains a prominent research focus as well (Dumitru et al., 2022; Ecker et al., 2022; Eisemann & Pimmer, 2020).

Media Literacy Intervention Learning Goals

The findings of this review highlight a clear dominance of detection-focused media literacy interventions. A small subset of studies had a dual-focused approach, teaching detection skills while also addressing the broader impact of misinformation, such as societal and individual consequences. This detection-focused approach, while valuable, does not fully align with the broader definition of the concept of media literacy, which also includes understanding the consequences of exposure to information and developing critical thinking skills to evaluate and reflect on media messages, beyond their truth value (Aufderheide, 1993; Hobbs, 2010; Potter, 2010).

Outcome Variables and Media Literacy Effectiveness

Outcome variables of interest in the reviewed studies had many different forms, but could be roughly categorized into three types of outcomes: (1) informational outcomes, (2) persuasive outcomes, and (3) media literacy outcomes. The findings of this review also highlight the critical need to distinguish between these outcome variables when discussing the effectiveness of media literacy interventions, since clear differences emerged in the effectiveness of media literacy interventions depending on the type of outcome variables of interest.

Informational Outcomes

The review revealed that many studies prioritized assessing people’s ability to evaluate the veracity of misinformation. While media literacy interventions were generally effective in improving users’ ability to accurately assess the veracity of misinformation, no specific type of media literacy intervention was clearly superior to the others with respect to this outcome. Interestingly, over half of the studies investigating veracity judgments of correct information reported negative effects, particularly for active inoculation and general media literacy interventions. This aligns with findings from previous literature, showing that interventions may make users overly critical of accurate information (Guay et al., 2023; Hameleers, 2023; Modirrousta-Galian & Higham, 2022).

Persuasive Outcomes

While the majority of studies in this review investigated informational outcomes, persuasive outcomes such as changes in beliefs, attitudes, and behaviors were explored in only a small number of studies. Among the studies which did, findings were mixed. More than half of the studies investigating persuasive outcomes showed that media literacy interventions were not effective in counteracting misinformation’s influence on beliefs, attitudes and behavioral intentions. This suggests that being able to detect misinformation may not be enough to counteract the persuasive effects misinformation may have on individuals. This observation is in line with the notion of continued influence (Lewandowsky et al., 2012; van Huijstee et al., 2022), suggesting that information known to be false may still influence people’s cognitions.

Media Literacy Outcomes

Surprisingly few studies tested whether media literacy interventions actually enhanced users’ level of media literacy. Those that did often reported positive effects, although many relied on self-reported measures, raising concerns about placebo effects (Stewart-Williams & Podd, 2004). This lack of direct testing is striking, given that improvements in media literacy are generally proposed as the mechanism by which observed positive effects of media literacy interventions on the processing of misinformation can be explained (Ecker et al., 2022).

Short- Versus Long-Term Effects

Most studies reviewed focused exclusively on the immediate effects of media literacy interventions, with only a small proportion examining their longer-term impact. Among those that did, the findings were inconsistent: approximately half of the studies reported persistent, though diminished, effects, while the other half found no lasting impact. As such, these findings align with previous reviews suggesting that the effectiveness of media literacy interventions often dissipates over time (Ecker et al., 2022).

Limitations of Current Media Literacy Interventions and Research

A critical limitation of the current body of research included in this review is the overwhelming focus on detection-focused interventions and outcomes, that is, interventions designed to improve, and measures aimed at assessing, people’s ability to evaluate the veracity of (mis)information. While people’s ability to detect misinformation is undeniably important, several studies (e.g., Lewandowsky et al., 2012; van Huijstee et al., 2022), as well as the results presented in this review, highlight that there may be a significant disconnect between the ability to assess misinformation’s veracity and the continued influence that misinformation may have on people’s beliefs, attitudes, and behaviors. Importantly, these persuasive outcomes of misinformation often have stronger societal implications than the mere belief in an individual misinformation narrative (Bennett & Livingston, 2018; Lewandowsky & Cook, 2020; McKay & Tenove, 2021; Roozenbeek et al., 2021; Van Aelst et al., 2017). For this reason, future studies (and media literacy intervention developers alike) should focus more on interventions aiming to reduce persuasive outcomes of misinformation exposure.

A more specific issue related to this detection-based focus is that media literacy is about more than only identifying false information; it also includes the ability to access correct information. The current review shows that only a few studies (30%) testing the effects of a media literacy intervention assessed to what extent the interventions help users (in addition to identifying misinformation as such) to identify correct information as such. Of the studies that do, a majority shows that exposure to a media literacy intervention reduces people’s ability to identify correct information. This suggests that media literacy interventions can provide users with a skeptical attitude toward information in general. The few studies that assessed interventions’ capabilities to increase users’ discernment between misinformation and correct information provide a somewhat more positive image, with discernment skills improving in most studies, but we should still be wary of media literacy interventions that reduce users’ access to correct information. Future studies could focus less on media literacy strategies leveraging cues for distrust and information avoidance, and more on strategies leveraging trust cues and information selection.

Additionally, future research should place greater emphasis on evaluating to what extent media literacy interventions actually enhance people’s media literacy. Although the direct aim of these interventions is making people more media literate, thereby enabling them to navigate (mis)information spaces better, our systematic literature review clearly highlights the limited attention that studies devote to directly examining this aspect. One likely reason for this scarcity is the difficulty in developing objective, reliable, feasible, and valid measures for complex concepts such as media literacy. Most of the measures that were included in studies in this review focused on people’s self-perceived (i.e., subjective) media literacy, rather than media literacy objectified in some type of test, thereby rendering findings prone to subjective biases (Purington Drake et al., 2023) and placebo effects (Stewart-Williams & Podd, 2004). The difference between media literacy competencies and media literacy behaviors adds complexity to its assessment. If competencies are not measured accurately, it becomes difficult to determine whether any observed changes in media literacy-related behaviors (e.g., fact-checking or misinformation avoidance) are truly driven by improved competencies, or perhaps by other factors, such as an increased general mistrust of information. To address this limitation, future research should be directed toward the development of measures that can accurately assess people’s objective media literacy (e.g., the Youth Social Media Literacy Inventory; Purington Drake et al., 2023).

Finally, the lack of attention to the long-term effectiveness of media literacy interventions underscores the need for more comprehensive longitudinal research in the field. Gaining a better understanding of the durable impact of media literacy interventions could assist practitioners and policymakers in deciding whether the costs of certain media literacy interventions outweigh their (longer-term) benefits. Furthermore, given the mixed results observed in the few studies that did measure long-term effects, it is imperative for future research to investigate specific characteristics of media literacy interventions that were found to enhance people’s media literacy over a longer period of time (Basol et al., 2021; Guess et al., 2020; Maertens et al., 2020, 2021). Gaining insights into these characteristics can provide valuable guidance for the development of more effective and enduring media literacy programs.

Recommendations for Practitioners

Based on our systematic analysis of the extant literature on the effectiveness of media literacy interventions, we developed the following three recommendations for practitioners who focus on developing media literacy interventions to counter the negative effects of misinformation: (1) expand the focus of interventions beyond (mis)information detection alone, (2) focus more on information selection literacy, and less on information processing literacy, and (3) (re-)activate a media literate mindset on top of educating media literacy.

Expand Interventions’ Focus Beyond Misinformation Detection

While most of the studied media literacy interventions in our review focused on enhancing users’ ability to assess misinformation’s veracity, our review underscores the need for a broader media literacy approach. Media literacy is not only about recognizing false information; it is also about understanding, and building resilience toward, the broader psychological, persuasive, and societal effects of misinformation. By failing to address these dimensions, current approaches leave critical gaps in users’ ability to fully comprehend and mitigate the influence of misinformation. Additionally, detection-focused approaches do not adequately protect individuals from the continued influence of misinformation. Even when users identify information as false, its psychological and persuasive effects can still shape their attitudes, beliefs, and behaviors.

As such, media literacy interventions should be designed to equip users with a more comprehensive set of media literacy competencies. For instance, by educating individuals on the social, psychological, and political consequences of misinformation, users can better understand its potential to manipulate opinions, polarize communities, and undermine democratic processes. Similarly, interventions should aim to incorporate strategies to mitigate users’ psychological susceptibility toward misinformation, such as fostering emotion regulation, and resilience against the persuasive tactics often employed in misinformation. As a result, users will be able to make better-informed decisions regarding their own (mis)information exposure.

Focus on Information Selection Rather Than Information Processing

Our review showed that most media literacy interventions improve people’s ability to correctly process misinformation. As a result, they are better able to assess the veracity of such information. However, repeated evidence from continued influence research suggests that information that is evaluated as non-credible may still influence users’ beliefs, attitudes, and behavior. This indicates that the processing of misinformation, independent of what the final assessment will be, may elicit persuasive effects. This, in turn, implies that media literacy interventions should not induce people to closely scrutinize misinformation, as is now done in, for example, many general media literacy interventions (which, e.g., prompt readers to verify claims, click on links, and check references), because such activities may increase persuasive effects of misinformation.

A related finding from this review, as well as from other research (Modirrousta-Galian & Higham, 2022), is that media literacy interventions not only improved users’ accuracy in identifying misinformation, but also reduced users’ accuracy in identifying correct information. This effect illustrates that media literacy interventions mostly taught users to be skeptical, rather than to genuinely differentiate between information.

Based on these findings, we argue that media literacy interventions should shift their focus from enhancing information processing skills to improving information selection skills. Instead of teaching users only how to scrutinize misinformation, interventions should equip them with the skills to quickly distinguish between reliable and unreliable information. If misinformation is dismissed during the information selection phase, rather than upon close processing, its persuasive impact is likely to be much smaller. To achieve this, media literacy interventions should not only focus on characteristics of misinformation, but also on those of reliable information. Educating users about principles of credible journalism, such as the processes and standards involved in creating trustworthy content, can help users better recognize quality information. Such an approach thus would shift the focus from merely identifying falsehoods to fostering a deeper understanding of what constitutes reliable content.

Activate Rather Than Solely Educate Media Literacy

The findings of this review highlight the importance of considering a trade-off between the effectiveness of media literacy interventions and the time commitment required from users, as this balance can significantly influence the suitability of different intervention types for various audiences. Regarding users’ levels of media literacy, different audiences may be in different phases of their learning curves. There are specific audiences (e.g., children, elderly users, lower tech-literate users, lower-educated users) who may still have a relatively low level of media literacy. As a consequence, in these audiences, comprehensive educational media literacy interventions will produce a steep learning effect. Implementing such elaborate, and therefore incidental, interventions is recommended for these audiences.

Other audiences, in contrast, have already obtained more advanced media literacy; learning effects from educational media literacy interventions are therefore expected to be much weaker. A recommended approach in such audiences would be to implement interventions that aim to activate extant media literacy rather than to educate new media literacy. Such activating interventions should simply remind users to consider their media literacy in their upcoming activity of selecting and processing online information (e.g., by reminding them to check an article’s source). This may be especially effective because many users enter social media environments with an entertainment motive rather than the motivation to be well-informed (Whiting & Williams, 2013), suggesting that media competency skills are likely to be low in salience.

Activating interventions should be offered at the right moment (i.e., right before exposure to possibly ambiguous information is likely), but can be simple, short, and unobtrusive, and can therefore be repeated often. Repetition is advisable, given that evidence for long-term effects of media literacy interventions is weak; this also implies that interventions should be visually attractive and/or attention-grabbing, in order to avoid desensitization.

Limitations of the Systematic Literature Review

A caveat of this systematic literature review lies in its primary focus on the direct effects of media literacy interventions, thereby potentially disregarding moderating and mediating variables. It is important to recognize that various factors, such as prior attitudes (Amazeen et al., 2022) or perceived information characteristics (e.g., credibility; Vraga et al., 2020) could significantly influence the relationships we found in this review, by strengthening or weakening the effectiveness of certain media literacy interventions. Other factors (e.g., cognitive, emotional and/or excitative responses; Valkenburg & Peter, 2013) could act as underlying mechanisms through which certain media literacy interventions are effective or not. As such, a significant avenue for future research in this area lies in conducting a more comprehensive investigation of the conditional influence of such moderating and mediating variables. Such an approach would lead to a better understanding of how, when, for whom, and under which conditions, which specific types of media literacy interventions prove effective for which specific outcome variables.

The current systematic literature review included only experimental studies, because of their high informational value regarding the effectiveness of interventions. However, other types of studies into the impact of media literacy interventions to combat misinformation effects, such as surveys or social network analyses, could provide additional insights into the real-world applicability and broader societal impact of these interventions. Including such studies would enhance our understanding of how media literacy interventions perform in everyday life situations, beyond the more controlled experimental environments often employed in the experimental studies included in this review.

Moreover, the generalizability of the findings of the current review to a broader population may be limited due to certain characteristics of the samples used in the reviewed studies. The studies mainly focused on effectiveness of media literacy interventions within adult populations, predominantly in Western regions. As a result, the findings of this review especially pertain to a group of people who likely already possess higher baseline levels of media literacy, compared to other demographic groups such as children or the elderly. The observed effectiveness of media literacy interventions might be substantially different for such groups. Notably, nearly half of the studies did not report participants’ educational background, an important omission given the well-established link between education and media literacy. This limits the interpretability of the findings across different socio-educational groups. Consequently, there is a clear need for future research to place a stronger emphasis on the investigation of the effectiveness of media literacy interventions among children and (other) vulnerable groups, in various global and educational contexts, in order to increase the generalizability and robustness of our findings.

Conclusion

Current media literacy interventions combatting the impact of misinformation primarily focus on enhancing users’ ability to detect misinformation. Although generally effective, this narrow focus does not fully reflect the comprehensive set of media literacy competencies people require to navigate misinformation in today’s complex media environment. Media literacy is about more than distinguishing between true and false information; it also involves understanding the broader psychological, persuasive, and societal effects of misinformation. Moreover, focusing predominantly on misinformation detection also does not protect people from the influence misinformation has on their beliefs, attitudes, and behaviors, even after it has been recognized as false. Without addressing these aspects, current interventions thus risk leaving people media skeptic rather than media literate, and at the same time naïve rather than equipped in countering the harmful effects of misinformation.

To overcome these issues, the results of this systematic literature review provide several recommendations for practitioners in the field. These recommendations include broadening the scope of media literacy interventions beyond a narrow focus on misinformation detection. Interventions should prioritize developing comprehensive competencies, such as skills in information selection, understanding the broader psychological, persuasive, and societal impacts of misinformation, and building resilience against its persuasive effects. Practitioners should also consider audience-specific strategies, activating existing media literacy for more advanced users while emphasizing education for less experienced groups. By integrating such recommendations, media literacy interventions can more effectively equip people with the ability to critically engage with (mis)information, mitigate the influence of misinformation, and strengthen their ability to navigate the complexities of our current ambiguous media environment.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is part of the OMEDIALITERACY project “overview of the challenges and opportunities of media literacy policies in Europe,” selected under the European Media and Information Fund managed by Calouste Gulbenkian Foundation. The sole responsibility for any content supported by the European Media and Information Fund lies with the author(s) and it may not necessarily reflect the positions of the EMIF and the Fund Partners, the Calouste Gulbenkian Foundation and the European University Institute.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.