Abstract

One of the most fundamental changes in today’s political information environment is an increasing lack of communicative truthfulness. To explore this worrisome phenomenon, this study aims to investigate the effects of political misinformation by integrating three theoretical approaches: (1) misinformation, (2) polarization, and (3) selective exposure. In this article, we examine the role of fact-checkers in discrediting polarized misinformation in a fragmented media environment. We rely on two experiments (N = 1,117) in which we vary exposure to attitudinal-congruent or incongruent political news and a follow-up fact-check article debunking the information. Participants were either forced to see or free to select a fact-checker. Results show that fact-checkers can be successful as they (1) lower agreement with attitudinally congruent political misinformation and (2) can overcome political polarization. Moreover, dependent on the issue, fact-checkers are most likely to be selected when they confirm prior attitudes and avoided when they are incongruent, indicating a confirmation bias for selecting corrective information. The freedom to select or avoid fact-checkers does not have an impact on political beliefs.

In late 2016, especially when the U.S. Election day approached, an alarming lack of honesty in political communication became a major concern within the public debate. Ever since, numerous websites that appear credible but are fake in nature have popped up. As a reaction to this uncontrolled spread of misinformation, political fact-checking emerged as a new journalistic practice and medium (Fridkin, Kenney, & Wintersieck, 2015). So far, studies provide mixed evidence on the effectiveness of political fact-checkers and have not explicitly taken into account that audiences nowadays can actively select or avoid this type of corrective information. To advance our understanding of the effectiveness of fact-checkers in discrediting partisan misinformation in the contemporary high-choice media environment, this study relies on three related theoretical approaches: (1) misinformation, (2) polarization, and (3) selective exposure.

First, the noticeable increase in the dissemination of fake news, in scientific literature known as “misinformation” (Waisbord, 2015), has resulted in concerns about the effects of misperceptions in multiple contexts, such as political elections (e.g., Thorson, 2016). Relatedly, it has been argued that we are currently living in an era characterized by postfactual relativism (e.g., Van Aelst et al., 2017). This implies that the epistemic premises of information and factual knowledge have increasingly become a source of doubt and distrust. This development poses a severe threat to democratic decision-making because citizens and politicians can no longer agree on the factual information that forms the input for policy making.

Second, as misperceptions primarily persist when tightly intertwined with strongly held beliefs or ideologies, misinformation is inherently related to political polarization. The efforts of numerous fact-checkers such as PolitiFact, The AP Fact Check, and FactCheck.org to determine the accuracy of political claims can be successful at times (Cobb, Nyhan, & Reifler, 2013; Lewandowsky, Ecker, Seifert, Schwarz, & Cook, 2012). However, individuals tend to reject corrections that run counter to their views (Nyhan & Reifler, 2010). At the same time, people do not always actively avoid information that counters their priors (e.g., Garrett, 2009). Thus, in a politically polarized context, the question is how effective fact-checkers are in refuting partisan political news.

Third, this problem becomes even more salient when considering the impact of misinformation and fact-checkers in the context of the increasingly fragmented, high-choice media environment. The literature on both political polarization and selective exposure postulates that when self-selecting news, people avoid information that counters their priors, while searching for information congruent with their existing beliefs (Bennett & Iyengar, 2008). Thus, audiences might play a central role in creating their own biased news environment where sticking to prior beliefs outweighs the need for factual correctness (Taber & Lodge, 2006). The term selective exposure is used here to denote that audiences’ selection preferences might take the form of a confirmation bias (Knobloch-Westerwick, Mothes, & Polavin, 2017), which casts doubt on the assumption that partisans actively seek out, or are exposed to, corrective information that challenges their views (Iyengar & Hahn, 2009; Stroud, 2008).

Against this backdrop, this study aims to make two key contributions. First, the findings of research exploring whether fact-checkers can effectively discredit misinformation in partisan settings are still inconclusive (e.g., Fridkin et al., 2015; Thorson, 2016). Second, most of these experimental studies required individuals to read fact-checking messages that they may not normally choose to consume, ignoring potential confirmation biases in the self-selection or avoidance of such information. Hence, the current research aims to advance our understanding of the impact of fact-checkers by testing the effectiveness of corrective information in a self-selection environment.

This study empirically investigates the effects of fact-checkers in response to partisan news among polarized audiences in a selective exposure media environment. We rely on two experiments that vary respondents’ exposure to attitudinal-congruent or incongruent political news and a follow-up fact-check article debunking the information. To simulate citizens’ patterns of self-selection or avoidance, participants were either free to select or avoid a fact-checker or forced to read one. Study 1 tests these effects for the issue of refugees entering the United States and Study 2 for climate change policies.

Misinformation and Corrective Efforts

To understand the impact of the spread of incorrect information, the concept of misinformation has gained momentum in the field of communication science (e.g., Waisbord, 2015). Misinformation is information that is presented as accurate but later found to be false (Thorson, 2016). Such false information can eventually lead to false beliefs or factual misperceptions, posing vexing problems for democratic decision-making (Reedy, Wells, & Gastil, 2014). At times, corrective information can counter the effects of misinformation on false beliefs (e.g., Cobb et al., 2013; Lewandowsky et al., 2012; Wood & Porter, 2018). In this setting, fact-checkers have been put forward as a potential solution for correcting the rising spread of misinformation that has become part of the contemporary mass communication landscape (e.g., Fridkin et al., 2015; Thorson, 2016). By thoroughly testing the claims foregrounded in political news stories, fact-checkers demonstrate which news can be trusted and which news is incorrect, thereby guiding citizens to make the most accurate decisions. Because fact-checkers make instrumental use of message simplicity and an emphasis on facts, both recommended elements of corrective information (Lewandowsky et al., 2012), they might be a successful way of countering misinformation. However, before assessing the effects of corrective efforts, it is crucial to assess who selects or avoids this type of political information.

Selective Exposure to Fact-Checkers

Audiences can nowadays actively seek out or avoid political information based on their political priors. To incorporate the phenomenon of self-selection in high-choice media settings, we rely on the theories of cognitive dissonance and selective exposure (e.g., Bennett & Iyengar, 2008; Stroud, 2008) and explain how selection processes can result in confirmation biases. Selective exposure can be defined as the process by which an individual’s prior beliefs guide the selection of new information in their media environment (e.g., Iyengar & Hahn, 2009; Stroud, 2008).

Although selection processes may not always operate consciously, the selection of reinforcing rather than challenging views fulfills the need to maintain a consistent image of the self (Festinger, 1957). The desire to avoid cognitive dissonance can result in a confirmation bias, meaning that audiences only select information congruent with their prior attitudes (Knobloch-Westerwick et al., 2017). Based on the psychological mechanism of cognitive dissonance, individuals may restrict themselves largely to messages that align with preexisting attitudes, resulting in negative consequences for political discourse and polarization (Bennett & Iyengar, 2008). While a debate exists on whether the avoidance of attitude-incongruent information is actually as strong as the preference for attitude-congruent content (Garrett, 2009), there seems to be growing consensus that audiences generally approach political news with a confirmation bias (e.g., Knobloch-Westerwick et al., 2017).

In sum, especially in today’s high-choice media environment, individuals with certain priors are biased toward selecting politically congruent information (Stroud, 2008). This selection bias may also hold in the context of correcting misinformation. Audiences might avoid fact-checkers debunking information that is in line with their attitudes and select those that correct incongruent information. Against this backdrop, we raise the first hypothesis on selective exposure to fact-checkers:

Correcting Misinformation Congruent With Existing Political Beliefs

When fact-checkers are selected, the question is how effective they are in debunking incongruent or congruent misinformation. Despite the potential of corrective efforts, it has been argued that misinformation is hard to correct, even after scientific consensus on the falsehood of the claims (e.g., Poland & Spier, 2010). Even after clear retractions, misinformation primarily continues to influence people’s attitudes when it is tightly intertwined with strongly held partisan beliefs or ideology (Lewandowsky et al., 2012; Taber & Lodge, 2006; Thorson, 2016). In line with the premises of motivated reasoning and cognitive dissonance theory, this persistence could be explained by the notion that individuals evaluate information in a biased manner in order to remain consistent with existing beliefs (Jerit & Barabas, 2012; Taber & Lodge, 2006). Thus, information in line with prior-held beliefs is falsely evaluated as more credible and reliable than disconfirming information. Empirical studies in the context of U.S. partisanship confirm that political claims in line with people’s political ideology are more likely to be accepted, regardless of their veracity, and that belief-incongruent content is more likely to be rejected (e.g., Nyhan & Reifler, 2010). In brief, the effects of misinformation may be dependent on the audience’s partisan lenses, and the effectiveness of fact-checkers might depend on people’s existing political preferences, that is, on the polarization of the audience (Price, Cappella, & Nir, 2002; Thorson, 2016).

Despite the alleged perseverance of misinformation on partisans’ perceptions, a growing body of literature seems to indicate that corrective attempts such as fact-checkers have the potential to change perceptions in the desired direction (e.g., Wood & Porter, 2018). Accordingly, a meta-analysis of the psychological efficacy of messages countering misinformation (Chan, Jones, Hall Jamieson, & Albarracín, 2017) concluded that the introduction of new and detailed counterarguments to fact-checkers should increase acceptance of the debunking message. Yet, some inconsistencies are identified in prior research. These could be driven by different conceptualizations of the dependent variable. Fridkin et al. (2015), for example, show that fact-checks can have an impact on the acceptance of claims made in a political advertisement, and that there is only a mild role of motivated reasoning in the processing of fact-check’s claims. Shin and Thorson (2017) found a stronger role of partisanship, which may be explained by their focus on more affective and indirect dependent variables: out-group negativity and hostility toward fact-checkers. Because we rely on agreement with the claims made in the news story as the dependent variable, the basic input for accurate democratic decision making and a key indicator of whether corrective information can debunk misperceptions, we expect that fact-checkers can be successful even in discrediting attitude-congruent information. Although the outcome variable does contain references to (partisan) identity, we measure the level of agreement with the positions emphasized in news articles, rather than citizens’ feelings toward groups in society.

At large, extant research indicates that fact-checking can have an effect even among people that perceive the content as attitudinal-incongruent (Nyhan, Porter, Reifler, & Wood, 2017; Wood & Porter, 2018). In line with Fridkin et al. (2015), we assume that fact-checks that challenge pro- or counter-attitudinal news are more powerful and relevant than fact-checkers that confirm statements made in news items. For this reason, we exclusively focus on fact-checkers that debunk misinformation, that is, either attitudinal-congruent or incongruent, which is in line with the democratic challenge of misperceptions as a result of mis- or disinformation in the current postfactual era (Van Aelst et al., 2017). We look at situations where audiences are experimentally forced to read a fact-checker (i.e., forced exposure media settings) and situations where they are free to select or avoid a fact-checker (i.e., selective exposure media environment). We forward the following hypotheses:

Ceteris paribus, we expect that citizens are sensitive to the rebuttals offered by fact-checkers and adjust their issue agreement accordingly.

Countering Polarization in Response to Partisan Political News

Corrective attempts may not only correct misperceptions; they may also reduce the gap between partisans by challenging their prior attitudes. In line with this, it has been argued that exposure to counter-attitudinal information may actually counter political polarization (e.g., Matthes & Valenzuela, 2012), operationalized in this research as the divergence in people’s attitudes regarding political issues such as immigration or climate change. Moreover, fact-checkers are found to have a modest effect on correcting “extreme” attitudes (e.g., Wood & Porter, 2018). Specifically, alternative perspectives on an issue may enhance citizens’ understanding of the motivations that drive alternative issue positions, which may enhance openness to other perspectives on the issue at hand (Price et al., 2002). Thorson (2016), in contrast, argues that misinformation is not effectively discredited by fact-checkers in the context of polarized audiences. In line with this, Shin and Thorson (2017) have demonstrated that partisans select and share only those fact-checkers that confirm their priors. However, because we focus on content-related issue agreement, we do believe that political polarization can be countered by offering factual challenging information (e.g., Lewandowsky et al., 2012). We thus expect that fact-checkers’ correction of misperceptions resulting from exposure to misinformation can reduce the gap between people’s political attitudes:

The Effects of Selective Exposure to Fact-Checkers

Next to selection and attitudinal effects, it is important to explore how the freedom to select congruent versus incongruent fact-checkers affects citizens’ agreement with political (mis)information. Theories of motivated reasoning assume that people are more likely to select and uncritically accept congruent information, whereas they avoid or counter-argue disconfirming information (Taber & Lodge, 2006). In the context of this confirmation bias, self-selected information is more likely to be congruent with people’s priors, and therefore also more persuasive. As a consequence, people’s priors will be reinforced for cognitive consistency motives (Garrett & Stroud, 2014). In line with this mechanism, we raise the final hypothesis of this study:

The effects of selective exposure to fact-checkers are tested in the context of two polarized issues. Both issues are highly visible in public opinion and media debates, yet they respond to different aspects of partisan identities and issue ownership. In this regard, issue ownership can be defined as the policy positions that citizens associate with certain political parties (Van der Brug, 2004). For Study 1, we look at the issue of immigration and issue positions revolving around the debate on the extent to which refugees should be allowed to enter the United States. In Study 2, climate change policies are central, highlighting the different opinions on whether climate change has human causes. Immigration resonates with nativist/exclusionist identities and is mainly an issue owned by the right-wing. Climate change, however, is an issue that may transcend national borders. This issue position may be regarded as owned by the left.

Study 1: The Effects of Fact-Checkers in Discrediting Partisan News on Immigration

Method Study 1

Design

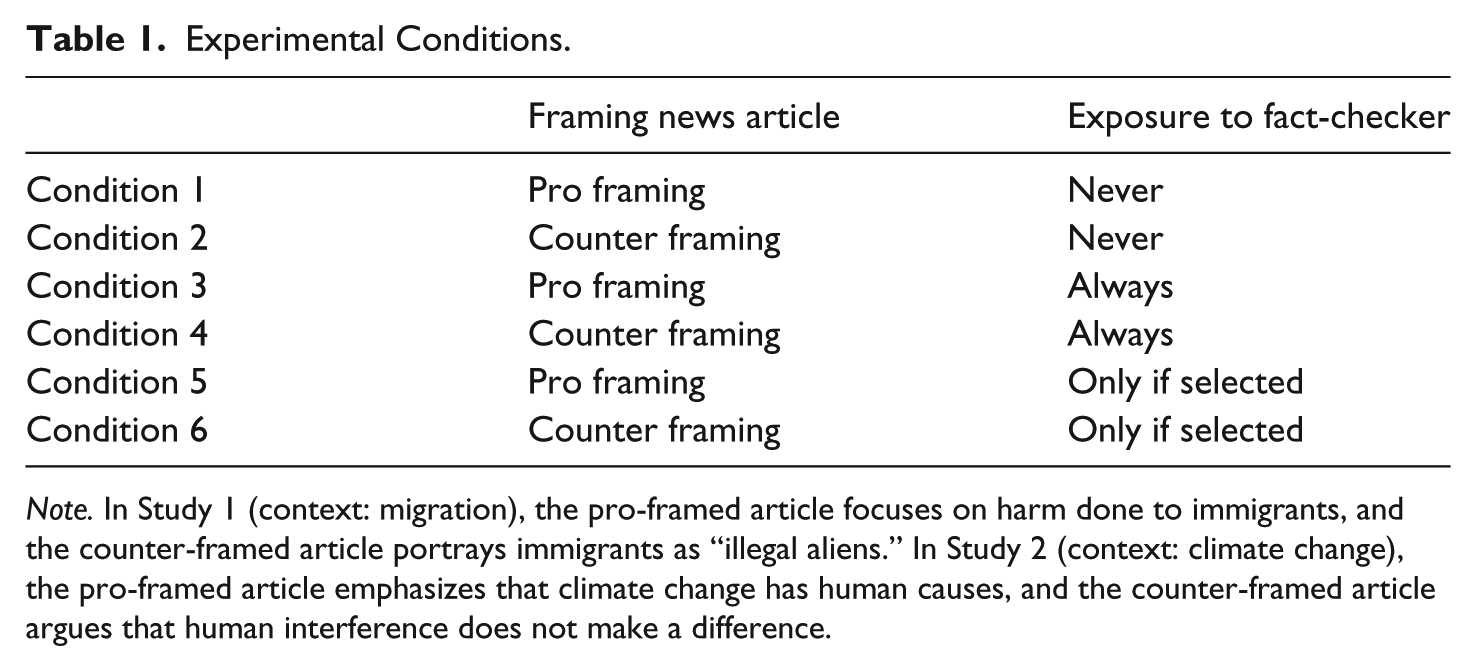

The first experiment concerns a 2 (attitudinal stance: pro- versus counter-immigration framing of news article) × 3 (exposure to a fact-checker: no fact-checker versus forced exposure to a fact-checker versus free to select or avoid fact-checker) between-subject factorial design (see Table 1). Notably, in the “free-to-select” condition, participants either self-select or avoid the fact-checker after seeing its headline, creating additional groups. To assess whether the immigration news item was congruent with participants’ prior attitudes, the sample was randomly divided into equally sized groups of respondents that either supported or opposed immigration. Respondents were randomly assigned to the experimental conditions.

Experimental Conditions.

Note. In Study 1 (context: migration), the pro-framed article focuses on harm done to immigrants, and the counter-framed article portrays immigrants as “illegal aliens.” In Study 2 (context: climate change), the pro-framed article emphasizes that climate change has human causes, and the counter-framed article argues that human interference does not make a difference.
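The structure of the design can be illustrated with a minimal sketch. It assumes only what is described above: six randomly assigned conditions, with the free-to-select condition later split by participants' own choice. The string labels and function names are hypothetical and chosen for illustration.

```python
from itertools import product

# Factor levels as described in the design; the labels are illustrative.
ARTICLE_STANCE = ("pro", "counter")
FACT_CHECK_EXPOSURE = ("none", "forced", "free_to_select")


def experimental_conditions():
    """Return the 2 x 3 = 6 randomly assigned conditions."""
    return list(product(ARTICLE_STANCE, FACT_CHECK_EXPOSURE))


def analysis_groups():
    """Split each free-to-select condition by the participant's own choice.

    Selecting or avoiding the fact-checker is observed rather than assigned,
    so the split yields groups in addition to the randomized conditions.
    """
    groups = []
    for stance, exposure in experimental_conditions():
        if exposure == "free_to_select":
            groups.append((stance, "selected"))
            groups.append((stance, "avoided"))
        else:
            groups.append((stance, exposure))
    return groups
```

Under these assumptions, the sketch yields six conditions and eight groups, which matches the counts used in the analyses.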

Sample

U.S. participants were recruited from Amazon Mechanical Turk (MT), a platform for crowd-sourcing that allocates financial rewards to participants that complete a task. Numerous studies that compared participants from MT to traditional laboratory subjects have demonstrated that MT subjects are not outperformed when it comes to reliability measures of personality scales (see Buhrmester, Kwang, & Gosling, 2011). MT replications of classical experiments provide similar results (Sprouse, 2011). Next to this, MT participants are substantially more nationally representative than college student samples (Buhrmester et al., 2011).

A total of 881 individuals accessed the link, and the study was completed by 550 participants who successfully answered an attention-check item situated in the beginning of the experiment (completion rate 62.4%). The sample reflected a good representation of the voting population in terms of age, gender, partisanship, and level of education. The mean age of participants was 37.47 years (SD = 11.39 years). In terms of gender, 50.2% were males, 49.1% were females, and two participants did not identify with this classification (other). Regarding the distribution of education, 36.2% was lower educated, 16.0% was higher educated, and 47.8% had a moderate level of education. In terms of partisanship, 33.1% self-identified as Independent, 30.2% as Republican, and 36.7% as Democrat, which is close to recent representative polling data (e.g., Gallup, 2016).

Procedure

Participants accessed the survey via an online link. First, they had to give informed consent for their participation. Next, they completed the attitude question that asked them for their general support of the issue of migrants coming to the United States. We included quotas to ensure that the sample distribution reflected actual partisan boundaries in the United States. This question was followed by the pretest questionnaire including demographics and control variables. After this, participants were randomly assigned to the six experimental conditions. Every participant first read a short online article framed as either pro- or counter-immigration. In a second screen, in the selective exposure conditions, people additionally had the freedom to avoid or select a fact-checker, based on seeing the headline of the fact-checker that evaluated the news article they just read as “mostly false.” In the forced exposure conditions, they were forced to read the fact-checker. In the no fact-checker condition, they did not see one. The stimuli were visible for at least 20 seconds. This minimum forced-reading time resulted from pretesting and corresponds to the minimal time people needed to process the message. After participants completed reading, they were forwarded to the posttest questionnaire, in which questions for the manipulation checks and dependent variables were included. Upon completion, participants were thanked and debriefed.

Independent variables and stimuli

Participants were first exposed to a short online news article on immigration. The news article was manipulated to provide arguments either in support of immigration and immigration policies or against this position. As a result, the article was either congruent or incongruent with respondents’ attitudes on the issue of immigration. Our stimuli were informed by the central framing components identified in content analyses of immigration news (e.g., S. H. Kim, Carvalho, Davis, & Mullins, 2011). Specifically, they were structured around a problem definition, causes, and treatment for immigration, the central components of emphasis frames (Entman, 1993).

The counter-immigration frame focused on the problem aspects of immigrants as “illegal aliens,” as well as the causes of illegal immigration based on the failure of the Democratic government’s policy. The pro-immigration frame focused on the harm done to these immigrants and highlighted the troubled economy of the immigrants as a cause. Blame was attributed to the Republican Party. Based on the content features identified by S. H. Kim et al. (2011), we designed two versions of partisan political news that were structured by these three key components known to constitute the framing of immigration in U.S. media (see Online Appendix A for stimuli). The articles contained both an attitudinal and a partisan stance on the issue of immigration. Moreover, both articles were written in a way that respondents could not know if they were factual or not. In doing so, the articles could serve as misinformation or at least as a debatable stance on the issue that would later, dependent on the condition, be debunked by a fact-checker.

The stimuli of the fact-checkers were informed by templates of actual fact-checker websites, such as PolitiFact, The AP Fact Check, and FactCheck.org. We termed the fact-checkers “PolitiCheck,” which is in line with the stimuli used by Fridkin et al. (2015). Similar to these existing fact-checker websites, the judgment of the corrective attempt was already visible in the title/first screen (PolitiFact shows the verdict using a thermometer in the first screen). The fact-checker refuted all claims made in the article, structured by the problem definition, the causal interpretation, and the treatment recommendation. The fact-checker ended with the conclusion that, based on the facts, the article was “mostly false” (see Fridkin et al., 2015). The refutation of claims was founded in empirical evidence, in line with the type of content typically used in fact-checkers (see Online Appendix A).

Measuring attitudinal congruence

To divide participants into different groups based on their political attitudes toward immigration, the following question was asked at the start of the survey: “We want to ask you a question about the issue of migrants coming to the United States. On a scale from 1 to 7, please indicate how strongly you support or oppose that immigrants are entering the United States.” Participants who scored 1 through 3 were labeled as opposing migration; those who scored 5 through 7 were labeled as supporters. Respondents who answered 4, labeled as neither oppose nor support, were excluded from the survey. To make sure this one-item measure is a valid and stable representation of participants’ immigration attitudes, we included a five-item immigration attitudes scale at a different point in the survey (Cronbach’s α = .92, M = 3.96, SD = 1.74). The very strong correlation between both measures indicates that the filter question validly tapped into participants’ general immigration attitudes (r = .93, p < .001).

Dependent variables

We asked participants to what extent they agreed with the forwarded problem definition, causal interpretation, and treatment recommendation for the versions of the immigration story they were exposed to. In total, we used five items to measure agreement with the pro-immigration story (e.g., “The well-being of immigrants coming to the United States is threatened severely”; Cronbach’s α = .89, M = 3.43, SD = 1.80) and five items to measure agreement with the anti-immigration story (e.g., “Immigrants coming to the U.S. are responsible for major increases in crime rate”; Cronbach’s α = .94, M = 3.54, SD = 1.60). The measures were congruent with the manipulated dimensions in the treatment, and thus tapped into agreement with the issue positions forwarded in the pro- and counter-framed article. Measures were equivalent for participants exposed to a fact-checker and participants not exposed to a fact-checker. All items were measured on 7-point scales (see Online Appendix B for item measures).

Self-selection was assessed with a one-item question: “Thinking of your everyday life, would you, as a follow-up, read the following fact-checker of the article you just read?”—1 = no (35%), 2 = yes (65%).

Manipulation checks

The manipulation of the article’s attitudinal stance was successful, F(1, 535) = 788.15, p < .001. Specifically, participants in the pro-immigration condition perceived the article as significantly and substantially more inclined to support immigration (M = 6.05, SD = 1.13) than participants in the anti-immigration conditions (M = 2.39, SD = 1.50). The manipulation of the fact-checker’s stance was also successful, F(1, 293) = 256.99, p < .001. This means that people in the fact-check conditions were substantially and significantly more likely to perceive that the fact-checker refuted the article’s claims (M = 6.50, SD = 1.23) than in the other conditions (M = 2.32, SD = 1.83). Finally, people in the selective exposure conditions were significantly more likely to perceive freedom of selecting a fact-checker (M = 5.85, SD = 1.98) than participants in the forced exposure conditions (M = 2.78, SD = 2.26), F(3, 294) = 49.73, p < .001.

Analyses

First, we computed new conditions based on the attitudinal congruence of the newspaper article and the fact-checkers. Articles and fact-checkers were coded as congruent if the message was in line with participants’ prior immigration attitudes and were coded as incongruent if the message counterargued their views on immigration. Specifically, scores ranging from 1 through 3 on the 7-point immigration scale were regarded as congruent with the anti-immigration stimuli and incongruent with the pro-immigration stimuli. Scores 5 through 7 were interpreted as congruent with the pro-immigration story and incongruent with the counter-immigration story. Participants who scored the midpoint on the scale (4) were not retained in the analysis. The same coding procedure was applied to the fact-checkers.
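The coding rule for the news article can be written out as a short sketch. The function names and string labels are hypothetical. Only the cut-offs (1 through 3, 4, and 5 through 7) come from the description above, and the sketch covers the article only, not the fact-checker.

```python
from typing import Optional


def prior_stance(score: int) -> Optional[str]:
    """Map the 7-point filter item to a prior stance; the midpoint is excluded."""
    if not 1 <= score <= 7:
        raise ValueError("score must be on the 1-7 scale")
    if score <= 3:
        return "oppose"
    if score >= 5:
        return "support"
    return None  # midpoint (4): not retained in the analysis


def article_congruence(score: int, article_frame: str) -> Optional[str]:
    """Code an article framed 'pro' or 'counter' against the prior stance."""
    if article_frame not in ("pro", "counter"):
        raise ValueError("article_frame must be 'pro' or 'counter'")
    stance = prior_stance(score)
    if stance is None:
        return None
    # Supporters match the pro frame; opponents match the counter frame.
    matches = (stance == "support") == (article_frame == "pro")
    return "congruent" if matches else "incongruent"
```

For example, a participant scoring 2 who reads the counter-immigration article is coded as congruent, whereas the same participant reading the pro-immigration article is coded as incongruent.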

In the next steps, multinomial logistic regression analyses were used to predict the likelihood of self-selection to congruent and incongruent fact-checkers. A series of ANOVAs were used to compare mean scores on the resulting conditions (6) and groups (8) and test the effect on agreement with the story and polarization.

Results Study 1

Selective exposure and fact-checkers

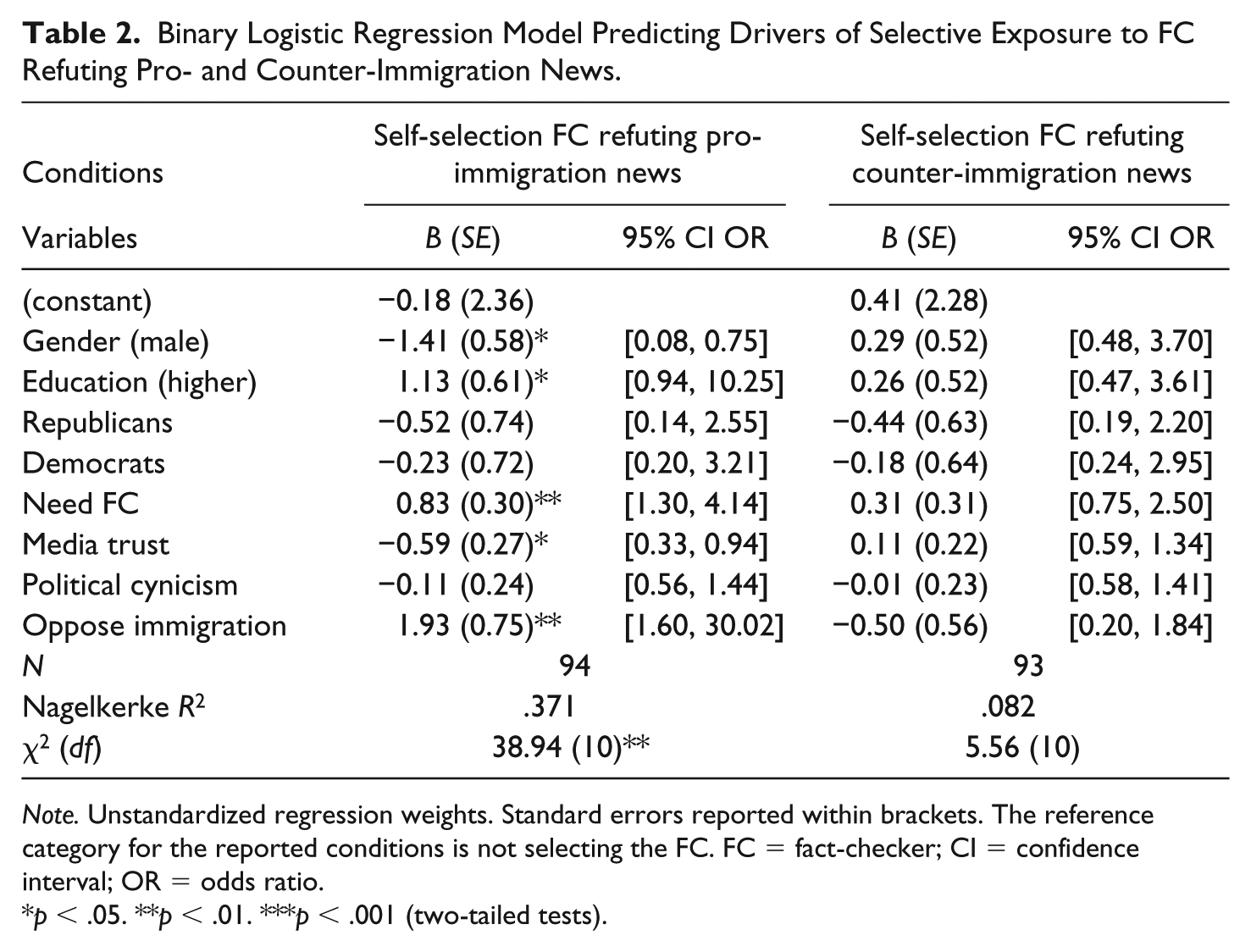

To test H1, we estimated a logistic regression model that explored the likelihood of self-selection into fact-checkers based on issue attitudes and partisanship, while controlling for demographics, political attitudes, and media use variables (see Table 2). As can be seen in Table 2, participants that oppose immigration are significantly more likely to select a fact-checker that refutes pro-immigration news than people who support immigration. Participants faced with the choice to select an incongruent fact-checker are more likely to avoid the disconfirming information offered in the rebuttal. This supports H1.

Binary Logistic Regression Model Predicting Drivers of Selective Exposure to FC Refuting Pro- and Counter-Immigration News.

Note. Unstandardized regression weights. Standard errors reported within brackets. The reference category for the reported conditions is not selecting the FC. FC = fact-checker; CI = confidence interval; OR = odds ratio.

*p < .05. **p < .01. ***p < .001 (two-tailed tests).
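For readers less familiar with logistic regression output, the relation between an unstandardized weight, its standard error, and the odds ratio can be sketched as follows. The sketch assumes that the reported intervals are Wald-type intervals on the odds-ratio scale, which the table note does not state. The function name and the input values are hypothetical and are not taken from Table 2.

```python
import math


def odds_ratio_with_ci(b: float, se: float, z: float = 1.96):
    """Convert a logit coefficient and its standard error to an OR and Wald CI."""
    odds_ratio = math.exp(b)
    interval = (math.exp(b - z * se), math.exp(b + z * se))
    return odds_ratio, interval


# Hypothetical inputs for illustration only; these are not values from Table 2.
odds_ratio, (ci_low, ci_high) = odds_ratio_with_ci(b=0.80, se=0.30)
```

An odds ratio above 1 indicates higher odds of selecting the fact-checker relative to the reference category (not selecting it), and an interval that excludes 1 corresponds to a significant weight at the chosen level.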

The effects of exposure to fact-checkers discrediting misinformation on immigration

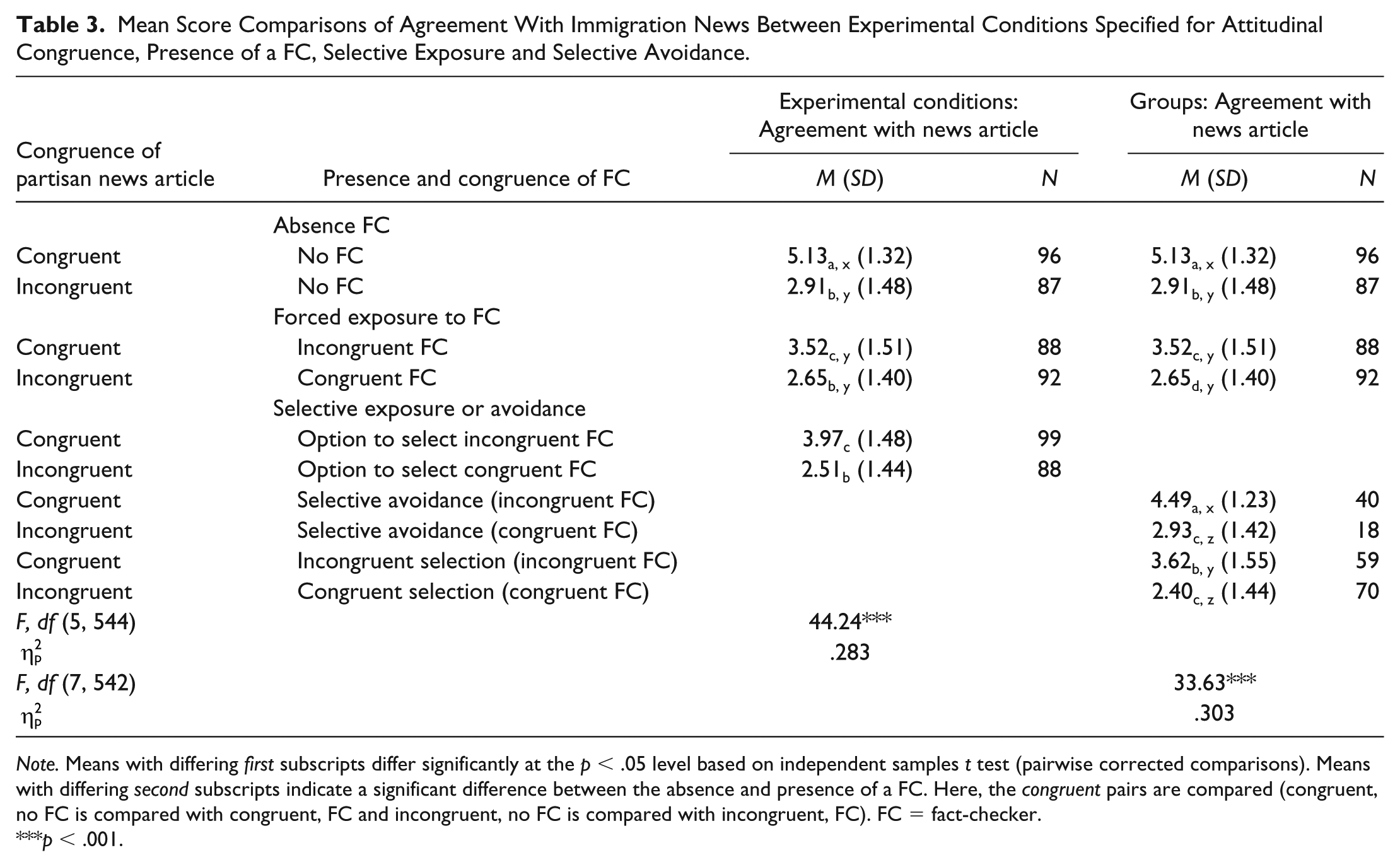

We expected that fact-checkers have a negative effect on participants’ agreement with the statements made in the rebutted news item (H2). To test these expectations, two ANOVA models were run, one based on the random exposure to the six experimental conditions and one including the comparison of groups that resulted from participants’ selection or avoidance of a fact-checker in the free-to-select condition (see Table 3). The results of the factorial ANOVA with the experimental conditions (Table 3, model experimental conditions) indicate a significant main effect of the conditions, F(5, 544) = 44.24, p < .001,

Mean Score Comparisons of Agreement With Immigration News Between Experimental Conditions Specified for Attitudinal Congruence, Presence of a FC, Selective Exposure and Selective Avoidance.

Note. Means with differing first subscripts differ significantly at the p < .05 level based on independent samples t test (pairwise corrected comparisons). Means with differing second subscripts indicate a significant difference between the absence and presence of a FC. Here, the congruent pairs are compared (congruent, no FC is compared with congruent, FC and incongruent, no FC is compared with incongruent, FC). FC = fact-checker.

p < .001.

Fact-checkers and political polarization

In the next step, we tested if fact-checkers can reduce polarization (H3). In both the forced and selective exposure conditions, we see that the agreement scores of partisans are far apart without exposure to a fact-checker. In other words, participants exposed to congruent news without a fact-checker agree significantly and substantially more with the news (forced: M = 5.13, SD = 1.32; selective: M = 4.49, SD = 1.23) than participants exposed to incongruent news (forced: M = 2.91, SD = 1.48; selective: M = 2.93, SD = 1.42). This indicates a strong main effect of partisanship (forced: ΔM = 2.22, ΔSE = 0.21, p < .001, 95% CI = [1.61, 2.83]; selective: ΔM = 1.55, ΔSE = 0.40, p < .001, 95% CI = [0.28, 2.84]). Comparing the mean scores between incongruent and congruent news exposure with exposure to a debunking fact-checker, we see that the difference decreases (forced: ΔM = 0.87, ΔSE = 0.21, p = .002, 95% CI = [0.19, 1.54]; selective: ΔM = 1.21, ΔSE = 0.25, p < .001, 95% CI = [0.42, 2.00]). For forced exposure to a fact-checker, the agreement scores of partisans are significantly less far apart as compared with no exposure to fact-checkers (ΔM = 1.35, ΔSE = 0.02, p < .001, 95% CI = [1.31, 1.39]). The difference is also significant for the selective exposure groups, albeit less substantial, ΔM = 0.34, ΔSE = 0.05, p < .001, 95% CI = [0.25, 0.44]. This supports H3.
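The arithmetic behind these comparisons can be retraced with a minimal sketch. It uses only the means and differences reported above. The variable and function names are hypothetical, and the sketch retraces the point estimates only, not the standard errors or confidence intervals.

```python
def polarization_gap(congruent_mean: float, incongruent_mean: float) -> float:
    """Gap in story agreement between congruent and incongruent news exposure."""
    return round(congruent_mean - incongruent_mean, 2)


# Gaps without a fact-checker, recomputed from the condition means in the text.
forced_gap_no_fc = polarization_gap(5.13, 2.91)     # reported as 2.22
selective_gap_no_fc = polarization_gap(4.49, 2.93)  # reported as 1.55

# Gaps with a debunking fact-checker, taken directly from the reported values.
forced_gap_fc = 0.87
selective_gap_fc = 1.21

# Reduction in the gap; the selective case starts from the reported 1.55.
forced_reduction = round(forced_gap_no_fc - forced_gap_fc, 2)
selective_reduction = round(1.55 - selective_gap_fc, 2)
```

The forced-exposure values reproduce the reported gap of 2.22 and reduction of 1.35. For selective exposure, the rounded means give a gap of 1.56 rather than the reported 1.55, presumably because the reported difference was computed from unrounded means. Starting from the reported 1.55 reproduces the reduction of 0.34.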

The effect of selective exposure

In the next step, we explored how the freedom of selective exposure altered the effects of fact-checkers on participants’ agreement with the news story (H4). The interaction effect of selective exposure and fact-checkers on agreement with the story was nonsignificant, F(3, 546) = 0.21, p = .89,

Study 2: The Effects of Fact-Checkers in Discrediting Partisan News on Climate Change

Our first experiment revealed that fact-checkers have a substantial effect in discrediting partisan political news on immigration and that fact-checkers can even reduce political polarization. In the next step, we aim to explore if these conclusions are also valid for fact-checkers that refute claims on climate change policies. Climate change may also be regarded as a polarized issue, but the source of identification and (partisan) issue ownership is different.

Method Study 2

Design

The design of the second experiment is similar to the first experiment. Participants were again randomly allocated to one of the six conditions. Prior to exposure, participants were divided into groups that either supported (pro-climate change) or opposed (counter-climate change) climate change policies. Pro-climate change meant that participants believed that climate change had human causes, and that human action is needed to avert the threat. Counter-climate change reflected the position that evidence regarding climate change’s existence is unconvincing, and that human interference does not make a difference. Pro-climate change news was framed as partisan news supporting Democrats, and counter-climate news was framed as partisan news supporting Republicans.

Sample

Amazon MT was used to recruit participants. A total of 1,221 individuals accessed the link, and the study was completed by 567 participants (completion rate 46.4%). The relatively high proportion of incompletes is caused by the attitude-congruent filter.

The sample reflected a good representation of the voting population in terms of age, gender, and level of education. The mean age of participants was 37.37 years (SD = 11.72 years). In terms of gender, 53.6% were male, 46.0% were female, and two participants did not identify with this classification (other). Regarding the distribution of education, 33.2% were lower educated, 18.3% were higher educated, and 48.5% had a moderate level of education. Regarding partisan identities, 30.0% self-identified as Independent, 32.8% as Republican, and 37.2% as Democrat.

Procedure

The experiment followed exactly the same procedure as reported in Study 1.

Independent variables and stimuli

A news article on climate change was manipulated along the lines of the problem definition, causal interpretation, and treatment recommendation (Entman, 1993). In the pro-attitudinal framing, a significant role was attributed to human interference in both the causes and treatments of climate change. Moreover, the causal and treatment interpretations were framed along partisan lines: the counter-attitudinal frame attributed blame to the Democrats for wasting money on climate change, and for failing to see the “real” problems facing the United States. In the pro-attitudinal frame, in contrast, the Republicans were blamed for not spending enough money on climate change policies, and for failing to acknowledge this issue as a salient threat to society (see Online Appendix A for stimuli). The fact-checkers refuted the claims on the problem definition, causal interpretations, and treatment recommendation highlighted in the articles. The layout and evidence type were similar to the stimuli used in the first experiment.

Manipulation checks

The manipulations of the article’s attitudinal stance were successful, F(1, 511) = 1,566.70, p < .001. Specifically, the pro-attitudinal frame (pro-Democrats) was perceived as significantly more congruent with the issue position supporting human interference (M = 5.91, SD = 1.17) than the counter-attitudinal frame (M = 1.88, SD = 1.14). The same pattern was found for the counter-attitudinal frame. The manipulation of selective exposure was also perceived by participants, F(1, 271) = 130.38, p < .001, which indicates that participants in the selective exposure conditions were more likely to believe they had the freedom to select a fact-checker (M = 5.51, SD = 2.21) than participants in the forced exposure conditions (M = 2.51, SD = 2.03). Finally, the fact-based counterargumentative stance of the fact-checker was also picked up by participants. For example, the refutation of the claim that Republicans spend little on climate change was recognized much more by those exposed to a fact-checker concluding that Republicans actually spend a relatively high amount of money on climate change (M = 6.20, SD = 1.34) compared with those not exposed to this fact-checker (M = 1.95, SD = 1.61), F(1, 271) = 560.16, p < .001. The same pattern was found for the other refuted claims.

Measures for attitudinal congruence

Attitudinal congruence was assessed prior to exposure by asking the following question: Before we proceed with the survey, we want to ask you about your opinion on the issue of climate change policies in the United States. On a scale from 1 to 7, please indicate how strongly you support or oppose the position that Earth is warming due to human activity, and that we need to take immediate action to counter this development?

Participants’ scores were classified as either supporting or opposing this position on climate change. These respondents were randomly allocated to pro- versus counter-attitudinal stimuli in the next step. Again, as a robustness check, we assessed the validity of the congruency measure with a full six-item scale on climate change attitudes later in the survey (Cronbach’s α = .87, M = 4.52, SD = 2.03). Again, we found a very strong correlation between the one-item measure and the full scale (r = .932, p < .001).
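For readers less familiar with the reliability coefficient reported for the multi-item scale, the sketch below shows how Cronbach’s α is commonly computed from item-level responses. It is a minimal illustration under stated assumptions: the function name and the example responses are hypothetical and are not the study data.

```python
from statistics import pvariance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item columns (one list of scores per item)."""
    k = len(items)
    if k < 2:
        raise ValueError("alpha requires at least two items")
    # Total scale score per respondent: sum across items.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(pvariance(column) for column in items)
    total_variance = pvariance(totals)
    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Hypothetical responses of five participants on three 7-point items.
example_items = [
    [7, 6, 2, 5, 1],
    [6, 6, 1, 5, 2],
    [7, 5, 2, 4, 1],
]
alpha = cronbach_alpha(example_items)
print(f"alpha = {alpha:.2f}")
```

The coefficient approaches 1 when items covary strongly, which is why high values are read as evidence that the items tap the same underlying attitude.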

Dependent variables

We asked participants to what extent they agreed with the article’s problem definition, causal interpretation, and treatment recommendation. In total, we used six items to tap agreement with the pro-attitudinal climate change article (e.g., “It is detrimental to spend less money on the fight against climate change”; Cronbach’s α = .97, M = 3.43, SD = 1.97) and seven items to measure agreement with the counter-attitudinal climate change article (e.g., “The measures proposed by the Democrats are not effective in fighting global warming”; Cronbach’s α = .94, M = 3.66, SD = 1.77). Both were measured on 7-point scales (see Online Appendix B). The measure for selective exposure was identical to the variable used in Study 1.

Analyses

The analysis strategy for Study 2 was identical to the strategy reported under Study 1.

Results Study 2

Selective exposure to fact-checkers

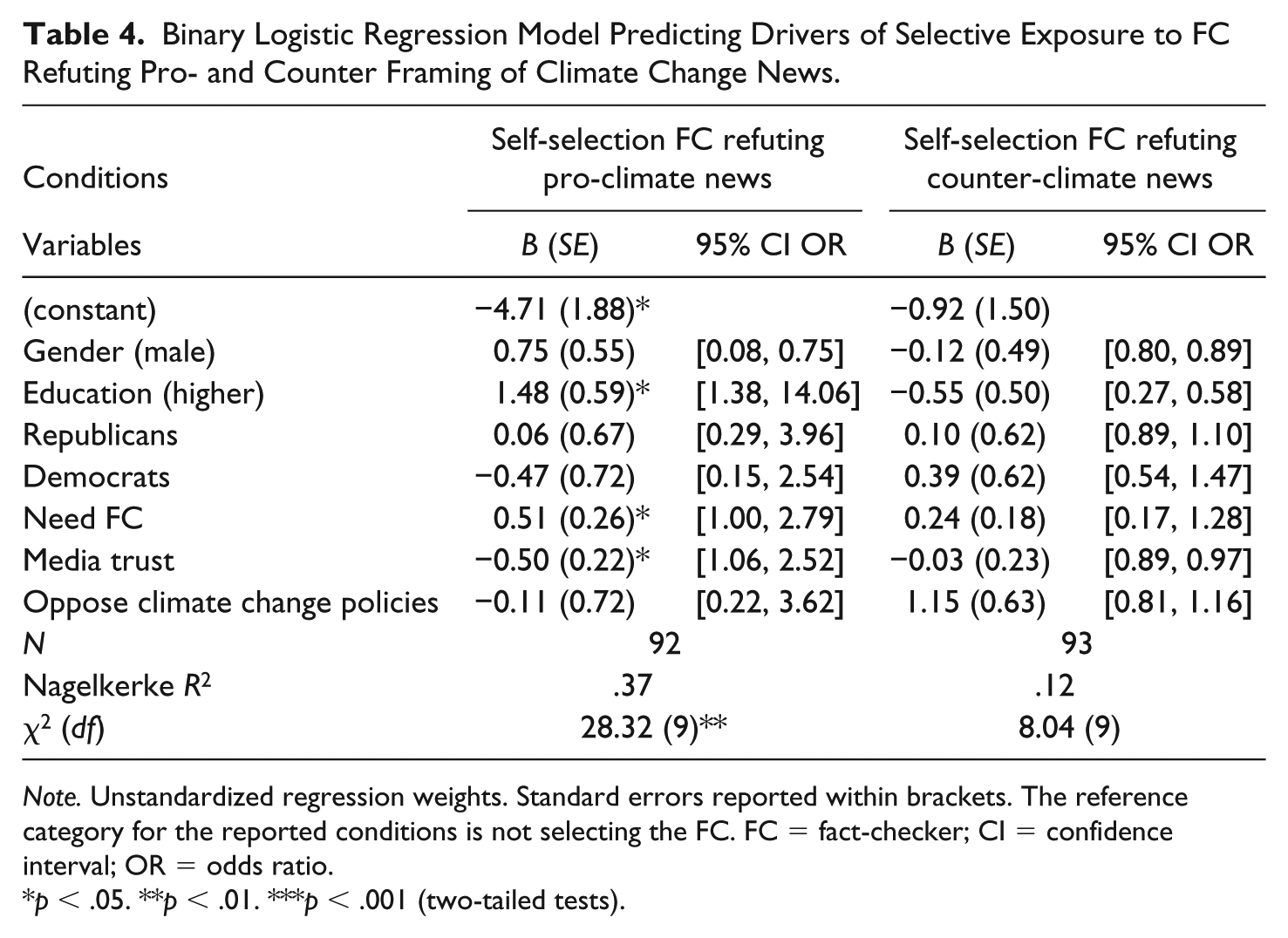

First, we investigated the extent to which disconfirming fact-checkers are selected or avoided (H1). As shown by the results of the logistic regression model in Table 4, higher education, the need for a fact-checker, and media trust predicted the likelihood of selective exposure to a fact-checker discrediting news supporting climate change policies. Specifically, higher educated participants were more likely to select a fact-checker. Next to this, the more the media were distrusted, the more likely participants were to select a fact-checker. In addition, the more participants had a desire to be exposed to news that checks facts, the more likely they were to select a fact-checker. In contrast to the findings of the first experiment, being opposed to climate change policies does not increase the likelihood of selecting a fact-checker. This does not provide full support for H1. Partisan biases only seem to have an influence on the selection of fact-checkers discrediting pro-immigration news (Study 1). In the context of news on climate change policies, attitudinal congruence does not drive selective exposure.

Table 4. Binary Logistic Regression Model Predicting Drivers of Selective Exposure to FC Refuting Pro- and Counter Framing of Climate Change News.

Note. Unstandardized regression weights. Standard errors reported within brackets. The reference category for the reported conditions is not selecting the FC. FC = fact-checker; CI = confidence interval; OR = odds ratio.

*p < .05. **p < .01. ***p < .001 (two-tailed tests).
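Because the model reports unstandardized regression weights alongside odds ratios, it may help to see how the two relate. The sketch below converts a logit coefficient and its standard error into an odds ratio with a Wald-type 95% confidence interval. The function name and the example coefficient are hypothetical and are not taken from the table; the interval type is an assumption, as the article does not state how its intervals were obtained.

```python
import math


def odds_ratio_with_ci(coefficient, standard_error, z=1.96):
    """Convert a logit coefficient to an odds ratio with a Wald-type 95% CI."""
    lower = math.exp(coefficient - z * standard_error)
    upper = math.exp(coefficient + z * standard_error)
    return math.exp(coefficient), lower, upper


# Hypothetical coefficient and standard error; not values from the study.
odds_ratio, ci_low, ci_high = odds_ratio_with_ci(0.50, 0.20)
print(f"OR = {odds_ratio:.2f}, 95% CI = [{ci_low:.2f}, {ci_high:.2f}]")
```

An odds ratio above 1 indicates that a one-unit increase in the predictor raises the odds of selecting the fact-checker relative to not selecting it, the reference category.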

The effects of exposure to fact-checkers

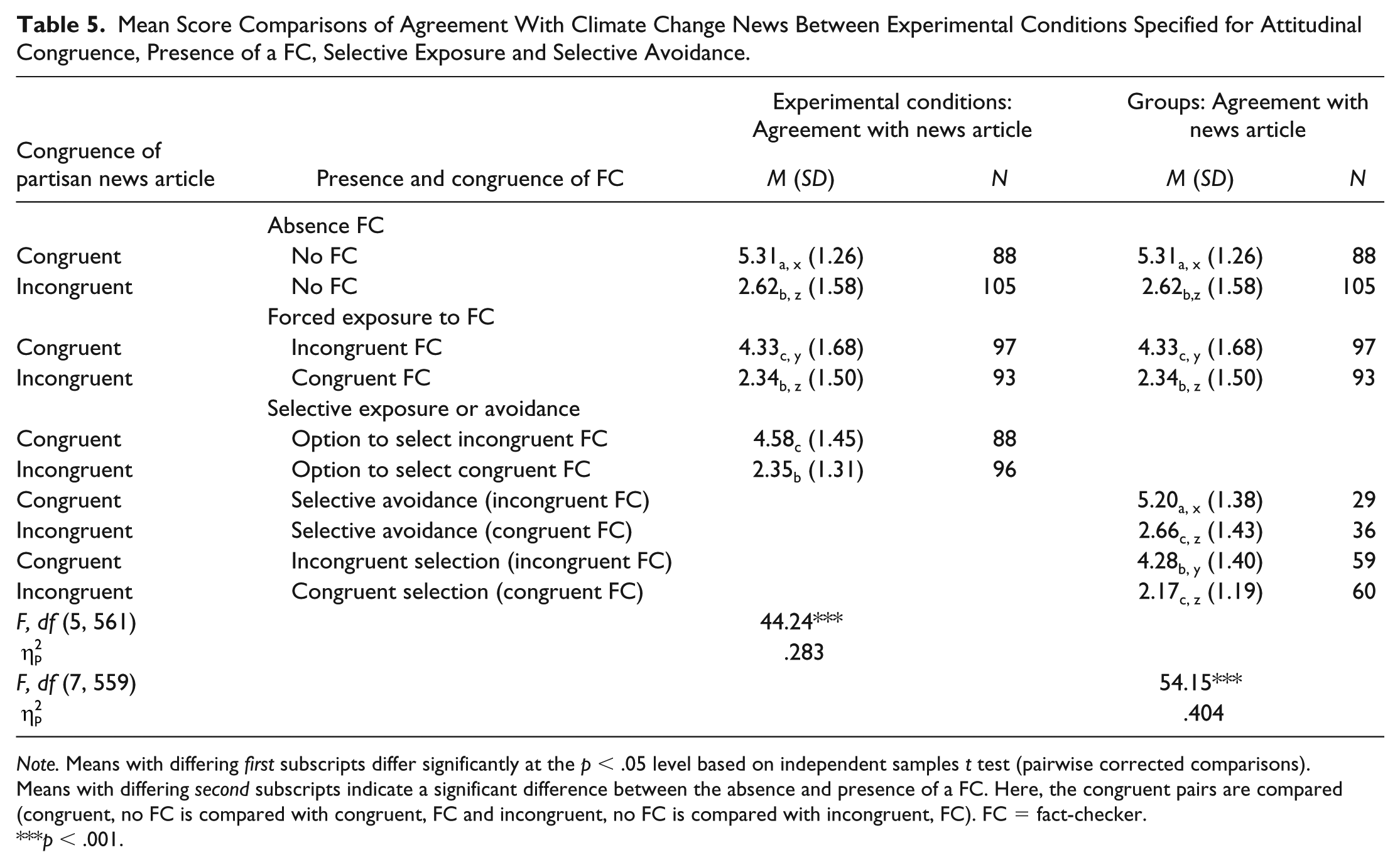

In line with the results of our first experiment, the factorial ANOVA (Table 5, model experimental conditions) indicates a significant main effect of the conditions, F(5, 561) = 44.24, p < .001,

Table 5. Mean Score Comparisons of Agreement With Climate Change News Between Experimental Conditions Specified for Attitudinal Congruence, Presence of a FC, Selective Exposure and Selective Avoidance.

Note. Means with differing first subscripts differ significantly at the p < .05 level based on independent samples t test (pairwise corrected comparisons). Means with differing second subscripts indicate a significant difference between the absence and presence of a FC. Here, the congruent pairs are compared (congruent, no FC is compared with congruent, FC and incongruent, no FC is compared with incongruent, FC). FC = fact-checker.

p < .001.

Fact-checkers and political polarization

The results indicate that partisans are polarized around the issue of climate change when fact-checkers are absent. Specifically, participants only exposed to an incongruent text (without fact-checker) agree substantially and significantly less with the article compared with participants only exposed to a congruent climate change text (see Table 5 for mean-score comparisons). The mean difference between respondents exposed to a fact-checker and those who did not see a fact-checker is only significant for the forced exposure experimental conditions (ΔM = 0.70, ΔSE = 0.15, p < .001, 95% CI = [0.41, 0.99]). These findings provide partial support for H3: In the forced exposure conditions, the gap between audiences’ issue attitudes can be reduced significantly by exposing participants to a counter-attitudinal fact-checker.
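The confidence interval for the forced exposure conditions can be approximated from the reported mean difference and standard error. The sketch below assumes a normal approximation with a critical value of 1.96; this is an assumption for illustration, as the article does not state how the interval was computed, and the function name is hypothetical.

```python
def normal_ci(mean_difference, standard_error, z=1.96):
    """Approximate 95% CI under a normal approximation (an assumption)."""
    margin = z * standard_error
    return mean_difference - margin, mean_difference + margin


# Mean difference and standard error reported for the forced exposure conditions.
low, high = normal_ci(0.70, 0.15)
print(f"95% CI = [{low:.2f}, {high:.2f}]")
```

Under this approximation the bounds are roughly 0.41 and 0.99, so the interval excludes zero, which is consistent with the significant difference reported for forced exposure.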

The effect of selective exposure

Finally, to test H4, we investigated the role of selective exposure in driving the persuasiveness of fact-checkers. The interaction effect of selective exposure and fact-checkers on agreement with the climate change story was nonsignificant, F(3, 563) = 0.60, p = .81,

Discussion

The current media landscape can be characterized by three related developments that pose vexing challenges for democratic decision-making. First, because of the increasing strategic spread of misinformation or “fake news,” factual knowledge has become the target of severe doubt and skepticism (e.g., Van Aelst et al., 2017). As a consequence, citizens no longer agree on the basic information they need to make well-informed political decisions. Second, in this era of postfactual relativism, people’s motivations to confirm and reinforce their partisan identities may outweigh their desire to make accurate decisions, which may fuel political polarization (e.g., Taber & Lodge, 2006). Third, situated in the current high-choice and fragmented media environment, political polarization may be augmented by selective exposure and avoidance of political information, resulting in confirmation biases (e.g., Stroud, 2008). In the context of these developments, fact-checkers have been put forward as a solution to correct misinformation and thereby counter misperceptions and overcome polarization (e.g., Fridkin et al., 2015). As a key contribution to this literature, this research aimed to assess how selective exposure to attitudinal-congruent and incongruent rebuttals of misinformation (i.e., fact-checkers) affects citizens’ agreement with statements made in immigration and climate change news.

The results of two experimental studies (N = 1,117) consistently indicate that fact-checkers do have the potential to correct attitude-congruent misinformation. This means that partisans used the information provided in the fact-checkers to reconsider their prior attitudes and to moderate their issue positions. Importantly, individuals who already disagreed with the misinformation were not guided by the fact-checkers. In other words, when people already disagreed with the misinformation based on their prior attitude, neither exposure to misinformation nor exposure to a fact-checker altered their view, as this view was already correct (i.e., in line with the fact-checker) in the first place. This can be interpreted as evidence for the effectiveness of fact-checkers in discrediting misinformation in a politically polarized context. This is in line with recent research that has indicated that corrective attempts presented in fact-checkers can help counter misperceptions, even among those with partisan identities (e.g., Wood & Porter, 2018). To some extent, however, these findings differ from studies that identified a stronger role of partisan identities and attitudinal congruence (Shin & Thorson, 2017; Thorson, 2016). The difference in findings may partially be explained by differences in the conceptualization of the dependent variable. Shin and Thorson (2017), for example, look at identity-based out-group perceptions, which are more strongly intertwined with partisan views than our issue agreement measures. Moreover, other research does not agree that fact-checkers are completely countered by partisan identification, but rather that such identifications may weaken their impact (e.g., Thorson, 2016). In that sense, our focus on content-related issue agreement can explain why our findings differ from those of other experiments.

Taken together, our experiments indicate that fact-checkers do have the potential to outweigh partisan biases in citizens’ interpretation of partisan political news (see Matthes & Valenzuela, 2012). It is, however, crucial to take into account whether the fact-checker actually refutes news that is congruent with citizens’ prior attitudes. Our study demonstrates that, to fully understand the effect of fact-checkers, attitudinal congruence needs to be taken into account. This is in line with the mechanisms of cognitive dissonance and (defensive) motivated reasoning (e.g., Taber & Lodge, 2006). Although partisans are open to corrective attempts that are not in line with their partisan identities (also see Wood & Porter, 2018), fact-checkers only have an effect when the original news item was congruent with partisans’ attitudes. Although one could argue that this is a floor effect, these findings do demonstrate that fact-checkers do not have a “corrective” effect when disagreement is low. Hence, people are not affected by fact-checkers of incongruent news, which should make their opposition less salient. An important implication is that audiences come closer together, closing the gap between pro- and counter-attitudinal camps for polarized issues.

Crucially, the results of our first experiment demonstrate that political polarization can be overcome by exposing partisans to fact-checkers. In line with a great body of literature, we found that Democrats and Republicans are strongly divided in their attitudes toward immigration (e.g., Fiorina, Abrams, & Pope, 2008). However, the polarized stances of Republicans and Democrats were moderated by exposing partisans to a fact-checker. These findings corroborate literature indicating that exposure to alternative views may bolster individuals’ openness to other issue positions (Price et al., 2002) and provide empirical support for the notion that retractions of misinformation may reduce political polarization (Matthes & Valenzuela, 2012). It must, however, be noted that in the case of climate change policies, audiences remained divided after exposure to fact-checkers, although the gap between Republicans and Democrats did decrease substantially when participants were forcibly exposed to a fact-checker. This means that the potential of fact-checkers in reducing political polarization is at least partially issue dependent: Although partisan divides are canceled out for immigration news, refuting misinformation on climate change policies did not fully overcome partisan divides, especially among participants who would avoid a fact-checker if they could.

Our results further indicate that, although Republicans are more likely to select fact-checkers that agree with their issue positions on immigration news, Democrats do not show such a self-selection bias. Also, attitudinal congruence does not drive selection of climate change news. We thus do find support for attitudinal-congruent selective exposure and confirmation biases, but not across all issues and partisan identities. Our findings are in line with literature that has claimed that Democrats and Republicans show different patterns of selective exposure (Iyengar & Hahn, 2009) and that selection effects may depend on the issue at hand. Moreover, despite the relevance attributed to selective exposure in previous literature (e.g., Bennett & Iyengar, 2008), our results indicate that the freedom to select or avoid fact-checkers does not have an impact on political beliefs. This corroborates literature that indicated that the evidence of the pervasiveness of selective exposure is mixed at best (Garrett, Carnahan, & Lynch, 2013).

Our results have important ramifications for understanding the current fragmented and postfactual media environment (e.g., Van Aelst et al., 2017). At the intersections of misinformation, polarization, and selective exposure, it can be concluded that potentially harmful phenomena of mis- and disinformation and political polarization can be countered by exposing citizens to fact-checkers. Importantly, forced exposure to fact-checkers can also be successful. Translated into practical recommendations, policy makers and media planners can design fact-checkers to refute “fake” partisan political news without having to overcome the barrier of selective exposure. Indeed, partisans are more open to challenging views (Price et al., 2002) than currently assumed in a large body of selective exposure literature (e.g., Stroud, 2008). What matters is that these challenging views use factual information and evidence to counter misinformation (Lewandowsky et al., 2012). As a key democratic implication, in the context of fact-checkers, partisan identities do not always outweigh the desire to be accurate (Taber & Lodge, 2006). Fact-checkers have the potential to overcome partisan identities, which makes them an important journalistic instrument in countering the negative consequences of the current era of postfactual relativism. However, it should be noted here that the overall impact of fact-checkers does not depend on selective exposure, which implies that fact-checkers do not have strong effects on people who would avoid them if they could. It is therefore important to present fact-checkers in such a way that avoidance among the most biased processors is overcome, for example, using formats that are free of partisan cues.

Our studies bear some limitations that may need to be dealt with in future research. First, we only assessed the effects of fact-checkers on agreement with the issue statements made in a news article. Although the focus on cognitive elements fits the democratic discussion of postfactual relativism, which argues that the “trueness” of claims in political news is contested (Van Aelst et al., 2017), it does raise the question of the applicability of our findings to affective polarization (e.g., Iyengar & Hahn, 2009). Affective polarization may be driven more by partisan biases than by fact-checkers because affective polarization relates to the desire to reassure a positive self-concept as belonging to a political party (Tajfel & Turner, 1979). Future studies should disentangle the effects of fact-checkers on cognitive and affective outcomes.

As a second key limitation, our selective exposure design excluded participants with moderate attitudes and did not offer entertainment options as alternatives to political news (Feldman, Stroud, Bimber, & Wojcieszak, 2013). Moreover, participants could have selected or avoided fact-checkers for various reasons that we may not have measured in our study. Relatedly, the comparison of selective exposure to selective avoidance conditions is in fact correlational, as we cannot randomly assign participants to either selecting or avoiding stimuli. We assessed differences between participants who selected and avoided the stimuli, but they could differ with respect to other characteristics. These methodological choices are at the core of all selective exposure experiments, but may nevertheless have an impact on the findings. To ensure that the effects of selection are similar when providing different options and when including moderates, future research may offer more options than merely selecting or avoiding fact-checkers, and may control for moderates rather than excluding them from the analysis (see Arceneaux, Johnson, & Murphy, 2012). Moreover, the interactive setting of today’s media environment may be considered more explicitly in the design of future research.

Third, our experiment was limited to two highly salient and polarized political issues. Future research may replicate the experimental design on more topics and across different countries to assess the robustness of our findings. However, because our experimental studies are the first to assess the effect of fact-checkers in a high-choice media setting, we regard the findings of our study as important foundational evidence for the democratic potential of fact-checkers. We demonstrated that the potentially harmful phenomena of misperceptions resulting from misinformation and political polarization can be countered by the journalistic practice of checking facts.

Supplemental Material

Supplemental material, Online_Appendix for Misinformation and Polarization in a High-Choice Media Environment: How Effective Are Political Fact-Checkers? by Michael Hameleers and Toni G. L. A. van der Meer in Communication Research

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.
