Abstract

The research project assignment can create meaningful opportunities for students to apply sociological concepts. For grading these projects, assessment rubrics are useful pedagogical tools to evaluate students’ abilities in achieving course learning objectives. In this study, I analyzed final research papers collected over multiple semesters in my undergraduate Methods of Social Research course. My goals are to (1) evaluate the grading rubric’s effectiveness in enabling students to meet course objectives and (2) identify improvements in students’ outcomes from revisions to the rubric over time. Findings indicate that rubrics can provide students the information needed to apply course concepts to their work and that rubric revisions are necessary to ensure validity, reliability, and equity across grading. In conclusion, I provide suggestions for implementing a semester-long research project assignment and initiating iterative revisions to rubric criteria.

Takata and Leiting (1987) described teaching research as “learning by doing”: assessing data and making real-time research decisions that inform subsequent stages of the project. Such hands-on learning assignments can activate neural pathways in the brain and influence sociological perspectives beyond the classroom (Messineo 2018; Zull 2002). Formalized assessment guidelines, presented as grading rubrics, offer a how-to guide for completing key learning outcomes of a research project, such as compiling a literature review, designing a survey, and effectively presenting findings.

For students, rubrics provide transparent grading criteria and yield assignments with high levels of proficiency (Gezie et al. 2012; Howell 2014). Students can also use the rubric to self-assess their drafts in progress (Andrade 2005). For high-achieving students, rubrics can also serve as performance boosters and facilitate their critical thinking and self-reflexivity (Andrade 2000; Hawe et al. 2021; Sadler 2009). For instructors, rubrics help lessen the time spent evaluating student work (Andrade 2005) and prioritize the conceptual requirements of the assignment over the technical ones (howe and Correnti 2020). Rubrics are also useful for minimizing implicit biases when grading a student’s work (Jackson 2018) and can be referenced in grading debates (Adedoyin 2013). From a pedagogical perspective, rubrics can effectively measure students’ proficiencies in course learning objectives (Eber and Parker 2007).

In this study, I examine the learning outcomes demonstrated in a semester-long research project assignment in an undergraduate sociology research methods course. The assignment was designed around key course learning objectives presented to students as an analytic assessment (grading) rubric. I highlight the rubric’s effectiveness for yielding proficiencies in learning objectives and identify performance improvements in subsequent semesters from iterative rubric revisions. First, I discuss the relevant literature on sociology research assignments and best practices for creating and implementing assessment rubrics. Next, I discuss the details of the research project assignment and present my holistic findings of assignment proficiency. Finally, I discuss the outcomes of implementing rubrics and offer items for instructors to consider when developing their own tools.

Literature Review

Assigning Research Projects

Research assignments are fundamental to a sociology curriculum (Holtzman 2017; Lovekamp, Soboroff, and Gillespie 2016; McKinney and Day 2012; Medley-Rath and Morgan 2022; Strangefeld 2013; Takata and Leiting 1987; Willms and O’Brien-Jenks 2021; Wisecup 2016). An empirical research project assignment gives students a creative opportunity to engage with research methods and write reflexively about their experiences. For this type of assignment, students select a research topic, create the materials for data collection, analyze their findings, evaluate their research process, and reflect on limitations and areas of improvement.

Across undergraduate programs, research methods courses tend to adhere to the following broad objectives: reading, understanding, and evaluating empirical research; developing testable hypotheses based on theories; articulating the distinctions between various research designs and research methods; creating and critiquing survey instruments; using SPSS or similar statistical software to analyze data with the appropriate tests; interpreting conclusions based on findings; and preparing written reports (e.g., California State University, Channel Islands 2016). As such, research methods courses are less conceptual than other topic courses in the curriculum and can elicit reactions from students ranging from apprehension to apathy (Medley-Rath and Morgan 2022; Wisecup 2016).

Research projects can have a strong impact on students’ learning experiences. For example, Takata and Leiting (1987) observed a great deal of positive change among their students during the research process. McKinney and Day (2012) found that sociology students had the highest perceptions of self-learning from research methods, specifically in executing methodological tasks (e.g., survey design, data collection) and eliciting self-reflection. Medley-Rath and Morgan (2022) reported that students completing sociological research improved in their self-reported knowledge, confidence, and experience.

Ideally, research term papers are written as multiple drafts with carefully crafted sections, similar to the structure of assignments in writing intensive courses (Grauerholz 1999). The revision process can help students practice their sociological writing as distinct from other forms of writing and help with developing their arguments before submitting the final version (Migliaccio and Carrigan 2017). Because research papers can yield a spectrum of proficiencies, rubrics are useful tools for assessing written work (Andrade 2005; Core 2017).

Creating and Implementing Rubrics

Assessment rubrics have three essential features: (1) evaluation categories (i.e., from poor to excellent), (2) quality definitions for each category, and (3) an overall scoring strategy (Popham 1997). Rubrics are suitable for a variety of assignments, including concept maps, literature reviews, reflective writings, bibliographies, citation analyses, portfolios, presentations, and other measures of oral and written communication (Reddy and Andrade 2010). Instructors first conceptualize rubrics to reflect their functions of assessment, instruction, and learning. As pedagogical tools, rubrics are boundary objects between course objectives and grade values (howe and Correnti 2020). Arter and Chappuis (2007) outlined key steps for creating rubrics: (1) selecting meaningful learning objectives to measure, (2) reviewing the literature on rubrics that assess similar content, and (3) using prior coursework to revise the rubric over time.

The scholarship on rubrics differentiates between holistic rubrics, which designate a single score for task completion, and analytic rubrics, which designate scores on various components of a task (Badia 2019; Dawson 2017; Jonsson and Svingby 2007; Mertler 2000; Reddy and Andrade 2010; Sadler 2009). While holistic rubrics facilitate more efficient assessment, analytic rubrics can offer more detailed feedback to students across multiple criteria (Badia 2019). Holistic rubrics, which assign a single score for overall quality, performance, proficiency, or understanding, are best implemented when the product is of satisfactory quality with minor errors. These rubrics are especially useful where there is no singular way of achieving proficiency. On the other hand, analytic rubrics require multiple reviews of each assignment, with each review focusing on one specific criterion.
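The distinction between the two rubric types can be illustrated with a minimal Python sketch. The level names, point values, criterion names, and weights below are hypothetical and chosen only for illustration; they are not taken from the rubric used in this study.

```python
from dataclasses import dataclass

# Hypothetical evaluation categories and point values (assumed, not from the article's rubric).
LEVELS = {"poor": 1, "fair": 2, "good": 3, "excellent": 4}


@dataclass
class Criterion:
    name: str
    weight: float  # assumed share of the overall grade


def analytic_score(criteria, ratings):
    """Analytic rubric: rate each criterion separately, then combine by weight."""
    total_weight = sum(c.weight for c in criteria)
    weighted = sum(c.weight * LEVELS[ratings[c.name]] for c in criteria)
    return weighted / total_weight


def holistic_score(rating):
    """Holistic rubric: one overall rating maps to one score."""
    return LEVELS[rating]


# Illustrative criteria and ratings for a single paper.
criteria = [Criterion("Literature Review", 0.5), Criterion("Methods", 0.5)]
ratings = {"Literature Review": "excellent", "Methods": "fair"}

print(analytic_score(criteria, ratings))  # 3.0
print(holistic_score("good"))             # 3
```

In this example the two approaches arrive at the same number, but only the analytic version records that the paper was strong on one criterion and weak on another, which is the more detailed feedback the literature attributes to analytic rubrics.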

Rubrics are transparent guidelines that can improve students’ performance (Bacchus et al. 2020; Brookhart and Chen 2015; Chan and Ho 2019; Jonsson 2014; Kilgour et al. 2020; Reddy and Andrade 2010). In their review, Panadero and Jonsson (2013) found that rubrics have the potential to positively influence students’ learning by reducing anxiety, aiding with feedback, improving self-efficacy, and supporting self-regulation. Chan and Ho (2019) identified students’ positive perceptions of rubrics based on the objectivity of criteria and standardization of evaluation. Reynders and colleagues (2020) highlighted the effectiveness of rubrics for sharing insights on creating assignments, finding that rubrics enhance critical thinking skills for college students. When possible, sharing exemplars of both excellent and poor work with students is also helpful for demonstrating proficiencies and distinctions in quality (Bacchus et al. 2020; Hawe et al. 2021).

Iterative rubric revisions allow instructors to improve on their assessment criteria and reflect on the objectives of their assignments (Janssen, Meier, and Trace 2015). Instructors can implement rubric revisions systematically based on prior assignments or more intuitively based on prior experiences. Revisions ensure the validity of rubrics for establishing successful completion of assignments and the reliability of rubrics as tools producing consistent scores across graders or classes (Jonsson and Svingby 2007). Identifying examples of strong, medium, and weak categories of assignments further supports assessment designations.

There are some noteworthy public references for rubrics, many of which have been identified by the Teaching and Learning Centers of colleges and universities. The VALUE Rubrics from the Association of American Colleges and Universities (AAC&U) are open resources that present assessment criteria for a variety of outcomes related to intellectual and practical skills, personal and social responsibility, and integrative and applied learning (AAC&U n.d.). Additionally, the National Postsecondary Education Cooperative (NPEC) created a sourcebook on assessment methods for communication, leadership, information literacy, and quantitative reasoning and skills (NPEC 2005).

Recently, scholars have written about their experiences implementing these public resources for sociology writing assignments. Willms and O’Brien-Jenks (2021) used a rubric informed by the VALUE Information Literacy Rubric to assess satisfactory performance in constructing a literature review. Core (2017) utilized the VALUE Global Learning Rubric to assess students’ experiences in a study abroad program. Migliaccio and Carrigan (2017) evaluated writing among undergraduate sociology students across their program using a modified version of the VALUE Written Communication Rubric. In their own analysis of implementing VALUE rubrics, the AAC&U discussed the importance of rubric revisions for ensuring the validity and reliability of the assignments (Pike and McConnell 2018).

Data and Methods

For this study, I analyzed 127 final research papers collected over nine semesters from my Methods of Social Research course. As a post hoc analysis of deidentified final papers, my former university’s Institutional Review Board classified this project as “not human subjects research.” My two research questions are as follows:

Research Question 1: In what ways do undergraduate research assignments exhibit learning objectives in sociology research methods?

Research Question 2: In what ways do rubric revisions impact students’ outcomes in course learning objectives?

The Research Paper Assignment

For the assignment, students first submitted their topic and hypotheses with three independent variables. Once approved, students conducted a literature review and drafted an annotated bibliography. Next, students workshopped their fixed-choice survey questions with classmates and created a final survey with an informed consent statement for online distribution. Students were required to have at least 100 respondents, with surveys including a minimum of eight questions: three fixed-choice questions corresponding with their three independent variables and five fixed-choice questions measuring attitudes related to their dependent variable. After a three- to four-week period of data collection, students organized and analyzed their data during class using SPSS statistical software. From their results, students wrote a final research paper and presented their findings in class.

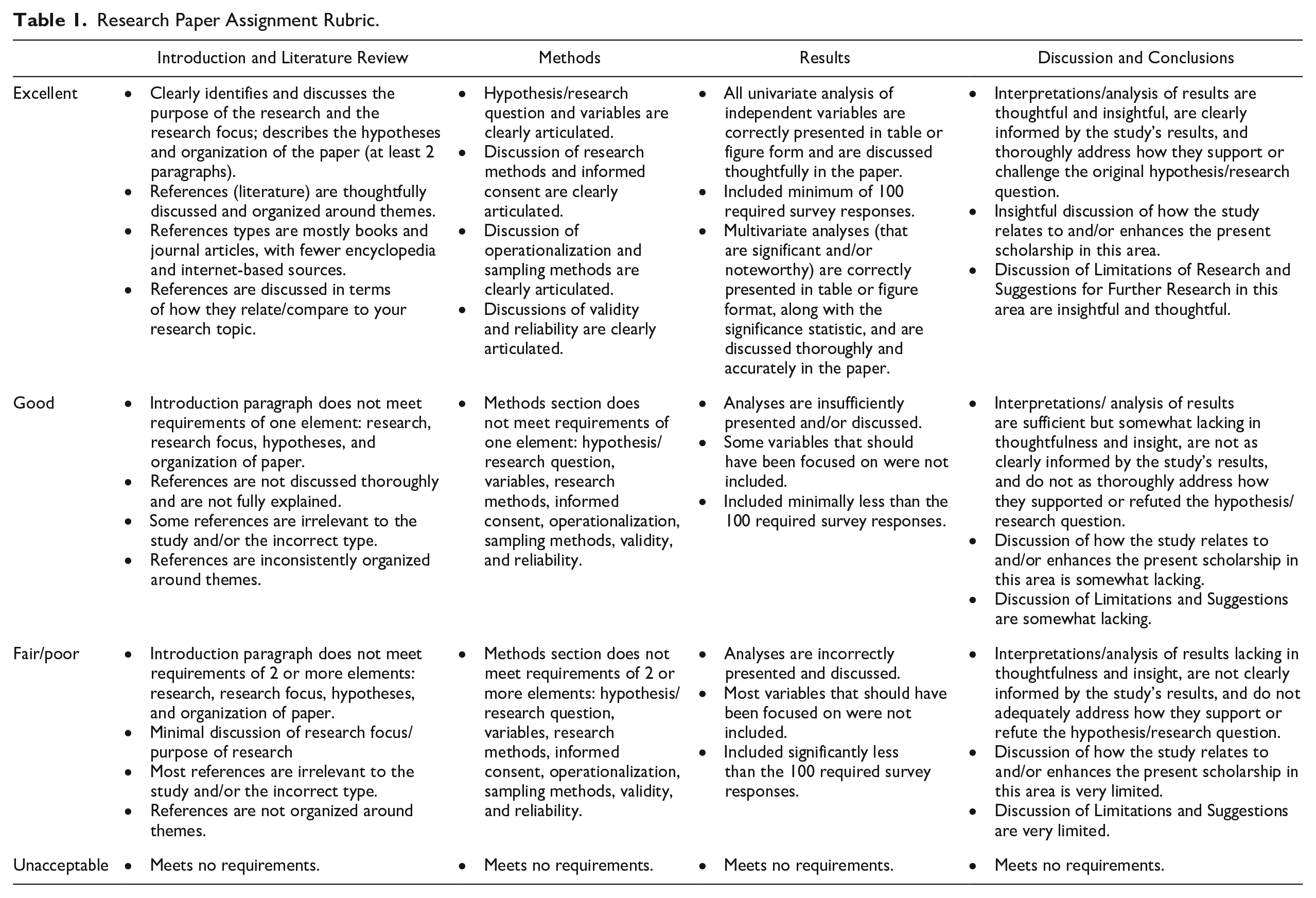

In the first few weeks of every semester, I provided my analytic grading rubric outlining assignment guidelines and course learning objectives (Table 1). The rubric was originally conceptualized in spring 2014 with a deficit model to demonstrate success in achieving the myriad measures of learning proficiency. After its first use, I revised my grading rubric before every semester to clarify expectations and align more closely with my learning objectives. After five rounds of revisions, I implemented the final version in fall 2016 and subsequent semesters.

Table 1. Research Paper Assignment Rubric.

The grading rubric was developed independently and revised to align with key course learning objectives for sociology research methods. First, students identified quantifiable variables of interest and researched the subjects in the library database to identify empirical research published in peer-reviewed journals. Next, they applied their knowledge to create an online survey using a survey platform, collecting data from a convenience sample of friends and family. From there, they analyzed their results using SPSS to determine if there were differences in responses among their independent variable groups. Students then presented the findings in table form, wrote up the various steps of the research project to create a narrative, evaluated their findings, revised their written work into a final research paper, and presented key aspects of their project in class. In Table 1, I present the written criteria provided to the students for their final research paper assignment. In class, students were also provided guidelines for citations/formatting and their in-class presentations.

In later semesters, I coordinated my instruction to better correspond with satisfactory proficiency in course learning objectives. For example, the revised rubric required students to organize their Literature Review section by subtheme, emphasizing the process of conceptualization. To improve the quality of references, in later semesters, I conducted my own in-class information sessions on research search strategies to ensure that students had references from peer-reviewed sociology and social science publications.

I also supplemented my class instruction on key objectives. For example, I emphasized the distinction between independent and dependent variables as related to conceptualization, hypothesis building, and measuring perceptions (attitudes). Sometimes students had to change their variables and questions because they were too nebulous, improperly operationalized, or not valid measures. I encouraged students to choose more straightforward independent variable measures like race, gender, and age for easier data comprehension. Students also demonstrated proficiency in operationalization when their dependent variable survey questions were mutually exclusive and exhaustive.

In class, I presented a lecture on reading SPSS output using students’ midsemester evaluation data. Student-generated data can be useful for teaching quantitative proficiency (Lindner 2012). To improve data comprehension and presentation, I later required students to obtain independent variable groups that each comprised at least 20 percent of respondents or to collapse their independent variable categories for more streamlined categorical results. Most students struggled to obtain diverse groups across their independent variable categories (particularly race, because most White students obtained majority-White samples and most Black, Indigenous, and people of color students obtained majority samples of their own racial/ethnic groups in their social networks). Students demonstrated proficiency by reflecting in their papers on the limitations of their sampling methods and survey operationalization.
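The 20 percent requirement can be sketched in a few lines of Python. This is only an illustration: students in the course did this work in SPSS, and the function name, the "Other" label, the rule of merging every small category into one group, and the sample data are all assumptions made for the example.

```python
from collections import Counter


def collapse_small_groups(responses, min_share=0.20, other_label="Other"):
    """Merge every category below min_share of respondents into one combined group.

    The merge rule is an assumption for illustration; in practice, which categories
    to combine is a substantive decision (e.g., merging adjacent age brackets).
    """
    counts = Counter(responses)
    total = len(responses)
    small = {cat for cat, n in counts.items() if n / total < min_share}
    return [other_label if r in small else r for r in responses]


# Hypothetical age variable from a survey with 100 respondents.
ages = ["18-24"] * 50 + ["25-34"] * 25 + ["35-44"] * 15 + ["45+"] * 10
collapsed = collapse_small_groups(ages)

print(Counter(collapsed))
# Counter({'18-24': 50, '25-34': 25, 'Other': 25})
```

Note that the combined group can itself fall below the threshold when the small categories are few, so the result should still be checked against the 20 percent minimum.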

Analysis

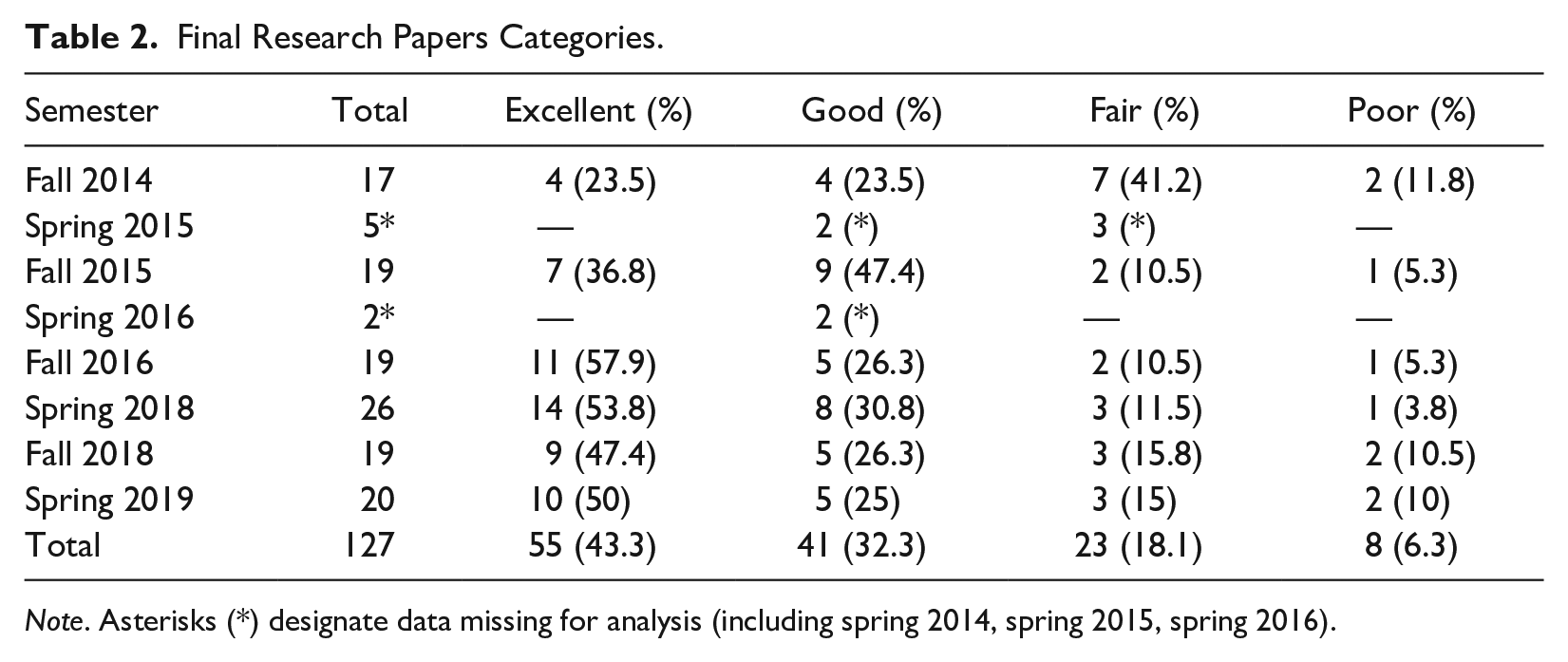

For this analysis, I coded on five criteria demonstrating skills in research methods: (1) Conceptualization (to include introduction of subjects, importance of research, and hypotheses design), (2) Literature Review (to include organization and quality and quantity of references), (3) Methods (to include basic concepts of research methods like research design, ethics, sampling, methodology), (4) SPSS Analysis (to include operationalization, data cleaning, sorting, statistical tests, and data presentation), and (5) Conclusion (to include discussion of findings, connections back to hypotheses and literature review, limitations of design and operationalization, and lessons learned). Each paper was first analyzed on each of these criteria and then summarized with a holistic assessment designating distinctions in proficiency. As illustrated in Table 2, the majority of students successfully completed the research project, and results by semester align with a typical distribution of student success.

Table 2. Final Research Papers Categories.

Note. Asterisks (*) designate data missing for analysis (including spring 2014, spring 2015, spring 2016).

Results

First, the “poor” papers (n = 8) were missing any discussion of Conceptualization or topic origins or were politically biased (often on the topics of police and/or guns). The Literature Review was either missing from the final paper, was comprised of a few biased references, or was presented in the annotated bibliography assignment format from earlier in the semester. The Methods section was omitted or reduced to annotations, demonstrating lack of proficiency in basic research synthesis. The survey instrument was not included, thus limiting an assessment of the student’s proficiencies in operationalization. This category also measured those instances of poorly worded or seriously biased survey questions that negatively affected the results and conclusions. This was usually in the case of students who did not properly conceptualize their topic, did not attend the in-class survey workshop, did not follow instructions for revisions before their data collection, or did not submit their draft assignments.

In these papers, the SPSS Analysis was missing or limited, usually the result of an incomplete research project or from students who experienced major computer errors during their analysis. In the Conclusion, students either omitted any discussion or presented it in only a couple of sentences. (Because the required items for the Conclusion were presented on the assessment rubric, it is likely in these instances that the student ran out of time.) While some papers may have been coded in this category for any one given aspect, only a few papers fell entirely under this category each semester (see Table 2). Overall, these papers were missing the fundamental concepts for conducting sociological research and came from students who had poor comprehension of the course objectives and the content overall.

Second, the “fair” papers (n = 23) mirrored the failings of the poor papers but with some attempts at the core requirements. For example, the Conceptualization of the research was included but only as a couple of sentences. The Literature Review did not include the required number of references or relied heavily on non-peer-reviewed online publications. Some of these papers also failed to systematically organize references around subthemes with headings. Most papers with this specific error were from earlier semesters, where previous iterations of the rubric did not specify organizing references by subheadings. In the Methods section, students were missing most of the key required concepts of the rubric. If a concept was discussed, it was applied superficially rather than to their research project specifically (i.e., quoting from the textbook or echoing some of my memorable lecture quotes like, “Validity is when the measure measures what you want it to measure!”).

In the SPSS Analysis section, students may have attempted the statistical tests but presented the analysis incorrectly by omitting percentages or the test statistics. In the SPSS instructions provided to students, I outlined the steps for running multivariate analyses based on whether the variables were nominal/ordinal or interval/ratio. Students who were unable to distinguish their variables may have performed the incorrect statistical test. In this category of papers, students had significantly fewer than 100 respondents and/or failed to condense their independent variables to categories with a minimum of 20 percent of respondents. Because condensing was not required for students until later semesters, this particular missing requirement was evident in papers from earlier semesters. In the Conclusion, these papers presented some connection to the research project but were missing a thorough discussion of the literature review or limitations of the research project. As with the poor papers, such grievous errors were concentrated in early semesters and appeared in only a few of the papers in later semesters.

Third, the “good” papers (n = 41) included a baseline of all required items from the grading rubric. Overall, these papers demonstrated proficiency in the basic objectives of the assignment but with some minor errors (e.g., in presenting data or summarizing in the Methods or Conclusion sections). In the Introduction, students articulated their personal connection to the research topic and clearly defined their research hypotheses. The Literature Review was organized around subthemes corresponding to their independent variables but may not have included an extensive analysis of the references or synthesis between similar references. Most students in this category demonstrated comprehension of the in-class information session to identify references and organize their Literature Reviews around their independent variables. For the Methods section, students successfully reviewed the rubric and included what was required, for example by correctly applying key terms like validity and reliability to their research.

These students successfully operationalized their variables, with perhaps some minor errors related to data presentation, coding/condensing, survey response options, or question phrasing. While I provided students with comments in their assignment drafts on how to consider these items, I also allowed them some creative freedom as opportunities for learning and reflection. Most students who made these errors did include a reflection on them in their Conclusion. As required in the rubric, the Conclusion was a minimum of two paragraphs, with a discussion of references and limitations. Papers in this category demonstrated students’ abilities to conduct research from beginning to end, with attention paid to key concepts in Research Methods.

Fourth, the “excellent” papers (n = 55) were interesting and engaging for the reader. As noted in Table 2, in later semesters, around half of the papers were coded here after the implementation of the final rubric beginning in fall 2016. For the Conceptualization, students effectively articulated their research experiences with their interests. The Literature Review established proficiency in understanding conceptualization and hypothesis testing. These papers were well organized around subthemes/variables, demonstrating proficiency in research search strategies. In the Methods section, these papers included an exhaustive discussion of all items as required in the grading rubric. Students demonstrated excellent comprehension of research methods design. The survey questions were workshopped and conceptualized well, and students had sufficient data to compare differences between groups.

In their SPSS Analysis section, students effectively presented and discussed their findings. The Conclusion section included thoughtful connection of findings to their initial hypotheses and references. This category also captured instances of important reflections on operationalization, as related to additional measures, sampling, question phrasing, response options, statistical tests, or condensing variables. These papers included clear articulation of how their convenience samples impacted the generalizability of the research findings. Finally, in these papers, students comprehensively reflected on their practices and processes of conducting social research.
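The category counts reported in this section can be tallied to check them against the 127 papers analyzed and to express each category as a share of the total. The short Python sketch below uses only the counts stated above; the variable names are illustrative.

```python
# Counts of final papers per holistic category, as reported in the Results.
counts = {"poor": 8, "fair": 23, "good": 41, "excellent": 55}

total = sum(counts.values())
shares = {category: round(100 * n / total, 1) for category, n in counts.items()}
good_or_better = round(100 * (counts["good"] + counts["excellent"]) / total, 1)

print(total)           # 127
print(shares)          # {'poor': 6.3, 'fair': 18.1, 'good': 32.3, 'excellent': 43.3}
print(good_or_better)  # 75.6
```

Roughly three quarters of the papers were therefore coded as good or excellent across all semesters combined; the semester-by-semester breakdown is in Table 2.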

Discussion and Conclusion

In this study, I discuss the usefulness of implementing rubrics as guidelines for students to achieve success in sociology learning objectives. Research assignments are useful for developing basic research skills: conceptualization, operationalization, research design, quantitative analysis, and report writing. As I suggest, research experiences in which students can exercise their creativity also cultivate student learning and critical thinking skills at the neurological level (Messineo 2018; Zull 2002). Students will not only develop in their own comprehension, but also develop the necessary skills to achieve future career success.

In my analysis of students’ papers over time, I also establish the effectiveness of iterative rubric revisions over semesters to better align with course learning objectives and yield higher proficiency. Because I was unable to obtain all of the archived papers from three of the earlier semesters as noted in Table 2, I am missing opportunities to fully measure change over time from earlier versions of this assignment. With the data available, however, there are marked trends toward improvement.

While the final research papers were effective data for testing proficiency of the course learning objectives and improvements over time, there were also some limitations to this analysis. For example, the grading rubric did not require final papers to include survey questions. As a result, I had to use more intuition for those missing pieces in some of the papers. Without requiring previous iterations of literature reviews or survey questions, I was also missing opportunities to analyze changes over time at the individual level. Many papers did not include any personal reflections and were written in the third person. For research paper assignments, required personal reflections can provide insights into the transformative influence of “learning by doing” (Takata and Leiting 1987).

In a review of my course evaluation data, students were overwhelmingly positive about the research assignment and the course. The most frequent suggestion was providing more time for each section of the final paper. After my analysis, I would agree that this assignment would improve by instructing students to submit revised sections of their paper throughout the semester. In future iterations, I would require students to draft and redraft sections at each stage and later plug these final pieces into their cumulative assignment. Such practices are in line with writing-intensive courses, which require multiple versions of the final product (Grauerholz 1999). There are also some advantages to incorporating components of a research project across courses to allow for a more comprehensive experience (Lovekamp et al. 2016; Medley-Rath 2021; Medley-Rath and Morgan 2022). Finally, I recommend beginning this assignment with the Literature Review component before students define their research questions and hypotheses.

Future research on rubrics would benefit from incorporating best practices in the scholarship of teaching and learning, such as by comparing assignments across courses or institutions, and with multiple raters for analysis (Chin 2018; Grauerholz and Zipp 2008). Future studies could also incorporate students’ perspectives on using rubrics. There is a growing body of scholarship highlighting the benefits of constructing rubrics with student input (Bacchus et al. 2020; Kilgour et al. 2020; Reddy and Andrade 2010). While students have reported that co-construction can make assessments more transparent, such efforts also take more time for the instructor to implement (Kilgour et al. 2020). Because the most effective rubrics support students’ success, we as instructors must remain reflective in presenting our expectations.

Acknowledgements

I would like to thank Mrs. Karen Musser for her administrative assistance and Dr. Jim Smith for the opportunity to teach this course over many semesters. I would also like to thank Dr. Liz Grauerholz for her feedback on a preliminary version of this article and Dr. Ann Miller for her support through the journal article writing course. This project was presented at the 2022 American Sociological Association Annual Meeting.

Editor’s Note

Reviewers for this manuscript were, in alphabetical order, Stephanie Medley-Rath, Melinda Messineo, and Nicole Willms.