Abstract

Introduction

For many, community colleges are an appealing postsecondary educational option. They uphold an open-access policy and welcome all who seek to advance their education, forgoing the stringent admission requirements of some 4-year institutions. Further, they provide local and flexible educational opportunities at about one-third of the cost of 4-year institutions. It is not surprising, then, that these institutions attract nearly half of the nation’s undergraduates each year (AACC, 2020). Students who enroll in community colleges come from some of the most marginalized student populations in higher education; they are often the first in their families to attend college, from racial groups historically underrepresented in higher education, and from low-income backgrounds (AACC, 2020). Although the broad acceptance policy of these institutions grants postsecondary access to students who might not otherwise attend college, it also gives rise to one of the most pressing challenges faced by 2-year colleges: the need to uphold college-level academic standards while serving a diverse student body (Hassel & Giordano, 2015).

Efforts to address this challenge have historically been made through pre-college-level courses, also known as precollegiate, basic skills, or developmental courses. Some scholars have described these courses as a “catapult” (Goudas & Boylan, 2012) that gives students considered underprepared access to postsecondary education while helping to prepare them for college-level coursework (Arendale, 2002; Boylan & Bonham, 2007). Multilevel sequences are often offered in math, reading, and English, and standardized tests are used to determine preparedness and to place students either into the college-level course or into a course within the precollegiate sequence. Recently, however, standardized placement tests such as ACCUPLACER and Compass, which are widely used to determine student preparedness, have come under scrutiny and have been shown to misplace about one-third to one-half of students, mostly by under-placing them (Barnett & Reddy, 2017; Hassel & Giordano, 2015). Further, placement policies using these tests have been shown to be inequitable: they have a disparate impact on students from backgrounds that have been disproportionately excluded from college-level courses based on criteria that do not accurately reflect their ability to succeed (Henson & Hern, 2019). Most students who are placed by such tests and enroll in precollegiate courses do not persist to college-level coursework. Such data have called the effectiveness of precollegiate courses into question and have fed a growing belief that placing students into lengthy precollegiate course sequences hinders their progress toward degree completion (Barnett & Reddy, 2017; Mejia et al., 2016). This has prompted a shift in policy focus from merely providing college access to a greater number of students to supporting the accomplishment of students’ educational goals: the attainment of certificates and degrees and/or transfer to 4-year institutions. This change places greater emphasis and importance on the initial placement of students into courses.

Of particular concern are first-year transfer-level (FYTL) English composition courses. These courses are where students become practiced participants in academic discourse and develop the advanced literacy skills needed to succeed in courses in other disciplines (Duff, 2010; Hassel & Giordano, 2015). Further, the courses are often prerequisites for other courses and are required for degree attainment and transfer to 4-year colleges. In these ways, they function as gateways to college success in both their content and context (Nazzal et al., 2019) and are crucial to overall academic success (Kassner & Wardle, 2016; Troia, 2014). However, despite the importance of taking and passing the FYTL composition course, recent statewide data in California reveal that most community college students never even enroll in it, and fewer than half of students who start in precollegiate writing courses take and pass the FYTL course (Mejia et al., 2016). These students are effectively blocked from the progress at the college that could lead to the attainment of their educational goals.

Widespread reform is underway across the nation, with initiatives in several states to improve the persistence and completion rates of community college students. In California, where community colleges constitute the largest system of higher education in the nation, serving 1.8 million students (California Community Colleges Chancellor’s Office [CCCCO], 2023), recently passed legislation has rapidly and drastically expanded the scope of reform throughout the state’s 116 institutions. In effect since fall 2019, Assembly Bill 705 mandates a shift in the methods used to place students into English and math courses. Colleges are now required to make placement recommendations that ensure “optimized opportunities” for students to complete FYTL coursework within a year, and they may not place students into precollegiate courses unless the students are “highly unlikely to succeed without them” (Hope, 2018, p. 1).

At many institutions, this has led to the elimination of placement tests¹ and a move toward the use of high school records, including courses taken, grades, and grade point averages (GPAs) (Rodriguez et al., 2018). Support courses are offered concurrently with the FYTL composition course and are commonly taught by the same faculty as the transfer-level course. To determine whether students need a support course and what kind of support is needed, some colleges have turned to guided, or directed, self-placement (DSP).

Scholars D. Royer and R. Gilles define DSP as any method that offers students information and advice about placement options and allows students to make the ultimate decision about their placement. The direction can take many forms, such as detailed course descriptions and sample assignments, a self-inventory of students’ experiences with writing, and an opportunity to meet with a counselor. DSP is grounded in the importance of informed student choice (Royer & Gilles, 2020); its processes and materials vary widely across institutions and are shaped by local decision-making. DSP eliminates the cost of large-scale essay assessment and gives students quick access to the FYTL course. Some scholars view it as more equitable than previous placement policies and as a promising alternative to mandatory placement. However, others question the fairness of the “burden shifting” that takes place in DSP, when responsibility for appropriate placement is transferred from experienced faculty who are familiar with curricular demands to novice students who often have not yet begun their college experience (Saxon & Morante, 2014; Toth, 2019). These scholars also point to the limited published research on the consequences of DSP in community colleges, particularly for student groups who have been disadvantaged by standardized placement exams.

Against the backdrop of widespread reform in community colleges across the nation, the purpose of this study is to gain insight into the impact of a particular reform effort on the placement and success of students in the FYTL composition course. The aim is to determine whether the students who need the most writing support are positioning themselves, under the new placement method, to receive it by enrolling in the course version with a concurrent support course. The study also examines whether the new placement policy improves students’ chances of college success and how it affects specific student subgroups.

Method

Institutional Context

The present research was conducted at a community college in California that enrolls approximately 50,000 students. The institution is one of the largest single-campus community colleges in the state and is designated a Hispanic-Serving Institution (HSI). Enrolled students are 55% Hispanic and 19% Asian. Most attend the college part-time, and three-quarters receive financial aid. This investigation took place shortly after the implementation of extensive structural and curricular changes that affected the placement of students into composition courses. These changes included a major restructuring of courses and revised criteria for placing students into them, intended to expedite students’ completion of the FYTL course requirement and to provide a more streamlined route to an associate degree and/or transfer to a 4-year institution.

Pre- and Post-Reform Placement

Prior to the implementation of reform, a faculty-created, holistically scored writing assessment was used to place students into one of four composition course options: one of three precollegiate course levels (PCL) or the first-year transfer-level (FYTL) composition course. The placement exam offered a choice between two freestanding (not text-based) prompts. Students were given 45 minutes to respond to the chosen prompt in writing, either by hand or on a computer. Based on the results of this assessment, about 15% of students were placed into the FYTL course and 77% into one of the three PCL courses. The remaining 8% of students were placed into the college’s English as a Second Language (ESL) or American Language courses.

The implementation of reform at this institution nearly eliminated the precollegiate course sequence and replaced the writing placement exam with a placement process built around an online questionnaire. The questionnaire gave students an automated course recommendation to self-place into one of two versions of the FYTL composition course: one with and one without a concurrent support course. Based on their responses, some students (mainly those whose native language is not English) were directed to a further set of questions that asked them to reflect on how the coursework aligned with their personal educational goals and abilities and on their familiarity and comfort with course requirements. Some of these students were advised to contact the American Language department for help with their placement.

Acceptance of the college’s guidance was not mandated; students chose whether or not to follow the recommendation. The guidance, based primarily on high school records (including students’ self-reported GPA, the courses they took, and the grades they received in those courses), resulted in 85% of students enrolling in the FYTL course: 73% in CL, the stand-alone first-year transfer-level course, and 12% in CL+S, the same course with a concurrent support course. The remaining students enrolled in ESL or American Language courses or in one of the few remaining sections of precollegiate courses. The high school GPA cut-off for a recommendation to enroll in the CL course was 2.6; students with a lower GPA were directed toward the CL+S course version. Over the span of one year, the reform thus produced a large shift in the share of students placing directly into the FYTL course (in either version), from 15% to 85%.
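The recommendation rule described above can be sketched as a simple threshold function. This is a minimal illustration under stated assumptions, not the college’s actual questionnaire logic: it models only self-reported HSGPA (the real guidance also drew on courses taken and grades received), the function name `recommend_course` is hypothetical, and treating a GPA of exactly 2.6 as meeting the cut-off is an assumption, since the source does not say how ties were handled.

```python
# Hypothetical sketch of the GPA-based recommendation rule.
# Assumptions: only self-reported HSGPA is modeled (the real questionnaire
# also used courses taken and grades), a GPA exactly at the cut-off counts
# as meeting it, and weighted GPAs up to 5.0 are accepted as valid input.

CUTOFF_HSGPA = 2.6  # cut-off reported for the CL recommendation


def recommend_course(hsgpa: float, cutoff: float = CUTOFF_HSGPA) -> str:
    """Return 'CL' (stand-alone course) or 'CL+S' (course with support)."""
    if not 0.0 <= hsgpa <= 5.0:
        raise ValueError(f"HSGPA out of expected range: {hsgpa}")
    return "CL" if hsgpa >= cutoff else "CL+S"


if __name__ == "__main__":
    for gpa in (3.4, 2.6, 2.59, 2.1):
        print(gpa, recommend_course(gpa))
```

Because the recommendation was advisory, actual enrollment departed from such a rule for some students; for example, 30% of participants recommended for CL chose CL+S instead.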

The CL+S Course Option

At this institution, the CL+S course option is identical in content to the CL course according to the course outline and outcomes, but it offers supplementary support, including an additional class session and access to a tutor. The additional session is scheduled as a stand-alone, one-unit section linked to the CL course; it meets for 1 hour a week with the same instructor as the CL course, and individual faculty decide how the time is used. The section is often held just before or after the CL session but is sometimes held on a different day of the week. Tutors in the Classroom (TCs) are assigned to all CL+S course sections. The TC Program is managed through the college’s writing center and is described as a combination of tutoring and supplemental instruction. TCs are not students; they are employed through the writing center and attend class with students during both the CL and S class times.

Participants

A writing assessment was administered to 530 student participants in 25 sections of the transfer-level composition course over the spring and fall semesters of 2019. Of these participants, 255 students were enrolled in 11 sections of the stand-alone version of the course and 275 students in 14 sections of the version with the additional, concurrent support course. Students in course sections taught by six participating faculty members took part in the study, with the option to opt out. Most participants (73%) were between the ages of 17 and 21. Male and female participants were almost equally represented in the sample. Student participants were 60% Hispanic, 22% Asian, 15% White, 1.5% African American, and 1.5% other races. Most students (73%) were bilingual, speaking at least one language in addition to English. Nearly half of the bilingual students were English learners who reported having taken English as a Second Language (ESL) or English Language Development (ELD) classes during their schooling; these students constitute 36% of the total sample. For 60% of participants, a high school diploma was reported as the highest level of education attained by either parent; for 21%, the highest level of education of either parent was reported to be less than a high school diploma. Only 7% of the sample had at least one parent with a professional degree. Half of participating students reported working an average of 25 hours per week while attending the college. Almost all students (93%) who reported taking the college questionnaire (n = 252) indicated that they followed the college’s recommendation when enrolling in a version of the composition course. Of the respondents to the survey administered in this study (n = 334), 87% indicated that they thought the composition course they enrolled in was a good fit for them.

Of those recommended to take the stand-alone college-level (CL) course, 30% took the course with support (CL+S) instead. Only 9% of those recommended to take the CL+S course took the CL course instead. Of those whose recommendation allowed either the CL or the CL+S course, 82% selected the CL+S course. A large majority of participants (84%, n = 507) indicated having the goal of attaining an associate degree and/or transferring.

Data Collection and Analytic Measures

To investigate possible differences between students who self-placed into the CL and CL+S courses, the following variables were compared between the two course types: writing proficiency level, student-reported high school grade point average (HSGPA), and final course grade. Data were collected using an analytic writing assessment and a student survey. The survey gathered information about students’ educational background and goals, their perceived level of preparedness for college, and their reasons for choosing their course type. It also provided students’ self-reported HSGPA, the placement recommendation they received from the college questionnaire, and whether they followed that recommendation when enrolling.

Writing Assessment Instrument

The instrument used to assess writing competency and to detect academic writing strengths and weaknesses was a text-based analytical writing assessment (AWA) that called for the interpretation and integration of two texts (Olson & Land, 2007). The prompt required students to read, interpret, and synthesize the two texts and to construct an argument drawing on both sources, skills that together constitute the holistic literacy practice that signifies college readiness (Perin, 2013). In response, students made and supported a claim about the characteristic they identified as most essential to the main character’s success or survival. Two different but structurally comparable prompts and sets of texts were used to minimize any individual advantage arising from the prompt: in each course type, half of the students received one prompt and set of texts and the other half received the second, to control for prompt-related differences between groups (see Appendix A).

Administration

The AWA was administered in two 45-minute segments and was completed by the third week of classes in all course sections to minimize the amount of instruction students received before testing. In the first segment, faculty read the two texts aloud while students followed along with their own copies and were encouraged to annotate them. Faculty then guided students through a conceptual planning packet using graphic organizers, which led students to formulate a distinct claim establishing the main argument of the paper and to list evidence from the texts to support it. In the second 45-minute segment, students wrote the essay while referring to the two annotated passages and the completed planning packet.

Scoring

The assessment rubric measured the skills expected of college-ready students and was aligned with established measures of high school writing competency, such as the California High School Exit Exam (required for graduation in California until 2015), the requirements of the National Assessment of Educational Progress (2011), and the California Common Core State Standards (2010). The assessment was administered to 90% of students (n = 479) in both versions of the course.

After norming procedures, assessments were scored holistically on a 6-point scale (see Appendix B). Papers that scored 4 or higher were considered proficient. Readers were doctoral students in the School of Education at the University of California, Irvine (UCI) with various backgrounds in literacy, and all readers participated in training and norming before scoring papers.

To check the robustness of scoring, a subsample of 22% of the overall sample (n = 103) was read and scored a second time. The subsample was selected purposively, by stratified random sampling, so that every score in each course type was represented in proportion to its frequency. This subsample exceeds the recommended minimum of 20% of the sample (Creswell, 2017). Scores from the two readings agreed within one point for most of the subsample (α = .87), and scores agreed exactly for 51% of the papers.
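The two agreement figures described here (exact agreement and agreement within one point) can be computed from paired scores as follows. This is a hedged sketch: `agreement_rates` is a hypothetical helper, the example scores are invented rather than study data, and the α coefficient reported above is a separate reliability statistic that this code does not reproduce.

```python
from typing import Sequence


def agreement_rates(first: Sequence[int], second: Sequence[int]) -> tuple[float, float]:
    """Return (exact, adjacent) agreement as proportions of paired papers.

    Exact agreement: both readings gave the same score.
    Adjacent agreement: scores differ by at most one point (includes exact).
    """
    if len(first) != len(second) or not first:
        raise ValueError("need two non-empty score lists of equal length")
    diffs = [abs(a - b) for a, b in zip(first, second)]
    exact = sum(d == 0 for d in diffs) / len(diffs)
    adjacent = sum(d <= 1 for d in diffs) / len(diffs)
    return exact, adjacent


if __name__ == "__main__":
    # Hypothetical paired scores on the 1-6 scale (not study data).
    reader_a = [3, 4, 2, 5, 3, 4, 1, 3]
    reader_b = [3, 3, 2, 4, 4, 4, 2, 5]
    exact, adjacent = agreement_rates(reader_a, reader_b)
    print(f"exact = {exact:.2f}, within one point = {adjacent:.2f}")
```

Adjacent agreement is always at least as high as exact agreement, which is why a study can report agreement within one point for most papers alongside exact agreement for only about half.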

Analyses

Students’ level of writing proficiency was measured using the AWA. Students’ self-reported HSGPA, which previous research shows tends to be accurate (Sanchez & Buddin, 2015), was obtained from the student surveys and was reported by 88% of students in the overall sample (n = 468). Final course grades were obtained from the institution for consenting students (n = 346). Means for each variable were calculated for the two course types, and differences between them were tested for statistical significance using two-tailed t tests. Correlational analyses using Pearson’s r, along with scatterplots, were used to detect linear relationships between the variables and to test their strength. To control for other variables, regression analysis was used to estimate the effects of other factors on the variables of interest. To disaggregate the data by student subgroups, the variables were analyzed by students’ sex, race, and language background.
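As an illustration of the group-comparison and correlation steps, the sketch below computes a t statistic and Pearson’s r with the Python standard library. The source does not state whether equal variances were assumed, so this sketch swaps in Welch’s unequal-variance t test; the helper names `welch_t` and `pearson_r` and the example data are hypothetical. A p-value would then come from the t distribution with the computed degrees of freedom (for instance, SciPy’s `scipy.stats.ttest_ind(a, b, equal_var=False)` returns both the statistic and a two-sided p-value).

```python
import math
import statistics


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    na, nb = len(a), len(b)
    # Squared standard errors of each group mean (sample variance / n).
    se2_a = statistics.variance(a) / na
    se2_b = statistics.variance(b) / nb
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (na - 1) + se2_b ** 2 / (nb - 1))
    return t, df


def pearson_r(x, y):
    """Pearson correlation: covariance scaled by both standard deviations."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    # Hypothetical AWA scores for two groups (not study data).
    cl_scores = [4, 3, 5, 4]
    cls_scores = [3, 2, 3, 4]
    t, df = welch_t(cl_scores, cls_scores)
    print(f"t = {t:.3f}, df = {df:.1f}")
```

Welch’s form does not assume the two course types have equal score variances, which matters here because the text reports that scores vary more widely in the CL course.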

Results

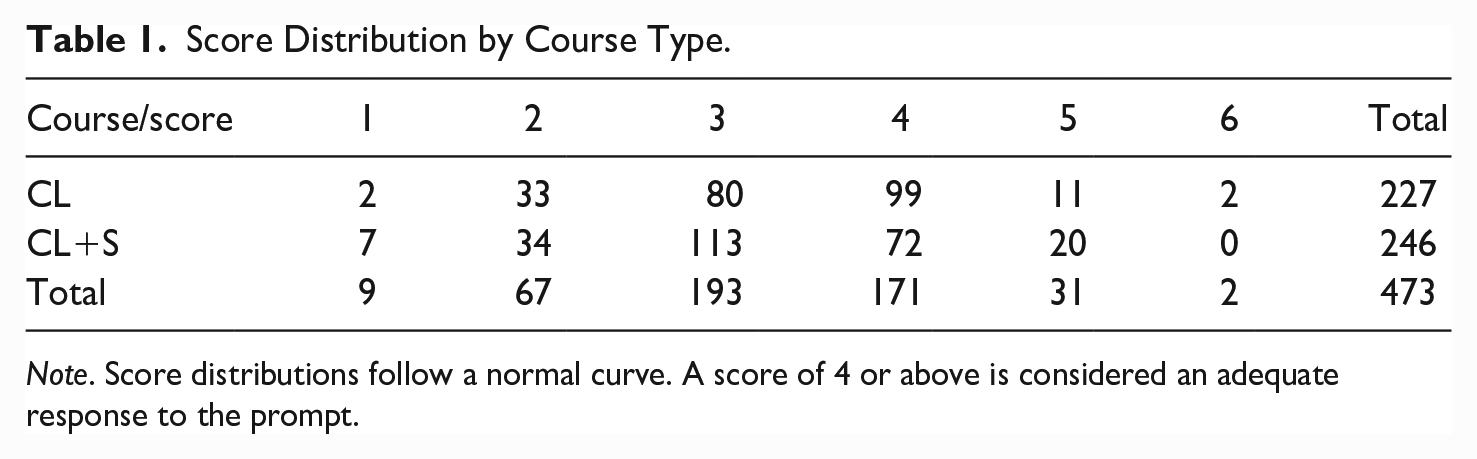

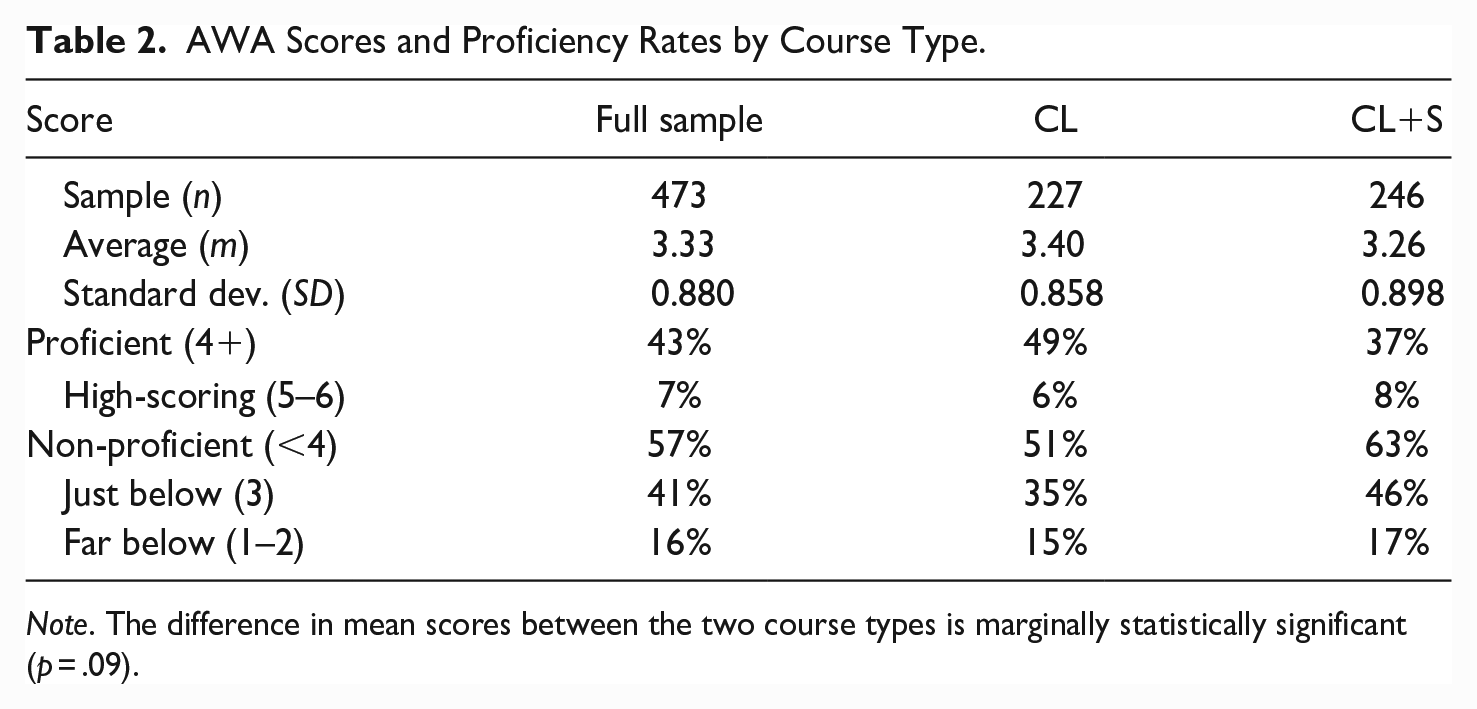

To investigate differences in student writing between the two FYTL composition course types (CL and CL+S), mean scores on the AWA were calculated and compared. Score distributions approximate a normal curve and vary more widely in the CL course: students in the CL course received every possible score from 1 to 6, whereas scores in the CL+S course ranged from 1 to 5 (see Table 1). The average score for the overall sample was 3.33 (see Table 2). The CL course average of 3.40 was slightly higher (by 0.07) than the overall average, and the CL+S average of 3.26 was slightly lower than the overall average (also by 0.07) and 0.14 lower than the CL average. This difference in mean scores between the two course types was small and only marginally significant (p = .09).

Score Distribution by Course Type.

Note. Score distributions follow a normal curve. A score of 4 or above is considered an adequate response to the prompt.

AWA Scores and Proficiency Rates by Course Type.

Note. The difference in mean scores between the two course types is marginally statistically significant (p = .09).

A score of 4 or more was considered an adequate response to the prompt, demonstrating proficiency. Close to half of all students assessed (43%) received a score of 4 or above (see Table 2). In the CL course, about half of the students (49%) received a proficient score; in the CL+S course, the percentage was lower (37%). Papers with high scores of 5 or 6 constituted 7% of the overall sample and were more highly represented in the CL+S course type (8% compared to 6% in the CL course). More than half of the papers in the overall sample (57%) received a non-proficient score (1–3), and 41% of all papers scored a 3, just below the proficient mark. The percentage of papers that received a 3 was lower for the CL course (35%) and higher for the CL+S course (46%). Papers that scored 1 or 2 were considered far below proficient; these constituted 16% of the overall sample, 15% of the CL papers, and 17% of the CL+S papers.
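The three score bands used in this paragraph can be expressed as a small classification function. This is a sketch for illustration only: `band` and `band_shares` are hypothetical names, and the example scores are not study data. Because every paper falls into exactly one band, the share scoring 3 equals the non-proficient share minus the far-below share, which is how the reported 57% and 16% yield 41%.

```python
from collections import Counter

BANDS = ("proficient", "just below proficient", "far below proficient")


def band(score: int) -> str:
    """Map a holistic AWA score (1-6) to the bands used in the text."""
    if score not in range(1, 7):
        raise ValueError(f"score must be an integer from 1 to 6, got {score}")
    if score >= 4:
        return "proficient"
    if score == 3:
        return "just below proficient"
    return "far below proficient"  # scores of 1 or 2


def band_shares(scores):
    """Proportion of papers falling in each band."""
    if not scores:
        raise ValueError("need at least one score")
    counts = Counter(band(s) for s in scores)
    return {name: counts[name] / len(scores) for name in BANDS}


if __name__ == "__main__":
    # Hypothetical scores (not study data).
    print(band_shares([1, 2, 3, 3, 4, 4, 5, 6]))
```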

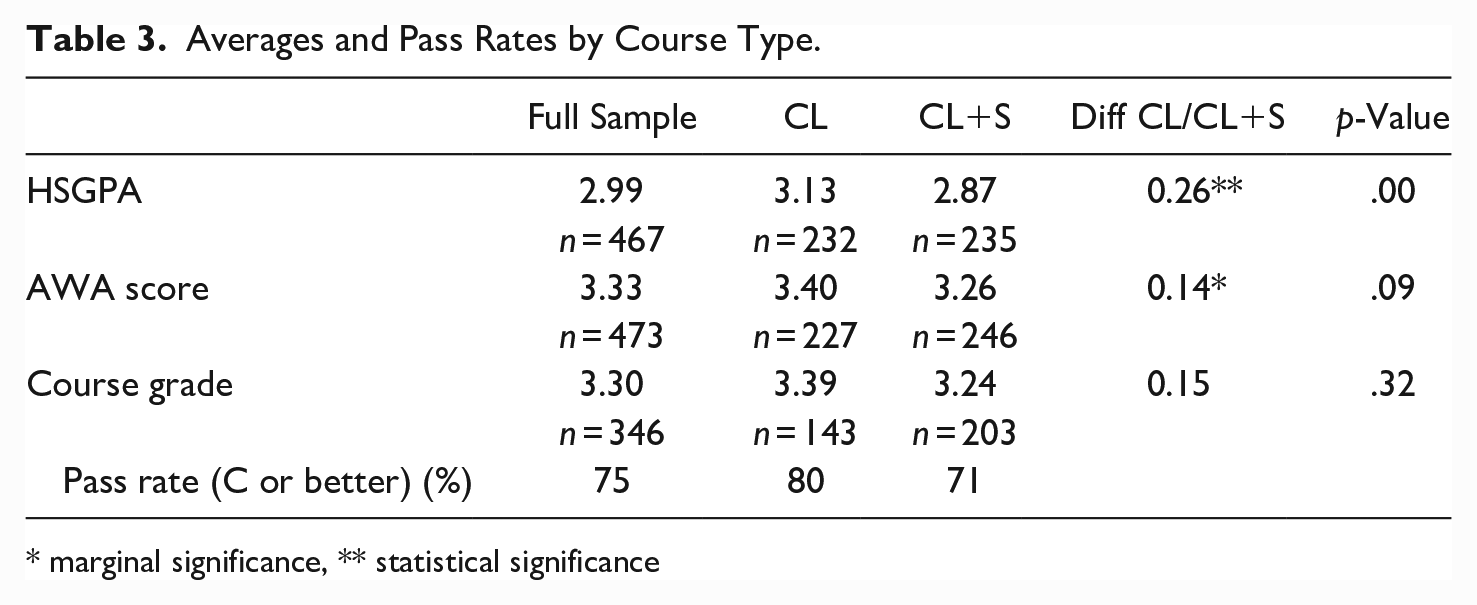

The average HSGPA for all participants was nearly 3.0 (see Table 3). Students in the CL course had a higher average (3.13) than the overall sample, while the average for students in the CL+S course was lower (2.87). The difference between the two groups (0.26) is statistically significant (p < .001). This result is expected, since HSGPA was used to recommend placement into the two course types through the college questionnaire.

Averages and Pass Rates by Course Type.

* marginal significance, ** statistical significance

Most students in both course types passed the course with a grade of C or better (see Table 3). The pass rate was 76% both for students whose writing was scored as proficient and for those whose writing was scored as non-proficient (assessed in the third week of the semester). There were no significant differences in HSGPA (p = .73), AWA scores (p = .23), or final course grades (p = .58) between first-year students new to the college (70% of participants) and students who had been at the college longer and had taken precollegiate-level courses.

To investigate possible relationships between HSGPA, writing performance, and final course grades, three correlation analyses were performed. The Pearson correlation indicated a very weak association between students’ self-reported HSGPA and their writing scores (r = .16, n = 416), and a scatterplot revealed no clear linear relationship, or interdependence, between the two variables. The second analysis, examining the relationship between HSGPA and final course grades, revealed a weak positive correlation (r = .27, n = 311), again with no clear linear pattern in the scatterplot. The final analysis, between students’ AWA writing scores and final course grades, also showed a very weak association (r = .11, n = 323) and no clear linear relationship.
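One standard way to read these coefficients is through the coefficient of determination, r², the proportion of variance in one variable that is linearly associated with the other. This interpretation is added here for illustration; the study itself reports only r. Using the coefficients rounded to two decimals:

```latex
r^2_{\text{HSGPA, AWA}} \approx 0.16^2 \approx 0.026, \qquad
r^2_{\text{HSGPA, grade}} \approx 0.27^2 \approx 0.073, \qquad
r^2_{\text{AWA, grade}} \approx 0.11^2 \approx 0.012
```

That is, each pair of measures shares only about 1% to 7% of its variance.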

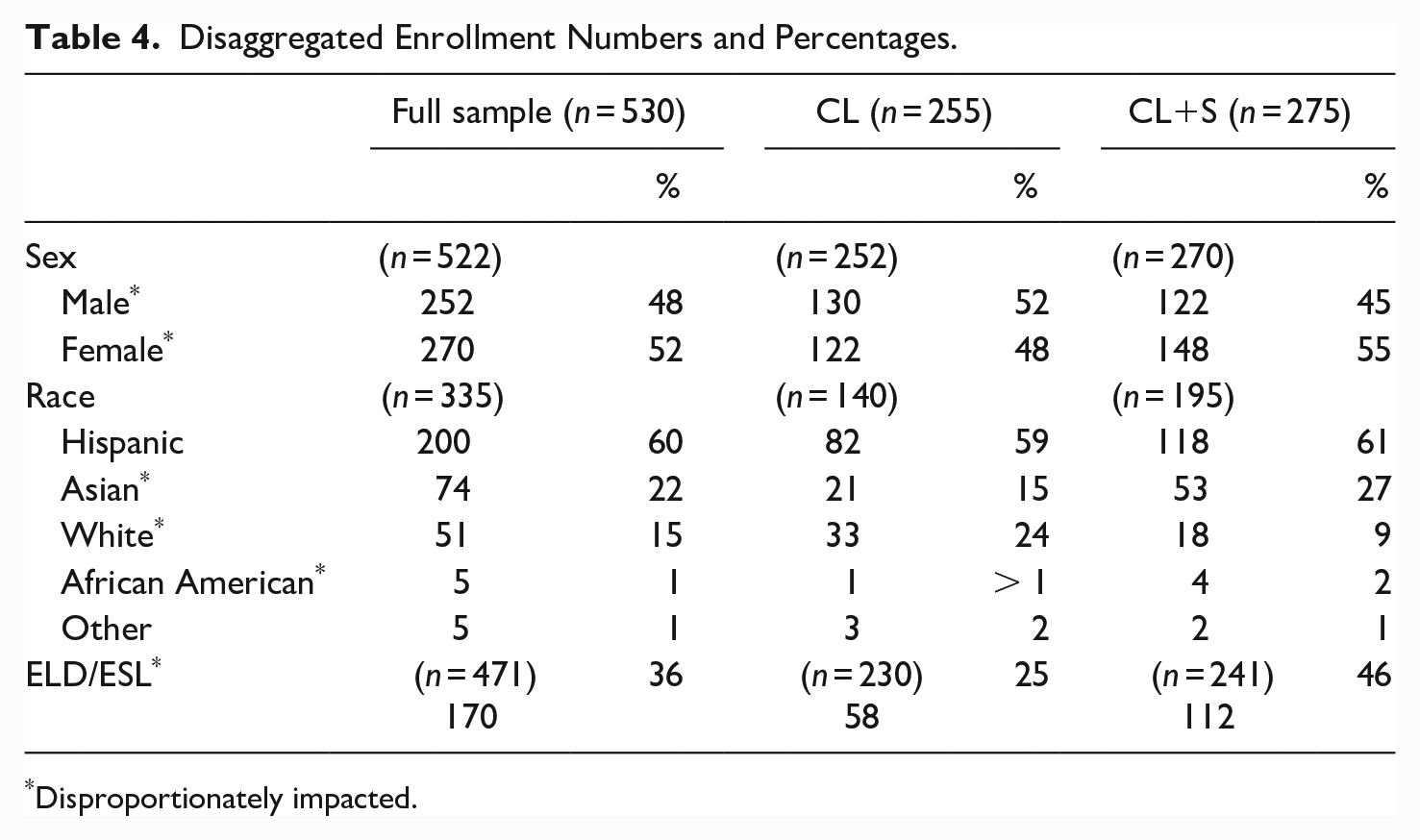

To determine whether enrollment into the two course types had a disparate, or disproportionate, impact on student subgroups, each group’s percentage of the full sample was compared with its percentage of the students enrolled in each course type. Disproportion was detected for subgroups defined by sex, race, and language background. The data show that although males are less represented than females in the overall sample (48% compared to 52%) and have a significantly lower average HSGPA than females, they were more highly represented in the CL course (52%) than in the CL+S course (45%) (see Table 4).

Disaggregated Enrollment Numbers and Percentages.

Disproportionately impacted.

Disproportionality by race was also found for three student subgroups: White, Asian, and African American. Although White students make up 15% of the full sample, they were more highly represented in the CL course (24%) than in the CL+S course (9%). Conversely, despite having average HSGPAs well above the 2.6 cut-off and higher than those of White students, Asian and African American students were more highly represented in the CL+S course (Asian, 27%; African American, 2%) and less represented in the CL course (Asian, 15%; African American, <1%) than in the full sample (Asian, 22%; African American, 1%). Additionally, students with ELD and/or ESL backgrounds were more highly represented in the CL+S course (46%) and less represented in the CL course (25%) than in the full sample (36%).
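The comparison of subgroup shares described in this section can be summarized as the ratio of a group’s share of a course type to its share of the full sample, where 1.0 means proportional representation. This ratio is a swapped-in summary for illustration; the study compares the percentages directly, and the name `representation_index` is hypothetical. The inputs below are the percentages reported above; African American students are omitted because their CL share is reported only as under 1%.

```python
# Shares (%) reported in the text: (full sample, CL course, CL+S course).
REPORTED = {
    "Male": (48, 52, 45),
    "White": (15, 24, 9),
    "Asian": (22, 15, 27),
    "ELD/ESL background": (36, 25, 46),
}


def representation_index(share_in_course: float, share_in_sample: float) -> float:
    """Ratio of a group's share of a course type to its share of the full sample.

    1.0 means proportional; above 1.0 over-represented; below 1.0 under-represented.
    """
    if share_in_sample <= 0:
        raise ValueError("group must be present in the full sample")
    return share_in_course / share_in_sample


if __name__ == "__main__":
    for group, (sample, cl, cl_s) in REPORTED.items():
        print(f"{group:20s} CL: {representation_index(cl, sample):.2f}  "
              f"CL+S: {representation_index(cl_s, sample):.2f}")
```

With these inputs, for example, White students have an index of 1.6 in CL and 0.6 in CL+S, while students with ELD/ESL backgrounds have about 0.69 in CL and 1.28 in CL+S.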

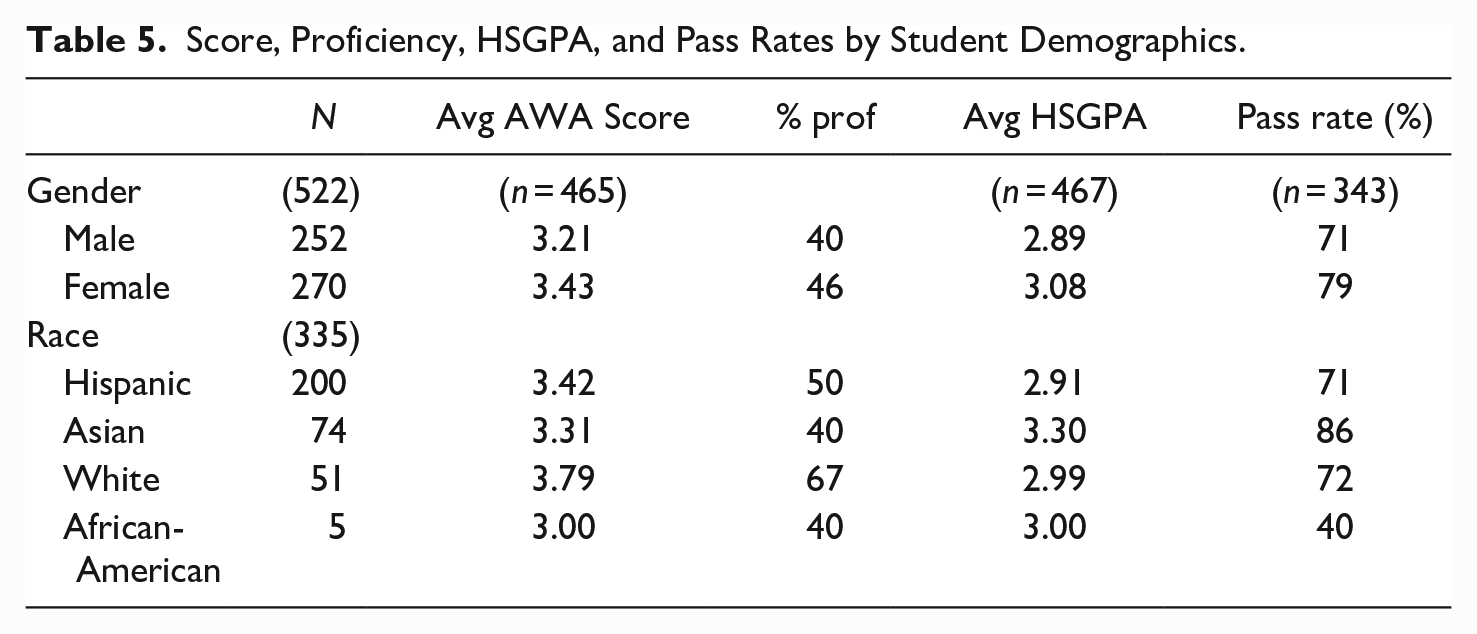

When the average AWA scores of student subgroups are compared by sex and race, females had a higher average score than males (3.43 compared to 3.21), and students who identify as White had a higher average score (3.79) than other racial subgroups (see Table 5). Students of all races averaged within the same range (3.00–3.79), below the proficiency mark of 4. Females had a higher course pass rate than males (79% compared to 71%). The pass rates for students of almost all racial groups were over 70%, with Asian American students exceeding this rate at 86%. The exception was African American students, whose pass rate was 40%; however, since only five of the students who provided information about race in the survey identified as African American, these data are of limited generalizability (see Table 5).

Score, Proficiency, HSGPA, and Pass Rates by Student Demographics.

Discussion

This work has several limitations. The type of writing prompted by the assessment used in this study is a single academic task that allows little revision and does not assess students’ information literacy. As an assessment of what is termed “proficiency,” the writing sample is a mere snapshot of what students are able to produce at a given moment, but it can still help stakeholders understand students’ familiarity with the academic genre. The writing was evaluated using holistic scoring, which, despite its limitations, is regarded by assessment scholars as a “successful method of scoring writing” and a “major advance in the assessment of writing ability” (White, 2009, p. 26). The findings are discussed with these limitations in mind.

In answering the research question (What differences might exist in writing proficiency, HSGPA, and final course grades between students who place themselves into the stand-alone transfer-level course [CL] and those who place themselves into the same course with a concurrent support course [CL+S]?), the results reveal that (1) students enrolled in the two course types do not differ significantly in their writing proficiency; (2) the HSGPAs of students enrolled in the two course types differ significantly, but HSGPA is only weakly correlated with students’ measured writing proficiency; and (3) differences in final course grades between the two course types are not statistically significant, and course pass rates are high for students at all proficiency levels.

Results of this study show that under the current placement policy, students at nearly all levels of writing proficiency enroll in both course types (the exception is a score of 6, which occurred only in the CL course; see Table 1). This wide range of scores in both course types demonstrates a need for differentiated instruction in both the CL and the CL+S course. Although the mean AWA scores of the two groups differed, the difference was only marginally significant (p = .09). This suggests that the two groups do not differ enough in writing ability to warrant two course types to address students’ writing needs.

Just Below Proficient

More than half of the papers in the overall sample (57%) received a non-proficient score, and 41% of all papers scored a 3, just below the proficient mark. This percentage was lower for the CL course (35%) and higher for the CL+S course (46%). These low proficiency rates are consistent with previous research showing that writing continues to present a great challenge for large numbers of students through the postsecondary level (MacArthur & Philippakos, 2013; Perin, 2013). Scholars highlight the need for the explicit teaching of writing, and prior studies show that it is possible to teach the processes used by strong writers to those who are less proficient (MacArthur & Philippakos, 2013). A recent study identified specific features of academic writing that can be taught explicitly and described differences in how students in various course levels used these features in their writing (Nazzal et al., 2020). Knowledge of such features can be valuable for students at all levels of writing proficiency, and faculty can use it to give students achievable goals in academic writing. For the considerable number of students whose writing sits just below the threshold of proficiency, targeted instruction in strategies used by more practiced writers can help them communicate effectively in the academic register (Nazzal et al., 2020).

Far Below Proficient

Papers that scored 1 or 2 made up 16% of the overall sample (15% of the CL papers and 17% of the CL+S papers). These papers were considered far below proficient, and the students who produced them might have been placed into the lowest-level precollegiate course under the previous placement policy. Previous studies showed that the writing of students who placed into this course differed significantly from that of students in the FYTL course (Nazzal et al., 2019), including in how often they employed certain writing features, such as making a claim and following prompt directions (Nazzal et al., 2020). For students whose previous experiences may not have prepared them for the challenges of transfer-level composition, but who are placed into the transfer-level course under the new policy, targeted instruction in specific features essential to academic writing can help them participate more fully in academic discourse and increase their chances of success.

The findings of this study also show that the difference in average HSGPA between students enrolled in the two course types is statistically significant. This result is expected, given that HSGPA is the primary measure used for the college’s course recommendations and that almost all participants (93%) who took the college questionnaire followed its recommendation when they enrolled. However, the Pearson correlation analysis indicated a very weak association between students’ writing scores and their self-reported HSGPA (r = .16, n = 416), and a scatterplot revealed no clear linear relationship, or interdependence, between the two variables.

This result confirms earlier research showing a lack of association between HSGPA and students’ writing scores (Nazzal et al., 2019). It suggests that although HSGPA has been thought to reflect readiness for college coursework not captured by standardized exam scores (Hodara & Lewis, 2017), it appears to be an insufficient measure for matching students precisely with academic interventions that meet their writing needs. Still, institutions are quickly shifting to HSGPA as the primary measure for placing students into composition courses. The widespread adoption of such measures among 2-year institutions, and even among 4-year institutions, has the potential to undo carefully established placement processes, including faculty-created and faculty-scored writing assessments that can provide important insight into students’ levels of academic writing proficiency.

In the current reform environment at the college in this study, the course type with a concurrent support section (CL+S) is offered to serve students who need more support with their writing. The course demands a greater commitment from students, requires more of their already limited time and money, and costs the college more than the stand-alone course. The large number of students who followed the college’s course recommendation shows how much students rely on the institution to guide them toward the best course choice. However, because students are directed toward support on the basis of HSGPA rather than their writing needs, the placement misses the mark: students who need more support are left without it in the CL course, while students in the CL+S course who may not need the additional support carry an unnecessary burden.

These results lend support to the skepticism some scholars have expressed about HSGPA as a placement measure, given the lack of comparability across high schools, which can vary in course rigor, grading standards, and the availability of economic resources and qualified teachers (Sackett et al., 2008). Additionally, there is reason to believe that HSGPA does not reflect students’ writing ability, since writing studies scholars have found that the amount of writing assigned in high school is meager and often low in quality (Applebee & Langer, 2011; Kiuhara et al., 2009). The findings of this study suggest that measures providing more specific information about students’ writing preparedness are needed to place students into composition courses and to determine which students need more support. In this way, limited resources can be better targeted to the students who most need support.

Analysis of students’ final course grades reveals that the overall average grade was in the C range. Although point values differ within the range of the letter grade, a C was the average grade for students in both the CL and the CL+S courses, and the difference in final grades between the course types is not statistically significant (p = .32) (see Table 3). The overall pass rate (the percentage of students who received a grade of C or higher) was 76% for the full sample, higher for the CL group (80%) and lower for the CL+S group (71%). Analysis of the relationship between students’ writing scores on the AWA and their final course grades shows only a weak positive linear relationship between the two variables (r = .27), indicating that the AWA writing proficiency score at the start of the semester is a weak predictor of students’ final grades in the course. A second assessment at the end of the semester would provide the data needed to gauge changes in proficiency. If students who passed with a C did not demonstrate proficiency at the end of the semester, this would reinforce some scholars’ concern about grade inflation, a type of problematic grading. Composition scholars assert that “the final writing of passing students ought to be solid, competent writing” because “passing is about writing at a certain level” (Royer & Gilles, 2020).
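As a rough illustration of why r = .27 makes the AWA score a weak predictor, the same coefficient-of-determination reading can be applied. This is an interpretive calculation from the reported coefficient, not an additional result from the study; it suggests that the start-of-semester AWA score is linearly associated with only about 7% of the variance in final grades.

```latex
r = .27 \;\Rightarrow\; r^{2} = (0.27)^{2} = 0.0729 \approx 7.3\%, \qquad 1 - r^{2} = 0.9271 \approx 92.7\%\ \text{unexplained}
```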

Although previous studies show that directed self-placement, which gives students agency in the placement process, can lead to lower course pass rates (Hassel et al., 2015; Hu et al., 2016), that is not the case in this study. In fact, the pass rates observed here represent a substantial increase over pass rates at the college in the two course types just one year earlier (from 65% to 80% in the CL course and from 52% to 71% in the CL+S course). This increase may be indicative of what some scholars call “grade norming,” a reason for their skepticism about the effectiveness of open enrollment into transfer-level courses (Saxon & Morante, 2014, p. 24). These scholars argue that this type of enrollment may compel faculty to adjust course content and instructional methods to address a wide range of student skills, which can lead to softened academic standards, reduced rigor, and, eventually, grading based on relative student performance rather than set standards. The pass rate for students who scored far below proficient was 71% overall and even higher for those in the CL course (79%). To ensure students’ success in subsequent coursework that leads to degree attainment and transfer, without undermining the learning that took place throughout the semester, further investigation is needed to better understand the reasons for the high overall pass rates seen in this study.

Are Students Who Need the Most Writing Support Positioning Themselves to Receive It?

The results of this study show that, under the particular placement method used in this case (automated directed self-placement guided by high school records), students are not sorted into the two course types based on their writing ability. While reform efforts aim to place nearly all students into the FYTL composition course, the course type with a concurrent support section is offered to ensure that students who need more writing support can receive it. However, college stakeholders are concerned that students who need the additional support might choose not to enroll in the section that provides it, for various well-founded reasons including time constraints, financial limitations, and even convenience. Students in this study generally followed the college’s course recommendation, which was based on their HSGPA, a measure found to be only weakly correlated with their level of writing proficiency. As a result, only some of the students who need more writing support than is feasible in the stand-alone transfer-level course are positioned to receive it. In addition, students who do not demonstrate a need for extensive support enroll in the CL+S course, which may place additional and unnecessary demands on their time and finances. The results also show that some student groups, such as female, Asian American, and African American students, may tend to select the course with support (CL+S) even though they qualify for the stand-alone course (CL), which costs them more time, money, and commitment. These findings echo the concern that through self-placement, especially in community colleges, women and students of color might “reproduce their own subordination” (Toth, 2019, p. 150).

Scholars suggest that assessment and placement instruments and policies should match students more precisely with academic interventions that meet their needs and that student writing be used, among other pieces of evidence, to assess student needs and abilities (Edgecombe, 2011; Hughes & Scott-Clayton, 2011; Royer & Gilles, 2020). Unfortunately, in the broad sweep of reform in community colleges, as standardized placement exams in writing are eliminated due to evidence of the misplacement of students, so too are faculty-created and faculty-scored writing assessments, which provide more complete and nuanced information on which to base placement decisions (Barnett & Reddy, 2017; Nazzal et al., 2019; Rodriguez et al., 2018). Sorting students into stratified groups based on their demonstrated proficiency can also help ease the labor of teaching (Toth, 2019), and efforts to do this early in the term are important in order to provide students with the instructional support they need to become more proficient writers. However, under the current placement policy, which does not include a student writing sample, identifying the students who need additional writing support and determining what their writing needs are are left to individual faculty.

Has the New Placement Policy Improved Students’ Chances for College Success?

Most student participants (84%, n = 507) in this study reported an educational goal of attaining the AA degree, transferring to a university, or both. Upon completing the required FYTL course, these students may be more likely to persist and go on to attain their degree and/or transfer. This may be especially so for students who would have placed into the lower-level precollegiate courses under the previous policy, since by passing this class they save up to three semesters of coursework. Completing this course also opens doors to courses in other disciplines, for which it is often a prerequisite. This reform effort therefore appears to increase students’ chances of moving toward their educational goals. It is less clear, however, whether passing this course leads to gains in writing proficiency and, if so, whether students are prepared to meet the literacy demands of coursework in other disciplines or at the 4-year institutions to which they transfer.

Conclusion

Student placement based on the determination of readiness for transfer-level coursework affects not only access to the FYTL course, but also student persistence and the likelihood of transfer and degree attainment (Dominick et al., 2007). This can have steep economic impacts on students since unemployment rates drop and median income earnings rise with each level of increase in education (U.S. Bureau of Labor Statistics, 2020) and since those who do not attain at least an associate degree or a certificate will likely have more difficulty supporting a family above the poverty line. This is particularly so for students enrolled in community colleges, since they are disproportionately from low-income backgrounds, first in their families to attend college, and from racial backgrounds that are historically underrepresented in higher education (American Association of Community Colleges [AACC], 2020).

The reform implemented at this community college has given far more students direct access to the transfer-level composition course than the previous placement policy did. Scholars emphasize, however, that a successful directed self-placement (DSP) process relies on an institution’s clear definition of the requirements and outcomes of its course options, consistent maintenance of those definitions, and clear communication of them to incoming students (Royer & Gilles, 2020; Saxon & Morante, 2014). Given the high course pass rates seen in this study (76% for the overall sample and even higher in the CL course), it appears that the reform helped many more students complete the transfer-level composition course in a shorter time than they would have under the previous policy. However, results also show that whether students passed the course with a grade of C or higher was only weakly related to their HSGPA and nearly independent of their writing proficiency at the beginning of the semester as measured by the assessment in this study. Because the Analytical Writing Assessment (AWA) was not administered a second time at the end of the semester, it remains unclear whether the generally high pass rates since the adoption of the new policy reflect improvement in students’ academic writing proficiency that might allow them to succeed in subsequent coursework, whether they are influenced by grade norming, or whether other explanations apply.

For students who did not pass the course, the reasons are unknown and likely vary. It is also unclear whether these students would have benefited more from first enrolling in a precollegiate course to prepare for the transfer-level course. Repeating the transfer-level course, with renewed exposure to transfer-level curricula, may be more beneficial than risking the same outcome in a course that does not carry transferable units. Notably, 44% of the students who did not pass scored 4 or above (what would be considered proficient) on the AWA at the start of the semester, which suggests that their reasons for not passing may be unrelated to writing proficiency. Further research is needed that integrates student and faculty perspectives with these findings.

Investigation of the impact and value of the support courses is beyond the scope of this study. It is unclear to what extent, if at all, students’ pass rates in the support course can be attributed to the additional hour of instruction, the in-classroom tutor’s presence, and/or supplemental tutoring sessions. Students’ pass rates in the CL+S course might have been lower without these additional resources, but this study cannot support conclusive claims about this. Because fewer than half of the students in the overall sample scored 4 or above (what was considered proficient), all students could benefit from explicit instruction in how to write essays that synthesize multiple texts.

Although scholars assert that assessment and placement instruments and policies should match students more precisely with academic interventions that meet their needs (Edgecombe, 2011; Royer & Gilles, 2020), the findings of this study suggest that by relying primarily on high school records and GPA to place students into composition courses, institutions may be taking a step back from this goal. Scholars recommend that student writing be used, along with other evidence, to assess students’ writing needs and abilities and to provide support to those who need it (Edgecombe, 2011; Hughes & Scott-Clayton, 2011; Nazzal et al., 2020; Royer & Gilles, 2020). This approach allows for more effective support of students and more efficient use of limited resources. The results of this study provide insight into the effects of the particular reform strategies used at this institution and can help guide the broader reform efforts currently under way in community colleges nationwide to support student success.

Footnotes

Appendix

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.