Abstract

Panel data are often used to study change and stability in social patterns. However, repeated interviewing may affect respondents’ attitudes in a panel study by triggering reflection processes on the surveyed topics (cognitive stimulus hypothesis). Using data from a survey experiment within a probability-based and a nonprobability panel in Germany, we investigate change—and the mechanisms underlying change—in respondents’ abortion attitudes over six panel waves. The experiment manipulated the frequency of receiving identical attitude questions. We estimate multiple-group and longitudinal structural equation models to differentiate change in the measurement of abortion attitudes from “real” attitude change. Results show that repeatedly administering the same abortion questions increases the reliability of respondents’ reported attitudes and the stability of their latent attitudes toward abortion. However, we find no evidence of an increase in attitude certainty and knowledgeability on abortion and only tentative evidence of improved response behavior (increased attitude reliability) due to general survey experience.

Introduction

Panel surveys are widely used to track the attitudes and subjective beliefs of individuals over time and to study change and stability in social patterns. However, shifts in respondents’ attitudes over the course of a longitudinal study may not only reflect individual or social change but may also be due to repeated interviewing (Bergmann and Barth 2018; Sturgis, Allum, and Brunton-Smith 2009; Waterton and Lievesley 1989). This potential bias, which is inherent in panel studies, is generally referred to as panel conditioning (Kalton, Kasprzyk, and McMillen 1989; Lazarsfeld 1940).

Recent studies have explored the presence and magnitude of panel conditioning effects (e.g., Cornesse et al. 2023; Eckman and Bach 2021; Kraemer et al. 2023; Silber et al. 2019). Research specifically investigating the effects of panel conditioning on respondents’ attitudes over time suggests that repeated surveying on the same issues raises respondents’ awareness of these issues and stimulates further reflection processes on the surveyed topics, a psychological mechanism described by Sturgis, Allum, and Brunton-Smith (2009:114) and referred to as the “cognitive stimulus (CS) model.” Studies have provided some support for Sturgis, Allum, and Brunton-Smith's (2009:116) “cognitive stimulus hypothesis” by documenting more stable and reliable survey responses and increased “opinionation” (that is, reporting an attitude instead of giving a “don’t know” response) among experienced panelists (Bergmann and Barth 2018; Binswanger, Schunk, and Toepoel 2013; Kroh, Winter, and Schupp 2016; Sturgis, Allum, and Brunton-Smith 2009).

Previous studies have typically used nonexperimental designs to investigate panel conditioning (Bergmann and Barth 2018; Binswanger, Schunk, and Toepoel 2013; Sturgis, Allum, and Brunton-Smith 2009). However, nonexperimental designs are susceptible to various types of bias, such as maturation effects or selective dropout, that may affect change rates. These effects are very difficult to control for in observational data and might introduce systematic bias of an unknown magnitude into change estimates. More importantly, most previous studies have not investigated the reasons why attitudinal responses in panel studies become more stable and reliable over time. Are these panel conditioning effects the result of increased reflection by the respondent on the survey topics, or do panel respondents simply become more consistent in their responses over time due to increased experience with answering surveys, and thus greater familiarity with typical response tasks?

In this article, we aim to address these research gaps by examining the following research questions:

Our study is unique in that it uses data from a longitudinal survey experiment conducted over six waves within a probability-based and a nonprobability panel. The experimental design manipulated the frequency of receiving identical attitude questions over the course of the study and allows us to investigate the impact of different levels of exposure to identical attitude questions on the presence and magnitude of attitude changes. Additionally, this design enables us to study attitude change not only between different respondent groups but also across the six survey waves to further examine the underlying mechanisms of change in respondents’ attitudes. To specifically investigate the relevance of reflection processes for change in respondents’ (reported) attitudes, our study further includes measures of attitude certainty and knowledgeability. Lastly, to study the effects of repeated interviewing on both measurement error and “real” attitude change, we use structural equation modeling as a modeling technique to differentiate between respondents’ reported and “true” attitudes, thereby extending previous research.

Background

Research addressing the effects of panel conditioning on attitudes and subjective beliefs is scarce. Most studies on panel conditioning to date have focused on identifying and estimating bias in overall response quality or behavioral outcomes such as voter turnout (Battaglia, Zell, and Ching 1996; Clausen 1968; Halpern-Manners, Warren, and Torche 2017; Sun, Tourangeau, and Presser 2019; Yalch 1976). Only a few studies have investigated how repeated interviewing changes respondents’ attitudes over the course of a panel study (e.g., Binswanger, Schunk, and Toepoel 2013; Jagodzinski, Kühnel, and Schmidt 1987; Sturgis, Allum, and Brunton-Smith 2009).

Despite the variety of research designs across these studies, their findings regarding attitude change in panel studies are largely consistent. They show more stable, reliable answers to attitudinal questions and increased opinionation on surveyed topics in later waves of a study.

In one of the earlier studies that investigated attitudinal responses over the course of a panel study, Jagodzinski, Kühnel, and Schmidt (1987) differentiated between changes in latent attitudes and changes in reported attitudes and showed that the reliability of attitude reports regarding immigrant workers in Germany increased between the first and second survey waves. Waterton and Lievesley (1989) compared the political attitudes of members of the British Social Attitudes Panel over the course of three survey waves with fresh cross-sections and found an increase in politicization due to repeated interviewing, with panelists more often reporting support for a political party. Similarly, Sturgis, Allum, and Brunton-Smith (2009) found that panelists of the British Household Panel Survey provided fewer “don’t know” responses to a left–right political orientation question over the course of 11 panel waves—a finding that they attributed to an increase in (political) opinionation. Additionally, Sturgis, Allum, and Brunton-Smith (2009) found that respondents’ answers to attitudinal questions became more reliable and stable over time.

Other studies have provided further evidence of an increase in the stability and reliability of responses to attitudinal survey questions over time. Kruse et al. (2009) found an increase in inter-wave stabilities of environmental attitudes. Kroh, Winter, and Schupp (2016) examined the consistency of individual response patterns in 17 multi-item scales administered in 30 consecutive waves of the German Socio-Economic Panel and found that repeated interviewing increased the reliability of individual survey responses over time. Sun, Tourangeau, and Presser (2019) investigated the effect of panel conditioning on overall response quality using several response quality indicators. They found an increase in the reliability of selected attitude scales over time for members of two probability-based panel studies in the United States, thereby providing further evidence for an increase in the reliability of attitudinal responses over the course of a panel study. Using data from the German Longitudinal Election Study, Bergmann and Barth (2018) showed that repeated interviewing decreased respondents’ political indecision and increased attitude strength. This effect was especially prominent for respondents whose attitudes regarding their party vote intention had initially been weak.

Most of the existing studies on panel conditioning and its effects on attitudinal responses have explained their findings based on the notion that repeated interviewing increases awareness and prompts respondents to reflect intensively on the surveyed topics. This reflection either leads them to form opinions where none existed prior to the survey, or it strengthens existing attitudes. The assumption that reflection processes are triggered by repeated interviewing on specific topics was developed into a general theoretical framework—the CS model—by Sturgis, Allum, and Brunton-Smith (2009).

The CS model assumes that instead of forming opinions “on the spot,” respondents base their opinions on information on and intensive cognitive engagement with the issues to which the questions pertain (Sturgis, Allum, and Brunton-Smith 2009:114): Being repeatedly exposed to and asked about the same issues might stimulate respondents to intensively reflect on the surveyed topics or to obtain further information. For example, they may discuss the issue with others or pay closer attention to the news (Sturgis, Allum, and Brunton-Smith 2009). Sturgis et al. (2005) argue that even if respondents do not change their existing views, the triggered reflection processes lead them to hold more consistent attitudes and to show greater attitude strength (a process referred to as “attitude crystallization”) than they would have done without intensified deliberation due to repeated interviewing. The three main empirical implications of the CS model are that (a) attitudes become more reliable over time, (b) attitudes become more stable over time, and (c) opinionation on surveyed issues increases.

Following the assumptions of the CS model (Sturgis, Allum, and Brunton-Smith 2009), respondents’ learning effects over the course of a panel study are topic- or question-specific. Changes in respondents’ answers are due to repeated exposure to similar or identical question content and the thereby prompted reflection processes. By conducting in-depth follow-up interviews with panel members, Waterton and Lievesley (1989) provided initial evidence for the mechanism of content-specific reflection processes. They found that the survey prompted panelists to discuss the issues with friends and family. By contrast, Clinton (2001) found that long-term members of an online panel reported lower levels of news consumption compared with respondents who had been in the panel for a shorter period of time, challenging the assumption that topic-specific reflection increases respondents’ attentiveness to relevant news in the media (Sturgis, Allum, and Brunton-Smith 2009). In any case, the present evidence regarding the mechanisms underlying the observed crystallization of panelists’ attitudes over time is at best suggestive.

In contrast to the assumptions of the CS model, some studies have argued that observed attitude change and increases in the reliability of responses to attitudinal questions over time might simply be the result of increased experience with answering surveys in general and consequently “better” response behavior (Kroh, Winter, and Schupp 2016; Warren and Halpern-Manners 2012; Waterton and Lievesley 1989). It is argued that repeatedly answering surveys may increase respondents’ familiarity with the overall survey procedure and improve their understanding of the rules and requirements of an interview (e.g., Struminskaya 2016; Waterton and Lievesley 1989; Yan and Eckman 2012). Consequently, respondents might learn about the relevance of reporting their true opinions and behaviors, and might learn to use different survey instruments and to map their answers to different response scales (Nancarrow and Cartwright 2007; Waterton and Lievesley 1989). Research suggests that the question comprehension task does in fact become easier the longer respondents participate in a panel survey (Waterton and Lievesley 1989), that retrieval of relevant information becomes less error-prone (Bailar 1989), and that mapping the retrieved information to a variety of response scales becomes easier, too (Basso et al. 2001).

Findings from the GESIS Panel in Germany show shorter response times for more experienced panelists, which cannot be attributed to satisficing response behavior (Kartsounidou et al. 2023; Kraemer et al. 2023). Similarly, Scherpenzeel and Zandvliet (2011) found that experienced members of the LISS Panel in the Netherlands more often completed their task on time compared with novice respondents, indicating that a higher level of survey experience is associated with “better” response behavior.

Respondents might even prepare for subsequent interviews by looking up relevant information beforehand in order to give better answers. For example, studies using external validation data have found that the accuracy of the information that respondents provide on their income and their welfare benefit receipt status increases with more panel experience (Frick et al. 2006; Yan and Eckman 2012). We refer to this mechanism underlying observed attitude change and increases in attitude reliability over time as the “better respondents” model (see Yan and Eckman 2012).

Both the CS model and the “better respondents” model can lead to the same outcome, but they are based on different mechanisms. Whereas Sturgis, Allum, and Brunton-Smith (2009) attributed fewer “don’t know” responses of more experienced panelists to increased opinionation on the subject matter, Waterton and Lievesley (1989:334) attributed this reduced propensity to answer “don’t know” to the fact that “repeated interviewing leads to improved understanding of the rules that govern the interview process.”

In sum, the existing empirical findings are only suggestive of the mechanisms underlying observed changes in attitude reports. There is no direct evidence on the role of content-specific reflection processes versus familiarity with the response task. To contribute to the literature, we aim to investigate the mechanisms underlying observed attitude changes in repeated measurement settings by testing four hypotheses, which are presented below.

According to the CS model (Sturgis, Allum, and Brunton-Smith 2009), repeatedly being asked about a specific issue increases awareness and stimulates respondents to reflect intensively on the subject matter. If respondents do not have opinions on the issue, this might lead to the formation of opinions and therefore to an increase in opinionation reflected in fewer “don’t know” responses. By contrast, the “better respondents” model (Yan and Eckman 2012) would attribute fewer “don’t know” responses to respondents’ increased familiarity with the response task and the survey procedure, which in turn increases their willingness to report their attitudes. Based on both models, we hypothesize:

Reflection processes on surveyed topics due to repeated interviewing are central to the CS model, which postulates that they lead to more consistent and crystallized attitudes. Following the “better respondents” model, on the other hand, more consistent and reliable attitudes would be the result of respondents becoming better at answering (multi-item) scales, leading to more accurate attitude reports in which “noise” and random errors are reduced. Based on either model, we expect:

The next hypothesis is in line with the assumptions of the CS model but not the “better respondents” model, as it addresses the mechanism underlying the CS model:

Our final hypothesis is based on the “better respondents” model but not on the CS model. Following Kroh, Winter, and Schupp (2016), who discuss the possibility that the increased familiarity with the survey response task as a whole could have an impact on increases in the reliability of individual survey responses over time, we hypothesize:

Methods

Experimental Design

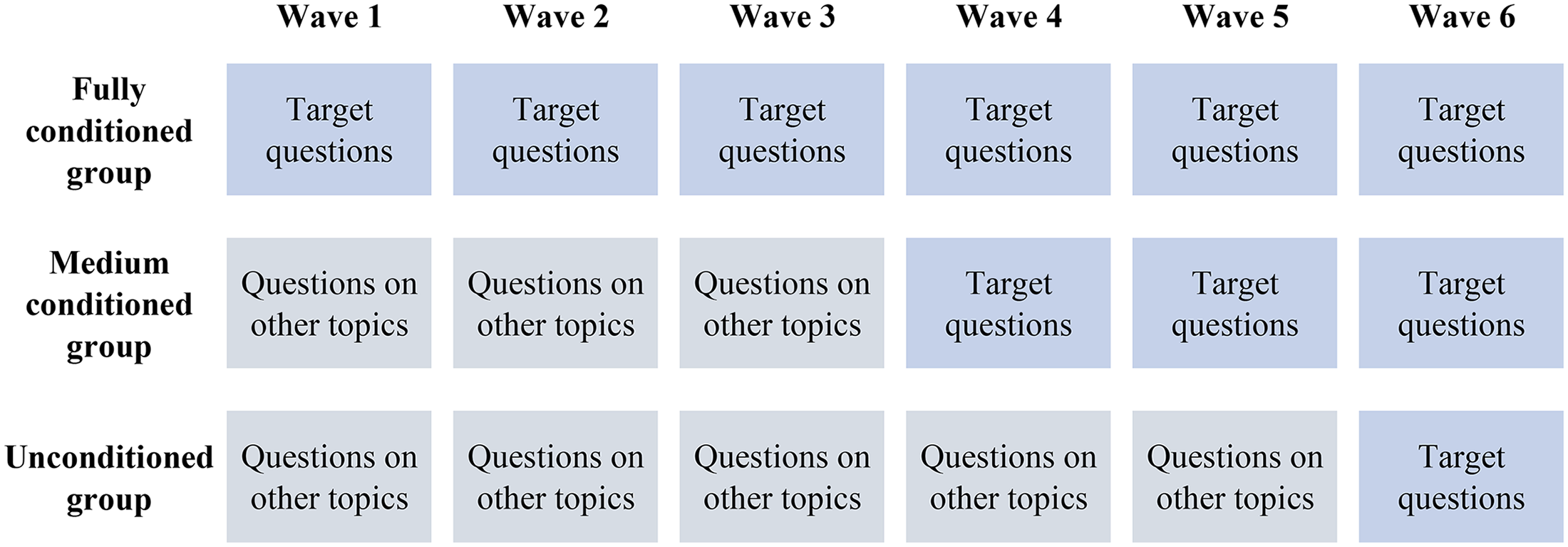

To test our hypotheses, we use data collected in a longitudinal survey experiment carried out within a probability-based mixed-mode panel study and a nonprobability online access panel in Germany. The survey experiment covered six panel waves and manipulated the frequency of answering identical questions (i.e., target questions). Respondents were randomly assigned to one of two experimental groups or to a control group. The first experimental group received the identical target questions in each of the six waves (i.e., “fully conditioned”); the second experimental group received the target questions only from Wave 4 onwards (i.e., “medium conditioned”); the control group received the target questions only once, namely, in the final wave (i.e., “unconditioned”). 1 For an illustration of the experimental design, see Figure 1.

Figure 1. Experimental design: Manipulation of exposure to identical target questions.

In the nonprobability panel study, respondents were randomly assigned to three groups of equal sample size (n fully conditioned = 647; n medium conditioned = 651; n unconditioned = 648). Respondents of the probability-based panel study were randomly assigned to three groups of unequal sample size: 50% were assigned to the “fully conditioned” experimental group, 25% were assigned to the “medium conditioned” experimental group, and 25% to the “unconditioned” control group (n fully conditioned = 2,337; n medium conditioned = 1,165; n unconditioned = 1,158). 2
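The unequal allocation in the probability-based panel can be illustrated with a minimal Python sketch. It assumes independent per-respondent draws with weights 2:1:1; the source does not state the actual randomization procedure, and the function name `assign_groups`, the seed, and the respondent IDs are hypothetical.

```python
import random
from collections import Counter

GROUPS = ["fully conditioned", "medium conditioned", "unconditioned"]

def assign_groups(respondent_ids, weights, seed=None):
    """Assign each respondent to one group by an independent weighted draw.

    Assumption: simple per-person randomization; the study's actual
    procedure is not described in the text.
    """
    rng = random.Random(seed)
    return {rid: rng.choices(GROUPS, weights=weights, k=1)[0]
            for rid in respondent_ids}

# Hypothetical sample of 10,000 IDs with a 50/25/25 target allocation.
assignment = assign_groups(range(10_000), weights=[2, 1, 1], seed=42)
counts = Counter(assignment.values())
shares = {group: counts[group] / len(assignment) for group in GROUPS}
```

With independent draws the realized group sizes only approximate the target shares, which would be consistent with group sizes that are close to, but not exactly, 50%, 25%, and 25% of the sample.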

Data

Nonprobability Panel Study

The experimental study conducted within the nonprobability online access panel was fielded between October 2020 and December 2021 (Silber et al. 2024b). The recruitment of respondents targeted persons aged 18 years and older who were residing in Germany at the time of the study and were registered as members of the commercial online access panel that was used as the sampling frame. For the implementation of the study, a quota sample was drawn based on the demographic distribution of the population according to the German Microcensus. Sample members were selected using cross-quotas for gender (female/male), age (18-35 years, 36-65 years, 66 years and older), and level of education measured as the highest general school leaving certificate (below secondary school certificate; secondary school certificate; higher education entrance qualification). Data were collected exclusively online via web-based surveys, with each survey wave taking about 15 min to complete, except for the first wave, which took about 35 min due to the inclusion of additional background measures on sociodemographics, political attitudes, and personality.

Selected panel members were invited by the panel provider to participate in the study via a personalized email. However, every panel member could also participate via the panel's online user interface. Only respondents who completed the first survey wave were able to participate in the subsequent waves. The invited panel members received up to four email reminders. Each reminder was sent three to four days after the invitation or the preceding reminder email. Respondents received a postpaid incentive after each completed survey. The amount of the incentive varied by wave from €0.60 to €2.50, depending on the average survey duration. Furthermore, respondents received an additional bonus payment of €2 if they successfully participated in all six panel waves.

Across the six waves of the panel study, the completion rates (COMRs; Callegaro and DiSogra 2008) ranged from 93.9% in the first wave to 92.4% in the last wave. By the last wave of the study, 44.2% of the respondents had dropped out (Callegaro and DiSogra 2008) (see Appendix B, Tables B1 and B2 in the online Supplemental Materials for detailed completion and attrition rates for each wave and Table B9 for an overview of the sociodemographic composition of the sample).

Probability-Based Panel Study

The longitudinal survey experiment was also fielded within the GESIS Panel—a German probability-based mixed-mode access panel comprising about 5,200 panelists who are surveyed on topics such as subjective well-being, personality and personal values, media usage, and work and leisure (GESIS 2022). The GESIS Panel was initially recruited in 2013, based on a random sample drawn from the population registers of selected municipalities in Germany. The target population comprised German-speaking adults aged 18–70 years who were permanently residing in private households in Germany. The recruitment process followed a multi-stage procedure that initially included a face-to-face interview, whose response rate (AAPOR RR1; American Association for Public Opinion Research 2023) was 35.5% (Bosnjak et al. 2018). In 2016 and 2018, two refreshment samples (n = 1,710; n = 1,607) were added to counter the effects of panel attrition.

Data collection in the GESIS Panel is administered in two modes: via web-based surveys (online mode) or paper questionnaires sent by postal mail (offline mode). By 2021, the majority of respondents (about 75%) completed the surveys online, while the remaining 25% of panelists participated in the offline mode. Irrespective of the participation mode, each panelist receives a survey invitation by postal mail that contains a prepaid cash incentive of €5. Panelists participating in the online mode additionally receive an email survey invitation and up to two email reminders, one week and two weeks after the invitation. Offline respondents do not receive reminders.

In this article, we use data from six consecutive survey waves of the GESIS Panel (Waves 41-46), which were fielded between October 2020 and January 2022. Since 2021, survey waves of the GESIS Panel have been administered every three months. However, until 2020, data collection was conducted bimonthly. Accordingly, while the experimental study was being fielded, the interval between survey waves changed from two to three months. The questionnaire of the experimental study alone took approximately 5 min to complete. It was part of the standard GESIS Panel wave questionnaires, which include annually recurring core studies and external studies on other topics and take about 20-25 min in total to complete.

In the first wave of the experimental study, COMRs (AAPOR 2023) ranged from 93.2% for the first panel cohort to 93.7% and 89.7% for the second and third cohorts, respectively. 3 In the last wave, they ranged from 92.2% for the first and second panel cohorts to 90.3% for the third panel cohort (for detailed COMRs by wave and cohort, see Table B3, Appendix B in the online Supplemental Materials). Cumulative response rates (CUMRRs; AAPOR 2023) in the first wave of the experimental study ranged from 11.5% for the first panel cohort to 9.7% for the second, and 9.6% for the third. In the last wave of the experimental study, CUMRRs ranged from 10.7% for the first panel cohort to 8.8% for both the second and third panel cohorts (for detailed CUMRRs by wave and cohort, see Table B4, Appendix B in the online Supplemental Materials). In the final wave of our experimental study, attrition rates ranged from 49.6% for the first cohort to 41.0% for the second and 31.6% for the third cohort (Schulz, Minderop, and Weyandt 2021; Stadtmüller, Schwerdtfeger, and Weyandt 2022; for detailed attrition rates by wave and cohort, see Table B5, Appendix B in the online Supplemental Materials; for an overview of the sociodemographic composition of the sample, see Table B9, Appendix B in the online Supplemental Materials).

Measures

Attitudes Toward Abortion

To investigate change in respondents’ (reported) attitudes, we used four items measuring attitudes toward abortion that have been used in the US General Social Survey (GSS; Adamczyk and Valdimarsdóttir 2018; Carter, Carter, and Dodge 2009). We chose to investigate abortion attitudes over time as a conservative approach to identifying changes in attitudes due to repeated interviewing. Abortion attitudes have been shown to be overall relatively stable and resistant to change but to vary depending on the contextual factors of an abortion (Hans and Kimberly 2014; Jelen and Wilcox 2003). To measure abortion attitudes, respondents were presented with four statements on different circumstances under which a woman should be able to obtain a legal abortion. They were then asked to indicate to what extent they agreed or disagreed with each statement on a scale ranging from 1 (strongly disagree) to 7 (strongly agree). As a further response alternative, each item included a “don’t know” option. The stated reasons for an abortion were (a) the woman does not want any more children, (b) the family cannot afford any more children, (c) there is a high probability of serious birth defects, and (d) the pregnancy endangers the woman's health (for question wording and implementation, see Appendix A in the online Supplemental Materials). The items were presented in an item-by-item format, with each of the four items shown on a separate screen with a vertical response scale. 4

Attitude Certainty

To gain further insights into the relevance of reflection processes for attitude change, we used follow-up measures that were administered both in the first and last wave of the study directly after the four items about abortion attitudes. The first measure assessed the certainty of respondents’ abortion attitudes. Respondents were asked to indicate on a 7-point Likert scale ranging from 1 (I do not have a clear opinion on that) to 7 (I have a clear opinion on that) whether they had a clear opinion on the issue of abortion. The item included a “don’t know” option.

Knowledgeability

The second measure captured respondents’ level of knowledgeability on the issue. Respondents were asked to rate their familiarity with the topic of legal abortion on a 7-point Likert scale ranging from 1 (Not familiar at all) to 7 (Very familiar). The item included a “don’t know” option.

Conditioning Frequency

To investigate whether topic-specific reflection processes are responsible for changes in respondents’ (reported) attitudes, we use the study's experimental design and compare respondents with three different levels of exposure to identical attitude questions over the course of the six survey waves. We compare respondents who received identical attitude questions in every wave (i.e., the “fully conditioned” experimental group) with respondents who received identical questions only three times, that is, from the fourth wave of the study onwards (i.e., the “medium conditioned” experimental group) and those who received the target attitude questions only once, namely, in the final wave of the study (i.e., the “unconditioned” control group).

Survey Experience

To explore the possible impact of experience with the general response task on answers to attitude questions, we use two different operationalizations of survey experience. First, we compare the respondents across the probability and nonprobability panels. We argue that, on average, respondents of nonprobability panels have much more experience of participating in many different surveys compared with respondents of probability-based panel studies, who participate, for instance, only every two or three months. Administrative data obtained by the panel provider do indeed show that respondents of the nonprobability panel had a median of 74 completed surveys in the last 12 months prior to our study, whereas respondents of the probability-based panel had participated in a maximum of six survey waves in the same period. Some nonprobability online panelists might even be members of one or multiple other online panels (Callegaro et al. 2014).

As a second operationalization of survey experience, we focus on respondents’ experience within a panel and differentiate each respondent group within the probability-based panel by panel cohort. We compare respondents of the initial panel cohort (recruited in 2013; high level of survey experience) with respondents of the second cohort (recruited in 2016; medium level of survey experience) and the third cohort (recruited in 2018; lowest level of survey experience).

Analytic Strategy

The overall goal of our analyses is to examine the mechanisms responsible for observed changes in attitude answers in panel studies. First, to test H1, we investigate differences in the proportion of “don’t know” responses to the abortion items between the three respondent groups (i.e., the two experimental groups and the control group) in each panel study by conducting chi-squared tests or—if the number of observations is not sufficiently high—Fisher's exact tests.
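As an illustration of the first step, the following Python sketch computes a Pearson chi-squared test for one abortion item across three respondent groups. The counts are hypothetical and not taken from the study. The p-value uses the closed form that holds only for two degrees of freedom, which is the case for a 2 × 3 table. The expected-count threshold of 5 in the usage note is a common rule of thumb and an assumption here, since the text does not state the criterion for switching to Fisher's exact test.

```python
import math

def chi_square_independence(table):
    """Pearson chi-squared test for a 2 x 3 contingency table.

    Rows: "don't know" vs. substantive response; columns: respondent groups.
    Returns (statistic, p_value, smallest_expected_count).
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    min_expected = float("inf")
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            min_expected = min(min_expected, expected)
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    if df != 2:
        raise ValueError("the closed-form p-value below requires df = 2")
    # Survival function of the chi-squared distribution with df = 2.
    p_value = math.exp(-stat / 2)
    return stat, p_value, min_expected

# Hypothetical counts for one item: fully, medium, unconditioned groups.
dk_counts = [10, 20, 30]
substantive_counts = [490, 480, 470]
stat, p_value, min_expected = chi_square_independence(
    [dk_counts, substantive_counts]
)
```

If `min_expected` fell below 5 for an item, the chi-squared approximation would be doubtful and an exact test would be the safer choice under the rule of thumb assumed above.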

In a second step, to test H2, we conduct a between-group comparison across the six respondent groups regarding their observed abortion attitudes in the last wave of the study. To compare the groups, we use multiple-group confirmatory factor analysis (MG-CFA) testing measurement invariance of the target abortion measures across the differently conditioned respondent groups (i.e., differential exposure to identical abortion questions and general survey experience) in Wave 6. For this and subsequent analyses based on structural equation modeling, we use the R package lavaan (Rosseel 2012). By conducting an MG-CFA, we can disentangle the effect of repeated interviewing on the measurement component of responses to the abortion items (i.e., how respondents report their attitudes on abortion) from the effect of repeated interviewing on the actual underlying latent abortion attitudes. Testing measurement invariance consists of a series of pre-defined model comparisons that successively impose more stringent equality constraints on the parameters of a measurement model (Byrne 1989).

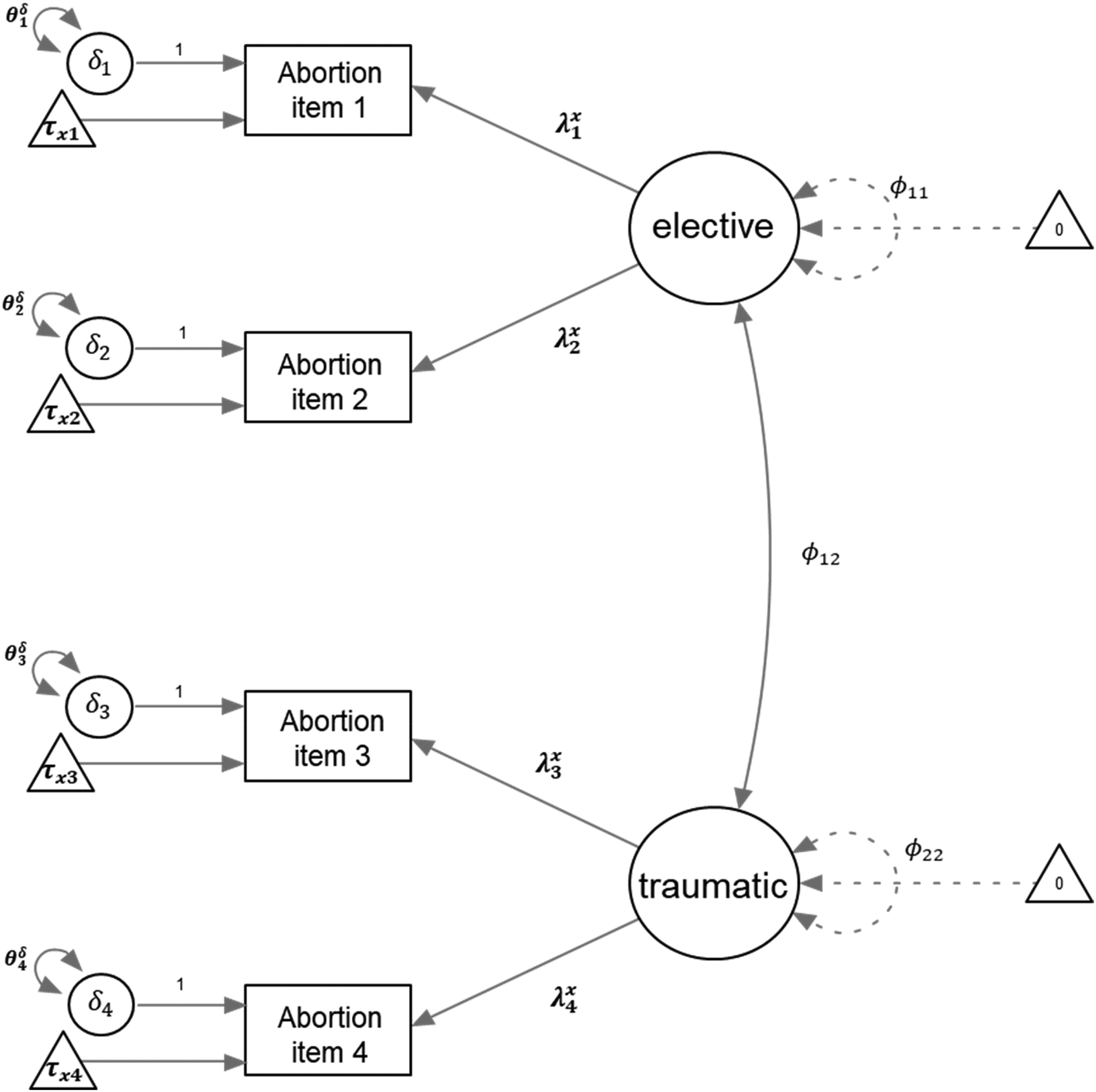

The baseline measurement model that we compare across the groups in Wave 6 is a two-factor model distinguishing between abortion sought for elective reasons and abortion sought for traumatic or health-related reasons. 5 In particular, the first two abortion items (i.e., the woman does not want any more children; the family cannot afford any more children) load on a latent factor representing elective reasons, whereas the last two items (i.e., high probability of birth defects and serious endangerment of the woman's health) load on a second latent factor capturing the traumatic reasons for seeking an abortion (for an illustration of the two-factor model, see Figure 2). The two-factor model has been validated against a model with only one latent factor in several previous studies (Hoffmann and Johnson 2005; Osborne et al. 2022) and in various country contexts (Huang et al. 2016; Karpov and Kääriäinen 2005; Osborne and Davies 2012).

Figure 2. Two-factor measurement model on attitudes toward abortion.

Following the pre-defined model comparisons of an MG-CFA, we first test the overall factor structure and pattern of factor loadings of our measurement model (i.e., test of configural invariance) across the six respondent groups (i.e., the two experimental groups and one control group in each panel study). Configural invariance exists if the two-factor structure as well as the loading patterns of the four abortion items are the same in each of the six respondent groups. If configural invariance can be established, we will proceed to test whether the factor loadings are equal across the groups (i.e., metric invariance), and whether the intercepts of the items are equal across the groups (i.e., scalar invariance). Finally, we will test whether residual variances of the indicators are equal across the groups (i.e., strict invariance).
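The sequence of invariance tests can be summarized in standard CFA notation. The symbols below are conventional and are an assumption of this sketch, not the article's own notation: for respondent $i$ in group $g$, $\mathbf{x}_{ig}$ holds the four observed abortion items, $\boldsymbol{\eta}_{ig}$ the two latent factors (elective, traumatic), $\boldsymbol{\tau}_g$ the item intercepts, $\boldsymbol{\Lambda}_g$ the factor loadings, and $\boldsymbol{\Theta}_g$ the residual covariance matrix.

```latex
\begin{align*}
\mathbf{x}_{ig} &= \boldsymbol{\tau}_g + \boldsymbol{\Lambda}_g\,\boldsymbol{\eta}_{ig} + \boldsymbol{\varepsilon}_{ig},
\qquad \operatorname{Cov}(\boldsymbol{\varepsilon}_{ig}) = \boldsymbol{\Theta}_g,
\qquad g = 1,\dots,6 \\
\boldsymbol{\Lambda}_g &=
\begin{pmatrix}
\lambda_{1g} & 0 \\ \lambda_{2g} & 0 \\ 0 & \lambda_{3g} \\ 0 & \lambda_{4g}
\end{pmatrix}
&& \text{(configural: same pattern in all groups)} \\
\boldsymbol{\Lambda}_g &= \boldsymbol{\Lambda} && \text{(metric: equal loadings)} \\
\boldsymbol{\tau}_g &= \boldsymbol{\tau} && \text{(scalar: metric plus equal intercepts)} \\
\boldsymbol{\Theta}_g &= \boldsymbol{\Theta} && \text{(strict: scalar plus equal residual variances)}
\end{align*}
```

Each level adds its constraint to those of the previous level, so a rejected constraint at one step points to the parameter type (loadings, intercepts, or residual variances) in which the groups differ. Under this model, with $\psi_{kkg}$ the variance of factor $k$ in group $g$, the reliability of item $j$ loading on factor $k$ is $\lambda_{jg}^2\psi_{kkg} / (\lambda_{jg}^2\psi_{kkg} + \theta_{jjg})$, so smaller residual variances in a group correspond to more reliable attitude reports.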

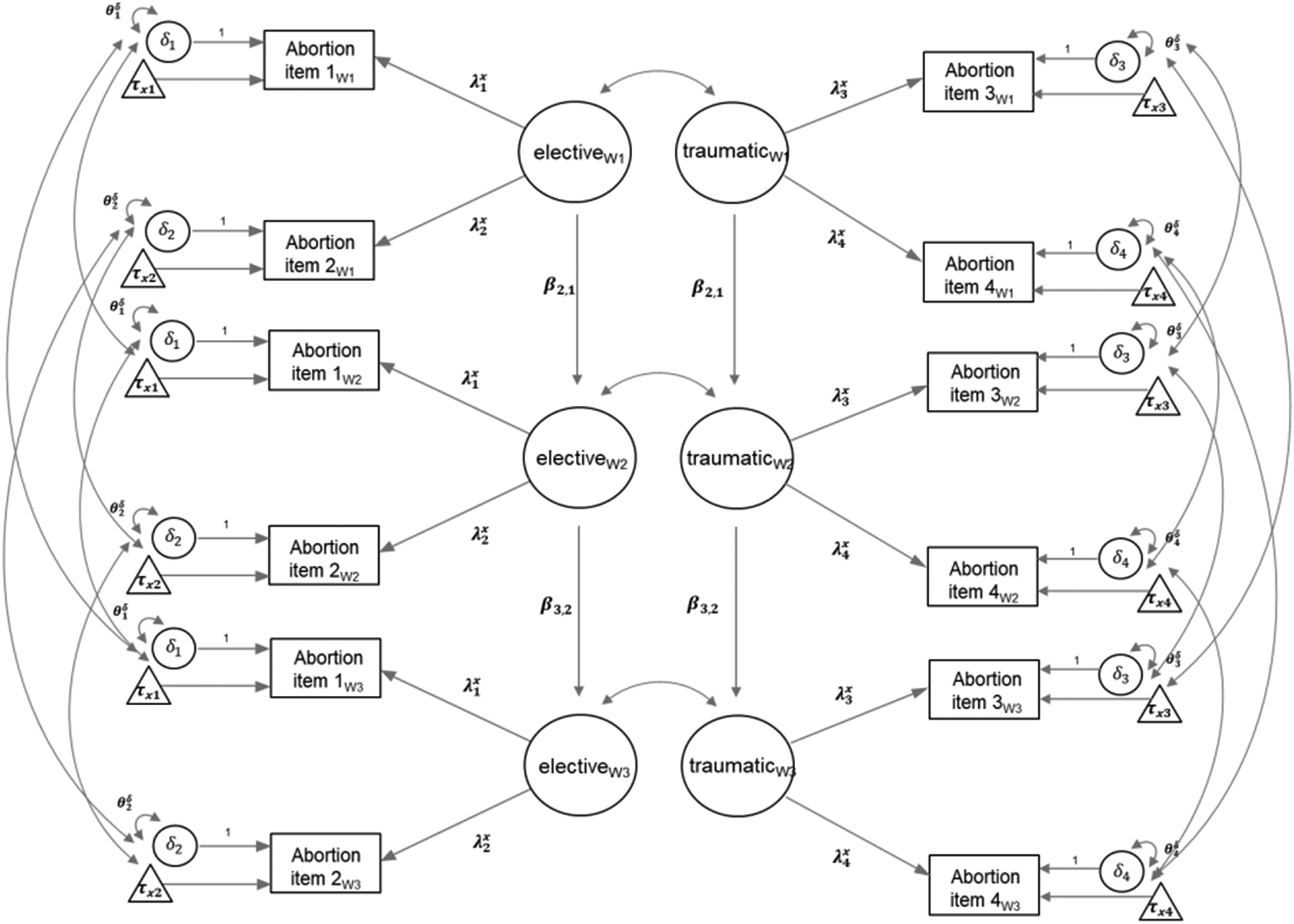

As a sensitivity analysis, we additionally examine the responses to the target abortion items across the six survey waves to test H2 further. We conduct this within-group comparison for respondents in the “fully conditioned” experimental groups who received the abortion measure in each survey wave and carry out a longitudinal factor analysis that tests the measurement invariance of abortion attitudes over time, differentiating between changes in reported attitudes and in underlying latent attitudes. Again, we follow the pre-defined series of model comparisons, testing differences in the measurement components (i.e., factor loadings, item intercepts, residual variances). Testing our baseline model over the six survey waves, we allow the residuals of each item to correlate across the six waves. If scalar invariance as a minimum requirement can be established, we will then further employ the longitudinal design of our study and use the within-group comparison to investigate the stability of the latent abortion attitudes over time by estimating an autoregressive first-order model (i.e., a simplex model; see Figure 3).

Figure 3. Autoregressive first-order model (simplex model). Note. For reasons of conciseness, only the first three of the six waves are shown.
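In equation form, a first-order autoregressive (simplex) model for one latent attitude factor can be sketched as follows. The symbols are assumptions introduced for illustration: $x_{jt}$ is abortion item $j$ in wave $t$, $\eta_t$ the latent attitude in wave $t$, and $\beta_{t,t-1}$ the autoregression coefficient linking adjacent waves. Loadings and intercepts carry no wave subscript because the sketch assumes that they are invariant over time.

```latex
\begin{align*}
x_{jt} &= \tau_j + \lambda_j \eta_t + \varepsilon_{jt}, & t &= 1, \dots, 6 \\
\eta_t &= \alpha_t + \beta_{t,t-1}\, \eta_{t-1} + \zeta_t, & t &= 2, \dots, 6
\end{align*}
```

A standardized $\beta_{t,t-1}$ close to 1 indicates that respondents largely keep their rank order on the latent attitude between waves $t-1$ and $t$, which is the sense in which the coefficient measures stability.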

To test H3, which specifically relates to the assumptions of the CS model, we incorporate (self-reported) attitude certainty and knowledgeability on the issue of abortion into the between-group comparison of the baseline measurement model in the last wave of the study. In doing so, we estimate a structural equation model including these external covariates, which affect both latent factors of the abortion items. We test whether the means of respondents’ attitude certainty and knowledgeability are significantly different across groups and how their impact on the latent abortion factors differs across groups.

Finally, to test H4, we compare the three respondent groups in the probability-based panel with those in the nonprobability panel. Additionally, we differentiate the fully conditioned, medium conditioned, and unconditioned respondent groups of the probability-based panel by cohort and conduct MG-CFAs across the three different cohorts of the panel for each group separately.

Results

H1: Differences in the Proportion of “Don’t Know” Responses

Before investigating change and the underlying mechanisms of change in respondents’ abortion attitudes, we first compare the dropout rates in the last wave of the study across the respondent groups to exclude the possibility of bias due to differential nonresponse potentially affecting differences in observed abortion attitudes across groups. In both the probability-based and the nonprobability panel studies, we find no statistically significant differences in dropout rates across the groups, indicating that differential nonresponse is unlikely to bias cross-group comparisons (see Tables B6 and B7, Appendix B in the online Supplemental Materials for the detailed dropout rates by group).

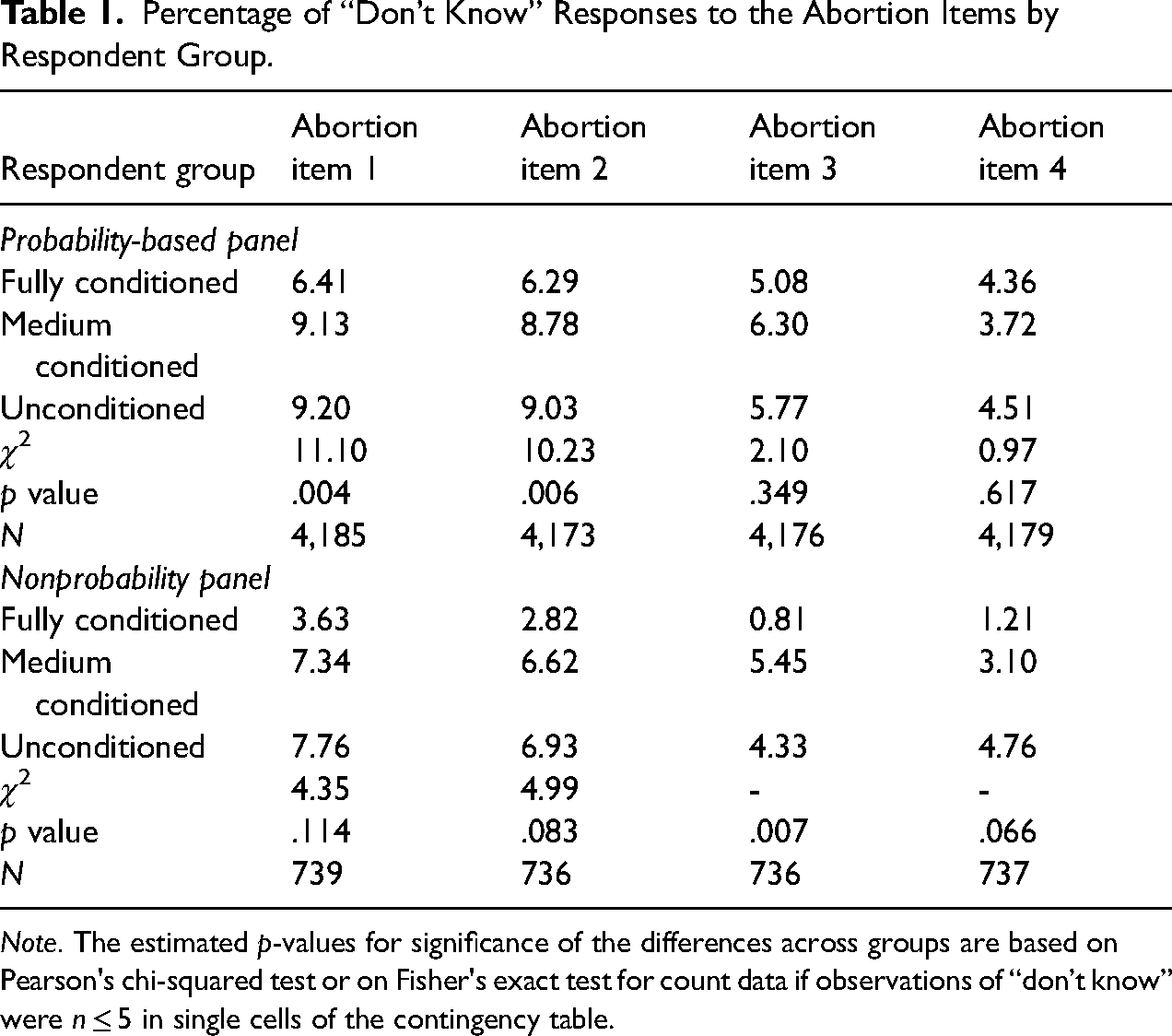

Table 1 displays the proportion of “don’t know” responses across the six respondent groups (H1). The results show that the three respondent groups of the probability-based panel differ significantly in the proportion of “don’t know” responses given to two of the four abortion items (i.e., the woman does not want any more children and the family cannot afford any more children), with fully conditioned respondents (i.e., respondents who were surveyed on abortion in each wave) showing the lowest proportions of “don’t know” responses, which supports H1. For the last two abortion items (high probability of serious birth defects; the pregnancy endangers the woman's health), the proportions of “don’t know” responses do not differ across the respondent groups of the probability-based panel, indicating that being repeatedly surveyed on these issues does not influence whether respondents provide a substantive response or report that they “don’t know.” This might be because respondents already have quite a strong opinion on abortion for traumatic reasons, or they may view the topic as rather complex and ambivalent. The respondent groups of the nonprobability panel differ significantly only in the proportion of “don’t know” responses to one of the four abortion items (i.e., high probability of serious birth defects). Nevertheless, fully conditioned respondents consistently show a smaller proportion of “don’t know” responses across all items compared with the other two respondent groups, which is in line with H1. The magnitude of differences is greater in the nonprobability panel, with differences of up to 4 percentage points (p.p.) on average, whereas differences across the respondent groups in the probability-based panel are only 2 p.p. on average.

Table 1. Percentage of “Don’t Know” Responses to the Abortion Items by Respondent Group.

Note. The estimated p-values for significance of the differences across groups are based on Pearson's chi-squared test or on Fisher's exact test for count data if observations of “don’t know” were n ≤ 5 in single cells of the contingency table.
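The test described in the note can be sketched for a single item and the three respondent groups of one panel. The function name and the counts are hypothetical and are not taken from Table 1. The sketch covers only the Pearson chi-squared case for a 3 x 2 table and merely flags tables in which a “don’t know” cell has five or fewer observations, where the note indicates that Fisher's exact test was used instead.

```python
import math


def pearson_chi2_three_groups(dont_know, substantive):
    """Pearson chi-squared test for a 3 x 2 table (group by response type)."""
    if len(dont_know) != 3 or len(substantive) != 3:
        raise ValueError("this sketch handles exactly three groups (df = 2)")
    row_totals = [d + s for d, s in zip(dont_know, substantive)]
    n = sum(row_totals)
    col_totals = [sum(dont_know), sum(substantive)]
    chi2 = 0.0
    for r, observed_row in enumerate(zip(dont_know, substantive)):
        for c, observed in enumerate(observed_row):
            expected = row_totals[r] * col_totals[c] / n
            chi2 += (observed - expected) ** 2 / expected
    # With df = 2 the chi-squared upper tail has the closed form exp(-x / 2).
    p_value = math.exp(-chi2 / 2.0)
    # Flag tables where the note's rule calls for an exact test instead.
    needs_exact_test = any(count <= 5 for count in dont_know)
    return chi2, p_value, needs_exact_test


# Hypothetical counts for illustration only (not the study's data).
chi2, p_value, needs_exact_test = pearson_chi2_three_groups(
    dont_know=[10, 20, 30], substantive=[490, 480, 470]
)
print(f"chi2 = {chi2:.2f}, p = {p_value:.4f}, exact test advised: {needs_exact_test}")
```

The closed-form p-value holds only for two degrees of freedom, which is why the sketch is restricted to three groups and two response types.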

By excluding “don’t know” and “skips” as nonsubstantive responses from the further analyses below, we have complete data on all four abortion items for 4,370 respondents. Data are partly missing for 430 respondents who are included in the between-group analyses that focus on the last wave of the study. For the within-group analyses, which focus on all six panel waves, we have complete data on all abortion items across six waves for 1,289 respondents and partly missing data (at least one item in one wave missing) for 1,031 respondents of the probability-based panel. For the nonprobability sample, we have complete data for 185 respondents and partial data for 264 respondents to analyze across waves (for a detailed overview of the sample sizes of each analysis, see Table B8, Appendix B in the online Supplemental Materials). To account for the missing patterns in the data and to maintain a larger effective sample size, we use full information maximum likelihood to estimate the model coefficients (Enders and Bandalos 2001).

Testing Measurement Invariance Across the Six Respondent Groups

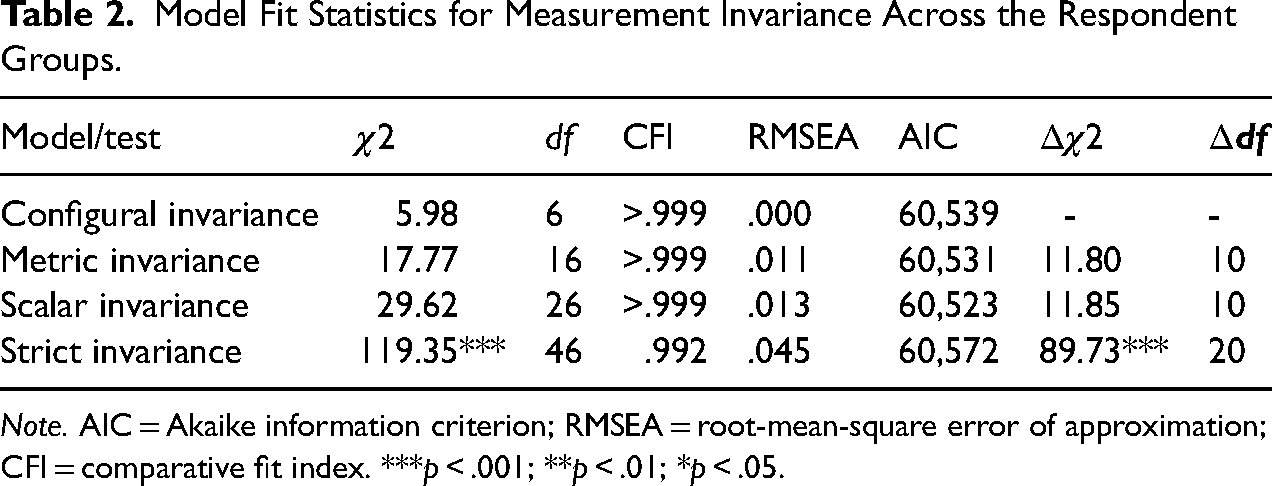

We first test configural invariance of the measurement model by fitting the two-factor structure of the measurement model on abortion attitudes in Wave 6 to the six different respondent groups while freely estimating the model's parameters (i.e., factor loadings, item intercepts, residual variances). The results provided in Table 2 show that—according to the rules of thumb for model fit (Kline 2023)—the two-factor model fits the data in each group (χ2(6) = 5.98, p = .43; root-mean-square error of approximation [RMSEA] = 0.000; comparative fit index [CFI]>0.999; Akaike information criterion [AIC] = 60,539).

Table 2. Model Fit Statistics for Measurement Invariance Across the Respondent Groups.

Note. AIC = Akaike information criterion; RMSEA = root-mean-square error of approximation; CFI = comparative fit index. ***p < .001; **p < .01; *p < .05.

Next, we test for metric invariance by constraining all factor loadings to be equal across the six respondent groups but allowing for variation in item intercepts and residual variances. The results show that the metric invariance model does not fit significantly worse than the configural invariance model (Δχ2(10) = 11.80, p = .30) and additionally has a smaller AIC value (60,531), indicating that the factor loadings do not differ between the six respondent groups.

Consequently, we test for scalar invariance by constraining all factor loadings and additionally the item intercepts to be equal across the respondent groups but freely estimating residual variances of the items across groups. Again, results show that the more restrictive model does not fit the data significantly worse than the less restrictive metric invariance model and has a smaller AIC value (Δχ2(10) = 11.85, p = .30; AIC = 60,523), indicating that both factor loadings and item intercepts are equal across the six respondent groups.

Lastly, we test for strict invariance and constrain all parameters (i.e., factor loadings, item intercepts, and residual variances) of the measurement model to be equal across the six respondent groups. The results indicate that equal residual variances across groups are not supported by the data, as the strict invariance model fits the data significantly worse than the less restrictive scalar invariance model (Δχ2(20) = 89.73, p < .001; AIC = 60,572). Thus, while we find no differences in the factor loadings and the item intercepts of the abortion measurement model, we find that residual variances of the abortion items differ significantly across the six respondent groups.
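The p-values of the reported chi-squared difference tests can be recomputed from the test statistics and degrees of freedom given in the text. The following is a minimal sketch, not the software used in the study: the helper `chi2_sf_even_df` is a hypothetical name, and it relies on the closed-form upper tail of the chi-squared distribution that exists for even degrees of freedom, which covers all three comparisons.

```python
import math


def chi2_sf_even_df(x: float, df: int) -> float:
    """Upper-tail probability of a chi-squared variable with even df."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form requires a positive, even df")
    half = x / 2.0
    term, total = 1.0, 1.0
    # Sum (x/2)^i / i! for i = 0, ..., df/2 - 1.
    for i in range(1, df // 2):
        term *= half / i
        total += term
    return math.exp(-half) * total


# (label, chi-squared difference, df difference, AIC of the less restrictive
#  model, AIC of the more restrictive model), all as reported in the text.
steps = [
    ("metric vs. configural", 11.80, 10, 60_539, 60_531),
    ("scalar vs. metric", 11.85, 10, 60_531, 60_523),
    ("strict vs. scalar", 89.73, 20, 60_523, 60_572),
]

results = {}
for label, d_chi2, d_df, aic_less, aic_more in steps:
    p = chi2_sf_even_df(d_chi2, d_df)
    results[label] = (p, aic_more - aic_less)
    print(f"{label}: p = {p:.3g}, AIC change = {aic_more - aic_less:+d}")
```

A nonsignificant difference combined with a lower AIC favors the more restrictive model, which holds for the metric and scalar steps but not for the strict step.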

H2: Attitude Crystallization

Between-Group Analyses

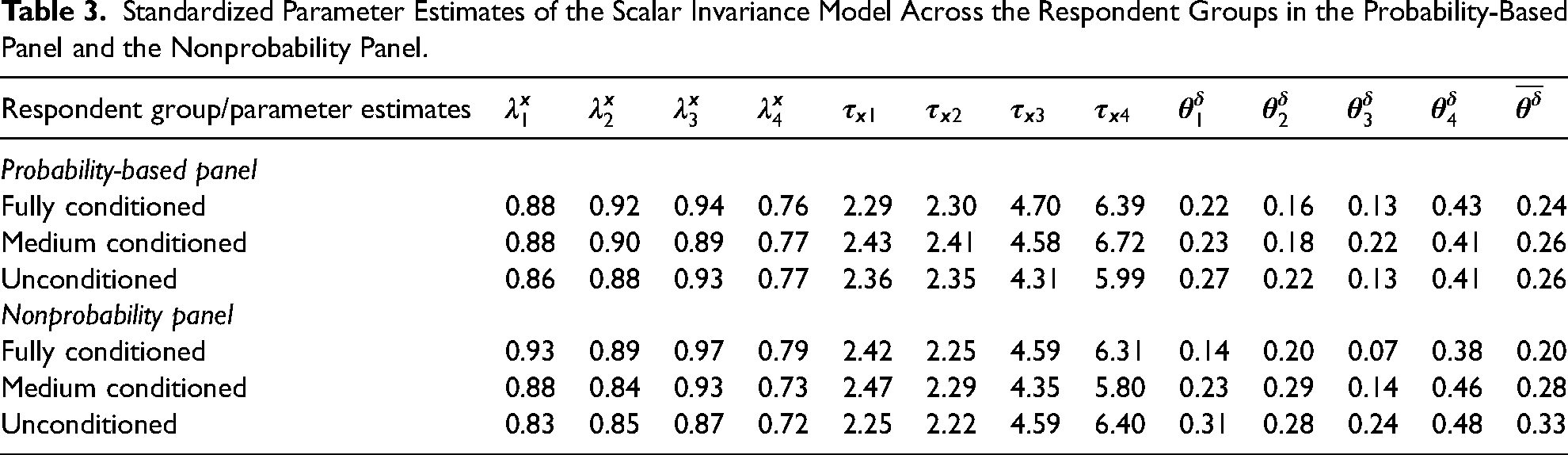

Reliability. To test H2 regarding the crystallization of attitudes due to repeated interviewing on the same issues, we first investigate changes in attitude reliability. For this, we inspect in more detail the residual variances of the four abortion items—which we have shown to be unequal across the six respondent groups. Similar to Jagodzinski, Kühnel, and Schmidt (1987), we infer a higher degree of attitude reliability from smaller residual item variances.
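The link between residual variance and reliability follows from the variance decomposition of a single indicator. The notation is assumed for illustration: item $x_j$ loads on one factor with loading $\lambda_j$, the factor has variance $\psi$, and $\theta_j$ is the residual variance.

```latex
\[
\operatorname{Var}(x_j) = \lambda_j^2 \psi + \theta_j,
\qquad
\text{reliability}(x_j) = \frac{\lambda_j^2 \psi}{\lambda_j^2 \psi + \theta_j} = 1 - \theta_j^{*},
\qquad
\theta_j^{*} = \frac{\theta_j}{\operatorname{Var}(x_j)}
\]
```

The standardized residual variance $\theta_j^{*}$ is therefore the share of item variance not explained by the latent attitude, so a smaller value implies a more reliable item.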

Table 3 shows the standardized estimates for the residual variances of each of the four abortion items (Columns

Table 3. Standardized Parameter Estimates of the Scalar Invariance Model Across the Respondent Groups in the Probability-Based Panel and the Nonprobability Panel.

We additionally estimate and compare reliability coefficients (i.e., McDonald's third coefficient omega; McDonald 2013) across the six groups. We find the highest reliability coefficients (.90 or higher for both factors in the nonprobability panel and for the elective factor in the probability-based panel) among fully conditioned respondents (see Appendix C, Table C2 in the online Supplemental Materials), providing further support for H2.
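How smaller residual variances translate into a higher composite reliability can be illustrated with a short sketch. It uses the basic model-implied form of omega for a sum score of items that load on one factor. The variant referred to in the text may define the denominator differently (for example, from the observed covariance matrix), which is not reproduced here. The function name and all loadings are hypothetical.

```python
def composite_omega(loadings, residual_variances, factor_variance=1.0):
    """Model-implied omega for a sum score of items loading on one factor."""
    if len(loadings) != len(residual_variances):
        raise ValueError("need one residual variance per loading")
    true_part = factor_variance * sum(loadings) ** 2
    error_part = sum(residual_variances)
    return true_part / (true_part + error_part)


# Hypothetical standardized loadings for a two-item factor; with standardized
# items, each residual variance equals 1 minus the squared loading.
noisier = composite_omega([0.85, 0.85], [1 - 0.85**2, 1 - 0.85**2])
cleaner = composite_omega([0.92, 0.92], [1 - 0.92**2, 1 - 0.92**2])
print(f"omega with larger residuals:  {noisier:.3f}")
print(f"omega with smaller residuals: {cleaner:.3f}")
```

The comparison only illustrates the direction of the relationship and does not reproduce any coefficient from Table C2.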

Within-Group Analyses

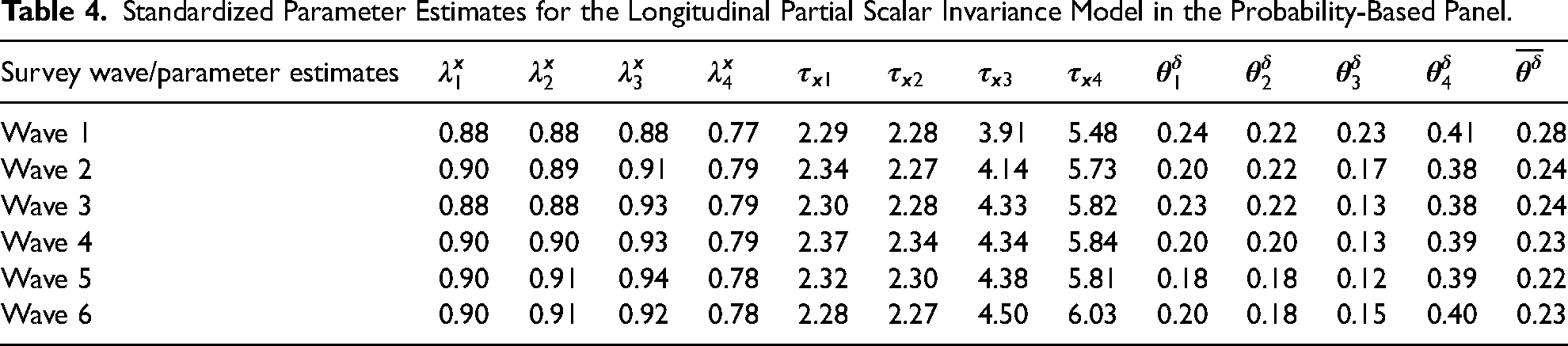

As a sensitivity analysis, we additionally investigate within-group changes in respondents’ attitudes toward abortion over time. We focus on the analysis of respondents in the fully conditioned experimental groups (who received the identical abortion question in all six waves) in both the probability-based and the nonprobability panels, and we first examine measurement invariance of the abortion items across the six waves. Our analysis of measurement invariance over time for respondents of the probability-based panel shows that only partial scalar invariance can be established (i.e., equal factor loadings and equal item intercepts except for the fourth abortion item in Wave 1), and that, similar to the between-group analyses, residual variances are not equal across the six waves. For fully conditioned respondents of the nonprobability panel, measurement invariance analyses show similarly that only scalar invariance is supported by the data and that the residual variances of the four abortion items are significantly different across the six waves (for detailed results, see Appendix E, Table E1 in the online Supplemental Materials for the probability-based panel and Table E4 for the nonprobability panel). However, due to inconsistencies observed in the parameter estimates for respondents in the nonprobability panel, we focus exclusively on fully conditioned respondents in the probability-based panel for our within-group analyses over time.

Reliability

The initial screening of the residual variances of the items over time (Table 4, Columns

Table 4. Standardized Parameter Estimates for the Longitudinal Partial Scalar Invariance Model in the Probability-Based Panel.

The longitudinal patterns of the residual variances of the items provide additional tentative support for H2, which expects an increase in attitude reliability due to repeated interviewing on the identical issue.

Additional analyses comparing McDonald's third coefficient omega across the six waves for respondents in the fully conditioned experimental group in the probability-based panel provide further tentative evidence of an increase in reliability, which supports H2 (for detailed results, see Table E3, Appendix E in the online Supplemental Materials).

Stability

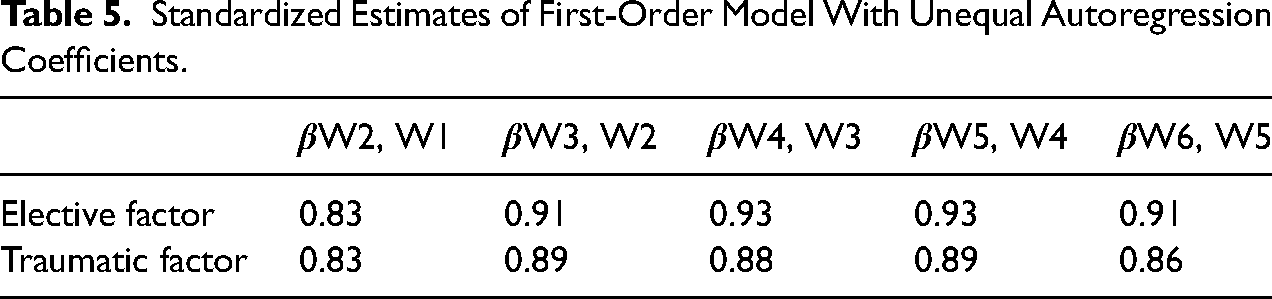

We further examine the crystallization of attitudes due to repeated interviewing (H2) by investigating changes in the stability of respondents’ underlying abortion attitudes over time. For this, we again use within-group comparison across the six survey waves and focus on respondents in the fully conditioned group in the probability-based panel. As the data support partial scalar invariance of our measurement model over time, we can investigate change patterns in respondents’ latent abortion attitudes over the course of the six waves. We estimate an autoregressive first-order model (simplex model) modeling respondents’ latent abortion attitudes at measurement point t as being influenced solely by their abortion attitudes at the previous measurement point

To investigate the pattern of the autoregression coefficients over time, we inspect the coefficients’ estimates provided in Table 5 (for the unstandardized estimates, see Table E9, Appendix E in the online Supplemental Materials). The results show that autoregression coefficients between Wave 1 and Wave 2 are lowest compared with subsequent waves, indicating that the stability of respondents’ latent abortion attitudes increases after the second interview. Moreover, autoregression coefficients show the most notable increase between Waves 2 and 3, while they level off in the subsequent waves. Consequently, observed changes over time are not restricted to changes in the measurement component of abortion attitudes but are also reflected in respondents’ latent abortion attitudes, which become more stable over time, with most of the change happening from the second to the third panel wave. It should be noted that the results on changes in autoregression coefficients over time should be interpreted cautiously, as the simplex model fits the data worse than the previously established partial scalar invariance model, possibly due to model complexity or complex structural patterns that cannot be fully captured by the simplex model alone. Nevertheless, the increase in stability over time provides further tentative evidence in support of H2, which assumes that repeatedly answering identical attitude questions leads to the crystallization of attitudes.

Table 5. Standardized Estimates of First-Order Model With Unequal Autoregression Coefficients.

H3: Increase in Attitude Certainty and Knowledgeability as a Result of Reflection Processes

To test H3, we investigate the role of respondents’ attitude certainty and knowledgeability. Using data from Wave 6, we first fit a structural model with attitude certainty and knowledgeability as external covariates that influence both latent factors across the six respondent groups. The model fits the data sufficiently well (χ2(30) = 33.50, p = .30, RMSEA = 0.012, CFI ≤ 0.999, AIC = 95,522). We then compare this model with a structural equation model that includes equality constraints on the means of attitude certainty and knowledgeability across groups. We find that the model with equality constraints on the means of the covariates does not fit the data significantly worse than the less restrictive model (Δχ2(10) = 15.08, p = .13; AIC = 95,517; for detailed model fit statistics, see Table F1, Appendix F in the online Supplemental Materials). This indicates that respondents do not have stronger attitudes or show higher levels of knowledgeability when they are repeatedly asked identical questions on abortion compared with those respondents who are not repeatedly exposed to these questions.

After testing the means of the covariates across the respondent groups, we also estimate a model with equality constraints on the regression coefficients linking attitude certainty and knowledgeability to the latent abortion attitudes (i.e., equal regression slopes). We find that the model with equal regression slopes does not fit the data significantly worse than the model with unequal regression slopes (Δχ2(20) = 30.89, p = .06; AIC = 95,508; see Table F1, Appendix F in the online Supplemental Materials for detailed results), suggesting that the impact of attitude certainty and knowledgeability on latent abortion attitudes is also the same across the respondent groups. Altogether, these results do not provide support for H3.

H4: Survey Experience and Increased Attitude Reliability (“Better Respondents” Model)

Probability-Based Versus Nonprobability Panel Respondents

To test our fourth hypothesis regarding the impact of survey experience (i.e., experience with the response task in general) on the reliability of reported attitudes on abortion, we first compare residual variances as an indicator of attitude reliability between fully conditioned respondents of the probability-based panel and respondents in the fully conditioned experimental group in the nonprobability panel. To do so, we first constrain the residual variances of the abortion items to be equal between respondents of the probability-based and the nonprobability panels. The results show that the model with equal residual variances among respondents of the probability-based and nonprobability panels fits the data significantly worse than the scalar invariance model with unequal residual variances (Δχ2(12) = 45.27, p < .001; AIC = 60,544; for detailed results, see Table G1, Appendix G in the online Supplemental Materials), indicating that respondents from the probability-based and the nonprobability panels differ significantly in the residual variances of the abortion items.

When comparing the standardized residual variances between the two panels (see parameter estimates of the scalar invariance model in Table 3), the residual variances of the fully conditioned respondents are larger in the probability-based panel compared with the nonprobability panel, which is in line with H4. With respect to the reliability coefficients (see Table C2, Appendix C in the online Supplemental Materials), we observe a similar pattern, with respondents in the fully conditioned group in the nonprobability panel showing (slightly) higher reliability coefficients compared with their counterparts in the probability-based panel. Because the magnitude of the observed differences is small, our first analysis on the impact of survey experience provides only tentative support for H4, which states that higher levels of survey experience lead to increased attitude reliability.

Comparison of Panel Cohorts Within the Probability-Based Panel

To test H4 further, we additionally differentiate the respondent groups of the probability-based panel by panel cohort. For every conditioning level, we test differences in our measurement model between respondents of the three cohorts, who have different tenure within the panel. Results of the MG-CFA across the panel cohorts show no significant differences in model parameters (for detailed results, see Tables H1–H3, Appendix H in the online Supplemental Materials). Consequently, factor loadings, item intercepts, and the residual variances of the abortion items are equal across cohorts, indicating that experience within the probability-based panel does not change respondents’ reported attitudes on abortion. Overall, the results of this comparison suggest that respondents’ attitudes on abortion are not affected by their level of survey experience. Hence, H4 is not supported.

Discussion

Previous research suggests that repeated interviewing on the same issues stimulates reflection processes within respondents, which can lead to the crystallization of attitudes. This results in more reliable and stable attitudes (CS hypothesis; see Sturgis, Allum, and Brunton-Smith 2009). In the present study, we tested the CS hypothesis against the “better respondents” model (Yan and Eckman 2012), which attributes change in answers to attitudinal questions over time to respondents’ increased experience and skill with the general response task.

Using experimental data from six consecutive waves of a nonprobability and a probability-based panel study, we investigated changes in respondents’ attitudes toward abortion and the mechanisms underlying these changes. In the experiment, we manipulated the frequency of receiving identical attitude questions (once vs. three times vs. six times). We estimated structural equation models to differentiate between (a) changes in respondents’ reported attitudes toward abortion and (b) changes in their latent attitudes toward abortion.

Overall, our study provides evidence for panel conditioning effects. Respondents who received the identical abortion questions in each wave of the study gave fewer “don’t know” responses to most items of the abortion battery compared with respondents who received the target attitudinal questions less often (H1). While the test of H1 is based on the comparison of respondents who vary in the intensity of answering sensitive issues and observed differences suggest a process of opinionation on the topic (Sturgis, Allum, and Brunton-Smith 2009), fewer “don’t know” responses could also be attributed to improved response behavior due to an increased understanding of the relevance of one's answers (Waterton and Lievesley 1989). The results further show that attitude reliability differs significantly across the respondent groups and is highest for those respondents who received the identical abortion questions in each survey wave (i.e., fully conditioned respondents) (H2). As in the case of H1, these differences between respondents who vary in their engagement with sensitive issues indicate attitude crystallization, triggered by the repeated exposure to these issues and increased reflection processes. However, our test of H2 cannot fully disentangle the effects of general survey experience from those of issue-specific reflection, and increases in attitude reliability could also be due to better response behavior leading to more accurate self-reports. Within-group comparisons of fully conditioned respondents provide further evidence in line with the findings of the between-group comparisons: Respondents’ attitude reports toward abortion become more reliable over time. The results of the autoregressive model, which examines change in latent traits, further indicate that respondents’ latent abortion attitudes become more stable in subsequent waves of the study following the second interview. While these findings should be interpreted with caution due to the models’ fit statistics, they suggest that increases in attitude reliability may, at least in part, reflect a change in respondents’ underlying abortion attitudes rather than result from better response behavior. Both the within-group comparison and the autoregressive model results suggest that most of the change across waves occurs early on, particularly over the first three waves of the study, implying that the effect of repeated interviewing is strongest at the beginning of a study and levels off in later waves. Overall, our findings on increased attitude reliability and stability are consistent with those of previous studies documenting more reliable and stable attitudes among more experienced panelists (Bergmann and Barth 2018; Kroh, Winter, and Schupp 2016; Sturgis, Allum, and Brunton-Smith 2009; Sun, Tourangeau, and Presser 2019).

We did not find evidence of increased attitude certainty and knowledgeability on abortion after respondents answered the identical abortion question multiple times (H3). This finding contradicts the assumptions of the CS hypothesis (Sturgis, Allum, and Brunton-Smith 2009), which postulates that repeated exposure to the same attitude questions triggers reflection processes and further information searches, which in turn lead to increased attitude strength and knowledgeability. However, we used self-reported measures of attitude strength and knowledgeability, which might have impacted this result.

We further tested the CS hypothesis against the “better respondent” model by comparing (a) respondents from the probability-based panel with the respondents of the nonprobability panel and (b) different panel cohorts of the probability-based panel to capture the effect of different levels of general survey experience on answers to attitudinal questions. Whereas we do not find any evidence for differences in reported attitudes toward abortion across the three cohorts of the probability-based panel, we find that fully conditioned respondents in the nonprobability panel show smaller residual variances and slightly higher reliability coefficients compared with their counterparts in the probability-based panel. Therefore, our analyses to test H4 provide only tentative evidence of the relevance of general survey experience for changes in attitude reliability across waves.

Limitations and Opportunities for Future Research

Our study has several limitations that offer opportunities for future research. First, our analyses show statistically significant but rather small and inconsistent effects overall, which reflect only small changes in attitudinal responses or underlying attitudes toward abortion in panel studies that might not hold for every abortion item.

Second, both operationalizations of general survey experience used to test the “better respondent” model have weaknesses. For the comparison between respondents of the probability-based and nonprobability panels, higher levels of survey experience among nonprobability panelists based on the number of surveys completed per year may not be indicative of being a “better respondent” but rather of being a respondent who is driven by financial motives rather than intrinsic interest in the survey (Cornesse and Blom 2023; Silber, Stadtmüller, and Cernat 2023). For the comparison between cohorts in the probability-based panel, our analysis allows only a comparison where the “youngest” (i.e., least experienced) cohort in the probability-based panel already had two years of panel experience with a maximum of 16 completed survey waves at the beginning of our study. However, the most significant effects of experience with the overall response task on response behavior might occur among respondents with very little or no previous survey experience, which we fail to capture in our analyses.

Third, to test the CS hypothesis, we used subjective measures of attitude certainty and knowledgeability on the issue of abortion, which possibly affected our conclusions regarding the relevance of reflection processes for attitude change in panel studies. To deepen the understanding of the mechanisms underlying attitude change, future research could use objective measures (e.g., of response latency or actual knowledge) and test behavioral measures such as discussions with family and peers and attention to media reports on surveyed topics.

In addition, to shed further light on which mechanism (reflection processes stimulated by the repeated exposure to the same issues vs. improved response behavior due to increased survey experience) is most likely responsible for observed changes in respondents’ answers to attitude questions over time, future studies could experimentally vary the difficulty of the topic and the response tasks while still manipulating the frequency of receiving identical question content.

More generally, future research could contribute most by developing a unified theoretical framework of panel conditioning that differentiates the underlying mechanisms and their implications for panel data quality, including potential mediating and moderating factors, in order to advance our understanding of the nature of conditioning effects, and to isolate the effect of general survey experience from the specific experience of repeatedly answering a particular survey question.

Fourth, our study focuses on the analysis of attitudes toward abortion, a sensitive issue that might be susceptible to socially desirable responding, which likely limits the generalizability of our findings to nonsensitive issues. Similarly, the analyzed abortion items feature an agree–disagree scale, which may be affected by acquiescence bias and its change across waves. Future research should therefore investigate the effect of repeated exposure to attitudinal questions on less sensitive topics, examine whether some topics are more susceptible to conditioning effects than others, and investigate conditioning effects on different attitudinal questions with different response scales.

Finally, previous research suggests that panel conditioning effects are most likely to occur if the interval between panel waves is comparatively short (i.e., about a month or less; Halpern-Manners and Warren 2012). Our study uses data from six panel waves that were administered every two to three months. Future studies should investigate whether our findings can be generalized to panel studies with longer and/or shorter intervals between waves.

Practical Recommendations

To assess whether and to what extent answers to attitudinal questions are subject to panel conditioning effects, panel practitioners should closely examine changes in attitude reports across a range of attitudinal questions, as different mechanisms may have an impact on respondents’ answers to attitude questions (e.g., social desirability bias in the case of sensitive topics, satisficing response strategies in complex attitudinal questions).

In addition, to better understand whether existing conditioning effects reflect improved response behavior and an improved engagement with measurement instruments or if respondents’ underlying attitudes were affected by repeated interviewing, panel infrastructures could draw on several available tools.

One option, particularly for panel practitioners of online panel studies, is to analyze paradata, such as response time, mouse movements, or tab switching behavior (Macke, Daviss, and Williams-Baron 2024). These indicators can provide insights into the degree of respondents’ engagement with the question, such as whether respondents can easily map their underlying attitudes to the response scale, or whether they take more time to process a question. Paradata also allow researchers to investigate the extent to which respondents revise their initial response choices when repeatedly answering the same question over time.

The growing availability of digital trace data further expands the toolkit for investigating conditioning effects in attitudinal answers (Silber et al. 2024a). For instance, panel infrastructures could use digital trace data to investigate whether respondents seek out further information on recurring topics (e.g., through specific website visits) after repeated interviewing, which could indicate increased topic awareness and interest prompted by repeated survey participation.

Finally, as commercial online panels become more widespread, both panel and cross-sectional studies could routinely ask respondents whether they are an active member of other online panels to account for sources of conditioning that originate outside of the study (Hillygus, Jackson, and Young 2014). Even if respondent conditioning lies outside the control of the study in question, survey responses may nevertheless be affected by survey professionalism.

Conclusion

Overall, our study provides valuable insights into the validity of attitudinal responses in longitudinal studies. In the context of abortion attitudes, which are both relatively stable and sensitive, it suggests that panel conditioning is not a major concern for the response quality of attitude answers. Rather, our study shows that repeated measurement can, in fact, improve the accuracy of attitudinal responses to issues such as abortion in panel studies, which allows researchers a valid analysis of change and stability over time. By using an experimental study design and distinguishing between changes in the measurement of respondents’ attitudes and their latent traits, we show not only that repeated interviewing can decrease measurement error but also that latent attitudes become more stable. The increase in attitude stability does not affect analyses of societal change, but it should be considered for specific research questions, for example, on attitude strength over time. While our study finds only small effects of repeated interviewing on attitude reliability and stability, we emphasize that the potential impact of panel conditioning on the validity of results should be assessed with respect to researchers’ specific research goals and outcomes of interest. Our findings might be particularly relevant for survey practitioners or panel infrastructures that use rotating panel designs to mitigate the effects of panel conditioning or for attitude surveys more generally, as they suggest that, in the case of abortion attitudes, identical attitudinal questions can be repeatedly administered without adverse effects on measurement error in later waves.

Supplemental Material

Supplemental material, sj-docx-1-smr-10.1177_00491241251372503 for Monitoring Attitudes Over Time: Real Change or the Result of Repeated Interviewing? by Fabienne Kraemer, Peter Lugtig, Bella Struminskaya, Henning Silber, Bernd Weiß and Michael Bosnjak in Sociological Methods & Research

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the German Research Foundation (DFG) [grant number: 418316085].

Data and Code Availability Statement

This paper uses data from a research project called “Mechanisms of Panel Conditioning in Longitudinal Studies: Reflection, Satisficing, and Social Desirability (PaCo)” (project number: 418316085), which was funded by the German Research Foundation (DFG). The project comprised two panel studies: a nonprobability study fielded within an online access panel and a probability-based panel study fielded within the GESIS Panel.

The data of the nonprobability panel study are published at PsychArchives under a scientific use license and can be accessed for scientific/academic purposes:

Silber, Henning, Bella Struminskaya, Michael Bosnjak, Bernd Weiß, Fabienne Kraemer, and Joanna Koßmann. 2024. “PaCo—Non-Probability Panel Study [Data set].” PsychArchives.

