Abstract

The robustness of qualitative comparative analysis (QCA) results features high on the agenda of methodologists and practitioners. This article aims at advancing this debate on several fronts. First, in line with the extant literature, we take a comprehensive view on robustness arguing that decisions on calibration, consistency, and frequency thresholds should all be tested. Second, we introduce the notion of “sensitivity range” as the range of values for any of these parameters within which the solution formula remains unchanged. Third, we argue that interpreting robustness is more intricate than simply checking if solutions remain unchanged. Beyond sensitivity ranges, researchers should assess robustness by evaluating changes in parameters of fit and the classification of cases as robust, shaky, or possible. Fourth, we enable researchers to perform more than one robustness test at a time by proposing the notions of a “test set”: the overlap between conceptually plausible alternative solutions that can be generated; and of a “robust core”: that part of a QCA solution that withstands the robustness checks. Fifth, we present functionalities implemented in the R package SetMethods that enable researchers to put in practice our proposals. These advancements are integrated into a comprehensive QCA Robustness Test Protocol consisting of three main tests: sensitivity ranges, fit-oriented robustness, and case-oriented robustness. We illustrate the protocol’s implementation with an example on high life expectancy across the globe.

Robustness features high on the agenda in many empirical data analysis techniques. By and large, the higher the number of cases included in the analysis and the less intimate case knowledge exists, the more discretionary analytic choices researchers have to make, and thus, the more important becomes the need for applying systematic robustness tests against such choices. This also holds for qualitative comparative analysis (QCA), especially when applied to more than a handful of cases. With a larger number of cases, algorithmic routines with multiple, often discretionary, analytic decisions to be made gain prominence over intimate case knowledge. There already exists a literature on robustness in QCA. Some primarily focus on the question of how robust the method of QCA is. For different conclusions on this question, see Arel-Bundock (2019); Baumgartner and Thiem (2017); Braumoeller (2015); Hug (2013); Krogslund, Choi, and Poertner (2015); Rohlfing (2015, 2018); and Thiem, Spöhel, and Duşa (2016). Others are more interested in how robust specific results in applied QCA are, for instance, Cooper and Glaesser (2015); Emmenegger, Schraff, and Walter (2014); Schneider and Wagemann (2012); Skaaning (2011); and Rutten (2020). In tests of the method of QCA, simulated data are often used and are arguably superior to nonsimulated data from applied research (Rohlfing 2016; but see Lucas and Szatrowski 2014), simply because with simulations we can make sure the true data generating process is known and deviations from this “truth” become measurable. In tests of results generated in applied QCA, in contrast, such truth is not known. The QCA Robustness Test Protocol that we present in this article is primarily for applied QCA researchers, but elements of it can, and we think should, inform tests of QCA as a method as well. In other words, our protocol aims at probing how robust a specific QCA solution, derived from empirical data, is. 
This specific QCA result might or might not reflect the true data generating process. Our goal, thus, does not consist in testing whether QCA as a method is, in principle, capable of extracting the true data generating process. Instead, the goal is to probe how robust given QCA results are in applied research settings.

Across both strands, testing QCA as a method and testing QCA results, a consensus seems to have emerged on the analytic decisions against which QCA should be robust. These are changes in raw consistency, frequency cutoff, calibrations, and changes to the set of cases or conditions under analysis. Authors in both groups also seem to agree by now on what can be considered a robust QCA result. Only a minority takes the demanding position that the Boolean expressions must remain identical (e.g., Krogslund et al. 2015). A more lenient and differentiated approach stipulates that the results at least remain in a set relation (e.g., Arel-Bundock 2019; Baumgartner and Thiem 2017; Schneider and Wagemann 2012; Thiem et al. 2016). What the applied robustness literature has in common, so far, is that each of the proposed robustness tests is performed “by hand,” that is, tailored to specific examples and executed in an often time-consuming (and convoluted) series of separate calculations and interpretations of the test results (e.g., Rutten 2020). The few software solutions that exist, such as the retention function in R package QCA (Duşa 2018) or packages QCAfalsePositive (Braumoeller 2015) and braQCA (Gibson and Burrell 2018), are either meant to test QCA as a method or confined to narrower notions of QCA solution robustness tests than what we conceptually propose and computationally implement in this article.

This article aims at pushing the debate ahead on several fronts. First, in line with the extant literature, we take a comprehensive view on robustness challenges and argue that decisions on calibration, raw consistency threshold, and frequency threshold should all be tested. Second, focusing on the Boolean expression of the solution, we introduce the notion of “sensitivity range.” This is the range of values for the location of any one of the qualitative calibration anchors, the raw consistency threshold, or the frequency threshold within which the Boolean formula for the solution remains unchanged, if only this single parameter is changed and everything else in the analysis is left unaltered. Third, we argue that the interpretation of robustness tests is more intricate than simply checking whether Boolean expressions of solutions remain unchanged. In addition to sensitivity tests, researchers should combine both what we call a fit-oriented and a case-oriented perspective on robustness. Along these lines, we, fourth, propose the notions of a test set (TS) and a robust core (RC). The TS comprises all conceptually plausible alternative solutions and we propose to aggregate it in two ways: using their intersection (minimal test set [minTS]) and union (maximal test set [maxTS]). The RC is that part of a QCA solution that overlaps with the TS, that is, that withstands all of the robustness checks summarized in the TS. We show that the concepts of minTS/maxTS and RC enable researchers to perform more than one robustness test at a time. Contrasting the minTS/maxTS and the RC with the initial solution (IS) reveals which elements of the latter stand on shaky grounds and which cases are deemed deviant or typical for nonrobust reasons. 
Fifth, we present a set of functions implemented in the R package SetMethods (Oana and Schneider 2018) that enable researchers to put in practice our proposals for robustness tests, thus making cumbersome and time-consuming robustness checks “by hand” an inefficient and error-prone process of the past. We illustrate the implementation of our QCA Robustness Test Protocol with a study on high life expectancy in countries across the globe (Paykani, Rafiey, and Sajjadi 2018).

Sensitivity Ranges

We label as

The concept of sensitivity range refers to the range of changes vis-à-vis calibration, raw consistency threshold, or frequency cut within which the IS stays the same. Sensitivity ranges allow us to empirically assess the limits within which the Boolean expression for the solution remains unchanged. This robustness test gives us lower and upper bounds for the location of any of the calibration anchors, for any condition or the outcome, the frequency threshold, or the raw consistency threshold. The size of these ranges indicates how far the IS depends on particular choices of calibration, raw consistency thresholds, or frequency cuts.

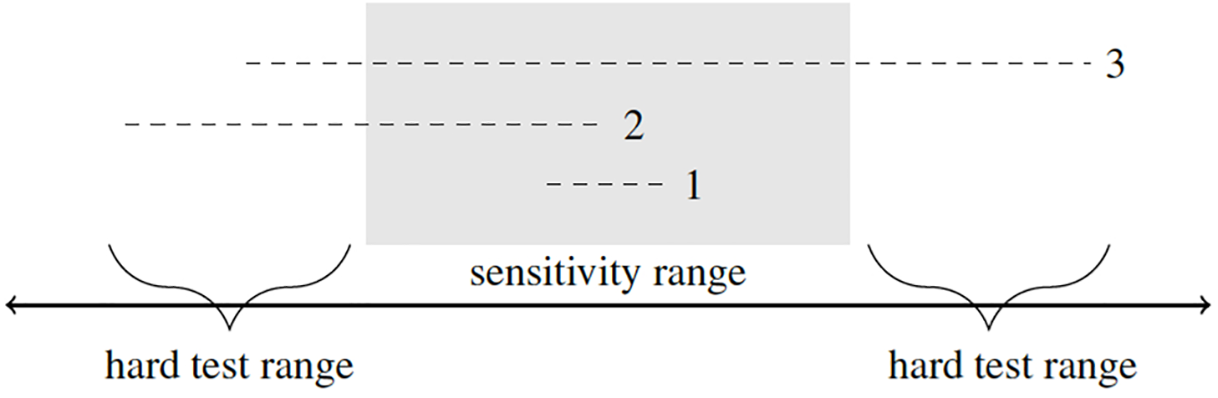

For obtaining sensitivity ranges, we perform each possible change in turn (change of qualitative anchors, change in raw consistency, and change in frequency threshold) by gradually increasing and then gradually decreasing values until the Boolean formula for the solution changes. This is done for each change in isolation, holding all the other parameters at their original value. Through this iterative procedure, we obtain the upper and lower limits for a particular change within which the IS stays the same. Therefore, in contrast to other QCA Robustness Test Protocol elements introduced below, for sensitivity ranges we do not start by modifying multiple parameters simultaneously. Instead, we focus only on the IS to find its robust boundaries by performing one change at a time. The sensitivity range is derived entirely from empirical findings. Considerations as to what a conceptually plausible range of analytic decisions looks like only come into play during other elements of our robustness protocol (see below). This implies that empirically derived sensitivity ranges could be either too narrow (there are conceptually plausible thresholds outside the range; scenarios 2 and 3 in Figure 1) or too broad (the QCA solution is robust even against conceptually implausible thresholds; scenario 1 in Figure 1).
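The iterative procedure described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the SetMethods implementation: `sensitivity_range`, `toy_solve`, and the row consistency scores in `ROWS` are hypothetical names and values, and the "solution" is simply the list of truth table rows passing a raw consistency threshold, without any logical minimization.

```python
# Hypothetical truth table rows with made-up raw consistency scores.
ROWS = {"A*B": 0.93, "A*~C": 0.88, "~B*C": 0.84}


def toy_solve(incl_cut):
    """Stand-in for a full QCA run: keep rows at or above the threshold."""
    kept = [term for term, cons in ROWS.items() if cons >= incl_cut]
    return " + ".join(kept)


def sensitivity_range(solve, initial, step, max_runs=20):
    """Vary ONE parameter away from `initial` in both directions and return
    the lowest and highest tested values that still give the initial solution.
    If the solution never changes, the last tested value is returned."""
    baseline = solve(initial)

    def walk(direction):
        last_ok = initial
        for k in range(1, max_runs + 1):
            # Round to avoid floating point drift when stepping.
            candidate = round(initial + direction * k * step, 10)
            if solve(candidate) != baseline:
                break
            last_ok = candidate
        return last_ok

    return walk(-1), walk(+1)


lower, upper = sensitivity_range(toy_solve, initial=0.86, step=0.01)
print(lower, upper)
```

The search only ever changes the one parameter passed to `solve`; everything else stays fixed inside the solver, which mirrors the "one change at a time" logic of sensitivity ranges.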

Since changes can be made for the raw consistency threshold, the frequency cut, and for each qualitative anchor (the crossover point for crisp sets or the 0, 0.5, and 1 anchors for fuzzy sets) of each condition and the outcome, the formula for obtaining the full spectrum of sensitivity ranges within which we have perfect robustness in terms of the Boolean expression of the IS is:

Calculating sensitivity ranges is an improvement in applied QCA, yet it suffers from two shortcomings. First, each sensitivity range is calculated in isolation. Each change (e.g., for a single calibration anchor in a single condition) is performed in turn, while keeping all the other parameters fixed at their values in the IS (e.g., keeping the rest of the calibration anchors fixed, keeping the same raw consistency threshold, keeping the same frequency threshold). What we obtain from this procedure is an answer to the following question: keeping all other analytic decisions fixed, what is the range of values for the analytic decision of interest within which the IS is insensitive? However, the challenge in gauging robustness in QCA consists in the fact that it can be threatened by multiple sources and that the combination of changes might matter for robustness. There are many analytic decisions a researcher has to make, most of which could be taken in a slightly different yet equally plausible manner. The qualitative anchors for one or more conditions or the outcome could be placed slightly differently, the raw consistency threshold could be placed slightly higher or lower, or the frequency cutoff for truth table rows could be chosen to be slightly higher or lower. Both in principle and in practice, it can happen that the combination of two such analytic changes, each of which is within its sensitivity range, triggers a change to the IS.
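A toy example may help to see why two changes that are each harmless in isolation can alter the solution when combined. The rows, thresholds, and the function `included_terms` below are hypothetical; the sketch assumes that a truth table row enters the analysis only if it passes both the raw consistency threshold and the frequency cutoff, and it skips logical minimization.

```python
# Hypothetical truth table rows: raw consistency and number of cases.
ROWS = [
    {"term": "A*B", "cons": 0.95, "n": 6},
    {"term": "A*~C", "cons": 0.90, "n": 4},
    {"term": "~B*C", "cons": 0.82, "n": 1},
]


def included_terms(incl_cut, n_cut):
    """Rows that pass BOTH the consistency and the frequency threshold."""
    return tuple(
        row["term"]
        for row in ROWS
        if row["cons"] >= incl_cut and row["n"] >= n_cut
    )


initial = included_terms(incl_cut=0.85, n_cut=2)

# Each change on its own leaves the included rows untouched ...
only_incl = included_terms(incl_cut=0.80, n_cut=2)
only_ncut = included_terms(incl_cut=0.85, n_cut=1)

# ... but both changes together let the third row in.
both = included_terms(incl_cut=0.80, n_cut=1)

print(initial == only_incl == only_ncut)  # single changes: no effect
print(both)                               # joint change: different rows
```

The row `~B*C` fails both thresholds in the initial setup, so relaxing only one of them is not enough to admit it. This is the kind of interaction that sensitivity ranges, computed one parameter at a time, cannot detect.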

Second, conceptually plausible analytic changes might be outside of the empirically derived sensitivity ranges. Imagine the empirically derived sensitivity range for calibrating the set of “tall person” runs from 195 to 210 cm. Yet, conceptually, it is plausible to argue that persons of 190 cm height (a value outside the sensitivity range) could be considered tall as well. This corresponds to scenario 2 in Figure 1. We argue that strong conceptual arguments override empirically derived sensitivity ranges: One should always perform robustness tests against conceptually plausible ranges. Conceptually plausible tests outside of the empirical sensitivity range constitute hard tests (see Figure 1), and hard tests are to be preferred.

Figure 1. Empirical and conceptual sensitivity ranges. Note: – – – = conceptual ranges; 1 = fully inside empirical sensitivity; 2 = partially; 3 = fully outside.
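The three scenarios in Figure 1 can be expressed as a simple comparison of two intervals. The function name `classify_scenario` is hypothetical, and the conceptual range of 190 to 205 cm is an assumption made for illustration; the text only states that 190 cm is conceptually plausible and that the empirical range runs from 195 to 210 cm.

```python
def classify_scenario(conceptual, empirical):
    """Return 1, 2, or 3 following the note to Figure 1:
    1 = conceptual range fully inside the empirical sensitivity range,
    2 = partial overlap, 3 = fully outside."""
    c_lo, c_hi = conceptual
    e_lo, e_hi = empirical
    if e_lo <= c_lo and c_hi <= e_hi:
        return 1
    if c_hi < e_lo or c_lo > e_hi:
        return 3
    return 2


empirical_range = (195, 210)   # from the "tall person" example in the text
conceptual_range = (190, 205)  # assumed for illustration only

print(classify_scenario(conceptual_range, empirical_range))
```

In scenarios 2 and 3, at least part of the conceptually plausible range lies outside the empirical sensitivity range, which is where the hard tests are located.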

Fit Orientation and Case Orientation on Robustness

The sheer number of potential robustness threats in isolation already poses practical problems. They are partially tackled by the notion of sensitivity ranges just introduced and empirically illustrated below. The problem of manifold potential robustness threats is further exacerbated by the fact that alternative analytic decisions could also be taken in combination rather than in isolation. The number of different combinations of plausible robustness checks lets currently available robustness test procedures and their interpretation quickly spiral out of control. What is needed is an approach to robustness that can cope with multiple robustness checks at the same time and that summarizes the findings in a concise yet detailed manner. The next two steps in the robustness protocol are designed to handle such a robustness test strategy.

The IS, the minTS/maxTS, and the RC

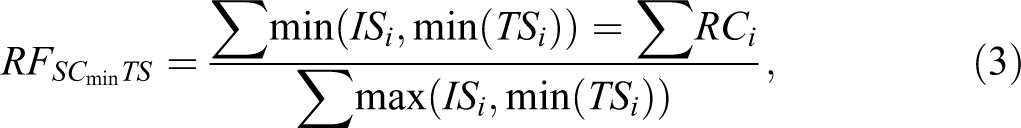

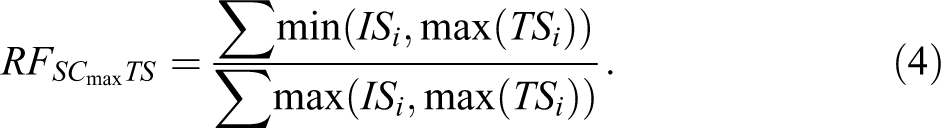

We propose the following solution to this conundrum. Rather than contrasting the

Apart from the

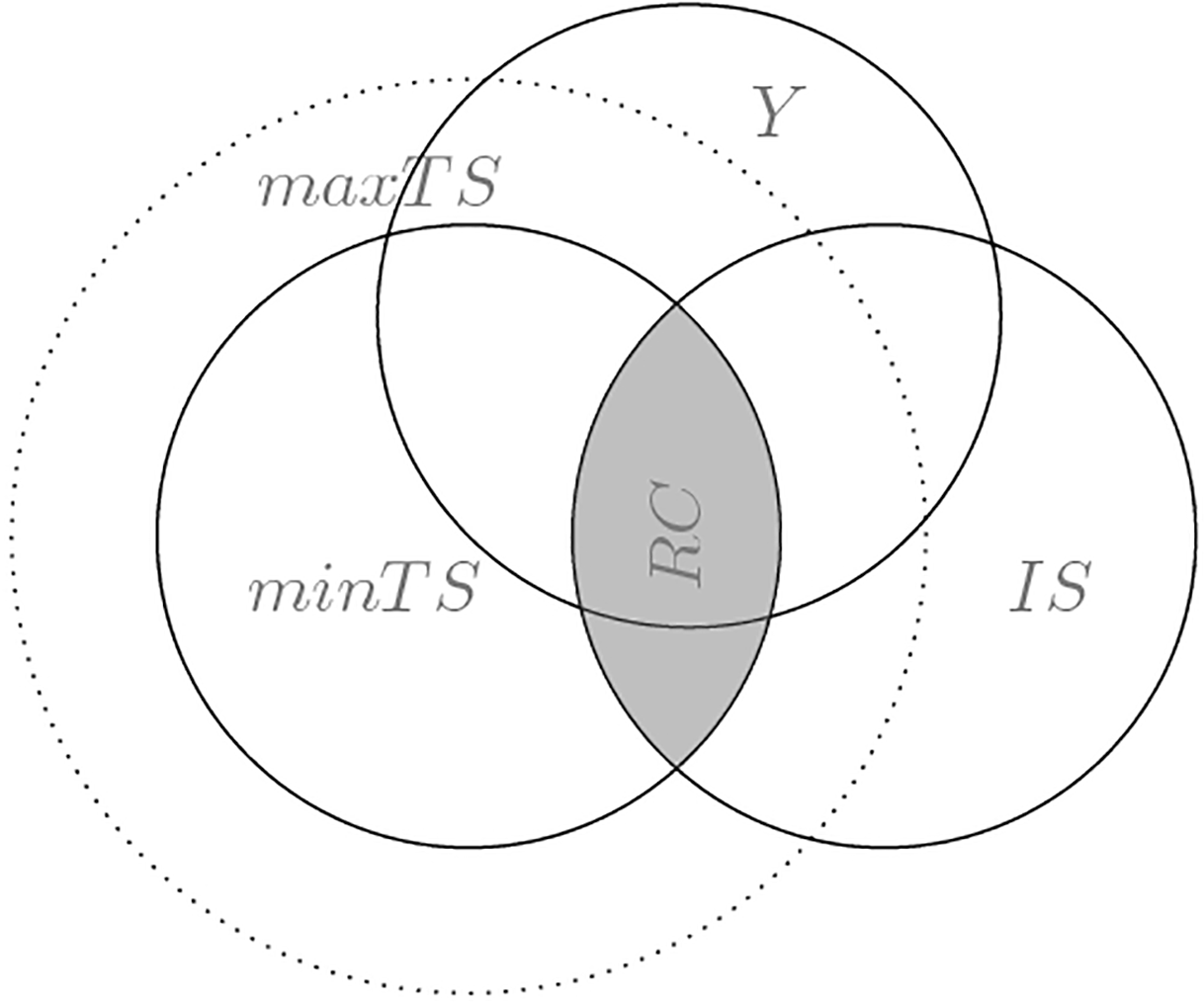

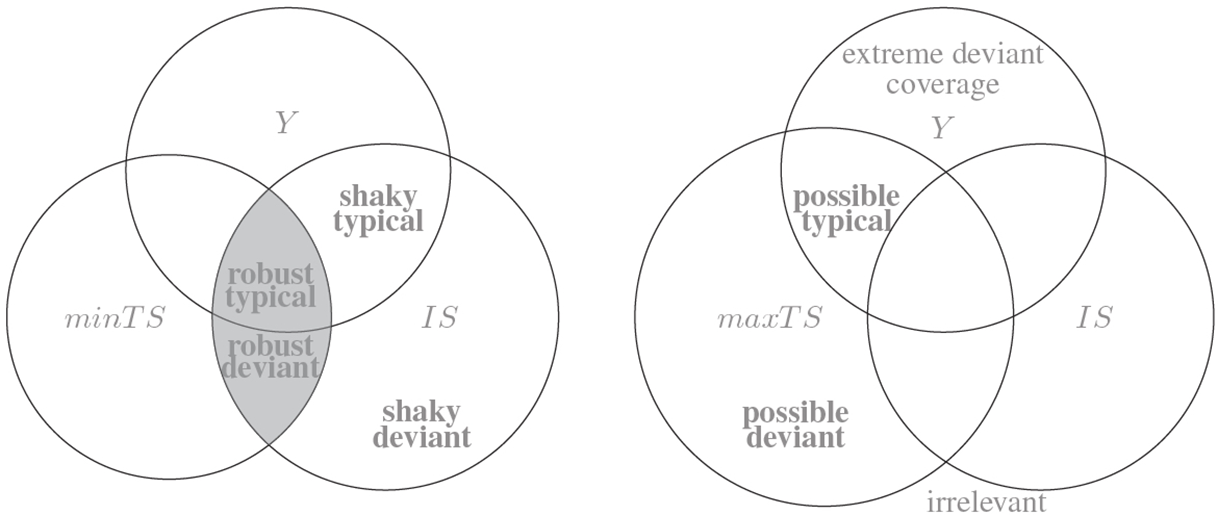

Figure 2 visualizes our idea for robustness in QCA. It depicts the

Figure 2. Initial solution, minimal and maximal test set, and the robust core. Note: minTS = minimal test set; maxTS = maximal test set; RC = robust core; IS = initial solution; Y = outcome.

Finally, Figure 2 also displays the outcome set. This is important both for our fit-oriented and our case-oriented robustness perspectives that we introduce in the next sections.
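To make the relation between the IS, the minTS/maxTS, and the RC concrete, the following sketch works with fuzzy-set membership scores per case. It assumes the standard fuzzy operators (minimum for intersection, maximum for union) and reads the RC as the intersection of the IS with the minTS, in line with the definition of the RC as the part of the solution that withstands all robustness checks. All membership values and function names are hypothetical.

```python
def fuzzy_and(*sets):
    """Fuzzy intersection: case-wise minimum across sets."""
    return [min(values) for values in zip(*sets)]


def fuzzy_or(*sets):
    """Fuzzy union: case-wise maximum across sets."""
    return [max(values) for values in zip(*sets)]


# Hypothetical membership of four cases in the initial solution (IS)
# and in three alternative test solutions.
initial_solution = [0.9, 0.7, 0.2, 0.6]
test_solutions = [
    [0.8, 0.6, 0.3, 0.1],
    [0.9, 0.8, 0.1, 0.7],
    [0.7, 0.9, 0.4, 0.6],
]

min_ts = fuzzy_and(*test_solutions)  # intersection of all test solutions
max_ts = fuzzy_or(*test_solutions)   # union of all test solutions

# Assumed reading of the robust core: IS intersected with the minTS.
robust_core = fuzzy_and(initial_solution, min_ts)

print(min_ts, max_ts, robust_core)
```

Because the intersection and union aggregate any number of test solutions into two sets, adding a further alternative solution only means adding one more list to `test_solutions`.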

Fit-Oriented Robustness

The fit-oriented perspective compares how well the

The first two parameters express how well the

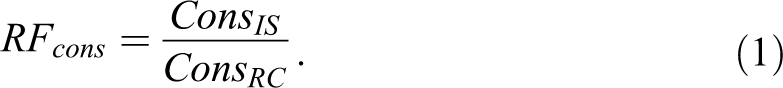

For consistency, the RF parameters (

In a similar manner, for coverage,

In addition to these two parameters, another two parameters of fit,

All four parameters range from 0 to 1, with higher values indicating higher fit robustness. These are continuous measures with no clear threshold above which an IS is to be considered robust and below which it is not robust. Our RF parameters share this lack of clear thresholds with several other parameters in QCA (consistency, coverage, and Relevance of Necessity (RoN)), and just as with those other parameters, we also suggest that a case-oriented perspective must complement the fit-oriented numerical assessment of robustness. We develop this idea in the following section.
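The RF formulas themselves are not reproduced in this sketch. What follows are only the standard set-theoretic building blocks that fit-oriented comparisons of this kind rest on: consistency and coverage of sufficiency, and the set coincidence between two sets. The membership values are hypothetical, and how exactly these quantities are combined into the four RF parameters should be taken from the definitions in Table 1, not from this example.

```python
def overlap(x, y):
    """Sum of case-wise minima, the fuzzy size of the intersection."""
    return sum(min(a, b) for a, b in zip(x, y))


def consistency(x, y):
    """Consistency of X as sufficient for Y: |X and Y| / |X|."""
    return overlap(x, y) / sum(x)


def coverage(x, y):
    """Coverage of Y by X: |X and Y| / |Y|."""
    return overlap(x, y) / sum(y)


def set_coincidence(a, b):
    """Share of the union of A and B that lies in their intersection."""
    return overlap(a, b) / sum(max(p, q) for p, q in zip(a, b))


# Hypothetical memberships of three cases (assumes non-empty sets).
solution = [0.9, 0.6, 0.3]
outcome = [1.0, 0.4, 0.2]
test_set = [0.7, 0.6, 0.5]

print(consistency(solution, outcome))
print(coverage(solution, outcome))
print(set_coincidence(solution, test_set))
```

All three measures are bounded by 0 and 1, and set coincidence equals 1 only when the two sets are identical for every case.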

Case-oriented Robustness

Robustness parameters of fit allow us to assess the degree of overlap between the

Venn diagrams and types of robustness-relevant cases

Figure 3 is an updated version of Figure 2. Each area in the respective diagrams for

Looking on the left-side diagram, we see the various intersections between the

Note that we use

Along the same line, it provides for a harder robustness test to use

Any case outside of both

Robustness-relevant case types.

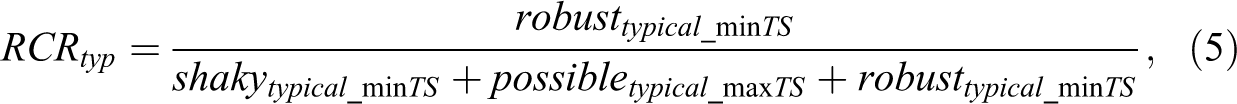

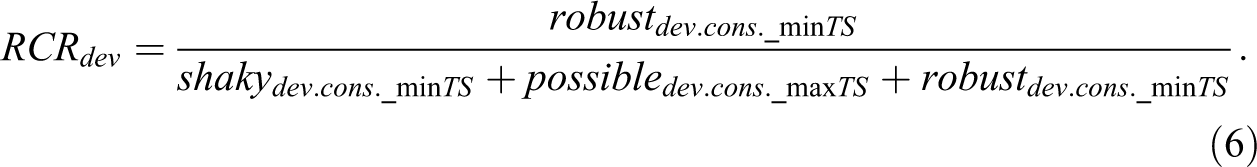

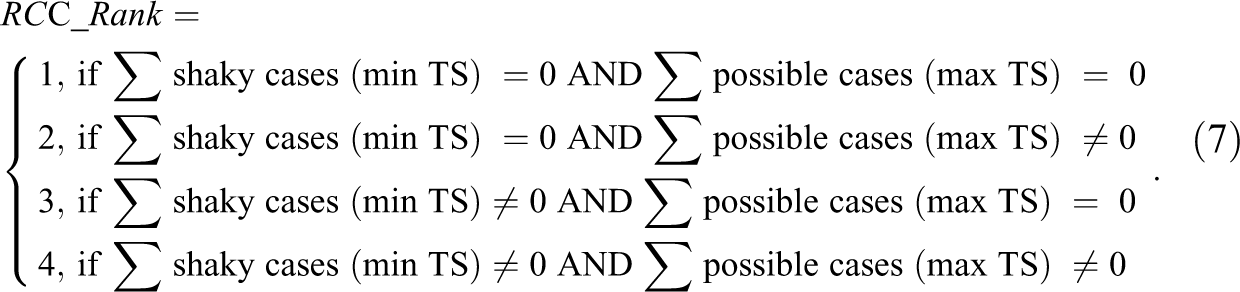

In addition to visualizing which specific cases fall into which category of case, we propose to also use the relative frequencies of the different case types for expressing the robustness of the

XY plots and case-oriented robustness

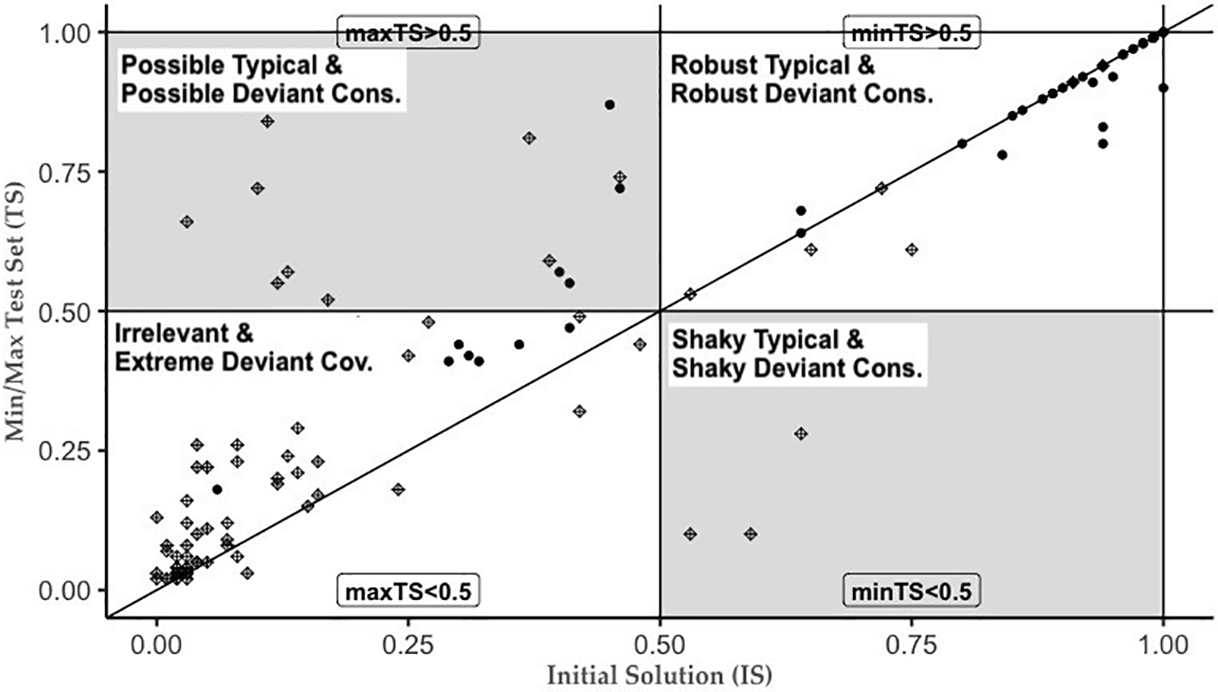

Venn diagrams like in Figure 3 are particularly useful in visualizing our conceptual idea of case types. They are less well suited for depicting concrete empirical situations in a given QCA study, especially when fuzzy sets are involved. For this, XY plots can be used. Figure 4 presents an XY plot with case memberships in a hypothetical initial sufficient solution (

The subset relationship between different solution formulas.

If all cases are exactly on the diagonal, then

Alternatively, if all cases are below the diagonal, then

In general, the farther away cases are from the diagonal, the smaller at least some of our RF parameters are.
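Reading an XY plot of two solutions amounts to checking on which side of the diagonal the cases fall. The sketch below is a hedged illustration of that check: `set_relation` is a hypothetical helper, the membership values are made up, and no assumption is made about which solution sits on which axis.

```python
def set_relation(first, second, tol=1e-9):
    """Describe the relation between two fuzzy sets, case by case.
    All cases on the diagonal -> identical sets; all cases on one side
    of the diagonal -> one set is a subset of the other."""
    diffs = [a - b for a, b in zip(first, second)]
    if all(abs(d) <= tol for d in diffs):
        return "identical"
    if all(d <= tol for d in diffs):
        return "first is a subset of second"
    if all(d >= -tol for d in diffs):
        return "second is a subset of first"
    return "no subset relation"


solution_a = [0.2, 0.7, 0.9]
solution_b = [0.4, 0.7, 1.0]
solution_c = [0.6, 0.3, 0.9]

print(set_relation(solution_a, solution_a))
print(set_relation(solution_a, solution_b))
print(set_relation(solution_a, solution_c))
```

Cases on both sides of the diagonal, as in the last comparison, rule out a subset relation in either direction.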


The more cases are in the “forbidden quadrants” (upper-left and lower-right), the lower are our case-oriented parameters. Moreover, if there are cases in both of these areas simultaneously, it means that the

The literature on robustness in QCA puts emphasis on the subset relations between solutions and argues that solutions that are in a subset relation ought to be considered as more robust than those that are not. We further specify this subset notion of QCA solutions into a robustness case rank

In sum, our case-oriented perspective resonates both with QCA’s case-based origin and a focus on correct case classifications, which QCA has in common with a wide array of statistical and machine-learning procedures (Muchlinski, Siroky, and Kocher 2016). Both theory and practice in QCA robustness tests have long ignored this vital component.
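The following sketch shows how cases might be sorted into robustness-relevant types and how relative frequencies of these types could be tabulated. The labels robust, shaky, and possible, and typical versus deviant, come from the text. The membership rules are one assumed reading, not a reproduction of the article's definitions: a case counts as a member of a set if its score exceeds 0.5, robust cases are in both the IS and the minTS, shaky cases are in the IS but not in the minTS, and possible cases are outside the IS but inside the maxTS. All names and scores are hypothetical.

```python
from collections import Counter


def case_type(is_m, min_ts_m, max_ts_m, y_m, cut=0.5):
    """Assumed classification rule; `cut` is the membership crossover."""
    in_is, in_min, in_max, in_y = (
        m > cut for m in (is_m, min_ts_m, max_ts_m, y_m)
    )
    kind = "typical" if in_y else "deviant"
    if in_is and in_min:
        return f"robust {kind}"
    if in_is:
        return f"shaky {kind}"
    if in_max:
        return f"possible {kind}"
    return "outside IS and maxTS"


# Hypothetical cases: membership in (IS, minTS, maxTS, outcome Y).
cases = {
    "c1": (0.9, 0.8, 0.9, 0.9),
    "c2": (0.8, 0.3, 0.7, 0.9),
    "c3": (0.2, 0.1, 0.7, 0.8),
    "c4": (0.9, 0.7, 0.9, 0.2),
    "c5": (0.1, 0.1, 0.2, 0.9),
}

types = {name: case_type(*scores) for name, scores in cases.items()}
shares = {t: n / len(cases) for t, n in Counter(types.values()).items()}

print(types)
print(shares)
```

Listing the cases by type keeps the case-oriented perspective in view, while the shares give a compact numerical summary of the same information.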

The QCA Robustness Test Protocol in a Nutshell

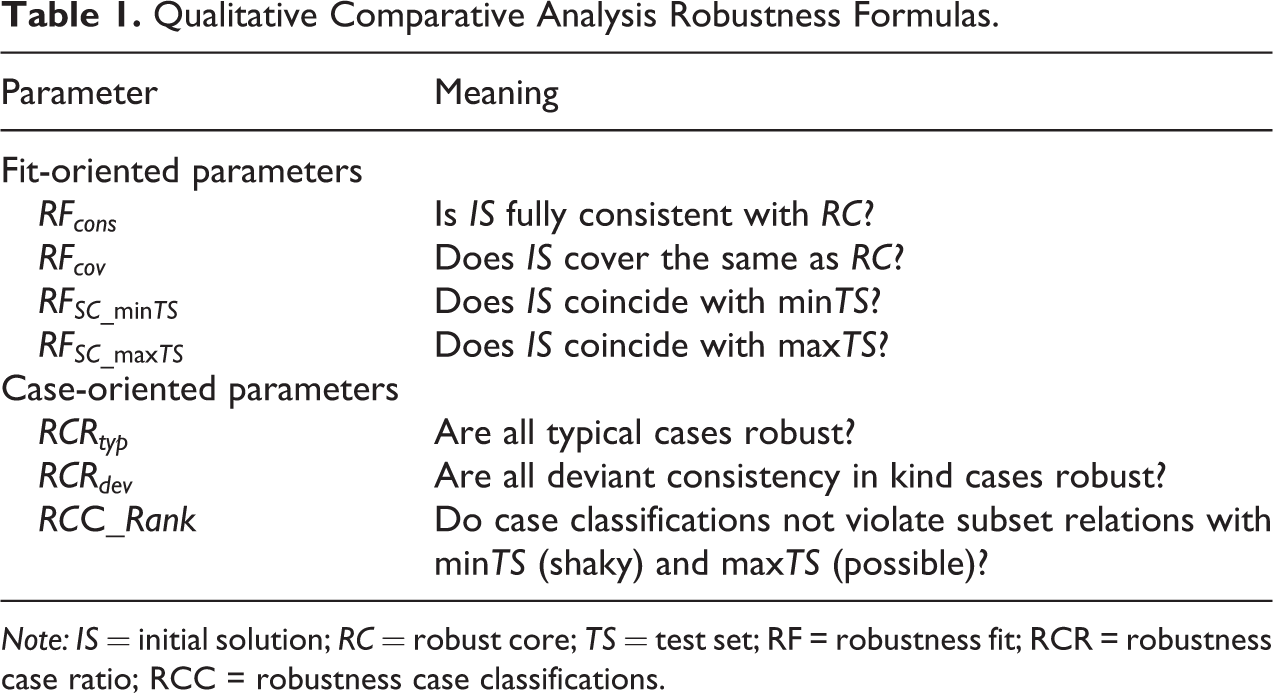

Applied QCA researchers have a total of seven parameters at their disposal. These parameters and their meaning are summarized in Table 1. Four of the parameters approach robustness from the perspective of parameters of fit and three from the perspective of cases and their classification as typical or deviant. The first six of them indicate maximum robustness with a value of 1 and lower robustness with values lower than 1. A seventh, the

Table 1. Qualitative Comparative Analysis Robustness Formulas.

Note: IS = initial solution; RC = robust core; TS = test set; RF = robustness fit; RCR = robustness case ratio; RCC = robustness case classifications.

We now have all tools at our disposal to integrate our conceptualization and operationalization of QCA robustness tests in the form of a protocol. The following steps should be followed by applied QCA researchers who aim at testing the robustness of their findings. This protocol enables researchers to test the robustness of their findings against multiple alternative analytic decisions (calibration, raw consistency threshold, and frequency cut), both individually (using sensitivity ranges) and in combination (using the

1. Produce the IS.
2. Determine the sensitivity ranges for all relevant analytic decisions in isolation.
3. Produce alternative solutions for the various analytic changes considered. In practice, we advise researchers to attempt to build tests that are as challenging as conceptually plausible by:
   • varying parameters not only within sensitivity ranges but at the margins of the “hard test range” of conceptual plausibility (see Figure 1);
   • building alternative solutions by combining the analytic changes in this hard test range.
4. Obtain the
   • Intersect all alternative solutions in
   • Create the union of all alternative solutions in
   • Intersect
5. Calculate the fit-oriented parameters (
6. Calculate the case-oriented robustness parameters (
7. Interpret the robustness results (including identifying the “hardest” test solution used).

The QCA Robustness Test Protocol in Practice

To illustrate how our seven-step QCA Robustness Test Protocol is put into practice using the R package SetMethods, v.3.0 (Oana and Schneider 2018), we use the study by Paykani et al. (2018) on the conditions for explaining high life expectancy in 131 countries around the globe. They use fuzzy-set data on the conditions high quality education (

Step 1: Produce the IS

For the purpose of this illustration, we create an initial parsimonious solution using a raw consistency threshold of

Step 2: Determine the Sensitivity Ranges

Sensitivity ranges to calibration can be calculated using the function rob.calibrange(). This function changes the calibration of the condition indicated in the option test.cond.raw, initially calibrated with the thresholds in test.thresholds, by modifying it one step at a time, using the increment indicated in the option step. The goal is to find the upper and lower bounds within which the solution stays the same, while keeping constant all other parameters that produced the

We see that for condition “

Functions rob.inclrange() and rob.ncutrange() are used for finding similar ranges for the raw consistency threshold and for the frequency cut, respectively, by gradually modifying them with a step value. We learn that our IS is rather sensitive to changes in raw consistency threshold and frequency (n.cut) threshold, respectively. The

Step 3: Produce Alternative Solutions, Taking Into Consideration the Sensitivity Range Analysis and Conceptually Plausible Changes in the Hard Test Range

For analyzing how multiple changes affect the IS and implementing the notion of RC, we first create, say, three additional solutions. In each of these additional solutions, we alter the analytic decision made for the IS. In alternative test solution 1 (

Note that we have set the test parameters such that all of them are outside the sensitivity ranges determined in the previous step, so that they are all in the hard test range. Additionally, we argue that in order to test robustness in a challenging manner, one should test parameters that are as different as possible, but within conceptually plausible ranges. For example, our choice for a different raw consistency threshold is far outside the sensitivity range found in step 2 above (0.85–0.87), but still abides by the general guideline of not having a threshold below 0.75. In other words, robustness tests should be set up such that they are as hard to pass as conceptually plausible.
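Selecting test values in the hard test range can be sketched as a simple filter: keep candidate values that are conceptually plausible but lie outside the empirical sensitivity range. The sensitivity range (0.85 to 0.87) and the lower bound of 0.75 are taken from the example above. The upper bound of 1.0, the list of candidates, and the function name `hard_test_values` are assumptions made for illustration.

```python
def hard_test_values(candidates, sensitivity, plausible):
    """Keep values inside the conceptually plausible range
    but outside the empirical sensitivity range."""
    s_lo, s_hi = sensitivity
    p_lo, p_hi = plausible
    return [
        value
        for value in candidates
        if p_lo <= value <= p_hi and not (s_lo <= value <= s_hi)
    ]


candidates = [0.70, 0.75, 0.80, 0.86, 0.90]  # hypothetical thresholds
sensitivity_range = (0.85, 0.87)             # from step 2 in the text
plausible_range = (0.75, 1.0)                # upper bound is an assumption

print(hard_test_values(candidates, sensitivity_range, plausible_range))
```

Values such as 0.86 are dropped because the IS is already known to be unchanged there, and 0.70 is dropped because it falls below the guideline of 0.75. The remaining values are the ones that put the IS to a hard test.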

Step 4: Obtain the

and the

In our example, we have produced three alternative solutions. One of the advantages of our approach to robustness is, however, that the number of these alternative solutions can be arbitrarily high. For practical purposes, in order to obtain the

We now have the

Step 5: Calculate the RF Parameters

By comparing the parameters of fit for

The parameters of

Step 6: Identify Robustness-relevant Types of Cases and the RCRs

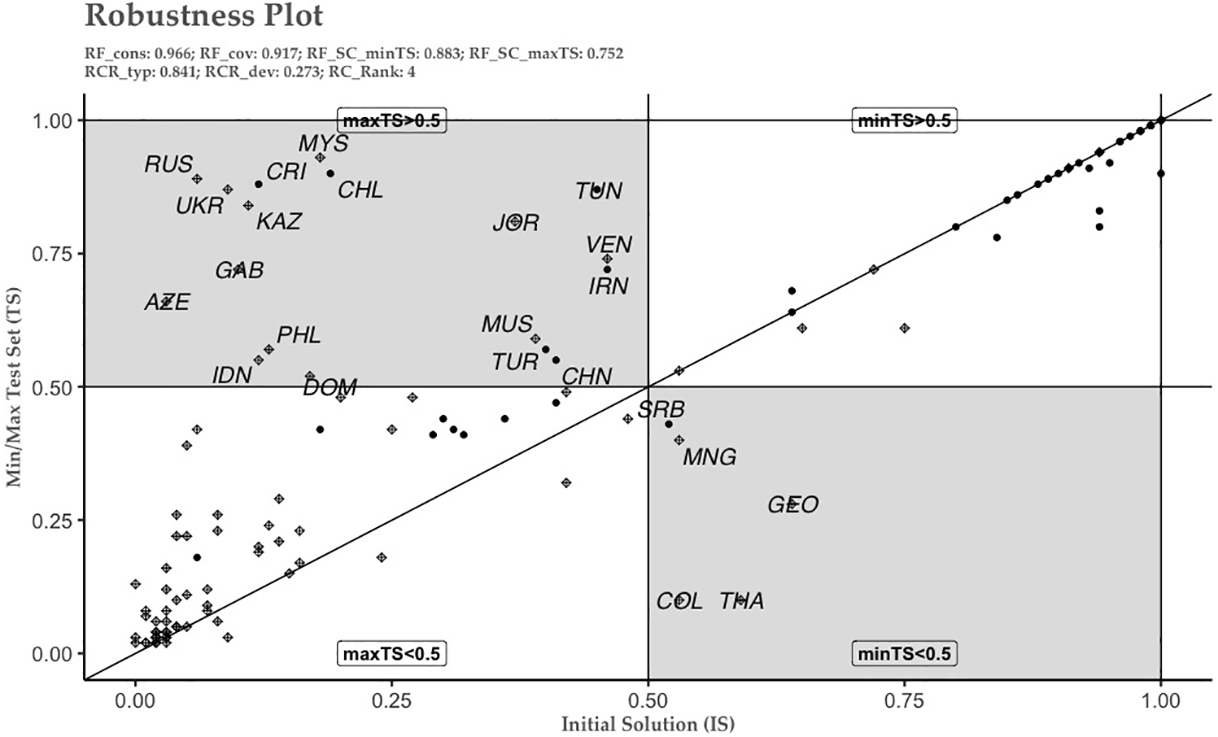

As explained above, fit-oriented robustness should be complemented with a case-oriented perspective. There are two main, mutually nonexclusive, ways of implementing case-oriented robustness in practice. First, we can produce an XY plot with the

For plotting the

One of the main advantages of visualizing robustness using XY plots is that we can easily spot the case rank of the relation between

The relation between minimal test set, maximal test set, and the initial solution.

Function rob.cases() identifies which cases fall under which robustness-relevant case type. In addition, the function automatically reports the

We see that

Step 7: Interpret the Robustness Results

The information revealed by our QCA Robustness Test Protocol and the various parameters it produces shows that while our

In order to further disaggregate these results, our protocol also allows for checking which alternative test solution turned out to be most problematic. This can provide insights into further analysis and/or further conceptual work by identifying those specific analytic changes that are most consequential for robustness. Function rob.singletest() provides this information by reporting the set coincidence between each individual test solution and the

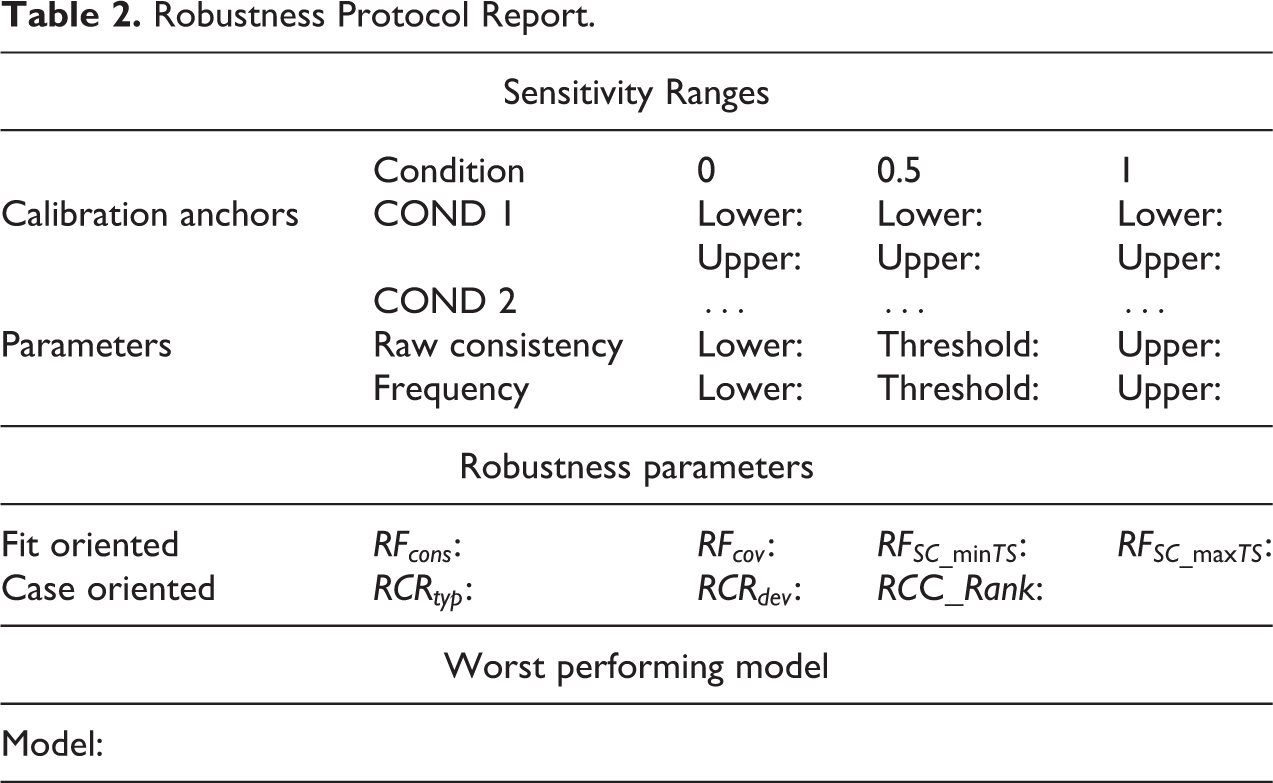

Applied QCA researchers should provide information on their robustness tests in a concise manner. While further details can be provided in an Online Appendix, the summary of robustness tests should be shown in the main text, if possible. We provide here a robustness protocol report table (Table 2) as one way of displaying such a summary about the robustness tests.

Table 2. Robustness Protocol Report.

Finally, beyond the standard steps in the robustness test protocol presented above, one could also further engage with the notions introduced here in order to produce various improvements to the

Concluding Remarks

When conceptual borders are easily definable, when cases cluster neatly in truth table rows, or when the number of cases in the analysis is small, analytic decisions related to calibration or to the truth table analysis can be straightforward. In applied QCA, though, this is rarely the case. Conceptual borders are often imprecise and, consequently, the exact placement of calibration anchors is open to debate. Cases are sometimes numerous and do not neatly cluster in specific truth table rows. This often makes decisions on raw consistency threshold and frequency cuts ambiguous. In such situations, we argue that researchers should apply systematic robustness tests to assess the consequences of changes in their various analytic decisions.

In this article, we have made proposals on how to move forward the discussion on both principles and practices of robustness tests in QCA. We believe that robustness is a multifaceted concept that needs to be assessed from various complementary angles. We have introduced the notion of sensitivity range as that range of values for calibration anchors, raw consistency, and frequency cutoffs within which our QCA result does not change. Going beyond sensitivity ranges, we have proposed various numerical robustness parameters that express how much and in which way QCA results change. We have defined different types of cases whose existence and relative frequencies provide additional information on how robust our QCA results are. Taken together, sensitivity ranges, the fit-oriented, and the case-oriented perspectives provide an integrated QCA Robustness Test Protocol, for both crisp and fuzzy set data, that is implemented in the R software package SetMethods.

While the tools introduced here allow for the implementation of this robustness protocol in a straightforward manner, we envisage several developments. As it stands now, the protocol introduced here assumes that cases’ membership in the outcome remains unchanged in the identification of robust/possible/shaky typical and deviant cases and in the calculation of parameters of fit. Future developments could overcome these limitations related to outcome recalibration. Future developments could also focus on ways to expand the robustness protocol to situations in which changes to the selection of cases and of conditions are made. For such changes, it should be kept in mind, though, that they tend to pertain to the domain of QCA as an approach rather than as a technique because they constitute more fundamental changes to the research design of a QCA.

Finally, we argue that any future developments should not abuse the more automated approach to robustness that our functions in package SetMethods undoubtedly provide. Instances of such abuse would consist in letting the algorithm identify the optimal thresholds for calibration, raw consistency, or row frequency, whereby optimal would be defined as “most robust.” This is why we suggest that the best practice should be to not only first provide a QCA solution (which here we have labeled IS) but to also specify an expected substantive plausibility range derived from theoretical and conceptual considerations. This recommendation is particularly salient for the calibration range because the location of the calibration anchors should be strongly driven by conceptual considerations on the meaning of the set to be calibrated.

All in all, we believe it is important that the performance and reporting of robustness tests become standard in QCA. With the conceptual and computational tools presented in this article, there are fewer reasons to object to this emerging standard of good practice.

Supplemental Material


Supplemental Material, sj-pdf-1-smr-10.1177_00491241211036158 for A Robustness Test Protocol for Applied QCA: Theory and R Software Application by Ioana-Elena Oana and Carsten Q. Schneider in Sociological Methods & Research

Footnotes

Authors’ Note

Rotation principle: both authors contributed equally. This article has benefited from fruitful feedback provided by participants of the second International Qualitative Comparative Analysis Expert Workshop in Antwerp 2019 and of the ECPR Methods Schools in Budapest and Bamberg in 2018 and 2019 and virtual in 2020. We also thank the anonymous reviewers whose comments have improved our arguments in decisive ways.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

