Abstract

Uncertainty undermines causal claims; however, the nature of causal claims decides what counts as relevant uncertainty. Empirical robustness is imperative in regularity theories of causality. Regularity theory features strongly in qualitative comparative analysis (QCA), making its case sensitivity a weakness. Following QCA founder Charles Ragin’s emphasis on ontological realism, this article suggests causality as a power and thus breaks with the ontological determinism of regularity theories. Exercising causal powers makes it possible for human agents to achieve an outcome but does not determine that they will. The article explains how QCA’s truth table analysis “models” possibilistic uncertainty and how crisp sets do this better than fuzzy sets. Causal power is at the heart of critical realist philosophy of science. Like Ragin, critical realism regards empirical analysis as merely describing underlying causal relationships. Empirical statements must be substantively interpreted into causal claims. The article is critical of “empiricist” QCA that infers causality from the robustness of set relationships.

Qualitative comparative analysis (QCA) continues to gain in popularity; however, confusion persists on the nature of its causal claims and the uncertainty affecting them. Existing methodological work on causality and uncertainty relies heavily on a regularity theory of causality (RTC) to address case sensitivity and QCA’s lack of empirical robustness (Braumoeller 2015; Thiem, Spöhel, and Duşa 2016). However, RTC does not square with the commitment to ontological realism evident in the work of Ragin (2008, [1987] 2014). His “interpretive” approach emphasizes a dialogue between cases, data, and theory. By contrast, much current practice and methodological development in QCA (implicitly) follows RTC to infer causality from the robustness of set relationships. The “empiricist turn” suggests QCA as a data analytical method and brackets or even ignores case-based and contextual knowledge. This is deeply problematic because of QCA’s deterministic epistemology (Pula 2020). QCA’s empirical statements follow propositional logic and lack uncertainty measures, unless one mistakes the consistency of set relationships for a measure of uncertainty (see below), which means they fail as causal claims (King, Keohane, and Verba 1994; Lucas and Szatrowski 2014). Recognizing the problem, some “empiricists” in QCA have suggested second-order fuzzy sets to model uncertainty of calibration (Rohlfing and Schneider 2014:33). However, apart from the fact that second-order fuzzy sets greatly complicate the truth table analysis, they do not solve the fundamental problem. Where Ragin’s interpretive approach requires a separation of epistemology (empirical statements) and ontology (causal claims; Ragin 2009: 524-25), RTC-informed empiricism in QCA collapses the two (e.g., Thiem et al. 2016). While Ragin never suggested that Boolean expressions evidence causality (Rohlfing and Schneider 2018:44), “empiricists” in QCA do just that (Baumgartner 2015; Braumoeller 2015; Thiem et al. 2016).
Lest one accuse the paper of setting up “empiricist QCA” as a strawman: very few, if any, QCA researchers advocate an exclusively RTC-informed approach to QCA. However, causality and uncertainty are understood in incommensurable ways in RTC and in the ontological realism of Ragin (2008, [1987] 2014). Therefore, mixing up Ragin’s interpretive notion of causality with RTC-informed concerns about the empirical robustness of set relationships is deeply problematic.

The starting point for discussion in this paper is uncertainty, which closely connects to causality (see below). The paper draws from critical realist philosophy of science (Archer et al. 2016), which has a radically different understanding of causality and uncertainty than RTC. This paper is not the first to note that QCA, in its interpretive fashion, and critical realism are a very good match (Byrne 2009; Gerrits and Pagliarin 2020; Gerrits and Verweij 2013; Pula 2020; Rutten 2020a). They both separate epistemology (empirical statements) from ontology (causal claims), whereas RTC collapses them. However, a systematic connection of critical realism’s three domains of reality (the real, the actual, and the empirical; Collier 1994: 42-45) to the epistemology and ontology of uncertainty and causality in QCA has thus far been missing. Connecting QCA practice to the three domains of reality gives QCA researchers a clear understanding of how to consistently practice the method’s interpretive approach and how to avoid falling back on (nocturnal) empiricist instincts.

This article begins with a discussion of how ontological realism and RTC are “mixed-up” in QCA and why that is problematic. The next section explains how critical realism’s three domains of reality connect to QCA. The article follows contemporary critical realism in suggesting causality as a power that human agents may exercise rather than a mechanism. Causal mechanisms ignore human agency and connect better to RTC (Gorski 2018:27). The next sections discuss uncertainty in each of the three domains of reality and how QCA may deal with it. The discussion suggests causal claims as “normic” statements, that is, ontological tendencies that explain why the presence of a cause makes it possible for human agents to achieve the outcome but does not determine that they will (Collier 1994:64). In particular, the discussion suggests the truth table as a tool to “model” possibilistic uncertainty and legitimizes QCA’s good practice of using the intermediate solution for causal interpretation because of its (very) low possibilistic uncertainty. The discussion further suggests that crisp set QCA (csQCA) more conscientiously addresses possibilistic uncertainty than fuzzy set QCA (fsQCA). The ontology-first approach of this article conflicts with the recent “empiricist turn” in QCA, and in particular with its various empirical metrics to inform causal explanation in QCA. Being explicit about their theory of causality helps prevent ontological realists in QCA from turning to RTC to address uncertainty.

A Diagnosis

Uncertainty is a soft spot in QCA, and it has received the least attention in the method’s development. This is not to say that QCA has no means of dealing with uncertainty. Consistency thresholds, PRI values, various robustness tests, calibration, and going back to the cases all address uncertainty in one form or another (Maggetti and Levi-Faur 2013), but not in a systematic way. A systematic approach to uncertainty follows from a theory of uncertainty, which, in turn, follows from a theory of causality. QCA lacks both. Of course, causality in QCA is complex, that is, it is configurational, equifinal, and asymmetrical (Ragin [1987] 2014:27); however, that does not answer the more fundamental question of whether causal explanation follows a regularity theory. RTC assumes ontological determinism, namely, that the nature of social reality is such that a particular (configuration of) cause(s), if left undisturbed, will always produce a specific outcome (Collier 1994:128-30). Complex causality does not rule out a regularity notion of causality. QCA makes causal claims as statements of sufficiency or necessity, which, empirically (as set relationships), are expressed as if-then statements (as regularities). However, this empirical regularity may be descriptive and need not imply an invariant mechanism connecting cause to outcome, that is, ontological determinism (Decoteau 2018:90; Steinmetz 1998:177). The relationship between theory of causality and theory of uncertainty is evident in variable-based methods. They follow RTC, where empirically observed regularities (epistemology) evidence invariant connections (causal relationships) between cause and outcome (ontology; Lawson 2005:375). Empirical regularities are affected by unsystematic measurement error (epistemology) and by random variations in how cause and outcome are connected in social reality (ontology).
Consequently, causal claims are expressed probabilistically (predictions are probabilistic), and uncertainty, too, (both measurement uncertainty and uncertainty about the connections between cause and outcome in social reality) is probabilistic (namely randomness in the data). Consequently, variable-based methods prefer statistical techniques to make causal claims and use probabilistic criteria, such as the p value, to distinguish between genuine and spurious regularities. That is, the theory of uncertainty in variable-based methods follows from its theory of causality and puts in place probabilistic empirical thresholds for the validity of causal claims (Lawson 2005).

Contrary to variable-based methods, QCA (a case-based method), per Ragin (2008, [1987] 2014) and the authoritative handbook of Schneider and Wagemann (2012), does not regard empirically observed regularities between sets (i.e., consistent set relationships) as evidence of ontological regularities between cause and outcome (Greckhamer et al. 2018:484; Rohlfing and Schneider 2018:44). In fact, QCA is strongly committed to ontological realism (Byrne 2005), where causal inference is a matter of substantively interpreting empirical findings (consistent set relationships) and knowledge of cases and context into plausible explanations. QCA methodology is clear that going back to the cases is quintessential to the method and that QCA cannot be reduced to truth table minimization. Empirical regularities may be indicative of causality, but substantive interpretation makes the causal argument. This is retroductive reasoning, which sits uncomfortably with the belief that empirical regularities evidence causal effects (Pula 2020:5). Causal effects focus on what individual causes “do” to outcomes. Instead, retroductive reasoning, applied to QCA, considers cases holistically as configurations of causes and outcomes (Goertz and Mahoney 2012: 41-42). A causal effects approach suggests that a cause will necessarily produce an outcome (ontological determinism, a causal mechanism connecting cause to outcome), while retroductive reasoning explains why underlying “causal powers” produce the co-occurrence of cause and outcome on the level of cases (Gorski 2018: 25-27). However, this is not how QCA is always used in practice. Large-N applications in particular often settle for an “empirics-only” approach to QCA where set consistency is accepted as evidence of causal effects of configurations of causes on outcomes.
Problematically, this empiricism invites mistaking conditions for causes (conditions are not causal; they describe cases and their causal powers) and follows a deterministic epistemology (Byrne 2005; Pula 2020).

Two arguments may explain why QCA methodologists and practitioners may (implicitly) follow RTC and think in terms of causal effects. First, it is the dominant approach to causal inference in the social sciences. Under the influence of variable-based methods, many social scientists believe the experimental model to be the “gold standard” of causality (Pula 2020: 1-2). Methods teaching often suggests causal effects, expressed as statistically significant covariation between independent and dependent variables, as the overarching causal logic of the social sciences (e.g., King et al. 1994). Even when QCA methodologists and practitioners take the case-based causal logic of QCA seriously, the dominant framing of causality as regularity may still (nocturnally) influence their thinking. Second, responding to criticism from regularity theory, QCA methodologists have introduced a range of sensitivity and robustness diagnostics that explicitly follow a regularity logic. For example, “bootstrapping” (where researchers randomly delete a number of cases and then redo the truth table analysis, hoping to find a solution that closely approximates the original solution) is a common sensitivity test for large-N QCA (Rutten 2020a). The procedure is based on the belief that if a cause is invariantly connected to an outcome (ontological determinism), this should be evidenced by empirically robust set relationships that are independent of the sample of cases under investigation (Schneider and Wagemann 2012: 285-86). However, this practice is seriously problematic, not least because QCA populations are constructed rather than randomly sampled (Rutten 2020a). In addition to RTC (nocturnally) affecting how QCA methodologists and practitioners think about causality, the QCA community (if one can call it that) is diverse and open with regard to methodological approaches.
Some argue that simulated data may test how QCA works empirically, while others emphasize the importance of real-world data (Olson 2014; Rohlfing 2016). Furthermore, different kinds of research questions and different kinds of data may lend themselves to different approaches, for example, a case-oriented or a condition-oriented approach (Thomann and Maggetti 2017). This diversity complicates converging on shared theories of causality and uncertainty. There is a very lively debate on whether the parsimonious solution, which is mathematically sufficient, is causally interpretable. Critics of the parsimonious solution argue against it because it relies on untenable assumptions (difficult counterfactuals). For them, the implication is that mathematical sufficiency (i.e., an empirical regularity) may not imply substantive sufficiency (i.e., a plausible explanation of the outcome; Duşa 2019). This debate evidences a divide between ontological realists (“interpretivists”) and regularity theorists (“empiricists”) in QCA (Schneider 2018).
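The case-deletion procedure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the routine of any QCA software: the crisp-set data, the function names, and the parameters are all invented, and the sketch recomputes the consistency of one fixed configuration (A AND B) instead of rerunning the full truth table minimization.

```python
import random

# Hypothetical crisp-set data: (A, B, outcome) per case; values are invented.
CASES = [
    (1, 1, 1), (1, 1, 1), (1, 1, 1), (1, 1, 1), (1, 1, 0),
    (1, 0, 0), (1, 0, 1), (0, 1, 0), (0, 1, 0), (0, 0, 0),
]


def sufficiency_consistency(cases):
    """Share of cases exhibiting A AND B that also exhibit the outcome."""
    members = [c for c in cases if c[0] == 1 and c[1] == 1]
    if not members:
        return None  # configuration not observed in this subsample
    return sum(c[2] for c in members) / len(members)


def case_deletion_test(cases, n_delete, n_runs, threshold=0.8, seed=0):
    """Randomly drop n_delete cases per run; return the share of runs
    in which the configuration still meets the consistency threshold."""
    rng = random.Random(seed)
    passed = 0
    for _ in range(n_runs):
        kept = rng.sample(cases, len(cases) - n_delete)
        score = sufficiency_consistency(kept)
        if score is not None and score >= threshold:
            passed += 1
    return passed / n_runs


full = sufficiency_consistency(CASES)
share = case_deletion_test(CASES, n_delete=2, n_runs=1000)
print(f"full-sample consistency: {full:.2f}")
print(f"share of subsamples at or above 0.8: {share:.2f}")
```

With a single inconsistent case among five members of the configuration, the verdict depends heavily on which cases happen to be deleted. A regularity theorist would read that as uncertainty; on the argument of this article, it is an artifact of treating a constructed population as if it were a random sample.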

The case for substantive over mathematical sufficiency in causal explanation corroborates the original works of Ragin (2008, [1987] 2014) and Schneider and Wagemann (2012) who argue that the truth table analysis is merely descriptive. Obviously, there is a difference between “good” and “bad” description; QCA good practice suggests that a consistency below 0.8 (for sufficiency) does not validly describe the set relationships in the data (Greckhamer et al. 2018:8). However, a higher consistency does not necessarily make a better description. In fact, configurations with lower consistencies (but above 0.8) may be better interpretable in the light of substantive knowledge than configurations with higher consistencies (Rutten 2020a). As long as consistency remains above 0.8, a description is empirically valid, and researchers may add or delete conditions from the configurations that the truth table analysis produces, if substantive arguments permit (Ragin [1987] 2014: 42-44, 164-71; Schneider and Wagemann 2012: 280-82). (Adding conditions is unproblematic because it leads to higher consistencies; however, deleting conditions is unacceptable when it reduces consistency below 0.8.) Deviating from the truth table analysis finding in this way echoes the suggestion of Amrhein, Greenland, and McShane (2019) and Wasserstein, Schirm, and Lazar (2019) to abolish the p value for regression analysis and to let substantive arguments rather than a correlational metric decide which description of the data is best causally interpretable. Importantly, the 0.8 consistency threshold does not, in the first place, express a concern for measurement error. The contingent and heterogeneous nature of social reality makes perfect set relationships implausible (Byrne 2005:97-100, 115; Ragin [1987] 2014:27, 42-44). There will always be (hidden) constraints that prevent an outcome from occurring, also when the relevant cause is present. 
That is, even with perfect data, one would still not find perfect set relationships because causes and constraints may interact differently in different cases. This argument is a strong example of ontological realism and distances QCA from RTC. However, in spite of this commitment to ontological realism and in spite of Ragin (2008, [1987] 2014) and Schneider and Wagemann (2012) emphasizing the descriptive nature of empirical inference, regularity arguments are not difficult to find in the QCA methodological literature. Some examples follow. First, and most obviously, the above sensitivity and robustness diagnostics make the validity of causal claims dependent on empirical regularities. Second, Rohlfing’s (2018:74) argument that “The ideal set relation is equivalent to an empirical set relation displaying a consistency value of 1 because classic propositional logic underlying QCA does not feature deviations from the ideal” demonstrates a commitment to ontological determinism and empirical regularities as evidence of causal relationships. However, taking Ragin’s commitment to ontological realism seriously, “ideal set relationships” (if they exist at all) would have a degree of inconsistency because causes and constraints “interact” differently in different cases and will always negate the “causal power” of conditions in some cases. That is, when ontological realism meets propositional logic, propositional statements become tendencies, namely, the presence of a cause makes the outcome possible but does not determine that it occurs (Bhaskar [1979] 2015: 126-27; Collier 1994:64). Ontological realism suggests neither an ontologically invariant connection between cause and outcome nor an empirical regularity to evidence the ontological connection between cause and outcome. The complexity of social reality and the heterogeneity of cases militate against both (Gerrits and Pagliarin 2020; Pula 2020).
Third, Rohlfing and Schneider (2018:39) also follow an RTC when they argue that, “sound causal analysis should establish a causal effect on the cross-case level and involve complementary causal explanation via the analysis of causal mechanisms.” They effectively say that causal mechanisms invariantly connect causes to outcomes (ontological determinism), that cross-case empirical regularities evidence the existence of such mechanisms, and that subsequent within-case analysis will reveal the mechanism. This argument makes the strong assumption that the mechanism connecting cause to outcome in one case also operates in other cases where the same cause and outcome are present. This assumption further suggests that human agents will “trigger a mechanism” just because a cause is present (Lawson 2005: 383-85). It also limits equifinality to different configurations producing the same outcome (equifinality of causes); however, equifinality may equally pertain to “mechanisms” (Goertz 2017:52-55; Steinmetz 1998:177). One may drive too fast (cause) and get fined (outcome) by being caught on a police camera (technology mechanism) or by being stopped by a police officer (human interaction mechanism). Moreover, following Ragin (2008, [1987] 2014), the truth table analysis is descriptive; it does not identify causal effects. Rohlfing and Schneider (2018) connect their causal approach to counterfactual analysis, which is at the heart of causal explanation in QCA (and set theory in general). Counterfactual analysis investigates whether the outcome would still occur if a cause were absent or a constraint were present. However, since it is counterfactual, researchers cannot know whether the outcome will materialize; they can only make a plausible argument to that effect. Moreover, it is not the cause that “does something” to the outcome; it takes human agency to exercise the “power” of a cause to achieve the outcome (Gorski 2018; Steinmetz 1998).
Neither human agency nor its production of the outcome can be determined. Even when human agents exercise a causal power, unforeseen constraints may negate their effort. Put differently, from an ontological realist perspective, counterfactual analysis is not about whether a cause is necessarily (ontologically deterministically) followed by the outcome but about whether a putative cause makes it possible for the outcome to occur, without determining that it will (Bhaskar [1975] 2008:51). A fourth trace of regularity theory in QCA is the distinction between core and peripheral conditions, or at least the way that distinction is often used. Fiss (2011:394) suggests that core conditions are literally the core of configurations and that they “produce” the outcome in combination with more “peripheral” conditions that may be interchangeable. However, to “empiricists,” the notion of core conditions may suggest that conditions that are present in the parsimonious solution are not only empirically more important but, therefore, also theoretically more important. Core conditions have an elevated theoretical importance because they exhibit a “stronger” empirical regularity with the outcome than peripheral conditions. However, the notion of INUS conditions suggests that all conditions in a configuration are individually necessary but only jointly sufficient, that is, all conditions are substantively equally relevant. This interpretation of INUS conditions reveals a commitment to ontological realism, while distinguishing between core and peripheral conditions is suggestive of a regularity notion.
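The role of the 0.8 threshold when conditions are added to or deleted from a configuration can be illustrated with a small fuzzy-set sketch. It assumes the consistency measure commonly used for sufficiency in fsQCA (the sum of the minimum of configuration and outcome membership, divided by the sum of configuration membership) and the minimum rule for logical AND. The membership scores and the function names are invented for illustration.

```python
# Hypothetical fuzzy-set membership scores for five cases; values are invented.
A = [0.9, 0.8, 0.7, 0.6, 0.9]
B = [0.8, 0.9, 0.6, 0.2, 0.3]
Y = [0.9, 0.7, 0.8, 0.3, 0.4]

THRESHOLD = 0.8  # conventional minimum consistency for sufficiency


def consistency(x, y):
    """Sufficiency consistency: sum of min(x_i, y_i) divided by sum of x_i."""
    return sum(min(xi, yi) for xi, yi in zip(x, y)) / sum(x)


def conjunction(*conditions):
    """Membership in a configuration is the minimum across its conditions."""
    return [min(scores) for scores in zip(*conditions)]


with_b = consistency(conjunction(A, B), Y)  # configuration A AND B
without_b = consistency(A, Y)               # condition B deleted

print(f"A AND B: {with_b:.3f}, passes threshold: {with_b >= THRESHOLD}")
print(f"A alone: {without_b:.3f}, passes threshold: {without_b >= THRESHOLD}")
```

In these invented data, deleting B pulls the description below the threshold, which is the situation the text above treats as unacceptable. The metric only marks the boundary of valid description; whether A AND B is the better causal account remains a substantive judgment.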

That is, the strong commitment of Ragin (2008, [1987] 2014) and Schneider and Wagemann (2012) to ontological realism is not consistently followed in the methodological development and practical application of QCA. Ontological realism rules out an RTC, yet it influences QCA methodology and practice. The complexity approach to causality insufficiently addresses the dichotomy between ontological realism and RTC. Asymmetrical, equifinal configurations may be thought of as causes that are invariantly connected to outcomes (RTC) but also as describing “causal powers” that make the outcome possible without (ontologically) determining that it occurs. Until the issue of a theory of causality is settled, it is difficult to develop a theory of uncertainty. Case sensitivity and low consistency (just above 0.8) indicate uncertainty from a regularity perspective but not from an ontological realist perspective, while a strong substantive argument may persuade an ontological realist but do little to convince a regularity theorist. One solution is for QCA to adopt a truly ontological realist theory of causality and a matching theory of uncertainty. Ontological realism is the cornerstone of critical realist philosophy of science. Several QCA methodologists (e.g., Byrne 2005; Gerrits and Pagliarin 2020; Gerrits and Verweij 2013; Olson 2014; Pula 2020) have recognized the merits of critical realism and also the work of Ragin (2009) strongly connects to critical realism. However, critical realism has not informed much QCA research thus far.

Causal Explanation in Critical Realism

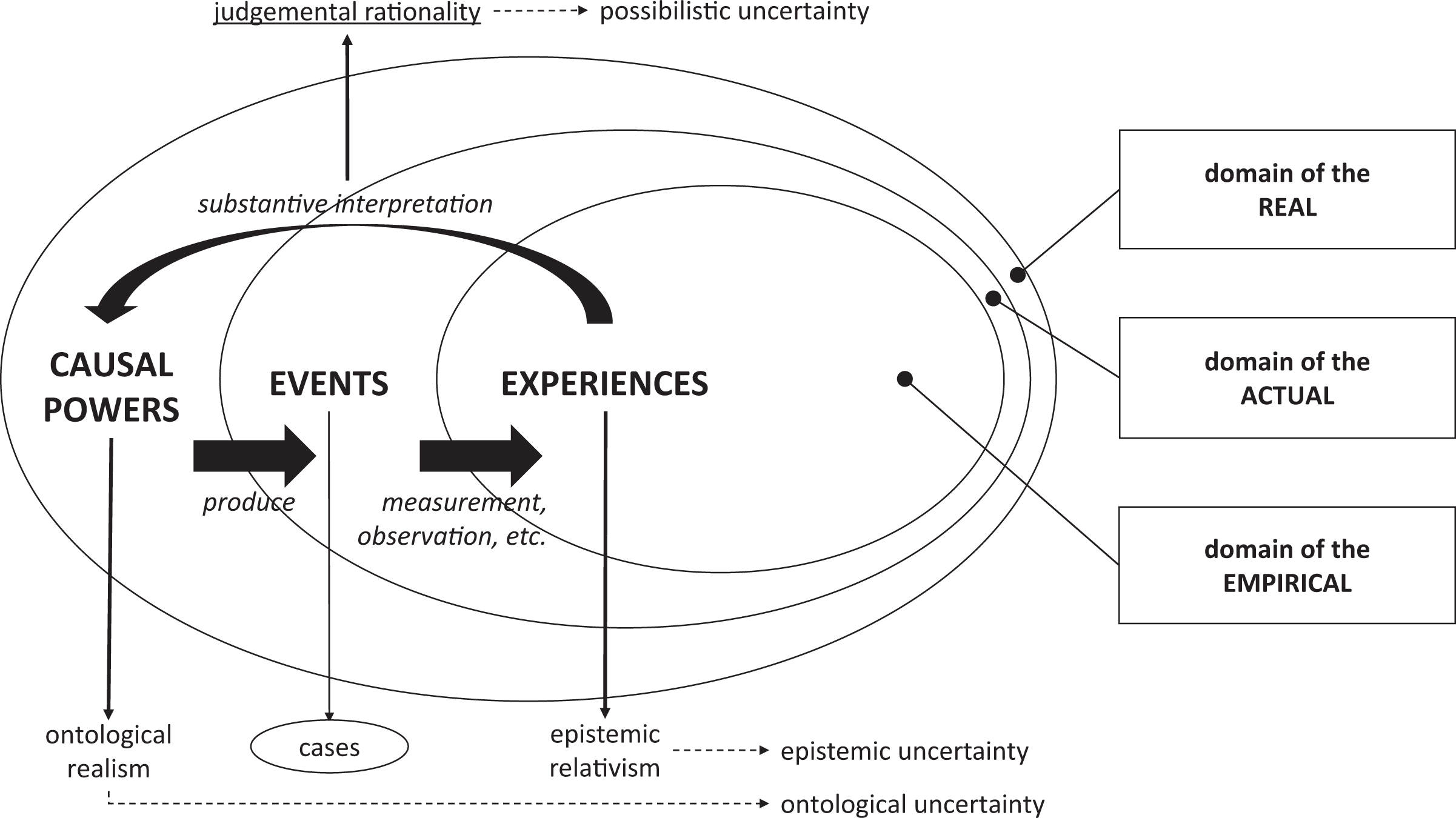

Figure 1 visualizes the argument of this section. Critical realism is a school of thought on what social reality must be like for social science to be possible (Archer et al. 2016; Pula 2020; Steinmetz 1998). Although a diverse school, all critical realists stratify social reality into three domains: the real, the actual, and the empirical (Collier 1994: 42-45). This stratified view of social reality challenges the “unified empirical reality” that underlies RTC. As argued, regularity theory assumes an ontologically deterministic connection between cause and outcome that can be observed as an empirical regularity between independent and dependent variables (the unified empirical reality; Bhaskar [1975] 2008: 69-79). This view brackets human agency, whereas critical realists argue that causes only produce outcomes when they are “actualized” by human agents (Lawson 2005:381). For example, a city can collect garbage if it owns bin lorries, employs binmen, and has a schedule that informs citizens when to put their bins on the street. However, garbage will not be collected when the binmen are on strike. That is, owning bin lorries, employing binmen and having a garbage-collecting schedule give the city the causal power to collect garbage, but it still takes the agency of binmen to exercise this power. In critical realist speak, causal power “resides” in the domain of the real, while the intended outcome (collecting garbage) “happens” in the domain of the actual, but only when the causal power is exercised. That is, the domain of the real is a superset of the domain of the actual. The domain of the actual only contains events that actually happen.

Figure 1. Critical realist causal explanation.

For example, garbage was collected on Monday because the binmen did their job; on Tuesday, garbage was not collected because the bin lorry broke down en route; and on Wednesday, garbage was not collected because the binmen were on strike. That is, the garbage collection event only happened on Monday (domain of the actual) but could also have occurred on Tuesday (when the causal power was exercised but remained ineffective) and Wednesday (when the causal power was not exercised). That is, garbage-collecting events on Tuesday and Wednesday are “possible worlds” that “reside” in the domain of the real (cf. Mahoney and Barrenechea 2019:308). However, given that the domain of the actual contains only one garbage-collecting event, scientists can only develop knowledge of that one event in the domain of the empirical. This means that researchers investigating the causal power of the city to collect garbage would only develop partial knowledge of reality. Moreover, they would miss that, on Wednesday, the causal power of the city to collect garbage was negated by the causal power of the labor union to organize strikes. What appears as a nonevent to a researcher investigating the causal power of the city to collect garbage is actually a positive event of a strike. What happens in the domain of the actual is the outcome of multiple causal powers “interacting,” but researchers developing knowledge of the actual in the domain of the empirical may not be aware of all of them. Moreover, the tools that scientists use to develop knowledge, that is, concepts and methods, are always theory- and value-laden (Pula 2020:8). Looking at the same data through different methods, one can legitimately see different things (Goertz and Mahoney 2012:13; Rutten 2020b:2).
Furthermore, what counts as corporate social responsibility may be different for a neoliberal economist (e.g., making a high profit and paying it as dividend to shareholders) and a human rights activist (e.g., sacrificing profit to improve working conditions). This is critical realism’s principle of epistemic relativism: The knowledge that social scientists develop about events is always contingent, partial, perspectival, and fallible (Archer et al. 2016). Consequently, the domain of the empirical is a subset of the domain of the actual. It also means that empirical regularities are not so much observed as constructed. Therefore, to take empirical regularities (domain of the empirical) as evidence of causal powers (domain of the real) collapses epistemology and ontology, that is, commits the epistemic fallacy (Bhaskar [1975] 2008: 36-38). Critical realists thus reject regularity theories because ontological regularities deny the role of human agency and because the constructed nature of empirical regularities means they cannot evidence underlying causal powers.

Inferring causal powers (domain of the real) from knowledge (domain of the empirical) about events (domain of the actual) requires making a judgment. For critical realists, knowledge is neither a fact nor an opinion but a judgment. Scientists use theory- and value-laden concepts and methods to develop causal explanations through retroductive reasoning. This is the principle of judgmental rationality (Archer et al. 2016). It is obvious how Ragin’s commitment to ontological realism (2008, 2009, 2014) connects QCA to critical realism (Byrne 2009; Gerrits and Verweij 2013; Pula 2020). His emphasis on substantively interpreting truth table analysis findings into causal claims closely follows critical realism’s principles of ontological realism (causal explanations), epistemic relativism (descriptive empirics), and judgmental rationality (substantive interpretation). Furthermore, QCA suggesting cases as configurations of conditions and outcomes turns them into events in the domain of the actual.

Seeing cases as events does justice to their holistic nature. An event is an “assemblage” of causal powers, human agents exercising them and the outcome they (fail to) achieve (Byrne 2009; Ragin 2009; Rutzou and Elder-Vass 2019). Events may take a few moments (a police officer stopping a speeding driver), a whole day (collecting garbage), or a decade (the French Revolution 1789–1799). In all cases, the existence of social structures and social institutions allows human agents to exercise causal powers and (attempt to) achieve a desired outcome (Byrne 2005:103; Gorski 2018:29-30, 37-38). For example, stopping a speeding driver requires a police force (social structure), a traffic law, and social rules regulating interaction between police officers and members of the public (social institutions). In fact, it requires much more. Among others, police officers must be trained, which requires a police academy. The police are publicly funded, which requires a government collecting taxes, and so on. These are deeper ontological layers that have bearing on the event but that may not be relevant to explaining the outcome of interest (Pula 2020:3). Ultimately, understanding the interaction between police officer and driver draws from social psychology, which in turn draws from neurology. However, if the aim is to explain how fining speeding drivers improves road safety, these deeper ontological layers are not relevant. That is, the critical QCA question: “What is a case?” requires identifying relevant events and delineating the ontological depth required to explain them (Ragin 2009). The latter informs which conditions (describing putative causes) should be considered. In the above example, density of traffic, availability of police officers, and severity of fines may be relevant conditions, but not personality characteristics of police officers.

Seeing cases as events and causality as a power to be exercised by human agency allows the possibility that human agents change social structures and institutions over the course of the event (Rutzou and Elder-Vass 2019). That is, human agents exercise the causal power of social structures and institutions to achieve intended outcomes (causation stories). However, they may also use their agency to change social structures and institutions themselves (formation stories; Byrne 2005: 102). While the truth table analysis is perfectly time-agnostic, QCA as a method is not; it is retrospective (Goertz and Mahoney 2012: 41-44). Cases are events that have unfolded over time. The fact that, retrospectively, characteristics (conditions) are “present” does not imply they were present throughout the event. Suppose we find that A AND B is sufficient for C. It may be that in one case, A and B were both present from the start; however, in another case, B may only have emerged over the course of the event as part of the attempt to achieve C. Limiting causal explanation to the question of why the presence of A AND B makes C possible (causation story) does not do justice to the emergent nature of social reality (Gerrits and Pagliarin 2020: 5-6). QCA researchers may legitimately focus on causation stories only; however, conditions (characteristics) and outcomes emerge together over the course of the event. Going back to the example of the binmen going on strike on Wednesday, a researcher may delineate the strike event between Monday and Wednesday. On Monday and Tuesday, union representatives tried to persuade the binmen to go on strike, but they only succeeded on Wednesday.
Effective communication between union representatives and the binmen may be an important INUS condition to explain the occurrence of a strike; however, this condition may have needed Monday and Tuesday to emerge while the binmen and union representatives were discussing the option of going on strike to voice certain grievances. Emergence is a key element of critical realist causal explanation (Bhaskar [1979] 2015:97; Collier 1994: 107-34). For the present discussion, it suffices to show how suggesting cases as events allows accounting for the emergence of conditions and outcomes (Byrne 2009).

Note that exercising causal powers depends on human agency but not on any one particular human agent. Any police officer can fine any speeding driver. This means that critical realism does not collapse into a form of methodological individualism. Instead, social structures and institutions (causal powers) precede any round of human agency (Byrne 2005:103). Without a police force and a traffic law, a speeding-driver-gets-fined event does not exist in the domain of the real and cannot be actualized. Note further that the speeding-driver-gets-fined event is “fluid” because the relationship between police officer and driver is fluid. However, the garbage-collecting event is “stable” because the same binmen repeat the same event over a long time. That is, the stability or fluidity of the social relationships between the human agents involved in the event matters for how the event unfolds and thus for causal explanation (Lawson 2005; Rutzou and Elder-Vass 2019). How QCA can account for fluidity and stability of social relationships in events (cases) is beyond the scope of this article. As argued, cases (events) may be seen as “assemblages” of social structures and institutions and of human agents and their social relationships. Cases (events) have internal dynamics (causation and formation) and external dynamics (they are always nested in broader, wider events, and enclose narrower, smaller events, that is, they have deeper ontological layers; Byrne 2005:105; Collier 1994:44-50). Consequently, to define a case is to, first, identify relevant events and, second, to delineate the case (event) in terms of relevant internal and external dynamics affecting the outcome.

Causal powers (of social structures and institutions) define a finite range of possible worlds (domain of the real). For example, it is possible for a police officer to fine a speeding member of the public but not a diplomat. Events that actually occur (domain of the actual) are a subset of the possible worlds, which makes QCA’s counterfactual analysis an obvious method for causal inference (Mahoney and Barrenechea 2019). Which of the possible events actually occur cannot be determined, but neither is it the result of random forces. Things “don’t just happen” in social reality; they happen because human agents intentionally exercise causal powers in order to achieve or avoid a particular outcome (Bhaskar 2015:90-97). (This neither denies unintentional outcomes nor claims that intentional agency is rational or voluntary.) Therefore, critical realist causal claims connect causes to outcomes in “normic” statements expressing ontological tendencies or possibilities. The presence of a cause makes it possible for human agents to achieve an outcome but does not determine that they will. Human agents may have reasons not to exercise causal powers and, when exercised, other causal powers may negate them, thus producing “inconsistent cases” that upset regularities but do not defeat normic causal claims. Normic causal statements express the belief that, given fallible epistemic knowledge of underlying causal powers, a particular outcome is in the realm of possible worlds (Archer et al. 2016). Researchers arrive at normic causal claims after exercising judgmental rationality (substantive interpretation). Contrary to causal mechanisms, causal powers explain why an outcome occurs, not how. Discussing the merits of a causal powers approach over a causal mechanisms approach is beyond the scope of this article (see Groff 2016). However, where mechanisms suggest a constant conjunction between cause and outcome, they confess to ontological determinism and RTC.

Contemporary critical realists object that social reality, as an open system, is far too contingent and heterogeneous for any such (ontological) regularities to exist. How a cause “produces” an outcome (the “mechanism”) may be largely idiosyncratic (Decoteau 2018; Gorski 2018; Groff 2016). For example, to exercise the causal power of the police force and the traffic law, a police officer must stop a speeding driver. Whether it takes one or several police officers to do so, whether they had to give chase or merely signal the driver to stop, whether the interaction with the driver is friendly or hostile, that is, how the causal power is exercised, is irrelevant for the fact that exercising it makes the outcome possible. Normic causal claims explain the causal power of social structures and institutions. They concern the potentiality of the cause rather than the actuality of the outcome. Critical realists generally agree that if exercised in closed systems, causal powers would always produce the outcome; however, closed systems do not exist in social reality. For critical realists, the only place to infer causality is the real world. 1 Consequently, methods must be designed to deal with the contingent and heterogeneous nature of social reality, which is literally a different world compared to the notion of independent variables in RTC (Decoteau 2018:100). Believing that social reality is contingent and heterogeneous gives rise to very different concerns regarding uncertainty than the belief in a unified empirical reality where each (configuration of) cause(s) has its own, analytically separable effect on the outcome. The next sections discuss uncertainty in each of the three domains of reality, and how QCA deals or may deal with them.

Uncertainty in the Domain of the Empirical

Uncertainty in the domain of the empirical is epistemic; it pertains to how scientists develop knowledge of events (domain of the actual). The first concern is to collect raw data; however, uncertainty issues concerning data collection are not specific to QCA and are outside the scope of this article. QCA researchers must observe the good practice rules of whatever data collection method they use. QCA uses calibrated data. Calibration is a semantic transformation that connects meaning to values (Schneider and Wagemann 2012: 32-35). Since meaning is about ontology, calibration may also be called an ontological transformation. Calibration may be seen as a two-step process: First, it answers what is X (ontology), second, it answers how do we know an X when we see one (epistemology)? The uncertainty surrounding the set membership value attributed to a case thus is a function of how well the set (the concept) is defined and the validity of the raw data. That is, uncertainty is about how accurately set membership values express the nature of cases. The QCA literature offers good discussions of how to define concepts and subsequently calibrate cases. Therefore, this topic will not be discussed here.

The ontology first approach to calibration in QCA means that concepts (sets) need to be defined unambiguously. The definition of sets captures the essence of what it means to be a full member of the set. Clear definition (meaning) reduces epistemic uncertainty because it will be obvious when a case qualifies as a member of the set. That is, calibration is not a kind of coding that converts raw data into set membership values. Instead, calibration assesses whether (or to what degree) cases meet the criteria that define a set and then puts a set membership value on the assessments. Set membership values thus express a specific semantical meaning (Ragin 2008: 71-74). Calibration reduces empirical uncertainty because a set membership value (a meaning) may cover a range of raw data values. Therefore, particularly small measurement errors are unlikely to result in wrong calibrations. Moreover, wrong calibrations will only affect the truth table analysis when cases end up on the wrong side of the crossover point (Maggetti and Levi-Faur 2013). However, clear definitions of sets and the crossover point in combination with knowledge of the cases will usually guard against that. For example, a person nicknamed “shorty” being calibrated as a tall person should raise a red flag. In sum, calibration reduces epistemic uncertainty about the nature of cases and makes the truth table analysis less sensitive to unsystematic measurement error.

Fuzzy sets are generally considered superior to crisp sets (Ragin 2008:141); however, the difference making logic of the truth table analysis condenses all fuzzy sets to crisp sets. Crisp sets are perfectly consistent with the notion of causality as a power that human agents must exercise (Decoteau 2018:107). From a causal powers perspective, the definition of the crossover point is critical. For example, everyone who has completed some form of formal education has membership in the set of educated people. However, we would not consider a person who only completed primary education an educated person. So the question is how much education must a person have completed to qualify as an educated person? That depends on how “educated” is defined. If it means having moderate cognitive skills and being able to apply them to moderately complex problems, upper-secondary education may mark the crossover point between educated and not-educated persons. (The crossover point then is between upper-secondary education and the education level below it.) The crossover point, therefore, is the ontological threshold where a case has enough of the characteristics of a set (epistemology) to ontologically qualify as an instance of the set. That is, while all persons who have completed a form of formal education are members of the set of educated persons (have a degree of membership in the set), only upper-secondary education graduates and higher qualify as instances of the set, that is, as educated persons. This is consistent with the notion of causal power because only persons of upper-secondary education and higher can apply moderate cognitive skills to moderately complex problems. That is, causality as a power is consistent with the difference making logic of the truth table analysis because power can only be exercised when it is ontologically present (i.e., set membership above 0.5, the ontological threshold). 
Sets may have fuzzy boundaries; causal powers have clearly defined thresholds and “QCA may be seen as a threshold methodology” (Goertz 2020:182).
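The role of the crossover point as an ontological threshold can be made concrete with a minimal sketch. The membership scale below is hypothetical and chosen only to mirror the education example; neither the level names nor the values are taken from a published calibration. The sketch shows that every level carries a degree of membership, while only levels above the crossover point count as instances of the set in the truth table analysis.

```python
# Hypothetical membership scale for the set of "educated people".
# The values are illustrative assumptions, not a published calibration.
EDUCATION_MEMBERSHIP = {
    "no formal education": 0.0,
    "primary": 0.1,
    "lower secondary": 0.3,
    "upper secondary": 0.6,
    "bachelor's degree": 0.8,
    "PhD": 1.0,
}

CROSSOVER = 0.5  # the ontological threshold discussed in the text


def is_instance(membership, crossover=CROSSOVER):
    """Return True when a case is more in than out of the set."""
    return membership > crossover


# Crisp (0/1) membership as it enters the truth table analysis.
crisp = {
    level: int(is_instance(value))
    for level, value in EDUCATION_MEMBERSHIP.items()
}

for level, value in EDUCATION_MEMBERSHIP.items():
    print(f"{level:22s} fuzzy={value:.1f} crisp={crisp[level]}")
```

In this sketch a primary-educated person and a person without formal education both receive a crisp value of 0, although their fuzzy memberships differ; the fuzzy value remains available for interpreting cases, while the crisp value decides whether the causal power is treated as present.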

The fact that crisp sets are sufficient to make a normic causal claim does not render fuzzy sets irrelevant. One of the drawbacks of crisp sets is that they conflate nonmembers of a set and cases that are more out of than in the set. However, a school dropout (set membership 0.0) is a qualitatively different case than a primary-educated person (set membership, e.g., 0.1). While it makes no difference in the truth table analysis, for causal inference, it is more helpful to go back to a 0.1 case than to a 0.0 case. Ontologically, fuzzy sets become problematic when they disconnect meanings from set membership values, which happens with continuous or fine-grained fuzzy sets. For example, a person with 0.78 membership in the set of educated people may have had a few months more education than a person with 0.71 membership in that set; however, they may both have a bachelor’s degree. When the empirical difference between 0.71 and 0.78 does not reflect an ontological difference, the empirical difference is irrelevant, and both cases should have the same set membership value. That is, the levels in a set membership scale (its “fine grainedness”) should not depend on the fine grainedness of the raw data but on the fine grainedness of the definition (semantical meaning) of the set (Goertz 2020:20). This is a more limited understanding of fuzzy sets than suggested by Ragin (2000: 157-58). Furthermore, while fuzzy sets more accurately calculate the consistency of set-relationships, the strength of this empirical regularity says nothing about its causal interpretability (see above). Put differently, calibrating fuzzy sets is about balancing empirical and analytical accuracy. Fine-grained scales may be empirically accurate, barring measurement error; however, they compromise analytical accuracy to the extent that they disconnect meanings from numbers. 
The result is an increase of epistemic uncertainty, namely, it is unclear to what extent the resulting calibration captures the nature of the cases.

The problem is not that fuzzy set membership levels in QCA have a degree of uncertainty but that fuzzy sets are linear while social science concepts are not. Social reality is complex and cases are heterogeneous (Gerrits and Pagliarin 2020), which means that cases “deviate” from the “ideal type” 1.0 case in nonlinear ways (Lakoff 2014). The difference between, for example, primary education (0.1 membership in the set of educated people) and medium vocational education (0.4) is unlikely to mean the same thing as the identical numerical distance between, for example, a bachelor’s degree (0.6) and a research master’s degree (0.9). QCA treats fuzzy sets as ratio scales, and this accounts for their assumed empirical accuracy. On this basis, fuzzy set relationships (e.g., their consistency) are calculated. However, what if the variation between the qualitative anchors is not continuous (ratio)? Then the empirical accuracy of fuzzy set-relationships in QCA is an illusion.

Instead, it is not the concepts that are fuzzy (in QCA, they are clearly defined) but the cases that are heterogeneous and, consequently, they have different causal powers. A person with a bachelor’s degree may only be different in degree from a person with a PhD in terms of their membership in the set of educated people; however, there still is a qualitative difference between them. Why else would some jobs require a PhD? That is, a bachelor’s degree has different causal powers than a PhD. Different set membership levels do not so much describe degrees of membership in a set as different, although closely related, kinds of cases with different causal powers. Instead of a continuous (ratio) scale from fully out to fully in the set of educated people, “educated” is a nominal scale of weakly, moderately, very, etc., educated people. Each level describes cases with different causal powers, and going from one level to the next is to cross a(n ontological) threshold. That is, causal powers come in thresholds (Decoteau 2018:107; Goertz 2020: 160, 180-82; Lakoff 2014: 12-13) that are nominally ordered. Each threshold connects to a set membership value (giving values a specific meaning); however, the heterogeneity of cases that these thresholds describe makes a linear ordering highly problematic.

A continuous interpretation of fuzzy sets in QCA uses the very variable-based logic that the method rejects (Ragin 2008: 6-10). This is obvious from the direct method of calibration (Ragin 2008: 87-88), which imposes a linear conversion on heterogeneous cases (Lakoff 2014:13). Additionally, the direct method imports all measurement error from the raw data into the calibrated data, which makes this method both ontologically and epistemologically inaccurate. That is, when fuzzy sets are used to capture empirical variation rather than to express meaning (ontology), they actually weaken QCA’s difference making logic because not all empirical differences are equally relevant. Irrelevant variation in QCA’s ontological fuzzy sets exists not only beyond the qualitative anchors but also between them. Moreover, the claimed precision of fuzzy set relationships is challenged by the nonlinear nature of differences (between cases) in social reality.
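The contrast between calibrating on raw data and calibrating on meaning can be sketched as follows. The first function is a simplified log-odds transformation in the spirit of the direct method; the three anchors (6, 12, and 18 years of education) and the scaling to log-odds of plus or minus 3 are assumptions of this sketch. The second calibration assigns one membership value per qualification level; these values are likewise hypothetical.

```python
import math


def direct_calibration(raw, full_out, crossover, full_in):
    """Simplified log-odds calibration on three qualitative anchors.

    Deviations from the crossover are scaled so that the full-membership
    anchor maps to log-odds +3 and the full-nonmembership anchor to -3.
    This is an illustrative sketch, not a reference implementation.
    """
    if raw >= crossover:
        log_odds = (raw - crossover) * (3.0 / (full_in - crossover))
    else:
        log_odds = (raw - crossover) * (-3.0 / (full_out - crossover))
    return 1.0 / (1.0 + math.exp(-log_odds))


# Hypothetical meaning-based scale: one value per qualification level.
LEVEL_MEMBERSHIP = {
    "primary": 0.1,
    "upper secondary": 0.6,
    "bachelor's degree": 0.8,
}

# Two hypothetical persons with the same degree but slightly
# different years of education.
people = [
    {"name": "P1", "years": 15.0, "level": "bachelor's degree"},
    {"name": "P2", "years": 15.5, "level": "bachelor's degree"},
]

direct = [direct_calibration(p["years"], 6, 12, 18) for p in people]
by_meaning = [LEVEL_MEMBERSHIP[p["level"]] for p in people]

for p, d, m in zip(people, direct, by_meaning):
    print(f"{p['name']}: direct={d:.2f} meaning-based={m:.1f}")
```

Under these assumptions the log-odds calibration assigns the two persons different membership values although both hold a bachelor’s degree, whereas the meaning-based scale assigns them the same value. Any measurement error in the years of education would also pass directly into the first set of values.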

In sum, contrary to the unified empirical reality of RTC, uncertainty in the domain of the empirical is not primarily about measurement uncertainty but about the correct calibration of cases (Olson 2014). Better measurement alone does not address this epistemic uncertainty; it also requires clear definitions of sets and ontological thresholds (most importantly, the crossover point). Contrary to the broadly shared understanding in the QCA literature, fuzzy sets are not necessarily better at addressing epistemic uncertainty than crisp sets. They increase epistemic uncertainty when set membership levels are not unambiguously connected to meaning.

Uncertainty in the Domain of the Actual

Addressing uncertainty in the domain of the actual is about case selection. This is well understood in case-based methodology; case selection is about constructing a homogeneous population of cases. In principle, cases differ only on the putative causes under investigation and the outcome of interest (Ragin 2000: 50-52). Put in terms of causal power: given that social reality is an open system wherein a multitude of (unknown) causal powers reside, homogenizing cases ensures the same causal powers are “at work” in all cases. The social structures and institutions affecting each case must be the same because this is where causal powers come from. Homogenizing cases thus allows researchers to investigate whether (not) exercising the causal powers under investigation is responsible for the (non-)occurrence of the outcome (Byrne 2005). The conditions on which cases are homogenized set the scope for normic causal claims; they are valid for (may be generalized to) cases that conform to the scope conditions. Scope conditions are an effective way of dealing with the ontological depth of events (cases; Goertz 2017: 66-68). It helps to think of this as a multilevel problem, although critical realists would argue it is about different strata in social reality and, ultimately, that makes a huge difference. Take the police-officer-stops-speeding-driver event. Ontologically, the event can be defined as a routine traffic stop initiated by a single police officer and delineated in time and space as a particular time of day and a particular stretch of road. This clearly defines what counts as a case. The intentionality behind the agency of the police officer is to improve road safety. This outcome could be defined as achieving a change in driving style of the speeding driver (individual level or stratum of social reality) or as reducing the number of speeding drivers on that stretch of road at that time of day (societal level or stratum). 
In the first case (individual stratum), a researcher may compare driver reactions to being fined. In the second case (societal stratum), a researcher would compare similar stretches of road at the same time of day. Relevant conditions for the individual stratum may be driver personality, driver haste, and police officer conduct. These conditions develop an explanation in the individual stratum. Relevant conditions for the societal stratum may be road layout, traffic density, and intensity of police surveillance. These conditions develop an explanation in the societal stratum. Variable-based researchers would argue that these levels of analysis interact and suggest a multilevel analysis. Critical realists argue that explanations are unique to their stratum because different causal powers (social structures and institutions) are “at work” in different strata (e.g., social hierarchy [structure] and behavioral rules [institution] in the individual stratum and the police force [structure] and the traffic law [institution] in the societal stratum), and “each stratum is causally autonomous” (Pula 2020:3). Therefore, the individual stratum explanation may refine but does not affect the societal stratum explanation. Case selection and scope conditions are thus critical to dealing with ontological depth (Collier 1994: 46-48; Goertz and Mahoney 2012: 206-10).

Finally, case selection defines the population to which the “true causal structure” belongs. RTC methodologists have argued that QCA’s case sensitivity causes false positives and negatives in the solution, that is, the solution does not reflect the “true causal structure” (Braumoeller 2015; Rohlfing 2018). First of all, critical realists would not assume a causal structure to be present in the data because, rejecting ontological determinism, there is no reason why causes should be invariantly connected to outcomes. Second, suggesting social reality as an open system, critical realists believe that researchers can only know causal powers within a clearly defined and delineated context (see above). In QCA’s set theoretical language, case selection defines a universal set, and researchers will have good reasons to include or exclude cases from their universe (Schneider and Wagemann 2012:48). Ontologically defined, the universe includes not only the cases under observation but all cases in the domain of the real that meet the criteria. Thus, while variable-based researchers think of research populations in a top-down way, namely, as a sample from a given population, critical realists think of it as constructed in a bottom-up way. Therefore, for variable-based researchers, the “true causal structure” belongs to the given population and the sample must replicate or closely approximate it. For critical realists, the “causal structure” belongs to the ontologically defined universe (Ragin 2000: 61-62). Logical remainder rows in the truth table counterfactually account for those cases in the domain of the real that are not included in the “sample.” Consequently, provided the universe has been properly defined, the solution (causal structure) that the truth table analysis identifies is always the correct solution. 
Including or excluding cases changes the universe and, therefore, the “true causal structure.” Moreover, normic causal claims are generalized analytically, not empirically, because they do not assume a unified empirical reality.

In sum, carefully defining and delineating events and then carefully selecting cases reduces uncertainty because it identifies a clear scope or context within which causal powers may “produce” the outcome. Equifinality suggests that in different contexts, the same causal powers may “produce” different outcomes. Put differently, case selection limits the reach of normic causal claims. Contrary to regularity-based causal claims having a ring of universality (the “true causal structure”), normic causal claims are contextual (Byrne 2005:97).

Uncertainty in the Domain of the Real

The domain of the real is the realm of possible worlds. All events that are possible given the nature of the causal powers of social structures and institutions reside in the domain of the real. The domain of the real cannot be observed directly. Consequently, uncertainty in the domain of the real is exclusively ontological. In fact, it is possibilistic, namely, not knowing whether it is possible for a putative cause to explain the outcome (Bhaskar [1975] 2008:78). As a case-based method, QCA detects plausible causes of known outcomes (Goertz and Mahoney 2012: 41-42). It does so by substantively interpreting Boolean algebraic configurations that are mathematically sufficient for the outcome. Substantive interpretation follows a retroductive logic, a dialogue between empirical, contextual, and theoretical knowledge. It answers the question: Why does the presence of the putative cause (exercising its causal power) make it possible for human agents to achieve the outcome (Ragin [1987] 2014:16)? However, because cases are heterogeneous, the domain of the empirical only partially reveals causal powers (domain of the real); researchers cannot have ontological certainty that the putative cause makes the outcome possible.

One can think of QCA’s truth table analysis as a tool to “model” possibilistic uncertainty (Rutten 2020a:7). Ontologically, a truth table row indicates if the outcome is possible given the corresponding configuration. Truth table rows thus express an ontological tendency (normic claim). csQCA does this more conscientiously than fsQCA because csQCA ontologically resolves contradictory truth table rows. Researchers use substantive knowledge to assess whether the outcome is possible for a contradictory row. fsQCA resolves contradictions empirically by considering the consistency of set relationships (Schneider and Wagemann 2012: 120-21, 127). Thus, fsQCA considers empirical trends in a population of cases, while csQCA deals with the cases head-on, that is, fsQCA uses a regularity argument to resolve an ontological (possibilistic) uncertainty. Consequently, it risks mistaking empirical trends for ontological tendencies (see below). Using populated rows only, the complex solution has a very low possibilistic uncertainty—assuming that the uncertainties in the domains of the empirical and the actual are adequately addressed. For populated truth table rows, researchers know that the outcome is (not) possible; they have observed it. The intermediate solution makes assumptions on easy counterfactuals only, which marginally increases possibilistic uncertainty because substantive knowledge suggests that the outcome is possible, that is, the counterfactual outcome exists in the domain of the real. Making assumptions on difficult counterfactuals also, the parsimonious solution has a (very) high possibilistic uncertainty. For these logical remainder rows, substantive knowledge suggests that the outcome is not possible, that is, the counterfactual outcome does not exist in the domain of the real. 
The possibilistic approach to ontological uncertainty thus corroborates Duşa’s (2019) argument (see above) that substantive sufficiency is not an exclusively logical (Boolean) proposition. Of course, the intermediate and complex solutions are likely to contain redundant conditions and redundant conditions can never cause the outcome (causal effects logic; Baumgartner 2015:844). However, conjunctions containing a redundant condition contain everything to make the outcome possible. In fact, the outcome did occur (Byrne 2009:108). The causal explanation is unnecessarily limited to a subset of cases that have the outcome, that is, redundant conditions curtail normic causal claims. However, the outcome is perfectly possible under those conditions (Rohlfing 2018:73). In fact, following critical realism, all causal explanation is likely to contain redundant elements because, owing to the contingent and heterogeneous nature of social reality, researchers cannot know, only infer, causal power (Bhaskar [1975] 2008: 123-25; Collier 1994: 62-64). Furthermore, the notion of plausibility underlying substantive interpretation allows deviating from the intermediate solution. The intermediate solution suggested by the truth table analysis balances consistency and coverage; however, usually multiple solutions exist between the complex and parsimonious solutions (Ragin 2008: 164-66). Substantively interpreting a plausible explanation allows tweaking the algorithmically derived intermediate solution into a “choice solution.” That is, the choice solution reported in QCA’s solution table formalizes in Boolean expressions the most plausible explanation of the outcome (Rutten 2020a:4). This underlines that QCA is an ontological method where plausibility is more important than empirical robustness (Ragin 2008: 172-75).
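The distinction between populated rows, contradictory rows, and logical remainders, on which the argument above rests, can be illustrated with a minimal sketch. The five crisp-set cases, the two conditions A and B, and the row labels are hypothetical. The sketch only sorts rows by what has been observed; deciding whether the outcome is possible for a contradictory or remainder row remains a matter of substantive interpretation and is not something the code can do.

```python
from collections import defaultdict
from itertools import product

# Hypothetical crisp-set data: (case label, (A, B), outcome).
cases = [
    ("case1", (1, 1), 1),
    ("case2", (1, 1), 1),
    ("case3", (1, 0), 0),
    ("case4", (0, 1), 1),
    ("case5", (0, 1), 0),
]

# Group observed outcomes by configuration of conditions.
observed = defaultdict(list)
for _, configuration, outcome in cases:
    observed[configuration].append(outcome)


def classify(outcomes):
    """Label a truth table row by what has been observed for it."""
    if not outcomes:
        return "logical remainder"      # no cases: counterfactual row
    if all(o == 1 for o in outcomes):
        return "outcome observed"       # outcome known to be possible
    if all(o == 0 for o in outcomes):
        return "outcome not observed"
    return "contradictory"              # needs substantive resolution


# Enumerate all 2^k configurations, populated or not.
truth_table = {
    configuration: classify(observed.get(configuration, []))
    for configuration in product((1, 0), repeat=2)
}

for configuration, label in truth_table.items():
    print(configuration, label)
```

In this sketch, a solution based only on the rows labeled "outcome observed" corresponds to the low possibilistic uncertainty of the complex solution, while every assumption about the row labeled "logical remainder" adds uncertainty that only substantive knowledge can justify.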

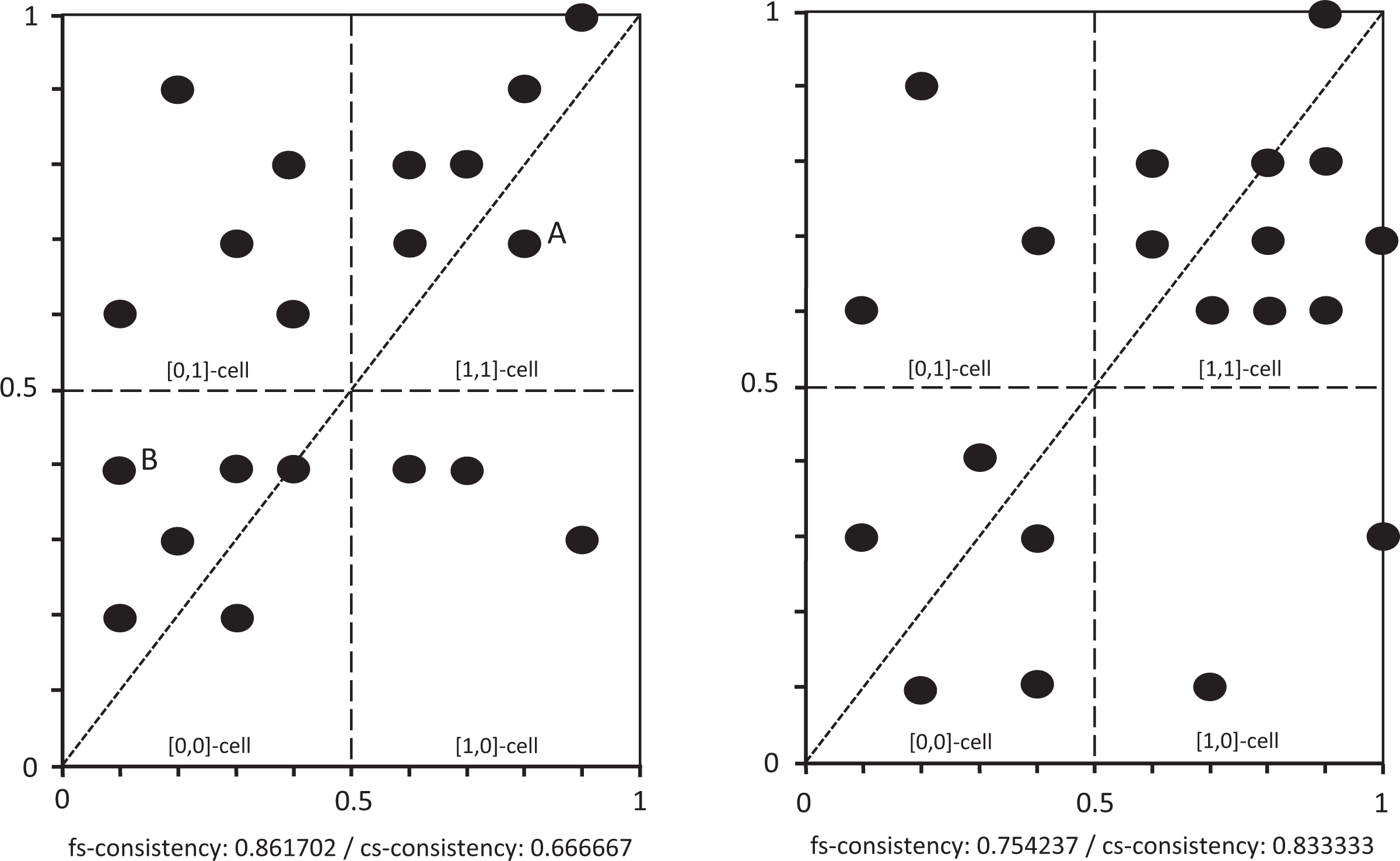

The difference in how fsQCA and csQCA identify underlying causal powers is easily demonstrated by looking at cases A and B in the left pane of Figure 2. In csQCA, case A is a consistent case; [1,1] cases (condition and outcome present) suggest the cause has the power to “produce” the outcome. However, in fsQCA, case A reduces the consistency of the set relationship because X > Y. In csQCA, case B is causally irrelevant; [0,0] cases say nothing about why the putative cause may be connected to the outcome (Schneider and Wagemann 2012:308). On the contrary, in fsQCA, case B contributes to the consistency of the set relationship because X ≤ Y. By implication, underlying causal powers are identified as tendencies of cases in csQCA but as trends on the level of a population of cases in fsQCA. What matters first in csQCA is whether individual cases have the causal power to “produce” the outcome. Consistency in csQCA calculates the proportion of cases where the causal power was effective and, hence, whether this causal power reflects an ontological tendency in the universe. Instead, fsQCA calculates consistency as an empirical trend across a population of cases. This trend abstracts from individual cases (Ragin 2008:51). Moreover, by including [0,0] cases, fsQCA departs from the Boolean algebraic difference making logic that causes only matter when ontologically present (>0.5). Instead, fsQCA suggests a regularity logic where a little bit of the cause may “produce” a little bit of the outcome (case B). Thus, the nature of causal power, or at least, how it manifests itself, is understood differently in fsQCA and csQCA. It is easy to see how this can lead to different conclusions. The left pane of Figure 2 shows a population of (simulated) cases where fsQCA identifies consistency, suggesting the putative cause has the power to “produce” the outcome. However, csQCA suggests no such causal power. In the right pane of Figure 2, the opposite is the case.

Figure 2. Differences in fuzzy-set and crisp-set consistency.
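The divergence between the two consistency measures can be reproduced with a small numerical sketch. The fuzzy-set measure uses the standard sufficiency formula, the sum of min(X, Y) divided by the sum of X; the crisp-set measure is taken here as the share of cases above the crossover on X that are also above the crossover on Y. The two small data sets are invented for illustration and are not the simulated cases shown in Figure 2.

```python
def fs_consistency(x, y):
    """Fuzzy-set sufficiency consistency: sum(min(x, y)) / sum(x)."""
    return sum(min(a, b) for a, b in zip(x, y)) / sum(x)


def cs_consistency(x, y, crossover=0.5):
    """Share of cases with the condition present (x > crossover)
    in which the outcome is present as well (y > crossover)."""
    present = [(a, b) for a, b in zip(x, y) if a > crossover]
    if not present:
        return None  # no case exercises the putative causal power
    return sum(1 for _, b in present if b > crossover) / len(present)


# Hypothetical pattern 1: many [0,0] cases with X <= Y, but most cases
# with the condition present lack the outcome.
x_left = [0.1, 0.2, 0.2, 0.3, 0.4, 0.6, 0.7, 0.6]
y_left = [0.3, 0.4, 0.3, 0.4, 0.45, 0.4, 0.8, 0.45]

# Hypothetical pattern 2: all cases are [1,1] cases, but X > Y throughout.
x_right = [0.9, 0.95, 1.0, 0.9]
y_right = [0.6, 0.55, 0.6, 0.6]

for label, x, y in [("pattern 1", x_left, y_left),
                    ("pattern 2", x_right, y_right)]:
    print(label,
          "fs-consistency:", round(fs_consistency(x, y), 2),
          "cs-consistency:", round(cs_consistency(x, y), 2))
```

In the first pattern the fuzzy-set consistency is high while the crisp-set consistency is low, because the [0,0] cases carry the population-level trend. In the second pattern the relation is reversed: every case with the condition present shows the outcome, yet each of them lowers the fuzzy-set consistency because X exceeds Y.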

The question is how researchers can know that an empirical trend (regularity) in fsQCA evidences an ontological tendency. First of all, this requires careful case selection and calibration (addressing uncertainty in the domains of the actual and the empirical) because it ensures that empirical trends have meaning. Second, the empirical trend must be reconnected to cases. fsQCA struggles with reconnecting to cases, as is evident from the distinction between cases inconsistent in kind and inconsistent in degree. fsQCA researchers usually ignore cases inconsistent in degree because they are in the causally relevant [1,1] cell. However, this practice conflates csQCA and fsQCA logic. There is no such thing as “inconsistent in degree”; all cases with X > Y weaken the population-level empirical trend and violate the regularity logic underlying it (Rutten 2020a:15). To reconnect to the cases, fsQCA researchers may calculate cs-consistency as an additional metric. Calculating cs-consistency suggests whether the cause “produces” the outcome in actual cases. Consequently, whether an fsQCA empirical trend may be interpreted as an ontological tendency depends on three metrics: fs-consistency, the PRI value, and cs-consistency. The fs-consistency evidences that an empirical trend validly describes the data. The PRI value guards against simultaneous subset relations, where X is a consistent subset of both Y and not-Y. This is empirically possible in fsQCA but ontologically nonsensical. The cs-consistency suggests that the cross-case trend is an ontological tendency, that the assumed causal power “produces” the outcome in real cases (events).
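How the PRI value flags a simultaneous subset relation can be shown with a short sketch. It uses the commonly reported formula for the proportional reduction in inconsistency, PRI = (Σ min(X, Y) − Σ min(X, Y, 1 − Y)) / (Σ X − Σ min(X, Y, 1 − Y)). The four cases are hypothetical and were constructed so that X appears as a consistent subset of both Y and not-Y.

```python
def fs_consistency(x, y):
    """Fuzzy-set sufficiency consistency: sum(min(x, y)) / sum(x)."""
    return sum(min(a, b) for a, b in zip(x, y)) / sum(x)


def pri(x, y):
    """Proportional reduction in inconsistency for X as a subset of Y."""
    xy = sum(min(a, b) for a, b in zip(x, y))
    overlap = sum(min(a, b, 1 - b) for a, b in zip(x, y))
    denominator = sum(x) - overlap
    if denominator == 0:
        return None  # PRI is undefined for this data
    return (xy - overlap) / denominator


# Hypothetical cases whose outcome memberships hover around 0.5.
x = [0.3, 0.4, 0.6, 0.5]
y = [0.5, 0.6, 0.55, 0.45]
not_y = [1 - value for value in y]

consistency_y = fs_consistency(x, y)
consistency_not_y = fs_consistency(x, not_y)
pri_y = pri(x, y)

print("consistency X -> Y:    ", round(consistency_y, 2))
print("consistency X -> not-Y:", round(consistency_not_y, 2))
print("PRI for X -> Y:        ", round(pri_y, 2))
```

In this sketch both consistency values exceed 0.9, which taken alone would suggest that X is sufficient for the outcome and for its negation, while the PRI value is much lower and thereby signals the problem. A cs-consistency check on the same data would then be the third step the text describes.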

In sum, uncertainty in the domain of the real is possibilistic. It affects the certainty of normic causal claims: did substantive interpretation identify causes that plausibly explain the outcome, that is, is it possible for human agents to achieve the outcome when exercising the power of the putative cause? Dealing with uncertainty in a possibilistic way legitimizes QCA’s good practice of using the intermediate solution for causal interpretation; however, it problematizes the validity of interpreting fsQCA empirical regularities as ontological (normic) tendencies.

Conclusion

This article used the notion of uncertainty for a more fundamental discussion on the nature of causality in QCA. The article suggests that Ragin’s commitment to ontological realism connects QCA to critical realist philosophy of science. Ragin’s interpretive approach applies critical realism’s three principles of causal explanation: ontological realism, epistemic relativism, and judgmental rationality. The article contrasts Ragin’s “interpretivist” approach against the widespread “empiricist” practice in QCA and criticizes the latter’s emphasis on the robustness of set relationships for causal inference. This is problematic because of QCA’s deterministic epistemology. Moreover, the empiricist approach uses regularity arguments that are incommensurable with ontological realism. Furthermore, from a critical realist perspective, empiricist QCA is flawed when it mistakes conditions for causes. Instead, conditions describe cases and their causal powers, and given the heterogeneity of social reality, there is no reason why a causal power would invariantly “produce” the same outcome. Consequently, the absence of empirical regularities does not refute causal claims. In a powers approach, causality is about the potentiality of the cause, not about the actuality of the outcome. While QCA’s complexity approach to causality is compatible with RTC, critical realism clarifies that the robustness of empirical regularities is not particularly relevant and that the nature of uncertainty surrounding QCA’s causal claims is interpretive rather than empirical. Following critical realism, causal claims in QCA are neither probabilistic nor deterministic but normic and the uncertainty surrounding them is possibilistic. The in-depth discussion of what uncertainty means in each of critical realism’s three domains of reality and how QCA researchers may deal with them, is a major contribution of this article. 
The connection between QCA and critical realism has been made before; however, a systematic discussion of how critical realism may inform QCA practice was thus far missing. Suggesting the truth table as a tool to “model” possibilistic uncertainty legitimizes the use of the intermediate solution in QCA. Together with suggesting causality as a power that is real, regardless of whether it is exercised or effective when exercised, this is an important contribution to the ongoing debate on the robustness of QCA’s causal claims. It renders RTC criticism against QCA’s case sensitivity and lack of empirical robustness irrelevant. Such criticism hits the (empirical) target but misses the (ontological) point.

Fundamentally, ontological realism aims for a stronger connection between social reality, concepts, and measurements. Social science is not about regularities but about “people doing stuff,” that is, about events (Byrne 2005; Decoteau 2018). Decomposing reality into continuous variables may be highly accurate empirically; however, correlations between them say little about events. Events as cases are where concepts and empirics meet. Cases as events address the relationship between “structure” (social structures and institutions) and “agency” (human agency). They allow researchers to deal with the multidimensionality and multilevelness of social reality as ontological depth. The beginning and end points of events account for time and, hence, emergence. Moreover, ontologically, events either occur or do not occur; one cannot be fined in degree. Ontological realism and the notion of causal power thus legitimize the dichotomization of the truth table analysis. One can only exercise a power if it is ontologically present. Importantly, ontological thresholds are not about “throwing away” variation but about considering the full richness of the data to assess whether thresholds are reached. Moreover, ontological thresholds can be raised or lowered, for example, to see whether generous or moderate speeding is sufficient for being fined.
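
The idea of raising or lowering an ontological threshold can be illustrated with a minimal sketch. The case data, the function names, and the two thresholds (5 and 10 km/h over the limit) are hypothetical and are not taken from the article. The check used is the plain crisp-set subset test: every case that exhibits the condition also exhibits the outcome.

```python
# Hypothetical cases: km/h over the speed limit, and whether a fine was issued.
cases = [
    {"over_limit": 2, "fined": False},
    {"over_limit": 8, "fined": False},
    {"over_limit": 12, "fined": True},
    {"over_limit": 25, "fined": True},
    {"over_limit": 40, "fined": True},
]

def dichotomize(cases, threshold):
    """Return crisp-set membership in 'speeding' (True/False) for each case."""
    return [c["over_limit"] >= threshold for c in cases]

def is_sufficient(condition, outcome):
    """Crisp subset test: every case with the condition also shows the outcome."""
    return all(y for x, y in zip(condition, outcome) if x)

outcome = [c["fined"] for c in cases]

# Lower threshold: "moderate" speeding already counts as speeding.
moderate = is_sufficient(dichotomize(cases, 5), outcome)
# Higher threshold: only "generous" speeding counts as speeding.
generous = is_sufficient(dichotomize(cases, 10), outcome)

print(moderate, generous)  # False True
```

In these invented data, moderate speeding is not consistently followed by a fine, whereas generous speeding is. In the article's terms, such a result remains an empirical description; whether the condition has the causal power to bring about the outcome is a matter of substantive interpretation.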

While critical realism clarifies many issues concerning causality and uncertainty and thus explains why a mechanistic approach to QCA is simply wrong, it also raises two important issues. First, suggesting causality as a power that may only be exercised when present opens a debate between fsQCA and csQCA, a debate that the “QCA community” tries hard to avoid. However, this article argues that inferring causal powers directly from the cases (csQCA) is different from inferring them from cross-case regularities (fsQCA). Reconnecting fsQCA to cases may resolve the matter empirically; however, it remains difficult to understand how an ontologically nonexisting cause (X < 0.5) may contribute to an ontologically nonexisting outcome (Y < 0.5). That is, in spite of its determination not to distinguish between fsQCA and csQCA, the “QCA community” must urgently debate the meaning and relevance of [0,0] cases. Second, in recent years, QCA has witnessed an “empiricist turn,” one that develops robustness metrics to inform causal interpretation. This trend stands in contrast to the critical realist approach of this article. Because “empiricists” in QCA can plausibly use regularity arguments to talk about the validity of complex causal claims, the empiricist turn opens a rift between “empiricists” (regularity theorists) and “interpretivists” (ontological realists) in QCA. Importantly, the nonlinearity of differences between cases challenges the empiricist assumption of the empirical accuracy of fuzzy sets. The difference between “empiricism” and “interpretivism” makes it very difficult for QCA to mature into a well-defined research approach and confuses (new) practitioners, critics, and reviewers as to what QCA is about. Perhaps, to avoid confusion, QCA researchers should explicitly declare their commitment to “empiricism” or “interpretivism” in their papers.
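
Why [0,0] cases matter can be made concrete with a minimal sketch. It assumes the standard fuzzy-set consistency measure for sufficiency, sum(min(x, y)) / sum(x); the membership scores and variable names below are hypothetical and are not taken from the article.

```python
def consistency(x, y):
    """Fuzzy-set consistency of 'X is sufficient for Y': sum(min(x, y)) / sum(x)."""
    return sum(min(a, b) for a, b in zip(x, y)) / sum(x)

# Hypothetical fuzzy membership scores for five cases.
x = [0.9, 0.8, 0.7, 0.3, 0.2]  # membership in the condition
y = [0.6, 0.9, 0.5, 0.4, 0.3]  # membership in the outcome

pairs = list(zip(x, y))

# [0,0] cases: below 0.5 on both the condition and the outcome.
zero_zero = [(a, b) for a, b in pairs if a < 0.5 and b < 0.5]

# Cases in which the condition is (crisply) present.
present = [(a, b) for a, b in pairs if a >= 0.5]

all_cases = consistency(x, y)
present_only = consistency([a for a, _ in present], [b for _, b in present])

print(len(zero_zero), round(all_cases, 3), round(present_only, 3))
```

In these invented data, including the two [0,0] cases yields a higher consistency score than restricting the calculation to cases in which the condition is present. Cases in which neither the cause nor the outcome exists on a crisp reading thus count as evidence for the set relationship, which is the interpretive puzzle raised above.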

Some will consider the critical realist approach to QCA an important step forward because it clarifies what causality and uncertainty mean and informs good practice. Others will reject it because they hold on to a unified empirical reality and corresponding regularity notions of causality and uncertainty or because they favor the empirical accuracy of continuous fuzzy sets. That is a good thing, though, because it clarifies what opponents are debating and why they disagree. Moreover, disagreement need not stop opponents from appreciating that critical realism supports a coherent and compelling way of doing QCA.

Footnotes

Acknowledgment

This would have been a substantially lesser work but for the invaluable comments of three anonymous reviewers.

Author's Note

Roel Rutten is now affiliated with European Regional Affairs Consultants (ERAC), ’s-Hertogenbosch, the Netherlands, and Newcastle Business School, Northumbria University, Newcastle, UK.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.