Abstract

This article describes the development and evaluation of an e-exercise application (eISTAT) created for an introductory statistics course at the Turku School of Economics, Finland. Automatically generated exercises with instant feedback were programmed by the course instructor within an existing learning environment (ViLLE). Two survey datasets (

Keywords

Introduction

Universities worldwide promote learning that is independent of time and place, supported by the widespread availability of devices enabling both students and instructors to better leverage the possibilities of digitalisation. Technology encourages professionals, educators, and learners to reflect on their core beliefs in order to utilise it for the redesign or re-engineering of education (Kumar Basak et al., 2018). Digitalisation has profoundly transformed methods of interaction.

This article describes the development and evaluation of an e-exercise application for a university-level statistics course. By the term “e-exercise”, we refer to “electronic exercises”, which, in our case, are completed online. The course, an introduction to statistics, combined face-to-face and online teaching elements and falls within the field of e-learning. There are multiple definitions of e-learning; according to one, e-learning refers to the use of computer network technology, primarily over or through the internet, to deliver information and instructions to individuals (Sambrook, 2003; Wang et al., 2010).

Blended and hybrid teaching models are forms of e-learning. “Blended” is defined as the combination of two modes of instruction: face-to-face and online learning (Kumar Basak et al., 2018). Typically, it involves attending classes in person while also completing coursework online (Hermita et al., 2024). The term “hybrid” refers to classroom teaching being replaced, wholly or partially, by technology-enhanced instruction or activities conducted outside the classroom (Linder, 2017; Saichaie, 2020). For example, students have the option to attend classes either in person or online, depending on their preferences and circumstances. Moreover, the online and in-person components of the hybrid learning model are designed to be interchangeable, allowing students to switch between them effortlessly (Singh et al., 2021). According to the literature, the hybrid environment removes barriers between face-to-face and virtual instruction, enabling not only the application of personalised learning strategies but also a significant expansion of their scope and effectiveness (Engel Rocamora & Coll Salvador, 2022). According to Linder (2017), hybrid pedagogy refers to a method of teaching that utilises technology to create a variety of learning environments for students. Instructors who employ hybrid pedagogies intentionally use technology not only to enhance student learning but also to accommodate a wide range of learning preferences. In addition, different learning environments address practical challenges such as geographical distance (Hall & Villareal, 2015).

The teaching format of the course combined multiple models. Approximately 30% of the students participated exclusively online, primarily due to the distance between the two university campuses. The remaining students attended sessions either face-to-face or online, depending on their preferences. In addition, the course included a series of exercises that were submitted and assessed online. Given the distribution of students and the course design, the teaching model can be described as “mostly hybrid,” with elements of blended learning.

The substantive setting of this article is an Introduction to Statistics course at a business school. Studies have shown that introductory statistics is arguably one of the most critical quantitative courses in a non-STEM (Science, Technology, Engineering, and Mathematics) student's university experience, due to the diverse needs of society (Zieffler et al., 2018, p. 50). Statistical literacy encompasses understanding and using the basic language and methodology of statistics: knowing what fundamental statistical terms mean, understanding the use of statistical symbols, and being able to recognise and interpret different representations of data. Statistical literacy is an important component for understanding the economy, government decision-making, health issues, and much more (Bateiha et al., 2020; Garfield & Ben-Zvi, 2007; Ramirez et al., 2012; Rumsey, 2002; Sabbag et al., 2025).

The purpose of the study is to describe students’ experiences with an e-exercise application and to analyse the relationship between the use of the application and course performance. To provide additional insights into the evaluation and development of such an application, the experiences of instructors and developers are also discussed. The structure of the article is as follows: first, we discuss the critical success factors of an e-exercise application; second, we describe the e-exercise system, hereafter referred to as eISTAT; third, we outline the research questions and hypotheses for the application evaluation. The analyses cover students’ experiences of using the application and the association between application usage and exam grades, based on survey and learning data. Developer and instructor experiences are also discussed. Finally, a concept for a future e-exercise application is proposed.

Success Factors of e-Exercises Development

Successful development of e-exercises requires attention to various critical factors that influence the overall efficacy, engagement, and sustainability of the e-learning process. Min and Yu (2023) reviewed critical success factors in blended learning. Both blended and hybrid teaching involve integrating in-person and digital learning environments, which is a central theme of their review. The review groups success factors into six categories: instructor, learner, course, design, technology, and environment. Ozkan and Koseler (2009) proposed a conceptual e-learning evaluation framework based on six dimensions: system quality, service quality, content quality, learner perspective, instructor attitudes, and supportive issues. Volery and Lord (2000) suggested four key success factor groups for online delivery: teaching effectiveness, technology, student characteristics, and instructor characteristics. Drawing on these classifications, we present a general framework for the development and evaluation of a statistics e-exercise application.

Instructor training and competence are foundational to the development of e-exercises, especially when the instructor is responsible for the development process. The quality of instructors—their knowledge and ability to create engaging and interactive learning environments—is a vital component of the e-learning process. Instructors who possess diverse competencies in online teaching are more likely to design effective and engaging e-exercises (Felix et al., 2023). For instructors, substantive knowledge and pedagogical skills, alongside course management abilities and familiarity with computer systems and technology, are identified as critical (Ozkan & Koseler, 2009). In contemporary statistical education, the instructor's role has evolved significantly, underscored by the essential need to assess and enhance the suitability of statistical software for educational contexts. Biehler (1997) emphasises instructors’ responsibility to critically evaluate the appropriateness of statistical software for learning and to engage in improving software quality. In this respect, instructors’ skills in adapting and modifying software and materials to meet the needs of their students and educational goals are especially important in introductory statistics courses.

Student engagement has been shown to contribute to higher overall satisfaction and performance in online learning environments. Studies have also indicated a moderately high level of acceptance of online learning among university students together with a positive relationship between interaction and online learning satisfaction (She et al., 2021; Sim et al., 2021). According to Min and Yu (2023), critical success factors related to learners include learning pace, commitment, attitude, motivation, cognition, computer self-efficacy, and prior experience. Prior knowledge constitutes the foundation upon which new learning is constructed (Delucchi, 2019).

The influence of student-related factors on the development of e-exercise applications is significant. Students may provide feedback regarding the functionality and usability of these applications, particularly highlighting shortcomings that could hinder their learning. This feedback loop between students and developers is essential for creating effective educational tools. Regularly soliciting and responding to student feedback enables educators to make necessary adjustments to enhance learning activities and clarify course content. This iterative process contributes to the creation of more effective and responsive e-learning environments (Alkhafaji & Sriram, 2012; Zheng & Yang, 2023).

Course design and structure: The critical pedagogical features of a course include design-related factors such as clear learning objectives, teaching methods, and learning strategies (Min & Yu, 2023). Well-structured courses that incorporate interactive and multimedia components enhance student engagement and knowledge retention. Research suggests that integrating diverse content formats can lead to more favourable learning experiences. According to Olufunke et al. (2022), the implementation of multimedia presentations, such as videos, audio, and images, has been found to be an appealing approach for students’ learning and for enhancing their cognitive performance. By offering customised content, adaptive e-learning environments improve the quality of online learning. Such customised environments should be flexible and responsive to students’ needs and learning styles (Franzoni & Assar, 2009; Kolekar et al., 2017).

Assessment design is a crucial component of any successful e-exercise. Online assessment is defined as a systematic method of gathering information about a learner and their learning processes to draw inferences regarding the learner's dispositions. Online assessments offer opportunities for meaningful feedback and interactive support for learners, as well as potential impacts on learner engagement and learning outcomes (Heil & Ifenthaler, 2023). In drawing inferences about students’ learning processes, online assessment may encompass various types of evaluations, ranging from single- and multiple-choice quizzes to games and simulations (Conrad & Openo, 2018). For example, a quiz of lecture content not only provides valuable feedback regarding students’ learning, but it also informs course development by identifying areas on which to focus in future teaching sessions.

Technology: Continued investment in technological infrastructure, including reliable learning management systems and digital resources, is essential to ensure smooth operation and accessibility. Research highlights that the successful integration of technology positively influences both instructors’ and students’ experiences in e-learning. The primary causes of e-learning failures are attributed to insufficient technological infrastructure, encompassing hardware, software, facilities, and network capabilities within the university (Almaiah et al., 2020). According to Min and Yu (2023), technology-related critical success factors include the availability and accessibility of ICT systems, ease of use, quality, reliability, efficiency, privacy, and software utilisation. Features provided by the development environment also fall within this category, such as course management, learning analytics, and programming tools. For example, Moodle, the most widely used Learning Management System (LMS), is programmable using the PHP programming language (Xin & Singh, 2021).

Environment: Collaboration and support networks are essential to fostering a cohesive e-exercise development process. Establishing strong networks among faculty, technical staff, and academic support services ensures that educators can harness collective expertise and shared resources to enhance e-learning experiences. Feedback from colleagues, prompt technical support, and online resources such as programming language discussion groups and developer websites provide a smoother pathway to efficient software development. These platforms facilitate knowledge generation through user contributions via blogs, wikis, forums, and online social networks (Kinsella et al., 2009). More recently, artificial intelligence has demonstrated the capability to generate programming code on demand.

To summarise, the success of development is determined by the instructor's capabilities—both substantive knowledge and IT skills—supported by motivated students, grounded in sound course design that utilises a variety of media and meaningful assessment, well integrated into the technological environment, and underpinned by a network of relevant actors.

Development: Design and Implementation of the e-Exercise Application

Curricular Context

The e-exercise application eISTAT was developed for an introductory statistics course offered at the Turku School of Economics, Finland. The course is a compulsory, three-credit undergraduate module taught in Finnish. It is typically taken during the second year of studies, with approximately 400 students enrolling each year. Students come from a variety of major subjects, such as accounting and finance, marketing, business management, information systems science, or may not yet have declared a major.

The course content covers probability theory and statistical inference, and is divided into six weekly modules. The first module, an introduction to probability and statistics, focuses on developing the basic skills required for the course, such as combinatorics. This is followed by modules on discrete and continuous random variables. The course then continues with an introduction to statistical inference using numerical variables, statistical inference using categorical variables, and concludes with additional aspects of statistical inference.

To motivate students and alleviate concerns about the mathematical aspects of learning statistics, the examples and exercises were designed to provide a broader perspective. They show how the methods are applied in other statistics courses within the degree programme and in the master's thesis, and how they are used in businesses and other organisations, for example in quality analysis.

The course incorporates a variety of teaching methods. Lectures are offered both face-to-face and online. Online teaching is primarily intended for students based at the Pori unit of the Turku School of Economics, located approximately 139 kilometres from the main campus. Students at the Turku unit may choose to attend lectures either in person or online.

The course includes two types of assignments. First, students submit traditional handwritten exercises via Moodle by the deadline. These exercises are compulsory and identical for all students, and are assessed by the instructor. Second, students can complete the eISTAT exercises, which are voluntary, feature unique starting values, and are assessed automatically.

The final examination is an electronic test consisting of multiple-choice questions, each with a single correct answer. The questions are designed to assess students’ understanding of statistical concepts and notation, as well as their ability to identify the correct numerical solution to statistical problems, including the interpretation of results. The examination is conducted in a supervised computer laboratory at a time selected by the student. No materials or devices other than those provided by the system are permitted in the examination room.

Key Features of the Application

We begin by noting that the development of the application involved customising existing software to meet specific educational needs. In our case, a separate web-based learning environment—ViLLE (VisuaL Learning Environment; internationally known as “Eduten”, www.eduten.com)—developed by the Department of Information Technology at the University of Turku, Finland, was readily available. ViLLE was awarded the UNESCO ICT in Education Prize in 2020 and the UNICEF Special EdTech Award “Blue Unicorn” in 2022 (UNESCO, 2022; UNICEF, 2022). It is an exercise-based learning environment, primarily used in primary school education.

A critical question when designing and adopting educational software is whether the new setting is expected to enhance learning (Tchounikine, 2011). In this regard, the ViLLE environment offers several important features that can be incorporated into the application to add value to the course and address user needs.

The application enables automatic assessment of submissions with instant feedback in a large-scale course. From the students’ perspective, these features provide flexibility in terms of both time and location, as submitting assignments and receiving feedback are no longer tied to the course's weekly schedule. The instant feedback not only indicates whether a submission is correct, but also provides guidance on how to arrive at the correct solution. Additionally, as students can retake assignments as many times as they wish, they are able to study at their own pace.

The ViLLE environment comes with a variety of exercise types (Laakso et al., 2018). Instructors can code and update the system as well as create exercises to accommodate changes in course content or teaching methods. The exercise code, covering exercise setup, evaluation, and feedback, can be integrated into ViLLE. The Maxima and Gnuplot languages were used to set up the exercises (Gnuplot 5.4 Manual, 2022; Li & Racine, 2008; Maxima Manual, Version 5.47.0), and LaTeX was used to typeset the mathematical notation. An exercise can contain multiple sections, for example, to include both mathematical calculations and verbal interpretations based on numeric results.

Consequently, the application allows for the personalisation of assignments; the system can be configured as simulation-based, with random starting values for exercises. This feature effectively mitigates the problem of free-riding, thereby simplifying course management. At the same time, the application enables the design of similar exercise “stories” to facilitate teamwork and support the social dimension of learning.
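As a rough illustration of simulation-based personalisation, the following Python sketch draws starting values for a hypothetical binomial probability exercise. The function name, the parameter ranges, and the idea of seeding the generator with a student and exercise identifier are assumptions made for illustration only; the actual eISTAT exercises were written for ViLLE with Maxima and may work differently.

```python
import random
from math import comb


def make_binomial_exercise(student_id: str, exercise_id: str) -> dict:
    """Draw personalised starting values for a hypothetical exercise."""
    # Assumption: seeding with the identifiers makes each student's
    # values reproducible, so a retried page shows the same problem.
    rng = random.Random(f"{student_id}:{exercise_id}")
    n = rng.randint(8, 15)                      # number of trials
    p = rng.choice([0.1, 0.2, 0.25, 0.3, 0.4])  # success probability
    k = rng.randint(1, 4)                       # number of successes asked for
    # Model answer: P(X = k) for X ~ Bin(n, p)
    answer = comb(n, k) * p**k * (1 - p) ** (n - k)
    return {"n": n, "p": p, "k": k, "answer": answer}


if __name__ == "__main__":
    print(make_binomial_exercise("student-001", "week2-ex3"))
```

Because every student works with different numbers but the same exercise "story", copying a classmate's final answer does not help, while discussing the solution method still does.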

Many features related to the technological and environmental factors in Min and Yu's (2023) framework are implemented in eISTAT. The system runs on a standard web browser with no additional plug-ins required, is device-independent, and offers easy access. By easy access, we mean that students can log in using their university network usernames and passwords without needing any additional login credentials.

The simple, menu-driven user interface allows the system to be used without any command-line input and follows a structure similar to that of the course—for example, the main exercise blocks correspond to the six weeks of teaching. This user-friendly interface reduces the time required for both teaching and learning how to operate the application.

The application is designed to provide students with relevant learning material when they begin an exercise. Students use external tools to calculate probabilities, values of test statistics, degrees of freedom, and p-values. Microsoft Excel is recommended because it is familiar to students from their previous studies, but students are free to choose their preferred tools, which supports different learning styles. Students then enter the calculated numeric values into the corresponding eISTAT fields. Excel is also available in the supervised examination room. After completing an exercise, students receive feedback on their progress, which is intended to enhance motivation.

The current application requires students to respond either by entering a numerical answer or by selecting from multiple-choice alternatives—the latter being the method used in the final examination. This helps students become familiar with the exam format.

Four types of e-exercises were set up in the application:

Mathematical (Type 1): Random parameter values and/or data are generated. User-input numerical answers are automatically compared to system-defined answers, allowing for up to a 2% margin of error. The margin of error was deemed necessary, as students often round off the results of intermediate calculations, which has an effect on the final numerical value of the answer.

Mathematical (Type 2): Random parameter values and/or data are generated. Students select the correct answer from four numerical options.

Verbal: Content-based questions where students choose the correct answer from four fixed alternatives.

Mathematical and verbal: A two-phase exercise. In the first phase, numerical results are calculated and evaluated similarly to Types 1 and 2. In the second phase, students select the correct choice from several alternatives based on the numerical results.
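The 2% margin used for Type 1 answers can be sketched as a relative-tolerance comparison. This is a minimal Python illustration, not the eISTAT code: it assumes that the margin is relative to the system-defined answer, and the function name and the zero-answer fallback are hypothetical.

```python
def is_accepted(student_answer: float, model_answer: float,
                rel_tol: float = 0.02) -> bool:
    """Accept an answer that lies within a relative margin of the model answer."""
    if model_answer == 0:
        # Assumption: a relative margin is undefined for a zero answer,
        # so fall back to an absolute margin of the same size.
        return abs(student_answer) <= rel_tol
    return abs(student_answer - model_answer) <= rel_tol * abs(model_answer)


if __name__ == "__main__":
    # A rounded intermediate result shifts the answer slightly but stays inside 2%.
    print(is_accepted(0.251, 0.25))
    # A larger deviation is rejected.
    print(is_accepted(0.26, 0.25))
```

A relative margin scales with the size of the answer, which suits exercises whose results range from small probabilities to large test statistics.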

The Application

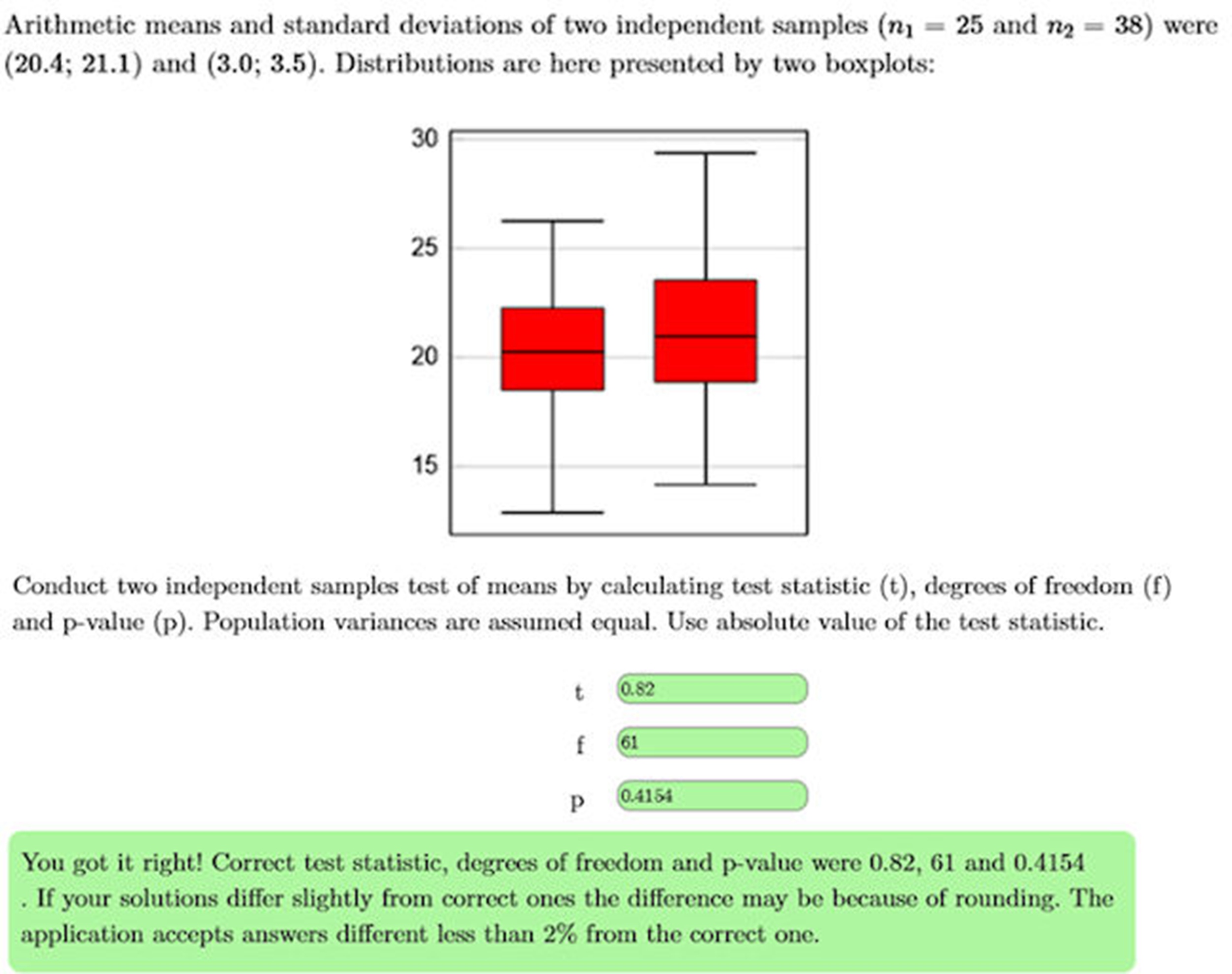

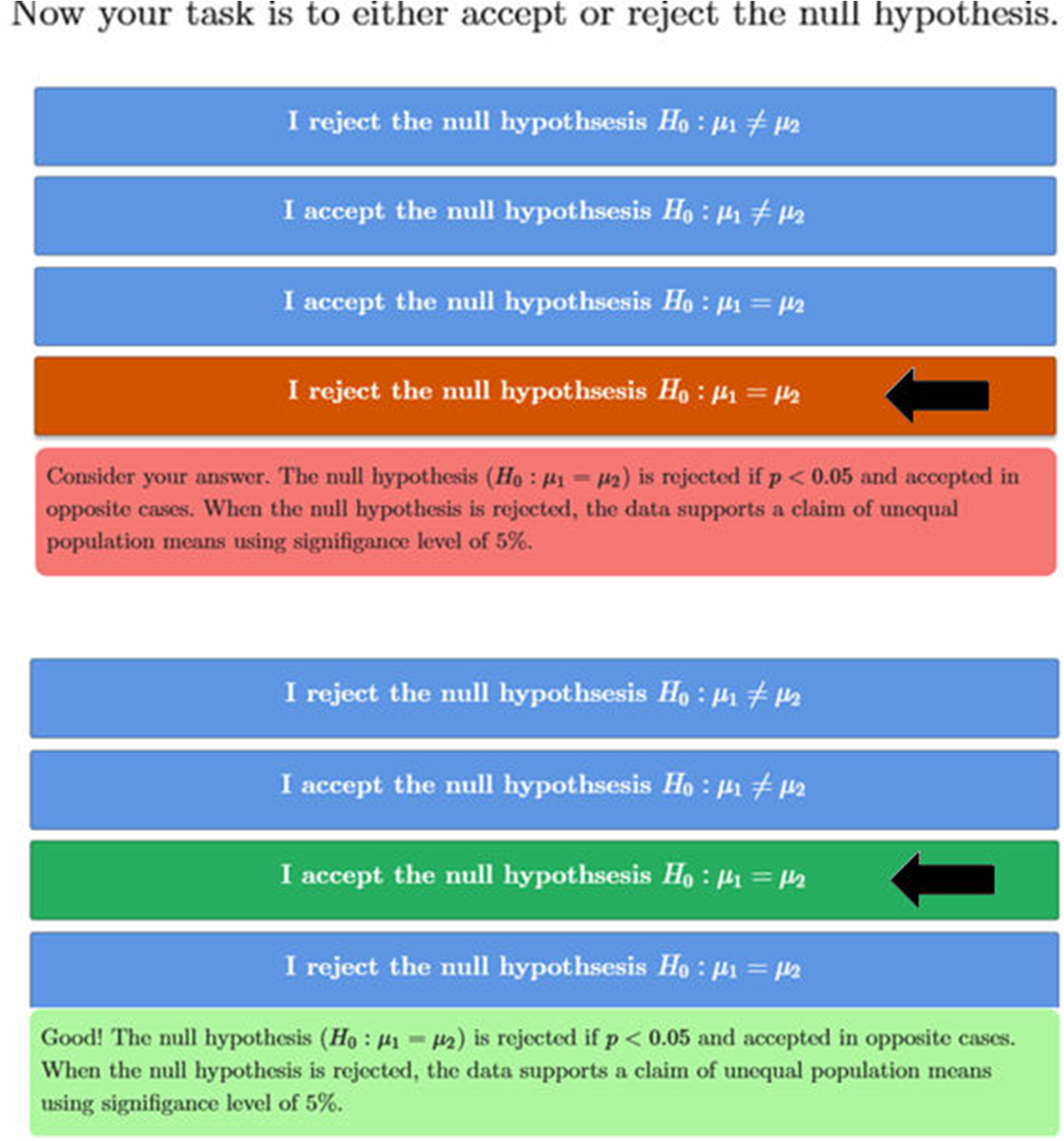

The user interface was designed around the six-week structure of the course. At the second level, each exercise is labelled with a specific title. The interface was kept as simple as possible. Figures 1 and 2 (translated from the original Finnish) illustrate an exercise that includes both mathematical and verbal assignments. Figure 1 shows an example of a two independent samples test of means (for a complete list of exercises, see the Appendix). In this example, the user provided correct answers, which are indicated by a positive feedback message.

Figure 1. Example of a problem solution (Math).

Figure 2. Example of a problem solution (Verbal).

In Figure 2, the correct choice was selected after one incorrect attempt. This choice was determined based on the numerical results shown in Figure 1. In this case, the null hypothesis was not rejected because the p-value was greater than 0.05.
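For readers unfamiliar with the calculation behind a two independent samples test of means, the following Python sketch computes the test statistic and its degrees of freedom. It assumes the pooled-variance (equal variances) form of the test; the article does not state which variant the exercise uses, and the function name and data are illustrative. The p-value step is omitted here, since students obtain it with an external tool such as Excel.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_test(sample_a: list[float], sample_b: list[float]) -> tuple[float, int]:
    """Return the pooled-variance t statistic and its degrees of freedom."""
    n_a, n_b = len(sample_a), len(sample_b)
    df = n_a + n_b - 2
    # Pooled estimate of the common variance (assumes equal variances)
    pooled_var = ((n_a - 1) * variance(sample_a)
                  + (n_b - 1) * variance(sample_b)) / df
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    t_stat = (mean(sample_a) - mean(sample_b)) / standard_error
    return t_stat, df


if __name__ == "__main__":
    t_stat, df = pooled_t_test([1, 2, 3, 4, 5], [2, 3, 4, 5, 6])
    print(t_stat, df)
```

The verbal phase of the exercise then rests on the usual decision rule: the null hypothesis of equal means is rejected only if the p-value falls below the chosen significance level, here 0.05.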

Evaluation: Research Questions and Hypothesis

Research Questions

Research Hypotheses

Results

The Data

A short survey (

In 2025, further data were collected, including a survey (

Statistical Methods

Relative frequencies and descriptive statistics were used to summarise the data. Two independent samples t-tests and

Descriptive Analysis: Students, Use of eISTAT and Exam Grades

The group of course participants was relatively homogeneous. Among the survey respondents, 83.5% were first- or second-year students. Just under half (48.1%) reported having prior experience with learning environments similar to eISTAT. In Finland, nearly all students follow the same national education system, in which mathematics and related subjects are studied throughout the nine-year comprehensive school. Secondary education is typically completed at upper secondary school, which all (100%) of the survey respondents had attended. Furthermore, 65.8% of respondents had studied advanced mathematics during upper secondary school.

We converted both eISTAT exercise scores and exam grades into categorical variables. The first categorisation divided students into non-users and users of eISTAT. The second categorised students by completion rate: non-users, those completing more than 0% but less than 50% of eISTAT points, those completing between 50% and 75%, and those completing more than 75% of the points. Exam grades (ranging from 0 to 5) were recoded into two categories: fail (0) and pass (1–5). An additional variable distinguished between non-excellent (grades 0–3) and excellent (grades 4 or 5) performance. During the course, 63.5% of students used eISTAT (distribution across the four categories: 36.6%, 14.8%, 5.1%, and 43.5%, respectively). The distribution of grades (from 0 to 5) was 33.4%, 14.8%, 12.1%, 16.7%, 16.4%, and 6.6%, respectively. In other words, 66.6% of students passed the exam (grade > 0), and 23.0% achieved excellent grades (grade 4 or 5).
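The recoding described above can be sketched in Python as follows. The function names and category labels are hypothetical, and the handling of the boundary at exactly 75% is an assumption, since the text does not specify it. The usage example recomputes the pass and excellent shares from the reported grade distribution.

```python
def usage_category(completion_pct: float) -> str:
    """Map the share of eISTAT points completed to the four usage groups."""
    if completion_pct <= 0:
        return "non-user"
    if completion_pct < 50:
        return "0-50%"
    if completion_pct <= 75:  # assumption: exactly 75% belongs to the middle group
        return "50-75%"
    return ">75%"


def passed(grade: int) -> bool:
    """Grades 1-5 count as a pass, grade 0 as a fail."""
    return grade >= 1


def excellent(grade: int) -> bool:
    """Grades 4 and 5 count as excellent."""
    return grade >= 4


if __name__ == "__main__":
    # Reported grade distribution in percent, grades 0 to 5
    grade_share = {0: 33.4, 1: 14.8, 2: 12.1, 3: 16.7, 4: 16.4, 5: 6.6}
    pass_share = sum(s for g, s in grade_share.items() if passed(g))
    excellent_share = sum(s for g, s in grade_share.items() if excellent(g))
    print(round(pass_share, 1), round(excellent_share, 1))
```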

User and Learning Experience (Q1)

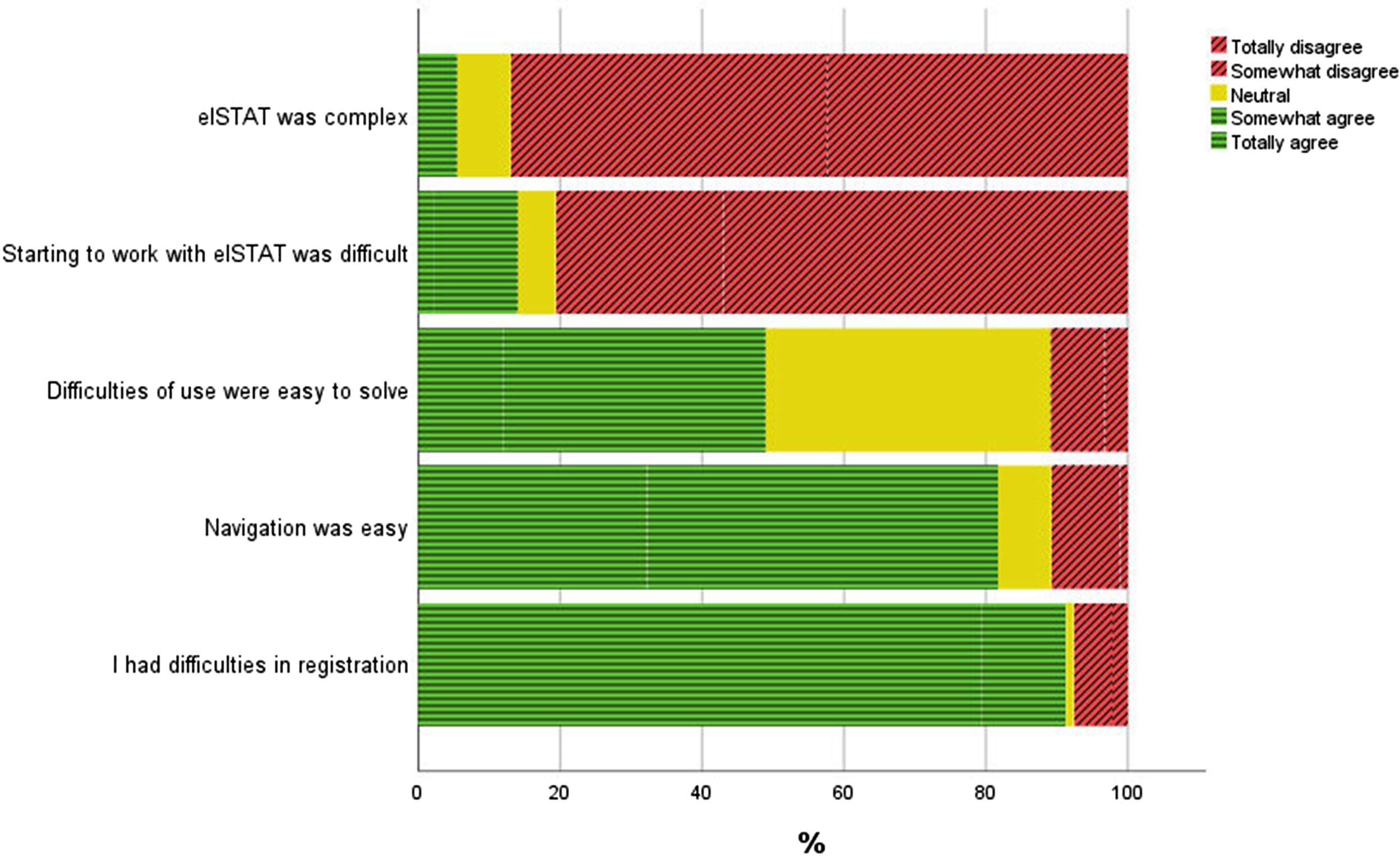

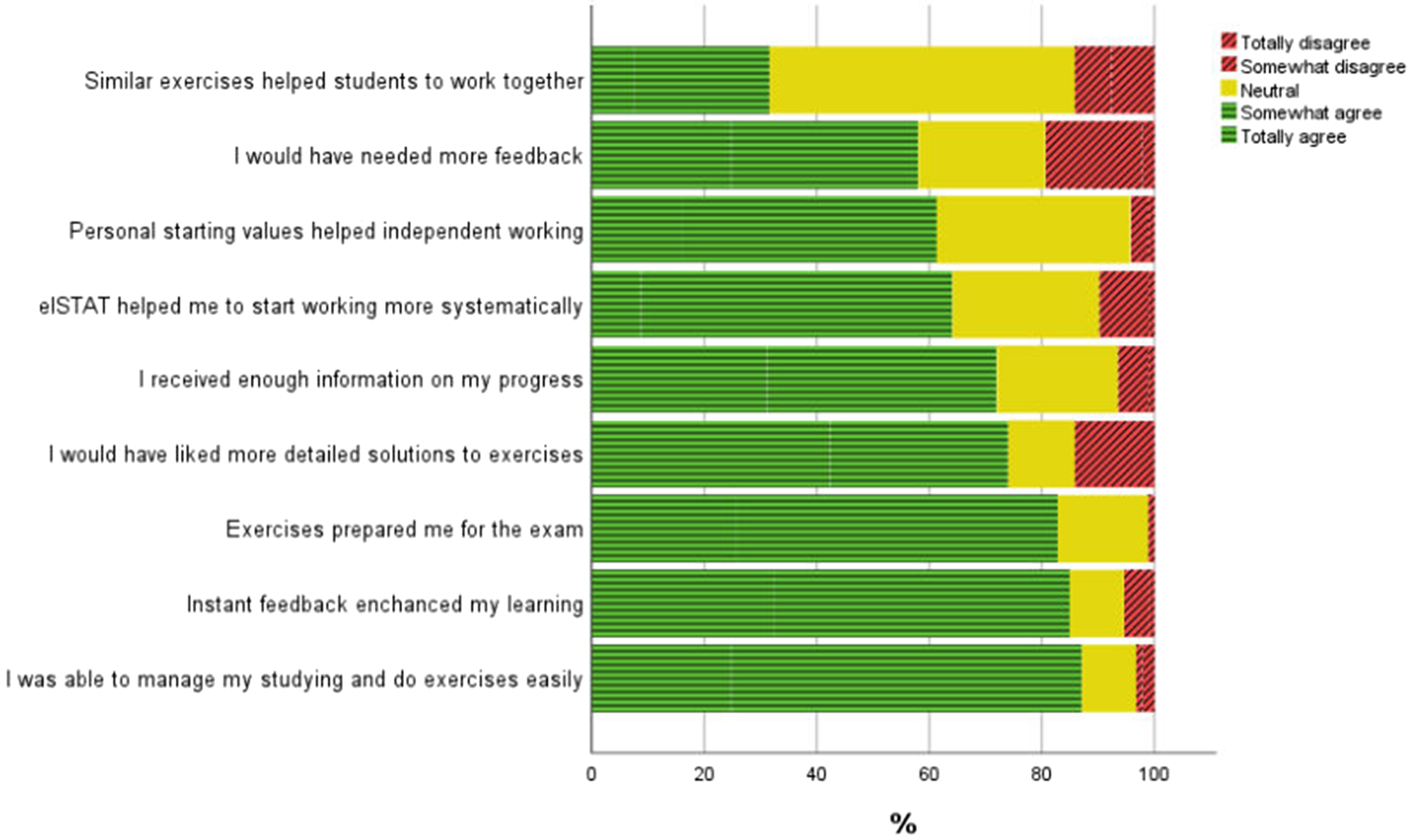

The results indicated that students were generally satisfied with the eISTAT application. Figures 3 and 4 present the distributions of selected questionnaire items. The statements are ordered according to the level of agreement, combining responses of somewhat agree and strongly agree.

User experience, 2024 (

Learning experience, 2024 (

The user experience was generally positive: eISTAT was not widely perceived as complex. The majority of survey respondents found the application easy to use from the outset. Approximately half of the respondents reported that any difficulties encountered were easy to resolve, and most described the navigation as straightforward. The user surveys also proved valuable in identifying areas for improvement, with many students reporting challenges during the registration process.

The application supported collaboration to some extent, with 35% of respondents somewhat or strongly agreeing with this statement (Figure 4; “Similar exercises helped students to work together”). Slightly more than half of the students expressed a need for more feedback. Overall, most students felt that the application supported independent and systematic work, providing sufficient information on their progress.

The majority of students indicated that they would have preferred more detailed solutions. Over 80% rated the application as a useful tool for exam preparation. Instant feedback was reported to enhance learning by enabling students to manage their studies more effectively.

Developer and Instructor Experience: Challenges, Impact and Risks (Q1)

Several technical challenges arose during the development of the application. The use of unfamiliar programming languages, along with poor integration between them, contributed to increased development time. For example, the boxplots shown in this article had to be drawn using multiple rectangles rather than a single command. The use of a more common language, such as Python, might have offered better online resources and support for a solo developer.

The environment also exhibited limited flexibility in certain aspects. For example, feedback for true or false answers was static—if a student submitted the same incorrect answer multiple times, the feedback did not change.

On the positive side, the use of a platform with numerous built-in tools for course setup was a significant advantage, as it enabled the developer to concentrate on exercise creation. The developer also received valuable support from the Department of Information Technology, which assisted with programming and testing. Student feedback was particularly useful in identifying a feasible range of random starting values for the exercises. For example, students contacted the developer when a particular set of random starting values resulted in a zero denominator during calculations, rendering the calculations incomplete. In such instances, the developer provided a replacement set of starting values and modified the application code to address the issue.
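One way to guard against infeasible starting values of this kind is to check each random draw before presenting it and to redraw when a denominator would be zero. The Python sketch below illustrates the idea for a hypothetical exercise in which the sample standard deviation appears in the denominator of a test statistic. The function name, value ranges, and retry limit are assumptions; the article does not describe how the eISTAT code was actually modified.

```python
import random
from statistics import stdev


def draw_sample(rng: random.Random, size: int = 5,
                low: int = 1, high: int = 3) -> list[int]:
    """Draw integer data, redrawing until the sample standard deviation is non-zero."""
    for _ in range(1000):
        sample = [rng.randint(low, high) for _ in range(size)]
        # Identical values give a standard deviation of zero, which would
        # become a zero denominator in the test statistic.
        if stdev(sample) > 0:
            return sample
    raise ValueError("could not find feasible starting values")


if __name__ == "__main__":
    print(draw_sample(random.Random(2024)))
```

Raising an error after a fixed number of attempts makes a misconfigured range visible to the developer during testing rather than to a student during an exercise.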

The application had an immediate impact on the teacher's workload. Previously, assessing exercises required approximately one full day per week, as all assessments had to be completed manually. With the introduction of eISTAT, the instructor's responsibilities were reduced to addressing occasional student queries—around one hour per week, either in person or via email.

In any system development, risks must be carefully evaluated. A critical concern for the application is its reliance on the programmer. Maintenance and further development depend heavily on the instructor. Should the responsible individual change, the application's future will hinge on the motivation, experience, and skills of their successor. Comprehensive documentation is therefore essential to ensure continuity.

Another concern relates to the lifespan of the ViLLE environment, as the application depends on its ongoing maintenance. The future of the coded applications becomes uncertain if support for ViLLE ceases. However, the University of Turku currently maintains ViLLE and supports over 100,000 active users. Discontinuation of the learning environment is therefore unlikely in the near future.

Impact of Using the System (Q2)

The analysis revealed no statistically significant association between the level of mathematics in previous studies and the use of eISTAT, whether measured on a two-point or four-point scale (Hypothesis H1). Similarly, no significant relationship was found between eISTAT usage (on either scale) and passing the exam or achieving an excellent exam result (all

Dependencies between the use of eISTAT (both the two- and four-category variables) and course outcomes were tested using the

Analysing eISTAT usage in relation to excellent exam grades revealed similarly clear differences. Among users, 26.3% achieved a grade of 4 or 5, compared to only 10.8% of non-users. On the four-point scale of completion rates, the percentages of excellent grades in the four groups were 10.8%, 17.7%, 15.8%, and 29.4%, respectively (p < 0.05). These results indicate that students who completed more eISTAT exercises tended to achieve better exam outcomes, although the two middle groups did not differ in the expected order. The percentage of students achieving excellent grades was nearly three times as high among the most active eISTAT users as among non-users (29.4% versus 10.8%).

Further analysis comparing exam success, measured by points, showed the same trend. Students who used eISTAT earned significantly higher average exam scores (

Conclusion

The results of the data analysis showed that students appreciated features of eISTAT such as instant feedback, ease of use, and personalised starting values—all of which enhanced their study and learning experience. These findings were consistent with the previously identified success factors for e-exercises. Furthermore, eISTAT usage was associated with better exam performance. However, the latter finding warrants further analysis.

The underlying strengths of eISTAT development were also in line with the e-exercise success factors presented above:

Instructor: The course instructor had extensive experience in teaching and managing the course, along with programming skills.

Student engagement: Hundreds of students have tested eISTAT, and testing is still ongoing. Students used the application very actively and gave valuable feedback. When contacted by a student, the developer typically provided hints to assist with the completion of an assignment. Additionally, as mentioned earlier, in some instances the developer supplied a replacement set of starting values. Such occurrences were beneficial for the development of the application.

Course design: The application structure was based on the six weekly blocks of the course. The exercises simulated the exam.

Technology: The application was developed in an existing environment that provides, for example, course management and learning analytics. eISTAT is an embedded system within the students’ intranet, accessed using university network credentials.

Environment: The maintainer of the ViLLE learning environment provided support in technical matters. Online information sources were used to support programming.

The data gathered warrants particular attention. Learning and survey data are pivotal in educational contexts, serving multiple functions. Such data can support research that helps foster innovative practices in educational settings. For instance, survey methodologies are commonly utilised to collect structured feedback from participants, including students and educators, which can illuminate feelings, attitudes, and perceptions towards various learning environments (Weiner & Weiner, 2017). This contributes to a more comprehensive understanding of the factors influencing learning and, consequently, the development of learning technologies.

We propose an eISTAT-style educational module integrated with an existing statistical package, such as SAS or SPSS. This module would combine lecture-style content with hypertext and videos, support the use of personal or system-provided data, and include a section for manual calculations aided by interactive tools, automatic assessment, and feedback. Users could present correct calculation results in SPSS (or a similar package) output format and demonstrate analyses either manually or using the software. This approach would help users connect mathematical concepts and tools with real data analyses performed using the software. Artificial Intelligence (AI) could assist users when they need help selecting appropriate methods. For example, AI could help a student locate the relevant portion of the course material, or it could help identify the most common errors in an analysis and suggest corrections. Additionally, the system would collect detailed learning data to inform the ongoing development of both the course content and the software itself. In this way, the statistical package could support students at all university levels, enabling them to apply complex methodologies after introductory courses within a familiar learning environment.

We acknowledge that several educational modules exist within statistical packages such as R. The benefit of the development environment of eISTAT (ViLLE) is that coding is limited to creating exercises, as foundational components, such as course management tools, are already in place.

To conclude, AI-enhanced educational tools enable software to tailor resources and instructional strategies according to the individual progress and needs of learners. Kahn and Winters (2021) highlight the profound impact of AI on education, emphasising how AI systems can facilitate personalised learning experiences by analysing student data and adjusting instruction accordingly. Although not discussed in detail in this article, this trend clearly represents a key focus in the development of e-exercises.

Limitations

It remains unclear to what extent other factors influence the relationship between eISTAT usage and improved exam performance. A more comprehensive model of course outcomes would require additional data. From a statistical perspective, a multivariate linear model—such as a structural equation model—would better account for dependencies among relevant factors, including students’ attitudes towards statistics. More data are needed to estimate such a model.
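As an illustration only, the simplest form of such a model could be written as two linked linear equations. The symbols are assumptions introduced here for the sketch and are not quantities estimated in this study: $A_i$ denotes a measure of student $i$'s attitude towards statistics, $U_i$ the student's eISTAT usage, and $Y_i$ the exam score.

```latex
\begin{align}
U_i &= \alpha_0 + \alpha_1 A_i + \delta_i, \\
Y_i &= \beta_0 + \beta_1 U_i + \beta_2 A_i + \varepsilon_i .
\end{align}
```

In this sketch, $\beta_1$ is the association between usage and exam score after adjusting for attitude, and the product $\alpha_1 \beta_1$ is the part of the attitude effect that runs through usage. A full structural equation model would typically add further factors and latent variables, which is why more data would be required.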

Footnotes

Acknowledgments

We thank University Lecturer Dr. Erkki Kaila from the University of Turku for his valuable comments.

Ethical Approval

The study followed the responsible conduct of research guidelines set by the Finnish National Board on Research Integrity. Compliance with the Data Protection Act was ensured in the collection, storage, analysis, and reporting of the data.

Approvals for the research and for the use of registration data were granted by the University of Turku.

Consent to Participate

Participation in the study was voluntary. Informed consent to participate was obtained from all participants. Students were also informed about the information being collected and its purpose.

Consent for Publication

Not applicable.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The quantitative datasets used and analyzed in this study are available from the corresponding author on reasonable request.

Appendix. List of Exercises in the Statistics Course System (number of exercises in parentheses)

Operation of sum (2)

Combinatorics (2)

Basic probability theory (1)

Total probability and Bayes' theorem (1)

Levels of measurement (1)

Crosstabulation (1)

Sample estimates (1)

Cumulative distribution function of a discrete random variable (1)

Binomial distribution (2)

Hypergeometric distribution (1)

Poisson distribution (1)

Cumulative distribution function of a continuous random variable (1)

Uniform distribution (1)

Exponential distribution (1)

Normal distribution (3)

One sample test of mean and confidence interval (2)

Two independent samples test of mean and confidence interval (4)

Two dependent samples test of mean and confidence interval (2)

Two independent samples test of variance (1)

Test of correlation (1)

One sample test of proportion and confidence interval (2)

Two independent samples test of proportion and confidence interval (2)
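
The exercises listed above rely on personalised starting values and instant feedback. The following minimal Python sketch shows how one exercise of this kind, on the binomial distribution, could be generated and checked automatically. It is not the eISTAT or ViLLE implementation: the class and function names, the parameter ranges, and the tolerance are all hypothetical and were chosen only for illustration.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class BinomialExercise:
    """One automatically generated exercise (hypothetical structure)."""
    n: int    # number of trials
    p: float  # success probability
    k: int    # number of successes asked about

    def question(self) -> str:
        return (f"X follows a binomial distribution with n = {self.n} "
                f"and p = {self.p}. Compute P(X = {self.k}).")

    def correct_answer(self) -> float:
        # Binomial probability mass function: C(n, k) * p^k * (1 - p)^(n - k)
        return (math.comb(self.n, self.k)
                * self.p ** self.k
                * (1 - self.p) ** (self.n - self.k))


def generate_exercise(student_id: str) -> BinomialExercise:
    """Draw personalised starting values.

    Seeding the generator with the student identifier (an assumption of
    this sketch) gives each student their own values, and the same student
    always receives the same values again.
    """
    rng = random.Random(student_id)
    n = rng.randint(5, 15)
    p = rng.choice([0.1, 0.2, 0.25, 0.3, 0.4, 0.5])
    k = rng.randint(0, n)
    return BinomialExercise(n=n, p=p, k=k)


def check_answer(exercise: BinomialExercise, submitted: float,
                 tolerance: float = 5e-4) -> str:
    """Return instant feedback for a submitted numerical answer."""
    if abs(submitted - exercise.correct_answer()) <= tolerance:
        return "Correct."
    return "Incorrect. Hint: P(X = k) = C(n, k) * p^k * (1 - p)^(n - k)."


if __name__ == "__main__":
    exercise = generate_exercise("student-001")  # hypothetical identifier
    print(exercise.question())
    print(check_answer(exercise, exercise.correct_answer()))
```

Because the values are derived from the identifier and not stored, a replacement set of starting values could be issued in this sketch simply by seeding with a modified identifier.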