Abstract

ChatGPT and other AI programs are creating a stir on college campuses nationwide. Concerns about cheating are strong, and many instructors are adopting new teaching strategies to dissuade students from using the technology in assignments. In the present study, undergraduate students in an introductory epidemiology course were assigned to use ChatGPT to produce essays about topics related to course content and, in pairs, critically analyze the resulting essays. Individually, students responded to reflection questions regarding the technology and its implications for college classrooms. Those qualitative reflection responses were analyzed for themes. While a variety of viewpoints were expressed regarding expectations of the program, most students were aware of the potential for cheating but remained cautiously optimistic about the use of ChatGPT as an educational tool.

Introduction

In recent months it has been virtually impossible to avoid hearing about ChatGPT, GPT-4, OpenAI, and other AI tools in the media, particularly in the context of educational settings. By many accounts, AI tools like ChatGPT are entering the mainstream with dizzying speed (Roose, 2023; Rudolph et al., 2023; Satariano, 2023). The potential impacts on education are vast, and reactions range from excitement to concern. In a piece written for the New York Times in January 2023, Kalley Huang described instructional changes taking place in schools across the country, including colleges and universities. Some instructors are adopting pedagogical methods that make it harder for students to use AI, while others are incorporating the technology. “In higher education, colleges and universities have been reluctant to ban the A.I. tool because administrators doubt the move would be effective and they don't want to infringe on academic freedom. That means the way people teach is changing instead” (Huang, 2023, para. 10).

Academic dishonesty is a topic that permeates the educational landscape. The scholarly literature on cheating is vast. As technology rapidly advances, students are exposed to newer and more effective ways to skirt academic requirements. Scholars have advocated for using proctored assessments in online courses (Trenholm, 2007), exploring new methods of verifying student identity for authorship (Semple et al., 2010), and approaching academic dishonesty through intra- and inter-institutional collaboration (Aaron & Roche, 2013).

Student responses to the use of tools like ChatGPT vary. Some students are wary of the possibility of inaccurate content created by the tools, while others are enthusiastically using them to ease their workload (Huang, 2023). In a survey of college students, 43% of respondents had used ChatGPT or similar tools, and 50% of those students had used the tools to complete assignments or tests (Welding, 2023). In the same survey, about half of students said they agreed with the statement “using AI tools to complete assignments and exams constitutes cheating” and 54% said that their instructors had not openly discussed the use of ChatGPT or similar tools.

The purpose of this study was to engage students in dialogue about the use of AI tools like ChatGPT in the classroom through an assignment that linked content learning with critical reflection. In particular, the following research questions framed the study:

What expectations do students have regarding AI tools like ChatGPT for academic use?

What positive and negative implications for the classroom do students perceive with the use of tools such as ChatGPT?

How do students contextualize the use of tools such as ChatGPT within their own academic experience?

Methods

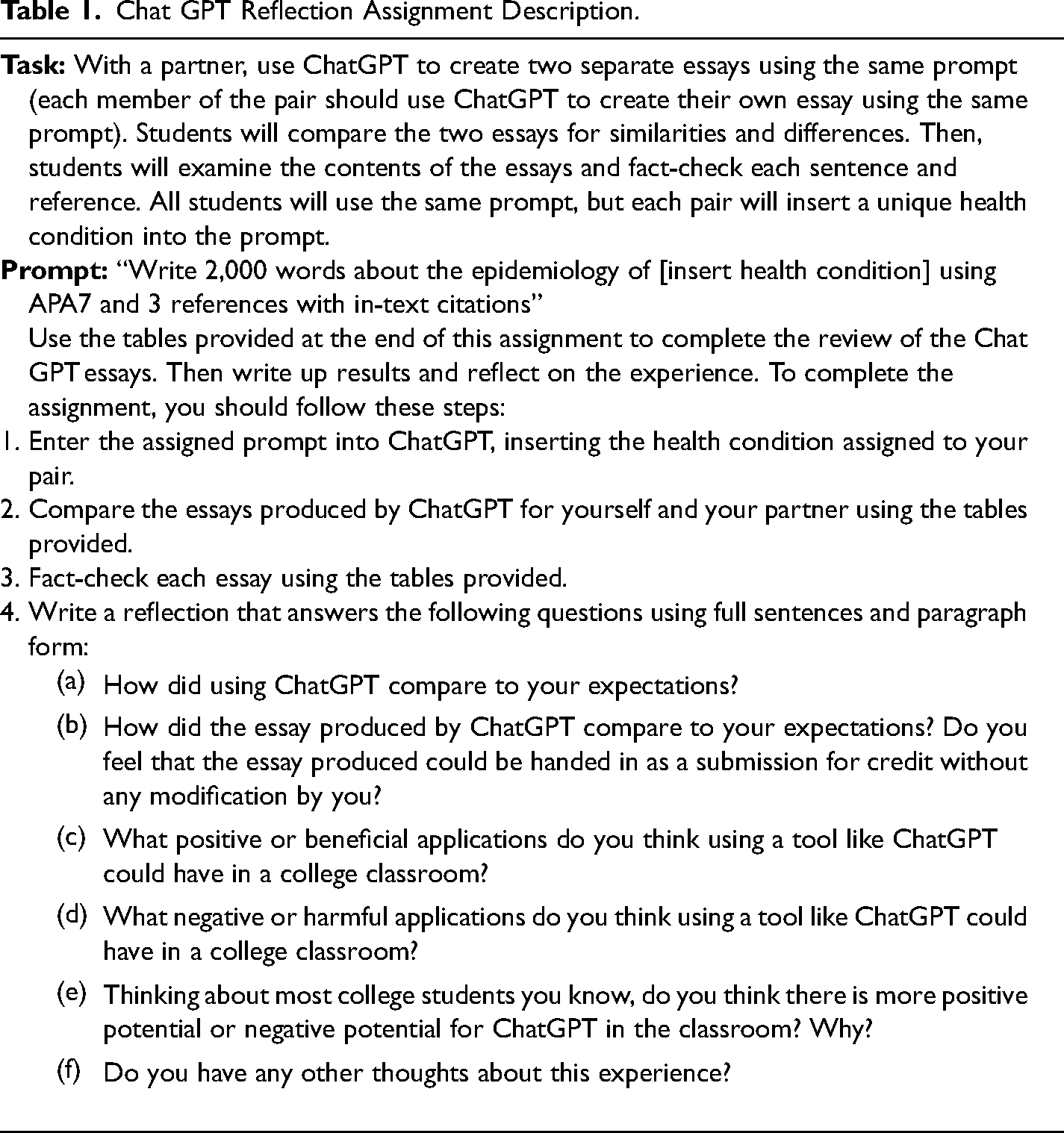

For this study, the data consisted of student work samples from an undergraduate introductory epidemiology course at a comprehensive university in the Midwest (total course enrollment was 38 students). The assignment was conducted in February 2023. The class assignment asked students to work with a partner, enter a specific prompt into ChatGPT, and critically analyze the resulting content produced by the program (Table 1). Dyads collected quantitative data on each ChatGPT essay, including the number of words, sentences, references, and peer-reviewed references. They also counted the number of sentences that contained factual statements, the number of sentences with factual errors, the number of sentences that did not match the references provided, the number of in-text citations, the number of incorrect in-text citations, the number of in-text citations that adhered to APA7 guidance, and the number of references that adhered to APA7 guidance. Then the dyads worked together to compare their ChatGPT essays on each of these metrics. Finally, each individual student wrote a qualitative reflective narrative to explore how using ChatGPT compared to their expectations, their thoughts about using work produced by ChatGPT in academic settings, and their thoughts about positive and negative implications of using tools like ChatGPT in the classroom.

Table 1. ChatGPT Reflection Assignment Description.
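The simplest of the counts that dyads recorded for each essay (words, sentences, and in-text citations) could also be approximated programmatically. The following is only a minimal sketch: the students in this study counted by hand, and the function name `essay_metrics` and the regular expressions are illustrative assumptions rather than part of the assignment. Judgments such as factual accuracy or whether a reference is real still require human review.

```python
import re


def essay_metrics(text: str) -> dict:
    """Return rough word, sentence, and parenthetical-citation counts.

    Hypothetical helper for illustration; the heuristics are assumptions
    and are not the counting procedure used in the study.
    """
    # Words: runs of letters/digits, allowing internal apostrophes and hyphens.
    words = re.findall(r"\b\w[\w'-]*", text)

    # Sentences: split after ., !, or ? when followed by whitespace.
    # Abbreviations followed by a space (e.g., "et al. (2023)") are over-split.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

    # Citations: any parenthetical containing a four-digit year,
    # e.g., (Huang, 2023). Several sources inside one pair of
    # parentheses are counted once.
    citations = re.findall(r"\([^()]*\b(?:19|20)\d{2}[a-z]?\b[^()]*\)", text)

    return {
        "words": len(words),
        "sentences": len(sentences),
        "in_text_citations": len(citations),
    }


sample = (
    "ChatGPT wrote this essay quickly. "
    "It cited one source (Huang, 2023). Was it accurate?"
)
print(essay_metrics(sample))
# {'words': 14, 'sentences': 3, 'in_text_citations': 1}
```

Counts like these would only flag obvious shortfalls, such as an essay far below a requested length; they say nothing about whether a cited source exists or supports the sentence it is attached to.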

Students were informed of the research component and all students who consented to participate had their submissions anonymized and entered into the qualitative dataset. Exploratory qualitative data analysis was conducted via content analysis to examine emerging issues and themes. Institutional Review Board approval was obtained prior to the initiation of any research activities.

Findings

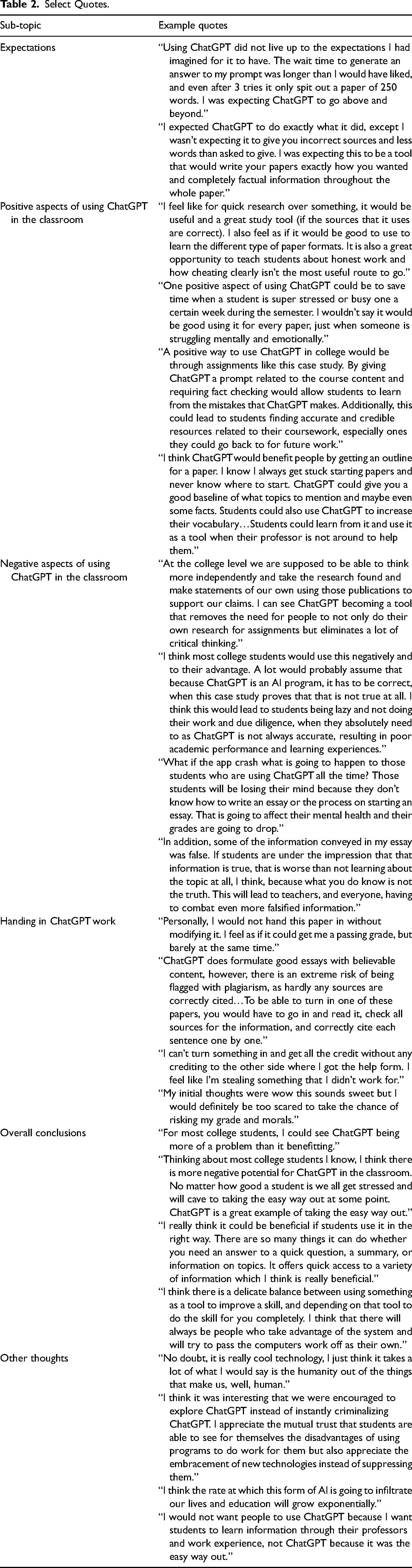

Thirty students participated in the assignment and consented to have their reflections included in the analysis (see Table 2 for select quotes). Initial expectations of using ChatGPT were mixed. While some students stated that they started with low expectations, most students reported either being uncertain about their expectations or having high expectations. Among students with high expectations, most were disappointed in the results produced by the program. Many of the concerns students listed involved the technology itself, such as wait times to access the program (some students reported waiting hours or days to access the site) or the program responding to the prompt by saying it was not able to write an essay meeting the specific criteria provided. Students were disappointed in the lack of instant gratification. Many students stated that they were impressed with the speed at which the program produced information about their topic, but there were significant issues with the perceived quality of the essay. For example, while the prompt specified 2,000 words, the essays produced were much shorter, often in the 300–500 word range. In addition, the references produced were often fabricated rather than real sources. Students expressed alarm at these quality issues, and as a result, none of the students stated that they would feel comfortable turning in an essay produced by ChatGPT without significant fact-checking and modification.

Table 2. Select Quotes.

Many students expressed optimism regarding possible beneficial uses of programs like ChatGPT in the classroom. One such benefit was the possibility for students to critically review work produced by AI programs with the outcome of enhancing their own understanding of both the topic and the writing process. A second benefit stated by several respondents was that the program could help students “get started” with an essay or paper, provide a template for how to write an essay, or suggest an outline for content. This was expressed as a benefit especially for students who have a hard time getting started on written assignments. Two students suggested that using ChatGPT could be beneficial for students who were extremely stressed or pressed for time; the time savings associated with using a first essay draft produced by ChatGPT were presented as a way to improve mental health.

When discussing negative aspects of using ChatGPT in the classroom, nearly all respondents mentioned the possibility of cheating. There was a consensus among responses that submitting work produced by ChatGPT without modifying it was a form of plagiarism and was unethical. In addition, most students expressed concerns regarding the degradation of critical thinking that could occur with increased use of programs like ChatGPT. Several students also pointed out that the negative mental health consequences of using ChatGPT were significant and included anxiety as a result of trying to pass off work produced by ChatGPT as one's own and stress about poor grades.

When asked to reflect on the balance of positive and negative potential for ChatGPT in the classroom, responses were mixed; students stated both positive and negative opinions. While some students were adamant that only negative aspects (i.e., cheating) would prevail, others stated more optimistic viewpoints about how the technology could be integrated into college classrooms.

Discussion

The students who participated in this study presented a variety of viewpoints about the use of ChatGPT in collegiate classroom settings. Overall, students expressed certainty that AI technology will become ubiquitous and will be difficult to both keep up with and keep out of classroom settings. They also expressed pessimism about the use of ChatGPT and similar programs for cheating, but contextualized that pessimism with the perspective that cheating will always occur and AI programs are a new tool for that purpose.

There were clear contradictions in the views expressed by students. Regarding mental health, using ChatGPT was identified as a way to reduce stress by saving time but also as a way of worsening stress, because students who used the technology could feel anxious about being perceived as cheating. Regarding use of ChatGPT for class assignments, respondents would not submit work produced by the technology without editing it (that was seen as cheating), but they would use it to get a paper started or to see an example (that was seen as using the technology as a resource). In addition, while some students said they personally would not use the program, most said they thought others would use the program, indicating a general consensus around social norms related to the use of AI to complete assignments. There was some excitement, or “buzz,” around the technology, but many students were disappointed that they did not get exactly what they asked for. The sentiment expressed by many was that the program is interesting but potentially dangerous, and that there is a “right way” and a “wrong way” to use it, with an unclear line of demarcation between the two.

The purpose of this study was to understand student perceptions and expectations around the use of AI in an academic setting through qualitative analysis. As such, quantitative measures related to the similarity or accuracy of the essays produced by ChatGPT were not included here even though that domain was part of the original assignment. Future research should address those more quantitative measures alongside qualitative data regarding student use and reactions.

As college instructors grapple with selecting and implementing teaching strategies and tools to assess student learning, keeping up with technology and integrating it into the classroom appropriately is a challenge. The reflections of these students indicate that they also fear the loss of critical thinking and connections with their professors and peers in the learning process. This suggests that many students value the academic college experience and expect to be challenged in college. Rather than working in an adversarial model in which instructors feel they need to race to be ahead of the technology and prevent its use, perhaps a paradigm shift to embed both the technology and critical thinking would be useful. Students seem ready to discuss and debate the use of these technologies in the classroom. Instructors should be open to engaging in those discussions as well. Assignments like this one, in which students are asked to use, analyze, and improve upon ChatGPT and its outputs, provide an opportunity for students to feel engaged, included, and trusted in the learning process. They also create space for conversations about how to be a digital citizen and a member of a collaborative learning community.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.