Abstract

Intelligent assessment, the core of any AI-based educational technology, is defined as embedded, stealth and ubiquitous assessment which uses intelligent techniques to diagnose the current cognitive level, monitor dynamic progress, predict success and update students’ profiling continuously. It also uses various technologies, such as learning analytics, educational data mining, intelligent sensors, wearables and machine learning. This can be the key to Precision Education (PE): adaptive, tailored, individualized instruction and learning. This paper explores (a) the applications of Machine Learning (ML) in intelligent assessment, and (b) the use of deep learning models in ‘knowledge tracing and student modeling’. The paper concludes by discussing barriers involved in using state-of-the-art ML methods and some suggestions to unleash the power of data and ML to improve educational decision-making.

Keywords

Introduction

Over the last decade, we witnessed the rise of the ‘big data’ phenomenon. Developments in computing power and cloud computing, coupled with increased digitization of traditional data and the prevalence of virtual environments, led to an exponential growth of data-big data- with 5 V characteristics: volume, velocity, variety, veracity and value. The race to use data as ‘new oil’ and commit to data-informed decision-making is evident in the current policies of many countries, such as the USA, the UK, China, and Germany. Data on students’ enrollment and admission, demographic, attendance, interaction, engagement, and performance combined with institutional and curricular data, can reveal trends, patterns, and anomalies that might not be visible in smaller datasets (Gagliardi & Turk, 2017). Furthermore, in Massive Open Online Courses (MOOC) platforms such as EdX or Khan academy, where millions of participants learn online, evaluating the learning process and outcomes (including satisfaction and experiences) pose considerable challenges in tracking and analyzing learning traces and interactions of participants (Tzeng et al., 2022). Such extremely complex, unstructured, multi-modal, and temporal data cannot be dealt with traditional research methods (i.e., observation, interview), conventional data management systems (e.g., SPSS), or typical statistical techniques (i.e., ANOVA).

Big data demand their own infrastructure and analytical techniques. Artificial Intelligence (AI) is the key component of many educational technologies, such as intelligent tutoring systems (Boulay, 2018), early warning systems (Akçapınar et al., 2019), recommendation systems (Chiappe & Rodriguez, 2017), adaptive learning systems (Bajaj & Sharma, 2018), learning analytics (g., Krumm et al., 2018), educational data mining (Fischer et al., 2020), automatic scoring (Lazendic et al., 2018), etc.

This paper explores the roles and applications of ML, a sub-domain of AI, in intelligent assessment. Intelligent assessment involves continuous capturing, processing, analyzing and visualizing massive data about learners’ cognitive levels, learning styles, attitudes, habits, etc. to provide personalized learning support such as tailored content, path recommendation and intelligent tutoring (Li et al., 2021). The following research questions will be addressed:

Research Question 1: what is the current state-of-the-art of Machine Learning in educational assessment?

Research Questions 2: which methods or approaches are used to infer or trace progress in knowledge?

First, a quick review of AI in Education, precision education, intelligent assessment and typical models of ML are discussed. Later, we review the state-of-the-art ML methods in intelligent assessment, focusing primarily on ‘knowledge tracing’ and ‘student modeling’ due to their decisive role in determining the learning state of learners. Detailed descriptions of the challenges and limitations of every model are presented. Finally, we elaborate on future directions, e.g., Transformer-based GPT-3 models, for knowledge tracing models.

Artificial Intelligence (AI): An Overview

AI can be defined as machines, agents, or computer programs that simulate cognitive functions associated with human minds, such as perceiving patterns, abstract reasoning, learning, communicating and problem-solving (US national science & technology council, 2016). The origin of AI can be traced back to the 1950th, with pioneers like Turing (imitation game and Turing test, 1950), McCarthy (Dartmouth research project,1955), Newell and Simon (Logic theorist, 1956) and Feigenbaum (Expert Systems, 1965). Although early enthusiasm and excitement about what AI can do were abated by the so-called AI-winters (disillusion with AI and a noticeable reduction in governmental funds and investment), a milestone was the victory of Deep Blue, IBM's chess computer, against the world champion Garry Kasparov in 1997. Since then, AI has made impressive advancements in many domains.

Automated vehicles (e.g., self-driving), AI-equipped unmanned aircraft systems (e.g., drones), dark factories operated by robots, predicting algorithms in stock exchanges, medical image recognition, E-mail spamming, journalist robots, smart homes, digital assistants such as Apple's Siri or Amazon's Alexa, AlphaZero, etc. are examples of how AI is revolutionizing medicine, transportation, finance, business, military, logistic, games, etc. Although AI is not a single technology and uses several approaches such as Natural Language Processing (NLP), machine vision, robotics, etc.; most of the breakthroughs mentioned earlier were facilitated through developments in Machine Learning.

Machine Learning

Machine Learning (ML) is a sub-field or a technical approach to AI, which is able to learn from data and practical experiences instead of being explicitly programed or instructed on what to do (Mitchell, 1997). In contrast to the traditional, knowledge-based ‘Expert system’ approach to AI by using a set of if-then syntax and rules, ML uses input data to extract a rule or procedure and recognize patterns that explain the data or predict future data. In addition to drivers such as the Internet of the Things (IoT) and cloud computing, developments in ML can be mostly attributed to (a) increased computing power (Moore's Law), (b) reduced data processing time due to Graphical Processing Unit (PPU), (c) emergence of big data, and (d) advanced algorithms (Webber & Zheng, 2020).

Generally, there are two steps in ML, namely (a) training/learning process: when the computer learns from data to build algorithmic models, (b) testing/predicting process: when the computer makes decisions such as classifying or predicting with new data (Zhai et al., 2020). There are different approaches to evaluating the performance of algorithms, e.g., self-validation, split-validation and cross-validation. Whereas in statistics, residual or goodness-of-fit is used to judge the accuracy of a model (e.g., predictive), algorithmic accuracy is judged in terms of F1 score, recall, precision, AUC (area under the curve), etc. ML training methods can be classified into three categories: (a) supervised, (b) unsupervised and (c) Reinforcement Learning (Yu & Lu, 2021).

Supervised ML requires an expert to label the data manually- classification, prediction and recommendation belong to this category. Examples of supervised learning algorithms are Random Forest (RF), Decision Tree (DT), Regression (linear and logistic), K-nearest Neighbors (KNN), Support Vector Machine (SVM), Naïve Bayes (NB), etc. Unsupervised ML involves the automatic extraction of features without human intervention. Clustering techniques (K-means clustering for user profiling), dimension reduction techniques (Principal Component Analysis) and a priori algorithms are the most common types of unsupervised ML. Reinforcement learning algorithms are based on a behavioral model of learning: through trial-and-error and interaction with the environment, the agent changes and adapts its behavior based on the feedback it gets. For instance, a self-driving car learns about the environment by using sensors, RL and other ML techniques to detect patterns from large sets of images containing vehicles, people, traffic signs, etc.

ML uses a variety of models such as Neural Networks (NN), Generative Adversarial Networks (GAN) and Deep Learning. A deep neural network is “composed of multiple processing layers to learn representations of data with multiple levels of abstraction” (LeCun et al., 2015, p. 436). Compared with traditional statistical techniques, e.g., logistic regression, or conventional ML techniques, e.g., SVM, deep learning models require little manual engineering and achieve much higher accuracy in diagnosing, predicting and classifying (Cazarez & Martin, 2018).

Artificial Intelligence in Education (AIEd): Precision Education

Traditional education, with its one-size-fits-all content, fixed sequence, similar activity and assignment, focuses on cultivating average students (Cook et al., 2018). Teachers do not have resources (e.g., time, energy, repertoire) to tailor instruction in real-time to the individual needs, styles, and preferences of every single student in the classroom. Inspired by precision medicine, an innovative approach to disease prevention and treatment that takes into account individual differences in people's genes, environments and lifestyles (Collins & Varmus, 2015), Precision Education (PE) aims at enhancing diagnosis, prediction and treatment. Although it puts a special focus on identifying, preventing, and timely intervention for at-risk students-those who are predicted to have a higher chance of dropout/withdrawal, disengagement and low academic performance (Luan & Tsai, 2021; Yang, 2021)- the ultimate goal of PE is ‘personalized and adaptive’ learning’. Adaptive learning systems are essentially data-driven_ an intelligent system uses data from diverse sources to continuously adjust learning content, difficulty level, the pace of instruction, and path recommendation to different background knowledge, cognitive abilities, learning levels and styles (Brusilovsky, 1996). Several meta-analyses report higher educational effectiveness of adaptive systems compared to human teacher-led courses (Kulik & Fletcher, 2016). Cogbooks (UK), Knewton (USA), Smart Sparrow (Australia), Knower (South Korea) and Classba (China) are some examples of adaptive learning systems which are data-driven and use intelligent assessment. In the following, we explore the determining role of intelligent assessment in such systems.

Intelligent Assessment: Applying AI to Educational Big Data

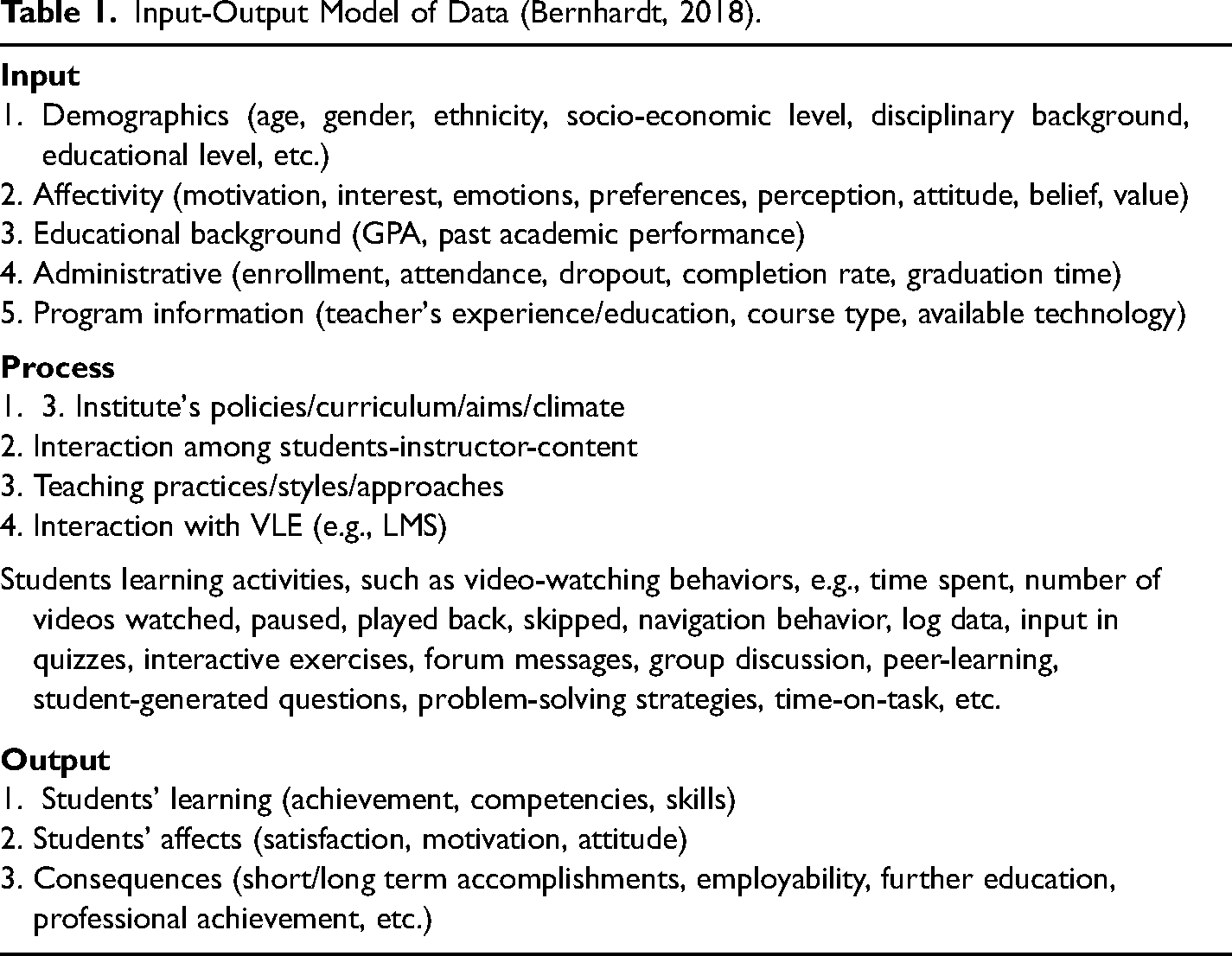

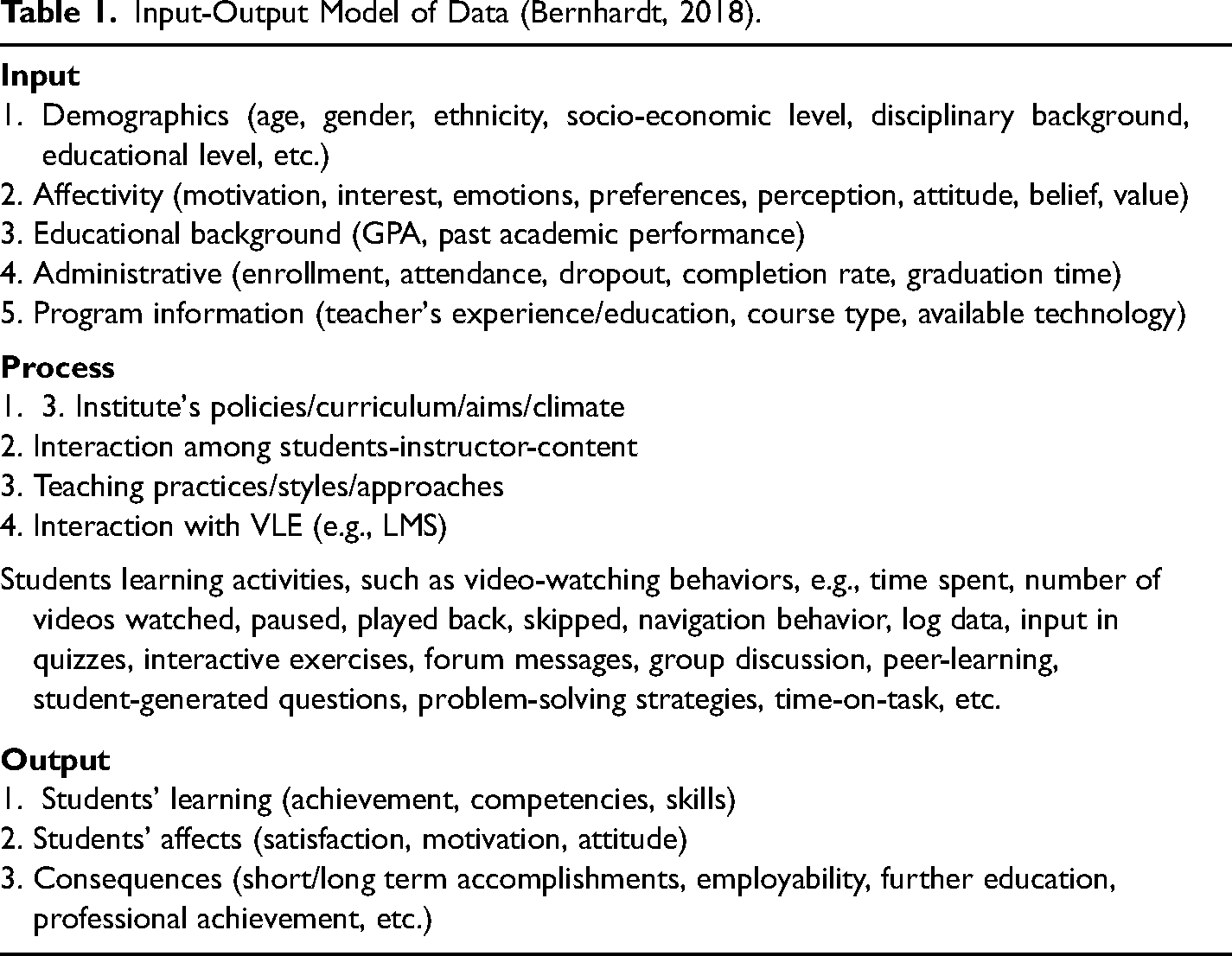

The expansion of ubiquitous learning in digital environments (large OER repositories, augmented/virtual reality, online games, MOOCs, smartphones, etc.) has led to an exponential growth of educational data. Educational data can come from different sources, in different formats and with different levels of granularity. The input-process-output model of data (Bernhardt, 2018) depicts the diversity and richness of educational data (see Table 1).

Input-Output Model of Data (Bernhardt, 2018).

Input-Output Model of Data (Bernhardt, 2018).

Although transforming these data into new insights can benefit students, teachers and administrators, it is almost impossible to analyze such data manually: we need new tools and technology for automatic data collection and analysis (Romero & Ventura, 2020).

Intelligent assessment (McCusker et al., 2013) uses a wide range of AI-based technologies to automatically capture and analyze data related to the quality of learning outcomes, retention, transfer, satisfaction, learning efficiency, motivation, etc. Therefore, intelligent assessment goes beyond evaluating narrow aspects of learning (e.g., knowledge) through traditional tests (e.g., multiple-choice items) and builds a portrait of a student's competencies by connecting data about cognition, emotion, and behavior of learners through more performance-based approaches, e.g., simulation, games, portfolio, virtual reality, etc.

Intelligent assessment or evaluation is used extensively in (a)

For instance, data on affective factors such as ‘emotion, feeling, motivation’ were collected traditionally by human practitioners through questionnaire surveys, self-reports, observation, or interviews. Intelligent evaluation uses technologies such as sensors & wearables (virtual/augmented reality, smart glasses/watches, face reader) to collect ‘process data’ on affective factors during learning. In a study conducted in the naturalistic setting of the classroom, Bosch et al. (2016) applied learning analytics, computer vision, and machine learning to measure and characterize students’ emotional states (affects). The results showed such systems can detect engagement, confusion, boredom, frustration, and joy accurately 98% of the time. Such data can be combined with data from other sources, i.e., neurological data which are gathered directly from the brain by technologies such as fMRI or non-invasive EEG, to triangulate and validate the results.

Deep Learning (DL), also known as Deep Network Learning, is a particular type of ML whose architecture is based on computational models of the brain called Artificial Neural Networks (ANN). DL involves an input layer, an output layer, and multiple connected, hierarchical hidden layers which gradually transform input signals to the desired output. Due to its usage of backward propagation in weighted neural networks, DL has a nondeterministic nature which allows the system to adapt and change through practical experience or training (LeCun et al., 2015). Given a large annotated dataset, DL demonstrates advantages that make it superior to traditional ML, i.e., automatic feature generation from raw data, transfer learning, and highly accurate representation of tasks. We examine three DL models that are used in intelligent assessment, namely Recurrent Neural Networks (RNN), Convolutional Neural Networks (CNNT) and Graph Neural Networks (GNN).

Recurrent Neural Network (RNN)

Convolutional Neural Networks (CNN)

Graph Neural Network (GNN)

RNNs, a variation of the feed-forward neural networks, are a chained set of artificial neurons in which information is propagated recursively over time. Since RNNs can preserve data contexts through current input (e.g., a word) and the information retained previously, they are mostly applied to time-series, sequential data, e.g., clickstream data as students interact with LMS, speech recognition, or sensor data which are produced in real-time or at a high-speed frequency. Such fast-generated data have high-temporal dimensionality causing a challenge for RNNs. Long-short Term Memory (LSTM) and Gated Recurrent Unit (GRU) are two particular variations of RNNs which can handle long-term temporal dependencies and prevent problems such as gradient explosion or vanishing. LSTM is especially suitable for learning complex patterns which require retention and memory, e.g., remembering past or previous performance of students while predicting their performance on future tasks (Marinescu-Muster et al., 2020).

CNN is a multi-layer neural network that can handle multi-dimensional data by applying local convolutional filters to extract features locally (Sun et al., 2021). For example, Qiu et al. (2019) applied a two-dimensional CNN, which can automatically extract the best features from the raw clickstream data, and predicted dropout with more than 86% accuracy. CNN can also be used in automatic scoring: e.g., Radatrmath, a personalized ITS for mathematics education, uses CNN in its output layer to assess and score open-ended questions such as defining factorization, which requires a constructed response (Lu et al., 2021).

Recently, researchers started using Graph Neural Networks (GNN) in MOOCs evaluation. GNN treats the input as a graph, and due to its arbitrary, non-Euclidean, and irregular structure, graphs can richly represent the knowledge among entities, e.g., students and courses, in the real world (Zhang et al., 2020). Furthermore, nodes (representing entities) in graphs can be order-invariant, making GNN a flexible and expressive model in capturing and representing real-time knowledge evolution, e.g., the interaction between the student and online questions in a test. Although GNN is used successfully in areas such as recommender systems and social network analysis, its application in online platforms is relatively new. In a recent study, Lu et al. (2021) applied graphs to determine learning order dynamically and guide the individual learning process.

Knowledge Tracing for Student Modeling

Intelligent Tutoring Systems (ITS) are AI-based computer programs that simulate teacher performance for automatic scoring, providing personalized feedback, learning content, suggestion, or support to individual students during the learning process. They consist of four components: domain model, student model, tutor model and user interface.

Evaluating learners’ knowledge, also called latent knowledge estimation or knowledge inference, is at the heart of both measurement theory and an essential component of ITS called ‘student modeling’ (Koedinger et al., 1997). The aim of student modeling is to track the activities of individual students (e.g., clickstream, time-on-task, number of correct answers, requested hints/help, etc.) to adapt instruction to students’ models. We examine both conventional diagnostic models, e.g., Item Response Theory (IRT, Rasch 1960), and ML-based models, such as Bayesian Knowledge Tracing (BKT, Corbett & Anderson, 1995) and Deep Knowledge Tracing (DKT, Khajah et al., 2016; Piech et al., 2015) that different ITS systems use to model students by inferring what they know.

IRT estimates students’ knowledge based on item parameters (i.e., item facility, item discrimination, and guessing level) and the prior knowledge level. The main flaw with this model is ‘unidimensionality’ or a single skill response mode: it can measure mastery on a single-dimensional construct and fails to capture performance across multiple tasks. Although IRT and its alternative, multi-dimensional model ‘DINA’ (deterministic input, noisy and gate), can be used with a manageable sample size, the rapid growth of public data captured during students’ interaction with digital environments and learning platforms, e.g., Cognitive Tutor and ASSISTmet, led to the provision of millions of complex data points which cannot be analyzed with such models (Yu & Lu, 2021).

BKT is a probabilistic, ML-based model which employs a Hidden Markov Model to track and estimate student knowledge and mastery by considering factors such as difficulty levels of topic, students’ background knowledge (e.g., previous grades) and guessing/errors (Liu & Koedinger, 2017; Yudelson et al., 2013). However, it can only show mastery in a binary value (0 = no mastery, 1 = mastery) of a single knowledge concept and lacks a quantitative representation value. Cognitive Tutor (Pane et al., 2014) is an ITS which uses BKT for students’ modeling. 1

During the past few years, researchers started using Deep Learning algorithms such as Recurrent Neural Networks (RNN) to keep track of sequential data such as changes in students’ knowledge during learning. ALEKS is an adaptive learning and assessment system which uses both RNN models, namely LSTM and GRU, to classify students into groups based on their learning behaviors in real time. ALEKS is an adaptive learning and assessment system in that the student's previous answers affect the choice of the next item, and the system immediately updates its expectation of what items the student knows or doesn’t know. Furthermore, the probabilistic nature of assessment allows for dealing with careless errors and lucky guesses (Matayoshi et al., 2021). In an innovative study, Li et al. (2020) used a combination of graph and convolutional knowledge, called Relational-2-Graph Convolutional Networks, to model students’ knowledge evolution and predict performance on a dynamic student-question interaction pool. Their approach outperforms both traditional machine learning models (i.e., Decision Tree and Logistic Regression) and the classical GCN model in terms of F1 score and accuracy.

Recently, a new generation of deep learning, ‘Transformers’, outperformed RNN models in handling longer sequences of data, e.g., sequences longer than 12,000 (Child et al., 2019). Furthermore, Transformers can be trained more efficiently and faster. These new algorithms demonstrate human-like performance, which makes them very popular in NLP (see BERT and GPT3). Although DKT provides very accurate predictions and students’ modeling, their applications are limited due to limitations such as the need for large datasets (ALEKS uses more than 1.4 million data), stability of prediction, interpretability and transparency (Yeung & Yeung, 2018).

Conclusion: Barriers & Solutions

This paper explored how machine learning methods facilitate PE, intelligent assessment and data-intensive research in education. By using machine learning, especially DL models, in adaptive ITS, the learning paths can be changed dynamically and personalized based on the learner's progress and pace.

The COVID-19 pandemic left a lasting impact on education: it showed that technology will no longer be an add-on, ‘nice-to-have’ thing; rather, it will be forever a substantial, ‘must-have’ part of teaching, learning, and assessment. Therefore, education must change to prepare for a world more deeply infused with ubiquitous and pervasive AI-based technologies (Roschelle et al., 2020). However, there are lots of obstacles, limitations, and barriers which prevent a smooth and easy integration and use of technology in educational settings.

Education and technology Separation: the split and lack of understanding between scholars in education and computer science pose a substantial challenge: educational practitioners and researchers lack relevant knowledge of ML or programming skills (e.g., Python) which is a barrier to using adaptive systems. Computer scientists also need to be aware of learning and educational theories and challenges in designing any adaptive, intelligent, personalized systems, otherwise, the system's potential can not be exploited fully (Cui & Xu, 2019). Webber and Zheng (2020) emphasized the centrality of instructors’ ‘professional belief’ to adopt a pedagogical innovation by asserting that practitioners must believe that the technology is useful (e.g., saving time and resources), applicable (easy-to-use) and flexible to their needs. Moreover, they should see an alignment between technology and their own belief and ability to improve teaching and learning (e.g., using ML-based automatic scoring frees instructors from the burden of manual grading of students’ responses). Data-related risks: serious issues have been raised regarding risks of privacy leakage, data manipulation, security of data management, lack of transparency, lack of model verifiability, algorithmic biases, fairness, etc. Although compliance with data security and privacy guidelines set by GDPR (General Data Protection Regulations) might mitigate some of the above-mentioned risks, still there are issues that need serious consideration, e.g., data ownership: there is no agreed-upon policy about to whom the data belongs (Yu & Lu, 2021). Webber and Zheng (2020, p. 294) suggested ‘If data is truly considered a strategic asset, it must be protected, managed, and leveraged just like other valuable assets on campus.’ Webb et al. (2020) emphasized that the probabilistic nature of neural networks and their complex, multi-layered architecture turn deep learning into a “black box”: it's excessively difficult to access, explain and interpret how the machine made a decision. Explainability is essential to minimize bias and ensure that decisions made based on machine learning are accessible, interpretable, and fair for all. Robustness of deep learning: several studies indicated ML models, especially DNN, are not robust enough: they can easily be fooled by noises or are vulnerable to adversarial attacks (Xu et al., 2021). Higher education resources: Running ML, especially DL, models require considerable infrastructure such as voluminous data, big data warehouses/lakes, computational power, a large number of chips to run analysis. Universities lack such infrastructure resources. It's the responsibility of the federal government to provide academics with the necessary technologies, tools, training, and support (Harris, 2019).

Scholars also suggest diverse measures to ameliorate these limitations, e.g., (a) development of ‘Explainable AI’ (XAI) which is traceable, understandable, transparent, fair, and accountable, (b) involving all stakeholders such as teachers, students, policy-makers, (c) adopting and communicating clear and strong policies regarding ethics, user protection, security, etc. It requires a long-term investment in the Data Literacy of educational stakeholders to establish a culture of evidence that exploits AI-based technologies for data-informed decision-making in education.

Although we cannot expect teachers to develop ML algorithms, they can be provided by ‘Professional Development Programs (PDP)’ to learn how to (a) interpret predictions and learning paths generated by ML, (b) make more targeted, precise pedagogical decisions (e.g., providing timely feedback or scaffolding according to students models), (c) develop a large repertoire of personalized intervention based on ‘evidence-based best practice’ in ML research and (d) to enhance students’ motivation and engagement with such systems. Such PDPs can be supplemented with a ‘Community of Practice (CoP)’, where practitioners get together to share their experiences, challenges, success and learn from each other in an informal, supportive environment. Literature Review

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article