Abstract

Medicaid managed care serves 86 million beneficiaries at $376 billion annually, yet evidence that managed care delivery improves outcomes remains inconclusive, and nearly 40% of Medicaid acute care visits are attributable to ambulatory care-sensitive conditions. State procurement processes create principal-agent relationships in which managed care organizations (MCOs) make performance commitments before contract award, but no research has systematically evaluated whether MCO commitments align with state priorities. We assembled 265 procurement documents from 32 states (2017-2024), extracting 1666 text files. Using retrieval-augmented generation (a method that grounds AI outputs in retrieved document passages) with large language model analysis, we extracted 372 283 performance claims classified into 6 thematic domains. Two doctoral-level coders validated a stratified random sample (Cohen’s kappa: 0.86, 95% CI: 0.81-0.91). We calculated per-file normalized concordance ratios (MCO claims per document divided by state requirements per document, controlling for document volume) and conducted stratified analyses by document type. MCOs generated more claims per file than states specified in every domain, but they disproportionately emphasized technology (mean normalized concordance ratio: 53.9, median: 28.2) and health equity (mean ratio: 22.0, median: 10.3) relative to state requirements, while giving comparatively less emphasis to chronic disease management (mean ratio: 15.8) and workforce development (mean ratio: 14.2). Post-pandemic increases in health equity (10.3-fold) and technology claims were driven primarily by state contract and scoring documents rather than MCO proposals. Only 6 states had sufficient paired data for concordance analysis. Medicaid procurement documents reveal systematic thematic divergence between MCO performance claims and state priorities. The observed patterns may reflect strategic positioning, normal negotiation dynamics, or evolving state procurement frameworks. 
Given that Medicaid beneficiary outcomes remain poor despite widespread managed care adoption, procurement reforms requiring measurable, time-bound performance commitments could improve alignment between organizational claims and care delivery.

Short Article Summary – Layperson Terms

When states hire insurance companies to manage Medicaid health coverage for low-income Americans, the companies make extensive promises about the services they will provide. This study found that they systematically overpromise on politically popular topics like technology and health equity while giving comparatively less attention to everyday care like managing diabetes or having enough doctors in their networks. States should require insurance companies to back up their promises with specific numbers and deadlines rather than accepting vague commitments that sound good but may not translate into better care for the ~80 million Americans who depend on Medicaid.

Keywords

Introduction

Medicaid managed care now serves 86 million beneficiaries across 40 states and the District of Columbia, accounting for $376 billion in annual expenditures. 1 Despite this scale, state oversight of managed care organization (MCO) performance remains inadequate. The Medicaid and CHIP Payment and Access Commission (MACPAC) concluded that existing accountability mechanisms may be insufficient to ensure MCO performance and outcomes, 2 while the Government Accountability Office documented persistent challenges in federal monitoring of state managed care programs. 3

Critically, evidence that managed care delivery improves Medicaid beneficiary outcomes relative to fee-for-service care remains inconclusive. A comprehensive review of studies spanning nearly 2 decades found inconsistent effects on access and quality, with results varying substantially by state and program design, and noted that quality scores for Medicaid managed care enrollees were consistently lower than those for commercially insured populations across multiple measures.4,5 The burden of avoidable acute care utilization among Medicaid beneficiaries remains substantial. Nearly 40% of acute care visits are for ambulatory care-sensitive conditions, that is, conditions for which evidence suggests acute care could often have been prevented through adequate primary and preventive care. These visits represent 12.4% of acute care spending and vary more than 13-fold across counties within states. 6 This rate of avoidable utilization substantially exceeds rates observed in Medicare and commercial populations, underscoring that managed care as currently implemented has not resolved fundamental gaps in primary care access and chronic disease management for Medicaid beneficiaries. These persistent outcome deficits make the procurement process, the primary mechanism through which states define care delivery expectations, a critical but understudied lever for improving care quality.

State procurement processes represent a critical accountability mechanism through which states communicate priorities and MCOs make prospective commitments before contract award. These procurement relationships exemplify classic principal-agent dynamics involving multiple simultaneous tensions.7-9 Information asymmetry creates opportunities for strategic behavior, as MCOs possess private information about their true operational capabilities that states cannot fully verify. Goal conflict emerges because states prioritize beneficiary health outcomes while MCOs must balance population health objectives against financial sustainability under capitated payment structures that transfer substantial actuarial risk. 9 Contract incompleteness, the fact that procurement documents cannot anticipate every future contingency, creates residual discretion that MCOs exercise throughout the contract period. 9 When competing for multi-billion-dollar contracts, MCOs make extensive claims about their capabilities, prior achievements, and commitments to future performance. Following Bovens’ conceptual framework distinguishing accountability proper (which requires answerability and the possibility of sanctions) from performance rhetoric, 10 we term these statements “performance claims” rather than “accountability claims,” recognizing that procurement assertions encompass capability descriptions and aspirational narratives alongside enforceable future commitments.

Three policy developments intensify the urgency of understanding procurement dynamics. First, the Centers for Medicare & Medicaid Services finalized comprehensive managed care regulations in May 2024, establishing enhanced requirements for quality measurement, external quality review transparency, and public reporting of MCO performance.11,12 Second, MACPAC’s March 2025 Report to Congress specifically called for improved external quality review processes and expanded accountability mechanisms in managed care contracting. 13 Third, the 2025 budget reconciliation (HR 1) introduced federal work requirements for Medicaid expansion populations, projected to reduce federal spending by $326 billion over 10 years while potentially disenrolling 5.2 million adults by 2034, 14 creating unprecedented fiscal pressures that amplify accountability concerns.

Recent advances in large language models (LLMs) and retrieval-augmented generation (RAG) architectures enable systematic document corpus analysis at previously infeasible scales.15-17 RAG systems combine document retrieval with language model generation: relevant passages are first retrieved from a document database using similarity search, then provided as context to a language model that extracts structured information grounded in the retrieved text rather than generating content from its training data alone. A recent systematic review of RAG applications in healthcare identified growing adoption for clinical document analysis, regulatory interpretation, and knowledge extraction, while noting limitations including sensitivity to retrieval quality, potential for incomplete passage retrieval, and challenges in validating outputs at scale. 18 RAG architectures can reduce hallucination risk through explicit source attribution,19,20 and, when combined with rigorous human validation, enable reproducible extraction of structured information from large unstructured document collections.

This study addresses 3 research questions. First, what performance claim themes do MCOs emphasize in procurement responses, and how do these themes vary across states, regions, and time periods? Second, when normalized by document volume, to what extent do MCO claims align with state procurement priorities as expressed in RFP requirements? Third, how did MCO performance claims evolve through the COVID-19 pandemic and in response to emerging federal requirements for health equity and social determinants of health?

Methods

Study Design and Oversight

We conducted a mixed-methods document corpus analysis of Medicaid MCO procurement materials from 32 states spanning January 2017 through December 2024. The study is reported in accordance with the Standards for Reporting Qualitative Research (SRQR) guidelines 21 and the TRIPOD+AI guidelines for studies using machine learning methods. 22 The Western Institutional Review Board determined the study exempt from review as secondary analysis of publicly available documents without individual-level data (45 CFR 46.104[d][4]).

Document Corpus Assembly

We systematically identified comprehensive Medicaid managed care RFPs through state Medicaid agency websites, procurement portals, and targeted information requests. Inclusion criteria required: (1) comprehensive managed care RFPs covering full-benefit Medicaid populations; (2) issuance dates between January 2017 and December 2024; (3) availability of either state RFP documents or MCO technical proposals; and (4) substantive content enabling performance claim extraction. Exclusion criteria included: (1) dental-only or behavioral health-only carve-out RFPs that did not cover comprehensive Medicaid populations; (2) documents addressing only administrative services organization arrangements without capitated managed care; (3) documents entirely redacted or containing insufficient text for extraction; and (4) procurement materials from states without active comprehensive managed care programs during the study period.

The 32-state sample reflects document availability rather than purposive selection. Of the 42 states operating Medicaid managed care programs as of 2024, 10 states were excluded because their procurement documents were not publicly accessible through state portals or Freedom of Information Act requests, were fully redacted, or fell outside the study time frame. We compared included and excluded states on Medicaid enrollment, managed care penetration rates, and years of managed care operation (ranging from early adopters such as Arizona in 1982 to recent implementers) and found no statistically significant differences (all P > .10), though we cannot exclude unmeasured systematic differences in procurement transparency practices. Supplemental Appendix A provides full geographic coverage and state characteristics.

Documents were obtained through direct download (78%), procurement portal access (18%), and Freedom of Information Act requests (4%). The final corpus comprised 265 source documents from 32 states, including RFPs (64.5%, n = 171), MCO proposals (13.2%, n = 35), contracts (10.6%, n = 28), scoring documents (4.2%, n = 11), award notices (3.0%, n = 8), and supplemental materials (4.5%, n = 12).

Text Extraction and Processing

Source documents (PDFs, compressed ZIP archives) were extracted into individual text files. Large compressed archives contained multiple proposal sections, contracts, and appendices, expanding 265 source documents into 1666 processed text files (6.29-fold expansion). This processing approach enabled systematic analysis while preserving document structure and attribution.
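The expansion of source documents into processed text files can be sketched as follows. This is a minimal illustration rather than the study's pipeline: the helper names (expand_source, expansion_ratio) are hypothetical, archive members are assumed to be plain text, and PDF-to-text conversion, which would require a separate extraction library, is omitted. Each processed file is labeled with its source archive so that extracted claims remain attributable.

```python
import io
import zipfile


def expand_source(name: str, data: bytes) -> list:
    """Return (file_label, text) pairs for one source document.

    Hypothetical helper: ZIP archives are expanded member by member; any other
    source is treated as a single file. Members are assumed to be plain text;
    PDF-to-text conversion is a separate step not shown here.
    """
    if name.lower().endswith(".zip"):
        processed = []
        with zipfile.ZipFile(io.BytesIO(data)) as archive:
            for info in archive.infolist():
                if info.is_dir():
                    continue  # skip folder entries
                text = archive.read(info).decode("utf-8", errors="replace")
                # Label each member with its archive so claims stay attributable.
                processed.append((f"{name}::{info.filename}", text))
        return processed
    return [(name, data.decode("utf-8", errors="replace"))]


def expansion_ratio(n_sources: int, n_processed: int) -> float:
    """Processed text files per source document."""
    return n_processed / n_sources
```

With the reported counts, expansion_ratio(265, 1666) returns about 6.287, which rounds to the 6.29-fold figure above.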

Definition of Performance Claims

We defined a performance claim as any discrete statement in procurement documents that asserted organizational capability, described past performance achievements, or committed to future performance targets related to health outcomes, quality metrics, service delivery, or population health management. We use the term “performance claims” to distinguish these statements from accountability in the strict sense, which following Bovens’ conceptual framework requires both answerability and the possibility of consequences or sanctions. 10 The statements extracted encompass 3 qualitatively distinct categories: (1) capability assertions describing what an organization can do; (2) past performance narratives documenting historical achievements; and (3) forward-looking commitments to future targets. We recognize that only the third category constitutes accountability in Bovens’ strict sense, while the full set represents performance rhetoric through which MCOs position themselves in the procurement process and states articulate priorities.7,8,23 In procurement practice, all 3 categories inform principal evaluations of agent trustworthiness before contract award, though enforceability and specificity vary considerably.24,25

Claims required specificity regarding: (1) the domain of performance; (2) either quantitative metrics or qualitative descriptors of activities; and (3) attribution to the organization’s actions or partnerships.

Examples of performance claims (verbatim from corpus):

“Reduced health disparities by 8% points for African American members” (health equity, past performance narrative).

“98% of members within 30 miles of primary care provider” (access, capability assertion).

“Complex care management program reduced readmissions by 22%” (care coordination, past performance narrative).

“Provider cultural competency training completed by 100% of network PCPs” (workforce, capability assertion).

Machine Learning-Assisted Extraction

We developed a retrieval-augmented generation system combining dense passage retrieval with structured prompt engineering for claim extraction. In plain terms, this system works by first breaking each document into overlapping text segments, converting those segments into numerical representations that capture their meaning, and then searching for the most relevant segments when extracting claims on a particular topic. The retrieved segments are provided as context to a language model that identifies and classifies specific claims, ensuring that all extracted information is traceable to source text rather than generated from the model’s training data.

This approach aligns with RAG architectures evaluated in a recent systematic review of healthcare applications, which reported that retrieval-grounded generation can reduce hallucination relative to standalone language model inference while maintaining high extraction accuracy for structured information tasks. 18 The architecture embedded document chunks as vector representations (all-MiniLM-L6-v2 sentence transformer, 384-dimensional vectors), indexed them in a ChromaDB vector database to enable semantic search, assembled relevant context for each extraction task, and generated structured outputs via large language model analysis.
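The retrieval step can be illustrated with a small, dependency-free sketch. Two substitutions keep it self-contained, and neither should be read as the study's implementation. First, a hashed bag-of-words vector stands in for the all-MiniLM-L6-v2 embeddings; only the 384-dimension size is shared. Second, an in-memory list ranked by cosine similarity stands in for ChromaDB. The class and function names and the prompt wording in build_prompt are hypothetical.

```python
import hashlib
import math
import re

DIM = 384  # same size as all-MiniLM-L6-v2 vectors; the embedding itself is a toy stand-in


def toy_embed(text: str, dim: int = DIM) -> list:
    """Hashed bag-of-words vector, L2-normalized. NOT a trained sentence encoder."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def cosine(a: list, b: list) -> float:
    # Both vectors are unit length, so the dot product equals cosine similarity.
    return sum(x * y for x, y in zip(a, b))


class ToyVectorIndex:
    """In-memory stand-in for a vector database such as ChromaDB."""

    def __init__(self):
        self.entries = []  # (chunk_id, text, vector)

    def add(self, chunk_id: str, text: str) -> None:
        self.entries.append((chunk_id, text, toy_embed(text)))

    def query(self, request: str, k: int = 3) -> list:
        """Return the k chunks most similar to the request as (chunk_id, text)."""
        q = toy_embed(request)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[2]), reverse=True)
        return [(chunk_id, text) for chunk_id, text, _ in ranked[:k]]


def build_prompt(task: str, passages: list) -> str:
    """Place retrieved passages, tagged by chunk id, ahead of the extraction task."""
    context = "\n\n".join(f"[{chunk_id}] {text}" for chunk_id, text in passages)
    return f"Context:\n{context}\n\nTask: {task}\nQuote each claim verbatim from the context."
```

In the architecture described above, the request would be a theme-specific extraction query, and the top-ranked chunks would be passed to the language model with their identifiers. Replacing toy_embed with a trained sentence encoder would change only the embedding step.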

We used Claude Sonnet 4.5 (claude-sonnet-4-5-20250929, Anthropic) as the primary extraction model. We selected this model based on comparative evaluation of 3 candidate models (Claude Sonnet 4.5, GPT-4, and Gemini 1.5 Pro) on a development set of 50 manually coded document sections, where Claude Sonnet 4.5 achieved the highest F1 score (0.91) for claim extraction and thematic classification. Key advantages included its 200 000-token context window enabling processing of long documents without truncation, strong instruction-following for structured JSON extraction, and lower hallucination rates in preliminary testing on procurement-specific language. 26

Known limitations of RAG approaches relevant to this analysis include sensitivity to chunk size and overlap parameters (claims spanning chunk boundaries may be missed or duplicated), potential retrieval failures when semantically relevant passages use divergent terminology, and the general challenge that extraction accuracy depends jointly on the quality of both retrieval and generation components. 18 We addressed these through overlapping chunk design (500-character overlap), comprehensive prompt engineering, and systematic human validation (described below).
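Overlapping chunking of the kind described above can be sketched as follows. The 500-character overlap comes from the text. The 2000-character chunk size is a hypothetical placeholder because the chunk length is not reported, and chunk_text is an illustrative name. Returning each chunk's start offset keeps extracted claims traceable to their position in the source file.

```python
def chunk_text(text: str, chunk_size: int = 2000, overlap: int = 500) -> list:
    """Split text into overlapping character windows as (start_offset, chunk) pairs.

    overlap=500 follows the Methods; chunk_size=2000 is a hypothetical default
    because the study does not report its chunk length.
    """
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    if not text:
        return []
    step = chunk_size - overlap  # each window starts this many characters after the last
    chunks = []
    start = 0
    while True:
        chunks.append((start, text[start:start + chunk_size]))
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the document
        start += step
    return chunks
```

Because consecutive windows share 500 characters, any span of up to 500 characters falls entirely inside at least one chunk. This includes every claim within the 300-character verbatim limit. The trade-off is that spans inside an overlap can be extracted twice, so deduplication is still needed.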

The extraction prompt instructed the model to identify statements containing assertions about organizational capabilities, performance metrics, or service delivery commitments. For each claim, the system extracted verbatim text (maximum 300 characters), thematic domain classification, clinical or population subcategory, temporal reference (historical, current, or projected), and any cited evidence or partnerships. Output followed a predefined JSON schema, enabling systematic aggregation.
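A schema check of this kind can be sketched in a few lines. The field names and types below are hypothetical because the study's JSON keys are not reported. The allowed themes are the six domains listed in the Methods, the temporal references follow the three values named above, and the 300-character cap matches the verbatim limit. Outputs that fail any check are rejected rather than coerced into a category.

```python
import json

# The six thematic domains listed in the Methods.
THEMES = {
    "Chronic Disease Management",
    "Health Equity",
    "Long-Term Services and Supports/Dual Eligibles",
    "Social Determinants of Health",
    "Technology and Digital Health",
    "Workforce Development",
}
TEMPORAL_REFERENCES = {"historical", "current", "projected"}
MAX_VERBATIM_CHARS = 300

# Hypothetical field names and types; the study's actual JSON keys are not reported.
REQUIRED_FIELDS = {
    "verbatim_text": str,
    "theme": str,
    "subcategory": str,
    "temporal_reference": str,
    "evidence": list,  # cited evidence or partnerships, possibly empty
}


def validate_claim(raw_output: str) -> dict:
    """Parse one model output and reject anything outside the assumed schema."""
    claim = json.loads(raw_output)  # malformed JSON raises a ValueError subclass
    if not isinstance(claim, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(claim.get(field), expected_type):
            raise ValueError(f"missing or mistyped field: {field}")
    if len(claim["verbatim_text"]) > MAX_VERBATIM_CHARS:
        raise ValueError("verbatim_text exceeds 300 characters")
    if claim["theme"] not in THEMES:
        raise ValueError(f"unknown theme: {claim['theme']}")
    if claim["temporal_reference"] not in TEMPORAL_REFERENCES:
        raise ValueError(f"unknown temporal reference: {claim['temporal_reference']}")
    return claim
```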

Hallucination Mitigation

We implemented multiple safeguards against LLM hallucination, following emerging best practices for AI-assisted qualitative research.27,28 First, the RAG architecture grounded all extracted claims in retrieved document passages with explicit source attribution, reducing the risk that the model would generate claims not present in the source documents. Second, we required verbatim text extraction (≤300 characters) rather than paraphrasing, enabling direct verification against source documents. Third, structured JSON output with predefined schema fields constrained model responses to valid categories. Fourth, temperature was set to 0.0 to minimize run-to-run variability in outputs. Fifth, extracted claims were systematically cross-referenced against source document page numbers and passage locations. Sixth, a 10% random sample of extracted claims underwent manual verification against original documents, achieving 97.3% accuracy for source attribution and verbatim fidelity.
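The verbatim-fidelity and sampling safeguards can be sketched as below. This is an illustrative approach, not the authors' code. is_grounded treats a claim as grounded only if its whitespace-normalized text appears as an exact span of the retrieved passage. The normalization is an assumption, intended to absorb line breaks introduced by PDF extraction. verification_sample draws a seeded simple random sample of 10% of claim identifiers for manual review.

```python
import random
import re


def _normalize(s: str) -> str:
    # Collapse runs of whitespace (including PDF line breaks) to single spaces.
    return re.sub(r"\s+", " ", s).strip()


def is_grounded(claim_text: str, passage: str, max_len: int = 300) -> bool:
    """True if the claim is a verbatim (whitespace-normalized) span of its source passage."""
    text = _normalize(claim_text)
    return 0 < len(text) <= max_len and text in _normalize(passage)


def verification_sample(claim_ids: list, fraction: float = 0.10, seed: int = 0) -> list:
    """Seeded simple random sample of claim ids for manual source verification."""
    k = max(1, round(len(claim_ids) * fraction))
    return random.Random(seed).sample(claim_ids, k)
```

An exact-span check is deliberately strict: any paraphrase, even a single changed digit, fails and would be routed to manual review.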

Claims were classified into 6 primary thematic domains identified through iterative grounded theory analysis: (1) Chronic Disease Management; (2) Health Equity; (3) Long-Term Services and Supports/Dual Eligibles; (4) Social Determinants of Health; (5) Technology and Digital Health; and (6) Workforce Development.

Human Validation

Two independent coders with doctoral degrees in health services research coded a stratified random sample of 200 document sections, blinded to machine-generated outputs. Stratification ensured representation across states, years, MCO parent companies, and claim types. Inter-rater reliability achieved Cohen’s kappa of 0.86 (95% CI: 0.81-0.91) for thematic domain classification. Machine learning sensitivity for claim detection reached 0.89 (95% CI: 0.84-0.93) and specificity 0.94 (95% CI: 0.90-0.97) compared to human consensus coding.
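For readers reproducing the validation metrics, Cohen's kappa and claim-detection sensitivity and specificity can be computed as sketched below. The function names are illustrative. The confidence intervals reported above are not reproduced because the interval method is not stated.

```python
from collections import Counter


def cohens_kappa(rater_a: list, rater_b: list) -> float:
    """Cohen's kappa for two raters assigning one nominal label per item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal label proportions.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


def sensitivity_specificity(machine: list, reference: list) -> tuple:
    """Claim-detection sensitivity and specificity against consensus labels (True = claim)."""
    pairs = list(zip(machine, reference))
    tp = sum(1 for m, r in pairs if m and r)
    fn = sum(1 for m, r in pairs if not m and r)
    tn = sum(1 for m, r in pairs if not m and not r)
    fp = sum(1 for m, r in pairs if m and not r)
    return tp / (tp + fn), tn / (tn + fp)
```

Consider two coders who agree on 3 of 4 sections, with marginal label proportions of 2/2 for one coder and 1/3 for the other. Their chance agreement is 0.5, so kappa = (0.75 - 0.5)/(1 - 0.5) = 0.5.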

Per-File Normalized Concordance Analysis

For each state procurement, we separately extracted claims from state-issued RFP documents and from MCO response documents. To address the concern that raw claim counts confound volume with emphasis, we calculated per-file normalized concordance ratios—that is, the ratio of MCO claims per proposal document to state requirements per RFP document, which controls for differences in document volume across states and enables comparison of thematic emphasis independent of how many documents each state produced.

We first counted the number of files by state and document type (RFP vs proposal). We then calculated claims per file for both RFPs and MCO proposals. Finally, we calculated normalized concordance ratios by dividing MCO claims per file by RFP claims per file within each thematic domain for each state. Normalized ratios greater than 1.0 indicated MCO overemphasis relative to RFP requirements when controlling for document volume; ratios below 1.0 indicated underemphasis. We aggregated ratios across states using inverse-variance weighting to account for varying document volumes.
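The following notation is ours, not the authors'. Let C^{MCO}_{s,d} and C^{RFP}_{s,d} be the numbers of claims in domain d extracted from state s's MCO proposals and RFPs, respectively, and let F^{MCO}_s and F^{RFP}_s be the numbers of processed files of each type for that state. The per-state ratio and its pooled value can then be written as below. The variance estimator behind the weights is not reported, so it is left unspecified here.

```latex
r_{s,d} = \frac{C^{\mathrm{MCO}}_{s,d} / F^{\mathrm{MCO}}_{s}}{C^{\mathrm{RFP}}_{s,d} / F^{\mathrm{RFP}}_{s}},
\qquad
\bar{r}_{d} = \frac{\sum_{s} w_{s,d}\, r_{s,d}}{\sum_{s} w_{s,d}},
\qquad
w_{s,d} = \frac{1}{\widehat{\operatorname{Var}}\left(r_{s,d}\right)}
```

For instance, hypothetical counts of 400 MCO technology claims across 4 proposal files and 50 RFP technology claims across 2 RFP files give r = (400/4)/(50/2) = 100/25 = 4. This corresponds to fourfold MCO overemphasis after controlling for document volume.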

Only 6 states had sufficient paired RFP and MCO proposal documents with adequate claim volumes—defined as at least 100 performance claims in both the RFP and MCO document sets for a given state—for meaningful normalized concordance analysis. This threshold ensured that calculated ratios reflected substantive thematic patterns rather than small-sample instability. We note that states typically issue a single RFP applicable to all MCOs, while each MCO submits its own proposal; we therefore normalized by file count within each document type to account for this structural asymmetry.
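The eligibility rule and pooling can be sketched as follows. The 100-claim threshold comes from the text; everything else is an assumption. In particular, because the weighting variance is not reported, the sketch uses a delta-method approximation that treats claim counts as Poisson, Var(r) ≈ r²(1/C_MCO + 1/C_RFP). It also skips state-domain cells with zero claims, where the ratio is undefined. The data layout and function names are hypothetical.

```python
MIN_CLAIMS = 100  # eligibility threshold stated in the Methods


def eligible(state: dict) -> bool:
    """At least 100 claims in both the RFP and the MCO document sets."""
    return (sum(state["rfp_claims"].values()) >= MIN_CLAIMS
            and sum(state["mco_claims"].values()) >= MIN_CLAIMS)


def state_ratio(state: dict, domain: str):
    """Per-file normalized ratio and an ASSUMED variance for one state and domain."""
    c_mco = state["mco_claims"].get(domain, 0)
    c_rfp = state["rfp_claims"].get(domain, 0)
    if c_mco == 0 or c_rfp == 0:
        return None  # ratio or its variance is undefined for this cell
    ratio = (c_mco / state["mco_files"]) / (c_rfp / state["rfp_files"])
    # Assumption: Poisson counts, so Var(log r) ~ 1/c_mco + 1/c_rfp and,
    # by the delta method, Var(r) ~ r**2 * (1/c_mco + 1/c_rfp).
    variance = ratio ** 2 * (1 / c_mco + 1 / c_rfp)
    return ratio, variance


def pooled_ratio(states: dict, domain: str) -> float:
    """Inverse-variance weighted mean of per-state ratios across eligible states."""
    weighted_sum = total_weight = 0.0
    for state in states.values():
        if not eligible(state):
            continue
        result = state_ratio(state, domain)
        if result is None:
            continue
        ratio, variance = result
        weight = 1 / variance
        weighted_sum += weight * ratio
        total_weight += weight
    if total_weight == 0:
        raise ValueError(f"no eligible states with claims in domain {domain!r}")
    return weighted_sum / total_weight
```

Under this variance model, states with larger claim counts and smaller ratios receive more weight. The pooled value can therefore differ substantially from the unweighted mean of per-state ratios.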

Stratified Temporal and Regional Analyses

We selected the onset of the COVID-19 pandemic (March 2020) as the temporal cutpoint for pre/post comparisons because the pandemic catalyzed several concurrent policy shifts relevant to Medicaid procurement: rapid telehealth expansion under emergency waivers, heightened attention to health equity following disparate pandemic mortality, emergency continuous enrollment provisions that expanded Medicaid rolls to a historic 94 million enrollees, and CMS’s 2022 Framework for Health Equity. 29 These converging forces would be expected to alter both state procurement priorities and MCO response strategies, making this a substantively motivated analytic boundary rather than an arbitrary temporal division.

To address the concern that temporal and regional patterns might be driven by the changing composition of document types rather than by substantive differences in priorities, we conducted stratified analyses that separated state RFPs, MCO proposals, and other documents (contracts, scoring materials, amendments). This stratification matters because each document type serves a distinct function in the procurement ecosystem: RFPs represent state-articulated priorities, MCO proposals represent organizational positioning in competitive bidding, and other documents represent the post-award regulatory environment that evolves through negotiation and policy development. Interpreting procurement dynamics therefore requires knowing which document types drive observed patterns.

Statistical Analysis

We calculated descriptive statistics for claim frequencies with 95% confidence intervals using the Clopper-Pearson method. We assessed temporal trends using Cochran-Armitage tests for linear trend. We compared pre-COVID (2017-2019) versus COVID/post-COVID (2020-2024) periods using chi-square tests. We evaluated regional variation using chi-square tests with Bonferroni correction for multiple comparisons. Statistical analyses used R version 4.3.1 with significance threshold P < .05.
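The Cochran-Armitage test for linear trend can be sketched with the standard library as below, using the usual asymptotic normal approximation. The function name and the default equally spaced scores (for example, one score per year) are illustrative assumptions. The study's analyses were run in R, and how years were scored is not reported.

```python
import math


def cochran_armitage(successes: list, totals: list, scores: list = None) -> tuple:
    """Two-sided Cochran-Armitage test for a linear trend in proportions.

    successes[i]: claims in the theme of interest in ordered group i (e.g., a year)
    totals[i]:    all claims in group i
    scores:       group scores; defaults to 0, 1, 2, ...
    Returns (z, p_value).
    """
    if scores is None:
        scores = list(range(len(totals)))
    n_total = sum(totals)
    p_bar = sum(successes) / n_total  # pooled proportion under the null
    # Score-weighted sum of observed minus expected successes.
    t_stat = sum(t * (r - n * p_bar) for t, r, n in zip(scores, successes, totals))
    s1 = sum(n * t for n, t in zip(totals, scores))
    s2 = sum(n * t * t for n, t in zip(totals, scores))
    variance = p_bar * (1 - p_bar) * (s2 - s1 * s1 / n_total)
    if variance <= 0:
        raise ValueError("test undefined: no variation in outcome or scores")
    z = t_stat / math.sqrt(variance)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p_value
```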

Results

Thematic Distribution of Performance Claims

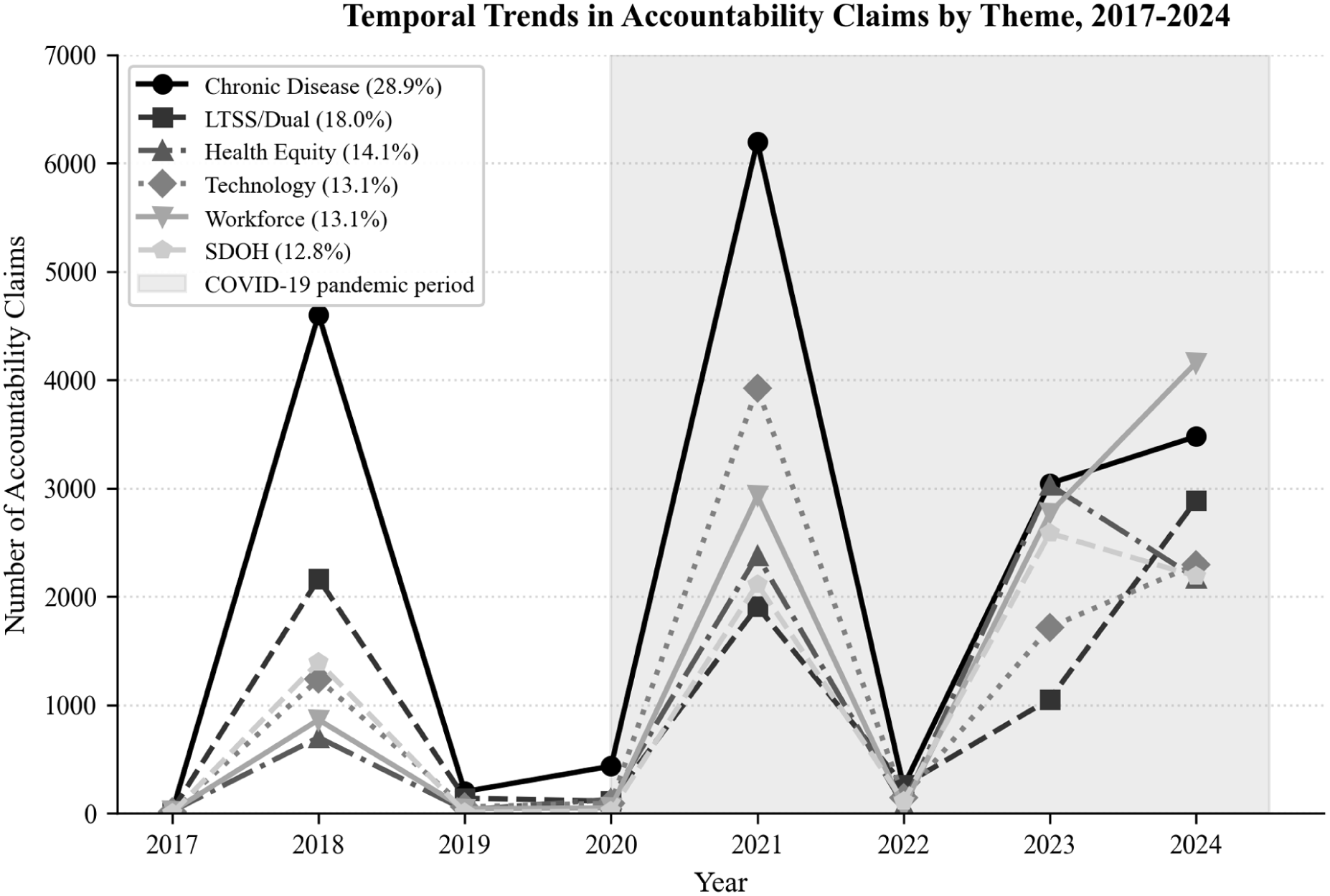

Analysis identified 372 283 performance claims across 1666 processed text files from 265 source documents representing 32 states (Table 1). Chronic disease management comprised the largest thematic category (28.9%, n = 107 450), followed by long-term services and supports/dual eligibles (18.0%, n = 67 170), health equity (14.1%, n = 52 528), technology and digital health (13.1%, n = 48 754), workforce development (13.1%, n = 48 623), and social determinants of health (12.8%, n = 47 758).

Study Population Characteristics: Medicaid Managed Care Procurement Documents, 32 States, 2017 to 2024.

Source. Authors’ analysis of 265 procurement documents from 32 state Medicaid agencies, January 2017 through December 2024.

Source documents were obtained through state procurement portals (78%), direct agency access (18%), and Freedom of Information Act requests (4%). Large compressed archives (ZIP files) containing multiple proposal sections, contracts, and appendices expanded 265 source documents into 1666 processed text files (6.29-fold expansion ratio).

Regional classification follows US Census Bureau definitions. States with paired data for normalized concordance analysis: 6 states (Arizona, California, Colorado, Hawaii, Indiana, New Mexico).

The predominance of chronic disease management claims is notable given the substantial burden of avoidable utilization among Medicaid beneficiaries. With nearly 40% of acute care visits attributable to ambulatory care-sensitive conditions, 6 the 28.9% share suggests that procurement language at least partially reflects the clinical priority of chronic disease management. The thematic distribution alone, however, does not indicate whether individual claims contain specific, measurable commitments or general aspirational language.

Within themes, provider recruitment constituted the single most frequent claim subcategory (10.8% of all claims, n = 40 241, present in all 32 states), followed by long-term services and supports programs (10.2%, n = 38 097), behavioral health services (9.5%, n = 35 497), and racial/ethnic health disparities (7.1%, n = 26 570; Table 2).

Theme Taxonomy and Subcategory Distribution of Performance Claims.

Source. Authors’ analysis of 372 283 performance claims extracted from 265 procurement documents across 32 states using retrieval-augmented generation with large language model analysis.

Inter-rater reliability for thematic classification achieved Cohen’s kappa = 0.86 (95% CI: 0.81-0.91) based on independent coding of 200 stratified random document sections by 2 doctoral-level health services researchers.

Per-File Normalized Concordance Analysis

Only 6 states had sufficient paired RFP and MCO proposal documents with adequate claim volumes (at least 100 claims in both document types) for meaningful normalized concordance analysis (Arizona, California, Colorado, Hawaii, Indiana, New Mexico), down from 13 states in preliminary analyses that used raw counts without file normalization.

When normalized by file count (that is, comparing the number of claims MCOs made per proposal document with the number of requirements states specified per RFP document), technology claims showed the most extreme MCO overemphasis (mean normalized concordance ratio: 53.9, median: 28.2, SD: 65.5). On average across the 6 states, MCOs generated 53.9 technology claims per proposal file for every technology requirement states specified per RFP file (Table 3, Figure 1). Health equity claims also showed substantial overemphasis (mean ratio: 22.0, median: 10.3, SD: 28.1). Social determinants of health claims showed moderate overemphasis (mean ratio: 18.9, median: 8.5, SD: 29.9).

Per-File Normalized Concordance Ratios: MCO Response Alignment With State RFP Requirements.

Source. Authors’ analysis of 6 states with paired Request for Proposal and MCO technical proposal documents from the same procurement cycle.

Normalized concordance ratios were calculated as (MCO claims per file)/(RFP claims per file) within each thematic domain for each state, then aggregated across states using inverse-variance weighting. Ratios represent how many times as often MCOs address a theme per proposal document as state RFPs specify it per RFP document. For example, the mean technology ratio of 53.9 indicates that MCOs generated 53.9 times as many technology claims per proposal file as states specified per RFP file. Equivalently, for each technology requirement per RFP file, MCO proposals contained approximately 54 technology claims per proposal file. High standard deviations reflect substantial heterogeneity across states in procurement priorities and MCO response strategies. The analysis is limited to 6 states with sufficient paired RFP and MCO proposal documents with adequate claim volumes, defined as at least 100 performance claims in both document types (Arizona, California, Colorado, Hawaii, Indiana, New Mexico).

Per-file normalized concordance ratios by theme.

Conversely, chronic disease management showed moderate relative emphasis (mean ratio: 15.8, median: 10.3, SD: 18.6). Workforce development had the lowest mean ratio (14.2, median: 5.2, SD: 17.8), and LTSS/dual eligibles had the lowest median ratio (mean ratio: 16.1, median: 3.8, SD: 23.2). Notably, all ratios exceeded 1.0, indicating that MCOs generated more claims per proposal file than states specified per RFP file in every theme. The key finding is therefore the relative ordering: technology and equity claims received disproportionately more emphasis than chronic disease management and workforce development. The large standard deviations reflect substantial heterogeneity across states in procurement priorities and MCO response strategies.

Temporal Evolution Stratified by Document Type

Stratified temporal analysis revealed that the dramatic post-pandemic increases in performance claims across all themes were driven primarily by “Other” documents (contracts, scoring materials, amendments) rather than by RFPs or MCO proposals (Figure 2). For health equity claims, Other documents increased 34.2-fold (from 127 to 4341 claims, P < .001) and RFP documents increased 6.3-fold (from 526 to 3303 claims, P < .001). In contrast, MCO proposals increased only 1.4-fold (from 99 to 135 claims, P = .18). For technology claims, Other documents increased 68.1-fold (from 94 to 6398 claims, P < .001) and RFP documents increased 1.7-fold (from 976 to 1671 claims, P < .001). In contrast, MCO proposals decreased by 56% (from 241 to 106 claims, a 0.44-fold change, P = .02).
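The fold changes above can be recomputed directly from the reported pre- and post-pandemic counts, as sketched below. The MCO technology change is clearer as a ratio (106/241 ≈ 0.44-fold) or a percentage (about a 56% decrease) than as a negative fold change. Pooling the three document-type strata for health equity gives 7779/752 ≈ 10.3-fold, which matches the Abstract, assuming the three strata together cover all documents. The dictionary layout and function names are illustrative.

```python
# Pre- vs post-pandemic claim counts as reported in the Results: (pre, post).
COUNTS = {
    "health equity": {"Other": (127, 4341), "RFP": (526, 3303), "MCO proposal": (99, 135)},
    "technology": {"Other": (94, 6398), "RFP": (976, 1671), "MCO proposal": (241, 106)},
}


def fold_change(pre: int, post: int) -> float:
    """Ratio of post-period to pre-period counts (below 1 means a decrease)."""
    return post / pre


def percent_change(pre: int, post: int) -> float:
    return 100.0 * (post - pre) / pre


for theme, strata in COUNTS.items():
    for doc_type, (pre, post) in strata.items():
        print(f"{theme:13s} {doc_type:12s} {fold_change(pre, post):6.2f}-fold "
              f"({percent_change(pre, post):+.0f}%)")
    # Pool all document types to obtain the aggregate change for the theme.
    pre_total = sum(pre for pre, _ in strata.values())
    post_total = sum(post for _, post in strata.values())
    print(f"{theme:13s} {'all types':12s} {fold_change(pre_total, post_total):6.2f}-fold")
```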

Temporal trends in performance claims stratified by document type, 2017 to 2024.

These divergent patterns across document types suggest that post-pandemic increases in health equity and technology performance claims reflect evolving state procurement frameworks and contract specifications rather than strategic MCO positioning in competitive proposals. The “Other” documents (contracts, scoring criteria, and amendments) represent state-issued requirements with which MCOs must comply. The sharp increases in these documents suggest that states actively embedded new equity and technology priorities into the post-award contractual environment following pandemic-era policy shifts. Because aggregate trends obscure which actors drive thematic evolution, stratified analyses are essential when evaluating procurement dynamics.

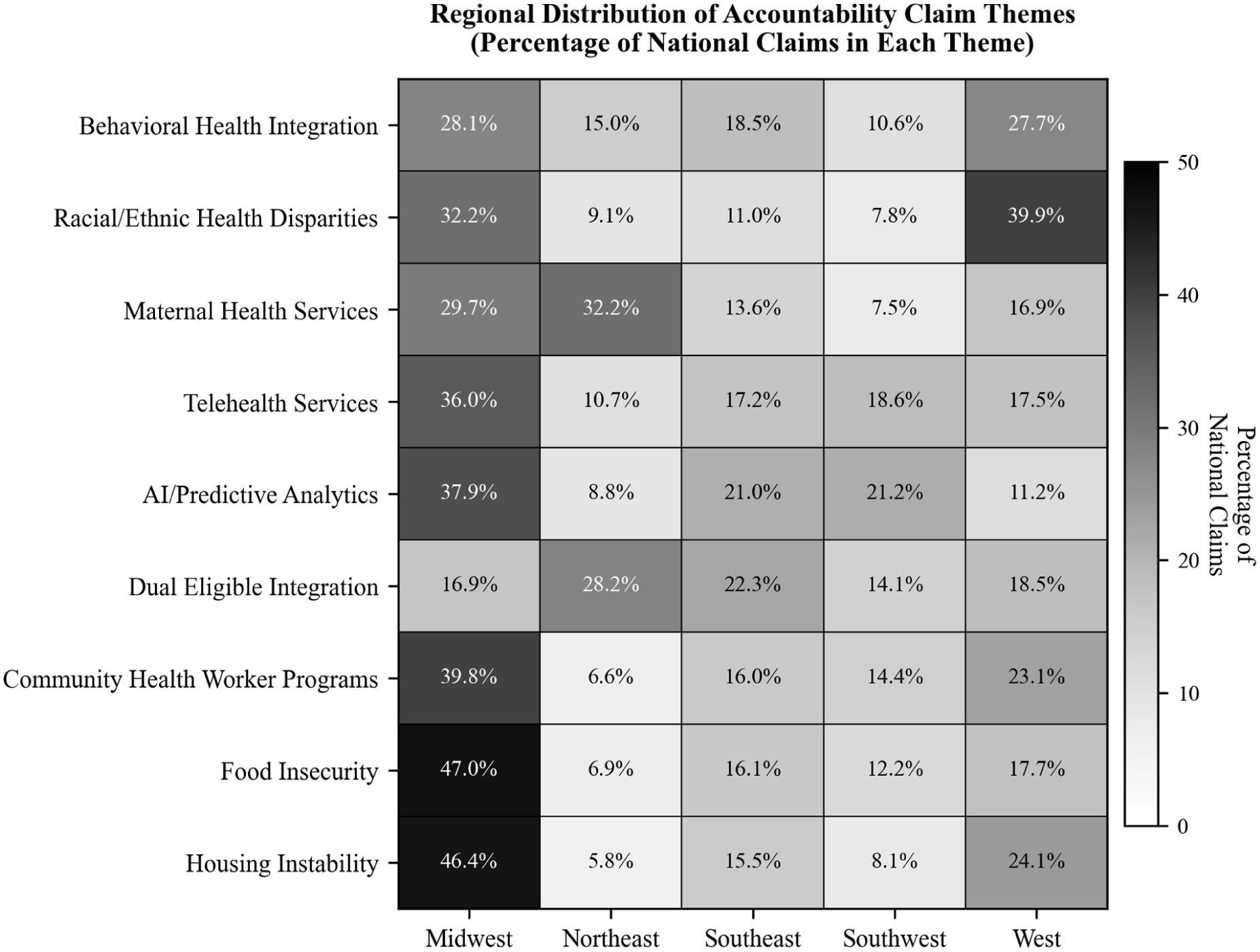

Regional Variation

Regional analysis encompassed all document types (RFPs, MCO proposals, and Other documents) and revealed substantial geographic variation, with patterns remaining consistent when stratified by document category. Midwest states led all regions in LTSS claims (12 958 claims in Other documents) and provider recruitment claims (12 580 claims). Western states prioritized racial/ethnic health disparities (10 604 claims in Other documents, representing 39.9% of all such claims nationally) and social isolation interventions (9764 claims). Southeast states emphasized LTSS (9773 claims) and nursing facility quality (3422 claims). Northeast states showed a strong maternal health focus (6600 claims, 32.2% of national maternal health claims) and dual eligible integration (2447 claims, 28.2% of the national total). The consistency of regional patterns across RFPs, MCO proposals, and Other documents suggests that geographic priorities are embedded throughout procurement ecosystems. These priorities appear to reflect distinct state policy environments, population health priorities, and Medicaid program structures rather than document-type-specific variation (Figure 3).

Regional distribution of performance claims by theme.

Discussion

This study represents the first large-scale systematic analysis of MCO performance claims across state Medicaid procurement cycles using per-file normalization to control for document volume confounding. Three findings merit particular attention.

First, even when normalized by file count, MCOs generate substantially more claims per document about technology (53.9-fold) and health equity (22.0-fold) relative to state RFP specifications than about chronic disease management (15.8-fold) and workforce development (14.2-fold). This thematic divergence is particularly notable given that Medicaid beneficiaries continue to experience disproportionately high rates of avoidable acute care utilization—nearly 40% of acute care visits for ambulatory care-sensitive conditions 6 —and that comprehensive reviews have found no conclusive evidence that managed care delivery has improved these outcomes relative to fee-for-service Medicaid.4,5 If procurement processes fail to prioritize the operational domains most directly linked to reducing avoidable utilization—chronic disease management, primary care workforce, and care coordination—it is unlikely that managed care contracting alone will produce the outcome improvements that justify its administrative cost and complexity.

Second, temporal increases in politically salient themes (health equity, technology, SDOH) are driven primarily by evolving state procurement frameworks reflected in contracts and scoring documents, not by MCO proposal strategies. Third, only 6 states had sufficient paired data for robust normalized concordance analysis, revealing profound limitations in data availability for procurement research.

Interpretation Through Principal-Agent Theory

The normalized concordance patterns are consistent with strategic behavior as described by principal-agent theory, although other mechanisms could also produce them. Eisenhardt’s canonical framework identifies 3 core tensions in agency relationships: information asymmetry, goal conflict, and differential risk bearing.9 All 3 operate in Medicaid procurement. MCOs (agents) possess private information about their true capabilities and operational intentions that states (principals) cannot fully verify before contract award. Goal conflict arises because states pursue population health outcomes while MCOs must balance clinical objectives against financial sustainability under capitated payment, which transfers substantial actuarial risk from states to plans.9 Contract incompleteness, which is inherent in complex multi-year managed care agreements, leaves MCOs considerable discretion to allocate resources across domains after contract award.

Within this framework, the disproportionate emphasis on technology and health equity claims is consistent with, though not proof of, strategic positioning. Technology and health equity claims may carry reputational benefits with fewer immediate operational requirements, whereas chronic disease management and workforce development require sustained infrastructure investment and produce directly measurable outcomes. However, all concordance ratios exceed 1.0 (MCO documents contain more claims per file than state documents in every domain), and temporal increases were driven by state-issued documents rather than MCO proposals. Both observations complicate simple attributions of strategic intent to MCOs.

Alternative Explanations

Several alternative explanations for the observed thematic divergence merit consideration. First, divergence between RFP specifications and MCO proposals may partly reflect normal negotiation dynamics: states and MCOs typically negotiate before contracts are finalized, and some thematic differences may represent standard bargaining rather than strategic omission.30 Second, in states where few MCOs compete for contracts, market power imbalances may enable MCOs to emphasize preferred themes rather than conform to state priorities. In that case the patterns would reflect the structure of the managed care market rather than deliberate positioning.4 Third, MCOs may genuinely possess stronger capabilities in technology and health equity, domains in which investment has accelerated industry-wide, than in chronic disease management and workforce development, such that the observed patterns reflect authentic organizational strengths. Fourth, our finding that temporal increases were driven primarily by Other documents (contracts, scoring criteria) rather than MCO proposals suggests that procurement ecosystems evolve through iterative, state-driven policy development, so observed thematic patterns may reflect state policy priorities as much as MCO strategy. Document analysis alone cannot adjudicate among these explanations. Doing so would require supplementary evidence, such as MCO performance reports, budget allocations, or interviews with procurement officials, that directly connects language patterns to operational resource allocation.

Implications of Stratified Temporal Analysis

The stratified temporal findings indicate that the COVID-19 pandemic marked a substantive shift in procurement priorities, driven largely by state action. The pandemic catalyzed emergency telehealth expansion, exposed health equity gaps through disparate mortality, and prompted CMS’s 2022 Health Equity Framework,29 all of which were subsequently embedded in state procurement frameworks. Health equity claims increased 10.3-fold overall, but this reflected a 34.2-fold increase in Other documents (contracts, scoring criteria) versus only a 1.4-fold increase in MCO proposals. Technology claims showed a similar pattern: a 68.1-fold increase in Other documents but an actual decrease in MCO proposals.
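A simple identity explains how a pooled fold change relates to stratum-specific ones. The pooled fold change equals a weighted average of the stratum fold changes, with each stratum weighted by its share of pre-pandemic claims. This assumes that fold changes are ratios of post- to pre-pandemic counts summed across strata. The section does not state whether temporal fold changes were computed on raw or per-file counts, so that part is our assumption. The function names and counts below are hypothetical.

```python
def fold_change(pre: float, post: float) -> float:
    """Post-period count divided by pre-period count."""
    if pre <= 0:
        raise ValueError("pre-period count must be positive")
    return post / pre


def decompose(strata: dict[str, tuple[float, float]]) -> tuple[float, dict[str, tuple[float, float]]]:
    """Return the pooled fold change and, per stratum, (pre-period weight, stratum fold change).

    Identity: pooled == sum(weight * stratum_fold_change) over strata.
    """
    total_pre = sum(pre for pre, _ in strata.values())
    total_post = sum(post for _, post in strata.values())
    pooled = fold_change(total_pre, total_post)
    parts = {
        name: (pre / total_pre, fold_change(pre, post))
        for name, (pre, post) in strata.items()
    }
    return pooled, parts


# Hypothetical (pre, post) claim counts for one theme.
pooled, parts = decompose({"MCO proposals": (100, 150), "Other documents": (20, 600)})
print(pooled)  # 6.25
for name, (weight, fc) in parts.items():
    print(f"{name}: weight={weight:.3f}, fold change={fc:.1f}")
```

In this example, the Other-documents stratum holds only one-sixth of pre-period claims but contributes 5.0 of the pooled 6.25. This is the sense in which a single stratum can "drive" a pooled increase while another stratum barely moves.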

These patterns suggest that states actively revised their procurement frameworks to emphasize equity and technology following pandemic-era disruptions, incorporating new requirements into contract amendments and scoring criteria. The small increase in health equity claims and the decrease in technology claims in MCO proposals indicate that MCOs did not independently expand these claims in proportion to state-driven framework changes. Whether such changes translate into improved MCO performance and beneficiary outcomes remains an open empirical question requiring longitudinal tracking.

Data Availability Limitations and Implications

Our finding that only 6 states had sufficient paired RFP-MCO data for normalized concordance analysis (down from 13 in preliminary raw count analyses) reveals a fundamental challenge for procurement accountability research. States vary dramatically in public disclosure practices. Some states publish comprehensive RFP documents with detailed requirements, while others publish minimal specifications. MCO proposal disclosure varies similarly: some states release full technical proposals in response to public records (freedom of information) requests, while others heavily redact or withhold proposal content, citing proprietary information.
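The drop in eligible states reflects a pairing requirement: a state can enter the concordance analysis only if both sides of the comparison are available. The sketch below shows one such filter. The document-type labels, the function name, and the minimum file counts are hypothetical parameters, because the section does not state the exact sufficiency criterion.

```python
from collections import Counter, defaultdict
from typing import Iterable


def states_with_paired_data(
    files: Iterable[tuple[str, str]],
    min_rfp_files: int = 1,
    min_mco_files: int = 1,
) -> list[str]:
    """Return states with enough state RFP files AND enough MCO proposal files.

    `files` yields (state, doc_type) pairs; the doc_type labels are hypothetical.
    """
    counts: defaultdict[str, Counter] = defaultdict(Counter)
    for state, doc_type in files:
        counts[state][doc_type] += 1  # Counter returns 0 for unseen labels
    return sorted(
        state
        for state, c in counts.items()
        if c["RFP"] >= min_rfp_files and c["MCO proposal"] >= min_mco_files
    )


inventory = [
    ("State A", "RFP"), ("State A", "MCO proposal"), ("State A", "MCO proposal"),
    ("State B", "RFP"), ("State B", "Other"),           # no public proposals
    ("State C", "MCO proposal"), ("State C", "Other"),  # no published RFP
]
print(states_with_paired_data(inventory))                   # ['State A']
print(states_with_paired_data(inventory, min_mco_files=3))  # []
```

Raising either minimum shrinks the eligible set quickly. Heterogeneous disclosure on either side of the procurement therefore removes states from concordance analysis even when those states publish many documents overall.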

This heterogeneity in transparency creates profound research barriers. The Organisation for Economic Co-operation and Development’s 2024 report on procurement transparency emphasizes that meaningful accountability requires disclosure across the full procurement lifecycle, from RFP specifications through contract performance.31 Our findings suggest that most states do not meet the transparency standards needed for independent evaluation of procurement alignment.

Implications for Procurement Reform

These findings suggest several directions for procurement reform, though we note that our analysis characterizes the thematic emphasis of performance claims rather than their content or specificity. We cannot determine from thematic classification alone whether claims contain specific baselines, measurable targets, and defined timelines, or consist of general aspirational language. With that limitation acknowledged, the observed thematic divergence suggests that procurement processes may benefit from structured requirements ensuring that MCO proposals address operationally intensive domains (chronic disease management, workforce development) with the same granularity applied to technology and health equity claims. Given that avoidable utilization rates remain disproportionately high among Medicaid beneficiaries compared to Medicare and commercially insured populations,6 procurement reforms that redirect emphasis toward domains with the most direct impact on preventable hospitalizations could yield meaningful improvements in care quality.

States could implement concordance monitoring (systematic comparison of the relative emphasis in MCO proposals against RFP priorities, normalized by document volume) during proposal evaluation, automatically flagging disproportionate thematic emphasis for reviewer scrutiny. CMS could establish minimum federal standards for proposal specificity, requiring that MCO claims include measurable metrics rather than narrative assertions alone. The UK’s 2023 Procurement Act, which implements “transparency by default” across the full commercial lifecycle,24 provides a model for enhanced disclosure requirements.
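Concordance monitoring of this kind could be prototyped with little code. The sketch below computes a per-file normalized ratio for each theme in one proposal. It then flags themes whose ratio exceeds a multiple of the median ratio across themes. The median-based rule, the `multiple=2.0` default, and the theme names and counts are hypothetical choices for illustration; the section proposes flagging but does not specify a threshold.

```python
from statistics import median


def flag_disproportionate_themes(
    proposal_counts: dict[str, int],
    rfp_counts: dict[str, int],
    proposal_files: int,
    rfp_files: int,
    multiple: float = 2.0,
) -> tuple[dict[str, float], list[str]]:
    """Per-theme normalized ratios for one proposal, plus themes flagged for review."""
    if proposal_files <= 0 or rfp_files <= 0:
        raise ValueError("file counts must be positive")
    ratios: dict[str, float] = {}
    for theme, rfp_n in rfp_counts.items():
        if rfp_n == 0:
            continue  # ratio undefined when the RFP is silent on a theme
        proposal_rate = proposal_counts.get(theme, 0) / proposal_files
        ratios[theme] = proposal_rate / (rfp_n / rfp_files)
    if not ratios:
        return {}, []
    # Hypothetical rule: flag themes more than `multiple` times the median ratio.
    threshold = multiple * median(ratios.values())
    flagged = sorted(theme for theme, r in ratios.items() if r > threshold)
    return ratios, flagged


rfp = {"technology": 10, "health equity": 10, "chronic disease": 20, "workforce": 20}
proposal = {"technology": 100, "health equity": 40, "chronic disease": 20, "workforce": 16}
ratios, flagged = flag_disproportionate_themes(proposal, rfp, proposal_files=1, rfp_files=5)
print(ratios)   # {'technology': 50.0, 'health equity': 20.0, 'chronic disease': 5.0, 'workforce': 4.0}
print(flagged)  # ['technology']
```

Comparing against the median rather than a fixed cutoff keeps the rule informative even when every ratio exceeds 1.0. A deployed version would also need a separate rule for themes that the RFP omits entirely, which this sketch skips.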

Implications of Federal Work Requirements

The work requirements in the 2025 budget reconciliation law (HR 1) create procurement considerations beyond the clinical workforce domain this study examined. Implementation requires MCOs to develop substantial administrative infrastructure: eligibility verification systems, member outreach capacity, exemption documentation processes, and continuity-of-care provisions during coverage transitions. These capabilities form an organizational domain distinct from the clinical provider workforce captured by our workforce development theme, which reflects provider recruitment, cultural competency training, and community health worker programs. Because these administrative systems fall outside the thematic domains we analyzed, our findings cannot directly assess MCO preparedness for work requirement implementation. States conducting Medicaid managed care procurements should explicitly evaluate MCO administrative capacity for eligibility verification and beneficiary outreach as a separate procurement requirement alongside existing quality and network standards.14

Methodological Contributions and Limitations

Methodologically, this study demonstrates that validated LLM-assisted extraction with RAG architectures enables analysis at previously infeasible scales. Manually coding the 372 283 claims from approximately 460 000 pages would have required approximately 15 000 hours. Our approach achieved inter-rater reliability of 0.86 (Cohen’s kappa; 95% CI: 0.81-0.91) and 97.3% source attribution accuracy, indicating that properly validated LLM methods can support rigorous health services research.27,28
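Cohen's kappa compares observed agreement p_o with the agreement p_e expected by chance from each coder's marginal frequencies: kappa = (p_o - p_e) / (1 - p_e). A minimal sketch follows. The confusion-matrix counts are hypothetical, and this section does not describe the study's category scheme or its confidence-interval method.

```python
def cohens_kappa(confusion: list[list[int]]) -> float:
    """Cohen's kappa from a square confusion matrix.

    confusion[i][j] = number of items coder 1 assigned to category i
    and coder 2 assigned to category j.
    """
    k = len(confusion)
    if k == 0 or any(len(row) != k for row in confusion):
        raise ValueError("confusion matrix must be square and non-empty")
    n = sum(sum(row) for row in confusion)
    if n == 0:
        raise ValueError("confusion matrix has no items")
    p_observed = sum(confusion[i][i] for i in range(k)) / n
    row_totals = [sum(row) for row in confusion]
    col_totals = [sum(confusion[i][j] for i in range(k)) for j in range(k)]
    # Chance agreement: product of the two coders' marginal proportions, summed over categories.
    p_expected = sum(row_totals[i] * col_totals[i] for i in range(k)) / (n * n)
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical two-category validation sample of 100 items.
print(round(cohens_kappa([[45, 5], [5, 45]]), 3))  # 0.8
```

A confidence interval like the one reported would additionally require a variance estimate or a bootstrap over coded items.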

Limitations warrant consideration. First, document availability varied dramatically across states; only 6 states had sufficient paired data for normalized concordance analysis, limiting the generalizability of concordance findings. Second, we could not assess whether performance claims predict subsequent operational performance; future research must link claims to HEDIS measures, state scorecards, and external quality review findings to evaluate whether thematic patterns correlate with beneficiary outcomes. Third, an extraction sensitivity of 0.89 implies that approximately 11% of claims may have gone undetected. If undetected claims are randomly distributed across themes and document types, undercounting would scale all counts proportionally, attenuating absolute differences without introducing directional bias into normalized ratios. However, if missingness is systematic (for example, if the model more readily detects technology claims phrased in standardized language than workforce claims embedded in narrative text), thematic comparisons could be biased. Our human validation sample did not reveal significant differential detection rates across themes (sensitivity range: 0.86-0.92 across 6 domains), but we cannot exclude residual systematic missingness. Fourth, we analyzed performance claims made during procurement but cannot adjudicate whether observed patterns reflect strategic positioning, genuine capability differences, negotiation dynamics, or other factors, as discussed above. Fifth, the analysis covers 2017 to 2024 and may not reflect changes under full implementation of the 2024 CMS regulations or 2025 federal policy shifts. Sixth, although the RAG architecture mitigated hallucination through source grounding, retrieval-augmented approaches have known limitations. These include sensitivity to document chunking parameters and potential retrieval failures for passages using non-standard terminology. Our 97.3% source attribution accuracy and overlapping chunk design address but do not eliminate these concerns.18
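The distinction between random and systematic missingness can be made precise under a simple model, which is our assumption rather than the study's: expected detected claims equal true claims multiplied by a detection sensitivity that may differ by source. Under this model, the observed normalized ratio equals the true ratio multiplied by s_MCO/s_state. Sensitivity cancels out of the ratio if it is uniform, or if it varies by theme but equally across MCO and state documents. Raw cross-theme count comparisons, however, would still be scaled by theme-specific sensitivity. The sketch applies the extremes of the reported 0.86-0.92 range to the two sources purely as a stress test, because the section reports that range across themes, not across document types. All counts are hypothetical.

```python
def expected_observed_ratio(
    true_mco_claims: float,
    true_state_claims: float,
    mco_files: int,
    state_files: int,
    sens_mco: float,
    sens_state: float,
) -> float:
    """Normalized concordance ratio after imperfect detection.

    Assumes expected detected claims = sensitivity * true claims (hypothetical model).
    """
    for s in (sens_mco, sens_state):
        if not 0 < s <= 1:
            raise ValueError("sensitivity must lie in (0, 1]")
    mco_rate = sens_mco * true_mco_claims / mco_files
    state_rate = sens_state * true_state_claims / state_files
    return mco_rate / state_rate


true_ratio = (2000 / 40) / (25 / 10)  # 20.0 with hypothetical counts
uniform = expected_observed_ratio(2000, 25, 40, 10, sens_mco=0.89, sens_state=0.89)
differential = expected_observed_ratio(2000, 25, 40, 10, sens_mco=0.92, sens_state=0.86)
print(round(uniform, 6))       # 20.0
print(round(differential, 3))  # 21.395
```

Under this model, even that worst-case differential shifts a ratio by about 7% (0.92/0.86 is roughly 1.07). This is small relative to the gap between the technology (53.9) and workforce development (14.2) ratios.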

Future Research Directions

Critical next steps include linking performance claims to validated outcome measures, particularly avoidable hospitalization rates for ambulatory care-sensitive conditions, which indicate whether procurement language translates into effective primary care delivery.6 A further step is analyzing the content and specificity of claims within themes (eg, whether technology claims contain measurable implementation targets or consist of aspirational language, and whether claims include financial incentives or penalties for nonperformance). Longitudinal tracking under new CMS regulations will show whether enhanced oversight drives substantive commitments or merely rhetorical compliance. Natural experiments examining procurement reforms (eg, states implementing concordance monitoring or quantified commitment requirements) could evaluate the causal effects of specific accountability provisions. Finally, research should examine whether procurement transparency mandates improve alignment between commitments and performance.

Conclusion

Medicaid managed care procurement documents reveal systematic thematic divergence between MCO performance claims and the operational domains most central to daily beneficiary care. Even when normalized by document volume, MCOs make disproportionately more claims about technology and health equity than about chronic disease management and workforce development. These patterns are consistent with principal-agent dynamics in procurement but may also reflect normal negotiation processes, differential organizational capabilities, or state-driven framework evolution. Temporal increases in health equity and technology claims appear driven by evolving state procurement frameworks embedded in contracts and scoring criteria rather than by MCO proposal strategies specifically. Given that Medicaid beneficiaries continue to experience substantially higher rates of avoidable acute care utilization than Medicare or commercially insured populations,6 and that evidence of managed care’s beneficial effects on outcomes remains inconclusive,4,5 aligning procurement processes with evidence-based operational priorities represents a critical and underutilized lever for improving care delivery for the 86 million Americans enrolled in Medicaid managed care.

Supplemental Material

sj-docx-1-inq-10.1177_00469580261444608 – Supplemental material for Medicaid Managed Care Procurement Reveals Systematic Overemphasis of Technology and Equity Performance Claims Across 32 States

Supplemental material, sj-docx-1-inq-10.1177_00469580261444608 for Medicaid Managed Care Procurement Reveals Systematic Overemphasis of Technology and Equity Performance Claims Across 32 States by Sanjay Basu, Alex Fleming, John Morgan and Rajaie Batniji in INQUIRY: The Journal of Health Care Organization, Provision, and Financing

Footnotes

Ethical Considerations

The Western Institutional Review Board determined this study exempt from continuing review as secondary analysis of publicly available documents without individual-level data (45 CFR 46.104[d][4]). The study protocol followed Standards for Reporting Qualitative Research (SRQR) guidelines and TRIPOD+AI guidelines for studies using machine learning methods. IRB Tracking ID: 20222499.

Consent to Participate

Not applicable. This study analyzed publicly available procurement documents and did not involve human subjects. No individual-level patient data were collected or analyzed.

Author Contributions

− Sanjay Basu: Conceptualization, methodology, formal analysis, investigation, data curation, writing (original draft), writing (review and editing), visualization, supervision, project administration

− Alex Fleming: Methodology, software, formal analysis, investigation, data curation, writing (review and editing)

− John Morgan: Investigation, validation, writing (review and editing), clinical expertise

− Rajaie Batniji: Conceptualization, resources, writing (review and editing), supervision

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: All authors are employed by or affiliated with Waymark, a public benefit organization that provides free social and medical services to Medicaid patients. The authors have no other financial relationships or conflicts of interest to disclose.

Data Availability Statement

The complete analysis dataset containing 372 283 extracted accountability claims is deposited at Harvard Dataverse (https://doi.org/10.7910/DVN/6EFL00). All analysis code, documentation, and reproducibility materials are publicly available at GitHub. Source procurement documents are publicly available through state Medicaid agency websites and procurement portals; a specific document inventory with access instructions is included in the Harvard Dataverse deposit.



Trial Registration

Not applicable. This is an observational document corpus analysis, not a clinical trial.

References
