Abstract

Timely identification of critically ill patients who may face do-not-resuscitate (DNR) decisions is crucial for supporting shared decision-making and ethical end-of-life care. This study developed an explainable multitask learning (MTL) model using the MIMIC-IV database to predict DNR decisions within the next 24 h. The model was trained on data from 7789 adult patients admitted to intensive care units (ICUs) with a length of stay longer than 3 days, using clinical parameters and nursing assessments collected over a 72-h window. Model performance was evaluated using the area under the receiver operating characteristic curve (AUROC), calibration plots, and decision curve analysis. Interpretability was illustrated with SHapley Additive exPlanations (SHAP) and partial dependence plots (PDP), and error analysis was performed to explore the strengths and limitations of the model. The MTL model outperformed single-task learning (AUROC: 0.798 vs 0.764). SHAP and PDP plots identified verbalization ability, ventilatory support, and muscle strength as key features. Error analysis identified a subgroup of patients with extreme ventilatory demand and muscle weakness who contributed to misclassification; excluding this subgroup improved the AUROC from 0.792 to 0.827. We developed a DNR prediction model and demonstrated the feasibility of integrating an explainable model into ICU care as a nudge to consider DNR-related discussions.

Introduction

The increasing number of patients admitted to intensive care units (ICUs) reflects a growing challenge for healthcare systems worldwide.1 However, approximately 20% of patients do not survive despite intensive life-sustaining treatment (LST).2 Moreover, survivors may experience long-term physical and cognitive decline, requiring extensive caregiving support and resulting in poor quality of life.3,4

Decision-making regarding the initiation, continuation, or withdrawal of LST is inherently complex and requires careful consideration of clinical prognosis, patient autonomy, ethical principles, family perspectives, and medical costs.5-7 Patients, families, and care teams frequently struggle to reach consensus on LST decisions because of the inherent uncertainty of critical illness outcomes and the moral distress these decisions entail.8,9 For example, delayed initiation of palliative care may result in prolonged and potentially futile LST, whereas premature initiation might be perceived as abandonment of care.10,11 These dilemmas underscore the need for timely decision-making frameworks to guide LST discussions and decisions.12-14

Artificial intelligence (AI) has emerged as a transformative tool in critical care, offering the ability to analyze complex data to support clinical decision-making.15,16 AI-driven predictive models may help identify patients at high risk of adverse outcomes, thereby facilitating early discussions on LST and the integration of palliative care.17 However, integrating AI into ethically sensitive areas such as do-not-resuscitate (DNR) decision-making requires explainable AI (XAI) frameworks that ensure AI-generated recommendations align with critical care expertise and support shared decision-making.18,19

This study used the Medical Information Mart for Intensive Care (MIMIC)-IV database to develop a multitask learning model for predicting DNR decisions among critically ill patients. By incorporating explainable AI methods and error analysis, this study addresses a critical clinical need for interpretable AI applications with regard to DNR issues in intensive care settings.

Methods

Ethical Considerations

We used the MIMIC-IV database, a de-identified and publicly available critical care database.20 The present study, limited to a secondary analysis of anonymized data, was approved by the institutional review board at the study hospital. The requirement for informed consent was waived because all data were de-identified.

Database and Study Design

We conducted this retrospective cohort study using the openly available MIMIC-IV (v2.1) database, and data from 53 569 critically ill patients were initially included.20 After excluding patients whose ICU length of stay (LOS) was ≤3 days and ICU stays that were not the first ICU stay of the hospitalization, 7789 critically ill adults with an ICU LOS > 3 days remained for analysis (Figure 1).

Flowchart of subject enrollment.

Outcome Definition

The primary task was to use data from the preceding 72 h to predict a do-not-resuscitate (DNR) decision in the next 24 h (Supplemental Figure 1 provides details of the study’s data time frame). DNR status was obtained from chart events using item identifiers corresponding to “DNAR,” “DNAR/DNI,” “DNR,” “DNR/DNI,” and “DNI” (652 positive patients in total). Four clinically related targets were modeled concurrently to enable knowledge sharing in a multitask framework: (i) development of shock, defined by the requirement for vasopressors; (ii) 30-day all-cause mortality; (iii) use of mechanical ventilation; and (iv) receipt of renal-replacement therapy/hemodialysis.
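
The labeling rule above can be sketched as follows. This is a minimal illustration, assuming the charted code-status events have already been extracted as `(charttime, value)` pairs for the relevant item identifiers (which are not listed here); the function name `dnr_label` and the choice of the half-open interval (prediction time, prediction time + 24 h] are our assumptions, not the authors' code.

```python
from datetime import datetime, timedelta

# Code-status values counted as a DNR decision, as listed in the Methods.
DNR_VALUES = {"DNAR", "DNAR/DNI", "DNR", "DNR/DNI", "DNI"}


def dnr_label(events, prediction_time, horizon_hours=24):
    """Return 1 if a DNR-type status is charted in (prediction_time, prediction_time + horizon]."""
    horizon_end = prediction_time + timedelta(hours=horizon_hours)
    for charttime, value in events:
        in_horizon = prediction_time < charttime <= horizon_end
        if in_horizon and value.strip().upper() in DNR_VALUES:
            return 1
    return 0


# Hypothetical example: a DNR/DNI entry charted 10 h after the prediction time.
events = [
    (datetime(2150, 1, 2, 8, 0), "Full code"),
    (datetime(2150, 1, 4, 10, 0), "DNR/DNI"),
]
print(dnr_label(events, datetime(2150, 1, 4, 0, 0)))  # 1
```

Whether a DNR order already charted before the prediction time should exclude that window is not specified in the text and is left out of this sketch.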

Key Variables

For each subject, we extracted 72 h of data preceding the prediction time (day-3 to day-1). Covariates consisted of demographics (age, gender, body weight, height), comorbidities, hemodynamic parameters (blood pressure, heart rate, respiratory rate), laboratory results (white blood cell count, hemoglobin, platelets, blood urea nitrogen), ventilator settings (fraction of inspired oxygen (FiO2), positive end-expiratory pressure (PEEP), tidal volume, peak and mean airway pressures), and nursing assessments (verbalization ability, muscle strength, sedation status, leg strength).
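
One way to turn the 72-h window into day-level covariates, such as the day-1 and day-3 features that appear in the SHAP and PDP figures, is sketched below. It assumes measurements arrive as `(hours_before_prediction, feature, value)` tuples, that day-1 denotes the 24 h immediately before the prediction time, and that each day is summarized by its mean; this mapping, the aggregation, and the helper name `bin_features` are illustrative assumptions. If day-1 instead refers to the first ICU day, the day index would simply be reversed.

```python
from collections import defaultdict
from statistics import mean


def bin_features(measurements, window_hours=72):
    """Summarize (hours_before_prediction, feature, value) tuples as day-level means.

    Assumption: day-1 is the 24 h immediately before the prediction time and
    day-3 is the earliest 24 h of the 72-h window.
    """
    buckets = defaultdict(list)
    for hours_before, feature, value in measurements:
        if 0 <= hours_before < window_hours:  # ignore data outside the window
            day = int(hours_before // 24) + 1
            buckets[f"{feature}_day-{day}"].append(value)
    # Days with no measurement produce no key; imputation is left to later steps.
    return {name: mean(values) for name, values in buckets.items()}


measurements = [
    (2.0, "bun", 30.0), (10.0, "bun", 34.0),  # day-1
    (50.0, "bun", 20.0),                      # day-3
    (80.0, "bun", 99.0),                      # outside the 72-h window
    (30.0, "heart_rate", 100.0),              # day-2
]
print(bin_features(measurements))
# {'bun_day-1': 32.0, 'bun_day-3': 20.0, 'heart_rate_day-2': 100.0}
```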

Model Establishment

The core analytic approach was a multitask learning neural network (Figure 2; see the Supplemental methodology for details of the architecture). It consisted of a shared bottom network that extracts common representations across tasks and multiple task-specific tower networks. The primary prediction task was DNR status, while the auxiliary tasks were prediction of shock, 30-day mortality, use of mechanical ventilation, and receipt of hemodialysis, allowing the model to leverage shared patterns and improve generalizability. We selected these objective and critical outcomes as auxiliary tasks because they represent outcomes of organ failure: mortality and circulatory, respiratory, and renal failure. As shown in Supplemental Table 2, mortality (odds ratio [OR] 5.63) and shock (OR 2.31) exhibited the strongest associations with DNR status, followed by mechanical ventilation (OR 2.22). We also examined the performance of each combination of DNR with the other relevant outcomes and found that shock, mortality, and use of mechanical ventilation contributed to the performance of DNR prediction (Supplemental Table 3). The multitask model was implemented as a multilayer perceptron (MLP) with fully connected layers, trained on 80% of the data and tested on the remaining 20%.
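
The shared-bottom design can be illustrated with a minimal forward pass in plain Python. This sketch assumes one shared hidden layer, one hidden layer per task tower, sigmoid outputs, and a weighted sum of per-task binary cross-entropy losses; the layer sizes, the task weights, and names such as `SharedBottomMTL` are hypothetical, and training (backpropagation) is omitted because in practice a deep learning framework would handle it.

```python
import math
import random

TASKS = ["dnr", "shock", "mortality", "mech_vent", "hemodialysis"]


def dense(x, weights, bias):
    """Fully connected layer; `weights` holds one row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(v):
    return [max(0.0, a) for a in v]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def init_layer(n_in, n_out, rng):
    weights = [[rng.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class SharedBottomMTL:
    """Shared bottom MLP feeding one small tower per task (sizes are hypothetical)."""

    def __init__(self, n_features, n_shared=16, n_tower=8, tasks=TASKS, seed=0):
        rng = random.Random(seed)
        self.shared = init_layer(n_features, n_shared, rng)
        self.towers = {
            t: (init_layer(n_shared, n_tower, rng), init_layer(n_tower, 1, rng))
            for t in tasks
        }

    def forward(self, x):
        h = relu(dense(x, *self.shared))  # representation shared by all tasks
        out = {}
        for task, (hidden, head) in self.towers.items():
            z = relu(dense(h, *hidden))  # task-specific tower
            out[task] = sigmoid(dense(z, *head)[0])
        return out


def multitask_loss(probs, labels, task_weights=None, eps=1e-7):
    """Weighted sum of per-task binary cross-entropies."""
    task_weights = task_weights or {t: 1.0 for t in probs}
    total = 0.0
    for task, p in probs.items():
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        y = labels[task]
        total += task_weights[task] * -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total


model = SharedBottomMTL(n_features=4)
probs = model.forward([0.5, -1.0, 2.0, 0.0])
# Hypothetical weighting that emphasizes the primary DNR task.
weights = {"dnr": 2.0, **{t: 1.0 for t in TASKS[1:]}}
loss = multitask_loss(probs, {t: 0 for t in TASKS}, task_weights=weights)
```

Because every tower reads the same shared representation, gradients from the auxiliary losses also update the shared layer, which is the mechanism by which auxiliary tasks can inform the DNR prediction.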

Architecture of the multitask model.

Feature and Prediction Window

The temporal structure of the model was designed to provide daily DNR risk predictions. A moving 72-h feature window was used to collect input data, and the model predicted the risk of a DNR decision within the next 24 h (Supplemental Figure 1 provides details of the study’s data time frame). This approach aligns with real-world ICU workflows, enabling daily identification of patients at high risk for DNR decisions and supporting proactive family meetings and shared decision-making.
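
The daily moving window can be sketched as below: each ICU day after the first three becomes a prediction target, with the three preceding days as input. The sketch also shows a split by patient rather than by window, so that overlapping windows from one patient do not appear in both the training and test sets; patient-level splitting, the seed, and the helper names are our assumptions, since the text states only an 80/20 split.

```python
import random


def daily_windows(icu_days, window_days=3):
    """Yield (input_days, target_day) pairs: 3 consecutive days in, the next day out."""
    for target in range(window_days, len(icu_days)):
        yield icu_days[target - window_days:target], icu_days[target]


def patient_level_split(patient_ids, test_fraction=0.2, seed=42):
    """Assign whole patients to train or test so their windows never straddle both."""
    ids = sorted(set(patient_ids))
    random.Random(seed).shuffle(ids)
    n_test = round(len(ids) * test_fraction)
    return set(ids[n_test:]), set(ids[:n_test])


# A hypothetical 5-day ICU stay yields two daily prediction samples.
for inputs, target in daily_windows(["d1", "d2", "d3", "d4", "d5"]):
    print(inputs, "->", target)
# ['d1', 'd2', 'd3'] -> d4
# ['d2', 'd3', 'd4'] -> d5
```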

Model Interpretation and Error Analysis

To address the black-box nature of AI, we prioritized model explainability.21 SHapley Additive exPlanations (SHAP) were used to quantify the contribution of each feature to the DNR prediction, and partial dependence plots (PDP) illustrated the direction of association of key predictors.22 These visualizations facilitated clinical interpretation and transparency. Error analysis was conducted using a surrogate tree-based model that mimicked the neural network’s predictions, enabling identification of subgroups with high error rates.
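
To make the two explanation methods concrete, the sketch below computes exact Shapley values by enumerating all feature subsets, with features outside a coalition replaced by baseline values, and computes a one-dimensional partial dependence curve by averaging predictions over the data while one feature is fixed at each grid value. This is a brute-force illustration for a handful of features under a single-baseline value function; the SHAP library uses approximations (for example, kernel- or gradient-based explainers) for real models, and the function names here are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values; features outside a coalition take their baseline value."""
    n = len(x)

    def coalition_value(members):
        z = [x[i] if i in members else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                phi[i] += weight * (coalition_value(members | {i}) - coalition_value(members))
    return phi


def partial_dependence(predict, data, feature, grid):
    """Average prediction over `data` with column `feature` fixed at each grid value."""
    curve = []
    for v in grid:
        preds = [predict(row[:feature] + [v] + row[feature + 1:]) for row in data]
        curve.append(sum(preds) / len(preds))
    return curve


# Toy model: for a linear model, phi_i equals w_i * (x_i - baseline_i).
def linear(z):
    return 2 * z[0] - 3 * z[1] + 0.5 * z[2] + 1


print(shapley_values(linear, [1, 2, 3], [0, 0, 0]))  # approximately [2.0, -6.0, 1.5]
print(partial_dependence(linear, [[0, 1, 0], [0, 3, 0]], 0, [0, 10]))  # [-5.0, 15.0]
```

The Shapley values sum to the difference between the prediction for the patient and the prediction for the baseline, which is what allows a SHAP plot to be read as an additive breakdown of one patient's risk.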

Statistical Analysis

Continuous data are reported as median (IQR) and compared with Wilcoxon rank-sum tests; categorical data are reported as number (percentage) and compared with chi-square tests where appropriate. Model performance in the test set was assessed in terms of discrimination, calibration, and clinical applicability using the area under the receiver operating characteristic curve (AUROC), calibration plots, and decision curve analysis, respectively.23,24 We used the DeLong test to compare performance among models.25 Analyses were performed using Python version 3.7.4.
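
The AUROC and the DeLong comparison can be sketched as follows. The AUROC is computed as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case (ties counted as one half), and the DeLong test uses the per-case structural components of the two AUROCs to estimate the variance of their difference. This is a minimal pure-Python illustration, not the authors' implementation; it assumes both models are scored on the same test cases and that the variance of the difference is non-zero.

```python
import math


def _placements(pos, neg):
    """DeLong structural components (ties count one half)."""
    def psi(a, b):
        return 1.0 if a > b else 0.5 if a == b else 0.0
    v10 = [sum(psi(p, n) for n in neg) / len(neg) for p in pos]  # one per positive
    v01 = [sum(psi(p, n) for p in pos) / len(pos) for n in neg]  # one per negative
    return v10, v01


def auroc(y, score):
    pos = [s for s, t in zip(score, y) if t == 1]
    neg = [s for s, t in zip(score, y) if t == 0]
    v10, _ = _placements(pos, neg)
    return sum(v10) / len(v10)


def _cov(a, b):
    """Sample covariance of two equal-length lists."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    return sum((u - ma) * (w - mb) for u, w in zip(a, b)) / (len(a) - 1)


def delong_test(y, score_a, score_b):
    """Two-sided DeLong test for two correlated AUROCs on the same cases."""
    pos = [i for i, t in enumerate(y) if t == 1]
    neg = [i for i, t in enumerate(y) if t == 0]
    a10, a01 = _placements([score_a[i] for i in pos], [score_a[i] for i in neg])
    b10, b01 = _placements([score_b[i] for i in pos], [score_b[i] for i in neg])
    auc_a, auc_b = sum(a10) / len(a10), sum(b10) / len(b10)
    var = (_cov(a10, a10) + _cov(b10, b10) - 2 * _cov(a10, b10)) / len(pos) + (
        _cov(a01, a01) + _cov(b01, b01) - 2 * _cov(a01, b01)
    ) / len(neg)
    z = (auc_a - auc_b) / math.sqrt(var)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return auc_a, auc_b, z, p_value


print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```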

Results

Study Population

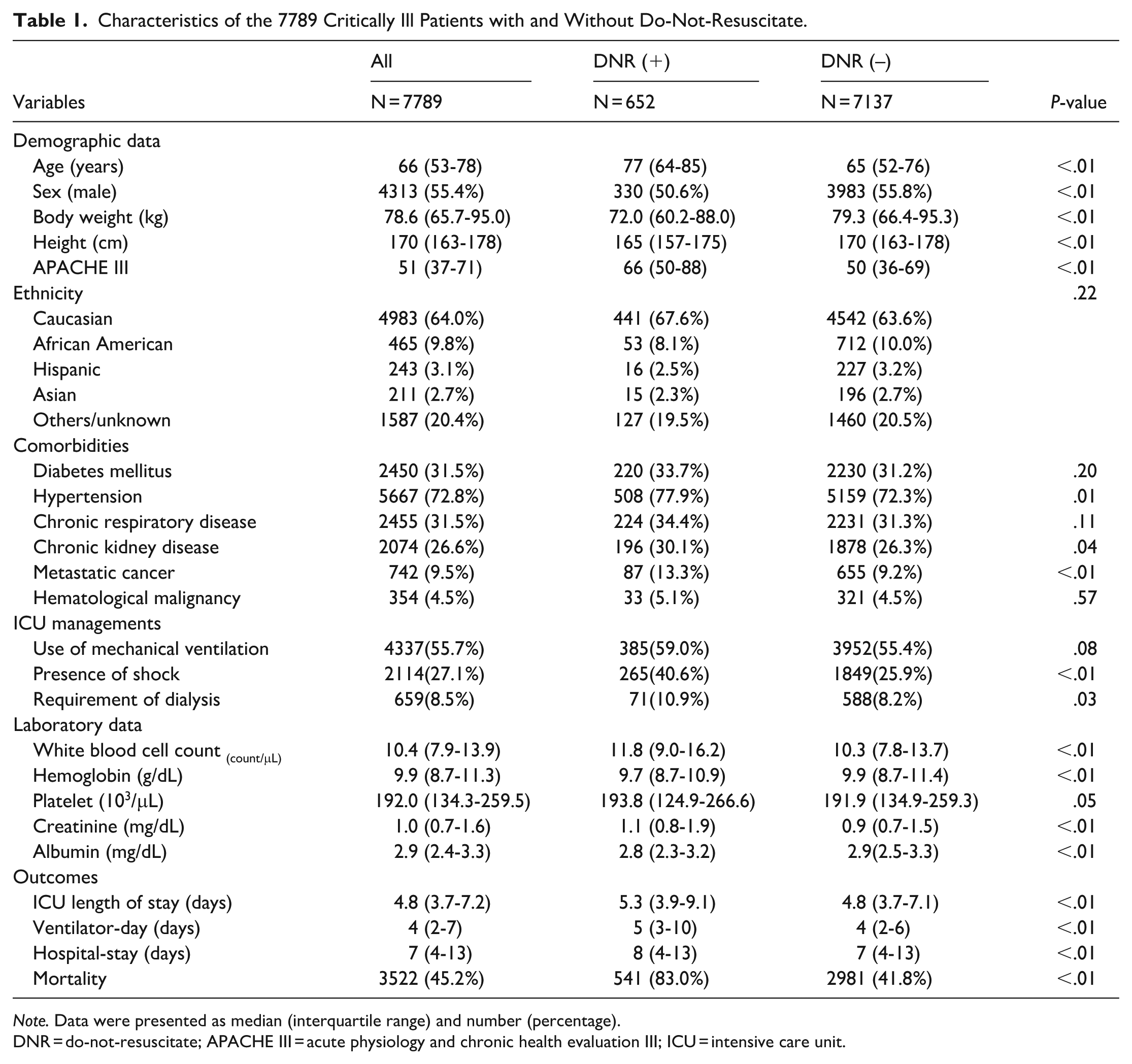

A total of 7789 critically ill adult patients with an ICU length of stay greater than 3 days were included in the analysis after excluding repeat ICU admissions and patients with shorter ICU stays (Figure 1). The median age of the cohort was 66 years (IQR: 53-78), and most patients were male (Table 1). Among these, 652 patients (8.4%) had a documented DNR order during their ICU stay. Compared with patients without DNR orders, patients with a DNR order were markedly older (median 77 [64-85] vs 65 [52-76] years, P < .01), had higher APACHE III scores (66 [50-88] vs 50 [36-69], P < .01), and had lower body weight and height. The comorbidity burden was slightly higher in the DNR group, particularly hypertension (77.9% vs 72.3%, P = .01), chronic kidney disease (30.1% vs 26.3%, P = .04), and metastatic cancer (13.3% vs 9.2%, P < .01). Patients in the DNR group also had higher white blood cell counts and creatinine levels and lower albumin levels than those in the non-DNR group (all P < .01).

Characteristics of the 7789 Critically Ill Patients with and Without Do-Not-Resuscitate.

Note. Data are presented as median (interquartile range) or number (percentage).

DNR = do-not-resuscitate; APACHE III = acute physiology and chronic health evaluation III; ICU = intensive care unit.

Performance of Multitask Learning

Table 2 compares single-task learning (STL) and two multitask learning (MTL) models. When the model was trained solely on the DNR task, the AUROC was 0.764 ± 0.009 and the accuracy was 0.697 ± 0.014. Adding the shock and 30-day mortality tasks (MTL-1) significantly improved the AUROC (0.795 ± 0.005; P < .001 vs STL) and accuracy (0.712 ± 0.023; P < .001 vs STL; Table 2). Calibration plots indicated good agreement between predicted and observed probabilities, and decision curve analysis indicated a positive net benefit relative to the treat-all and treat-none strategies across threshold probabilities of .05 to .40 (Figure 3). We further examined whether prediction performance could be improved by including all auxiliary tasks: shock, mortality, mechanical ventilation use, and hemodialysis (MTL-2). MTL-2 achieved a slightly higher AUROC (0.798 ± 0.006) and accuracy (0.731 ± 0.015), although the improvement over MTL-1 was not statistically significant (P = .37 by the DeLong test).
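
Decision curve analysis and calibration can be illustrated as below. Net benefit at a threshold probability p_t is TP/N - (FP/N) × p_t/(1 - p_t), compared with treating all patients and with treating none (net benefit 0); the calibration helper groups predictions into equal-width bins and compares the mean predicted probability with the observed rate in each bin. The bin count, the toy data, and the function names are assumptions for illustration.

```python
def net_benefit(y, prob, threshold):
    """Net benefit of flagging patients whose predicted probability >= threshold."""
    n = len(y)
    tp = sum(1 for t, p in zip(y, prob) if p >= threshold and t == 1)
    fp = sum(1 for t, p in zip(y, prob) if p >= threshold and t == 0)
    return tp / n - (fp / n) * threshold / (1 - threshold)


def net_benefit_treat_all(y, threshold):
    """Reference strategy: flag everyone (the treat-none strategy has net benefit 0)."""
    prevalence = sum(y) / len(y)
    return prevalence - (1 - prevalence) * threshold / (1 - threshold)


def calibration_bins(y, prob, n_bins=10):
    """(mean predicted, observed rate, count) for each non-empty equal-width bin."""
    bins = [[] for _ in range(n_bins)]
    for t, p in zip(y, prob):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, t))
    return [
        (sum(p for p, _ in b) / len(b), sum(t for _, t in b) / len(b), len(b))
        for b in bins
        if b
    ]


# Hypothetical toy data; the text reports thresholds from .05 to .40.
y = [1, 0, 0, 0]
prob = [0.6, 0.3, 0.1, 0.05]
for pt in (0.05, 0.20, 0.40):
    print(pt, net_benefit(y, prob, pt), net_benefit_treat_all(y, pt))
```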

Performance of Single-Task and Multitask Learning to Predict the Decision of Do-Not-Resuscitate.

AUROC = area under the receiver operating characteristic curve.

STL: DNR alone.

MTL-1: tasks included shock, mortality, and DNR.

MTL-2: tasks included shock, mortality, use of mechanical ventilation, receipt of hemodialysis, and DNR.

P < .001 between single-task learning and multitask learning-1; #P = .37 between multitask learning-1 and multitask learning-2, determined by the DeLong test.

Performance of the proposed model to predict the decision of do-not-resuscitate: (a) receiver operating characteristic plot, (b) calibration curve, and (c) decision curve analysis.

Model Interpretability and Error Analysis

Figure 4 shows the 15 features with the largest contributions according to SHAP values. Red markers (high feature values) to the right of zero indicate that absence of verbal response, ventilation exceeding 3 days, low muscular resistance/flaccid muscle tone, no need for sedation, and high blood urea nitrogen were associated with a higher predicted probability of a DNR decision. Figure 5 illustrates the marginal effects of the 6 key features via PDPs. Taking leg strength on day-1 as an example, the probability of DNR increased as leg strength decreased, in a dose-response manner. These visual interpretations were consistent with medical knowledge and may enhance clinicians’ trust in the model. Error analysis identified a minority of patients (n = 67, 7.0%) characterized by profound muscle weakness on day-1 (ie, muscular full resistance = 0) and high minute ventilation (>12.47 L/min on day-3) who contributed to misclassification. After these 67 patients were excluded from the test group, the AUROC improved from 0.792 to 0.827 and accuracy increased from 0.740 to 0.769, with corresponding improvements in precision, recall, and F1-score (Table 3 and Supplemental Figure 2).
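
The subgroup exclusion reported above can be sketched as a simple rule applied to the test set, followed by recomputation of threshold-based metrics. The dictionary keys are hypothetical feature names, and the 0.5 decision threshold is an assumption because the threshold used for accuracy, precision, recall, and F1-score is not stated here; the AUROC could be recomputed on the same filtered lists with any AUROC routine, such as scikit-learn's `roc_auc_score`.

```python
def in_error_subgroup(row):
    """Reported rule; the dictionary keys are hypothetical feature names."""
    return row["muscular_full_resistance_day-1"] == 0 and row["minute_ventilation_day-3"] > 12.47


def classification_metrics(y, prob, threshold=0.5):
    pred = [1 if p >= threshold else 0 for p in prob]
    tp = sum(1 for t, q in zip(y, pred) if t == 1 and q == 1)
    tn = sum(1 for t, q in zip(y, pred) if t == 0 and q == 0)
    fp = sum(1 for t, q in zip(y, pred) if t == 0 and q == 1)
    fn = sum(1 for t, q in zip(y, pred) if t == 1 and q == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(y), "precision": precision, "recall": recall, "f1": f1}


def metrics_excluding_subgroup(rows, y, prob, threshold=0.5):
    """Drop rows matching the rule, then recompute metrics on the remainder."""
    keep = [i for i, r in enumerate(rows) if not in_error_subgroup(r)]
    kept = classification_metrics([y[i] for i in keep], [prob[i] for i in keep], threshold)
    return len(rows) - len(keep), kept


# Hypothetical toy test set of three patients.
rows = [
    {"muscular_full_resistance_day-1": 0, "minute_ventilation_day-3": 15.0},
    {"muscular_full_resistance_day-1": 1, "minute_ventilation_day-3": 15.0},
    {"muscular_full_resistance_day-1": 0, "minute_ventilation_day-3": 10.0},
]
y, prob = [0, 1, 0], [0.9, 0.8, 0.2]
n_excluded, metrics = metrics_excluding_subgroup(rows, y, prob)
print(n_excluded, metrics)
# 1 {'accuracy': 1.0, 'precision': 1.0, 'recall': 1.0, 'f1': 1.0}
```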

SHAP plot illustrating the top 15 features for predicting the decision of do-not-resuscitate. Each point on the plot represents the Shapley value of one feature for one subject.

Partial dependence plots of key features: (a) patient verbalized on day-1, (b) ventilator >3 days, (c) muscular full resistance on day-1, (d) strength of leg on day-1, (e) sedation status on day-3, and (f) blood urea nitrogen on day-3.

Performance of the Original Test Population and Subgroup Analysis of Multitask Learning to Predict the Decision of Do-Not-Resuscitate.

AUROC = area under the receiver operating characteristic curve.

Subgroup analysis excluding the 67 test-group patients with muscular full resistance on day-1 = 0 and minute ventilation on day-3 > 12.47 L/min.

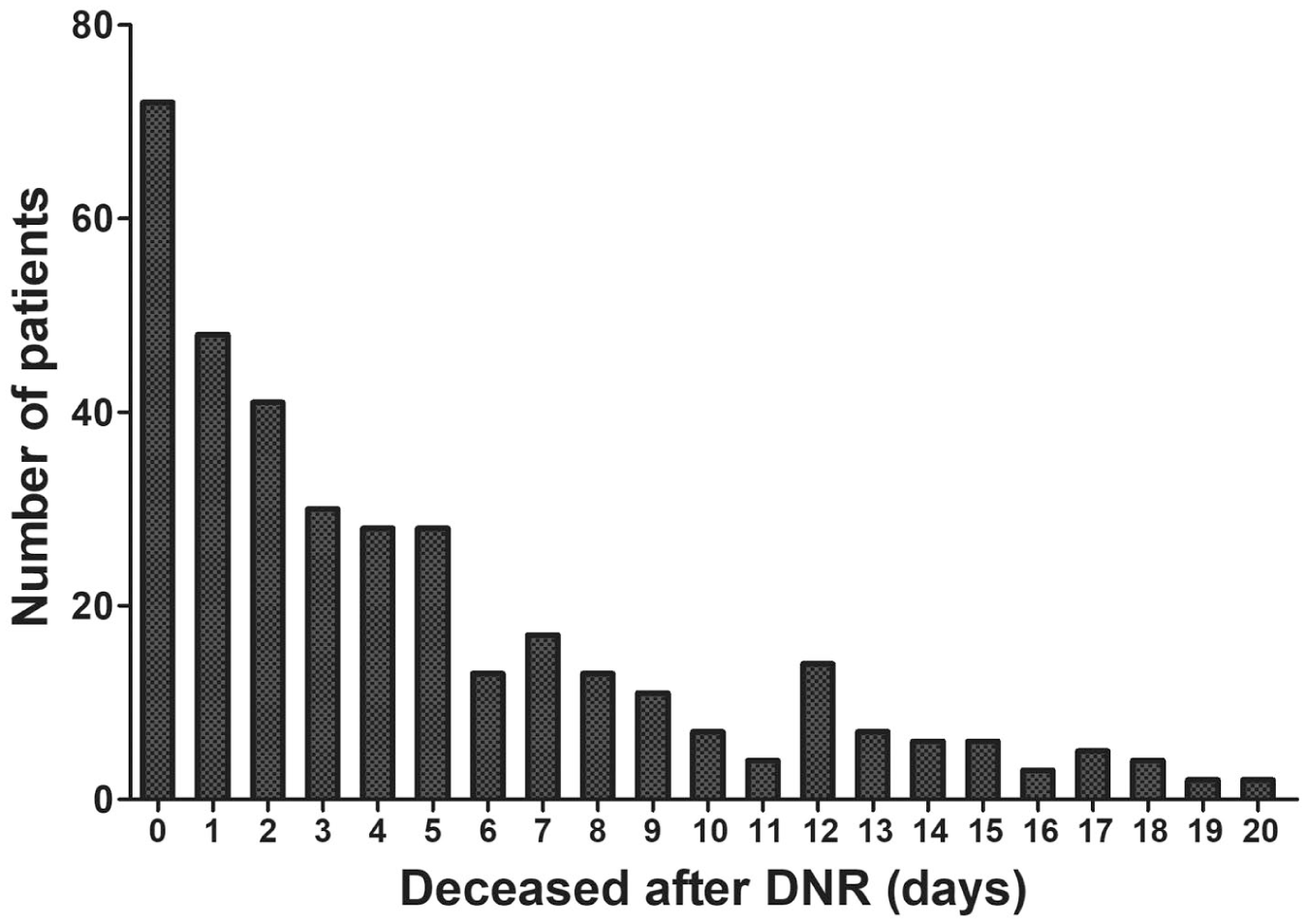

Temporal Trajectory After DNR

To further explore the post-DNR course, we examined the number of deaths by day after the DNR decision. Among the 652 patients with DNR, the distribution of time to death after the DNR decision was right-skewed, with 30.5% dying within 1 day, 55.8% within 3 days, and 73.6% within 7 days (Figure 6). These data underscore the need for timely identification of patients who might need a discussion of the DNR issue.
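
The cumulative proportions above can be computed from per-patient times to death as sketched below. The sketch assumes that time is counted in whole days from the DNR decision (0 meaning the same day), that patients who did not die are recorded as `None`, and that the denominator is all patients with DNR; these conventions and the function name are assumptions.

```python
def cumulative_death_fractions(days_to_death, cutoffs=(1, 3, 7)):
    """Share of all DNR patients who died within k days of the DNR decision.

    `days_to_death` has one entry per DNR patient: whole days from the DNR
    decision to death (0 = same day), or None if the patient did not die.
    """
    total = len(days_to_death)
    return {
        k: sum(1 for d in days_to_death if d is not None and d <= k) / total
        for k in cutoffs
    }


# Hypothetical cohort of eight DNR patients, two of whom survived.
print(cumulative_death_fractions([0, 1, 2, 5, 9, None, None, 3]))
# {1: 0.25, 3: 0.5, 7: 0.625}
```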

The number of patients who died, categorized by days after the decision of DNR.

Discussion

There is an essential need for AI support in ethically complex LST decisions, including DNR. We used the MIMIC-IV database and integrated diverse features, including nursing assessments (such as verbalization ability, muscle strength, and sedation status) and clinical parameters, to provide interpretable and timely predictions of DNR decisions. Model performance was robust, as demonstrated by AUROC, calibration plots, and decision curve analysis. Model explainability was provided by SHAP and PDP, and error analysis identified a subgroup in which the model frequently misclassified patients. Notably, the model’s design aligns with ICU clinical workflows, enabling daily, proactive identification of patients with a high probability of DNR decisions and supporting timely initiation of family discussions and shared decision-making.

Limiting LST is undoubtedly a critical issue associated with high moral stress in the ICU.6 Persistent aggressive therapy to maintain the life functions of terminally ill patients may prolong dying, causing excessive suffering or violating their dignity.8 The integration of AI represents a paradigm shift in critical care, particularly in high-stakes decision-making such as LST issues, including DNR decisions.16,19 Notably, the AI model aligns with the ICU clinical workflow by using 72 h of clinical data to predict DNR decisions in the next 24 h. The proposed model may therefore serve as a proactive nudge: by flagging patients 1 day before a potential DNR decision, it enables the intensivist to convene a family meeting that day to discuss LST. Importantly, the SHAP and PDP plots make the model more interpretable. This enables intensivists and families to discuss LST decisions on the basis of key features such as lack of verbalization capacity, prolonged ventilator use, decreased muscle strength, lack of need for light sedation in critically ill ventilated patients, impaired renal function with decreased urine output and elevated blood urea nitrogen levels, and impaired cardiovascular function with a persistently increased heart rate (Figure 4). Therefore, the proposed model not only aligns with the ICU workflow but may also alleviate the moral stress of making high-stakes decisions. However, given the risk that AI may prompt premature discussions, human oversight of DNR decision-making remains essential. Collectively, the AI may be implemented as a “nudge with explanation” system for LST decisions within human-AI collaboration in the ICU.

The unpredictability of critical care trajectories and a prevailing tendency toward active treatment may delay the initiation of end-of-life discussions and palliative care planning.12,26 An AI-powered decision-support tool functioning as a behavioral “nudge” can use risk-stratified prompts and notifications to alert clinicians when patients are likely to benefit from timely conversations about goals of care and potential DNR decisions.27 Such AI-enabled nudges may help clinicians recognize patients who would benefit from timely discussions about end-of-life issues.27,28 Recent studies have shown that machine-learning–triggered alerts for patients at high short-term mortality risk can increase serious-illness conversations and reduce burdensome end-of-life interventions, and that clinicians generally regard such tools as helpful clinical aids rather than replacements for their judgment.27,28 These studies suggest that clinicians tend to accept AI-based predictions and behavioral nudges for patients potentially requiring palliative care. Moreover, transparency in AI processes may strengthen trust among clinicians, nurses, and patients’ families, particularly when interpretable outputs incorporate subjective elements such as verbalization ability and physical function, which are often underrepresented in conventional models.18 Collectively, AI-driven behavioral nudges hold promise in helping clinicians pinpoint patients at high risk of mortality and prompting timely discussions on end-of-life planning, including the DNR issue.

Intriguingly, our study demonstrates the key role of integrating nursing assessments into the DNR prediction model. The MIMIC-IV database has been extensively used in critical care research; however, few studies have specifically explored the utility of nursing assessments, particularly for predicting DNR. As shown in this study, nursing assessments, including observations of verbalization, muscle strength, and sedation status, may provide crucial insights into the dignity and quality of life of critically ill patients. Moreover, nursing assessments can further enhance the interpretability of AI models, given that these assessments are patient-centered, real-world clinical observations of the trajectory of physical abilities and verbal interaction. Collectively, incorporating nursing assessments may improve the performance and transparency of the DNR prediction model, and the established model aligns with the clinical workflow in critical care.

The integration of AI in critical care has traditionally focused on objective outcomes such as survival probability; however, a paradigm shift is emerging, and AI may also address spiritual outcomes, such as pain assessment and, in this study, DNR decisions, for holistic, patient-centered care.19,27,29,30 Notably, predicting DNR decisions and predicting mortality are distinct tasks: critically ill patients may die a few days after a DNR decision, and 41.8% (2981/7137) of critically ill patients without DNR died during hospitalization (Figure 1 and Table 1). Our previous models for predicting mortality or weaning from mechanical ventilation relied mainly on hemodynamic and laboratory data.22,31 We further compared the top 15 features for the DNR prediction task with those for the mortality prediction task, showing that mortality prediction relies mainly on acute hemodynamic parameters (Supplemental Table 4). Beyond hemodynamic and laboratory data, we demonstrated how spiritually relevant features, such as patients’ verbal expression, can be incorporated into AI-supported DNR decisions (Figure 4 and Supplemental Table 4). Integrating such multidimensional data can reduce the moral burden on ICU teams by objectively identifying patients who need LST discussions, thus facilitating shared decision-making with patients and families.10,32 AI-based prediction of morally stressful outcomes such as DNR may mitigate the ethical stress felt by physicians responsible for life-altering decisions, and the key predictive features may enable team members to collectively interpret AI-supported DNR recommendations.33 Notably, the established model has a high negative predictive value, which is crucial given that false alarms are a major concern when predicting outcomes associated with high moral stress (Supplemental Table 1).
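
Because DNR decisions are relatively uncommon in this cohort (652 of 7789, about 8.4%), the negative predictive value is strongly influenced by prevalence. The sketch below expresses NPV = TN/(TN + FN) in terms of sensitivity, specificity, and prevalence via Bayes' theorem; the sensitivity and specificity values passed in are placeholders, not the model's reported operating point (see Supplemental Table 1).

```python
def npv_from_rates(sensitivity, specificity, prevalence):
    """Negative predictive value, TN / (TN + FN), written in terms of rates."""
    tn_share = specificity * (1 - prevalence)  # share of all patients who are true negatives
    fn_share = (1 - sensitivity) * prevalence  # share of all patients who are false negatives
    return tn_share / (tn_share + fn_share)


prevalence = 652 / 7789  # DNR prevalence in this cohort, about 8.4%
# Placeholder operating points (not the model's values): at low prevalence,
# NPV stays high even when sensitivity and specificity are moderate.
for sens, spec in [(0.7, 0.7), (0.8, 0.6)]:
    print(sens, spec, round(npv_from_rates(sens, spec, prevalence), 3))
```

The same arithmetic explains why, at this prevalence, a high NPV alone does not guarantee a high positive predictive value.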

Notably, ICU team resilience has gained attention as vital for sustainable critical care.34 End-of-life decision-making is particularly challenging and often associated with significant moral distress for critical care nurses and physicians.35 Ensuring that patients are treated with dignity and compassion can alleviate emotional fatigue and strengthen team cohesion.10 In line with nurse-led palliative needs-assessment programs, integrating structured nursing assessment into our model is a key element in identifying patients who may benefit from palliative care.36,37 In this study, we highlight the importance of incorporating nursing assessments, which may reflect nurses’ perceptions regarding humane critical care. We believe that AI-facilitated DNR decision support could benefit patient care by facilitating timely and humane interventions and may also strengthen the resilience of ICU teams.

In the error analysis, we first used the model outputs to identify the feature patterns and clinical relevance of misclassifications. We then applied domain knowledge to group these cases into clinically meaningful subgroups for targeted performance evaluation. Removing 67 patients with marked muscle weakness and high ventilatory demand (7.0% of the test cohort) further increased the discrimination (AUROC 0.827 vs 0.792) and accuracy (0.769 vs 0.740) of the model. The error analysis suggests that critically ill patients who are already intubated and receiving intense ventilatory support yet remain profoundly weak represent a genuine ethical dilemma in the ICU, and their DNR decisions cannot be accurately predicted by the model. The high uncertainty of clinical outcomes in these patients requires clinical review for individual decisions regarding DNR. Therefore, our data suggest that error analysis is required in healthcare AI, because misclassification can precipitate harmful decisions; for example, a premature discussion of LST might lead to unnecessary conflict among ICU team members and families. Rigorous error analysis is increasingly recognized as an essential component of medical AI systems.38,39 Notably, systematic scrutiny of the model through transparent reporting of error patterns not only discloses the model’s strengths and limitations but may also enhance clinicians’ trust in AI, a principle of so-called responsible AI.38
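
A simplified version of this subgroup search is sketched below. Instead of fitting a full surrogate tree to the network's predictions, it scans single-feature threshold rules for the subgroup with the highest misclassification rate (an "error tree" style approach, swapped in here for brevity), and it can be applied again within the selected subgroup to obtain a two-condition rule like the one reported. The minimum-support setting, the feature names, and the function names are hypothetical.

```python
def error_rate(errors):
    return sum(errors) / len(errors) if errors else 0.0


def best_error_split(rows, errors, features, subset=None, min_support=20):
    """Find the single-feature threshold rule whose subgroup has the highest error rate.

    rows: list of dicts of feature values; errors: 1 if the model misclassified
    that row, else 0. Returns (error_rate, feature, side, threshold, indices).
    """
    subset = list(range(len(rows))) if subset is None else list(subset)
    best = None
    for f in features:
        for thr in sorted({rows[i][f] for i in subset}):
            for side in ("<=", ">"):
                idx = [i for i in subset if (rows[i][f] <= thr) == (side == "<=")]
                if len(idx) < min_support:
                    continue  # ignore tiny, unstable subgroups
                rate = error_rate([errors[i] for i in idx])
                if best is None or rate > best[0]:
                    best = (rate, f, side, thr, idx)
    return best


# Hypothetical data: errors concentrate where feature "a" equals 0.
rows = [{"a": i % 10, "b": i} for i in range(100)]
errors = [1 if r["a"] == 0 else 0 for r in rows]
rate, feature, side, threshold, members = best_error_split(rows, errors, ["a", "b"], min_support=5)
print(rate, feature, side, threshold, len(members))  # 1.0 a <= 0 10
# Calling best_error_split again with subset=members would refine the rule
# with a second condition, analogous to a depth-2 tree.
```

The minimum-support constraint matters in practice: without it, the search tends to return very small subgroups whose high error rates are unstable.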

This study has several limitations. First, this investigation relies on a single-center retrospective cohort extracted from the MIMIC-IV database; the retrospective design may limit causal inference, and the single-center source may restrict the generalizability of the findings to other hospitals or patient groups. Second, patient values, preferences, and autonomy, which are central to DNR decisions, cannot be assessed from the database. Third, DNR orders may be signed shortly before death, making it difficult to disentangle DNR prediction from mortality prediction, which could reduce the clinical specificity of the model (Figure 6).

Conclusion

This study demonstrates that a multitask learning model incorporating both clinical parameters and nursing assessments can predict DNR decisions in critically ill patients. By aligning with ICU clinical workflows and enhancing interpretability through explainable AI techniques, the proposed model offers an “AI-within-the-team” nudge with explanation that can trigger actionable steps, such as initiating interdisciplinary discussions and family meetings. Future work should prospectively validate the model across diverse institutions and incorporate patient-reported values to pave the way for AI-supported decision support systems for LST in critically ill patients.

Supplemental Material

sj-pdf-1-inq-10.1177_00469580261420721 – Supplemental material for Predicting Do-Not-Resuscitate Decisions in Critically Ill Patients Through Using Multitask Learning: A Retrospective Study of the MIMIC-IV Database

Supplemental material, sj-pdf-1-inq-10.1177_00469580261420721 for Predicting Do-Not-Resuscitate Decisions in Critically Ill Patients Through Using Multitask Learning: A Retrospective Study of the MIMIC-IV Database by Ming-Yen Lin, Chuan-Feng Yeh and Wen-Cheng Chao in INQUIRY: The Journal of Health Care Organization, Provision, and Financing

Supplemental Material

sj-pdf-2-inq-10.1177_00469580261420721 – Supplemental material for Predicting Do-Not-Resuscitate Decisions in Critically Ill Patients Through Using Multitask Learning: A Retrospective Study of the MIMIC-IV Database

Supplemental material, sj-pdf-2-inq-10.1177_00469580261420721 for Predicting Do-Not-Resuscitate Decisions in Critically Ill Patients Through Using Multitask Learning: A Retrospective Study of the MIMIC-IV Database by Ming-Yen Lin, Chuan-Feng Yeh and Wen-Cheng Chao in INQUIRY: The Journal of Health Care Organization, Provision, and Financing

Footnotes

Ethical Considerations

We used the MIMIC-IV database, which is a de-identified and publicly available critical care database. The present study, limited to a secondary analysis of anonymized data, was approved by the institutional review board of Taichung Veterans General Hospital (IRB number: CE25245B).

Consent to Participate

The requirement for informed consent was waived because all data were de-identified.

Authors’ Contributions

Study concept and design: MYL and WCC. Acquisition of data: MYL, CFY, and WCC. Analysis and interpretation of data: MYL, CFY, and WCC. Drafting the manuscript: MYL and WCC. All authors read and approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Taichung Veterans General Hospital (TCVGH-1142901C, TCVGH-114G212, and TCVGH-SCI114110) and National Science and Technology Council Taiwan (NSTC 112-2314-B-075A-001-MY2, NSTC 113-2221-E-035-062, NSTC 114-2221-E-035-049, and NSTC 114-2314-B-075A-016-MY3).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.

References
