Abstract

Recent U.S. federal government policy has required or recommended the use of evidence-based interventions (EBIs), making it important to determine the extent to which this priority is reflected in actual federal solicitations for intervention funding, particularly for behavioral healthcare interventions. Understanding how well such policies are incorporated in federal opportunity announcements (FOAs) for grant funding could improve compliance with policy and increase the societal use of EBIs for behavioral healthcare. FOAs for discretionary grants (n = 243) in fiscal year 2021 were obtained from the Grants.gov website for 44 federal departments, agencies, and sub-agencies that were likely to fund interventions in behavioral health-related areas. FOAs for block/formula grants to states that included behavioral healthcare (n = 17) were obtained from the SAM.gov website. Across both discretionary and block grants, EBIs were required in 60% and recommended in 21% of these FOAs. The FOAs used numerous different terms to signify EBIs, with the greatest variation occurring among the block grants. Lack of adequate elaboration or definition of alternative EBI terms prominently characterized FOAs issued by the Department of Health and Human Services, and less so those issued by the Departments of Justice and Education. Overall, 43% of FOAs referenced evidence-based program registers on the web, which are scientifically credible sources of EBIs. Most of the remaining elaborations of EBI terms in these FOAs were brief, often idiosyncratic, and not scientifically vetted. The FOAs generally adhered to federal policy requiring or encouraging the use of EBIs in funding requests. However, an overall pattern of absent or inadequate elaboration of terms signifying EBIs makes it difficult for applicants to comply with federal policies regarding the use of EBIs for behavioral healthcare.

Keywords

There has been an increasing focus on the use of evidence in U.S. federal policymaking; for instance, the Foundations for Evidence-based Policymaking Act of 2018 calls for all federal agencies to use evidence and data to create policies and inform interventions.

The research determined how well federal policies encouraging evidence-based interventions in behavioral healthcare are incorporated in federal opportunity announcements (FOAs) for grants and whether these FOAs provide sufficient guidance to applicants about what constitutes evidence-based interventions.

Although FOAs for behavioral healthcare grants generally adhered to federal policy, these FOAs also need to provide better, more specific guidance to applicants for program funding to improve their ability to comply with federal policy.

Introduction/Background

The provision of behavioral healthcare in the United States involves significant expenditures of financial and human resources. Mental health expenditures across the U.S., which include addiction treatment and prevention, were $225 billion in 2019. 1 To increase the return on investment in behavioral health services, there has been an increasing focus on the use of evidence in U.S. federal policymaking. 2 An important example is the Foundations for Evidence-based Policymaking Act of 2018, 3 which calls for all federal agencies to engage in evidence-based policymaking. In the context of this movement, evidence-based policy has a specific meaning: policies that demonstrate a measurable positive impact on the problem they address. As an extension of this, some policies require or recommend the use of evidence-based interventions (EBIs) as a condition of receiving federal funding.4,5 EBIs are interventions that demonstrate a positive effect when subjected to rigorous evaluation.6-8

Rigorous program evaluation has traditionally meant the use of randomized controlled trials (RCTs) as the gold standard of research design.6,9-11 More recently, hierarchies of evidence have been developed that allow evaluations using other designs to count as credible evidence of an intervention's merit. 10 A “best available evidence” approach is also used as a criterion for judging the evidence base of an intervention. This approach recognizes that high-quality RCTs or meta-analyses may not be available for a given intervention, so decision makers may need to accept evidence from less rigorous designs.11,12 When defining EBIs, then, the term “rigorous” should be understood to encompass a range of research designs. Accordingly, our operational definition of an EBI uses the phrase “accepted principles of research quality” to encompass the variety of perspectives about what constitutes an acceptable research design.

The present study defines an EBI as a specific intervention that demonstrates improvement in one or more behavioral health outcomes as determined in empirical studies conducted according to accepted principles of research quality. Improvement in this sense means “symptom improvement not accounted for by measurement error alone.” 13 (p. 1) The phrase “empirical studies” in this definition indicates the primacy of evidence obtained through research. 14 Finally, the phrase “conducted according to accepted principles of research quality” indicates that although a range of research designs may be employed in the evaluation of an intervention, credible evidence should be derived from evaluations with designs known to produce valid and reliable estimates of intervention effects. Although most general definitions of EBIs are fairly similar across the literature, the specific criteria for what constitutes an EBI vary across organizations, individuals, and other actors in the evidence-based policy system.15-18 This variation stems from factors such as political influence, social context, and the shifting ideologies of a diverse range of stakeholders.6,19

The implementation of EBIs occurs within a complex ecosystem of actors, including individual practitioners, provider organizations, and the systems within which policy is made.19-22 To ensure the implementation of EBIs, all actors within these systems must have clear expectations about what constitutes an EBI that is acceptable to implement.23,24

The provision of clearly defined criteria about what constitutes an EBI, including specification of multiple tiers of evidence, is considered essential to evidence-based policymaking.25,26 A recent study of state-level mandates for the use of EBIs found that the majority of mandates included in the study simply mentioned key terms related to EBIs but did not elaborate on them to any degree. 27 This suggests that clear communication about what constitutes an EBI is often lacking at the state level.

Two methods governments can use to clearly communicate expectations about what constitutes an EBI are legislative mandates and funding levers such as grant and contract awards. 28 One resource that could enhance the ability of these policy and purchasing levers to clarify criteria for evidence-based interventions is the evidence-based program register (EBPR). EBPRs are online databases of behavioral health interventions that evaluate evidence in support of programmatic decision-making.29-32 Recent research identified 28 major extant EBPRs, 23 although an updated web search by the authors identified at least 40 EBPRs that list individual EBIs or modalities of interventions (ie, general approaches such as cognitive-behavioral therapy) for behavioral health. Prior studies suggest that inclusion of EBPRs in legislative mandates for the use of evidence-based interventions could clarify and strengthen those mandates. 24 The same seems likely for purchasing levers as well.

Purchasing levers exist at the state and federal levels. A major federal purchasing lever is grants that require or recommend the use of EBIs. Presently, no studies have investigated the degree to which federal funding opportunities include language requiring or recommending the use of evidence-based behavioral health interventions.

Purpose of the Study

The purpose of the present study is to understand to what extent and in what manner federal grant funding is used as leverage to require or encourage the use of EBIs for behavioral healthcare. First, we identify the types of interventions solicited in federal opportunity announcements (FOAs) for behavioral healthcare grants (research question #1), to understand the perceived gaps in behavioral healthcare that the federal government is trying to mitigate. Second, we identify the terminology the FOAs use in relation to EBIs and the extent to which they require or recommend EBIs (research question #2), to learn how effectively the government is leveraging its funding to increase the implementation of EBIs. Third, we examine how the FOAs define or operationalize key EBI-related terms (research question #3), to inform potential recommendations for effective EBI policy. Finally, we examine how EBPRs are referenced in these FOAs (research question #4), to help determine whether funding for behavioral healthcare grants could make better use of this existing resource. The overall objective of these inquiries is to learn how the concept of EBIs might be more effectively incorporated in these FOAs.

Methods

The present study is a mixed-methods analysis of federal discretionary and block grants for the implementation of behavioral health interventions, in which descriptive statistics for key variables were derived from a coding system developed through applied thematic analysis. 33 Behavioral health is a term that refers to the connection between behaviors and the mental, emotional, and physical function and well-being of an individual.34-37 For the purposes of this study, a behavioral health intervention is defined as an action, service, approach, strategy, or policy that is designed to change the behaviors of individuals, communities, or systems (eg, units of government or governmental agencies such as departments of corrections), and that relates to one or more of the following areas: psychological/emotional well-being, socio-economic well-being, safety and security, and physical well-being. More concretely, behavioral health interventions address issues related to “substance misuse treatment and prevention, mental health, child welfare, youth and family services, teen pregnancy prevention, HIV/AIDS prevention. . .offender rehabilitation,” and some aspects of the education system with behavioral components (eg, dropout prevention, bullying prevention). 23 (p. 3)

Creating the Analytic Dataset

The U.S. federal government issues 2 major types of grant announcements: discretionary grants and block grants (also known as formula grants). Discretionary grants are awarded on a competitive basis and tend to fund provider agencies or networks of agencies to accomplish the goals of the grant, whereas block grants represent authorizations by the U.S. Congress to allocate funds to state governments using a distributional formula. 38 Block grants usually flow through state agencies to service provider organizations, whereas discretionary grants are made directly from the granting agency to a local organization. An example of a discretionary grant would be funds to implement a community mental health center, awarded to the provider organization(s) that submitted the best intervention proposal(s). An example of a block grant would be funds allocated to a state mental health authority to fund individual interventions at its discretion, with funding levels determined by a set of criteria (eg, the number of students receiving free or reduced-price lunch statewide).

To determine the study’s pool of discretionary grants, funding announcements were obtained from Grants.gov for 44 federal agencies and sub-agencies that were likely to fund interventions in behavioral health-related areas. Grants.gov is a continuously updated database of federal funding opportunities for all cabinet-level agencies and sub-agencies. An initial search was conducted for all funding opportunities issued by the relevant agencies. These announcements were then screened by date, and any FOAs that were open for applications at any point between 10/1/2020 and 9/30/2021 were included in the study.

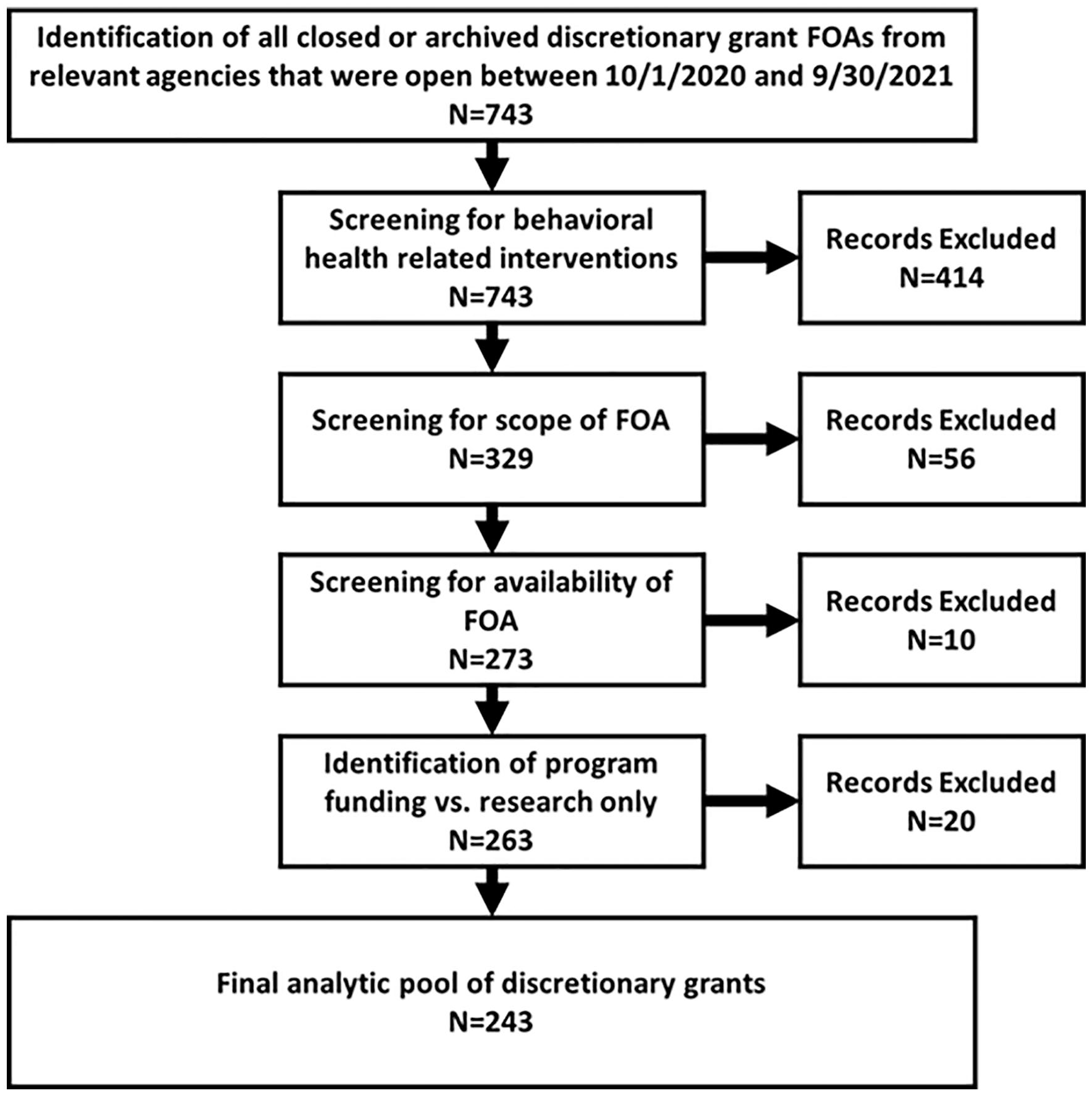

Once this screening was complete, the FOAs were further screened for the inclusion of behavioral health interventions, the scope of the FOA (ie, excluding continuations of funding and announcements of awards), the availability of the FOA for review, and whether the FOA funded interventions rather than research only. This produced the final analytic dataset of 243 discretionary funding announcements coded in the present study. Figure 1 outlines the step-by-step process of arriving at the pool of discretionary grant FOAs included in the study.

Figure 1. Flowchart for creating analytic dataset of discretionary grant FOAs.

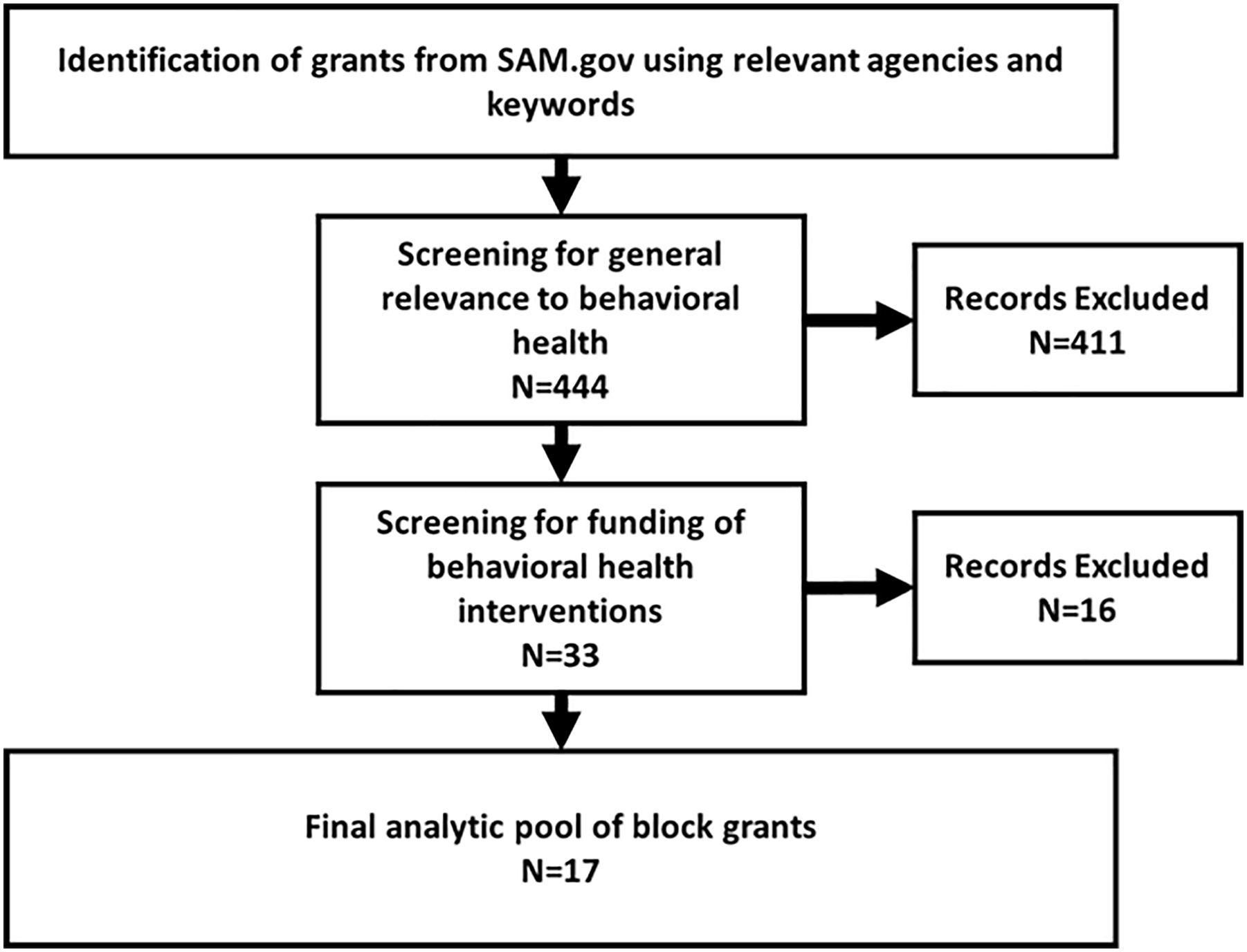

To identify potential block grants for the dataset, the research team searched SAM.gov using “block” or “formula” as keywords. SAM.gov is the official U.S. government system for assistance listings. All federal agencies included in the discretionary grant search were included in the search filter. A member of the research team then accessed documentation related to the FOAs, including the texts of the authorizing legislation, supporting documents such as FAQs or implementation guides, funding opportunity announcements, and grant application documents. Not all of these documents were available for each grant; for example, some agencies placed their application documents behind a registration wall to which the research team did not have access.

Once the available grant documents were obtained, a member of the team further screened these documents to identify whether the grant was intended to support a behavioral health intervention. This led to a final sample of 17 relevant block grants. Figure 2 outlines the step-by-step process of developing the pool of block grants included in the study. It should be noted, however, that many block grants feature interventions related to multiple areas, sometimes combining behavioral health with cash assistance programs or other non-behavioral health interventions. In these instances, the grant was still coded for the portions of the grant that applied to behavioral health.

Figure 2. Flowchart for creating analytic dataset of block grants.

Variables

Each FOA was coded for the following variables: (a) federal funding agency; (b) discretionary or block grant; (c) primary service system to which the intervention relates; (d) topic areas addressed by the FOA; (e) intervention type; (f) population target; (g) key alternative terms referring to EBIs; and (h) whether each alternative term referring to EBIs was only mentioned or was elaborated in the FOA.

“Mentions” were just that: the term is referenced but not further defined, detailed, or explicated in any way. A key term is “elaborated” when details such as an explicit definition or a list of criteria are provided to operationalize it. An example of an elaborated term is “evidence-based programs are programs that have rigorous scientific evidence supporting an impact on family violence.”

Based on the prior literature and the experience of the research team, 31 the FOAs were searched for the following 10 key alternative terms referring to evidence-based interventions (variable g): evidence-based, effective program, best practice, promising practice, evidence-informed, research-based, science-based, empirically-supported, clinically-proven, and evidence-supported. The search also covered phrases such as “shown to be effective” and “demonstrated effectiveness.” Both hyphenated and non-hyphenated versions of the terms were searched, and each FOA could be coded for multiple terms.
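The term search described above can be sketched as a simple pattern match. This is a minimal Python sketch, not the study's actual tooling (the article does not say how the search was performed); the names `KEY_TERMS`, `PHRASES`, `term_pattern`, and `find_ebi_terms` are hypothetical, and tolerating a trailing plural “s” is our assumption. Keyword matching only flags candidate terms; whether a term was required, recommended, mentioned, or elaborated was coded by the research team.

```python
import re

# The 10 key alternative terms for EBIs used in the coding protocol (variable g).
KEY_TERMS = [
    "evidence-based", "effective program", "best practice", "promising practice",
    "evidence-informed", "research-based", "science-based", "empirically-supported",
    "clinically-proven", "evidence-supported",
]
# Additional phrases that were also searched.
PHRASES = ["shown to be effective", "demonstrated effectiveness"]


def term_pattern(term: str) -> re.Pattern:
    """Case-insensitive pattern matching the term with its parts joined by a
    hyphen, whitespace (including line breaks), or nothing, so that
    'evidence-based' also matches 'evidence based'. A trailing plural 's'
    is tolerated (an assumption, e.g. 'best practices')."""
    parts = re.split(r"[-\s]+", term)
    sep = r"(?:-|\s+)?"
    return re.compile(r"\b" + sep.join(map(re.escape, parts)) + r"s?\b", re.IGNORECASE)


def find_ebi_terms(text: str) -> set[str]:
    """Return every key term or phrase that occurs in an FOA's text.
    An FOA can be coded for several terms at once."""
    return {t for t in KEY_TERMS + PHRASES if term_pattern(t).search(text)}
```

For example, a sentence such as “Applicants must propose Evidence Based programs or best practices shown to be effective” would be flagged for “evidence-based,” “best practice,” and “shown to be effective.”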

If a key EBI-related term was elaborated, the following additional variables were coded: (i) whether the elaboration referred to criteria that were internal, external, or both; and (j) the specific type of EBI criteria. An “internal elaboration” is one whose criteria were internal to the funding agency or apparently unique to that FOA, whereas an “external elaboration” is one whose criteria pointed to standards or sources of interventions external to the funding agency.

With respect to specific EBI criteria, the concepts of quality of evidence and strength of evidence are useful for categorization. Criteria related to “quality of evidence” usually refer to the research designs of primary evaluation studies that are permissible as evidence of effectiveness. For example, some FOAs required that an intervention had been studied in a randomized controlled trial or had evidence obtained from “rigorous scientific studies.” Quality of evidence (QOE) was coded using the following mutually exclusive categories: no QOE statement; general QOE statement; QOE uses hierarchy of evidence levels; QOE uses best available evidence model; or QOE requires studies with specific evaluation designs.

“Strength of evidence” criteria address the outcomes of interventions found in the primary studies considered to support their effectiveness. For example, some FOAs required that interventions had demonstrated a statistically significant impact in a research study, while others stated that the program needed to have a positive impact on a specific outcome. Strength of evidence (SOE) was coded using the following mutually exclusive categories: no SOE statement; general SOE statement; SOE uses hierarchy of evidence levels; SOE uses best available evidence model; or SOE requires specific outcomes related to statistical significance or effect size.
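The mutually exclusive QOE and SOE categories, together with variable (i), map naturally onto enumerated types. In this hypothetical Python sketch, each elaboration carries exactly one QOE code and one SOE code, so mutual exclusivity within each scheme is enforced by the data structure itself. The class names, and the assumption that codes are assigned per elaboration rather than per FOA, are illustrative and not taken from the study's codebook.

```python
from dataclasses import dataclass
from enum import Enum


class Reference(Enum):
    """Variable (i): where an elaboration's criteria come from."""
    INTERNAL = "internal to the funding agency or unique to the FOA"
    EXTERNAL = "external standards or sources"
    BOTH = "both internal and external"


class QOE(Enum):
    """Quality-of-evidence codes (mutually exclusive)."""
    NONE = "no QOE statement"
    GENERAL = "general QOE statement"
    HIERARCHY = "hierarchy of evidence levels"
    BEST_AVAILABLE = "best available evidence model"
    SPECIFIC_DESIGNS = "requires studies with specific evaluation designs"


class SOE(Enum):
    """Strength-of-evidence codes (mutually exclusive)."""
    NONE = "no SOE statement"
    GENERAL = "general SOE statement"
    HIERARCHY = "hierarchy of evidence levels"
    BEST_AVAILABLE = "best available evidence model"
    SPECIFIC_OUTCOMES = "requires statistical significance or effect size"


@dataclass(frozen=True)
class Elaboration:
    """One elaborated EBI term (hypothetical record). Holding a single QOE and
    a single SOE member enforces mutual exclusivity within each scheme."""
    term: str
    reference: Reference
    qoe: QOE = QOE.NONE
    soe: SOE = SOE.NONE
```

With a list of such records, `Counter(e.qoe for e in elaborations)` from the standard `collections` module would give the QOE category frequencies directly.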

Each FOA or grant document may have included multiple EBI criteria. The full list of criteria as found in the FOAs appears in Figure 9.

Development of Variable Coding Schemes

The coding schemes for all relevant variables were developed based on prior research, 31 the knowledge of the researchers, and the text of the FOAs using techniques of applied thematic analysis. 33 The coding schemes were reviewed by the study’s principal investigator and were revised until all study team members agreed on the final codes.

Analysis

Descriptive statistics were obtained using SPSS version 27 for all variables, and these statistics formed the basis of the tables and figures.
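The study used SPSS; as a swapped-in illustration only, the same kind of descriptive frequencies can be sketched in Python. The record layout (`CodedFOA`) and the helpers `percent`, `describe`, and `subgroups` are hypothetical. Because an FOA can use several terms, term frequencies form a multiple-response variable whose percentages may sum to more than 100. The `subgroups` helper mirrors the rule, reported in the Results, of omitting agencies that issued 4 or fewer FOAs.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedFOA:
    """One coded FOA (hypothetical layout covering a subset of variables a-h)."""
    agency: str                               # (a) federal funding agency, e.g. "DOJ"
    grant_type: str                           # (b) "discretionary" or "block"
    ebi_status: str                           # "required", "recommended", or "neither"
    terms: frozenset[str] = frozenset()       # (g) alternative EBI terms found
    elaborated: frozenset[str] = frozenset()  # (h) subset of terms that were elaborated


def percent(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place; 0.0 for an empty group."""
    return round(100 * part / whole, 1) if whole else 0.0


def describe(foas: list[CodedFOA]) -> dict:
    """Descriptive frequencies for a set of coded FOAs."""
    n = len(foas)
    status = Counter(f.ebi_status for f in foas)
    # Multiple-response variable: each FOA counts once per term it uses.
    terms = Counter(t for f in foas for t in f.terms)
    any_elaborated = sum(1 for f in foas if f.elaborated)
    return {
        "n": n,
        "ebi_status_pct": {k: percent(v, n) for k, v in status.items()},
        "term_pct": {k: percent(v, n) for k, v in terms.items()},
        "any_elaborated_pct": percent(any_elaborated, n),
    }


def subgroups(foas: list[CodedFOA], min_n: int = 5) -> dict:
    """Per-agency statistics, omitting agencies with fewer than min_n FOAs."""
    groups: dict[str, list[CodedFOA]] = {}
    for f in foas:
        groups.setdefault(f.agency, []).append(f)
    return {a: describe(fs) for a, fs in groups.items() if len(fs) >= min_n}
```

Calling `describe` on the full list of coded records yields overall percentages, while `subgroups` returns the same statistics for each agency that meets the minimum count.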

Results

The FOAs were issued by 7 major federal agencies (or their subunits). The distribution of FOAs by agency appears in Figure 3. Where the results of an analysis varied when the data were disaggregated by agency, a subgroup analysis by funding agency was conducted to describe the differences. We omitted 4 agencies from these subgroup analyses because of the small number of FOAs they issued (n ≤ 4 FOAs per agency): the Department of Housing and Urban Development (HUD), the Department of Labor (DOL), the Office of National Drug Control Policy (ONDCP), and the Department of Veterans Affairs (VA).

Figure 3. Federal agencies represented by grants.

Research Question #1: What types of interventions are solicited in federal FOAs for behavioral healthcare grants?

The FOAs addressed a total of 24 different topic areas. The 5 most prevalent topic areas addressed by the FOAs were prevention, intervention, or recovery from substance misuse disorders (26.9% of FOAs), prevention, intervention or recovery from a mental/psychiatric illness (19.2% of FOAs), recovery from victimization due to crime or violence (16.9% of FOAs), violence prevention (11.5% of FOAs), and prevention or treatment of criminal behaviors, delinquency, and/or recidivism (11.2% of FOAs). There were differences between the agencies in which topic areas were most prevalent. For the Department of Health and Human Services (DHHS), prevention, intervention, or recovery from mental/psychiatric illness or substance abuse were the most prevalent topics. Recovery from victimization due to crime or violence and prevention or treatment of criminal behaviors, delinquency, and/or recidivism were the most prevalent topic areas for the Department of Justice (DOJ). The most prevalent topic areas for the Department of Education (DOE) were services for individuals with developmental/intellectual disabilities and services related to dropout prevention/academic retention.

The FOAs represented 5 main intervention types. These include capacity building for providers (56.9% of FOAs), direct service to individual consumers or populations (44.6%), training/technical assistance (33.5%), strategic planning (2.3%), and model/curriculum development (1.9%). When disaggregated by department, there was no difference between DHHS, DOJ, and DOE in which intervention types were most prevalent, with the order of prevalence of intervention types mirroring the order of prevalence for the full sample.

Over half of the FOAs included capacity building interventions. In many cases, these capacity building interventions were aimed at improving the ability of grantees to deliver direct services. It should be noted that in many cases, there were multiple intervention types represented in the same FOA. In one example, the funding was to be used to deliver direct services to clients and also to build and improve networks of care (capacity building), which could support improved service delivery.

The interventions included in the FOAs were aimed at several different population types: professionals/service providers (54.6% of FOAs), clients/consumers/caregivers/family (45.4%), government units (33.1%), researchers/evaluators (1.2%), and other (1.5%). This distribution is consistent with the predominance of capacity building, training/technical assistance, and direct service interventions among the FOAs. There were differences between the departments in intervention targets. For the DOJ, there were roughly equal numbers of FOAs aimed at clients/consumers/caregivers/family, topic area professionals/service providers, and government units. For both DHHS and DOE, topic area professionals/service providers were the most frequent target population, followed by clients/consumers/caregivers/family, and then government units.

Research Question #2: What alternative terms are used in FOAs to describe EBIs for behavioral healthcare grants and how frequently do these FOAs require or recommend the use of EBIs (considering all alternative terms) in applications for funding?

As indicated by references to at least 1 of the 10 key alternative terms for an EBI, 214 of 243 discretionary FOAs (88%) and 16 of 17 block grant FOAs (94%) required or recommended EBIs for behavioral healthcare funding. Across both grant types, EBIs were required in 60% of FOAs and recommended in 21% of FOAs. There was no difference in these patterns between grant types. There were, however, differences in requirements for the use of EBIs across federal departments: EBIs were required in 73% of DHHS grants and recommended in 10%, required in 50% of DOJ grants and recommended in 34%, and required in 36% of DOE grants and recommended in 36%.

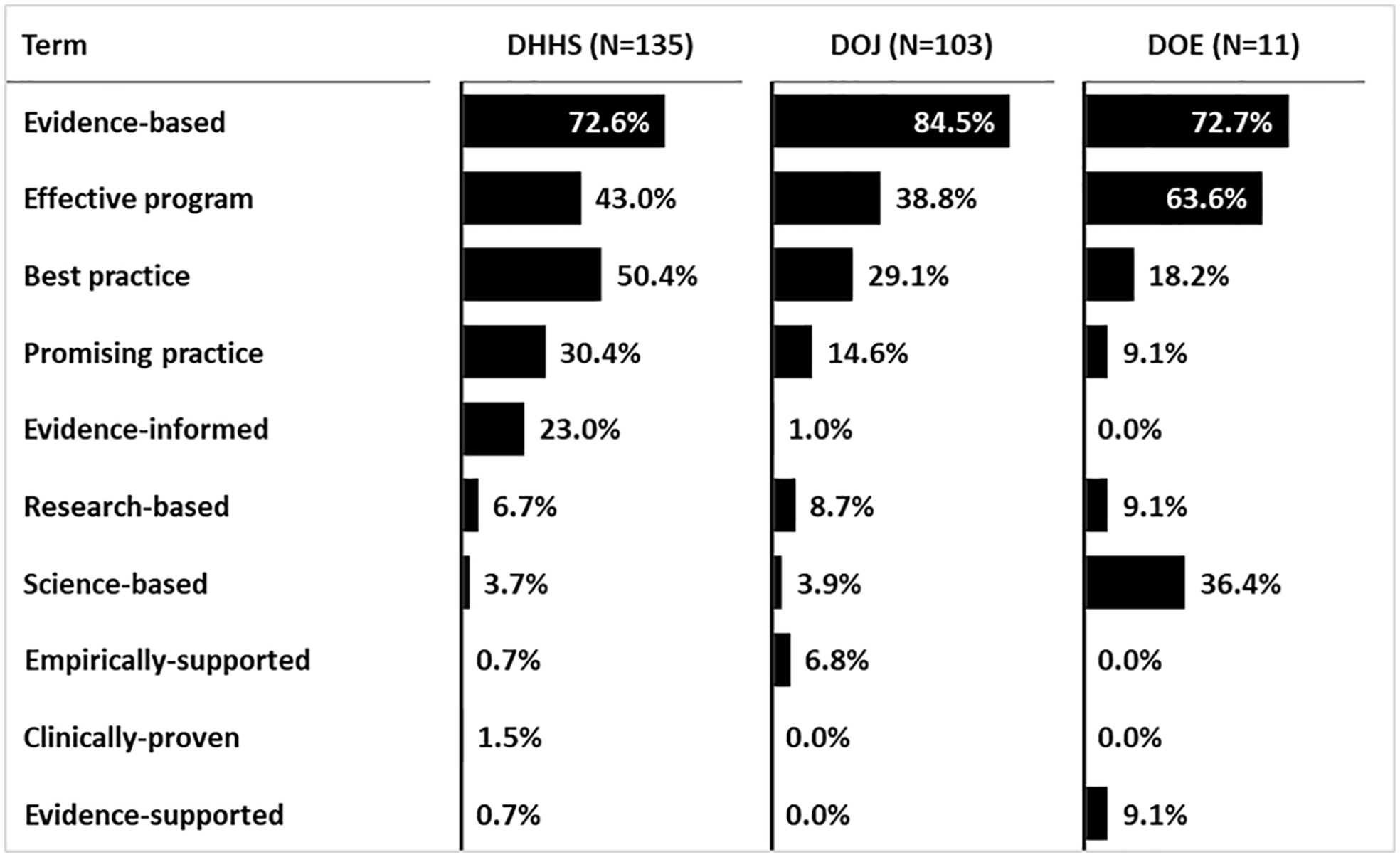

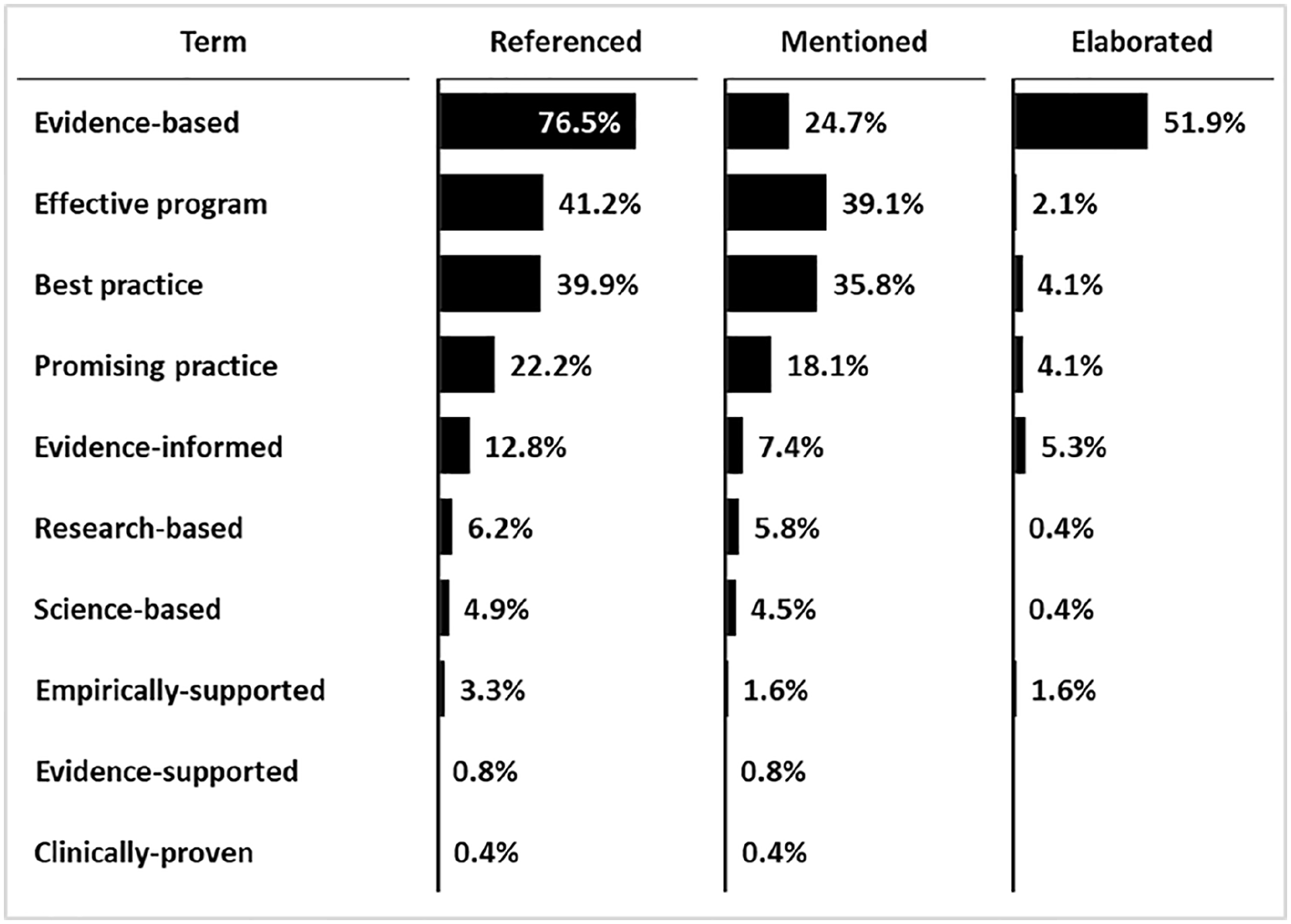

The most common term used was “evidence-based,” which was referenced in 76% of 260 grants included in the study (block and discretionary combined). This was followed by “effective program” (42% of all grants), “best practice” (39% of all grants), “promising practice” (22% of all grants), and “evidence-informed” (13% of all grants). See Figure 4 for a complete breakdown of terms referenced by grant type.

Figure 4. Use of key terms by grant type.

There were differences between the departments in some of the terminology used. While “evidence-based” was the most commonly used term by each of the 3 departments in the subgroup analysis, the term “effective program” was the second most commonly used by the DOJ and DOE and the third most commonly used by the DHHS. Additionally, the term “best practice” was the second most commonly used term by DHHS, and the third and fourth most commonly used term by DOJ and DOE respectively. The term “science-based” was used more frequently in DOE grants than in DHHS or DOJ grants. See Figure 5 for a complete breakdown of terms used by each agency.

Figure 5. Use of terms by department, all grant types.

Research Question #3: In what ways is the concept of an evidence-based intervention (EBI) defined or elaborated in these FOAs for behavioral healthcare grants?

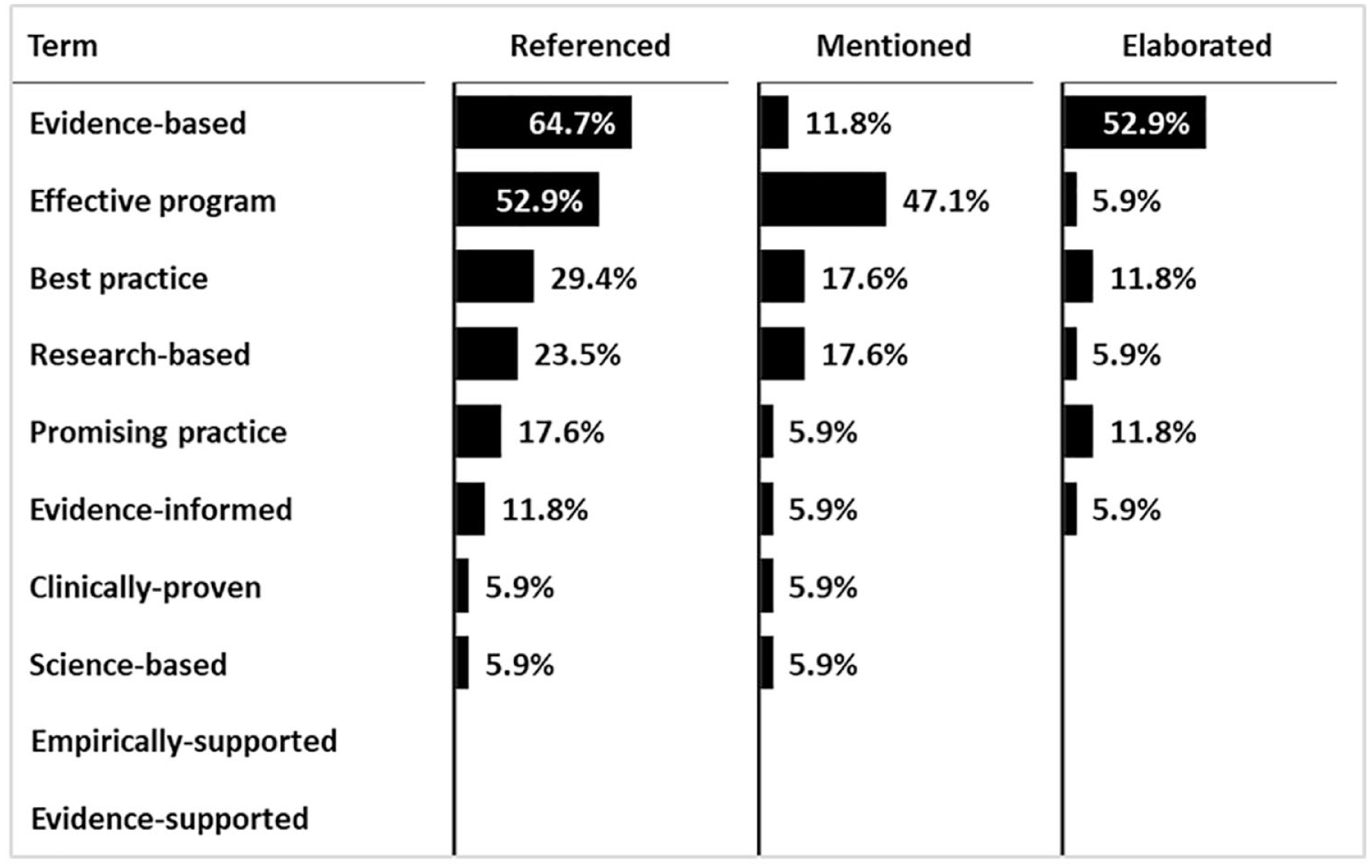

An analysis was conducted on how key alternative EBI terms were presented in the FOAs (ie, simply mentioned versus elaborated or defined in some level of detail). Where an alternative term was elaborated, we examined how it was elaborated (ie, using criteria internal to the agency issuing the FOA, criteria pointing to sources outside the agency, or both). In total, 136 discretionary FOAs (56%) contained at least one alternative term that was elaborated, while 11 block grants (65%) contained at least one term that was elaborated. The distribution of alternative terms by simple mentions versus detailed elaborations is shown in Figure 6 for discretionary grants and Figure 7 for block grants. There were differences between agencies in the frequency of mentions versus elaborations of terms. For example, the term “evidence-based” was elaborated in 78% of DOJ grants and 64% of DOE grants, while it was only elaborated in 34% of the DHHS grants. Figure 8 presents a full breakdown of mentions versus elaborations by agency for all grant types.

Figure 6. Use of key alternative terms for EBIs in discretionary grant FOAs (n = 243).

Figure 7. Use of key alternative terms for EBIs in block grant documents (n = 17).

Figure 8. Use of key alternative terms for EBIs by department, all grant types.

Across the 136 discretionary FOAs where at least one alternative EBI term was elaborated, there were 170 instances of a term being elaborated, because FOAs could have referenced and elaborated more than one term. Out of the 170 instances of a term being elaborated, 20.6% elaborated the term using criteria internal to the agency, 56.5% used criteria from sources external to the agency, and 22.9% used criteria that were both internally and externally referenced. Across the 11 block grants where at least one alternative EBI term was elaborated, there were 16 instances of a term being elaborated. Out of that 16, 31% used criteria that were internal to the agency, 44% used criteria from sources external to the agency, and 25% used criteria that were both internally and externally referenced.

A full list and frequencies of the ways that alternative EBI terms were elaborated in the FOAs are shown in Figure 9. For discretionary grants, the most common type of elaboration was to require or recommend that applicants use behavioral health interventions listed on an EBPR. (This is discussed further under research question #4 below.) The second most frequent was pointing to other external websites that provided more general resources for selecting, developing, or implementing EBIs (eg, the Centers for Disease Control and Prevention’s “A Comprehensive Technical Package for the Prevention of Youth Violence and Associated Risk Behaviors” or its “Best Practices Guide for Youth Engagement”). The third most frequent was a list of specific healthcare interventions endorsed by the funding agency through an internal process. These were also the most frequent types of elaboration for the block grants, except that an internally generated list of interventions was the most common.

Figure 9. Criteria used in EBI elaborations by grant type, all agencies.

After these major categories, there was a proliferation of less common elaborations of what would be acceptable to the funding agency as an EBI. Some elaborations only made general statements about the quality of evidence required to qualify as an EBI, such as “must have high quality evidence” or “must have evidence obtained through rigorous scientific research.” However, most elaborations were more specific, though not necessarily comprehensive, such as requiring that the intervention pass peer review or be endorsed by professional consensus or expert opinion.

There were differences between agencies in the most commonly used criteria in elaborations of terms. For example, 78% of elaborations in DOJ grants and 100% of elaborations in DOE grants referred to an EBPR, while only 31.6% of elaborations in DHHS grants referred to an EBPR. Additionally, 100% of elaborations found in DOE grants used hierarchies of evidence levels in their elaborations, while no elaborations from DHHS or DOJ mentioned hierarchies of evidence. A full breakdown of criteria used in elaborations by agency appears in Figure 10.

Figure 10. Criteria used in EBI elaborations by agency, all grant types.

It should be noted that in most cases, an elaboration featured only a small number of criteria, and the criteria used to elaborate different terms varied across FOAs. Also, the terms used in some FOAs may have been arranged hierarchically: the term “evidence-based” may designate interventions with the strongest evidence, while the term “best practice” may indicate interventions with moderate evidence. In these cases, the criteria used to elaborate those terms differed to denote higher or lower standards. For example, one FOA required that evaluations of an intervention use a specific design (eg, RCT) for the intervention to be deemed evidence-based, while it considered an intervention a promising program if the evidence in its favor was based on consensus or expert opinion. The present analysis does not disaggregate criteria by term or by whether terms are used hierarchically, so no inferences are made concerning these issues.

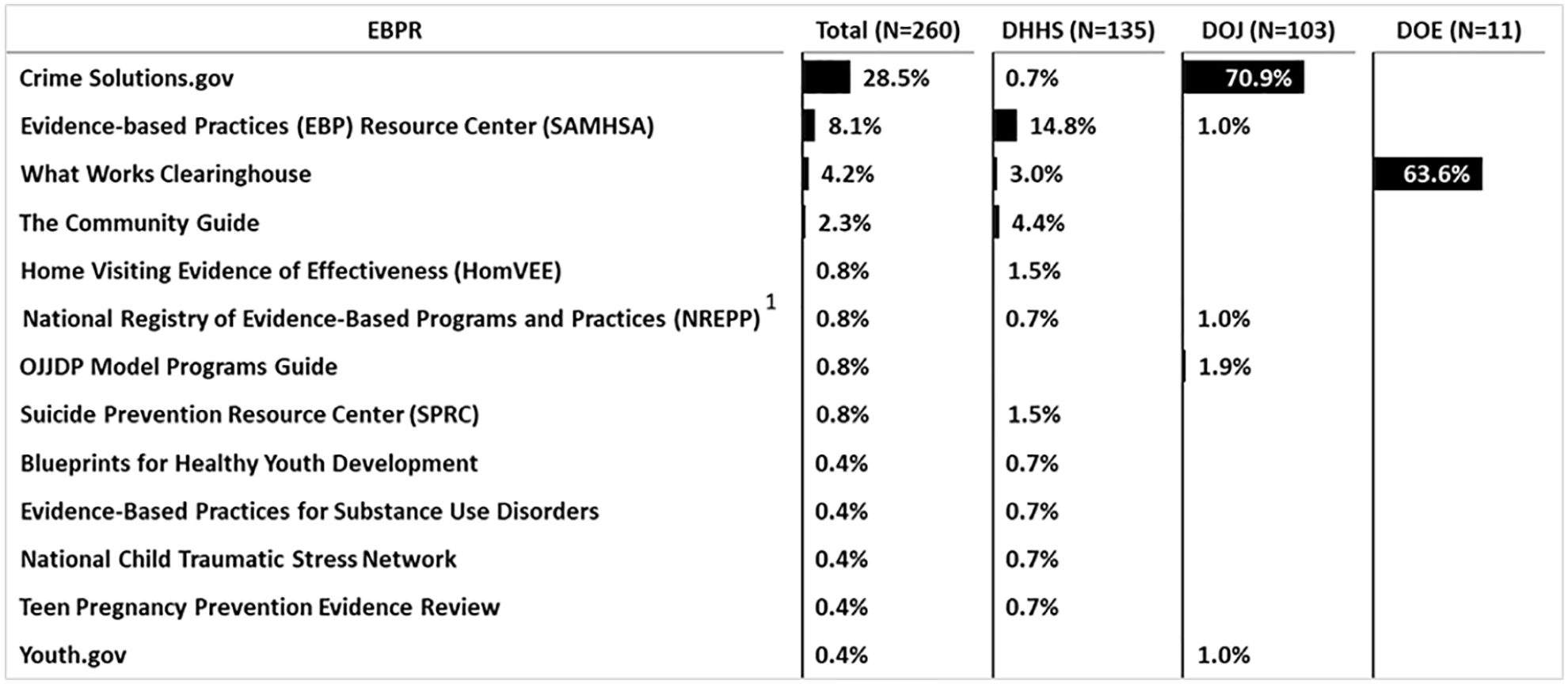

Research Question #4: How frequently do these FOAs require or recommend EBPRs as sources of EBIs and which specific EBPRs are referenced?

Overall, FOAs referenced an EBPR in 41.2% of discretionary grants and 35.3% of block grants as a source of EBIs (Figure 11). EBPRs were recommended in 37.9% of discretionary grants and 23.5% of block grants and were required in 3.3% of discretionary grants and 11.8% of block grants. There were differences between agencies in the requirements to use EBPRs. EBPRs were mentioned in 72% of DOJ FOAs and 64% of DOE FOAs, while they were mentioned in only 19% of DHHS FOAs. EBPR use was recommended in 99% of DOJ FOAs that mentioned an EBPR and required in 1%. Similarly, EBPR use was recommended in 92% of DHHS FOAs that mentioned an EBPR and required in 8%. Conversely, EBPR use was required in 100% of the DOE FOAs that mentioned an EBPR.

Percentage of FOAs that mentioned an EBPR by agency (discretionary and block grants combined).

As might be expected given their sponsorship, references to Crimesolutions.gov, the SAMHSA Evidence-Based Practice Resource Center, and the What Works Clearinghouse were almost entirely attributable to FOAs from the Department of Justice, the Department of Health and Human Services, and the Department of Education, respectively (Figure 11). These were also the most frequently referenced EBPRs overall. References to other EBPRs from the existing pool of at least 28 major EBPRs were rare, even for EBPRs sponsored by the same departments, such as the OJJDP Model Programs Guide and Youth.gov.

Discussion

Recent U.S. federal government policy has required or recommended the use of evidence-based interventions, making it important to determine the extent to which this priority is reflected in actual federal solicitations for program funding, particularly for behavioral healthcare interventions. The majority of FOAs included in the present study were issued by the Department of Health and Human Services, followed by the Department of Justice and the Department of Education. Together these FOAs constituted approximately 95% of the entire sample of FOAs related to the funding of behavioral healthcare. Almost all the relevant discretionary and block grant FOAs from the federal agencies studied required or recommended the inclusion of EBIs in applications. This largely adheres to published U.S. policies for eligibility for behavioral healthcare funding.

The funding of capacity-building activities, along with training and technical assistance, affects those in need of behavioral health services only indirectly. By improving systems of care, however, policies that fund these organizational elements have a potentially wider reach than policies that fund consumer services directly. (Of course, policies that target consumers directly also improve the availability of services to those in need.)

A range of different terms was used to signify EBIs by the FOAs included in the present study. The most frequently used term overall was “evidence-based,” followed by “effective program” and “best practice.” However, numerous other terms were also used, with the greatest variation occurring among the block grants. There were also differences in the use of terms across agencies.

All the terms included in the present study appear in the research and evaluation literature on EBIs and seem to be used interchangeably by professionals in the behavioral healthcare field. Nevertheless, this variation in terminology may create uncertainty among those seeking federal grant funding. More consistent terminology could help clarify which types of interventions are acceptable under different federal funding announcements.

A slight majority of the alternative EBI terms in discretionary grant FOAs were elaborated, as were two-thirds of those in block grant FOAs. The substantial minorities of FOAs without elaborations are concerning, because such FOAs fail to provide guidance or state expectations for applicants seeking behavioral healthcare funding. Applicants responding to these FOAs are left to their own devices to provide a rationale for using a given EBI, and their rationales may not be accepted by federal grant application reviewers.

Some agencies were more prescriptive about what constitutes an EBI (a “top-down” approach), while others were less so (a “bottom-up” approach). One possible explanation is that the great majority of elaborated terms in DOJ and DOE grants referred to an EBPR (specifically CrimeSolutions.gov and the What Works Clearinghouse), and these EBPRs focus specifically on behavioral health interventions related to crime and education, respectively. Thus, those agencies may have an advantage over other agencies in formulating more comprehensive elaborations of EBI-related terms.

Although the tendency not to elaborate the requirements and expectations for what constitutes an EBI at the federal level may be seen as a drawback, it could also be seen as an advantage. Because block grant funding flows through the states, and states are the major drivers of EBI implementation,39 it could be reasonable to let states define EBIs for the purpose of block grant funding. Under this paradigm, less prescriptive federal requirements would allow states to define EBIs in ways that are more responsive to local political, financial, and clinical contexts. However, a recent study of how state agencies define EBIs found a similar lack of elaboration of EBI-related terms at the state level, suggesting that federal funding announcements may need to be more prescriptive about what constitutes an EBI.27

External elaborations of alternative EBI terms can be beneficial if the external sources have research credibility and remain accessible throughout the life cycle of the grant.27 Most external elaborations include references to EBPRs, which are generally based on accepted hierarchies of research evidence30 and are thus credible. Internal elaborations can also be useful, although prior research has shown that legislators and funding agency staff may lack the expertise necessary to develop guidelines that capture the essential criteria for what constitutes an evidence-based intervention.40 However, most elaborations of EBI-related terms (other than those pointing to EBPRs) are quite brief, do not appear to have been scientifically vetted, and could even be characterized as idiosyncratic. Such limited elaborations do not give applicants adequate guidance for proposing acceptable EBIs in their funding applications.

Moreover, the great majority of elaborations of EBI-related terms did not include any explicit statements about the quality or strength of evidence (eg, requiring specific research designs or effect sizes in evaluations, or applying hierarchies of evidence levels to the supporting evidence for an EBI). Guidance in these 2 areas would help grant applicants understand how to select the most appropriate interventions. For example, an FOA could require that an intervention have been studied in a large-scale RCT or that it produce a certain effect size, so that applicants do not have to create their own idiosyncratic criteria for what constitutes an acceptable EBI. This could help standardize the quality of EBIs proposed in response to funding announcements.
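A criterion of the kind suggested above (a large RCT plus a minimum effect size) could be written down precisely enough for applicants to check. The sketch below computes Cohen's d with a pooled standard deviation; the `Evaluation` fields, the minimum total sample size of 500, and the minimum |d| of 0.2 are hypothetical values chosen for illustration, not thresholds drawn from any actual FOA.

```python
import math
from dataclasses import dataclass


@dataclass
class Evaluation:
    """Summary statistics from one hypothetical evaluation of an intervention."""
    design: str  # e.g. "rct" or "quasi_experimental"
    mean_treatment: float
    mean_control: float
    sd_treatment: float
    sd_control: float
    n_treatment: int
    n_control: int


def cohens_d(e: Evaluation) -> float:
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = (
        (e.n_treatment - 1) * e.sd_treatment ** 2
        + (e.n_control - 1) * e.sd_control ** 2
    ) / (e.n_treatment + e.n_control - 2)
    return (e.mean_treatment - e.mean_control) / math.sqrt(pooled_var)


def meets_criteria(e: Evaluation, min_n: int = 500, min_d: float = 0.2) -> bool:
    """Hypothetical FOA rule: an RCT with at least min_n participants and |d| >= min_d."""
    large_rct = e.design == "rct" and e.n_treatment + e.n_control >= min_n
    # abs() because a beneficial effect may be a reduction (negative d).
    return large_rct and abs(cohens_d(e)) >= min_d


# Hypothetical trial: drinking days per month, 7 vs 9, SD 5, 400 per arm.
trial = Evaluation("rct", 7.0, 9.0, 5.0, 5.0, 400, 400)
print(round(cohens_d(trial), 2), meets_criteria(trial))
```

Stating both the design and the magnitude requirement in this form would leave applicants far less room for idiosyncratic interpretation than an undefined term such as “evidence-based.”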

As mentioned above, referencing EBPRs could provide a “ready-made” solution for addressing the quality of research and strength of evidence in evaluations of behavioral health interventions. Unfortunately, pointing to EBPRs does not necessarily solve the problem, because EBPRs vary in the stringency with which they evaluate quality of evidence, and most EBPRs do not require a certain strength of evidence, such as a minimum intervention effect size or a minimum number of replication studies, for an intervention to qualify as an EBI.30,31 Thus, interventions might be funded that produce a “statistically significant effect” (compared with an alternative intervention or placebo), even if their results have limited clinical significance. For example, in a large sample an intervention may produce a statistically significant reduction in alcohol consumption that amounts to an average of only one fewer day of drinking per month. Additionally, given the variation in quality of evidence requirements, the estimates of program impact provided by evaluations may be biased in unknown ways, which could lead to the funding of interventions whose impacts are overestimated.
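The gap between statistical and clinical significance in the alcohol example can be made concrete. This sketch assumes a hypothetical reduction of 1 drinking day per month with a standard deviation of 6 days, and uses a two-sample z-test (a large-sample normal approximation swapped in for the t-test an evaluation would typically report) with equal group sizes.

```python
import math


def two_sample_z(mean_diff: float, sd: float, n_per_group: int) -> tuple[float, float]:
    """Return (z, two-sided p) for a difference in means with equal SDs and group sizes.

    Uses the normal approximation, which is reasonable only for large samples.
    """
    se = sd * math.sqrt(2 / n_per_group)   # standard error of the difference
    z = mean_diff / se
    p = math.erfc(abs(z) / math.sqrt(2))   # two-sided p-value under N(0, 1)
    return z, p


# Hypothetical effect: 1 fewer drinking day per month, SD of 6 days.
mean_diff, sd = 1.0, 6.0
for n in (50, 500, 5000):
    z, p = two_sample_z(mean_diff, sd, n)
    print(f"n per group = {n:5d}: z = {z:5.2f}, p = {p:.4f}, d = {mean_diff / sd:.2f}")
```

The standardized effect size stays fixed at about 0.17 throughout, yet the p-value falls well below 0.05 once the samples are large enough, which is why a significance criterion alone says little about whether an effect is clinically meaningful.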

Nevertheless, there appears to be room for more references to EBPRs than currently appear in these FOAs, especially for federal agencies within DHHS. This is consistent with previous studies, which found either a general lack or a restricted range of references to EBPRs on state agency websites and in state-level behavioral health policies for the use of EBIs.23,24 EBPRs are potentially valuable resources for identifying EBIs for behavioral health and for obtaining guidance on program implementation. Thus, increasing references to EBPRs in FOAs for federal funding of behavioral healthcare interventions would likely improve the FOA process and increase the societal impact of such interventions.

Limitations

The present study has several limitations. First, the study includes only FOAs that were open during the most recent federal grant cycle (fiscal year 2021), which spans a single year. This cross section may be insufficient to draw conclusions about grant requirements over multiple years or to support inferences about future FOAs. However, it does provide insight into issues that could affect the utility of grant requirements for the use of EBIs. For example, our research highlights the need for greater elaboration of terms such as evidence-based practice or best practice in grant funding requirements.

Second, the study period, which was limited because of the labor-intensive nature of the study, coincided with a COVID-19 pandemic year. This could have introduced biases into the data and analysis, although their nature and extent are unclear. Nonetheless, pandemic-related issues are unlikely to have affected the sophistication of funding requirements, because many of the concepts about what constitutes an EBI rest on generally accepted principles in the literature on evidence-based interventions, such as requiring that an intervention have been evaluated in a randomized controlled trial.

Third, the research team did not interview applicants or other stakeholders for these FOAs, so it is uncertain whether the absence or inadequacy of elaboration of important EBI terms is in fact problematic for them. Fourth, we did not query the authors of the FOAs or the departmental sponsors of these grants to learn whether, from their point of view, they generally receive grant applications that include viable EBIs. Future research could address these questions. Lastly, the study was limited to FOAs relevant to behavioral healthcare. Although this is a prime field for the application of EBIs, there is opportunity for broader study of federal funding of evidence-based interventions in society, for example, in other medical fields or in economics.

Conclusions

Almost all discretionary and block grant federal opportunity announcements for behavioral healthcare required or recommended the inclusion of EBIs in applications. Across both grant types, EBIs were required in 60% and recommended in 21% of these FOAs. This largely, but not completely, adheres to published U.S. policies informing eligibility for behavioral healthcare funding. The overall use of effective interventions could increase as requirements for EBIs are included in more FOAs, thus improving the services provided to those in need of help with behavioral health issues. One caveat applies when only a limited number of interventions align with the purpose of the grant, or when the grant addresses a novel problem that has not been well researched. In these instances, it would not be reasonable to require the use of an EBI as traditionally defined, although such grants could still support the use of the “best” interventions available or fund the development of an appropriate EBI.

However, the FOAs used numerous different terms to signify EBIs, with the greatest variation among the block grants. This variation in terminology may create uncertainty among applicants for federal grant funding about the quality of interventions that are eligible for funding.

Lack of adequate elaboration or definition of EBI terms prominently characterized FOAs issued by the Department of Health and Human Services, and less so those issued by the Departments of Justice and Education. The most common type of elaboration, when one was provided, pointed to the research standards and behavioral healthcare interventions found in various EBPRs. EBPRs are a credible source of EBIs, but most other elaborations of EBI terms in FOAs were brief, not scientifically vetted, and often idiosyncratic. It appears that more use could be made of a wider range of EBPRs in these FOAs, provided that the EBPRs used are known to distinguish reliably between interventions that are evidence-based and those that are not.

In sum, the frequent absence or inadequacy of elaboration of terms signifying EBIs makes it difficult for applicants to comply with federal policies requiring or recommending the use of EBIs for behavioral healthcare. Federal funding initiatives could better encourage the use of EBIs by elaborating pertinent terms more frequently, explicitly, and consistently, and by referencing highly vetted sources of interventions such as EBPRs.

Footnotes

Acknowledgements

Mary Ramlow provided valuable administrative support.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by Grant # R01 DA042036 from the National Institute on Drug Abuse.

Ethics/Consent Statement

Ethical approval was not sought and no consent document was required, because this study of federal documents does not involve human or animal subjects.