Abstract

This article presents a case study of the potential influence of a capacity building investment on public health partnerships (PHPs) targeting asthma. This case study explores what factors were salient among PHPs that were indirect recipients of a funder’s capacity building. Our case study suggests that a funder’s capacity building efforts may be linked to evaluation practice guidelines and to decisions about individual and organizational level use of evaluation within partnerships. Moreover, examining the contextual factors that were associated with the evaluation of these PHPs explicates where adjustments may be needed in applying capacity building to the PHP setting. This case study has implications for future health planning policies.

Keywords

Public health partnerships are unique organizations in that they are valued as important to community health goals but they often lack access to data to navigate the challenges associated with public health and partnerships.

This research is novel in that the literature has not explored whether evaluation capacity building assumptions apply easily to a public health partnership; this work is further distinguished by exploring the individual and organizational indicators associated with ECB translation.

Findings imply that federal funding policies should consider providing evaluation capacity building in all initiatives that include public health partnerships, and funders may need to assist public health partnerships in addressing nonevaluation factors that simultaneously limit partnership functioning and evaluation use.

Introduction

Public health partnerships, frequently referred to in the literature as community coalitions or collaboratives, offer cost-effective solutions for implementing health initiatives that raise awareness, mobilize support, and improve health outcomes for a particular disease.1,2 Public health partnerships (PHPs) are defined as “organizational partnerships of two or more organizational bodies, which aim to improve public health outcomes through population health improvement and/or a reduction in health inequalities.” 2 PHPs differ from public-private partnerships, which operate using a business model through formal contracts and whose private partners generally absorb more of the financial risk.3-5 PHPs are highly susceptible to early termination because of short-term funding, competitive relationships with partners, and high turnover of members.6-11 PHPs are unique organizations in that they are valued as important to community health goals but often lack access to data to navigate the challenges associated with public health and partnerships.7,12,13 Recognizing that partnerships are often vulnerable to threats to their existence, some funders and sponsors provide technical assistance to PHPs as a voluntary resource to support their work.12,14

Evaluation capacity building (ECB) can include technical assistance, policy, or funding to increase a setting’s capacity to understand and meaningfully use evaluation on an ongoing basis. 15 ECB may be more beneficial to PHPs than general technical assistance because evaluation data can be used at any stage of development, whereas technical assistance may require a modicum of stability within a PHP.

Gaps in Literature

Until now, much of the literature on evaluation use in PHPs has been based on the assumption that these organizations function only as autonomous systems. However, international and national sponsors often facilitate a symbiotic relationship by mandating that local municipalities and other types of grantees coordinate with a local PHP in order to achieve the aims of a grant. 16 This symbiotic relationship may result in gains or losses for the PHP. 10 Gains for a PHP may include funding or technical assistance such as ECB, even when the PHP is not directly receiving the funding. However, if a grantee is experiencing a challenge in functioning, the associated PHP may also struggle to maintain its existence. In a national evaluative study, US researchers found that loss of federal funding for state-based programs also influenced the functioning of their PHPs, suggesting some level of influence between the two entities. 17 This article seeks to expand the knowledge base by further exploring the quality of influence by identifying ECB-related factors across PHPs supporting state asthma programs.

Methods

Design

Case analyses are often used to examine phenomena that cannot be easily manipulated. 18 The Centers for Disease Control and Prevention’s Asthma and Community Health Branch (ACHB) promoted evaluation use through the provision of ECB to funded state asthma programs. ACHB used its 2009 cooperative agreement as a pivotal opportunity to further strengthen evaluation, partnership, surveillance, and intervention strategies in states and territories funded for asthma control by providing funds and technical support along with prescribed guidance for a longer period. 19 ACHB established a new ECB approach through the creation of a team of evaluation technical advisors who were directed to assist with developing evaluation capacity within the 36 funded grantees. This new ECB approach was reinforced by requiring grantees to hire a half-time evaluator, create a strategic evaluation plan, and use evaluation for asthma program improvements. Later in this period, grantees were specifically asked to evaluate the 3 major components of this funding announcement: partnerships, interventions, and surveillance. At the beginning of the period, ACHB provided specific support for partnership evaluation, such as developing a resource guide and delivering a webinar, with both products outlining best practices for PHPs.

The boundary of this case analysis design was demarcated by the following criteria: (1) the PHP was a state asthma partnership linked to grantees funded under ACHB and (2) the data were submitted by grantees for this cooperative agreement from September 2009 through September 2014. We used a qualitative focus in this case analysis to explore the quality of evaluation use among PHPs and to identify which contextual factors might have affected evaluation use. Evaluation questions included the following:

How were partnership stakeholders involved in the evaluation?

What level of organizational support was available for evaluation?

How were partnership evaluation findings used?

What contextual factors influenced use of evaluation in partnerships?

Stakeholder involvement

Stakeholders are those invested parties who are end users or who are directly involved in the setting or program under evaluation. Partnership members are logical stakeholders since they are collectively vested in the goals of this collaborative organization. Therefore, stakeholders need to define what level of collaboration is expected. 20 Stakeholder involvement in partnership evaluation is a recommended best practice for partnerships and other collaborations. 21 Stakeholder involvement has been described as an individual level outcome of ECB. 22 Stakeholder activities may include planning and designing, collecting data, interpreting results, disseminating findings, and assisting with decision making.22,23 Moreover, involving members in evaluation is a cost-efficient way of developing a ready workforce to implement evaluations.

Organizational support

Organizational support for evaluation refers to human, financial, or material resources available to the partnership that can be used to plan, develop, and use evaluation for the benefit of the partnership.15,24-28 The provision of this type of organizational support has been described as a “necessary outcome to document successful ECB practice.”29,30 In Preskill and Boyle’s evaluation capacity building model, the authors suggest that the sustainability of ECB efforts is dependent on the quality of infrastructure support, for example, leadership. 15 In their conceptual model of public sector partnership evaluation use, Appleton-Dyer, Clinton, Carswell, and McNeil allude to similar expectations of organizational level factors. 25

Use of evaluation findings

An organization’s routinized use of evaluation findings is another potential outcome indicator of ECB.22,30 Rather than delay measurement to assess only impact, evaluation should be used during earlier stages of a partnership’s development to ensure that stages are completed successfully, increasing the likelihood that more advanced stages of partnership development will be reached. 31 In addition to the inherent benefits of having information for decision making, Appleton-Dyer et al suggest that exposure to evaluation data and other stages of the evaluation process can transform how a member perceives evaluation, the topic, or the partnership itself. 25

Quality and relevance of data are important predictors of evaluation use. For PHPs, best practices to improve data quality and relevance include mixed methods, repeated measures, and collecting data on different facets of partnership success. 1 Mixed methods enhance the rigor of partnership evaluation by using diverse data collection strategies to offset the limitations of each type. 32 Partnership theorists recommend the repeated use of evaluation because, as these systems evolve and encounter different opportunities for growth, new data will be needed for each stage of development.20,31 For PHPs in particular, Fran Butterfoss and Vincent Francisco recommend that the PHP evaluation simultaneously examine infrastructure, intervention efforts, and health and setting outcomes. 1 By applying the findings from each of these levels of PHP life, partnership evaluation data may offer greater guidance on achieving PHP sustainability and impact.

Contextual factors

ECB research has revealed a number of conditions that need to be fostered or developed in order for outcomes of ECB to be achieved, such as a supportive organizational infrastructure and the priority given to evaluation in that organization.15,22 In addition to an organization’s characteristics, each PHP is distinguished by its local culture, historical relationships to other organizations, and political environment. Therefore, identifying contextual factors may help inform how ECB should be tailored.

Data Collection

Data were abstracted from completed ACHB Evaluation Capacity Building Checklists and archival records.

Data Sources

Description of grantees

The 36 grantees were awarded funding under an announcement that included asthma programs located in the health departments of 34 states, the District of Columbia, and Puerto Rico, providing representation in all regions of the United States. Many of these grantees were funded under earlier ACHB cooperative agreements in which they were encouraged to evaluate collaboration or their partnerships but without access to the resources available under the new ECB efforts launched under the 2009 funding announcement. The evaluation capacity of grantees also varied, with the professional experience of evaluators ranging from expert to novice.

ACHB Evaluation Capacity Building Checklist

An internal checklist was developed by ACHB evaluation technical advisors as part of routine activities to determine how evaluation was progressing across the grantees. It included questions such as the following: (1) how does the evaluator participate, (2) what goals are most important for the partnership, and (3) how does the partnership determine if a goal has been achieved.

Archival records

Archival records were reviewed to understand the variation in partnerships and to identify which factors may be associated with ECB translation. Archival records included partnership presentations, evaluation technical advisor meeting notes, evaluation materials, and other grantee reporting documents.

Data analyses

The analyses were guided by confirmability and credibility, two commonly referenced qualitative standards. 32 Confirmability refers to an evaluator’s ability to demonstrate that evidence was obtained through systematic, meticulous, and impartial techniques. Confirmability was enhanced through triangulation of data to validate whether findings were consistent across archival records submitted by ACHB grantees.32,33 For qualitative analyses, credibility refers to the soundness of findings, and this quality can be strengthened when bias is minimized through testing assumptions. Negative case analysis is one technique that can be used to determine credibility of findings. 34 Credibility was strengthened by using evaluation technical advisor (ETA) checklists to compare observations of ETAs with reports of evaluation use described in the archival records. The ETA checklists were initially used as a strategy for negative case analysis to assist the researcher in case there were any contradictions or data missing from archival records.32,33 In all cases, data were consistent, but ETA checklists were helpful in adding supplementary data.

Data were analyzed by identifying descriptive themes on stakeholder involvement, organizational support, and findings use. Stakeholder involvement was discerned from evaluation summaries and annual reports. Organizational support was determined from annual reports and ETA checklists. Findings use was explored using descriptive and iterative coding based on grantee reports of changes attributed to partnership evaluations. Descriptive themes of findings use were later quantified into frequencies. For contextual factors, the focus was on predominant themes related to evaluation challenges, so the researcher used an iterative coding strategy organized by language introduced in grantee documents and partnership literature.

Duration

This study includes data submitted by grantees for a cooperative agreement spanning from September 2009 through September 2014. This study began in March 2012 and ended in December 2016.

Location

Although this study involves national data, the study was conducted in Atlanta, Georgia.

Results

Understanding the utility of a partnership evaluation begins with understanding the variation among PHPs. Below we briefly describe our findings on the grantees and their PHPs and then describe how evaluation questions were answered.

Partnership Descriptions

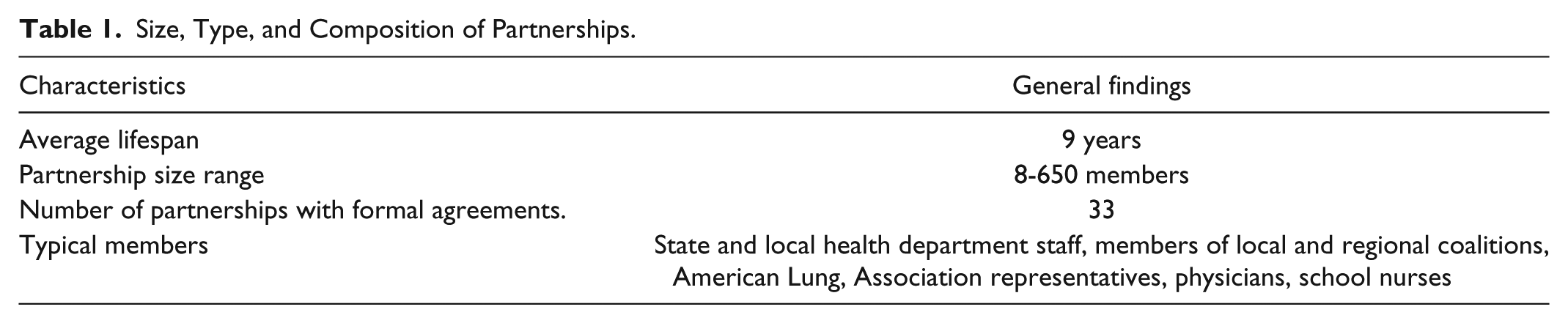

Descriptions of the 36 grantee partnerships are shown in Table 1. Partnership membership size ranged from 8 to 650 individual members. The average lifespan of the PHP was 9 years. Grantees were required to have organizational partners within and outside of their agencies who shared goals supporting asthma control. Partnerships were often directly involved in asthma program work, such as developing a statewide strategy for addressing asthma control, raising awareness of asthma, disseminating asthma surveillance data, and rallying their partnerships in support of the asthma interventions.

Table 1. Size, Type, and Composition of Partnerships.

Most PHPs (n = 32) were funded in some fashion by ACHB grantees but funding amounts varied across years. Among the 36 grantees, 32 reported offering administrative support that included convening partnership meetings, recruiting and engaging partners, or paying for supplies.

Stakeholder Involvement

The members of partnerships are important stakeholders and were generally represented on most evaluation planning teams for the coalitions. Most grantees (n = 28) developed an individual evaluation plan (IEP) in which they described how they planned to engage stakeholders in their partnership evaluations. IEP descriptions also suggested that coalition members would be included in most steps of the evaluation process.

A small share of the 34 evaluation summaries described how partnership members were involved in the later stages of the evaluation, mainly collecting data (n = 4), justifying conclusions (n = 6), and ensuring use and lessons learned (n = 10).

Level of Support

All state grantees were required to maintain at least a half-time evaluator during this funding period, which provided most state PHPs with access to an evaluator. Based on descriptions from the ETA checklists, this evaluator position often served as a resource for the partnership. Even with evaluators accessible to the partnership, the level of access varied across grantees because it was left to the discretion of each grantee.

The annual reports described the existence of evaluation workgroups. A reference to an evaluation workgroup is an important finding because it confirms the creation of an evaluation infrastructure within the partnership. Evaluation-specific workgroups were found for 11 statewide partnerships.

Use of Findings

For this study, partnership evaluations were defined as any assessment activity that collected data to improve the needs, function, or impact of a PHP. In total, 34 grantees reported completing partnership evaluations. However, details on data collection were provided by only 33 grantees. The data collections for evaluating partnerships ranged from a one-time data collection event to recurring data collections, as seen in Table 2. Most grantees (n = 23) opted to collect data once. Eight grantees collected data on multiple occasions involving their partnerships: annually, quarterly, or as needed. Based on evaluation summaries, data collection among grantees included the following activities in order of use: surveys (n = 31), document reviews/data abstractions (n = 10), interviews/focus groups (n = 10), observations (n = 6), and informal feedback (n = 2). About 10 grantees used multiple data sources.
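To make the relative frequency of each data collection activity easier to compare, the counts above can be expressed as shares of grantees. The following is a minimal sketch only: the counts are those reported from the evaluation summaries, while the function and variable names are hypothetical and the use of the 33 grantees that provided data collection details as the denominator is an assumption.

```python
# Counts reported in the evaluation summaries.
# A grantee could use more than one activity, so counts overlap.
METHOD_COUNTS = {
    "surveys": 31,
    "document reviews/data abstractions": 10,
    "interviews/focus groups": 10,
    "observations": 6,
    "informal feedback": 2,
}

# Assumption: the denominator is the 33 grantees that gave data collection details.
GRANTEES_WITH_DETAILS = 33


def method_shares(counts, denominator):
    """Return each activity's share of grantees as a percentage (one decimal)."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return {name: round(100 * n / denominator, 1) for name, n in counts.items()}


if __name__ == "__main__":
    for name, share in method_shares(METHOD_COUNTS, GRANTEES_WITH_DETAILS).items():
        print(f"{name}: {share}%")
```

Because a grantee could report several activities, these shares are not expected to sum to 100%.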

Table 2. Data Collection.

The majority (n = 34) of the grantees detailed how evaluation data were used to monitor progress or inform the development of a partnership. Among the 34 grantees who completed an evaluation, 20 specified the audience they intended to reach or did reach with their findings, which included the PHP, subgroups within the partnership, and even the state asthma program staff. Recommendations from evaluation summary reports included the following:

Offer enrichment content on financial sustainability, emphasizing the importance of community engagement and support in fundraising and project implementation, at future coalition leadership meetings.

It was also noted that some important groups are underrepresented within the coalition, such as persons with asthma, doctors, and caregivers. With this in mind, it was noted that the team should make membership recruitment a priority.

Have the meetings at a free venue or one of the involved institutions. It might be nice to see other hospitals/companies and get a feel for their program.

More opportunities for members of the group to take the lead on things and be more of a presence in order to reflect a true, deep-rooted spirit of collaboration.

Authentically engaging partners—not just individual representatives of partner organizations

[Attain] Adequate support from health department to carry out partnership activities.

Leadership might want to investigate the possibility of conducting future surveys on-line using one of the many free or low-cost survey tools available.

Find a strong community partner who will be the champion . . . perhaps a legislator who has disease.

Butterfoss and Francisco recommend the best practice of examining a PHP at 3 different levels: infrastructure, asthma programs and activities, and changes observed in health condition or setting. 1 In this study, most PHPs (n = 22) used evaluation findings to improve the partnership infrastructure, for example, better leadership or membership engagement. Fewer PHPs (n = 7) used their findings to improve asthma programs and activities. Although 6 PHPs did use findings to improve their linked state asthma program, no grantee reported using PHP evaluation data to assist with improvements in health conditions or settings.

Contextual Factors

Contextual factors were examined because they described the forces that affect partnership evaluation practice beyond the control of the PHP, ACHB (the funder), or the grantee (state asthma program). Themes relevant to evaluation challenges were (1) evaluator vacancies, (2) low participation of members, and (3) major changes in the partnership.

Evaluator vacancy

In total, 20 of the 36 grantees lost an evaluator during the 5-year funding period, in addition to other positions in the state asthma program. State programs had difficulty filling a half-time position, which led to further delays. When grantees could not immediately fill the evaluator vacancy, the burden of evaluation work was left to other asthma program staff, such as the epidemiologist.

Low levels of member participation

All partnerships reported a low level of member participation when comparing the total membership they reported on paper to the number of members who participated in the evaluation. The average response rate for surveys was 25%. Several evaluators reasoned in their evaluation summaries that the response rate should be based on the active members rather than the total membership of the partnership, which would increase their response rate. Grantees attributed members’ low participation to difficulty in retaining partnership members, limited funding for the partnership, the voluntary role of members, and barriers to attending meetings.
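The evaluators' point about the denominator can be illustrated with a short calculation. This is a sketch under stated assumptions: the membership and respondent numbers below are hypothetical and do not come from any grantee's report, and the function name is introduced here for illustration only.

```python
def response_rate(respondents, denominator):
    """Return the survey response rate as a percentage."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    if respondents < 0 or respondents > denominator:
        raise ValueError("respondents must be between 0 and the denominator")
    return 100 * respondents / denominator


# Hypothetical partnership (illustrative numbers only, not study data).
total_members = 200    # everyone listed on the membership roster
active_members = 80    # members who currently participate
respondents = 50       # members who completed the survey

rate_total = response_rate(respondents, total_members)
rate_active = response_rate(respondents, active_members)

print(f"Based on total membership: {rate_total:.1f}%")    # 25.0%
print(f"Based on active membership: {rate_active:.1f}%")  # 62.5%
```

The same number of respondents yields a much higher rate when only active members are counted, which is why the choice of denominator should be stated whenever a response rate is reported.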

When members were not active in the partnerships, they were also not available to participate in evaluations. This contextual factor was often mentioned in annual reports to explain why evaluation planning had stalled or why response rates to surveys and other data collection efforts were low. References to low member participation can be found below:

The lack of responses created a bias by type of organization; all agencies were not represented by the evaluation and therefore other coalition member priorities and future goals may not be reflected in the evaluations results.

There is a need to assess how to keep current partners active and reengage partners who are no longer active.

Of surveyed members, 71.4% (n = 15) indicated that they agree the coalition currently lacks adequate statewide representation. In addition, 55% (n = 11) of members agree that membership lacks influential people from key sectors of the community.

Attendance at Coalition meetings has not increased. The Coalition discussed this issue as part of its review of the Annual Membership Survey. This discussion yielded several options including: using meeting software instead of a teleconference phone to remotely connect Coalition members to meetings.

Major changes in partnerships

Lastly, changes in 7 partnerships contributed to shifts in focus and delays of evaluation projects. Two partnerships reorganized their organizational structure, which ultimately shifted the evaluation focus from measuring partnership outcomes as originally planned to partnership functioning in order to monitor the progress of recent changes. Another partnership evaluation was delayed due to the reorganization of its linked state health department, which influenced the way organizational partners interacted with each other. One PHP created an asthma intervention product, but consistent delays led the evaluator to change focus from the product to the quality of communication and level of understanding among members about their roles. Examples of how partnership functioning influenced the evaluation can be found below:

Barriers faced during the first half of Year 4 with regard to evaluation of the coalition include the fact that there was very little coalition from summer of 2012 through the present. This is largely due to the fact that coalition changed leadership during the summer of 2012, and a couple of key members were on extended medical leave

The partnerships evaluation has been a struggle as it has been difficult to get the leadership to respond to information requests.

Discussion

Evaluation is recognized as an important administrative function for healthy partnerships.33,35,36 Partnership evaluations in public health have been described as underused, lacking rigor, and producing inconclusive findings because many contextual factors plague public health work and partnerships alike.13,21,37 These frequent complications demonstrate how important it is for ACHB and other funders that require the use of PHPs to also provide the ECB resources necessary for partnerships to have sustained access to evaluation data. This article offers positive support for the use of ECB among PHPs. In the following text, we discuss the implications of observing stakeholder involvement, organizational support, use of evaluation findings, and contextual factors as they relate to ACHB’s investment in ECB.

How Were Partnership Stakeholders Involved in the Evaluation?

Engaging stakeholders in evaluation planning is recommended practice in community settings because stakeholder involvement allows PHPs to enhance evaluation with their members’ perspectives and contributes to increased capacity for future use of evaluation.38-41 In this study, the majority of the partnership evaluation plans indicated that partnership members would assist with planning. This level of participation differed in the descriptions of the completed evaluations. Few partnership members appeared to be involved in later stages of evaluation: data collection, interpretation, or dissemination. It was not always clear why there was a reduction in stakeholder involvement from planning to actual implementation of evaluation. However, partnership documents did describe contextual factors that complicated evaluation, such as the attrition of partnership members. Funders who invest in public health should plan for these types of challenges by examining how to prevent such high turnover. As in the case of ACHB, funders often mandate what types of partners should be recruited.16,19 This requirement may prove to be difficult if a particular partner does not collaborate well within the partnership. Sponsors should instead collaborate with their grantees to determine which partners are a more suitable fit. The overall decrease in stakeholder involvement in evaluation across PHPs from planning to implementation offers new understanding of what level of engagement can feasibly be expected from partnership members over time.

What Level of Organizational Support Was Available for Evaluation?

Several PHPs created evaluation workgroups. The establishment of an evaluation workgroup is another concrete example of sustainable evaluation practice because it suggests that partnership members valued evaluation sufficiently to create a forum for these types of activities. Furthermore, it is important to highlight the need for internal support for evaluation because its use may be suspended when an evaluator has to leave a position. Preskill and Boyle stated that, in addition to financial resources, the organization must also invest in personnel with evaluation expertise who can champion ongoing evaluation activity and provide evaluation assistance to staff members as needs arise. 15

Personnel also need adequate time and opportunities to engage in evaluation activities and processes.

PHPs differ from programs in that they generally rely on a volunteer workforce composed of partnership members. The creation of PHP evaluation workgroups suggests that, even with the frequent absence of evaluators, there were people within PHPs who could “champion ongoing evaluation activity and provide evaluation assistance . . . .” 15 This assertion is partially supported by 34 grantees reporting that they completed partnership evaluations despite the majority (n = 20) of them having an evaluator vacancy.

Appleton-Dyer et al also suggest in their model that organizational level behavior toward evaluation is important to evaluation use in PHPs. 25 According to Appleton-Dyer et al, evaluation use is influenced by different characteristics of a partnership, including its structure, operations, and active commitment and readiness for evaluation. 25 Appleton-Dyer et al’s organizational indicators also appear to be consistent with the organizational outcomes that Labin et al allude to in their ECB practice model.22,25 According to Labin et al’s model, the delivery of ECB has the potential to create organizational level changes in evaluation practice such as “collaborative learning; cooperation, teams, risk-taking, participatory decision making, peer learning.” 22 Although a direct causal relationship cannot be established between ACHB ECB and PHP evaluation workgroups, it is promising to discover that at least 11 PHPs were able to maintain an internal organizational mechanism for evaluation despite a high attrition rate of evaluators. Funders and PHPs committed to sustaining evaluation use should invest in the creation of evaluation workgroups as a way to maintain access to evaluation data and to prevent the challenges associated with a departing evaluator.

How Were Partnership Evaluation Findings Used?

With this question, we sought to explore whether ECB investment was associated with findings use. Use of evaluation findings signals an appreciation of the evaluation process and indicates that the end user was able to use the data for decision making. Findings use may not always occur when PHPs and other organizations struggle with factors that threaten their existence. For a small number of grantees, partnership evaluation findings were even used to improve the state health program, which offers some support for how symbiotic PHPs may be with local public health initiatives.

Although evaluations of the PHPs were an elective activity, the majority of grantees were able to complete a partnership evaluation, which speaks to the possible influence of ACHB’s ECB support. The frequency of completed PHP evaluations among ACHB grantees suggests that ongoing ECB may have incentivized the grantees to act on recommendations to complete partnership evaluations. Evaluation use in partnerships and other settings has also been attributed to a funder’s support of evaluation.25,42 The current study suggests that even when ECB support is indirect through a state health program, PHPs may still be affected.

It is noteworthy that even with grantees deciding evaluation foci, there was evidence that partnership evaluations included methodological best practices for this type of organization, such as enhancing rigor or collecting data at different times and on different facets of PHPs. Partnerships are often faulted for lacking rigor in their evaluations, yet a third of the grantees managed to include mixed methods in their data collection, a recommended strategy for strengthening evaluation findings.43,44 Incorporating a diversity of data sources and data collection strategies into one evaluation is costly for any organization, and perhaps more so for an organization that did not always have access to an evaluator. This level of commitment may also imply that the grantees and their PHPs wanted to improve the accuracy of their findings. Although only 8 states reported collecting data more than once, this finding suggests that a few PHPs had multiple opportunities to improve either their structure or their asthma program work. With the many threats to PHPs, evaluation should be ongoing even when the partnership is also able to demonstrate outcomes. 45

Findings use was further examined by identifying whether PHPs were able to apply findings at the 3 levels of partnership evaluation proposed by Butterfoss and Francisco. 1 At least half of the grantees used data to improve partnership infrastructure, a predictor of partnership sustainability.1,31,46 Evaluation data for 7 PHPs were used to improve the asthma interventions, which is promising since the creation of an asthma intervention is a much more proximal goal to achieving health impact than partnership infrastructure.

Six PHPs were able to apply findings only to improve the functioning of the grantee rather than to the ever-elusive changes in health outcomes or community settings. This discovery may indicate that health departments are a more realistic setting in which to measure PHP influence when the PHP is a mandated component for the grantee, in our case, the state asthma program. Moreover, few partners indicated that they were able to measure impact, which may be related to contextual factors that interrupted evaluation.

Overall, the majority of grantees indicated that findings were used to make improvements of some kind, indicating instrumental use. These types of best practices could be more strongly encouraged if evaluation strategies were required and prescribed in detail by the funder and other supportive backers.47 Funders like ACHB may have to be more prescriptive about the types of evaluation, especially if they would like to collect evidence on the level of impact that a PHP provides toward a particular health issue.

What Contextual Factors Influenced Use of Evaluation in Partnerships?

Lastly, we examined emerging contextual factors to further explore how to improve future use of ECB in support of PHPs. Evaluator vacancy, low levels of member participation, and changes in partnership were commonly cited by grantees as factors that interfered with the execution of partnership evaluations. Identifying these factors is important for funders that also serve as ECB providers to their grantees. It is important to stay informed of contextual factors that simultaneously affect evaluation and public health programming.48 Contextual data should be used to guide future funding opportunities in order to create conditions that would give a PHP greater opportunity for evaluation use.

Staff turnover is common in state health agencies.49 The evaluator vacancies experienced by many ACHB grantees were one reason why PHPs reported limited access to a competent evaluator. High evaluator turnover did not, however, prevent the high frequency of completed partnership evaluations. For some states, the existence of evaluator workgroups may have offset the absence of an evaluator.

Based on the contextual factors identified in this study, ECB strategies for PHPs may need to be tailored by investing in strategies to retain and engage members, adjusting hiring strategies to retain competent evaluators, and demonstrating how to use evaluation when there are major changes in partnership.

Limitations

Grantees were not required to design or implement a partnership evaluation at all, but ACHB encouraged partnership evaluation as a strategy to develop the local public health infrastructure to improve asthma control. Direct interaction with grantees during this analysis might have identified other factors or outcomes related to evaluation capacity building.

Conclusion

This study is distinguished by exploring the level of evaluation use among PHPs after a funder’s broadened investment in ECB. Findings suggest that ACHB’s expanded use of ECB may be associated with the involvement of stakeholders in evaluation and the creation of evaluation workgroups within PHPs. The evidence of ECB outcomes indicates that ACHB’s broadened ECB investment may have been beneficial to PHPs and could inform future funding policies. In addition to indicators of ECB outcomes, PHP evaluations included best practices such as stakeholder involvement, mixed methods data collection design, and inclusion of multiple levels of partnership evaluation. Future work will examine the quality of evaluation use with ECB tailored for PHPs.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The data were collected under a grant from CDC.