Abstract

The Massachusetts health care reform was expected to reduce the financial burden of medical care, but literature exploring this effect is limited. In this study, we use hospital financial information and a panel data difference-in-difference model to assess the impact of the Massachusetts health care reform on unpaid medical bills. We find that the reform reduced the financial burden for patients, reflected by a 26 percent decrease in hospital bad debt. The effect was more pronounced among safety-net hospitals, indicating a larger benefit for the most vulnerable population.

Massachusetts enacted health care reform legislation in 2006 to move the state toward universal coverage. The reform made insurance coverage mandatory for adults, expanded public coverage through MassHealth and Commonwealth Care, created an insurance exchange for individuals and small businesses, and required larger employers to provide private insurance (McDonough et al. 2008). A growing body of literature has reported the reform’s impact on different stakeholders (Cozad 2012; Gettens et al. 2011; Kenney, Long, and Luque 2010; Kolstad and Kowalski 2010; Ku et al. 2011; Long, Stockley, and Yemane 2009; Miller 2012a, 2012c; Pande et al. 2011). However, studies on the effects of the reform on patients’ ability to pay medical bills are limited.

The literature assessing the impact of Massachusetts health care reform on the financial burden of medical care has used information from either patient surveys or personal bankruptcy files. For example, Long (2008b) used the Massachusetts Health Reform Survey to show an initial reduction of 24 percent in the number of residents reporting problems paying medical bills. However, this reduction vanished and was not statistically significant in the following years (Long, Stockley, and Dahlen 2012). Rather than ineffectiveness of the reform, the result of this pre–post study could be explained by the negative effect of the economic recession of 2008–2009 on the financial burden of households nationwide. Without a control group, the two effects cannot be disentangled. Using personal bankruptcy files, Miller (2012b) estimated the effect of the reform on bankruptcy rates through a quasi-experimental design. The author found that the reform reduced personal bankruptcy by about 12 percent, a high but plausible effect considering that insurance expansion was concentrated in low-income households (the most vulnerable to financial distress). However, although personal bankruptcy can be triggered by medical debt, it captures only extreme cases of unpaid medical bills. According to Domowitz and Sartain (1999), the probability of filing for bankruptcy is only 2 percent for households with medical debt below 2 percent of income, while the same probability is 58 percent for households with higher medical debt. Moreover, medical bills are only one of many factors that contribute to bankruptcy. Because any liability is equally likely to push households into bankruptcy (Dranove and Millenson 2006), bankruptcy rates are a limited measure of the burden of medical bills: using them requires controlling for multiple factors that are in many cases unobserved.

In this study, we take an indirect approach to assess unpaid medical bills. Rather than surveying patients about their financial condition or using an extreme event such as personal bankruptcy, we use hospital financial statements to obtain information on patients’ unpaid medical bills. Because hospitals record the medical bills that are expected to be uncollected as allowances for bad debt, this account reflects the financial burden of medical care for patients. We use a quasi-experimental design that tracks hospitals in Massachusetts and control states before and after the Massachusetts health care reform. We obtain the treatment effect or causal connection between the reform and unpaid medical bills by means of a panel data difference-in-difference (DID) estimator, which allows controlling for unobserved hospital heterogeneity.

The effect of the reform on unpaid medical bills depends on how subsidies for uncompensated care shifted from hospitals to individuals. In Massachusetts, the Uncompensated Care Pool (UCP) partially reimbursed hospitals for charity care and emergency room bad debt provided to uninsured low-income patients. It represented an important source of subsidies to reduce the financial burden of medical care of the uninsured. The reform replaced the UCP with the Health Safety Net office, reducing funding for uncompensated care and making the requirements for hospitals to request funds more stringent. The goal was to shift those funds to finance the expansion of the Medicaid program and the subsidies to help low- and moderate-income residents purchase insurance. While reductions in uncompensated care funds increase the financial burden of medical care, the expansion of insurance coverage decreases it.

We expect the overall effect of the health reform to reduce unpaid medical bills due to the significant gains in insurance coverage. There is strong evidence that the reform reduced the uninsurance rate in Massachusetts by at least 50 percent among nonelderly adults and children. This reduction was higher for the low-income population (Kenney, Long, and Luque 2010; Long, Stockley, and Yemane 2009; Pande et al. 2011). Furthermore, there is no conclusive evidence of increased costs and cost sharing after the reform, which could have affected patients’ financial burden. While a body of the literature found that the reform increased health care costs (Cozad 2012; Holahan and Blumberg 2009; Long, Stockley, and Dahlen 2012), other reports found either an insignificant effect on costs (Kolstad and Kowalski 2010) or potential cost savings through shifting from emergency care to physician’s office use (Miller 2012a). In terms of cost sharing, Cogan, Hubbard, and Kessler (2010) found that the reform increased employer-sponsored insurance premiums. This may have forced many employers to reduce the quality of the insurance plans, leaving employees and their families more exposed to costs through higher deductibles, co-payments, or coinsurance (Massachusetts Division of Health Care Finance and Policy 2011). However, the evidence on underinsurance is also mixed: a pre–post study showed that the share of insured adults who were underinsured dropped by almost 2 percentage points in the first year after the reform (Long 2008a).

We also expect that the effect on unpaid medical bills differed based on access to uncompensated care subsidies before the reform. On one hand, the group of uninsured individuals who did not qualify for uncompensated care was likely to transfer most of the financial risk to third party payers after getting insurance with the reform. On the other hand, the group of uninsured individuals who qualified for uncompensated care was likely to remain financially protected after the reform by either the Health Safety Net or a third party payer if they became insured. However, the reduction in unpaid medical bills for this latter group depends on how fast safety-net funds shrank compared with gains in health insurance. To explore the differences in the impact of the reform between the two groups, we look at hospitals’ safety-net status and estimate separate treatment effects for safety-net and non-safety-net hospitals.

Study Method

To assess the impact of Massachusetts’ health care reform on hospital bad debt, we consider the reform a natural experiment. The treatment group is composed of hospitals in Massachusetts, and the control group is composed of hospitals in states that did not implement health care reform. We compare bad debt before and after the reform for both treatment and control groups. We use a panel data DID approach that allows us to obtain the treatment effect or causal connection between the reform and bad debt (Cameron and Trivedi 2005). The DID estimator has also been used to assess the impact of Massachusetts’ health care reform on individual coverage, employer-based insurance premiums, health outcomes, hospital utilization, and hospital input decisions (Cogan, Hubbard, and Kessler 2010; Cozad 2012; Kenney, Long, and Luque 2010; Kolstad and Kowalski 2010; Long, Stockley, and Yemane 2009; Miller 2012c). However, with the exception of Cozad (2012), data have been limited to pooled cross-section surveys. In this study, outcomes are observed for the same hospitals over time. This allows us to estimate a hospital-level panel data model with fixed effects that control for unobserved hospital heterogeneity.
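The logic of a fixed-effects DID estimator can be illustrated on a small synthetic panel. The sketch below is not the study's estimation code: the hospital names, the effect sizes, and the helper functions are all hypothetical, the panel is balanced, and there are no covariates or noise. It only shows that removing hospital and year means isolates the treatment coefficient.

```python
from collections import defaultdict

# Hypothetical balanced toy panel: 2 Massachusetts and 2 control hospitals, 2004-2009.
YEARS = list(range(2004, 2010))
HOSPITALS = {"MA-1": True, "MA-2": True, "CT-1": False, "WA-1": False}  # name -> in MA?
TRUE_EFFECT = -0.26  # assumed log-point effect used to build the toy data


def build_panel():
    """Return rows of (hospital, year, treated, log_bad_debt) with no noise."""
    rows = []
    for idx, (hospital, in_ma) in enumerate(HOSPITALS.items()):
        for year in YEARS:
            treated = 1.0 if (in_ma and year >= 2006) else 0.0
            hospital_effect = -2.0 + 0.3 * idx      # time-invariant heterogeneity
            year_effect = 0.05 * (year - 2004)      # shock common to all hospitals
            log_y = hospital_effect + year_effect + TRUE_EFFECT * treated
            rows.append((hospital, year, treated, log_y))
    return rows


def two_way_demean(rows, col):
    """Subtract hospital and year means and add back the grand mean.

    This within transformation removes both sets of fixed effects exactly
    only when the panel is balanced, as it is here.
    """
    by_hospital, by_year = defaultdict(list), defaultdict(list)
    for row in rows:
        by_hospital[row[0]].append(row[col])
        by_year[row[1]].append(row[col])

    def mean(values):
        return sum(values) / len(values)

    grand = mean([row[col] for row in rows])
    return [
        row[col] - mean(by_hospital[row[0]]) - mean(by_year[row[1]]) + grand
        for row in rows
    ]


def did_fixed_effects(rows):
    """OLS slope of demeaned outcome on demeaned treatment indicator."""
    t = two_way_demean(rows, 2)
    y = two_way_demean(rows, 3)
    return sum(a * b for a, b in zip(t, y)) / sum(a * a for a in t)


beta_hat = did_fixed_effects(build_panel())
print(f"estimated treatment effect: {beta_hat:.4f}")
```

Because the toy data contain no noise, the estimate reproduces the assumed effect; with real, unbalanced data and covariates, a regression package would be the appropriate tool.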

Equation 1 describes the panel data DID specification used in the analysis. The hospital-level panel data are estimated by a fixed-effect estimator that allows identification of the treatment effect controlling for permanent unobserved hospital heterogeneity (Cameron and Trivedi 2005).

Y_it is the bad debt ratio for hospital i in year t (t = 2004, ..., 2009). We transform bad debt in logs to interpret treatment effects as a percentage change. T_it is the binary treatment indicator that equals 1 for all hospitals in Massachusetts after the reform. The treatment effect (effect of the reform on bad debt) is captured by β_1. X_it includes three hospital-level variables and two county-level variables. Hospital-level variables include total assets (in millions, adjusted for inflation) and number of beds (in hundreds) to control for hospital size, and average length of stay (number of total patient days divided by total discharges) to control for potential gains in efficiency after the reform. Cozad (2012) found a significant reduction in patients’ average length of stay after the reform that could potentially influence hospital bad debt. County-level variables include unemployment rate and personal income (in thousands, adjusted for inflation) to control for changes in economic activity in the geographic area where the hospital is located. The hospital-specific fixed effect (θ_i) removes any time-invariant hospital heterogeneity that may bias the treatment effect. The time-specific fixed effects (θ_t) account for aggregate time effects. The time-specific fixed effects and the county economic activity indicators are particularly relevant because they control for the effect of the economic recession of 2008–2009. The panel data DID model is also estimated separately for safety-net and non-safety-net hospitals, according to the three safety-net definitions described below.

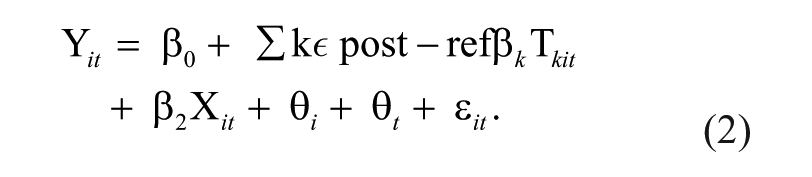
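Equation 1 can be written out from the definitions given in the text. The LaTeX below is a reconstruction under stated assumptions, not a transcription of the published equation: the intercept \beta_0, the coefficient vector \gamma on X_it, and the error term \varepsilon_{it} are labels introduced here for illustration, while Y_it, T_it, \beta_1, \theta_i, and \theta_t follow the text.

```latex
\ln Y_{it} = \beta_0 + \beta_1 T_{it} + X_{it}'\gamma + \theta_i + \theta_t + \varepsilon_{it},
\qquad t = 2004, \ldots, 2009
```

In this form, \beta_1 is the DID treatment effect: the change in log bad debt for Massachusetts hospitals after the reform, net of hospital effects, common year effects, and the covariates.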

A second specification is estimated to assess the effect of the reform over time. In Equation 2, the treatment effect β_k is estimated for each year k that belongs to the postreform period. Because the expansion of insurance coverage was gradual, we also expect a gradual impact of the reform on unpaid medical bills.
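One way to write Equation 2, consistent with the description above, is sketched here in LaTeX. This is a hedged reconstruction: the Massachusetts indicator MA_i, the intercept \beta_0, the coefficient vector \gamma, and the error term \varepsilon_{it} are assumed notation, and the summation range uses the postreform years 2006 to 2009 as defined in the text.

```latex
\ln Y_{it} = \beta_0 + \sum_{k=2006}^{2009} \beta_k \,\bigl(MA_i \times \mathbf{1}[t = k]\bigr)
           + X_{it}'\gamma + \theta_i + \theta_t + \varepsilon_{it}
```

Each \beta_k is then the treatment effect in postreform year k, so a gradual impact would appear as coefficients that grow in magnitude with k.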

Our panel data DID specifications are subject to serial correlation that may understate the standard error of the treatment effect. We account for this issue and correct standard errors using a block bootstrap method by state (Bertrand, Duflo, and Mullainathan 2004). Following Kolstad and Kowalski (2010), bootstrapping is based on a large number of replications (2,000) to account for the fact that Massachusetts is the only treatment state compared to a larger number of comparison states.
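The idea of a block bootstrap by state can be sketched as follows. This is an illustration only: the toy data are invented, a simple two-by-two DID of means stands in for the fixed-effects regression, and redrawing any replicate that lacks treated or control observations is an assumption of this sketch rather than a procedure described in the text.

```python
import random
import statistics


def mean(values):
    # Plain mean; raises ZeroDivisionError on an empty list, which the bootstrap relies on.
    return sum(values) / len(values)


def did_of_means(sample):
    """Simple 2x2 DID on rows of (in_ma, post, log_bad_debt)."""
    def cell(ma, post):
        return mean([y for m, p, y in sample if m == ma and p == post])
    return (cell(1, 1) - cell(1, 0)) - (cell(0, 1) - cell(0, 0))


def block_bootstrap_se(data_by_state, estimator, n_reps=2000, seed=0):
    """Resample whole states with replacement and return the standard
    deviation of the re-estimated statistic across replicates."""
    rng = random.Random(seed)
    states = sorted(data_by_state)
    estimates = []
    while len(estimates) < n_reps:
        drawn = [rng.choice(states) for _ in states]
        # All observations from a drawn state stay together (the "block").
        sample = [row for state in drawn for row in data_by_state[state]]
        try:
            estimates.append(estimator(sample))
        except ZeroDivisionError:
            continue  # assumption: redraw if a cell is empty (e.g. no MA rows)
    return statistics.stdev(estimates)


# Hypothetical toy data: state -> rows of (in_ma, post, log_bad_debt).
TOY = {
    "MA": [(1, 0, -1.90), (1, 0, -1.95), (1, 1, -2.20), (1, 1, -2.25)],
    "CT": [(0, 0, -2.00), (0, 1, -1.98)],
    "MN": [(0, 0, -2.10), (0, 1, -2.05)],
    "WA": [(0, 0, -1.80), (0, 1, -1.85)],
}

all_rows = [row for rows in TOY.values() for row in rows]
point_estimate = did_of_means(all_rows)
se = block_bootstrap_se(TOY, did_of_means, n_reps=500)
print(f"DID estimate {point_estimate:.3f}, block bootstrap SE {se:.3f}")
```

Resampling at the state level keeps each state's serially correlated observations together, which is the reason for bootstrapping blocks rather than individual hospital-years.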

Data Source

The primary source of data in this analysis is the Centers for Medicare and Medicaid Services (CMS) hospital cost report. CMS cost reports contain standardized information filed by hospitals participating in the Medicare program. Use of CMS cost reports enables us to avoid a sample selection problem because Medicare participation can be safely assumed to be independent of the outcome (doubtful debt) and the intervention: Massachusetts’ health care reform did not affect the federal Medicare program. From the CMS cost reports, we obtain information on Medicaid and total discharges, days of patient care, number of beds, geographic location, and financial status.

For the selection of the intervention and control groups, we consider all hospitals that filed the CMS cost report in years 2004–2009. All hospitals located in Massachusetts form the intervention group. Of a total of 101 hospitals that reported to CMS, 92 percent filed the report for the six consecutive years. For the control group, we follow Kenney et al. (Kenney, Long, and Luque 2010) and include all hospitals in the New England census division as well as Minnesota and Washington State, which have similar demographics and did not implement major health care changes. Of a total of 374 hospitals in the control group, 97 percent reported to CMS for the six consecutive years of analysis.

We define the prereform period as 2004–2005 and the postreform period as 2006–2009. Although the reform was signed in April 2006, the key policies affecting coverage were implemented between October 2006 and October 2007. Because the reform was enacted in the first half of 2006, we include 2006 in the postreform period. To check whether the treatment effect was sensitive to this inclusion, we also estimated a model that excludes 2006 and 2007 from the analysis.

Definition of Bad Debt

Information on hospital bad debt is quite limited in terms of availability and accuracy. The main source of data is a hospital’s financial statement, where bad debt is reported in the balance sheet as an allowance for doubtful patient accounts and in the income statement as an expense. Although audited financial statements are the most reliable source of accounting information, they are not publicly available for most states. That makes worksheet G of the CMS cost report the most widely used national database of hospital financial accounting, despite its well-documented limitations (Kane and Magnus 2001). In particular, bad debt expense is questionable because various accounting standards place bad debt in either the total operating expenses or the net patient revenue. For that reason, our primary indicator for bad debt comes from the balance sheet. We define bad debt as the ratio of “allowances for uncollectible notes and accounts receivable” to “(gross) notes, accounts, and other receivables.” We found that a significant number of hospitals (17.3 percent in our sample) still reported zero allowances for bad debt in all periods because they netted the accounts receivable. We omit those cases.

For further robustness, we also consider a second definition of bad debt using the income statement and exploiting the fact that worksheet G-2 allows adding an operating expense item not listed in the income statement. We found that many hospitals use worksheet G-2 to report bad debt expenses. In those cases, we assume that the hospital did not include bad debt in the net patient revenue, and we define bad debt as the ratio of “bad debt expense” (reported in worksheet G-2) to “total patient revenues” (reported in worksheet G-3). In our sample, 76.3 percent of hospitals report bad debt expenses in one or all periods.

The lack of accuracy of hospital bad debt data may bias our results in two ways. First, definitions of bad debt based on CMS cost reports are subject to measurement error. However, measurement error in continuous dependent variables does not bias linear regression estimates unless the measurement error is correlated with the policy variable (Hausman 2001). Because CMS cost files are neither reported to nor regulated by state agencies, it can be safely assumed that the Massachusetts health care reform did not affect measurement errors in hospital bad debt data. We confirm this assumption by testing the reliability of the bad debt measure using coefficients of variation and regression-based tests (Atkinson and Nevill 1998). A second limitation is that a significant percentage of hospitals reported bad debt in some but not all years, making our panel data unbalanced. If missing values of bad debt were correlated with the policy variable, our results would be biased. We use tests by Verbeek and Nijman and by Wooldridge (Vella 1998), and find no evidence of selectivity bias.

Definition of Safety Net

Definition of safety-net status is challenging because of the lack of consensus in the literature (Zuckerman et al. 2001). The Institute of Medicine (IOM) defines safety-net providers as those with a substantial share of their case mix in uninsured, Medicaid, and other vulnerable patients. However, not all identification strategies are consistent with the IOM definition. Zwanziger and Khan (2008) found that strategies based on the hospital commitment to vulnerable population, such as public ownership or teaching status, failed to identify safety-net status. The authors suggested the use of measures of actual behavior, such as the uncompensated care burden, high proportion of Medicaid patients, and service to a low socioeconomic status population.

We use the last two approaches: (1) high proportion of Medicaid patients and (2) service to a low socioeconomic status population because the outcome variable (bad debt) is included in the definition of uncompensated care. For the first measure, we identify a hospital as safety net if its ratio of Medicaid patients to total patients is above the median observed in the prereform period. For the second measure, we consider two socioeconomic characteristics of the hospital’s service area: (1) percentage of minority residents and (2) percentage of households below poverty line. In both cases, we identify a hospital as safety net if percentages are above the median observed in the prereform period.
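The median-based classification rule can be sketched in a few lines. The hospital identifiers and shares below are made up, and the sketch assumes the median is taken over one pooled sample of hospitals; the text does not say whether the median is computed over the pooled sample or within groups.

```python
import statistics


def classify_safety_net(prereform_share):
    """Flag a hospital as safety net if its prereform share is strictly
    above the median across all hospitals in the (pooled) sample."""
    cutoff = statistics.median(prereform_share.values())
    return {hospital: share > cutoff for hospital, share in prereform_share.items()}


# Hypothetical prereform ratios of Medicaid patients to total patients.
medicaid_share = {"H1": 0.10, "H2": 0.25, "H3": 0.18, "H4": 0.40, "H5": 0.05}

flags = classify_safety_net(medicaid_share)
for hospital, is_safety_net in flags.items():
    print(hospital, "safety net" if is_safety_net else "non-safety net")
```

The same function applies to the two service-area measures (percentage of minority residents and percentage of households below the poverty line) by passing those prereform percentages instead.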

Results

Table 1 provides summary statistics of Massachusetts and comparison states, before and after the health reform. It reports averages and standard errors of our two definitions of bad debt, as well as control variables and prereform variables used to identify safety-net hospitals. Table 1 shows that the average doubtful debt remained unchanged in comparison states after the reform, while it fell in Massachusetts for both definitions of hospital bad debt. A test for differences for hospitals in Massachusetts is reported in Table 2. Simple pre–post reform differences, expressed as percentage changes with respect to the prereform period, show an overall reduction in bad debt of 10.4 percent, significant at the 10 percent level. When compared by safety-net status, most differences are not statistically significant. Two factors may bias pre–post differences. First, the postreform period was affected by the economic recession of 2008–2009, which may have increased bad debt. The treatment effect of the reform and the effect of the economic recession cannot be disentangled with the pre–post study design. Second, the comparison of pre–post averages does not correct for potential unobserved hospital heterogeneity, which may bias the result. We overcome both limitations with the panel data DID model.

Summary Statistics of Massachusetts and Comparison States, Before and After State Health Reform.

Source. CMS hospital cost reports 2004–2009.

Note. Standard errors in parentheses. Definitions of the safety-net classification are provided in the text. Prereform period is 2004–2005; postreform period is 2006–2009. AR = Accounts Receivable, CMS = Centers for Medicare and Medicaid Services.

Changes in Patients’ Doubtful Debt in Hospitals in Massachusetts, Before and After State Health Care Reform.

Source. CMS hospital cost reports 2004–2009.

Note. Patients’ bad debt is defined here as hospital’s allowances for bad debt/gross account receivables. Definitions of the safety-net classification are provided in the text. Prereform period is 2004–2005; postreform period is 2006–2009. CMS = Centers for Medicare and Medicaid Services.

Difference expressed as a percentage change relative to the prereform period.

*Significant at 10 percent. **Significant at 5 percent. ***Significant at 1 percent.

Table 3 presents treatment effect estimates from the panel data DID model. Full regression results are provided in Table A1 in the appendix. The treatment effect estimates confirm the impact of health care reform on patients’ bad debt reduction. In contrast with simple average differences, the impact for all hospitals is statistically significant. The reform reduced patients’ bad debt by roughly 26 percent.
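Because bad debt enters in logs, the coefficient is a change in log points, and reading it directly as a percentage is an approximation. The short sketch below compares that reading with the exact proportional change, exp(β) − 1, using the core-model coefficient of −0.257 reported in Table A2; the function name is illustrative.

```python
import math


def exact_percent_change(log_point_effect):
    """Exact proportional change implied by a coefficient on a logged outcome."""
    return math.expm1(log_point_effect) * 100.0


core_coefficient = -0.257  # core model, all hospitals (Table A2, column I)

approximate = core_coefficient * 100.0          # log points read as percent
exact = exact_percent_change(core_coefficient)  # exp(beta) - 1, in percent

print(f"log-point reading: {approximate:.1f} percent")
print(f"exact conversion:  {exact:.1f} percent")
```

The two readings are close for small coefficients and diverge as the coefficient grows in magnitude, which is worth keeping in mind for the larger safety-net estimates.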

Difference-in-Differences Estimates of the Effect of Health Care Reform on Doubtful Debt, Overall, and by Safety-Net Classification of Hospitals.

Source. CMS hospital cost reports 2004–2009.

Note. DID model is estimated by panel data fixed effects, with block bootstrap standard errors. The model includes time-specific fixed effects, controlling for hospital size (number of beds and total assets), length of stay, and economic activity at county level (personal income and unemployment rate). Patients’ bad debt is defined as hospital’s allowances for bad debt/gross accounts receivable. Definitions of the safety-net classification are provided in the text. Prereform period is 2004–2005; postreform period is 2006–2009. Control group consists of hospitals in the New England states, Minnesota, and Washington state. DID = difference-in-difference.

Treatment effects are interpreted as percentage changes since bad debt enters in logs.

*Significant at 10 percent. **Significant at 5 percent. ***Significant at 1 percent.

With regard to safety-net classification, the results show that the impact of the reform was larger among safety-net hospitals. This result is consistent across the three definitions of safety-net hospitals. While the reform reduced patients’ bad debt by a range of 12 to 20 percent in non-safety-net hospitals, the reduction ranged from 28 to 37 percent in safety-net facilities.

Finally, Table 4 presents the results of our second specification (Equation 2). Overall, the estimated reduction in hospital doubtful debt grew gradually, from 18.5 percent by 2006 to 32.3 percent by 2009. For safety-net hospitals, the effect was also gradual, but most of it occurred in the earlier years of the reform. Conversely, for non-safety-net hospitals, the effect was negligible in the first years of the reform and larger but mostly not statistically significant in subsequent years.

Difference-in-Differences Estimates of the Effect of Health Care Reform on Doubtful Debt, by Year and by Safety-Net Classification of Hospitals.

Source. CMS hospital cost reports 2004–2009.

Note. DID model is estimated by panel data fixed effects, with block bootstrap standard errors. The model includes time-specific fixed effects, controlling for hospital size (number of beds and total assets), length of stay, and economic activity at county level (personal income and unemployment rate). Patients’ bad debt is defined as hospital’s allowances for bad debt/gross accounts receivable. Definitions of the safety-net classification are provided in the text. Prereform period is 2004–2005; postreform period is 2006–2009. Control group consists of hospitals in the New England states, Minnesota, and Washington state. CMS = Centers for Medicare and Medicaid Services; DID = difference-in-difference.

Treatment effects are interpreted as percentage changes since bad debt enters in logs.

*Significant at 10 percent. **Significant at 5 percent. ***Significant at 1 percent.

Robustness Check

We assess the sensitivity of the treatment effect estimates to two alternative specifications: (1) the second definition of bad debt (bad debt expenses to total revenues) and (2) the alternate definition of pre- and postreform period (2004–2005 and 2007–2008, respectively). Table A2 in the appendix reports the treatment effect estimates for the core model and the additional specifications in columns I, II, and III, respectively. When we compare results for all hospitals, the treatment effects are statistically significant in all specifications. Reductions in doubtful debt range from 26 to 32 percent.

The treatment effect in safety-net hospitals is mostly significant, but it shows more variability across specifications and definitions of safety-net status, ranging from 14 to 45 percent. For non-safety-net hospitals, the reductions in patients’ bad debt are generally smaller and nonsignificant. Although not conclusive, these results suggest that the health care reform resulted in greater reduction of patients’ bad debt in safety-net hospitals.

We also check for potential anticipatory effects that may have reduced hospital bad debt before the enactment of the health care reform. If individuals purchased insurance earlier than 2006 in response to the debate over the reform, our treatment effects could be biased by the magnitude of the anticipatory effect. To check this, we use a specification that looks for effects within the prereform period, between 2004 and 2005. The estimated treatment effects are presented in column IV of Table A2 in the appendix. None of the estimates is statistically significant at the 5 percent level, alleviating concerns regarding anticipatory effects.

Discussion

The findings of this study indicate that the health care reform in Massachusetts reduced unpaid medical bills by roughly 26 percent. Our result is robust to different definitions of bad debt and pre–post reform periods. Our finding is also consistent with results from the Massachusetts health reform survey that showed a reduction of 24 percent in the number of residents reporting problems paying medical bills (Long 2008b) before the economic recession.

Our results suggest that the health care reform had a protective effect for Massachusetts patients during the economic recession. Previous studies based on pre–post reform evaluations found a statistically nonsignificant increase in the financial burden of medical bills when the 2008–2009 economic recession was included (Himmelstein, Thorne, and Woolhandler 2011; Long, Stockley, and Dahlen 2012). However, because the economic recession affected all states, pre–post evaluations fail to control for common time effects. Our panel data DID estimation strategy was able to control for the effect of the economic recession, suggesting that the effects of the recession on unpaid medical bills would have been worse without the health care reform.

Since the Massachusetts health reform reduced the uninsured rate by at least 5 percentage points (Kenney, Long, and Luque 2010; Long, Stockley, and Yemane 2009; Pande et al. 2011), our estimates suggest that a 1 percentage point reduction in the uninsured rate reduces unpaid medical bills by about 5 percent. This point estimate is large but not implausible because bad debt is concentrated in low- and middle-income households (Weissman, Dryfoos, and London 1999), that is, those with the highest expansion in insurance coverage. Other studies have also found large impacts in the context of personal bankruptcy. Miller (2012b) found that the 5 percentage point reduction in the uninsured rate due to the Massachusetts health reform reduced personal bankruptcy by about 12 percent. Gross and Notowidigdo (2011) studied the impact of Medicaid eligibility expansions on bankruptcies. They found that a 10 percentage point increase in Medicaid eligibility reduced bankruptcies by 8.1 percent. Compared with doubtful debt, personal bankruptcy is an extreme event since not all households with unpaid medical debt will file for bankruptcy. For instance, Domowitz and Sartain (1999) estimate that households with high medical debt have a 58 percent probability of filing for bankruptcy, while the probability is only 2 percent for households with low medical debt. All these numbers together imply that the impact of insurance expansion on bad debt could be between 1.7 and 50 times higher than the impact on bankruptcy, which is consistent with the estimates presented in this study.
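The arithmetic behind this comparison can be laid out explicitly. The sketch below uses only figures quoted in the text; reading the 1.7 and 50 bounds as the inverses of the 58 percent and 2 percent filing probabilities is an interpretation of how the bounds arise, and the variable names are illustrative.

```python
# Effects per percentage point, from the figures quoted in the text.
bad_debt_per_point = 26 / 5        # this study: about 26 percent for about 5 points
miller_per_point = 12 / 5          # Miller (2012b): 12 percent for 5 points
gross_noto_per_point = 8.1 / 10    # Gross and Notowidigdo (2011): per point of Medicaid eligibility

# Interpretation: if only 58 percent (or 2 percent) of households with medical
# debt file for bankruptcy, each bankruptcy corresponds to 1/0.58 (or 1/0.02)
# households with unpaid medical debt.
lower_bound = 1 / 0.58
upper_bound = 1 / 0.02

ratios = {
    "vs Miller (2012b)": bad_debt_per_point / miller_per_point,
    "vs Gross and Notowidigdo (2011)": bad_debt_per_point / gross_noto_per_point,
}

print(f"bad debt effect per point: {bad_debt_per_point:.1f} percent")
print(f"implied range of ratios: {lower_bound:.1f} to {upper_bound:.0f}")
for label, ratio in ratios.items():
    inside = lower_bound <= ratio <= upper_bound
    print(f"{label}: ratio {ratio:.1f}, within range: {inside}")
```

Both ratios fall inside the implied range, which is the sense in which the bad debt estimate is consistent with the bankruptcy literature.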

Reduction in hospital bad debt not only reflects improvements in the financial burden for patients but also implies savings for hospitals. Before the reform, the bad debt expense per patient per year reported in our dataset was about $1,238 for an average hospital in Massachusetts. Based on the study estimates, the reform produced savings of around $321 per patient per year. The savings represent about 4.2 percent of the average cost for a hospital stay in 2005.
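The savings figure follows from applying the estimated reduction to the prereform bad debt expense. A minimal sketch of that calculation, assuming the savings are simply the prereform expense times the estimated proportional reduction (the function name is hypothetical):

```python
PREREFORM_BAD_DEBT_PER_PATIENT = 1238.0  # dollars per patient per year, from the text


def savings_per_patient(reduction):
    """Dollar savings implied by a proportional reduction in bad debt."""
    return PREREFORM_BAD_DEBT_PER_PATIENT * reduction


rounded_estimate = savings_per_patient(0.26)    # the roughly 26 percent headline effect
core_coefficient = savings_per_patient(0.257)   # core-model coefficient from Table A2

print(f"using 26 percent:   ${rounded_estimate:,.2f}")
print(f"using 25.7 percent: ${core_coefficient:,.2f}")
```

Either input gives a figure in the neighborhood of the savings quoted in the text.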

A second important finding of this study is that the reform had a larger impact in reducing unpaid medical bills in safety-net hospitals, compared to non-safety-nets. This suggests that the reform provided more financial protection to the most vulnerable population, which is consistent with their larger gains in insurance coverage. While the uninsured rate fell by at least 5 percentage points among adults in Massachusetts, the rate among low-income adults was reduced by about 10 percentage points (Long, Stockley, and Yemane 2009). The result is also consistent with the growing demand for care at safety-net hospitals after the reform (Ku et al. 2011).

Our findings are highly relevant in the context of the Affordable Care Act (ACA). Both reforms share similarities in their approach to expanding coverage and shifting public funding from hospitals to individuals. Beginning in 2014, the ACA contemplates a series of payment reductions in the Medicaid and Medicare Disproportionate Share Hospital (DSH) programs. These programs provide funding for uncompensated care to nearly three quarters of U.S. hospitals (Graves 2012). Under the ACA, reductions in DSH funds are determined with a formula that includes the reduction in each state’s uninsured rate. Consequently, the effect of the federal health care reform on hospital bad debt is expected to be similar to that observed in Massachusetts, at least for the states that expand their Medicaid programs following the ACA. However, additional initiatives accompanying the ACA may reduce the overall impact on unpaid medical debt due to moral hazard effects. In contrast with the Massachusetts reform, the federal reform has created provisions to restrict collection actions implemented by nonprofit hospitals (Lunder and Liu 2009). Several state and federal initiatives are trying to extend restrictions to for-profit hospitals and to add more barriers to the collection of medical debt (Bailey, Franklin, and Hearle 2010). Restricting collection actions on medical bills may create incentives for bad behavior, increasing delinquent debt among those who are able to pay. The moderate effect on bad debt observed in the Massachusetts health care reform could be negligible under the ACA without an appropriate mechanism to protect against moral hazard effects.

Appendix

Robustness Check. Difference-in-Differences Estimates of the Effect of Health Care Reform on Patients’ Doubtful Debt, Overall and by Safety-Net Classification of Hospitals.

| Treatment effect (a) | Core model (I) | Alternate definition of bad debt (II) | Alternate pre–post reform period (III) | Prereform period only: 2004 vs. 2005 (IV) |
|---|---|---|---|---|
| All hospitals | −0.257** | −0.269*** | −0.324** | −0.036 |
| Safety-net hospitals | | | | |
| Defined by % of Medicaid patients | −0.283*** | −0.140 | −0.324*** | −0.041 |
| Defined by % of minorities | −0.366** | −0.289*** | −0.454** | 0.021 |
| Defined by % under poverty line | −0.310*** | −0.243* | −0.364*** | −0.024 |
| Non-safety-net hospitals | | | | |
| Defined by % of Medicaid patients | −0.183* | −0.507** | −0.316** | −0.055* |
| Defined by % of minorities | −0.121* | −0.200 | −0.180* | −0.023 |
| Defined by % under poverty line | −0.198 | −0.263 | −0.284 | −0.060 |

Source. CMS hospital cost reports 2004–2009.

Note. DID model is estimated by panel data fixed effects, with block bootstrap standard errors. The model includes time-specific fixed effects, controlling for hospital size (number of beds and total assets), length of stay, and economic activity at county level (personal income and unemployment rate). Definitions of the safety-net classification are provided in the text. Control group consists of hospitals in the New England states, Minnesota, and Washington state. CMS = Centers for Medicare and Medicaid Services; DID = difference-in-difference.

Treatment effects are interpreted as percentage changes since bad debt enters in logs.

*Significant at 10 percent. **Significant at 5 percent. ***Significant at 1 percent.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.