Abstract

To realize online color difference detection of printed fabrics, a color difference detection method based on image segmentation and image registration is proposed for simple printed fabrics. The acquired standard fabric images are segmented using a combination of the Canny edge detector and uniform grid partitioning. The color information of each sub image is extracted based on the segmentation results and saved. For registration, we use the speeded-up robust features algorithm, extended with the position information of the cyclic element in which each feature point is located. The segmentation path of the standard image is registered onto the fabric image to be detected. The color information of each sub image of the image to be detected is extracted according to the registered segmentation path. The color difference value between each sub image of the image to be detected and the corresponding sub image of the standard sample is calculated using the CIEDE2000 color difference formula. The experimental results show that, when fabric images with duplicate patterns are registered, the image registration method in this paper significantly improves the accuracy of registration. Compared with the manual calibration results, the accuracy of the detection method in this paper on the printed fabric dataset can reach 95%.

Keywords

In textile production, controlling the color difference of fabrics is one of the primary issues in ensuring the quality of printed fabrics: the color appearance should match the standard color sample as closely as possible.1 The difference between a given pattern on the printed fabric and the process standard sample is called the color difference. The color difference is a comprehensive result of differences in hue, brightness, and saturation.2 At present, the main methods for measuring color and color difference include photoelectric integration, spectrophotometry, and digital colorimetry. The photoelectric integrating instrument can measure the difference between two color sources, but cannot accurately measure the tristimulus values and chromaticity coordinates of the color source.3 The spectrophotometric method can accurately measure the reflectivity of monochromatic objects and convert it into various color parameters, giving an objective evaluation of object color with short measurement time and good reproducibility.4 However, a spectrophotometer places requirements on the detected object, which must be a monochromatic material and must completely cover the measurement aperture. The measurement result is the average spectral reflectance within the measurement aperture, so the spectrophotometer cannot accurately measure the color of printed fabrics. The digital colorimetric method calibrates camera parameters using a specific calibration color card under a standard light source; after calibration, the standard chromaticity data of the sample can be obtained.5,6 Color difference detection methods and systems based on computer vision have made significant progress in recent years. However, most current research focuses on online color difference detection of monochromatic fabrics.7 The variety of colors on the surface of printed fabrics increases the difficulty of online color difference detection, and there is currently little research on online color difference detection of multi-color fabrics.

This article designs a color difference detection system for printed fabrics. According to the requirements of the color difference detection system, an image segmentation method combining the Canny edge detection algorithm and uniform grid division is proposed to segment the standard image. The location information of the cyclic element in which each feature point is located, obtained through the distance matching function (DMF), is added to the speeded-up robust features (SURF) description algorithm before image registration is performed. This solves the problem that traditional feature point-based registration methods cannot register images with duplicate units. We register the segmentation path extracted from the standard image to the image to be detected, and use the CIEDE2000 color difference formula to calculate the color difference values between the corresponding sub images of the standard image and the image to be detected, completing the color difference detection of printed fabrics.

Overall design of the color difference detection system

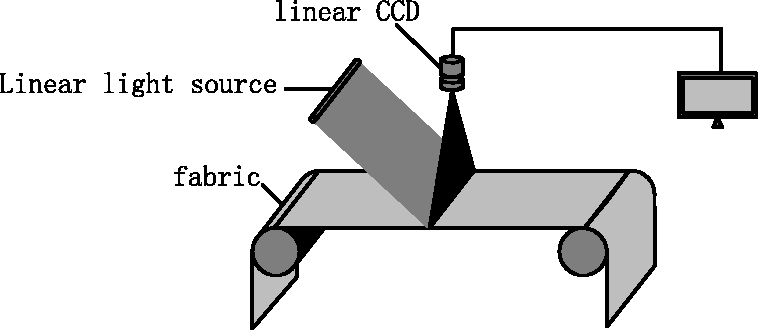

The online color difference detection system for printed fabrics studied in this article includes two parts, the hardware part and the software part. The hardware part includes two systems: an image acquisition system composed of a CCD (charge-coupled device) camera, an optical lens, a D65 standard light source, and a shading box, and a driving system that ensures that the fabric passes through the image acquisition box at a constant speed and in a stable state. The color displayed on the fabric is influenced by the light source and the spectral reflectance of the fabric surface. The position of the light source and camera is shown in Figure 1. The sampling background is a black platform, and the light source illuminates the fabric surface from both sides, with the camera suspended directly above. After determining the position of the camera, light source, and fabric, we calibrate the camera color using a standard 24-color card to complete the hardware construction.

Schematic diagram of the image acquisition platform. CCD: charge-coupled device.

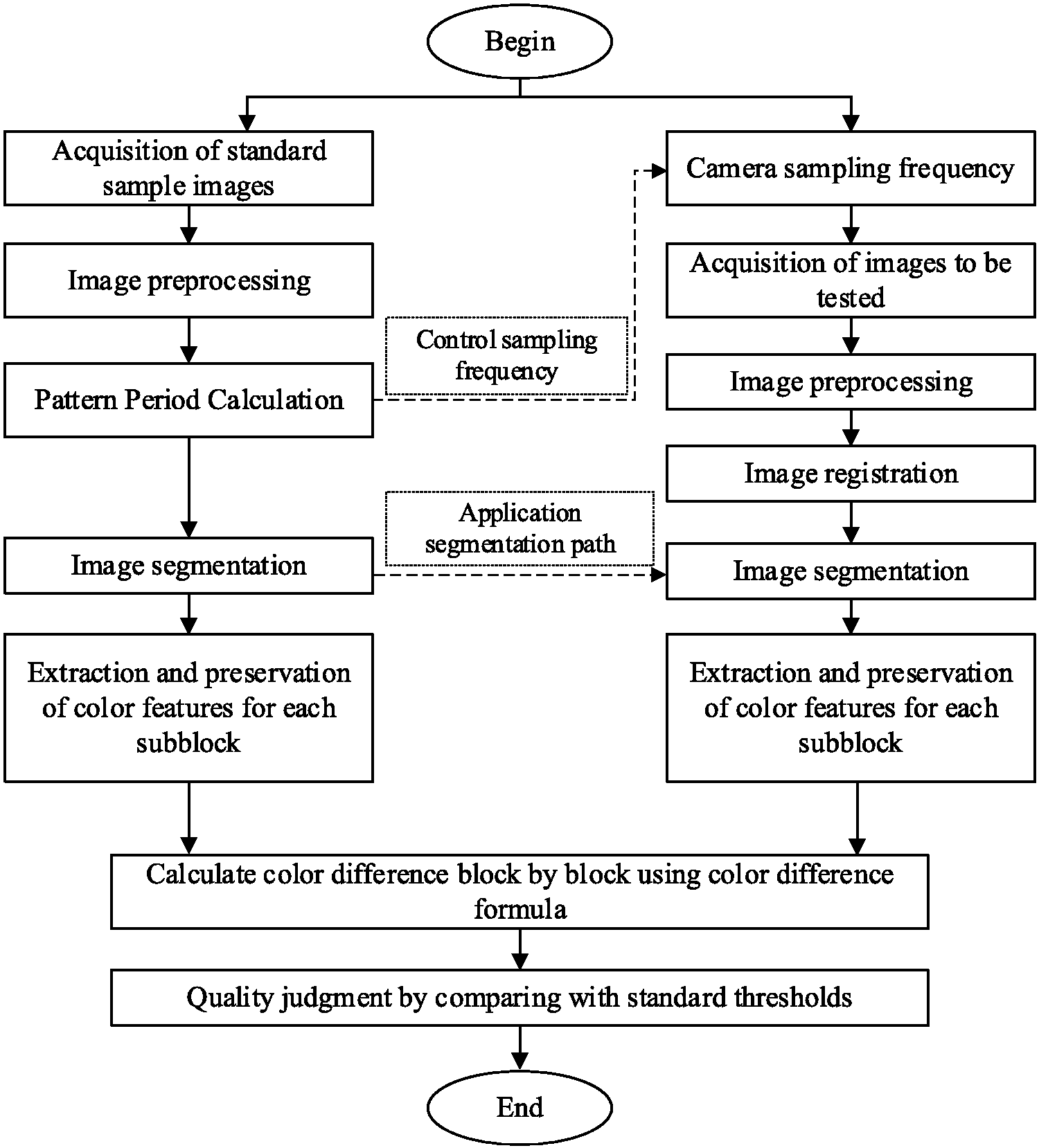

The software part includes four modules, which are the image preprocessing module, image segmentation module, image registration module, and color difference calculation module. This article mainly introduces the software part of the detection system. Figure 2 shows the flow chart of the online color difference detection system for printed fabrics.

Flow chart of the online color difference detection system for printed fabrics.

Image preprocessing module

The fabric images acquired by the system need to be preprocessed before color difference detection. Preprocessing removes noise generated during image acquisition and transmission, and filters out redundant information in the original image in preparation for subsequent image processing.

There is a great deal of pattern information on the surface of printed fabrics, and traditional filtering methods blur the pattern while filtering out noise, thereby increasing the error in the color difference calculation. The bilateral filter algorithm is a nonlinear filtering algorithm that combines brightness similarity and spatial proximity. It smooths noise while preserving the edge and detail information of the image to the greatest possible extent. In this paper, the bilateral filter algorithm is used to denoise fabric images.
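The edge-preserving behavior described above can be illustrated with a minimal pure-Python sketch of a bilateral filter on a grayscale image. The function name, the window radius, and the two sigma values are illustrative assumptions, not the parameters used by the system.

```python
import math

def bilateral_filter(img, radius=2, sigma_space=2.0, sigma_range=25.0):
    """Bilateral filter for a grayscale image stored as a list of rows.

    Each output pixel is a weighted mean of its neighbors; the weight is the
    product of a spatial Gaussian and a brightness-similarity Gaussian.
    All parameter values here are illustrative assumptions.
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            center = img[y][x]
            weight_sum = 0.0
            value_sum = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if not (0 <= ny < h and 0 <= nx < w):
                        continue  # ignore neighbors outside the image
                    neighbor = img[ny][nx]
                    spatial = math.exp(-(dx * dx + dy * dy) / (2 * sigma_space ** 2))
                    similarity = math.exp(-((neighbor - center) ** 2) / (2 * sigma_range ** 2))
                    weight = spatial * similarity
                    weight_sum += weight
                    value_sum += weight * neighbor
            out[y][x] = value_sum / weight_sum
    return out

# A step edge (left half 50, right half 200) with one slightly noisy pixel.
image = [[50] * 4 + [200] * 4 for _ in range(6)]
image[2][1] = 60
smoothed = bilateral_filter(image)
```

In this toy image the noisy pixel is pulled toward its neighbors, while pixels on either side of the step keep their values because neighbors across the edge receive a near-zero similarity weight.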

Color difference calculation module

In this article, the color difference detection system uses a CCD camera for image acquisition. The acquired image is in RGB mode. In the RGB color space, however, the components are highly correlated, which does not conform to human visual perception, and the color space is non-uniform, which makes it unsuitable for comparing the difference between two colors.8,9 To solve this problem, it is necessary to convert the image into a device-independent uniform color space and then calculate the color difference value between the images.

Currently, the International Commission on Illumination (CIE) L*a*b* uniform color space is widely used internationally for color difference calculation. The CIE color space is a color system based on human vision and color measurement, and is not affected by equipment changes.10 The CIE system has the largest gamut space, where any color can be mapped.

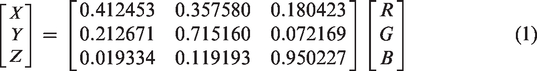

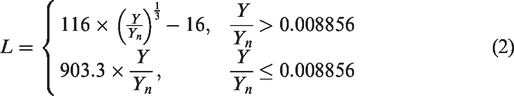

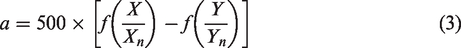

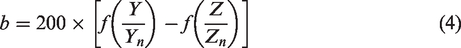

The conversion from RGB color mode to CIE L*a*b* color mode is as follows: RGB → XYZ → CIE L*a*b*

Among them, there is a linear relationship between the RGB and XYZ models, and the RGB color model can be converted to the XYZ model by multiplying by a 3 × 3 matrix.

The Lab color space can be converted by the CIEXYZ chromaticity system through mathematical methods, as follows:

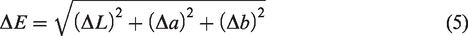

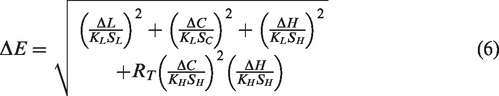

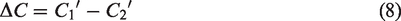

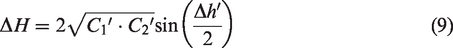

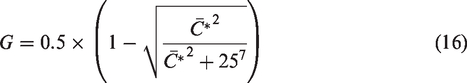
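The RGB → XYZ → CIE L*a*b* chain can be sketched as follows. This is only an illustration under stated assumptions: the camera output is treated as sRGB, the 3 × 3 matrix is the standard sRGB (D65) matrix, and the D65 white point is used for normalization. A calibrated camera would use its own matrix obtained from the color card calibration.

```python
# Assumption: sRGB primaries with a D65 white point. The source does not
# give the matrix used by the calibrated camera.
M_RGB_TO_XYZ = [
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
]
WHITE_D65 = (0.95047, 1.0, 1.08883)

def srgb_to_linear(channel):
    """Undo the sRGB gamma curve for one 8-bit channel value."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def lab_f(t):
    """Piecewise cube-root function used in the XYZ -> L*a*b* conversion."""
    delta = 6.0 / 29.0
    return t ** (1.0 / 3.0) if t > delta ** 3 else t / (3 * delta ** 2) + 4.0 / 29.0

def rgb_to_lab(r, g, b):
    """Convert 8-bit RGB to (L*, a*, b*) via linear RGB and XYZ."""
    lin = [srgb_to_linear(v) for v in (r, g, b)]
    x, y, z = (sum(M_RGB_TO_XYZ[i][j] * lin[j] for j in range(3)) for i in range(3))
    fx = lab_f(x / WHITE_D65[0])
    fy = lab_f(y / WHITE_D65[1])
    fz = lab_f(z / WHITE_D65[2])
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)

white = rgb_to_lab(255, 255, 255)
black = rgb_to_lab(0, 0, 0)
red = rgb_to_lab(255, 0, 0)
```

The linear 3 × 3 step is the only device-dependent part of the chain, so swapping in a calibrated matrix leaves the rest of the sketch unchanged.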

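The color difference detection of machine vision serves humans, so the color difference detected by machine vision should be consistent with the color difference perceived by the human eye. For this purpose, color difference researchers have put forward many color difference formulas. At present, the Lab color difference formula formulated by the CIE is the most widely used, as shown in the following formula.

$$\Delta E^*_{ab} = \sqrt{(\Delta L^*)^2 + (\Delta a^*)^2 + (\Delta b^*)^2}$$

In the formula, $\Delta L^*$, $\Delta a^*$, and $\Delta b^*$ are the differences between the two colors in lightness and in the two chromatic coordinates of the CIE L*a*b* space.

This system uses a color difference formula newly proposed by the CIE, which is called CIEDE2000:

$$\Delta E_{00} = \sqrt{\left(\frac{\Delta L'}{k_L S_L}\right)^2 + \left(\frac{\Delta C'}{k_C S_C}\right)^2 + \left(\frac{\Delta H'}{k_H S_H}\right)^2 + R_T \left(\frac{\Delta C'}{k_C S_C}\right)\left(\frac{\Delta H'}{k_H S_H}\right)}$$

In the formula, $\Delta L'$, $\Delta C'$, and $\Delta H'$ are the lightness, chroma, and hue differences; $S_L$, $S_C$, and $S_H$ are the weighting functions; $k_L$, $k_C$, and $k_H$ are the parametric factors; and $R_T$ is the rotation term.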
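As an illustration of the two color difference formulas discussed in this section, the following sketch implements the CIELAB Euclidean difference and the CIEDE2000 difference in plain Python. It follows the published CIEDE2000 definition with the parametric factors set to 1. The function names are illustrative, and this is not the system's own code.

```python
import math

def delta_e_ab(lab1, lab2):
    """CIELAB color difference: Euclidean distance in L*a*b* space."""
    return math.dist(lab1, lab2)

def ciede2000(lab1, lab2, k_l=1.0, k_c=1.0, k_h=1.0):
    """CIEDE2000 color difference between two (L*, a*, b*) triples."""
    l1, a1, b1 = lab1
    l2, a2, b2 = lab2
    c_bar = (math.hypot(a1, b1) + math.hypot(a2, b2)) / 2
    g = 0.5 * (1 - math.sqrt(c_bar ** 7 / (c_bar ** 7 + 25.0 ** 7)))
    a1p, a2p = (1 + g) * a1, (1 + g) * a2
    c1p, c2p = math.hypot(a1p, b1), math.hypot(a2p, b2)
    h1p = math.degrees(math.atan2(b1, a1p)) % 360 if c1p else 0.0
    h2p = math.degrees(math.atan2(b2, a2p)) % 360 if c2p else 0.0

    d_lp = l2 - l1
    d_cp = c2p - c1p
    if c1p * c2p == 0:
        d_hp_deg = 0.0
    else:
        d_hp_deg = h2p - h1p
        if d_hp_deg > 180:
            d_hp_deg -= 360
        elif d_hp_deg < -180:
            d_hp_deg += 360
    d_hp = 2 * math.sqrt(c1p * c2p) * math.sin(math.radians(d_hp_deg / 2))

    l_bar = (l1 + l2) / 2
    c_bar_p = (c1p + c2p) / 2
    if c1p * c2p == 0:
        h_bar = h1p + h2p
    elif abs(h1p - h2p) <= 180:
        h_bar = (h1p + h2p) / 2
    elif h1p + h2p < 360:
        h_bar = (h1p + h2p + 360) / 2
    else:
        h_bar = (h1p + h2p - 360) / 2

    t = (1 - 0.17 * math.cos(math.radians(h_bar - 30))
         + 0.24 * math.cos(math.radians(2 * h_bar))
         + 0.32 * math.cos(math.radians(3 * h_bar + 6))
         - 0.20 * math.cos(math.radians(4 * h_bar - 63)))
    d_theta = 30 * math.exp(-((h_bar - 275) / 25) ** 2)
    r_c = 2 * math.sqrt(c_bar_p ** 7 / (c_bar_p ** 7 + 25.0 ** 7))
    s_l = 1 + 0.015 * (l_bar - 50) ** 2 / math.sqrt(20 + (l_bar - 50) ** 2)
    s_c = 1 + 0.045 * c_bar_p
    s_h = 1 + 0.015 * c_bar_p * t
    r_t = -math.sin(math.radians(2 * d_theta)) * r_c

    term_l = d_lp / (k_l * s_l)
    term_c = d_cp / (k_c * s_c)
    term_h = d_hp / (k_h * s_h)
    return math.sqrt(term_l ** 2 + term_c ** 2 + term_h ** 2 + r_t * term_c * term_h)

# Two saturated blues that differ slightly in hue.
difference = ciede2000((50.0, 2.6772, -79.7751), (50.0, 0.0, -82.7485))
```

The weighting functions and the rotation term make the result smaller than the plain Euclidean distance for saturated colors such as the pair above, which is the main reason the formula tracks perceived differences more closely.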

Color difference detection algorithm

Image segmentation of printed fabrics

It is necessary to segment the standard images of printed fabrics in color difference detection. If the color difference between the standard image and the image to be measured is directly calculated, the standard image and the image to be measured need to be compared pixel-by-pixel and extremely accurate image registration is required, but highly accurate image registration will significantly increase the amount of calculation. At the same time, the computational complexity of the color difference formula itself is high, and comparing the entire image pixel-by-pixel will also cause a rapid increase in computational complexity, which cannot meet the real-time requirements of online detection. Reasonable image segmentation of fabric standard images can not only improve the efficiency and accuracy of color difference detection, but also reduce the demand for registration accuracy in color difference detection systems.

According to the requirements of fabric color difference detection, this article proposes an image segmentation method that combines the Canny edge detection algorithm with uniform grid division to segment standard fabric images.

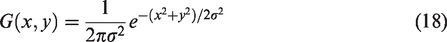

The Canny edge detection algorithm is a commonly used multi-stage edge detection algorithm. The Canny edge detector uses a detection model derived from the Gaussian model.13 Because the original image may contain noise, a Gaussian filter is applied to the image before edge detection to obtain a slightly smoothed image, so that a single noisy pixel does not interfere with the edge detection results.

The main process of Canny edge detector is as follows:

image denoising → gradient calculation → non-maximum suppression → double threshold boundary tracing

Firstly, Gaussian blur is used to denoise the image, so that the edge is free from noise interference. The two-dimensional Gaussian distribution formula is as follows:

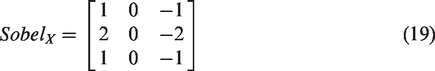

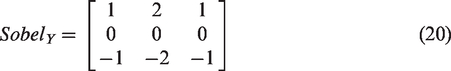

Next, we calculate the gradient. We calculate the first-order partial derivatives $G_x$ and $G_y$ of the smoothed image in the horizontal and vertical directions.

Further, the amplitude of the gradient of the image at point $(x, y)$ is

$$G(x, y) = \sqrt{G_x(x, y)^2 + G_y(x, y)^2}$$

The direction of the gradient of the image at point $(x, y)$ is

$$\theta(x, y) = \arctan\frac{G_y(x, y)}{G_x(x, y)}$$

Then we apply non-maximum suppression to remove a large portion of non-edge points. The gradient direction is approximated to one of the following values: 0°, 45°, 90°, or 135°.

The gradient intensity of each pixel is compared with the gradient intensities of the pixels located in the positive and negative gradient directions of this pixel. If the gradient intensity of the pixel is the highest, it is retained; otherwise, it is suppressed.

Finally, the dual threshold method is used to determine the possible boundaries.
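The gradient, non-maximum suppression, and dual threshold stages can be sketched in plain Python as follows. This is a simplified illustration: the Sobel kernels, the tie-breaking rule in the suppression step, and the threshold values are assumptions, and the Gaussian smoothing stage is omitted because the toy image is noise-free.

```python
import math

# Assumption: Sobel kernels are used for the partial derivatives.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
# Pixel offsets (dx, dy) along each quantized gradient direction.
OFFSETS = {0: (1, 0), 45: (1, 1), 90: (0, 1), 135: (-1, 1)}

def gradients(img):
    """Return gradient magnitude and direction quantized to 0/45/90/135 degrees."""
    h, w = len(img), len(img[0])
    mag = [[0.0] * w for _ in range(h)]
    ang = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = sum(SOBEL_X[j][i] * img[y + j - 1][x + i - 1]
                     for j in range(3) for i in range(3))
            gy = sum(SOBEL_Y[j][i] * img[y + j - 1][x + i - 1]
                     for j in range(3) for i in range(3))
            mag[y][x] = math.hypot(gx, gy)
            theta = math.degrees(math.atan2(gy, gx)) % 180
            ang[y][x] = (int(round(theta / 45.0)) % 4) * 45
    return mag, ang

def non_max_suppression(mag, ang):
    """Keep a pixel only if it is a maximum along its gradient direction."""
    h, w = len(mag), len(mag[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            dx, dy = OFFSETS[ang[y][x]]
            # ">=" on one side and ">" on the other breaks ties on flat ridges.
            if mag[y][x] >= mag[y + dy][x + dx] and mag[y][x] > mag[y - dy][x - dx]:
                out[y][x] = mag[y][x]
    return out

def hysteresis(nms, low, high):
    """Strong pixels seed edges; weak pixels are kept only if connected to them."""
    h, w = len(nms), len(nms[0])
    edges = [[False] * w for _ in range(h)]
    stack = [(y, x) for y in range(h) for x in range(w) if nms[y][x] >= high]
    for y, x in stack:
        edges[y][x] = True
    while stack:
        y, x = stack.pop()
        for ny in range(max(0, y - 1), min(h, y + 2)):
            for nx in range(max(0, x - 1), min(w, x + 2)):
                if not edges[ny][nx] and nms[ny][nx] >= low:
                    edges[ny][nx] = True
                    stack.append((ny, nx))
    return edges

# Vertical step edge between columns 3 and 4.
image = [[0] * 4 + [100] * 4 for _ in range(6)]
mag, ang = gradients(image)
edges = hysteresis(non_max_suppression(mag, ang), low=100, high=200)
```

On the step image the Sobel response is two pixels wide; the suppression step thins it to a single column before thresholding.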

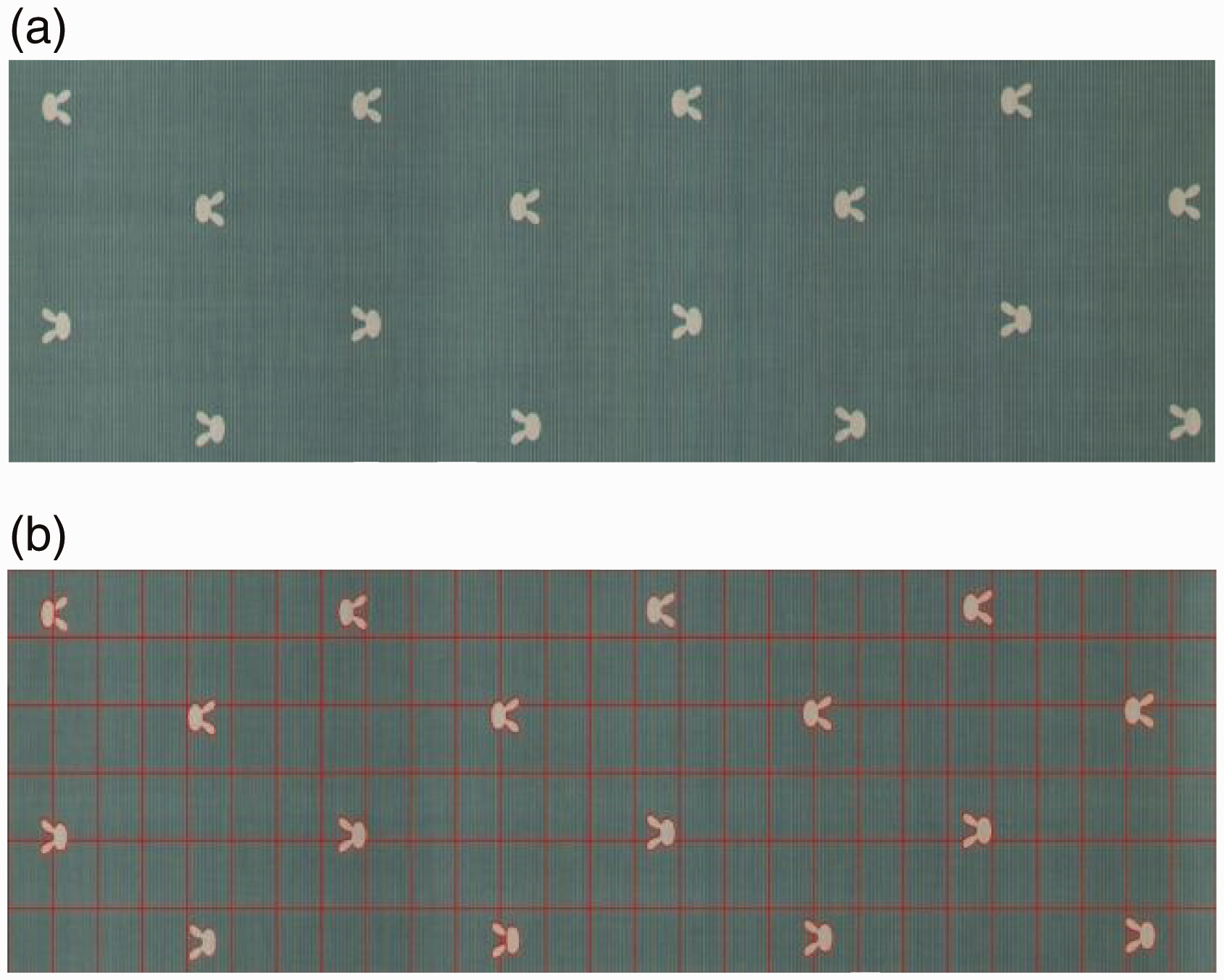

We perform preliminary segmentation of standard fabric images based on Canny edges, and then mesh each sub image based on the initial segmentation according to detection accuracy requirements. The system completes image segmentation of standard fabrics. The results are shown in Figure 3.
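One way to picture the combination of edge-based segmentation and uniform meshing is the sketch below. It assumes that the preliminary segmentation is obtained by labeling connected non-edge regions and that each region is then cut by a fixed grid; the function names, the 4-connectivity, and the cell size are hypothetical choices, not details given in the text.

```python
from collections import deque

def label_regions(edges):
    """Label 4-connected regions of non-edge pixels; edge pixels keep label -1."""
    h, w = len(edges), len(edges[0])
    labels = [[-1] * w for _ in range(h)]
    next_label = 0
    for sy in range(h):
        for sx in range(w):
            if edges[sy][sx] or labels[sy][sx] != -1:
                continue
            labels[sy][sx] = next_label
            queue = deque([(sy, sx)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < h and 0 <= nx < w
                            and not edges[ny][nx] and labels[ny][nx] == -1):
                        labels[ny][nx] = next_label
                        queue.append((ny, nx))
            next_label += 1
    return labels

def split_by_grid(labels, cell):
    """Group pixels into sub images keyed by (region label, grid row, grid column)."""
    sub_images = {}
    for y, row in enumerate(labels):
        for x, label in enumerate(row):
            if label == -1:
                continue  # edge pixels are left unassigned in this sketch
            sub_images.setdefault((label, y // cell, x // cell), []).append((y, x))
    return sub_images

# A 4 x 8 edge map with one vertical edge at column 3.
edge_map = [[x == 3 for x in range(8)] for _ in range(4)]
sub_images = split_by_grid(label_regions(edge_map), cell=2)
```

Because the key includes the region label, a grid cell that straddles an edge is split into separate sub images, so no sub image mixes colors from both sides of a pattern boundary. The cell size plays the role of the detection accuracy requirement.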

Image segmentation effect: (a) original image and (b) image segmentation result graph.

Image registration with cyclic elements

The key to detecting fabric color difference through machine vision is the registration of the standard image and the image to be detected. The speed and accuracy of registration directly affect the efficiency and accuracy of the system detection.

The SURF algorithm, as a scale space-based image local feature description algorithm, is an improvement on the scale-invariant feature transform (SIFT) algorithm. It maintains the advantages of the SIFT algorithm, namely that it is invariant to image scaling, rotation, and affine transformation, while enhancing the robustness to illumination changes and noise interference. Most importantly, it reduces the time complexity of the algorithm, which gives it better real-time performance, and it is widely used in image recognition and image matching.14 Therefore, this project uses the SURF algorithm to complete the registration of the standard image and the image to be detected.

However, printed fabrics are mostly composed of identical small patterns. In feature description, the SURF algorithm only includes the gradient information of the grayscale values around the interest points in the image. When the SURF algorithm is used to extract feature points or describe the feature points of such fabric images, the extracted feature points and corresponding feature point descriptors will be repeated with the pattern cycle in the fabric image, resulting in multiple feature points with identical descriptors in the same image. This leads to significant registration errors and even the inability to complete registration.

In response to the above issues, this article uses the DMF to segment the fabric image according to the pattern cycle based on the working characteristics of the fabric inspection machine. In the SURF description algorithm, position information of the cyclic element where feature points are located is added. We select feature points with the same cyclic element positions in the two images for matching, and complete the registration between the fabric image to be detected and the standard image.

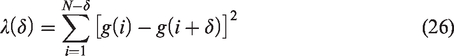

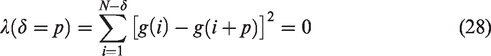

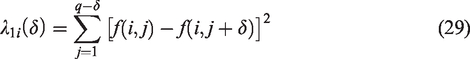

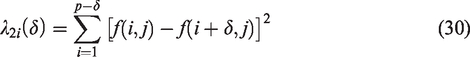

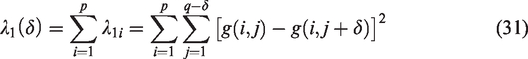

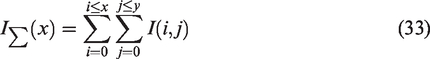

Firstly, the cycle of the pattern in the fabric image is calculated using the DMF, and the image is divided into

The DMF for one-dimensional horizontal sequences is defined as follows:

The DMF for the line-

We accumulate the DMF for each row to obtain the row DMF for the entire image as follows:

The column DMF obtained by the same method is as follows:

By analyzing the distance between multiple minimum points, one can obtain the cycle of the pattern.
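The idea of reading the pattern cycle from the spacing of DMF minima can be sketched as follows. The exact form of the DMF is not recoverable from the text here, so this sketch assumes a mean absolute difference between a row and its shifted copy; the function names and the minimum-detection rule are likewise illustrative.

```python
from collections import Counter

def dmf_1d(seq, max_lag):
    """Assumed DMF: mean absolute difference between seq and seq shifted by each lag."""
    n = len(seq)
    return [sum(abs(seq[i] - seq[i + p]) for i in range(n - p)) / (n - p)
            for p in range(1, max_lag + 1)]

def row_dmf(img, max_lag):
    """Accumulate the one-dimensional DMF over every row of the image."""
    total = [0.0] * max_lag
    for row in img:
        for k, value in enumerate(dmf_1d(row, max_lag)):
            total[k] += value
    return total

def estimate_period(dmf):
    """Estimate the cycle as the most common spacing between local minima."""
    lags = [k + 1 for k in range(1, len(dmf) - 1)
            if dmf[k] < dmf[k - 1] and dmf[k] <= dmf[k + 1]]
    if not lags:
        return None
    gaps = [b - a for a, b in zip(lags, lags[1:])] or [lags[0]]
    return Counter(gaps).most_common(1)[0][0]

# Three identical rows whose gray values repeat every 5 pixels.
pattern = [10, 40, 90, 40, 10]
image = [pattern * 6 for _ in range(3)]
period = estimate_period(row_dmf(image, max_lag=17))
```

The column cycle would be obtained in the same way by applying `row_dmf` to the transposed image.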

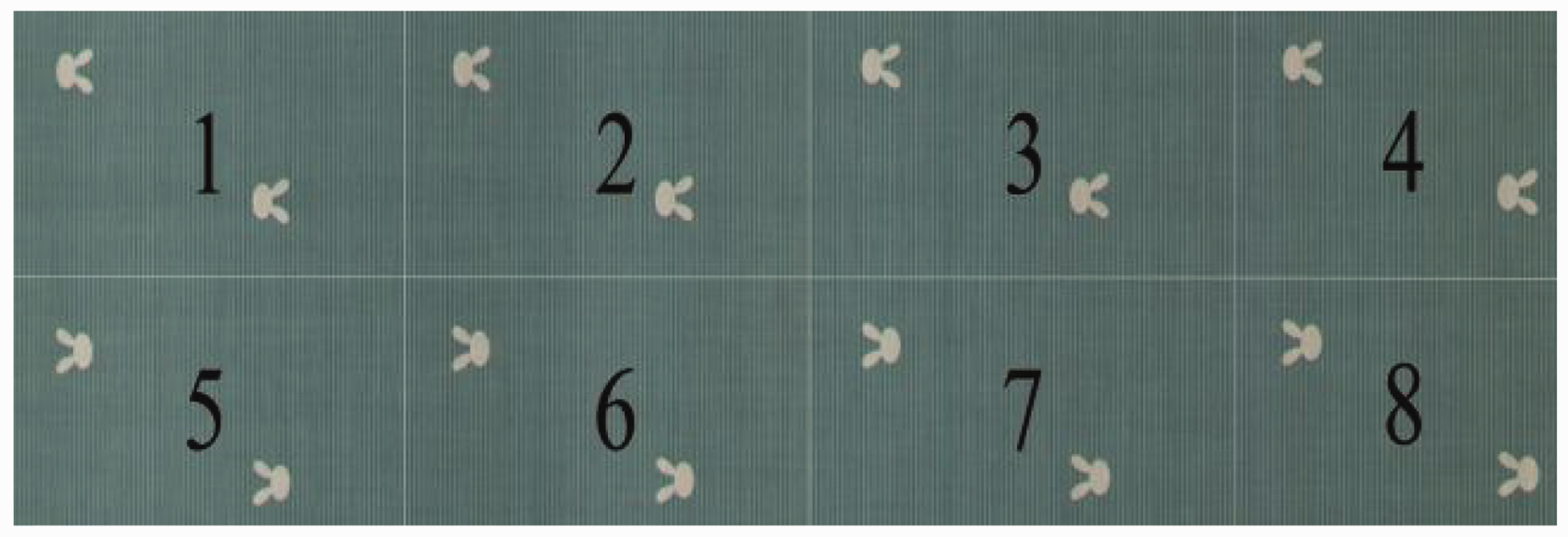

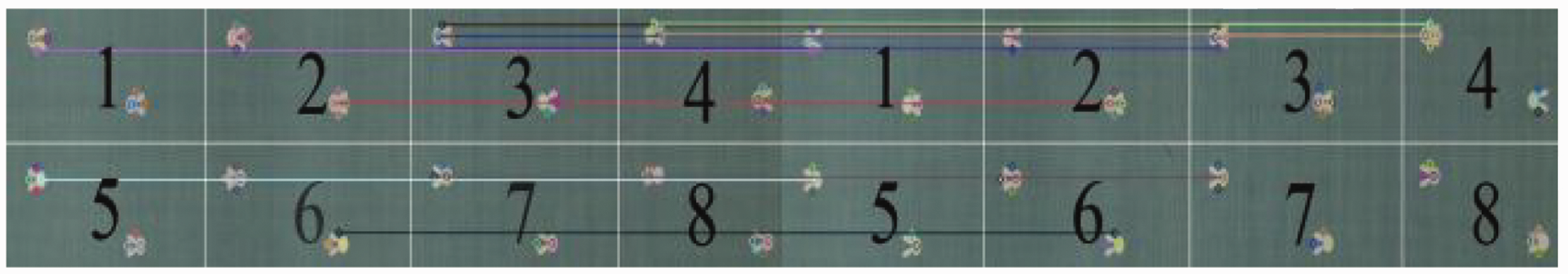

According to the calculation result of the DMF, the fabric image is divided into

Cyclic element segmentation results.

We extract the feature points of the standard image and the image to be detected using the SURF algorithm, and assign corresponding labels to each feature point based on its subgraph number.

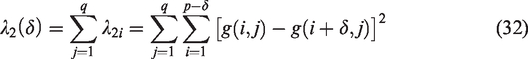

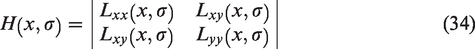

The SURF algorithm detects local extreme points at different scales through a fast Hessian detector based on the integral image, and determines the main direction and feature point descriptor of each feature point by calculating the Haar wavelet transform.

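The definition of an integral image is as follows:

$$I_\Sigma(x, y) = \sum_{i=0}^{i \le x} \sum_{j=0}^{j \le y} I(i, j)$$

In the formula, $I(i, j)$ is the gray value of the image at pixel $(i, j)$, and $I_\Sigma(x, y)$ is the sum of all pixels in the rectangle spanned by the origin and the point $(x, y)$.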
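A short sketch of the integral image shows why it speeds up the box filters used by the fast Hessian detector: once the table is built, the sum over any rectangle needs only four lookups. The function names and the zero-padded layout are illustrative choices.

```python
def integral_image(img):
    """Build a summed-area table with an extra zero row and column."""
    h, w = len(img), len(img[0])
    table = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            table[y + 1][x + 1] = table[y][x + 1] + row_sum
    return table

def box_sum(table, top, left, bottom, right):
    """Sum of img[top:bottom] x [left:right] (bottom and right exclusive)."""
    return table[bottom][right] - table[top][right] - table[bottom][left] + table[top][left]

image = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
table = integral_image(image)
whole = box_sum(table, 0, 0, 3, 3)
lower_right = box_sum(table, 1, 1, 3, 3)
```

The cost of `box_sum` does not depend on the rectangle size, which is what allows box filters of any scale to be evaluated in constant time.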

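The Hessian matrix of a point $\mathbf{x} = (x, y)$ in the image at scale $\sigma$ is defined as

$$H(\mathbf{x}, \sigma) = \begin{bmatrix} L_{xx}(\mathbf{x}, \sigma) & L_{xy}(\mathbf{x}, \sigma) \\ L_{xy}(\mathbf{x}, \sigma) & L_{yy}(\mathbf{x}, \sigma) \end{bmatrix}$$

where $L_{xx}(\mathbf{x}, \sigma)$ is the convolution of the Gaussian second-order derivative in the $x$ direction with the image at point $\mathbf{x}$, and $L_{xy}$ and $L_{yy}$ are defined similarly.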

We calculate the Haar wavelet transform with a side length of 4s in the x and y directions for each point in the neighborhood with the feature point as the center and 6s as the radius (s being the scale of the current feature point's space). The response value is given a Gaussian weight coefficient (weighting factor

Haar wavelet filters to compute the responses in the x and y directions.

Then, centered on the feature point, we establish a fan-shaped sliding window with an angle of π/3 within the region. The Haar wavelet responses inside the window are accumulated to obtain a new vector, and the window is rotated to traverse the entire region. The direction of the longest vector is taken as the main direction of the feature point.
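The sliding fan-shaped window can be sketched as follows, assuming the Haar responses have already been computed and Gaussian-weighted. The window angle of π/3 follows the standard SURF description; the rotation step of 5° and the function name are illustrative assumptions.

```python
import math

def dominant_orientation(responses, window=math.pi / 3, step=math.pi / 36):
    """Return the main direction (radians) from weighted Haar responses.

    responses: list of (dx, dy) pairs, one per sample point in the neighborhood.
    The window is rotated in fixed steps; the longest summed vector wins.
    """
    two_pi = 2 * math.pi
    angles = [math.atan2(dy, dx) % two_pi for dx, dy in responses]
    best_length, best_direction = -1.0, 0.0
    start = 0.0
    while start < two_pi:
        sum_x = sum_y = 0.0
        for (dx, dy), angle in zip(responses, angles):
            if (angle - start) % two_pi < window:  # response lies inside the fan
                sum_x += dx
                sum_y += dy
        length = math.hypot(sum_x, sum_y)
        if length > best_length:
            best_length, best_direction = length, math.atan2(sum_y, sum_x)
        start += step
    return best_direction

# Three strong responses near 45 degrees plus two weak outliers.
samples = [(1.0, 1.0), (1.0, 0.9), (0.9, 1.0), (-0.2, 0.1), (0.1, -0.3)]
direction = dominant_orientation(samples)
```

Here the three strong responses fall inside one window and the outliers do not, so the returned direction is the direction of their sum.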

We rotate the coordinate axis to the main direction with the feature point as the center. Using the main direction as the reference, we select a square area with a side length of 20s, divide the region into 4 × 4 sub regions, and then calculate the wavelet response within the 5s range within each sub region. The Haar wavelet responses in the horizontal and vertical directions of the main direction are recorded as $d_x$ and $d_y$, respectively. In each sub region, $\sum d_x$, $\sum d_y$, $\sum |d_x|$, and $\sum |d_y|$ are accumulated, so the 4 × 4 sub regions yield a 64-dimensional descriptor.

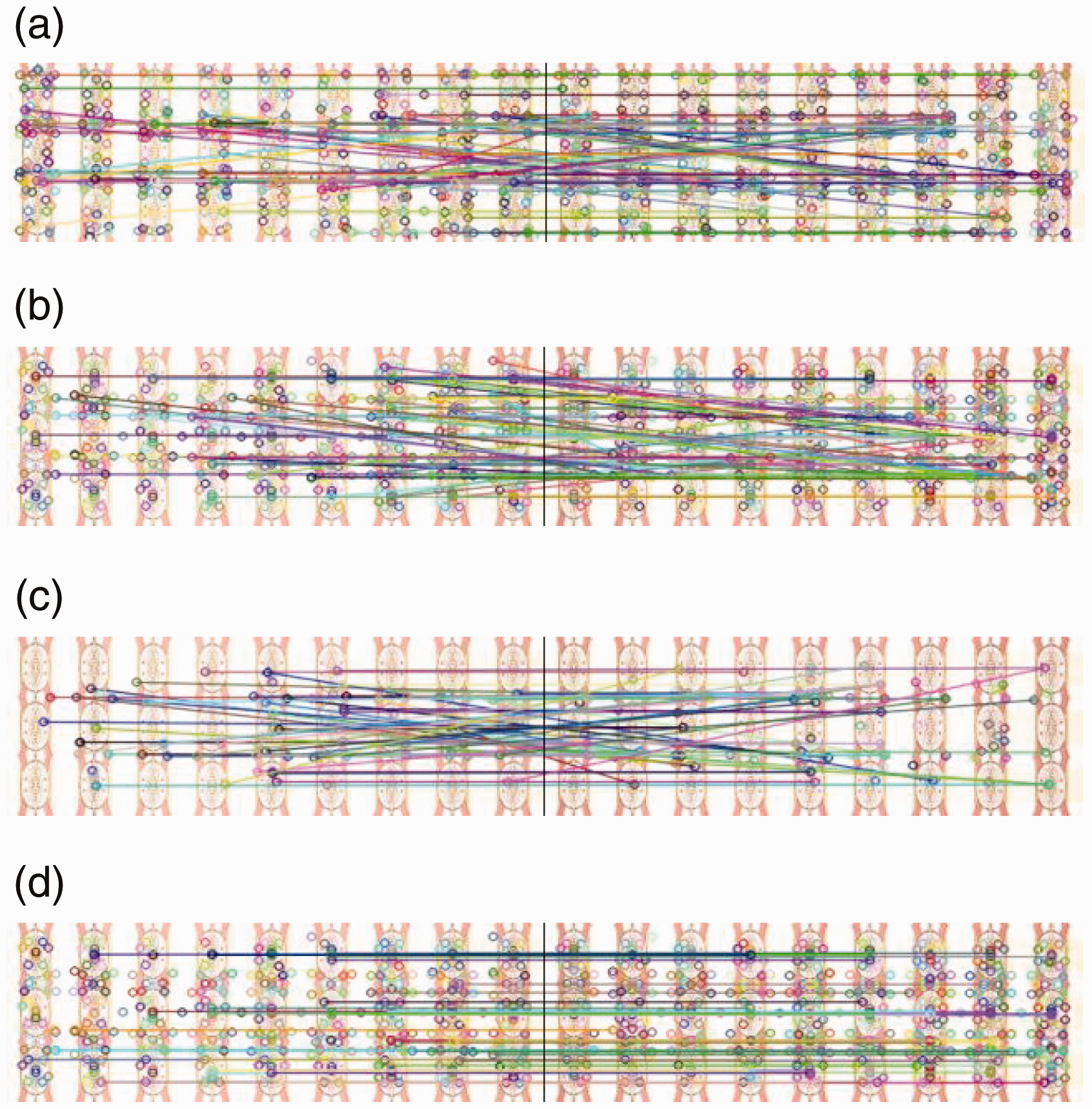

We select feature points with the same cyclic element number in the standard image and the image to be detected for matching. Then the affine transformation matrix is calculated by using the matched feature point pairs to complete the registration of the standard image and the image to be measured. The result of feature point matching is shown in Figure 6.
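The label-restricted matching can be sketched as follows. Each feature is assumed to carry the number of the cyclic element it lies in, and candidates are compared only when those numbers agree; the tuple layout, the distance threshold, and the function name are hypothetical.

```python
import math

def match_by_cycle(std_features, test_features, max_distance=0.5):
    """Nearest-descriptor matching restricted to the same cyclic element.

    Each feature is (cell_label, (x, y), descriptor). Returns a list of
    ((x_std, y_std), (x_test, y_test)) point pairs.
    """
    matches = []
    for label_s, point_s, desc_s in std_features:
        best = None
        for label_t, point_t, desc_t in test_features:
            if label_t != label_s:
                continue  # skip repeats of the pattern in other cyclic elements
            distance = math.dist(desc_s, desc_t)
            if distance <= max_distance and (best is None or distance < best[0]):
                best = (distance, point_t)
        if best is not None:
            matches.append((point_s, best[1]))
    return matches

# Two repeats of the same motif: identical descriptors, different cells.
standard = [((0, 0), (10, 10), [0.1, 0.9]), ((0, 1), (60, 10), [0.1, 0.9])]
detected = [((0, 1), (62, 11), [0.1, 0.9]), ((0, 0), (12, 11), [0.1, 0.9])]
pairs = match_by_cycle(standard, detected)
```

Without the label check, both standard features would be equally close to both detected features, which is exactly the ambiguity that duplicate patterns cause. The resulting point pairs are what the affine transformation would be estimated from.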

Results of image registration.

Experimental results and analysis

This article uses the Tianchi dataset for experiments. The mixed image library contains 30 categories and a total of 3000 pieces of common printed fabrics, such as cartoons, fabrics, flowers, animals, and plaids. There are approximately 20 flawless standard images and 80 common defect images for each type of patterned fabric. We select 10 manually calibrated flawless images and 10 images with color difference issues from the fabric images of each pattern to form the sample data for this experiment. Each sample image has a resolution of

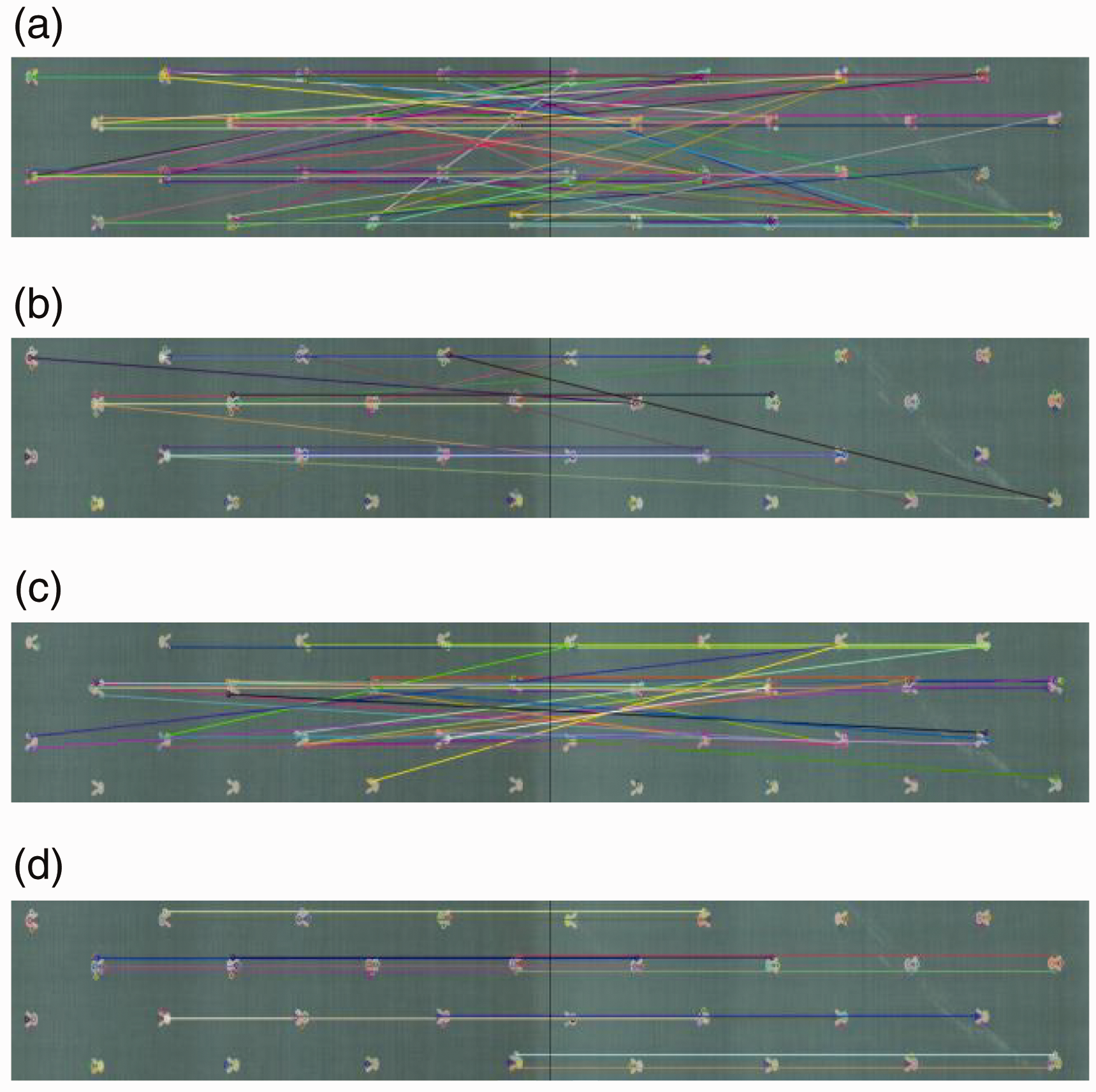

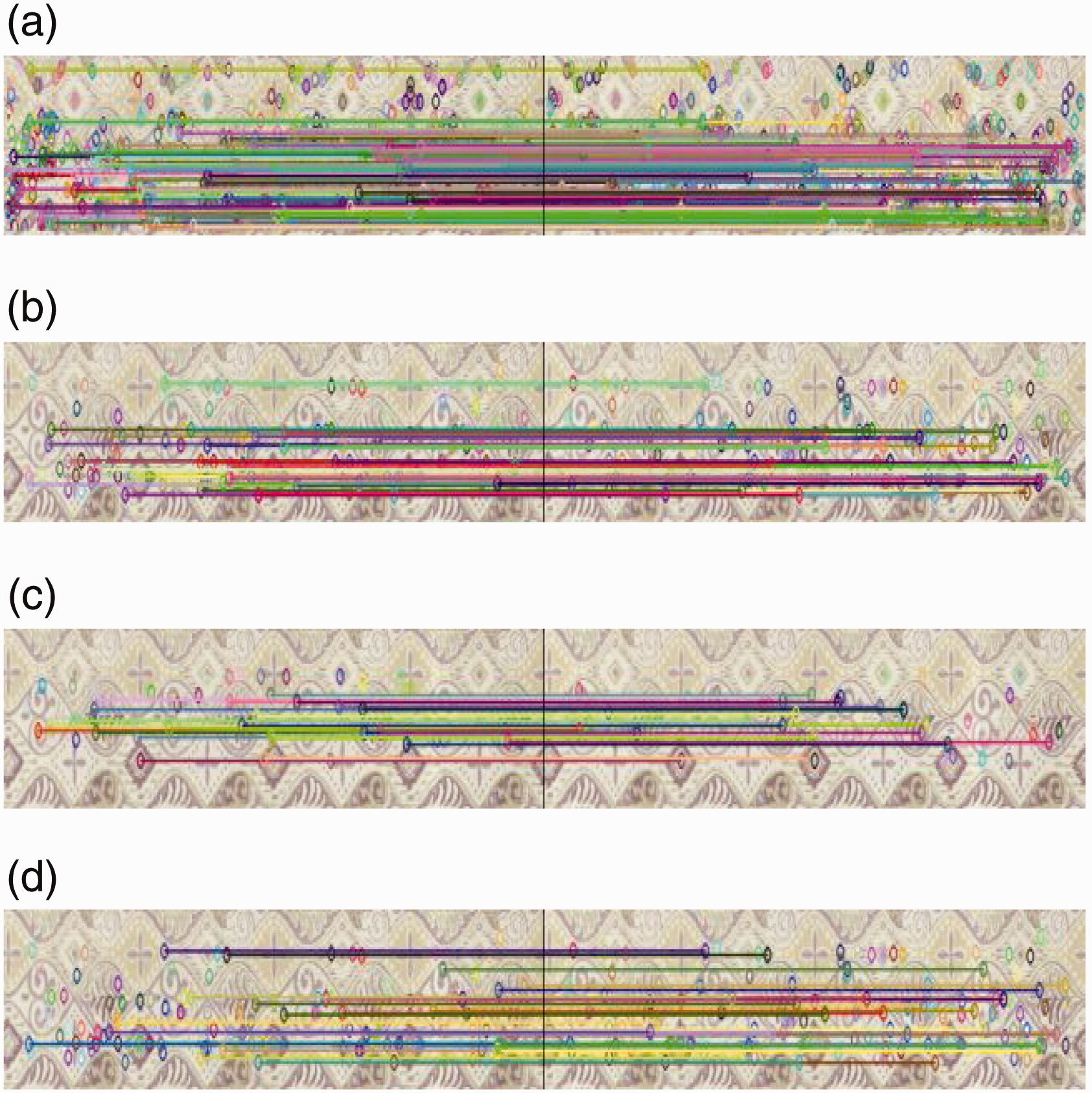

Firstly, the improved image registration algorithm described in the Image registration with cyclic elements section of this paper is tested. Since image registration based on feature point matching is faster than other registration methods, the following three traditional feature point-based image registration methods are selected for comparison with the method in this paper. The SIFT, SURF, and binary robust invariant scalable keypoints (BRISK) algorithms are each used to extract feature points from fabric images. The Euclidean distance is used to match the feature points, and high-quality matching pairs are selected according to the matching score. Figures 7–9 show image registration results for three types of fabrics.

The first group of results compared with traditional algorithms in this article: (a) scale-invariant feature transform algorithm matching; (b) speeded-up robust features algorithm matching; (c) binary robust invariant scalable keypoints algorithm matching; and (d) algorithm matching in this article.

Comparing the results in Figures 7–9 shows that the traditional image registration methods based on feature point matching are limited by their local feature description and cannot perform accurate registration when processing images with duplicate patterns. The image registration method proposed in this paper, which matches the feature points of an image in blocks according to the cycle of elements in the image, effectively improves the accuracy of feature point matching.

The second group of results compared with traditional algorithms in this article: (a) scale-invariant feature transform algorithm matching; (b) speeded-up robust features algorithm matching; (c) binary robust invariant scalable keypoints algorithm matching; and (d) algorithm matching in this article.

The third group of results compared with traditional algorithms in this article: (a) scale-invariant feature transform algorithm matching; (b) speeded-up robust features algorithm matching; (c) binary robust invariant scalable keypoints algorithm matching; and (d) algorithm matching in this article.

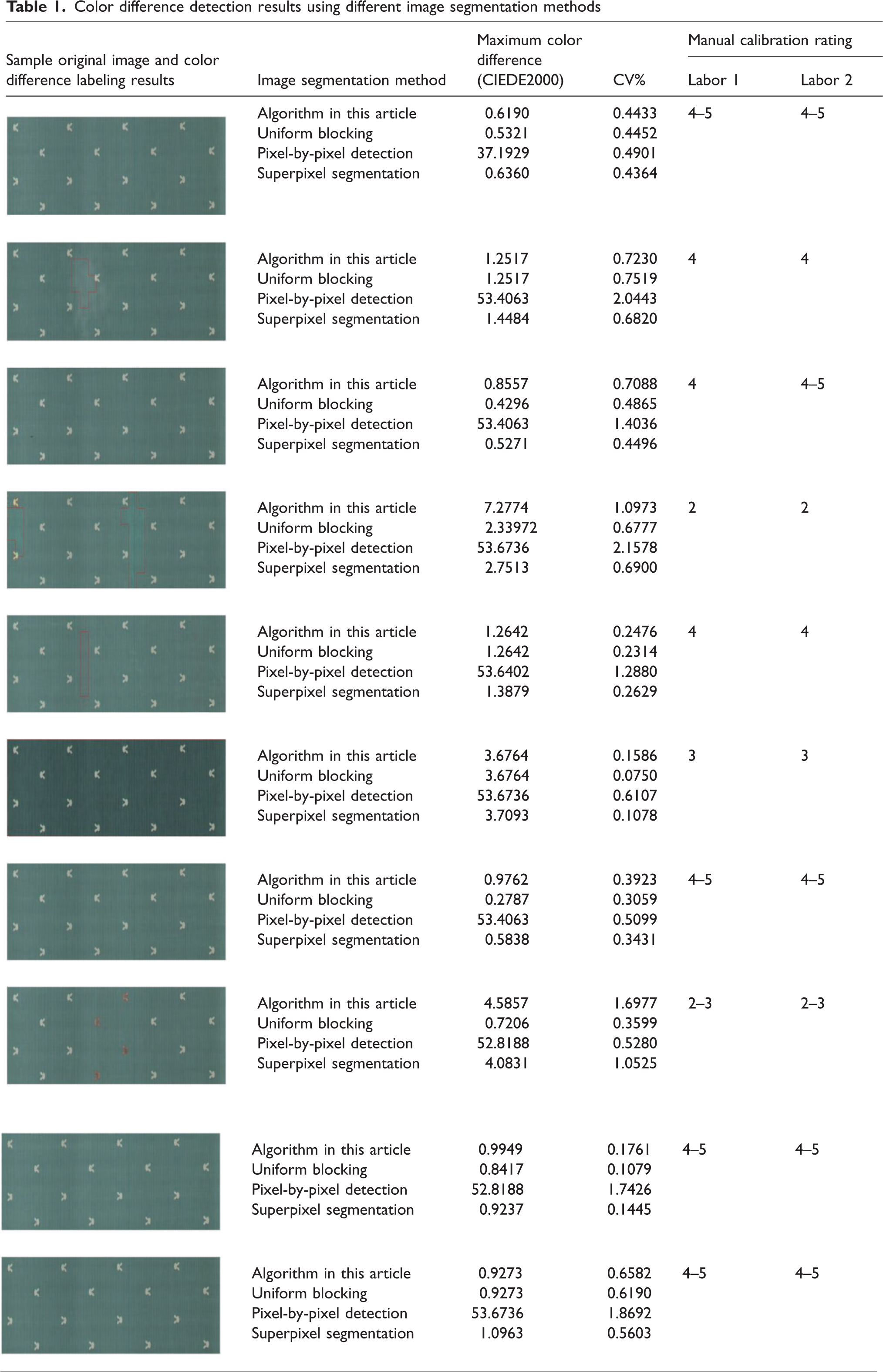

Two people were selected to assess the samples according to the methods specified in ISO 105-A02. The standard divides the color difference into nine grades from 1 to 5, including half grades, with 5 being the best and 1 being the worst.16 The image segmentation method described in the Image segmentation of printed fabrics section of this paper, uniform blocking, superpixel segmentation,17 and pixel-by-pixel detection are each combined with the image registration method in this paper. We use these four methods to detect color differences in fabric sample images and compare them with the manual verification results.

We select a flawless fabric image as the standard image, segment it, extract the color information of each area after segmentation, and save it. The segmentation paths extracted from the standard fabric image are registered to the image to be detected using the image registration method described in this paper. We extract the color information of each region in the image to be detected according to the segmentation path. The CIEDE2000 color difference formula is used to calculate the color difference between the corresponding areas of the standard image and the image to be detected. We label areas with a color difference greater than 1 NBS. The detection results for one type of printed fabric image are shown in Table 1.
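The comparison and labeling step can be sketched as follows. Two simplifications are assumed and should be read as such: the color information of a region is taken to be its mean L*a*b* value, and the plain CIELAB Euclidean difference stands in for CIEDE2000 to keep the example short. Any function with the same signature can be passed as `delta_e`.

```python
import math

def mean_lab(pixels):
    """Mean (L*, a*, b*) of a list of pixel triples (assumed region descriptor)."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))

def delta_e_ab(lab1, lab2):
    """Stand-in color difference: Euclidean distance in L*a*b* space."""
    return math.dist(lab1, lab2)

def flag_regions(std_regions, test_regions, threshold=1.0, delta_e=delta_e_ab):
    """Return {region key: color difference} for regions above the threshold."""
    flagged = {}
    for key, std_pixels in std_regions.items():
        if key not in test_regions:
            continue  # region missing after registration; skipped in this sketch
        difference = delta_e(mean_lab(std_pixels), mean_lab(test_regions[key]))
        if difference > threshold:
            flagged[key] = difference
    return flagged

standard = {"a": [(50, 0, 0), (50, 0, 0)], "b": [(60, 10, 10)]}
detected = {"a": [(50.2, 0.1, 0), (50.2, 0.1, 0)], "b": [(63, 10, 14)]}
flagged = flag_regions(standard, detected)
```

Because the comparison is made per region rather than per pixel, a registration error of a few pixels changes a region mean only slightly, which is the motivation given earlier for segmenting before comparing.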

Color difference detection results using different image segmentation methods

The maximum color difference values in Table 1 reflect the color difference values of the sub images with the largest color difference among the sub images of the fabric image. CV% reflects the statistical dispersion of the color difference value.
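For reference, CV% is the standard deviation expressed as a percentage of the mean. The sketch below assumes the population standard deviation, since the text does not say which variant is used; the sample values are made up for illustration.

```python
import statistics

def coefficient_of_variation(values):
    """CV% = standard deviation / mean * 100 (population form assumed)."""
    return statistics.pstdev(values) / statistics.mean(values) * 100.0

# Hypothetical color difference values for the sub images of one fabric image.
cv_percent = coefficient_of_variation([1.0, 2.0, 3.0])
```

A low CV% indicates that the color difference is spread evenly over the sub images, while a high value points to localized color defects.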

The analysis results show that uniform partitioning ignores the details of the color edges of the fabric pattern, so the color difference calculated for individual partitions near pattern edges differs considerably from the manual calibration. The pixel-by-pixel detection method depends heavily on registration accuracy, which leads to excessive error, and it cannot reasonably grade the color difference of printed fabrics. The superpixel method requires the number of blocks to be set manually for each type of pattern, and its segmentation results are influenced by the initial seed points, resulting in high randomness. The detection results obtained using the image segmentation method proposed in this article are closest to the results of manual calibration.

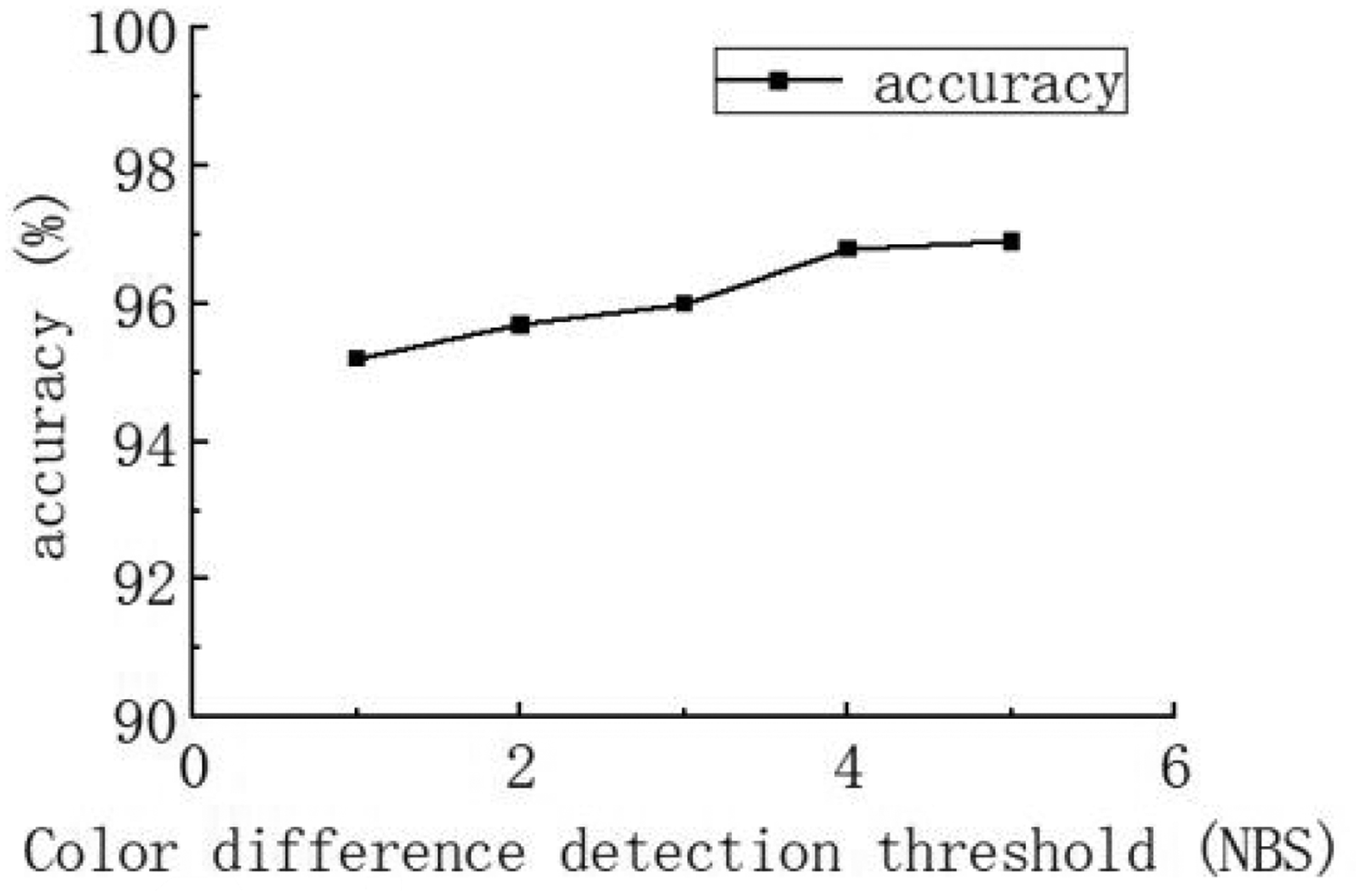

In actual production, different types of fabrics or production standards may have different color difference tolerances. Manually adjusting the threshold for color difference detection based on different color difference tolerances can adapt to different production needs. The change in threshold for color difference detection will affect the accuracy of color difference detection. As shown in Figure 10, the larger the color difference detection threshold setting, the higher the detection accuracy.

The influence of the color difference detection threshold on detection accuracy.

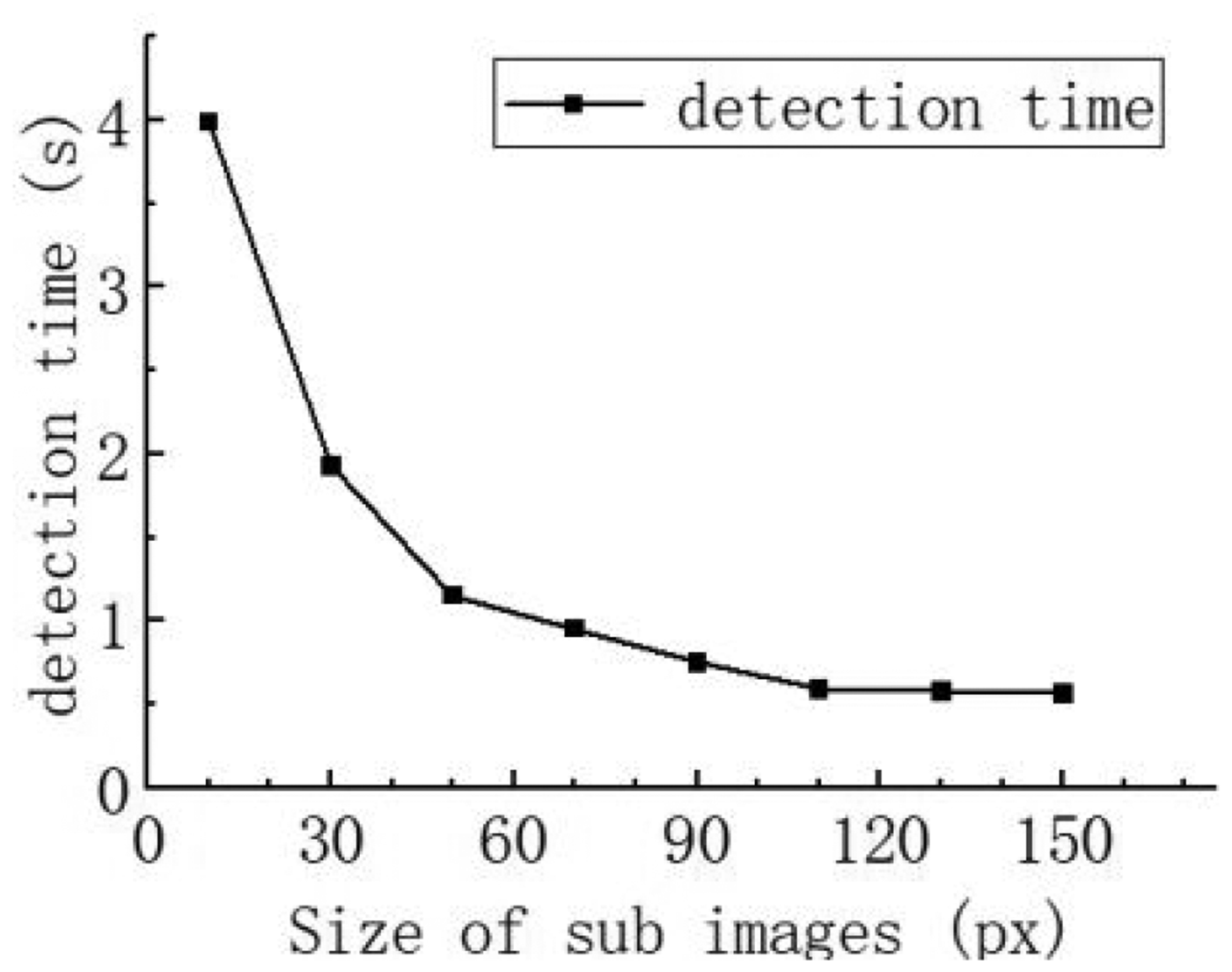

In addition, different products also have different tolerance limits for the size of color difference defect areas. Adjusting the size of sub images for image segmentation in the standard image can achieve the goal of controlling the size of color difference defect areas. The smaller the size of the sub image, the more blocks the image has, and the smaller the size of the detected color difference area. The number of image blocks can affect the color difference detection rate. As shown in Figure 11, as the size of the sub images increases, the required detection time gradually decreases.

The influence of sub image size on detection time.

Conclusion

To realize online color difference detection of printed fabrics, a method combining the Canny edge detection algorithm with uniform grid partitioning is proposed to segment the printed fabric standard image. We add the location information of the cyclic element in which each feature point is located to the SURF description algorithm by using the DMF. The image segmentation path extracted from the standard image is registered to the image to be detected. Finally, the color information of each area is extracted and the color difference is calculated. The results show that the image segmentation method proposed in this paper is more reasonable than the superpixel segmentation algorithm and the uniform segmentation method, and effectively improves the color difference detection accuracy of printed fabrics. Adding the location information of the cyclic elements in which the feature points are located to the SURF algorithm solves the problem that SURF feature points cannot register duplicate pattern images. With the manual rating used as the standard, the experimental results show that the online detection accuracy of the color difference of printed fabric can reach 95%.

The image segmentation method described in this paper is sensitive to the color edge information in the image. This algorithm has advantages in processing fabric images with clear color edges. However, when detecting fabric images with gradient colors, it is difficult to accurately identify the pattern outline, and the segmentation results are not ideal. The image registration method mentioned in this paper requires the driving system to control the fabric to pass through the image acquisition box at a constant speed and in a stable state. In practice, the online color difference detection method proposed in this paper has some reference value.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of China Youth Program (61701384), the Key Scientific Research Program of Shaanxi Provincial Department of Education (20JS051), the Project of Kegiag Textile Industry Innovation Research Institute of Xi'an Polytechnic University (19KOYB03), the Shaanxi Province Natural Science Basic Research Program (2023JCYB288), the Natural Science Basic Research Program of Shaanxi (Program No.2023-JC-YB-288), and the Open Project of Hubei Key Laboratory of Digital Textile Equipment(Project No.KDTL2020005).