Abstract

With the development of e-shopping, there has been significant growth in online clothing purchases. However, virtual clothing fit evaluation is still under-researched. In the literature, the thickness of the air layer between the human body and clothes is a dominant geometric indicator for evaluating clothing fit. However, such an approach has only been applied to stationary positions of the mannequin/human body. Physical indicators, such as the pressure/tension of a virtual garment fitted on a virtual body in continuous motion, have also been proposed for clothing fit evaluation. Neither geometric nor physical evaluations consider the interaction of the garment with the body, e.g., the sliding of the garment along the human body. In this study, a new framework was proposed to automatically determine the dynamic air gap thickness. First, the dynamic dressed character sequence was simulated in three-dimensional (3D) clothing software by importing the body parameters, cloth parameters, and a walking motion. Second, a cost function was defined to convert the garment in the previous frame to the local coordinate of the next frame, and the dynamic air gap thickness between clothes and the human body was determined. Third, a new metric, the 3D garment vector field, was proposed to represent the movement flow of the dynamic virtual garment, whose directional changes are calculated by cosine similarity. Experimental results show that our method is more sensitive to small changes in air gap thickness than state-of-the-art methods, allowing it to evaluate clothing fit in a virtual environment more effectively.

Introduction

Traditional clothing fit evaluation requires the consumer to wear the real garment and walk a few steps to determine whether it is a good fit. However, such an evaluation method cannot be used for online shopping. In order to virtually analyze garments in three dimensions (3D), garment computer-aided design (CAD) is used to simulate realistic clothing. 1 A set of established CAD platforms, such as CLO 3D and OptiTex, is available in the clothing industry for virtual garment try-on. The main challenge of evaluating dynamic clothing fit is that it depends on a number of factors, including body–clothes proportions, fabric material properties, garment designs, and human motion. Since all the aforementioned factors affect the interaction between clothing and the human body, it is difficult to find the best dynamic clothing fit evaluation method. Moreover, the interaction between the garment and the human body has not been studied in the literature. Body–clothes interaction consists of all the physical relationships such as sliding and collision. One of the aims of this study is to quantitatively represent garment sliding during movement. To the best of our knowledge, this work is the first attempt to tackle this problem.

The methods of fit evaluation via garment CAD can be classified into two categories. The first one is the geometrically based method. In geometry processing, the surface of the virtual garment and the human body are represented by a set of triangles or quads. 2 For each of the vertices of the garment, the closest point on the human body is found. The distances between them are then used to calculate the signed distance field, 3 which is called the air gap thickness. 4 The air gap thickness between the virtual garment and the human body is then calculated to represent their spatial relationship. The slice-based method is another popular approach to determine this air gap thickness.5,6 A set of parallel planes cuts the meshes of the garment and the human body into a set of cross-sectional pairs, which are considered independently when the garment–body distance is calculated. However, these methods can only be used in stationary positions of the mannequin/human body.4,7
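The closest-point idea behind the geometrically based methods can be illustrated with a minimal sketch. It is not the implementation of any cited work: it assumes the body surface is approximated by its vertices (a real implementation would query the closest point on the triangles), and the function names `closest_distance` and `mean_shortest_distance` are hypothetical.

```python
import math

def closest_distance(point, body_points):
    """Distance from one garment vertex to the nearest body vertex.

    Assumption: the body surface is approximated by its vertices only.
    """
    return min(math.dist(point, b) for b in body_points)

def mean_shortest_distance(garment_points, body_points):
    """Mean closest-point distance, used here as a static air gap estimate."""
    total = sum(closest_distance(g, body_points) for g in garment_points)
    return total / len(garment_points)

# Toy data (hypothetical coordinates, arbitrary units).
garment = [(0.0, 0.0, 2.0), (1.0, 0.0, 1.0)]
body = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
static_gap = mean_shortest_distance(garment, body)
```

Because the nearest body point is recomputed from scratch for every pose, the pairing between garment and body points is not fixed over time, which is one reason this kind of measure is restricted to stationary poses.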

The second category is physical methods, which represent clothing fit using a tension/pressure map and display the clothing fit intuitively.8,9 A greater tension/pressure indicates that more relaxation should be added during garment design. Liu et al. 10 further extended these methods to dynamic clothing fit evaluation. However, current physically based approaches cannot be used to describe the spatial relationship between the garment and the human body. Moreover, neither the existing geometrically based nor physically based methods can represent the sliding of the virtual garment along the human body during movement. Quantitatively understanding the interaction and dynamic air gap thickness between the garment and the human body facilitates the development of a new criterion of dynamic fit evaluation.

3D scanning rapidly captures the 3D geometry/shape of a subject. It has been successfully applied to 3D human body reconstruction11–13 and automatic body measurements. 14 Frackiewicz-Kaczmarek 15 and Yu et al. 16 applied 3D scanning to explore the air gap thickness between the garment and the mannequin. As with virtual garments, the slice-based method 13 was used to convert the triangle mesh into a set of cross sections. For the cross sections cut by the same plane on the garment and body, the distance field is calculated separately to represent the local air gap thickness. 17 However, this method cannot be used in dynamic clothing fit evaluation because the position of the garment shifts during body movement. There is an individual 3D coordinate for a garment in each frame, making it impossible to evaluate the scalar and directional changes for garments in two different coordinate systems computed from two different frames. Mert et al. 18 and Nam et al. 19 explored the air gap thickness by analyzing the separate air gap thickness for different postures of the same person. However, the cross sections need to be calculated separately for each frame, which cannot maintain a consistent garment–body relationship. Moreover, the air gap information computed from these discrete postures is not sufficient to evaluate clothing fit, as the transitions between different postures are also important. A common 3D coordinate, which means a shared 3D coordinate for multiple individual coordinates, is used to tackle this. The data in each individual coordinate can be mapped to the common 3D coordinate for direct data analysis such as comparison. With the common 3D coordinate, we propose a concept, the 3D Garment Vector Field (3DGVF), to quantitatively represent the scalar and directional changes of the dynamic virtual garment.

3D scanning has become widely used with the development of consumer depth cameras such as Microsoft Kinect. 20 The garment capture system proposed here is based on an RGB-D (i.e. color and depth) camera. 12 However, the work of Kim et al. 21 and Han et al. 22 suggests that 3D scanning may not be reliable. The raw data captured from the 3D scanner creates a point cloud and its accuracy highly depends on the quality of the devices employed. 20 Post-processing of the scanned data such as de-noising, 3D surface reconstruction, hole filling, simplification, and smoothing 23 may further reduce the accuracy of the data. To date, researchers have not been able to reach a consensus on whether 3D scanning is fully reliable. Since the air gap thickness depends on the alignment of the scanned garment and the scanned human body, it is difficult to achieve good accuracy by directly using 3D scanning approaches. 3D scanning has further limitations for modeling the air gap between the body and clothes: (1) the capture of dynamic clothes is still under-researched; (2) no proper representation exists for modeling the air gap from scanned data; and (3) garment capture systems are expensive.

In contrast to 3D scanning, physical simulation does not experience the aforementioned issues and is cheaper. Therefore, in this research, instead of using 3D scanning techniques, commercial virtual try-on software is used to simulate the dynamic movement of the garment. 3D clothing software has been validated to be precise for applications such as virtual garment fitting for the fashion industry24,25 and has been demonstrated to be reliable for 3D garment simulation.18,26 To the best of our knowledge, there has been no explicit simulation-based analysis of the sliding of virtual garments on a human body undergoing movement. Our proposed 3DGVF is the first method to attempt to quantitatively analyze this sliding of a virtual garment.

The rest of this paper is structured as follows. In Methodology, we describe how we adopt 3D clothing software to simulate dynamic garments during movement; calculate the correspondences between the garment and human body; determine the common 3D coordinates for a dynamic garment; and evaluate the dynamic air gap thickness and garment sliding between the virtual garment and the human body. We then demonstrate the results of the proposed method in Results and analyze them in Discussion. Finally, we conclude the paper in Conclusions and future work.

Methodology

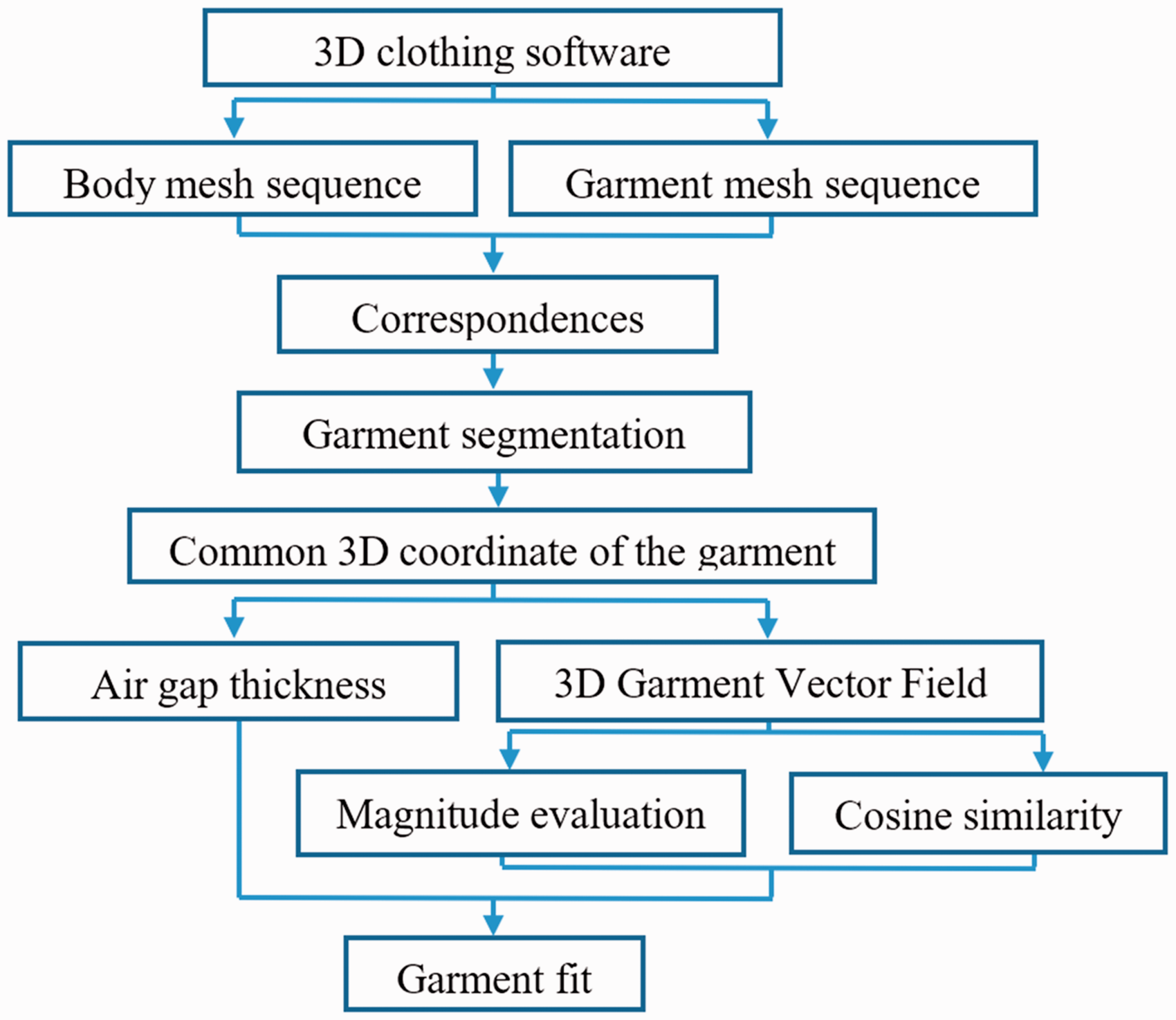

This section presents the methodology of the proposed system. Figure 1 illustrates an overview of the method.

Figure 1. Overview of the method: (a) 3D clothing software is first used to simulate the dynamic garment and to export (b) the body mesh sequence, and (c) the garment mesh sequence; (d) the correspondences between the garment and the body are automatically calculated; (e) the garment is automatically segmented; (f) a new method is proposed to build a common 3D coordinate for the garments in frames; (g) based on (f), the dynamic air gap thickness is determined, and (h) the 3D garment vector field (3DGVF) is presented to represent the garment sliding. (i) Magnitude evaluation and (j) cosine similarity are used to evaluate the scalar and directional changes of 3DGVF; and (k) the indicator values are compared to explore the fit.

3D clothing software

3D clothing software has been developed for modeling the body and the garment in virtual environments. Such software normally includes three basic modules: 1) a mannequin module to model a human body, which is built using measurements from a real body; 2) a fabric module to simulate fabric properties; and 3) a pattern sewing module to virtually sew 2D patterns together to generate a 3D garment mesh. These modules permit the complete simulation of the process of real garment making. In this study, Clo3D (http://www.clo3d.com) was used to implement virtual garment try-on. This system has been validated as having an accuracy rate of up to 95% based on measurements of the stretch, bending, and other physical properties. 28 Real fabrics used in the fashion industry can be converted into virtual fabrics in Clo3D. Motion data can be imported to simulate human movement.

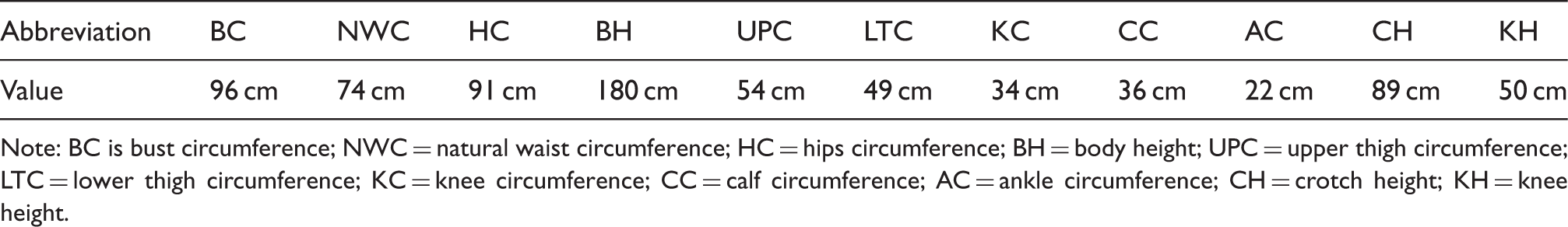

Avatar and walking simulation

In this study, the body dimensions of a male subject were measured according to ISO 7250-1:2017, as shown in Table 1. These body dimensions were imported into the 3D clothing software to model a virtual avatar. A parametric description of body and arm postures is derived by decomposing the body into serially linked segments according to human anatomic structures. A serial-link manipulator is formed, where every two segments are connected by a joint with one or more rotational degrees of freedom. Subsequently, body postures are described by a relative configuration of the segments, expressed as joint angles. There are 14 joints on the skeleton in this study. Each joint is associated with a local rotation matrix. In addition, the root joint has a translation matrix, which is used to move the skeleton in the global coordinate. Body motion is calculated by multiplying the local joint rotation matrix with the root transformation matrix. A walking motion is imported into the 3D clothing software to simulate a walking human via skeleton-driven animation technology. 27
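The serial-link description above can be sketched as a small forward-kinematics routine. This is an illustrative sketch only, not the skeleton used in the 3D clothing software: it assumes a single planar chain whose joints rotate about the z-axis and whose bones extend along the local x-axis, and the helper names (`mat_mul`, `rot_z`, `translate`, `joint_positions`) are hypothetical.

```python
import math

def mat_mul(a, b):
    """Multiply two 4x4 homogeneous matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def rot_z(theta):
    """Local joint rotation about the z-axis (assumed rotation axis)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0, 0], [s, c, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]

def translate(x, y, z):
    """Homogeneous translation matrix."""
    return [[1, 0, 0, x], [0, 1, 0, y], [0, 0, 1, z], [0, 0, 0, 1]]

def joint_positions(root_translation, joint_angles, bone_lengths):
    """Global joint positions of a serial chain.

    The root carries the only translation into the global coordinate;
    every joint contributes a local rotation, and each bone is assumed
    to extend along its local x-axis.
    """
    world = translate(*root_translation)
    positions = [tuple(world[i][3] for i in range(3))]
    for theta, length in zip(joint_angles, bone_lengths):
        world = mat_mul(mat_mul(world, rot_z(theta)), translate(length, 0, 0))
        positions.append(tuple(world[i][3] for i in range(3)))
    return positions

# Two bones of unit length: bend by +90 degrees, then by -90 degrees.
chain = joint_positions((0.0, 0.0, 0.0), [math.pi / 2, -math.pi / 2], [1.0, 1.0])
```

In this toy chain, the second joint cancels the first rotation, so the last bone points along the global x-axis again while starting from the displaced end of the first bone.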

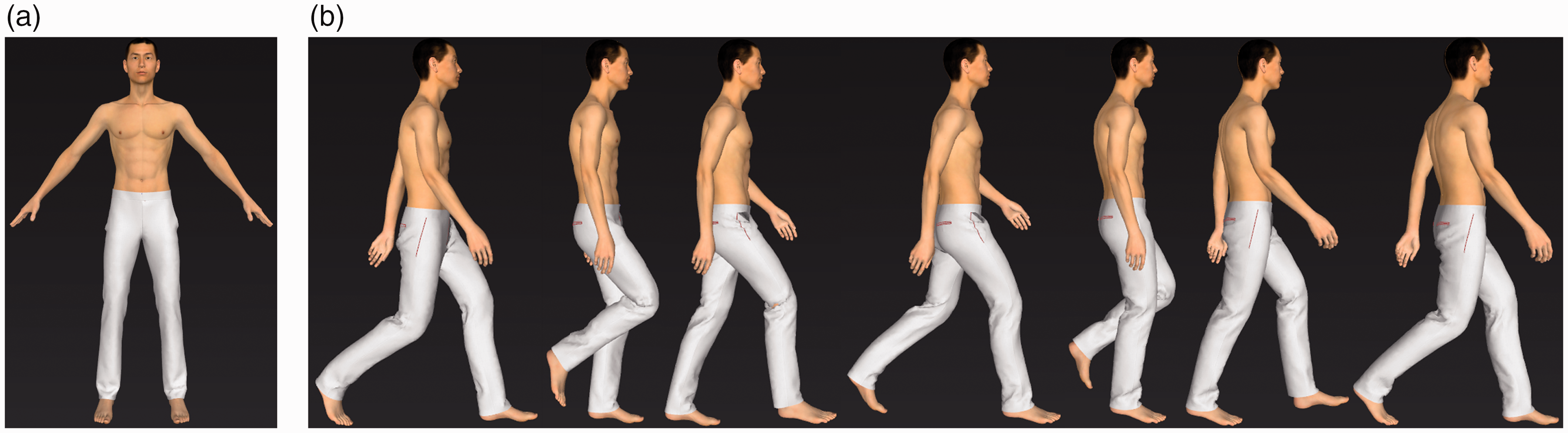

However, the effects of different motions on garment fit are not the purpose of this paper: the aim here is to present a novel method to determine the dynamic air gap thickness and propose a new metric to represent garment sliding. Hence, the gait pattern was obtained from Clo3D. The surface of the garment is deformed continuously, driven by the surface of the human body via physically based simulation. Figure 2 shows a cyclic walking motion from the 3D clothing software consisting of 37 frames, which is equivalent to 1.2 s. In computer animation, a rest pose, which is typically a T-pose or an A-pose, is necessary for rigging. 3D rigging is the process of creating a skeleton for a 3D model, which is used for animating the model. As the geometry of the garment is calculated based on each frame, the simulation starts from the rest pose to obtain a well-simulated garment. The rest pose can also be used for establishing dense correspondences between the body and the garment, which will be introduced in the following section.

Figure 2. (a) A snapshot of the rest pose; (b) snapshots of seven sampled frames during a walking cycle.

Table 1. Physical dimensions of a male

Note: BC = bust circumference; NWC = natural waist circumference; HC = hips circumference; BH = body height; UPC = upper thigh circumference; LTC = lower thigh circumference; KC = knee circumference; CC = calf circumference; AC = ankle circumference; CH = crotch height; KH = knee height.

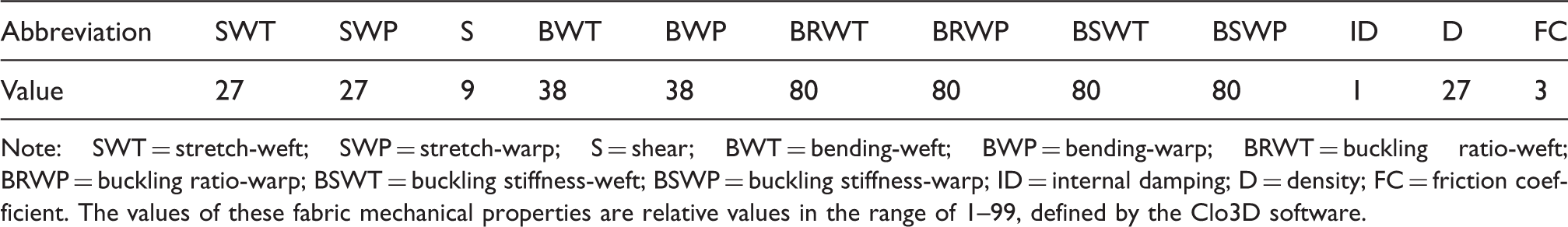

Fabrics and garments

Table 2. Values of the fabric's mechanical properties

Note: SWT = stretch-weft; SWP = stretch-warp; S = shear; BWT = bending-weft; BWP = bending-warp; BRWT = buckling ratio-weft; BRWP = buckling ratio-warp; BSWT = buckling stiffness-weft; BSWP = buckling stiffness-warp; ID = internal damping; D = density; FC = friction coefficient. The values of these fabric mechanical properties are relative values in the range of 1–99, defined by the Clo3D software.

Pants are considered in this research as they are one of the most difficult types of clothing to evaluate. 29

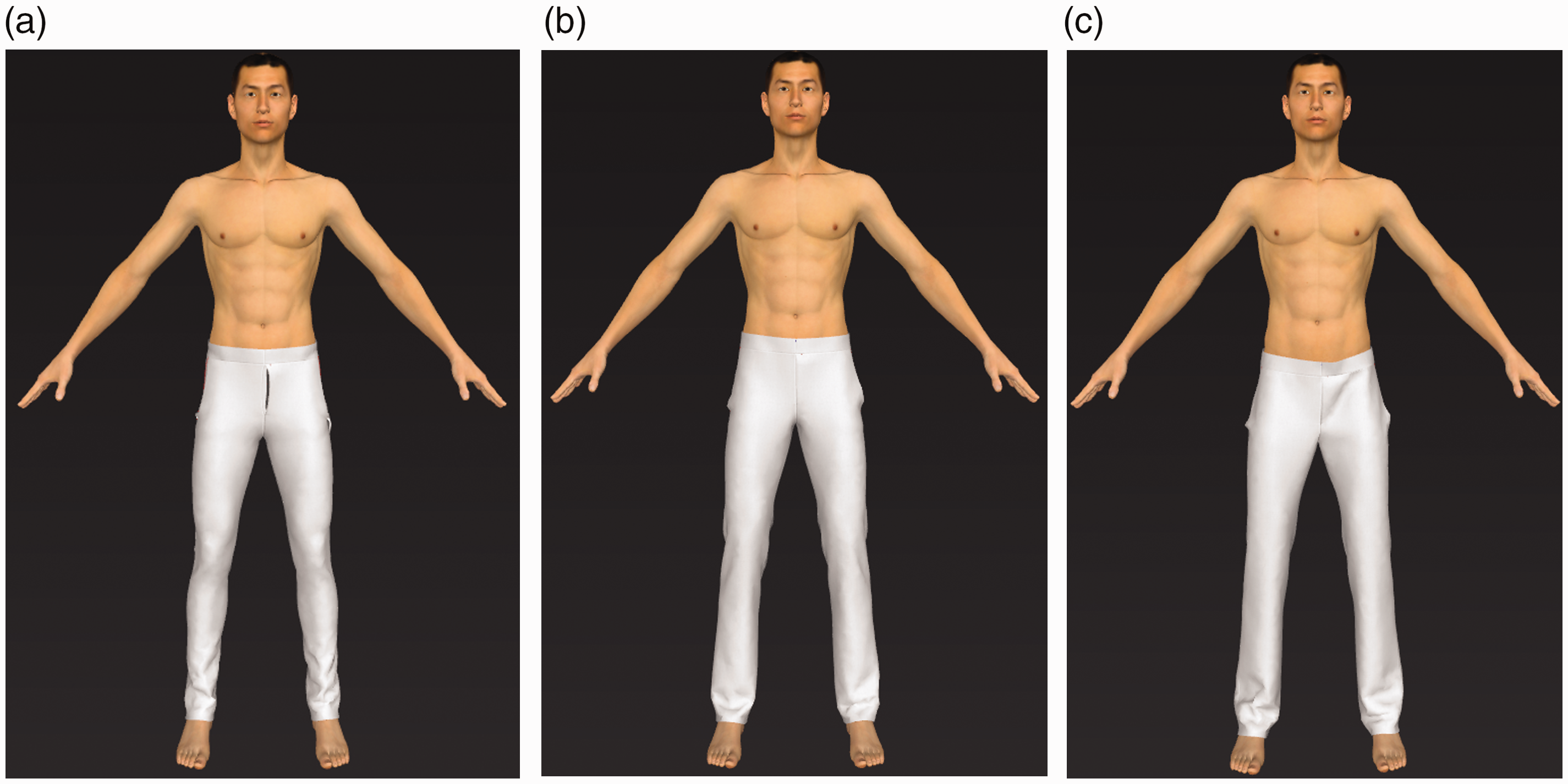

Using an avatar, three pairs of straight-leg pants including tight-fit, good-fit, and loose-fit were designed by a fashion expert, as shown in Figure 3. Psikuta et al.

4

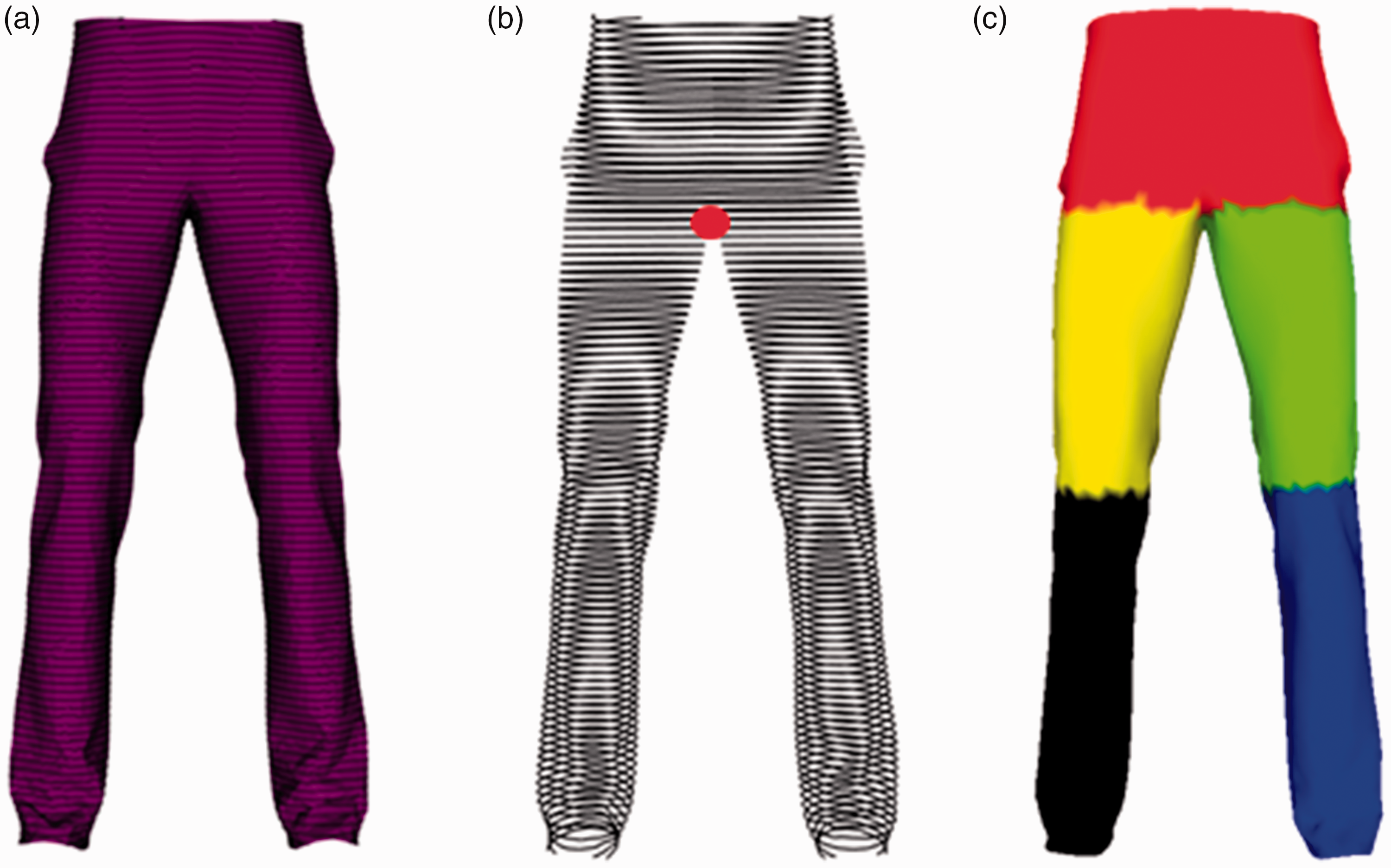

manually segmented the mannequin body into body parts to calculate the air gap thickness separately. In the current research, the segmentation process was fully automatic, and exclusively carried out on the pants rather than the body. During the stage of establishing correspondences, each garment point (a) Tight-fit pants; (b) good-fit pants; (c) loose-fit pants. (a) Slicing the pants; (b) determining the crotch point; and (c) segmenting the pants. (a) Correspondences between the garment and the skeleton (20% sampling) and (b) correspondences between the garment and the human body (20% sampling).

Dynamic air gap thickness and garment sliding representation

According to the literature,4–6 air gap thickness between the garment and the human body is an important indicator to quantitatively evaluate the garment fit. However, the slice-based methods of determining the air gap thickness cannot be used in a dynamic clothing fit evaluation due to the inconsistency in coordinate systems across different frames. Moreover, garment sliding along the human body during movement has not been sufficiently studied. In the area of garment transfer, Meng et al. 30 and Brouet et al. 31 indicated that using the closest body skin points as the correspondences of the garment is not reliable. This is because the closest point can change when the garment slides along the body of the target character, which changes the correspondence. We tackle this problem by proposing a method to establish dense correspondences between the garment and the human body; the 3D common garment coordinate for representing garment sliding is then determined based on these correspondences. When building the 3D common garment coordinate, the local relationship between the virtual garment and the human body is preserved. Finally, a novel method is proposed to determine the dynamic air gap thickness, and the 3DGVF is proposed to represent the garment sliding between the garment and the human body during movement.

Correspondences

To calculate the air gap thickness, reference points between the garment and the human body should first be determined. These reference points are usually sparse and manually marked to establish cross sections at desired positions such as the waist. 6 In this study, the correspondences are referred to as dense reference points, representing the closest points between the garment and the human body. The following method to determine the correspondences is proposed.

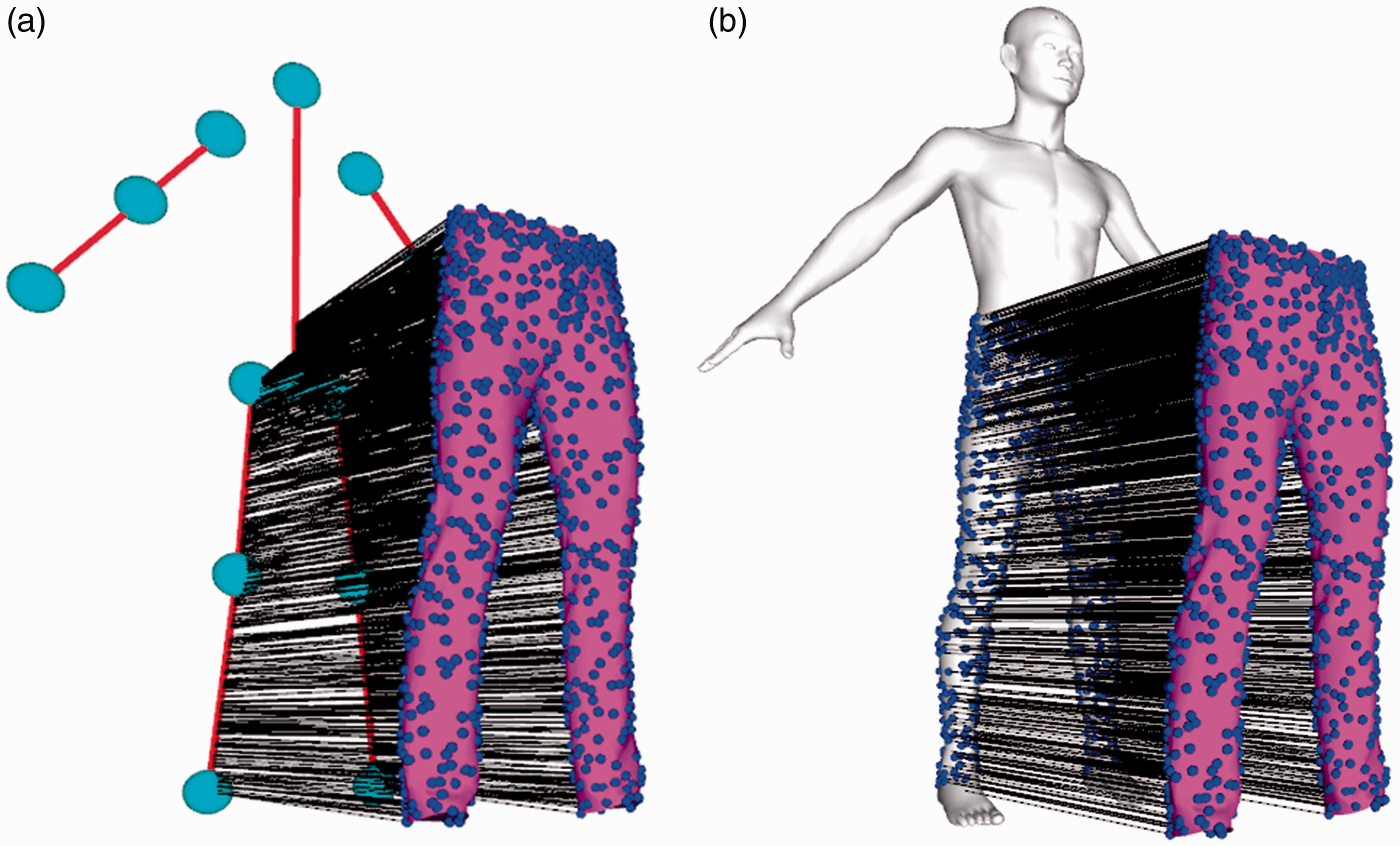

The correspondences are calculated in the rest frame. Figure 5 illustrates the resulting correspondences via the proposed method. The full set of correspondences is too dense to be clearly identified, so a sampling rate of 20% is used for visualization. For each garment vertex

Garment mesh of rest pose =

Body mesh of rest pose =

Bones of rest pose =

1: For each vertex

2:

3: Calculate the closest bone

4: Intersection = [calculate the intersection point between

5: Segment =

6: For each triangle

7: Correspondence = [calculate the intersection point between the segment and the surface of the human body]
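The surviving steps of the listing above (closest bone, intersection point, segment, intersection with the body surface) can be illustrated with the following sketch. It is one possible reading, not the authors' implementation: it assumes that each bone is a non-degenerate straight segment, that the segment runs from the foot of the garment vertex on its closest bone to the garment vertex itself, and that the segment-triangle test is the standard Möller-Trumbore routine. All function names are hypothetical.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def closest_point_on_bone(v, bone):
    """Foot of garment vertex v on a bone given as a pair of end points."""
    a, b = bone
    ab = sub(b, a)
    t = max(0.0, min(1.0, dot(sub(v, a), ab) / dot(ab, ab)))
    return (a[0] + t * ab[0], a[1] + t * ab[1], a[2] + t * ab[2])

def segment_triangle_hit(p, q, tri, eps=1e-9):
    """Moller-Trumbore test restricted to the segment p -> q (0 <= t <= 1)."""
    v0, v1, v2 = tri
    d = sub(q, p)
    e1, e2 = sub(v1, v0), sub(v2, v0)
    h = cross(d, e2)
    det = dot(e1, h)
    if abs(det) < eps:          # segment parallel to the triangle plane
        return None
    inv = 1.0 / det
    s = sub(p, v0)
    u = dot(s, h) * inv
    if u < 0.0 or u > 1.0:
        return None
    qv = cross(s, e1)
    w = dot(d, qv) * inv
    if w < 0.0 or u + w > 1.0:
        return None
    t = dot(e2, qv) * inv
    if t < 0.0 or t > 1.0:      # hit lies outside the segment
        return None
    return (p[0] + t * d[0], p[1] + t * d[1], p[2] + t * d[2])

def correspondence(v, bones, body_triangles):
    """Body point paired with garment vertex v, or None if nothing is hit."""
    foot = min((closest_point_on_bone(v, b) for b in bones),
               key=lambda p: math.dist(p, v))
    hits = [segment_triangle_hit(foot, v, tri) for tri in body_triangles]
    hits = [hit for hit in hits if hit is not None]
    return min(hits, key=lambda hit: math.dist(hit, v)) if hits else None

# Toy scene: two vertical bones and one body triangle in the plane x = 1.
bones = [((0.0, 0.0, 0.0), (0.0, 0.0, 2.0)), ((5.0, 0.0, 0.0), (5.0, 0.0, 2.0))]
skin = [((1.0, -1.0, 0.0), (1.0, 1.0, 0.0), (1.0, 0.0, 3.0))]
paired = correspondence((2.0, 0.0, 1.0), bones, skin)
```

Because the pairing is driven by the bone rather than by the instantaneous nearest skin point, it can be computed once in the rest pose and reused for later frames, which matches the motivation given in the text.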

Dynamic air gap thickness

Air gap thickness between the garment and the human body is a conventional dominant indicator to evaluate clothing fit. However, existing methods of determining air gap thickness are limited to stationary poses of the human body/mannequin.4,7 The mean shortest distance fails to calculate the dynamic air gap thickness.30,31 The mean correspondence distance is used to calculate the mean air gap thickness for the rest pose frame, as shown in Equation 2.

(a) one garment vertex

The projection of V on

The following equation is then used to calculate the air gap thickness of the dynamic frames:

To calculate the air gap thickness of each dynamic frame, each vector between the paired correspondences in the dynamic frame is projected onto the corresponding vector converted from the rest frame. This will be further explained in the next section.
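The two quantities described in this section can be sketched as follows. This is an interpretation of the prose only, since it does not reproduce the paper's numbered equations: the rest-frame air gap is taken as the mean length of the garment-to-correspondence vectors, and the dynamic air gap as the mean scalar projection of each frame-j vector onto the matching vector converted from the rest frame. The names `mean_rest_air_gap`, `scalar_projection`, and `mean_dynamic_air_gap` are hypothetical.

```python
import math

def mean_rest_air_gap(garment_rest, body_rest):
    """Mean distance between paired garment and body points in the rest frame."""
    total = sum(math.dist(g, c) for g, c in zip(garment_rest, body_rest))
    return total / len(garment_rest)

def scalar_projection(v, reference):
    """Signed length of v along the direction of a non-zero reference vector."""
    length = math.sqrt(sum(r * r for r in reference))
    return sum(a * b for a, b in zip(v, reference)) / length

def mean_dynamic_air_gap(garment_j, body_j, reference_vectors):
    """Mean projection of frame-j gap vectors onto the converted rest vectors.

    Assumption: reference_vectors[i] is the garment-minus-body vector of the
    i-th pair after conversion from the rest frame into frame j.
    """
    total = 0.0
    for g, c, ref in zip(garment_j, body_j, reference_vectors):
        gap_vector = tuple(gi - ci for gi, ci in zip(g, c))
        total += scalar_projection(gap_vector, ref)
    return total / len(garment_j)

# Toy data (hypothetical coordinates, arbitrary units).
rest_gap = mean_rest_air_gap([(0.0, 0.0, 2.0), (3.0, 4.0, 0.0)],
                             [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)])
dynamic_gap = mean_dynamic_air_gap([(1.0, 1.0, 0.0), (0.0, 0.0, 3.0)],
                                   [(0.0, 0.0, 0.0), (0.0, 0.0, 1.0)],
                                   [(2.0, 0.0, 0.0), (0.0, 0.0, 1.0)])
```

Projecting onto the converted rest-frame direction separates the component of garment displacement that changes the gap from the tangential component, which is the part attributed to sliding.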

3D garment vector field

The dynamic air gap thickness between the garment and the human body is not sufficient to describe garment movement. Garment sliding along the human body has not been studied to date. Compared with traditional stationary garment fit research, dynamic garment fit research faces the following challenges:

(1) The garment slides along the surface of the human body during movement. No existing representation can be used to describe this action.

(2) The vertex positions of the garment in different frames are in different coordinate systems. A common 3D coordinate system of garments in different frames is necessary to investigate the dynamic garment.

(3) It is difficult to derive the spatiotemporal relationship between two adjacent frames.

To solve the above issues, a novel concept, the 3DGVF, is proposed to represent the garment sliding along the surface of the human body during movement. 3DGVF was inspired by the concept of the vector field. Vector field data arises from application contexts including sensor outputs, flow fields for image analysis, and dataset visualization. 32

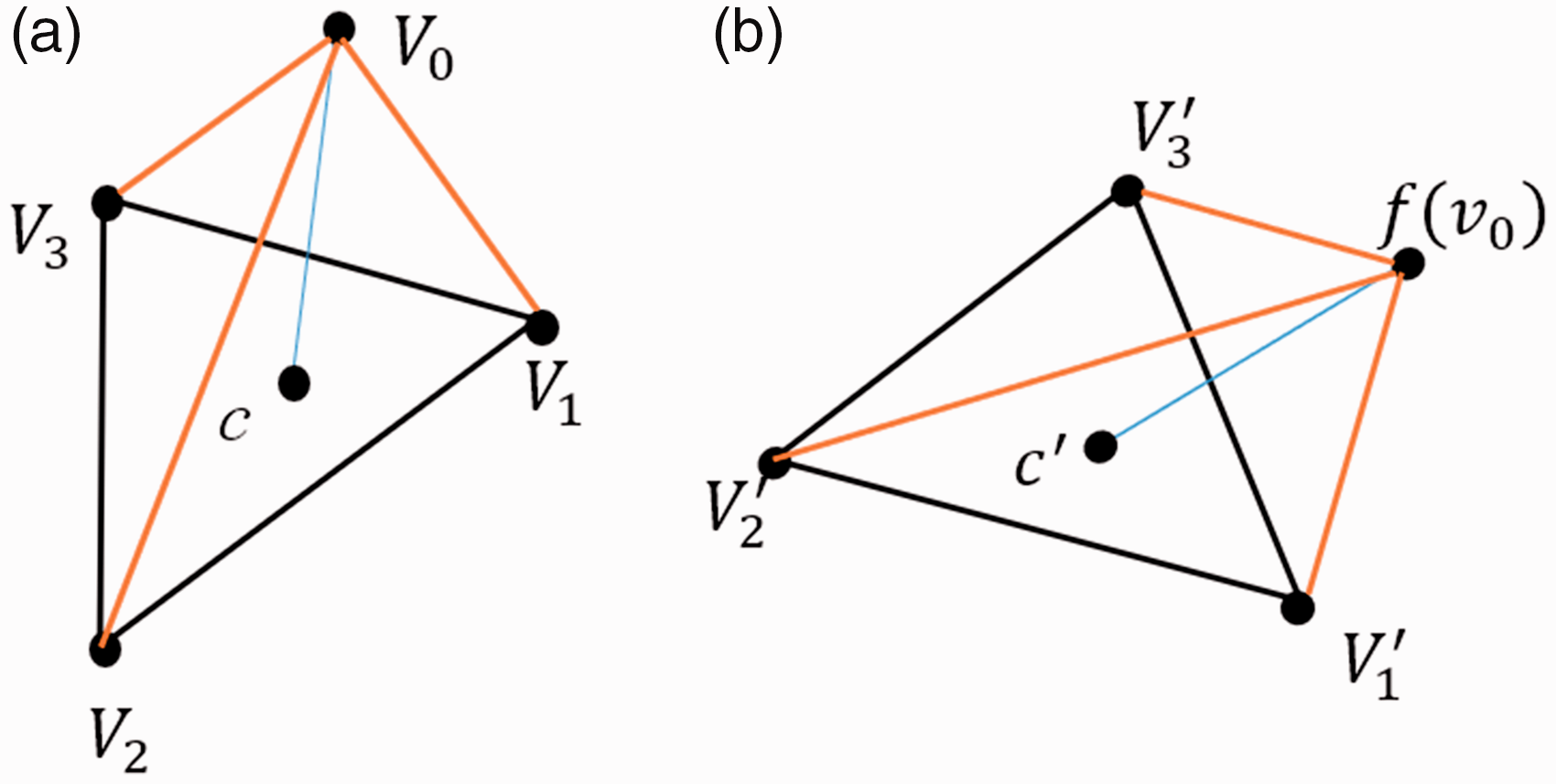

We have extended this concept and propose 3DGVF as representing the sliding flow of the dynamic garment. In each frame, the points of pants/body are represented based on an individual Euclidean coordinate. For comparison, it is necessary to map these data from different coordinates to a shared coordinate. If a common 3D coordinate is built to convert the garment in the previous frame to the local coordinate of the next frame, we can build a vector

Since the coordinates of

If we rewrite Equation 8 to eliminate z, and c denotes the correspondence of

Equation 9 is then rewritten as:

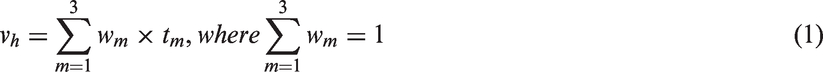

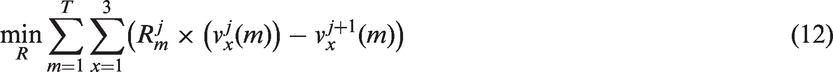

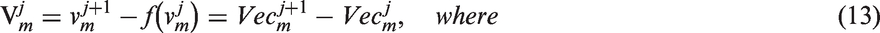

To calculate the transformation matrix for all the vertices of the garment, a cost function is built as shown in Equation 12, where T represents the number of vertices of the garment, and


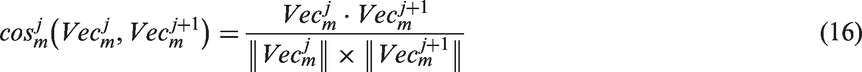

Cosine similarity

In this section, the orientation of 3DGVF is evaluated. The cosine-angle coefficient is one of the most popular similarity measures for the correlation between vectors; 34 it measures the cosine of the angle between them. It has been successfully applied to information retrieval applications 35 for analyzing text documents. We adopt this idea to evaluate the directional changes of 3DGVF between two adjacent frames.

We can rewrite Equation 7 to obtain:

The cosine-angle coefficient between

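The cosine-angle coefficient is bounded between −1 and 1 for general vectors (between 0 and 1 when all vector components are non-negative, as in text retrieval). When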
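The cosine-angle coefficient between two 3DGVF vectors can be sketched as follows. This uses the textbook definition (dot product divided by the product of the norms) and is not the paper's own numbered equation. For arbitrary 3D vectors the plain cosine lies in [-1, 1]. The handling of zero-length vectors (skipping them) and the function names are assumptions made for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two 3D vectors, or None for a zero vector."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return None  # direction is undefined for a zero-length vector
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)

def mean_directional_change(field_prev, field_next):
    """Mean cosine between matching vectors of two adjacent frames.

    Assumption: pairs containing a zero-length vector are skipped.
    """
    values = [cosine_similarity(a, b) for a, b in zip(field_prev, field_next)]
    values = [v for v in values if v is not None]
    return sum(values) / len(values) if values else None

# Toy fields with two vectors each: one keeps its direction, one turns by 90 degrees.
prev_field = [(1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
next_field = [(2.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
mean_cos = mean_directional_change(prev_field, next_field)
```

A value near 1 means the garment keeps sliding in the same direction between the two frames, while a value near -1 means the sliding direction has reversed.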

Results

In the experiments, an avatar was first modeled based on anthropometric values. Three pairs of straight pants including good-fit, loose-fit, and tight-fit were then designed by a fashion expert. These pants were later automatically segmented. Next, a cyclic walking motion from the 3D clothing software consisting of 37 frames (equivalent to 1.2 s) was applied. To validate the proposed method, 3DGVF was first calculated to quantitatively represent garment sliding. Next, the proposed method was compared with the state-of-the-art methods of determining air gap thickness.

Figure 7a illustrates the result of building a common 3D coordinate for frame j from the rest frame. It can be seen that 3DGVF efficiently converts the positions of the garment from the coordinate of the rest frame to the coordinate of frame j, while preserving the local relationship between the garment and the human body in the rest frame. Based on the common 3D coordinate system, 3DGVF can be easily defined; this is visualized in Figure 7b. The tails of the arrows represent the vertices of the pants converted from the rest frame to frame j, and the heads of the arrows represent the vertices of the pants in frame j. This method can also be used to build a common coordinate for two adjacent frames. 3DGVF offers an intuitive movement flow of the dynamic garment, which provides information on both scalar and directional changes.

Figure 7. (a) The body model of the first walking frame (Frame 1) (left), and the pants and body model in the rest pose frame (Frame 0) (right); (b) converting the pants from the rest pose frame to the first walking frame (black point cloud); (c) visualizing the converted pants' point cloud (black point cloud) and the physically based pants (red mesh); (d) building vector fields pointing from the converted points of the pants to the points of the physically based pants, and denoting the vector fields as 3DGVF to represent the pants sliding in Frame 1 compared with the rest pose frame; and (e) close-up of selected part.
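Given the converted garment points and the physically simulated garment points of the same frame, the construction of the vector field and its mean magnitude can be sketched as below. The conversion into the common coordinate is assumed to have been done already and is not shown; the function names are hypothetical.

```python
import math

def garment_vector_field(converted_points, simulated_points):
    """Vectors from converted rest-frame points (tails) to simulated points (heads)."""
    return [tuple(h - t for h, t in zip(head, tail))
            for tail, head in zip(converted_points, simulated_points)]

def mean_magnitude(field):
    """Mean length of the field vectors, used as the scalar sliding measure."""
    return sum(math.sqrt(sum(x * x for x in v)) for v in field) / len(field)

# Toy data (hypothetical coordinates, arbitrary units).
converted = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]
simulated = [(3.0, 4.0, 0.0), (1.0, 1.0, 2.0)]
field = garment_vector_field(converted, simulated)
sliding = mean_magnitude(field)
```

Restricting `field` to the vertices of one pants segment before calling `mean_magnitude` would give a per-segment value of the kind plotted against the frame number.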

Figures 8 and 9 give the results of the dynamic air gap thickness as well as the scalar and directional changes of 3DGVF, respectively. As shown in Figure 4c, the pants are automatically segmented into five parts. Therefore, the corresponding color of each part is used to draw the curve of the specified section of the pants with respect to the frame number. In this study, the walking motion consists of 37 frames (equivalent to 1.2 s). It should be noted that our method is suitable for various motions. This is because the dynamic air gap thickness and 3DGVF that are determined by the correspondences only depend on the rest pose frame. The data of frame j in Figures 8 and 9 are determined by Frame

Figure 8. (a) Mean 3DGVF magnitude of tight-fit pants during walking; (b) mean 3DGVF magnitude of good-fit pants during walking; and (c) mean 3DGVF magnitude of loose-fit pants during walking.

Figure 9. (a) Mean directional changes of 3DGVF of tight-fit pants during walking; (b) mean directional changes of 3DGVF of good-fit pants during walking; and (c) mean directional changes of 3DGVF of loose-fit pants during walking.

Discussion

A new method for determining the dynamic air gap thickness between pants and the human body has been proposed and evaluated. Additionally, a novel concept called 3DGVF has been proposed to represent the sliding of the garment on the surface of the human body. The scalar and directional changes of 3DGVF were also measured. All these parameters were measured for five individual sections of the pants. In the experiments, a 3D model that represented a male subject was animated by a walking motion with 37 frames (equivalent to 1.2 s) to evaluate the clothing fit dynamically; three types of pants (tight-fit, good-fit, and loose-fit designed by a fashion expert) were used to validate our method.

Figure 10 compares 3DGVF with the shortest distance method

4

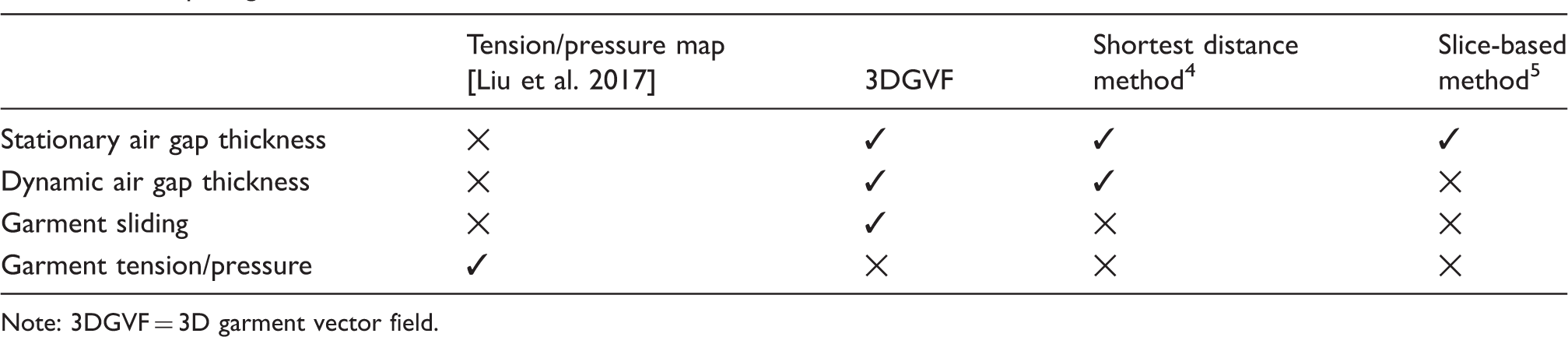

for determining the local mean air gap thickness during walking. Frame 0 represents the rest frame. As shown in Figure 10, the air gap thickness of the dynamic pants fluctuates a lot via 3DGVF, but the air gap thickness of the dynamic pants via the shortest distance method is relatively stable. Additionally, it can be seen that the overall values of air gap thickness via the shortest distance method are lower than the values of the air gap thickness via 3DGVF. It can also be seen that the red line in Figure 10c1 is lower than that in b1. Because the loose-fit pants dropped down a bit during walking, the red line of the loose-fit is lower than that of the good-fit. There are two main explanations for such differences. The first one is that using the closest points as reference points between the garment and the human body is not reliable30,31; the second reason is that 3DGVF is more sensitive to the changes in air gap thickness in motion than the shortest distance method. Table 3 compares 3DGVF with other state-of-the-art methods.

Figure 10. Comparing 3DGVF with the shortest distance method: 4 (a) mean local air gap thickness of tight-fit pants during walking; (b) mean local air gap thickness of good-fit pants during walking; and (c) mean local air gap thickness of loose-fit pants during walking.

Table 3. Comparing 3DGVF with other state-of-the-art methods

Note: 3DGVF = 3D garment vector field.

As expected, differences in the air gap thickness and garment sliding for tight-fit, good-fit, and loose-fit pants were observed (Figures 8, 9, and 10). The air gap thickness typically varied in a range of approximately 0.3–1.1 cm for tight-fit pants, 0.3–1.7 cm for good-fit pants, and 0.2–2.4 cm for loose-fit pants. Compared with the air gap thickness of the rest pose frame, the air gap thickness of the dynamic pants fluctuates considerably. The air gap thickness of the waist section changes little when the body moves, that of the thigh sections changes more, and that of the lower leg sections changes the most. Compared with the lower legs, the values of air gap thickness in the waist and thighs are lower. For tight-fit pants, the air gap thickness of the waist is close to the range of the air gap thickness of the lower legs; for good-fit pants, the air gap thickness of the waist is close to the range of the air gap thickness of the thighs; and for loose-fit pants, the air gap thickness of the waist is lower than the ranges of air gap thicknesses of the lower legs and thighs.

Figures 8 and 9 show the scalar and directional changes of the 3DGVF. As expected, the left thigh and left lower leg parts of the pants have a similar sliding trend, while the right thigh and right lower leg parts have a similar sliding trend. The waist part of the pants has the smallest sliding distance and sliding angles. Therefore, the motion has a limited effect on the waist part of the pants. The sliding of the thigh and lower leg parts is strong during walking. In Figure 10, it can be observed that, in the waist part, the air gap thickness via 3DGVF increases in the following order: loose-fit < good-fit < tight-fit. This is because the dynamic air gap thickness via 3DGVF is calculated by Equation 6, which can be written as

Conclusions and future work

In this study, a novel method was proposed to determine dynamic air gap thickness, and a new metric, 3DGVF, was presented to quantitatively represent garment sliding. The experimental results validate the proposed method. The proposed method and 3DGVF can be used for analyzing the dynamically changing local relationships between a garment and the human body in motion. The proposed framework could be applied within the garment design industry to assist designers in designing close-fitting clothing, as well as encouraging further research on dynamic garment–body interaction. Quantitatively understanding the interaction between the garment and the human body will also be a step forward in extending the investigation of the correlation between air gap thickness and the thermal properties of functional fabrics17,36 from traditional static research to dynamic research.

In summary, the main contributions of this paper are as follows:

(1) A novel, fully automatic framework has been proposed to determine the dynamic air gap thickness between a virtual garment and the human body.

(2) A novel method of building a 3D garment vector field has been presented to represent the complex interaction between a garment and the human body. To the best of the authors' knowledge, this is the first paper to report on this.

There are some limitations. First, only a walking motion has been considered in this study, whereas dynamic clothing fit is influenced by a range of motion types. The fit evaluation of individual groups such as basketball players and drivers needs to be explored separately to account for various motions. Therefore, future work will further consider the effects of motion on dynamic clothing fit and how to automatically design garments for different user/customer groups. Second, 3DGVF depends on the geometries of the dynamic garment during movement, which in turn depend on the mechanical properties of the fabric such as the coefficient of friction. The implications of the mechanical properties of the fabric will be explored in the future. Third, the proposed method cannot be directly applied to scanned data. We will improve the proposed method for scanned data in the future. Furthermore, models of muscle, soft tissue, and ligaments will be introduced to enhance the realism of the virtual human body.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the European Commission Erasmus Mundus Action 2 Programme (Ref: 2014-0861/001-001), the Engineering and Physical Sciences Research Council (EPSRC) (Ref: EP/M002632/1), and the Royal Society (Ref: IES\R2\181024, IE160609).