Abstract

The proliferation of automated writing feedback tools over the past decade has been remarkable. While artificial intelligence (AI) tools like ChatGPT have gained prominence, automated writing evaluation tools, like Criterion®, widely used to support formative assessment of second language (L2) writing, also remain relevant in educational settings due to their alignment with curriculum objectives. Previous studies have predominantly focused on learners’ engagement with automated written corrective feedback (AWCF) on errors in grammar, usage, and mechanics, with fewer examining engagement with text-level feedback (style, organisation, and development). This study set out to address this gap by investigating L2 learners’ engagement with all feedback categories generated by Criterion® (AWCF and text-level) and by comparing it to engagement with teacher feedback. Our 10-week study was conducted with 38 ESL and EFL learners in authentic classroom contexts. Adopting a mixed-methods approach, we analysed the first and revised drafts of three writing tasks, think-aloud protocols, and stimulated-recall interviews. The findings revealed comparable learner engagement with teacher and Criterion corrective feedback, but higher engagement with teacher text-level feedback, which was attributed to the higher level of trust in teacher feedback. These findings have implications for writing instruction, including how automated tools might be best integrated with teacher feedback in L2 writing classes to optimise the benefits of teacher and of automated feedback.

Introduction

English as an international language has gained dominance globally, making it essential to learn, particularly in the English as a Foreign Language (EFL) context. Among the different language skills, writing has consistently been challenging to acquire and to teach. For writing instructors, the main challenges are rooted in the need to offer timely feedback on students’ writing, demanding a significant investment of time and effort (Warschauer and Ware, 2006). To address this, automated writing evaluation (AWE) tools have been developed, such as ETS Criterion®, Pearson WritetoLearn™, and Vantage Learning MY Access®. Of these, Criterion® is among the most widely used and researched AWE tools in the literature (Hoang, 2019; Shi and Aryadoust, 2024), making it the focus of the present study.

The impetus for the current study emerged from the recognition that, while engagement with AWE tools such as Criterion has been widely examined in recent years (Hoang, 2019; Link et al., 2022; Liu and Yu, 2022; Shi and Aryadoust, 2024), most of this research has focused on learners’ responses to corrective feedback on grammar, usage and mechanics. Comparatively little attention has been given to engagement with higher-order feedback on style, organisation and development, with only a few studies empirically examining learners’ engagement with automated text-level feedback (e.g. Lee, 2020). Moreover, although several studies have compared automated and teacher feedback, few have explored learners’ engagement with these two feedback sources through a multidimensional lens that captures cognitive, behavioural and affective dimensions.

The purpose of this study was to extend current understandings of feedback engagement by investigating how learners engage with Criterion® and teacher feedback across these dimensions, with particular attention to text-level feedback. The combined focus on both text-level and corrective feedback, together with the comparison of engagement with teacher and automated feedback, reflects the kinds of feedback and approaches to feedback provision that could be found in future classroom contexts, in which teachers are likely to incorporate automated feedback from online tools alongside their own feedback. Therefore, the study illustrates how automated and teacher feedback might be integrated to provide more comprehensive support for learners’ revision practices. Before describing the study, we provide a brief overview of Criterion®.

Criterion®

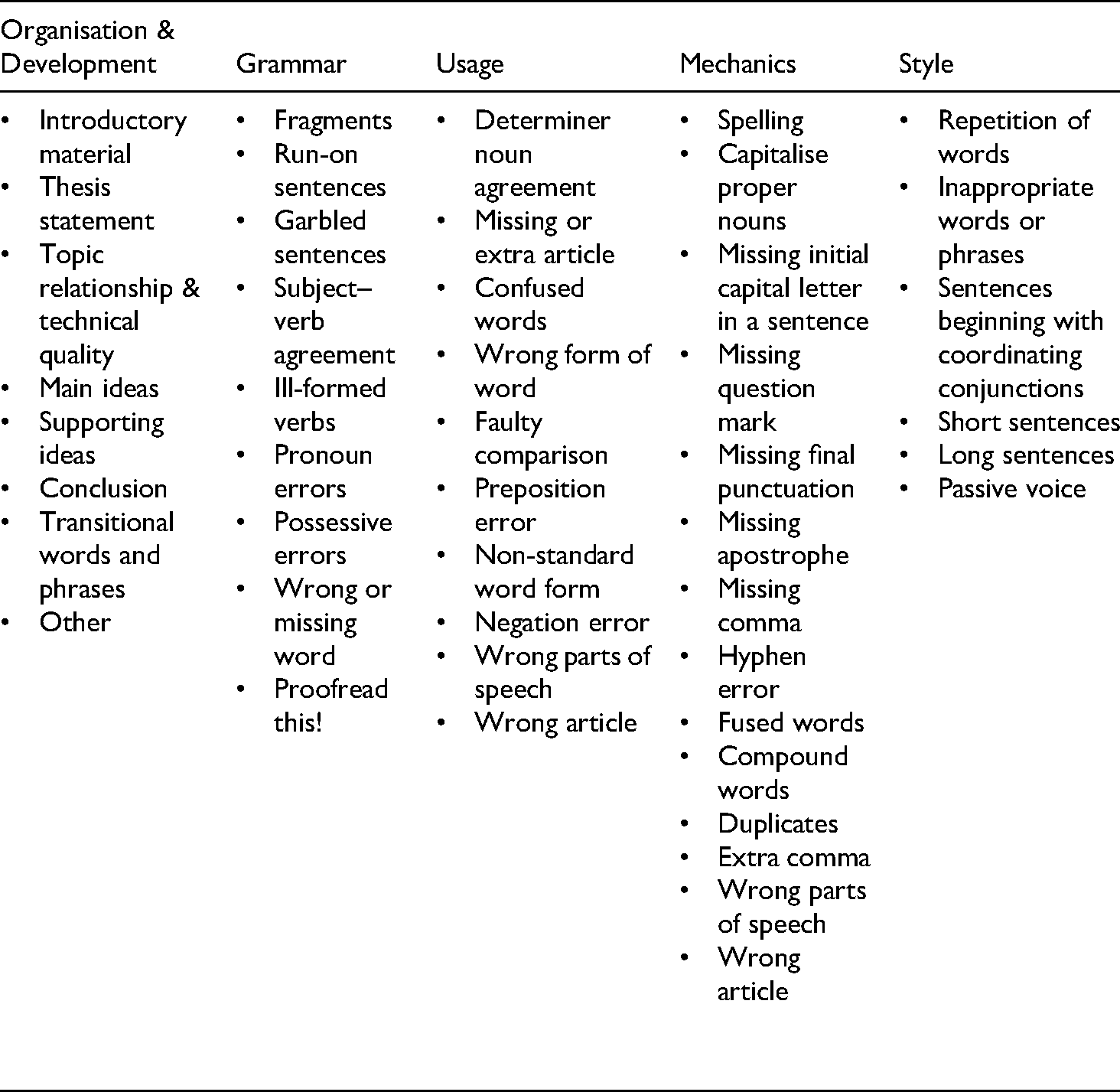

Criterion® Online Essay Evaluation Service is a web-based program, developed by Educational Testing Service, which automatically scores and evaluates students’ essays. At its core is E-rater®, a scoring engine that generates holistic scores and immediate category-based indirect feedback. The indirect and coded feedback is delivered in five main categories: grammar, usage, mechanics, style, and organisation and development. These categories can be grouped into surface-level feedback (grammar, usage, mechanics) and text-level feedback (style, organisation and development). Criterion® highlights specific words or phrases for each identified error. When the mouse cursor is positioned over the highlighted section, Criterion® offers an explanation of the error in a pop-up screen (see Appendix A for a screenshot of the Criterion® interface). Since the study aimed to compare students’ engagement with teacher and Criterion® feedback, students received feedback from Criterion® on both surface- and text-level aspects of writing (see Appendix B for a summary of the feedback categories generated by Criterion® as obtained from the program).

Theories and Frameworks Informing the Study

This study is informed by cognitive perspectives, specifically Schmidt's (1990) noticing hypothesis and Leow's (2015) model of L2 processing in instructed second language acquisition, which conceptualise feedback as a form of input requiring conscious attention for learning. These perspectives inform the analysis of how learners notice and process feedback. Leow's model identifies three stages: input, intake, and knowledge processing, with attention and depth of processing playing a critical role, particularly in the input processing stage.

The study also adopts Ellis's (2010) engagement framework, which distinguishes between three dynamically interconnected dimensions of engagement with feedback: cognitive, behavioural and affective. These dimensions have since been widely applied in research on feedback, particularly written corrective feedback (WCF), and their definitions and operationalisation have become more finely tuned. Cognitive engagement concerns whether and how learners notice feedback by employing a range of cognitive and metacognitive strategies (Han and Gao, 2021), as well as the depth of feedback processing (Han and Hyland, 2015). Behavioural engagement captures revision operations in response to feedback, the time spent on revisions (Zhang and Hyland, 2022) and observable strategies aimed at improving writing accuracy (Chen and Hu, 2025). Affective engagement includes both general attitudes and immediate emotional reactions to WCF as reflected in learners’ judgement, affect and appreciation (Zheng and Yu, 2018). Of these, cognitive engagement has been most widely investigated and closely aligns with Schmidt's (1990) noticing hypothesis and the input processing stage in Leow's model. Together, the cognitive perspectives and the engagement framework provide the lens through which learner engagement with feedback is examined in this study.

Engagement with Teacher and Automated Feedback

Learners’ engagement with feedback, whether from humans (teachers and peers) or automated tools, continues to receive much research attention. The earliest studies employing the engagement framework focused on learners’ engagement with corrective feedback provided by teachers (Han and Hyland, 2015; Mahfoodh, 2017). What these studies revealed is the unpredictability of the relationship between the three dimensions of engagement. For example, whereas Han and Hyland's (2015) study, conducted with four non-English major Chinese EFL learners, found that a high level of behavioural engagement did not always stem from a high level of cognitive engagement, Mahfoodh's (2017) study, conducted with eight EFL university students in Yemen over 14 weeks, found that students’ positive and negative emotional responses can affect learners’ understanding and uptake of WCF. These somewhat conflicting findings were also reported by Chen and Hu's (2025) study on learners’ engagement with peer feedback. The study found that although positive attitudes towards the feedback received (affective engagement) can enhance cognitive and behavioural engagement, this is not always the case, thus suggesting that learner- and context-related factors may prevent positive affective engagement from having a positive impact on cognitive and behavioural engagement.

A noted trend in current research on feedback has been the focus on engagement with feedback provided by different automated tools, such as Criterion® (Hoang, 2022) or Grammarly (Koltovskaia, 2020). However, most of these studies focus narrowly on behavioural or cognitive engagement (Hoang, 2022), with little attention paid to affective responses. Yet, as noted earlier, affective engagement can have an important impact on other dimensions of engagement. There is also a predominant emphasis on corrective feedback, overlooking engagement with feedback on content and organisation (Hoang, 2019, 2022; Shi and Aryadoust, 2024). Furthermore, very few studies have compared learners’ engagement with different feedback sources (Link et al., 2022). These gaps suggest the need for studies that examine all three engagement dimensions and their interaction across feedback types and sources. To address these gaps, this study examines learners’ engagement with both corrective and text-level feedback from Criterion® in comparison to teacher feedback, across cognitive, behavioural and affective dimensions. It does so by posing the following research question: How does learners’ engagement with Criterion® feedback compare to engagement with teacher feedback?

Methodology

Context and Participants

This study employed a concurrent triangulation mixed-methods design (Creswell and Creswell, 2017) to investigate English as a Second Language (ESL) and EFL learners’ engagement with teacher and automated feedback. Using a convenience sampling technique, 38 adult learners were recruited in 2020 from three academic English courses at two universities and an academic English and IELTS (i.e. International English Language Testing System) preparation course offered at two language centres, one in Australia and one in China. The courses followed a structured 10-week curriculum, and instruction ranged from 4 to 20 h per week across all four language skills, with embedded writing tasks and assessments that required students to produce multiple drafts. The teachers who provided feedback were experienced ESL and EFL practitioners with over 10 years’ teaching experience and were familiar with technology use. The teachers provided feedback as per their usual practices to preserve authentic classroom conditions.

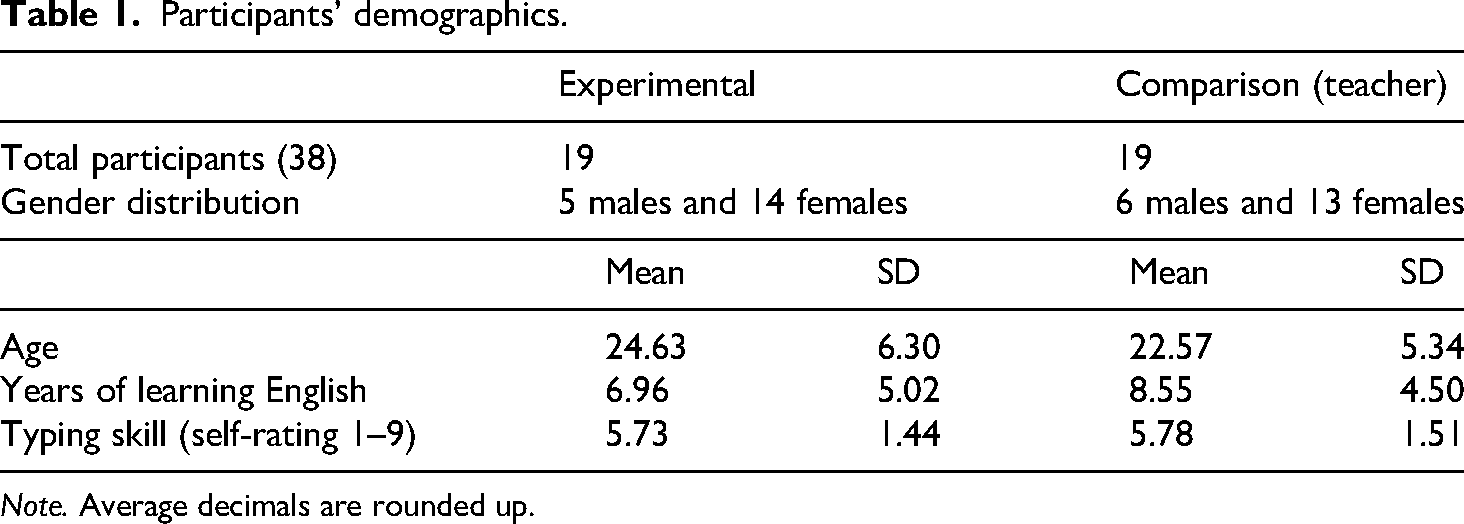

Participants were recruited through course coordinators on a voluntary basis. The learners’ English proficiency level was equivalent to IELTS 4.5–5.5, as determined by course entry requirements. Data collection was conducted online via Zoom. After obtaining informed consent, participants were randomly assigned to an experimental group (receiving feedback from both Criterion and a teacher) or a comparison group (receiving only teacher feedback). The intervention was embedded into existing course structures to ensure ecological validity. Table 1 presents demographic information about the participants.

Participants’ demographics.

Note. Average decimals are rounded up.

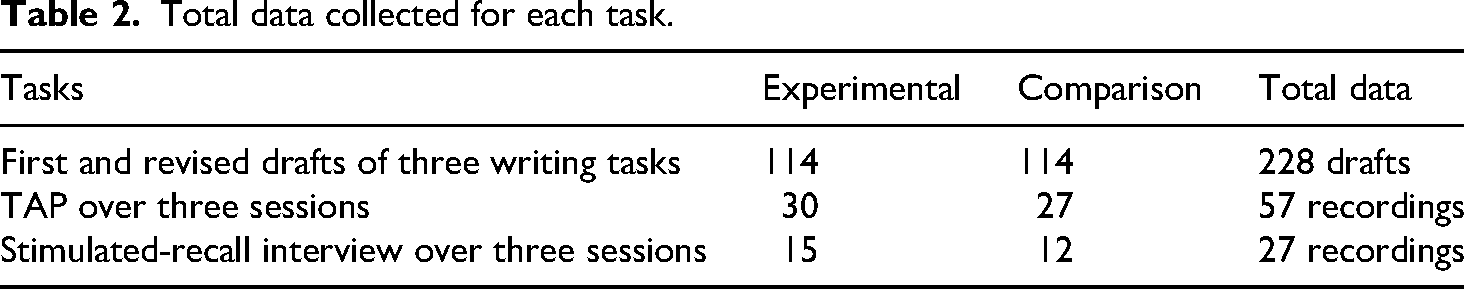

Total data collected for each task.

Design and Procedure

The data for this study came from the first and revised drafts of three writing tasks. The prompts varied for each task and across the different courses, as they were aligned with the course syllabus to maintain ecological validity. Consequently, prompts were not standardised across conditions or institutions. All prompts, however, were drawn from the Criterion library and closely resembled those typically used by the teachers in their classes. Other data sources included think-aloud protocols (TAPs) and stimulated-recall (SR) interviews. Criterion was introduced via a 10-min instructional video and a one-on-one training session. Experimental group participants were also given a practice task to familiarise themselves with the platform before starting the actual writing assignments.

Participants in the experimental group received Criterion® feedback on their first drafts. For ethical reasons, that is, to avoid students potentially feeling concerned about being disadvantaged, students in the experimental group received teacher feedback on their revised draft. However, in this study we only considered revisions in response to Criterion feedback. A subset of learners took part in TAP sessions during revision, and SR interviews were conducted 1 to 3 days later to elicit more data on the learners’ cognitive and affective responses. According to the teachers, these learners were typical of their classes, providing confidence in the comparability of the analytic sample. TAPs and SR interviews were conducted with a volunteer subset of participants (TAPs: n = 19; n = 10 in the experimental group and n = 9 in the comparison group; SR interviews: n = 9; n = 5 in the experimental group and n = 4 in the comparison group; see Table 2). Students were given a maximum of 35 min for both the revision and TAP activities. The duration of the SR interviews ranged from 10 to 30 min depending on the preceding TAP.

Data Analysis

The collected data were analysed using Ellis's (2010) three-dimensional framework of learner engagement.

Cognitive Engagement

TAPs and SR interviews were cross-referenced with students’ first and revised drafts and coded for noticed feedback points. In this study, noticed feedback points referred to instances where students identified a specific error, whether flagged by Criterion® or the teacher, by directing the mouse cursor to the highlighted text in the Criterion® interface (see Appendix A), as well as through exclamatory utterances (e.g. ‘umm’, ‘oh’, ‘yeah’) and hesitation markers (verbal fillers and long pauses). Conversely, an episode was recorded as unnoticed if students did not direct the mouse cursor to the feedback point. Episodes of noticed feedback were then categorised by error type (e.g. fragments) and category (e.g. grammar).

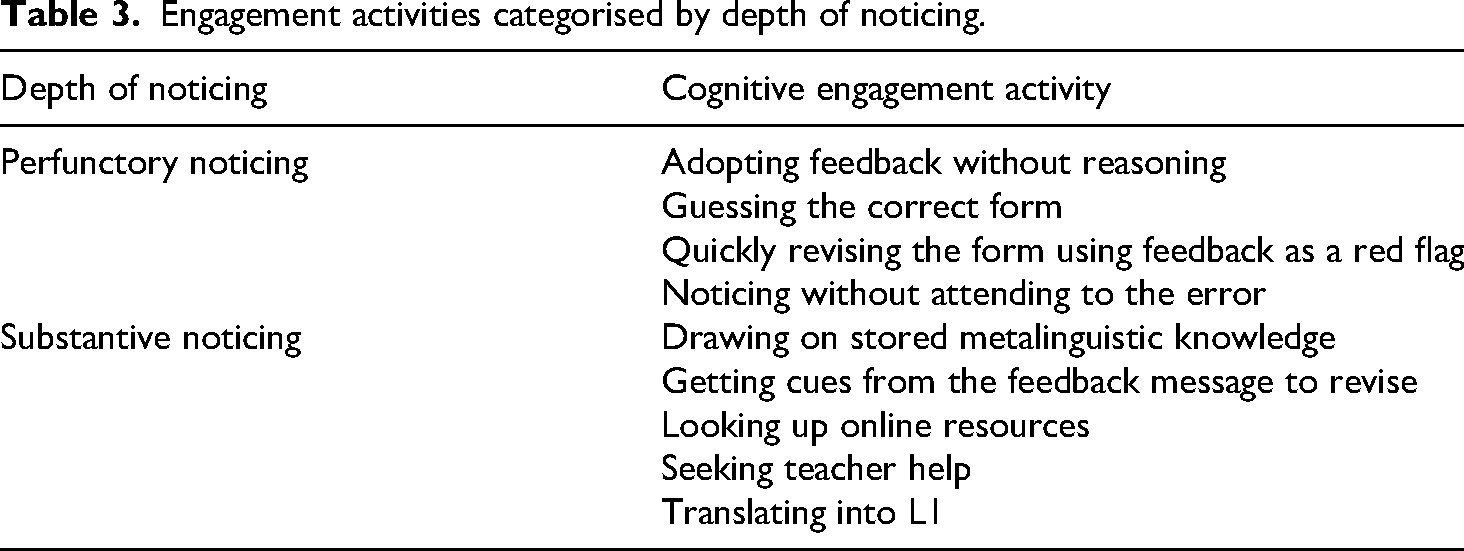

Drawing on Swain and Lapkin’s (1998) research, the unit of analysis of the TAPs was language-related episodes (LREs), defined as any section of the protocol where a learner noticed a feedback point and responded to it – for example, by accepting the feedback (revising in line with the suggestion) or rejecting it (retaining the original form) with or without verbal justification. Following the identification of LREs, a first round of coding was conducted to analyse engagement activities. These activities were adapted from Hoang’s (2019) study and refined in light of emerging data. Based on Qi and Lapkin’s (2001) and Storch and Wigglesworth’s (2010) research, noticing was further classified as either perfunctory or substantive. A full list of engagement activities is presented in Table 3.

Engagement activities categorised by depth of noticing.

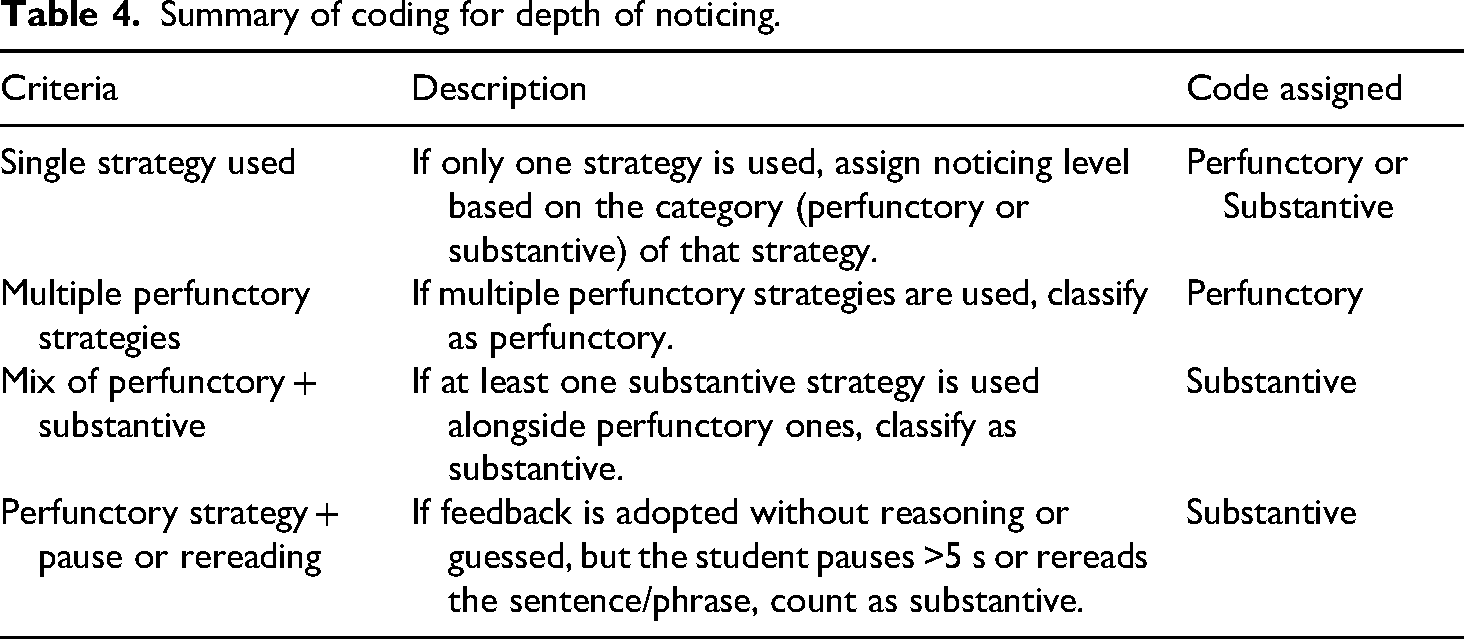

The guidelines in Table 4 were used to determine whether an error tag was noticed substantively or perfunctorily during a revision episode.

Summary of coding for depth of noticing.

To ensure reliability, 10%–25% of the data were double-coded by a second trained coder, with satisfactory inter-rater agreement achieved across all categories (kappa = .863 for noticed feedback points and LREs; kappa = .702 for depth of noticing).
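As an illustration of the agreement statistic reported above, the following is a minimal sketch of how Cohen's kappa can be computed for two coders. The function name and the labels are hypothetical and invented for illustration; they are not the study's data, and the source does not state which software was used to obtain the reported values.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders' labels over the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of items.")
    n = len(coder_a)
    # Observed agreement: share of items given the same label by both coders.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: sum over labels of the product of marginal proportions.
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    p_expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both coders used a single identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical double-coded noticing episodes (not data from the study).
first_coder = ["substantive", "perfunctory", "perfunctory", "substantive", "perfunctory",
               "perfunctory", "substantive", "perfunctory", "perfunctory", "substantive"]
second_coder = ["substantive", "perfunctory", "substantive", "substantive", "perfunctory",
                "perfunctory", "substantive", "perfunctory", "perfunctory", "perfunctory"]

kappa = cohens_kappa(first_coder, second_coder)
print(round(kappa, 3))
```

Kappa discounts the agreement that two coders would reach by chance given how often each uses each label, which is why it is lower than the raw percentage of matching codes.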

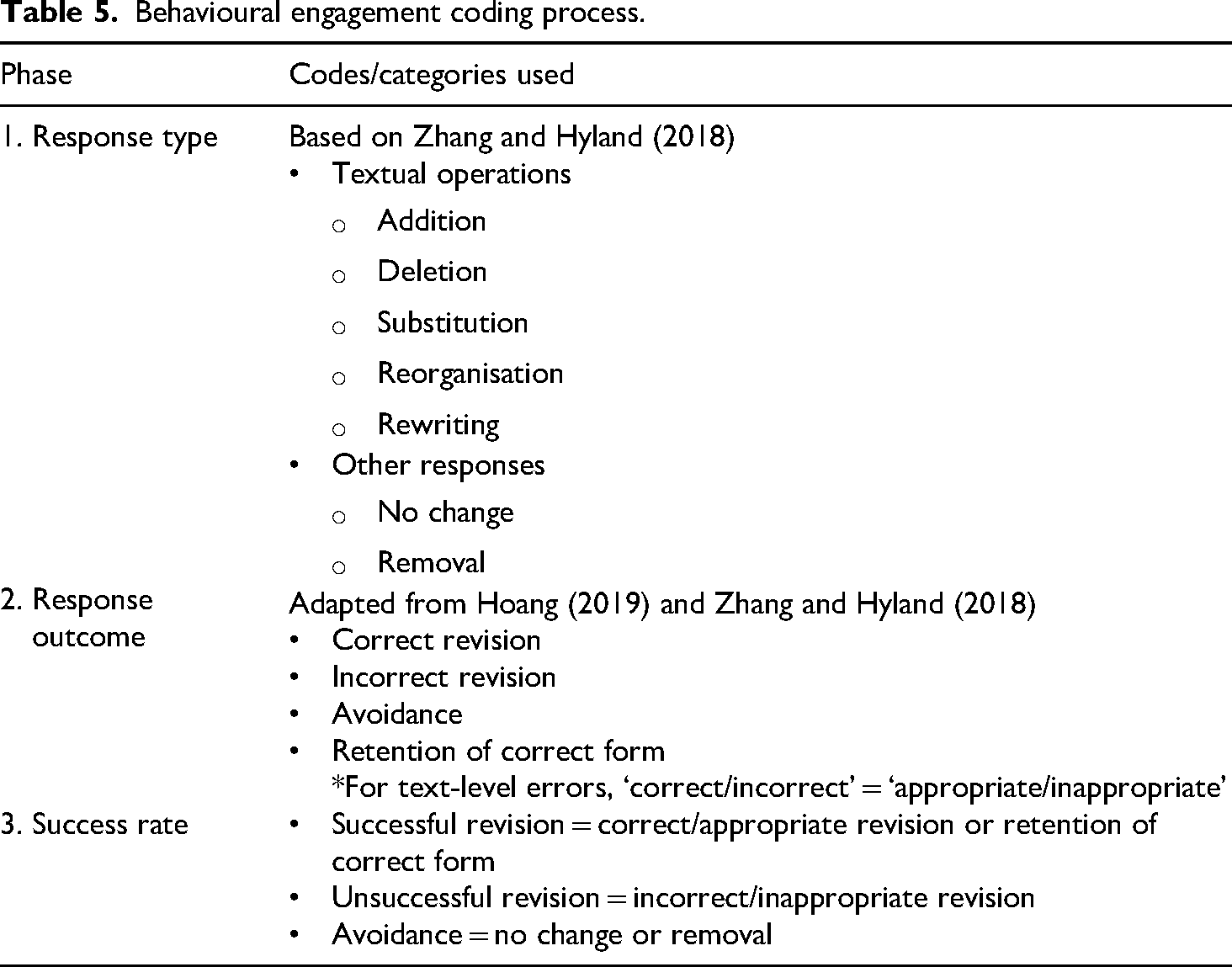

Behavioural Engagement

First and revised drafts of the students’ three writing tasks were analysed for behavioural engagement with Criterion® or teacher feedback. Behavioural engagement included all revisions made, regardless of whether they were successful, as well as instances of avoidance (see Table 5). Errors were coded by type (e.g. punctuation) and category (e.g. mechanics). In the comparison group, teacher-tagged errors were first annotated using Ferris's (2006) widely used and comprehensive taxonomy and then mapped onto Criterion®'s error types and categories to allow direct comparison. Ferris's (2006) taxonomy, established for categorising WCF in L2 writing research, provided sufficient overlap with Criterion®'s error categories to enable consistent coding across feedback sources. Errors were then tallied per student, and first drafts were cross-referenced with revisions to assess responses, outcomes and success rates. Table 5 outlines the different elements of behavioural engagement with both sources of feedback.

Behavioural engagement coding process.

Reliability estimates based on approximately 10% of the revision points showed high agreement across coding categories (kappa = .913 for response types, .802 for response outcomes, and .835 for overall success).

Affective Engagement

TAPs and SR interview transcripts were analysed for affective engagement, defined as students’ attitudes towards feedback (Han and Hyland, 2015). The analysis focused on three sub-constructs: affect, judgement and appreciation (Zheng and Yu, 2018). Affect refers to emotional responses to feedback, such as happiness, surprise or frustration. Judgement captures students’ evaluations of feedback (e.g. helpfulness, clarity, accuracy) and self-judgement such as self-criticism. Appreciation includes expressions of gratitude in response to feedback (see Appendix C). Reliability estimates based on double-coding 10% of the data indicated acceptable agreement (kappa = .789 for affect, .856 for judgement, and 1.00 for appreciation).

Inferential Statistics

Following the coding process, episodes related to cognitive engagement (noticed feedback points, engagement activities, depth of noticing), behavioural engagement (response type, outcome, success rate), and affective engagement (affect, judgement, appreciation) were tallied and assigned to each student. To account for individual variation in the number of episodes, the proportion of each episode type (e.g. noticed feedback points) relative to the total number of episodes per student was calculated. These proportions were then compared between groups (experimental vs. comparison) across different engagement aspects. A Mann–Whitney U test was used to examine whether the differences in engagement sub-constructs were statistically significant between groups. Given the multiple comparisons conducted for each sub-construct, Bonferroni correction was applied to adjust the alpha value. Effect sizes were also calculated.
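The procedure described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the study's actual analysis: the per-student counts are hypothetical, z is obtained from the normal approximation without tie or continuity correction, and the effect size uses the common conversion r = z / √N, which the source does not name explicitly. All function names are invented for this sketch.

```python
import math


def proportion(count, total):
    """Share of one episode type among a student's episodes (0 if none)."""
    return count / total if total else 0.0


def average_ranks(values):
    """Ranks starting at 1; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def mann_whitney(group_a, group_b):
    """Return (U, z, r) using the smaller U and the normal approximation."""
    n_a, n_b = len(group_a), len(group_b)
    ranks = average_ranks(list(group_a) + list(group_b))
    u_a = sum(ranks[:n_a]) - n_a * (n_a + 1) / 2
    u = min(u_a, n_a * n_b - u_a)
    mean_u = n_a * n_b / 2
    sd_u = math.sqrt(n_a * n_b * (n_a + n_b + 1) / 12)  # no tie correction
    z = (u - mean_u) / sd_u
    r = z / math.sqrt(n_a + n_b)  # assumed effect-size conversion
    return u, z, r


def two_sided_p(z):
    """Two-sided p-value for a standard normal z statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical (noticed, total) text-level feedback points per student.
experimental = [(4, 10), (3, 10), (5, 10), (2, 8), (6, 10)]
comparison = [(9, 10), (10, 10), (8, 9), (19, 20), (7, 8)]

exp_props = [proportion(c, t) for c, t in experimental]
comp_props = [proportion(c, t) for c, t in comparison]

u, z, r = mann_whitney(exp_props, comp_props)
p = two_sided_p(z)
print(f"U = {u:.2f}, z = {z:.2f}, p = {p:.3f}, r = {r:.2f}")
```

Converting each student's counts to proportions before ranking keeps students who produced many episodes from dominating the comparison. Taking the smaller of the two U values yields a non-positive z, which matches the sign convention of the statistics reported in the Results.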

Results

Cognitive Engagement with Criterion® vs. Teacher Feedback

The results indicated that the percentage of noticed WCF points was slightly higher in the experimental (96.3%) than the comparison group (92.3%), but the difference was not statistically significant (U = 35.50, z = −0.80, p = .447). In terms of depth of noticing, students in the experimental group showed a higher percentage of substantive noticing episodes (33.8%) than the comparison group (19.6%) when responding to WCF. However, the difference between the two groups was not statistically significant at the significance level of p ≤ .025: U = 19.00, z = −2.13, p = .035.

Regarding text-level feedback processing, a very different pattern became evident. The comparison group noticed significantly more text-level feedback points (99.0%) than the experimental group (43.0%) (U = 1.00, z = −3.73, p ≤ .001, r = −.85). Although the experimental group had a slightly higher percentage of perfunctory noticing episodes (54.1%) than the comparison group (45.1%), no statistically significant difference was observed between the two groups in terms of the percentage of perfunctory (U = 34.00, z = −0.91, p = .400) or substantive noticing episodes (U = 34.00, z = −0.91, p = .400) at the significance level of p ≤ .025. The two groups also differed in the error categories associated with substantive and perfunctory noticing. While the experimental group's noticing of feedback on organisation and development was mainly perfunctory, this feedback was predominantly noticed substantively by the comparison group (see Example 1 in Appendix C). On the other hand, stylistic feedback received more substantive noticing from the experimental group yet more perfunctory noticing from the comparison group.

Behavioural Engagement with Criterion® vs. Teacher Feedback

In responding to WCF, the two groups differed in terms of response types. The experimental group had higher percentages of no change (27.7%) and removal (7.1%) than the comparison group (no change: 9.1%; removal: 1.6%), whereas the comparison group had a higher percentage of textual operations (89.3%) than the experimental group (64.9%). However, there were no statistically significant differences between the two groups in the percentage of any of the response types at the significance level of p ≤ .007, including addition (U = 138.00, z = −1.24, p = .223), deletion (U = 160.50, z = −0.59, p = .563), substitution (U = 118.50, z = −1.81, p = .070), reorganisation (U = 134.00, z = −2.01, p = .181), rewriting (U = 129.00, z = −1.88, p = .138), no change (U = 102.50, z = −2.28, p = .022) and removal (U = 128.00, z = −1.70, p = .130).
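The adjusted thresholds used in the Results are consistent with a Bonferroni correction that divides a family-wise α by the number of comparisons m in each family. As a worked check, assuming a conventional family-wise α of .05 (the source does not state this value) and taking m as the two depth-of-noticing categories, the seven response types, and the three affective sub-constructs respectively:

$$
\alpha_{\text{adj}} = \frac{\alpha}{m}: \qquad \frac{.05}{2} = .025, \qquad \frac{.05}{7} \approx .0071, \qquad \frac{.05}{3} \approx .0167
$$

On this reading, the .007 threshold applied to the response types is .0071 rounded to three decimals, and the .016 threshold applied to the affective sub-constructs is .0167 truncated rather than rounded.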

Complementing these quantitative patterns, qualitative analysis of students’ responses showed that both groups employed a similar range of response categories across the same error types and linguistic levels (e.g. morpheme, word). In addition, most unchanged WCF points in Criterion (85 out of 129 instances) were false positives (i.e. correct forms wrongly identified as errors).

Concerning text-level feedback, the two groups differed quantitatively and qualitatively in terms of the response types they used. The experimental group employed no change with a significantly higher percentage (94.1%) than the comparison group (20.7%) (U = 0.00, z = −5.29, p < .001). In contrast, the comparison group had a significantly higher percentage of deletion (15.7%) than the experimental group (0.3%) (U = 80.50, z = −3.25, p = .003, r = −.52). A closer examination of all the no change instances showed that both groups used this technique mainly to address feedback on organisation and development, but the comparison group also used it in response to feedback on style.

In terms of response success, no significant difference was observed in the percentage of successful revision, unsuccessful revision, or avoidance between the two groups when addressing WCF. However, the two groups’ response success differed significantly in terms of successful revision and avoidance when responding to text-level feedback. While the percentage of successful revision was significantly higher in the comparison group (U = 74.00, z = −3.12, p = .001, r = −.50), with a moderate effect size, avoidance was significantly higher in the experimental group (U = 21.50, z = −4.65, p < .001, r = −.75), with a large effect size.

Affective Engagement with Criterion® vs. Teacher Feedback

Analysis of affective responses to WCF showed that the two groups differed only in the percentage of judgement episodes, not in affect or appreciation: the experimental group had a significantly higher percentage of judgement episodes at the significance level of p ≤ .016 (U = 10.00, z = −2.94, p = .003, r = −.67), with a large effect size. Furthermore, in both groups, students’ judgement episodes were overall positive, with Criterion® and teacher WCF perceived as helpful, particularly regarding grammar. However, students’ self-evaluations were exclusively negative: all were self-critical on receiving feedback on mechanical (experimental group) and grammar errors (comparison group) (see Example 2 in Appendix C). In both groups, episodes of affect were more positive overall. Students felt happy when they encountered no or fewer error tags than anticipated, and perceived this as a sign of improvement (see Example 3 in Appendix C). Students in the experimental group had a few episodes of appreciation, while students in the comparison group did not have any (see Example 4 in Appendix C).

Students’ affective engagement with Criterion® and teacher text-level feedback showed that the two groups did not differ significantly in the percentage of judgement, affect or appreciation episodes. However, qualitative differences were evident. Sixteen students in the comparison group evaluated teacher feedback as helpful, especially concerning academic writing conventions, whereas students’ judgement of Criterion® text-level feedback was negative (18 students). They found it difficult to understand due to its complicated metalinguistic explanations and lack of suggested corrections. Regarding affect, negative feelings were more common in both groups when they received text-level feedback, with confusion being the most common emotion experienced by both groups. This was mainly attributed to feedback on organisation and development (experimental group) and feedback on style, such as unclear meanings or wrong expressions, and unnecessary words or phrases (comparison group). Finally, students in the experimental group were more appreciative of Criterion® text-level feedback, mainly on style, such as the unnecessary repetition of words.

Discussion

Cognitive Engagement with Criterion® vs. Teacher WCF and Text-Level Feedback

One of the advantages of automated feedback is its immediacy. Based on prior research suggesting that delayed feedback requires greater recall (Zhang and Hyland, 2018), we expected students to demonstrate lower levels of noticing when processing Criterion feedback. Although SR interviews indicated that Criterion's immediate feedback lowered students’ cognitive load because the information was still fresh in their minds, this did not translate into more perfunctory engagement. In fact, students in the comparison group were more perfunctorily engaged with teacher feedback than with Criterion feedback. Thus, in terms of cognitive engagement, the findings suggest that feedback immediacy alone did not lead to lower levels of noticing.

One factor that seemed to explain these findings is feedback explicitness. A closer look at the feedback on different error categories (usage and mechanics) showed that the teacher feedback on these categories was mainly direct, with suggested corrections. Bitchener and Ferris (2012) argue that direct feedback places a lower cognitive demand on students as they can simply adopt the correct form. Criterion feedback, on the other hand, particularly on grammar and mechanical errors, was more indirect and metalinguistic and hence required higher levels of noticing (substantive noticing) to process. This may explain why students were more perfunctorily engaged with teacher WCF than with Criterion AWCF, particularly on certain types of errors (see also Zhang and Hyland, 2018).

In contrast to the similar frequency of noticing Criterion AWCF and teacher WCF, a different pattern was observed between the two groups when processing text-level feedback, pointing to the importance of feedback comprehensibility. Students in the experimental group noticed less than half of the Criterion® text-level feedback points (43.0%), while almost all the teacher text-level feedback was noticed (99.0%). Furthermore, analysis of depth of noticing showed more instances of substantive noticing when students processed teacher text-level feedback (54.9%) than Criterion® text-level feedback (45.9%). SR interviews suggested this difference may be due to greater opportunities for interaction with the teacher.

Further interview evidence revealed that students’ dissatisfaction with Criterion® text-level feedback (10 students) stemmed from its formulaic nature and its lack of clear and sufficient explanations and suggested corrections, which made the metalinguistic feedback difficult to understand. These shortcomings caused confusion when processing Criterion® text-level feedback and led to students simply giving these feedback points a cursory glance before moving on to the next item. The relationship between students’ feelings (e.g. confusion) and depth of noticing shows the close connection between cognitive and affective engagement in shaping the learners’ experiences. Overall, Criterion®'s lack of specific, comprehensible and explicit text-level feedback appeared to underlie students’ more perfunctory noticing compared with their processing of teacher feedback.

Behavioural Engagement with Criterion® vs. Teacher WCF and Text-Level Feedback

The findings from students’ behavioural engagement suggest that feedback source is a key factor shaping students’ revision behaviour. When responding to corrective feedback, students in the comparison group performed more textual operations, whereas students in the experimental group more often left errors unchanged or removed them. This pattern aligns with previous research showing greater use of textual operations in response to teacher WCF and higher rates of no change or removal following Criterion® AWCF (Link et al., 2022; Tian and Zhou, 2020). By using inferential statistics, this study extended this line of research. The lack of a statistically significant difference in response types to WCF between the two groups suggests broadly similar behavioural engagement across feedback sources.

Across both groups, students employed a similar range of response categories to address errors in the same categories and at the same linguistic levels (e.g. morpheme, word), indicating that behavioural engagement with corrective feedback was largely surface-oriented regardless of the feedback source. Students’ superficial changes in response to teacher and Criterion corrective feedback supported Hoang's (2022) argument regarding Criterion's overemphasis on linguistic errors, but showed that this is not unique to Criterion®. Consistent with earlier studies, teacher feedback also tends to overemphasise surface-level errors, resulting in students’ superficial changes (Lee, 2013; Saeli and Cheng, 2019). Lee (2013) attributed teachers’ overemphasis on language errors in their feedback, particularly in EFL contexts, to the washback from the focus on accuracy in exams. In this study, teacher emphasis on language errors was evident in both EFL and ESL contexts.

A clearer distinction between feedback sources emerged in students’ responses to text-level feedback. The comparison group made significantly more successful revisions, whereas the experimental group showed significantly higher use of no change, suggesting greater behavioural engagement with the teacher text-level feedback than with that of Criterion.

Several factors may explain this pattern. Students’ limited engagement with Criterion® text-level feedback was attributed to insufficient feedback explanation, feedback inexplicitness (see also Ranalli, 2021) and incomprehensibility, as reflected in their self-reports. In contrast, students’ more extensive engagement with teacher feedback related to the positive perceptions of teacher feedback (as being more contextualised and direct) and to opportunities for in-class interaction with teachers. This interpretation aligns with Han's (2017) study, which suggested that the availability of teacher follow-up oral feedback supports deeper engagement.

Affective Engagement with Criterion® vs. Teacher WCF and Text-Level Feedback

Affective responses to WCF indicated that engagement with feedback was characterised by complex and sometimes contradictory relationships among judgement, emotion and appreciation across feedback sources. Although frequencies differed descriptively across groups, the only statistically significant difference concerned judgement episodes, which occurred more often in the experimental group. Despite this difference, students’ judgement of WCF was primarily positive in both groups because Criterion AWCF and teacher WCF were considered generally helpful.

Importantly, the few negative evaluations that emerged provided insights into the nature of affective engagement with different feedback sources. Negative judgements of Criterion® were related to perceived feedback inaccuracy, whereas negative judgements of teacher feedback were associated with teachers’ provision of direct feedback (correct forms). Students in the comparison group commented that receiving correct forms without explanations hindered their understanding of error causes and language rules.

Students’ emotional experiences (affect) were broadly similar across the experimental and comparison groups, a finding that aligns with Zhang and Hyland's (2018) study. However, while Zhang and Hyland (2018) linked students’ happiness to higher AWE scores and detailed teacher feedback, in this study, it was linked to reduced feedback points on writing drafts. In terms of appreciation, students were more appreciative of Criterion® AWCF than teacher WCF, particularly when feedback addressed mechanical and usage errors, indicating that affective engagement may be influenced by the perceived effort required to respond to feedback.

In relation to text-level feedback, students generally had a positive judgement of teacher-provided text-level feedback, especially regarding academic writing conventions. Conversely, students in the experimental group predominantly held critical views of Criterion text-level feedback because they encountered challenges in comprehending it due to its generic nature and metalinguistic explanations, a weakness often reported in systematic reviews of AWE feedback (Fu et al., 2024). This finding highlights the limitations of Criterion® when it comes to helping students tackle more advanced writing issues, and indicates the need for improving the specificity and clarity of Criterion text-level feedback. On the other hand, students’ positive judgement of teacher-generated text-level feedback might be attributed to their greater confidence and trust in human-generated feedback, particularly for addressing advanced writing concerns, as suggested by Ranalli (2021).

Interestingly, despite students’ positive judgement of teacher feedback, their prevailing emotion was confusion, and they did not express appreciation for the feedback. Conversely, Criterion® text-level feedback received predominantly negative judgement, which again was caused by confusion; nevertheless, students still expressed appreciation for Criterion® feedback. These conflicting results point to a non-linear and evolving connection among the sub-components of affective engagement.

Conclusion

The study's findings on students’ engagement with Criterion® AWCF support its utilisation. Despite its occasional inaccuracy, Criterion® AWCF has the potential to direct students’ attention to errors in their writing, facilitate self-regulatory revision strategies, positively influence learners’ processing of the feedback and evoke favourable reactions. Comparing students’ engagement with Criterion® AWCF to their engagement with teacher WCF further validated the effectiveness of Criterion® AWCF in enhancing student engagement, as both feedback sources evoked comparable levels of student engagement. However, teacher feedback was more effective than Criterion® text-level feedback in capturing students’ attention, facilitating their processing of the feedback, and eliciting positive reactions. The results also revealed a close interconnectedness among different facets of engagement, with students’ affective engagement impacting their behavioural and cognitive engagement, regardless of the source of feedback (see Chen and Hu, 2025). Pedagogically, the findings show the potential benefits of both teachers and online tools, suggesting an approach that integrates both sources of feedback. We suggest that AWE tools be used to provide immediate and comprehensive corrective feedback on early drafts and that teachers provide more personalised and specific feedback on global issues of writing on penultimate drafts. Prior to adopting such an approach to feedback, however, teachers should familiarise students with the functions and limitations of Criterion®, offer training to support learners’ interpretation of the feedback, and employ strategies that can foster positive affective responses to the feedback provided.

Despite efforts to ensure rigour, this study has several limitations. The sample size was relatively small and included predominantly female participants. Contextual variables such as course structure and instructional practices were not examined, despite their potential influence on learners’ engagement with feedback. TAPs and SR interviews were conducted in English and online, which may have influenced the depth and accuracy of participants’ responses. Nonetheless, the study maintained ecological validity, and the use of appropriate statistical methods helped mitigate the impact of these limitations. Future research should explore learners’ engagement with newer versions of generative AI-supported AWE tools (see Yeung, 2025), use larger, more homogeneous samples, and employ diverse data collection methods (e.g. eye tracking). Such research could help inform pedagogical decisions on how to best utilise different sources of feedback in the language classroom.

Footnotes

Ethics Approval Statement

Ethics approval (ID 2056551.1) was obtained from the Human Research Ethics Committee of The University of Melbourne.

Consent Statement

Informed consent was obtained from all individual participants included in the study.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The publication of this article received the Graduate Research Publication Grant from the School of Languages and Linguistics at the University of Melbourne.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Appendix A. An Example Screenshot of the Criterion® Interface

This image is excluded from the Creative Commons license of this article.

Appendix B. Feedback Categories Generated by Criterion®

| Organisation & Development | Grammar | Usage | Mechanics | Style |
|---|---|---|---|---|
| Introductory material | Fragments | Determiner noun agreement | Spelling | Repetition of words |
| Thesis statement | Run-on sentences | Missing or extra article | Capitalise proper nouns | Inappropriate words or phrases |
| Topic relationship & technical quality | Garbled sentences | Confused words | Missing initial capital letter in a sentence | Sentences beginning with coordinating conjunctions |
| Main ideas | Subject–verb agreement | Wrong form of word | Missing question mark | Short sentences |
| Supporting ideas | Ill-formed verbs | Faulty comparison | Missing final punctuation | Long sentences |
| Conclusion | Pronoun errors | Preposition error | Missing apostrophe | Passive voice |
| Transitional words and phrases | Possessive errors | Non-standard word form | Missing comma | |
| Other | Wrong or missing word | Negation error | Hyphen error | |
| | Proofread this! | Wrong parts of speech | Fused words | |
| | | Wrong article | Compound words | |
| | | | Duplicates | |
| | | | Extra comma | |
| | | | Wrong parts of speech | |
| | | | Wrong article | |

Appendix C. Examples from TAPs and SR Related to Cognitive and Affective Engagement

Example 1. Lucy clicked on the Criterion® error category and noticed the problems with ‘thesis statement’. She ignored it and proceeded to the next error tag.

Example 2. Esta's self-criticism (judgement) upon receiving feedback on subject–verb agreement:

Example 3. Sha's happiness (affect) as she found no feedback on grammar:

Example 4. An example of an appreciation episode by Sana upon receiving Criterion® feedback on an article error.